The encoder-decoder model provides a pattern for using recurrent neural networks to address challenging sequence-to-sequence prediction problems such as machine translation.

Encoder-decoder models can be developed in the Keras Python deep learning library and an example of a neural machine translation system developed with this model has been described on the Keras blog, with sample code distributed with the Keras project.

This example can provide the basis for developing encoder-decoder LSTM models for your own sequence-to-sequence prediction problems.

In this tutorial, you will discover how to develop a sophisticated encoder-decoder recurrent neural network for sequence-to-sequence prediction problems with Keras.

After completing this tutorial, you will know:

- How to correctly define a sophisticated encoder-decoder model in Keras for sequence-to-sequence prediction.

- How to define a contrived yet scalable sequence-to-sequence prediction problem that you can use to evaluate the encoder-decoder LSTM model.

- How to apply the encoder-decoder LSTM model in Keras to address the scalable integer sequence-to-sequence prediction problem.

Kick-start your project with my new book Long Short-Term Memory Networks With Python, including step-by-step tutorials and the Python source code files for all examples.

Let’s get started.

- Update Jan/2020: Updated API for Keras 2.3 and TensorFlow 2.0.

How to Develop an Encoder-Decoder Model for Sequence-to-Sequence Prediction in Keras

Photo by Björn Groß, some rights reserved.

Tutorial Overview

This tutorial is divided into 3 parts; they are:

- Encoder-Decoder Model in Keras

- Scalable Sequence-to-Sequence Problem

- Encoder-Decoder LSTM for Sequence Prediction

Python Environment

This tutorial assumes you have a Python SciPy environment installed. You can use either Python 2 or 3 with this tutorial.

You must have Keras (2.0 or higher) installed with either the TensorFlow or Theano backend.

The tutorial also assumes you have scikit-learn, Pandas, NumPy, and Matplotlib installed.

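If you need help with your environment, see the post “How to Setup a Python Environment for Machine Learning and Deep Learning with Anaconda” listed under Further Reading.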

Encoder-Decoder Model in Keras

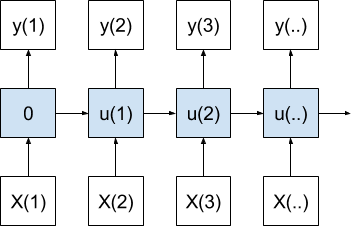

The encoder-decoder model is a way of organizing recurrent neural networks for sequence-to-sequence prediction problems.

It was originally developed for machine translation problems, although it has proven successful at related sequence-to-sequence prediction problems such as text summarization and question answering.

The approach involves two recurrent neural networks, one to encode the source sequence, called the encoder, and a second to decode the encoded source sequence into the target sequence, called the decoder.

The Keras deep learning Python library provides an example of how to implement the encoder-decoder model for machine translation (lstm_seq2seq.py), described by the library's creator in the post: “A ten-minute introduction to sequence-to-sequence learning in Keras.”

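For a detailed breakdown of this model, see the post “How to Define an Encoder-Decoder Sequence-to-Sequence Model for Neural Machine Translation in Keras” listed under Further Reading.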

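For more information on the use of return_state, which might be new to you, see the post “Understand the Difference Between Return Sequences and Return States for LSTMs in Keras” listed under Further Reading.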

For more help getting started with the Keras Functional API, see the post “How to Use the Keras Functional API for Deep Learning” listed under Further Reading.

Using the code in that example as a starting point, we can develop a generic function to define an encoder-decoder recurrent neural network. Below is this function named define_models().

```python
from keras.models import Model
from keras.layers import Input
from keras.layers import LSTM
from keras.layers import Dense

# returns train, inference_encoder and inference_decoder models
def define_models(n_input, n_output, n_units):
    # define training encoder
    encoder_inputs = Input(shape=(None, n_input))
    encoder = LSTM(n_units, return_state=True)
    encoder_outputs, state_h, state_c = encoder(encoder_inputs)
    encoder_states = [state_h, state_c]
    # define training decoder
    decoder_inputs = Input(shape=(None, n_output))
    decoder_lstm = LSTM(n_units, return_sequences=True, return_state=True)
    decoder_outputs, _, _ = decoder_lstm(decoder_inputs, initial_state=encoder_states)
    decoder_dense = Dense(n_output, activation='softmax')
    decoder_outputs = decoder_dense(decoder_outputs)
    model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
    # define inference encoder
    encoder_model = Model(encoder_inputs, encoder_states)
    # define inference decoder
    decoder_state_input_h = Input(shape=(n_units,))
    decoder_state_input_c = Input(shape=(n_units,))
    decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
    decoder_outputs, state_h, state_c = decoder_lstm(decoder_inputs, initial_state=decoder_states_inputs)
    decoder_states = [state_h, state_c]
    decoder_outputs = decoder_dense(decoder_outputs)
    decoder_model = Model([decoder_inputs] + decoder_states_inputs, [decoder_outputs] + decoder_states)
    # return all models
    return model, encoder_model, decoder_model
```

The function takes 3 arguments, as follows:

- n_input: The cardinality of the input sequence, e.g. number of features, words, or characters for each time step.

- n_output: The cardinality of the output sequence, e.g. number of features, words, or characters for each time step.

- n_units: The number of units (memory cells) in the encoder and decoder LSTM layers, e.g. 128 or 256.
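To get a feel for what n_units controls, the sketch below counts trainable parameters, assuming the standard LSTM formulation with an input kernel, a recurrent kernel and one bias vector for each of the 4 gates (the Keras default, use_bias=True). The helper names lstm_param_count() and dense_param_count() are hypothetical. With n_input = n_output = 51 and n_units = 128, we would expect these totals to agree with what train.summary() reports.

```python
def lstm_param_count(n_input, n_units):
    # Each of the 4 gates has an input kernel (n_input x n_units),
    # a recurrent kernel (n_units x n_units) and a bias (n_units).
    return 4 * (n_units * (n_input + n_units) + n_units)

def dense_param_count(n_in, n_out):
    # Weight matrix plus one bias per output unit.
    return n_in * n_out + n_out

n_features, n_units = 51, 128
encoder = lstm_param_count(n_features, n_units)   # 92160
decoder = lstm_param_count(n_features, n_units)   # 92160
output = dense_param_count(n_units, n_features)   # 6579
print(encoder + decoder + output)                 # 190899
```

Because the LSTM terms grow with the square of n_units, doubling n_units from 128 to 256 roughly triples to quadruples the model size.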

The function then creates and returns 3 models, as follows:

- train: Model that can be trained given source, target, and shifted target sequences.

- inference_encoder: Encoder model used when making a prediction for a new source sequence.

- inference_decoder: Decoder model used when making a prediction for a new source sequence.

The model is trained given source and target sequences where the model takes both the source and a shifted version of the target sequence as input and predicts the whole target sequence.

For example, one source sequence may be [1,2,3] and the target sequence [4,5,6]. The inputs and outputs to the model during training would be:

```
Input1: ['1', '2', '3']
Input2: ['_', '4', '5']
Output: ['4', '5', '6']
```

The model is intended to be called recursively when generating target sequences for new source sequences.

The source sequence is encoded and the target sequence is generated one element at a time, using a “start of sequence” character such as ‘_’ to start the process. Therefore, in the above case, the following input-output pairs would occur during training:

```
t, Input1,          Input2, Output
1, ['1', '2', '3'], '_',    '4'
2, ['1', '2', '3'], '4',    '5'
3, ['1', '2', '3'], '5',    '6'
```
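The step-by-step pairs above can be generated mechanically from the source and target sequences. The pure-Python sketch below uses a hypothetical helper, teacher_forcing_steps(), that is not part of Keras or the tutorial code; it simply shifts the target right by one step behind a start symbol.

```python
def teacher_forcing_steps(source, target, start='_'):
    """Return (t, Input1, Input2, Output) for each decoder time step."""
    # Input2 is the target shifted right by one step, led by the start symbol.
    shifted = [start] + list(target[:-1])
    return [(t + 1, list(source), shifted[t], target[t]) for t in range(len(target))]

for row in teacher_forcing_steps(['1', '2', '3'], ['4', '5', '6']):
    print(row)
# (1, ['1', '2', '3'], '_', '4')
# (2, ['1', '2', '3'], '4', '5')
# (3, ['1', '2', '3'], '5', '6')
```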

Here you can see how the model can be applied recursively to build up the output sequence one step at a time.

During prediction, the inference_encoder model is used to encode the input sequence once which returns states that are used to initialize the inference_decoder model. From that point, the inference_decoder model is used to generate predictions step by step.

The function below named predict_sequence() can be used after the model is trained to generate a target sequence given a source sequence.

```python
from numpy import array
from numpy import zeros

# generate target given source sequence
def predict_sequence(infenc, infdec, source, n_steps, cardinality):
    # encode the source sequence into the initial decoder state [h, c]
    state = infenc.predict(source)
    # start of sequence input: the one-hot encoding of the reserved value 0,
    # matching how the shifted target sequence is encoded during training
    target_seq = zeros((1, 1, cardinality))
    target_seq[0, 0, 0] = 1.0
    # collect predictions
    output = list()
    for _ in range(n_steps):
        # predict next value
        yhat, h, c = infdec.predict([target_seq] + state)
        # store prediction
        output.append(yhat[0, 0, :])
        # update state
        state = [h, c]
        # update target sequence
        target_seq = yhat
    return array(output)
```

This function takes 5 arguments as follows:

- infenc: Encoder model used when making a prediction for a new source sequence.

- infdec: Decoder model used when making a prediction for a new source sequence.

- source: Encoded source sequence.

- n_steps: Number of time steps in the target sequence.

- cardinality: The cardinality of the output sequence, e.g. the number of features, words, or characters for each time step.

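The function returns a NumPy array with one predicted probability vector per output time step; taking the argmax of each vector recovers the integer target sequence.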
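The decoding loop can be sketched without Keras by passing in a step function. In the sketch below, decoder_step is a hypothetical stand-in for infdec.predict([target_seq] + state), and the feed_one_hot flag is an illustrative design choice rather than part of the tutorial code: it feeds back a one-hot vector of the most likely value instead of the raw softmax probabilities that predict_sequence() feeds back. The start input is assumed to be the one-hot encoding of the reserved value 0, which is how the shifted target sequence is encoded for training.

```python
import numpy as np

def greedy_decode(decoder_step, state, n_steps, cardinality, feed_one_hot=True):
    """Generate n_steps outputs, feeding each prediction back as the next input.

    decoder_step(x, state) -> (probs, new_state) is a hypothetical stand-in
    for infdec.predict([x] + state); probs has shape (cardinality,).
    """
    x = np.eye(cardinality)[0]  # assumed start input: one-hot of reserved value 0
    outputs = []
    for _ in range(n_steps):
        probs, state = decoder_step(x, state)
        outputs.append(probs)
        if feed_one_hot:
            # Feed back a clean one-hot vector of the most likely value.
            x = np.eye(cardinality)[np.argmax(probs)]
        else:
            # Feed back the raw probabilities, as predict_sequence() does.
            x = probs
    return np.array(outputs)

def toy_step(x, state):
    # Toy decoder: emits the values 3, 1, 2 in order and ignores its input.
    probs = np.eye(5)[[3, 1, 2][state]]
    return probs, state + 1

print(np.argmax(greedy_decode(toy_step, 0, 3, 5), axis=-1).tolist())  # [3, 1, 2]
```

Feeding back a one-hot vector keeps the decoder's inputs in the same form it saw during training; feeding raw probabilities, as the tutorial does, is simpler and the tutorial reports it working well on this problem.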

Scalable Sequence-to-Sequence Problem

In this section, we define a contrived and scalable sequence-to-sequence prediction problem.

The source sequence is a series of randomly generated integer values, such as [20, 36, 40, 10, 34, 28], and the target sequence is a reversed pre-defined subset of the input sequence, such as the first 3 elements in reverse order [40, 36, 20].

The length of the source sequence is configurable, as are the cardinality of the input and output sequences and the length of the target sequence.

We will use source sequences of 6 elements, a cardinality of 50, and target sequences of 3 elements.

Below are some more examples to make this concrete.

```
Source,                   Target
[13, 28, 18, 7, 9, 5]     [18, 28, 13]
[29, 44, 38, 15, 26, 22]  [38, 44, 29]
[27, 40, 31, 29, 32, 1]   [31, 40, 27]
...
```
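The rule behind these examples fits in one line. The sketch below uses a hypothetical helper, make_target(), to check that reversing the first 3 source values reproduces each target shown above.

```python
def make_target(source, n_out):
    # The first n_out source values, in reverse order.
    return list(reversed(source[:n_out]))

examples = [
    ([13, 28, 18, 7, 9, 5], [18, 28, 13]),
    ([29, 44, 38, 15, 26, 22], [38, 44, 29]),
    ([27, 40, 31, 29, 32, 1], [31, 40, 27]),
]
for source, expected in examples:
    print(source, make_target(source, 3), make_target(source, 3) == expected)
```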

You are encouraged to explore larger and more complex variations. Post your findings in the comments below.

Let’s start off by defining a function to generate a sequence of random integers.

We will use the value 0 as the padding or start-of-sequence character, so it is reserved and cannot appear in our source sequences. To accommodate it, we add 1 to the configured cardinality so that the one-hot encoding is large enough (e.g. a value of 1 maps to a 1 at index 1, and the largest value, 50, maps to index 50).

For example:

```python
n_features = 50 + 1
```
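The effect of the extra column can be seen with a dependency-free one-hot encoder. The sketch below uses rows of np.eye() as a stand-in for Keras' to_categorical() (the helper name one_hot() is hypothetical); with only 50 columns, the value 50 would have no column and the indexing would fail.

```python
import numpy as np

cardinality = 50
n_features = cardinality + 1  # column 0 is reserved for padding / start of sequence

def one_hot(values, n_features):
    # Row i of the identity matrix is the one-hot vector for value i.
    return np.eye(n_features)[values]

encoded = one_hot([1, 25, 50], n_features)
print(encoded.shape)                          # (3, 51)
print(np.argmax(encoded, axis=-1).tolist())   # [1, 25, 50]
start = one_hot([0], n_features)              # start-of-sequence vector: a 1 in column 0
```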

We can use the randint() function from Python's random module to generate random integers between 1 and the problem's cardinality minus 1, inclusive. The generate_sequence() function below generates a sequence of random integers.

```python
from random import randint

# generate a sequence of random integers in the range [1, n_unique-1]
def generate_sequence(length, n_unique):
    return [randint(1, n_unique-1) for _ in range(length)]
```
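If you want reproducible datasets, one option is to pass in a seeded random.Random instance. The sketch below is a variant of generate_sequence() with a hypothetical rng parameter; because randint()'s bounds are inclusive, every value falls in 1 to n_unique-1 and the reserved value 0 never appears.

```python
import random

def generate_sequence(length, n_unique, rng=random):
    # Hypothetical rng parameter: any object with a randint(a, b) method.
    # randint() includes both bounds, so values lie in 1..n_unique-1.
    return [rng.randint(1, n_unique - 1) for _ in range(length)]

rng = random.Random(1)  # seeded generator for a reproducible dataset
seq = generate_sequence(6, 51, rng)
print(seq)
```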

Next, we need to create the corresponding output sequence given the source sequence.

To keep things simple, we will select the first n elements of the source sequence as the target sequence and reverse them.

```python
# define target sequence
target = source[:n_out]
target.reverse()
```

We also need a version of the output sequence shifted forward by one time step that we can use as the mock target generated so far, including the start of sequence value in the first time step. We can create this from the target sequence directly.

```python
# create padded input target sequence
target_in = [0] + target[:-1]
```
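Using the target from the earlier example ([40, 36, 20]), the shift works out as follows. From the second step onward, each decoder input is exactly the output the decoder was expected to produce on the previous step.

```python
target = [40, 36, 20]          # first 3 source values, reversed
target_in = [0] + target[:-1]  # start value 0, then the target shifted right by one step
print(target_in)               # [0, 40, 36]

# From step 1 onward, each decoder input is the previous step's expected output.
assert all(target_in[t] == target[t - 1] for t in range(1, len(target)))
```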

Now that all of the sequences have been defined, we can one-hot encode them, i.e. transform them into sequences of binary vectors. We can use the Keras built-in to_categorical() function to achieve this.

We can put all of this into a function named get_dataset() that will generate a specific number of sequences that we can use to train a model.

```python
from numpy import array
from keras.utils import to_categorical

# prepare data for the LSTM
def get_dataset(n_in, n_out, cardinality, n_samples):
    X1, X2, y = list(), list(), list()
    for _ in range(n_samples):
        # generate source sequence
        source = generate_sequence(n_in, cardinality)
        # define target sequence
        target = source[:n_out]
        target.reverse()
        # create padded input target sequence
        target_in = [0] + target[:-1]
        # encode: pass flat lists so each result has shape (timesteps, cardinality)
        src_encoded = to_categorical(source, num_classes=cardinality)
        tar_encoded = to_categorical(target, num_classes=cardinality)
        tar2_encoded = to_categorical(target_in, num_classes=cardinality)
        # store
        X1.append(src_encoded)
        X2.append(tar2_encoded)
        y.append(tar_encoded)
    return array(X1), array(X2), array(y)
```
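The same data preparation can also be vectorized with NumPy alone. The sketch below is an alternative, not the tutorial's method: get_dataset_np() is a hypothetical name, it draws values with NumPy's Generator instead of random.randint() (so the sequences differ from the original), and it uses rows of np.eye() in place of to_categorical(). It returns arrays with the same shapes, (samples, time steps, features).

```python
import numpy as np

def get_dataset_np(n_in, n_out, cardinality, n_samples, seed=None):
    rng = np.random.default_rng(seed)
    # Source values are drawn from 1..cardinality-1 (high is exclusive); 0 is reserved.
    source = rng.integers(1, cardinality, size=(n_samples, n_in))
    target = source[:, :n_out][:, ::-1]  # first n_out values, reversed
    # Decoder input: start value 0, then the target shifted right by one step.
    start = np.zeros((n_samples, 1), dtype=target.dtype)
    target_in = np.concatenate([start, target[:, :-1]], axis=1)
    eye = np.eye(cardinality)
    # Indexing the identity matrix one-hot encodes every value at once.
    return eye[source], eye[target_in], eye[target]

X1, X2, y = get_dataset_np(6, 3, 51, 4, seed=0)
print(X1.shape, X2.shape, y.shape)  # (4, 6, 51) (4, 3, 51) (4, 3, 51)
```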

Finally, we need to be able to decode a one-hot encoded sequence to make it readable again.

This is needed both for printing the generated target sequences and for easily comparing whether the full predicted target sequence matches the expected target sequence. The one_hot_decode() function below decodes an encoded sequence.

```python
from numpy import argmax

# decode a one hot encoded string
def one_hot_decode(encoded_seq):
    return [argmax(vector) for vector in encoded_seq]
```
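one_hot_decode() works on one sequence at a time. If you want to decode a whole batch, argmax over the last (feature) axis does it in one call; the helper name one_hot_decode_batch() below is hypothetical.

```python
import numpy as np

def one_hot_decode_batch(encoded):
    # argmax over the last (feature) axis decodes every time step of every sample.
    return np.argmax(encoded, axis=-1)

batch = np.eye(51)[[[5, 9, 2], [7, 7, 1]]]   # shape (2, 3, 51)
print(one_hot_decode_batch(batch).tolist())  # [[5, 9, 2], [7, 7, 1]]
```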

We can tie all of this together and test these functions.

A complete worked example is listed below.

```python
from random import randint
from numpy import array
from numpy import argmax
from keras.utils import to_categorical

# generate a sequence of random integers
def generate_sequence(length, n_unique):
    return [randint(1, n_unique-1) for _ in range(length)]

# prepare data for the LSTM
def get_dataset(n_in, n_out, cardinality, n_samples):
    X1, X2, y = list(), list(), list()
    for _ in range(n_samples):
        # generate source sequence
        source = generate_sequence(n_in, cardinality)
        # define target sequence
        target = source[:n_out]
        target.reverse()
        # create padded input target sequence
        target_in = [0] + target[:-1]
        # encode: pass flat lists so each result has shape (timesteps, cardinality)
        src_encoded = to_categorical(source, num_classes=cardinality)
        tar_encoded = to_categorical(target, num_classes=cardinality)
        tar2_encoded = to_categorical(target_in, num_classes=cardinality)
        # store
        X1.append(src_encoded)
        X2.append(tar2_encoded)
        y.append(tar_encoded)
    return array(X1), array(X2), array(y)

# decode a one hot encoded string
def one_hot_decode(encoded_seq):
    return [argmax(vector) for vector in encoded_seq]

# configure problem
n_features = 50 + 1
n_steps_in = 6
n_steps_out = 3
# generate a single source and target sequence
X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 1)
print(X1.shape, X2.shape, y.shape)
print('X1=%s, X2=%s, y=%s' % (one_hot_decode(X1[0]), one_hot_decode(X2[0]), one_hot_decode(y[0])))
```

Running the example first prints the shape of the generated dataset, confirming that it has the 3D shape required to train the model.

The generated sequence is then decoded and printed to screen demonstrating both that the preparation of source and target sequences matches our intention and that the decode operation is working.

```
(1, 6, 51) (1, 3, 51) (1, 3, 51)
X1=[32, 16, 12, 34, 25, 24], X2=[0, 12, 16], y=[12, 16, 32]
```

We are now ready to develop a model for this sequence-to-sequence prediction problem.

Encoder-Decoder LSTM for Sequence Prediction

In this section, we will apply the encoder-decoder LSTM model developed in the first section to the sequence-to-sequence prediction problem developed in the second section.

The first step is to configure the problem.

```python
# configure problem
n_features = 50 + 1
n_steps_in = 6
n_steps_out = 3
```

Next, we must define the models and compile the training model.

```python
# define model
train, infenc, infdec = define_models(n_features, n_features, 128)
train.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
```

Next, we can generate a training dataset of 100,000 examples and train the model.

```python
# generate training dataset
X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 100000)
print(X1.shape, X2.shape, y.shape)
# train model
train.fit([X1, X2], y, epochs=1)
```

Once the model is trained, we can evaluate it. We will do this by making predictions for 100 source sequences and counting the number of target sequences that were predicted correctly. We will use the numpy array_equal() function on the decoded sequences to check for equality.

```python
# evaluate LSTM
total, correct = 100, 0
for _ in range(total):
    X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 1)
    target = predict_sequence(infenc, infdec, X1, n_steps_out, n_features)
    if array_equal(one_hot_decode(y[0]), one_hot_decode(target)):
        correct += 1
print('Accuracy: %.2f%%' % (float(correct)/float(total)*100.0))
```
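This evaluation counts a prediction as correct only if every element of the target sequence is right, which is stricter than a per-time-step measure such as the 'accuracy' metric Keras reports during training. The sketch below (hypothetical helper name sequence_and_token_accuracy(), operating on already-decoded integer sequences) computes both so you can compare them.

```python
import numpy as np

def sequence_and_token_accuracy(y_true, y_pred):
    """y_true, y_pred: integer arrays of shape (n_samples, n_steps)."""
    matches = np.asarray(y_true) == np.asarray(y_pred)
    sequence_acc = matches.all(axis=1).mean()  # every step must be right
    token_acc = matches.mean()                 # each step counted separately
    return float(sequence_acc), float(token_acc)

y_true = [[40, 36, 20], [18, 28, 13]]
y_pred = [[40, 36, 20], [18, 28, 12]]
print(sequence_and_token_accuracy(y_true, y_pred))
```

Here one of two sequences is fully correct (sequence accuracy 0.5) while five of six individual values are correct, so the per-step figure is noticeably higher.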

Finally, we will generate some predictions and print the decoded source, target, and predicted target sequences to get an idea of whether the model is working as expected.

Putting all of these elements together, the complete code example is listed below.

```python
from random import randint
from numpy import array
from numpy import argmax
from numpy import array_equal
from numpy import zeros
from keras.utils import to_categorical
from keras.models import Model
from keras.layers import Input
from keras.layers import LSTM
from keras.layers import Dense

# generate a sequence of random integers
def generate_sequence(length, n_unique):
    return [randint(1, n_unique-1) for _ in range(length)]

# prepare data for the LSTM
def get_dataset(n_in, n_out, cardinality, n_samples):
    X1, X2, y = list(), list(), list()
    for _ in range(n_samples):
        # generate source sequence
        source = generate_sequence(n_in, cardinality)
        # define target sequence
        target = source[:n_out]
        target.reverse()
        # create padded input target sequence
        target_in = [0] + target[:-1]
        # encode: pass flat lists so each result has shape (timesteps, cardinality)
        src_encoded = to_categorical(source, num_classes=cardinality)
        tar_encoded = to_categorical(target, num_classes=cardinality)
        tar2_encoded = to_categorical(target_in, num_classes=cardinality)
        # store
        X1.append(src_encoded)
        X2.append(tar2_encoded)
        y.append(tar_encoded)
    return array(X1), array(X2), array(y)

# returns train, inference_encoder and inference_decoder models
def define_models(n_input, n_output, n_units):
    # define training encoder
    encoder_inputs = Input(shape=(None, n_input))
    encoder = LSTM(n_units, return_state=True)
    encoder_outputs, state_h, state_c = encoder(encoder_inputs)
    encoder_states = [state_h, state_c]
    # define training decoder
    decoder_inputs = Input(shape=(None, n_output))
    decoder_lstm = LSTM(n_units, return_sequences=True, return_state=True)
    decoder_outputs, _, _ = decoder_lstm(decoder_inputs, initial_state=encoder_states)
    decoder_dense = Dense(n_output, activation='softmax')
    decoder_outputs = decoder_dense(decoder_outputs)
    model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
    # define inference encoder
    encoder_model = Model(encoder_inputs, encoder_states)
    # define inference decoder
    decoder_state_input_h = Input(shape=(n_units,))
    decoder_state_input_c = Input(shape=(n_units,))
    decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
    decoder_outputs, state_h, state_c = decoder_lstm(decoder_inputs, initial_state=decoder_states_inputs)
    decoder_states = [state_h, state_c]
    decoder_outputs = decoder_dense(decoder_outputs)
    decoder_model = Model([decoder_inputs] + decoder_states_inputs, [decoder_outputs] + decoder_states)
    # return all models
    return model, encoder_model, decoder_model

# generate target given source sequence
def predict_sequence(infenc, infdec, source, n_steps, cardinality):
    # encode the source sequence into the initial decoder state [h, c]
    state = infenc.predict(source)
    # start of sequence input: the one-hot encoding of the reserved value 0
    target_seq = zeros((1, 1, cardinality))
    target_seq[0, 0, 0] = 1.0
    # collect predictions
    output = list()
    for _ in range(n_steps):
        # predict next value
        yhat, h, c = infdec.predict([target_seq] + state)
        # store prediction
        output.append(yhat[0, 0, :])
        # update state
        state = [h, c]
        # update target sequence
        target_seq = yhat
    return array(output)

# decode a one hot encoded string
def one_hot_decode(encoded_seq):
    return [argmax(vector) for vector in encoded_seq]

# configure problem
n_features = 50 + 1
n_steps_in = 6
n_steps_out = 3
# define model
train, infenc, infdec = define_models(n_features, n_features, 128)
train.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
# generate training dataset
X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 100000)
print(X1.shape, X2.shape, y.shape)
# train model
train.fit([X1, X2], y, epochs=1)
# evaluate LSTM
total, correct = 100, 0
for _ in range(total):
    X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 1)
    target = predict_sequence(infenc, infdec, X1, n_steps_out, n_features)
    if array_equal(one_hot_decode(y[0]), one_hot_decode(target)):
        correct += 1
print('Accuracy: %.2f%%' % (float(correct)/float(total)*100.0))
# spot check some examples
for _ in range(10):
    X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 1)
    target = predict_sequence(infenc, infdec, X1, n_steps_out, n_features)
    print('X=%s y=%s, yhat=%s' % (one_hot_decode(X1[0]), one_hot_decode(y[0]), one_hot_decode(target)))
```

Running the example first prints the shape of the prepared dataset.

```
(100000, 6, 51) (100000, 3, 51) (100000, 3, 51)
```

Next, the model is fit. You should see a progress bar and the run should take less than one minute on a modern multi-core CPU.

```
100000/100000 [==============================] - 50s - loss: 0.6344 - acc: 0.7968
```

Next, the model is evaluated and the accuracy printed.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

We can see that the model achieves 100% accuracy on new randomly generated examples.

```
Accuracy: 100.00%
```

Finally, 10 new examples are generated and target sequences are predicted. Again, we can see that the model correctly predicts the output sequence in each case and the expected value matches the reversed first 3 elements of the source sequences.

```
X=[22, 17, 23, 5, 29, 11] y=[23, 17, 22], yhat=[23, 17, 22]
X=[28, 2, 46, 12, 21, 6] y=[46, 2, 28], yhat=[46, 2, 28]
X=[12, 20, 45, 28, 18, 42] y=[45, 20, 12], yhat=[45, 20, 12]
X=[3, 43, 45, 4, 33, 27] y=[45, 43, 3], yhat=[45, 43, 3]
X=[34, 50, 21, 20, 11, 6] y=[21, 50, 34], yhat=[21, 50, 34]
X=[47, 42, 14, 2, 31, 6] y=[14, 42, 47], yhat=[14, 42, 47]
X=[20, 24, 34, 31, 37, 25] y=[34, 24, 20], yhat=[34, 24, 20]
X=[4, 35, 15, 14, 47, 33] y=[15, 35, 4], yhat=[15, 35, 4]
X=[20, 28, 21, 39, 5, 25] y=[21, 28, 20], yhat=[21, 28, 20]
X=[50, 38, 17, 25, 31, 48] y=[17, 38, 50], yhat=[17, 38, 50]
```

You now have a template for an encoder-decoder LSTM model that you can apply to your own sequence-to-sequence prediction problems.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Related Posts

- How to Setup a Python Environment for Machine Learning and Deep Learning with Anaconda

- How to Define an Encoder-Decoder Sequence-to-Sequence Model for Neural Machine Translation in Keras

- Understand the Difference Between Return Sequences and Return States for LSTMs in Keras

- How to Use the Keras Functional API for Deep Learning

Keras Resources

- A ten-minute introduction to sequence-to-sequence learning in Keras

- Keras seq2seq Code Example (lstm_seq2seq)

- Keras Functional API

- LSTM API in Keras

Summary

In this tutorial, you discovered how to develop an encoder-decoder recurrent neural network for sequence-to-sequence prediction problems with Keras.

Specifically, you learned:

- How to correctly define a sophisticated encoder-decoder model in Keras for sequence-to-sequence prediction.

- How to define a contrived yet scalable sequence-to-sequence prediction problem that you can use to evaluate the encoder-decoder LSTM model.

- How to apply the encoder-decoder LSTM model in Keras to address the scalable integer sequence-to-sequence prediction problem.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Is this model suited for sequence regression too? For example the shampoo sales problem

You could try, but generally LSTMs do not perform well on autoregression problems:

https://machinelearningmastery.com/suitability-long-short-term-memory-networks-time-series-forecasting/

Hello, can this example be applied to the prediction of floating point numbers? For example, given [0.123, 0.234, 0.345], the target is [0.234, 0.123].

Yes, see an example here:

https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

Hi, thank you for your post. I am new to LSTM. It looks like the target should always be a subset of the source, could we use LSTM to predict the target which is not a subset of the source? For example, the source is [0.123,0.234,0.345], and the target is [0.564, 0.923, 0.667]? Thanks in advance.

Yes, you can.

Hi. Is it possible to have multiple LSTM layers in the encoder and decoder in this code? Thank you for your great blog.

Yes, but I don’t have an example. For this specific case it would require some careful re-design.

Hi, maybe by now you have some idea for a multi-layer LSTM autoencoder?

Perhaps this will help as a start:

https://machinelearningmastery.com/lstm-autoencoders/

@Teimour @Dk @jason Brownlee Here is a great example of a multilayer encoder-decoder using the Keras functional API.

Could you give some more detail as to how it would need to be modified to have multiple LSTM layers in the decoder? I am working on something similar and looking to add layers to the decoder.

How can I extract the bottleneck layer to extract the important features with sequence data?

You could access the returned states to get the context vector, but it does not help you understand which input features are relevant/important.

Thank you Jason!

You’re welcome.

Thanks for the wonderful tutorial, Jason!

I am facing an issue, though: I tried to execute your code as is (copy-pasted it), but it throws an error:

```
Using TensorFlow backend.
Traceback (most recent call last):
  File "C:\Users\User\Documents\pystuff\keras_auto.py", line 91, in
    train, infenc, infdec = define_models(n_features, n_features, 128)
  File "C:\Users\User\Documents\pystuff\keras_auto.py", line 40, in define_models
    encoder = LSTM(n_units, return_state=True)
  File "C:\Users\User\Anaconda3\envs\py35\lib\site-packages\keras\legacy\interfaces.py", line 88, in wrapper
    return func(*args, **kwargs)
  File "C:\Users\User\Anaconda3\envs\py35\lib\site-packages\keras\layers\recurrent.py", line 949, in __init__
    super(LSTM, self).__init__(**kwargs)
  File "C:\Users\User\Anaconda3\envs\py35\lib\site-packages\keras\layers\recurrent.py", line 191, in __init__
    super(Recurrent, self).__init__(**kwargs)
  File "C:\Users\User\Anaconda3\envs\py35\lib\site-packages\keras\engine\topology.py", line 281, in __init__
    raise TypeError('Keyword argument not understood:', kwarg)
TypeError: ('Keyword argument not understood:', 'return_state')
```

I am using an anaconda environment (python 3.5.3). What could have possibly gone wrong?

Perhaps confirm that you have the most recent version of Keras and TensorFlow installed.

I had the same problem, and updated Keras (to version 2.1.2) and TensorFlow (to version 1.4.0). The problem above was solved. However, I now see that the shapes of X1, X2, and y are ((100000, 1, 6, 51), (100000, 1, 3, 51), (100000, 1, 3, 51)) instead of ((100000, 6, 51), (100000, 3, 51), (100000, 3, 51)). Why could this be?

I’m not sure, perhaps related to recent API changes in Keras?

Here’s how I fixed the problem.

At the top of the code, include this line (before any ‘from numpy import’ statements):

import numpy as np

Change the get_dataset() function to the following:

Notice instead of returning array(X1), array(X2), array(y), we now return arrays that have been squeezed – one axis has been removed. We remove axis 1 because it’s the wrong shape for what we need.

The output is now as it should be (although I’m getting 98% accuracy instead of 100%).

Thanks for sharing.

Perhaps confirm that you have updated Keras to 2.1.2, it fixes bugs with to_categorical()?

yes, we have to reshape the model and then everything works fine

What is (n_in, cardinality)?

I am having difficulty using it with a user-defined variable. For random, what should be the input and input shape for the arguments?

See this:

https://machinelearningmastery.com/faq/single-faq/what-is-the-difference-between-samples-timesteps-and-features-for-lstm-input

I removed the brackets [ ] in the parameters to to_categorical instead. I think because parameters like target etc are already arrays the brackets are not necessary.

Fair enough.

I was facing the same issue, your comment helped me solve it! Thanks!

As I was facing the same issue, I think the simpler solution is just to use

to_categorical(source, num_classes=cardinality) instead of

to_categorical([source], num_classes=cardinality) for the 3 lists.

I also had to do that to get the data in the right format. There’s also a function tensorflow.one_hot which I prefer over to_categorical. They do the same thing.

Hi Jason!

Are the encoder-decoder networks suitable for time series classification?

In my experience LSTMs have not proven effective at autoregression compared to MLPs.

See this post for more details:

https://machinelearningmastery.com/suitability-long-short-term-memory-networks-time-series-forecasting/

For people reading later: you don't need to use squeeze.

Try changing:

```python
src_encoded = to_categorical([source], num_classes=cardinality)
tar_encoded = to_categorical([target], num_classes=cardinality)
tar2_encoded = to_categorical([target_in], num_classes=cardinality)
```

to:

```python
src_encoded = to_categorical(source, num_classes=cardinality)
tar_encoded = to_categorical(target, num_classes=cardinality)
tar2_encoded = to_categorical(target_in, num_classes=cardinality)
```

Thanks.

Thanks Jason for writing blogs for machinelearningmastery.com. I have read a number of them.

I have one query – –

In my dataset —-

no. of columns are 5 (inputs),

No. of outputs – 3 (feature , which I want to predict) and

number of rows – 34079.

Then how to put these parameters in your code –

```python
n_features = 34079
n_steps_in = 5
n_steps_out = 3
# generate training dataset
X1, X2, y = get_dataset(n_steps_in, n_steps_out, n_features, 1)
print(X1.shape, X2.shape, y.shape)
```

I am getting error like ——–>>> Your session crashed after using all available RAM. View runtime logs. I am using 14Gb RAM. please suggest me how to arrange my parameters as per your code.. thanks in advance.

Sounds like you might need to reduce your dataset size or use a machine with more RAM?

Thanks Jason for the reply,

but I think, I may not be keeping parameters properly in the variables.

In my dataset —-

no. of columns are 5 (inputs),

No. of outputs – 3 (feature , which I want to predict) and

number of rows – 34079.

then how to put in your variables —

n_features = ???

n_steps_in = 5

n_steps_out = 3

sample = ???

thanks

Ah I see, this will help you map your problem onto the terminology of samples, time steps, features:

https://machinelearningmastery.com/faq/single-faq/what-is-the-difference-between-samples-timesteps-and-features-for-lstm-input

Does that help?

Hi Jason,

Running get_dataset(), the function returns arrays X1, X2, y with one additional dimension:

(1,1,6,51) (1,1,3,51) (1,1,3,51).

This results from numpy.array(); before that, X1, X2, y are lists with the correct sizes:

(1,6,51) (1,3,51) (1,3,51).

It seems the command array() adds an additional dimension. Could you help solve this problem?

How do I train a Keras model so that it treats capital letters and small letters as the same?

Please suggest any tactics for it.

Start by clearly defining your problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Could you please give me a tutorial for a case where the input to the seq2seq model is word embeddings and the output is also word embeddings? I find it frustrating to see a Dense layer at the end; this is what is stopping a fully seq2seq model with word embeddings as both input and output.

Why would we have a word embedding at the output layer?

If embeddings are coming in, we’d want to have embeddings going out (auto-encoder). Imagine input = category (cat, dog, frog, whale, human). These animals are quite different, so we represent them with an embedding. Rather than having a dense output of 5 one-hot encoded (OHE) classes, if an embedding is used for the output, the assumption is that, especially if weights are shared between inputs and outputs, we could train the net and give it more detail about what exactly each class is, rather than using something like cross entropy.

Ok.

Is there such a tutorial yet? Sounds interesting.

I've not written one; I still don't see the benefit of the approach. Happy to be proven wrong.
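For readers curious what the embedding-output idea above would involve at prediction time, here is a minimal NumPy sketch. It is not something from the tutorial. The assumption is that the model regresses onto an embedding vector (for example with a cosine or MSE loss instead of cross entropy), and a prediction is mapped back to a class by finding the row of the embedding matrix with the highest cosine similarity. The five-animal vocabulary and the random 8-dimensional embeddings are hypothetical.

```python
import numpy as np

classes = ["cat", "dog", "frog", "whale", "human"]  # hypothetical vocabulary
rng = np.random.default_rng(1)
embeddings = rng.normal(size=(len(classes), 8))     # hypothetical 8-d vector per class

def nearest_class(predicted_vec, embeddings):
    """Return the index of the embedding row with the highest cosine similarity."""
    emb_norm = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    vec_norm = predicted_vec / np.linalg.norm(predicted_vec)
    return int(np.argmax(emb_norm @ vec_norm))

# a slightly noisy prediction near the "frog" vector decodes back to "frog"
prediction = embeddings[2] + 0.01 * rng.normal(size=8)
print(classes[nearest_class(prediction, embeddings)])  # frog
```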

Hi Jason,

I am trying to put an embedding layer at the encoder and decoder inputs, with the Dense layer kept as it is in the above code.

Later, in the prediction function call, when I give a 3D input to target_seq, it throws a dimension error: Error when checking input: expected Decoder_input to have 2 dimensions, but got array with shape (1, 1, 341). Here 341 is my original feature dimension, which is compressed to 32 by the embedding layer.

Could you please advise what's going wrong?

I cannot debug your changes, sorry. Perhaps post your code and error to stackoverflow?

Thanks Jason. Just wanted to get an idea in scenarios when decoder_input and decoder_output have different dimensions, what happens to your prediction function (target_seq) shape?

Could you please guide.

Not sure off the cuff, I think you will need to experiment.
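One common way to handle this, sketched under the assumption that the decoder input goes through an Embedding layer: the decoder then expects integer token indices with shape (batch, timesteps), while the Dense softmax output has shape (batch, timesteps, vocab_size). So each prediction is converted back to an index with argmax before it is fed in as the next target_seq, and the first target_seq would likewise be a start-token index such as np.array([[0]]). The 341-token vocabulary comes from the comment above; the probabilities are random stand-ins for infdec.predict().

```python
import numpy as np

vocab_size = 341  # output dimension of the Dense softmax layer

def next_target_seq(yhat):
    """Turn a softmax output of shape (1, 1, vocab_size) into the integer
    input of shape (1, 1) that an Embedding-based decoder input expects."""
    token_index = int(np.argmax(yhat[0, -1, :]))  # most probable token at the last step
    return np.array([[token_index]])

# stand-in for the probabilities returned by infdec.predict()
rng = np.random.default_rng(0)
yhat = rng.random((1, 1, vocab_size))
yhat /= yhat.sum(axis=-1, keepdims=True)

target_seq = next_target_seq(yhat)
print(target_seq.shape)  # (1, 1)
```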

Hi! When I add dropout and recurrent_dropout as LSTM arguments on line 40 of the last complete code example, with everything else the same, the code fails. So how can I add dropout in this case? Thanks!

I give a worked example of dropout with LSTMs here:

https://machinelearningmastery.com/use-dropout-lstm-networks-time-series-forecasting/

Hi Jason! Thanks for your great effort in putting encoder-decoder implementations here. As Dinter mentioned, when dropout is added, the code runs well in the training phase but gives the following error during prediction:

InvalidArgumentError (see above for traceback): You must feed a value for placeholder tensor 'lstm_1/keras_learning_phase' with dtype bool

[[Node: lstm_1/keras_learning_phase = Placeholder[dtype=DT_BOOL, shape=, _device="/job:localhost/replica:0/task:0/cpu:0"]()]]

How to fix the problem in this particular implementation?

[Note: Your worked example of dropout did work for me, but the difference is that you add layers one by one in a Sequential model there, which is different from this encoder-decoder example.]

Sorry to hear that, perhaps it’s a bug? See if you can reproduce the fault on a small standalone network?

When I tried exactly the same network as in your example and added dropout=0.0 in line 10 and line 15 of the define_models function, the program runs, but for other values of dropout it gives an error. Changing the size of the network, for example the number of units to 5, 10, or 20, gives the same error.

Any idea how to add dropout?

```python
# line 5
encoder = LSTM(n_units, return_state=True, dropout=0.0)

# line 10
decoder_lstm = LSTM(n_units, return_sequences=True, return_state=True, dropout=0.0)
```

See this tutorial:

https://machinelearningmastery.com/use-dropout-lstm-networks-time-series-forecasting/

Hi Jason,

what is the difference between specifying the model input:

Model( [decoder_inputs] + decoder_states_inputs,….)

and this

Model([decoder_inputs,decoder_states_inputs],…)

Does the first version add the elements of the decoder_states_inputs array to the corresponding elements of decoder_inputs?
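The two forms differ in plain Python list handling before Keras ever sees them. The + operator concatenates lists, so the first form passes a flat list of three inputs: the decoder input plus the h and c state inputs. The second form nests the state list inside a two-element list. No element-wise addition takes place. The flat form is the one the tutorial uses, and it lines up one-to-one with the three arrays passed in infdec.predict([target_seq] + state). The strings below are stand-ins for the Keras Input tensors.

```python
# stand-ins for the Keras Input tensors in define_models()
decoder_inputs = "decoder_inputs"
decoder_states_inputs = ["decoder_state_input_h", "decoder_state_input_c"]

flat = [decoder_inputs] + decoder_states_inputs   # list concatenation
nested = [decoder_inputs, decoder_states_inputs]  # list containing a list

print(flat)    # ['decoder_inputs', 'decoder_state_input_h', 'decoder_state_input_c']
print(nested)  # ['decoder_inputs', ['decoder_state_input_h', 'decoder_state_input_c']]
```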

Hi Jason, Thanks for sharing this tutorial. I am only confused when you are defining the model. This is the line:

train, infenc, infdec = define_models(n_features, n_features, 128)

It is a silly question, but why is n_features used for both n_input and n_output here, instead of n_input equal to 6 and n_output equal to 3?

I look forward to hearing from you soon.

Thanks

Good question, because the model only does one time step per call, so we walk the input/output time steps manually.
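To spell this out: in define_models(), n_input and n_output only set the last (feature) axis of Input(shape=(None, n_input)), and the None leaves the number of time steps open. The source and target sequences are both one-hot encoded with the same cardinality (50 + 1 = 51), so both feature sizes are n_features, while 6 and 3 are numbers of time steps. Here is a NumPy sketch of the shapes, with a hypothetical one_hot helper standing in for to_categorical.

```python
import numpy as np

n_features = 50 + 1  # cardinality, used as the one-hot width

def one_hot(sequence, n_classes):
    """One-hot encode a list of integers to shape (len(sequence), n_classes)."""
    return np.eye(n_classes)[np.asarray(sequence)]

source = [12, 5, 33, 7, 41, 2]  # 6 time steps (hypothetical values)
target = source[:3][::-1]       # 3 time steps: first three reversed, as in the tutorial

src = one_hot(source, n_features)
tar = one_hot(target, n_features)
print(src.shape, tar.shape)  # (6, 51) (3, 51)
```

The time-step axis differs (6 vs 3), but the feature axis is 51 in both cases, which is why define_models() receives n_features twice.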

Hi Jason, I have the same question as above. Can you please explain once again? Sorry, I didn't understand your reply.

I would like to see the hidden states vector. Because there are 96 training samples, there would be 96 of these (each as a vector of length 4).

I added return_sequences=True to the LSTM:

```python
model = Sequential()
model.add(LSTM(4, input_shape=(1, look_back), return_sequences=True))
model.add(Dense(1))
model.compile(loss='mean_squared_error', optimizer='adam')
model.fit(trainX, trainY, epochs=20, batch_size=20, verbose=2)
```

But I get this error:

```
Traceback (most recent call last):
  File "", line 1, in
  File "E:\ProgramData\Anaconda3\lib\site-packages\keras\models.py", line 965, in fit
    validation_steps=validation_steps)
  File "E:\ProgramData\Anaconda3\lib\site-packages\keras\engine\training.py", line 1593, in fit
    batch_size=batch_size)
  File "E:\ProgramData\Anaconda3\lib\site-packages\keras\engine\training.py", line 1430, in _standardize_user_data
    exception_prefix='target')
  File "E:\ProgramData\Anaconda3\lib\site-packages\keras\engine\training.py", line 110, in _standardize_input_data
    'with shape ' + str(data_shape))
ValueError: Error when checking target: expected dense_24 to have 3 dimensions, but got array with shape (94, 1)
```

How can I make this model work, and how can I view the hidden states for each of the input samples (there should be 96 hidden states)?

Thank you.

Return sequences does not return the hidden state, but instead the outcome from each time step.

This post might clear things up for you regarding outputs and hidden states:

https://machinelearningmastery.com/return-sequences-and-return-states-for-lstms-in-keras/
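On the shape error itself: with return_sequences=True, the LSTM emits one output per time step. Dense(1) applied to that produces a 3D output of shape (samples, 1, 1), so Keras expects a 3D target. Here is a minimal sketch of the reshape, assuming trainY holds one value per sample as in the error message; the values are placeholders. Alternatively, leaving return_sequences at its default of False keeps the output 2D, and the original (94, 1) target then matches.

```python
import numpy as np

trainY = np.arange(94, dtype=float).reshape(94, 1)  # placeholder targets, shape (94, 1)

# match the (samples, timesteps, 1) output of an LSTM with return_sequences=True
# followed by Dense(1); here there is a single time step
trainY_3d = trainY.reshape((trainY.shape[0], 1, 1))
print(trainY_3d.shape)  # (94, 1, 1)
```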

Hi Jason,

I like your blog posts. I have some code based on this post, but I get this error message all the time. There’s something wrong with my dense layer. Can you point it out? Here units is 300 and tokens_per_sentence is 25.

The error message:

ValueError: Error when checking target: expected dense_layer_b to have 2 dimensions, but got array with shape (1, 25, 300)

This is some of the code:

Perhaps the data does not match the model, you could change one or the other.

I’m eager to help, but I cannot debug the code for you sorry.

Hi. So I think I needed to set return_sequences to True for both lstm_a and lstm_b.

Dear Jason,

Do you think this algorithm works for weather prediction? For example, using dew point, humidity, and temperature as the integer input variables to predict rainfall as the output?

Only for demonstration purposes.

In practice, weather forecasts are performed using simulations of physics models and are more accurate than small machine learning models.

Hi Jason.

I would like to ask how I could use this model with float numbers. Your training data looks like this:

```
[[
  [0,0,0,0,1,0,0]
  [0,0,1,0,0,1,0]
  [0,1,0,0,0,0,0]
  ...
]]
```

I would need something like this:

```
[[
  [0.12354,0.9854,5875, 0.0659]
  [0.12354,0.9854,5875, 0.0659]
  [0.12354,0.9854,5875, 0.0659]
  [0.12354,0.9854,5875, 0.0659]
]]
```

When I run your model with float numbers, the network doesn't learn. Should I use a different loss function?

Thank you

The loss function is related to the output, if you have a real-valued output, consider mse or mae loss functions.

How do I add a bidirectional layer to the encoder-decoder architecture?

Use it directly on the encoder or decoder.

Here’s how to in Keras:

https://machinelearningmastery.com/develop-bidirectional-lstm-sequence-classification-python-keras/

Hi.

I would like to use your model with word embeddings. I was inspired by https://blog.keras.io/a-ten-minute-introduction-to-sequence-to-sequence-learning-in-keras.html -> Features -> word-level model

I encoded the words in sentences as integers. My input is a list of sentences with 15 words each: [[1,5,7,5,6,4,5, 10,15,12,11,10,8,1,2], […], [….], …]

My model looks like this:

```python
encoder_inputs = Input(shape=(None,))
x = Embedding(num_encoder_tokens, latent_dim)(encoder_inputs)
x, state_h, state_c = LSTM(latent_dim, return_state=True)(x)
encoder_states = [state_h, state_c]

# Set up the decoder, using `encoder_states` as initial state.
decoder_inputs = Input(shape=(None,))
x = Embedding(num_decoder_tokens, latent_dim)(decoder_inputs)
x = LSTM(latent_dim, return_sequences=True)(x, initial_state=encoder_states)
decoder_outputs = Dense(num_decoder_tokens, activation='softmax')(x)

# Define the model that will turn
# `encoder_input_data` & `decoder_input_data` into `decoder_target_data`
model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
```

When I try to train the model, I get this error: expected dense_1 to have 3 dimensions, but got array with shape (10, 15). Could you help me with that, please?

Thank you

Hello,

I also have the same issue. Could you solve your problem?
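One likely cause, going by the Keras blog example this model is based on: with a Dense softmax over num_decoder_tokens and categorical_crossentropy, decoder_target_data must be 3D, one-hot encoded, and offset by one time step from decoder_input_data. A 2D (samples, 15) integer array does not match. Here is a minimal NumPy sketch; the vocabulary size and token values are hypothetical. Switching the loss to sparse_categorical_crossentropy is the usual alternative that keeps integer targets.

```python
import numpy as np

num_decoder_tokens = 20  # hypothetical vocabulary size
rng = np.random.default_rng(2)
# hypothetical integer-encoded decoder inputs: 10 sentences x 15 tokens
decoder_input_data = rng.integers(1, num_decoder_tokens, size=(10, 15))

# the target is the decoder input shifted one step to the left (0 fills the last step)
decoder_target_ints = np.zeros_like(decoder_input_data)
decoder_target_ints[:, :-1] = decoder_input_data[:, 1:]

# one-hot encode to (samples, timesteps, num_decoder_tokens) for categorical_crossentropy
decoder_target_data = np.eye(num_decoder_tokens)[decoder_target_ints]
print(decoder_target_data.shape)  # (10, 15, 20)
```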

Hi ,

I am trying to solve a seq2seq problem using a Keras LSTM. The predicted output words match the most frequent words in the vocabulary built from the dataset, and I'm not sure why. After training, when I predict the output for a given input sequence, the model predicts the same output irrespective of the input sequence. I am also using a separate embedding layer for the encoder and the decoder.

Can you help me figure out what the reason could be?

Question elaborated here:

https://stackoverflow.com/questions/49638685/keras-seq2seq-model-predicting-same-output-for-all-test-inputs

Thanks.

It suggests the model has not learned the problem.

Here is a list of ideas to try:

https://machinelearningmastery.com/improve-deep-learning-performance/

Hi Jason,

In your current setup, how would you add a pre-trained embedding matrix, like GloVe?

Here are some examples of loading a word embedding:

https://machinelearningmastery.com/use-word-embedding-layers-deep-learning-keras/
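As a rough sketch of the approach in that post: parse the GloVe text format (one word per line followed by its vector components), then build a matrix whose row i holds the vector for the word with integer index i. The linked post then passes a matrix like this as the initial weights of an Embedding layer (its weights argument), which here would be the encoder's. The tiny in-memory file and the word_index mapping are hypothetical stand-ins for a real GloVe file and your tokenizer's vocabulary.

```python
import io
import numpy as np

# stand-in for an opened GloVe file: word followed by its vector values
glove_file = io.StringIO("the 0.1 0.2 0.3\ncat 0.4 0.5 0.6\nsat 0.7 0.8 0.9\n")
embedding_dim = 3

# hypothetical word -> integer index mapping (index 0 reserved for padding)
word_index = {"the": 1, "cat": 2, "mat": 3}

vectors = {}
for line in glove_file:
    parts = line.split()
    vectors[parts[0]] = np.asarray(parts[1:], dtype="float32")

# row i holds the vector for the word with index i; words missing from GloVe stay zero
embedding_matrix = np.zeros((len(word_index) + 1, embedding_dim))
for word, i in word_index.items():
    if word in vectors:
        embedding_matrix[i] = vectors[word]

print(embedding_matrix)
```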

Hi,

Could you help me with my problem? I think a lot of the required logic is implemented in your code, but I am a beginner in Python. I want to predict numbers from an input test sequence (seq2seq), so the output of my decoder should be a sequence of 6 numbers (1-7). You can imagine it as lottery prediction. I have a very long input vector of numbers 1-7 (containing sequences of 6 numbers), so I don't need to generate test data. I just need to predict the next 6 numbers that should be generated. 1,1,2,2,3 -> 3,4,4,5,5,6

Thank you

Perhaps here would be a good place for you to get started with LSTMs:

https://machinelearningmastery.com/start-here/#lstm

Thank you Jason, it was very helpful for me. Can you give me advice or a link on how to correctly initialize and train a network with multiple input sequences?

They can be side by side:

https://machinelearningmastery.com/multivariate-time-series-forecasting-lstms-keras/

Or separate inputs entirely:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Thank you Jason for this tutorial, it got me started. I tried to use a larger sequence where the input is a series of 400 numbers and the output is 40 numbers. I have a problem with to_categorical because of an index error, and I don't know how to set or get the value for cardinality/n_features. Can you give me an idea on this?

I'm also not clear whether this is a classification type of model. Can you please confirm? Thanks.

You can integer encode or one hot encode categorical input features. Here is a tutorial on the topic:

https://machinelearningmastery.com/how-to-one-hot-encode-sequence-data-in-python/

This post will make the distinction between classification and regression clear:

https://machinelearningmastery.com/classification-versus-regression-in-machine-learning/

Hi,

thank you for this tutorial. I receive this error when I run your example:

ValueError: Error when checking input: expected input_1 to have 3 dimensions, but got array with shape (100000, 1, 6, 51)

Hi! I have the same problem. Did you solve it? Thanks!

Change the encoding lines to this:

```python
src_encoded = to_categorical([source], num_classes=cardinality)[0]
tar_encoded = to_categorical([target], num_classes=cardinality)[0]
tar2_encoded = to_categorical([target_in], num_classes=cardinality)[0]
```
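Why the [0] matters, assuming the newer Keras behaviour that commenters describe (to_categorical keeps the input's shape and appends the class axis): wrapping the sequence in a list adds a leading axis of size 1. Each encoded sequence then comes back as (1, 6, 51), and stacking 100,000 of them gives the 4D (100000, 1, 6, 51) array. Indexing with [0], or calling np.squeeze(..., axis=1) after stacking, removes that axis. Here is a NumPy sketch that reproduces the shapes, with np.eye standing in for to_categorical.

```python
import numpy as np

cardinality = 51
source = [12, 5, 33, 7, 41, 2]  # hypothetical 6-step sequence

# like to_categorical([source]) in newer Keras: the extra list adds a leading axis
wrapped = np.eye(cardinality)[np.array([source])]  # shape (1, 6, 51)
# the [0] fix drops that axis again
unwrapped = wrapped[0]                             # shape (6, 51)

# stacking wrapped samples is what produces the 4D array
X1 = np.array([wrapped, wrapped])                  # shape (2, 1, 6, 51)
print(wrapped.shape, unwrapped.shape, X1.shape)
# squeezing axis 1 after stacking gives the same result as the [0] fix
print(np.squeeze(X1, axis=1).shape)                # (2, 6, 51)
```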

Did you try Gary's answer?

Hi Jason, thank you very much for the tutorial. Is it possible to decode many sequences simultaneously instead of decoding a single sequence one character at a time? The inference for my project takes too long and I thought that doing it in larger batches may help.

You could use copies of the model to make predictions in parallel for different inputs.
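Another option, sketched here as an assumption rather than something from the tutorial: predict() already works on a batch axis, so the decoding loop can carry many sequences at once. You keep the first axis and slice yhat[:, 0, :] instead of yhat[0, 0, :]. To keep the sketch self-contained, fake_decoder_step is a hypothetical stand-in for infdec.predict([target_seq] + state) that applies a toy rule. With the real model you would call that instead, with h and c coming from infenc.predict() on a batch of sources.

```python
import numpy as np

def fake_decoder_step(target_seq, h, c):
    """Hypothetical stand-in for infdec.predict([target_seq] + [h, c]).
    Returns (yhat, h, c) with yhat shaped (batch, 1, n_features)."""
    n_features = target_seq.shape[-1]
    prev = target_seq[:, 0, :].argmax(axis=-1)           # previous class per sequence
    nxt = (prev + 1 + h[:, 0].astype(int)) % n_features  # toy rule that depends on the state
    yhat = np.eye(n_features)[nxt][:, np.newaxis, :]     # one-hot, shape (batch, 1, n_features)
    return yhat, h, c

def predict_batch(step_fn, h, c, n_steps, n_features):
    """Greedy decoding of every sequence in the batch at once."""
    batch = h.shape[0]
    target_seq = np.zeros((batch, 1, n_features))  # all-zero start input for each sequence
    outputs = []
    for _ in range(n_steps):
        yhat, h, c = step_fn(target_seq, h, c)
        outputs.append(yhat[:, 0, :])  # keep the batch axis instead of yhat[0, 0, :]
        target_seq = yhat              # feed each prediction back in
    return np.stack(outputs, axis=1)   # shape (batch, n_steps, n_features)

# two sequences decoded together
h = np.array([[0.0], [2.0]])
c = np.zeros((2, 1))
result = predict_batch(fake_decoder_step, h, c, n_steps=3, n_features=5)
print(result.argmax(axis=-1).tolist())  # [[1, 2, 3], [3, 1, 4]]
```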

Hi, I'm just wondering why we need to use to_categorical if the sequence is already numbers. In my case, I have a series of input features (numbers) and another series of output features (numbers). Should I still use the to_categorical method?

Good question, it will one hot encode the numbers:

https://keras.io/utils/#to_categorical
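In other words, to_categorical turns each integer into a vector of zeros with a single 1 at that integer's position, which is what a softmax classifier needs when the numbers are category labels. Here is a NumPy sketch of the same idea, plus the argmax inverse that the tutorial's one_hot_decode() performs; the sequence values are hypothetical. If your numbers are real-valued quantities rather than labels, one hot encoding does not apply and the problem is regression instead.

```python
import numpy as np

def one_hot_encode(sequence, n_classes):
    """Same idea as to_categorical(sequence, num_classes=n_classes) for a 1D sequence."""
    return np.eye(n_classes, dtype="float32")[np.asarray(sequence)]

def one_hot_decode(encoded):
    """Recover the integers by taking the position of the largest value in each row."""
    return [int(np.argmax(vector)) for vector in encoded]

seq = [3, 0, 2]
encoded = one_hot_encode(seq, n_classes=4)
print(encoded)
print(one_hot_decode(encoded))  # [3, 0, 2]
```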

Hi Jason,

I am working on a word boundary detection problem. The dataset contains .wav files, each with a spoken sentence, and for each .wav file there is a .wrd file listing the words spoken in the sentence and their boundaries (start and end).

Our task is to identify the word boundaries in a test .wav file (the spoken words will also be given).

I want to do this with sequence models.

What I have tried:

I have read the .wav files into NumPy arrays using the librosa module (padded to the maximum length).

The target output looks like 3333302222213333022221333302222133333 (for the input example: I am hero)

where 0: start of word, 1: end of word, 2: middle, 3: space.

That is, I want to solve this as a supervised learning problem. Can I train such a model with an RNN?

Sounds like a great project.

I don’t have examples of working with audio data sorry, I cannot give you good off the cuff advice.

Perhaps find some code bases on related modeling problems and use them for inspiration?

Hello.

I've tried this example for a word-level chatbot. Everything works great on a small dataset (5,000 sentences).

When I use a dataset of 50,000 sentences, something goes wrong. Model accuracy is 95%, but when I try to chat with the chatbot, the responses seem random. The chatbot is capable of learning sentences from the dataset, but it uses them randomly to respond to users' questions.

How is this possible when the accuracy is so high?

Thanks

Perhaps it memorized the training data? E.g. overfitting?

Sir, how can I create a confusion matrix, evaluate the model, and print the accuracy for this model:

```python
# Define an input sequence and process it.
encoder_inputs = Input(shape=(None, num_encoder_tokens))
encoder = LSTM(latent_dim, return_state=True)
encoder_outputs, state_h, state_c = encoder(encoder_inputs)
# We discard `encoder_outputs` and only keep the states.
encoder_states = [state_h, state_c]

# Set up the decoder, using `encoder_states` as initial state.
decoder_inputs = Input(shape=(None, num_decoder_tokens))
# We set up our decoder to return full output sequences,
# and to return internal states as well. We don't use the
# return states in the training model, but we will use them in inference.
decoder_lstm = LSTM(latent_dim, return_sequences=True, return_state=True)
decoder_outputs, _, _ = decoder_lstm(decoder_inputs,
                                     initial_state=encoder_states)
decoder_dense = Dense(num_decoder_tokens, activation='softmax')
decoder_outputs = decoder_dense(decoder_outputs)

# Define the model that will turn
# `encoder_input_data` & `decoder_input_data` into `decoder_target_data`
model = Model([encoder_inputs, decoder_inputs], decoder_outputs)

model.compile(optimizer='rmsprop', loss='categorical_crossentropy', metrics=['accuracy'])
model.fit([encoder_input_data, decoder_input_data], decoder_target_data,
          batch_size=batch_size,
          epochs=epochs,
          validation_split=0.2)
model.summary()
```

Here is information on how to calculate a confusion matrix:

https://machinelearningmastery.com/confusion-matrix-machine-learning/
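For a sequence model like this, one way to do it (an assumption, not the only option) is to treat every output time step as a separate classification. Take argmax over the last axis of both the model.predict() output and decoder_target_data, flatten them, and count (true, predicted) pairs. Here is a NumPy sketch with small hypothetical arrays standing in for those two arrays; scikit-learn's confusion_matrix would give the same counts. Padding steps would be counted too unless you mask them out first.

```python
import numpy as np

def sequence_confusion_matrix(y_true, y_pred, n_classes):
    """Confusion matrix and accuracy over all time steps.
    y_true, y_pred: arrays of shape (samples, timesteps, n_classes).
    Rows are true classes, columns are predicted classes."""
    true_idx = y_true.argmax(axis=-1).ravel()
    pred_idx = y_pred.argmax(axis=-1).ravel()
    matrix = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(matrix, (true_idx, pred_idx), 1)  # count each (true, predicted) pair
    accuracy = np.trace(matrix) / matrix.sum()
    return matrix, accuracy

# hypothetical data: 2 samples x 3 time steps x 3 classes
eye = np.eye(3)
y_true = eye[np.array([[0, 1, 2], [2, 1, 0]])]
y_pred = eye[np.array([[0, 1, 1], [2, 1, 0]])] * 0.9 + 0.05  # softmax-like outputs
matrix, accuracy = sequence_confusion_matrix(y_true, y_pred, n_classes=3)
print(matrix)
print(accuracy)
```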

Hi Jason,

Thank you for this awesome tutorial, it is so useful. I have one simple question: is there any specific reason to use 50+1 as n_features?

Please advise

The +1 is to leave room for the “0” value, for “no data”.
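To make that concrete: the tutorial's random integers are drawn from 1 to 50, and 0 is kept for the "no data" case, which the tutorial also uses as the first decoder input. One hot encoding needs one column per possible value, including 0. So the width must be max value + 1 = 51; with only 50 columns, the value 50 has no column and indexing fails with an IndexError. Here is a NumPy sketch with hypothetical values. For your own data, the same rule suggests computing the cardinality as the largest value in the whole dataset plus 1.

```python
import numpy as np

values = [1, 17, 50, 3]        # values drawn from 1..50, as in the tutorial
cardinality = max(values) + 1  # 51: one column for every value 0..50

encoded = np.eye(cardinality)[np.asarray(values)]
print(encoded.shape)  # (4, 51)

try:
    np.eye(50)[np.asarray(values)]  # only columns 0..49, so value 50 is out of range
except IndexError as err:
    print("IndexError:", err)
```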

Hi Jason,

Thanks for all the wonderful tutorials, great work.

I have two questions:

1 – In time series prediction, does multivariate give better results than univariate?

E.g., for the "Beijing PM2.5 Data Set" we have multivariate data. Will the multivariate model give better results, or will a univariate model using only the pollution data give better results?

2 – Which is better for time series prediction, an encoder-decoder or a normal RNN?

For both questions, it depends on the specific problem.

Hi Jason, here it looks like there is one time step in the model. What do I have to change to add more time steps to the model?

```python
from keras.models import Model
from keras.layers import Input, LSTM, Dense

# returns train, inference_encoder and inference_decoder models
def define_models(n_input, n_output, n_units):
    # define training encoder
    encoder_inputs = Input(shape=(None, n_input))
    encoder = LSTM(n_units, return_state=True)
    encoder_outputs, state_h, state_c = encoder(encoder_inputs)
    encoder_states = [state_h, state_c]
    # define training decoder
    decoder_inputs = Input(shape=(None, n_output))
    decoder_lstm = LSTM(n_units, return_sequences=True, return_state=True)
    decoder_outputs, _, _ = decoder_lstm(decoder_inputs, initial_state=encoder_states)
    decoder_dense = Dense(n_output, activation='softmax')
    decoder_outputs = decoder_dense(decoder_outputs)
    model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
    # define inference encoder
    encoder_model = Model(encoder_inputs, encoder_states)
    # define inference decoder
    decoder_state_input_h = Input(shape=(n_units,))
    decoder_state_input_c = Input(shape=(n_units,))
    decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
    decoder_outputs, state_h, state_c = decoder_lstm(decoder_inputs, initial_state=decoder_states_inputs)
    decoder_states = [state_h, state_c]
    decoder_outputs = decoder_dense(decoder_outputs)
    decoder_model = Model([decoder_inputs] + decoder_states_inputs, [decoder_outputs] + decoder_states)
    # return all models
    return model, encoder_model, decoder_model
```

Hi Jason,

Thank you very much! I would like to ask a question that may seem silly… When training the model, we store the weights in the training model. Are the inference encoder and inference decoder empty? At the prediction stage, the training model is not used, so how are the training model's weights used to predict?

Looking forward to your reply. Thank you!

No silly questions here!

The state of the model is reset at the end of each batch.

Get it. Thank you a lot!

Glad to hear it.

Can you kindly explain this a bit more. I don’t seem to understand how model.fit relates to inference_encoder/decoder. Thank you!

(great post, btw) I could also use a bit more. Here is my misunderstanding: three models were instantiated (train, infenc, infdec). One was trained (train) and developed weights that (hopefully) would generate accurate predictions.

Then the other two models are used to test the predictive power, but I do not understand where the weights from train were passed and transferred to infenc and infdec, respectively (and I suspect I am fundamentally missing something about the Keras infrastructure that explains this).

Same question here, I was wondering how the weights from train are used in infenc.

Good question.

I recommend reviewing the define_models() function, you can see that model weights are shared between the models – but each model provides a different use case.

Can the model described in this blog post be used with variable-length input? How?

And also with variable-length output?

Yes, it processes time steps one at a time, therefore supports variable length by default.

Hi Jason, how would you implement this model if you have time series data with a 3D shape (samples, timesteps, features) and cannot, or do not want to, one hot encode it? Thank you in advance.

You can integer encode the inputs and use an embedding layer on the input.

What would that look like? And can I do it only in the inference part later? Sorry if my questions sound a bit stupid; I am trying to understand the topics.

I provide a ton more help on preparing data for LSTMs here:

https://machinelearningmastery.com/faq/single-faq/how-do-i-prepare-my-data-for-an-lstm

Hi Jason,

thank you for sharing your code. I used it for my own problem and it works, but I still get good prediction results on values that are far away from the ones used in training. What could my problem be? I did a regression using mse as the loss; do I need a different loss function?

I have some suggestions for improving model skill here that may give you some ideas:

https://machinelearningmastery.com/improve-deep-learning-performance/

Hi Jason, great contribution! When I use time series data for this model, can I use a target y that is not the shifted case, i.e. model.fit([input, output], output) with output=input.reversed()? Would that make sense as well? I want to use sliding windows for the input and output, and shifting the output by one would not make sense in my eyes.

Not sure I follow sorry.

Perhaps this post will make things clearer:

https://machinelearningmastery.com/convert-time-series-supervised-learning-problem-python/

Let's say I trained with shape (1000, 20, 1) but I want to predict with (20000, 20, 1). Then this would not work, because the sample size is bigger. How do I have to adjust this?

```python
output = list()
for t in range(2):
    output_tokens, h, c = decoder_model.predict([target_seq] + states_values)
    output.append(output_tokens[0, 0, :])
    states_values = [h, c]
    target_seq = output_tokens
```

Why would it not work?

It says: index 1000 is out of bounds for axis 0 with size 1000.

So the second one needs to have the same length along that axis. But I will not train with the same amount of data that I am predicting on. How can this be solved?

Perhaps double check your data.

Still not working, Jason. Unfortunately, it seems like variable sequence length is not possible? I trained my model on part of the data and wanted to use the whole data for inference.

Correction: do I have to pad my training data with zeros at the beginning, since I want to use the last state of the encoder as the initial state?

I have 2 questions:

1- This example is using teacher forcing, right? I’m trying to re-implement it without teacher forcing but it fails. When I define the train model as (line 49 of your example): model = Model(encoder_inputs, decoder_outputs), it gives me this error: RuntimeError: Graph disconnected: cannot obtain value for tensor Tensor(“decoder_inputs:0”, shape=(?, n_units, n_output_features), dtype=float32) at layer “decoder_inputs”. The following previous layers were accessed without issue: [‘encoder_inputs’, ‘encoder_LSTM’]

2- Can you please explain a bit more about how you defined the decoder model? I don't get what is happening in lines 52 to 60 of the code (copied below). Why do you need to re-define decoder_outputs? How is the model defined?

```python
# define inference decoder
decoder_state_input_h = Input(shape=(n_units,))
decoder_state_input_c = Input(shape=(n_units,))
decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
decoder_outputs, state_h, state_c = decoder_lstm(decoder_inputs, initial_state=decoder_states_inputs)
decoder_states = [state_h, state_c]
decoder_outputs = decoder_dense(decoder_outputs)
decoder_model = Model([decoder_inputs] + decoder_states_inputs, [decoder_outputs] + decoder_states)
# return all models
```

Correct. If performance is poor without teacher forcing, then don’t train without it. I’m not sure why you would want to remove it?!?

The decoder is a new model that uses parts of the encoder. Does that help?

Hi Jason, I looked at different pages and found that seq2seq is not possible with variable-length data without padding it with zeros, etc. So in your example, is it right that at inference you can only use data with shape (100000, timesteps, features), i.e. no variable length? If not, how can you change that? Thanks a lot for responding!

Generally, variable-length inputs can be truncated or padded, and a masking layer can be used to ignore the padding.

Alternatively, the above model can be used one time step at a time, allowing truly multivariate inputs.
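For the padding route, here is a minimal sketch. Every sequence is padded or truncated to the same number of time steps, with zeros at the front so the real values sit next to the encoder's final state. Keras provides pad_sequences for this. The NumPy function below is a hypothetical stand-in that mimics its default "pre" padding and truncation for 2D (timesteps, features) sequences. A Masking(mask_value=0.0) layer in front of the LSTM can then tell it to skip the all-zero steps, provided 0 is never a real value in your data.

```python
import numpy as np

def pad_pre(sequences, maxlen):
    """Left-pad (and left-truncate) variable-length (timesteps, features) arrays
    with zeros to a single array of shape (samples, maxlen, features)."""
    n_features = sequences[0].shape[1]
    out = np.zeros((len(sequences), maxlen, n_features))
    for i, seq in enumerate(sequences):
        seq = seq[-maxlen:]               # keep the most recent steps if too long
        out[i, maxlen - len(seq):] = seq  # zeros first, real values at the end
    return out

seqs = [np.ones((2, 3)), 2 * np.ones((4, 3)), 3 * np.ones((5, 3))]
padded = pad_pre(seqs, maxlen=4)
print(padded.shape)  # (3, 4, 3)
```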

Nice explanation. One last question: my time steps would be the same in all cases, just the number of samples would differ. The Keras pad_sequences function only pads time steps, or am I wrong? In that case I would need to pad my samples.

Good question. Only the time steps and feature dimensions are fixed, the number of samples can vary.

Jason,

These are really awesome little tutorials and you do a great job explaining them.

Just as an FYI, to help out other readers, I had to use tensorflow v1.7 to get this to run with Keras 2.2.0. With older versions I got a couple of errors while initializing the models and with the newer v1.8 I got some session errors with TF.

Thanks for the tip.

Hello Jason, your work has always been awesome. I have learned a lot from your tutorials.

I am trying a simple encoder-decoder model following your example but I am facing a problem.

My issue is the vocabulary size, which is around 5600. This makes my one hot encoding really big and freezes my PC. Can you give a simple example in which I don't need to one hot encode my data? I know about sparse_categorical_crossentropy, but I am having trouble implementing it. Maybe my approach is wrong. Your guidance can help me a lot.

Thanks once again for such great tutorials…..

Perhaps try a word embedding instead?

Or, perhaps try training on an EC2 instance?
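On sparse_categorical_crossentropy: it computes the same loss as categorical_crossentropy but takes integer class indices as targets instead of one-hot vectors. The target array therefore stays (samples, timesteps) instead of growing to (samples, timesteps, 5600). Here is a NumPy sketch of the equivalence with a small hypothetical vocabulary. In Keras you would keep the softmax output layer and pass the integer targets directly; depending on the Keras version, they may need a trailing axis of size 1.

```python
import numpy as np

vocab_size = 6  # hypothetical; around 5600 in the question above
rng = np.random.default_rng(3)

# hypothetical softmax outputs: 2 samples x 4 time steps x vocab_size
logits = rng.normal(size=(2, 4, vocab_size))
probs = np.exp(logits) / np.exp(logits).sum(axis=-1, keepdims=True)

int_targets = rng.integers(0, vocab_size, size=(2, 4))  # (samples, timesteps)
one_hot_targets = np.eye(vocab_size)[int_targets]       # (samples, timesteps, vocab_size)

# categorical cross entropy needs the one-hot targets
categorical = -(one_hot_targets * np.log(probs)).sum(axis=-1).mean()
# sparse categorical cross entropy picks the probability of the true index directly
sparse = -np.log(np.take_along_axis(probs, int_targets[..., None], axis=-1)).mean()

print(np.isclose(categorical, sparse))          # True
print(int_targets.nbytes, one_hot_targets.nbytes)  # integer targets take far less memory
```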

Hi Jason

Why do I get the shapes ((100000, 1, 6, 51), (100000, 1, 3, 51), (100000, 1, 3, 51)) when I run your code? Do I need to reshape, or did I not copy it correctly?

The tutorial shows that you get ((100000, 6, 51), (100000, 3, 51), (100000, 3, 51)).

Is your version of Keras up to date?

Did you copy all of the code exactly?

Hi Jason, the version is different. I added this:

```python
X1 = np.squeeze(array(X1), axis=1)
X2 = np.squeeze(array(X2), axis=1)
y = np.squeeze(array(y), axis=1)
```

It's OK now. Is what you are doing called many-to-one encoding? And in the decoding case, is it called one-to-many?

Another question: I want to remove the one hot encoding. I want to encode the exact number and predict the exact number,

i.e. remove this step: src_encoded = to_categorical([source], num_classes=cardinality)

but I got a lot of errors.

I have advice on changing between regression/classification in keras:

https://machinelearningmastery.com/faq/single-faq/how-can-i-change-a-neural-network-from-regression-to-classification

Try removing the square brackets around source in the to_categorical argument, that is, try using

to_categorical(source, num_classes=cardinality) instead of

to_categorical([source], num_classes=cardinality).

+500

A bit annoying that this mishap is not fixed. My guess it is an intentional overlooking intended to enhance training value of the tutorial 🙂

A teaching method of sorts…

I think the API changed. I need to fix it…

Hi Jason,

Good explanations, and I appreciate your sharing! I wanted to ask about this line in the inference prediction:

for t in range(n_steps):

Could you also use a value other than n_steps, for example one more? Or why are you looping over n_steps? You would also get a result with for t in range(1), right? I hope you understand what I am trying to find out.

Sorry, I don't follow. Perhaps you can provide more details?

So in your code below, you are predicting in a for loop over n_steps. What does n_steps need to be? Can you also use 1? Or do you need n_steps from the data shape (batch, n_steps, features) as the time steps? When you call infdec.predict, isn't the model taking the whole target_seq for prediction at once? Why do you need n_steps?

```python
for t in range(n_steps):
    # predict next char
    yhat, h, c = infdec.predict([target_seq] + state)
    # store prediction
    output.append(yhat[0, 0, :])
    # update state
    state = [h, c]
    # update target sequence
    target_seq = yhat
return array(output)
```

Sure, you can set it to 1.

and what is the benefit of using 1 or a higher iteration number ?

For multi-step forecasts.

In define_models(), there are three models: model, encoder_model, and decoder_model. What is the function of encoder_model and decoder_model? Can I use only "model" for prediction? Looking forward to your reply.

You can learn more about the architecture here:

https://machinelearningmastery.com/encoder-decoder-long-short-term-memory-networks/

How do we use Normalized Discounted Cumulative Rate (NDCR) for evaluating the model?

Is it necessary to use NDCR or we can live with accuracy as a performance metric?

What is NDCR exactly? I’ve never heard of it.

Hi, thank you for your great description. Why didn't you use the "rmsprop" optimizer?

I find I get great results from Adam, an extension of RMSProp:

https://machinelearningmastery.com/adam-optimization-algorithm-for-deep-learning/

Hi,

I added an embedding matrix to your code and received an error related to matrix dimensions, the same as what Luke mentioned in the previous comments. Do you have any post on adding an embedding matrix to an encoder-decoder?

I have some suggestions here:

https://machinelearningmastery.com/faq/single-faq/why-does-the-code-in-the-tutorial-not-work-for-me

Hi Jason,

I have a low-level question. Why did you train the model for only one epoch? Wouldn't it be better to choose a higher number?

Because we have a very large number of random samples.

Perhaps re-read the tutorial?

Thank you, Jason. How can we save the results of our model to use them later on?

See this tutorial:

https://machinelearningmastery.com/save-load-keras-deep-learning-models/

In your code, I suppose the 3 models should be saved (i.e., the 3 returned by define_models):

train, infenc, infdec = define_models(n_features, n_features, 128)

Yes, perhaps try using the save() function to save each.

Hi Jason, I was using your code to solve a regression problem. I have the data defined exactly like you have but instead of casting them to one-hot-vectors I wish to leave them integers or floats.

I modified the code as follows:

```python
from keras.models import Model
from keras.layers import Input, LSTM, Dense

def define_models(n_input, n_output, n_units):
    # define training encoder
    encoder_inputs = Input(shape=(None, n_input))
    encoder = LSTM(n_units, return_state=True)
    encoder_outputs, state_h, state_c = encoder(encoder_inputs)
    encoder_states = [state_h, state_c]
    # define training decoder
    decoder_inputs = Input(shape=(None, n_output))
    decoder_lstm = LSTM(n_units, return_sequences=True, return_state=True)
    decoder_outputs, _, _ = decoder_lstm(decoder_inputs, initial_state=encoder_states)
    decoder_dense = Dense(n_output, activation='relu')
    decoder_outputs = decoder_dense(decoder_outputs)
    model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
    # define inference encoder
    encoder_model = Model(encoder_inputs, encoder_states)
    # define inference decoder
    decoder_state_input_h = Input(shape=(n_units,))
    decoder_state_input_c = Input(shape=(n_units,))
    decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
    decoder_outputs, state_h, state_c = decoder_lstm(decoder_inputs, initial_state=decoder_states_inputs)
    decoder_states = [state_h, state_c]
    decoder_outputs = decoder_dense(decoder_outputs)
    decoder_model = Model([decoder_inputs] + decoder_states_inputs, [decoder_outputs] + decoder_states)
    # return all models
    return model, encoder_model, decoder_model
```

However, the model does not learn to predict more than one future time step; it gives a correct prediction only one step ahead. Could you please suggest a solution? Many thanks!

I then make the prediction as follows:

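```python
import numpy as np

def predict_sequence(infenc, infdec, source, n_steps):
    # encode the source to get the initial decoder state
    state = infenc.predict(source)
    output = list()
    # start input: a single zero value, shape (1, 1, 1)
    pred = np.array([0]).reshape(1, 1, 1)
    for t in range(n_steps):
        yhat, h, c = infdec.predict([pred] + state)
        output.append(yhat[0, 0, 0])
        state = [h, c]
        pred = yhat
    return np.array(output)
```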

I have some ideas here:

https://machinelearningmastery.com/improve-deep-learning-performance/

Hello Khan, I have a similar problem: the network does not predict more than one step ahead. Did you find a solution?

Hi Jason, thanks for the great series about ML.

I find this tutorial a bit complex (I'm a beginner in Python and know only a little AI and ML theory).

The y set (target sequence) is part of X1. Clearly, y is determined by X (a reversed subset). So:

1. In the training model, have you used y in X1 as the input sequence (sequence X1(y) => sequence y)?

2. Can I define y separately from X1 (e.g. not as a reversed subset)?

Thank you!

Not sure I follow. Perhaps this will help you define your problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/
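For the first question, it may help to see how the tutorial's contrived problem links the two: the source sequence (X1) is random integers, and the target (y) is simply the first few source values in reverse order. A minimal sketch with plain lists, assuming a cardinality of 51 and a hypothetical helper name `make_target`:

```python
from random import randint

def make_target(source, n_out):
    """Target (y) as in the tutorial's problem: the first n_out source values, reversed."""
    return list(reversed(source[:n_out]))

# Source (X1): random integers in [1, cardinality - 1]; 0 is kept free as a start value
cardinality = 51
source = [randint(1, cardinality - 1) for _ in range(6)]
target = make_target(source, 3)

print(make_target([22, 17, 23, 5, 29, 11], 3))  # [23, 17, 22]
```

For the second question: the model itself never requires y to be derived from X1. Training only sees paired arrays, so any target sequence can be paired with a source, provided it is encoded in the shape the decoder expects.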

Hi sir,

I want to generate sequences from pretrained embeddings. In detail, I have a list of embeddings and their corresponding sentences, and I want a model that generates a sentence from the embedding data, but I don’t know how to develop that model.

You can use a model like an LSTM to generate text, but not an embedding alone.

Hi Jason,

Great work again! I am a big fan of your tutorials!

I am working on a water inflow forecast problem. I have access to weather predictions and I want to predict the water inflow, given the historical values of both weather and inflows.

I have tried a simple LSTM model with overlapping sequences (time series to supervised), taking weather predictions and past inflows as input, and outputting future inflows. This works pretty well.

Seeing the current trend of Seq2Seq models for time series forecasting, I have also tried one, encoding the weather forecasts and decoding them into water inflows (with past inflows as decoder inputs), expecting even better results.

But this Seq2Seq model is performing very poorly. Do you have an idea why? Should I give up on this kind of model for my problem?

Perhaps try improving the performance of the model:

https://machinelearningmastery.com/improve-deep-learning-performance/

Hi Jason,

I have read a couple of your blog posts about seq2seq and LSTMs. I am wondering how I should combine this encoder-decoder model with attention. My scenario is similar to translation.

Sure, attention often improves the performance of an encoder-decoder model.
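One detail worth noting: attention needs the encoder's output at every input step, whereas the encoder in this tutorial passes only its final states to the decoder, so the encoder LSTM would need `return_sequences=True`. The sketch below shows dot-product (Luong-style) attention for a single decoder step in NumPy, using random made-up arrays. It is one common variant, not the only one. The resulting context vector is typically combined with the decoder output (for example, concatenated) before the final Dense layer.

```python
import numpy as np

def dot_product_attention(decoder_state, encoder_outputs):
    """Dot-product attention for one decoder step.

    decoder_state:   shape (units,)
    encoder_outputs: shape (timesteps, units), one hidden state per input step
    """
    scores = encoder_outputs @ decoder_state          # (timesteps,) alignment scores
    scores = scores - scores.max()                    # numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()   # softmax over input steps
    context = weights @ encoder_outputs               # (units,) weighted sum of encoder outputs
    return context, weights

rng = np.random.default_rng(1)
enc = rng.normal(size=(5, 4))   # 5 input steps, 4 units
dec = rng.normal(size=4)        # current decoder state
context, weights = dot_product_attention(dec, enc)
print(weights.round(3), context.shape)
```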

Hey Jason, I have 2 questions:

Question 1: How do you estimate a “confidence” for a whole sequence prediction in a neural translation model? I guess you could multiply the individual probabilities for each time step, but I’m not sure that is a proper way to do it. Another approach is to feed the output sequence probabilities into a binary classifier (confident/not confident) and take its value as the confidence score.

Question 2: How do you ensure that the whole sequence prediction in a neural translation model is the optimal sequence? If you take a greedy approach where you take the max at each time step, you end up with a sequence that is itself probably not the most likely one. I’ve heard of beam search and other approaches. Do you know what the state of the art is?

You can do a greedy search through the predicted probabilities to get the most likely word at each step, but it does not mean that it is the best sequence.

Perhaps look into a beam search.
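Multiplying the per-step probabilities is how the probability of a whole sequence factorizes in an autoregressive decoder. In practice, the log-probabilities are usually summed instead to avoid numerical underflow. The sketch below scores sequences that way and contrasts greedy decoding with a basic beam search. It uses a hypothetical two-step toy decoder whose next-token probabilities depend on the previous token (`next_probs` and its numbers are made up for illustration). Greedy decoding commits to the locally best first token and ends with a less likely sequence than beam search with a beam width of 2.

```python
import numpy as np

# Hypothetical toy "decoder": next-token probabilities depend on the previous token
FIRST = np.array([0.5, 0.4, 0.1])
AFTER = {0: np.array([0.4, 0.3, 0.3]),
         1: np.array([0.9, 0.05, 0.05]),
         2: np.array([0.4, 0.3, 0.3])}

def next_probs(prefix):
    return FIRST if not prefix else AFTER[prefix[-1]]

def greedy_decode(n_steps):
    seq, log_p = [], 0.0
    for _ in range(n_steps):
        p = next_probs(seq)
        tok = int(np.argmax(p))            # take the most likely token at each step
        seq.append(tok)
        log_p += np.log(p[tok])            # sequence score = sum of log-probabilities
    return seq, log_p

def beam_search(n_steps, k):
    beams = [([], 0.0)]                    # (sequence, summed log-probability)
    for _ in range(n_steps):
        candidates = []
        for seq, log_p in beams:
            p = next_probs(seq)
            for tok in range(len(p)):
                candidates.append((seq + [tok], log_p + np.log(p[tok])))
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = candidates[:k]             # keep only the k best partial sequences
    return beams[0]

g_seq, g_lp = greedy_decode(2)
b_seq, b_lp = beam_search(2, k=2)
print(g_seq, round(float(np.exp(g_lp)), 2))  # [0, 0] 0.2
print(b_seq, round(float(np.exp(b_lp)), 2))  # [1, 0] 0.36
```

For a confidence score, the summed log-probability is often divided by the sequence length so that longer outputs are not penalized simply for having more terms.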

Hi Luke,

I am dealing with the same scenario of sequences of integers.

Before you input the sequences, you need to reshape them to 3D. It is also best to train the model in mini-batches, as the states will be reset after every iteration, which works really well for LSTM-based models.

Hope that helps.
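Keras LSTM layers expect input shaped [samples, timesteps, features]. A minimal NumPy sketch of the two usual ways to get there from integer sequences, using made-up data and an assumed cardinality of 10: keep the raw integer as a single feature, or one-hot encode it as this tutorial does.

```python
import numpy as np

# Hypothetical data: 4 integer sequences of length 5
sequences = np.array([[3, 1, 4, 1, 5],
                      [9, 2, 6, 5, 3],
                      [5, 8, 9, 7, 9],
                      [3, 2, 3, 8, 4]])

# Option 1: one feature per time step (the raw integer value)
X_raw = sequences.reshape((sequences.shape[0], sequences.shape[1], 1))

# Option 2: one-hot encode each integer; cardinality of 10 is an assumption here
cardinality = 10
X_onehot = np.eye(cardinality)[sequences]

print(X_raw.shape)     # (4, 5, 1)
print(X_onehot.shape)  # (4, 5, 10)
```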

Why not apply TimeDistributed to the Decoder as in https://machinelearningmastery.com/develop-neural-machine-translation-system-keras/ ?

In this case we have a dynamic one-step model, so it’s not needed.
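A related point: in Keras 2, a Dense layer that receives a 3D input computes its dot product along the last axis, reusing the same weights at every time step. That per-step behaviour is the same one TimeDistributed provides. The NumPy sketch below mirrors the math with random made-up arrays; it is an illustration, not Keras code.

```python
import numpy as np

rng = np.random.default_rng(0)
batch, timesteps, units, n_output = 2, 3, 4, 5
h = rng.normal(size=(batch, timesteps, units))  # e.g. decoder LSTM outputs with return_sequences=True
W = rng.normal(size=(units, n_output))          # Dense kernel
b = rng.normal(size=n_output)                   # Dense bias

# Dense applied to the whole 3D tensor: contracts only the last axis
dense_3d = h @ W + b

# The same weights applied to each time step separately (what TimeDistributed does)
per_step = np.stack([h[:, t, :] @ W + b for t in range(timesteps)], axis=1)

print(np.allclose(dense_3d, per_step))  # True
```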

Hi Jason !

Can this model be used to summarize sentences? I am working on an abstractive summarizer and trying to build one using an encoder-decoder with attention.

Can you help me out?

I have a number of posts on summarization:

https://machinelearningmastery.com/?s=text+summarization&post_type=post&submit=Search

Thank you for the great tutorial!! There is one thing I don’t understand, hopefully you can clarify:

We train a model as:

train, infenc, infdec = define_models(n_features, n_features, 128)
train.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['acc'])
train.fit([X1, X2], y, epochs=1)

However, the inference encoder/decoder models (infenc and infdec) still seem to be untrained.

I don’t understand how, where, or when the information (weights, states, etc.) from the “train model” is transferred to the inference models.

At prediction time, you said that:

“During prediction, the inference_encoder model is used to encode the input sequence once which returns states that are used to initialize the inference_decoder model. From that point, the inference_decoder model is used to generate predictions step by step.”

But aren’t the inference_encoder and inference_decoder untrained?

The weights are trained; it is just that we have two references to the same weights so we can use them in different ways (training/inference).
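A plain-Python analogy (not Keras code) of what the reply describes: the training and inference models are built from the same layer objects (for example, `decoder_lstm` and `decoder_dense` are called in both), so they hold references to the same weights rather than copies. The `SharedLayer` class below is a hypothetical stand-in for a Keras layer, and the in-place weight change stands in for training.

```python
import numpy as np

class SharedLayer:
    """Hypothetical stand-in for a Keras layer: it owns one weight matrix."""
    def __init__(self, n_in, n_out):
        self.W = np.zeros((n_in, n_out))

    def __call__(self, x):
        return x @ self.W

layer = SharedLayer(2, 1)

# Two "models" that call the same layer object, like `train` and `infdec` reusing decoder_dense
def train_model(x):
    return layer(x)

def inference_model(x):
    return layer(x)

# "Training" updates the weights in place...
layer.W += np.array([[1.0], [2.0]])

# ...and both models see the update, because they share one set of weights
x = np.array([[1.0, 1.0]])
print(train_model(x), inference_model(x))  # [[3.]] [[3.]]
```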

Hi Jason,

Is it possible to apply BatchNormalization with the above code?

Sure.

Here are some examples of using batchnorm that you can adapt:

https://machinelearningmastery.com/how-to-accelerate-learning-of-deep-neural-networks-with-batch-normalization/

Hi Jason,

Since the above code doesn’t use the Keras Sequential model, it doesn’t allow a BatchNormalization layer to be added directly to the model.

Could you please help with applying BN in the code above?

You can add it to the model using the functional API:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Thanks Jason. Your posts are really helpful !

Thanks Jason!

I’m glad it helped.

Hi Jason,

I just wonder why you set “epochs” to 1. Is it just because the accuracy already reaches 100%?

If the accuracy were not 100% with “epochs” equal to 1, should I switch to a different number of epochs to increase accuracy?

Thank you in advance!

Kyu

Not quite, it is because we defined a very large number of random examples that acted like a proxy for epochs.

You could use fewer examples and more epochs to achieve the same effect.
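The arithmetic behind the reply: what matters most here is the number of weight updates, which is the number of batches per epoch times the number of epochs. A sketch assuming the Keras default `batch_size` of 32 and, as an example, 100,000 training examples:

```python
import math

def n_updates(n_samples, epochs, batch_size=32):
    """Weight updates in training: batches per epoch times epochs (Keras' default batch_size is 32)."""
    return math.ceil(n_samples / batch_size) * epochs

print(n_updates(100_000, epochs=1))  # 3125
print(n_updates(10_000, epochs=10))  # 3130
```

The two setups give a comparable number of updates. They are not identical, though: fresh random examples never repeat, while extra epochs revisit the same examples and can overfit sooner.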

Hi Jason,

Nice post as always, I enjoy reading your blog. I have one question. I might have missed something, but why do we need the shifted target sequence as input to the decoder? And one more question: why do we need to shift it? It would be nice if you could clarify that for me. Thanks in advance 🙂

Cheers,

Tobs

To transform it into a supervised learning problem, for example, see this:

https://machinelearningmastery.com/convert-time-series-supervised-learning-problem-python/
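In this tutorial the shift sets up teacher forcing: at each step the decoder receives the true value from the previous step and is trained to predict the current one. Shifting the target right by one step and prepending a start value (0 here) produces exactly that input. A minimal sketch with a hypothetical helper name and a made-up target:

```python
def decoder_input_from_target(target, start=0):
    """Shift the target right by one step and prepend a start value (teacher forcing input)."""
    return [start] + list(target[:-1])

target = [23, 17, 22]
target_in = decoder_input_from_target(target)
print(target_in)  # [0, 23, 17]

# At step t the decoder sees target_in[t] (the true value from step t-1) and must predict target[t]
for t, (x, y) in enumerate(zip(target_in, target)):
    print(f"step {t}: input {x} -> predict {y}")
```

At inference time, the true previous value is not available, so the decoder's own prediction from the previous step is fed back in its place.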

Hi Jason,

Could you please help with a GRU-based inference model for an autoencoder?

/Ashima

You can use the LSTM autoencoder in this tutorial and change the layers to GRU:

https://machinelearningmastery.com/lstm-autoencoders/

Thanks Jason

You’re welcome.

Hi Jason,

Thank you very much for your blog and its excellent content. Regarding this post, it seems that encoder_outputs is not used. Why don’t we use this output? Could we put an auto-decoder on top of it to reproduce input1? Would this help the network predict the sequences?

Bests

Mostafa

It is used – the encoder output is connected to the decoder.

Hi Jason, I see the encoder_outputs is not used at all. Any purpose capturing it in a variable? I see only the states being propagated to the decoder.

Not really.

Hi Jason, thanks for the tutorial! I want to add BatchNormalization and Dropout layers after the decoder LSTM layer. Do you have any idea how I can do it?

Regards,

Gunay

Be careful with dropout on LSTMs; use LSTM-specific dropout:

https://machinelearningmastery.com/how-to-reduce-overfitting-with-dropout-regularization-in-keras/

Also, batch norm and dropout can interact, so be careful not to use them side by side; always test.

Hi Jason,

Thank you so much for your exciting blog. It’s great and I have a question.

What if we have more than one input series that are related to each other and want to predict the result?

For example, predicting the weather, where we have more than one feature and series?

You can have parallel input features or have a multi-input model.

I show how to do both here:

https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/
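As a rough sketch of the "parallel input features" option: related series are stacked as columns and then cut into windows, giving the 3D [samples, timesteps, features] shape an LSTM expects. The helper name and the weather-like numbers below are hypothetical. This version predicts the next value of every series; predicting a single target series is an equally valid framing.

```python
import numpy as np

def split_multivariate(series_list, n_steps):
    """Stack parallel series as features and cut them into input windows.

    Returns X with shape [samples, n_steps, n_features] and y holding the
    next value of every series, shape [samples, n_features].
    """
    data = np.column_stack(series_list)  # [time, n_features]
    X, y = [], []
    for i in range(len(data) - n_steps):
        X.append(data[i:i + n_steps])    # n_steps rows of all features
        y.append(data[i + n_steps])      # the following row
    return np.array(X), np.array(y)

# Hypothetical weather-like inputs
temperature = [20, 21, 23, 22, 24, 25]
humidity = [60, 62, 61, 65, 63, 64]
X, y = split_multivariate([temperature, humidity], n_steps=3)
print(X.shape, y.shape)  # (3, 3, 2) (3, 2)
```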

Great, thanks a lot

Hi Jason,

Thanks for the great post.

I need to ask a question that you may think is very silly.

I have a sequence of integers indicating the locations visited by some user xyz with user type “category1”. I have many users, each belonging to one of the categories, and each user has visited some locations.

Given a sequence of some user, I want to predict on which location that user will go next. Also, I want to predict it by taking into consideration the user type/category.

The question is, how can we use the above post for the stated problem since in the post, the values are predicted in reverse order only.

Perhaps there are multiple ways to frame the problem. I’d encourage you to brainstorm and then test a few to see what works.

It sounds like a time series classification problem. I think some of the tutorials here will give you ideas:

https://machinelearningmastery.com/start-here/#deep_learning_time_series
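One possible framing, among several worth testing: slide a window over each user's visit history and predict the single next location (a classification over location ids), carrying the user category along as an extra input. In Keras, that extra input could become a second input of a multi-input model or an extra feature per time step. A minimal sketch with a hypothetical helper and made-up ids:

```python
def make_next_location_samples(visits, category, n_steps):
    """Turn one user's visit history into ((window, category), next_location) samples.

    visits:   list of integer location ids in visit order
    category: integer id of the user's type, repeated for every sample
    """
    samples = []
    for i in range(len(visits) - n_steps):
        window = visits[i:i + n_steps]
        samples.append(((window, category), visits[i + n_steps]))
    return samples

samples = make_next_location_samples([4, 7, 2, 7, 9], category=1, n_steps=3)
print(samples)  # [(([4, 7, 2], 1), 7), (([7, 2, 7], 1), 9)]
```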

@Jason, while giving free tips, keep in mind that code should work in the real world on large datasets. to_categorical is the worst thing to use, because it does not keep the memory footprint low. Hence, I would recommend re-editing this post to use “sparse_softmax_cross_entropy_with_logits” as a proxy for “categorical_cross_entropy”. Seq2seq models are generally trained on large-vocabulary datasets, and your examples sadly don’t work for those; one has to go elsewhere for a solution. BTW, your posts are intuitive.

Thanks for the suggestion.
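The commenter's point can be checked numerically: sparse categorical cross-entropy with integer labels gives the same loss as categorical cross-entropy with one-hot labels, without materializing the one-hot matrix. In Keras, the integer-label version is available as `loss='sparse_categorical_crossentropy'`, and `tf.nn.sparse_softmax_cross_entropy_with_logits` is the lower-level TensorFlow op, which expects unnormalized logits. A NumPy sketch with made-up logits and labels:

```python
import numpy as np

def softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
n_samples, vocab_size = 4, 10
logits = rng.normal(size=(n_samples, vocab_size))
labels = np.array([3, 0, 9, 5])                  # integer targets: n_samples values

probs = softmax(logits)
one_hot = np.eye(vocab_size)[labels]             # one-hot targets: n_samples * vocab_size values

categorical = -(one_hot * np.log(probs)).sum(axis=1)      # needs the one-hot matrix
sparse = -np.log(probs[np.arange(n_samples), labels])     # indexes the true class directly

print(np.allclose(categorical, sparse))  # True
print(labels.nbytes, one_hot.nbytes)     # the one-hot array grows with vocab_size
```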

Hi Jason, I am experimenting with machine translation and going through all your articles.

This post is an implementation of “Encoder-Decoder Model for Sequence-to-Sequence Prediction in Keras” with some sophisticated code in place.

I have seen other posts of yours at

https://machinelearningmastery.com/develop-neural-machine-translation-system-keras/

and

https://machinelearningmastery.com/encoder-decoder-long-short-term-memory-networks/

which also introduce Encoder-Decoder LSTMs for sequence-to-sequence prediction, with very simple example Python code:

model = Sequential()
model.add(LSTM(…, input_shape=(…)))
model.add(RepeatVector(…))
model.add(LSTM(…, return_sequences=True))
model.add(TimeDistributed(Dense(…)))

Can you please tell me the exact difference between these two? Is the latter one, with 5 simple lines of code, a better implementation of this sophisticated model using LSTMs? Or which is better?

I generally teach an approach to the encoder-decoder that uses an autoencoder architecture as it often gives the same or better results as the more advanced encoder-decoder.

More here:

https://machinelearningmastery.com/lstm-autoencoders/

So the one in this post isn’t an autoencoder but has teacher forcing in place. Can we have teacher forcing in autoencoder approaches too?

Correct.

Hi Jason, thank you for this tutorial!

I have a question about teacher forcing.

If I understand your example correctly, you are not using any validation set here (is that right?).

What if I add a validation split during fitting? Would the model use teacher forcing during the validation phase as well?

Would this choice be correct, or should the model use greedy or beam search during the validation to simulate a more realistic performance?

Thank you!

Not quite. I use walk forward validation.

You can learn more about teacher forcing here:

https://machinelearningmastery.com/teacher-forcing-for-recurrent-neural-networks/
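On the validation question: when `validation_split` or `validation_data` is passed to `fit()` on the training model, the decoder input at validation time is still the true shifted target, so the validation score is teacher-forced. Free-running performance can be measured instead by running the step-by-step inference loop on held-out data. The sketch below uses a hypothetical one-step decoder, `toy_step`, to show how the two evaluation modes diverge once an early mistake is fed back in:

```python
def decode(step_fn, target, teacher_forcing, start=0):
    """Run a one-step decoder for len(target) steps.

    teacher_forcing=True feeds the true previous value (as during training);
    False feeds the decoder's own previous prediction (as at inference).
    """
    prev, outputs = start, []
    for t in range(len(target)):
        yhat = step_fn(prev)
        outputs.append(yhat)
        prev = target[t] if teacher_forcing else yhat
    return outputs

def accuracy(pred, target):
    return sum(p == y for p, y in zip(pred, target)) / len(target)

# Hypothetical decoder that predicts "previous + 1" but gets one case wrong
def toy_step(prev):
    return 99 if prev == 2 else prev + 1

target = [1, 2, 3, 4]
tf_pred = decode(toy_step, target, teacher_forcing=True)
fr_pred = decode(toy_step, target, teacher_forcing=False)
print(tf_pred, accuracy(tf_pred, target))  # [1, 2, 99, 4] 0.75
print(fr_pred, accuracy(fr_pred, target))  # [1, 2, 99, 100] 0.5
```

Under teacher forcing, the single mistake stays isolated; in free-running mode it is fed back and corrupts the next step as well, which is why the teacher-forced score tends to be optimistic.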

Hi Jason,