In this article, you will learn how to apply a structured decision tree to choose the right agentic design pattern for any AI system you are building.

Making developers awesome at machine learning

In this article, you will learn about seven leading LLM observability tools that help AI engineers monitor, evaluate, and debug large language model applications running in production.

In this article, you will learn what prompt compression is, why it matters for agentic AI loops, and how to implement it practically using summarization and instruction distillation.

This article illustrates how to implement a permission-gated tool in a Python agent, resulting in a robust, cost-free interception mechanism based on a simple decorator pattern.
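
As a rough illustration of the decorator idea, here is a minimal sketch, assuming a tool is a plain Python function and that the permission policy is a callable that receives the tool name and its arguments. The names `requires_permission`, `PermissionDenied`, `scratch_only`, and `delete_file` are hypothetical and are not taken from the article. The check runs locally before the tool body executes, so no extra model call is involved.

```python
import functools
from typing import Callable


class PermissionDenied(Exception):
    """Raised when a gated tool call is not approved (hypothetical exception)."""


def requires_permission(approver: Callable[[str, tuple, dict], bool]):
    """Hypothetical decorator factory: every call to the wrapped tool is
    checked by `approver` before the tool itself runs."""
    def decorator(tool: Callable):
        @functools.wraps(tool)  # keep the tool's name and docstring for the agent
        def wrapper(*args, **kwargs):
            # Intercept the call; the tool body never runs if approval fails.
            if not approver(tool.__name__, args, kwargs):
                raise PermissionDenied(f"Call to {tool.__name__} was not approved")
            return tool(*args, **kwargs)
        return wrapper
    return decorator


# Hypothetical policy: only allow paths inside a scratch directory.
def scratch_only(name: str, args: tuple, kwargs: dict) -> bool:
    path = kwargs.get("path", args[0] if args else "")
    return str(path).startswith("/tmp/scratch/")


@requires_permission(scratch_only)
def delete_file(path: str) -> str:
    # Stand-in for a destructive tool: it only reports what it would do.
    return f"deleted {path}"


if __name__ == "__main__":
    print(delete_file("/tmp/scratch/a.txt"))
    try:
        delete_file("/etc/passwd")
    except PermissionDenied as exc:
        print("blocked:", exc)
```

Raising an exception, rather than returning an error string, lets the agent loop decide whether to surface the refusal to the model or to a human approver.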

In this article, you will learn how to design, scale, and secure tool calling in AI agents so that the layer connecting model reasoning to real-world action holds up in production.

This article describes and implements two simple yet effective approaches to AI agent safety: semantic drift detection based on cosine distance, and confidence thresholding based on log-probability entropy.
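
The two checks can be sketched in plain Python. This is a minimal sketch, assuming embeddings are already available as lists of floats and that the model returns top-k log-probabilities for each generated token. The function names and the threshold values (`max_distance=0.35`, `max_mean_entropy=1.0`) are hypothetical placeholders, not values from the article, and would need tuning on real data.

```python
import math
from typing import Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 minus the cosine similarity of two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length embedding")
    return 1.0 - dot / (norm_a * norm_b)


def has_drifted(goal_emb, step_emb, max_distance: float = 0.35) -> bool:
    """Flag an agent step whose embedding has moved too far from the task's.
    The 0.35 default is a hypothetical placeholder."""
    return cosine_distance(goal_emb, step_emb) > max_distance


def entropy_from_logprobs(logprobs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one token's distribution, given its
    log-probabilities. Top-k log-probs rarely sum to 1, so renormalize first."""
    probs = [math.exp(lp) for lp in logprobs]
    total = sum(probs)
    probs = [p / total for p in probs]
    return -sum(p * math.log(p) for p in probs if p > 0)


def is_confident(token_logprobs: Sequence[Sequence[float]],
                 max_mean_entropy: float = 1.0) -> bool:
    """Accept an output only if mean per-token entropy stays under the
    threshold. The 1.0 default is a hypothetical placeholder."""
    entropies = [entropy_from_logprobs(lps) for lps in token_logprobs]
    return sum(entropies) / len(entropies) <= max_mean_entropy


if __name__ == "__main__":
    print(has_drifted([1.0, 0.0], [0.0, 1.0]))  # orthogonal vectors: distance 1.0
    print(entropy_from_logprobs([math.log(0.5), math.log(0.5)]))  # ln 2
```

Averaging entropy over tokens is one simple aggregation choice; taking the maximum per-token entropy instead would make the check stricter for outputs with a single uncertain token.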

In this article, you will learn what agentic RAG is, how it differs from traditional RAG, and when to use it.

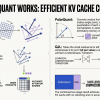

In this article, you will learn how TurboQuant, a novel algorithmic suite recently launched by Google, achieves advanced compression of large language models and vector search engines with no loss of accuracy.

In this article, you will learn how to build production-ready AI agents in Python using Pydantic AI, with structured outputs, custom tools, and dependency injection.

In this article, you will learn what context engineering is and how to apply it systematically to keep AI agents reliable, cost-efficient, and accurate in production.