Sequence prediction is different from other types of supervised learning problems.

The sequence imposes an order on the observations that must be preserved when training models and making predictions.

Generally, prediction problems that involve sequence data are referred to as sequence prediction problems, although there is a suite of problems that differ based on the input and output sequences.

In this tutorial, you will discover the different types of sequence prediction problems.

After completing this tutorial, you will know:

- The 4 types of sequence prediction problems.

- Definitions for each type of sequence prediction problem by the experts.

- Real-world examples of each type of sequence prediction problem.

Kick-start your project with my new book Long Short-Term Memory Networks With Python, including step-by-step tutorials and the Python source code files for all examples.

Let’s get started.

Gentle Introduction to Making Predictions with Sequences

Photo by abstrkt.ch, some rights reserved.

Tutorial Overview

This tutorial is divided into 5 parts; they are:

- Sequence

- Sequence Prediction

- Sequence Classification

- Sequence Generation

- Sequence-to-Sequence Prediction

Sequence

Often we deal with sets in applied machine learning, such as train or test sets of samples.

Each sample in the set can be thought of as an observation from the domain.

In a set, the order of the observations is not important.

A sequence is different. The sequence imposes an explicit order on the observations.

The order is important. It must be respected in the formulation of prediction problems that use the sequence data as input or output for the model.
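To make the set/sequence distinction concrete, here is a minimal Python sketch (the values are arbitrary, chosen only for illustration): the same three observations compare equal as sets but not as sequences, and an order-dependent property such as the step between consecutive values changes when the order changes.

```python
# Two collections holding the same observations in a different order.
observations = [3, 1, 2]
reordered = [1, 2, 3]

# Viewed as sets, order is ignored, so the two are equal.
same_as_sets = set(observations) == set(reordered)

# Viewed as sequences, order is part of the data, so they differ.
same_as_sequences = observations == reordered

# Order-dependent properties, such as the step between consecutive
# observations, also change when the order changes.
steps = [b - a for a, b in zip(observations, observations[1:])]
reordered_steps = [b - a for a, b in zip(reordered, reordered[1:])]

print(same_as_sets, same_as_sequences)  # True False
print(steps, reordered_steps)           # [-2, 1] [1, 1]
```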

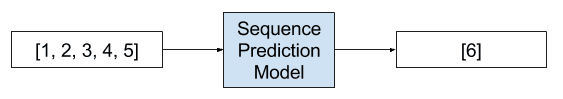

Sequence Prediction

Sequence prediction involves predicting the next value for a given input sequence.

For example:

- Given: 1, 2, 3, 4, 5

- Predict: 6

Example of a Sequence Prediction Problem
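The Given/Predict example above can be framed as supervised learning by sliding a fixed-size window over the sequence: each window is an input and the value that follows it is the target. The sketch below does this in plain Python. The window size `n_in` and the toy "model" (a single average step size learned from the training pairs) are illustrative assumptions standing in for a real learned model such as an LSTM.

```python
from statistics import mean


def to_supervised(sequence, n_in):
    """Split a sequence into (window, next value) training pairs."""
    X, y = [], []
    for i in range(len(sequence) - n_in):
        X.append(sequence[i:i + n_in])
        y.append(sequence[i + n_in])
    return X, y


def fit_step(X, y):
    """Toy 'training' (assumption): learn the average jump from a window's last value to its target."""
    return mean(target - window[-1] for window, target in zip(X, y))


def predict_next(sequence, step):
    """Predict the element that follows the given sequence."""
    return sequence[-1] + step


X, y = to_supervised([1, 2, 3, 4, 5], n_in=2)
print(X, y)                                 # [[1, 2], [2, 3], [3, 4]] [3, 4, 5]
step = fit_step(X, y)
print(predict_next([1, 2, 3, 4, 5], step))  # 6
```

The same windowing step is typically what turns a raw sequence into training samples for a neural network; only the model fitted to `X` and `y` changes.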

Sequence prediction attempts to predict elements of a sequence on the basis of the preceding elements.

— Sequence Learning: From Recognition and Prediction to Sequential Decision Making, 2001.

A prediction model is trained with a set of training sequences. Once trained, the model is used to perform sequence predictions. A prediction consists in predicting the next items of a sequence. This task has numerous applications such as web page prefetching, consumer product recommendation, weather forecasting and stock market prediction.

— CPT+: Decreasing the time/space complexity of the Compact Prediction Tree, 2015

Sequence prediction may also generally be referred to as “sequence learning”.

Learning of sequential data continues to be a fundamental task and a challenge in pattern recognition and machine learning. Applications involving sequential data may require prediction of new events, generation of new sequences, or decision making such as classification of sequences or sub-sequences.

— On Prediction Using Variable Order Markov Models, 2004.

Technically, we could refer to all of the following problems in this post as a type of sequence prediction problem. This can make things confusing for beginners.

Some examples of sequence prediction problems include:

- Weather Forecasting. Given a sequence of observations about the weather over time, predict the expected weather tomorrow.

- Stock Market Prediction. Given a sequence of movements of a security over time, predict the next movement of the security.

- Product Recommendation. Given a sequence of past purchases of a customer, predict the customer’s next purchase.

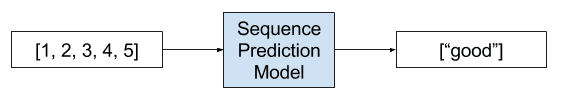

Sequence Classification

Sequence classification involves predicting a class label for a given input sequence.

For example:

- Given: 1, 2, 3, 4, 5

- Predict: “good” or “bad”

Example of a Sequence Classification Problem

The objective of sequence classification is to build a classification model using a labeled dataset D so that the model can be used to predict the class label of an unseen sequence.

— Chapter 14, Data Classification: Algorithms and Applications, 2015
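A minimal illustration of the definition above: a 1-nearest-neighbour classifier, a deliberately simple stand-in for the sequence models discussed in this tutorial, labels an unseen sequence with the label of the closest sequence in a labelled dataset D. The dataset and its "good"/"bad" labels are hypothetical, and the Euclidean distance used here assumes all sequences have the same length.

```python
import math


def euclidean(a, b):
    """Distance between two equal-length numeric sequences."""
    return math.sqrt(sum((x - z) ** 2 for x, z in zip(a, b)))


def classify(sequence, dataset):
    """1-nearest-neighbour: return the label of the closest labelled sequence."""
    _, label = min(dataset, key=lambda item: euclidean(sequence, item[0]))
    return label


# Hypothetical labelled dataset D: rising sequences are "good", falling are "bad".
D = [
    ([1, 2, 3, 4, 5], "good"),
    ([2, 3, 4, 5, 6], "good"),
    ([5, 4, 3, 2, 1], "bad"),
    ([6, 5, 4, 3, 2], "bad"),
]

print(classify([1, 2, 3, 4, 6], D))  # good
print(classify([5, 4, 3, 2, 2], D))  # bad
```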

The input sequence may be composed of real values or discrete values. In the latter case, such problems may be referred to as discrete sequence classification.
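For discrete sequences such as DNA, one common way (an assumption here, not a method described in this tutorial) to obtain fixed-length features for a standard classifier is to count k-mers, the overlapping substrings of length k. The sketch below builds a 16-value vector of 2-mer counts over ACGT. It keeps only local order within each k-mer, so most longer-range ordering is discarded.

```python
from itertools import product


def kmer_vocab(k=2, alphabet="ACGT"):
    """All possible k-mers over the alphabet, in a fixed order."""
    return ["".join(p) for p in product(alphabet, repeat=k)]


def kmer_counts(sequence, vocab):
    """Map a discrete sequence to a fixed-length vector of k-mer counts."""
    k = len(vocab[0])
    counts = dict.fromkeys(vocab, 0)
    for i in range(len(sequence) - k + 1):
        kmer = sequence[i:i + k]
        if kmer in counts:  # skip k-mers containing symbols outside the alphabet
            counts[kmer] += 1
    return [counts[kmer] for kmer in vocab]


vocab = kmer_vocab(k=2)
vec = kmer_counts("ACGTAC", vocab)
print(len(vec))                                       # 16
print({kmer: n for kmer, n in zip(vocab, vec) if n})  # {'AC': 2, 'CG': 1, 'GT': 1, 'TA': 1}
```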

Some examples of sequence classification problems include:

- DNA Sequence Classification. Given a DNA sequence of ACGT values, predict whether the sequence comes from a coding or non-coding region.

- Anomaly Detection. Given a sequence of observations, predict whether the sequence is anomalous or not.

- Sentiment Analysis. Given a sequence of text such as a review or a tweet, predict whether the sentiment of the text is positive or negative.

Sequence Generation

Sequence generation involves generating a new output sequence that has the same general characteristics as other sequences in the corpus.

For example:

- Given: [1, 3, 5], [7, 9, 11]

- Predict: [3, 5, 7]

[recurrent neural networks] can be trained for sequence generation by processing real data sequences one step at a time and predicting what comes next. Assuming the predictions are probabilistic, novel sequences can be generated from a trained network by iteratively sampling from the network’s output distribution, then feeding in the sample as input at the next step. In other words by making the network treat its inventions as if they were real, much like a person dreaming.

— Generating Sequences With Recurrent Neural Networks, 2013.
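The sampling loop described in the quote can be sketched without a neural network. In the minimal example below, a first-order Markov chain (a swapped-in, much simpler model than the RNN in the quote) plays the role of the probabilistic predictor: it samples the next element from the distribution observed in the corpus and feeds the sample back in as the next input. The extra corpus sequence [5, 7, 9] is a hypothetical addition so that the chain has observed a step from 5 to 7.

```python
import random
from collections import defaultdict


def fit_transitions(corpus):
    """Count how often each element is followed by each other element."""
    counts = defaultdict(lambda: defaultdict(int))
    for seq in corpus:
        for current, following in zip(seq, seq[1:]):
            counts[current][following] += 1
    return counts


def generate(counts, start, length, rng):
    """Sample one element at a time, feeding each sample back in as input."""
    seq = [start]
    while len(seq) < length:
        options = counts.get(seq[-1])
        if not options:  # no observed successor: stop early
            break
        candidates = list(options)
        weights = [options[c] for c in candidates]
        seq.append(rng.choices(candidates, weights=weights, k=1)[0])
    return seq


# The two corpus sequences from the example, plus one hypothetical extra
# sequence so the chain has observed a 5 -> 7 transition.
corpus = [[1, 3, 5], [7, 9, 11], [5, 7, 9]]
counts = fit_transitions(corpus)
print(generate(counts, start=3, length=3, rng=random.Random(1)))  # [3, 5, 7]
```

With a richer corpus, each state would have several possible successors and repeated runs with different seeds would produce different sequences, which is the "novel sequences" behaviour the quote describes.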

Some examples of sequence generation problems include:

- Text Generation. Given a corpus of text, such as the works of Shakespeare, generate new sentences or paragraphs of text that read like Shakespeare.

- Handwriting Prediction. Given a corpus of handwriting examples, generate handwriting for new phrases that has the properties of handwriting in the corpus.

- Music Generation. Given a corpus of examples of music, generate new musical pieces that have the properties of the corpus.

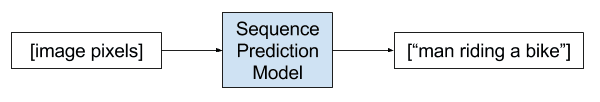

Sequence generation may also refer to the generation of a sequence given a single observation as input.

An example is the automatic textual description of images.

- Image Caption Generation. Given an image as input, generate a sequence of words that describes the image.

Example of a Sequence Generation Problem

Being able to automatically describe the content of an image using properly formed English sentences is a very challenging task, but it could have great impact, for instance by helping visually impaired people better understand the content of images on the web. […] Indeed, a description must capture not only the objects contained in an image, but it also must express how these objects relate to each other as well as their attributes and the activities they are involved in. Moreover, the above semantic knowledge has to be expressed in a natural language like English, which means that a language model is needed in addition to visual understanding.

— Show and Tell: A Neural Image Caption Generator, 2015

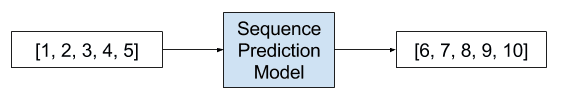

Sequence-to-Sequence Prediction

Sequence-to-sequence prediction involves predicting an output sequence given an input sequence.

For example:

- Given: 1, 2, 3, 4, 5

- Predict: 6, 7, 8, 9, 10

Example of a Sequence-to-Sequence Prediction Problem

Despite their flexibility and power, [deep neural networks] can only be applied to problems whose inputs and targets can be sensibly encoded with vectors of fixed dimensionality. It is a significant limitation, since many important problems are best expressed with sequences whose lengths are not known a-priori. For example, speech recognition and machine translation are sequential problems. Likewise, question answering can also be seen as mapping a sequence of words representing the question to a sequence of words representing the answer.

— Sequence to Sequence Learning with Neural Networks, 2014

It is a subtle but challenging extension of sequence prediction where, rather than predicting a single next value in the sequence, a new sequence is predicted that may or may not have the same length as, or cover the same time steps as, the input sequence.

This type of problem has recently seen a lot of study in the area of automatic text translation (e.g. translating English to French) and may be referred to by the abbreviation seq2seq.

seq2seq learning, at its core, uses recurrent neural networks to map variable-length input sequences to variable-length output sequences. While relatively new, the seq2seq approach has achieved state-of-the-art results in not only its original application – machine translation […]

— Multi-task Sequence to Sequence Learning, 2016.

If the input and output sequences are both time series, then the problem may be referred to as multi-step time series forecasting.

Some examples of sequence-to-sequence problems include:

- Multi-Step Time Series Forecasting. Given a time series of observations, predict a sequence of observations for a range of future time steps.

- Text Summarization. Given a document of text, predict a shorter sequence of text that describes the salient parts of the source document.

- Program Execution. Given the textual description of a program or mathematical equation, predict the sequence of characters that describes the correct output.
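For the multi-step forecasting case, the difference from plain sequence prediction shows up in how the training data is framed. The plain-Python sketch below frames the 1 to 10 example as a single (input window, output window) pair, the "direct" framing a seq2seq model would learn from, and contrasts it with a "recursive" strategy that reuses a one-step predictor and feeds each prediction back in (the two strategies named in the further reading on multi-step forecasting). The one-step model `average_step` is a hypothetical placeholder, not a trained network.

```python
def to_seq2seq_pairs(series, n_in, n_out):
    """Split a series into (input window, output window) training pairs."""
    pairs = []
    for start in range(len(series) - n_in - n_out + 1):
        x = series[start:start + n_in]
        y = series[start + n_in:start + n_in + n_out]
        pairs.append((x, y))
    return pairs


def recursive_forecast(history, n_out, one_step):
    """Predict n_out future values by feeding each prediction back as input."""
    window = list(history)
    forecast = []
    for _ in range(n_out):
        next_value = one_step(window)
        forecast.append(next_value)
        window.append(next_value)
    return forecast


def average_step(window):
    """Hypothetical one-step model: continue the average step size of the window."""
    return window[-1] + (window[-1] - window[0]) / (len(window) - 1)


print(to_seq2seq_pairs(list(range(1, 11)), n_in=5, n_out=5))
# [([1, 2, 3, 4, 5], [6, 7, 8, 9, 10])]
print(recursive_forecast([1, 2, 3, 4, 5], n_out=5, one_step=average_step))
# [6.0, 7.0, 8.0, 9.0, 10.0]
```

The recursive approach is simple but lets early errors compound, whereas the direct framing asks the model to produce the whole output sequence at once.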

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

- Sequence on Wikipedia

- CPT+: Decreasing the time/space complexity of the Compact Prediction Tree, 2015

- On Prediction Using Variable Order Markov Models, 2004

- An Introduction to Sequence Prediction, 2016

- Sequence Learning: From Recognition and Prediction to Sequential Decision Making, 2001

- Chapter 14, Discrete Sequence Classification, Data Classification: Algorithms and Applications, 2015

- Generating Sequences With Recurrent Neural Networks, 2013

- Show and Tell: A Neural Image Caption Generator, 2015

- Multi-task Sequence to Sequence Learning, 2016

- Sequence to Sequence Learning with Neural Networks, 2014

- Recursive and direct multi-step forecasting: the best of both worlds, 2012

Summary

In this tutorial, you discovered the different types of sequence prediction problems.

Specifically, you learned:

- The 4 types of sequence prediction problems.

- Definitions for each type of sequence prediction problem by the experts.

- Real-world examples of each type of sequence prediction problem.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

So I assume it’s fair to say that every time-series is an example of sequence prediction but not vice-versa? Thanks for the interesting post.

Correct, Mike.

Hi Jason,

I need your help with time series classification. I have measurements of different medical parameters for patients, captured every hour. The output label is whether the patient has Acute Kidney Injury (AKI) or not. Based on the first 12 hours of data, we should find out whether the patient is at risk of suffering from AKI or not (after 12 hours). I guess this falls under the classification approach (sequence classification). However, I have only one label (AKI == 0). So should this be considered anomaly detection in time series or sequence classification? Since I have 12 hours of data for more than 100 patients (100 * 12 data points with multiple input variables), how do I retain the time factor? As there is only one class, how do I do the training? I am quite stuck, as there isn’t a proper example for a beginner like me to understand. Can you please share your insights, guide me on how to approach this problem, or direct me to the appropriate resource?

One idea, you could frame the problem as “does the event occur in this sequence or not”.

Then treat it as sequence classification, much like activity recognition:

https://machinelearningmastery.com/how-to-develop-rnn-models-for-human-activity-recognition-time-series-classification/

Hello, Jason. I would like to congratulate you on the excellent article. This was very helpful to me. Have you done or considered anything to predict the next element of a binary sequence based on the frequency stability of the sequence?

Thanks.

Not directly, no.

Exactly one year later, I am reading your comments 😉

How are you relating and stating this? Can you shed some light on this: “every time series is an example of sequence prediction but not vice versa”?

A time series is a sequence of observations: 1, 2, 3, 4

Not all sequences are a time series. The ordering could be something other than time.

So, can we say that problems like the 20 Questions game require sequence prediction to solve? And can we use a recurrent neural network to implement it?

The system asks questions and after each answer, we predict an answer which helps to determine the next question. Right?

Thanks, that was exactly what I need.

I expect Q&A is a sequence prediction problem.

I have not worked on an example, so I cannot give you advice about whether RNNs are appropriate. I would recommend a search on Google Scholar.

Hi Jason.

Can an LSTM do multi-step forecasts? I have two examples below:

1. input the [1,2,3] sequence to predict the [4,5,6,7,8,9,10,…15] sequence;

2. input the [1,2,3] sequence to predict the [10,11,12] sequence.

If an LSTM can do this, could you give a lesson on this kind of problem?

Thank you very much.

Yes, see here:

https://machinelearningmastery.com/multi-step-time-series-forecasting-long-short-term-memory-networks-python/

Hello Jason,

Thank you for this post, it is very useful and interesting.

I’m thinking about the following problem: given a single input sequence, we want to predict several sequences that can be of different lengths. For instance, this problem can be encountered in the Alternative Splicing phenomenon, where, given a single RNA sequence, we can obtain multiple proteins.

My questions are:

1- Has the problem “Input: One sequence -> Output: Several sequences” been studied in the literature?

2- Can LSTMs solve this type of problem?

Best and thanks

I have not seen this, but LSTMs could address it. Consider a multiple-output model:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Jason –

I enjoyed this post and I believe it may help me solve a predictive problem I’ve been pondering.

The data is primarily text-based time series data involving an ‘actor’ object that I receive information on. That information, other than the date/time information, is also text. I know that, given information sequence ‘A’, the next informational sequence is most often ‘B’. However, there may well be several other sequences that are also highly likely.

What I’m looking for is a learning method that can identify anomalous information reports so they can be reviewed and subsequently validated as either truly anomalous or potentially a new, yet valid, sequenced item.

Anything you might be able to point me towards would be greatly appreciated.

Thanks!

I would recommend investigating the field of time series anomaly detection. Perhaps start on Google Scholar?

Thanks Jason, I spent a considerable amount of time yesterday looking into what you suggested.

Just to clarify, the timestamps only serve to order the reports as they arrive, they have little significance beyond that.

Do any of your publications deal with pointing an unsupervised, or minimally supervised, method at this sort of data? As opposed to say numeric data?

I’ve done a considerable amount of ‘crunching’ of the data (it’s billions of rows) and have built a reference table of the likely ‘next event’ given the previous event. However that solution is not as robust, nor as flexible as I’d like it to be.

LSTM and GAN appear to show promise for what I’m trying to do yet most of the examples I’ve seen don’t seem to fit very well with the data I have to work with.

Again, I will appreciate any insight you could share.

Thanks!

Sorry, I don’t have material on semi-supervised learning at this stage, I hope to cover it in the future.

I would recommend testing a suite of methods as well as a suite of different framings of the problem to see what works best.

I know this reply is from long ago, but I’m now looking for something similar. Are there any updates by now? Thanks in advance!

Hi Storm…Please elaborate on your specific goals. That will enable us to better guide you.

I have a dataset containing text-based log-entries with no anomalies. I encoded the log-entries into numeric integers representing different events. For example:

25.09.2024 Event 1

26.09.2024 Event 2

27.09.2024 Event 5

etc.

I’m trying to train a network that learns the event sequences in the provided dataset and makes predictions for possible upcoming event sequences. Ultimately, I’m trying to use the trained network to check for anomalous event occurrences.

I didn’t one-hot encode the events in my dataset, because there are many different possible events and one-hot encoding would result in very long input vectors and, subsequently, very long training times. I tried using some open-source pretrained models online, but they give decimal prediction results, which should not happen since all events are integers. The same happens when I implement your LSTM code from another page.

Is my data considered as a time series? Or what should I modify in such case that predictions of events/integers can be made?

Do you have any publications or suggestions on what should I look for? Thank you very much for any insights!

Thanks Jason!

You’re welcome.

Hello Jason,

Thank you for such informative article.

But I am not able to fit a prediction problem I’ve been working on into any of the categories you mention.

I have data of a person who visits certain places in a sequence from a sample of places.

Let’s say he wants to visit [‘NY’, ‘LA’, ‘DC’, ‘TX’, ‘FL’]; then he’ll visit them in this sequence: [‘TX’, ‘LA’, ‘NY’, ‘FL’, ‘DC’].

I have historical data of his previous visits in sequence.

[‘TX’, ‘LA’, ‘NY’,’FL’, ‘DC’]

[‘AK’, ‘FL’, ‘NY’] and so on.

So, for a random list of places, I need to predict the sequence in which he is going to visit those places.

I’d really appreciate it if you could point me toward something.

Thanks

Perhaps this post will help you describe your problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Hi Jason,

My interest in ML is in its applications. I am from the VLSI field.

The area of ML is very vast, and I don’t know where to start for my problem.

Below is a brief description of my problem.

The system I am testing basically generates events. Sequences of these events are of interest to me.

One can manually look at these event sequences and recognize them as useful. But the manual process is very cumbersome, and there could be millions of events among which one has to look for the interesting ones.

The interesting event sequences are known a priori. The spacing between these events can vary, though.

Do you have any suggestion as to what I should be trying out to begin with?

I am not looking for solutions actually but only for guidance.

Yes, use LSTMs.

Take an example from the blog as a starting point and adapt it for your problem.

Start here:

https://machinelearningmastery.com/start-here/#lstm

Sure. Thanks, Jason. I will read through and get back if needed

Hello Jason,

Thank you for this tutorial which is very interesting, but I would like to find a sequential dataset that I can use in my research for the predictive maintenance algorithm.

My best advice is to contrive a problem for research purposes that has the properties you require.

Thank you Jason. Very interesting post!

Just a quick but also confusing question of mine. Let’s say I have [4, 5, 6] as input, and I want to output [14, 15, 16] or [24, 25, 26], etc. Of course, I have a training dataset that takes [1, 2, 3] as input and [11, 12, 13], [21, 22, 23], etc. as output, which means I have a one-to-many (not the name of the model type here) relationship in my training set. I am wondering whether an RNN (or LSTM) can even recognize these relationships simultaneously. Another thing: since we only need to map 1 to 11, 2 to 12…, it seems that if I change the order of my training dataset, i.e. [2, 3, 1] as input and [12, 13, 11] as output, the model can still learn the corresponding pattern. So here it might violate the principle that ORDER IS IMPORTANT. I have read a lot of your valuable blogs and learned a lot, but I still cannot find the answer. Any response is really appreciated!

Sure, my advice would be to try it and see how you go.

The model could output two length n vectors or two sequences of n=3 timesteps. Try both.

I would suggest exploring multiple output models with one “sub-model” for each output, see here:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

I’m eager to hear how you go.

So I have this dataset of images that represent grid-wise crime (frequency) on a daily basis. I have a series of images i1, i2, i3, …, in, and I want to forecast or predict image i(n+1) and beyond (crime hotspots or frequency). How do you think I should approach this problem?

This post might help you define your problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Hello Jason,

I have a question regarding time series in forex. There is a pattern named double-bottom that looks like the letter “W”. As an input sequence, this pattern can take any arbitrary length (in time). How should I deal with this problem? Can I transform this input sequence into a sequence of fixed length?

Thanks

What do you mean by “deal with it”? Predict this pattern?

If so, develop a dataset of examples with/without the pattern and fit a model to classify them.

Hi Jason,

Sorry for not being explicit enough. I want to classify some time series, but the lengths of the time series patterns, which are the inputs here, need to be known in advance. However, such information is not always available. In addition, patterns of different lengths may co-exist in a time series dataset (for example, the forex “W” pattern might be 8 or 38 in length; we don’t know it in advance).

How to present such inputs to the machine if their length is not known in advance?

Thanks!

Interesting.

You can address the different lengths with zero padding and a masking input layer. I have many examples on the blog.

The scale invariance might require some experimentation. Perhaps an LSTM can do it. Perhaps a CNN is required or some other compressed interpretation of the sequence.
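A minimal sketch of the padding half of that advice, in plain Python: shorter sequences are pre-padded with zeros to the longest length, and a boolean mask records which steps are real. In Keras, the equivalent would be `pad_sequences` (which pre-pads with 0 by default) followed by a `Masking(mask_value=0.0)` layer before the LSTM. The pattern values below are hypothetical, and the padding value must not occur in the real data, or real steps would be masked too.

```python
def pad_and_mask(sequences, value=0.0):
    """Pre-pad variable-length sequences to a common length and build a mask."""
    max_len = max(len(s) for s in sequences)
    padded, mask = [], []
    for s in sequences:
        n_pad = max_len - len(s)
        padded.append([value] * n_pad + list(s))        # padding goes in front
        mask.append([False] * n_pad + [True] * len(s))  # True marks real time steps
    return padded, mask


# Hypothetical "W"-shaped patterns of different lengths.
short = [3, 1, 2, 1, 3]
long_ = [3, 2, 1, 2, 3, 2, 1, 2, 3]
padded, mask = pad_and_mask([short, long_])
print(padded[0])  # [0.0, 0.0, 0.0, 0.0, 3, 1, 2, 1, 3]
print(mask[0])    # [False, False, False, False, True, True, True, True, True]
```

Padding only fixes the length mismatch; it does not make the model scale invariant, which is the part that needs experimentation.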

Is it possible to sort the results of the prediction?

Or will the NN give the prediction results in descending order based on the prediction values?

I’m not sure I understand. If your model outputs a sequence, why would you need to sort it?

I am sorry! I should have been more specific.

I meant, for normal cases where the output is not a sequence, can the NN give the prediction results in descending order based on the prediction values?

You can output a prediction probability for each class in a classification problem, then rank the probabilities.

Is that what you mean?

If so, you can use a softmax in the output layer and have one neuron for each class in your problem.

If I have 5 classes and do what you suggested (using softmax in the output layer and having one neuron for each class), the probabilities I get look like this for each prediction:

[[ 1.32520108e-05, 7.61212826e-01, 2.38773897e-01, 1.89434655e-08, 1.21214816e-08],

[ 3.46436082e-07, 1.17851084e-03, 9.88936901e-01, 8.01233668e-03, 1.87186315e-03],……..]

and these values are not in any order.

So how can I rank them in an order?

The probabilities will be in the order of the classes (e.g. 1-5) for the one hot encoded class values used to train the model.
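Ranking is then a matter of sorting the class indices by their predicted probability. A minimal plain-Python sketch using the two probability rows quoted above; index 0 is assumed to correspond to the first class in the one hot encoding used during training.

```python
def rank_classes(probabilities):
    """Return class indices ordered from most to least probable."""
    return sorted(range(len(probabilities)), key=lambda i: probabilities[i], reverse=True)


predictions = [
    [1.32520108e-05, 7.61212826e-01, 2.38773897e-01, 1.89434655e-08, 1.21214816e-08],
    [3.46436082e-07, 1.17851084e-03, 9.88936901e-01, 8.01233668e-03, 1.87186315e-03],
]
for row in predictions:
    print(rank_classes(row))
# [1, 2, 0, 3, 4]
# [2, 3, 4, 1, 0]
```

With NumPy, `np.argsort(row)[::-1]` gives the same descending ranking for rows without ties.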

Hi Jason,

I have a problem where I have training data of tag-ids, and I would like to extract the pattern by learning from it. Which models are suitable to train on this sort of data? I see this as an unsupervised learning problem, and currently we solve it with the help of regular expressions.

Tag-ids are in this format

eg:

400-SG-01002-A600

50-SG-01010-A600/B1

V-0514

STEEL-ETAGE-1-FRMW

Given a collection of words, I should be able to find out which word is a tag-id based on the learning.

This framework will help you define your problem in terms of predictive modeling:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Hi Jason,

Thank you for all your material.

I’m new to this area and I’m looking for help.

The LSTM models I found to study always work with only one feature, but I would like to give more classes as input to the network.

To be more specific, I would like to use as input and output to the network: [FeatureA][FeatureB][FeatureC].

FeatureA is a categorical class with 100 different possible values.

FeatureB and FeatureC are categorical classes too, but only have 5 unique values.

Any suggestions or tutorials on how to do this?

A class is an output, not input.

Here is an example of an LSTM with multiple inputs:

https://machinelearningmastery.com/multivariate-time-series-forecasting-lstms-keras/

If you have categorical inputs, you can use a one hot encoding or integer encoding prior to modeling.

For multi-class outputs, you can use a Dense layer on the output after the LSTMs, with softmax and one neuron per output class. Here is an example of a Dense output layer without the LSTM for multi-class classification:

https://machinelearningmastery.com/multi-class-classification-tutorial-keras-deep-learning-library/
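For the question above, a minimal plain-Python sketch of one hot encoding several categorical inputs per time step: each feature is integer-encoded, one hot encoded, and the vectors are concatenated, giving 100 + 5 + 5 = 110 input values per time step. The vocabularies and values (`a0` to `a99`, `b0` to `b4`, `c0` to `c4`) are hypothetical placeholders for the real categories.

```python
def build_index(values):
    """Integer-encode the distinct values of one categorical feature."""
    return {v: i for i, v in enumerate(sorted(set(values)))}


def one_hot(index, size):
    vec = [0] * size
    vec[index] = 1
    return vec


def encode_step(step, indexes):
    """One hot encode each feature of a time step and concatenate the results."""
    encoded = []
    for value, index in zip(step, indexes):
        encoded.extend(one_hot(index[value], len(index)))
    return encoded


# Hypothetical vocabularies matching the sizes in the question.
feature_a = [f"a{i}" for i in range(100)]
feature_b = [f"b{i}" for i in range(5)]
feature_c = [f"c{i}" for i in range(5)]
indexes = [build_index(feature_a), build_index(feature_b), build_index(feature_c)]

sequence = [("a7", "b1", "c4"), ("a42", "b0", "c2")]  # two time steps
encoded = [encode_step(step, indexes) for step in sequence]
print(len(encoded), len(encoded[0]))  # 2 110
print(sum(encoded[0]))                # 3
```

Each encoded sequence then has shape (time steps, 110), which is the per-sample input an LSTM would see.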

Hello Jason,

Thank you for the article.

I have N datasets, and each dataset has 3 features and 1 target. All the features and the target have X data points in time. I want to train an LSTM on 80% of the datasets and test on the remaining 20%.

My problem is not exactly forecasting but multiple-sequences-to-sequence prediction. Could you please tell me how to set the input shape for my dataset?

dataset1 –>

[feature1 –> [0,1,2]

feature2 –> [4,6,8]

feature3 –> [3,5,7]

target –> [1,1,2] ]

Thank you

This will help you shape your data:

https://machinelearningmastery.com/faq/single-faq/how-do-i-prepare-my-data-for-an-lstm

Thank you. So, based on the articles, am I correct in setting the shape of the input data as (number of training datasets, length of any feature array, number of features)?

Do you have any article that dealt with this kind of example?

Thank you again for all the articles you shared. They are very informative.

Generally, I cannot comment on “correctness” without getting deeply involved in your project.

I would recommend reviewing how to prepare data for the LSTM, perhaps reviewing what has worked on other problems, then try a suite of ways of framing the problem to see what works best for your specific case.
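The (number of datasets, length of feature array, number of features) layout proposed above lines up with the three-dimensional [samples, time steps, features] input that Keras LSTM layers expect, with each dataset acting as one sample. A minimal plain-Python sketch using dataset1 from the question; the dictionary layout and the helper names are assumptions for illustration.

```python
def to_lstm_arrays(datasets, feature_names, target_name):
    """Stack per-dataset feature columns into [samples, time steps, features]."""
    X, y = [], []
    for ds in datasets:
        n_steps = len(ds[target_name])
        X.append([[ds[name][t] for name in feature_names] for t in range(n_steps)])
        y.append([[ds[target_name][t]] for t in range(n_steps)])
    return X, y


def shape(nested):
    """Shape of a regular nested list, like NumPy's .shape."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)


dataset1 = {
    "feature1": [0, 1, 2],
    "feature2": [4, 6, 8],
    "feature3": [3, 5, 7],
    "target": [1, 1, 2],
}
X, y = to_lstm_arrays([dataset1], ["feature1", "feature2", "feature3"], "target")
print(X[0])                # [[0, 4, 3], [1, 6, 5], [2, 8, 7]]
print(shape(X), shape(y))  # (1, 3, 3) (1, 3, 1)
```

Because each dataset is one sample here, an 80/20 split at the dataset level amounts to slicing the outer list, e.g. `X[:n_train]` and `X[n_train:]`.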

Thank you

Hi

You have the best site and the best articles; I have learned a lot of solutions.

I have a question: my dataset is numbers, and I need to predict the next number from the previous numbers, with just 4 targets, tar[54,26,18,32]. Which type of sequence problem fits this dataset?

Thanks.

Sounds like a many-to-one sequence prediction problem.

806,046,009,905??????????????

What was the problem exactly?

Is the sequence prediction algorithm the same as the convolutional neural network algorithm?

Or does it follow the same idea?

You can use either LSTMs or 1D CNNs for sequence prediction.
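To make the contrast concrete: where an LSTM carries a hidden state from step to step, a 1D CNN slides small kernels along the time axis. The sketch below computes a single filter by hand in plain Python; the weights [-1, 1] are an illustrative assumption, whereas a Conv1D layer would learn many such kernels from data.

```python
def conv1d(sequence, kernel, bias=0.0):
    """'Valid' 1D convolution as used in CNN layers (no kernel flip)."""
    k = len(kernel)
    return [
        sum(w * x for w, x in zip(kernel, sequence[t:t + k])) + bias
        for t in range(len(sequence) - k + 1)
    ]


# A hand-picked kernel that responds to the change between neighbouring steps.
print(conv1d([1, 2, 4, 7], kernel=[-1, 1]))  # [1.0, 2.0, 3.0]
```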

Hi!

I have this problem:

https://ai.stackexchange.com/questions/6741/regression-with-more-than-one-output-neural-network

What kind of sequence do you think it could be?

Perhaps you can summarize your problem in a sentence for me?

I tried to shorten my problem description, but I couldn’t make it fit in one sentence because I felt there was too much to say. Hope you don’t mind.

There is a system in which researchers receive a classification that can be C, B, A or A1, where C is the lowest and A1 is the highest.

This classification is based on the number of products that the researcher has in his profile.

I want to make a recommendation of the number of products that a researcher must do to improve their classification within the system, taking into account the number of products and the classification that they currently have.

Sounds like a constraint optimization problem rather than a machine learning problem.

I’d recommend looking into the field of ‘operations research’ and their methods for constraint optimization.

The thing is that the recommendations must be personalized according to the profile of the researcher. There are several categories of products: some are mandatory to move up a category, but besides the mandatory products, you can choose among several.

For example, if an investigator is a lawyer, it should be unlikely that the system would suggest making products related to medicine, or it might suggest it, in case there is activity of that type in his profile.

These sounds like constraints in an optimization algorithm, like a bin packing problem or knapsack problem. It does not sound like a recommender system, but I could be wrong.

To build a recommender system for this, you need to give scores to the products or activities of the researchers to measure how important they are for them. Then you can build a user-based or item-based recommender system. Hope it helps.


Hello Jason, I have a many-to-one sequence forecast question. I was hoping you could tell me how to get one number correct in the Massachusetts lottery Keno game.

A wager of one spot for $20 pays $50 back.

I know it’s an RNG with a seed and an algorithm.

I know you have to play when it is busy.

This is a common question that I answer here:

https://machinelearningmastery.com/faq/single-faq/can-i-use-machine-learning-to-predict-the-lottery

Hi Jason,

I have a problem which, in my view, does not fit any of the above situations.

Given a disparate set of entries, and a sequence as an output, is it possible to predict what the sequence would be with a different set of entries?

For example:

(a,b,c,d) always gives [d,a,b,c]

(a,c,b,d) also always gives [d,a,b,c]

and so on

Assuming it is trained with every possible letter, I want to know what (a,c,d,e) would give, for example.

One approach I had was to convert this to a sequence to sequence matching problem by feeding in every permutation of the inputs as a sequence, and matching it to the output, but in such a scenario I may not require NN in the first place.

Do you have any insight to offer on this?

Perhaps this framework will help you understand whether your problem can be framed as a supervised learning problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Can I use this to store data from over 50 years and use it to predict what could happen in the tenth year? If so, how can I do it?

Sure, you could try.

Once you fit the model, you can call model.predict() to make an out-of-sample forecast.

I have tens of examples on the blog, try the search.

Hi Jason,

I was wondering if there is really any difference between sequence-to-sequence and sequence prediction problems (assuming length/dimension of sequence is known and fixed).

If there is no difference, then how would one decide between employing a GAN or an ordinary neural network model?

Thanks,

I don’t follow, can you give an example?

Can we apply this to predict clinical events based on other patients’ past data? I want to see if certain musculoskeletal injuries follow a sequence.

Perhaps.

Thanks for this tutorial Jason.

I have a problem where we have sensor data with different parameters, and we want to predict the CO alarm. Based on the values of the different variables, we have to predict when the next alarm will take place. The data is timestamped. Please guide me on how to proceed with such business problems.

Aside from this, I have sent you a LinkedIn invite; please accept it.

Thanks in advance.

Jaideep Negi

Perhaps this process will help:

https://machinelearningmastery.com/how-to-develop-a-skilful-time-series-forecasting-model/

Hi Jason,

I am trying to predict categorical data with example 6.7. Each row has some categorical data, as below:

[ABC,DEF,GHI, XXX]

[GHI,BTY,,AAA,PPP]

[DEF,XYZ,BBB,GHI]

I followed below steps,

1) Label encoded all values

2) Looped over all rows, one hot encoded them, and trained an LSTM

3) Predicted

But when I evaluate, I find that I am getting the same prediction for all test data.

I followed your code in example 6.7 of the LSTM with Python ebook exactly. Also, when I tried to compile your code in 6.7, I got an error.

Perhaps the problem is challenging, there is not enough data, or the model needs to be tuned?

What error are you getting with the code in the tutorial?

Hi,

Good posts, Jason. If I wanted to do my Ph.D. in sequence prediction, specifically stock market prediction in India, which of your series is most suited for it?

None.

Hi Jason,

Could you explain which model is good for stock market prediction and why?

This is a common question that I answer here:

https://machinelearningmastery.com/faq/single-faq/can-you-help-me-with-machine-learning-for-finance-or-the-stock-market

Dear Sir,

I need an LSTM training and testing algorithm for time sequence prediction, for in-depth study. Is there any book or tutorial in this regard?

Thanks

Azad

Yes, you can start here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi Jason,

I’m having a hard time adapting this methodology to a classification problem with more than one time series. For example, a dataset for customer churn or employee attrition, where each customer/employee can have their own time series. Is an LSTM NN the best way to model such a problem, or is a classification algorithm with features that capture the time-variant information better?

Thanks!

Ronen

Perhaps try a few different framings of the problem, this might give you some ideas:

https://machinelearningmastery.com/faq/single-faq/how-to-develop-forecast-models-for-multiple-sites

Hi Ronen and Jason, I have the same problem: I need to predict the sequences of multiple customers, and I have around 20k customers or more. I can’t develop an individual model for each customer, nor can I cluster the customers, as each customer has their own pattern of sequences. Can you help me here?

Then perhaps try training a model that learns across customers.

Many thanks for your article. My problem is extracting a sequence of words representing the two parts of a relation. The input is an annotated sentence with two chunks of words related to each other. The output is a sequence of words representing part 1 and part 2 of the relation. Could you please advise what type of sequence problem this is and what the appropriate model is?

Thanks

I’m not sure offhand; perhaps you can give a short example?

For example, the following sentence has two parts linked by a conditional relationship:

your teacher says [if you study hard], [you will pass the exam], however, I don’t think you have enough time.

The parts are enclosed in square brackets (for illustration). The model needs to extract these two chunks.

Hmmm, I think you’ll have to do some research on this.

Off the cuff, the simplest approach would be to have one model output chunks with some marker between chunks, but I expect there are more efficient approaches.
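To make the marker idea concrete, here is a minimal sketch of how the training targets could be built, assuming the two chunks are annotated as word-index spans. The function `build_target` and the `<sep>`/`<eos>` tokens are hypothetical names chosen for illustration, and punctuation is dropped for simplicity. An encoder-decoder model could then be trained to map the sentence to this target sequence.

```python
def build_target(words, spans, sep="<sep>", eos="<eos>"):
    """Join annotated chunks into one output sequence for a seq2seq model.

    words: tokens of the input sentence.
    spans: (start, end) word indices per chunk, end exclusive (hypothetical
           annotation format).
    """
    target = []
    for i, (start, end) in enumerate(spans):
        if i > 0:
            target.append(sep)  # marker between chunks
        target.extend(words[start:end])
    target.append(eos)  # lets a decoder learn where to stop
    return target


sentence = ("your teacher says if you study hard you will pass the exam "
            "however I don't think you have enough time").split()
spans = [(3, 7), (7, 12)]  # "if you study hard", "you will pass the exam"
print(" ".join(build_target(sentence, spans)))
# if you study hard <sep> you will pass the exam <eos>
```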

Hi Jason,

Thank you for all the amazing blogs,

I would be grateful if you can clarify the following for me.

Say I have one-minute data samples collected from soccer matches, with 20 features. I have just over 1500 games to train and test the model.

I implemented an LSTM model for a multiple-feature forecast. I trained/tested the model with a lag of 5 and got a score of 91%.

My question is: given only the first-minute values, is it possible to make a prediction for the remaining 90 minutes of the game?

So my input shape will be (1,1,20) and the expected output will have a shape of (89,6).

I really appreciate any suggestion.

Thank you,

Abey

That would be a challenging prediction problem!

Nevertheless, try it and see.

Hello Jason –

Thanks for your selflessness with these gems (articles).

I mainly want to predict ‘when’ a patient-level event will occur in hospitals. For instance, I read an article a while ago on building an algorithm that could predict the onset of sepsis in a patient almost 24 hours before it happens. What’s the better algorithm for doing this, and what kind of sequence problem is this (it sounds like 1,2,3,4,5 -> 6 based on timestamps)? I can work on predicting who’s at risk, but ‘when’ they’re likely to have that event is the real question.

Thanks,

Elijah

Sounds like a great problem.

I recommend testing a suite of algorithms on the problem, e.g. start with MLP and explore CNN and LSTM. This framework will help you to get started:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi, I have a problem. I have some time series of different lengths. I want to use an LSTM autoencoder (or any other deep learning method) to extract features from the time series. How can I do that? I’m looking forward to your reply. Thanks a lot.

Perhaps this approach will help as a starting point:

https://machinelearningmastery.com/lstm-autoencoders/

Hi Jason,

I am working on a model to predict the next page clicked by a user, based on click-sequence data from lakhs of users. The sequences are of varying length. Which model would be most appropriate to predict the next clicked page?

Perhaps explore an LSTM model:

https://machinelearningmastery.com/start-here/#lstm
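Since the sessions differ in length, one common preparation step is to turn every session prefix into a (history, next page) pair and pad the histories to a fixed length. A minimal sketch, assuming page ids are integers starting at 1 so that 0 can serve as padding; `next_page_samples` and the example sessions are hypothetical. Keras offers a similar `pad_sequences` utility, and an `Embedding` layer with `mask_zero=True` can tell the LSTM to ignore the padding.

```python
def next_page_samples(sessions, max_len, pad=0):
    """Turn variable-length click sessions into (history -> next page) pairs.

    Every prefix of a session becomes one sample. Histories longer than
    max_len keep only the most recent clicks; shorter ones are left-padded.
    Page ids are assumed to be integers >= 1 so that 0 can mark padding.
    """
    X, y = [], []
    for session in sessions:
        for t in range(1, len(session)):
            history = session[max(0, t - max_len):t]
            X.append([pad] * (max_len - len(history)) + history)
            y.append(session[t])
    return X, y


sessions = [[3, 7, 2], [5, 3, 7, 7, 1]]  # hypothetical page ids per user
X, y = next_page_samples(sessions, max_len=3)
for history, nxt in zip(X, y):
    print(history, "->", nxt)
```

With `max_len=3`, the two sessions yield six samples; the last one is the history [3, 7, 7] with next page 1.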

Hi Jason,

I enjoyed reading this article!

What’s the difference between “sequence generation” and “sequence to sequence prediction”?

If the input in “sequence generation” is also a sequence, then it looks very similar to “sequence to sequence prediction” right?

Thanks!

Good question.

Generally, sequence generation involves giving the model a seed and getting a much longer sequence out, e.g. a few words in and a few paragraphs out, like a simple language model.

Seq2Seq often refers to translating an input sequence to an output sequence such that the two are directly related, like German to English or text to summary, etc.
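As a rough illustration of the generation side, the sketch below uses a tiny bigram count table as a stand-in for a real language model (an assumption for brevity, not a recommended model). The key point is the loop: a short seed goes in and each prediction is fed back as the next input, whereas a seq2seq model conditions its whole output on a complete input sequence. The names `train_bigrams` and `generate` are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(tokens):
    """Count which token follows which: a very small 'language model'."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def generate(follows, seed, length):
    """Sequence generation: start from a seed and repeatedly feed the
    model's own prediction back in as the next input."""
    out = list(seed)
    for _ in range(length):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # no known continuation for the last token
        out.append(candidates.most_common(1)[0][0])  # greedy choice
    return out


corpus = "the cat sat on the mat and the cat ran".split()
model = train_bigrams(corpus)
print(generate(model, ["the"], 4))  # ['the', 'cat', 'sat', 'on', 'the']
```

Ties are broken by first appearance, which is why "cat" continues with "sat" rather than "ran".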

Hi Jason,

Thanks for this tutorial! I have a question about product sequences.

Suppose I have data for a single customer and all the products he has purchased in the last year.

For example, cust_id: x1

order history: order_id_1: [product1, product2, product3], order_id_2: [product1, product2, product5]

What is the best way to predict the next set of products the customer might buy, with probabilities?

Thanks

I recommend testing a suite of framings of the problem in order to discover what works best for your specific dataset.

Perhaps you can model per customer?

Perhaps you can model per customer group?

Perhaps you can model across all customers?

Perhaps you can model by product categories?

…

Let me know how you go.

Hi Jason,

Thanks for the reply! I was going to first try modelling per customer, but I’m not sure what model to use. I’m new to this, sorry for the silly question!

Thanks

I recommend testing a suite of models in order to discover which works best for your dataset.

This may help:

https://machinelearningmastery.com/faq/single-faq/what-algorithm-config-should-i-use

Hi Jason,

I loved this article!

How can I predict upcoming exam questions using 10 past exams? For example, what algorithms could I use, or how could I use machine learning to find the sequence? Thanks

Hmmm. That is a very hard problem.

Perhaps you can model it as a language generation problem – for fun?

Okay, thank you! And how do I do that? I am a novice. Please share any article, reading material, book, YouTube video, or your own suggestion. Really appreciate your help 🙂

It would require a lot of testing and development; there are no right answers, and you must discover what works. I don’t recommend it as a project for a beginner.

Perhaps start here on something simpler:

https://machinelearningmastery.com/start-here/#deeplearning

Thank you Jason 🙂

Hi Jason,

Thanks for the blog post. I do have some queries.

Say, for example, I have an input dataset:

2018, Q1 – Category classes 1, 2, 3

2018, Q2 – Category classes 1, 2, 3, 5

2018, Q3 – Category classes 3, 4

2018, Q4 – Category classes 1, 3, 4, 5

I want to predict 2019 Q1, e.g. category classes 1, 2, 4.

* In total, I have category classes: 1, 2, 3, 4, 5

As I see it, this looks like a combination of sequence classification and sequence prediction: using only historical data as input to predict the next set of classes as output.

What approach should I take to work on this? Categorical classification/sequence classification would require me to have the input dataset for the period being classified (in this case, it wouldn’t be a prediction).

From this blog, I also noticed that I should not shuffle my dataset?

Thanks

This might be a multi-label (not multi-class) time series classification problem, where a given interval requires the prediction of zero or more labels/classes.
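One possible way to set this up, assuming each quarter is described only by its set of classes: encode every quarter as a multi-hot vector (one 0/1 slot per class) and use the previous few quarters to predict the next one. The quarters below are taken from the question; `multi_hot`, `to_supervised`, and the lag of 2 are illustrative choices. When splitting into train and test sets, it is usually safer to split by time rather than shuffle, so the model is evaluated on quarters that come after the ones it was trained on.

```python
CLASSES = [1, 2, 3, 4, 5]

# Classes observed in each quarter, taken from the question
quarters = [
    {1, 2, 3},     # 2018 Q1
    {1, 2, 3, 5},  # 2018 Q2
    {3, 4},        # 2018 Q3
    {1, 3, 4, 5},  # 2018 Q4
]


def multi_hot(labels, classes=CLASSES):
    """Encode a set of labels as a 0/1 vector with one slot per class."""
    return [1 if c in labels else 0 for c in classes]


def to_supervised(seq, n_lags):
    """Each sample: the previous n_lags quarters in, the next quarter out."""
    X, y = [], []
    for t in range(n_lags, len(seq)):
        X.append([multi_hot(s) for s in seq[t - n_lags:t]])
        y.append(multi_hot(seq[t]))
    return X, y


X, y = to_supervised(quarters, n_lags=2)
print(len(X), y[0])  # 2 [0, 0, 1, 1, 0]

# Input for forecasting 2019 Q1: the two most recent quarters
x_next = [multi_hot(s) for s in quarters[-2:]]
```

Because each output slot is an independent yes/no decision, a neural network for this framing would typically end in one sigmoid unit per class trained with binary cross-entropy, rather than a softmax over the classes.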

A good place to start might be here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi Jason,

Your tutorials are awesome, but still, it’s hard to follow.

Can you share a toy weather-forecasting example that uses a few features?

Yes, see here:

https://machinelearningmastery.com/multivariate-time-series-forecasting-lstms-keras/

Why do you respond when someone asks for a weather forecast, but stay away when someone asks for a financial forecast?

I avoid giving advice on finance problems; here’s why:

https://machinelearningmastery.com/faq/single-faq/can-you-help-me-with-machine-learning-for-finance-or-the-stock-market

Hi,

Is there a way to generate a seed out of a sequence of numbers?

Example:

I have this list of numbers:

03 08 11 17 19 26 28 31 36 37

How can I get the seed value from this list ?

Thanks in advance

Regards

If the sequence is random or pseudo-random, then no, it’s not a learnable function.

Hi Jason,

Thank you for this great article, your other posts on LSTM are also very helpful!

It is the following ‘sequence’ definition that I have a hard time wrapping my head around.

The data that I have consists of multiple time series; say I have 200 ‘blocks’ of spatial time series. Within each block I have the location of an object per time step, say each minute, and the recorded length of each block is 2 hours. For the same time steps, I have factors that influence the next location of the object, for example wind speeds.

The time ‘blocks’ themselves do not create a complete time series, one block may be 2 hours recorded on the 28th of May in 2016, the other block may be 2 hours recorded on the 6th of June 2019, etc.

In a way, this problem can be described as the Sequence Generation problem you address in this article: I can feed in a sequence of wind speeds of the same length as the location sequence I want to predict, add constants that give the model an initial starting point, and ‘translate’ or ‘predict’ a sequence of locations.

What I do wonder is whether this model captures the characteristics within the blocks, as the new location of the object depends on where it was (at least) one time step before; hence, within such a time series block, it is more of a Sequence Prediction problem. However, this is not what I’m ultimately interested in, as I want to generate a complete motion sequence rather than predict the next motion steps given part of a location sequence. Do you think a Sequence Generation LSTM can capture this ‘within’ dependency of the time steps?

Thank you very much in advance!

Sounds like a great problem!

I would encourage you to explore different framings of the problem, e.g. per-location, per-location-time, across locations/times, etc. See what works.

Think of the problem in terms of inputs and outputs. This might also give you ideas:

https://machinelearningmastery.com/faq/single-faq/how-to-develop-forecast-models-for-multiple-sites

Let me know how you go.

Hello Doctor Jason. Say I have about 20 sequences/trajectories. Can I train my network on 5 of those sequences/trajectories and then use the network to predict the remaining 15 sequences/trajectories?

If so, do you have any examples, tutorials, or resources that I can follow? I can predict within one sequence/trajectory by going some steps back and predicting a step forward, but my goal is to predict full trajectories. Thanks.

Sure.

Yes, this might be a good place to start:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi,

Is it necessary to have an equal number of input variables during training and during prediction? I am trying to teach an LSTM network an algorithm so that if I give it one input (the first state, t=0), it predicts the final state (t=500). I have the whole sequence from t=0 to t=500 to train it with. I tried to train the network using the initial 499 steps as the training input and the 500th step as the output. But this implies that I have to input 499 steps during the prediction stage too, which completely undermines my objective of obtaining the final step from the initial time step alone.

Then I tried to train the LSTM network giving only the first and last time steps as the input and output, which resulted in overfitting. I tried simple to complex network architectures and different activation functions, but to no avail.

Can you suggest a solution? Is there any way I can train the network on all time steps but, for prediction, only need to input a single initial step?

(The algorithm is the Metropolis algorithm on the Ising model.)

Thanks in Advance…

I recommend framing the prediction problem based on how you intend to use the model.

E.g. if you want to make a prediction based on the prior 7 days of data only, then construct the model to take 7 days of input for each sample, etc.
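A minimal sketch of that framing, assuming a univariate daily series: a sliding window turns the series into samples of 7 inputs and 1 output, and the model then needs exactly 7 days of input at prediction time too. The `daily` values are made up, and `sliding_window` is a hypothetical helper, not a library function.

```python
def sliding_window(series, n_in, n_out=1):
    """Frame a series as supervised samples: n_in past values -> next n_out values."""
    X, y = [], []
    for start in range(len(series) - n_in - n_out + 1):
        X.append(series[start:start + n_in])
        y.append(series[start + n_in:start + n_in + n_out])
    return X, y


daily = [10, 12, 13, 12, 15, 16, 18, 17, 19]  # made-up daily values
X, y = sliding_window(daily, n_in=7)
for inputs, output in zip(X, y):
    print(inputs, "->", output)
# [10, 12, 13, 12, 15, 16, 18] -> [17]
# [12, 13, 12, 15, 16, 18, 17] -> [19]

# At prediction time the model needs the most recent 7 days, nothing more
latest_input = daily[-7:]
```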

Hello Jason,

In a sequence classification problem, instead of predicting the class [‘good’ or ‘bad’] from a whole input sequence [1,2,3,4,5], I want to provide only part of the sequence as input, e.g. [1,2,3], and have the network predict whether it belongs to [‘good’ or ‘bad’].

So in my case, how can i approach this issue ?

Could you suggest any links or papers?

Note: I am using LSTMs for this problem.

Perhaps adapt the example in this post:

https://machinelearningmastery.com/sequence-classification-lstm-recurrent-neural-networks-python-keras/

May I use a TimeDistributed layer after my LSTM layer, as you mentioned in

‘https://machinelearningmastery.com/timedistributed-layer-for-long-short-term-memory-networks-in-python/’

Perhaps. Not directly though.

Hello Jason,

If I follow the link you suggested (https://machinelearningmastery.com/sequence-classification-lstm-recurrent-neural-networks-python-keras/), will I be able to predict the class [‘good review’, ‘bad review’] if only part of the words are given as input to the trained model?

Overview of my work

My data contains vehicle CAN signal and dynamics data.

X_train.shape = (271,100,4)

# 271 segments, each segment is of shape 100*4

# every row in 100*4 corresponds to each Time step (t0, t1, t2, t3,…..t99)

Y_train.shape = (195,)

# each segment out of 271 segments belongs to either 0 or 1 (2 classes)

# [0,0,1,0,1,0,0,0,0,1, ..., 1,0]

X_test.shape = (31,100,4) # 31 segments of shape 100*4

Y_test.shape = (31,)

MY REQUIREMENT

After training, my model should predict the correct class (either 0 or 1) if I give only part of a segment as input. Say I send my testing data as (31,60,4), (31,70,4), (31,80,4), or (31,90,4); the model should predict which class each segment belongs to.

I would be happy if you could give me some hints on how to continue.

You must train the model in the way you intend to use it.

That means that if you want a prediction from a partial input, then you must train your model in this way.
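One way to do that, sketched under assumptions: build extra training samples from truncated prefixes of each segment (here 60, 70, 80, 90, and 100 steps), zero-pad them back to a common length, and copy each segment's label to its prefixes. The data below is random stand-in data with the X_train shape from the question and one label per segment; `make_prefix_dataset` is a hypothetical helper.

```python
import numpy as np


def make_prefix_dataset(X, y, lengths, total_len):
    """Augment (samples, timesteps, features) data with truncated prefixes.

    For every L in `lengths`, keep the first L timesteps of each segment,
    zero-pad the rest up to `total_len`, and reuse the segment's label.
    """
    samples, labels = [], []
    for L in lengths:
        padded = np.zeros((X.shape[0], total_len, X.shape[2]), dtype=X.dtype)
        padded[:, :L, :] = X[:, :L, :]
        samples.append(padded)
        labels.append(y)
    return np.concatenate(samples), np.concatenate(labels)


rng = np.random.default_rng(0)
X_train = rng.normal(size=(271, 100, 4))  # random stand-in data
y_train = rng.integers(0, 2, size=271)     # assumed: one label per segment
X_aug, y_aug = make_prefix_dataset(X_train, y_train, [60, 70, 80, 90, 100], 100)
print(X_aug.shape, y_aug.shape)  # (1355, 100, 4) (1355,)
```

If the padded arrays are fed to a Keras LSTM, a `Masking(mask_value=0.0)` layer in front of it lets the LSTM skip the padded steps, assuming no real timestep is exactly all zeros. At prediction time, a (31,60,4) test array would be padded the same way to (31,100,4).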

Hi Jason, I’m completely lost trying to choose the type of predictive model for my problem. Is it an autoregressive model, a Conditional Random Field, a Hidden Markov Model, or something else? Can you please give me some advice?

78, 18, 51, 89, 19, 43, 62, 28, 94, 49

Suppose every day I’m given 10 data points, like the example listed above. They are numbers generated by two devices, Device A and Device B. Each device can generate numbers from 0 to 9.

The first number in the data is generated by Device A, while the second number is generated by Device B. For instance, for the first data of “78”, “7” was generated by Device A and “8” was generated by Device B. Similarly, for the last data of “49”, “4” was generated by Device A, and “9” was generated by Device B.

I want to be able to predict the next outcome variable after the last “49”.

I have a total of 300 historical data points over 30 days.

From my initial investigation of the 300 data points, each device tends to produce repeated sequences. For instance, Device A repeats the sequence “6-2-9-4” (as in the last 4 data points), meaning this sequence appeared twice within Device A’s 300 historical data points. As another example, the sequence “8-1-9-9” (the 2nd to the 5th data points) from Device B also appeared twice. Each device produces at least three repeated sequences.

I’d like to predict the next outcome variable after the last “49”. Which model is more appropriate?

Thank you in advance!

Yes, follow this process:

https://machinelearningmastery.com/how-to-develop-a-skilful-time-series-forecasting-model/

Thank you for your reply, Jason. May I ask why you think this could be a time series problem?

I’m sorry for the misrepresentation, Jason. The data was taken every Monday, Thursday, and Friday, 10 data points per day. Can I still model it as a time series problem?

Perhaps this post will help you to determine if time series forecasting is an appropriate framing of your dataset:

https://machinelearningmastery.com/time-series-forecasting/

I don’t know; I got the impression that the observations were ordered by time. Sorry if that was incorrect.

Thanks again, Jason! I think it’s a time series.

Great.

I have read so many of your tutorials and blogs and it helped me a lot. You are a legend.

Thanks, I’m happy they’re useful to you!

Hi Jason,

Thanks for all your tutorials about time series and their generation.

I’ve just come up with a new problem where I’m not sure ML is the right approach, or if it’s even possible at all. Can you please give me your opinion on this project?

It’s about multiple vibration motors that run simultaneously and each play 5 different patterns. They aim to stimulate certain emotions (my labels).

Is it possible, given an emotion label, to generate new vibration patterns for each motor with similar attributes?

I’ve considered interpreting my 5 vibration sequences as a matrix and performing something like a CNN on a 5xn matrix, where n is the number of vibrations in each sequence, or using some kind of RNN you presented in some of your articles.

If you have any ideas, I’d appreciate your view.

Best wishes

Kenny

Yes. I believe you are looking for a generative model for time series data.

I don’t have a tutorial on this topic, but perhaps some searching on Google or scholar.google.com will point you in the right direction.

Thank you!! I’ll check this out.

Hi Jason,

Having you is a blessing for ML seekers like me, thanks!

I’ve just got a problem that I’m struggling to formulate and define as an ML problem.

The dataset contains blood units that have been collected from a supplier and, after going through a sequence of statuses (each status occurs at a certain time and location), end in one of the statuses “Transfused” or “Discarded”.

The thing that I’m looking for is the pattern of discards (or something that helps me predict the possibility of being discarded for a certain blood unit).

Please let me know if more clarification is needed.

I’d appreciate your advice or a reference to some material.

Best regards,

Jaber

Good question Jaber, I believe this framework may help:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Hello Jason,

Firstly, I am very thankful for all your ML blogs and books. They are very helpful for a fresher like myself.

I am currently working with an hourly solar irradiance time series. I have hourly data for several years, which is clustered into representative/typical days (say 10 days), and each day of the year is assigned to one such typical-day number/index. This results in a sequence of 365 terms with values from 1 to 10 for each year, and I have this sequence for several years. I need a model to forecast this sequence. I tried using a SARIMA model, but I am not sure how to use it for discrete numbers.

Please help me find a time series classification or categorization model that could also accommodate seasonality.

Best regards,

Anuj.

Perhaps try some of the models here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series
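Because the typical-day index is a category rather than a quantity, a simple baseline to compare against is a first-order Markov chain: count how often each typical day follows each other one and read off the empirical probabilities for tomorrow. This is a swapped-in technique for illustration, not something from the tutorials above; the helpers and the short sequence are hypothetical.

```python
from collections import Counter, defaultdict


def fit_transitions(sequence):
    """Count how often each category is followed by each other category."""
    counts = defaultdict(Counter)
    for today, tomorrow in zip(sequence, sequence[1:]):
        counts[today][tomorrow] += 1
    return counts


def next_day_probs(counts, today):
    """Empirical probability of each category following `today`."""
    following = counts.get(today, Counter())
    total = sum(following.values())
    return {k: v / total for k, v in following.items()} if total else {}


typical_days = [1, 1, 2, 1, 2, 2, 3, 1]  # hypothetical typical-day indices
counts = fit_transitions(typical_days)
print(next_day_probs(counts, typical_days[-1]))
```

To bring in seasonality, the transition table could be keyed on (month, today) instead of today alone, at the cost of fewer counts per cell.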

Hey Jason,

Thank you very much for your dedication, your selflessness is a huge help for our beginners.

If I have a set of images of temperature change (about 27,000 frames) showing the trend of the temperature change, can I predict the following 200 frames of the trend from these previous 27,000 frames, given that my training set contains no information about the subsequent temperature changes and only the first 27,000 frames are in it?

Best wishes,

mallota

Perhaps try it and see?

Hi Jason,

Thanks for all your tutorials and blog posts!

I’m working on an educational problem for high school students. In each year (n), student (i) participates in several courses (j), and I have his/her grades for each course (A). When students finish high school, they all submit their grades and a “Statement of Purpose” letter (B) to college. The college then ranks the students (C) and decides to either accept or reject (D) them. Therefore, for each student I want to predict his/her ranking, as well as acceptance or rejection by a college, from his/her grades in each course across different years. So my inputs are A(i,j,n) and B(i), while my outputs are C(i) and D(i).

Now I want a machine learning model to predict C(i) and D(i) based on the A(i,j,n) and B(i) inputs. To my understanding, my dataset is sequential data and I need to use a “sequence prediction” model; is this correct? And if so, what’s the best method for doing this? Should I use an RNN?

Again thanks for your help.

Rajit

That sounds like a fun project.

Perhaps try modeling it and see if the framing is effective?

Hello Jason, I would like to ask:

Is it possible to do sequence labeling or tagging with XGBoost?

If so, kindly direct me to a link where I can read more about it.

I have searched a lot but have yet to find what I am looking for. Thanks in advance.

Perhaps.

I don’t have examples sorry.

Hi Jason,

I have text data.

I need to predict the mean funniness (estimated funniness), from 0 to 3, for every single sentence.

Can you tell me how sequence methods can help me?

Start by collecting or preparing a dataset made of text and funniness scores.

Thank you.

I already have a labeled dataset. Now, how do I start working on it?

You can follow the tutorials here to learn how to model sequence prediction problems with neural networks:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi Jason, thank you for your great tutorials!

Do you think modern NLP transformers with long memory like GPT-2 could outperform LSTM on non-language sequence prediction tasks like medical history or user behavior modeling? I googled hard, but didn’t find any examples of this approach.

Good question – probably.

Perhaps try it on your dataset and see?

Thank you Sir for your help.

You’re welcome.

Hello Jason,

I came to this article while searching for my problem on Google.

So far, I’m a novice in the data science area.

Problem / requirement statement:

We have a power generator that runs continuously. Its suggested maintenance time is after 1000 hours, but we don’t want to rely on its documented schedule; there could be times when the machine requires early maintenance.

So we want to devise a prediction mechanism by which we can pre-plan the maintenance window and inform the teams about its downtime.

We continuously receive its sensor data and store all of that information. I am not sure whether this is a sequence prediction problem. Is it related to LSTM? If yes, then how? If no, then which algorithm or technique should we consider to address this problem?

Your guidance and input to this would be very helpful.

Perhaps model it as a time series classification task. The tutorials here will help you to get started:

https://machinelearningmastery.com/start-here/#deep_learning_time_series
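As a sketch of that time series classification framing, assuming hourly readings and a record of when maintenance was actually needed: slide a fixed window over the readings and label each window 1 if a failure occurs within the next `horizon` hours. `label_windows`, the readings, and the failure time are hypothetical; in practice each reading would be a vector of sensor values rather than a single number.

```python
def label_windows(readings, failure_times, window, horizon):
    """Frame sensor readings as a time series classification dataset.

    readings: one value per hour (a vector per hour in a real system).
    failure_times: hour indices at which maintenance was actually needed.
    A window covering hours [end - window, end) is labelled 1 if a failure
    falls in hours [end, end + horizon), otherwise 0.
    """
    X, y = [], []
    for end in range(window, len(readings) + 1):
        X.append(readings[end - window:end])
        failure_soon = any(end <= f < end + horizon for f in failure_times)
        y.append(1 if failure_soon else 0)
    return X, y


readings = [1, 1, 2, 3, 5, 8]  # hypothetical hourly sensor values
X, y = label_windows(readings, failure_times=[5], window=3, horizon=2)
print(y)  # [0, 1, 1, 0]
```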

Alright, thanks. I will read it through and let you know if I face any problems.

Hi Jason. I have a question about how to solve sequence comparison tasks. Say I’m trying to predict the winner of a match between two tennis players, and as my inputs I want two sequences covering their respective careers (all previous matches and relevant stats). How would I go about modelling this with LSTMs? My feeling is that I wouldn’t want one large sequence model, as there isn’t a relationship between the neighbouring timesteps, so I would imagine I want two different LSTMs that merge somehow?

Regards,

Louis

A rating system might be more appropriate than an LSTM.

Nevertheless, a sequence of scores or prior outcomes might be a start, e.g. a time series classification task for win/loss.
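For reference, one widely used rating system is Elo. The sketch below shows its standard expected-score and update formulas; the starting rating of 1500 and K=32 are common but arbitrary choices, and the function names are hypothetical. Processing each player's matches in date order yields ratings whose difference (or expected score) could then serve as an input feature for a win/loss classifier.

```python
def elo_expected(rating_a, rating_b):
    """Expected score (roughly, win probability) of player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a, rating_b, a_won, k=32):
    """Return both players' ratings after one match between them."""
    expected_a = elo_expected(rating_a, rating_b)
    delta = k * ((1.0 if a_won else 0.0) - expected_a)
    return rating_a + delta, rating_b - delta


# Two new players start at 1500; A wins their first match
a, b = elo_update(1500, 1500, a_won=True)
print(a, b)  # 1516.0 1484.0
print(round(elo_expected(a, b), 3))  # A is now favoured in a rematch
```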

Thanks for your reply. Interesting idea. Perhaps an encoder-decoder setup and then train on the winner of two encoded players?

I’m attempting to train on the sequence of prior outcomes using a shared LSTM layer from two input sequences and then a softmax classification layer but it is struggling to learn. Potentially not enough training data.

There may be many ways to frame the problem. I’d encourage you to test many approaches, see what works/sticks.

Hi Jason,

I am working on a problem where the input is a sequence, like an acceleration vs. time signal. However, the output is another quantity (not acceleration). Could you please suggest any traditional ML methods (other than RNNs) that use the sequential information in the input data to predict a different output quantity? I think these kinds of problems don’t belong to sequence prediction, sequence classification, sequence generation, or sequence-to-sequence prediction. Thanks,

Yes, the tutorials here will provide a starting point:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hello, Jason

I’m really happy to read this.

I have a quick question,

what is the difference between ‘sequence generation’ and ‘sequence to sequence’?

To me, they seem the same, because both of them generate sequences.

Could you please tell me the difference between them?

Thank you

Perhaps seq2seq assumes both a sequence in and out and sequence generation does not make an assumption about the impetus.

Hello Jason.

Thank you for the concise article. I liked how you classified sequence modeling tasks that make it easy to visualize real-world use cases.

Is it possible to set up a sequence prediction task such that, at each time step, features are fed in as input and features are produced as output? The features I mention are the same ones that would usually be fed into a feedforward neural network for classification/regression tasks. From what I’ve noticed, every example uses IDs for both input and output in sequence modeling tasks. Is this the only way?

Thank you.

Not sure I follow your question.

There is no need to pass in IDs; they are (most likely) not predictive. Perhaps this will help:

https://machinelearningmastery.com/how-to-connect-model-input-data-with-predictions-for-machine-learning/

Hello,

Thank you for this tutorial.

I have a dataset with some gait parameters (step length, stride length, etc.) of 100 people, measured 3 times at different times (every 6 months). Now I have to train my model on this dataset and predict whether a new person has a disease or not from the data given for them. How can I feed all 3 sets of parameters into my model for training while considering the time factor? I checked time series forecasting, but it looks like that requires data from continuous time instances. It doesn’t seem like a sequence prediction problem either. How can I go about this?

Perhaps prototype a few different models with different framings of the data and discover what works well.

Jason, can you please help me predict a new sequence from a set of sequences?

Example:

a1 a2 a5

a1 a2 a4

Output sequence: a1 a2, i.e. the output contains the variables with the maximum number of appearances.

This might be a good place to start:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi Jason,

Thanks for the wonderful article!

I have data that shows a sequence of events at different times, and the target variable is whether a customer will buy a product or not (binary).

Example:

cust_id event1 time1 event2 time2 event3 time3 …… event7 time7 target

123 a 15 b 55 d 12 a 23 0

245 b 25 a 65 e 25 d 15 1

Event letters repeat in my case; for example, for customer 123 the sequence is abdecg and then ‘a’ again.

Similarly, for each customer the events in the sequence might repeat, like abcaabdf, bdbdcf, etc.

How can I handle such data? What should the input data format be for an RNN?

Thanks in advance!

Perhaps try exploring models per customer, across customer groups, across all customers, and compare results.

Hi Jason ,

Thanks for the reply.

Could you please elaborate on how the input data should be structured in this case? In matrix form? What would it look like as an input to the model?

Also, on comparing models across customers: should there be as many models as there are customers?

This can help you prepare inputs:

https://machinelearningmastery.com/faq/single-faq/what-is-the-difference-between-samples-timesteps-and-features-for-lstm-input

You may need to experiment around the number/type of models to see what works/makes sense.
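As a rough sketch of one possible input structure, each customer becomes one sample, each (event, time) pair becomes one timestep, and each timestep has a one-hot event type plus the time value as features, giving a 3D array of shape (samples, timesteps, features). The two customers are taken from the question; the event vocabulary a to g and the helper `encode_customer` are assumptions. Repeated events need no special handling, since each occurrence gets its own timestep.

```python
import numpy as np

EVENTS = ["a", "b", "c", "d", "e", "f", "g"]  # assumed event vocabulary


def encode_customer(pairs, max_steps):
    """Encode one customer's (event, time) pairs as (max_steps, n_features).

    Features per timestep: one-hot event type followed by the time value.
    Unused trailing timesteps stay all-zero as padding.
    """
    arr = np.zeros((max_steps, len(EVENTS) + 1), dtype=np.float32)
    for t, (event, elapsed) in enumerate(pairs[:max_steps]):
        arr[t, EVENTS.index(event)] = 1.0
        arr[t, -1] = elapsed
    return arr


# Rows from the question: (event, time) pairs and the binary target
customers = {
    123: [("a", 15), ("b", 55), ("d", 12), ("a", 23)],
    245: [("b", 25), ("a", 65), ("e", 25), ("d", 15)],
}
targets = {123: 0, 245: 1}

X = np.stack([encode_customer(p, max_steps=7) for p in customers.values()])
y = np.array([targets[c] for c in customers])
print(X.shape)  # (2, 7, 8) = (samples, timesteps, features)
```

In practice the time values would usually be scaled (e.g. to 0-1) before training.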

Thanks for the article. It was useful!

Thank you.

You’re welcome.

Hi Jason,

So, I have a dataframe in which each row represents some low-level user activity on a computer associated with a higher-level business process activity. A high-level business process activity is composed of a sequence of such low-level activities, each represented by a row. The columns of the dataframe look like this:

| Business Process Activity | Case | Application | Activity of the User | Username | Time since startup | Sender email | Sender name | Receiver email | Attachment filename | Body of document |

The rows contain the low-level activities associated with a business process activity, with each low-level activity being part of a sequence identified by the case.

Now, I want to convert each row into a feature vector for training, but the columns hold different types of data. Some are numerical values, some are textual data, and some columns are empty for some rows. How can I convert these into vectors for training?

This framework will help:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

If there is a time series of observations, this too will help:

https://machinelearningmastery.com/time-series-forecasting-supervised-learning/

Hello Jason, what amazing material; I have learnt a lot, thanks.

My question is this: I have a dataset that contains only a single column of numbers from 0-99 in an unknown sequence. I am trying to train a model on these values so that, given any value from the dataset, the model predicts the next one.

How to do that because many values are also repeating so please give me any suggestions.

Thanks in anticipation.

Thanks.

Perhaps start with a linear model like SARIMA:

https://machinelearningmastery.com/how-to-grid-search-sarima-model-hyperparameters-for-time-series-forecasting-in-python/

Thanks, Jason, for all your wonderful tutorials. I have been working on a use case with a sequence of events in IT operations. There are a number of situations where one event happens and, often shortly afterward, another event that looks completely different occurs. This happens repeatedly across thousands of events every day.

I am looking for your advice on how to identify all such situations, across different kinds of events, including those that are not obvious.

Perhaps start by thinking about what you want to predict. E.g. an event, the number of events in an interval, whether an event occurred in an interval, etc.
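To act on the idea of predicting whether an event occurs in an interval, a simple descriptive first step is to measure, for every pair of event types (a, b), how often an occurrence of a is followed by b within a fixed time window. The sketch below assumes the log is a list of (timestamp, event_type) tuples; the window length, event names and helper names are hypothetical.

```python
from collections import Counter

def follow_counts(events, window):
    """For each ordered pair (a, b) of different event types, count how many
    occurrences of `a` are followed by at least one `b` within `window`
    time units. `events` is a list of (timestamp, event_type) tuples."""
    events = sorted(events)
    counts = Counter()
    for i, (t_a, a) in enumerate(events):
        seen = set()  # count each follower type at most once per occurrence of a
        for t_b, b in events[i + 1:]:
            if t_b - t_a > window:
                break  # events are sorted, so nothing later can qualify
            if b != a and b not in seen:
                counts[(a, b)] += 1
                seen.add(b)
    return counts

def follow_rates(events, window):
    """Share of each event type's occurrences that are followed by b."""
    totals = Counter(e for _, e in events)
    return {pair: n / totals[pair[0]]
            for pair, n in follow_counts(events, window).items()}

# hypothetical IT-operations log: (seconds, event type)
log = [(0, "disk_full"), (5, "db_timeout"), (100, "disk_full"),
       (103, "db_timeout"), (200, "cpu_spike")]
rates = follow_rates(log, window=10)
print(rates)  # {('disk_full', 'db_timeout'): 1.0}
```

A high rate only matters if b is not simply common everywhere, so it is worth comparing each rate with how often b appears in a random window of the same length.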

Hi Jason, thank you so much for all your work. You’ve aided me numerous times in understanding very advanced concepts in a very intuitive way.

I currently have a problem that I hope you can help with.

I am working with a dataset that has 7 features (5 personality-type dimensions, the outcome, and successive answers) and a target with 3 possible classes. The classes are mutually exclusive, so I have developed a multi-class classification model.

My problem is that I want to take into account how personality type may bear on successive answers. That is, depending on personality type, someone may choose one class more frequently than the others, and I want to make sure the model takes that sequential aspect into account. However, I am not sure whether the way I currently encode successive answers captures that (every time a consecutive response is recorded, the count is incremented by 1).

Can you please explain, or point me to literature on, how to perform that calculation in the most appropriate way given the goal?

Thanks again for all your work.

You’re welcome.

I recommend following this process to work through your project:

https://machinelearningmastery.com/start-here/#process
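One possible alternative to a single running counter, offered as an illustrative assumption rather than as the recommended approach, is to give the model each response’s recent history explicitly: a one-hot encoding of the previous answer plus the length of the current streak of identical answers. Features at step t use only answers before t, so they do not leak the label being predicted. The helper name and the example answers are hypothetical.

```python
def history_features(answers, n_classes):
    """For each response t, return [one-hot of answer t-1] + [streak], where
    streak is how many times in a row answer t-1 had been given up to t-1
    (all zeros at t = 0, since there is no previous answer)."""
    feats = []
    prev, streak = None, 0
    for a in answers:
        one_hot = [1 if prev == c else 0 for c in range(n_classes)]
        feats.append(one_hot + [streak])
        # update the running state with the current answer
        streak = streak + 1 if a == prev else 1
        prev = a
    return feats

answers = [0, 0, 2, 2, 2, 1]  # hypothetical successive class choices
features = history_features(answers, n_classes=3)
```

The personality dimensions would then be appended to each row, so a model can learn interactions such as a given personality type repeating the same class more often.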

Hi Jason,

Thank you for your informative articles.

I am trying to use sequence prediction to predict the “next click” of a user browsing a web application, based on their session history and other metadata, such as user type, age, etc.

It is not clear to me how to prepare the data, given that each session in the history has a different length. Unlike stock forecasting, which works on one long series of values, each user session has a start and an end, and it’s not clear to me how to model them.

Also, how can I add the “metadata” in addition to the clicks?

Thanks!!

You’re welcome.

Each item/page is probably a category; you can represent it with an ordinal encoding, a one-hot encoding, or an embedding.

You can have one input to the model for the categorical data and another for the other metadata.
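A minimal sketch of that data preparation, assuming sessions are lists of page names and each session has one row of numeric metadata: pages are ordinal-encoded with 0 reserved for padding, every prefix of a session becomes one sample that is left-padded to a fixed length, the next click is the target, and the session’s metadata is copied alongside as a second input array. The helper name, page names and metadata values are hypothetical.

```python
import numpy as np

def encode_sessions(sessions, metadata, max_len):
    """Build next-click training data from variable-length sessions.

    sessions: list of lists of page names, one list per session
    metadata: list of numeric feature lists, one per session
    Returns X_seq (samples, max_len) of page ids left-padded with 0,
    X_meta (samples, n_meta), y (samples,) with the next page id, and vocab."""
    pages = sorted({p for s in sessions for p in s})
    vocab = {p: i + 1 for i, p in enumerate(pages)}  # 0 is reserved for padding
    X_seq, X_meta, y = [], [], []
    for s, meta in zip(sessions, metadata):
        ids = [vocab[p] for p in s]
        for t in range(1, len(ids)):
            prefix = ids[max(0, t - max_len):t]  # clicks seen so far, truncated
            X_seq.append([0] * (max_len - len(prefix)) + prefix)
            X_meta.append(meta)
            y.append(ids[t])
    return np.array(X_seq), np.array(X_meta, dtype=float), np.array(y), vocab

# hypothetical sessions and per-session metadata [user_type_code, age]
sessions = [["home", "search", "product"], ["home", "cart"]]
metadata = [[1, 34], [0, 27]]
X_seq, X_meta, y, vocab = encode_sessions(sessions, metadata, max_len=3)
```

In a Keras model, `X_seq` could feed an `Embedding` layer created with `mask_zero=True` so that padded positions are ignored downstream, while `X_meta` goes to a second input branch.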

Hi Jason,

I have a sequence of 90 arrays as input and want to predict the 91st array as output. Could you please help me?

Perhaps start with linear models here:

https://machinelearningmastery.com/start-here/#timeseries
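As a concrete first step toward the linear models linked above, here is a sketch that assumes the 90 arrays are equal-length numeric vectors, one per time step, and that each depends linearly on the one before (a VAR(1)-style model fit by ordinary least squares). The data is synthetic, generated from a known rule so the fit can be checked, and the helper names are hypothetical.

```python
import numpy as np

def fit_linear_next(seq):
    """Least-squares fit of seq[t + 1] ≈ seq[t] @ W[:-1] + W[-1].

    seq has shape (T, d): T arrays of d values each. Returns W of shape (d + 1, d)."""
    X = np.hstack([seq[:-1], np.ones((len(seq) - 1, 1))])  # add a bias column
    W, *_ = np.linalg.lstsq(X, seq[1:], rcond=None)
    return W

def predict_next(W, last):
    """Predict the array that follows `last`."""
    return np.append(last, 1.0) @ W

# synthetic stand-in for the 90 input arrays (d = 2), generated by a known
# linear rule x[t+1] = A @ x[t] + b so the fit can be checked
A = np.array([[0.5, 0.1], [0.0, 0.9]])
b = np.array([1.0, 0.0])
seq = [np.array([1.0, 2.0])]
for _ in range(89):
    seq.append(A @ seq[-1] + b)
seq = np.array(seq)             # shape (90, 2)
W = fit_linear_next(seq)
x91 = predict_next(W, seq[-1])  # the predicted 91st array
```

Because the rows are used as row vectors, `W[:-1]` estimates the transpose of `A` and `W[-1]` estimates `b`. On real, noisy data the fit is approximate, and a model with longer lags may be needed.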

Thank you, Jason, for the valuable information. I have a question:

Suppose we have past states of an object (each with several attributes, some static and others dynamic) and we want to predict the future state of the object. As I understand it, this is a sequence generation task. Can we simply include the static attributes with the input, or is it better to add them in a different way?

You’re welcome.

Yes, you can have a model that has one input for the sequence and another input for the static data, called a multi-input model.

You must use the functional API; this will help you get started:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Hi Jason,

Thanks for the informative articles. I have a question:

Say I have a sequence [1,2,3,4,2,5,3,4] that is associated with 3 categorical features. I want to predict the future sequence with the 3 categorical features as input (basically the reverse of sequence classification).

Could you kindly suggest a workflow?

Thank you very much.

I don’t think that would be enough data, i.e., generating a sequence from a categorical input alone.

Hi Jason,

I need your help. I am using an LSTM autoencoder for anomaly detection. I have different data measurements: valid measurements are labeled V, and invalid ones are labeled with other labels such as G and H. We would like to train a model that flags the invalid measurements as anomalies. I’m not sure whether I have conveyed my question properly. Also, my dataset is a collection of JSON files, and I don’t know how to use JSON files in Python code. I didn’t find any sample code. Could you help with this?

I recommend loading your data into NumPy arrays as the first step. Sorry, I don’t have an example of loading this type of data.
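A minimal sketch of that first step, assuming (hypothetically) that each JSON file holds one record with an equal-length list of measurements and a label; `value_key` and `label_key` are placeholder names to adjust to the actual file layout. Since an autoencoder for anomaly detection is typically trained on normal data only, the second helper separates the valid (V) rows from the rest.

```python
import json
from pathlib import Path

import numpy as np

def load_json_dir(folder, value_key="measurements", label_key="label"):
    """Read every *.json file in `folder` into a 2D float array and a label array.

    Assumes each file holds one record such as
    {"measurements": [0.1, 0.4, ...], "label": "V"} with equal-length lists."""
    X, labels = [], []
    for path in sorted(Path(folder).glob("*.json")):
        with open(path) as f:
            record = json.load(f)
        X.append(record[value_key])
        labels.append(record[label_key])
    return np.array(X, dtype=float), np.array(labels)

def split_for_anomaly_detection(X, labels, normal_label="V"):
    """Train on normal rows only; keep the rest (e.g. labels G and H) aside
    to check later that the autoencoder reconstructs them poorly."""
    normal = labels == normal_label
    return X[normal], X[~normal]
```

If each file instead holds a time series per record, the same loop applies, but the arrays would need reshaping to [samples, timesteps, features] before they go into the LSTM.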

Hello Jason,

Thank you so much for the amazing tutorial.

I am currently trying to develop a model to predict a sequence of hourly bids for an electricity market. I am using historical bids, each consisting of (quantity of electricity, price). For each hour of the day there are many bids (let’s say 1,500 per hour). My input will be the installed capacity of each electricity generation technology (7 different values). The thing is, I am new to neural networks and am trying to learn how to develop such a model. I would really appreciate any insights on how to get this to work, or any reference I could use for a similar problem. Is it possible to predict the bids directly, or do I have to split them into quantity and price first?

Thank you so much for your time and assistance.

You’re welcome.

This might be a good place to start:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi Jason,

Thank you for your articles; they are always super informative and useful!

I want to predict, given a sequence of trues and falses, whether the next one will be true or false. Of course, it would be nicer if I could predict a longer sequence, because if that were accurate I would have the proof I’m looking for. To put it simply, I want to check whether the matchmaking of a popular online game rigs matches to push players toward a near-50% win rate. If there is some kind of manipulation, it should be possible to build something that guesses, given the results of someone’s last x games, whether they are likely to lose the next game; if there is no manipulation, it should be impossible to build something like that, especially if the dataset contains data from many different players who don’t play together. For this reason, the training data will simply be the sequence of wins and losses over the last x games for y people. What I wanted to ask is: how much data would be optimal to get decent results (for example, the last 100 games of 100 players), and which libraries/frameworks/models would be best for this specific case?

Thank you in advance for your time.

It is difficult to tell, and it really depends on the nature of the sequence. I can always construct one like this: X[t+N] = X[t] for some large N, while X[t+1] is random and independent of X[t] in all other cases. Then, whatever history length you choose for your samples, I can always use a larger N to make your model useless.
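The X[t+N] = X[t] construction can be checked numerically. This sketch, using only Python’s standard library, builds such a sequence of wins and losses with a hypothetical period of 500 and measures how often each value matches the value `lag` steps earlier: adjacent games agree about half the time, as independent coin flips would, while games exactly one period apart always agree. A model that only sees the last 100 games would therefore find nothing to learn, even though the sequence is fully deterministic after the first period.

```python
import random

def periodic_random_sequence(n_period, length, seed=0):
    """First n_period values are fair coin flips (True = win); after that,
    x[t] = x[t - n_period]. Adjacent values look independent, yet the
    whole sequence is determined by its first n_period values."""
    rng = random.Random(seed)
    seq = [rng.random() < 0.5 for _ in range(n_period)]
    for t in range(n_period, length):
        seq.append(seq[t - n_period])
    return seq

def lag_agreement(seq, lag):
    """Fraction of positions t where x[t] == x[t - lag]."""
    matches = [seq[t] == seq[t - lag] for t in range(lag, len(seq))]
    return sum(matches) / len(matches)

seq = periodic_random_sequence(n_period=500, length=5000)
print(lag_agreement(seq, 1))    # close to 0.5: the last game says little
print(lag_agreement(seq, 500))  # 1.0: the game 500 back says everything
```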

Hi Jason,

I am doing a project where, for a specific role (the current role), I want to predict the next three roles (in sequence) based on the current role, region, technical skills, and average experience. Can you tell me which of the sequence prediction types in your post this problem falls under? Also, how can I approach this problem, and which methods can I use?

That is close to sequence prediction. Depending on how your sequence is presented, different models can be used. This one is an example: https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

Hey, I’ve been pondering this for quite some time and have tried a few naive approaches, but none of them seem to work well. Is it possible to train a neural network to convert all odd numbers to the nearest even number and then recover the original data (kind of like an invertible neural network)? I think an RNN is definitely needed to get anywhere close to the required model.

It doesn’t sound mathematically possible to get the same data back. But did you try to make a model for that?
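The reason recovery looks impossible can be shown with a worked example: mapping each odd number to an even neighbor sends two different inputs to the same output, so the original cannot be uniquely recovered from the output alone. The sketch assumes odd n maps to n - 1; rounding up instead just changes which pairs collide. The helper names are hypothetical.

```python
def to_even(n):
    """Map odd n to n - 1 (one of its two nearest even numbers; this choice
    is an assumption) and leave even n unchanged."""
    return n - (n % 2)

def collisions(values):
    """Group inputs by their mapped output; any output with more than one
    input cannot be mapped back to a unique original value."""
    groups = {}
    for v in values:
        groups.setdefault(to_even(v), []).append(v)
    return {out: ins for out, ins in groups.items() if len(ins) > 1}

print(collisions(range(6)))  # {0: [0, 1], 2: [2, 3], 4: [4, 5]}
```

A model trained on real data might still guess many originals correctly if the data has exploitable regularities (for example, odd values being rarer than even ones), which could explain partial accuracy, but without the discarded bit (n % 2) exact recovery is not possible in general.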

Yes, I did. I used an RNN model for this one, but it didn’t get everything right; it made a few mistakes here and there, and about 95% of the data was correct, so I thought this was doable.

A few members of the Stack Overflow community suggested using an autoencoder.

Hello,

If we want to predict the probability of the next digit appearing in a sequence, should we use a Markov chain? For example, the probability of 2 appearing after 1 is some percentage, and then the probability of 3 appearing after that 2 is some percentage, so in the end I have 123.

Hi Christina…I would recommend proceeding with your approach.
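A first-order Markov chain is a reasonable starting point for this. The sketch below estimates P(next digit | current digit) from counts of adjacent pairs and multiplies the transition probabilities along a path such as 1 → 2 → 3; the digit sequence is made up for illustration and the helper names are hypothetical.

```python
from collections import Counter, defaultdict

def transition_probs(seq):
    """Estimate first-order Markov transition probabilities P(next | current)
    from counts of adjacent pairs in `seq`."""
    counts = defaultdict(Counter)
    for cur, nxt in zip(seq, seq[1:]):
        counts[cur][nxt] += 1
    probs = {}
    for cur, c in counts.items():
        total = sum(c.values())
        probs[cur] = {nxt: n / total for nxt, n in c.items()}
    return probs

def path_prob(probs, path):
    """Probability of the transitions in `path`, given its first element."""
    p = 1.0
    for cur, nxt in zip(path, path[1:]):
        p *= probs.get(cur, {}).get(nxt, 0.0)  # unseen transition -> 0
    return p

digits = [1, 2, 3, 1, 2, 1, 2, 3]  # hypothetical observed digits
probs = transition_probs(digits)
print(probs[2])                     # {3: 0.6666666666666666, 1: 0.3333333333333333}
print(path_prob(probs, [1, 2, 3]))  # 0.6666666666666666
```

A first-order chain only conditions on the current digit. If the next digit depends on a longer history, a higher-order chain (conditioning on the last k digits) or a sequence model such as an LSTM may fit better.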

Hi Jason, thank you for this nice article. If I want to perform sequence generation using an LSTM, how is the mean squared error defined? Is the squared error averaged over both the number of test (or training) instances and the number of elements in each predicted test (or training) sequence?

Hi Pratibha…The following discussion may add clarity:

https://stackoverflow.com/questions/57968421/mean-squred-error-interpretation-in-lstm-model-bidirectional-or-multiparallel
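One common convention, assumed here rather than taken from the linked discussion, is to average over everything: sum the squared error over all N sequences, T time steps and F features, then divide by N·T·F. When every predicted sequence has the same length, this equals the average of the per-sequence MSEs, which the sketch below checks on made-up numbers.

```python
import numpy as np

def sequence_mse(y_true, y_pred):
    """MSE for predicted sequences: average the squared error over every
    instance, time step and (if present) feature."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.mean((y_true - y_pred) ** 2)

# two hypothetical target sequences of length 3 and their predictions
y_true = [[1, 2, 3], [0, 0, 0]]
y_pred = [[1, 2, 4], [0, 1, 0]]
overall = sequence_mse(y_true, y_pred)                               # 2 / 6
per_sequence = [sequence_mse(t, p) for t, p in zip(y_true, y_pred)]  # 1/3 each
```

With variable-length (padded) sequences the two averages can differ, and padded steps should be excluded from the mean.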

Thanks

I have a numerical sequence dataset for a classification task. My dataset, in CSV format, looks like this:

First row: [[0,1,0],[5,0,1],[4,1,1]] => target = 5

Second row: [[1,2,0],…, [5,0,1]] => target = 2

Third row: [[5,1,0],[5,0,2],[6,0,0],[1,2,0]] => target = 3

…

When I train a transformer on the dataset, accuracy doesn’t rise above 48%. I don’t know what the problem is. I have been using common tokenizers such as a word tokenizer or the BERT tokenizer.

I’m not sure whether I’m using the right tokenizer, or whether I need to do some preprocessing on my data.

Please guide me.

Hi Moha…The following resource may be of interest:

https://machinelearningmastery.com/transformer-models-with-attention/
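One possibility worth checking, offered as an assumption rather than a diagnosis: word and BERT tokenizers are built for natural-language text, so they treat a row like `[[0,1,0],[5,0,1]]` as a string of characters and lose its numeric structure. The sketch below instead parses each row into a numeric array of shape (samples, timesteps, features), zero-padded to a fixed length, with a mask marking real steps. The helper name and `max_steps` are hypothetical; the example rows are the first and third rows from the question.

```python
import ast

import numpy as np

def parse_rows(rows, max_steps):
    """Parse strings like "[[0,1,0],[5,0,1]]" into a zero-padded float array
    of shape (samples, max_steps, n_features) plus a boolean mask that is
    True for real (non-padded) steps."""
    seqs = [ast.literal_eval(r) for r in rows]
    n_features = len(seqs[0][0])
    X = np.zeros((len(seqs), max_steps, n_features))
    mask = np.zeros((len(seqs), max_steps), dtype=bool)
    for i, s in enumerate(seqs):
        s = s[-max_steps:]  # keep the most recent steps if a row is too long
        X[i, :len(s)] = s
        mask[i, :len(s)] = True
    return X, mask

rows = ["[[0,1,0],[5,0,1],[4,1,1]]", "[[5,1,0],[5,0,2],[6,0,0],[1,2,0]]"]
targets = np.array([5, 3])
X, mask = parse_rows(rows, max_steps=4)
```

A transformer could then take each 3-value step through a linear input projection (plus positional encodings) instead of token embeddings, with the mask used to ignore padded steps in attention.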