The encoder-decoder architecture for recurrent neural networks is proving to be powerful on a host of sequence-to-sequence prediction problems in the field of natural language processing such as machine translation and caption generation.

Attention is a mechanism that addresses a limitation of the encoder-decoder architecture on long sequences and that, in general, speeds up learning and lifts the skill of the model on sequence-to-sequence prediction problems.

In this tutorial, you will discover how to develop an encoder-decoder recurrent neural network with attention in Python with Keras.

After completing this tutorial, you will know:

- How to design a small and configurable problem to evaluate encoder-decoder recurrent neural networks with and without attention.

- How to design and evaluate an encoder-decoder network with and without attention for the sequence prediction problem.

- How to robustly compare the performance of encoder-decoder networks with and without attention.


Let’s get started.

- Note May/2020: The underlying APIs have changed and this tutorial may no longer be current. You may require older versions of Keras and TensorFlow, e.g. Keras 2 and TF 1.

How to Develop an Encoder-Decoder Model with Attention for Sequence-to-Sequence Prediction in Keras


Tutorial Overview

This tutorial is divided into 6 parts; they are:

- Encoder-Decoder with Attention

- Test Problem for Attention

- Encoder-Decoder without Attention

- Custom Keras Attention Layer

- Encoder-Decoder with Attention

- Comparison of Models

Python Environment

This tutorial assumes you have a Python 3 SciPy environment installed.

You must have Keras (2.0 or higher) installed with either the TensorFlow or Theano backend.

The tutorial also assumes you have scikit-learn, Pandas, NumPy, and Matplotlib installed.

If you need help with your environment, see this post:

Encoder-Decoder with Attention

The encoder-decoder model for recurrent neural networks is an architecture for sequence-to-sequence prediction problems.

It comprises two sub-models, as its name suggests:

- Encoder: The encoder is responsible for stepping through the input time steps and encoding the entire sequence into a fixed length vector called a context vector.

- Decoder: The decoder is responsible for stepping through the output time steps while reading from the context vector.

A problem with the architecture is that performance is poor on long input or output sequences. The cause is believed to be the fixed-size internal representation used by the encoder.

Attention is an extension to the architecture that addresses this limitation. It works by first providing a richer context from the encoder to the decoder, and then adding a learning mechanism through which the decoder learns where to pay attention in that richer encoding when predicting each time step in the output sequence.

For more on attention in the encoder-decoder architecture, see the posts:

- Attention in Long Short-Term Memory Recurrent Neural Networks

- How Does Attention Work in Encoder-Decoder Recurrent Neural Networks

Test Problem for Attention

Before we develop models with attention, we will first define a contrived scalable test problem that we can use to determine whether attention is providing any benefit.

In this problem, we will generate sequences of random integers as input and matching output sequences comprised of a subset of the integers in the input sequence.

For example, an input sequence might be [1, 6, 2, 7, 3] and the expected output sequence might be the first two random integers in the sequence [1, 6].

We will define the problem such that the input and output sequences are the same length and pad the output sequences with “0” values as needed.

First, we need a function to generate sequences of random integers. We will use the Python randint() function to generate random integers between 0 and a maximum value and use this range as the cardinality for the problem (e.g. the number of features or an axis of difficulty).

The function generate_sequence() below will generate a random sequence of integers to a fixed length and with the specified cardinality.

    from random import randint

    # generate a sequence of random integers
    def generate_sequence(length, n_unique):
        return [randint(0, n_unique-1) for _ in range(length)]

    # generate random sequence
    sequence = generate_sequence(5, 50)
    print(sequence)

Running this example generates a sequence of 5 time steps where each value in the sequence is a random integer between 0 and 49.

    [43, 3, 28, 34, 33]

Next, we need a function to one hot encode the discrete integer values into binary vectors.

If a cardinality of 50 is used, then each integer will be represented by a 50-element vector of 0 values and 1 in the index of the specified integer value.

The one_hot_encode() function below will one hot encode a given sequence of integers.

    # one hot encode sequence
    def one_hot_encode(sequence, n_unique):
        encoding = list()
        for value in sequence:
            vector = [0 for _ in range(n_unique)]
            vector[value] = 1
            encoding.append(vector)
        return array(encoding)

We also need to be able to decode an encoded sequence. This will be needed to turn a prediction from the model or an encoded expected sequence back into a sequence of integers we can read and evaluate.

The one_hot_decode() function below will decode a one hot encoded sequence back into a sequence of integers.

    # decode a one hot encoded string
    def one_hot_decode(encoded_seq):
        return [argmax(vector) for vector in encoded_seq]
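As an aside, the same encoding and decoding can be written without explicit loops. The sketch below is an alternative, not the approach used in the rest of this tutorial; the function names one_hot_encode_np() and one_hot_decode_np() are made up for illustration, and the input sequence is hard-coded.

```python
import numpy as np

# Hypothetical vectorized variants of the helpers above (names are illustrative).
def one_hot_encode_np(sequence, n_unique):
    # Row i of the identity matrix is the one hot vector for integer i,
    # so indexing with the whole sequence encodes every time step at once.
    return np.eye(n_unique, dtype=int)[sequence]

def one_hot_decode_np(encoded_seq):
    # argmax over the last axis recovers the integer at each time step.
    return np.argmax(encoded_seq, axis=-1).tolist()

sequence = [3, 18, 32, 11, 36]
encoded = one_hot_encode_np(sequence, 50)
print(encoded.shape)               # (5, 50)
print(one_hot_decode_np(encoded))  # [3, 18, 32, 11, 36]
```

The loop-based versions are easier to read line by line, which is probably why they suit a tutorial; the indexing version mainly helps when many sequences are encoded at once.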

We can test out these operations in the example below.

    from random import randint
    from numpy import array
    from numpy import argmax

    # generate a sequence of random integers
    def generate_sequence(length, n_unique):
        return [randint(0, n_unique-1) for _ in range(length)]

    # one hot encode sequence
    def one_hot_encode(sequence, n_unique):
        encoding = list()
        for value in sequence:
            vector = [0 for _ in range(n_unique)]
            vector[value] = 1
            encoding.append(vector)
        return array(encoding)

    # decode a one hot encoded string
    def one_hot_decode(encoded_seq):
        return [argmax(vector) for vector in encoded_seq]

    # generate random sequence
    sequence = generate_sequence(5, 50)
    print(sequence)
    # one hot encode
    encoded = one_hot_encode(sequence, 50)
    print(encoded)
    # decode
    decoded = one_hot_decode(encoded)
    print(decoded)

Running the example first prints a randomly generated sequence, then the one hot encoded version, then finally the decoded sequence again.

    [3, 18, 32, 11, 36]
    [[0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]
     [0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]
     [0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]
     [0 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]
     [0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0]]
    [3, 18, 32, 11, 36]

Finally, we need a function that can create input and output pairs of sequences to train and evaluate a model.

The function below named get_pair() will return one input and output sequence pair given a specified input length, output length, and cardinality. Both input and output sequences have the same length, the length of the input sequence, but the output sequence is taken as the first n_out integers of the input sequence and padded with zero values to the required length.

The sequences of integers are then encoded then reshaped into a 3D format required for the recurrent neural network, with the dimensions: samples, time steps, and features. In this case, samples is always 1 as we are only generating one input-output pair, the time steps is the input sequence length and features is the cardinality of each time step.

    # prepare data for the LSTM
    def get_pair(n_in, n_out, n_unique):
        # generate random sequence
        sequence_in = generate_sequence(n_in, n_unique)
        sequence_out = sequence_in[:n_out] + [0 for _ in range(n_in-n_out)]
        # one hot encode
        X = one_hot_encode(sequence_in, n_unique)
        y = one_hot_encode(sequence_out, n_unique)
        # reshape as 3D
        X = X.reshape((1, X.shape[0], X.shape[1]))
        y = y.reshape((1, y.shape[0], y.shape[1]))
        return X,y

We can put this all together and demonstrate the data preparation code.

    from random import randint
    from numpy import array
    from numpy import argmax

    # generate a sequence of random integers
    def generate_sequence(length, n_unique):
        return [randint(0, n_unique-1) for _ in range(length)]

    # one hot encode sequence
    def one_hot_encode(sequence, n_unique):
        encoding = list()
        for value in sequence:
            vector = [0 for _ in range(n_unique)]
            vector[value] = 1
            encoding.append(vector)
        return array(encoding)

    # decode a one hot encoded string
    def one_hot_decode(encoded_seq):
        return [argmax(vector) for vector in encoded_seq]

    # prepare data for the LSTM
    def get_pair(n_in, n_out, n_unique):
        # generate random sequence
        sequence_in = generate_sequence(n_in, n_unique)
        sequence_out = sequence_in[:n_out] + [0 for _ in range(n_in-n_out)]
        # one hot encode
        X = one_hot_encode(sequence_in, n_unique)
        y = one_hot_encode(sequence_out, n_unique)
        # reshape as 3D
        X = X.reshape((1, X.shape[0], X.shape[1]))
        y = y.reshape((1, y.shape[0], y.shape[1]))
        return X,y

    # generate random sequence
    X, y = get_pair(5, 2, 50)
    print(X.shape, y.shape)
    print('X=%s, y=%s' % (one_hot_decode(X[0]), one_hot_decode(y[0])))

Running the example generates a single input-output pair and prints the shape of both arrays.

The generated pair is then printed in a decoded form where we can see that the first two integers of the sequence are reproduced in the output sequence followed by a padding of zero values.

    (1, 5, 50) (1, 5, 50)
    X=[12, 20, 36, 40, 12], y=[12, 20, 0, 0, 0]

Encoder-Decoder Without Attention

In this section, we will develop a baseline in performance on the problem with an encoder-decoder model without attention.

We will fix the problem definition at input and output sequences of 5 time steps, the first 2 elements of the input sequence in the output sequence and a cardinality of 50.

    # configure problem
    n_features = 50
    n_timesteps_in = 5
    n_timesteps_out = 2

We can develop a simple encoder-decoder model in Keras by taking the output from an encoder LSTM model, repeating it n times for the number of timesteps in the output sequence, then using a decoder to predict the output sequence.
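The repeat step can be pictured with plain NumPy. The sketch below is only an illustration of the shape change, assuming a random stand-in for the encoder output; it does not call Keras, and the variable names are made up for this example.

```python
import numpy as np

n_timesteps_in = 5
n_units = 150

# Stand-in for the encoder LSTM output: one fixed-length vector per sample,
# shape (samples, units). Random values are used purely for illustration.
context = np.random.rand(1, n_units)

# Repeat the vector once per output time step, giving the 3D shape
# (samples, time steps, units) that a decoder LSTM expects as input.
repeated = np.repeat(context[:, np.newaxis, :], n_timesteps_in, axis=1)

print(context.shape)   # (1, 150)
print(repeated.shape)  # (1, 5, 150)
```

Every time step of the decoder input is the same vector, which is the fixed-size bottleneck that attention is meant to relieve.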

For more detail on how to define an encoder-decoder architecture in Keras, see the post:

We will configure the encoder and decoder with the same number of units, in this case 150. We will use the efficient Adam implementation of gradient descent and optimize the categorical cross entropy loss function, given that the problem is technically a multi-class classification problem.
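To make the loss concrete, here is a minimal NumPy sketch of categorical cross entropy for one hot targets, evaluated per time step. It is an illustration under simplifying assumptions (three classes, hand-picked probabilities, a simple clip to avoid log(0)) and is not Keras's exact implementation.

```python
import numpy as np

def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    # Clip predictions so that log(0) cannot occur.
    y_pred = np.clip(y_pred, eps, 1.0)
    # With one hot targets, only the log-probability of the true class survives.
    return -np.sum(y_true * np.log(y_pred), axis=-1)

# Two time steps, three classes (toy values chosen for illustration).
y_true = np.array([[0, 1, 0],
                   [1, 0, 0]])
y_pred = np.array([[0.10, 0.80, 0.10],
                   [0.50, 0.25, 0.25]])

losses = categorical_crossentropy(y_true, y_pred)
print(np.round(losses, 3))  # [0.223 0.693]
print(losses.mean())        # average loss over the time steps
```

A confident correct prediction (0.8) costs little, while a hesitant one (0.5) costs more, which is the behavior we want when each time step is a 50-way classification.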

The configuration for the model was found after a little trial and error and is by no means optimized.

The code for an encoder-decoder architecture in Keras is listed below.

    # define model
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))
    model.add(RepeatVector(n_timesteps_in))
    model.add(LSTM(150, return_sequences=True))
    model.add(TimeDistributed(Dense(n_features, activation='softmax')))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

We will train the model on 5,000 random input-output pairs of integer sequences.

    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=2)
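An alternative to fitting on one pair at a time is to generate all of the pairs up front as a single array. The sketch below shows only the data side of that idea; get_dataset() is a hypothetical helper that is not part of the original tutorial, and it does not call Keras.

```python
from random import randint
import numpy as np

# Hypothetical helper: build n_samples input-output pairs in one 3D array each.
def get_dataset(n_samples, n_in, n_out, n_unique):
    X = np.zeros((n_samples, n_in, n_unique), dtype=int)
    y = np.zeros((n_samples, n_in, n_unique), dtype=int)
    steps = np.arange(n_in)
    for i in range(n_samples):
        seq_in = [randint(0, n_unique - 1) for _ in range(n_in)]
        # the first n_out integers, padded with zeros to the input length
        seq_out = seq_in[:n_out] + [0] * (n_in - n_out)
        # set a 1 at (time step, integer value) for every time step
        X[i, steps, seq_in] = 1
        y[i, steps, seq_out] = 1
    return X, y

X, y = get_dataset(5000, 5, 2, 50)
print(X.shape, y.shape)  # (5000, 5, 50) (5000, 5, 50)
```

Passing such arrays to a single model.fit() call would train in mini-batches (32 samples by default in Keras) rather than performing one update per pair, so results should not be expected to match the loop above exactly.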

Once trained, we will evaluate the model on 100 new randomly generated integer sequences and only mark a prediction correct when the entire output sequence matches the expected value.

    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    print('Accuracy: %.2f%%' % (float(correct)/float(total)*100.0))

Finally, we will print 10 examples of expected output sequences and sequences predicted by the model.

Putting all of this together, the complete example is listed below.

    from random import randint
    from numpy import array
    from numpy import argmax
    from numpy import array_equal
    from keras.models import Sequential
    from keras.layers import LSTM
    from keras.layers import Dense
    from keras.layers import TimeDistributed
    from keras.layers import RepeatVector

    # generate a sequence of random integers
    def generate_sequence(length, n_unique):
        return [randint(0, n_unique-1) for _ in range(length)]

    # one hot encode sequence
    def one_hot_encode(sequence, n_unique):
        encoding = list()
        for value in sequence:
            vector = [0 for _ in range(n_unique)]
            vector[value] = 1
            encoding.append(vector)
        return array(encoding)

    # decode a one hot encoded string
    def one_hot_decode(encoded_seq):
        return [argmax(vector) for vector in encoded_seq]

    # prepare data for the LSTM
    def get_pair(n_in, n_out, cardinality):
        # generate random sequence
        sequence_in = generate_sequence(n_in, cardinality)
        sequence_out = sequence_in[:n_out] + [0 for _ in range(n_in-n_out)]
        # one hot encode
        X = one_hot_encode(sequence_in, cardinality)
        y = one_hot_encode(sequence_out, cardinality)
        # reshape as 3D
        X = X.reshape((1, X.shape[0], X.shape[1]))
        y = y.reshape((1, y.shape[0], y.shape[1]))
        return X,y

    # configure problem
    n_features = 50
    n_timesteps_in = 5
    n_timesteps_out = 2

    # define model
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))
    model.add(RepeatVector(n_timesteps_in))
    model.add(LSTM(150, return_sequences=True))
    model.add(TimeDistributed(Dense(n_features, activation='softmax')))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=2)

    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    print('Accuracy: %.2f%%' % (float(correct)/float(total)*100.0))

    # spot check some examples
    for _ in range(10):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        print('Expected:', one_hot_decode(y[0]), 'Predicted', one_hot_decode(yhat[0]))

Running this example will not take long, perhaps a few minutes on the CPU; no GPU is required.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

The accuracy of the model was reported at just under 20%.

    Accuracy: 19.00%

We can see from the sample outputs that the model does get one number in the output sequence correct for most or all cases, and only struggles with the second number. All zero padding values are predicted correctly.

    Expected: [47, 0, 0, 0, 0] Predicted [47, 47, 0, 0, 0]
    Expected: [43, 31, 0, 0, 0] Predicted [43, 31, 0, 0, 0]
    Expected: [14, 22, 0, 0, 0] Predicted [14, 14, 0, 0, 0]
    Expected: [39, 31, 0, 0, 0] Predicted [39, 39, 0, 0, 0]
    Expected: [6, 4, 0, 0, 0] Predicted [6, 4, 0, 0, 0]
    Expected: [47, 0, 0, 0, 0] Predicted [47, 47, 0, 0, 0]
    Expected: [39, 33, 0, 0, 0] Predicted [39, 39, 0, 0, 0]
    Expected: [23, 2, 0, 0, 0] Predicted [23, 23, 0, 0, 0]
    Expected: [19, 28, 0, 0, 0] Predicted [19, 3, 0, 0, 0]
    Expected: [32, 33, 0, 0, 0] Predicted [32, 32, 0, 0, 0]
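The gap between whole-sequence correctness and per-step correctness can be made concrete using the ten spot-check rows printed above. The sketch below hard-codes those rows as arrays; the variable names exact_match and per_step are illustrative only.

```python
import numpy as np

# The ten expected/predicted rows from the spot check above.
expected = np.array([
    [47, 0, 0, 0, 0], [43, 31, 0, 0, 0], [14, 22, 0, 0, 0],
    [39, 31, 0, 0, 0], [6, 4, 0, 0, 0], [47, 0, 0, 0, 0],
    [39, 33, 0, 0, 0], [23, 2, 0, 0, 0], [19, 28, 0, 0, 0],
    [32, 33, 0, 0, 0],
])
predicted = np.array([
    [47, 47, 0, 0, 0], [43, 31, 0, 0, 0], [14, 14, 0, 0, 0],
    [39, 39, 0, 0, 0], [6, 4, 0, 0, 0], [47, 47, 0, 0, 0],
    [39, 39, 0, 0, 0], [23, 23, 0, 0, 0], [19, 3, 0, 0, 0],
    [32, 32, 0, 0, 0],
])

# A sequence counts as correct only if every time step matches.
exact_match = np.all(expected == predicted, axis=1).mean()
# Per-step accuracy scores each time step separately.
per_step = (expected == predicted).mean()

print('Exact match: %.0f%%' % (exact_match * 100))  # Exact match: 20%
print('Per step: %.0f%%' % (per_step * 100))        # Per step: 84%
```

The strict whole-sequence measure is the one used in the evaluation loop. The accuracy that Keras reports during training is likely closer to the per-step figure, so the two numbers should not be compared directly.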

Custom Keras Attention Layer

Now we need to add attention to the encoder-decoder model.

At the time of writing, Keras does not have the capability of attention built into the library, but it is coming soon.

Until attention is officially available in Keras, we can either develop our own implementation or use an existing third-party implementation.

To speed things up, let’s use an existing third-party implementation.

Zafarali Ahmed, an intern at Datalogue, developed a custom layer for Keras that provides support for attention. It was presented in 2017 in a post titled “How to Visualize Your Recurrent Neural Network with Attention in Keras” and in a GitHub project called “keras-attention”.

The custom attention layer is called AttentionDecoder and is available in the custom_recurrents.py file in the GitHub project. We can reuse this code under the project’s GNU Affero General Public License v3.0.

A copy of the custom layer is listed below for completeness. Copy it and paste it into a new and separate file in your current working directory called ‘attention_decoder.py’.

    import tensorflow as tf
    from keras import backend as K
    from keras import regularizers, constraints, initializers, activations
    from keras.layers.recurrent import Recurrent, _time_distributed_dense
    from keras.engine import InputSpec

    tfPrint = lambda d, T: tf.Print(input_=T, data=[T, tf.shape(T)], message=d)

    class AttentionDecoder(Recurrent):

        def __init__(self, units, output_dim,
                     activation='tanh',
                     return_probabilities=False,
                     name='AttentionDecoder',
                     kernel_initializer='glorot_uniform',
                     recurrent_initializer='orthogonal',
                     bias_initializer='zeros',
                     kernel_regularizer=None,
                     bias_regularizer=None,
                     activity_regularizer=None,
                     kernel_constraint=None,
                     bias_constraint=None,
                     **kwargs):
            """
            Implements an AttentionDecoder that takes in a sequence encoded by an
            encoder and outputs the decoded states
            :param units: dimension of the hidden state and the attention matrices
            :param output_dim: the number of labels in the output space

            references:
                Bahdanau, Dzmitry, Kyunghyun Cho, and Yoshua Bengio.
                "Neural machine translation by jointly learning to align and translate."
                arXiv preprint arXiv:1409.0473 (2014).
            """
            self.units = units
            self.output_dim = output_dim
            self.return_probabilities = return_probabilities
            self.activation = activations.get(activation)
            self.kernel_initializer = initializers.get(kernel_initializer)
            self.recurrent_initializer = initializers.get(recurrent_initializer)
            self.bias_initializer = initializers.get(bias_initializer)

            self.kernel_regularizer = regularizers.get(kernel_regularizer)
            self.recurrent_regularizer = regularizers.get(kernel_regularizer)
            self.bias_regularizer = regularizers.get(bias_regularizer)
            self.activity_regularizer = regularizers.get(activity_regularizer)

            self.kernel_constraint = constraints.get(kernel_constraint)
            self.recurrent_constraint = constraints.get(kernel_constraint)
            self.bias_constraint = constraints.get(bias_constraint)

            super(AttentionDecoder, self).__init__(**kwargs)
            self.name = name
            self.return_sequences = True  # must return sequences

        def build(self, input_shape):
            """
            See Appendix 2 of Bahdanau 2014, arXiv:1409.0473
            for model details that correspond to the matrices here.
            """

            self.batch_size, self.timesteps, self.input_dim = input_shape

            if self.stateful:
                super(AttentionDecoder, self).reset_states()

            self.states = [None, None]  # y, s

            """
            Matrices for creating the context vector
            """

            self.V_a = self.add_weight(shape=(self.units,),
                                       name='V_a',
                                       initializer=self.kernel_initializer,
                                       regularizer=self.kernel_regularizer,
                                       constraint=self.kernel_constraint)
            self.W_a = self.add_weight(shape=(self.units, self.units),
                                       name='W_a',
                                       initializer=self.kernel_initializer,
                                       regularizer=self.kernel_regularizer,
                                       constraint=self.kernel_constraint)
            self.U_a = self.add_weight(shape=(self.input_dim, self.units),
                                       name='U_a',
                                       initializer=self.kernel_initializer,
                                       regularizer=self.kernel_regularizer,
                                       constraint=self.kernel_constraint)
            self.b_a = self.add_weight(shape=(self.units,),
                                       name='b_a',
                                       initializer=self.bias_initializer,
                                       regularizer=self.bias_regularizer,
                                       constraint=self.bias_constraint)
            """
            Matrices for the r (reset) gate
            """
            self.C_r = self.add_weight(shape=(self.input_dim, self.units),
                                       name='C_r',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.U_r = self.add_weight(shape=(self.units, self.units),
                                       name='U_r',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.W_r = self.add_weight(shape=(self.output_dim, self.units),
                                       name='W_r',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.b_r = self.add_weight(shape=(self.units, ),
                                       name='b_r',
                                       initializer=self.bias_initializer,
                                       regularizer=self.bias_regularizer,
                                       constraint=self.bias_constraint)

            """
            Matrices for the z (update) gate
            """
            self.C_z = self.add_weight(shape=(self.input_dim, self.units),
                                       name='C_z',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.U_z = self.add_weight(shape=(self.units, self.units),
                                       name='U_z',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.W_z = self.add_weight(shape=(self.output_dim, self.units),
                                       name='W_z',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.b_z = self.add_weight(shape=(self.units, ),
                                       name='b_z',
                                       initializer=self.bias_initializer,
                                       regularizer=self.bias_regularizer,
                                       constraint=self.bias_constraint)
            """
            Matrices for the proposal
            """
            self.C_p = self.add_weight(shape=(self.input_dim, self.units),
                                       name='C_p',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.U_p = self.add_weight(shape=(self.units, self.units),
                                       name='U_p',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.W_p = self.add_weight(shape=(self.output_dim, self.units),
                                       name='W_p',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.b_p = self.add_weight(shape=(self.units, ),
                                       name='b_p',
                                       initializer=self.bias_initializer,
                                       regularizer=self.bias_regularizer,
                                       constraint=self.bias_constraint)
            """
            Matrices for making the final prediction vector
            """
            self.C_o = self.add_weight(shape=(self.input_dim, self.output_dim),
                                       name='C_o',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.U_o = self.add_weight(shape=(self.units, self.output_dim),
                                       name='U_o',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.W_o = self.add_weight(shape=(self.output_dim, self.output_dim),
                                       name='W_o',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)
            self.b_o = self.add_weight(shape=(self.output_dim, ),
                                       name='b_o',
                                       initializer=self.bias_initializer,
                                       regularizer=self.bias_regularizer,
                                       constraint=self.bias_constraint)

            # For creating the initial state:
            self.W_s = self.add_weight(shape=(self.input_dim, self.units),
                                       name='W_s',
                                       initializer=self.recurrent_initializer,
                                       regularizer=self.recurrent_regularizer,
                                       constraint=self.recurrent_constraint)

            self.input_spec = [
                InputSpec(shape=(self.batch_size, self.timesteps, self.input_dim))]
            self.built = True

        def call(self, x):
            # store the whole sequence so we can "attend" to it at each timestep
            self.x_seq = x

            # apply a dense layer over the time dimension of the sequence
            # do it here because it doesn't depend on any previous steps
            # therefore we can save computation time:
            self._uxpb = _time_distributed_dense(self.x_seq, self.U_a, b=self.b_a,
                                                 input_dim=self.input_dim,
                                                 timesteps=self.timesteps,
                                                 output_dim=self.units)

            return super(AttentionDecoder, self).call(x)

        def get_initial_state(self, inputs):
            # apply the matrix on the first time step to get the initial s0.
            s0 = activations.tanh(K.dot(inputs[:, 0], self.W_s))

            # from keras.layers.recurrent to initialize a vector of (batchsize,
            # output_dim)
            y0 = K.zeros_like(inputs)  # (samples, timesteps, input_dims)
            y0 = K.sum(y0, axis=(1, 2))  # (samples, )
            y0 = K.expand_dims(y0)  # (samples, 1)
            y0 = K.tile(y0, [1, self.output_dim])

            return [y0, s0]

        def step(self, x, states):

            ytm, stm = states

            # repeat the hidden state to the length of the sequence
            _stm = K.repeat(stm, self.timesteps)

            # now multiply the weight matrix with the repeated hidden state
            _Wxstm = K.dot(_stm, self.W_a)

            # calculate the attention probabilities
            # this relates how much other timesteps contributed to this one.
            et = K.dot(activations.tanh(_Wxstm + self._uxpb),
                       K.expand_dims(self.V_a))
            at = K.exp(et)
            at_sum = K.sum(at, axis=1)
            at_sum_repeated = K.repeat(at_sum, self.timesteps)
            at /= at_sum_repeated  # vector of size (batchsize, timesteps, 1)

            # calculate the context vector
            context = K.squeeze(K.batch_dot(at, self.x_seq, axes=1), axis=1)
            # ~~~> calculate new hidden state
            # first calculate the "r" gate:

            rt = activations.sigmoid(
                K.dot(ytm, self.W_r)
                + K.dot(stm, self.U_r)
                + K.dot(context, self.C_r)
                + self.b_r)

            # now calculate the "z" gate
            zt = activations.sigmoid(
                K.dot(ytm, self.W_z)
                + K.dot(stm, self.U_z)
                + K.dot(context, self.C_z)
                + self.b_z)

            # calculate the proposal hidden state:
            s_tp = activations.tanh(
                K.dot(ytm, self.W_p)
                + K.dot((rt * stm), self.U_p)
                + K.dot(context, self.C_p)
                + self.b_p)

            # new hidden state:
            st = (1-zt)*stm + zt * s_tp

            yt = activations.softmax(
                K.dot(ytm, self.W_o)
                + K.dot(stm, self.U_o)
                + K.dot(context, self.C_o)
                + self.b_o)

            if self.return_probabilities:
                return at, [yt, st]
            else:
                return yt, [yt, st]

        def compute_output_shape(self, input_shape):
            """
            For Keras internal compatibility checking
            """
            if self.return_probabilities:
                return (None, self.timesteps, self.timesteps)
            else:
                return (None, self.timesteps, self.output_dim)

        def get_config(self):
            """
            For rebuilding models on load time.
            """
            config = {
                'output_dim': self.output_dim,
                'units': self.units,
                'return_probabilities': self.return_probabilities
            }
            base_config = super(AttentionDecoder, self).get_config()
            return dict(list(base_config.items()) + list(config.items()))

We can make use of this custom layer in our projects by importing it as follows:

    from attention_decoder import AttentionDecoder

The layer implements attention as described by Bahdanau, et al. in their paper “Neural Machine Translation by Jointly Learning to Align and Translate.”
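To show what one attention step computes, here is a small NumPy sketch for a single sample. It reuses the weight names from the layer (W_a, U_a, b_a, V_a), but the sizes and random values are assumptions chosen for illustration, and subtracting the maximum score before exponentiating is a numerical-stability tweak added here that the layer code does not perform.

```python
import numpy as np

rng = np.random.default_rng(1)
timesteps, input_dim, units = 5, 8, 6  # toy sizes, not the tutorial's 150

# Encoder outputs for one sample (one row per input time step) and the
# previous decoder hidden state; random stand-ins for illustration.
h = rng.normal(size=(timesteps, input_dim))
s_prev = rng.normal(size=units)

# Attention parameters, named after the weights in the layer.
W_a = rng.normal(scale=0.1, size=(units, units))
U_a = rng.normal(scale=0.1, size=(input_dim, units))
b_a = np.zeros(units)
V_a = rng.normal(scale=0.1, size=units)

# Additive (Bahdanau-style) score for every input time step.
scores = np.tanh(s_prev @ W_a + h @ U_a + b_a) @ V_a  # shape (timesteps,)

# Softmax over the input time steps gives the attention weights.
weights = np.exp(scores - scores.max())
weights /= weights.sum()

# The context vector is the attention-weighted sum of the encoder outputs.
context = weights @ h  # shape (input_dim,)

print(weights.shape, context.shape)  # (5,) (8,)
print(weights.sum())                 # sums to 1, up to floating point error
```

A new set of weights, and therefore a new context vector, is computed at every output time step, in contrast with the single repeated vector used by the model without attention.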

The code is explained well in the original post and linked to both the LSTM and attention equations.

A limitation of this implementation is that it must output sequences that are the same length as the input sequences, the specific limitation that the encoder-decoder architecture was designed to overcome.

Importantly, the new layer manages both the repeating of the decoding as performed by the second LSTM, as well as the softmax output for the model as was performed by the Dense output layer in the encoder-decoder model without attention. This greatly simplifies the code for the model.

It is important to note that the custom layer is built upon the Recurrent layer in Keras, which, at the time of writing, is marked as legacy code, and presumably will be removed from the project at some point.

Encoder-Decoder With Attention

Now that we have an implementation of attention that we can use, we can develop an encoder-decoder model with attention for our contrived sequence prediction problem.

The model with the attention layer is defined below. We can see that the layer handles some of the machinery of the encoder-decoder model itself, making defining the model simpler.

    # define model
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))
    model.add(AttentionDecoder(150, n_features))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

That’s it. The rest of the example is the same.

The complete example is listed below.

    from random import randint
    from numpy import array
    from numpy import argmax
    from numpy import array_equal
    from keras.models import Sequential
    from keras.layers import LSTM
    from attention_decoder import AttentionDecoder

    # generate a sequence of random integers
    def generate_sequence(length, n_unique):
        return [randint(0, n_unique-1) for _ in range(length)]

    # one hot encode sequence
    def one_hot_encode(sequence, n_unique):
        encoding = list()
        for value in sequence:
            vector = [0 for _ in range(n_unique)]
            vector[value] = 1
            encoding.append(vector)
        return array(encoding)

    # decode a one hot encoded string
    def one_hot_decode(encoded_seq):
        return [argmax(vector) for vector in encoded_seq]

    # prepare data for the LSTM
    def get_pair(n_in, n_out, cardinality):
        # generate random sequence
        sequence_in = generate_sequence(n_in, cardinality)
        sequence_out = sequence_in[:n_out] + [0 for _ in range(n_in-n_out)]
        # one hot encode
        X = one_hot_encode(sequence_in, cardinality)
        y = one_hot_encode(sequence_out, cardinality)
        # reshape as 3D
        X = X.reshape((1, X.shape[0], X.shape[1]))
        y = y.reshape((1, y.shape[0], y.shape[1]))
        return X,y

    # configure problem
    n_features = 50
    n_timesteps_in = 5
    n_timesteps_out = 2

    # define model
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))
    model.add(AttentionDecoder(150, n_features))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=2)

    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    print('Accuracy: %.2f%%' % (float(correct)/float(total)*100.0))

    # spot check some examples
    for _ in range(10):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        print('Expected:', one_hot_decode(y[0]), 'Predicted', one_hot_decode(yhat[0]))

Running the example prints the skill of the model on 100 randomly generated input-output pairs.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

With the same resources and same amount of training, the model with attention performs much better.

Accuracy: 95.00%

Spot-checking some sample outputs and predicted sequences, we can see very few errors, even in cases when there is a zero value in the first two elements.

Expected: [48, 47, 0, 0, 0] Predicted [48, 47, 0, 0, 0]
Expected: [7, 46, 0, 0, 0] Predicted [7, 46, 0, 0, 0]
Expected: [32, 30, 0, 0, 0] Predicted [32, 2, 0, 0, 0]
Expected: [3, 25, 0, 0, 0] Predicted [3, 25, 0, 0, 0]
Expected: [45, 4, 0, 0, 0] Predicted [45, 4, 0, 0, 0]
Expected: [49, 9, 0, 0, 0] Predicted [49, 9, 0, 0, 0]
Expected: [22, 23, 0, 0, 0] Predicted [22, 23, 0, 0, 0]
Expected: [29, 36, 0, 0, 0] Predicted [29, 36, 0, 0, 0]
Expected: [0, 29, 0, 0, 0] Predicted [0, 29, 0, 0, 0]
Expected: [11, 26, 0, 0, 0] Predicted [11, 26, 0, 0, 0]
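The accuracy reported by the example counts a prediction as correct only when the whole decoded sequence matches the expected one. As a small sketch of that criterion, the snippet below recomputes it for the ten spot-checked pairs and contrasts it with a per-step score. The helper names `exact_match_accuracy` and `per_step_accuracy` are illustrative and are not part of the tutorial code.

```python
# Expected/predicted pairs copied from the spot-check output of the example.
pairs = [
    ([48, 47, 0, 0, 0], [48, 47, 0, 0, 0]),
    ([7, 46, 0, 0, 0], [7, 46, 0, 0, 0]),
    ([32, 30, 0, 0, 0], [32, 2, 0, 0, 0]),
    ([3, 25, 0, 0, 0], [3, 25, 0, 0, 0]),
    ([45, 4, 0, 0, 0], [45, 4, 0, 0, 0]),
    ([49, 9, 0, 0, 0], [49, 9, 0, 0, 0]),
    ([22, 23, 0, 0, 0], [22, 23, 0, 0, 0]),
    ([29, 36, 0, 0, 0], [29, 36, 0, 0, 0]),
    ([0, 29, 0, 0, 0], [0, 29, 0, 0, 0]),
    ([11, 26, 0, 0, 0], [11, 26, 0, 0, 0]),
]

# hypothetical helper: a pair only counts if every time step matches
def exact_match_accuracy(pairs):
    correct = sum(1 for expected, predicted in pairs if expected == predicted)
    return 100.0 * correct / len(pairs)

# hypothetical helper: every time step is scored on its own
def per_step_accuracy(pairs):
    matches = sum(e == p for expected, predicted in pairs
                  for e, p in zip(expected, predicted))
    total = sum(len(expected) for expected, _ in pairs)
    return 100.0 * matches / total

print('Exact-match accuracy: %.2f%%' % exact_match_accuracy(pairs))
print('Per-step accuracy: %.2f%%' % per_step_accuracy(pairs))
```

The exact-match score is the stricter of the two, because a single wrong time step makes the whole sequence count as an error.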

Comparison of Models

Although we are getting better results from the model with attention, the results were reported from a single run of each model.

In this case, we seek a more robust finding by repeating the evaluation of each model multiple times and reporting the average performance over those runs. For more information on this robust approach to evaluating neural network models, see the post How to Evaluate the Skill of Deep Learning Models, listed in the Further Reading section.

We can define a function to create each type of model, as follows.

# define the encoder-decoder model
def baseline_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))
    model.add(RepeatVector(n_timesteps_in))
    model.add(LSTM(150, return_sequences=True))
    model.add(TimeDistributed(Dense(n_features, activation='softmax')))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

# define the encoder-decoder with attention model
def attention_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))
    model.add(AttentionDecoder(150, n_features))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

We can then define a function that fits a model, evaluates it on new random examples, and returns the accuracy score.

# train and evaluate a model, return accuracy
def train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features):
    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=0)
    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    return float(correct)/float(total)*100.0

Putting this together, we can repeat the process of creating, training, and evaluating each type of model multiple times and report the mean accuracy over the repeats. To keep running times down, we will repeat each model evaluation 10 times, although if you have the resources, you could increase this to 30 or 100 times.

The complete example is listed below.

from random import randint
from numpy import array
from numpy import argmax
from numpy import array_equal
from keras.models import Sequential
from keras.layers import LSTM
from keras.layers import Dense
from keras.layers import TimeDistributed
from keras.layers import RepeatVector
from attention_decoder import AttentionDecoder

# generate a sequence of random integers
def generate_sequence(length, n_unique):
    return [randint(0, n_unique-1) for _ in range(length)]

# one hot encode sequence
def one_hot_encode(sequence, n_unique):
    encoding = list()
    for value in sequence:
        vector = [0 for _ in range(n_unique)]
        vector[value] = 1
        encoding.append(vector)
    return array(encoding)

# decode a one hot encoded string
def one_hot_decode(encoded_seq):
    return [argmax(vector) for vector in encoded_seq]

# prepare data for the LSTM
def get_pair(n_in, n_out, cardinality):
    # generate random sequence
    sequence_in = generate_sequence(n_in, cardinality)
    sequence_out = sequence_in[:n_out] + [0 for _ in range(n_in-n_out)]
    # one hot encode
    X = one_hot_encode(sequence_in, cardinality)
    y = one_hot_encode(sequence_out, cardinality)
    # reshape as 3D
    X = X.reshape((1, X.shape[0], X.shape[1]))
    y = y.reshape((1, y.shape[0], y.shape[1]))
    return X,y

# define the encoder-decoder model
def baseline_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))
    model.add(RepeatVector(n_timesteps_in))
    model.add(LSTM(150, return_sequences=True))
    model.add(TimeDistributed(Dense(n_features, activation='softmax')))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

# define the encoder-decoder with attention model
def attention_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))
    model.add(AttentionDecoder(150, n_features))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

# train and evaluate a model, return accuracy
def train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features):
    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=0)
    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    return float(correct)/float(total)*100.0

# configure problem
n_features = 50
n_timesteps_in = 5
n_timesteps_out = 2
n_repeats = 10
# evaluate encoder-decoder model
print('Encoder-Decoder Model')
results = list()
for _ in range(n_repeats):
    model = baseline_model(n_timesteps_in, n_features)
    accuracy = train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features)
    results.append(accuracy)
    print(accuracy)
print('Mean Accuracy: %.2f%%' % (sum(results)/float(n_repeats)))
# evaluate encoder-decoder with attention model
print('Encoder-Decoder With Attention Model')
results = list()
for _ in range(n_repeats):
    model = attention_model(n_timesteps_in, n_features)
    accuracy = train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features)
    results.append(accuracy)
    print(accuracy)
print('Mean Accuracy: %.2f%%' % (sum(results)/float(n_repeats)))

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

Running this example prints the accuracy for each model repeat to give you an idea of the progress of the run.

Encoder-Decoder Model
20.0
23.0
23.0
18.0
28.000000000000004
28.999999999999996
23.0
26.0
21.0
20.0
Mean Accuracy: 23.10%
Encoder-Decoder With Attention Model
98.0
91.0
94.0
93.0
96.0
99.0
97.0
94.0
99.0
96.0
Mean Accuracy: 95.70%

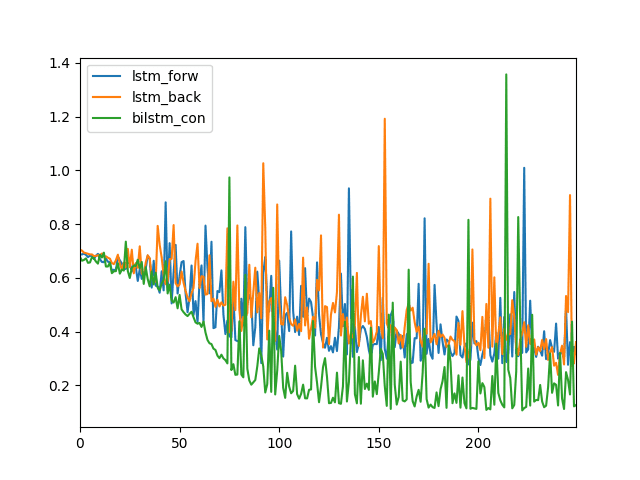

We can see that even when averaged over 10 runs, the attention model still performs far better than the encoder-decoder model without attention: a mean accuracy of 95.70% vs 23.10%.
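A mean on its own hides how much the repeats vary. As a minimal sketch, the per-run scores listed in the output can be summarized with a mean and a sample standard deviation using the standard library. The `summarize` helper is illustrative and not part of the tutorial code, and the two scores printed with floating-point noise (28.000000000000004 and 28.999999999999996) are entered here as 28.0 and 29.0.

```python
from statistics import mean, stdev

# per-run accuracies copied from the output of the complete example
baseline = [20.0, 23.0, 23.0, 18.0, 28.0, 29.0, 23.0, 26.0, 21.0, 20.0]
attention = [98.0, 91.0, 94.0, 93.0, 96.0, 99.0, 97.0, 94.0, 99.0, 96.0]

# hypothetical helper: report the mean and sample standard deviation of the runs
def summarize(name, scores):
    m, s = mean(scores), stdev(scores)
    print('%s: mean=%.2f%% std=%.2f' % (name, m, s))
    return m, s

baseline_mean, baseline_std = summarize('Encoder-Decoder', baseline)
attention_mean, attention_std = summarize('Encoder-Decoder With Attention', attention)
```

If the two ranges of mean plus or minus a few standard deviations do not overlap, the difference is unlikely to be an artifact of a lucky run.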

A good extension to this evaluation would be to capture the model loss each epoch for each model, take the average, and compare how the loss changes over time for the architecture with and without attention.
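One way to sketch this extension, assuming each repeat produces a list of per-epoch loss values (for example, collected from the `history.history['loss']` entry of the object returned by each `model.fit` call), is to stack the traces and average them per epoch. The `average_loss_traces` helper is hypothetical, and the numbers below are placeholders for illustration only, not measured results.

```python
import numpy as np

# hypothetical helper: average per-epoch loss across repeated runs
def average_loss_traces(traces):
    # traces: list of equal-length lists, one list of losses per repeat
    return np.mean(np.asarray(traces, dtype=float), axis=0)

# placeholder values for illustration only, NOT results from the models
example_traces = [
    [4.0, 3.0, 2.0],  # repeat 1
    [3.0, 2.0, 1.0],  # repeat 2
]
mean_trace = average_loss_traces(example_traces)
print(mean_trace)
```

Computing one averaged trace per architecture and plotting both on the same axes would make the comparison over training time direct.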

I expect that this trace would show the attention model reaching better skill much sooner than the model without attention, further highlighting the benefit of the approach.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

- Attention in Long Short-Term Memory Recurrent Neural Networks

- How Does Attention Work in Encoder-Decoder Recurrent Neural Networks

- Encoder-Decoder Long Short-Term Memory Networks

- How to Evaluate the Skill of Deep Learning Models

- How to Visualize Your Recurrent Neural Network with Attention in Keras, 2017.

- keras-attention GitHub Project

- Neural Machine Translation by Jointly Learning to Align and Translate, 2015.

Summary

In this tutorial, you discovered how to develop an encoder-decoder recurrent neural network with attention in Python with Keras.

Specifically, you learned:

- How to design a small and configurable problem to evaluate encoder-decoder recurrent neural networks with and without attention.

- How to design and evaluate an encoder-decoder network with and without attention for the sequence prediction problem.

- How to robustly compare the performance of encoder-decoder networks with and without attention.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

The timing of this post couldn’t have been more accurate. I’ve spent hours and days on google looking for a reliable Keras implementation of attention. Can’t wait to test this on my specific problem definition. Thanks a ton Jason!

I’m glad to hear that Chetan!

Let me know how you go.

test soft

Your test worked.

Thank you for this post!

Unfortunately the kernel crashes on my laptop! I don’t know why (no RAM issues)

I use Keras==2.0.8 and TF==1.3.0

Ouch. Perhaps there is something up with your environment.

This post might help if you need to set things up from scratch:

https://machinelearningmastery.com/setup-python-environment-machine-learning-deep-learning-anaconda/

Jason, very nice tutorial on probably the most important and most powerful neural application architecture (seq2seq with attention – since it is equivalent to a self programming turing machine – it sees an input stream of symbols, then can move back and forth using attention and write out a stream of symbols).

In fact theoretically it is super-turing, because it works with continuous (real) representation instead of Turing symbolic notation. google ‘recurrent networks super turing’ for proofs.

I am looking forward to attention being integrated into Keras and your revised code later, but no one can match your ability to setup the problem, generate data, explain step by step.. Keep up the great work.

Ravi Annaswamy

Thanks Ravi, I really appreciate your support! You made my day 🙂

Jason, I think in order to show the power of sequence mapping, we need to try two things:

1. The input sequence should be of variable length (not always 5). For example you can make it a length of 10 max, but it should generate sequences of any length between say 4 to 10 (remaining zeros).

2. The output should not be just zeroing of values, but more complex output for example, the first and last non zero value of the sequence…

something like the example built here:

https://talbaumel.github.io/attention/

I am working on a modification of your excellent code, to illustrate this extended task, will post shortly.

Yes, you could easily modify the above example to achieve these requirements.

Dr.Jason,

You have done an excellent application and framework code.

I wanted to expose the great value of this architecture and modularity of

this code by attempting a harder problem. Harder in two ways:

First we want to make the input sequence variable length from example to example.

Second, we want the output to be one that requires attention and long term memory,

across the length!

So we come up with this task:

Given an input sequence which is variable length with zero padding…

[6, 8, 7, 2, 2, 6, 6, 4, 0, 0]

I wanted the network to pick out and output the first and last non-zero of the series

[6, 4, 0, 0, 0, 0, 0, 0, 0, 0]

To make it even more interesting task for memory, we want it to output

the two numbers in reverse order:

input:

[6, 8, 7, 2, 2, 6, 6, 4, 0, 0]

output

[4, 6, 0, 0, 0, 0, 0, 0, 0, 0]

This would require the algorithm to figure out that we are selecting the first and last values of the sequence, and then to write them out in reverse order! It really needs some kind of Turing machine that can go back and forth over the sequence and decide when to write what. Can the seq2seq LSTM with attention do this?

Let us try it out.

Here are a few more training cases:

[5, 5, 3, 3, 2, 0, 0, 0, 0, 0] [2, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[4, 7, 7, 4, 3, 9, 0, 0, 0, 0] [9, 4, 0, 0, 0, 0, 0, 0, 0, 0]

[2, 6, 7, 6, 5, 0, 0, 0, 0, 0] [5, 2, 0, 0, 0, 0, 0, 0, 0, 0]

[9, 8, 2, 8, 8, 7, 9, 1, 5, 0] [5, 9, 0, 0, 0, 0, 0, 0, 0, 0]

I made the following changes to your excellent code to make this possible:

1. In order to use 0 as the padding character, we draw the symbols from 1 to n_unique-1.

# generate a sequence of random integers
def generate_sequence(length, n_unique):
    return [randint(0, n_unique-2)+1 for _ in range(length)]

I think your original code should also adopt this mechanism, so that 0 is reserved as the padding symbol and the generated sequence only contains 1 to n_unique-1. I think this will increase accuracy to 100% in your tests too.

2. In order to simplify the domain, for faster training, I restricted the range of values:

n_features = 8

n_timesteps_in = 10

n_timesteps_out = 2

That is the input has a max of 10 positions but anywhere between 4 to 9 of these could be nonzero sequence, as shown below.

The input only uses an alphabet of 8 numbers instead of the 50 you used.

3. Correspondingly the get_pair was modified to generate the series above:

# prepare data for the LSTM
def get_pair(n_in, n_out, cardinality, verbose=False): # edited this to add verbose flag
    # generate random sequence
    sequence_in = generate_sequence(n_in, cardinality)
    real_length = randint(4,n_in-1) # i added this
    sequence_in = sequence_in[:real_length] + [0 for _ in range(n_in-real_length)] # i added this
    sequence_out = [sequence_in[real_length-1]]+[sequence_in[0]] + [0 for _ in range(n_in-2)] # i edited this
    if verbose: # added this for testing
        print(sequence_in,sequence_out) # added this
    # one hot encode
    X = one_hot_encode(sequence_in, cardinality)
    y = one_hot_encode(sequence_out, cardinality)
    # reshape as 3D
    X = X.reshape((1, X.shape[0], X.shape[1]))
    y = y.reshape((1, y.shape[0], y.shape[1]))
    return X,y

4. With these changes:

for _ in range(5):
    a=get_pair(10,2,10,verbose=True)

generates:

[6, 8, 7, 2, 2, 6, 6, 4, 0, 0] [4, 6, 0, 0, 0, 0, 0, 0, 0, 0]

[5, 5, 3, 3, 2, 0, 0, 0, 0, 0] [2, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[4, 7, 7, 4, 3, 9, 0, 0, 0, 0] [9, 4, 0, 0, 0, 0, 0, 0, 0, 0]

[2, 6, 7, 6, 5, 0, 0, 0, 0, 0] [5, 2, 0, 0, 0, 0, 0, 0, 0, 0]

[9, 8, 2, 8, 8, 7, 9, 1, 5, 0] [5, 9, 0, 0, 0, 0, 0, 0, 0, 0]

5. Result of training on this dataset:

Encoder-Decoder Model

20.0

12.0

18.0

19.0

9.0

10.0

16.0

12.0

12.0

11.0

Encoder-Decoder With Attention Model

100.0

100.0

100.0

100.0

100.0

100.0

100.0

100.0

100.0

100.0

Yes!

This shows the capacity of recurrent neural models to learn arbitrary programs from example input and output pairs!

Of course, one can increase length of the sequence and also the n_unique to make the task harder, but I do not expect

dramatic failure as we gradually increase to reasonable values.

I am really very happy that you put together this excellent example. Please feel free to add this extension application to your excellent article/books if it will add value. Also please review the changes to make sure I have not made any errors.

The only complaint I have is that the keras implementation of attention is very slow. (I think the pytorch implementation will be

far faster because of avoiding a few layers of abstraction..but I may be wrong, will try it..)

Ravi

Attached the complete code for reproducibility:

from random import randint
from numpy import array
from numpy import argmax
from numpy import array_equal
from keras.models import Sequential
from keras.layers import LSTM
from keras.layers import Dense
from keras.layers import TimeDistributed
from keras.layers import RepeatVector
from attention_decoder import AttentionDecoder

# generate a sequence of random integers
def generate_sequence(length, n_unique):
    return [randint(0, n_unique-2)+1 for _ in range(length)]

# one hot encode sequence
def one_hot_encode(sequence, n_unique):
    encoding = list()
    for value in sequence:
        vector = [0 for _ in range(n_unique)]
        vector[value] = 1
        encoding.append(vector)
    return array(encoding)

# decode a one hot encoded string
def one_hot_decode(encoded_seq):
    return [argmax(vector) for vector in encoded_seq]

# prepare data for the LSTM
def get_pair(n_in, n_out, cardinality, verbose=False):
    # generate random sequence
    sequence_in = generate_sequence(n_in, cardinality)
    real_length = randint(4,n_in-1)
    sequence_in = sequence_in[:real_length] + [0 for _ in range(n_in-real_length)]
    sequence_out = [sequence_in[real_length-1]]+[sequence_in[0]] + [0 for _ in range(n_in-2)]
    if verbose:
        print(sequence_in,sequence_out)
    # one hot encode
    X = one_hot_encode(sequence_in, cardinality)
    y = one_hot_encode(sequence_out, cardinality)
    # reshape as 3D
    X = X.reshape((1, X.shape[0], X.shape[1]))
    y = y.reshape((1, y.shape[0], y.shape[1]))
    return X,y

# define the encoder-decoder model
def baseline_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))
    model.add(RepeatVector(n_timesteps_in))
    model.add(LSTM(150, return_sequences=True))
    model.add(TimeDistributed(Dense(n_features, activation='softmax')))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['acc'])
    return model

# define the encoder-decoder with attention model
def attention_model(n_timesteps_in, n_features):
    model = Sequential()
    model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))
    model.add(AttentionDecoder(150, n_features))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['acc'])
    return model

# train and evaluate a model, return accuracy
def train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features):
    # train LSTM
    for epoch in range(5000):
        # generate new random sequence
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        # fit model for one epoch on this sequence
        model.fit(X, y, epochs=1, verbose=0)
    # evaluate LSTM
    total, correct = 100, 0
    for _ in range(total):
        X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features)
        yhat = model.predict(X, verbose=0)
        if array_equal(one_hot_decode(y[0]), one_hot_decode(yhat[0])):
            correct += 1
    return float(correct)/float(total)*100.0

# configure problem
n_features = 8
n_timesteps_in = 10
n_timesteps_out = 2
n_repeats = 10
# evaluate encoder-decoder model
print('Encoder-Decoder Model')
results = list()
for _ in range(n_repeats):
    model = baseline_model(n_timesteps_in, n_features)
    accuracy = train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features)
    results.append(accuracy)
    print(accuracy)
print('Mean Accuracy: %.2f%%' % (sum(results)/float(n_repeats)))
# evaluate encoder-decoder with attention model
print('Encoder-Decoder With Attention Model')
results = list()
for _ in range(n_repeats):
    model = attention_model(n_timesteps_in, n_features)
    accuracy = train_evaluate_model(model, n_timesteps_in, n_timesteps_out, n_features)
    results.append(accuracy)
    print(accuracy)
print('Mean Accuracy: %.2f%%' % (sum(results)/float(n_repeats)))

Great work!

I get the following error while importing the .py file

from attention_decoder import AttentionDecoder

ImportError Traceback (most recent call last)

in ()

----> 1 from attention_decoder import AttentionDecoder as ad

ImportError: cannot import name 'AttentionDecoder'

Sorry to hear that, I have some suggestions here:

https://machinelearningmastery.com/faq/single-faq/why-does-the-code-in-the-tutorial-not-work-for-me

Dimensions must be equal, but are 150 and 50 for ‘AttentionDecoder_1/MatMul_4’ (op: ‘MatMul’) with input shapes: [?,150], [50,150].

I am using Keras==2.0

and tensorflow==1.13.1

Please specify for which version code is fully functional.

Thanks in advance.

I recommend using the new attention layers in TensorFlow 2.

Hi @Ravi, @Jason,

Thanks for the great post. Is it possible to give variable timesteps as the input for RepeatVector for variable input length ?

For instance, instead of defining a fixed size of n_timesteps_in as 10, I want to read the entire input sequence as a whole.

model.add(RepeatVector(n_timesteps_in))

Not really.

I have a problem: I am building an NMT model for English to Hindi, but its predictions are very bad. How can I improve them?

Here are some suggestions:

https://machinelearningmastery.com/improve-deep-learning-performance/

here is verbose evaluation

for _ in range(5):
    X,y = get_pair(n_timesteps_in, n_timesteps_out, n_features,verbose=True)
    yhat = model.predict(X, verbose=0)
    print(one_hot_decode(yhat[0]))

[5, 5, 1, 6, 1, 4, 5, 0, 0, 0] [5, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[5, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[5, 5, 4, 7, 2, 1, 3, 0, 0, 0] [3, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[3, 5, 0, 0, 0, 0, 0, 0, 0, 0]

[3, 4, 7, 6, 3, 1, 3, 1, 1, 0] [1, 3, 0, 0, 0, 0, 0, 0, 0, 0]

[1, 3, 0, 0, 0, 0, 0, 0, 0, 0]

[1, 4, 1, 4, 7, 2, 2, 3, 4, 0] [4, 1, 0, 0, 0, 0, 0, 0, 0, 0]

[4, 1, 0, 0, 0, 0, 0, 0, 0, 0]

[1, 5, 1, 4, 7, 6, 3, 7, 7, 0] [7, 1, 0, 0, 0, 0, 0, 0, 0, 0]

[7, 1, 0, 0, 0, 0, 0, 0, 0, 0]

Awesome work Ravi!

Would you please upload these codes into https://gist.github.com?

First of all, thank you so much for this article; it was well worth reading. It clarified a lot for me about how to implement an autoencoder model in Keras.

I am just a little confused about one point that I hope you can explain. Why do you need to transform the original vector of integers into a 2D matrix containing a one hot vector for each integer? Can’t you just send the original vector of integers into the encoder as input?

Thank you again for this worthwhile article, Dr. Brownlee

You can, but the one hot encoding is richer and often results in better model skill.
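To make the two options concrete, here is a minimal NumPy sketch of the same sequence prepared both ways, each as a 3D array of shape (samples, timesteps, features). The sequence values and variable names are illustrative assumptions, not taken from the tutorial code.

```python
import numpy as np

sequence = [3, 5, 12, 10]  # illustrative integer sequence
n_unique = 50              # cardinality, as in the tutorial configuration

# raw integers: one feature per time step
as_integers = np.array(sequence).reshape((1, len(sequence), 1))

# one hot: n_unique features per time step, a single 1 marking the value
as_one_hot = np.eye(n_unique, dtype=int)[sequence].reshape((1, len(sequence), n_unique))

print(as_integers.shape)
print(as_one_hot.shape)
```

With raw integers the network sees one ordinal number per step, which implies an ordering between symbols that does not exist in this problem; the one hot form treats every symbol as its own category.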

Thank you Dr. Brownlee. Would one hot encoding still be the better choice when the cardinality is much greater than in this example? For instance, fitting an encoder on lots of text documents would result in a huge number of encoder keys.

In that case, it might be better to use a distributed representation like a word embedding:

https://machinelearningmastery.com/develop-word-embeddings-python-gensim/
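As a rough illustration of why an embedding scales better, the sketch below shows that looking up rows of an embedding matrix gives the same result as multiplying one hot vectors by that matrix, without ever building the large one hot array. The matrix is random and the sizes are arbitrary assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(1)
vocab_size, embed_dim = 1000, 8  # arbitrary sizes for illustration

# random stand-in for a learned embedding matrix
embedding_matrix = rng.normal(size=(vocab_size, embed_dim))
token_ids = np.array([3, 5, 12, 10])

# direct row lookup: shape (4, 8)
looked_up = embedding_matrix[token_ids]

# the equivalent one hot route: (4, 1000) @ (1000, 8)
one_hot = np.eye(vocab_size)[token_ids]
via_one_hot = one_hot @ embedding_matrix

print(looked_up.shape)
print(np.allclose(looked_up, via_one_hot))
```

The lookup touches only four rows, whereas the one hot route materializes a 4 by 1000 array that is almost entirely zeros.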

In the case of multiple LSTM layers, is the AttentionDecoder layer supposed to be added only once, after all of the LSTMs, or must it be inserted after each LSTM layer?

The attention is only used directly after the encoder.

Hi Jason, following up on Hendirk’s question, how can I stack multiple LSTM layers with attention? Do I initialise the first decoder layer as AttentionDecoder and follow it with Keras’s LSTM layers? Thanks for the super informative post!

Attention would only be required on the first level of the decoder. LSTM layers may then be added after that.

Usually, when people have 5 input and 2 output steps, we use

model.add(LSTM(size, input_shape=(n_timesteps_in, n_features)))

model.add(RepeatVector(n_timesteps_out)) # this is different from input steps

model.add(LSTM(size, return_sequences=True))

This makes sense, as suggested

“we need to repeat the single vector outputted from the encoder network to obtain a sequence which has the same length with the output sequences”.

Wonder if this must be changed.

Yes, the RepeatVector approach is not a pure encoder-decoder as defined in the first papers, but often performs as well or better in my experience.
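For readers unsure what the repeat step does to the encoder output, here is a NumPy sketch of the shape change: a single encoded vector per sample is copied once for every output time step. The `repeat_vector` function is a hypothetical stand-in written for illustration, not the Keras layer itself.

```python
import numpy as np

# hypothetical stand-in for the repeat step: (batch, units) -> (batch, steps, units)
def repeat_vector(encoded, n_timesteps_out):
    return np.repeat(encoded[:, np.newaxis, :], n_timesteps_out, axis=1)

# two samples, each encoded as a vector of 3 units (illustrative values)
encoded = np.arange(6, dtype=float).reshape((2, 3))
repeated = repeat_vector(encoded, 2)

print(encoded.shape)
print(repeated.shape)
```

Every time step of the decoder input is the same vector, so the number of repeats sets the length of the decoder's output sequence.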

Hi Jason,

Thanks for such a well explained post on this topic. You mention the limitation that output sequences are the same length as the input sequences in case of the attention encoder decoder model used.

Could you please give an idea what should be done in an attention based model when output and input lengths are not same? I was wondering if we can use a RepeatVector(output_timesteps) in the current attention model on the encoder output and then feed it to the AttentionDecoder?

This implementation of attention cannot handle input and output sequences with different lengths, sorry.

Hi Jason, if this implementation of attention cannot handle input and output sequences with different lengths, then it can’t be used for a language translation task, right? Please advise.

Probably not.

By running your example (the “with attention” part), I’ve gotten the following error:

ValueError: Dimensions must be equal, but are 150 and 50 for ‘AttentionDecoder/MatMul_4’ (op: ‘MatMul’) with input shapes: [?,150], [50,150].

Ensure you have the latest version of Keras.

My keras version is 2.0.2

Perhaps try 2.0.8 or higher?

also when I upgrade keras to 2.0.9

I got the following problem

from keras.layers.recurrent import Recurrent, _time_distributed_dense

“unresolved reference _time_distributed_dense”

Interesting, perhaps the example requires Keras 2.0.8. This was the version I used when developing the example.

Recurrent is not found in TensorFlow 2; I get an error when importing it:

ImportError: cannot import name 'Recurrent'

The line itself is “from tensorflow.keras.layers import Recurrent”.

How do you import that layer? Any idea?

I believe the above tutorial is not compatible with the latest version of the APIs.

Then how can I use this tutorial? I tried to find some ways, but failed.

Hi Woo…Please provide more detail regarding what exactly failed in your implementation of the code listings so that I can better assist you.

Hi Jason. thank you for your great tutorials. I have 2 questions:

1) is there any Dense layer after Decoder in Attention code?

2)should features input be equal to features output or not ( their length should be equal as you mentioned)?

thank you, again

Yes, there is normally a dense output after the decoder (or a part of the decoder).

Features can vary. Normally/often you would have more input features than output features.

Hi Jason,

from keras.models import Model,

How does Model() work in Keras?

Great question, you can learn more in this post:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Hi Jason,

thank you so much for this great tutorial, I’m actually trying to build an encoder with attention, so the attention should be in the encoder part, can you explain please how this can be adapted ?

Many thanks 🙂

Generally, attention is in the decoder, not the encoder. Sorry, I don’t have an example of an encoder with attention.

Hi Jason,

i’m trying to use this great implementation for seq2seq to encode text. I have a dialogue turn from user A that I’ll decode to get dialogue turn from user B. I am using the following code

seq2seq = Sequential()
seq2seq.add(Embedding(output_dim=args.emb_dim,
                      input_dim=MAX_NB_WORDS,
                      input_length=MAX_SEQUENCE_LENGTH,
                      weights=[embedding_matrix],
                      mask_zero=True,
                      trainable=True))
seq2seq.add(LSTM(units=args.hidden_size, return_sequences=True))
seq2seq.add(AttentionDecoder(args.hidden_size, args.emb_dim))
seq2seq.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['acc'])

But actually I don’t know how I can compare the decoded vector to the turn vector that I already have.

My dialogue vectors are already encoded using keras preprocessing text_to_sequence and padded.

Many thanks !

I assume you are outputting a sequence of integers. These integers must be mapped back to words using whatever scheme you used to encode your training data.
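A minimal sketch of that reverse mapping is shown below, assuming a word-to-integer dictionary like the `word_index` attribute that a fitted Keras `Tokenizer` exposes. The vocabulary here is a made-up example and `decode_sequence` is a hypothetical helper.

```python
# made-up vocabulary for illustration; 0 is assumed to be the padding value
word_index = {'hello': 1, 'how': 2, 'are': 3, 'you': 4}

# invert the mapping so integers can be looked up
index_word = {index: word for word, index in word_index.items()}

# hypothetical helper: map predicted integers back to words, skipping padding
def decode_sequence(int_sequence, index_word, pad_value=0):
    return ' '.join(index_word[i] for i in int_sequence if i != pad_value)

decoded = decode_sequence([1, 2, 3, 4, 0, 0], index_word)
print(decoded)
```

If the model outputs a probability vector per time step, an argmax over each vector gives the integers to pass to such a helper.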

Hi, Jason

Thanks for this tutorial. I’m trying a word embedding seq2seq model. But I’m stuck with how to build the model.

I use tokenizer and pad_sequences to encode Xtrain and ytrain, and then processing ytrain through to_categorical.

The format of input fed into the model is just like the ones in this tutorial: 1 input of Xtrain and ytrain for each epoch.

And it seems there’s something wrong with the embedding layer. But I can’t figure out why.

model = Sequential()

model.add(Embedding(vocab_size, 150, input_length=max_length))

model.add(Bidirectional(LSTM(150, return_sequences=True)))

model.add(AttentionDecoder(150, n_features))

model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['acc'])

ValueError: Error when checking input: expected embedding_8_input to have shape (None, 148) but got array with shape (148, 1)

Sorry, I have another question. Can I just fit the model directly instead of using for loop to train the model for each epoch?

If I just fit the model directly, I got another error message:

Error when checking target: expected AttentionDecoder to have 3 dimensions, but got array with shape (200, 1321)

Thank you very much.

You can, but you must change the shape of the data you are feeding to the network.

This post might help to get you started with embedding layers:

https://machinelearningmastery.com/use-word-embedding-layers-deep-learning-keras/

Remember to one hot encode your input data.
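The second error message above reports a 2D target where the decoder expects three dimensions. One possible reading is that the targets need to be one hot encoded per time step, giving an array of shape (samples, timesteps, vocabulary size). The sketch below shows that reshaping with NumPy; `one_hot_targets`, the toy sequences, and the vocabulary size are assumptions for illustration.

```python
import numpy as np

# hypothetical helper: (samples, timesteps) integers -> (samples, timesteps, vocab_size)
def one_hot_targets(padded_sequences, vocab_size):
    padded_sequences = np.asarray(padded_sequences)
    return np.eye(vocab_size, dtype='float32')[padded_sequences]

# two padded integer target sequences (toy data, 0 is padding)
y_int = np.array([[4, 2, 0, 0],
                  [1, 3, 5, 0]])
y = one_hot_targets(y_int, vocab_size=6)

print(y_int.shape)
print(y.shape)
```

With all samples stacked into one array like this, a single call to `model.fit` over the whole dataset can replace the loop that fits one sample at a time.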

Hi, Jason

Thanks for your suggestion. and it works. However, I change the problem in this tutorial a little bit, and get stuck again.

In this tutorial, the definition of the problem is given Xtrain, for example, [3, 5, 12, 10, 18, 20], and then, we echo the first two element, so the ytrain looks like [3, 5, 0, 0, 0, 0].

Now, I want to find the specific continuous two numbers in a sequence but those two continuous numbers are located at different location within each sequence.

For example, what I want is [16, Z] where Z is any number, and [16, Z] is within an sequence, Xtrain.

So, Xtrain and ytrain look like:

Xtrain ytrain

[3, 1, 10, 14, 8, 20, 16, 7, 9, 19] [16, 7, 0, 0, 0, 0, 0, 0, 0, 0]

[6, 1, 23, 16, 9, 12, 22, 8, 0, 17] [16, 9, 0, 0, 0, 0, 0, 0, 0, 0]

[9, 13, 15, 12, 16, 2, 5, 1, 10, 8] [16, 2, 0, 0, 0, 0, 0, 0, 0, 0]

I think the key point is to transform the format of Xtrain and ytrain. One-hot encoding Xtrain remains the same just like this tutorial. But right now I have no idea how to fit ytrain into the model. I tried several ways to transform the format of ytrain, such as,

1. One-hot encoding ytrain, but it doesn’t work.

2. One-hot encoding the location of [16, Z], but it seems nonsense.

3. Changing the format of ytrain to, for example, [0, 0, 0, 0, 0, 0 16, 7, 0, 0], and then one-hot encoding this sequence, but it

still doesn’t work.

Do you have any suggestion or idea on such problem? Thank you very much.

You could model it as a summarization task with the full sequence in and 2 elements out.

For that you can use an encoder-decoder without attention.
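As a sketch of how the targets for such a task might be built, the helper below finds the marker value and returns it together with the element that follows. The name `make_target`, its parameters, and the padding behaviour when the marker is the last element are assumptions; the three sequences are the examples given in the question.

```python
# hypothetical helper: return the marker and the element that follows it
def make_target(sequence, marker=16, n_out=2, pad_value=0):
    position = sequence.index(marker)  # raises ValueError if the marker is absent
    target = sequence[position:position + n_out]
    # pad if the marker sits at the very end of the sequence
    return target + [pad_value] * (n_out - len(target))

examples = [
    [3, 1, 10, 14, 8, 20, 16, 7, 9, 19],
    [6, 1, 23, 16, 9, 12, 22, 8, 0, 17],
    [9, 13, 15, 12, 16, 2, 5, 1, 10, 8],
]
targets = [make_target(seq) for seq in examples]
for seq, target in zip(examples, targets):
    print(seq, target)
```

Each two-element target can then be one hot encoded and used as the output of an encoder-decoder with two output time steps.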

Is there an updated version of this example that uses TimeDistributed in Keras instead of _time_distributed_dense ?

Not at this stage, I am waiting for attention to be officially supported by Keras.

About the _time_distributed_dense problem: I copied the code of the _time_distributed_dense function from the keras/python/keras/layers/recurrent.py file into the attention_decoder.py file (before the AttentionDecoder class) and Jason’s code worked for me.

Nice!

Can you send me the code to copy into the AttentionDecoder class? ninjajake@gmail.com

This trick saved my day. For anyone who wants the code: https://github.com/keras-team/keras/blob/b587aeee1c1be3633a56b945af3e7c2c303369ca/keras/layers/recurrent.py

Keras.io now has an attention layer: https://keras.io/api/layers/attention_layers/attention/

Was that the one you referred to? I didn’t completely understand which arguments to use between the encoder and decoder. Is there an example available?

Hi Jason,

Many thanks for the excellent post (as always!)

I was wondering: could we learn a model for continuous data? That is, instead of one-hot encoding the input and the output, could we feed in the raw sequence? I ask because I have not yet seen a seq2seq model with attention for continuous data. I was thinking of writing a simple model based on your post to denoise a sine signal. It should be a case of seq2seq on same-length sequences.

Sure, but it may be a harder problem for the model to learn.

Thanks! When I used the code in this blog post as is (with attention), I didn’t see any reduction in the loss when using continuous data. That’s when I wondered whether the attention implementation shared here only works for discrete data.

Nice.

No, it is independent of the data.

Hey Nipun Batra… I am doing my final year project based on continuous data. I was wondering if you could share the encoder-decoder code that you got working for your purpose. Thank you, Shreya

How should the decoder distinguish between 0 and an empty row

(zero is a one-hot encoded vector that has a 1 in the first entry, and empty is a row of zeros)?

Sorry, not sure I follow. Are you able to give more context?
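One reading of the question is that argmax-style decoding cannot tell the two rows apart, because the largest value of an all-zero row is also found at index 0. The sketch below shows the ambiguity and one possible workaround; `decode_with_empty` and its threshold are hypothetical helpers for illustration, not part of the tutorial.

```python
def one_hot_decode(encoded_seq):
    """Argmax decoding: index of the largest value in each row."""
    return [row.index(max(row)) for row in encoded_seq]

def decode_with_empty(encoded_seq, threshold=0.5):
    """Hypothetical variant: rows whose largest value is below the threshold decode to None."""
    return [row.index(max(row)) if max(row) >= threshold else None
            for row in encoded_seq]

zero_row = [1, 0, 0, 0, 0]   # the integer 0, one-hot encoded
empty_row = [0, 0, 0, 0, 0]  # a padding row of zeros

plain = one_hot_decode([zero_row, empty_row])      # both rows decode to 0
marked = decode_with_empty([zero_row, empty_row])  # the empty row decodes to None
print(plain, marked)
```

Note that a softmax output layer never produces an all-zero row, so a check like this only applies to inputs and targets. Reserving a dedicated padding value in the vocabulary is another option.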

One more

If the number of input features differs from the number of output features, we have to change this line:

model.add(TimeDistributed(Dense(n_features, activation='softmax')))

Should we do more?

Features or time steps in the output?

My question was about n_features (when it differs between input and output), but I believe that the length of the sequence matters too. I resolved the first case as I wrote above, but I am not sure that is enough.

Hi Jason,

It’s a great demonstration and thank you very much for that.

I am wondering if you are aware of any way to get back the attention vector *at*? Since it is not one of the model’s parameters, accessing it via keras.backend.get_value() doesn’t seem to work. Thank you.

Hi Jason,

Great tutorial! This really helped my understanding a lot. May I ask how to modify this attention seq2seq to a batched version? Thanks!

How would the batch approach to updates impact attention?

Hi Jason,

In the step function in AttentionDecoder, can we use the Keras LSTM layer instead of building it from scratch?

Not sure I follow. Perhaps try it.

It seems that the poor score without attention is mostly due to an optimization problem. I am able to achieve >95% accuracy without attention by just using a reasonable batch size (32).

Nice, thanks for the note Paul. What config did you use?

I just wanted to let you know your hard work on this site is appreciated. It’s been incredibly helpful for me learning something so complex 😀

Thank you so much!

Thanks, I’m glad to hear that.

Hi, thanks for the website. It is really saving me on my bachelor’s thesis.

Can we train an SVM using the context vector?

You’re welcome.

Sure. To what end?

Hi Jason,

I ran into an issue when running the code.

Traceback (most recent call last):

File "gutils.py", line 50, in

model.add(AttentionDecoder(150, n_features))

……

# calculate the attention probabilities

# this relates how much other timesteps contributed to this one.

et = K.dot(activations.tanh(_Wxstm + self._uxpb),

K.expand_dims(self.V_a))

……

File “/home/wanjb/anaconda3/lib/python3.6/site-packages/tensorflow/python/framework/tensor_util.py”, line 421, in make_tensor_proto

raise ValueError("None values not supported.")

ValueError: None values not supported.

My environment:

Anaconda: conda 4.4.10

Python 3.6.4 :: Anaconda, Inc.

Could you have a look at this? Thanks!

Sorry to hear that:

– Are you able to confirm that TensorFlow and Keras are up to date?

– Are you able to confirm that you copied all of the code?

– Are you able to confirm that you are running the code from the command line?

Hi Jason,

The problem has been solved as described by ‘Denis January 12, 2018 at 3:50 am’.

Thank you, Denis!

Great!

Hi

name ‘K’ is not defined

Thank you very much for yet another great post! Would this architecture be suitable for time series forecasting where you have sequences of multiple features to forecast a sequence of a single target? The feature sequences are longer than the target sequence to be forecast.

All the examples I have seen so far show one feature sequence as input and one target sequence as output.

Maybe, try it and see.

Hi Jason,

Thank you for this information.

I have one question.

Can I use this implementation in my translation model?

I use an encoder-decoder as follows:

“””””

embedded_output = embedding_layer(embedding_input)

# ================================ Encoder ================================

encoder = LSTM(lstm_units, return_sequences=True, return_state=True, name='encoder')

encoder_outputs, state_h, state_c = encoder(embedded_output)

encoder_states = [state_h, state_c]

#….

embedding_Ar_input = Input(shape=(MAX_Ar_SEQUENCE_LENGTH,))

embedded_Ar_output = embedding_Ar_layer(embedding_Ar_input)

# ================================ Decoder ================================

# We set up our decoder to return full output sequences,

decoder_lstm = LSTM(lstm_units, return_sequences=True, return_state=True, name='decoder')

decoder_outputs, _, _ = decoder_lstm(embedded_Ar_output, initial_state=encoder_states)

# SoftMax

decoder_dense = Dense(output_vector_length, activation='softmax', name='softmax')

outputs_model = decoder_dense(attention)

“””””

And what is n_features? What does it represent?

Does n_features mean the maximum decoder sequence length?

Hi Jason, can you tell me what verbose actually does?

It turns on output during training so that you can see what the model is doing during training (e.g. skill and progress).

So, what is the difference between verbose=1, verbose=2 and none? And which attention mechanism is best for machine translation?

Verbose 0 turns off verbose output, verbose 1 gives a progress bar, verbose 2 gives one line per epoch.

See this post on good NMT architectures:

https://machinelearningmastery.com/configure-encoder-decoder-model-neural-machine-translation/

Hi, can we use this model to translate from one language to another?

Here is an example:

https://machinelearningmastery.com/develop-neural-machine-translation-system-keras/

Thanks for the wonderful tutorial.

In the custom Keras attention layer (AttentionDecoder class), could you let me know why you compute the predicted word (yt) at time step t as a weighted sum of the previously generated word (ytm), the previous hidden state (stm), and the calculated context vector (context)?

What you implemented is as follows:

yt = activations.softmax(

K.dot(ytm, self.W_o)

+ K.dot(stm, self.U_o)

+ K.dot(context, self.C_o)

+ self.b_o)

I couldn’t find any mention of this except the very first definition for calculating the next word: P(yt|y1,…yt-1, X) = g(yi-1, si, ci)

I am not sure whether this equation corresponds to the way you calculate yt.
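For reference, the quoted code line can be written out as the equation below. This is only a transcription of that line, with the symbols taken from the variable names in the comment (ytm as y_{t-1}, stm as s_{t-1}, context as c_t); it is not a statement about the layer author’s reasoning. Read this way, the line is one concrete choice of the function g: a softmax over a linear combination of its arguments, where the quoted code passes the previous hidden state rather than the current one.

```latex
% General form quoted in the comment, written with a single time index t
p(y_t \mid y_1, \ldots, y_{t-1}, X) = g(y_{t-1}, s_t, c_t)

% Transcription of the quoted code line
y_t = \operatorname{softmax}\left( y_{t-1} W_o + s_{t-1} U_o + c_t C_o + b_o \right)
```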

As mentioned, I did not implement the custom attention layer. I am not the best person to answer questions about it.

Hi Jason, I am trying to understand LSTMs and I am very new to them. Could you please explain the following code in simpler terms:

# define model

model = Sequential()

model.add(LSTM(150, input_shape=(n_timesteps_in, n_features)))

model.add(RepeatVector(n_timesteps_in))

model.add(LSTM(150, return_sequences=True))

model.add(TimeDistributed(Dense(n_features, activation='softmax')))

I understand the RepeatVector and TimeDistributed parts. What I do not understand are the 150 hidden units. Do they have to be the same in both layers? And what happens if there is only 1? It would be nice if you had a source with a visual explanation of the structure. Thank you in advance.

You can change the number of units to anything you wish.

How could we apply this to multivariate time series?

Sure.

Hi Jason!

I am trying to understand image captioning with an attention model. I have seen your tutorial on image captioning. Can you please suggest some resources so that I can implement it using an attention model in Keras?

Thank you!

I am waiting for Keras to get official support for attention.

Hi Jason !

I have gone through your tutorial on image captioning at the following link.

https://machinelearningmastery.com/develop-a-deep-learning-caption-generation-model-in-python/

Can we use the attention model you give here for image captioning, where a CNN is used as the encoder and an RNN is used as the decoder?

Please advise.

Thank You.

Perhaps.

I hope to give more examples of attention when Keras officially supports it.

Thanks for the wonderful tutorials. I learned a lot from your site.

I tried your code. It seems that simply removing the first LSTM from the baseline model gives perfect predictions for this example. I am not sure the attention layer is necessary here.

model = Sequential()

model.add(LSTM(150, input_shape=(n_timesteps_in, n_features), return_sequences=True))

model.add(Dense(n_features, activation='softmax'))

It may not be, it’s just a demonstration.

Hi Jason, I want to build a model with a bidirectional LSTM encoder and a bidirectional LSTM decoder. Is it theoretically possible to use Bi-LSTMs in your proposed models?

Sure.

Hi J! Thanks for a great hands-on tutorial … It works as intended and results are indeed improved with attention… however, when examining the at vector from attention_decoder, it does not show the desired activations…

Example:

Input: [29, 9, 47, 0, 12], output: [29, 9, 0, 0, 0] (correct)

at vector at first output (rounded): [6.2*10^(-12), 5.6*10^(-7), 1.5, 90.0, 8.4]

I would have expected the first of these numbers to be the greatest, as it should influence the output more than the remaining four… What do you think about this? Could you inspect the at vector and see if you get the same results?

Nice observation, it may need further investigation.

I am seeing the same thing too. Most of the probability is on the last 3 digits and it is never on the first 2 digits.
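When inspecting attention weights like these, it can help to remember that weights produced by a softmax are non-negative and sum to one. The vector quoted above sums to roughly 99.9, so the sketch below assumes it was reported as percentages; that reading is a guess, and the helper is a plain-Python illustration rather than the layer’s own code.

```python
from math import exp

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    largest = max(scores)
    exps = [exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Values quoted in the comment above, assumed here to be percentages.
reported = [6.2e-12, 5.6e-7, 1.5, 90.0, 8.4]
as_probabilities = [v / 100.0 for v in reported]

# Index of the input time step that receives the most attention.
peak = max(range(len(reported)), key=lambda i: reported[i])

print(sum(as_probabilities))     # close to 1 under the percentage assumption
print(peak)                      # position of the largest weight
print(softmax([0.5, 2.0, 1.0]))  # any softmax output sums to 1
```

Under that assumption, the largest weight sits on the fourth input position (index 3) while the first output is produced, which matches the observation that the weight is not on the first two positions.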

Thanks Jason for the great tutorial! Very helpful.

You’re welcome.

Great work, Jason!

You’re welcome.

Hi Jason,

Thanks for the wonderful post. I have a small query. For context, I am working on text data, and in my case the input and output lengths are quite different. I wanted to check whether we can tweak this code so that attention can be applied where the encoder and decoder have different lengths. It would be helpful if you could direct me to a resource where this has been implemented, or guide me on how to change the Attention class to incorporate this.

Thanks,

Nilanjan

You might have to use a different implementation of attention.

Hi Jason,

Many thanks for the very helpful posts. I have also gone through your image captioning code:

def define_model(vocab_size, max_length):

# feature extractor model

inputs1 = Input(shape=(4096,))

fe1 = Dropout(0.5)(inputs1)

fe2 = Dense(256, activation='relu')(fe1)

# sequence model

inputs2 = Input(shape=(max_length,))

se1 = Embedding(vocab_size, 256, mask_zero=True)(inputs2)

se2 = Dropout(0.5)(se1)

se3 = LSTM(256)(se2)

# decoder model

decoder1 = add([fe2, se3])

decoder2 = Dense(256, activation='relu')(decoder1)