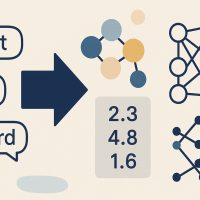

Word embeddings provide a dense representation of words and their relative meanings.

They are an improvement over the sparse representations used in simpler bag-of-words models.

Word embeddings can be learned from text data and reused among projects. They can also be learned as part of fitting a neural network on text data.

In this tutorial, you will discover how to use word embeddings for deep learning in Python with Keras.

After completing this tutorial, you will know:

- About word embeddings and that Keras supports word embeddings via the Embedding layer.

- How to learn a word embedding while fitting a neural network.

- How to use a pre-trained word embedding in a neural network.

Let’s get started.

- Updated Feb/2018: Fixed a bug due to a change in the underlying APIs.

- Updated Oct/2019: Updated for Keras 2.3 and TensorFlow 2.0.

How to Use Word Embedding Layers for Deep Learning with Keras

Photo by thisguy, some rights reserved.

Tutorial Overview

This tutorial is divided into 4 parts; they are:

- Word Embedding

- Keras Embedding Layer

- Example of Learning an Embedding

- Example of Using Pre-Trained GloVe Embedding

1. Word Embedding

A word embedding is a class of approaches for representing words and documents using a dense vector representation.

It is an improvement over the more traditional bag-of-words model encoding schemes, where large sparse vectors were used to represent each word or to score each word within a vector to represent an entire vocabulary. These representations were sparse because the vocabularies were vast and a given word or document would be represented by a large vector composed mostly of zero values.

Instead, in an embedding, words are represented by dense vectors where a vector represents the projection of the word into a continuous vector space.

The position of a word within the vector space is learned from text and is based on the words that surround the word when it is used.

The position of a word in the learned vector space is referred to as its embedding.
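To make the contrast between sparse and dense representations concrete, here is a minimal sketch in plain Python. The four-word vocabulary and the 2-dimensional dense values are made up for illustration; real embeddings are learned from text and have many more dimensions.

```python
import math

vocab = ["good", "great", "poor", "work"]

def one_hot_vector(word):
    """Sparse encoding: one position per vocabulary word, a single 1."""
    return [1.0 if w == word else 0.0 for w in vocab]

# Hypothetical dense vectors with hand-picked values (not learned).
dense = {
    "good":  [0.9, 0.1],
    "great": [0.8, 0.2],
    "poor":  [-0.7, 0.1],
    "work":  [0.0, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# One-hot vectors of two different words never overlap, so similarity is 0.
sparse_sim = cosine(one_hot_vector("good"), one_hot_vector("great"))

# Dense vectors can place related words close together.
dense_sim_related = cosine(dense["good"], dense["great"])
dense_sim_unrelated = cosine(dense["good"], dense["poor"])

print(sparse_sim, dense_sim_related, dense_sim_unrelated)
```

The sparse vectors grow with the vocabulary and say nothing about relatedness, whereas the dense vectors stay small and their geometry can carry meaning.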

Two popular examples of methods of learning word embeddings from text include:

- Word2Vec.

- GloVe.

In addition to these carefully designed methods, a word embedding can be learned as part of a deep learning model. This can be a slower approach, but tailors the model to a specific training dataset.

2. Keras Embedding Layer

Keras offers an Embedding layer that can be used for neural networks on text data.

It requires that the input data be integer encoded, so that each word is represented by a unique integer. This data preparation step can be performed using the Tokenizer API also provided with Keras.

The Embedding layer is initialized with random weights and will learn an embedding for all of the words in the training dataset.

It is a flexible layer that can be used in a variety of ways, such as:

- It can be used alone to learn a word embedding that can be saved and used in another model later.

- It can be used as part of a deep learning model where the embedding is learned along with the model itself.

- It can be used to load a pre-trained word embedding model, a type of transfer learning.

The Embedding layer is defined as the first hidden layer of a network. It must specify 3 arguments:

- input_dim: This is the size of the vocabulary in the text data. For example, if your data is integer encoded to values between 0-10, then the size of the vocabulary would be 11 words.

- output_dim: This is the size of the vector space in which words will be embedded. It defines the size of the output vectors from this layer for each word. For example, it could be 32 or 100 or even larger. Test different values for your problem.

- input_length: This is the length of input sequences, as you would define for any input layer of a Keras model. For example, if all of your input documents are comprised of 1000 words, this would be 1000.

For example, below we define an Embedding layer with a vocabulary of 200 (e.g. integer encoded words from 0 to 199, inclusive), a vector space of 32 dimensions in which words will be embedded, and input documents that have 50 words each.

```python
e = Embedding(200, 32, input_length=50)
```

The Embedding layer has weights that are learned. If you save your model to file, this will include weights for the Embedding layer.

The output of the Embedding layer is a 2D matrix with one embedding vector for each word in the input sequence of words (input document).

If you wish to connect a Dense layer directly to an Embedding layer, you must first flatten the 2D output matrix to a 1D vector using the Flatten layer.
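The shapes involved can be sketched in plain Python. This only mimics what a lookup followed by a flatten computes, using the same sizes as the layer defined above. It is not how Keras implements the layer, and the random range used for the weights is an arbitrary choice.

```python
import random

input_dim = 200      # vocabulary size (integers 0..199)
output_dim = 32      # size of each word vector
input_length = 50    # words per document

random.seed(1)
# The layer's weights: one row of output_dim values per vocabulary entry.
weights = [[random.uniform(-0.05, 0.05) for _ in range(output_dim)]
           for _ in range(input_dim)]

# One integer-encoded document of input_length word indices.
document = [random.randrange(input_dim) for _ in range(input_length)]

# Lookup: each integer selects its row, giving input_length x output_dim.
embedded = [weights[i] for i in document]

# Flatten: concatenate the rows into a single 1D vector.
flattened = [value for row in embedded for value in row]

print(len(embedded), len(embedded[0]))  # 50 32
print(len(flattened))                   # 1600
```

Flattening is nothing more than concatenation, which is why the number of words per document has to be fixed before a Dense layer can follow.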

Now, let’s see how we can use an Embedding layer in practice.

3. Example of Learning an Embedding

In this section, we will look at how we can learn a word embedding while fitting a neural network on a text classification problem.

We will define a small problem where we have 10 text documents, each with a comment about a piece of work a student submitted. Each text document is classified as positive “1” or negative “0”. This is a simple sentiment analysis problem.

First, we will define the documents and their class labels.

```python
# define documents
docs = ['Well done!',
        'Good work',
        'Great effort',
        'nice work',
        'Excellent!',
        'Weak',
        'Poor effort!',
        'not good',
        'poor work',
        'Could have done better.']
# define class labels
labels = array([1,1,1,1,1,0,0,0,0,0])
```

Next, we can integer encode each document. This means that as input the Embedding layer will have sequences of integers. We could also experiment with other, more sophisticated bag-of-words encodings such as counts or TF-IDF.

Keras provides the one_hot() function that creates a hash of each word as an efficient integer encoding. We will set the vocabulary size to 50, which is much larger than needed, to reduce the probability of collisions from the hash function.

```python
# integer encode the documents
vocab_size = 50
encoded_docs = [one_hot(d, vocab_size) for d in docs]
print(encoded_docs)
```
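To see why the vocabulary size is over-estimated, here is a rough sketch of the hashing idea in plain Python. It uses md5 so that results are repeatable between runs, which is an assumption made for illustration, so it will not reproduce the integers Keras prints. Keeping 0 free for padding is also an assumption, although it is consistent with the sample output in this tutorial. The helper names are hypothetical.

```python
import hashlib

def split_words(text):
    # Simplified cleaning: lowercase and drop the punctuation used in this tutorial.
    return text.lower().replace("!", " ").replace(".", " ").split()

def hash_word(word, n):
    # Stable hash mapped into 1..n-1, leaving 0 free for padding.
    digest = hashlib.md5(word.encode("utf8")).hexdigest()
    return int(digest, 16) % (n - 1) + 1

def hash_encode(text, n):
    return [hash_word(w, n) for w in split_words(text)]

def collisions(words, n):
    # Group distinct words that end up sharing an integer.
    buckets = {}
    for w in sorted(set(words)):
        buckets.setdefault(hash_word(w, n), []).append(w)
    return {k: v for k, v in buckets.items() if len(v) > 1}

docs = ['Well done!', 'Good work', 'Great effort', 'nice work', 'Excellent!']
encoded = [hash_encode(d, 50) for d in docs]
print(encoded)

vocab = [w for d in docs for w in split_words(d)]
print(collisions(vocab, 50))  # may well be empty with 49 available integers
print(collisions(vocab, 3))   # only 2 available integers, so clashes are certain
```

Collisions are not just theoretical: in the sample output printed later in this section, "Good work" and "nice work" both encode to [42, 24], so "good" and "nice" share an integer and therefore share an embedding.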

The sequences have different lengths, and Keras prefers inputs to be vectorized and all inputs to have the same length. We will pad all input sequences to a length of 4. Again, we can do this with a built-in Keras function, in this case the pad_sequences() function.

```python
# pad documents to a max length of 4 words
max_length = 4
padded_docs = pad_sequences(encoded_docs, maxlen=max_length, padding='post')
print(padded_docs)
```
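For intuition, post-padding can be written in a few lines of plain Python. The function below is a hypothetical stand-in, not the Keras implementation. It keeps the last maxlen items of longer sequences, which matches the default 'pre' truncation of pad_sequences().

```python
def pad_post(sequences, maxlen, value=0):
    """Right-pad each sequence with `value` up to maxlen.

    Sequences longer than maxlen keep their last maxlen items.
    """
    padded = []
    for seq in sequences:
        seq = list(seq)[-maxlen:]
        padded.append(seq + [value] * (maxlen - len(seq)))
    return padded

encoded = [[6, 16], [18], [49, 46, 16, 34], [1, 2, 3, 4, 5]]
padded = pad_post(encoded, 4)
print(padded)
# [[6, 16, 0, 0], [18, 0, 0, 0], [49, 46, 16, 34], [2, 3, 4, 5]]
```

The value 0 is used as filler, which is why word integers normally start at 1.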

We are now ready to define our Embedding layer as part of our neural network model.

The Embedding has a vocabulary of 50 and an input length of 4. We will choose a small embedding space of 8 dimensions.

The model is a simple binary classification model. Importantly, the output from the Embedding layer will be 4 vectors of 8 dimensions each, one for each word. We flatten this to one 32-element vector to pass on to the Dense output layer.

```python
# define the model
model = Sequential()
model.add(Embedding(vocab_size, 8, input_length=max_length))
model.add(Flatten())
model.add(Dense(1, activation='sigmoid'))
# compile the model
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
# summarize the model
print(model.summary())
```

Finally, we can fit and evaluate the classification model.

```python
# fit the model
model.fit(padded_docs, labels, epochs=50, verbose=0)
# evaluate the model
loss, accuracy = model.evaluate(padded_docs, labels, verbose=0)
print('Accuracy: %f' % (accuracy*100))
```

The complete code listing is provided below.

```python
from numpy import array
from keras.preprocessing.text import one_hot
from keras.preprocessing.sequence import pad_sequences
from keras.models import Sequential
from keras.layers import Dense
from keras.layers import Flatten
from keras.layers import Embedding
# define documents
docs = ['Well done!',
        'Good work',
        'Great effort',
        'nice work',
        'Excellent!',
        'Weak',
        'Poor effort!',
        'not good',
        'poor work',
        'Could have done better.']
# define class labels
labels = array([1,1,1,1,1,0,0,0,0,0])
# integer encode the documents
vocab_size = 50
encoded_docs = [one_hot(d, vocab_size) for d in docs]
print(encoded_docs)
# pad documents to a max length of 4 words
max_length = 4
padded_docs = pad_sequences(encoded_docs, maxlen=max_length, padding='post')
print(padded_docs)
# define the model
model = Sequential()
model.add(Embedding(vocab_size, 8, input_length=max_length))
model.add(Flatten())
model.add(Dense(1, activation='sigmoid'))
# compile the model
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
# summarize the model
print(model.summary())
# fit the model
model.fit(padded_docs, labels, epochs=50, verbose=0)
# evaluate the model
loss, accuracy = model.evaluate(padded_docs, labels, verbose=0)
print('Accuracy: %f' % (accuracy*100))
```

Running the example first prints the integer encoded documents.

```
[[6, 16], [42, 24], [2, 17], [42, 24], [18], [17], [22, 17], [27, 42], [22, 24], [49, 46, 16, 34]]
```

Then the padded versions of each document are printed, making them all uniform length.

```
[[ 6 16  0  0]
 [42 24  0  0]
 [ 2 17  0  0]
 [42 24  0  0]
 [18  0  0  0]
 [17  0  0  0]
 [22 17  0  0]
 [27 42  0  0]
 [22 24  0  0]
 [49 46 16 34]]
```

After the network is defined, a summary of the layers is printed. We can see that as expected, the output of the Embedding layer is a 4×8 matrix and this is squashed to a 32-element vector by the Flatten layer.

```
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
embedding_1 (Embedding)      (None, 4, 8)              400
_________________________________________________________________
flatten_1 (Flatten)          (None, 32)                0
_________________________________________________________________
dense_1 (Dense)              (None, 1)                 33
=================================================================
Total params: 433
Trainable params: 433
Non-trainable params: 0
_________________________________________________________________
```
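The parameter counts in the summary can be checked by hand. The small helper functions below are hypothetical names used only for this arithmetic.

```python
def embedding_params(vocab_size, output_dim):
    # One vector of output_dim weights per vocabulary entry.
    return vocab_size * output_dim

def dense_params(n_inputs, n_units):
    # One weight per input per unit, plus one bias per unit.
    return n_inputs * n_units + n_units

vocab_size, output_dim, max_length = 50, 8, 4
emb = embedding_params(vocab_size, output_dim)   # 50 * 8 = 400
flat = max_length * output_dim                   # 4 * 8 = 32 values after Flatten
dense = dense_params(flat, 1)                    # 32 + 1 = 33
total = emb + dense
print(emb, flat, dense, total)                   # 400 32 33 433
```

Note that the Embedding layer's parameter count depends on the vocabulary size and vector size only, not on the length of the input sequences.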

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Finally, the accuracy of the trained model is printed, showing that it learned the training dataset perfectly (which is not surprising).

```
Accuracy: 100.000000
```

You could save the learned weights from the Embedding layer to file for later use in other models.

You could also use this model generally to classify other documents that have the same kind of vocabulary seen in the training dataset.
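Saving a learned embedding could look roughly like the sketch below. It writes one "word v1 v2 ..." line per word, the same layout that GloVe text files use. The word index and the 3-dimensional weights here are made-up stand-ins: in a real script the matrix would come from the trained model (for example, model.layers[0].get_weights()[0]), and you would also need to keep the word-to-integer mapping used for encoding.

```python
import io

def save_embedding(word_index, matrix, handle):
    """Write one 'word v1 v2 ...' line per word."""
    for word, i in word_index.items():
        handle.write(word + " " + " ".join(repr(v) for v in matrix[i]) + "\n")

def load_embedding(handle):
    """Read the same layout back into a dict of word -> list of floats."""
    vectors = {}
    for line in handle:
        parts = line.split()
        vectors[parts[0]] = [float(v) for v in parts[1:]]
    return vectors

# Hypothetical word index and weights (row 0 is the unused padding row).
word_index = {"work": 1, "done": 2}
matrix = [[0.0, 0.0, 0.0], [0.1, -0.2, 0.3], [0.5, 0.25, -0.75]]

buffer = io.StringIO()   # a real script would open a file instead
save_embedding(word_index, matrix, buffer)
buffer.seek(0)
restored = load_embedding(buffer)
print(restored)
```

A plain text format like this is easy to inspect and can be loaded by the same code used for pre-trained embeddings in the next section.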

Next, let’s look at loading a pre-trained word embedding in Keras.

4. Example of Using Pre-Trained GloVe Embedding

The Keras Embedding layer can also use a word embedding learned elsewhere.

It is common in the field of Natural Language Processing to learn, save, and make freely available word embeddings.

For example, the researchers behind the GloVe method provide a suite of pre-trained word embeddings on their website, released under a public domain license.

The smallest package of embeddings is 822 MB, called “glove.6B.zip”. It was trained on a dataset of six billion tokens (words) with a vocabulary of 400 thousand words. There are a few different embedding vector sizes, including 50, 100, 200 and 300 dimensions.

You can download this collection of embeddings and we can seed the Keras Embedding layer with weights from the pre-trained embedding for the words in your training dataset.

This example is inspired by an example in the Keras project: pretrained_word_embeddings.py.

After downloading and unzipping, you will see a few files, one of which is “glove.6B.100d.txt”, which contains a 100-dimensional version of the embedding.

If you peek inside the file, you will see a token (word) followed by the weights (100 numbers) on each line. For example, below is the first line of the embedding ASCII text file, showing the embedding for “the”.

```
the -0.038194 -0.24487 0.72812 -0.39961 0.083172 0.043953 -0.39141 0.3344 -0.57545 0.087459 0.28787 -0.06731 0.30906 -0.26384 -0.13231 -0.20757 0.33395 -0.33848 -0.31743 -0.48336 0.1464 -0.37304 0.34577 0.052041 0.44946 -0.46971 0.02628 -0.54155 -0.15518 -0.14107 -0.039722 0.28277 0.14393 0.23464 -0.31021 0.086173 0.20397 0.52624 0.17164 -0.082378 -0.71787 -0.41531 0.20335 -0.12763 0.41367 0.55187 0.57908 -0.33477 -0.36559 -0.54857 -0.062892 0.26584 0.30205 0.99775 -0.80481 -3.0243 0.01254 -0.36942 2.2167 0.72201 -0.24978 0.92136 0.034514 0.46745 1.1079 -0.19358 -0.074575 0.23353 -0.052062 -0.22044 0.057162 -0.15806 -0.30798 -0.41625 0.37972 0.15006 -0.53212 -0.2055 -1.2526 0.071624 0.70565 0.49744 -0.42063 0.26148 -1.538 -0.30223 -0.073438 -0.28312 0.37104 -0.25217 0.016215 -0.017099 -0.38984 0.87424 -0.72569 -0.51058 -0.52028 -0.1459 0.8278 0.27062
```

As in the previous section, the first step is to define the examples, encode them as integers, then pad the sequences to be the same length.

In this case, we need to be able to map words to integers as well as integers to words.

Keras provides a Tokenizer class that can be fit on the training data, can convert text to sequences consistently by calling the texts_to_sequences() method on the Tokenizer class, and provides access to the dictionary mapping of words to integers in a word_index attribute.

```python
# define documents
docs = ['Well done!',
        'Good work',
        'Great effort',
        'nice work',
        'Excellent!',
        'Weak',
        'Poor effort!',
        'not good',
        'poor work',
        'Could have done better.']
# define class labels
labels = array([1,1,1,1,1,0,0,0,0,0])
# prepare tokenizer
t = Tokenizer()
t.fit_on_texts(docs)
vocab_size = len(t.word_index) + 1
# integer encode the documents
encoded_docs = t.texts_to_sequences(docs)
print(encoded_docs)
# pad documents to a max length of 4 words
max_length = 4
padded_docs = pad_sequences(encoded_docs, maxlen=max_length, padding='post')
print(padded_docs)
```
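If you are curious how such a word index comes about, the sketch below builds one in plain Python: words are counted, the most frequent word gets integer 1, and ties keep their order of first appearance. The tokenization is a simplification and the code is not the Keras implementation, although on these ten documents it produces the same integer sequences that this tutorial prints for the Tokenizer.

```python
import re

docs = ['Well done!', 'Good work', 'Great effort', 'nice work', 'Excellent!',
        'Weak', 'Poor effort!', 'not good', 'poor work', 'Could have done better.']

def words(text):
    # Simplified tokenization: lowercase, keep runs of letters and apostrophes.
    return re.findall(r"[a-z']+", text.lower())

# Count words, remembering the order in which they first appear.
counts = {}
for doc in docs:
    for w in words(doc):
        counts[w] = counts.get(w, 0) + 1

# Most frequent word gets 1; the sort is stable, so ties keep first-seen order.
# Integer 0 is left free for padding.
ranked = sorted(counts, key=counts.get, reverse=True)
word_index = {w: i for i, w in enumerate(ranked, start=1)}
index_word = {i: w for w, i in word_index.items()}   # the reverse mapping

encoded = [[word_index[w] for w in words(d)] for d in docs]
print(encoded)
print(len(word_index) + 1)   # vocabulary size including the padding integer: 15
```

Unlike the hashing approach in the previous section, every distinct word gets its own integer, so there are no collisions and the mapping can be inverted.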

Next, we need to load the entire GloVe word embedding file into memory as a dictionary of word to embedding array.

```python
# load the whole embedding into memory
embeddings_index = dict()
with open('glove.6B.100d.txt', encoding='utf8') as f:
    for line in f:
        values = line.split()
        word = values[0]
        coefs = asarray(values[1:], dtype='float32')
        embeddings_index[word] = coefs
print('Loaded %s word vectors.' % len(embeddings_index))
```

This is pretty slow. It might be better to filter the embedding for the unique words in your training data.
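One way to do that filtering is to check each line's word before converting its numbers, so that only the vectors you need are parsed and kept. The sketch below assumes the same "word v1 v2 ..." layout. It reads from an in-memory stand-in with made-up 3-dimensional values rather than the real GloVe file, and load_filtered is a hypothetical helper name.

```python
import io

def load_filtered(handle, wanted):
    """Keep only the vectors whose word is in `wanted`."""
    vectors = {}
    for line in handle:
        word, _, rest = line.partition(" ")
        if word in wanted:
            vectors[word] = [float(v) for v in rest.split()]
    return vectors

# Stand-in for the GloVe file: same layout, made-up values.
sample = io.StringIO(
    "the 0.1 0.2 0.3\n"
    "work 0.4 0.5 0.6\n"
    "done -0.1 -0.2 -0.3\n"
)
wanted = {"work", "done", "excellent"}   # in the tutorial: set(t.word_index)
vectors = load_filtered(sample, wanted)
print(sorted(vectors))   # ['done', 'work']
```

With the real file you would pass an open file handle instead of the StringIO object. The whole file is still read once, but far less memory is used.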

Next, we need to create a matrix of one embedding for each word in the training dataset. We can do that by enumerating all unique words in the Tokenizer.word_index and locating the embedding weight vector from the loaded GloVe embedding.

The result is a matrix of weights only for words we will see during training.

```python
# create a weight matrix for words in training docs
embedding_matrix = zeros((vocab_size, 100))
for word, i in t.word_index.items():
    embedding_vector = embeddings_index.get(word)
    if embedding_vector is not None:
        embedding_matrix[i] = embedding_vector
```
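Words that are missing from the pre-trained embedding silently keep a row of zeros. It can be worth reporting them, since a low coverage suggests the embedding is a poor match for your text. The sketch below does this in plain Python with hypothetical inputs and 2-dimensional vectors.

```python
def build_matrix(word_index, embeddings_index, dim):
    """Return (matrix, missing); words without a vector keep a row of zeros."""
    matrix = [[0.0] * dim for _ in range(len(word_index) + 1)]
    missing = []
    for word, i in word_index.items():
        vector = embeddings_index.get(word)
        if vector is None:
            missing.append(word)
        else:
            matrix[i] = list(vector)
    return matrix, missing

# Hypothetical word index and pre-trained vectors.
word_index = {"work": 1, "done": 2, "sudoku": 3}
embeddings_index = {"work": [0.4, 0.5], "done": [-0.1, -0.2]}

matrix, missing = build_matrix(word_index, embeddings_index, dim=2)
coverage = 1 - len(missing) / len(word_index)
print(missing)              # ['sudoku']
print(matrix[3])            # [0.0, 0.0]
print(round(coverage, 2))   # 0.67
```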

Now we can define our model, fit, and evaluate it as before.

The key difference is that the embedding layer can be seeded with the GloVe word embedding weights. We chose the 100-dimensional version, therefore the Embedding layer must be defined with output_dim set to 100. Finally, we do not want to update the learned word weights in this model, therefore we will set the trainable attribute for the layer to be False.

```python
e = Embedding(vocab_size, 100, weights=[embedding_matrix], input_length=4, trainable=False)
```

The complete worked example is listed below.

```python
from numpy import array
from numpy import asarray
from numpy import zeros
from keras.preprocessing.text import Tokenizer
from keras.preprocessing.sequence import pad_sequences
from keras.models import Sequential
from keras.layers import Dense
from keras.layers import Flatten
from keras.layers import Embedding
# define documents
docs = ['Well done!',
        'Good work',
        'Great effort',
        'nice work',
        'Excellent!',
        'Weak',
        'Poor effort!',
        'not good',
        'poor work',
        'Could have done better.']
# define class labels
labels = array([1,1,1,1,1,0,0,0,0,0])
# prepare tokenizer
t = Tokenizer()
t.fit_on_texts(docs)
vocab_size = len(t.word_index) + 1
# integer encode the documents
encoded_docs = t.texts_to_sequences(docs)
print(encoded_docs)
# pad documents to a max length of 4 words
max_length = 4
padded_docs = pad_sequences(encoded_docs, maxlen=max_length, padding='post')
print(padded_docs)
# load the whole embedding into memory
embeddings_index = dict()
with open('../glove_data/glove.6B/glove.6B.100d.txt', encoding='utf8') as f:
    for line in f:
        values = line.split()
        word = values[0]
        coefs = asarray(values[1:], dtype='float32')
        embeddings_index[word] = coefs
print('Loaded %s word vectors.' % len(embeddings_index))
# create a weight matrix for words in training docs
embedding_matrix = zeros((vocab_size, 100))
for word, i in t.word_index.items():
    embedding_vector = embeddings_index.get(word)
    if embedding_vector is not None:
        embedding_matrix[i] = embedding_vector
# define model
model = Sequential()
e = Embedding(vocab_size, 100, weights=[embedding_matrix], input_length=4, trainable=False)
model.add(e)
model.add(Flatten())
model.add(Dense(1, activation='sigmoid'))
# compile the model
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
# summarize the model
print(model.summary())
# fit the model
model.fit(padded_docs, labels, epochs=50, verbose=0)
# evaluate the model
loss, accuracy = model.evaluate(padded_docs, labels, verbose=0)
print('Accuracy: %f' % (accuracy*100))
```

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Running the example may take a bit longer, but then demonstrates that it is just as capable of fitting this simple problem.

```
[[6, 2], [3, 1], [7, 4], [8, 1], [9], [10], [5, 4], [11, 3], [5, 1], [12, 13, 2, 14]]

[[ 6  2  0  0]
 [ 3  1  0  0]
 [ 7  4  0  0]
 [ 8  1  0  0]
 [ 9  0  0  0]
 [10  0  0  0]
 [ 5  4  0  0]
 [11  3  0  0]
 [ 5  1  0  0]
 [12 13  2 14]]

Loaded 400000 word vectors.

_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
embedding_1 (Embedding)      (None, 4, 100)            1500
_________________________________________________________________
flatten_1 (Flatten)          (None, 400)               0
_________________________________________________________________
dense_1 (Dense)              (None, 1)                 401
=================================================================
Total params: 1,901
Trainable params: 401
Non-trainable params: 1,500
_________________________________________________________________

Accuracy: 100.000000
```

In practice, I would encourage you to experiment with learning a word embedding from scratch, using a pre-trained embedding that is kept fixed, and performing further learning on top of a pre-trained embedding.

See what works best for your specific problem.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

- Word Embedding on Wikipedia

- Keras Embedding Layer API

- Using pre-trained word embeddings in a Keras model, 2016

- Example of using a pre-trained GloVe Embedding in Keras

- GloVe Embedding

- An overview of word embeddings and their connection to distributional semantic models, 2016

- Deep Learning, NLP, and Representations, 2014

Summary

In this tutorial, you discovered how to use word embeddings for deep learning in Python with Keras.

Specifically, you learned:

- About word embeddings and that Keras supports word embeddings via the Embedding layer.

- How to learn a word embedding while fitting a neural network.

- How to use a pre-trained word embedding in a neural network.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Thank you Jason,

I am excited to read more NLP posts.

Thanks.

Thanks man, It was really helpful.

You’re welcome.

After the embedding, do we have to have a “Flatten()” layer? In my project, I used a Dense layer directly after the embedding. Is that OK?

Try it and see.

I appreciate how well updated you keep these tutorials. the first thing I always look at, when I start reading is the update date. thank you very much.

You’re welcome.

I require all of the code to work and keep working!

Hi, Jason:

when one_hot encoding is used, why is padding necessary? Doesn’t one_hot encoding already create an input of equal length?

The one hot encoding is for one variable at one time step, e.g. features.

Padding is needed to make all sequences have the same number of time steps.

See this:

https://machinelearningmastery.com/faq/single-faq/what-is-the-difference-between-samples-timesteps-and-features-for-lstm-input

I split my data into 80-20 test-train and I’m still getting 100% accuracy. Any idea why? It is ~99% on epoch 1 and the rest its 100%.

Consider using the procedure in this post to evaluate your model:

https://machinelearningmastery.com/evaluate-skill-deep-learning-models/

Use drop-out 20%, your model is overfit!!

Thank you Jason. I always find things easier when reading your post.

I have a question about the vector of each word after training. For example, the word “done” in sentence “Well done!” will be represented in different vector from that word in sentence “Could have done better!”. Is that right? I mean the presentation of each word will depend on the context of each sentence?

No, each word in the dictionary is represented differently, but the same word in different contexts will have the same representation.

It is the word in its different contexts that is used to define the representation of the word.

Does that help?

Yes, thank you. But I still have a question. We will train each context separately, then after training the first context, in this case is “Well done!”, we will have a vector representation of the word “done”. After training the second context, “Could have done better”, we have another vector representation of the word “done”. So, which vector will we choose to be the representation of the word “done”?

I might misunderstand the procedure of training. Thank you for clarifying it for me.

No. All examples where a word is used are used as part of the training of the representation of the word. There is only one representation for each word during and after training.

I got it. Thank you, Jason.

Hi Jason,

any ideas on how to “filter the embedding for the unique words in your training data” as mentioned in the tutorial?

The mapping of word to vector dictionary is built into Gensim, you can access it directly to retrieve the representations for the words you want: model.wv.vocab

HI Jason,

I really appreciate the time you spend writing this tutorial and also replying.

My question is about “model.wv.vocab” you wrote. is it an address site?

It does not work actually.

No, it is an attribute on the model.

Hi, Jason

Good day.

I just need your suggestion and an example. I have two different datasets, where one is structured and the other is unstructured. The goal is to use the structured one to construct a representation for the unstructured one, so I apply word embeddings to the two inputs. How can I find the average of the two embeddings and flatten it to one before feeding the layer into a CNN and LSTM?

Looking forward to your response.

Regards

Abbey

Sorry, what was your question?

If your question was if this is a good approach, my advice is to try it and see.

Hi, Jason

How can I find the average of the word embedding from the two input?

Regards

Abbey

Perhaps you could retrieve the vectors for each word and take their average?

Perhaps you can use the Gensim API to achieve this result?
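As a rough illustration of the averaging idea, in plain Python with made-up 3-dimensional vectors (the words and values are hypothetical):

```python
def average_vectors(vectors):
    """Element-wise mean of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

# Hypothetical embeddings.
embedding = {
    "good": [1.0, 0.0, 2.0],
    "work": [3.0, 2.0, 0.0],
    "poor": [-1.0, 4.0, 2.0],
}

# One averaged vector per input, then the average of the two inputs.
doc_a = average_vectors([embedding[w] for w in ["good", "work"]])
doc_b = average_vectors([embedding[w] for w in ["poor", "work"]])
combined = average_vectors([doc_a, doc_b])
print(doc_a, doc_b, combined)
# [2.0, 1.0, 1.0] [1.0, 3.0, 1.0] [1.5, 2.0, 1.0]
```

Averaging gives a single fixed-length vector regardless of how many words each input has, at the cost of discarding word order.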

Hi Jason,

I have a set of documents(1200 text of movie Scripts) and i want to use pretrained embeddings. But i want to update the vocabulary and train again adding the words of my corpus. Is that possible ?

Sure.

Load the pre-trained vectors. Add new random vectors for the new words in the vocab and train the whole lot together.
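A minimal sketch of that idea in plain Python follows. The vectors are made up, the helper name is hypothetical, and the random range is an arbitrary choice. The extended table would then be used to build the weight matrix as in the tutorial, with trainable=True on the Embedding layer so that all vectors are updated together.

```python
import random

def extend_embedding(pretrained, new_words, dim, seed=0):
    """Copy `pretrained` and add a small random vector for each unseen word."""
    rng = random.Random(seed)
    extended = dict(pretrained)
    for word in new_words:
        if word not in extended:
            extended[word] = [rng.uniform(-0.05, 0.05) for _ in range(dim)]
    return extended

pretrained = {"movie": [0.2, -0.1], "script": [0.3, 0.4]}   # made-up values
corpus_words = ["movie", "screenplay", "script", "voiceover"]

extended = extend_embedding(pretrained, corpus_words, dim=2)
print(sorted(extended))   # ['movie', 'screenplay', 'script', 'voiceover']
```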

Hi Jason…Could you also help us with R codes for using Pre-Trained GloVe Embedding

Sorry, I don’t have R code for word embeddings.

Hi Jason, really appreciate that you answered all the replies! I am planning to try both CNN and RNN (maybe LSTM & GRU) on text classification. Most of my documents are less than 100 words long, but about 5 % are longer than 500 words. How do you suggest to set the max length when using RNN?If I set it to be 1000, will it degrade the learning result? Should I just use 100? Will it be different in the case of CNN?

Thank you!

I would recommend experimenting with different configurations and seeing how they impact model skill.

Dear Hao,

Did you try RNN(LSTM or GRU) on text classification?If yes then can you plz provide me the code??

Here is an example:

https://machinelearningmastery.com/sequence-classification-lstm-recurrent-neural-networks-python-keras/

I’d like to thank you for this post. I’ve been struggling to understand this precise way of using keras for a week now and this is the only post I’ve found that actually explains what each step in the process is doing – and provides code that self-documents what the data looks like as the model is constructed and trained. This makes it so much easier to adapt to my particular requirements.

Thanks, I’m glad it helped.

In the above Keras example, how can we predict a list of context words given a word? Let’s say I have a word named ‘sudoku’ and want to predict the surrounding words. How can we use word2vec from Keras to do that?

It sounds like you are describing a language model. We can use LSTMs to learn these relationships.

Here is an example:

https://machinelearningmastery.com/text-generation-lstm-recurrent-neural-networks-python-keras/

No, what i meant was for word2vec skip-gram model predicts a context word given the center word. So if i train a word2vec skip-gram model, how can i predict the list of context words if my center word is ‘sudoku’?

Regards,

Azim

I don’t know Azim.

You can get the cosine distance between the words, and the one that is having the least distance would surround it.. here is the link:

https://github.com/Hvass-Labs/TensorFlow-Tutorials

Go to Natural Language Processing and you can find a cosine function there, use them to find yours..
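A small sketch of the cosine approach in plain Python, with made-up 2-dimensional vectors. Note that this finds words with similar vectors, which is not quite the same thing as predicting the context words of a skip-gram model.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def nearest(word, embedding, k=2):
    """Return the k words whose vectors point in the most similar direction."""
    scores = [(cosine_similarity(embedding[word], vec), other)
              for other, vec in embedding.items() if other != word]
    return [other for _, other in sorted(scores, reverse=True)[:k]]

embedding = {   # hypothetical vectors
    "sudoku": [0.9, 0.1],
    "puzzle": [0.8, 0.2],
    "grid":   [0.7, 0.4],
    "banana": [-0.5, 0.8],
}
neighbours = nearest("sudoku", embedding)
print(neighbours)   # ['puzzle', 'grid']
```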

Hi Jason,

Thanks for your useful blog, I have learned a lot.

I am wondering if I already have pretrained word embedding, is that possible to set keras embedding layer trainable to be true? If it is workable, will I get a better result, when I only use small size of data to pretrain the word embedding model. Many thanks!

You can. It is hard to know whether it will give better results. Try it.

Hey Jason,

Is it possible to perform probability calculations on the label? I am looking at a case where it is not simply +/- but that a given data entry could be both but more likely one and not the other.

Yes, a neural network with a sigmoid or softmax output can predict a probability-like score for each class.
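For reference, the two output functions can be written in a few lines of plain Python. The input scores below are arbitrary example values.

```python
import math

def sigmoid(z):
    """Squash one score into the range 0..1 (binary case)."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Turn several scores into values that are positive and sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]   # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]

p_positive = sigmoid(0.0)            # 0.5: the model is undecided
probs = softmax([2.0, 1.0, 0.1])     # three classes, the first is most likely
print(p_positive, probs, sum(probs))
```

A sigmoid output suits the two-class problem in this tutorial, while softmax with one unit per class suits problems with more than two labels.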

I’m doing something like this except with my own feature vectors — but to the point of the labels — I do ternary classification using categorical_crossentropy and a softmax output. I get back an answer of the probability of each label.

Nice!

Hey Jason!

Thanks for a wonderful and detailed explanation of the post. It helped me a lot.

However, I’m struggling to understand how the model predicts a sentence as positive or negative.

i understand that each word in the document is converted into a word embedding, so how does our model evaluate the entire sentence as positive or negative? Does it take the sum of all the word vectors? Perhaps average of them? I’ve not been able to figure this part out.

Great question!

The model interprets all of the words in the sequence and learns to associate specific patterns (of encoded words) with positive or negative sentiment.

Hi Jason,

Thanks a lot for your amazing posts. I have the same question as Ravil. Can you elaborate a bit more on “learns to associate specific patterns?”

Good question Ken, perhaps this post will make it clearer how ml algorithms work (a functional mapping):

https://machinelearningmastery.com/how-machine-learning-algorithms-work/

Does that help?

Thanks for your reply. But I was trying to ask is that how does keras manage to produce a document level representation by having the vectors of each word? I don’t seem to find how was this being done in the code.

Cheers.

The model such as the LSTM or CNN will put this together.

In the case of LSTMs, you can learn more here:

https://machinelearningmastery.com/start-here/#lstm

Does that help?

Hi Jason,

First, thanks for all your really useful posts.

If I understand well your post and answers to Ken and Ravil, the neural network you build in fact reduces the sequence of embedding vectors corresponding to all the words of a document to a one-dimensional vector with the Flatten layer, and you just train this flattening, as well as the embedding, to get the best classification on your training set, isn’t it?

Thank you in advance for your answer.

Sort of.

words => integers => embedding

The embedding has a vector per word which the network will use as a representation for the word.

We have a sequence of vectors for the words, so we flatten this sequence to one long vector for the Dense model to read. Alternately, we could wrap the dense in a timedistributed layer.

Aaah! So nothing tricky is done when flattening, more or less just concatenating the fixed number of embedding vectors that is the output of the embedding layer, and this is why the number of words per document has to be fixed as a setting of this layer. If this is correct, I think I’m finally understanding how all this works.

I’m sorry to bother you more, but how does the network work if a document much shorter than the longest document (the number of its words being set as the number of words per document to the embedding layer) is given to the network as training or testing? It just fills the embedding vectors of these non-appearing words as 0? I’ve been looking for ways to convert all the word embeddings of a text to some sort of document embedding, and this just seems a solution too simple to work, or that may work but for short documents (as well as other options like averaging the word vectors or taking the element-wise maximum or minimum).

I’m trying to do sentiment analysis for spanish news, and I have news with like 1000 or more words, and wanted to use pre-trained word embeddings of 300 dimensions each. Wouldn’t it be a size way too huge per document for the network to train properly, or fast enough? I imagine you do not have a precise answer, but I’d like to know if you have tried the above method with long documents, or know that someone has.

Thank you again, I’m sorry for such a long question.

Yes.

We can use padding for small documents and a Masking input layer to ignore padded values. More here:

https://machinelearningmastery.com/handle-missing-timesteps-sequence-prediction-problems-python/

Try different sized embeddings and use results to guide the configuration.
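As a rough illustration of the padding idea, here is a minimal pure-Python sketch. The helper name pad_or_truncate is made up for this example; in Keras the equivalent step would be pad_sequences(docs, maxlen=4, padding='post', truncating='post'), and setting mask_zero=True on the Embedding layer is one way to have the padded zeros ignored by layers that support masking.

```python
def pad_or_truncate(seq, maxlen, pad_value=0):
    """Return seq cut or right-padded to exactly maxlen items."""
    trimmed = list(seq[:maxlen])
    return trimmed + [pad_value] * (maxlen - len(trimmed))

# Integer-encoded documents of different lengths (toy values).
docs = [[6, 2], [3, 1], [7, 4, 9, 5, 8]]
padded = [pad_or_truncate(d, maxlen=4) for d in docs]
print(padded)  # [[6, 2, 0, 0], [3, 1, 0, 0], [7, 4, 9, 5]]
```

Short documents are filled with 0 and long ones are cut, so every row has the fixed length the Embedding layer expects.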

Okay, thank you very much! I will give it a try.

the chinese word how to vector sequence

Sorry?

me bot trying interact comments born with lstm

Hi Jason,

I have successfully trained a model using the word embedding and Keras. The accuracy was at 100%.

I saved the trained model and the word tokens for predictions.

MODEL.save('model.h5', True)

TOKENIZER = Tokenizer(num_words=MAX_NB_WORDS)

TOKENIZER.fit_on_texts(TEXT_SAMPLES)

pickle.dump(TOKENIZER, open('tokens', 'wb'))

When predicting:

– Load the saved model.

– Setup the tokenizer, by loading the saved word tokens.

– Then predict the category of the new data.

I am not sure the prediction logic is correct, since I am not seeing the expected category from the prediction.

The source code is in Github: https://github.com/hilmij/keras-test/blob/master/predict.py

Appreciate if you can have a look and let me know what I am missing.

Best regards,

Hilmi.

Your process sounds correct. I cannot review your code sorry.

What was the problem exactly?

Thank you, Jason! Your examples are very helpful. I hope to get your attention with my question. At training, you prepare the tokenizer by doing:

t = text.Tokenizer();

t.fit_on_texts(docs)

Which creates a dictionary of words:numbers. What do we do if we have a new doc with lots of new words at prediction time? Will all these words go to the unknown token? If so, is there a solution for this, like can we fit the tokenizer on all the words in the English vocab?

You must know the words you want to support at training time. Even if you have to guess.

To support new words, you will need a new model.
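To make the behaviour at prediction time concrete, here is a small sketch that does not use Keras. The encode helper and the "<oov>" entry are hypothetical names for illustration. As far as I know, the Keras Tokenizer skips unseen words by default, and maps them to a reserved index only when it is created with the oov_token argument.

```python
def encode(text, word_index, oov_index=None):
    """Map known words to their integers; drop or replace unseen words."""
    encoded = []
    for word in text.lower().split():
        if word in word_index:
            encoded.append(word_index[word])
        elif oov_index is not None:
            encoded.append(oov_index)
    return encoded

# Vocabulary fixed at training time; index 1 is reserved for unknown words.
word_index = {"<oov>": 1, "good": 2, "work": 3, "poor": 4}
print(encode("Good work", word_index))                   # [2, 3]
print(encode("Terrible work", word_index))               # [3]
print(encode("Terrible work", word_index, oov_index=1))  # [1, 3]
```

Either way the new word never gets its own vector, which is why a new model is needed to really support it.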

Hello Jason!

In Example of Using Pre-Trained GloVe Embedding, do you use the word embedding vectors as weights of the embedding layer?

Yes.

Very nice set of Blogs of NLP and Keras – thanks for writing them.

As a quick note for others

When I tried to load the glove file with the line:

f = open('../glove_data/glove.6B/glove.6B.100d.txt')

I got the error

UnicodeDecodeError: 'charmap' codec can't decode byte 0x9d in position 2776: character maps to <undefined>

To fix I added:

f = open('../glove_data/glove.6B/glove.6B.100d.txt', encoding="utf8")

This issue may have been caused by using Windows.

Thanks for the tip Alex.

Hi Jason,

Wonderful tutorials!

I have a question. Why do we have to one-hot vectorize the labels? Also, if I have a padded sequence of e.g. [2,4,0], what will the one hot be? I’m trying to better understand the one hot vectorizer.

I appreciate your response!

We don’t one hot encode the labels, we one hot encode the words.

Perhaps this post will help you better understand one hot encoding:

https://machinelearningmastery.com/how-to-one-hot-encode-sequence-data-in-python/

Hi Jason,

Thank you for your excellent tutorial. Do you know if there is a way to build a network for a classification using both text embedded data and categorical data ?

Thank you

Sure, you could have a network with two inputs:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Thank you Jason

How to do sentence classification using CNN in keras ? please help

See this tutorial:

https://machinelearningmastery.com/develop-word-embedding-model-predicting-movie-review-sentiment/

Fantastic explanation, thanks so much. I’m just amazed at how much easier this has become since the last time I looked at it.

I’m glad the post helped Stuart!

Hi Jason …the 14 word vocab from your docs is “well done good work great effort nice excellent weak poor not could have better” For a vocab_size of 14, this one_hot encodes to [13 8 7 6 10 13 3 6 10 4 9 2 10 12]. Why does 10 appear 3 times, for “great”, “weak” and “have”?

Sorry, I don’t follow Stuart. Could you please restate the question?

Hi Jason, the encodings that I provided in the example above came from kerasR with a vocab_size of 14. So let me ask the same question about the uniqueness of encodings using your Part 3 one_hot example above with a vocab_size of 50.

Here different encodings are produced for different new kernels (using Spyder3/Python 3.4):

[[31, 33], [27, 33], [48, 41], [34, 33], [32], [5], [14, 41], [43, 27], [14, 33], [22, 26, 33, 26]]

[[6, 21], [48, 44], [7, 26], [46, 44], [45], [45], [10, 26], [45, 48], [10, 44], [47, 3, 21, 27]]

[[7, 8], [16, 42], [24, 13], [45, 42], [23], [17], [34, 13], [13, 16], [34, 42], [17, 31, 8, 19]]

Please note that in the first line, “33” encodes for the words “done”, “work”, “work”, “work” & “done”. In the second line “45” encodes for the words “excellent” & “weak” & “not”. In the third line, “13” encodes “effort”, “effort” & “not”.

So I’m wondering why the encodings are not unique? Secondly, must vocab_size be much larger than the actual size of the vocabulary?

Thanks

The one_hot() function does not map words to unique integers; it uses a hash function that can have collisions. Learn more here:

https://keras.io/preprocessing/text/#one_hot
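The collisions can be reproduced without Keras. The sketch below assumes the same bucket formula that, to my knowledge, Keras uses in its hashing trick: hash(word) % (n - 1) + 1. It swaps in MD5 for Python's built-in hash() so the output is repeatable; the built-in hash of a string is salted per process, which would explain why each new kernel produced different integers. The helper name hashed_index is made up for this example.

```python
import hashlib

def hashed_index(word, n):
    """Map a word to an integer in [1, n - 1]; index 0 is never produced."""
    digest = hashlib.md5(word.encode("utf-8")).hexdigest()
    return int(digest, 16) % (n - 1) + 1

words = ("well done good work great effort nice excellent "
         "weak poor not could have better").split()
codes = [hashed_index(w, n=14) for w in words]
unique = len(set(codes))
print(len(words), "words ->", unique, "distinct integers")
```

With n=14 there are only 13 possible integers for 14 words, so at least one collision is unavoidable. A larger n makes collisions less likely but never rules them out.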

Thanks Jason, In your Part 4 example, the Tokenizer approach always gives the same encodings and these appear to be unique.

Yes, I recommend using Tokenizer, see this post for more:

https://machinelearningmastery.com/prepare-text-data-deep-learning-keras/

Great article Jason.

How do you convert back from an embedding to a one-hot? For example if you have a seq2seq model, and you feed the inputs as word embeddings, in your decoder you need to convert back from the embedding to a one-hot representing the dictionary. If you do it by using matrix multiplication that can be quite a large matrix (e.g embedding size 300, and vocab of 400k).

The output layer can predict integers directly that you can map to words in your vocabulary. There would be no embedding layer on the output.

Hi,

Very helpful article.

I have created word2vec matrix of a sentence using gensim and pre-trained Google News vector. Can I just flatten this matrix to a vector and use that as a input to my neural network.

For example:

each sentence is of length 140 and I am using a pre-trained model of 100 dimensions, therefore:- I have a 140*100 matrix representing the sentence, can i just flatten it to a 14000 length vector and feed it to my input layer?

It depends on what you’re trying to model.

Great article, could you shed some light on where the Param # values of 400 and 1500 in the two neural networks come from? Thanks

Oh! Is it just vocab_size * # of dimension of embedding space?

1. 50 * 8 = 400

2. 15* 100 = 1500

What do you mean exactly?

Great post! I’m working with my own corpus. How would I save the weight vector of the embedding layer in a text file like the glove data set?

My thinking is it would be easier for me to apply the vector representations to new data sets and/or machine learning platforms (mxnet etc) and make the output human readable (since the word is associated with the vector).

You could use get_weights() in the Keras API to retrieve the vectors and save directly as a CSV file.

get_weights() for what exactly? does it need a loop?

get_weights is a function that will return the weights for a model or layers, depending on what you call it on exactly:

https://keras.io/layers/about-keras-layers/
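A possible way to write the vectors out in a GloVe-like text format is sketched below. It assumes the Embedding layer is the first layer of the model, so the matrix would come from model.layers[0].get_weights()[0]; here a small hand-written list stands in for that matrix, and save_embeddings and the file name are hypothetical.

```python
def save_embeddings(word_index, weights, path):
    """Write one 'word v1 v2 ...' line per word, GloVe text style."""
    with open(path, "w", encoding="utf8") as f:
        # Sort by integer index so the file follows the Tokenizer ordering.
        for word, i in sorted(word_index.items(), key=lambda kv: kv[1]):
            values = " ".join("%.6f" % float(v) for v in weights[i])
            f.write("%s %s\n" % (word, values))

# Stand-in for: weights = model.layers[0].get_weights()[0]
weights = [[0.0, 0.0], [0.1, -0.2], [0.3, 0.4]]  # row 0 is the padding index
word_index = {"good": 1, "work": 2}
save_embeddings(word_index, weights, "my_embedding.txt")
```

No loop over layers is needed; one call on the Embedding layer returns the whole matrix, and the loop is only over the words.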

Clear Short Good reading, always thank you for your work!

Thanks.

Hello Jason,

I have a dataset with which I’ve attained 0.87 fscore by 5 fold cross validation using SVM.Maximum context window is 20 and one hot encoded.

Now, I’ve done whatever has been mentioned and getting an accuracy of 13-15 percent for RNN models where each one has one LSTM cell with 3,20,150,300 hidden units. Dimensions of my pre-trained embeddings is 300.

Loss is decreasing and even reaching negative values, but no change in accuracy.

I’ve tried the same with your CNN,basic ANN models you’ve mentioned for text classification .

Would you please suggest some solution. Thanks in advance.

I have some ideas here:

https://machinelearningmastery.com/improve-deep-learning-performance/

When I copy the code of the first box I get the error:

AttributeError: ‘int’ object has no attribute ‘ndim’

in the line :

model.fit(padded_docs, labels, epochs=50, verbose=0)

Where is the problem?

Copy the code from the “complete example”.

Hi Jason,

I’ve got the same error, also when running the “complete example”.

What can be the cause?

Try casting the labels to numpy arrays.

i get the same!

I have fixed and updated the examples.

Carsten, you need labels to be numpy.array not just list.

Hi Jason,

If I have unknown words in the training set, how can I assign the same randomly initialized vector to all of the unknown words when using a pretrained vector model like GloVe or w2v? Thanks!!!

Why would you want to do that?

If my data is in a specific domain and I still want to leverage a general word embedding model (e.g. glove.6b.100d trained from wiki), then there must be some OOV words in the domain data. So whether at training time or at inference time, some unknown words will probably appear.

It may.

You could ignore these words.

You could create a new embedding, set vectors from existing embedding and learn the new words.

Amazing Dr. Jason!

Thanks for a great walkthrough.

Kindly advise on the following.

On the step of encoding each word to integer you said: “We could experiment with other more sophisticated bag of word model encoding like counts or TF-IDF”. Could you kindly elaborate on how can it be implemented, as tfidf encodes tokens with floats. And how to tie it with Keras, passing it to an Embedding layer please? I’m keen to experiment with it, hope it could yield better results.

Another question is about input docs. Suppose I’ve preprocessed text by the means of nltk up to lemmatization, thus, each sample is a list of tokens. What is the best approach to pass it to Keras embedding layer in this case then?

I have more posts on BoW, learn more here:

https://machinelearningmastery.com/?s=bag+of+words&submit=Search

You can encode your tokens as integers manually or use the Keras Tokenizer.

Well, Keras Tokenizer can accept only texts or sequences. Seems the only way is to glue tokens together using ‘ ‘.join(token_list) and then pass onto the Tokenizer.

As for the BOW articles, I’ve walked through them and they are very valuable. Thank you!

Using BOW differs so much from using Embeddings. As BOW would introduce huge sparse array of features for each sample, while Embeddings aim to represent those features (tokens) very densely up to a few hundreds items.

So, BOW in the other article gives incredibly good results with just very simple NN architecture (1 layer of 50 or 100 neurons). While I struggled to get good results using Embeddings along with convolutional layers…

From your experience, would you please advise on that? Are Embeddings actually viable, and is it just a matter of finding the correct architecture?

Nice! And well done for working through the tutorials. I love to see that and few people actually “do the work”.

Embeddings make more sense on larger/hard problems generally – e.g. big vocab, complex language model on the front end, etc.

I see, thank you.

Thanks jason for another great tutorial.

I have some questions :

Isn’t one hot definition is binary one, vector of 0’s and 1?

so [[1,2]] would be encoded to [[0,1,0],[0,0,1]]

how embedding algorithm is done on keras word2vec/globe or simply dense layer(or something else)

thanks

joseph

Sorry, I don’t follow your question. Perhaps you could rephrase it?

Amazing Dr. Jason!

Thanks for a great walkthrough.

The dimension for each word vector like above example e.g. 8, is set randomly?

Thank you

The dimensionality is fixed and specified.

In the first example it is 8, in the second it is 100.

Thank you Dr. Jason for your quick feedback!

Ok, I see that the pre-trained word embedding is set to 100 dimensionality because the original file “glove.6B.100d.txt” contained a fixed number of 100 weights for each line of ASCII.

However, the first example as you mentioned in here, “The Embedding has a vocabulary of 50 and an input length of 4. We will choose a small embedding space of 8 dimensions.”

You chose 8 dimensions for the first example. Does it mean it can be set to any number other than 8? I’ve tried changing the dimension to 12. It didn’t raise any errors, but the accuracy dropped from 100% to 89%.

_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
embedding_1 (Embedding)      (None, 4, 12)             600
_________________________________________________________________
flatten_1 (Flatten)          (None, 48)                0
_________________________________________________________________
dense_1 (Dense)              (None, 1)                 49
=================================================================
Total params: 649
Trainable params: 649
Non-trainable params: 0

Accuracy: 89.999998

So, how is the dimensionality set? Does the number of dimensions affect the accuracy?

Sorry I am trying to grasp the basic concept in understanding NLP stuff. Much appreciated for your help Dr. Jason.

Thank you

No problem, please ask more questions.

Yes, you can choose any dimensionality you like. Larger means more expressive, required for larger vocabs.

Does that help Anna?
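The Param # values quoted in these comments follow from simple arithmetic, sketched below. The two helper names are made up for this example: an Embedding layer stores one vector per vocabulary entry, and a Dense layer stores one weight per input per unit plus one bias per unit.

```python
def embedding_params(vocab_size, dim):
    """One dim-sized vector for every integer in the vocabulary."""
    return vocab_size * dim

def dense_params(n_inputs, n_units):
    """One weight per input per unit, plus one bias per unit."""
    return n_inputs * n_units + n_units

print(embedding_params(50, 8))    # 400
print(embedding_params(15, 100))  # 1500
print(embedding_params(50, 12))   # 600
print(dense_params(4 * 12, 1))    # 49
```

The last line uses 4 * 12 = 48 inputs because Flatten joins the 4 word vectors of 12 values each into one vector, which matches the (None, 48) shape in the summary above.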

Yes indeed Dr. Now I can see that the dimensionality to set depends on the size of the vocabulary.

Thank you again Dr Jason for enlightening me! 🙂

You asked good questions.

Hi Jason,

thanks for amazing tutorial.

I have a question. I am trying to do semantic role labeling with context window in Keras. How can I implement context window with embedding layer?

Thank you

I don’t know, sorry.

Hi, great website! I’ve been learning a lot from all the tutorials. Thank you for providing all these easy to understand information.

How would I go about using other data for the CNN model? At the moment, I am using just textual data for my model using the word embeddings. From what I understand, the first layer of the model has to be the Embeddings, so how would I use other input data such as integers along with the Embeddings?

Thank you!

Great question!

You can use a multiple-input model, see examples here:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Thank you for the fast reply!

Hi Jason, this tutorial is simple and easy to understand. Thanks.

However, I have a question. While using the pre-trained embedding weights such as Glove or word2vec, what if there exists few words in my dataset, which weren’t present in the dataset on which word2vec or Glove was trained. How does the model represent such words?

My understanding is that in your second section (Using Pre-Trained GloVe Embedding), you are mapping the words from the loaded weights to the words present in your dataset, hence the question above.

Correct me, if it’s not the way I think it is.

You can ignore them, or assign them to zero vector, or train a new model that includes them.

Hi Jason. Thanks for this and other VERY clear and informative articles.

Wanted to add one question to Aditya post:

“mapping the words from the loaded weights to the words present in your dataset”

how does the mapping work? does it use matrix index == word number from (padded_docs or)?

I am asking because – what if I pass embedding_matrix with origin order, but will shuffle padded_docs before model.fit?

Words must be assigned unique integers that remain consistent across all data and embeddings.
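Here is a minimal sketch of that mapping, using plain lists instead of NumPy and toy 2-dimensional vectors. The function name build_embedding_matrix is hypothetical; word_index plays the role of Tokenizer.word_index and embeddings_index the role of the dictionary loaded from the GloVe file.

```python
def build_embedding_matrix(word_index, embeddings_index, dim):
    """Row i holds the vector for the word with integer i; unknown words stay zero."""
    matrix = [[0.0] * dim for _ in range(len(word_index) + 1)]
    missing = []
    for word, i in word_index.items():
        vector = embeddings_index.get(word)
        if vector is None:
            missing.append(word)  # not in the pre-trained embedding
        else:
            matrix[i] = list(vector)
    return matrix, missing

embeddings_index = {"good": [0.1, 0.2], "work": [0.3, 0.4]}  # toy 2-d vectors
word_index = {"good": 1, "work": 2, "dolares": 3}
matrix, missing = build_embedding_matrix(word_index, embeddings_index, dim=2)
print(missing)    # ['dolares']
print(matrix[3])  # [0.0, 0.0]
```

Each integer inside padded_docs selects a row of this matrix, so shuffling the order of the documents does not matter as long as the word-to-integer mapping itself stays the same.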

Hi Jason,

I am trying to train a Keras LSTM model on some text sentiment data. I am also using GridSearchCV in sklearn to find the best parameters. I am not quite sure what went wrong but the classification report from sklearn says:

UndefinedMetricWarning: Precision and F-score are ill-defined and being set to 0.0 in labels with no predicted samples.

Below is what the classification report looks like:

             precision    recall  f1-score   support

   negative       0.00      0.00      0.00        98
   positive       0.70      1.00      0.83       232

avg / total       0.49      0.70      0.58       330

Do you know what the problem is?

Perhaps you are trying to use keras metrics with sklearn? Watch the keywords you use when specifying the keras model vs the sklearn evaluation (CV).

Hi Jason,

Your blog is really really interesting. I have a question: What is the difference between using word2vec and texts_to_sequences from Tokenizer in Keras? I mean in the way the texts are represented.

Is any of the two options better than the other?

Thanks a lot.

Kind regards.

word2vec encodes words (integers) to vectors. texts_to_sequences encodes words to integers. It is a step before word2vec or a step before bag of words.

Hi Jason,

I have a dataframe which contains texts and corresponding labels. I have used gensim module and used word2vec to make a model from the text. Now I want to use that model for input into Conv1D layers. Can you please tell me how to load the word2vec model in Keras Embedding layer? Do I need to pre-process the model in some way before loading? Thanks in advance.

Yes, load the weights into an Embedding layer, then define the rest of your network.

The tutorial above will help a lot.

This is really helpful. You make us awesome at what we do. Thanks!!

I’m glad to hear that.

Thank you for this extremely helpful blog post. I have a question regarding to interpreting the model. Is there a way to know / visualize the word importance after the model is trained? I am looking for a way to do so. For instance, is there a way to find like the top 10 words that would trigger the model to classify a text as negative and vice versa? Thanks a lot for your help in advance

There may be methods, but I am not across them. Generally, neural nets are opaque, and even weight activations in the first/last layers might be misleading if used as importance scores.

Maybe look at Lime

https://github.com/marcotcr/lime

Thanks.

Hi Jason ,

Can you please tell me the logic behind this:

vocab_size = len(t.word_index) + 1

Why we added 1 here??

So that the word indexes are 1-offset, and 0 is reserved for padding / no data.
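A tiny sketch of why the extra row is needed, using a hand-made word_index in the style of the Tokenizer (integers start at 1) and a toy 2-dimensional lookup table:

```python
word_index = {"well": 1, "done": 2, "good": 3}  # Tokenizer-style, starts at 1
vocab_size = len(word_index) + 1                # rows 0, 1, 2 and 3
table = [[0.0] * 2 for _ in range(vocab_size)]  # toy 2-d embedding table
largest = max(word_index.values())
print(largest < vocab_size)       # True: every index has a row
print(largest < len(word_index))  # False: without the +1, index 3 has no row
```

Row 0 is never assigned to a word, which leaves it free for padding.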

Hi Jason,

If I want to use this model to predict next word, can I just change the output layer to Dense(100, activation = ‘linear’) and change the loss function to MSE?

Many thanks,

Ray

Perhaps look at some of the posts on training a language model for word generation:

https://machinelearningmastery.com/?s=language+model&submit=Search

Thanks for this tutorial! Really clear and useful!

You’re welcome.

Hi Jason,

U R the best in keras tutorials and also replying the questions. I am really grateful.

Although I have understood the content of the context and codes U have written above, I am not able to understand what you mean about this sentence:[It might be better to filter the embedding for the unique words in your training data.].

what does “to filter the embedding” mean??

Thank you for replying.

It means, only have the words in the embedding that you know exist in your dataset.

Hi Jason,

Thank U 4 replying but as I am not a native English speaker, I am not sure whether I got it or not. You mean to remove all the words which exist in the glove but do not exist in my own dataset?? in order to raise the speed of implementation?

I am sorry to ask it again as I did not understand clearly.

Thank U in advance Jason

Exactly.
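A minimal sketch of that filtering is below. It reads lines in the GloVe text layout (a word followed by its values) and keeps only the words in a given vocabulary. An in-memory string stands in for the real file, and load_filtered is a hypothetical helper name.

```python
import io

def load_filtered(lines, vocab):
    """Parse 'word v1 v2 ...' lines, keeping only words found in vocab."""
    index = {}
    for line in lines:
        parts = line.split()
        if parts and parts[0] in vocab:
            index[parts[0]] = [float(v) for v in parts[1:]]
    return index

# Stand-in for open('glove.6B.100d.txt', encoding='utf8')
glove_file = io.StringIO("the 0.1 0.2\ngood 0.3 0.4\nzebra 0.5 0.6\n")
vocab = {"good", "work"}
embeddings_index = load_filtered(glove_file, vocab)
print(embeddings_index)  # {'good': [0.3, 0.4]}
```

Only the vectors that can actually be used are kept in memory, which saves RAM when the pre-trained file has hundreds of thousands of words.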

Hi,

This post is great. I am new to machine learning, so my question might be basic. From what I understand, the model takes the embedding matrix and the text along with the labels all at once. What I am trying to do is concatenate a POS tag embedding with each pre-trained word embedding, but the POS tag can be different for the same word depending upon the context. It essentially means that I can’t alter the embedding matrix and add it to the network’s embedding layer. I want to take each sentence, find its embedding, concatenate it with the POS tag embedding and then feed it into the neural network. Is there a way to do the training sentence by sentence or something? Thanks

You might be able to use the embedding and pre-calculate the vector representations for each sentence.

Sorry but i didn’t quite understand.Can you please elaborate a little?

Sorry, I mean that you can prepare an word2vec model standalone. Then pass each sentence through it to build up a list of vectors. Concat the vectors together and you have a distributed sentence representation.

Thanks a lot! One more thing: is it possible to pass other information to the embedding layer than just weights? For example, I was thinking: what if I don’t change the embedding matrix at all and create a separate matrix of POS tags for the whole training data, which is also passed to the embedding layer, which concatenates both sequentially?

You could develop a model that has multiple inputs, for example see this post for examples:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Thanks. I saw this post. Your model has separate inputs but they get merged after flattening. In my case I want to pass the embeddings to the first convolutional layer only after they are concatenated. Up till now, what I did was create another integerized sequence of my data according to POS tags (embedding_pos) to pass as another input, and another embedding matrix that contains the embeddings of all the POS tags.

e=(Embedding(vocab_size, 50, input_length=23, weights=[embedding_matrix], trainable=False))

e1=(Embedding(38, 38, input_length=23, weights=[embedding_matrix_pos], trainable=False))

merged_input = concatenate([e,e1], axis=0)

model_embed = Sequential()

model_embed.add(merged_input)

model_embed.fit(data,embedding_pos, final_labels, epochs=50, verbose=0)

I know this is wrong, but I am not sure how to concatenate both sequences. If you can point me in the right direction, it would be great. The error is

‘Layer concatenate_6 was called with an input that isn’t a symbolic tensor. Received type: . Full input: [, ]. All inputs to the layer should be tensors.’

Perhaps you could experiment and compare the performance of models with different merge layers for combining the inputs.
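One possible arrangement with the Keras functional API is sketched below. The error above appears to come from passing Embedding layer objects, rather than their output tensors, to concatenate. Here each Embedding is called on its own Input, and the two outputs are joined along the last axis so every time step gets a word vector followed by a POS vector (axis=0 would join along the batch dimension instead). The vocabulary size of 1000, the layer sizes and the input names are assumptions for illustration, and the pre-trained weights are left out to keep the sketch short.

```python
from tensorflow.keras.layers import (Input, Embedding, Concatenate, Conv1D,
                                     GlobalMaxPooling1D, Dense)
from tensorflow.keras.models import Model

seq_len = 23  # words per sentence, as in the comment above

# Two integer sequences per sample: word ids and POS tag ids.
word_in = Input(shape=(seq_len,), name="words")
pos_in = Input(shape=(seq_len,), name="pos_tags")

# Calling each layer on an Input gives a tensor of shape (batch, 23, dim).
word_emb = Embedding(input_dim=1000, output_dim=50)(word_in)
pos_emb = Embedding(input_dim=38, output_dim=38)(pos_in)

# Join per time step: (batch, 23, 50 + 38).
merged = Concatenate(axis=-1)([word_emb, pos_emb])

x = Conv1D(32, 3, activation="relu")(merged)
x = GlobalMaxPooling1D()(x)
out = Dense(1, activation="sigmoid")(x)

model = Model(inputs=[word_in, pos_in], outputs=out)
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
```

Training would then pass both sequences as a list, along the lines of model.fit([data, embedding_pos], final_labels, epochs=50, verbose=0).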

Hi Jason, awesome post as usual!

Your last sentence is tricky though. You write:

“In practice, I would encourage you to experiment with learning a word embedding using a pre-trained embedding that is fixed and trying to perform learning on top of a pre-trained embedding.”

Without the original corpus, I would argue, that’s impossible.

In Google’s case, the original corpus of around 100 billion words is not publicly available. Solution? I believe you’re suggesting “Transfer Learning for NLP.” In this case, the only solution I see is to add manually words.

E.g. you need ‘dolares’ which is not in Google’s Word2Vec. You want to have similar vectors as ‘money’. In this case, you add ‘dolares’ + 300 vectors from money. Very painful, I know. But it’s the only way I see to do “Transfer Learning with NLP”.

If you have a better solution, I’d love your input.

Cheerio, a big fan

Not impossible, you can use an embedding trained on another corpus and ignore the difference or fine tune the embedding while fitting your model.

You can also add missing words to the embedding and learn those.

Remember, we want a vector for each word that best captures its usage. Some inconsistencies do not result in a useless model; it is not a binary useful/useless case.

Thank you very much for the detailed answer!

The link for the Tokenizer API is this same webpage. Can you update it please?

Fixed, thanks.

Hi Jason, great post!

I have successfully trained my model using the word embedding and Keras. I saved the trained model and the word tokens. Now, in order to make some predictions, do I have to use the same tokenizer that I used in training?

Correct.

Thank you very much!

Hi Jason, when i was looking for how to use pre-trained word embedding,

I found your article along with this one:

https://jovianlin.io/embeddings-in-keras/

They have many similarities.

Glad to hear it.

Hey jason,

I am trying to do this but sometime keras gives the same integer to different words. Would it be better to use scikit encoder that converts words to integers?

This might happen if you are using a hash encoder, as Keras does, but calls it a one hot encoder.

Perhaps try a different encoding scheme of words to integers

Hi Jason,

I have implemented the above tutorial and the code works fine with GloVe. I am so grateful about the tutorial Jason.

but when I download GoogleNews-vectors-negative300.bin which is a pre-trained embedding word2vec it gave me this error:

File “/home/mary/anaconda3/envs/virenv/lib/python3.5/site-packages/gensim/models/keyedvectors.py”, line 171, in __getitem__

return vstack([self.get_vector(entity) for entity in entities])

TypeError: ‘int’ object is not iterable.

I wrote the code as the same as your code which you wrote for loading glove but with a little change.

model = gensim.models.KeyedVectors.load_word2vec_format('./GoogleNews-vectors-negative300.bin', binary=True)
for line in model:
    values = line.split()
    word = values[0]
    coefs = asarray(values[1:], dtype='float32')
    embeddings_index[word] = coefs
model.close()
print('Loaded %s word vectors.' % len(embeddings_index))
embedding_matrix = zeros((vocab_dic_size, 300))
for word in vocab_dic.keys():
    embedding_vector = embeddings_index.get(word)
    if embedding_vector is not None:
        embedding_matrix[vocab_dic[word]] = embedding_vector

I saw you wrote a tutorial about creating word2vec by yourself in this link “https://machinelearningmastery.com/develop-word-embedding-model-predicting-movie-review-sentiment/”,

but I have not seen a tutorial about applying pre-trained word2vec like GloVe.

please guide me to solve the error and how to apply the GoogleNews-vectors-negative300.bin pretrained word2vec?

I am so sorry to write a lot as I wanted to explain in detail to be clear.

any guidance will be appreciated.

Best

Meysam

Perhaps try the text version as in the above tutorial?

Hi Jason

thank you very much for replying, but as I am weak at the English language, the meaning of this sentence is not clear. what do you mean by “try the text version”??

In fact, GloVe contains txt files and I implemented it correctly, but when I want to run the program with GoogleNews-vectors-negative300.bin, which is a pre-trained word2vec embedding, it gives me the error. Also, this file is a binary one and there is no pre-trained word2vec embedding file with a .txt extension.

can you help me though I know you are busy?

Best

Meysam

You are using a binary word2vec file (.bin), whereas the tutorial loads GloVe from a text file. Try downloading and using a text version instead.

You can get .txt versions here:

https://nlp.stanford.edu/projects/glove/
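If the binary word2vec file has to be used, a rough sketch of the lookup step follows. The object returned by gensim's KeyedVectors.load_word2vec_format is indexed by word rather than iterated like the lines of a text file, which is likely why the loop in the comment above fails. A plain dict stands in for it here so the sketch runs without gensim or the download; collect_vectors is a hypothetical helper name.

```python
def collect_vectors(vocab, keyed_vectors):
    """Look each vocabulary word up by key instead of iterating file lines."""
    return {w: keyed_vectors[w] for w in vocab if w in keyed_vectors}

# With gensim installed and the file downloaded, keyed_vectors would be:
#   from gensim.models import KeyedVectors
#   keyed_vectors = KeyedVectors.load_word2vec_format(
#       'GoogleNews-vectors-negative300.bin', binary=True)
keyed_vectors = {"good": [0.1, 0.2, 0.3]}  # toy stand-in that behaves like it
embeddings_index = collect_vectors(["good", "dolares"], keyed_vectors)
print(sorted(embeddings_index))  # ['good']
```

The resulting embeddings_index can then be used to fill the embedding matrix in the same way as in the GloVe example.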

How can we use pre-trained word embedding on mobile?

I don’t see why not, other than disk/ram size issues.

Great post! What changes are necessary if the labels are more than binary such as with 4 classes:

labels = array([2,1,1,1,2,0,-1,0,-1,0])

?

E.g. instead of ‘binary_crossentropy’ perhaps ‘categorical_crossentropy’?

And how should the Dense layer change?

If I use: model.add(Dense(4, activation='sigmoid')), I get an error:

ValueError: Error when checking target: expected dense_1 to have shape (None, 4) but got array with shape (10, 1)

thanks for your work!

I believe this will help:

https://machinelearningmastery.com/faq/single-faq/how-can-i-change-a-neural-network-from-regression-to-classification

thanks! also using keras’s to_categorical to discretize the labels was necessary.
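For reference, a pure-Python sketch of the label preparation is below. The to_one_hot helper is made up for this example. With Keras the same result would typically come from shifting the labels so they start at 0 and then calling to_categorical, paired with Dense(4, activation='softmax') and the categorical_crossentropy loss. A negative label such as -1 most likely needs remapping first, because class indices are expected to start at 0.

```python
def to_one_hot(labels):
    """Map arbitrary class labels to 0..k-1, then one hot encode them."""
    classes = sorted(set(labels))
    position = {c: i for i, c in enumerate(classes)}
    rows = [[1 if position[y] == j else 0 for j in range(len(classes))]
            for y in labels]
    return rows, classes

rows, classes = to_one_hot([2, 1, 1, 1, 2, 0, -1, 0, -1, 0])
print(classes)  # [-1, 0, 1, 2]
print(rows[0])  # [0, 0, 0, 1]
print(rows[6])  # [1, 0, 0, 0]
```

Each label becomes a row of length 4, which matches the (None, 4) shape the error message asks for.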

one more question: is there a simple way to create the Tokenizer() instance, fit it, save it, and then extend it on new documents? Specifically, so that t.fit_on_texts( ) can be updated on new data.

I’m not so sure that you can.

It might be easier to manage the encoding/mapping yourself so that you can extend it at will.

Nice.

Hi Jason,

For starters, thanks for this post. Ideal to get things going quickly. I have a couple of questions if you don’t mind:

1) I don’t think that one-hot encoding the string vectors is ideal. Even with the recommended vocab size (50), I still got collisions which defeats the purpose even in a toy example such as this. Even the documentation states that uniqueness is not guaranteed. Keras’ Tokenizer(), which you used in the pre-trained example is a more reliable choice in that no two words will share the same integer mapping. How come you proposed one-hot encoding when Tokenizer() does the same job better?

2) Getting Tokenizer()’s word_index property, returns the full dictionary. I expected the vocab_size to be equal to len(t.word_index) but you increment that value by one. This is in fact necessary because otherwise fitting the model fails. But I cannot get the intuition of that. Why is the input dimension size equal to vocab_size + 1?

3) I created a model that expects a BoW vector representation of each “document”. Naturally, the vectors were larger and sparser [ (10,14) ] which means more parameters to learn, no. However, in your document you refer to this encoding or tf-idf as “more sophisticated”. Why do you believe so? With that encoding don’t you lose the word order which is important to learn word embeddings? For the record, this encoding worked well too but it’s probably due to the nature of this little experiment.

Thank you in advance.

The keras one hot encoding method really just takes a hash. It is better to use a true one hot encoding when needed.

I do prefer the Tokenizer class in practice.

The words are 1-offset, leaving 0 free for padding or an “unknown” word.

tf-idf gives some idea of the frequency over the simple presence/absence of a word in BoW.

Hope that helps.

where can i find the file “../glove_data/glove.6B/glove.6B.100d.txt”??because i come up with the following error.

File “”, line 36

f = open(‘../glove_data/glove.6B/glove.6B.100d.txt’)

^

SyntaxError: invalid character in identifier

You must download it and place it in your current working directory.

Perhaps re-read section 4.

I have placed the code and dataset in same directory.what’s wrong with the code??

f = open(‘glove.6B/glove.6B.100d.txt’)

I am facing the following error.

File “”, line 36

f = open(‘glove.6B/glove.6B.100d.txt’)

^

SyntaxError: invalid character in identifier

Perhaps this will help you when copying code from the tutorial:

https://machinelearningmastery.com/faq/single-faq/how-do-i-copy-code-from-a-tutorial
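The caret in the traceback points at the opening quote. The pasted line uses typographic (curly) quotes, which Python does not accept as string delimiters, and that is what "invalid character in identifier" usually means here. Retyping the quotes by hand fixes it; the sketch below does the same replacement in code, with straighten_quotes being a made-up helper name.

```python
CURLY_TO_STRAIGHT = str.maketrans({
    "\u2018": "'", "\u2019": "'",  # single curly quotes
    "\u201c": '"', "\u201d": '"',  # double curly quotes
    "\u2033": '"',                 # double prime, sometimes pasted as a quote
})

def straighten_quotes(source):
    """Replace typographic quotes that Python cannot parse as string delimiters."""
    return source.translate(CURLY_TO_STRAIGHT)

pasted = "f = open(\u2018glove.6B/glove.6B.100d.txt\u2019)"
print(straighten_quotes(pasted))  # f = open('glove.6B/glove.6B.100d.txt')
```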

Excellent work! This is quite helpful to novice.

And I wonder is this useful to other language apart from English? Since I am a Chinese, and I wonder whether I can apply this to Chinese language and vocabulary.

Thanks again for your time devoted!

I don’t see why not.

Hi,

can you explain how can the word embeddings be given as hidden state input to LSTM?

thanks in advance

Word embeddings don’t have hidden state. They don’t have any state.

for example, I have a word and its 50 (dimensional) embeddings. How can I give these embeddings as hidden state input to an LSTM layer?

Why would you want to give them as the hidden state instead of using them as input to the LSTM?

hi, what a practical post!

I have a question, I work in a sentiment analysis project with word2vec as an embedding model with keras. my problem is when I want to predict a new sentence as an input I face this error:

ValueError: Error when checking input: expected conv1d_1_input to have shape (15, 512) but got array with shape (3, 512)

consider that I want to enter a simple sentence like:”I’m really sad” with the length 3- and my input shape has the length of 15- I don’t know how can I reshape it or doing what to get rid of this error.

and this is the related part of my code:

model = Sequential()
model.add(Conv1D(32, kernel_size=3, activation='elu', padding='same', input_shape=(15, 512)))
model.add(Conv1D(32, kernel_size=3, activation='elu', padding='same'))
model.add(Conv1D(32, kernel_size=3, activation='elu', padding='same'))
model.add(Conv1D(32, kernel_size=3, activation='elu', padding='same'))
model.add(Dropout(0.25))
model.add(Conv1D(32, kernel_size=2, activation='elu', padding='same'))
model.add(Conv1D(32, kernel_size=2, activation='elu', padding='same'))
model.add(Conv1D(32, kernel_size=2, activation='elu', padding='same'))
model.add(Conv1D(32, kernel_size=2, activation='elu', padding='same'))
model.add(Dropout(0.25))
model.add(Dense(256, activation='relu'))
model.add(Dense(256, activation='relu'))
model.add(Dropout(0.5))
model.add(Flatten())
model.add(Dense(2, activation='softmax'))

Would you please help me solve this problem?

You must prepare the new sentence in exactly the same way as the training data, including length and integer encoding.

Would you at least mind sharing a suitable resource to help me solve this problem?

I hope you will answer my question as you always do. Thanks.

I’m eager to help and answer specific questions, but I don’t have the capacity to write code for you, sorry.
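For readers who hit the same shape error, the idea of preparing a new sentence "in exactly the same way" can be sketched as padding the 3 word vectors out to the 15 rows the model was trained on. This is only a sketch under assumptions: the helper `pad_sentence` is hypothetical, plain Python lists stand in for NumPy arrays, and zeros are appended at the end, which is only right if the training data was padded the same way.

```python
def pad_sentence(vectors, max_len, dim):
    """Pad (or truncate) a list of word vectors to exactly max_len rows."""
    vectors = [list(v) for v in vectors[:max_len]]  # truncate long input
    padding = [[0.0] * dim for _ in range(max_len - len(vectors))]
    return vectors + padding  # zeros are appended after the real words


MAX_LEN, DIM = 15, 512  # must match input_shape=(15, 512) used in training
sentence = [[0.1] * DIM for _ in range(3)]  # stand-in vectors for 3 words
padded = pad_sentence(sentence, MAX_LEN, DIM)
batch = [padded]  # the model expects a batch, i.e. shape (1, 15, 512)
print(len(batch), len(batch[0]), len(batch[0][0]))
```

The same helper truncates sentences longer than 15 words, so every input ends up with the shape the first layer expects.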

Hi Jason,

I have two questions. First, for new instances, if the length is greater than the model’s input length, should we truncate the sentence?

Also, since the embedding input covers only the words seen in the training set, what happens to the predictions-to-word process? I assume it just returns words similar to the training words rather than considering all the dictionary words. Of course, I am talking about a language model with GloVe.

Yes, truncate.

Unseen words are mapped to nothing or 0.

The training dataset MUST be representative of the problem you are solving.

From what I understood from this comment, it is about prediction on test data. Let’s assume there are 50 words in the vocabulary, which means sentences will have unique integers up to 50. Since the test data must be tokenized with the same instance of the tokenizer, if it has some new words, they would get integers 51, 52 and so on. In this case, would the model automatically use 0 for the word embeddings, or could it raise an out-of-bounds exception? Thanks.

You would encode unseen words as 0.

All 50 vocabulary words should take indexes 1 to 50, leaving 0 for words not in the vocabulary. Am I right?

Correct.
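The convention discussed above can be sketched in a few lines. This is an illustration with a hypothetical `encode` helper and a toy vocabulary, not the Keras Tokenizer itself; as far as I know, the Keras Tokenizer drops unseen words by default unless an `oov_token` is set, so check what your own pipeline does.

```python
def encode(text, word_index):
    """Integer-encode text; words missing from word_index become 0."""
    return [word_index.get(word, 0) for word in text.lower().split()]


word_index = {"good": 1, "work": 2, "poor": 3}  # hypothetical training vocab
vocab_size = len(word_index) + 1  # +1 because index 0 is reserved
encoded = encode("Good work team", word_index)
print(encoded)  # "team" was never seen, so it becomes 0
```

Because no index ever exceeds `vocab_size - 1`, the lookup into the embedding stays in bounds.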

Can this be described as transfer learning?

Perhaps.

We need to know for our homework, please help!

Please see https://machinelearningmastery.com/faq/single-faq/can-you-help-me-with-my-homework-or-assignment/

Hi,

I have trained and tested my own network. During my work, when I integer-encoded the sentences and created a corresponding word embedding matrix, it included embeddings for the train, validation and test data.

Now, if I want to reload my model to test on some other similar data, I am confused about how the words from this new data would relate to the embedding matrix.

You should have embeddings for the test data as well, right? Or do you exclude the test data when you create the embedding matrix? Thanks.

The embedding is created from the training dataset.

It should be sufficiently rich and representative to cover all data you expect to see in the future.

New data must have the same integer encoding as the training data prior to being mapped onto the embedding when making a prediction.

Does that help?

Yes, I understand that I should use the same tokenizer object for encoding both train and test data, but I am not sure how the embedding layer would behave for a word or index that isn’t part of the embedding matrix. The test data would obviously have similar words, but some words are bound to be new. Would you say it is right to include the test data when creating the embedding matrix for the model? If I want to predict using a pre-trained model, how can I deal with this issue? A small example would be really helpful. Thanks a lot for all the help and time!

It won’t. The encoding will set unknown words to 0.

It really depends on the goal of what you want to evaluate.

I want to train my model to predict the target word given a 5-word sequence. How can I represent my target word?

Probably using a one hot encoding.
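A one hot encoded target is a vector of zeros, as long as the vocabulary, with a single 1 at the target word’s integer index. A minimal sketch with a hypothetical `one_hot_vector` helper and a toy vocabulary size (Keras also offers `to_categorical` for the same job):

```python
def one_hot_vector(index, vocab_size):
    """Return a list of zeros with a single 1 at position index."""
    vec = [0] * vocab_size
    vec[index] = 1
    return vec


vocab_size = 6  # hypothetical: 5 words plus the reserved index 0
target = one_hot_vector(3, vocab_size)  # target word has integer index 3
print(target)
```

The output layer would then have `vocab_size` units with a softmax activation, one per candidate word.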

Hello Jason,

This is regarding the output shape of the first embedding layer: (None, 4, 8).

Am I correct in understanding that the 4 represents the input size which is 4 words and the 8 is the number of features it has generated using those words?

I believe so.

Hi Jason,

Thanks for sharing your knowledge.

My task is to classify a set of documents into different categories (I have a training set of 100 documents and, say, 10 categories).

The idea is to extract the top M words (say 20) from the first few lines of each document, convert the words to word embeddings, and use them as feature vectors for the neural network.

Question: since I take the top M words from the document, they may not be in the “right” order each time, meaning there can be different words at a given position in the input layer (unlike a bag-of-words model). Won’t this approach prevent the neural network from converging?

Regards,

Srini

The key is to assign the same integer value to each word prior to feeding the data into the embedding.

You must use the same text tokenizer for all documents.

Hi Jason,

Thank you for your great explanation. I have used the pre-trained Google embedding matrix in my seq2seq project with an encoder-decoder, but I have a problem at test time. I don’t know how to reverse my embedding matrix. Do you have a sample project? My solution is: when my decoder predicts a vector, I search for it in my pre-trained embedding matrix, find its index, and then look up the related word. Am I right?

Why would you need to reverse it?

Hi Jason

Thanks for an excellent tutorial. Using your methods, I’ve converted text into word index and applied word embeddings.

Like Fatemeh, I’m wondering if it’s possible to reverse the process, and convert embedding vectors back into text? This could be useful for applications such as text summarising.

Thank you.

Yes, each vector has an int value known by the embedding and each int has a mapping to a word via the tokenizer.

Random vectors in the space do not; you will have to find the closest vector in the embedding using Euclidean distance.
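The nearest-vector lookup can be sketched as a brute-force search. The helper `nearest_word` and the toy two-dimensional vectors are hypothetical; `index_word` stands for the integer-to-word mapping that reverses the tokenizer’s `word_index`.

```python
import math


def nearest_word(vector, embedding_matrix, index_word):
    """Return the word whose embedding is closest to vector (Euclidean)."""
    best_index = min(
        index_word,  # only search rows that map to a real word
        key=lambda i: math.dist(vector, embedding_matrix[i]),
    )
    return index_word[best_index]


embedding_matrix = [
    [0.0, 0.0],  # index 0: reserved for padding/unknown
    [1.0, 0.0],  # index 1
    [0.0, 1.0],  # index 2
]
index_word = {1: "good", 2: "poor"}
predicted = nearest_word([0.9, 0.2], embedding_matrix, index_word)
print(predicted)
```

A linear scan like this is fine for small vocabularies; for large ones a vectorised or approximate nearest-neighbour search would be faster.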

Dear Dr. Jason,

Accuracy: 89.999998 on my laptop. Do results differ from one computer to another?

Well done!

Results are different each run, learn more here:

https://machinelearningmastery.com/randomness-in-machine-learning/

Hi,

So many thanks for this tutorial!

I’ve been trying to train a network that consists of an Embedding layer, an LSTM and a softmax as the output layer. However, it seems that the loss and accuracy get stuck at some point.

Do you have any advice?

Thanks in advance.

Yes, I have a ton of advice right here:

https://machinelearningmastery.com/improve-deep-learning-performance/

Thank you so much,

It helped me a lot in learning how to use pre-trained embeddings in neural nets.

I’m happy to hear that.

Hi Jason, thank you for the great material.

I have one question. I want to create an embedding for a list of 1,200 documents and use it as input to a classification model that predicts movie box office based on the movie script text…

My question is: if I train the embedding on the vocabulary of the real dataset, how can I afterwards classify the rest of the dataset that was not used in training? Can I use the embeddings learned in training as input to the classification model?

Good question.

You must ensure that the training dataset is representative of the broader problem. Any words unseen during training will be marked zero (unknown) by the tokenizer unless you update your model.

Thank You Jason. As soon as I get the results I’ll try to share it here.

I’d also like to thank you for your great platform; it has been very helpful to me.

You’re welcome.

Nice post once again! It seems that in each batch all embeddings are updated, which I think should not happen. Do you have any idea how to update only the ones that are passed in each time? This is for computational reasons, or for other reasons related to the problem definition.

I’m not sure what you mean exactly, can you elaborate?

Hello Jason, I would like to thank you for this post; it’s really interesting and understandable.

I’ve reused the script, but instead of using the “docs” and “labels” lists, I used the IMDB movie reviews dataset. The problem is that I can’t reach more than 50% accuracy, and the loss is stuck at 0.6932 in all epochs.

What do you think about that?

I have some suggestions here:

https://machinelearningmastery.com/improve-deep-learning-performance/
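One observation that may help with diagnosis: a binary cross-entropy loss stuck near 0.693 together with 50% accuracy is what you get when a model outputs roughly 0.5 for every example, because -ln(0.5) ≈ 0.6931. That suggests, but does not prove, that the network is not learning anything from the input. A small check of the arithmetic, using a hypothetical `binary_cross_entropy` helper:

```python
import math


def binary_cross_entropy(y_true, p):
    """Log loss for one example with predicted probability p of class 1."""
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1 - p))


# Predicting 0.5 for every example gives the same loss for either label.
loss_pos = binary_cross_entropy(1, 0.5)
loss_neg = binary_cross_entropy(0, 0.5)
print(round(loss_pos, 4), round(loss_neg, 4))
```

Common things to check in that situation are the label encoding, the learning rate, and whether the input sequences are all padding.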

Okay, I’ll check it out. Thank you, Jason.

Thanks for the article. Could you also provide an example of how to train a model with only one Embedding layer? I’m trying to do the same with Keras but the problem is that the fit method asks for labels which I don’t have. I mean I only have a bunch of text files that I’m trying to come up with the mapping for.

Models typically only have one embedding layer. What do you mean exactly?

Hello,

Thank you for the excellent explanation!

I have a few questions related to unknown words.

Some pretrained word embeddings like the GoogleNews embeddings have an embedding vector for a token called ‘UNKNOWN’ as well.

1. Can I use this vector to represent words that are not present in the training set instead of the vector of all zeros? If so, how should I go about loading this vector into the Keras Embedding layer? Should it be loaded at the 0th index in the embedding matrix?

2. Also, can I use the Tokenizer API to help me convert all unknown words (words not in the training set) to ‘UNKNOWN’?

Thank you.

Yes, find the integer value for the unknown word and use that to assign to all words not in the vocab.
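One way to picture this is to build the embedding matrix so that any word without a pre-trained vector gets the vector of the ‘UNKNOWN’ token. The sketch below is an assumption-laden illustration: `build_embedding_matrix`, the `<unk>` entry and the toy vectors are all hypothetical, and row 0 is left as zeros for padding.

```python
def build_embedding_matrix(word_index, pretrained, dim, unk_token="UNKNOWN"):
    """Rows follow word_index; words without a vector get the unk vector."""
    unk_vector = pretrained.get(unk_token, [0.0] * dim)
    matrix = [[0.0] * dim for _ in range(len(word_index) + 1)]  # row 0: padding
    for word, i in word_index.items():
        matrix[i] = list(pretrained.get(word, unk_vector))
    return matrix


pretrained = {"UNKNOWN": [0.5, 0.5], "good": [1.0, 0.0]}  # toy vectors
word_index = {"<unk>": 1, "good": 2, "zzz": 3}  # hypothetical encoding
matrix = build_embedding_matrix(word_index, pretrained, dim=2)
for row in matrix:
    print(row)
```

The Keras Tokenizer accepts an `oov_token` argument that reserves an integer for out-of-vocabulary words; the specific index used for `<unk>` above is an assumption for illustration.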

Hi,

If the word embedding doesn’t contain a word we input to a model, how should we address this issue?

1) Is it possible to load additional words (besides those in our vocabulary) into the embedding matrix?

Or maybe there is another elegant way you would like to suggest?

It is marked as “unknown”.

Hi, thanks a lot for your post. I’m new to Python and deep learning!

I have a train set of 240,000 tweets (50% male and 50% female classes) and a test set of 120,000 tweets (50% male and 50% female). I want to use an LSTM in Python, but I get the following error at the “fit” method:

ValueError: Error when checking input: expected lstm_16_input to have 3 dimensions, but got array with shape (120000, 400)

Can you help me?

It looks like a mismatch between your data and the model, you can change the data or change the model.
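An LSTM layer expects 3D input of the form [samples, timesteps, features], while the array here is 2D. If the 400 columns are integer word indexes, one option is to put an Embedding layer in front of the LSTM, as in the tutorial. Another option, changing the data, is to add a features axis of size 1. A sketch of the latter with plain lists and a hypothetical `add_feature_axis` helper (with NumPy the equivalent is `X.reshape((X.shape[0], X.shape[1], 1))`):

```python
def add_feature_axis(samples):
    """Turn [samples][timesteps] into [samples][timesteps][1]."""
    return [[[value] for value in row] for row in samples]


X = [[3, 7, 0, 0], [5, 1, 2, 0]]  # toy stand-in for the (120000, 400) array
X3 = add_feature_axis(X)
print(len(X3), len(X3[0]), len(X3[0][0]))
```

Which option is appropriate depends on what the 400 values represent, which the error message alone does not tell us.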

Hi Jason, Thanks for this article.

I am getting this error

TypeError: ‘OneHotEncoder’ object is not callable

How do I overcome this?

Thanks

I have some suggestions here:

https://machinelearningmastery.com/faq/single-faq/why-does-the-code-in-the-tutorial-not-work-for-me

Hi, I have 2 models with these embedding layers. How do I merge those models?

Thanks

What do you mean exactly? An ensemble model?

Hi Jason, great tutorial. I am very new to all this. I have a query: you are using GloVe for the embedding layer, but during fitting you use padded_docs directly. The vectors in padded_docs have no correlation to GloVe. I am sure that I am missing something; please enlighten me.

The padding just adds ‘0’ to ensure the sequences are the same length. It does not affect the encoding.
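The link between padded_docs and GloVe is the integer itself: each integer is a row index into the weight matrix that was filled with GloVe vectors. Conceptually, the Embedding layer performs a lookup like the one below. This is a sketch with a hypothetical `embed` helper and toy two-dimensional vectors rather than real GloVe values.

```python
def embed(padded_doc, embedding_matrix):
    """Replace each integer with its row of the embedding matrix."""
    return [embedding_matrix[i] for i in padded_doc]


embedding_matrix = [
    [0.0, 0.0],  # row 0: padding
    [0.2, 0.9],  # row 1: vector for the word encoded as 1
    [0.7, 0.1],  # row 2: vector for the word encoded as 2
]
padded_doc = [2, 1, 0, 0]  # two words followed by padding
embedded = embed(padded_doc, embedding_matrix)
print(embedded)
```

So a document of 4 integers becomes 4 vectors, which matches the (None, 4, 8) style of output shape reported for the embedding layer.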

Hi, Jason. Considering the “3. Example of Learning an Embedding”, I’m adding “model.add(LSTM(32, return_sequences=True))” after the embedding layer and I would like to understand what happens. The number of parameters returned for this LSTM layer is “5248” and I don’t know how to calculate it. Thank you.

Each unit in the LSTM will take the entire embedding as input, therefore must have one weight for each dimension in the embedding.
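The figure of 5248 can be reproduced by hand, assuming the embedding output dimension is 8 as in that section of the tutorial. A standard LSTM has 4 sets of weights (three gates plus the cell candidate), and each set has input weights, recurrent weights and a bias. The function name below is hypothetical:

```python
def lstm_param_count(input_dim, units):
    """4 weight sets, each with input weights, recurrent weights and a bias."""
    return 4 * (units * input_dim + units * units + units)


# 4 * (32 * 8 + 32 * 32 + 32) = 4 * 1312
params = lstm_param_count(input_dim=8, units=32)
print(params)
```

Note that the count does not depend on the sequence length, because the same weights are reused at every timestep.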

Hi Jason,