Keras is a Python library for deep learning that wraps the efficient numerical libraries Theano and TensorFlow.

In this tutorial, you will discover how to use Keras to develop and evaluate neural network models for multi-class classification problems.

After completing this step-by-step tutorial, you will know:

- How to load data from CSV and make it available to Keras

- How to prepare multi-class classification data for modeling with neural networks

- How to evaluate Keras neural network models with scikit-learn


Let’s get started.

- Update Oct/2016: Updated for Keras 1.1.0 and scikit-learn v0.18

- Update Mar/2017: Updated for Keras 2.0.2, TensorFlow 1.0.1 and Theano 0.9.0

- Update Jun/2017: Updated to use softmax activation in output layer, larger hidden layer, default weight initialization

- Update Aug/2019: Added complete working example for convenience, removed random seed

- Update Sep/2019: Updated for Keras 2.2.5 API

Multi-class classification tutorial with the Keras deep learning library

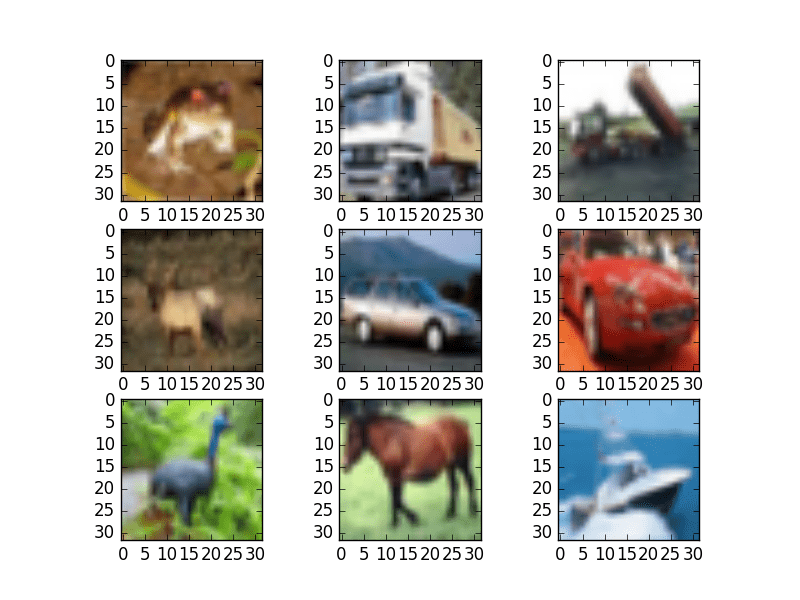

Photo by houroumono, some rights reserved.

1. Problem Description

In this tutorial, you will use the standard machine learning problem called the iris flowers dataset.

This dataset is well studied and makes a good problem for practicing on neural networks because all four input variables are numeric and share the same scale, centimeters. Each instance describes the measurements of an observed flower, and the output variable is the specific iris species.

This is a multi-class classification problem, meaning that there are more than two classes to be predicted. In fact, there are three flower species. This is an important problem for practicing with neural networks because the three class values require specialized handling.

The iris flower dataset is a well-studied problem, and as such, you can expect to achieve a model accuracy in the range of 95% to 97%. This provides a good target to aim for when developing your models.

You can download the iris flowers dataset from the UCI Machine Learning Repository and place it in your current working directory with the filename “iris.csv”.


2. Import Classes and Functions

You can begin by importing all the classes and functions you will need in this tutorial.

This includes both the functionality you require from Keras and the data loading from pandas, as well as data preparation and model evaluation from scikit-learn.

    import pandas
    from keras.models import Sequential
    from keras.layers import Dense
    from keras.wrappers.scikit_learn import KerasClassifier
    from keras.utils import np_utils
    from sklearn.model_selection import cross_val_score
    from sklearn.model_selection import KFold
    from sklearn.preprocessing import LabelEncoder
    from sklearn.pipeline import Pipeline
    ...

3. Load the Dataset

The dataset can be loaded directly. Because the output variable contains strings, it is easiest to load the data using pandas. You can then split the attributes (columns) into input variables (X) and output variables (Y).

    ...
    # load dataset
    dataframe = pandas.read_csv("iris.csv", header=None)
    dataset = dataframe.values
    X = dataset[:,0:4].astype(float)
    Y = dataset[:,4]

4. Encode the Output Variable

The output variable contains three different string values.

When modeling multi-class classification problems using neural networks, it is good practice to reshape the output attribute from a vector that contains a class value for each instance into a matrix with one Boolean column per class, indicating whether or not a given instance has that class value.

This is called one-hot encoding or creating dummy variables from a categorical variable.

For example, in this problem, three class values are Iris-setosa, Iris-versicolor, and Iris-virginica. If you had the observations:

    Iris-setosa
    Iris-versicolor
    Iris-virginica

You can turn this into a one-hot encoded binary matrix for each data instance that would look like this:

    Iris-setosa,    Iris-versicolor,    Iris-virginica
    1,              0,                  0
    0,              1,                  0
    0,              0,                  1

You can first encode the strings consistently to integers using the scikit-learn class LabelEncoder. Then convert the vector of integers to a one-hot encoding using the Keras function to_categorical().

    ...
    # encode class values as integers
    encoder = LabelEncoder()
    encoder.fit(Y)
    encoded_Y = encoder.transform(Y)
    # convert integers to dummy variables (i.e. one hot encoded)
    dummy_y = np_utils.to_categorical(encoded_Y)
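
To make these two steps concrete, here is a minimal plain-Python sketch of what integer encoding followed by one-hot encoding produces. It is an illustration only, not how LabelEncoder or to_categorical() are implemented; the helper names encode_labels and one_hot are made up for this example. Like LabelEncoder, the sketch assigns integers to the class names in sorted order.

```python
def encode_labels(labels):
    """Map each distinct label to an integer, using sorted order."""
    classes = sorted(set(labels))
    index = {name: i for i, name in enumerate(classes)}
    return [index[name] for name in labels], classes


def one_hot(integers, num_classes):
    """Turn each integer into a row with a single 1 at that position."""
    return [[1 if i == value else 0 for i in range(num_classes)]
            for value in integers]


# A few example observations (not the full dataset)
labels = ["Iris-setosa", "Iris-versicolor", "Iris-virginica", "Iris-setosa"]
encoded, classes = encode_labels(labels)
dummy = one_hot(encoded, len(classes))

print(encoded)
for row in dummy:
    print(row)
```

Each row of the result has exactly one 1, in the column that belongs to the class of that observation.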

5. Define the Neural Network Model

If you are new to Keras or deep learning, see this helpful Keras tutorial.

The Keras library provides wrapper classes to allow you to use neural network models developed with Keras in scikit-learn.

There is a KerasClassifier class in Keras that can be used as an Estimator in scikit-learn, the base type of model in the library. The KerasClassifier takes the name of a function as an argument. This function must return the constructed neural network model, ready for training.

Below is a function that will create a baseline neural network for the iris classification problem. It creates a simple, fully connected network with one hidden layer that contains eight neurons.

The hidden layer uses a rectifier activation function which is a good practice. Because you used a one-hot encoding for your iris dataset, the output layer must create three output values, one for each class. The output value with the largest value will be taken as the class predicted by the model.

The network topology of this simple one-layer neural network can be summarized as follows:

    4 inputs -> [8 hidden nodes] -> 3 outputs
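
As a quick sanity check on the size of this topology, the number of trainable parameters can be worked out by hand: a fully connected layer has one weight per input-output pair plus one bias per output. The helper dense_params below is a made-up name for this sketch, and it assumes each layer uses a bias term, which is the Keras default for Dense.

```python
def dense_params(n_inputs, n_outputs):
    """Weights (n_inputs * n_outputs) plus one bias per output unit."""
    return n_inputs * n_outputs + n_outputs


hidden = dense_params(4, 8)   # 4 inputs -> 8 hidden nodes
output = dense_params(8, 3)   # 8 hidden nodes -> 3 outputs
total = hidden + output

print(hidden, output, total)
```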

Note that a “softmax” activation function was used in the output layer. This ensures the output values are in the range of 0 to 1 and sum to 1, so they may be used as predicted probabilities.
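
The effect of softmax can be sketched in a few lines of plain Python. This is an illustration of the formula, not the Keras implementation, and the raw scores are made-up numbers standing in for the three output nodes.

```python
import math


def softmax(scores):
    """Exponentiate and normalize so the outputs are positive and sum to 1."""
    top = max(scores)
    # Subtracting the maximum does not change the result but avoids overflow
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


raw = [2.0, 1.0, 0.1]  # made-up raw scores for the three iris classes
probs = softmax(raw)
predicted = probs.index(max(probs))  # index of the most probable class

print(probs, predicted)
```

The largest raw score always maps to the largest probability, so taking the index of the maximum gives the predicted class.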

Finally, the network uses the efficient Adam gradient descent optimization algorithm with a logarithmic loss function, which is called “categorical_crossentropy” in Keras.

    ...
    # define baseline model
    def baseline_model():
    	# create model
    	model = Sequential()
    	model.add(Dense(8, input_dim=4, activation='relu'))
    	model.add(Dense(3, activation='softmax'))
    	# Compile model
    	model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    	return model
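
To give a feel for the loss named in compile(), here is a rough plain-Python sketch of categorical cross-entropy for a single sample: the negative log of the probability the model assigned to the true class. The eps clipping is an assumption added in this sketch to avoid log(0), and the predictions are made-up numbers.

```python
import math


def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Negative log of the probability assigned to the true class."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_pred))


y_true = [0, 1, 0]               # one-hot target: the second class is correct
confident = [0.05, 0.90, 0.05]   # made-up prediction, mostly right
unsure = [0.40, 0.30, 0.30]      # made-up prediction, mostly wrong

loss_confident = categorical_crossentropy(y_true, confident)
loss_unsure = categorical_crossentropy(y_true, unsure)

print(loss_confident, loss_unsure)
```

The loss shrinks toward zero as the probability on the correct class approaches 1, which is what the optimizer works toward during training.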

You can now create your KerasClassifier for use in scikit-learn.

You can also pass arguments in the construction of the KerasClassifier class that will be passed on to the fit() function internally used to train the neural network. Here, you pass the number of epochs as 200 and batch size as 5 to use when training the model. Progress output is also turned off during training by setting verbose to 0.

    ...
    estimator = KerasClassifier(build_fn=baseline_model, epochs=200, batch_size=5, verbose=0)

6. Evaluate the Model with k-Fold Cross Validation

You can now evaluate the neural network model on your training data.

The scikit-learn library has excellent capabilities for evaluating models using a suite of techniques. The gold standard for evaluating machine learning models is k-fold cross validation.

First, define the model evaluation procedure. Here, you set the number of folds to 10 (an excellent default) and shuffle the data before partitioning it.

    ...
    kfold = KFold(n_splits=10, shuffle=True)
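
For intuition about what a shuffled 10-fold split does with the 150 iris rows, here is a minimal plain-Python sketch. It is not scikit-learn's implementation; kfold_indices is a hypothetical helper, and the seed argument is only there to make the sketch repeatable.

```python
import random


def kfold_indices(n_samples, n_splits, seed=None):
    """Shuffle the row indices, then cut them into n_splits test folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    base, extra = divmod(n_samples, n_splits)
    folds, start = [], 0
    for i in range(n_splits):
        # The first `extra` folds take one additional sample
        size = base + (1 if i < extra else 0)
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((train, test))
        start += size
    return folds


folds = kfold_indices(150, 10, seed=1)
for train, test in folds:
    print(len(train), len(test))
```

Every row is used for testing exactly once, and each of the ten models is trained on the remaining 135 rows.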

Now, you can evaluate your model (estimator) on your dataset (X and dummy_y) using a 10-fold cross-validation procedure (k-fold).

Evaluating the model takes only about 10 seconds and returns an array of ten accuracy scores, one for the model constructed on each split of the dataset.

    ...
    results = cross_val_score(estimator, X, dummy_y, cv=kfold)
    print("Baseline: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

7. Complete Example

You can tie all of this together into a single program that you can save and run as a script:

    # multi-class classification with Keras
    import pandas
    from keras.models import Sequential
    from keras.layers import Dense
    from keras.wrappers.scikit_learn import KerasClassifier
    from keras.utils import np_utils
    from sklearn.model_selection import cross_val_score
    from sklearn.model_selection import KFold
    from sklearn.preprocessing import LabelEncoder
    from sklearn.pipeline import Pipeline
    # load dataset
    dataframe = pandas.read_csv("iris.csv", header=None)
    dataset = dataframe.values
    X = dataset[:,0:4].astype(float)
    Y = dataset[:,4]
    # encode class values as integers
    encoder = LabelEncoder()
    encoder.fit(Y)
    encoded_Y = encoder.transform(Y)
    # convert integers to dummy variables (i.e. one hot encoded)
    dummy_y = np_utils.to_categorical(encoded_Y)

    # define baseline model
    def baseline_model():
    	# create model
    	model = Sequential()
    	model.add(Dense(8, input_dim=4, activation='relu'))
    	model.add(Dense(3, activation='softmax'))
    	# Compile model
    	model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    	return model

    estimator = KerasClassifier(build_fn=baseline_model, epochs=200, batch_size=5, verbose=0)
    kfold = KFold(n_splits=10, shuffle=True)
    results = cross_val_score(estimator, X, dummy_y, cv=kfold)
    print("Baseline: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

The results are summarized as both the mean and standard deviation of the model accuracy on the dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

This is a reasonable estimation of the performance of the model on unseen data. It is also within the realm of known top results for this problem.

    Baseline: 97.33% (4.42%)
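
To show how the two summary numbers are formed from ten per-fold scores, here is a small standard-library sketch. The per-fold accuracies are hypothetical: they are one set of values (15 test flowers per fold, four misclassified in total) that happens to be consistent with the summary above, not the actual scores from the run. The population standard deviation is used because NumPy's std() defaults to it.

```python
from statistics import mean, pstdev

# Hypothetical per-fold accuracies: seven perfect folds, two folds with
# one error out of 15, and one fold with two errors out of 15
scores = [1.0] * 7 + [14 / 15, 14 / 15, 13 / 15]

summary = "Baseline: %.2f%% (%.2f%%)" % (mean(scores) * 100, pstdev(scores) * 100)
print(summary)
```

With only 15 test flowers per fold, a single misclassification moves that fold's accuracy by almost 7 percentage points, which is why the spread across folds is relatively large.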

Summary

In this post, you discovered how to develop and evaluate a neural network using the Keras Python library for deep learning.

By completing this tutorial, you learned:

- How to load data and make it available to Keras

- How to prepare multi-class classification data for modeling using one-hot encoding

- How to use Keras neural network models with scikit-learn

- How to define a neural network using Keras for multi-class classification

- How to evaluate a Keras neural network model using scikit-learn with k-fold cross validation

Do you have any questions about deep learning with Keras or this post?

Ask your questions in the comments below, and I will do my best to answer them.

Thanks for this cool tutorial! I have a question about the input data. If the datatypes of the input variables are different (i.e. string and numeric), how do I preprocess the training data to fit Keras?

Great question. Eventually, all of the data need to be turned into real values.

With categorical variables, you can create dummy variables and use one-hot encoding. For string data, you can use word embeddings.

Could you please let me know how to convert string data into word embeddings in large datasets?

Would really appreciate it

Thanks so much

Hi Shraddha,

First, convert the chars to vectors of integers. You can then pad all vectors to the same length. Then away you go.

I hope that helps.

Thanks so much Jason!

You’re welcome.

can you give an example for that..

I have many tutorials for encoding and padding sequences on the blog. Please use the search.
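
As a rough illustration of the encode-then-pad idea described above, here is a plain-Python sketch. The helper names build_vocab and encode_and_pad are made up for this example, 0 is reserved as the padding value, and padding on the right is simply a choice made for this sketch.

```python
def build_vocab(texts):
    """Assign each distinct character an integer, reserving 0 for padding."""
    chars = sorted(set("".join(texts)))
    return {ch: i + 1 for i, ch in enumerate(chars)}


def encode_and_pad(texts, vocab, length):
    """Encode characters as integers, then truncate or right-pad with 0."""
    rows = []
    for text in texts:
        ids = [vocab[ch] for ch in text][:length]
        rows.append(ids + [0] * (length - len(ids)))
    return rows


texts = ["cab", "ab", "abcab"]  # made-up strings
vocab = build_vocab(texts)
padded = encode_and_pad(texts, vocab, length=4)

print(vocab)
print(padded)
```

All rows now have the same length, so they can be stacked into a single array and fed to a model.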

Question:

Which properties of an observed flower are measured?

Please tell me what the 4 attributes are.

For more on the dataset, see this post:

https://en.wikipedia.org/wiki/Iris_flower_data_set

There are 7 class indices. How many output variables do I need to specify?

IF i choose to use Entity Embeddings for categorical data, can you please suggest how to feed them to a MLP. I am able to do that in pytorch by using your article on pytorch.

Can you please suggest how to convert the below architecture into an MLP.

    (all_embeddings): ModuleList(
      (0): Embedding(24, 12)
      (1): Embedding(2, 1)
      (2): Embedding(7, 4)
    )
    (embedding_dropout): Dropout(p=0.4, inplace=False)
    (batch_norm_num): BatchNorm1d(7, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
    (layers): Sequential(
      (0): Linear(in_features=24, out_features=200, bias=True)
      (1): ReLU(inplace=True)
      (2): BatchNorm1d(200, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (3): Dropout(p=0.4, inplace=False)
      (4): Linear(in_features=200, out_features=100, bias=True)
      (5): ReLU(inplace=True)
      (6): BatchNorm1d(100, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (7): Dropout(p=0.4, inplace=False)
      (8): Linear(in_features=100, out_features=1, bias=True)
    )
    )

sorry, I don’t have an example for pytorch, but I have an example for keras that might help:

https://machinelearningmastery.com/how-to-prepare-categorical-data-for-deep-learning-in-python/

A great tutorial. What should I do to preprocess mixed input data (data includes both numeric and categorical variables) for the fitting model? Thanks in advance.

Numeric data is usually just presented as-is, but sometimes we apply scaling to it too. Categorical data is usually one-hot encoded.
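
A minimal plain-Python sketch of that idea is below: scale the numeric column, one-hot encode the categorical column, then join them into one feature row per sample. The data, the column names and both helper functions are made up for illustration, and the scaling helper assumes the column is not constant.

```python
def min_max_scale(values):
    """Rescale numbers to the range 0 to 1 (assumes max > min)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def one_hot_column(values):
    """One column per category, in sorted order."""
    categories = sorted(set(values))
    return [[1.0 if v == c else 0.0 for c in categories] for v in values]


# Made-up mixed data: one numeric and one categorical variable
temperature = [10.0, 20.0, 30.0]
colour = ["red", "green", "red"]

scaled = min_max_scale(temperature)
encoded = one_hot_column(colour)
features = [[s] + e for s, e in zip(scaled, encoded)]

print(features)
```

Every value in the combined rows is now a real number, which is what the network needs as input.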

Thank you very much, sir, for sharing so much information. I am looking for a greenhouse dataset for a tomato crop with climate variables like Temperature, Humidity, Soil Moisture, pH Scale, CO2, Light Intensity. Can you provide me with this type of dataset?

I answer this question here:

https://machinelearningmastery.com/faq/single-faq/where-can-i-get-a-dataset-on-___

Hey,

Can we use this method for an array of strings, such as the following?

    array([['', u'multios', u'dsdsds', u'DW_SAL_CANNOT_INITIALIZE', u'av_sw'],
           ['', u'android-l', u'dsssd', u'SYS_SW', u'syssw'],
           ['', u'gnu_linux-k4.9', u'dssss', u'USB_IO_Error', u'syssw'],
           ...,
           ['', u'android-p', u'fddfdfdf', u'mm_nvmedia_video_decoder_create',
            u'multimedia'],
           ['', u'android-o', u'sasa', u'mm_log_tag',
            u'multimedia'],
           [u'rel-32', u'android-p', u'dsdsd',
            u'mm_parsevp9_incorrect_sync_code_for_vp9', u'multimedia']],
          dtype=object)

I would recommend using a bag of words model when starting with text:

https://machinelearningmastery.com/gentle-introduction-bag-words-model/
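
As a small illustration of the bag-of-words idea, here is a plain-Python sketch that counts how often each vocabulary word occurs in each document. The two documents are made up, and bag_of_words is a hypothetical helper that splits on whitespace only.

```python
from collections import Counter


def bag_of_words(documents):
    """Return the sorted vocabulary and one count vector per document."""
    tokenized = [doc.lower().split() for doc in documents]
    vocab = sorted(set(word for doc in tokenized for word in doc))
    vectors = []
    for doc in tokenized:
        counts = Counter(doc)
        vectors.append([counts[word] for word in vocab])
    return vocab, vectors


docs = ["usb io error", "video decoder error error"]  # made-up text
vocab, vectors = bag_of_words(docs)

print(vocab)
print(vectors)
```

Each document becomes a fixed-length numeric vector regardless of how many words it contains.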

Hi Mr Jason,

What is the name of the model you use for training?

Sorry, I am a newbie.

Thanks

The model in this tutorial is a neural network or a multilayer neural network, often called an MLP or a fully connected network.

Dear Mr Jason,

I ran your example code and noticed that the softmax in your tutorial gives a different kind of result from the softmax used in a CNN model.

I would like to confirm with you whether this is the behavior of a CNN.

Example of my code:

    model = Sequential()
    model.add(Conv1D(64, 3, activation='relu', input_shape=(8,1)))
    model.add(Conv1D(64, 3, activation='relu'))
    model.add(Dropout(0.5))
    model.add(MaxPooling1D())
    model.add(Flatten())
    model.add(Dense(100, activation='relu'))
    model.add(Dense(4, activation='softmax'))
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    X_train, X_test, Y_train, Y_test = train_test_split(X, dummy_y, test_size=0.33, random_state=seed)
    model.fit(X_train, Y_train)
    predictions = model.predict(X_test)
    print(predictions)

>> Output

    [[0.5863281 0.11777738 0.16206734 0.13382716]
     [0.5863281 0.11777738 0.16206734 0.13382716]
     [0.39733416 0.19241211 0.2283105 0.1819432 ]
     [0.54646176 0.12707633 0.20596607 0.12049587]

I think the softmax in the CNN model returns a percentage for each class to be predicted.

And your model returns a prediction in the same form as dummy_y.

Thank you

The softmax is a standard implementation.

Perhaps I don’t follow, what is the problem you have exactly?

I found the solution in another article of yours.

Thanks

“First, the raw 17-element prediction vector is printed. If we wish, we could pretty-print this vector and summarize the predicted confidence that the photo would be assigned each label.

Next, the prediction is rounded and the vector indexes that contain a 1 value are reverse-mapped to their tag string values. The predicted tags are then printed. We can see that the model has correctly predicted the known tags for the provided photo.

It might be interesting to repeat this test with an entirely new photo, such as a photo from the test dataset, after you have already manually suggested tags.

[9.0940112e-01 3.6541668e-03 1.5959743e-02 6.8241461e-05 8.5694155e-05
9.9828100e-01 7.4096164e-08 5.5998818e-05 3.6668104e-01 1.2538023e-01
4.6371704e-04 3.7660234e-04 9.9999273e-01 1.9014676e-01 5.6060363e-04
1.4613305e-03 9.5227945e-01]

['agriculture', 'clear', 'primary', 'water']”

https://machinelearningmastery.com/how-to-develop-a-convolutional-neural-network-to-classify-satellite-photos-of-the-amazon-rainforest/

Happy to hear that.

Hi Jason

I’m doing an image localization and classification task on Keras-FRCNN, on Theano Backend. I’m getting the following error:

    Traceback (most recent call last):
      File "train_frcnn.py", line 208, in
        model_classifier.compile(optimizer=optimizer_classifier, loss=[losses.class_loss_cls, losses.class_loss_regr(len(classes_count)-1)], metrics={'dense_class_{}'.format(len(classes_count)): 'accuracy'})
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\engine\training.py", line 229, in compile
        self.total_loss = self._prepare_total_loss(masks)
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\engine\training.py", line 692, in _prepare_total_loss
        y_true, y_pred, sample_weight=sample_weight)
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\losses.py", line 71, in __call__
        losses = self.call(y_true, y_pred)
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\losses.py", line 132, in call
        return self.fn(y_true, y_pred, **self._fn_kwargs)
      File "F:\ML\keras-frcnn-moded\keras_frcnn\losses.py", line 55, in class_loss_cls
        return lambda_cls_class*K.mean(categorical_crossentropy(y_true[0, :, :], y_pred[0, :, :]))
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\losses.py", line 691, in categorical_crossentropy
        return K.categorical_crossentropy(y_true, y_pred, from_logits=from_logits)
      File "C:\Users\singh\Anaconda3\lib\site-packages\keras\backend\theano_backend.py", line 1831, in categorical_crossentropy
        output_dimensions = list(range(len(int_shape(output))))
    TypeError: object of type 'NoneType' has no len()

When I use Tensorflow backend, then I don’t face this error. So, I think it’s something related to Keras and Theano. Using tensorflow as keras backend serves useful but it’s quite slow for the model (takes days for training).

Any clue/fix for the issue, will be very helpful…..

Perhaps post your code and error to stackoverflow?

Hello Jason,

It’s a very nice tutorial to learn. I implemented the same model but on my work station I achieved a score of 88.67% only. After modifying the number of hidden layers, I achieved an accuracy of 93.04%. But I am not able to achieve the score of 95% or above. Any particular reason behind it ?

Interesting Aakash.

I used the Theano backend. Are you using the same?

Are all your libraries up to date? (Keras, Theano, NumPy, etc…)

Yes Jason . Backend is theano and all libraries are up to date.

Interesting. Perhaps seeding the random number generator is not having the desired effect for reproducibility. Perhaps it has different effects on different platforms.

Perhaps re-run the above code example a few times and see the spread of accuracy scores you achieve?

Because I use LabelEncoder() to encode the labels, I could not call encoder.inverse_transform(predictions).

The expected output must follow this format:

    [[1 0 0 0]
     [1 0 0 0]
     [1 0 0 0]

But the current output is:

    [[0.5863281 0.11777738 0.16206734 0.13382716]
     [0.5863281 0.11777738 0.16206734 0.13382716]
     [0.39733416 0.19241211 0.2283105 0.1819432 ]

So I cannot call encoder.inverse_transform(predictions).

Do you have any suggestions?

Thank you

First, you must reverse the prediction to an integer via argmax, then integer to category via the inverse_transform.
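
A plain-Python sketch of those two steps is below. The first two probability rows are copied from the question above and the third is made up so that a different class wins; the class names are hypothetical stand-ins for the strings the LabelEncoder was fitted on.

```python
def argmax(row):
    """Index of the largest value in a row of probabilities."""
    return max(range(len(row)), key=lambda i: row[i])


predictions = [
    [0.5863281, 0.11777738, 0.16206734, 0.13382716],
    [0.39733416, 0.19241211, 0.2283105, 0.1819432],
    [0.1, 0.2, 0.6, 0.1],  # made-up row for illustration
]

# Hypothetical class names, listed in the encoder's integer order
classes = ["class_a", "class_b", "class_c", "class_d"]

integers = [argmax(row) for row in predictions]   # probabilities -> integer
labels = [classes[i] for i in integers]           # integer -> category

print(integers)
print(labels)
```

With NumPy and scikit-learn, the same two steps would typically be written as encoder.inverse_transform(numpy.argmax(predictions, axis=1)).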

lols, exactly !!!!!

Hello Jason,

In chapter 10 of the book “Deep Learning With Python”, there is a fragment of code:

    estimator = KerasClassifier(build_fn=baseline_model, nb_epoch=200, batch_size=5, verbose=0)
    kfold = KFold(n=len(X), n_folds=10, shuffle=True, random_state=seed)
    results = cross_val_score(estimator, X, dummy_y, cv=kfold)
    print("Accuracy: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

How to save this model and weights to file, then how to load these file to predict a new input data?

Many thanks!

Really good question.

Keras does provide functions to save network weights to HDF5 and network structure to JSON or YAML. The problem is, once you wrap the network in a scikit-learn classifier, how do you access the model and save it? Or can you save the whole wrapped model?

Perhaps a simple but inefficient place to start would be to try and simply pickle the whole classifier?

https://docs.python.org/2/library/pickle.html

I tried doing that. It works for a normal sklearn classifier, but apparently not for a Keras Classifier:

    import pickle

    with open("name.p","wb") as fw:
        pickle.dump(clf,fw)

    with open(name+".p","rb") as fr:
        clf_saved = pickle.load(fr)

    print(clf_saved)

    prob_pred=clf_saved.predict_proba(X_test)[:,1]

This gives:

    theano.gof.fg.MissingInputError: An input of the graph, used to compute DimShuffle{x,x}(keras_learning_phase), was not provided and not given a value.Use the Theano flag exception_verbosity='high',for more information on this error.

    Backtrace when the variable is created:
      File "nn_systematics_I_evaluation_of_optimised_classifiers.py", line 6, in
        import classifier_eval_simplified
      File "../../../../classifier_eval_simplified.py", line 26, in
        from keras.utils import np_utils
      File "/usr/local/lib/python2.7/site-packages/keras/__init__.py", line 2, in
        from . import backend
      File "/usr/local/lib/python2.7/site-packages/keras/backend/__init__.py", line 56, in
        from .theano_backend import *
      File "/usr/local/lib/python2.7/site-packages/keras/backend/theano_backend.py", line 17, in
        _LEARNING_PHASE = T.scalar(dtype='uint8', name='keras_learning_phase') # 0 = test, 1 = train

I provide examples of saving and loading Keras models here:

https://machinelearningmastery.com/save-load-keras-deep-learning-models/

Sorry, I don’t have any examples of saving/loading the wrapped Keras classifier. Perhaps the internal model can be serialized and later deserialized and put back inside the wrapper.

Dear Dr. Jason,

Thanks very much for this great tutorial. I got extra benefit from it, but I need to calculate precision, recall and a confusion matrix for such multi-class classification. I tried to do it, but each time I got a different problem. Could you please explain to me how to do this?

Hi Sally, you could perhaps use the tools in scikit-learn to summarize the performance of your model.

For example, you could use sklearn.metrics.confusion_matrix() to calculate the confusion matrix for predictions, etc.

See the metrics package:

http://scikit-learn.org/stable/modules/classes.html#module-sklearn.metrics

Could you tell me how to use that in the code you have provided above? I am very new to Keras.

Thanks in Advance

Please, how can we implement Python code that uses recall and precision to evaluate a prediction model?

You can use the sklearn library to calculate these scores:

http://scikit-learn.org/stable/modules/classes.html#sklearn-metrics-metrics
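
To show what those scores measure, here is a plain-Python sketch of a confusion matrix with per-class precision and recall. It is an illustration of the definitions rather than the scikit-learn code, the integer labels are made up, and both helper functions are written for this example. In practice the predicted integers would come from taking the argmax of the model's outputs.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix


def precision_recall(matrix, cls):
    """Precision and recall for one class, read off the matrix."""
    tp = matrix[cls][cls]
    predicted = sum(row[cls] for row in matrix)  # column total
    actual = sum(matrix[cls])                    # row total
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


# Made-up integer class labels for six samples
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]

cm = confusion_matrix(y_true, y_pred, 3)
for row in cm:
    print(row)
for cls in range(3):
    print(cls, precision_recall(cm, cls))
```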

Hi jason.. your tutorials are a great help.. i am a student working on deep learning for detection of diabetic retinopathy and its stages.. using the code u gave for multi class, for my dataset.. i am getting a very low baseline.. 23%..can help me on improving the accuracy.. also how to classify images using deep learning?

Thanks!

Yes, this will give you ideas:

https://machinelearningmastery.com/start-here/#better

Hi jason, Reading the tutorial and the same example in your book, you still don’t tell us how can use the model to make predictions, you have only show us how to train and evaluate it but I would like to see you using this model to make predictions on at least one example of iris flowers data no matters if is dummy data.

I would like to see how can I load my own instance of an iris-flower and use the above model to predict what kind is the flower?

could you do that for us?

Hi Fabian, no problem.

In the tutorial above, we are using the scikit-learn wrapper. That means we can use the standard model.predict() function to make predictions from scikit-learn.

For example, below is an example adapted from the above where we split the dataset, train on 67% and make predictions on 33%. Remember that we have encoded the output class value as integers, so the predictions are integers. We can then use encoder.inverse_transform() to turn the predicted integers back into strings.

Running this example prints:

I hope that is clear and useful. Let me know if you have any more questions.

Hi Jason,

I was facing error while converting string to float and so I had to make a minor correction to my code

X = dataset[1:,0:4].astype(float)

Y = dataset[1:,4]

However, I am still unable to run since I am getting the following error for line

“—-> 1 results = cross_val_score(estimator, X, dummy_y, cv=kfold)”

……………….

“Exception: Error when checking model target: expected dense_4 to have shape (None, 3) but got array with shape (135L, 22L)”

I would appreciate your help. Thanks.

I found the issue. It was with the indexes.

I had to take [1:,1:5] for X and [1:,5] for Y.

I am using Jupyter notebook to run my code.

The index range seems to be different in my case.

I’m glad you worked it out Devendra.

For some reason, when I run this example I get 0 as prediction value for all the samples. What could be happening?

I’ve the same problem on prediction with other code I’m executing, and decided to run yours to check if i could be doing something wrong?

I’m lost now, this is very strange.

Thanks in advance!

Hello again,

This is happening with Keras 2.0, with Keras 1 works fine.

Thanks,

Cristina

Thanks for the note.

Hi all,

I faced the same problem it works well with keras 1 but gives all 0 with keras 2 !

Thanks for this great tuto !

Fawzi

Does this happen every time you train the model?

Hello Cristina,

I have faced the same problem with Keras 2. I then changed Keras to 1.2 and it worked well. Thank you for the information.

Very strange.

Maybe check that your data file is correct, that you have all of the code and that your environment is installed and is working correctly.

Jason, I’m getting the same prediction (all zeroes) with Keras 2. If we could be able to nail the cause, it would be great. After all, as of now it’s more than likely that people will try to run your great examples with keras 2.

Plus, a couple of questions:

1. why did you use a sigmoid for the output layer instead of a softmax?

2. why did you provide initialization even for the last layer?

Thanks a lot.

The example does use softmax, perhaps check that you have copied all of the code from the post?

I’m having same issue. How did u resolve it? could you please help me

Has anyone resolved the issue with the output being all zeros?

Perhaps try re-train the model to see if the issue occurs again?

I changed the seed=7 to seed= 0, which should make each random number different, and the result will no longer be all 0.

Issue is still present! If I use keras >2.0, the model simply predicts the same class for every training example in the dataset.

– Have tried varying loss functions

– changing activation function from sigmoid to softmax in the output layer

– using Theano/tensorflow backends

– Changing the number of hidden neurons in the hidden layer

And for all these fixes the error persists. Only thing that solves the issue, and makes me get similar results to the ones you’re getting in your tutorial, is downgrading to Keras <2.0 (In my case I downgraded to Keras 1.2.2.)

I can confirm the example works as stated with Keras 2.2.4, TensorFlow 1.14 and Python 3.6.

I believe there is an issue with your development environment. This may help:

https://machinelearningmastery.com/setup-python-environment-machine-learning-deep-learning-anaconda/

Could you share with me the entire code you use? I don’t think it’s environment related. I have tried with a fresh conda environment, and am able to reproduce the issue on 2 separate machines.

The entire code listing is provided in the post, I updated it to provide it all together.

Managed to find the problem!!!

In the code above, as well as in your book (which I am following), we are using code that I think is written for Keras 1. The code carries over to Keras 2, apart from some warnings, but predicts poorly. The reason for this is the nb_epoch parameter in the KerasClassifier class. When you leave that as is, the model predicts the same class for every training example. When you change it to “epochs” in Keras 2, everything is fine. I don’t know if this is intended behavior or a bug.

No.

The example in the post uses “epochs” for Keras 2.

So does the most recent version of the book.

I think you are not referring to the above tutorial and are in fact referring to a very old version of the book. You can contact me here to get the most recent version:

https://machinelearningmastery.com/contact/

Hi Jason,

Thanks for your awesome tutorials. I had a curious question:

As we are using KerasClassifier or KerasRegressor of Scikit-Learn wrapper, then how to save them as a file after fitting ?

For example, I am predicting regression or multiclass classification. I have to use KerasRegressor or KerasClassifier then. After fitting a large volume of data, I want to save the trained neural network model to use it for prediction purpose only. How to save them and how to restore them from saved files ? Your answer will help me a lot.

Great question, I’m not sure you can easily do this. You might be better served fitting the Keras model directly then using the Keras API to save the model:

https://machinelearningmastery.com/save-load-keras-deep-learning-models/

Hi Jason, thank you very much for those nice explanations.

I’m having some problems and I’m trying very hard to get them solved, but it won’t work.

If I simply copy-paste your code from your comment on 31 July 2016, I keep getting the following error:

    Traceback (most recent call last):
      File "/Users/reinier/PycharmProjects/Test-IRIS/TESTIRIS.py", line 43, in
        estimator.fit(X_train, Y_train)
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/wrappers/scikit_learn.py", line 206, in fit
        return super(KerasClassifier, self).fit(x, y, **kwargs)
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/wrappers/scikit_learn.py", line 149, in fit
        history = self.model.fit(x, y, **fit_args)
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/models.py", line 856, in fit
        initial_epoch=initial_epoch)
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/engine/training.py", line 1429, in fit
        batch_size=batch_size)
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/engine/training.py", line 1309, in _standardize_user_data
        exception_prefix='target')
      File "/Users/reinier/Library/Python/3.6/lib/python/site-packages/keras/engine/training.py", line 139, in _standardize_input_data
        str(array.shape))
    ValueError: Error when checking target: expected dense_2 to have shape (None, 3) but got array with shape (67, 40)

It seems like something is wrong with the fit function. Is this caused by a new Keras version? Thank you very much in advance,

Reinier

Sorry, it is not clear what is going on.

Does the example in the blog post work as expected?

Hello Jason,

Thank you for such a wonderful and detailed explanation. Could you please guide me on how to plot graphs for clustering with this dataset and code (both for training and predictions)?

Thanks.

Sorry, I do not have examples of clustering.

Hi Jason,

Thank you so much for such an elegant and detailed explanation. I wanted to learn how to plot graphs for the same. I went through the comments and you said we can’t plot accuracy, but I wish to plot the input data and the predictions as clusters (the way K-means results are shown as a scatter plot). Could you please guide me on this?

Thank you.

Sorry I do not have any examples for clustering.

Whoa, it works again…

It’s a nice result.

By the way, what if we want to use our own sentences instead of the test data?

This is called NLP, learn more here:

https://machinelearningmastery.com/start-here/#nlp

I think the line

model = KerasClassifier(build_fn=baseline_model, nb_epoch=200, batch_size=5, verbose=0)

must be

model = KerasClassifier(build_fn=baseline_model, epochs=200, batch_size=5, verbose=0)

for newer Keras versions.

Correct.

Hello Sir,

I used the following code with the Keras backend, but when using categorical_crossentropy all the rows of a column have the same prediction, whereas when I use binary_crossentropy the predictions are correct. Can you please explain why?

My predictions are also in one hot encoded form and not like 2,1,0,2. Kindly help me out with this.

Thank you

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

train=pd.read_csv('iris_train.csv')

test=pd.read_csv('iris_test.csv')

xtrain=train.iloc[:,0:4].values

ytrain=train.iloc[:,4].values

xtest=test.iloc[:,0:4].values

ytest=test.iloc[:,4].values

import keras

from keras.models import Sequential

from keras.layers import Dense

from keras.utils import to_categorical

from sklearn.preprocessing import LabelEncoder,OneHotEncoder

ytrain2=ytrain.reshape(len(ytrain),1)

encoder1=LabelEncoder()

ytrain2[:,0]=encoder1.fit_transform(ytrain2[:,0])

encoder=OneHotEncoder(categorical_features=[0])

ytrain2=encoder.fit_transform(ytrain2).toarray()

classifier=Sequential()

classifier.add(Dense(output_dim=4,init='uniform',activation='relu',input_dim=4))

classifier.add(Dense(output_dim=4,init='uniform',activation='relu'))

classifier.add(Dense(output_dim=3,init='uniform',activation='sigmoid'))

classifier.compile(optimizer='adam',loss='categorical_crossentropy',metrics=['accuracy'])

classifier.fit(xtrain,ytrain2,batch_size=5,epochs=300)

y_pred=classifier.predict(xtest)

Sorry, I do not have the capacity to debug your code. Perhaps post to stackoverflow.
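
On the second point in the question above: predict() on a plain Keras model returns one row of per-class scores for each sample, so labels like 2, 1, 0, 2 have to be recovered by taking the index of the largest score in each row. A minimal sketch in plain Python, using made-up scores and a hypothetical helper name (to_class_indices):

```python
def to_class_indices(score_rows):
    """Return the index of the largest score in each row."""
    return [max(range(len(row)), key=row.__getitem__) for row in score_rows]

# Made-up network outputs for four samples and three classes (illustrative only).
scores = [
    [0.05, 0.15, 0.80],
    [0.10, 0.70, 0.20],
    [0.90, 0.06, 0.04],
    [0.20, 0.30, 0.50],
]

labels = to_class_indices(scores)
print(labels)
```

With NumPy the same step is numpy.argmax(y_pred, axis=1), and the integers can be mapped back to the original class names with the LabelEncoder's inverse_transform().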

Hi Jason, this code gives the accuracy of 98%. But when i add k-fold cross validation code, accuracy decreases to 75%.

Perhaps try tuning the model further?

Hello Jason,

This code does not work for me. I am using the exact same code but I get an error with estimator.fit(). The error looks like this:

—————————————————————————

TypeError Traceback (most recent call last)

in

34 estimator = KerasClassifier(build_fn=baseline_model, nb_epoch=200, batch_size=5, verbose=0)

35 X_train, X_test, Y_train, Y_test = train_test_split(X, dummy_y, test_size=0.33, random_state=seed)

—> 36 estimator.fit(X_train, Y_train)

37 predictions = estimator.predict(X_test)

38 print(predictions)

I can confirm that the code works with the latest version of scikit-learn, tensorflow and keras.

Perhaps some of these tips will help:

https://machinelearningmastery.com/faq/single-faq/why-does-the-code-in-the-tutorial-not-work-for-me

Thanks Jason,

I have resolved the issue. I don’t know why, but the problem comes from the model.add() function.

model.add(Dense(3, init='normal', activation='sigmoid'))

If I remove the argument init='normal' from model.add() I get the correct result, but if I add it I get an error from the estimator.fit() function. I don’t know what the reason may be, but simply removing init='normal' from model.add() resolves the error.

Thanks.

Nice work!

Jason, boss you are too good! You have really helped me out especially in implementation of Deep learning part. I was rattled and lost and was desperately looking for some technology and came across your blogs. thanks a lot.

I’m glad I have helped in some small way Prash.

It is a great tutorial Dr. Jason. Very clear and crisp. I am a beginner in Keras. I have a small doubt.

Is it necessary to use scikit-learn? Can we solve the same problem using basic Keras?

You can use basic Keras, but scikit-learn makes Keras better. They work very well together.

Thank You Jason for your prompt reply

You’re welcome Harsha.

Hi Jason, nice tutorial!

I have a question. You mentioned that scikit-learn makes Keras better. Why?

Thanks!

Hi jokla, great question.

The reason is that we can access all of sklearn’s features using the Keras Wrapper classes: tools like grid searching, cross validation, ensembles, and more.

Hi Jason,

I’m a CS student currently studying sentiment analysis and was wondering how to use Keras for multi-class classification of text. Ideally I would like the functionality of the TfidfVectorizer from sklearn, so that a one hot vector representation against a given vocabulary is used within a neural net to determine the final classification.

I am having trouble understanding the initial steps in transforming and feeding word data into vector representations. Can you help me out with some basic code examples of this first step? Say I have a text file with 5000 words (which also include emoji) to use as the vocabulary. How can I feed in a training file in CSV format (text,sentiment), convert each text into a one hot representation, and then feed it into the neural net for a final output vector of size e.g. 1×7 to denote the various class labels?

I have tried to find help online and most of the solutions use helper methods to load in text data such as imdb, while others use word2vec, which isn’t what I need.

Hope you can help, I would really appreciate it!

Cheers,

Mo

Hi Jason,

Thanks for the great tutorial!

Just one question regarding the output variable encoding. You mentioned that it is a good practice to convert the output variable to a one hot encoding matrix. Is this a necessary step? If the output variable consists of discrete integers, say 1, 2, 3, do we still need to use to_categorical() to perform one hot encoding?

I checked some example code in the Keras GitHub repository and it seems this is required. Can you please shed some light on it?

Thanks in advance.

Hi Qichang, great question.

A one hot encoding is not required, you can train the network to predict an integer, it is just a MUCH harder problem.

By using a one hot encoding, you greatly simplify the prediction problem making it easier to train for and achieve better performance.

Try it and compare the results.
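
To make the comparison concrete, here is a small sketch of what a one hot encoding does to integer labels such as 1, 2, 3. It is plain Python with a hypothetical helper name (one_hot), not the Keras to_categorical() function, and it maps the labels to column positions 0, 1, 2 first so that exactly one column per class is produced:

```python
def one_hot(labels):
    """Map each distinct label to a column and return (classes, encoded rows)."""
    classes = sorted(set(labels))
    column = {label: i for i, label in enumerate(classes)}
    rows = []
    for label in labels:
        row = [0] * len(classes)
        row[column[label]] = 1  # switch on the column for this label
        rows.append(row)
    return classes, rows

classes, encoded = one_hot([1, 2, 3, 2])
for label, row in zip([1, 2, 3, 2], encoded):
    print(label, row)
```

Each row has a single 1, so the network is asked to switch on one of three outputs rather than to produce the number 1, 2 or 3 itself.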

Hello,

I have followed your tutorial and I get an error in the following line:

results = cross_val_score(estimator, X, dummy_y, cv=kfold)

Traceback (most recent call last):

File “k.py”, line 84, in

results = cross_val_score(estimator, X, dummy_y, cv=kfold)

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/cross_validation.py”, line 1433, in cross_val_score

for train, test in cv)

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/externals/joblib/parallel.py”, line 800, in __call__

while self.dispatch_one_batch(iterator):

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/externals/joblib/parallel.py”, line 658, in dispatch_one_batch

self._dispatch(tasks)

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/externals/joblib/parallel.py”, line 566, in _dispatch

job = ImmediateComputeBatch(batch)

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/externals/joblib/parallel.py”, line 180, in __init__

self.results = batch()

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/externals/joblib/parallel.py”, line 72, in __call__

return [func(*args, **kwargs) for func, args, kwargs in self.items]

File “/Library/Python/2.7/site-packages/scikit_learn-0.17.1-py2.7-macosx-10.9-intel.egg/sklearn/cross_validation.py”, line 1531, in _fit_and_score

estimator.fit(X_train, y_train, **fit_params)

File “/Library/Python/2.7/site-packages/keras/wrappers/scikit_learn.py”, line 135, in fit

**self.filter_sk_params(self.build_fn.__call__))

TypeError: __call__() takes at least 2 arguments (1 given)

Have you seen this error before? Do you have any idea how to fix it?

I have not seen this before Pedro.

Perhaps it is something simple like a copy-paste error from the tutorial?

Are you able to double check the code matches the tutorial exactly?

I have exactly the same problem.

I double checked the code and have updated all the versions of Keras etc.

🙁

Hi Victor, are you able to share your version of Keras, scikit-learn, TensorFlow/Theano?

Hi Jason,

Thanks for the great tutorial.

But I have a question: why did you use the sigmoid activation function together with the categorical_crossentropy loss function?

Usually, for multiclass classification problems, I have found that implementations use the softmax activation function with categorical_crossentropy.

In addition, does one-hot encoding of the output make it a binary classification instead of a multiclass classification? Could you please explain?

Yes, you could use a softmax instead of sigmoid. Try it and see.

The one hot encoding creates 3 binary output features. This too would be required with the softmax activation function.
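
As a rough illustration of the difference between the two activations on a three-node output layer, the sketch below applies both to the same made-up raw values in plain Python. Sigmoid scores each output independently, while softmax normalizes the three outputs so they sum to 1:

```python
import math

def sigmoid(values):
    """Squash each value independently into the range (0, 1)."""
    return [1.0 / (1.0 + math.exp(-v)) for v in values]

def softmax(values):
    """Turn values into probabilities that sum to 1."""
    largest = max(values)
    exps = [math.exp(v - largest) for v in values]  # shift by max for stability
    total = sum(exps)
    return [e / total for e in exps]

raw = [2.0, 1.0, 0.1]  # made-up outputs of the last layer before activation
print("sigmoid:", [round(p, 3) for p in sigmoid(raw)], "sum =", round(sum(sigmoid(raw)), 3))
print("softmax:", [round(p, 3) for p in softmax(raw)], "sum =", round(sum(softmax(raw)), 3))
```

Both activations rank the classes in the same order here; only the softmax outputs can be read directly as a probability distribution over the three species.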

Jason,

Great site, great resource. Is it possible to see the old example with the one hot encoding output? I’m interested in creating a network with multiple binary outputs and have been searching around for an example.

Many thanks.

I have many examples on the blog of categorical outputs from LSTMs, try the search.

Thank you.

For text classification, or basically to assign a category based on the text, how would the baseline_model change?

I’m trying to have an inner layer of 24 nodes and an output of 17 categories, but the input_dim=4 specified in the tutorial wouldn’t be right because the text length will change depending on the number of words.

I’m a little confused. Your help would be much appreciated.

model.add(Dense(24, init='normal', activation='relu'))

def baseline_model():

    # create model

    model = Sequential()

    model.add(Dense(24, init='normal', activation='relu'))

    model.add(Dense(17, init='normal', activation='sigmoid'))

    # Compile model

    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

    return model

You will need to use padding on the input vectors of encoded words.

See this post for an example of working with text:

https://machinelearningmastery.com/predict-sentiment-movie-reviews-using-deep-learning/
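
As a minimal sketch of what padding means here, assuming each text has already been encoded as a list of integers: every sequence is forced to the same length so that the input dimension is fixed. The helper name pad_sequence and the choice to pad and truncate on the left are assumptions for illustration, not the Keras implementation:

```python
def pad_sequence(seq, length, pad_value=0):
    """Left-pad or left-truncate a list of integers to exactly `length` items."""
    if len(seq) >= length:
        return seq[len(seq) - length:]  # keep the last `length` items
    return [pad_value] * (length - len(seq)) + seq

# Made-up encoded texts of different lengths.
encoded_texts = [[4, 7], [1, 2, 3, 4, 5, 6], [9]]
padded = [pad_sequence(seq, 5) for seq in encoded_texts]
for row in padded:
    print(row)
```

After this step every row has 5 values, so the first layer can be defined with a fixed input size regardless of how many words each text contained.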

Hi Jason,

Thank you for your tutorial. I was really interested in Deep Learning and was looking for a place to start, this helped a lot.

But while I was running the code, I came across two errors. The first was while loading the data through pandas: just like your code I set “header=None”, but in the next line, when we convert the values to float, I got the following error message.

“ValueError: could not convert string to float: 'Petal.Length'”.

This problem went away after I removed the header=None argument.

The second one came at the end, during the k-fold validation. During the one hot encoding it’s binning the values into 22 categories and not 3, which is causing this error:

“Exception: Error when checking model target: expected dense_2 to have shape (None, 3) but got array with shape (135, 22)”

I haven’t been able to get around this. Any suggestion would be appreciated.

That is quite strange Vishnu, I think perhaps you have the wrong dataset.

You can download the CSV here:

http://archive.ics.uci.edu/ml/machine-learning-databases/iris/iris.data

Hello, I tried to use the exact same code for another dataset, the only difference being that the dataset had 78 columns and 100000 rows. I had to predict the last column, taking the remaining 77 columns as features. I must also say that the last column has 23 different classes (types, basically) and the 23 classes are all integers, not strings like you have used.

model = Sequential()

model.add(Dense(77, input_dim=77, init='normal', activation='relu'))

model.add(Dense(10, init='normal', activation='relu'))

model.add(Dense(23, init='normal', activation='sigmoid'))

I also used nb_epoch=20 and batch_size=1000.

In the estimator I also changed verbose to 1, and now the accuracy is a dismal 0.52% at the end. While running, I also saw strange output in the verbose log, such as:

93807/93807 [==============================] – 0s – loss: nan – acc: 0.0052

Why is the loss always nan?

Can you please tell me how to modify the code to make it run correctly for my dataset? (Everything else in the code is unchanged.)

Hi Homagni,

That is a lot of classes for 100K records. If you can reduce that by splitting up the problem, that might be good.

Your batch size is probably too big and your number of epochs is way too small. Dramatically increase the number of epochs by 2-3 orders of magnitude.

Start there and let me know how you go.

Hi Jason,

I’ve edited the first layer’s activation to ‘softplus’ instead of ‘relu’ and the number of neurons to 8 instead of 4.

Then I edited the second layer’s activation to ‘softmax’ instead of sigmoid and I got 97.33% (4.42%) performance. Do you have an explanation for this improvement in performance?

Well done AbuZekry.

Neural nets are infinitely configurable.

Hello Jason,

Is there an error in your code? You said the network has 4 input neurons, 4 hidden neurons and 3 output neurons. But in the code you haven’t added the hidden neurons. You just specified the input and output neurons. Will it affect the output in any way?

Hi Panand,

The network structure is as follows:

Line 5 of the code in section 6 adds both the input and hidden layer:

The input_dim argument defines the shape of the input.
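
One way to see that a hidden layer really is present is to count the weights. The sketch below assumes the 4-input, 4-hidden-node, 3-output network described in the question, with fully connected layers and one bias per node; the helper name dense_params is made up for illustration:

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_units + n_units

hidden = dense_params(n_inputs=4, n_units=4)  # first Dense call: 4 inputs into 4 hidden nodes
output = dense_params(n_inputs=4, n_units=3)  # second Dense call: 4 hidden nodes into 3 outputs

print("hidden layer parameters:", hidden)
print("output layer parameters:", output)
print("total:", hidden + output)
```

The input layer contributes no parameters of its own, which is why it does not need a separate line in the model definition.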

Hi Jason,

I have a set of categorical features and continuous features, I have this model:

model = Sequential()

model.add(Dense(117, input_dim=117, init='normal', activation='relu'))

model.add(Dense(10, activation='softmax'))

I am getting a dismal result: ('Test accuracy:', 0.43541752685249119)

Details:

Total records 45k, 10 classes to predict

batch_size=1000, nb_epoch=25

Are there any improvements I could make? I would also like to add an LSTM layer. How do I go about doing that? I am getting errors if I add:

model.add(Dense(117, input_dim=117, init='normal', activation='relu'))

model.add(LSTM(117,dropout_W=0.2, dropout_U=0.2, return_sequences=True))

model.add(Dense(10, activation='softmax'))

Error:

Exception: Input 0 is incompatible with layer lstm_6: expected ndim=3, found ndim=2

Hi JD,

Here is a long list of ideas to improve the skill of your deep learning model:

https://machinelearningmastery.com/improve-deep-learning-performance/

Not sure about the exception, you may need to double check the input dimensions of your data and confirm that your model definition matches.
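
For context on the exception text: it says the LSTM layer expected 3-dimensional input and received 2-dimensional input. Keras recurrent layers take input shaped as [samples, time steps, features], whereas a table of rows and columns is [samples, features]. The sketch below only illustrates the difference in shape with nested lists; the helper names are hypothetical and treating each row as a single time step is an assumption, not a recommendation for this dataset:

```python
def ndim(data):
    """Nesting depth of a regular nested list (a stand-in for array.ndim)."""
    depth = 0
    while isinstance(data, list):
        depth += 1
        if not data:
            break
        data = data[0]
    return depth

def add_timestep_axis(rows):
    """Turn [samples][features] into [samples][1 time step][features]."""
    return [[row] for row in rows]

batch = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]  # made-up data: 2 samples, 3 features
print(ndim(batch))
print(ndim(add_timestep_axis(batch)))
```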

Hi Jason,

I have a set of categorical features (events) from a real system, and I am trying to build a deep learning model for event prediction.

The events do not appear equally often in the training set, and one of them is relatively rare compared to the others.

event count in training set

1 22000

2 6000

3 13000

4 12000

5 26000

Should I continue with this training set, or should I restructure it?

What is your recommendation?

Hi YA, I would try as many different “views” on your problem as you can think of and see which best exposes the problem to the learning algorithms (gets the best performance when everything else is held constant).
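
One such view that is sometimes tried with unevenly distributed classes is to weight the rarer classes more heavily during training. This is an assumption about a possible option, not something the reply above prescribes. The sketch computes inverse-frequency weights from the event counts quoted in the question, using a hypothetical helper name (balanced_class_weights):

```python
def balanced_class_weights(counts):
    """Weight each class by total / (n_classes * count), so rare classes weigh more."""
    total = sum(counts.values())
    n_classes = len(counts)
    return {label: total / (n_classes * count) for label, count in counts.items()}

# Event counts quoted in the question above.
counts = {1: 22000, 2: 6000, 3: 13000, 4: 12000, 5: 26000}
weights = balanced_class_weights(counts)
for label in sorted(weights):
    print(label, round(weights[label], 3))
```

The fit() function in Keras accepts a class_weight dictionary keyed by integer class index, so weights like these could be compared against the unweighted run, with the events mapped to 0-based indices first.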

Hello Jason,

Great work on your website and tutorials! I was wondering if you could show a multi-hot encoding; I think it is called multi-label classification.

Now you have (only one option on and the rest off)

[1,0,0]

[0,1,0]

[0,0,1]

And do like (each classification has the option on or off)

[0,0,0]

[0,1,1]

[1,0,1]

[1,1,0]

[1,1,1]

etc..

This would really help for me

Thanks!!

Extra side note, with k-Fold Cross Validation. I got it working with binary_crossentropy with quite bad results. Therefore I wanted to optimize the model and add cross validation which unfortunately didn’t work.

Hi, Jason: Regarding this, I have 2 questions:

1) You said this is a “simple one-layer neural network”. However, I feel it’s still 3-layer network: input layer, hidden layer and output layer.

4 inputs -> [4 hidden nodes] -> 3 outputs

2) However, in your model definition:

model.add(Dense(4, input_dim=4, init='normal', activation='relu'))

model.add(Dense(3, init='normal', activation='sigmoid'))

It seems that there are only two layers, input and output, and no hidden layer. So this is actually a 2-layer network. Is this right?

Hi Martin, yes. One hidden layer. I take the input and output layers as assumed, the work happens in the hidden layer.

The first line defines the number of inputs (input_dim=4) AND the number of nodes in the hidden layer:

I hope that helps.

Hi, Jason: I ran this same code but got this error:

Traceback (most recent call last):

File “”, line 1, in

runfile(‘C:/Users/USER/Documents/keras-master/examples/iris_val.py’, wdir=’C:/Users/USER/Documents/keras-master/examples’)

File “C:\Users\USER\Anaconda2\lib\site-packages\spyder\utils\site\sitecustomize.py”, line 866, in runfile

execfile(filename, namespace)

File “C:\Users\USER\Anaconda2\lib\site-packages\spyder\utils\site\sitecustomize.py”, line 87, in execfile

exec(compile(scripttext, filename, ‘exec’), glob, loc)

File “C:/Users/USER/Documents/keras-master/examples/iris_val.py”, line 46, in

results = cross_val_score(estimator, X, dummy_y, cv=kfold)

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\model_selection\_validation.py”, line 140, in cross_val_score

for train, test in cv_iter)

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\externals\joblib\parallel.py”, line 758, in __call__

while self.dispatch_one_batch(iterator):

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\externals\joblib\parallel.py”, line 603, in dispatch_one_batch

tasks = BatchedCalls(itertools.islice(iterator, batch_size))

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\externals\joblib\parallel.py”, line 127, in __init__

self.items = list(iterator_slice)

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\model_selection\_validation.py”, line 140, in

for train, test in cv_iter)

File “C:\Users\USER\Anaconda2\lib\site-packages\sklearn\base.py”, line 67, in clone

new_object_params = estimator.get_params(deep=False)

TypeError: get_params() got an unexpected keyword argument ‘deep’

Please, I need your help on how to resolve this.

Hi Seun, it is not clear what is going on here.

You may have added an additional line or whitespace or perhaps your environment has a problem?

Hello Seun, perhaps this could help you: http://stackoverflow.com/questions/41796618/python-keras-cross-val-score-error/41832675#41832675

I have reproduced the fault and understand the cause.

The error is caused by a bug in Keras 1.2.1 and I have two candidate fixes for the issue.

I have written up the problem and fixes here:

http://stackoverflow.com/a/41841066/78453

I have the same issue….

File “/usr/local/lib/python3.5/dist-packages/sklearn/base.py”, line 67, in clone

new_object_params = estimator.get_params(deep=False)

TypeError: get_params() got an unexpected keyword argument ‘deep’

Looks to be an old issue fixed last year so I don’t understand which lib is in the wrong version…

https://github.com/fchollet/keras/issues/1385

Hi shazz,

I have reproduced the fault and understand the cause.

The error is caused by a bug in Keras 1.2.1 and I have two candidate fixes for the issue.

I have written up the problem and fixes here:

http://stackoverflow.com/a/41841066/78453

Hi Jason,

Thanks so much. The second fix worked for me.

Glad to hear it Seun.

Dear Jason,

With the help of your example I am trying to do the same for handwritten digit pixel data, to classify the digit. The input is 5000 rows, each example being 20*20 pixels, so in total the X matrix is (5000, 400) and Y is (5000, 1). I am not able to run the model successfully and get the error shown at the end of the code below.

#importing the needed libraries

import scipy.io

import numpy

from sklearn.preprocessing import LabelEncoder

from keras.models import Sequential

from keras.layers import Dense

from keras.wrappers.scikit_learn import KerasClassifier

from keras.utils import np_utils

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import KFold

from sklearn.preprocessing import LabelEncoder

from sklearn.pipeline import Pipeline

In [158]:

#Initializing random seed for reproducibility

seed = 7

numpy.random.seed(seed)

In [159]:

#loading the dataset from mat file

mat = scipy.io.loadmat('C:\\Users\\Sulthan\\Desktop\\NeuralNet\\ex3data1.mat')

print(mat)

{‘X’: array([[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

…,

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.]]), ‘__header__’: b’MATLAB 5.0 MAT-file, Platform: GLNXA64, Created on: Sun Oct 16 13:09:09 2011′, ‘__version__’: ‘1.0’, ‘y’: array([[10],

[10],

[10],

…,

[ 9],

[ 9],

[ 9]], dtype=uint8), ‘__globals__’: []}

In [160]:

#Splitting of X and Y of DATA

X_train = mat['X']

In [161]:

X_train

Out[161]:

array([[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

…,

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.]])

In [162]:

Y_train = mat['y']

In [163]:

Y_train

Out[163]:

array([[10],

[10],

[10],

…,

[ 9],

[ 9],

[ 9]], dtype=uint8)

In [164]:

X_train.shape

Out[164]:

(5000, 400)

In [165]:

Y_train.shape

Out[165]:

(5000, 1)

In [166]:

data_trainX = X_train[2500:,0:400]

In [167]:

data_trainX

Out[167]:

array([[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

…,

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.],

[ 0., 0., 0., …, 0., 0., 0.]])

In [168]:

data_trainX.shape

Out[168]:

(2500, 400)

In [256]:

data_trainY = Y_train[:2500,:].reshape(-1)

In [257]:

data_trainY

data_trainY.shape

Out[257]:

(2500,)

In [284]:

#encode class values as integers

encoder = LabelEncoder()

encoder.fit(data_trainY)

encoded_Y = encoder.transform(data_trainY)

# convert integers to dummy variables

dummy_Y= np_utils.to_categorical(encoded_Y)

In [285]:

dummy_Y

Out[285]:

array([[ 0., 0., 0., 0., 1.],

[ 0., 0., 0., 0., 1.],

[ 0., 0., 0., 0., 1.],

…,

[ 0., 0., 0., 1., 0.],

[ 0., 0., 0., 1., 0.],

[ 0., 0., 0., 1., 0.]])

In [298]:

newy = dummy_Y.reshape(-1,1)

In [300]:

newy

Out[300]:

array([[ 0.],

[ 0.],

[ 0.],

…,

[ 0.],

[ 1.],

[ 0.]])

In [293]:

#define baseline model

def baseline_model():

    # create model

    model = Sequential()

    model.add(Dense(15,input_dim=400,init='normal',activation='relu'))

    model.add(Dense(10,init='normal',activation='sigmoid'))

    # compile model

    model.compile(loss='categorical_crossentropy',optimizer='adam',metrics=['accuracy'])

    return model

estimator = KerasClassifier(build_fn=baseline_model, nb_epoch=200,batch_size=5,verbose=0)

print(estimator)

In [295]:

kfold = KFold(n_splits=10, shuffle=True, random_state=seed)

results = cross_val_score(estimator, data_trainX, newy, cv=kfold)

print("Baseline: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

—————————————————————————

ValueError Traceback (most recent call last)

in ()

—-> 1 results = cross_val_score(estimator, data_trainX, newy, cv=kfold)

2 print(“Baseline: %.2f%% (%.2f%%)” % (results.mean()*100, results.std()*100))

C:\Users\Sulthan\Anaconda3\lib\site-packages\sklearn\model_selection\_validation.py in cross_val_score(estimator, X, y, groups, scoring, cv, n_jobs, verbose, fit_params, pre_dispatch)

126

127 “””

–> 128 X, y, groups = indexable(X, y, groups)

129

130 cv = check_cv(cv, y, classifier=is_classifier(estimator))

C:\Users\Sulthan\Anaconda3\lib\site-packages\sklearn\utils\validation.py in indexable(*iterables)

204 else:

205 result.append(np.array(X))

–> 206 check_consistent_length(*result)

207 return result

208

C:\Users\Sulthan\Anaconda3\lib\site-packages\sklearn\utils\validation.py in check_consistent_length(*arrays)

179 if len(uniques) > 1:

180 raise ValueError(“Found input variables with inconsistent numbers of”

–> 181 ” samples: %r” % [int(l) for l in lengths])

182

183

ValueError: Found input variables with inconsistent numbers of samples: [2500, 12500]

Hi Sulthan, the trace is a little hard to read.

Sorry, I have no off the cuff ideas.

Perhaps try cutting your example back to the minimum to help isolate the fault?
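
One possible reading of the trace, offered as an assumption rather than a confirmed diagnosis: the printed dummy_Y has 5 columns, and reshape(-1, 1) turns a 2500 x 5 matrix into 12500 single-value rows, which matches the [2500, 12500] in the error message. The sketch below mimics that reshape with plain lists; flatten_to_column is a hypothetical stand-in for the NumPy call:

```python
def flatten_to_column(rows):
    """Mimic array.reshape(-1, 1): every value becomes its own row."""
    return [[value] for row in rows for value in row]

n_samples, n_classes = 2500, 5
dummy_y = [[0.0] * n_classes for _ in range(n_samples)]  # stand-in for the one hot matrix
newy = flatten_to_column(dummy_y)

print(len(dummy_y), len(newy))
```

If that reading is right, passing dummy_Y itself to cross_val_score would keep 2500 rows. Two other details in the posted notebook may also be worth checking: the output layer has 10 nodes while the encoding shown has 5 columns, and the inputs are taken from rows 2500 onward while the labels come from the first 2500 rows.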

Hi Jason,

Thanks for your tutorial!

Just one question regarding the output. In this problem, we have three classes (setosa, versicolor and virginica), and since each data instance should be classified into only one category, the problem is more specifically “single-label, multi-class classification”. What if each data instance belonged to multiple categories? Then we are facing “multi-label, multi-class classification”. In our case, each flower would belong to at least two species (let’s just forget the biology 🙂 ).

My solution is to modify the output variable (Y) with multiple ‘1’s in it, i.e. [1 1 0], [0 1 1], [1 1 1]... This is definitely not one-hot encoding any more (maybe two- or three-hot?).

Will my method work? If not, how do you think the problem of “multi-label, multi-class classification” should be solved?

Thanks in advance

Your method sounds very reasonable.

You may also want to use sigmoid activation functions on the output layer to allow binary class membership to each available class.
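
A minimal sketch of the encoding described in the question, where each sample may carry several labels at once. The helper name multi_hot and the sample label sets are made up for illustration:

```python
def multi_hot(label_sets, classes):
    """Encode each set of labels as a row with a 1 for every class present."""
    column = {name: i for i, name in enumerate(classes)}
    rows = []
    for labels in label_sets:
        row = [0] * len(classes)
        for name in labels:
            row[column[name]] = 1
        rows.append(row)
    return rows

classes = ["setosa", "versicolor", "virginica"]
samples = [
    {"setosa", "versicolor"},
    {"versicolor", "virginica"},
    {"setosa", "versicolor", "virginica"},
]
encoded = multi_hot(samples, classes)
for row in encoded:
    print(row)
```

Because more than one column can be 1 in a row, each output node is effectively its own yes/no decision, which is why independent sigmoid outputs fit this layout.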

Hello, how can I use the model to create predictions?

If I try this: print('predict: ',estimator.predict([[5.7,4.4,1.5,0.4]])) I get this exception:

AttributeError: ‘KerasClassifier’ object has no attribute ‘model’

Exception ignored in: <bound method BaseSession.__del__ of >

Traceback (most recent call last):

File “/Library/Frameworks/Python.framework/Versions/3.5/lib/python3.5/site-packages/tensorflow/python/client/session.py”, line 581, in __del__

AttributeError: ‘NoneType’ object has no attribute ‘TF_DeleteStatus’

I have not seen this error before.

What versions of Keras/TF/sklearn/Python are you using?

Hi,

Thanks for the great tutorial.

It would be great if you could outline what changes would be necessary if I want to do a multi-class classification with text data: the training data assigns scores to different lines of text, and the problem is to infer the score for a new line of text. It seems that the estimator above cannot handle strings. What would be the fix for this?

Thanks in advance for the help.

Consider encoding your words as integers, using a word embedding and a fixed sequence length.

See this tutorial:

https://machinelearningmastery.com/predict-sentiment-movie-reviews-using-deep-learning/
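
As a rough sketch of the first step only (words to integers with a fixed sequence length), written in plain Python. The helper names, the whitespace tokenization, and the choice to map unknown words to 0 (the same value used for padding) are all assumptions made for illustration:

```python
def build_vocab(texts):
    """Assign each distinct word an integer id, starting at 1 (0 is kept for padding)."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab) + 1
    return vocab

def encode(text, vocab, length):
    """Convert a line of text to exactly `length` integers."""
    ids = [vocab.get(word, 0) for word in text.lower().split()]
    ids = ids[:length]                       # truncate long lines
    return ids + [0] * (length - len(ids))   # pad short lines with 0

train_lines = ["good movie", "bad movie"]    # made-up training text
vocab = build_vocab(train_lines)
print(vocab)
print(encode("bad good film", vocab, 4))
```

The resulting fixed-length integer rows are the kind of input a word embedding layer takes.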

This was a great tutorial to enhance the skills in deep learning. My question: is it possible to use this same dataset for LSTM? Can you please help with this how to solve in LSTM?

Hi Sweta,

You could use an LSTM, but it would not be appropriate because LSTMs are intended for sequence prediction problems and this is not a sequence prediction problem.

Hi Jason,

I have a problem where I have 1500 features as input to my DNN and 2 output classes. Can you explain how I decide the number of neurons in my hidden layer and how many hidden layers I need to process so many features accurately?

Lots of trial and error.

Start with a small network and keep adding neurons and layers and epochs until no more benefit is seen.

Sir, the following code is showing an error message. Could you help me figure it out? I am trying to do a multi-class classification with 5 datasets combined into one (4 non-epileptic patients and 1 epileptic): a 500 x 25 dataset where the 26th column is the class.

# Train model and make predictions

import numpy

import pandas

from keras.models import Sequential

from keras.layers import Dense

from keras.wrappers.scikit_learn import KerasClassifier

from keras.utils import np_utils

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import LabelEncoder

from sklearn.model_selection import KFold

# fix random seed for reproducibility

seed = 7

numpy.random.seed(seed)

# load dataset

dataframe = pandas.read_csv("DemoNSO.csv", header=None)

dataset = dataframe.values

X = dataset[:,0:25].astype(float)

Y = dataset[:,25]

# encode class values as integers

encoder = LabelEncoder()

encoder.fit(Y)

encoded_Y = encoder.transform(Y)

# convert integers to dummy variables (i.e. one hot encoded)

dummy_y = np_utils.to_categorical(encoded_Y)

# define baseline model

def baseline_model():

    # create model

    model = Sequential()

    model.add(Dense(700, input_dim=25, init='normal', activation='relu'))

    model.add(Dense(2, init='normal', activation='sigmoid'))

    # Compile model

    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

    return model

estimator = KerasClassifier(build_fn=baseline_model, nb_epoch=50, batch_size=20)

kfold = KFold(n_splits=5, shuffle=True, random_state=seed)

results = cross_val_score(estimator, X, dummy_y, cv=kfold)

print("Baseline: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

X_train, X_test, Y_train, Y_test = train_test_split(X, dummy_y, test_size=0.55, random_state=seed)

estimator.fit(X_train, Y_train)

predictions = estimator.predict(X_test)

print(predictions)

print(encoder.inverse_transform(predictions))

error message:

str(array.shape))

ValueError: Error when checking model target: expected dense_56 to have shape (None, 2) but got array with shape (240, 3)

Confirm the size of your output (y) matches the dimension of your output layer.
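
A quick way to do that check is to count the distinct values in the class column before building the model. The sketch below uses made-up labels and a hypothetical helper (check_output_size); the stray third value in the example is only a guess at the kind of thing that can turn two expected classes into three one hot columns:

```python
from collections import Counter

def check_output_size(labels, output_nodes):
    """Compare the number of distinct class labels with the output layer size."""
    counts = Counter(labels)
    return len(counts) == output_nodes, counts

# Made-up label column: two expected classes plus one unexpected value.
labels = ["0", "1", "0", "1", "class", "0"]
matches, counts = check_output_size(labels, output_nodes=2)

print("matches output layer:", matches)
print("distinct labels:", sorted(counts))
```

If the count is not what was expected, either the data needs cleaning or the number of nodes in the last Dense layer needs to match the number of one hot columns.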

Hello Jason,

I got your model to work using Python 2.7.13, Keras 2.0.2, Theano 0.9.0.dev…, by copying the codes exactly, however the results that I get are not only very bad (59.33%, 48.67%, 38.00% on different trials), but they are also different.

I was under the impression that using a fixed seed would allow us to reproduce the same results.

Do you have any idea what could have caused such bad results?

Thanks

edit: I was re-executing only the results=cross_val_score(…) line to get different results I listed above.

Running the whole script over and over generates the same result: “Baseline: 59.33% (21.59%)”

Glad to hear it.

Not sure why the results are so bad. I’ll take a look.

The fixed seed does not seem to have an effect on the Theano or TensorFlow backends. Try running examples multiple times and take the average performance.
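
As a small sketch of that suggestion, the three accuracies reported in the comment above can be summarized by their mean and spread using only the standard library. The population standard deviation is used here as an assumption, chosen to match what results.std() computes with NumPy:

```python
from statistics import mean, pstdev

def summarize(scores):
    """Return the mean and population standard deviation of accuracy scores."""
    return mean(scores), pstdev(scores)

# Accuracies (in percent) from three separate runs, as quoted in the comment above.
runs = [59.33, 48.67, 38.00]
avg, spread = summarize(runs)
print("Mean: %.2f%% (%.2f%%)" % (avg, spread))
```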

Did you have time to look into this?

I had my colleague run this script on Theano 1.0.1, and it gave the expected performance of 95.33%. I then installed Theano 1.0.1, and got the same result again.

However, using Theano 2.0.2 I was getting 59.33% with seed=7, and similar performances with different seeds. Is it possible the developers made some crucial changes with the new version?

The most recent version of Theano is 0.9:

https://github.com/Theano/Theano/releases

Do you mean Keras versions?

It may not be the Keras version causing the difference in the run. The fixed random seed may not be having an effect in general, or may not be having an effect when a Theano backend is being used.

Neural networks are stochastic algorithms and will produce a different result each run:

https://machinelearningmastery.com/randomness-in-machine-learning/

Yes I meant Keras, sorry.

There is no issue with the seed; I’m getting the same result as you on multiple computers using Keras 1.1.1. But with Keras 2.0.2, the results are abysmally bad.

Not sure if this was ever resolved, but I’m getting the same thing with the most recent versions of Theano and Keras:

59.33% with seed=7

Try running the example a few times with different seeds.

Neural networks are stochastic:

https://machinelearningmastery.com/randomness-in-machine-learning/

Hi Jason

In this code for multi-class classification, can you suggest how to plot a graph to display the accuracy, and what the axes should represent?

No, we normally do not graph accuracy, unless you want to graph it over training epochs?

Hi Jason,

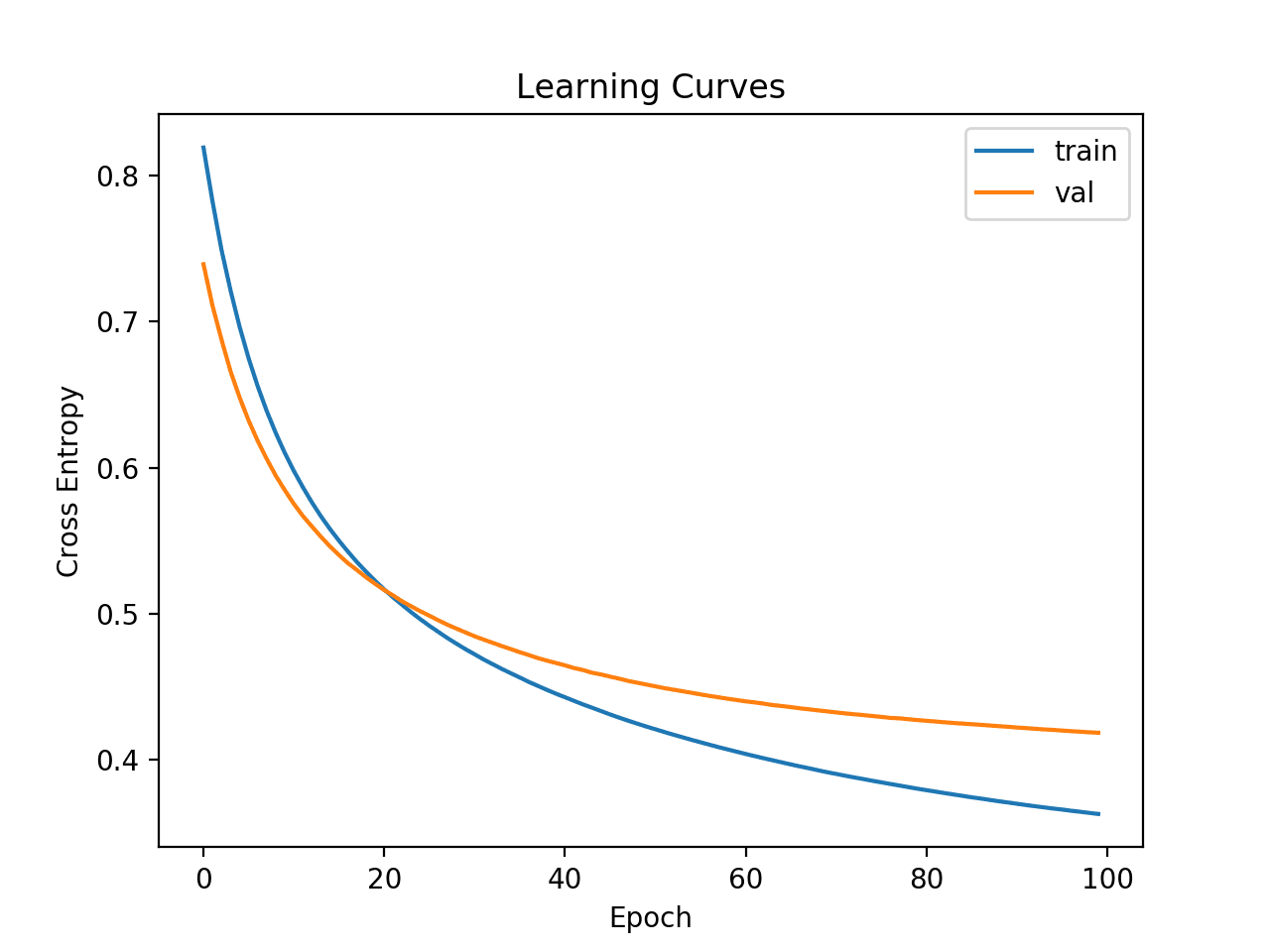

First of all, I’d like to thank you for your blog. I’m currently trying to build a multiclass classifier just like the one you have explained above. I was wondering: how could I plot the history of loss and accuracy for training and validation per epoch, as is done using history = model.fit()?

Many thanks in advance for your help.

Yes, see this post:

https://machinelearningmastery.com/display-deep-learning-model-training-history-in-keras/

thanks

You’re welcome.

Dear Jason,

I have found this tutorial very interesting and helpful.

What I wanted to ask is, I am currently trying to classify poker hands as this kaggle competition: https://www.kaggle.com/c/poker-rule-induction (For a school project) I wish to create a neural network as you have created above. What do you suggest for me to start this?

Your help would be greatly appreciated!

Thanks.

This process will help you work through your modeling problem:

https://machinelearningmastery.com/start-here/#process

Hi Jason,

It’s an awesome tutorial. It would be great if you could write a blog post on multiclass medical image classification with the Keras deep learning library. It would be a great asset for researchers like me who work with medical image classification. Looking forward to it.

Thanks for the suggestion.

Thanks for the great tutorial!

I duplicated the result using Theano as backend.

However, using TensorFlow yields a worse accuracy, 88.67%.

Any explanation?

Thanks!

It may be related to the stochastic nature of neural nets and the difficulty of making results with the TF backend reproducible.

You can learn more about the stochastic nature of machine learning algorithms here:

https://machinelearningmastery.com/randomness-in-machine-learning/

Hi Jason, How to find the Precision, Recall and f1 score of your example?

Case-1 I have used like :

model.compile(loss='categorical_crossentropy', optimizer='Nadam', metrics=['acc', 'fmeasure', 'precision', 'recall'])

Case-2 and also used :

def score(yh, pr):
    # index of the first positive label in each true sequence
    coords = [np.where(yhh > 0)[0][0] for yhh in yh]
    # trim true and predicted sequences from that index onward
    yh = [yhh[co:] for yhh, co in zip(yh, coords)]
    ypr = [prr[co:] for prr, co in zip(pr, coords)]
    # flatten both into single lists
    fyh = [c for row in yh for c in row]
    fpr = [c for row in ypr for c in row]
    return fyh, fpr

pr = model.predict_classes(X_train)
yh = y_train.argmax(2)
fyh, fpr = score(yh, pr)
print('Training accuracy: %s' % accuracy_score(fyh, fpr))
print('Training confusion matrix:')
print(confusion_matrix(fyh, fpr))
precision_recall_fscore_support(fyh, fpr)

pr = model.predict_classes(X_test)
yh = y_test.argmax(2)
fyh, fpr = score(yh, pr)
print('Testing accuracy: %s' % accuracy_score(fyh, fpr))
print('Testing confusion matrix:')
print(confusion_matrix(fyh, fpr))
precision_recall_fscore_support(fyh, fpr)

What I have observed is that the accuracies of case 1 and case 2 are different.

Any solution?

You can make predictions on your test data and use the tools from sklearn:

http://scikit-learn.org/stable/modules/classes.html#module-sklearn.metrics
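A minimal sketch of that approach, assuming one-hot encoded targets and per-class probabilities as returned by model.predict(X_test). The arrays below are made-up stand-ins for real predictions, and macro averaging is just one of the averaging options scikit-learn offers.

```python
import numpy as np
from sklearn.metrics import (
    accuracy_score,
    confusion_matrix,
    precision_recall_fscore_support,
)

# Hypothetical one-hot targets for 6 samples and 3 classes.
y_true_onehot = np.array(
    [[1, 0, 0], [1, 0, 0], [0, 1, 0], [0, 1, 0], [0, 0, 1], [0, 0, 1]]
)
# Hypothetical class probabilities; in practice these would come from
# model.predict(X_test).
y_prob = np.array(
    [
        [0.8, 0.1, 0.1],
        [0.7, 0.2, 0.1],
        [0.1, 0.8, 0.1],
        [0.2, 0.3, 0.5],  # true class 1, predicted class 2
        [0.1, 0.2, 0.7],
        [0.0, 0.1, 0.9],
    ]
)

# Convert one-hot rows and probability rows to integer class labels.
y_true = y_true_onehot.argmax(axis=1)
y_pred = y_prob.argmax(axis=1)

accuracy = accuracy_score(y_true, y_pred)
precision, recall, f1, _ = precision_recall_fscore_support(
    y_true, y_pred, average="macro"
)

print(confusion_matrix(y_true, y_pred))
print("accuracy=%.3f precision=%.3f recall=%.3f f1=%.3f"
      % (accuracy, precision, recall, f1))
```

Computing every metric from the same y_true and y_pred arrays keeps them consistent with each other, which may help when two approaches report different accuracies.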

Hi Jason,

Like a student earlier in the comments my accuracy results are exactly the same as his:

********** Baseline: 88.67% (21.09%)

and I think this is related to having Tensorflow as the backend rather than the Theano backend.

I am working this through in a Jupyter notebook

I went through your earlier tutorials on setting up the environment:

scipy: 0.18.1

numpy: 1.11.3

matplotlib: 2.0.0

pandas: 0.19.2

statsmodels: 0.6.1

sklearn: 0.18.1

theano: 0.9.0.dev-c697eeab84e5b8a74908da654b66ec9eca4f1291

tensorflow: 1.0.1

Using TensorFlow backend.

keras: 2.0.3

The Tensorflow is a Python3.6 recompile picked up from the web at:

http://www.lfd.uci.edu/~gohlke/pythonlibs/#tensorflow

Do you know how I can force the Keras library to use Theano as a backend rather than the TensorFlow library?

Thanks for the great work on your tutorials… for beginners it is such an invaluable thing to have tutorials that actually work!

Looking forward to get more of your books

Rene

Changing to the Theano backend doesn’t change the results:

Managed to change to a Theano backend by setting the Keras config file:

{
    "image_data_format": "channels_last",
    "epsilon": 1e-07,
    "floatx": "float32",
    "backend": "theano"
}

as instructed at: https://keras.io/backend/#keras-backends
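For anyone who prefers to make this change from a script, here is a rough sketch. The set_backend helper is hypothetical, and the demo writes to a temporary file so nothing real is touched; the actual config normally lives at ~/.keras/keras.json.

```python
import json
import os
import tempfile


def set_backend(config_path, backend):
    """Set the "backend" entry of a Keras JSON config file.

    Existing entries are preserved; the file is created if it is missing.
    """
    config = {}
    if os.path.exists(config_path):
        with open(config_path) as handle:
            config = json.load(handle)
    config["backend"] = backend
    with open(config_path, "w") as handle:
        json.dump(config, handle, indent=4)
    return config


# Demonstrate on a temporary copy rather than the real ~/.keras/keras.json.
demo_path = os.path.join(tempfile.mkdtemp(), "keras.json")
with open(demo_path, "w") as handle:
    json.dump(
        {
            "image_data_format": "channels_last",
            "epsilon": 1e-07,
            "floatx": "float32",
            "backend": "tensorflow",
        },
        handle,
    )

updated = set_backend(demo_path, "theano")
print(updated["backend"])
```

Multi-backend Keras also reads the KERAS_BACKEND environment variable, which overrides the config file if it is set before Keras is imported.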

The notebook no longer reports it is using Tensorflow so I guess the switch worked but the results are still:

****** Baseline: 88.67% (21.09%)

Will need to look a little deeper and play with the actual architecture a bit.

All the same great material to get started with

Thanks again

Rene

Confirmed that the changes to the model that someone mentioned above:

model.add(Dense(8, input_dim=4, kernel_initializer='normal', activation='relu'))

model.add(Dense(3, kernel_initializer='normal', activation='softmax'))

make a substantial difference:

**** Baseline: 96.67% (4.47%)

but there is no difference between the Tensorflow and Theano backend results. I guess that’s as far as I can take this for now.

Take care,

Rene

Nice.

Also, note that MLPs are stochastic. This means that if you don’t fix the random seed, you will get different results for each run of the algorithm.

Ideally, you should take the average performance of the algorithm across multiple runs to evaluate its performance.

See this post:

https://machinelearningmastery.com/randomness-in-machine-learning/
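As a small sketch of what averaging across runs looks like in practice: the accuracies below are made-up values standing in for repeated evaluations of the same model, and summarize_runs is a hypothetical helper name.

```python
from statistics import mean, stdev


def summarize_runs(accuracies):
    """Return the mean and sample standard deviation of repeated-run accuracies."""
    return mean(accuracies), stdev(accuracies)


# Hypothetical accuracies (in percent) from five repeated runs of one model.
run_accuracies = [96.0, 94.7, 97.3, 95.3, 96.7]

avg, spread = summarize_runs(run_accuracies)
print("Mean accuracy: %.2f%% (+/- %.2f%%)" % (avg, spread))
```

Reporting the spread alongside the mean gives a sense of how much a single run can be trusted.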

You can change the back-end used by Keras in the Keras config file. See this post:

https://machinelearningmastery.com/introduction-python-deep-learning-library-keras/

Jason,

First, thank you very much. These tutorials are excellent and very practical. You are an excellent educator.

I want to classify my data into 25-30 classes. Your IRIS example is the nearest match to my problem. DL4J previously had an IRIS classification example with a DBN, but it disappeared in the new community version.

I have following issues:

1.>

It takes so long. My laptop is TOSHIBA L745, 4GB RAM, i3 processor. it has CUDA.

My classification problem is solved with SVM in very short time. I’d say in split second.

Do you think the speed would increase if we used a DBN, a CNN, or something similar?

2.>

My result :

Baseline: 88.67% (21.09%),

Once I installed Docker (with TensorFlow in it) and ran the IRIS classification, it showed 96%.

I would like similar or better accuracy. How can I reach that level?

Thank you

An MLP is a suitable algorithm for multi-class classification problems.

If it is slow, consider running it on AWS:

https://machinelearningmastery.com/develop-evaluate-large-deep-learning-models-keras-amazon-web-services/

There are many things you can do to lift performance, see this post:

https://machinelearningmastery.com/improve-deep-learning-performance/

Hello Jason,

first of all, your tutorials are really well done when you start working with keras.

I have a question about the epochs and batch_size in this tutorial. I think I haven’t understood it correctly.

I loaded the record and it contains 150 entries.

You choose 200 epochs and batch_size=5. So you use 5*200=1000 examples for training. So does keras use the same entries multiple times or does it stop automatically?

Thanks!

One epoch involves exposing each pattern in the training dataset to the model.

One epoch is comprised of one or more batches.

One batch involves showing a subset of the patterns in the training data to the model and updating weights.

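Ideally, the batch size divides the number of patterns in the dataset evenly (e.g. 150 patterns with a batch size of 5 gives 30 batches per epoch). If it does not, Keras simply makes the final batch of each epoch smaller.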

Does that help?
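To make the arithmetic concrete, here is a small sketch using the numbers from the question (150 patterns, batch size 5, 200 epochs). The training_schedule helper is hypothetical. It assumes the final batch is simply smaller when the batch size does not divide the dataset evenly, which is how Keras's fit behaves for in-memory arrays.

```python
import math


def training_schedule(n_samples, batch_size, epochs):
    """Return (batches per epoch, total weight updates, size of the last batch)."""
    batches_per_epoch = math.ceil(n_samples / batch_size)
    total_updates = batches_per_epoch * epochs
    last_batch_size = n_samples - (batches_per_epoch - 1) * batch_size
    return batches_per_epoch, total_updates, last_batch_size


# Numbers from the question: every one of the 150 patterns is seen once per
# epoch, so the same entries are reused in each of the 200 epochs.
print(training_schedule(150, 5, 200))  # (30, 6000, 5)
```

So the model sees all 150 entries 200 times and updates its weights 6,000 times in total, rather than stopping after 1,000 examples.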

Hi,

thank you for the explanation.

The explanation helped me, and in the meantime I have read and tried several LSTM tutorials from you and it became much clearer to me.

greetings, Chris

I’m glad to hear that Chris.

Hey Jason,

I have been following your tutorials and they have been very helpful! The most useful section is the comments, where people like me get to ask you questions, and some of them are the same ones I had in mind.

Although, I have one that I think hasn’t been asked before, at least on this page!

What changes should I make to the regular program you illustrated with the “pima_indians_diabetes.csv” in order to take a dataset that has 5 categorical inputs and 1 binary output.

This would be a huge help! Thanks in advance!

Great question.

Consider using an integer encoding followed by a binary encoding of the categorical inputs.

This post will show you how:

https://machinelearningmastery.com/data-preparation-gradient-boosting-xgboost-python/
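A plain-Python sketch of the two steps, to show the idea. The column values and function names are made up for illustration; in practice scikit-learn's LabelEncoder and OneHotEncoder are the usual tools for this.

```python
def integer_encode(values):
    """Map each distinct category to an integer, in sorted order."""
    categories = sorted(set(values))
    mapping = {category: index for index, category in enumerate(categories)}
    return [mapping[value] for value in values], mapping


def one_hot_encode(codes, n_categories):
    """Turn each integer code into a binary vector with a single 1."""
    return [
        [1 if code == index else 0 for index in range(n_categories)]
        for code in codes
    ]


# Hypothetical categorical input column.
colors = ["red", "green", "blue", "green"]

codes, mapping = integer_encode(colors)
onehot = one_hot_encode(codes, len(mapping))

print(mapping)
print(codes)
print(onehot)
```

Each categorical input column would be encoded this way and the resulting binary columns placed side by side, so the network's input_dim becomes the total number of binary columns.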

Hello Dr. Brownlee,

The link that you shared was very helpful and I have been able to one hot encode and use the data set but at this point of time I am not able to find relevant information regarding what the perfect batch size and no. of epochs should be. My data has 5 categorical inputs and 1 binary output (2800 instances). Could you tell me what factors I should take into consideration before arriving at a perfect batch size and epoch number? The following are the configuration details of my neural net:

model.add(Dense(28, input_dim=43, init='uniform', activation='relu'))

model.add(Dense(28, init='uniform', activation='relu'))

model.add(Dense(1, init='uniform', activation='sigmoid'))