Keras is an easy-to-use and powerful Python library for deep learning.

There are a lot of decisions to make when designing and configuring your deep learning models. Most of these decisions must be resolved empirically through trial and error and by evaluating them on real data.

As such, it is critically important to have a robust way to evaluate the performance of your neural networks and deep learning models.

In this post, you will discover a few ways to evaluate model performance using Keras.

Let’s get started.

- May/2016: Original post

- Update Oct/2016: Updated examples for Keras 1.1.0 and scikit-learn v0.18

- Update Mar/2017: Updated example for Keras 2.0.2, TensorFlow 1.0.1 and Theano 0.9.0

- Update Mar/2018: Added alternate link to download the dataset as the original appears to have been taken down

- Update Jun/2022: Update to TensorFlow 2.x syntax

Evaluate the performance of deep learning models in Keras

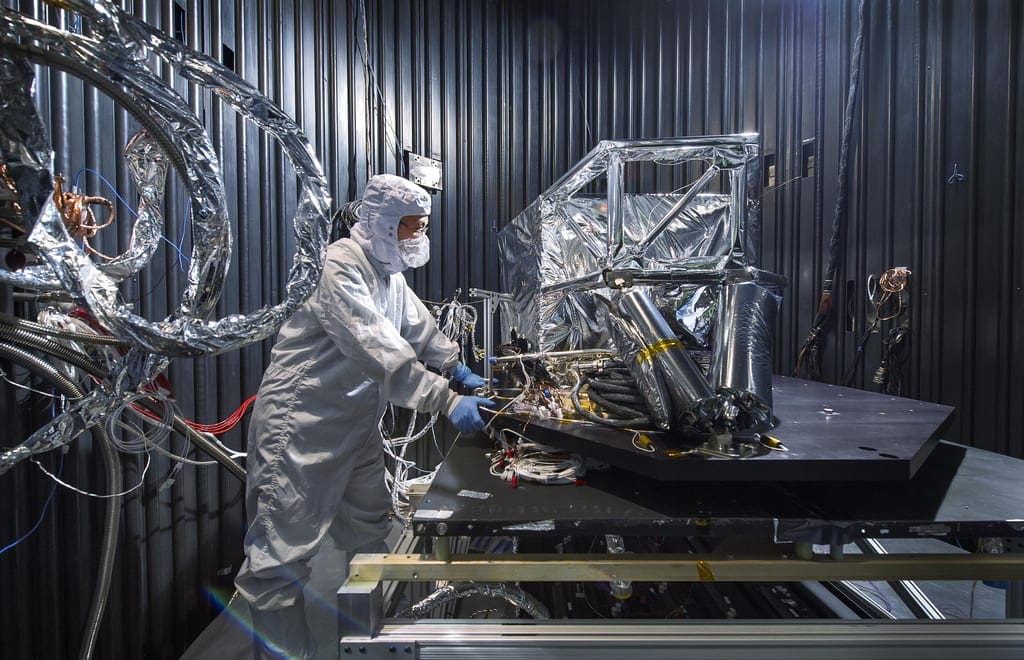

Photo by Thomas Leuthard, some rights reserved.

Empirically Evaluate Network Configurations

You must make a myriad of decisions when designing and configuring your deep learning models.

Many of these decisions can be resolved by copying the structure of other people’s networks and using heuristics. Ultimately, the best technique is to design small experiments and empirically evaluate the options using real data.

This includes high-level decisions like the number, size, and type of layers in your network. It also includes the lower-level decisions like the choice of the loss function, activation functions, optimization procedure, and the number of epochs.

Deep learning is often used on problems that have very large datasets, that is, tens of thousands or hundreds of thousands of instances.

As such, you need to have a robust test harness that allows you to estimate the performance of a given configuration on unseen data and reliably compare the performance to other configurations.

Data Splitting

The large amount of data and the complexity of the models require very long training times.

As such, it is typical to separate data into training and test datasets or training and validation datasets.

Keras provides two convenient ways of evaluating your deep learning algorithms this way:

- Use an automatic verification dataset

- Use a manual verification dataset

Use an Automatic Verification Dataset

Keras can separate a portion of your training data into a validation dataset and evaluate the performance of your model on that validation dataset in each epoch.

You can do this by setting the validation_split argument on the fit() function to the fraction of your training dataset to hold back.

For example, a reasonable value might be 0.2 or 0.33 for 20% or 33% of your training data held back for validation.
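To make the arithmetic concrete, here is a minimal sketch of how such a fraction could translate into row indices. It assumes the behaviour described in the Keras documentation, where the held-back rows are the last ones in the arrays as given, before any shuffling. The helper `split_tail` is hypothetical and not part of Keras, and rounding the cut point down is an assumption.

```python
import math

def split_tail(n_samples, validation_split):
    """Return (train_indices, val_indices) for a tail hold-out.

    Hypothetical helper, not part of Keras: it mimics a split that
    holds back the last fraction of the rows, in their given order.
    """
    # Assumption: the cut point is rounded down to a whole row.
    split_at = int(math.floor(n_samples * (1.0 - validation_split)))
    indices = list(range(n_samples))
    return indices[:split_at], indices[split_at:]

# 768 is the number of rows in the Pima Indians dataset.
train_idx, val_idx = split_tail(768, 0.33)
print(len(train_idx), len(val_idx))  # 514 254
```

The 514 training rows agree with the `514/514` progress counter in the verbose output for this example.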

The example below demonstrates the use of an automatic validation dataset on a small binary classification problem. All examples in this post use the Pima Indians onset of diabetes dataset. You can download it from the UCI Machine Learning Repository and save the data file in your current working directory with the filename pima-indians-diabetes.csv (update: download from here).

```python
# MLP with automatic validation set
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense
import numpy
# fix random seed for reproducibility
numpy.random.seed(7)
# load pima indians dataset
dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# create model
model = Sequential()
model.add(Dense(12, input_dim=8, activation='relu'))
model.add(Dense(8, activation='relu'))
model.add(Dense(1, activation='sigmoid'))
# Compile model
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
# Fit the model
model.fit(X, Y, validation_split=0.33, epochs=150, batch_size=10)
```

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Running the example, you can see that the verbose output on each epoch shows the loss and accuracy on both the training dataset and the validation dataset.

```
...
Epoch 145/150
514/514 [==============================] - 0s - loss: 0.5252 - acc: 0.7335 - val_loss: 0.5489 - val_acc: 0.7244
Epoch 146/150
514/514 [==============================] - 0s - loss: 0.5198 - acc: 0.7296 - val_loss: 0.5918 - val_acc: 0.7244
Epoch 147/150
514/514 [==============================] - 0s - loss: 0.5175 - acc: 0.7335 - val_loss: 0.5365 - val_acc: 0.7441
Epoch 148/150
514/514 [==============================] - 0s - loss: 0.5219 - acc: 0.7354 - val_loss: 0.5414 - val_acc: 0.7520
Epoch 149/150
514/514 [==============================] - 0s - loss: 0.5089 - acc: 0.7432 - val_loss: 0.5417 - val_acc: 0.7520
Epoch 150/150
514/514 [==============================] - 0s - loss: 0.5148 - acc: 0.7490 - val_loss: 0.5549 - val_acc: 0.7520
```

Use a Manual Verification Dataset

Keras also allows you to manually specify the dataset to use for validation during training.

In this example, you can use the handy train_test_split() function from the Python scikit-learn machine learning library to separate your data into a training and test dataset. Use 67% for training and the remaining 33% of the data for validation.

The validation dataset can be specified to the fit() function in Keras by the validation_data argument. It takes a tuple of the input and output datasets.

```python
# MLP with manual validation set
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense
from sklearn.model_selection import train_test_split
import numpy
# fix random seed for reproducibility
seed = 7
numpy.random.seed(seed)
# load pima indians dataset
dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# split into 67% for train and 33% for test
X_train, X_test, y_train, y_test = train_test_split(X, Y, test_size=0.33, random_state=seed)
# create model
model = Sequential()
model.add(Dense(12, input_dim=8, activation='relu'))
model.add(Dense(8, activation='relu'))
model.add(Dense(1, activation='sigmoid'))
# Compile model
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
# Fit the model
model.fit(X_train, y_train, validation_data=(X_test,y_test), epochs=150, batch_size=10)
```

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Like before, running the example provides a verbose output of training that includes the loss and accuracy of the model on both the training and validation datasets for each epoch.

```
...
Epoch 145/150
514/514 [==============================] - 0s - loss: 0.4847 - acc: 0.7704 - val_loss: 0.5668 - val_acc: 0.7323
Epoch 146/150
514/514 [==============================] - 0s - loss: 0.4853 - acc: 0.7549 - val_loss: 0.5768 - val_acc: 0.7087
Epoch 147/150
514/514 [==============================] - 0s - loss: 0.4864 - acc: 0.7743 - val_loss: 0.5604 - val_acc: 0.7244
Epoch 148/150
514/514 [==============================] - 0s - loss: 0.4831 - acc: 0.7665 - val_loss: 0.5589 - val_acc: 0.7126
Epoch 149/150
514/514 [==============================] - 0s - loss: 0.4961 - acc: 0.7782 - val_loss: 0.5663 - val_acc: 0.7126
Epoch 150/150
514/514 [==============================] - 0s - loss: 0.4967 - acc: 0.7588 - val_loss: 0.5810 - val_acc: 0.6929
```

Manual k-Fold Cross Validation

The gold standard for machine learning model evaluation is k-fold cross validation.

It provides a robust estimate of the performance of a model on unseen data. It does this by splitting the training dataset into k subsets, taking turns training models on all subsets except one, which is held out, and evaluating model performance on the held-out validation dataset. The process is repeated until all subsets are given an opportunity to be the held-out validation set. The performance measure is then averaged across all models that are created.
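The rotation described above can be sketched with plain index lists. This is only an illustration of the bookkeeping, assuming contiguous folds and no shuffling; `k_fold_indices` is a hypothetical helper, not a scikit-learn or Keras function.

```python
def k_fold_indices(n_samples, k):
    """Yield (train, held_out) index lists for each of k folds.

    Illustrative helper (not from any library): folds are contiguous
    blocks, and the first n_samples % k folds get one extra element.
    """
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        held_out = list(range(start, start + size))
        # Everything outside the held-out block is used for training.
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, held_out
        start += size

folds = list(k_fold_indices(10, 3))
for train, held_out in folds:
    print("train:", train, "held out:", held_out)
```

Each index is held out exactly once, so every sample contributes to the averaged score as unseen data for one of the models.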

It is important to understand that cross validation evaluates a model design (e.g., a 3-layer vs. a 4-layer neural network) rather than a specific fitted model. You do not want to fit each design on a single dataset and compare the results, since a difference may simply reflect that particular dataset suiting one design better. Instead, you fit on multiple datasets, producing multiple fitted models of the same design, and compare the average performance measure.

Cross validation is often not used for evaluating deep learning models because of the greater computational expense. For example, k-fold cross validation is often used with 5 or 10 folds. As such, 5 or 10 models must be constructed and evaluated, significantly adding to the evaluation time of a model.

Nevertheless, when the problem is small enough or if you have sufficient computing resources, k-fold cross validation can give you a less-biased estimate of the performance of your model.

In the example below, you will use the handy StratifiedKFold class from the scikit-learn Python machine learning library to split the training dataset into 10 folds. The folds are stratified, meaning that the algorithm attempts to balance the number of instances of each class in each fold.

The example creates and evaluates 10 models using the 10 splits of the data and collects all the scores. The verbose output for each epoch is turned off by passing verbose=0 to the fit() and evaluate() functions on the model.

The performance is printed for each model, and it is stored. The average and standard deviation of the model performance are then printed at the end of the run to provide a robust estimate of model accuracy.
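As an aside on the stratification mentioned above, the idea can be shown without any library. The sketch below uses a simplified round-robin assignment, which is an assumption made for illustration; scikit-learn's StratifiedKFold has its own allocation and shuffling logic. The labels are made up.

```python
from collections import Counter, defaultdict

def stratified_folds(labels, k):
    """Assign each sample index to one of k folds, class by class.

    Simplified round-robin scheme for illustration only; it is not
    the algorithm used by scikit-learn's StratifiedKFold.
    """
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        # Deal the indices of one class out to the folds in turn.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    return folds

# Made-up labels: 12 negatives and 6 positives.
labels = [0] * 12 + [1] * 6
strat = stratified_folds(labels, 3)
for fold in strat:
    print(Counter(labels[i] for i in fold))
```

Every fold ends up with the same 2:1 class ratio as the full label list, which is the property the stratified splitter aims for.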

```python
# MLP for Pima Indians Dataset with 10-fold cross validation
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense
from sklearn.model_selection import StratifiedKFold
import numpy as np
# fix random seed for reproducibility
seed = 7
np.random.seed(seed)
# load pima indians dataset
dataset = np.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# define 10-fold cross validation test harness
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
cvscores = []
for train, test in kfold.split(X, Y):
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
    # Fit the model
    model.fit(X[train], Y[train], epochs=150, batch_size=10, verbose=0)
    # evaluate the model
    scores = model.evaluate(X[test], Y[test], verbose=0)
    print("%s: %.2f%%" % (model.metrics_names[1], scores[1]*100))
    cvscores.append(scores[1] * 100)
print("%.2f%% (+/- %.2f%%)" % (np.mean(cvscores), np.std(cvscores)))
```

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Running the example will take less than a minute and will produce the following output:

```
acc: 77.92%
acc: 68.83%
acc: 72.73%
acc: 64.94%
acc: 77.92%
acc: 35.06%
acc: 74.03%
acc: 68.83%
acc: 34.21%
acc: 72.37%
64.68% (+/- 15.50%)
```
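The summary line can be checked by hand from the per-fold scores. The sketch below uses only the standard library and the rounded accuracies printed above. One detail worth knowing is that `numpy.std` computes the population standard deviation by default, so `statistics.pstdev` (not `statistics.stdev`) is the matching call.

```python
import statistics

# Per-fold accuracies copied from the run shown above (already rounded).
cvscores = [77.92, 68.83, 72.73, 64.94, 77.92,
            35.06, 74.03, 68.83, 34.21, 72.37]

mean_acc = statistics.mean(cvscores)
# Population standard deviation, matching numpy.std with its default ddof=0.
std_acc = statistics.pstdev(cvscores)
print("%.2f%% (+/- %.2f%%)" % (mean_acc, std_acc))
```

The two folds near 35% account for most of the spread, which is a reminder to read the standard deviation alongside the mean when comparing configurations.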

Summary

In this post, you discovered the importance of having a robust way to estimate the performance of your deep learning models on unseen data.

You discovered three ways that you can estimate the performance of your deep learning models in Python using the Keras library:

- Use Automatic Verification Datasets

- Use Manual Verification Datasets

- Use Manual k-Fold Cross Validation

Do you have any questions about deep learning with Keras or this post? Ask your question in the comments, and I will do my best to answer it.

how to print network diagram

You can use plot_model from keras.utils to plot a keras model. link: https://keras.io/visualization/

Could you explain how can one use different evaluation metric (F1-score or even custom one) for evaluation?

Hi Hendrik, you can use a suite of objectives with Keras models, here’s a list:

https://keras.io/objectives/

Thanks for the reply, but I don’t mean the “optimizer” parameter but the “metrics” at compilation, which currently can only be “accuracy”. I’d like to change it to another evaluation metric (F1-score for instance or AUC).

Hey Hendrik, did you get the solution to how to use a different evaluation metric in Keras?

Could you give some instruction on how to train a deep model if X, y is so large that it cannot fit into memory?

model.fit(X_train, y_train, validation_data=(X_test,y_test), nb_epoch=150, batch_size=10)

Great question shixudong.

Keras has a data generator for image data that does not fit into memory:

https://keras.io/preprocessing/image/

The same approach could be used for tabular data:

https://github.com/fchollet/keras/issues/107

My dataset is in a data folder with this structure:

- data
  - Train
    - Dog
    - Cat
  - Test
    - Dog
    - Cat

1. How do I know the y_true value of the dataset from ImageDataGenerator, if I use the function flow_from_directory?

2. How do I use k-fold cross validation using the fit_generator function in Keras?

Sorry, I don’t have examples of using the ImageDataGenerator other than for image augmentation.

Hi,Do you have to update it now?

How can I use k-fold cross validation, the gold standard for evaluating machine learning, with the fit function in Keras?

Also, how can I get an history of accuracy and loss with the cross_val_score module for plotting ?

thanks,

andrew

Great questions.

Here’s an example of using Keras with scikit-learn’s k-fold cross validation:

https://machinelearningmastery.com/use-keras-deep-learning-models-scikit-learn-python/

It is way easier to collect and display history for a single train/test run. Here’s an example:

https://machinelearningmastery.com/display-deep-learning-model-training-history-in-keras/

Hey Jason, thanks for the great tutorials!

I wanted to do a CV but read out the accuracy for each fold not only for the training but also for the test data. Would this ansatz be right:

```python
kfold = StratifiedKFold(n_splits=folds, shuffle=True, random_state=seed)
for train, test in kfold.split(X, Y):
    model = Sequential()
    model.add(Dense(n, input_dim=dim, init='uniform', activation='sigmoid'))
    model.add(Dense(1, init='uniform', activation='sigmoid'))
    model.compile(loss='mse', optimizer='adam', metrics=['accuracy'])
    asd = model.fit(X[train], Y[train], nb_epoch=epoch, validation_data=(X[test], Y[test]), batch_size=10, verbose=1)
    cv_acc_train = asd.history['acc']
    cv_acc_test = asd.history['val_acc']
```

Looks good to me off the cuff Watterson.

asd = model.fit(X[train], Y[train], nb_epoch=epoch, validation_data=(X[test], Y[test])

Please, is validation_data not supposed to be validation_data=(Y[test], Y[test])? Also, can I use categorical_crossentropy when my activation is softmax? Thanks so much.

Hi Seun, the validation_data must include X and y components.

Yes, I think you can use logloss with softmax, try and see.

Hi Jason,

When you use the “automatic verification dataset” the val_loss is lower than the loss.

“768/768 [==============================] – 0s – loss: 0.4593 – acc: 0.7839 – val_loss: 0.4177 – val_acc: 0.8386”

How can it be possible?

Thank you very much for your help and your work!

Sorry, I don’t understand your question, perhaps you could be more specific?

I understood from my previous lectures that a model is fitting well when the validation error is low and slightly higher than the training error.

But in your first example (Automatic Verification Datasets), the validation error is lower than the training error. I can’t figure how the model can perform better on the validation set rather than on the training set.

Does it mean that the validation split isn’t randomly defined?

Great question Jonas,

It might be a statistical fluke and a sign of an unstable model.

It might also be a sign of a poor split of the data, and a sign that a strategy with repeated splits might be warranted.

Why are we using a batch size of 10? I mean, how does it affect the model performance?

You can learn more about how batch size impacts learning here:

https://machinelearningmastery.com/how-to-control-the-speed-and-stability-of-training-neural-networks-with-gradient-descent-batch-size/

Hi, I am no expert. But it looks like you are training on the binary labels:

Y = dataset[:,8] => Labels exist in column 8 correct?

X = dataset[:,0:8] => This includes column 8, i.e. labels

I could be wrong, haven’t looked at the data set.

No, I believe the code is correct (at least in Python 2.7).

I’m happy to hear if you get different results on your system.
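For readers with the same doubt, a quick check of the slicing rule may help. Python slices are half-open, so `0:8` covers positions 0 through 7 and leaves position 8 out. NumPy column slices follow the same convention. The row below is illustrative and simply mirrors the file layout of eight inputs followed by the label.

```python
# One illustrative row with the same layout as the data file:
# eight input values followed by the class label in position 8.
row = [6, 148, 72, 35, 0, 33.6, 0.627, 50, 1]

inputs = row[0:8]   # positions 0..7; the stop index 8 is excluded
label = row[8]      # position 8 only

print(len(inputs), inputs[-1], label)  # 8 50 1
```

The label never appears among the inputs, so the model is not trained on its own target.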

Hi, Jason, for a simple feedforward MLP, are there any intuitive criteria for choosing between Keras and Sklearn?

Thanks!

Speed. Keras will be faster given that it uses optimized symbolic math libraries as a backend on CPUs or GPUs, whereas sklearn is limited to linear algebra libraries on the CPU.

Hello,

Thanks a lot for your tutorials, they are great!

This might be a bit trivial but I’d like to ask the difference between when we used a validation split in “model.fit” and we didn’t. And, for instance instead of using separate train/validation/test sets, will using train/test sets with validation split be enough?

Thanks a lot!

It depends on your problem.

If you can spare the data, it is a good idea to hold back a validation set for final checking. If you can afford the time, use k-fold cross-validation with multiple repeats to eval your model. We often don’t have the time, so we use train/test splits.

Hi Jason,

Very nice example, I enjoyed reading your blog.

I got one question, how to decide the number of epoch and the batch size?

Thanks

Great question! I recommend trial and error.

Hi Jason,

This is a great post!

I’m having trouble combining categorical cross-entropy and StratifiedKFold.

StratifiedKFold assumes the response is a (number,) shape, according to:

http://stackoverflow.com/questions/35022463/stratifiedkfold-indexerror-too-many-indices-for-array

But as you’ve explained before, Keras’s categorical cross-entropy expects the response to be a one-hot matrix. How can I resolve this?

Thank you!

You might have to move away from cross validation and rely on repeated random train/test sets.

Alternatively, you could try pre-computing the folds manually or using a modified version of the dataset, then running the cross-validation manually.

I guess you can do the following:

change :

Y=numpy.argmax(Y,axis=1)

and then using : loss=’sparse_categorical_crossentropy’

it works but would that be correct way?

It is a way. I recommend evaluating many approaches and see what works best for your data.
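The conversion suggested in this thread amounts to taking the position of the 1 in each one-hot row. A minimal standard-library sketch follows, with made-up targets and a hypothetical helper name; with NumPy arrays the equivalent is the `numpy.argmax(Y, axis=1)` call quoted above.

```python
def to_class_indices(one_hot_rows):
    """Return the position of the largest entry in each row (argmax)."""
    return [max(range(len(row)), key=row.__getitem__) for row in one_hot_rows]

# Made-up one-hot targets for three classes.
Y_one_hot = [[1, 0, 0], [0, 0, 1], [0, 1, 0], [0, 0, 1]]
y_int = to_class_indices(Y_one_hot)
print(y_int)  # [0, 2, 1, 2]
```

One option, offered as a suggestion and not something the thread confirms, is to pass the integer labels only to the splitter and keep the one-hot matrix for training. The other is the commenter's route of training on the integers with `sparse_categorical_crossentropy`, which expects integer class labels.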

Hi, Jason.

your blog is great!

I’m new to Keras. In your last example you build and compile the Keras model inside each iteration of the for loop (I show your code below). I don’t know if it is possible, but it looks like it would be more efficient to build and compile the model one time (outside the for loop) and to fit it with the right data each time inside the loop. Isn’t this possible in Keras?

```python
for train, test in kfold.split(X, Y):
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
```

Yes, but you may need to re-initialize the weights.

I am demonstrating complete independence of the model within each loop.

I am trying the cross-validation code with an LSTM and getting the following error:

Found array with dim 3. Estimator expected <= 2.

My code is as follows:

```python
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
cvscores = []
for train, test in kfold.split(task_features_padded, task_label_padded):
    # create model
    model=Sequential()
    model.add(LSTM(50,input_shape=(max_seq,number_of_features),return_sequences = 1, activation = 'relu'))
    model.add(Dropout(0.2))
    model.add(Dense(1,activation='sigmoid'))
    print(model.summary())
    model.compile(loss='binary_crossentropy',
                  optimizer='adam',
                  metrics=['accuracy'])
    print('Train…')
    model.fit(task_features_padded[train], task_label_padded[train],batch_size=16,nb_epoch=1000,validation_data=(X_test,y_test),verbose=2)
    #model.fit(X_train, y_train,batch_size=16,nb_epoch=1000,validation_data=(X_test,y_test),verbose=2)
    #model.fit(X_train, y_train,batch_size=1,nb_epoch=1000,validation_data=(X_test,y_test),verbose=2)
    score, acc = model.evaluate(task_features_padded[test], task_label_padded[test], batch_size=16,verbose=0)
    #score, acc = model.evaluate(X_test, y_test, batch_size=16,verbose=0)
    #score, acc = model.evaluate(X_test, y_test, batch_size=1,verbose=0)
    print('Test score:', score)
    print('Test accuracy:', acc)
    scores = model.evaluate(X[test], Y[test], verbose=0)
    print("%s: %.2f%%" % (model.metrics_names[1], acc*100))
    cvscores.append(acc * 100)
```

the shape of task_features_padded is (876, 6, 11)

and shape of task_label_padded is (876, 6, 1)

I’m not sure from a quick skim of your code.

I have some debugging suggestions in the FAQ under the question “Can you read, review or debug my code?”

https://machinelearningmastery.com/start-here/#faq

I hope that helps.

Hi Jason,

In the last part of this article, you are training 10 different models instead of training one 10 times on each fold.

In other cases, how can I select the best model out of the 10 trained ? Is it a good practice in Machine Learning to do so ?

Thanks,

Arno

Excellent question, see the last part of this post:

https://machinelearningmastery.com/randomness-in-machine-learning/

Wow, I’m not the only one having these questions apparently 🙂

Thanks for the tips ! This website is indeed very helpful.

I’m glad it helped.

Hi sir,

When i try to run the code after building the layers i am facing this error

FileNotFoundError: [WinError 3] The system cannot find the path specified: ‘C:/deeplearning/openblas-0.2.14-int32/bin’

I have changed theano flag path using this

variable = THEANO_FLAGS value = floatX=float32,device=cpu,blas.ldflags=-LC:\openblas -lopenblas

but still i am facing the same problem…

Thank you!!

Sorry, I have not seen this error.

Consider posting it as a question to stackoverflow or the theano users group.

Hi Jason,

How to give prediction score(not prediction label or prediction probability) of each test instance instead of evaluate result on whole test set?

Thanks.

You can predict probabilities with:

Hi Jason, thanks for the post. Your blog is great to learn Machine Learning with Python, I am very very grateful for you are sharing this with us. Thanks and great job! Muito obrigado, você é muito generoso em compartilhar seu conhecimento.

Thanks José, I’m glad that it is helping.

Hey Jason, Thank you for your many posts and responses.

I have tried to follow this tutorial to train and evaluate a multioutput (3) regression deep network using the keras’ Model class API, and here is my code:

```python
#Define cross validation scheme
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
cvscores = []
for train, test in kfold.split(X_training, [Y_training10,Y_training20,Y_training30]):
    # Create model
    inputs=Input(shape=(72,),name='input_layer')
    x=Dense(200,activation='relu',name='hidden_layer1')(inputs)
    x=Dropout(.2)(x)
    y1=Dense(1,name='GHI10')(x)
    y2=Dense(1,name='GHI20')(x)
    y3=Dense(1,name='GHI30')(x)
    model=Model(inputs=inputs,outputs=[y1,y2,y3])
    # Compile model
    model.compile(loss='mean_squared_error', optimizer='rmsprop',metrics='mean_absolute_error')
    #Fit model
    model.fit(X_training[train],[Y_training10[train],Y_training20[train],Y_training30[train]] ,epochs=100, batch_size=10, verbose=1)
    # Evaluate model
    Scores=model.evaluate(X_training[test],[Y_training10[test],Y_training20[test],Y_training30[test]], verbose=1, sample_weight=None)
    print("%s: %.2f%% (MSE)" % (model.metrics_names[1], scores[1]))
    print("%s: %.2f%% (MSE)" % (model.metrics_names[2], scores[2]))
    print("%s: %.2f%% (MSE)" % (model.metrics_names[3], scores[3]))
    cvscores.append([scores[1],scores[2],scores[3]])
print("%.2f MSE of training" % (numpy.mean(cvscores,axis=0)))
```

But unfortunately I get this error :

Found input variables with inconsistent number of samples: [15000,3].

I have a [15000sample x 72predictor] as X_training and [15000samples x 3 outputs] as [Y_training10, Y_training20, Y_training30].

Any sort of help would be appreciated.

You have to make sure your input and output data match the shape expected by your network and that train and test have the same number of features.

Hi, Thank you for an awesome relevant post. I have a question about implementing training\test\validation 80\10\10. Which is sort of outlined in this question: https://stats.stackexchange.com/questions/19048/what-is-the-difference-between-test-set-and-validation-set – how would I further split my test set?

I’m glad it helped Ashley.

Why would you further split your data?

It’s something from the ML course on Udacity. They use a validation set along side test set. It’s outlined in this lecture: https://classroom.udacity.com/courses/ud730/lessons/6370362152/concepts/63798118330923 Lesson 1 #22. They explain it as your test data bleeding into your training data over time, and this biases the training – so to further split your data, train on train data, validate on validation set and only at the very end test on test data.

This link gets you to #22 https://classroom.udacity.com/courses/ud730/lessons/6370362152/concepts/63798118300923

As I say this – I realise that one way to do it is to train and validate and save the model, then load model and test on the test set.

Sure, what is your question exactly?

Initially, it was: how do I take in a validation set and a test set and have two different testing out puts after final run. i.e. How do I set up my model to have a split test/validation set (so have training, validation, test all in one session)

Now I am just assuming that I should train (fit) on my data, optimise my net according to my validation data (evaluate) and save the model. Then reload it and evaluate again but on test data that it has never seen before – which I am hoping will help me with the small data set I have.

You can do that, sounds fine.
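The 80/10/10 arrangement discussed in this thread can be sketched as a single shuffle followed by two cuts. The helper name, the fractions and the seed are all illustrative assumptions. The resulting index lists could then be used to slice the arrays passed to fit() (train and validation) and to a final evaluate() call (test).

```python
import random

def three_way_split(n_samples, val_fraction=0.1, test_fraction=0.1, seed=7):
    """Shuffle indices once, then cut them into train/validation/test.

    Hypothetical helper for illustration; fractions and seed are arbitrary.
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = int(n_samples * test_fraction)
    n_val = int(n_samples * val_fraction)
    test = indices[:n_test]
    val = indices[n_test:n_test + n_val]
    train = indices[n_test + n_val:]
    return train, val, test

train_idx, val_idx, test_idx = three_way_split(1000)
print(len(train_idx), len(val_idx), len(test_idx))  # 800 100 100
```

Because the test indices are set aside before any tuning, the final score comes from rows that influenced neither the weights nor the configuration choices.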

Hey, Jason. Great website! I like how you’ve laid out your posts and how you explain the concepts. I haven’t been able to figure out the final result from using k-fold. Let’s assume I execute a k-fold just like you’ve done in your example. Then, I use the code from another of your great posts to save the model (to JSON) and save the weights. Which of the 10 models (created during the k-fold loop) will be saved? The last of the 10?

I want to have a saved model that I can use on new datasets in the future. How does creating 10 models with k-fold help me get a better model than using an automatic validation split (as described in this post)?

Thank you!

CV gives a less biased estimate of the model’s skill on unseen data than a train/test split, at least in general with smallish datasets (less than millions of obs).

Once you have tuned your model, throw all the trained models away and train a final model with all your data and start using it to make predictions.

See this post:

https://machinelearningmastery.com/train-final-machine-learning-model/

Some models are very expensive to train, in which case don’t use CV and keep the best models you train, use them in an ensemble as final models.

Does that help?

Yes, that post was exactly what I needed. Thank you!

Glad to hear that Michael.

Hello, Jason

I’m one of your ebook readers 🙂

Let me ask about a strange behavior in my case.

When I train and validate my model with the dataset like this:

model.fit( train_x, train_y, validation_split=0.1, epochs=15, batch_size=100)

it seems to be overfitting, judging by the gap between acc and val_acc.

67473/67473 [==============================] – 27s – loss: 2.9052 – acc: 0.6370 – val_loss: 6.0345 – val_acc: 0.3758

Epoch 2/15

67473/67473 [==============================] – 26s – loss: 1.7335 – acc: 0.7947 – val_loss: 6.2073 – val_acc: 0.3788

Epoch 3/15

67473/67473 [==============================] – 26s – loss: 1.5050 – acc: 0.8207 – val_loss: 6.1922 – val_acc: 0.3952

Epoch 4/15

67473/67473 [==============================] – 26s – loss: 1.4130 – acc: 0.8380 – val_loss: 6.2896 – val_acc: 0.4092

Epoch 5/15

67473/67473 [==============================] – 26s – loss: 1.3750 – acc: 0.8457 – val_loss: 6.3136 – val_acc: 0.3953

Epoch 6/15

67473/67473 [==============================] – 26s – loss: 1.3350 – acc: 0.8573 – val_loss: 6.4355 – val_acc: 0.4098

Epoch 7/15

67473/67473 [==============================] – 26s – loss: 1.3045 – acc: 0.8644 – val_loss: 6.3992 – val_acc: 0.4018

Epoch 8/15

67473/67473 [==============================] – 26s – loss: 1.2687 – acc: 0.8710 – val_loss: 6.5578 – val_acc: 0.3897

Epoch 9/15

67473/67473 [==============================] – 26s – loss: 1.2552 – acc: 0.8745 – val_loss: 6.4178 – val_acc: 0.4104

Epoch 10/15

67473/67473 [==============================] – 26s – loss: 1.2195 – acc: 0.8796 – val_loss: 6.5593 – val_acc: 0.4044

Epoch 11/15

67473/67473 [==============================] – 26s – loss: 1.1977 – acc: 0.8833 – val_loss: 6.5514 – val_acc: 0.4041

Epoch 12/15

67473/67473 [==============================] – 26s – loss: 1.1828 – acc: 0.8874 – val_loss: 6.5972 – val_acc: 0.3973

Epoch 13/15

67473/67473 [==============================] – 26s – loss: 1.1665 – acc: 0.8890 – val_loss: 6.5879 – val_acc: 0.3882

Epoch 14/15

67473/67473 [==============================] – 26s – loss: 1.1466 – acc: 0.8931 – val_loss: 6.5610 – val_acc: 0.4104

Epoch 15/15

67473/67473 [==============================] – 27s – loss: 1.1394 – acc: 0.8925 – val_loss: 6.5062 – val_acc: 0.4100

but , when I split the data into train & test with StratifiedKFold(n_splits=10, shuffle=True, random_state=7)

and evaluate it with test data, it shows good result of accuracy.

score = model.evaluate(test_x, test_y)

[1.3547255955601791, 0.82816451482507525]

so.. I tried to validate with test dataset which was split with StratifiedKFold.

model.fit( train_x, train_y, validation_data=(test_x, test_y), epochs=15, batch_size=100)

and it shows good result of val_acc.

Epoch 1/14

67458/67458 [==============================] – 27s – loss: 2.9200 – acc: 0.6006 – val_loss: 1.9954 – val_acc: 0.7508

Epoch 2/14

67458/67458 [==============================] – 26s – loss: 1.8138 – acc: 0.7536 – val_loss: 1.6458 – val_acc: 0.7844

Epoch 3/14

67458/67458 [==============================] – 26s – loss: 1.5869 – acc: 0.7852 – val_loss: 1.5848 – val_acc: 0.7876

Epoch 4/14

67458/67458 [==============================] – 25s – loss: 1.4980 – acc: 0.8056 – val_loss: 1.5353 – val_acc: 0.8015

Epoch 5/14

67458/67458 [==============================] – 25s – loss: 1.4375 – acc: 0.8202 – val_loss: 1.4870 – val_acc: 0.8117

Epoch 6/14

67458/67458 [==============================] – 25s – loss: 1.3795 – acc: 0.8324 – val_loss: 1.4738 – val_acc: 0.8139

Epoch 7/14

67458/67458 [==============================] – 26s – loss: 1.3437 – acc: 0.8400 – val_loss: 1.4677 – val_acc: 0.8146

Epoch 8/14

67458/67458 [==============================] – 26s – loss: 1.3059 – acc: 0.8462 – val_loss: 1.4127 – val_acc: 0.8263

Epoch 9/14

67458/67458 [==============================] – 26s – loss: 1.2758 – acc: 0.8533 – val_loss: 1.4087 – val_acc: 0.8219

Epoch 10/14

67458/67458 [==============================] – 25s – loss: 1.2381 – acc: 0.8602 – val_loss: 1.4095 – val_acc: 0.8242

Epoch 11/14

67458/67458 [==============================] – 26s – loss: 1.2188 – acc: 0.8644 – val_loss: 1.3960 – val_acc: 0.8272

Epoch 12/14

67458/67458 [==============================] – 25s – loss: 1.1991 – acc: 0.8677 – val_loss: 1.3898 – val_acc: 0.8226

Epoch 13/14

67458/67458 [==============================] – 25s – loss: 1.1671 – acc: 0.8733 – val_loss: 1.3370 – val_acc: 0.8380

Epoch 14/14

67458/67458 [==============================] – 25s – loss: 1.1506 – acc: 0.8750 – val_loss: 1.3363 – val_acc: 0.8315

Do you have any idea why the results differ between the automatic validation_split and validation with the test dataset?

Thanks in advance.

There is no need to validate when using cross validation. The model is doing twice the work.

Perhaps the stratification of the data sample is important to your model?

Perhaps the model performs better on the smaller sample (e.g. 1/10th of data if 10-folds).

I am having the same problem here. When you set shuffle=False in k-fold CV, you will get low accuracy as well. The automatic validation_split does not shuffle the data before taking the validation set.

you can try:

StratifiedKFold(n_splits=10, shuffle=True, random_state=7)

model.fit( train_x, train_y, validation_split=0.1, epochs=15, verbose=1 ,batch_size=100)

score = model.evaluate(test_x, test_y)

You will see that val_acc is very low and the final score is good.
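A small sketch may make this concrete. Keras takes the validation_split portion from the last rows of the arrays passed to fit(), before any shuffling, so a file that is sorted by class can leave the validation part with classes the training part never saw. The labels below are hypothetical (900 rows of class 0 followed by 100 rows of class 1) and only NumPy is used; it illustrates the slicing, not the reader's actual data.

```python
import numpy as np

# Hypothetical labels: a file sorted by class (assumption for illustration).
y = np.array([0] * 900 + [1] * 100)

# validation_split=0.1 holds out the LAST 10% of the rows, before shuffling.
n_val = int(len(y) * 0.1)
train_head = y[:-n_val]
val_tail = y[-n_val:]

# Shuffling the row order once beforehand gives a more representative hold-out.
rng = np.random.default_rng(7)
order = rng.permutation(len(y))
val_shuffled = y[order][-n_val:]

print("classes seen in training part:", np.unique(train_head))
print("class-1 share, tail split:", val_tail.mean())
print("class-1 share, shuffled split:", val_shuffled.mean())
```

If the tail split looks very different from the shuffled one, shuffling the arrays before calling fit(), or passing an explicit validation_data set, is worth trying.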

Hello,

First of all congrats for these tutorials, they are great!

I’m trying to use StratifiedKFold validation in a multiple inputs network (3 inputs) for a regression problem, and I’m having several problems when using it. First of all, in the step:

“for train,test in kfold.split()” I’m introducing just one of the inputs and the labels structure, this way: “for train, test in kfold.split(X1, Y):”, and then inside the loop I define “X1_train = X1[train], X2_train = X2[train], X3_train=X3[train]” and so on. This way, when fitting my model I use “model.fit([X1_train, X2_train, X3_train], Y_train….)”. But I’m getting the error “n_splits=10 cannot be greater than the number of members in each class”, and I don’t know how to fix it.

I have also tried the option you give in this tutorial: https://machinelearningmastery.com/regression-tutorial-keras-deep-learning-library-python/

But in this case the error I get is “Found input variables with inconsistent numbers of samples”.

I don’t know how to implement this; I would appreciate any help. Thanks.

All rows must have the same number of columns, if that helps?

X1, X2 and X3 have shape (nb_samples, 2, 8, 10), while Y has shape (nb_samples, 4). I don’t know if it is not able to recognize that the common axis is nb_samples (although I read in the documentation that it takes by default the first axis).

I have resolved it by creating a structure of zeros with just one dimension: X_split = np.zeros((X1.shape[0])) and Y_split = np.zeros((Y.shape[0])), and I use those two arrays to create the for loop. But I don’t know why I cannot do it the other way.

Hello, I am facing the same issue. Could you please provide the code example?

Are you creating X_split = np.zeros((X1.shape[0])) for X2 and X3, and Y_split, before calling kfold.split(X1, Y)?

Thank you
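For readers asking for code, here is a minimal sketch of the placeholder approach described above. It swaps in plain KFold rather than StratifiedKFold, on the assumption that stratification is not wanted for a regression target (StratifiedKFold treats y as class labels). The array sizes are hypothetical and the model.fit call is only indicated in a comment.

```python
import numpy as np
from sklearn.model_selection import KFold

nb_samples = 50  # hypothetical number of samples
X1 = np.random.rand(nb_samples, 2, 8, 10)
X2 = np.random.rand(nb_samples, 2, 8, 10)
X3 = np.random.rand(nb_samples, 2, 8, 10)
Y = np.random.rand(nb_samples, 4)

kfold = KFold(n_splits=10, shuffle=True, random_state=7)
fold_sizes = []
# split() only needs the number of samples, so a 1-D placeholder is enough.
for train, test in kfold.split(np.zeros(nb_samples)):
    # The same row indices select matching rows from every input and from Y.
    X1_train, X2_train, X3_train = X1[train], X2[train], X3[train]
    Y_train, Y_test = Y[train], Y[test]
    # model.fit([X1_train, X2_train, X3_train], Y_train, ...) would go here
    fold_sizes.append((len(train), len(test)))

print(fold_sizes)
```

The index arrays returned by split() are positions along the first axis, which is why they can be reused for X1, X2, X3 and Y alike.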

Hi Jason, thanks for your blogs and I learned a lot from your posts.

I encountered a strange problem while using Keras. My problem is a regression problem, and I would like to show and record the loss and validation loss while training. But when I only assign “validation_split”, I only get the “loss” without any “validation loss”. After I manually pass “validation_data” to model.fit, I get both “loss” and “validation loss”.

According to the Keras documentation, “validation_split” uses the last XX% of the data, without shuffling, as the validation data. I assume it should report the “validation loss” as well, but I cannot find it. Do you have any ideas about it? Thanks in advance!

If you set validation data or a validation split, then the validation loss will be printed each epoch if verbose=1 and available in the history object at the end of the run.

Hi Jason, after doing the k-fold CV, how do you train the NN on your whole data set? Because usually, we train it as long as the validation set accuracy is increasing. Before applying the NN into the wild, we would like to train it on the whole data set, if our data set size is small. How do we train it then?

Great question, see this post:

https://machinelearningmastery.com/train-final-machine-learning-model/

Hi Jason. I have trained a network using Keras for segmentation of MRI images. My test data has no ground truth. I need to save the output of the network for the test set as images and submit them for evaluation. How can I do this in the testing part? As far as I know, for evaluation in Keras I need both the test samples and the corresponding ground truth.

Correct, you need ground truth in order to evaluate any model.

Thank you for your precious tutorials

I have a question about the confusion between the test set and the validation set in TensorFlow + Keras.

In your tutorials, the validation dataset does not affect training and is totally independent of the training procedure.

The validation dataset is only for monitoring and early stopping. In addition, the validation set is not used in training (updating weights, gradient descent, etc.).

However, I found that Wikipedia describes it differently, as follows:

A validation dataset is a set of examples used to tune the hyperparameters (i.e. the architecture) of a classifier.

LINK: https://en.wikipedia.org/wiki/Training,_test,_and_validation_sets

It sounds like the validation dataset is for tuning parameters, which means that it is used in the training procedure.

If that is true, we will face overfitting.

If the validation dataset does not affect training, as in your tutorial, does Keras automatically use some part of the training dataset for validating and tuning parameters?

Yes, validation set is used for tuning the model and is a subset of the training dataset.

Perhaps this post will clear things up:

https://machinelearningmastery.com/difference-test-validation-datasets/

Thank you Mr @Jason Brownlee for your quick answer

Let me clarify my question. I am not asking because I do not know the concept of the three datasets.

I am asking because I do not know how Keras uses the datasets in the background.

For example, in this tutorial: https://machinelearningmastery.com/sequence-classification-lstm-recurrent-neural-networks-python-keras/

You defined only two datasets as:

(X_train, y_train), (X_test, y_test) = imdb.load_data(num_words=top_words)

You said that the validation dataset may disappear if there is k-fold validation (in that case validation data is picked from the training set). However, in the tutorial we did not use k-fold validation. So where is the validation set? Is it still in the training set?

In the code below, the fit function uses validation_data for tuning parameters, doesn’t it? You also assigned the test data to the validation data. In that case we need new test data for evaluation, is that right?

model.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=3, batch_size=64)

In the code below, the evaluate function gives an unbiased score, doesn’t it? Then where is the validation data? Does the Keras background code automatically split X_train into train and validation parts?

model.fit(X_train, y_train, epochs=3, batch_size=64)

# Final evaluation of the model

scores = model.evaluate(X_test, y_test, verbose=0)

We do not have to use a validation dataset and in many tutorials I exclude that part of the process for brevity.

Does that mean Keras automatically picks part of the training dataset for validating and tuning parameters?

If we do not use a validation dataset, how do we tune parameters?

It can, or we can specify it.

You can tune on the training dataset.

Hi Jason,

Thank you very much for your blog and examples, it is great!

I merged two of your examples: the one above and Save and Load Your Keras Deep Learning Models (https://machinelearningmastery.com/save-load-keras-deep-learning-models/). You can see the code below:

# MLP for Pima Indians Dataset with 10-fold cross validation
from keras.models import Sequential
from keras.layers import Dense
from sklearn.model_selection import StratifiedKFold
from keras.models import model_from_json
import numpy

# fix random seed for reproducibility
seed = 7
numpy.random.seed(seed)

# load pima indians dataset
dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter=",")

# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]

# make model
def make_model():
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

# define 10-fold cross validation test harness
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
cvscores = []
for train, test in kfold.split(X, Y):
    # create model
    model = make_model()
    # Fit the model
    model.fit(X[train], Y[train], epochs=150, batch_size=10, verbose=0)
    # serialize model to JSON
    model_json = model.to_json()
    with open("model.json", "w") as json_file:
        json_file.write(model_json)
    # serialize weights to HDF5
    model.save_weights("model.h5")
    print("Saved model to disk")
    # evaluate the model
    scores = model.evaluate(X[test], Y[test], verbose=0)
    print("%s: %.2f%%" % (model.metrics_names[1], scores[1]*100))
    cvscores.append(scores[1] * 100)
    del model_json
    del model

print("%.2f%% (+/- %.2f%%)" % (numpy.mean(cvscores), numpy.std(cvscores)))

# load json and create model
json_file = open('model.json', 'r')
loaded_model_json = json_file.read()
json_file.close()
loaded_model = model_from_json(loaded_model_json)

# load weights into new model
loaded_model.load_weights("model.h5")
print("Loaded model from disk")

# evaluate loaded model on test data
loaded_model.compile(loss='binary_crossentropy', optimizer='rmsprop', metrics=['accuracy'])
score = loaded_model.evaluate(X, Y, verbose=0)
print("%s: %.2f%%" % (loaded_model.metrics_names[1], score[1]*100))

And the output is as follows:

Saved model to disk

acc: 76.62%

Saved model to disk

acc: 74.03%

Saved model to disk

acc: 71.43%

Saved model to disk

acc: 72.73%

Saved model to disk

acc: 70.13%

Saved model to disk

acc: 64.94%

Saved model to disk

acc: 66.23%

Saved model to disk

acc: 64.94%

Saved model to disk

acc: 63.16%

Saved model to disk

acc: 72.37%

69.66% (+/- 4.32%)

Loaded model from disk

acc: 75.91%

Naively I was expecting to get the same accuracy as the last model I saved (which was 72.37%), but I got 75.91%. Could you please explain how the weights are saved inside a k-fold cross validation?

Thanks,

Estelle

Machine learning algorithms are stochastic, see this post for more details:

https://machinelearningmastery.com/randomness-in-machine-learning/
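Beyond randomness, one detail in the code above is worth a look: the files model.json and model.h5 are overwritten on every fold, so the reloaded model is the one from the last fold, and it is then scored on all of X and Y rather than on X[test] and Y[test]. The two numbers are therefore measured on different rows. The sketch below illustrates that effect only; it uses synthetic data and scikit-learn's LogisticRegression as a stand-in for the Keras model, both of which are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in data (not the Pima dataset).
rng = np.random.default_rng(7)
X = rng.normal(size=(200, 8))
Y = (X[:, 0] + rng.normal(scale=1.0, size=200) > 0).astype(int)

kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)
for train, test in kfold.split(X, Y):
    # Stand-in for building and fitting a fresh Keras model on this fold.
    model = LogisticRegression().fit(X[train], Y[train])
    last_test = test  # after the loop, this is the last fold's held-out rows

# Score of the last model on its own held-out fold (what the loop printed last).
acc_last_fold = model.score(X[last_test], Y[last_test])
# Score of the same model on every row, most of which it was trained on.
acc_all_rows = model.score(X, Y)

print(len(last_test), "held-out rows:", acc_last_fold)
print(len(X), "rows in total:", acc_all_rows)
```

To reproduce the last fold's score after reloading, the reloaded model would need to be evaluated on that fold's X[test] and Y[test].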

Hi Jason,

Thank you for your quick answer and reference.

Yes, I forgot that randomness happens at so many levels.

Thanks.

Indeed!

Hi Jason,

I tried to use your example in my classification model, but I got this error:

ValueError: Supported target types are: (‘binary’, ‘multiclass’). Got ‘multilabel-indicator’ instead.

I’m sorry to hear that. Ensure that all of your Python libraries are up to date and that you copied all of the code from the example.

This might be related to how KFold or StratifiedKFold defines ‘multi-label’, see here: https://stackoverflow.com/questions/48508036/sklearn-stratifiedkfold-valueerror-supported-target-types-are-binary-mul/51795404#51795404
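As a hedged illustration of the usual workaround: StratifiedKFold expects a 1-D array of class labels, so a one-hot encoded target is reported as 'multilabel-indicator'. Converting the one-hot rows to integer labels for the split only, while still training on the one-hot matrix, avoids the error. The data below is hypothetical (3 classes, 10 samples each) and the model.fit call is only indicated in a comment.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Hypothetical one-hot targets: 3 classes, 10 samples per class.
labels = np.repeat([0, 1, 2], 10)
Y_onehot = np.eye(3)[labels]
X = np.random.rand(len(labels), 4)

# Stratify on integer class labels; keep the one-hot matrix for training.
y_int = Y_onehot.argmax(axis=1)

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=7)
test_counts = []
for train, test in kfold.split(X, y_int):
    Y_train, Y_test = Y_onehot[train], Y_onehot[test]
    # model.fit(X[train], Y_train, ...) would go here
    test_counts.append(np.bincount(y_int[test], minlength=3).tolist())

print(test_counts)
```

This only applies when each row belongs to exactly one class; a genuinely multi-label target needs a different splitting strategy.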

Sir, can you suggest how to do stratified k-fold cross-validation for this case?

https://gist.github.com/dirko/1d596ca757a541da96ac3caa6f291229

Sorry, I do not have the capacity to review your code.

Sorry, it’s not my code. I just found it on the internet and I am learning. I would like to know, if forward and backward training and testing data exist, how to pass two inputs to kfold.split(X, Y),

as in the link, where X_enc_f, X_enc_b and y_enc are given as the forward, backward and label encodings.

Maybe this doco will help:

http://scikit-learn.org/stable/modules/generated/sklearn.model_selection.KFold.html

HI Jason,

How do you apply model.predict when using k-folds?

I want to be able to create a classification report for my model and possibly an AUC plot, heat map, precision-recall graph as well

Good question, see this post on creating a final model:

https://machinelearningmastery.com/train-final-machine-learning-model/

Sorry what I meant was, how do you code model.predict/predict_proba when you use the kfold.split method?

The examples I’ve seen that don’t use k-folds have code like model.predict(x_test) after applying model.fit.

I’d like to use precision_recall_curve and roc_curve with k-folds

You do not. CV is for evaluating a model, then you can build a final model. Please see the post that I linked.

We are doing 10-fold cross validation on an optical character recognition dataset. We used the KFold and KerasClassifier functions. A snapshot of our output is:

18000/18000 [==============================] – 1s – loss: 0.5963 – acc: 0.8219

Epoch 144/150

18000/18000 [==============================] – 1s – loss: 0.5951 – acc: 0.8217

Epoch 145/150

18000/18000 [==============================] – 1s – loss: 0.5941 – acc: 0.8219

Epoch 146/150

18000/18000 [==============================] – 1s – loss: 0.5928 – acc: 0.8225

Epoch 147/150

18000/18000 [==============================] – 1s – loss: 0.5908 – acc: 0.8234

Epoch 148/150

18000/18000 [==============================] – 2s – loss: 0.5903 – acc: 0.8199

Epoch 149/150

18000/18000 [==============================] – 1s – loss: 0.5892 – acc: 0.8217

Epoch 150/150

18000/18000 [==============================] – 1s – loss: 0.5917 – acc: 0.8235

1720/2000 [========================>…..] – ETA: 0s

Accuracy: 81.24% (0.94%)

How to interpret this output?

It shows the progress (epoch number n of m), the loss (minimizing) and the accuracy (maximizing).

What is the problem exactly?
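On the final line of that output: a summary such as "Accuracy: 81.24% (0.94%)" is typically the mean of the per-fold accuracies followed by their standard deviation. The sketch below shows the calculation using the ten per-fold accuracies posted by Estelle earlier in these comments, since the OCR fold scores were not given.

```python
import numpy as np

# Per-fold accuracies (in percent) quoted earlier in this comment thread.
cvscores = [76.62, 74.03, 71.43, 72.73, 70.13, 64.94, 66.23, 64.94, 63.16, 72.37]

mean = np.mean(cvscores)
std = np.std(cvscores)  # population standard deviation, NumPy's default

print("%.2f%% (+/- %.2f%%)" % (mean, std))  # prints 69.66% (+/- 4.32%)
```

A small standard deviation relative to the mean suggests the estimate is stable across folds.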

Hello Jason,

Did I understand correctly: using k-folds won’t necessarily increase the accuracy of the model but will instead give a more realistic (or “accurate”) accuracy rate? I’m getting a slightly lower accuracy on my model, which uses your k-fold example, than on a very simple model that was simply evaluated on test data:

scores = model.evaluate(X_test, Y_test)

Yes, the idea is that k-fold cross validation provides a less biased estimate of the skill of your model on unseen data.

This is on average.

A difficult problem or a bad k value can still give poor skill estimates.

Hi Jason,

I appreciate your tutorial. To tell you the truth, I want to write k-fold cross validation from scratch for the first time, but I do not know which tutorial is best for a novice student. I want to write k-fold cross validation for LSTM, RNN and CNN models.

Would you please recommend which link is the best one for this?

1-https://machinelearningmastery.com/use-keras-deep-learning-models-scikit-learn-python/

2-https://machinelearningmastery.com/evaluate-skill-deep-learning-models/

3-https://towardsdatascience.com/train-test-split-and-cross-validation-in-python-80b61beca4b6

4- https://www.kaggle.com/stefanie04736/simple-keras-model-with-k-fold-cross-validation

If there is a better tutorial that teaches k-fold cross validation for deep learning in Keras with TensorFlow, please share it with us.

Any answers will be appreciated.

Best wishes

Maryam

Choose a tutorial that teaches in a style that suits you.

To tell you the truth, they are different from each other, and I do not know which is proper for writing k-fold cross validation for RNN, CNN and LSTM models.

The code for k-fold cross validation is different in each.

Please show me which code will work for this.

Thank you

For RNNs, you may want to use walk-forward validation instead of k-fold cross validation:

https://machinelearningmastery.com/backtest-machine-learning-models-time-series-forecasting/

Hi Jason,

I am grateful for the link, but I have a binary sentiment analysis classification problem. The given link is for time series forecasting, which is not my problem.

I do not know which code is proper for writing k-fold cross-validation for binary sentiment analysis (text classification), as the examples differ and I have not seen k-fold cross-validation code for a CNN or LSTM.

Would you please point me to similar sample code?

Any reply will be appreciated.

I would recommend scikit-learn for cross validation of deep learning models, if you have the resources.

As in the link I provided.

The specific dataset used in the example is irrelevant, you are interested in the cross validation.

I cannot write the code for you. You have everything you need.

Hi Jason,

I appreciate the tutorial, but when I copy your code and paste it into Spyder, it gives me an error on this line: “model.compile(loss=’binary_crossentropy’, optimizer=’adam’, metrics=[‘accuracy’])”.

the error is this: model.compile(loss=’binary_crossentropy’, optimizer=’adam’, metrics=[‘accuracy’])

^

IndentationError: unindent does not match any outer indentation level.

But I am sure I have written the code the same as yours.

To solve the problem I removed the indent and wrote the code as below, but it gave me just the final result: acc: 64.47%.

But I want to get each fold’s results like yours:

acc: 77.92%

acc: 68.83%

acc: 72.73%

acc: 64.94%

acc: 77.92%

acc: 35.06%

acc: 74.03%

acc: 68.83%

acc: 34.21%

acc: 72.37%

64.68% (+/- 15.50%)

When I remove the indentation, the code just gives me this result: acc: 64.47%

64.47% (+/- 0.00%)

My own code after removing the indentation is this:

for train, test in kfold.split(X, Y):
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
# Compile model
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
model.fit(X[train], Y[train], epochs=15, batch_size=10, verbose=0)
# evaluate the model
scores = model.evaluate(X[test], Y[test], verbose=0)
print("%s: %.2f%%" % (model.metrics_names[1], scores[1]*100))
cvscores.append(scores[1] * 100)
print("%.2f%% (+/- %.2f%%)" % (numpy.mean(cvscores), numpy.std(cvscores)))

I have only posted the section that I changed by removing the indentation.

The only difference between my code and yours is the removed indentation for these 5 lines:

model.compile(loss=’binary_crossentropy’, optimizer=’adam’, metrics=[‘accuracy’])

model.fit(X[train], Y[train], epochs=150, batch_size=10, verbose=0)

scores = model.evaluate(X[test], Y[test], verbose=0)

print(“%s: %.2f%%” % (model.metrics_names[1], scores[1]*100))

cvscores.append(scores[1] * 100)

What causes this error when I write the code the same as yours?

model.compile(loss=’binary_crossentropy’, optimizer=’adam’, metrics=[‘accuracy’])

^

IndentationError: unindent does not match any outer indentation level

How can I fix the error?

I really need your help.

Sorry for the long note.

Best regards

Maryam

Ensure that each statement is on one line and that its indentation matches the rest of the loop body; this is a Python syntax error.

It is working fine now.

Thank u.

Glad to hear it.
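For anyone who hits the same symptom (a single score and a standard deviation of 0.00%), the sketch below shows why indentation matters here. It uses plain Python stand-ins instead of Keras calls: statements indented under the for loop run once per fold, while dedented statements run once, after the loop, using only the last fold's variables.

```python
folds = range(1, 11)  # stand-in for kfold.split(X, Y)

# Evaluation indented under the loop: runs once per fold.
scores_inside = []
for fold in folds:
    model = "model for fold %d" % fold  # stand-in for building a model
    scores_inside.append(fold)          # stand-in for evaluate() + append()

# Evaluation dedented: runs once, after the loop, for the last fold only.
scores_outside = []
for fold in folds:
    model = "model for fold %d" % fold
scores_outside.append(fold)

print(len(scores_inside), len(scores_outside))  # prints 10 1
```

With only one score in the list, the mean is that score and the standard deviation is zero. The original IndentationError usually points to mixed tabs and spaces, or to a line whose indentation matches no enclosing block.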

Hi, Jason. Thank you for your tutorial. I’d like to apply StratifiedKFold to my code using Keras, but I don’t know how to do it. My code is based on the tutorial from the Keras blog post “Building powerful image classification models using very little data”. There, the author of the code uses ‘fit_generator’ instead of ‘X = dataset[:,0:8], Y = dataset[:,8]’.

How can I make this work? I’ve been scratching my head for weeks, but I am out of ideas…

I’m open to all suggestions and any answers will be appreciated.

Regards,

SUH

p.s: Here’s the full code.

Sorry, I cannot debug your code for you. Perhaps post to stackoverflow.

hi,

thanks for your hard work. every time I have a question google usually sent me to your site.

I came here because I googled “how to calculate AUC with model.fit_generator”. I found that one of your readers had a similar issue, but you only use “ImageDataGenerator” to augment.

I tried

——————————————————————————————————————

from sklearn.metrics import roc_curve, auc, roc_auc_score

y_pred = InceptionV3_model.predict_generator(test_generator,2000 )

but I don’t know how to get y_test

roc_auc_score(y_test, y_pred)

y_test would be the expected outputs for the test dataset.
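As a rough sketch of the scoring step: once the true labels and the predicted probabilities are available as arrays in the same order, scikit-learn's roc_auc_score and roc_curve can be applied directly. The four labels and scores below are hypothetical. With a Keras directory generator, the labels are often read from the generator's classes attribute with shuffle=False so that the order matches the predictions; treat that detail as an assumption to verify against your Keras version.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, roc_curve

# Hypothetical true labels and predicted probabilities, in the same row order.
y_test = np.array([0, 0, 1, 1])
y_pred = np.array([0.1, 0.4, 0.35, 0.8])

auc_value = roc_auc_score(y_test, y_pred)
fpr, tpr, thresholds = roc_curve(y_test, y_pred)  # points for plotting the curve

print(auc_value)  # prints 0.75
```

If the predictions come out with shape (n, 1), flattening them with y_pred.ravel() before scoring keeps the shapes consistent.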

HI Jason

Please elaborate on the difference between the validation score, training score and test score.

This post might help:

https://machinelearningmastery.com/difference-test-validation-datasets/

Hi,

Many thanks for this great post, I learnt a lot. I followed the 5-fold cross validation approach for my dataset, which contains 2000 posts, and used 25 epochs. The accuracy keeps increasing after every fold and finally reaches more than 97%. But in your blog, the accuracy either increases or decreases across the folds. Could you please explain why my results are different?

PLOTTING ACCURACY, PRECISION, RECALL FOR FOLD: 1

Accuracy: : 92.18%

PLOTTING ACCURACY, PRECISION, RECALL FOR FOLD: 2

Accuracy: : 95.84%

PLOTTING ACCURACY, PRECISION, RECALL FOR FOLD: 3

Accuracy: : 98.03%

PLOTTING ACCURACY, PRECISION, RECALL FOR FOLD: 4

Accuracy: : 99.26%

PLOTTING ACCURACY, PRECISION, RECALL FOR FOLD: 5

Accuracy: : 100.00%

Accuracy of 5-Fold Cross Validation with standard deviation:

97.21% (+/- 2.57%)

The skill of the model for any given run is stochastic, given the stochastic nature of the algorithm. You can learn more about this here:

https://machinelearningmastery.com/randomness-in-machine-learning/

Hi, Jason

I’m new to Python and deep learning.

Thank you for all your tutorials; I have learnt a lot so far.

I have a question: can you explain simply

what val_acc, loss and val_loss mean in the model and what they tell us?

I read many comments and articles but I could not get it.

Epoch 145/150

514/514 [==============================] – 0s – loss: 0.4847 – acc: 0.7704 – val_loss: 0.5668 – val_acc: 0.7323

Epoch 146/150

514/514 [==============================] – 0s – loss: 0.4853 – acc: 0.7549 – val_loss: 0.5768 – val_acc: 0.7087

Epoch 147/150

514/514 [==============================] – 0s – loss: 0.4864 – acc: 0.7743 – val_loss: 0.5604 – val_acc: 0.7244

Epoch 148/150

514/514 [==============================] – 0s – loss: 0.4831 – acc: 0.7665 – val_loss: 0.5589 – val_acc: 0.7126

Epoch 149/150

514/514 [==============================] – 0s – loss: 0.4961 – acc: 0.7782 – val_loss: 0.5663 – val_acc: 0.7126

Epoch 150/150

514/514 [==============================] – 0s – loss: 0.4967 – acc: 0.7588 – val_loss: 0.5810 – val_acc: 0.6929

Thank you once again

val_loss is the calculated loss on the validation dataset.

val_acc is the calculated accuracy on the validation dataset.

They are different from loss and acc that are calculated on the training dataset.

Does that help?

Yes Thank you 🙂
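To make the four numbers concrete, here is a small NumPy sketch that computes a binary cross-entropy loss and an accuracy by hand, once for a training batch and once for a validation batch. The labels and predicted probabilities are hypothetical, and the helper functions are illustrative rather than the exact Keras implementation.

```python
import numpy as np

def binary_crossentropy(y_true, p):
    # Average negative log-likelihood; clipping avoids log(0).
    p = np.clip(p, 1e-7, 1 - 1e-7)
    return float(np.mean(-(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))))

def accuracy(y_true, p):
    # Fraction of rows where thresholding at 0.5 matches the label.
    return float(np.mean((p > 0.5) == (y_true == 1)))

# Hypothetical labels and predicted probabilities.
y_train, p_train = np.array([1, 0, 1, 0]), np.array([0.9, 0.2, 0.8, 0.1])
y_val, p_val = np.array([1, 0, 1, 0]), np.array([0.6, 0.4, 0.3, 0.2])

loss, acc = binary_crossentropy(y_train, p_train), accuracy(y_train, p_train)
val_loss, val_acc = binary_crossentropy(y_val, p_val), accuracy(y_val, p_val)

print("loss=%.4f acc=%.4f val_loss=%.4f val_acc=%.4f" % (loss, acc, val_loss, val_acc))
```

A val_loss that stays well above loss, as in this toy case, is the usual sign that the model fits the training rows better than unseen rows.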

Hello, thanks a lot for this tutorial. I have been searching for something like this and I am glad I finally found it. I would like to know how I can visualize the training of the neural network in this example. In one of your tutorials I could plot “val_acc” against “acc”, but I cannot do the same here because there is no “val_acc” in k-fold validation. How do I do this if I evaluate with k-fold validation? Thank you.

Good question, here’s an example:

https://machinelearningmastery.com/display-deep-learning-model-training-history-in-keras/

You could plot learning curves for one train/test pass, or for all, for example:

https://machinelearningmastery.com/diagnose-overfitting-underfitting-lstm-models/

Hi!

Thanks for the tutorial, very helpful. I used the validation_data approach, and it seems to be working and producing different accuracies for each epoch (presumably between train and validation), but it gives a puzzling statement before the model starts:

“Train on 15,000 samples, validate on 15,000 samples.”

Does that mean I messed up and fed it the same data for both train and validation or am I ok? In my case, the train has 15,000 samples and the validation file has 10,000 samples.

Thanks so much!

Perhaps confirm the size/shape of the train and validation dataset, just to make sure you have set things up the way that you expect.

Yeah, it is clearly reading in the train dataset twice. Thanks!

Hi,

Thank you for this. How can I change the manual Kfold cross validation to work for multiclass? Say Y was a 100 by 4 array of zeros and ones?

Multi-class support is a property of the model, not of cross validation.

A deep learning model can support multi-class classification by having one neuron for each class in the output layer and using the softmax activation function.

Here is an example:

https://machinelearningmastery.com/multi-class-classification-tutorial-keras-deep-learning-library/

If the goal of a training phase is to improve our model’s accuracy and reduce its loss on every epoch, why do you create and compile a new model on every fold iteration?

Great question.

We want to know how skillful the model is on average, when trained on a random sample from the domain, and making predictions on other data in the domain.

To calculate this estimate, we use resampling methods like k-fold cross-validation that make good economical use of a fixed sized training dataset.

Once we select a model+config that has the best estimated skill, we can then train it on all of the available data and use it to start making predictions.

Does that help Victor?

Hello!

Is it possible to plot the accuracy and loss graphs (history) resulting from a validation made with the StratifiedKFold class?

Yes, but you will have one plot per fold. You may also have to iterate the folds manually to capture the history and plot it.

Yes, I am able to make a plot per fold, but i want to make only two graphs, one with the accuracy mean and the other with mean loss for k-fold.

You can create two plots and add a line to each plot for each fold.

I found that if your training data input “X” is a Pandas DataFrame, then you have to use X.loc[train] for it to work. Otherwise, indexing the DataFrame directly with the NumPy index arrays returned by kfold.split throws a KeyError.

In the above tutorial we are loading data as a NumPy array.
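A short sketch for DataFrame users: the arrays returned by kfold.split are row positions, so positional selection with X.iloc[train] (or converting to NumPy first) is the safer choice. X.loc[train] selects by index label and only gives the same rows when the index happens to be 0..n-1. The frame below is hypothetical and deliberately uses index labels 100..109 to show the difference.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import KFold

# Hypothetical frame whose index labels are NOT 0..n-1.
X = pd.DataFrame({"a": np.arange(10), "b": np.arange(10) * 2},
                 index=np.arange(100, 110))

kfold = KFold(n_splits=5, shuffle=True, random_state=7)
shapes = []
for train, test in kfold.split(X):
    X_train = X.iloc[train]        # positional selection matches split() output
    X_train_np = X.values[train]   # equivalent rows after converting to NumPy
    shapes.append(X_train.shape)

print(shapes)
```

With this index, X.loc[train] would raise a KeyError because labels such as 0 or 1 do not exist in the frame.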

Hi Doc. Thanks for your examples, they are straight forward. Can you do the pima implementation of cnn in r?

No, it has no temporal or spatial structure.

Hi,

I have a problem printing the confusion matrix and classification report and drawing the AUC curve.

It would be helpful if you could show me how to print the confusion matrix and classification report and draw the AUC curve for a neural network using Keras with 10-fold cross validation.

Thanks

This post will help with the confusion matrix:

https://machinelearningmastery.com/confusion-matrix-machine-learning/

I have posts scheduled on ROC curves.

Hi Jason..

Thank you so much for all tutorials.

How can I use cross validation with flow_from_directory and fit_generator?

Sorry, I don’t have an example of this combination.

Hi Jason,

Thanks for your fantastic posts about machine learning.

In your cross-validation code, you used 150 epochs, but the results show only 1 full round of 10-fold cross validation. You only printed the outputs for first epoch?

I really have problems understanding cross validation and epochs. Here is how I understood the code:

is it like each epoch consists of one full run of cross validation? So, in first epoch we run 10-fold cross validation and report the average validation error, in second epoch we continue with the model prepared in first epoch and again run k-fold cross validation and so on till epoch 150? And about the mini-batches, is it like that we divide each training set to 10 mini-batches to do forward backward pass, and after completing the training on whole 10 batches, we refer to validation set to estimate validation error?

Could you tell me whether I perceived the concept of cross validation correctly or not?

thanks,

Samin

Not quite.

You can learn how k-fold cross validation works here:

https://machinelearningmastery.com/k-fold-cross-validation/
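In short, the nesting is the other way round: the folds are the outer loop, and each fold trains a fresh model for all of its epochs before being evaluated once on its held-out part. The counting sketch below uses the tutorial's settings (10 folds, 150 epochs, batch size 10) and assumes 768 rows for the Pima dataset; it only counts weight updates and does not train anything.

```python
import math

# 768 rows is an assumption about the Pima dataset size.
n_samples, k, epochs, batch_size = 768, 10, 150, 10

# Fold sizes as k-fold produces them: the first (n % k) folds get one extra row.
fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]

total_updates = 0
for test_size in fold_sizes:                  # outer loop: one new model per fold
    train_size = n_samples - test_size
    batches_per_epoch = math.ceil(train_size / batch_size)
    total_updates += epochs * batches_per_epoch  # inner loops: epochs, then batches
    # the held-out fold is evaluated once here, after all epochs have finished

print(fold_sizes)
print(total_updates)  # prints 105000
```

So batch_size=10 means 10 rows per weight update, not 10 batches, and the ten printed accuracies are one per fold rather than one per epoch.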

Hello Jason,

First of all, thank you for your comprehensive and insightful tutorials; your website is my first stop when I look for answers. I have implemented a deep neural network for time series forecasting. The fitting and evaluating parts go well, and I get around 0.8 accuracy for both of them. But when I try to predict for a new batch of samples, I get zeros all over my predictions. I would be very grateful if you could suggest where to start debugging my model. I can also send my model if you would like to take a look at it.

Thank you.

Regards,

Akim

Sounds like an issue with your code rather than the model.

This post will show you how to make predictions:

https://machinelearningmastery.com/how-to-make-classification-and-regression-predictions-for-deep-learning-models-in-keras/

Should not we set_learning_phase to 0 right before calling the evaluate function? According to keras documentation while doing inference we should set it to 0.

Perhaps it is set automatically when evaluate() is called?

Hi. I would like to know some basic things about validation data. Should I keep 0.2% of the total training data in a validation data folder manually, without using the validation split function? Will it be useful while training the model?

It really depends on how much data you have to spend. Perhaps check this post:

https://machinelearningmastery.com/difference-test-validation-datasets/

Why do you create model for each iteration in kfold instead of creating model once and then calling model.fit in iterations?

A new set of weights is required to fit a new model on each iteration.

Hi! How can I use k-fold validation in multi-label problems?

Directly, no change. What problem are you having exactly?

Hi Jason,

Great article first of all!

In your example you perform model compilation within each fold. That is very slow. I am wondering whether I will achieve the same results if I move the model compilation outside of the for loop, like the following?

I guess what I am really asking is, once I’ve initialized and compiled the model, will each call to model.fit() perform an independent fitting using the current folds of training and validation data set without being interfered by weights obtained from the last loop?

If yes, then I suppose it’s faster to do my version of the code as the result will be the same.

If no, and if I still want to only initialize and compile the model once before the for loop, is there any way to reset the model after each model.fit()?

Many thanks

Joseph

To be safe, I’d rather re-define and re-compile the model each loop to ensure that each iteration we get a fresh initial set of weights.
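The reason can be sketched without Keras. Calling fit() again on an existing model continues from its current weights, so a model reused across folds has already trained on rows that later appear in a test fold. The TinyModel class below is a hypothetical stand-in that only remembers which rows it has trained on; it is not a Keras API.

```python
import numpy as np

class TinyModel:
    """Hypothetical stand-in for a model: remembers the rows it was trained on."""
    def __init__(self):
        self.seen = set()

    def fit(self, rows):
        # Like repeated fit() calls, training accumulates on top of earlier state.
        self.seen.update(rows.tolist())

folds = np.array_split(np.arange(20), 4)  # 4 folds over 20 row indices

def leaked_rows(reuse_model):
    shared = TinyModel()
    leaks = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = shared if reuse_model else TinyModel()
        model.fit(train)
        # Count test rows that this model has already trained on.
        leaks.append(len(model.seen & set(test.tolist())))
    return leaks

leaks_reused = leaked_rows(reuse_model=True)
leaks_fresh = leaked_rows(reuse_model=False)
print(leaks_reused)  # prints [0, 5, 5, 5]
print(leaks_fresh)   # prints [0, 0, 0, 0]
```

With a reused model, every fold after the first is evaluated on rows it has effectively seen, which tends to inflate the later fold scores.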

How to modify the below code for plotting graph with k-fold cross validation?

def build_classifier():
    classifier = Sequential()
    classifier.add(Dense(units = 6, kernel_initializer = 'uniform', activation = 'relu', input_dim = 13))
    # Keras 2 names the dropout fraction "rate" (it was "p" in Keras 1)
    classifier.add(Dropout(rate = 0.1))
    classifier.add(Dense(units = 6, kernel_initializer = 'VarianceScaling', activation = 'relu'))
    classifier.add(Dense(units = 6, kernel_initializer = 'VarianceScaling', activation = 'relu'))
    classifier.add(Dense(units = 3, kernel_initializer = 'VarianceScaling', activation = 'softmax'))
    classifier.compile(optimizer = 'adam', loss = 'categorical_crossentropy', metrics = ['accuracy'])
    return classifier

classifier = KerasClassifier(build_fn = build_classifier, batch_size = 5, epochs = 200, verbose=1)
accuracies = cross_val_score(estimator = classifier, X = X_train, y = y_train, cv = 10, n_jobs = 1)
mean = accuracies.mean()
# note: std() is the standard deviation, not the variance
variance = accuracies.std()

Thanks and regards

Sanghita

For k-fold cross-validation, I would recommend plotting the distribution scores across the folds, e.g. with a box and whisker plot.

Collect the scores in a list and pass them to pyplot.boxplot()
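A small sketch of that suggestion, assuming matplotlib and scikit-learn are installed. A LogisticRegression on synthetic data stands in for the KerasClassifier from the question, only to keep the example quick to run. The output file name is also an arbitrary choice.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; remove this line for an interactive window
from matplotlib import pyplot
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic data and a stand-in estimator (assumptions for illustration)
X, y = make_classification(n_samples=200, n_features=10, random_state=1)
estimator = LogisticRegression(max_iter=1000)

# One accuracy score per fold, collected in a list
scores = list(cross_val_score(estimator, X, y, cv=10, scoring="accuracy"))

# Box and whisker plot of the distribution of scores across the folds
pyplot.boxplot(scores)
pyplot.title("Accuracy across 10 folds")
pyplot.ylabel("Accuracy")
pyplot.savefig("cv_scores_boxplot.png")
```

The same plotting lines should work unchanged on the accuracies array returned by cross_val_score in the question.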

Hi, Jason

What are the limitations/disadvantages of using a manual verification dataset?

Compared to what?

Can I write like this:

result = KerasClassifier(build_fn=baseline_model, epochs=200, batch_size=5, verbose=0)

and then:

plot_loss_accuracy(result)

so that result can be used for kfold validation of scikit-learn as well as confusion matrix display of Keras?

Perhaps try it?

I’m just wondering why you use X_test, y_test for validation_data?

An easy shortcut for the tutorial. Ideally we would use a separate dataset.

Shouldn’t each fit during cross-validation be saved so that the best fit can be used later?

No, but you can if you like.

Models during CV are only used to estimate the performance of the modeling process on unseen data. After you have this estimate, the models are discarded and a new final model fit on all available data is developed, called a final model:

https://machinelearningmastery.com/train-final-machine-learning-model/

Dear Jason

I have a question about something that, in my eyes, makes little sense:

So far, I have produced the following code in Python using Keras with the TensorFlow backend (1 batch, sequence of 1).

# Define model
model = Sequential()
model.add(LSTM(128, batch_size=BATCH_SIZE, input_shape=(train_x.shape[1], train_x.shape[2]), return_sequences=True, stateful=False))
model.add(Dense(2, activation='softmax'))
opt = tf.keras.optimizers.Adam(lr=0.01, decay=1e-6)

# Compile model
model.compile(
    loss='sparse_categorical_crossentropy',
    optimizer=opt,
    metrics=['accuracy']
)

model.fit(
    train_x, train_y,
    batch_size=BATCH_SIZE,
    epochs=EPOCHS,
    verbose=1)

# Now I want to make sure that we can predict the training set (using evaluate) and that the result is the same as during training
score = model.evaluate(train_x, train_y, batch_size=BATCH_SIZE, verbose=0)
print(' Train accuracy:', score[1])

The Output of the code is

Epoch 1/10 5872/5872 [==============================] – 0s 81us/sample – loss: 0.6954 – acc: 0.4997

Epoch 2/10 5872/5872 [==============================] – 0s 13us/sample – loss: 0.6924 – acc: 0.5229

Epoch 3/10 5872/5872 [==============================] – 0s 14us/sample – loss: 0.6910 – acc: 0.5256

Epoch 4/10 5872/5872 [==============================] – 0s 13us/sample – loss: 0.6906 – acc: 0.5243

Epoch 5/10 5872/5872 [==============================] – 0s 13us/sample – loss: 0.6908 – acc: 0.5238

Train accuracy: 0.52480716

So the problem is that the final training accuracy (0.5238) should be equal to the evaluation accuracy (0.52480716), but it is not. This makes no sense. Why can’t we use evaluate on our training data and obtain the same result as during training? There is no dropout or anything else that should make training different from evaluation. The same happens if I use a validation set.

The score during training is estimated across the batches I believe, and is reported before weight updates.

You could use early stopping to save the weights for the model after a specific batch I believe. Perhaps a custom early stopping/checkpoint callback?
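To make the first point concrete, here is a toy illustration in plain NumPy. It is not Keras internals, just one epoch of a hand-written logistic regression under the assumption described above: the number shown during training is an average of per-batch scores measured while the weights are still changing, whereas an evaluation afterwards uses only the final weights.

```python
import numpy as np

# Synthetic binary classification data (an assumption for illustration)
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = (X[:, 0] - X[:, 1] > 0).astype(float)

w = np.zeros(2)
b = 0.0
learning_rate = 0.1
batch_size = 20


def predict_proba(X, w, b):
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))


def accuracy(X, y, w, b):
    return float(np.mean((predict_proba(X, w, b) >= 0.5) == (y == 1)))


batch_accs = []
for start in range(0, len(X), batch_size):
    xb = X[start:start + batch_size]
    yb = y[start:start + batch_size]
    # Score this batch with the weights as they are BEFORE the update
    batch_accs.append(accuracy(xb, yb, w, b))
    # One gradient descent step on the log loss
    p = predict_proba(xb, w, b)
    w -= learning_rate * (xb.T @ (p - yb)) / len(xb)
    b -= learning_rate * float(np.mean(p - yb))

reported_during_training = float(np.mean(batch_accs))  # average over batches
evaluated_afterwards = accuracy(X, y, w, b)             # final weights only

print("reported during epoch:", reported_during_training)
print("evaluated afterwards: ", evaluated_afterwards)
```

The two numbers are computed from different weights, so a small gap between them like the one in the question is expected rather than a sign of a bug.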

Hi Jason,

Thank you for the helpful tutorial.

I have trained a Keras model for semantic segmentation with ICNet.

How could I evaluate my trained model with mIoU (mean intersection over union) on the validation set? Any tips or useful articles?

Sorry, I don’t have a tutorial on calculating mIOU.
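For readers with the same question, here is a rough NumPy sketch of mean IoU computed from integer class masks. The function name mean_iou and the choice to skip classes that appear in neither mask are assumptions of this sketch. TensorFlow also provides tf.keras.metrics.MeanIoU, which may be more convenient inside a Keras workflow.

```python
import numpy as np


def mean_iou(y_true, y_pred, num_classes):
    """Mean intersection over union for integer class masks.

    Classes that appear in neither y_true nor y_pred are skipped
    (a choice made for this sketch).
    """
    y_true = np.asarray(y_true).ravel()
    y_pred = np.asarray(y_pred).ravel()
    # Confusion matrix: rows are true classes, columns are predicted classes
    cm = np.bincount(num_classes * y_true + y_pred,
                     minlength=num_classes ** 2)
    cm = cm.reshape(num_classes, num_classes)
    intersection = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - intersection
    present = union > 0
    return float(np.mean(intersection[present] / union[present]))


# Tiny 2x2 example mask with two classes
y_true = [[0, 0], [1, 1]]
y_pred = [[0, 1], [1, 1]]
print(mean_iou(y_true, y_pred, num_classes=2))
```

In this tiny example, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12. For a real validation set, the masks would come from the argmax of model.predict() over the class axis.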

Hello Jason,

Thank you for the detailed work.

Two quick questions:

1) Does it suffice to say that a CVscores.mean of 0.78 has better accuracy than a CVscores.mean of 0.58.

2) What is the implication of former?

Thanks

Yes.

What do you mean by importance exactly?

Mr. Brownlee,

Thank you much for your efforts. Very helpful.

Maybe I’m overthinking, but once I get a model with good results, it is wrapped in a function, so I can’t call it from a console. I’m used to using “model.predict(x)”. However, with this code, I get “‘model’ is not defined”. Is finalizing just copying the final model definition to the prompt, compiling it, then fitting it on the learning data, and predicting the unknown data?

Will your code produce the same results as defining the model at the prompt then running it through a KFold loop?

Thanks again!

You can train a separate final model and save it to file for later use, more here:

https://machinelearningmastery.com/train-final-machine-learning-model/

Hi Jason,

Since k-fold cross-validation RANDOMLY splits the data (3, 5, 10, etc.) and then trains the model, is it recommended to use GridSearch / k-fold on time series data (multi-class classification using LSTM)? Because the moment the time series data is randomized, the LSTM would lose its meaning, right?

No, it is not recommended. And yes, you are correct: shuffling would destroy the temporal order.

You can use walk-forward validation:

https://machinelearningmastery.com/backtest-machine-learning-models-time-series-forecasting/
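As a rough illustration of the idea, scikit-learn’s TimeSeriesSplit produces expanding-window splits in which every test fold comes strictly after its training fold. The eight-step series below is a made-up placeholder for real observations.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Placeholder series: eight time-ordered observations
series = np.arange(8)

splitter = TimeSeriesSplit(n_splits=3)
folds = []
for train_idx, test_idx in splitter.split(series):
    # Training indices always precede test indices; nothing is shuffled
    folds.append((train_idx, test_idx))
    print("train:", train_idx, "test:", test_idx)
```

A model would be fit on series[train_idx] and evaluated on series[test_idx] in each iteration, in the same way as the k-fold loop in the tutorial.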

Hello,

I see you split the data in a k-fold manner via scikit-learn tools to get a more accurate estimate.

My question is: is there a built-in function for doing the k-fold split in the temporal domain (for example, stock prices), where the order of the samples is meaningful?

Thanks,

MAK

Good question, yes this will help:

https://machinelearningmastery.com/how-to-use-the-timeseriesgenerator-for-time-series-forecasting-in-keras/

Hi Jason,

Great tutorial.

I am facing an issue where my loss is not decreasing.

I used categorical labels, but StratifiedKFold threw an error, which led me to convert the labels to numerical values.

Modified code:

input_img = Input(shape=(242, 242, 1))
kfold = StratifiedKFold(n_splits=6, shuffle=True, random_state=13)
cvscores = []
for train, test in kfold.split(images, labels):
    model = Model(input_img, model(input_img))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    # Fit the model
    #labels = to_categorical(labels)
    model.fit(images[train], labels[train], epochs=epochs, batch_size=batch_size, verbose=1)
    # evaluate the model
    scores = model.evaluate(images[test], labels[test], verbose=1)
    print("%s: %.2f%%" % (model.metrics_names[1], scores[1]*100))
    cvscores.append(scores[1] * 100)
print("%.2f%% (+/- %.2f%%)" % (numpy.mean(cvscores), numpy.std(cvscores)))

Result:

Epoch 1/100

4000/4000 [==============================] – 8s 2ms/step – loss: 7.1741 – acc: 0.5500

Epoch 2/100

4000/4000 [==============================] – 4s 917us/step – loss: 7.1741 – acc: 0.5500

Epoch 3/100

4000/4000 [==============================] – 4s 922us/step – loss: 7.1741 – acc: 0.5500

Epoch 4/100

4000/4000 [==============================] – 4s 955us/step – loss: 7.1741 – acc: 0.5500

Epoch 5/100

4000/4000 [==============================] – 4s 950us/step – loss: 7.1741 – acc: 0.5500

Epoch 6/100

4000/4000 [==============================] – 4s 948us/step – loss: 7.1741 – acc: 0.5500

Epoch 7/100

4000/4000 [==============================] – 4s 918us/step – loss: 7.1741 – acc: 0.5500

Epoch 8/100

4000/4000 [==============================] – 4s 918us/step – loss: 7.1741 – acc: 0.5500

The loss doesn’t seem to decrease. Could you let me know where I am going wrong?

Thanks

I have some suggestions here that might help:

https://machinelearningmastery.com/start-here/#better

Sir

To my understanding, cross-validation is a method to help choose a model or its hyperparameters.

For example, if I have

Neural Network 1 with 10 layers

Neural Network 2 with 100 layers

it can guide me in choosing either 1 or 2.

After identifying the network, to get my final model I should use the entire training set, run it for a certain number of epochs, and choose the parameters that provided good accuracy during the runs.

Is my presumption correct? Kindly clarify this doubt.

Almost. CV is only used to estimate the performance of the model.

You must then interpret the estimated performance of each model/config and choose.

You can do this directly or use statistical hypothesis testing methods, or other methods.

Yes, afterward, you fit on all data and start using the model.
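A hedged sketch of that workflow, using scikit-learn’s MLPClassifier on synthetic data as a quick stand-in for two Keras configurations. The configuration names, layer sizes, and the simple "pick the higher mean" rule are all assumptions for illustration. In practice you would also weigh the spread of the scores, or use a statistical test as mentioned above.

```python
import warnings

from sklearn.datasets import make_classification
from sklearn.exceptions import ConvergenceWarning
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier

warnings.filterwarnings("ignore", category=ConvergenceWarning)

# Synthetic data and two hypothetical network configurations
X, y = make_classification(n_samples=200, n_features=10, random_state=3)
configs = {"small": (4,), "large": (32, 32)}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=3)
results = {}
for name, hidden in configs.items():
    model = MLPClassifier(hidden_layer_sizes=hidden, max_iter=300,
                          random_state=3)
    scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    # CV only estimates performance; the fold models are discarded
    results[name] = (scores.mean(), scores.std())
    print("%s: %.3f (+/- %.3f)" % (name, scores.mean(), scores.std()))

# Choose a configuration, then fit the final model on all available data
best = max(results, key=lambda n: results[n][0])
final_model = MLPClassifier(hidden_layer_sizes=configs[best], max_iter=300,
                            random_state=3).fit(X, y)
print("chosen configuration:", best)
```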

Hi Mr. Jason

Firstly thank you for your very well explained tutorials

I would like to know how to compute the overall confusion matrix of a deep learning model when using the k-fold cross validation ?

Thanks.

You cannot.

A confusion matrix is for a single run only.

Cross validation estimates model performance over multiple runs.

My purpose is that if I can get the confusion matrix at each fold, then the overall confusion matrix can be obtained as the sum of all confusion matrices resulting from all folds. In fact, the performance measure (i.e. accuracy) of the model is the average value across all folds. Thus, by summing all confusion matrices, the accuracy of the model can be computed as the ratio between the sum of the diagonal elements of the resulting confusion matrix and the sum of all elements.

Thanks again!

The confusion matrix at each fold is computed only from the results of the model on the test set. I asked this because I have found, in various works in my field, that the authors use k-fold cross-validation to evaluate the model and at the same time draw the confusion matrix of the model.

I would expect the confusion matrix is reported based on a standard test set for the dataset.

I would not recommend it as each cell of the matrix would need to report mean and variance. It would be confusing.

Thanks a lot. In that case, do you have an idea of how to draw the total confusion matrix? In numerous papers that I have read, the authors used k-fold cross-validation and also presented a confusion matrix; for example, they use a title such as “confusion matrix obtained using 10-fold cross validation”.

Note : The sum of all elements of the matrix is equal to the size of the overall dataset.

Thanks.
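For anyone who wants to try the pooling described in this thread, the sketch below sums the per-fold test confusion matrices with scikit-learn. It illustrates the commenter’s proposal and is not a recommendation from the post. LogisticRegression and the synthetic three-class data are stand-ins chosen to keep it fast.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import StratifiedKFold

# Synthetic three-class data (an assumption for illustration)
X, y = make_classification(n_samples=150, n_features=8, n_informative=4,
                           n_classes=3, random_state=5)
labels = np.unique(y)

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=5)
total_cm = np.zeros((len(labels), len(labels)), dtype=int)
for train, test in kfold.split(X, y):
    model = LogisticRegression(max_iter=1000)  # stand-in for a Keras model
    model.fit(X[train], y[train])
    # Fixing `labels` keeps every per-fold matrix the same shape
    total_cm += confusion_matrix(y[test], model.predict(X[test]),
                                 labels=labels)

# Each sample is in exactly one test fold, so the entries sum to len(y)
pooled_accuracy = np.trace(total_cm) / total_cm.sum()
print(total_cm)
print("pooled accuracy: %.3f" % pooled_accuracy)
```

The pooled matrix hides the fold-to-fold variation, which is the concern raised in the reply above about needing a mean and variance per cell.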

Hi Jason, thanks a lot. I’m getting lots of help from your tutorials; I’m new to both Python and machine learning.

I took your code sample for stratified k-fold here, used it with some changes on my data, and got good results.

I am saving the best fitted model from the k-fold for future predictions. My question is how to save an average model, or somehow save the entire model.