Keras is one of the most popular deep learning libraries in Python for research and development because of its simplicity and ease of use.

The scikit-learn library is the most popular library for general machine learning in Python.

In this post, you will discover how you can use deep learning models from Keras with the scikit-learn library in Python.

This will allow you to leverage the power of the scikit-learn library for tasks like model evaluation and model hyper-parameter optimization.


Let’s get started.

- May/2016: Original post

- Update Oct/2016: Updated examples for Keras 1.1.0 and scikit-learn v0.18.

- Update Jan/2017: Fixed a bug in printing the results of the grid search.

- Update Mar/2017: Updated example for Keras 2.0.2, TensorFlow 1.0.1 and Theano 0.9.0.

- Update Mar/2018: Added alternate link to download the dataset as the original appears to have been taken down.

- Update Jun/2022: Updated code for TensorFlow 2.x and SciKeras.


Overview

Keras is a popular library for deep learning in Python, but the focus of the library is deep learning models. In fact, it strives for minimalism, focusing on only what you need to quickly and simply define and build deep learning models.

The scikit-learn library in Python is built upon the SciPy stack for efficient numerical computation. It is a fully featured library for general machine learning and provides many useful utilities in developing deep learning models. Not least of which are:

- Evaluation of models using resampling methods like k-fold cross validation

- Efficient search and evaluation of model hyper-parameters

The TensorFlow/Keras library used to ship a wrapper that let deep learning models be used as classification or regression estimators in scikit-learn. That wrapper has since been moved out of the library into a standalone Python module, SciKeras.

In the following sections, you will work through examples of using the KerasClassifier wrapper for a classification neural network created in Keras and used in the scikit-learn library.

The test problem is the Pima Indians onset of diabetes classification dataset. This is a small dataset with all numerical attributes that is easy to work with. Download the dataset and place it in your current working directory with the name pima-indians-diabetes.csv.

The following examples assume you have successfully installed TensorFlow 2.x, SciKeras, and scikit-learn. If you use the pip system for your Python modules, you may install them with:

```
pip install tensorflow scikeras scikit-learn
```


Evaluate Deep Learning Models with Cross Validation

The KerasClassifier and KerasRegressor classes in SciKeras take an argument model which is the name of the function to call to get your model.

You must define a function called whatever you like that defines your model, compiles it, and returns it.

In the example below, you will define a function create_model() that creates a simple multi-layer neural network for the problem.

You pass this function name to the KerasClassifier class by the model argument. You also pass in additional arguments of epochs=150 and batch_size=10. These are automatically bundled up and passed on to the fit() function, which is called internally by the KerasClassifier class.

In this example, you will use the scikit-learn StratifiedKFold to perform 10-fold stratified cross-validation. This is a resampling technique that can provide a robust estimate of the performance of a machine learning model on unseen data.

Next, use the scikit-learn function cross_val_score() to evaluate your model using the cross-validation scheme and print the results.

```python
# MLP for Pima Indians Dataset with 10-fold cross validation via sklearn
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense
from scikeras.wrappers import KerasClassifier
from sklearn.model_selection import StratifiedKFold
from sklearn.model_selection import cross_val_score
import numpy as np

# Function to create model, required for KerasClassifier
def create_model():
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

# fix random seed for reproducibility
seed = 7
np.random.seed(seed)
# load pima indians dataset
dataset = np.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# create model
model = KerasClassifier(model=create_model, epochs=150, batch_size=10, verbose=0)
# evaluate using 10-fold cross validation
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
results = cross_val_score(model, X, Y, cv=kfold)
print(results.mean())
```

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

Running the example creates and evaluates a total of 10 models, one per fold, and displays the final average accuracy. Because verbose=0 is passed to the wrapper, no per-epoch progress is printed.

```
0.646838691487
```
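To make the stratification step concrete, here is a minimal sketch of what StratifiedKFold does to the folds. It assumes synthetic, imbalanced labels (roughly 35% positive) in place of the Pima data so that no CSV file is needed; the variable names are illustrative only.

```python
# Sketch: inspect the class balance that StratifiedKFold preserves in each fold.
# The labels and inputs are synthetic stand-ins, not the Pima Indians dataset.
import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.RandomState(7)
y = (rng.rand(500) < 0.35).astype(int)  # synthetic binary labels
X = rng.rand(500, 8)                    # synthetic inputs with 8 features

kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)
overall = y.mean()

# fraction of positive labels in the held-out part of each fold
fold_fractions = []
for train_idx, test_idx in kfold.split(X, y):
    fold_fractions.append(y[test_idx].mean())

print("overall positive fraction: %.3f" % overall)
for i, frac in enumerate(fold_fractions):
    print("fold %d positive fraction: %.3f" % (i, frac))
```

Each held-out fold should show nearly the same positive fraction as the full label vector, which is why the per-fold accuracies are comparable to one another.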

In comparison, the following is an equivalent implementation with a neural network model in scikit-learn:

```python
# MLP for Pima Indians Dataset with 10-fold cross validation via sklearn
from sklearn.model_selection import StratifiedKFold
from sklearn.model_selection import cross_val_score
from sklearn.neural_network import MLPClassifier
import numpy as np

# fix random seed for reproducibility
seed = 7
np.random.seed(seed)
# load pima indians dataset
dataset = np.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# create model
model = MLPClassifier(hidden_layer_sizes=(12,8), activation='relu', max_iter=150, batch_size=10, verbose=False)
# evaluate using 10-fold cross validation
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
results = cross_val_score(model, X, Y, cv=kfold)
print(results.mean())
```

The role of the KerasClassifier is to work as an adapter that makes the Keras model behave like an MLPClassifier object from scikit-learn.
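The adapter idea can be illustrated without Keras at all. The sketch below is not how SciKeras is implemented; it is a hypothetical class, TinyLogisticClassifier, that wraps a hand-written logistic regression behind fit() and predict() so that scikit-learn utilities can drive it. The data is synthetic.

```python
# Sketch: the smallest useful scikit-learn-compatible classifier wrapper.
# TinyLogisticClassifier is a hypothetical name, not part of SciKeras or scikit-learn.
import numpy as np
from sklearn.base import BaseEstimator, ClassifierMixin
from sklearn.model_selection import StratifiedKFold, cross_val_score

class TinyLogisticClassifier(ClassifierMixin, BaseEstimator):
    def __init__(self, epochs=200, learning_rate=0.1):
        # constructor arguments become hyperparameters visible to get_params()
        self.epochs = epochs
        self.learning_rate = learning_rate

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y)
        self.classes_ = np.unique(y)
        target = (y == self.classes_[1]).astype(float)
        self.w_ = np.zeros(X.shape[1])
        self.b_ = 0.0
        # plain batch gradient descent on the logistic loss
        for _ in range(self.epochs):
            p = 1.0 / (1.0 + np.exp(-(X @ self.w_ + self.b_)))
            grad = p - target
            self.w_ -= self.learning_rate * (X.T @ grad) / len(X)
            self.b_ -= self.learning_rate * grad.mean()
        return self

    def predict(self, X):
        scores = np.asarray(X, dtype=float) @ self.w_ + self.b_
        return self.classes_[(scores > 0).astype(int)]

# two synthetic Gaussian blobs as a toy binary problem
rng = np.random.RandomState(7)
X = np.vstack([rng.normal(-1.0, 1.0, (100, 2)), rng.normal(1.0, 1.0, (100, 2))])
y = np.array([0] * 100 + [1] * 100)

model = TinyLogisticClassifier(epochs=200, learning_rate=0.1)
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=7)
results = cross_val_score(model, X, y, cv=kfold)
print(results.mean())
```

Because the class exposes its settings through the constructor and inherits get_params() and score(), the same object could also be handed to GridSearchCV. The wrapper in SciKeras serves the same purpose for a Keras model.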

Grid Search Deep Learning Model Parameters

The previous example showed how easy it is to wrap your deep learning model from Keras and use it in functions from the scikit-learn library.

In this example, you will go a step further. The function that you specify to the model argument when creating the KerasClassifier wrapper can take arguments. You can use these arguments to further customize the construction of the model. In addition, you know you can provide arguments to the fit() function.

In this example, you will use a grid search to evaluate different configurations for your neural network model and report on the combination that provides the best-estimated performance.

The create_model() function is defined to take two arguments, optimizer and init, both of which must have default values. This will allow you to evaluate the effect of using different optimization algorithms and weight initialization schemes for your network.

After creating your model, define the arrays of values for the parameter you wish to search, specifically:

- Optimizers for searching different weight values

- Initializers for preparing the network weights using different schemes

- Epochs for training the model for a different number of exposures to the training dataset

- Batches for varying the number of samples before a weight update

The options are specified in a dictionary and passed to the configuration of the GridSearchCV scikit-learn class. This class will evaluate a version of your neural network model for each combination of parameters (2 x 3 x 3 x 3 = 54 combinations of optimizers, initializations, epochs, and batches). Each combination is then evaluated using the default cross-validation scheme, which is 5-fold stratified cross validation in scikit-learn 0.22 and later (3-fold in earlier versions).

That is a lot of models and a lot of computation. This is not a scheme you want to use lightly because of the time it will take. It may be useful to design small experiments with a smaller subset of your data that will complete in a reasonable time. Searching the full grid is reasonable in this case because of the small network and the small dataset (fewer than 1000 instances and nine attributes).
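The cost of the search can be estimated before running it. The sketch below counts the combinations with scikit-learn's ParameterGrid, using the same values searched in this example. The fold count of 5 is an assumption (the GridSearchCV default in scikit-learn 0.22 and later), and the extra fit accounts for the default refit of the best configuration on all the data.

```python
# Sketch: count how many models a grid search will train before launching it.
from sklearn.model_selection import ParameterGrid

param_grid = dict(
    optimizer=['rmsprop', 'adam'],
    epochs=[50, 100, 150],
    batch_size=[5, 10, 20],
    model__init=['glorot_uniform', 'normal', 'uniform'],
)

n_combinations = len(ParameterGrid(param_grid))
n_folds = 5  # assumption: default cv of GridSearchCV in scikit-learn 0.22 and later
n_fits = n_combinations * n_folds + 1  # +1 for the final refit on the full dataset

print("combinations:", n_combinations)
print("total model fits:", n_fits)
```

Multiplying the number of fits by the time of one training run gives a rough budget for the whole search.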

Finally, the performance and combination of configurations for the best model are displayed, followed by the performance of all combinations of parameters.

```python
# MLP for Pima Indians Dataset with grid search via sklearn
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense
from scikeras.wrappers import KerasClassifier
from sklearn.model_selection import GridSearchCV
import numpy as np

# Function to create model, required for KerasClassifier
def create_model(optimizer='rmsprop', init='glorot_uniform'):
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, kernel_initializer=init, activation='relu'))
    model.add(Dense(8, kernel_initializer=init, activation='relu'))
    model.add(Dense(1, kernel_initializer=init, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer=optimizer, metrics=['accuracy'])
    return model

# fix random seed for reproducibility
seed = 7
np.random.seed(seed)
# load pima indians dataset
dataset = np.loadtxt("pima-indians-diabetes.csv", delimiter=",")
# split into input (X) and output (Y) variables
X = dataset[:,0:8]
Y = dataset[:,8]
# create model
model = KerasClassifier(model=create_model, verbose=0)
print(model.get_params().keys())
# grid search epochs, batch size and optimizer
optimizers = ['rmsprop', 'adam']
init = ['glorot_uniform', 'normal', 'uniform']
epochs = [50, 100, 150]
batches = [5, 10, 20]
param_grid = dict(optimizer=optimizers, epochs=epochs, batch_size=batches, model__init=init)
grid = GridSearchCV(estimator=model, param_grid=param_grid)
grid_result = grid.fit(X, Y)
# summarize results
print("Best: %f using %s" % (grid_result.best_score_, grid_result.best_params_))
means = grid_result.cv_results_['mean_test_score']
stds = grid_result.cv_results_['std_test_score']
params = grid_result.cv_results_['params']
for mean, stdev, param in zip(means, stds, params):
    print("%f (%f) with: %r" % (mean, stdev, param))
```

Note that in the dictionary param_grid, model__init was used as the key for the init argument to our create_model() function. The prefix model__ is required for KerasClassifier models in SciKeras to provide custom arguments.

This might take about 5 minutes to complete on a workstation when executed on the CPU (rather than a GPU). Running the example shows the results below.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

```
Best: 0.772167 using {'batch_size': 10, 'epochs': 150, 'model__init': 'normal', 'optimizer': 'rmsprop'}
0.691359 (0.024495) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'glorot_uniform', 'optimizer': 'rmsprop'}
0.690094 (0.026691) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'glorot_uniform', 'optimizer': 'adam'}
0.704482 (0.036013) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'normal', 'optimizer': 'rmsprop'}
0.727884 (0.016890) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'normal', 'optimizer': 'adam'}
0.709600 (0.030281) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'uniform', 'optimizer': 'rmsprop'}
0.694067 (0.019733) with: {'batch_size': 5, 'epochs': 50, 'model__init': 'uniform', 'optimizer': 'adam'}
0.720134 (0.038867) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'glorot_uniform', 'optimizer': 'rmsprop'}
0.743536 (0.022213) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'glorot_uniform', 'optimizer': 'adam'}
0.744809 (0.022928) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'normal', 'optimizer': 'rmsprop'}
0.734360 (0.024717) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'normal', 'optimizer': 'adam'}
0.742127 (0.035137) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'uniform', 'optimizer': 'rmsprop'}
0.729225 (0.035580) with: {'batch_size': 5, 'epochs': 100, 'model__init': 'uniform', 'optimizer': 'adam'}
0.717537 (0.033634) with: {'batch_size': 5, 'epochs': 150, 'model__init': 'glorot_uniform', 'optimizer': 'rmsprop'}
0.724039 (0.033990) with: {'batch_size': 5, 'epochs': 150, 'model__init': 'glorot_uniform', 'optimizer': 'adam'}
0.753926 (0.016855) with: {'batch_size': 5, 'epochs': 150, 'model__init': 'normal', 'optimizer': 'rmsprop'}
...
```

You can see that the grid search discovered that using a normal initialization scheme, rmsprop optimizer, 150 epochs, and a batch size of 10 achieved the best cross-validation score of approximately 77% on this problem.

For a fuller example of tuning hyperparameters with Keras, see the tutorial:

Summary

In this post, you discovered how to wrap your Keras deep learning models and use them in the scikit-learn general machine learning library.

You can see that using scikit-learn for standard machine learning operations such as model evaluation and model hyperparameter optimization can save a lot of time over implementing these schemes yourself.

Wrapping your model allowed you to leverage powerful tools from scikit-learn to fit your deep learning models into your general machine learning process.

Do you have any questions about using Keras models in scikit-learn or about this post? Ask your question in the comments, and I will do my best to answer.

First, this is extremely helpful. Thanks a lot.

I’m new to keras and i was trying to optimize other parameters like dropout and number of hidden neurons. The grid search works for the parameters listed above in your example. However, when i try to optimize for dropout the code errors out saying it’s not a legal parameter name. I thought specifying the name as it is in the create_model() function should be enough; obviously I’m wrong.

in short: if i had to optimize for dropout using GridSearchCV, how would the changes to your code look?

apologies if my question is naive, trying to learn keras, python and deep learning all at once. Thanks,

Shruthi

Great question.

As you say, you simply add a new parameter to the create_model() function called dropout_rate then make use of that parameter when creating your dropout layers.

Below is an example of grid searching dropout values in Keras:

Running the example produces the following output:

I hope that helps

Hi Jason,

Thanks for the post, this is awesome. I’ve found the grid search very helpful.

One quick question: is there a way to incorporate early stopping into the grid search? With a particular model I am playing with, I find it can often over-train and consequently my validation loss suffers. Whilst I could incorporate an array of epoch parameters (like in your example), it seems more efficient to just have it stop if the validation loss increases over a small number of epochs. Unless you have a better idea?

Thanks again!

Great comment Rish and really nice idea.

I don’t think scikit-learn supports early stopping in its parameter searching. You would have to rig up your own parameter search and add an early stop clause to it, or consider modifying sklearn itself.

I also wonder whether you could hook up Keras check-pointing to capture the best parameter combinations to file along the way (checkpoint callback), which would allow you to kill the search at any time.
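The "rig up your own parameter search" idea can be sketched as follows. This is a minimal sketch under stated assumptions rather than a SciKeras or scikit-learn feature: it uses scikit-learn's MLPClassifier as a stand-in so it runs without TensorFlow, synthetic data from make_classification, a single held-out validation split instead of k-fold, and a hypothetical helper named fit_with_early_stopping().

```python
# Sketch: a hand-rolled parameter search with an early-stopping clause.
# MLPClassifier and the synthetic data are stand-ins for a Keras model and real data.
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import ParameterGrid, train_test_split
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=400, n_features=8, random_state=7)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=7)

def fit_with_early_stopping(params, max_epochs=100, patience=5):
    """Train one epoch at a time; stop once validation accuracy stalls."""
    model = MLPClassifier(random_state=7, **params)
    best_score, best_epoch, stale = -np.inf, 0, 0
    for epoch in range(1, max_epochs + 1):
        # each partial_fit call performs one pass over the training data
        model.partial_fit(X_train, y_train, classes=np.unique(y))
        score = model.score(X_val, y_val)
        if score > best_score:
            best_score, best_epoch, stale = score, epoch, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_score, best_epoch

results = []
grid = ParameterGrid({"hidden_layer_sizes": [(12, 8), (24,)],
                      "learning_rate_init": [0.001, 0.01]})
for params in grid:
    score, epoch = fit_with_early_stopping(params)
    results.append((score, epoch, params))

best_score, best_epoch, best_params = max(results, key=lambda r: r[0])
print("Best: %f at epoch %d using %s" % (best_score, best_epoch, best_params))
```

The number of epochs no longer needs to be a grid dimension, since each configuration reports the epoch at which its validation score peaked.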

Thanks for the reply! 🙂 I wonder too!

Hey Jason,

Awesome article. Did you by any chance find a way to hookup callback functions to grid search? In this case it would be possible to have Tensorboard aggregating and visualizing the grid search outcomes.

This would be the ideal use case. Is there any example for this use case somewhere?

Hi Jason,

Great Post. Thanks for this.

One problem that I have always faced with training a deep learning model (in H2O as well) is that the predicted probability distribution is always flat (as in very little variation in probability across the sample). Any other ML model, e.g. RF/GBM, is easier to tune and gives good results in most cases. So my doubt is twofold:

1. Except for lets say image data where CNN might be a good thing to try, in what scenarios should we try to fit a deep learning model.

2. I think the issues that i face with deep learning models is usually due to underfitting. Can you please give some tips on how to tune a deep learning model (other ML models are easier to tune)

Thanks

Deep learning is good on raw data like images, text, audio and similar. It can work on complex tabular data, but often feature engineering + xgboost can do better in practice.

Keep adding layers/neurons (capacity) and training longer until performance flattens out.

Thanks. I will try to add more layers and add some regularization parameters as well

Good luck Rishabh, let me know how you go.

Hi Jason, I was able to tune the model, thanks to your post although GBM had a better fit. I had included prelu layers which improved the fit.

One question, is there an optimal way to find number of hidden neurons and hidden layer count or grid search the only option?

Tuning (grid/random) is the best option I know of Rishabh.

Thanks. Grid search takes a toll on my 16 GB laptop, hence searching for an optimal way.

It is punishing. Small grids are kinder on memory.

```
pip install pandas numpy tensorflow scikit-learn matplotlib
```

```python
import pandas as pd
import numpy as np
import tensorflow as tf
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import LSTM, Dense, Dropout
from sklearn.preprocessing import MinMaxScaler
from datetime import datetime, timedelta
import matplotlib.pyplot as plt

# Load the data (replace with a real CSV file)
data = pd.read_csv("lucky_jet_data.csv")

# Convert the time column to datetime
data["time"] = pd.to_datetime(data["time"])

# Turn the time into seconds elapsed since the start
start_time = data["time"].min()
data["seconds_since_start"] = (data["time"] - start_time).dt.total_seconds()

# Normalize the data
scaler = MinMaxScaler()
data_scaled = scaler.fit_transform(data[["previous_crash", "crash_value", "seconds_since_start"]])

# Build the sequences for the LSTM (the 10 previous rounds are used to predict the next one)
sequence_length = 10
X, y_crash, y_time = [], [], []
for i in range(len(data_scaled) - sequence_length):
    X.append(data_scaled[i:i+sequence_length, :])  # last 10 entries
    y_crash.append(data_scaled[i + sequence_length, 1])  # target odds
    y_time.append(data_scaled[i + sequence_length, 2])  # target time
X, y_crash, y_time = np.array(X), np.array(y_crash), np.array(y_time)

# Split the data into train/test
split = int(0.8 * len(X))
X_train, X_test = X[:split], X[split:]
y_train_crash, y_test_crash = y_crash[:split], y_crash[split:]
y_train_time, y_test_time = y_time[:split], y_time[split:]

# Define the LSTM model
model = Sequential([
    LSTM(50, return_sequences=True, input_shape=(sequence_length, 3)),
    Dropout(0.2),
    LSTM(50, return_sequences=False),
    Dropout(0.2),
    Dense(25, activation='relu'),
    Dense(2)  # predicts two values: [odds, time]
])

# Compile the model
model.compile(optimizer="adam", loss="mse")
model.summary()

# Train the model
history = model.fit(X_train, np.column_stack((y_train_crash, y_train_time)), epochs=50, batch_size=32, validation_data=(X_test, np.column_stack((y_test_crash, y_test_time))))

# Predict with the model
prediction_scaled = model.predict(X_test)

# Convert the normalized values back to real values
pred_crash = scaler.inverse_transform(np.column_stack((np.zeros(len(prediction_scaled)), prediction_scaled[:, 0], prediction_scaled[:, 1])))[:, 1]
pred_time = scaler.inverse_transform(np.column_stack((np.zeros(len(prediction_scaled)), prediction_scaled[:, 0], prediction_scaled[:, 1])))[:, 2]

# Convert the seconds into a readable time
predicted_time = [start_time + timedelta(seconds=s) for s in pred_time]

# Show a random prediction
index = np.random.randint(0, len(pred_crash))
print(f"📌 Prediction of the next odds: {pred_crash[index]:.2f}")
print(f"🕒 Estimated time of appearance: {predicted_time[index].strftime('%H:%M:%S')}")
```

Hello Kouame…Do you have a question regarding the content?

Hi Jason,

Thanks for the post. I’m a beginner with keras, and I met some problems using keras with sk-learn recently. If it’s convenient, could you do me a favor?

Details here:

http://stackoverflow.com/questions/39467496/error-when-using-keras-sk-learn-api

Thank you!!!

Ouch. Nothing comes to mind Xiao, sorry.

I would debug it by cutting it back to the simplest possible network/code that generates the problem then find the line that causes the problem, and go from there.

If you need a result fast, I would use the same decompositional approach to rapidly find a workaround.

Let me know how you go.

Thanks a lot, Jason.

I need the result fast if possible.

Hi, Jason. Got any idea?

Yes, I gave you an approach to debug the problem in the previous comment Xiao.

I don’t know any more than that, sorry.

Thanks, I’ll try it out.

Jason, Thanks for the tutorial it saved me a lot of time. I am running a huge amount of data on a remote server from shell files. The output of the model is written to an additional shell file in case there is errors. However when I run my code, following how you approach above, it outputs the status of training, i.e. epoch number and accuracy, for every model in gridsearch. Is there a way to suppress this output? I tried to use “verbose=0” as an additional argument both in calling “fit” which created an error and GridsearchCV which did not do anything.

Thanks

Great question Josh.

Pass verbose=0 into the constructor of your classifier, for example KerasClassifier(model=create_model, verbose=0) as in the listings above.

Hello Jason,

First of all thank you so much for your guides and examples regarding Keras and deep learning!! Please keep on going 🙂

Question 1

Is it possible to save the best trained model with grid and set some callbacks (for early stopping as well)? I wanted to implement saving the best model by doing

```python
checkpoint=ModelCheckpoint(filepath, monitor='val_acc', verbose=0, save_best_only=True, mode='max')
grid_result = grid.fit(X, Y, callbacks=[checkpoint])
```

but I get TypeError: fit() got an unexpected keyword argument 'callbacks'

Question 2

Is there a way to visualize the trained weights and literally see the created network? I want to make neural networks a bit more practical instead of only classification. I know you can plot the model: plot(model, to_file='model.png') but I want to implement my data in this model.

Thanks in advance,

Tom

Hi Tom, I’m glad you’re finding my material valuable.

Sorry, I don’t know about call-backs in the grid search. Sounds like it might just make a big mess (i.e. not designed to do this).

I don’t know about built in ways to visualize the weights. Personally, I like to look at the weights for production models to see if something crazy is going on – but just the raw numbers.

Hi Jason,

Thanks for the very well written article! Very clear and easy to understand.

I have a callback that does different types of learning rate annealing. It has four parameters I’d like to optimise.

Based on your above comment I’m guessing the SciKit wrapper won’t work for optimising this?

Do you know how I would do this?

Many thanks for your help.

David

You might have to write your own for loop David. In fact, I’d recommend it for the experience and control it offers.

Hi there, thanks for all your inspiration. When running the above example i get a slightly different result:

Best: 0.751302 using {'optimizer': 'rmsprop', 'batch_size': 5, 'init': 'normal', 'nb_epoch': 150}

i.e. init: normal and not uniform as in your example.

Is it normal with these variations?

Hi Soren,

Results can vary based on the initialization method. It is hard to predict how they will vary for a given problem.

Hey am getting an error “ValueError: init is not a legal parameter”

code is as follows.

```python
init = ['glorot_uniform', 'normal', 'uniform']
batches = numpy.array([50, 100, 150])
param_grid = dict(init=init)
print(str(self.model_comp.get_params()))
grid = GridSearchCV(estimator=self.model_comp, param_grid=param_grid)
grid_result = grid.fit(X_train, Y_train)
```

i can’t figure out what am doing wrong. Help me out here.

Sorry Saddam, the cause does not seem obvious.

Perhaps post more of the error message or consider posting the question on stack overflow?

Hi Saddam and Jason,

The error is due to the init parameter ‘glorot_uniform’.

Seems like it has been deprecated or something, once you remove this from the possible values (i.e., init=[‘uniform’,’normal’]) your code will work.

Thanks

It does not appear to be deprecated:

https://keras.io/initializations/

Don’t forget to add the parameter (related to parameters inside the creation of your model) that you want to iterate over as input parameter to the function that creates your model.

def create_model(init='normal'):

…

Check that you have defined 'init' as a parameter of the function. If not, define it as a parameter of the function. Once done, when you create the instance of KerasClassifier and pass it to GridSearchCV(), it will not throw the error.

Hi Jason,

recently, I tried to use K-fold cross validation for Image classification problem and found the following error

training Image X shape= (2041,64,64)

label y shape= (2041,2)

code:

```python
model = KerasClassifier(build_fn=creat_model, nb_epoch=15, batch_size=10, verbose=0)
# evaluate using 6-fold cross validation
kfold = StratifiedKFold(n_splits=6, shuffle=False, random_state=seed)
results = cross_val_score(model, x, y, cv=kfold)
print results
print 'Mean=',
print(results.mean())
```

an error:

```
IndexError Traceback (most recent call last)
in ()
2 # evaluate using 6-fold cross validation
3 kfold = StratifiedKFold(n_splits=6, shuffle=False, random_state=seed)
----> 4 results = cross_val_score(model, x, y, cv=kfold)
5 print results
6 print 'Mean=',
IndexError: too many indices for array
```

I don’t understand what is wrong here?

Thanks,

Sorry Tameru, I have not seen this error before. Perhaps try stack overflow?

Hi, I have the same problem. Do you have any easy way to solve it?

Thanks!

Sounds like it could be a data issue.

Perhaps your data does not have enough observations in each class to split into 6 groups?

Ideas:

– Try different values of k.

– Try using KFold rather than StratifiedKFold

– Try a train/test split

@ Coyan, I am still trying to solve it. let us try Jason’s advice. Thanks, Jason.

Hi

I also encounter this problem, and I guess the scikit-learn k-fold functions do not accept the “one hot” vectors. You may try on StratifiedShuffleSplit with the list of “one hot” vectors.

And that means you can only evaluate Keras model with scikit-learn in the binary classification problem.

Same error for me. I have 6 output classes that are one-hot encoded. So, how can we use cross_val_score for multi-class classification problems with Keras model? Is there any other method? I also want to find the optimum number of nodes in hidden layer with cross_val. Any suggestions? @JiaingLin @Jason.

More on how to configure a neural net for classification here:

https://machinelearningmastery.com/faq/single-faq/how-can-i-change-a-neural-network-from-regression-to-classification

More on how to configure the number of nodes and layers here:

https://machinelearningmastery.com/faq/single-faq/how-many-layers-and-nodes-do-i-need-in-my-neural-network
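One possible workaround for the one-hot problem raised in this thread is to hand StratifiedKFold integer class labels instead of the one-hot matrix. This is an assumption about the cause, following the commenters' guess, not a confirmed diagnosis of the errors reported above. The sketch uses synthetic six-class labels and recovers integer labels with argmax.

```python
# Sketch: StratifiedKFold expects 1-D class labels, so collapse one-hot rows first.
# The labels and inputs are synthetic; six classes mirror the commenter's setup.
import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.RandomState(7)
labels = rng.randint(0, 6, size=120)  # integer class ids for 6 classes
y_onehot = np.eye(6)[labels]          # one-hot matrix, shape (120, 6)
X = rng.rand(120, 4)

# collapse each one-hot row back to its class index, shape (120,)
y_int = np.argmax(y_onehot, axis=1)

kfold = StratifiedKFold(n_splits=6, shuffle=True, random_state=7)
fold_sizes = [len(test_idx) for _, test_idx in kfold.split(X, y_int)]

print(y_onehot.shape, y_int.shape, fold_sizes)
```

The fold indices returned by split() can then be used to slice the one-hot matrix if the network itself still needs one-hot targets.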

Dear Dr. Brownlee,

Your results here are around 75%. My experiment with my data result in around 85%. Is this considered good result?

Because DNN and RNN are known for their great performances. I wonder if it’s normal and how we can improve the results.

Regards,

Hi Ali, I have some idea to improve performance here:

https://machinelearningmastery.com/improve-deep-learning-performance/

Thanks for your reply.

Hi Jason,

I cannot tell you how awesome your tutorials are in terms of saving me time trying to understand Keras,

However, I have run into a conceptual wall re: training, validation and testing. I originally understood that wrapping Keras in Gridsearch helped me tune my hyperparameters. So with GridsearchCV, there is no separate training and validation sets. This I can live with as it is the case with any CV.

But then I want to use Keras to predict my outcomes on the model with optimized hyperparameters. Every example I see for model.fit/model.evaluate uses the argument validation_data (or validation_split), and I understand that we’re using our test set as a validation set, a real no-no.

Please see https://github.com/fchollet/keras/issues/1753 for a discussion of this and proof that I am not the only one confused.

SO MY QUESTION IS: In completing your wonderful cookbook how to’s for novices, after I have found all my hyperparameters, how do I run my test data?

If I use model.fit, won’t the test data be unlawfully used to retrain? What exactly is happening with the validation_data or _split argument in model.fit in keras????

Hi Miriam,

Generally, you can hold out a separate validation set for evaluating a final model and set of parameters. This is recommended.

Model selection and tuning can be performed on the rest of the data using a suitable resampling method (k-fold cross validation with repeats). Ideally, separate datasets would be used for algorithm selection and parameter selection, but this is often too expensive (in terms of available data).
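The workflow described in this reply can be sketched as below: hold out a final set first, tune with cross validation on the remainder, and touch the held-out set only once at the end. The sketch assumes synthetic data and uses scikit-learn's MLPClassifier as a stand-in for a wrapped Keras model so that it runs without TensorFlow.

```python
# Sketch: tune with cross validation on the training portion, then score once
# on a held-out set that the search never saw. Data and model are stand-ins.
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=400, n_features=8, random_state=7)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=7)

param_grid = {"hidden_layer_sizes": [(12, 8), (24,)], "alpha": [1e-4, 1e-2]}
grid = GridSearchCV(MLPClassifier(max_iter=200, random_state=7), param_grid, cv=3)

# the search (and its internal validation folds) only ever sees the training portion
grid.fit(X_train, y_train)

# GridSearchCV refits the best configuration on all of X_train by default
test_accuracy = grid.score(X_test, y_test)
print("best params:", grid.best_params_)
print("held-out accuracy: %.3f" % test_accuracy)
```

Nothing from X_test influences the choice of hyperparameters, so the final score is an estimate on data that played no part in tuning.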

i follow the step ,but error

ValueError: optimizer is not a legal parameter

i don’t know how to deal with it

I’m sorry to hear that wenger. Perhaps confirm that you have the latest versions of Keras and sklearn installed?

Hi wenger, that error is produced because of a coding error in the example. You should change the function so that it takes an argument called optimizer, like create_model(optimizer). In the same function, you also have to edit the model compile call, because a fixed optimizer is wrong if you want to do a grid search. A good solution is to replace 'adam' with optimizer=optimizer. With that change you can run the code again and find the best solver.

Happy deep learning!

I have met your problem, and I find that maybe you haven’t passed the optimizer to the model function.

hello how can i implement SVM machine learning algorithm by using scikit library with keras

Keras is for deep learning, not SVM. You only need sklearn.

Hi Jason,

As always very informative and skillfully written post.

In the face of an extremely unbalanced data set, how would you add an under-sampling pre-processing step to a pipeline in the example above?

Thanks !

I don’t have an example, but this post will help:

https://machinelearningmastery.com/tactics-to-combat-imbalanced-classes-in-your-machine-learning-dataset/

it’s a great one and very helpful, but i am looking for a python-keras recipe.
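A minimal sketch of one way to combine under-sampling with cross validation is shown below. It is an illustration under stated assumptions, not a recipe from the post: the data is synthetic and about 90/10 imbalanced, LogisticRegression stands in for a wrapped Keras model, and undersample() is a hypothetical helper. The key point is that only the training part of each fold is rebalanced, while the held-out part keeps its natural class ratio.

```python
# Sketch: random under-sampling applied inside each cross-validation fold.
# undersample() is a hypothetical helper; data and model are stand-ins.
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import StratifiedKFold

def undersample(X, y, rng):
    """Randomly drop rows so that every class keeps as many rows as the rarest one."""
    classes, counts = np.unique(y, return_counts=True)
    n_keep = counts.min()
    keep = np.concatenate([
        rng.choice(np.flatnonzero(y == c), size=n_keep, replace=False)
        for c in classes
    ])
    rng.shuffle(keep)
    return X[keep], y[keep]

X, y = make_classification(n_samples=1000, n_features=8,
                           weights=[0.9, 0.1], random_state=7)
rng = np.random.RandomState(7)
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=7)

recalls = []
for train_idx, test_idx in kfold.split(X, y):
    # rebalance the training rows only; the test rows are left untouched
    X_bal, y_bal = undersample(X[train_idx], y[train_idx], rng)
    model = LogisticRegression(max_iter=1000).fit(X_bal, y_bal)
    recalls.append(recall_score(y[test_idx], model.predict(X[test_idx])))

print("mean minority-class recall: %.3f" % np.mean(recalls))
```

Resampling before splitting would leak information about the test rows into training, which is why the helper is called inside the loop.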

Hey Jason,

I played around with your code for my own project and encountered an issue that I get different results when using the pipeline (both without standardization)

I posted this also on crossvalidated:

https://stats.stackexchange.com/questions/273911/different-results-for-keras-sklearn-wrapper-with-and-without-use-of-pipline

Can you help me with that?

Thank you

Neural networks are a stochastic algorithm that gives different results each time they are run (unless you fix the seed and make everything else the same).

See this post:

https://machinelearningmastery.com/randomness-in-machine-learning/

Could you provide an example in which data generator and fit_generator is being used..

This post might help with the data generator:

https://machinelearningmastery.com/image-augmentation-deep-learning-keras/

Hi Jason,

Thanks for the post, this is awesome. But I’m facing

ValueError: Can’t handle mix of multilabel-indicator and binary

when I set the scoring function to precision, recall, or f1 in cross validation. It works fine if I don’t set a scoring function, just like you did. Do you have an easy way to solve it?

Big Thanks!

Here’s the code :

scores2 = cross_val_score(model, X_train.as_matrix(), y_train, cv=10, scoring='precision')

Is this happening with the dataset used in this tutorial?

No, actually it’s happening with the breast cancer data set from the UCI Machine Learning Repository. I used train_test_split to split the data into training and testing. But when I tried to fit the model, I got

IndexError: indices are out-of-bounds.

So I tried to modify y_train by following code :

y_train = np_utils.to_categorical(y_train)

Do you have any idea? I have tried to solve this error for a week and still cannot fix the problem.

I believe all the variables in that dataset are categorical.

I expect you will need to use an integer encoding and a one hot encoding for each variable.

Okay, I will try your suggestion. Thanks for your reply 🙂

Hey Anni

Did you figure it out? I am kind of confused: since the output of a neural network is a probability, you cannot get metrics like precision and recall from it directly….

Jason is there something in deep learning like feature_importance in xgboost?

For images it makes no sense though in this case it can be important

There may be, I’m not aware of it.

You could use a neural net within an RFE process.

Thanks for your reply, Jason! Your blog is GOLD 🙂

Thanks Edward.

Do you know how to save the hyperparameters with the TensorBoard callback?

Sorry, I don’t have an example or tutorial.

Hi Jason!

I’m doing grid search with my own scoring function, but I need to get result like accuracy and recall from training model. So, I use cross_val_score with best params that I get from grid search. But then cross_val_score produce different result with best score that I got from grid search. Do you have any idea to solve my problem?

Thanks,

Yes, deep learning algorithms are stochastic.

You need to repeat evaluation experiments many times and take the average, see this post:

https://machinelearningmastery.com/evaluate-skill-deep-learning-models/
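As a minimal sketch of "repeat the evaluation and take the average", the snippet below uses scikit-learn's RepeatedStratifiedKFold. The synthetic data and the MLPClassifier are stand-ins chosen only to keep the example light; the assumption is that a wrapped Keras model would be passed in the same place.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier

# Synthetic data, for illustration only.
X, y = make_classification(n_samples=200, n_features=8, random_state=1)

# Stand-in for the wrapped Keras model (assumption).
model = MLPClassifier(hidden_layer_sizes=(12,), max_iter=300)

# 5 folds repeated 3 times gives 15 scores to average.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=1)
scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
print("mean=%.3f std=%.3f over %d runs" % (scores.mean(), scores.std(), len(scores)))
```

Comparing the mean and standard deviation of two configurations is usually more informative than comparing two single runs.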

Hi Jason!

I’m doing a grid search with my own function as the scoring function, but I need to report other metrics for the best params that I got from the grid search. So, I’m doing cross validation with the best params. But the problem is that cross validation produces a different result from the best score of the grid search. The difference is really significant. Do you have any idea how to solve this problem?

Thanks.

Yes, you must re-run the algorithm evaluation multiple times and report the average performance.

See this post:

https://machinelearningmastery.com/evaluate-skill-deep-learning-models/

How could we use Keras with ensembles, let’s take Voting. When base models are from sklearn library everything works fine, but with Keras I’m getting ‘TypeError: cannot create ‘sys.flags’ instances’. Do you know any work around?

You can perform voting manually on the lists of predictions from each sub model.
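A rough sketch of manual voting, assuming each sub model has already produced positive-class probabilities for the same test rows. The numbers below are made up for illustration.

```python
import numpy as np

# Hypothetical predicted probabilities of the positive class from three models
# (in practice, the output of each model's predict call on the same test rows).
probs = np.array([
    [0.9, 0.2, 0.6, 0.4],  # model A
    [0.8, 0.4, 0.3, 0.6],  # model B
    [0.7, 0.1, 0.7, 0.2],  # model C
])

# Soft voting: average the probabilities, then threshold.
soft_vote = (probs.mean(axis=0) >= 0.5).astype(int)

# Hard voting: threshold each model, then take the majority label.
labels = (probs >= 0.5).astype(int)
hard_vote = (labels.sum(axis=0) > labels.shape[0] / 2).astype(int)

print(soft_vote, hard_vote)
```

Because the models are trained and called separately, this avoids wrapping them in scikit-learn's own voting ensemble at all.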

I’m evaluating 3 algorithms (SVM-RBF, XGBoost, and MLP) against small datasets. Is it true if SVM and XGBoost are suitable for small datasets whereas deep learning require “relatively” large datasets to work well? Could you please explain to me?

Thanks a lot.

Perhaps, let the results and hard data drive your model selection.

Great article. It seems similar to what I am currently learning about. I’m using the sklearn wrapper for Keras; particularly the KerasClassifier and sklearn’s cross_val_score(). I am running into an issue with the n_jobs parameter for cross_val_score. I’d like to take advantage of the GPU on my Windows 10 machine, but am getting Blas GEMM launch failed when n_jobs = -1 or any value above 1. Also getting different results if I run it from the shell prompt vs. the python interpreter. Any ideas what I can do to get this to work?

Windows 10

Geforce 1080 Ti

Tensorflow GPU

Python 3.6 via Anaconda

Keras 2.0.5

Sorry James, I don’t have good advice on setting up GPUs on windows for Keras.

Perhaps you can post on stackoverflow?

Hello Jason,

when I run your first example, the one which uses StratifiedKFold, with a multiclass dataset of mine, I get an error. Isn’t it possible to run StratifiedKFold with multiclass? I also have the same problem, “IndexError: too many indices for array”, when I try to run a GridSearch with StratifiedKFold.

Try changing it from StratifiedKFold to KFold.
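One possible cause of that IndexError (an assumption, since the original code is not shown) is passing one-hot encoded targets to StratifiedKFold, which stratifies on a 1D array of class labels. If that is the case, an alternative to switching to KFold is to split on integer labels, sketched here with made-up data:

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Hypothetical one-hot encoded targets: 3 classes, 4 samples each.
y_onehot = np.eye(3)[np.repeat([0, 1, 2], 4)]
X = np.arange(len(y_onehot) * 2).reshape(len(y_onehot), 2)

# StratifiedKFold needs a 1D array of class labels to stratify on.
y_labels = np.argmax(y_onehot, axis=1)

kfold = StratifiedKFold(n_splits=2, shuffle=True, random_state=7)
fold_counts = []
for train_idx, test_idx in kfold.split(X, y_labels):
    # Count how many samples of each class land in the test fold.
    fold_counts.append(np.bincount(y_labels[test_idx], minlength=3))
    # The one-hot rows can still be used for training: y_onehot[train_idx]

print(fold_counts)
```

The indices returned by the split can then be used to slice the one-hot targets for fitting the network.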

Hi Jason,

I follow your steps and the program takes more than 40 minutes to run. Therefore, I gave up waiting in the middle. Do you know if there is any way to speed up the GridSearchCV? Or is it normal to wait more than 40 minutes to run this code on a 2013 Mac? Thank you

You could cut down the number of parameters that are being searched.

I often run grid searches that run for weeks on AWS.

Hi Jason,

How do I save the model when wrapping a Keras Classifier in Scikit’s GridSearchCV?

Do I treat it as a scikit object and use pickle/joblib or do I use the model.save method native to Keras?

Sorry, I have not tried this. It may not be supported out of the box.

Hi Jason,

I want to perform stratified K-fold cross-validation with a model that predicts a distance and not a binary label. Is it possible to provide a distance threshold to the cross-validation method in scikit-learn (or is there some other approach), i.e., distance < 0.5 to be treated as a positive label (y=1) and a negative label (y=0) otherwise?

Stratification requires a class value.

Perhaps you can frame your problem so the outcome distances are thresholded class labels.

Note, this is a question of how you frame your prediction problem and prepare your data, not the sklearn library.
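A small sketch of that framing, using the 0.5 threshold from the question. The data is random and only for illustration; the point is that the thresholded labels, not the raw distances, are what StratifiedKFold stratifies on.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 4))
distance = rng.uniform(0.0, 1.0, size=100)  # hypothetical target distances

# Frame the problem as classification: distance < 0.5 is the positive class.
y = (distance < 0.5).astype(int)

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
# The share of positives should be nearly identical in every test fold.
fold_rates = [y[test_idx].mean() for _, test_idx in kfold.split(X, y)]
print(fold_rates)
```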

Very cool!! And you can use Recursive Feature Elimination sklearn function by this way too?

Sure.

Please could you tell me formula for relu function , i need it for regression.

Sure, see here:

https://en.wikipedia.org/wiki/Rectifier_(neural_networks)

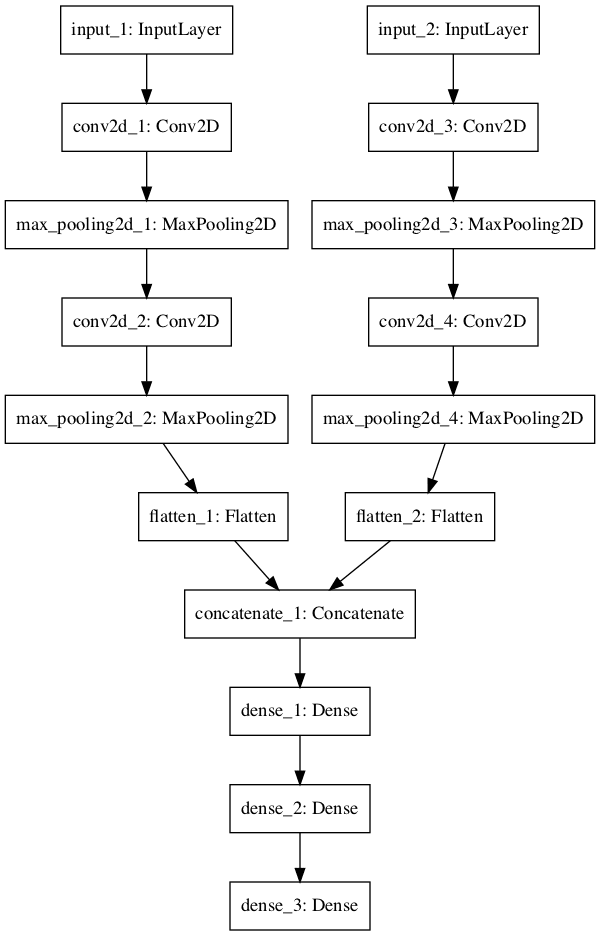
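For reference, the rectifier is simply max(0, x) applied element-wise. A minimal NumPy version:

```python
import numpy as np


def relu(x):
    # Rectified linear unit: max(0, x), applied element-wise.
    return np.maximum(0.0, x)


print(relu(np.array([-2.0, -0.5, 0.0, 1.5])))
```

For regression, relu is typically used in the hidden layers, while the output layer is often left linear so that it can produce any real value.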

Is it possible to use your code with more complex model architectures? I have 3 different inputs and branches (with ConvLayers), which are concatenated at the end to form a dense layer.

I tried to call the grid.fit() function as follows:

grid_result = grid.fit({'input_1': train_input_1, 'input_2': train_input_2, 'input_3': train_input_3}, {'main_output': lebels_train})

I’m getting an error:

“ValueError: found input variables with inconsistent numbers of samples: [3, 1]”

Do you have any experience on that?

I don’t, sorry.

I would recommend using native Keras.

from keras.models import Sequential
from keras.layers import Dense
from keras.wrappers.scikit_learn import KerasClassifier
from sklearn.model_selection import StratifiedKFold
from sklearn.model_selection import cross_val_score
import numpy

def create_model():
    # create model
    model = Sequential()
    model.add(Dense(12, input_dim=8, activation='relu'))
    model.add(Dense(8, activation='relu'))
    model.add(Dense(1, activation='sigmoid'))
    # Compile model
    model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

seed = 7
numpy.random.seed(seed)
dataset = numpy.loadtxt("/home/nasrin/nslkdd/NSL_KDD-master/KDDTrain+.csv", delimiter=",")
X = dataset[:,0:41]
Y = dataset[:,41]
model = KerasClassifier(build_fn=create_model, epochs=150, batch_size=10, verbose=0)
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
results = cross_val_score(model, X, Y, cv=kfold)
print(results.mean())

Using TensorFlow backend.

Traceback (most recent call last):
  File "nsl2.py", line 20, in
    dataset = numpy.loadtxt("/home/nasrin/nslkdd/NSL_KDD-master/KDDTrain+.csv", delimiter=",")
  File "/home/nasrin/.local/lib/python3.5/site-packages/numpy/lib/npyio.py", line 1024, in loadtxt
    items = [conv(val) for (conv, val) in zip(converters, vals)]
  File "/home/nasrin/.local/lib/python3.5/site-packages/numpy/lib/npyio.py", line 1024, in
    items = [conv(val) for (conv, val) in zip(converters, vals)]
  File "/home/nasrin/.local/lib/python3.5/site-packages/numpy/lib/npyio.py", line 725, in floatconv
    return float(x)
ValueError: could not convert string to float: b'tcp'

Sorry to bother you, but what step did I miss here?

Sorry, the error is not clear.

I list a ton of place to get help with Keras here:

https://machinelearningmastery.com/get-help-with-keras/
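The last line of the traceback suggests that the CSV contains text values such as tcp, which numpy.loadtxt cannot convert to float. One possible approach, assuming pandas is available, is to load the file with pandas and one-hot encode the text columns. The rows and column layout below are made up; the real file will differ.

```python
import io

import pandas as pd

# Made-up rows in the spirit of the file in the traceback (no header row).
csv_text = "0,tcp,http,181,1\n0,udp,domain,105,0\n2,tcp,ftp,239,1\n"
df = pd.read_csv(io.StringIO(csv_text), header=None)

X_raw = df.iloc[:, :-1]        # all columns but the last are inputs
y = df.iloc[:, -1].to_numpy()  # last column is the class label

# One-hot encode the text columns; numeric columns pass through unchanged.
text_cols = [c for c in X_raw.columns if not pd.api.types.is_numeric_dtype(X_raw[c])]
X = pd.get_dummies(X_raw, columns=text_cols).to_numpy(dtype="float32")
print(X.shape, y.shape)
```

After encoding, the number of input columns changes, so the input_dim of the first Dense layer would need to match X.shape[1].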

Do you know, how to do K.clear_session() inside cross_val_score(), between folds?

I have huge CNN networks, but they fit in my memory when I do just one training run. The problem comes when I do cross-validation using scikit-learn and the cross_val_score function. I see that memory usage increases with each fold. Do you know how to change that? After all, after each fold we only have to remember the results, not the huge model with all its weights.

I’ve tried to use the on_train_end callback from Keras, but this doesn’t work as the model is wiped out before evaluating. So do you know if another solution exists? Unfortunately I don’t see any callbacks in the cross_val_score function…

I will be very glad for your help 🙂

No, sorry.

So many thanks for such a helpful code! I have a problem, despite defining the init both in the model and the dictionary in the same way that you have defined it here, I get an error:

'{} is not a legal parameter'.format(params_name))

ValueError: init is not a legal parameter

Could you please help me with this problem?

Perhaps check your Python is installed correctly and that you copied the code exactly?

This tutorial can help you setup and test your environment:

https://machinelearningmastery.com/setup-python-environment-machine-learning-deep-learning-anaconda/

Hi,

Thanks for your nice post.

I have tried to use AdaBoostClassifier(model, n_estimators=2, learning_rate=1.5, algorithm="SAMME")

and used a CNN as ‘model’. However, I get the following error:

  File "", line 1, in
    runfile('/home/aboozar/Sphere_FE/adaboost/adaboost_CNN3.py', wdir='~/adaboost')
  File "/usr/local/lib/python2.7/dist-packages/spyder/utils/site/sitecustomize.py", line 688, in runfile
    execfile(filename, namespace)
  File "/usr/local/lib/python2.7/dist-packages/spyder/utils/site/sitecustomize.py", line 93, in execfile
    builtins.execfile(filename, *where)
  File "/~/adaboost_CNN3.py", line 234, in
    bdt_discrete.fit(X_train, y_train)
  File "/usr/local/lib/python2.7/dist-packages/sklearn/ensemble/weight_boosting.py", line 413, in fit
    return super(AdaBoostClassifier, self).fit(X, y, sample_weight)
  File "/usr/local/lib/python2.7/dist-packages/sklearn/ensemble/weight_boosting.py", line 130, in fit
    self._validate_estimator()
  File "/usr/local/lib/python2.7/dist-packages/sklearn/ensemble/weight_boosting.py", line 431, in _validate_estimator
    % self.base_estimator_.__class__.__name__)
ValueError: KerasClassifier doesn't support sample_weight.

Do you have any advice?

Sorry, the cause of the fault is not clear, perhaps one of these resources will be helpful:

https://machinelearningmastery.com/get-help-with-keras/

Hi,

I am new to keras. Just copied the above code with grid and executed it but getting this error:

“ValueError: init is not a legal parameter”

Your guidance is appreciated.

Double check that you copied all of the code with the same spacing.

Also double check you have the latest version of Keras and sklearn installed.

Hi, great tutorial, so thanks!

I have a question: if I want to use KerasClassifier with my own score function, let’s say maximizing F1, instead of accuracy, in a grid search scenario or similar.

What should I do?

Thanks!

You can specify the scoring function used by sklearn when evaluating the model with CV or what have you, the “scoring” attribute.

Thx for the answer. But, if I understood correctly, the score function of kerasclassifier must be differentiable, since it is used also as loss function, and F1 is not.

No, that is the loss function of the keras model itself, a different thing from the sklearn’s evaluation of model predictions.

Hi Jason, your blog is amazing. I have your Deep Learning book as well.

I have a question: I don’t want the stratified KFold in my code. I have my own validation data. Can I train my model on a given data and check the best scores on a different validation data using Grid Search?

Good question, I’m not sure off hand.

The “cv” argument says it can take an iterable, perhaps you can provide your pairs of train/test to it?

http://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GridSearchCV.html
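One way to try this idea is scikit-learn's PredefinedSplit, which builds a single train/validation split from a marker array and can be passed as the cv argument. This is a sketch under assumptions: the data is synthetic and MLPClassifier stands in for the wrapped Keras model.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, PredefinedSplit
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=150, n_features=8, random_state=3)
X_train, y_train = X[:100], y[:100]  # your training data
X_val, y_val = X[100:], y[100:]      # your own validation data

# -1 marks rows that are only ever used for training;
# 0 marks the rows of the single validation fold.
test_fold = np.concatenate([np.full(len(X_train), -1), np.zeros(len(X_val), dtype=int)])
cv = PredefinedSplit(test_fold)

model = MLPClassifier(max_iter=200, random_state=3)  # stand-in for the Keras wrapper
grid = GridSearchCV(model, {"hidden_layer_sizes": [(4,), (12,)]}, cv=cv, refit=False)
grid.fit(np.vstack([X_train, X_val]), np.concatenate([y_train, y_val]))
print(grid.best_params_, grid.best_score_)
```

Here refit=False is a deliberate choice: with refitting enabled, the final model would be trained on the training and validation rows together.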

HI Jason…

Thanks so much for this set of articles on Keras. I think it’s simply awesome.

I have a question about the best way to run the Grid Search Deep Learning Model Parameters procedure on a Spark cluster. Is it just a matter of porting the code there without any change, with the optimization magically enabled (each combination of parameters tested in parallel on a different node), or should we import other libraries or apply some changes to enable that?

thanks a lot in advance

Sorry, I cannot give you good advice for tuning Keras model with Spark.

How do I do cross validation when I have a multi-label classification problem?

Whenever I pass Y_train, I get ‘IndexError: too many indices for array’. How do I resolve this?

See this tutorial for an example:

https://machinelearningmastery.com/tutorial-first-neural-network-python-keras/

Hi Jason,

How do you obtain the precision and recall scores from the model when using k-folds with KerasClassifier? Is there a method of generating the sklearn.metrics classification report after applying cross_val_score?

I need these values as my dataset is imbalanced and I want to compare the results from before and after undersampling the data by generating a confusion matrix, ROC curve and precision-recall curve.

Yes, change the “scoring” argument to one or a list of metrics to report.
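A sketch of reporting several metrics at once, using cross_validate for the scores and cross_val_predict for out-of-fold predictions that can feed a confusion matrix. The imbalanced toy data and the MLPClassifier are stand-ins (assumptions) for the real dataset and the wrapped Keras model.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import StratifiedKFold, cross_val_predict, cross_validate
from sklearn.neural_network import MLPClassifier

# Imbalanced toy data (roughly 80% / 20%).
X, y = make_classification(n_samples=300, n_features=8, weights=[0.8, 0.2], random_state=5)
model = MLPClassifier(hidden_layer_sizes=(12,), max_iter=300, random_state=5)  # stand-in
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=5)

# Several metrics in one pass; results are keyed as test_<metric>.
scores = cross_validate(model, X, y, cv=kfold, scoring=["precision", "recall", "f1"])
print({k: v.mean() for k, v in scores.items() if k.startswith("test_")})

# Out-of-fold predictions can feed a confusion matrix or classification report.
y_pred = cross_val_predict(model, X, y, cv=kfold)
cm = confusion_matrix(y, y_pred)
print(cm)
```

These scorers work on predicted class labels, so the estimator's predict method has to return labels rather than probabilities.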

Hi Brownlee,

Thanks for the wonderful material on Machine Learning and Deep Learning. As suggested, I have a dataset which is already split into training and test sets. Using Keras, how do I train the model and then make predictions on the test data?

Learn more about training a final model here:

https://machinelearningmastery.com/train-final-machine-learning-model/

Hi, Jason

How to find the best number of hidden layers and number of neurons?

Can you post the python code? I didn’t find any useful posts by Google.

Thank you very much.

Great question.

You must use trial and error with your specific model on your specific data.

Hi, Jason

Thanks for your response.

I still feel confused. I don’t know how to do it (use grid search, or just try and try again?).

Can you provide a snippet of Python code for the example in this course?

This post may help:

https://machinelearningmastery.com/grid-search-hyperparameters-deep-learning-models-python-keras/
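The trial-and-error search can be automated with a grid over candidate architectures. The sketch below uses MLPClassifier as a stand-in because its hidden_layer_sizes parameter expresses both the number of layers and the neurons per layer; with a Keras build function, the assumption is that you would add equivalent arguments to create_model and list them in the grid.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=200, n_features=8, random_state=7)

# Each tuple is one architecture: its length is the number of hidden layers,
# its values are the neurons in each layer.
param_grid = {"hidden_layer_sizes": [(8,), (12,), (12, 8), (16, 8, 4)]}

model = MLPClassifier(max_iter=300, random_state=7)  # stand-in for a Keras model
grid = GridSearchCV(model, param_grid, cv=3, scoring="accuracy")
grid_result = grid.fit(X, y)
print("Best: %f using %s" % (grid_result.best_score_, grid_result.best_params_))
```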

Hi, Jason

Thank you very very much.

You’re welcome.

Hi Jason – thanks for the post. I was not aware of this wrapper. Do we have a similar wrapper for regressor too? I am new to ML and I was trying to do house price prediction problem. I see that it can be done with scikit random forest regressors. But I want to see if we can do the same with keras as well, since I started with keras and find it little easier. I tried with a simple sequential model, with multiple layers, but it did not work. Can you pls let me know how can I implement keras for my problem?

Thanks.

Yes, there is a wrapper for regression.

hello

I face to the error:

what is the problem?

You may not have it imported?

hello

again after importing Kerasclassifier, for Kfold what should i import?

from keras.wrappers.scikit_learn import KerasClassifier

def create_model():
    model=Sequential()
    …
    model.compile(…)
    return model

model=KerasClassifier(build_fn=create_model, epochs=150, batch_size=10)
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)

NameError Traceback (most recent call last)
in ()
      6 return model
      7 model=KerasClassifier(build_fn=create_model, epochs=150, batch_size=10)
----> 8 kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)

NameError: name 'StratifiedKFold' is not defined

thank you

Looks like you are still missing some imports.

Consider using existing code from the blog post as a starting point?

Hi

Thanks for the brilliant post. I have one question. Why are we not passing epochs and batches as parameters to the create_model function? Can you please help me understand this?

Regards

Subbu

Because we are passing them when we fit the model. The function is only used to define the model, not fit it.

Hi Jason, even though your post seems very straight-forward, still I struggle to implement this grid search approach. It does not give me any error message, BUT it just runs on and on forever without printing out anything. I deliberately tried it with very few epochs and very few hyperparameters to search. Without grid search, one epoch runs through extremely fast, so I don’t think that I just need to give it more time. It simply doesn’t do anything.

My code:

Thanks a lot for any help!!

Perhaps try using a smaller sample of your data?

Perhaps perform the grid search manually with your own for loop? or distributed across machines?
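A manual grid search with a plain for loop might look like the sketch below. ParameterGrid from scikit-learn only enumerates the combinations; the model and data are stand-ins (assumptions) for the real ones. One practical benefit is that progress is printed after every combination, which helps when a search appears to hang.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import ParameterGrid, cross_val_score
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=200, n_features=8, random_state=11)
grid = ParameterGrid({"hidden_layer_sizes": [(8,), (12,)], "alpha": [1e-4, 1e-2]})

results = []
for params in grid:
    model = MLPClassifier(max_iter=200, random_state=11, **params)  # stand-in model
    score = cross_val_score(model, X, y, cv=3).mean()
    results.append((score, params))
    print("%.3f with %r" % (score, params))  # visible after each combination

best_score, best_params = max(results, key=lambda item: item[0])
print("Best: %.3f using %r" % (best_score, best_params))
```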

How about changing verbose to verbose=9

model = KerasClassifier(build_fn=create_model, verbose=9)

Not familiar with that setting. What does it do?

Nothing can be more appreciated than looking at the replies that you have made to other people’s issues.

I also have an issue. I want to train an SVM classifier on the weights that I have already saved from a model that used the fully connected layer of a ResNet. How do I feed the values in to the SVM classifier?

Any guidance is appreciated.

Sorry, I don’t know how to load neural net weights into an SVM.

Hi Jason,

Thank you for your tutorials.

Is there any way to fit the model without using ‘KerasClassifier’?

Because I want to train the model with augmented data, which is achieved by using ‘ImageDataGenerator’.

More specifically,

You can pass different X_train and Y_train arguments to the ‘fit()’ function, as written below.

history = model.fit(X_train, Y_train, nb_epoch=nb_epoch, batch_size=batch_size, shuffle=True, verbose=1)

However, you can’t pass X_train and Y_train to ‘KerasClassifier’, as written below, because it has no arguments for input data.

model = KerasClassifier(build_fn=create_model, epochs=50, batch_size=1, verbose=0)

Thanks in advance!

Yes, you can use the Keras API directly:

https://machinelearningmastery.com/5-step-life-cycle-neural-network-models-keras/

Here is an example of data augmentation:

https://machinelearningmastery.com/image-augmentation-deep-learning-keras/

Hi Jason,

Thank you for the article

Can you explain how the k-fold cross validation example with scikit-learn is different from just using a validation split of 1/k in Keras when fitting a model? Sorry, I’m new to machine learning. Is the only difference that the validation split option in Keras never changes or shuffles its validation data?

Thanks in advance!

A validation split is a single split of the data. One model evaluated on one dataset.

k-fold cross-validation creates k models evaluated on k disjoint test sets.

It is a better estimate of the skill of the model trained on a random sample of data of a given size. But it comes at an increased computational cost, especially for deep learning models that are really slow to train.
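The difference can be seen on row indices alone. In this small sketch, a single split holds out one set of rows, while k-fold produces k held-out sets that are disjoint and together cover every row.

```python
import numpy as np
from sklearn.model_selection import KFold, train_test_split

indices = np.arange(10)

# A single validation split: one held-out set, one evaluation.
train_idx, val_idx = train_test_split(indices, test_size=0.2, random_state=1)

# k-fold: k held-out sets that are disjoint and together cover every row.
kfold = KFold(n_splits=5, shuffle=True, random_state=1)
test_folds = [test for _, test in kfold.split(indices)]
covered = np.sort(np.concatenate(test_folds))

print(val_idx)
print(test_folds)
```

Each of the k folds gets its own freshly trained model, which is where the extra computational cost comes from.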

I have read in a few places that k-fold CV is not very common with DL models as they are computationally heavy. But k-fold CV is also used to pick an “optimal” decision/classification threshold for a desired FPR, FNR, etc. before going to the test data set. Do you have any suggestions on how to do “threshold optimization” with DL models? Is k-fold CV the only option?

Thanks!

Makes sense.

Yes, for each split you can estimate the threshold to use from the train data and test it on the hold out fold.
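A sketch of that procedure: within each fold the threshold is chosen on the training portion only and then checked on the held-out portion. Choosing by F1, the candidate grid and the LogisticRegression stand-in are all assumptions for illustration; any model that outputs probabilities, and any target such as a desired FPR, could be substituted.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import StratifiedKFold

X, y = make_classification(n_samples=300, n_features=8, weights=[0.8, 0.2], random_state=2)
candidates = np.linspace(0.1, 0.9, 17)
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

fold_results = []
for train_idx, test_idx in kfold.split(X, y):
    model = LogisticRegression(max_iter=1000)  # stand-in for any probabilistic model
    model.fit(X[train_idx], y[train_idx])
    # Choose the threshold on the training fold only.
    p_train = model.predict_proba(X[train_idx])[:, 1]
    f1_by_threshold = [f1_score(y[train_idx], (p_train >= t).astype(int)) for t in candidates]
    threshold = candidates[int(np.argmax(f1_by_threshold))]
    # Check the chosen threshold on the held-out fold.
    p_test = model.predict_proba(X[test_idx])[:, 1]
    fold_results.append((threshold, f1_score(y[test_idx], (p_test >= threshold).astype(int))))

print(fold_results)
```

The spread of the chosen thresholds across folds gives a rough idea of how stable the choice is.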

Which one gives better results: the Keras scikit-learn wrapper class, or general machine learning algorithms such as KNN, SVM, decision trees and random forests?

Please help me. I am confused.

scikit-learn provides those algorithms.

Hey Jason,

I’ve followed your blog for some time now and I find your posts on ML very useful, as it sort of fills out some of the holes that many documentation sites leave out. For example, your posts on Keras are lightyears ahead of Keras’ own documentation in terms of clarity. So, please keep up the fantastic work mate.

I have run into a periodic problem when using Keras. Far from every time, but occasionally (I’d say 1 in every 10 times or so), the code fails with this error:

Exception ignored in: <bound method BaseSession.__del__ of >
Traceback (most recent call last):
  File "/home/mede/virtualenv/lib/python3.5/site-packages/tensorflow/python/client/session.py", line 707, in __del__

I believe it’s the same error that these stackoverflow posts describe: https://stackoverflow.com/questions/40560795/tensorflow-attributeerror-nonetype-object-has-no-attribute-tf-deletestatus and https://github.com/tensorflow/tensorflow/issues/3388 Naturally, I have tried to apply the fixes that those posts suggest. However, since (as far as I can see) the error occurs within the loop that executes the grid search I cannot delete the Tensorflow session between each run. Do you know if there is, or can you think of, a workaround for this?

Thanks in advance!

I have not seen this before sorry.

Perhaps ensure that all libs and dependent libs are up to date?

Perhaps move to Py 3.6?

OK, I’ll give it a shot, thanks.

Thanks for the great content!

I’m looking for some guidance on building learning curves that specifically show the training and test error of a sequential keras model as the training examples increases. I’ve put together some code already and have gotten hung up on an error that is making me rethink my approach. Any suggestions would be appreciated.

For reference this is the current error:

TypeError: Cannot clone object ” (type ): it does not seem to be a scikit-learn estimator as it does not implement a ‘get_params’ methods.

Here is an example:

https://machinelearningmastery.com/display-deep-learning-model-training-history-in-keras/

Thanks Jason. I had actually seen that already and it was helpful. Since I’m looking at how the losses change as the number of training examples (not number of epochs) increase I need to train on several subsets of data. In your example mentioned, would you simply loop over what you have while passing the subsetted data? I’ve been trying to use scikit learns learning_curve function while creating a scorer to be used with the sequential model I’m passing.

Got a post at stack overflow if interested.

https://stackoverflow.com/questions/51291980/using-sequential-model-as-estimator-in-learning-curve-function
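The "Cannot clone object" error typically appears when a bare Keras model is passed to a scikit-learn utility, because those utilities expect an estimator with get_params. Assuming the model is wrapped in a scikit-learn compatible estimator, learning_curve can train on growing subsets directly. The sketch below uses MLPClassifier as a stand-in and reports classification error (1 - accuracy) rather than loss.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.neural_network import MLPClassifier

X, y = make_classification(n_samples=300, n_features=8, random_state=4)
model = MLPClassifier(hidden_layer_sizes=(12,), max_iter=300, random_state=4)  # stand-in

train_sizes, train_scores, test_scores = learning_curve(
    model, X, y, train_sizes=np.linspace(0.2, 1.0, 5), cv=3, scoring="accuracy"
)

# Average over the folds and convert accuracy to error.
train_error = 1.0 - train_scores.mean(axis=1)
test_error = 1.0 - test_scores.mean(axis=1)
for n, tr, te in zip(train_sizes, train_error, test_error):
    print("n=%d train_error=%.3f test_error=%.3f" % (n, tr, te))
```

The two error arrays can then be plotted against train_sizes to get the learning curve.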

Hi Jason

The first example of using KerasClassifier to do cross validation is only for accuracy. Is there any way to get the f1 score or recall?

The neural network always returns probabilities. So for binary classification, how does cross_val_score work? (I tried, and it does not work.)

Yes, you can specify any scoring metric you prefer.

Set the “scoring” argument to cross_val_score().

Learn more here:

http://scikit-learn.org/stable/modules/generated/sklearn.model_selection.cross_val_score.html

Hi Jason,

How about if I have multiple models trained on same data. Can you give an example of using VotingClassifier with keras models??

Thanks!

Thanks for the suggestion.

Hi Jason,

I’ve learnt a lot from your website and books. I really thank you for your help.

An observation I’ve made, looking inside the Ebook: Deep Learning With Python and other books from you.

most / nearly all chapters + code is available on your website as separate blogs,

so what’s the added advantage for someone who purchases the book?

Thanks!

Yes, I explain more here:

https://machinelearningmastery.com/faq/single-faq/how-are-your-books-different-from-the-blog

Hi Jason,

Traceback (most recent call last):
  File "H:/pycharm_workspace/try_process/model1.py", line 61, in
    model_change.fit(X_train,Y_train)
  File "J:\python_3.6\lib\site-packages\keras\wrappers\scikit_learn.py", line 210, in fit
    return super(KerasClassifier, self).fit(x, y, **kwargs)
  File "J:\python_3.6\lib\site-packages\keras\wrappers\scikit_learn.py", line 139, in fit
    **self.filter_sk_params(self.build_fn.__call__))
TypeError: __call__() missing 1 required positional argument: 'inputs'

What does the error mean?

I have some suggestions here that might help:

https://machinelearningmastery.com/faq/single-faq/why-does-the-code-in-the-tutorial-not-work-for-me

Hi Jason,

Is it possible to wrap SVM classifier in scikit with a Keras CNN feature extraction module?

The outputs of a CNN (features) can be fed to an SVM.

Thank You, Jason.

Instead of extracting the features using CNN and then classifying using SVM, is it possible to replace the fully connected layers of CNN with SVM module available in scikit learn and to perform an end-to-end training?

Directly? Perhaps, I have not tried.

sir,

Which Anaconda prompt is best for running the hyperparameter grid search so that it executes fast?

The command line:

https://machinelearningmastery.com/faq/single-faq/how-do-i-run-a-script-from-the-command-line

Sir: I use the Keras API to AdaBoost an LSTM in scikit-learn, but I got some bugs.

import numpy as np
import matplotlib.pyplot as plt
import pandas as pd
from keras.layers.core import Dense, Activation, Dropout
from keras.models import Sequential
import time
import math
from keras.layers import LSTM
from sklearn.preprocessing import MinMaxScaler
from sklearn.metrics import mean_squared_error
from keras import optimizers
import os
from matplotlib import pyplot
from keras.wrappers.scikit_learn import KerasRegressor
from sklearn import ensemble
from sklearn.tree import DecisionTreeRegressor

def create_dataset(dataset, look_back):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return np.array(dataX), np.array(dataY)

def create_train_test_data(dataset,look_back ):
    scaler = MinMaxScaler(feature_range=(0, 1))
    dataset = scaler.fit_transform(dataset)
    datasetX, datasetY = create_dataset(dataset, look_back)
    train_size = int(len(datasetX) * 0.90)
    trainX, trainY, testX, testY = datasetX[0:train_size,:],datasetY[0:train_size],datasetX[train_size:len(datasetX),:],datasetY[train_size:len(datasetX)]
    trainXrp = trainX.reshape (trainX.shape[0],look_back,1)
    testXrp = testX.reshape(testX.shape[0], look_back,1)
    return scaler,trainXrp,trainY,testXrp,testY,trainX,testX

def build_model(): #layers [1,50,100,1]
    model = Sequential()
    model.add(LSTM(10,return_sequences=True))
    model.add(Dropout(0.5))
    model.add(LSTM(100,return_sequences=False))
    model.add(Dropout(0.2))
    #model.add(LSTM(layers[3],return_sequences=False))
    #model.add(LSTM(layers[4],return_sequences=False))
    #model.add(Dropout(0.5))
    model.add(Dense(units=1, activation='tanh'))
    start = time.time()
    #adagrad=optimizers.adagrad(lr=1)
    rmsprop = optimizers.rmsprop(lr=0.001)
    #model.compile(loss="mse", optimizer=rmsprop)
    #model.compile(loss="mse", optimizer="adagrad")
    model.compile(loss="mse", optimizer="rmsprop")
    print("Compilation Time : ", time.time() - start)
    return model

if __name__=='__main__':
    global_start_time = time.time()
    print('> Loading data… ')
    dataframe = pd.read_csv('xxxx.csv', usecols=[0])
    dataset = dataframe.values
    dataset = dataset.astype('float32')
    scaler,trainXrp,trainY,testXrp,testY,trainX,testX = create_train_test_data(dataset,seq_len)
    LSTM_estimator = KerasRegressor(build_fn=build_model, nb_epoch=2)
    boosted_LSTM = ensemble.AdaBoostRegressor(base_estimator=LSTM_estimator,n_estimators=100, random_state=None,learning_rate=0.1)
    trainXrp = [np.concatenate(i) for i in trainXrp]
    boosted_LSTM.fit(trainXrp, trainY)
    trainPredict=boosted_LSTM.predict(testXrp)

————————————————————————————————————————

—————————————————————————

ValueError Traceback (most recent call last)

in

102 trainZ = trainX.reshape (trainX.shape[0],1)

103 print(‘Xrp_train shape:’,trainZ.shape)

–> 104 boosted_LSTM.fit(trainZ, trainY.ravel())

105 trainPredict=boosted_LSTM.predict(trainX)

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/sklearn/ensemble/weight_boosting.py in fit(self, X, y, sample_weight)

958

959 # Fit

–> 960 return super(AdaBoostRegressor, self).fit(X, y, sample_weight)

961

962 def _validate_estimator(self):

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/sklearn/ensemble/weight_boosting.py in fit(self, X, y, sample_weight)

141 X, y,

142 sample_weight,

–> 143 random_state)

144

145 # Early termination

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/sklearn/ensemble/weight_boosting.py in _boost(self, iboost, X, y, sample_weight, random_state)

1017 # Fit on the bootstrapped sample and obtain a prediction

1018 # for all samples in the training set

-> 1019 estimator.fit(X[bootstrap_idx], y[bootstrap_idx])

1020 y_predict = estimator.predict(X)

1021

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/wrappers/scikit_learn.py in fit(self, x, y, **kwargs)

150 fit_args.update(kwargs)

151

–> 152 history = self.model.fit(x, y, **fit_args)

153

154 return history

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/training.py in fit(self, x, y, batch_size, epochs, verbose, callbacks, validation_split, validation_data, shuffle, class_weight, sample_weight, initial_epoch, steps_per_epoch, validation_steps, **kwargs)

950 sample_weight=sample_weight,

951 class_weight=class_weight,

–> 952 batch_size=batch_size)

953 # Prepare validation data.

954 do_validation = False

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/training.py in _standardize_user_data(self, x, y, sample_weight, class_weight, check_array_lengths, batch_size)

675 # to match the value shapes.

676 if not self.inputs:

–> 677 self._set_inputs(x)

678

679 if y is not None:

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/training.py in _set_inputs(self, inputs, outputs, training)

587 assert len(inputs) == 1

588 inputs = inputs[0]

–> 589 self.build(input_shape=(None,) + inputs.shape[1:])

590 return

591

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/sequential.py in build(self, input_shape)

219 self.inputs = [x]

220 for layer in self._layers:

–> 221 x = layer(x)

222 self.outputs = [x]

223 self._build_input_shape = input_shape

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/layers/recurrent.py in __call__(self, inputs, initial_state, constants, **kwargs)

530

531 if initial_state is None and constants is None:

–> 532 return super(RNN, self).__call__(inputs, **kwargs)

533

534 # If any of

initial_stateorconstantsare specified and are Keras/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/base_layer.py in __call__(self, inputs, **kwargs)

412 # Raise exceptions in case the input is not compatible

413 # with the input_spec specified in the layer constructor.

--> 414 self.assert_input_compatibility(inputs)

415

416 # Collect input shapes to build layer.

/usr/local/miniconda3/envs/dl/lib/python3.6/site-packages/keras/engine/base_layer.py in assert_input_compatibility(self, inputs)

309 self.name + ': expected ndim=' +

310 str(spec.ndim) + ', found ndim=' +

--> 311 str(K.ndim(x)))

312 if spec.max_ndim is not None:

313 ndim = K.ndim(x)

ValueError: Input 0 is incompatible with layer lstm_13: expected ndim=3, found ndim=2

Sorry, I don’t have the capacity to debug your code.

Perhaps post on stackoverflow?

Hi!

Did you manage to find the solution? I am in the same situation.

Thank you.
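
For anyone landing on the same ValueError: the message says the LSTM layer received a 2D array of shape [samples, features], while Keras recurrent layers expect 3D input of shape [samples, timesteps, features]. A minimal sketch of the reshape with NumPy is below. The array sizes and the choice to treat each column as one time step with a single feature are assumptions for illustration; they are not taken from the code in the comment above.

```python
import numpy as np

# Hypothetical 2D data: 100 samples, each a flat vector of 8 observations.
X_2d = np.random.rand(100, 8)

# Assumption: each of the 8 columns is one time step carrying a single feature,
# so the target layout is [samples, timesteps, features] = [100, 8, 1].
n_samples, n_timesteps = X_2d.shape
X_3d = X_2d.reshape((n_samples, n_timesteps, 1))

print(X_2d.ndim, X_2d.shape)
print(X_3d.ndim, X_3d.shape)
```

With data shaped like this, the first LSTM layer would be given input_shape=(8, 1). Whether this layout suits your data depends on what the columns actually represent.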

Hello Jason, I have read a few of your articles, but all of them use simple Keras models. I want to know if it is possible to run cross-validation on a complex model like a CNN?

Yes.

Hello Jason,

One question: I am trying to find the best parameters with a grid search using a Keras model, but my scoring metric is AUC. How can I do that, given that the Keras classifier only seems to allow accuracy for scoring?

This will help:

https://machinelearningmastery.com/how-to-calculate-precision-recall-f1-and-more-for-deep-learning-models/

I want the best model from a grid search with AUC as the scoring metric. I tried this code but it is not working. Can you review it and help me see where I am going wrong?

from keras.wrappers.scikit_learn import KerasClassifier
from sklearn.model_selection import GridSearchCV
from keras.models import Sequential
from keras.layers import Dense
from sklearn.metrics import roc_auc_score
import tensorflow as tf
from keras.utils import np_utils
from keras.callbacks import Callback, EarlyStopping

# define roc_callback, inspired by https://github.com/keras-team/keras/issues/6050#issuecomment-329996505
def auc_roc(y_true, y_pred):
    # any tensorflow metric
    value, update_op = tf.contrib.metrics.streaming_auc(y_pred, y_true)

    # find all variables created for this metric
    metric_vars = [i for i in tf.local_variables() if 'auc_roc' in i.name.split('/')[1]]

    # Add metric variables to GLOBAL_VARIABLES collection.
    # They will be initialized for new session.
    for v in metric_vars:
        tf.add_to_collection(tf.GraphKeys.GLOBAL_VARIABLES, v)

    # force to update metric values
    with tf.control_dependencies([update_op]):
        value = tf.identity(value)
        return value

def build_classifier(optimzer, kernel_initializer):
    classifier = Sequential()
    classifier.add(Dense(6, input_dim=11, kernel_initializer=kernel_initializer, activation='relu'))
    classifier.add(Dense(6, kernel_initializer=kernel_initializer, activation='relu'))
    classifier.add(Dense(1, kernel_initializer=kernel_initializer, activation='sigmoid'))
    classifier.compile(optimizer=optimzer, loss='binary_crossentropy', metrics=[auc_roc])
    return classifier

classifier = KerasClassifier(build_fn=build_classifier)

my_callbacks = [EarlyStopping(monitor=auc_roc, patience=300, verbose=1, mode='max')]

parameters = {'batch_size': [25, 32],
              'nb_epoch': [100, 300],
              'optimzer': ['adam', 'rmsprop'],
              'kernel_initializer': ['random_uniform', 'random_normal']}

grid_search = GridSearchCV(estimator=classifier,
                           param_grid=parameters,
                           scoring=auc_roc,
                           cv=5)

grid_search = grid_search.fit(X_train, y_train, callbacks=my_callbacks)

best_parameters = grid_search.best_params_
best_accuracy = grid_search.best_score_

This is a common question that I answer here:

https://machinelearningmastery.com/faq/single-faq/can-you-read-review-or-debug-my-code
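
As a general pointer on the AUC part of the question: GridSearchCV takes its scoring metric from scikit-learn, not from the metrics compiled into the Keras model, and it accepts the built-in scorer name "roc_auc". Here is a minimal sketch of that idea. To keep it self-contained, it uses scikit-learn's MLPClassifier on synthetic data as a stand-in for the Keras wrapper; the dataset, the network size and the parameter grid are all assumptions for illustration only.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.neural_network import MLPClassifier

# Synthetic binary classification data (assumption: 11 input features, as in the question).
X, y = make_classification(n_samples=200, n_features=11, random_state=7)

# Stand-in estimator; a scikit-learn compatible Keras wrapper would take its place.
model = MLPClassifier(hidden_layer_sizes=(6, 6), max_iter=500, random_state=7)

# Illustrative grid only; these are MLPClassifier parameters, not Keras ones.
param_grid = {
    "solver": ["adam", "sgd"],
    "batch_size": [25, 32],
}

# scoring="roc_auc" asks scikit-learn to rank the candidates by ROC AUC.
grid = GridSearchCV(estimator=model, param_grid=param_grid, scoring="roc_auc", cv=3)
grid.fit(X, y)

print("Best AUC: %.3f" % grid.best_score_)
print("Best parameters:", grid.best_params_)
```

The same scoring argument should carry over to a wrapped Keras classifier as long as the wrapper exposes predict_proba or decision_function, since that is what the "roc_auc" scorer calls.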

Thank you, Dr. Jason.

I want to know how to combine an LSTM with an SVM using the Sequential model of Keras. If possible, could you give us a simple example, even one that has not been executed? Thank you in advance, Dr. Jason.

Thanks for the suggestion.

I’m building an estimator where some of the parameters are callables. Some of the callables have their own parameters. Using the example above, the estimator has an EarlyStop callable as a parameter. The EarlyStop callable takes a patience parameter. The patience parameter is NOT a parameter of the estimator.

If one wanted to do a GridSearchCV over various values for the patience parameter, is scikit-learn equipped to report callables and their parameters among the ‘best_parameters_’?

You would have to implement your own for-loops for the search I believe.
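
A rough sketch of what such a hand-written search could look like is below. The helper manual_grid_search and the evaluate function are hypothetical names. The scoring formula inside evaluate is a toy placeholder so the loop can run on its own; in practice it would build the callback with the given patience, fit the model under cross-validation, and return the mean score.

```python
from itertools import product


def manual_grid_search(param_grid, evaluate):
    """Try every combination in param_grid and keep the best-scoring one."""
    names = list(param_grid)
    best_score, best_params = float("-inf"), None
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(**params)
        if score > best_score:
            best_score, best_params = score, params
    return best_params, best_score


def evaluate(patience, batch_size):
    # Placeholder score (assumption): replace with code that creates the
    # early stopping callback using `patience`, trains the model with
    # cross-validation, and returns the mean validation score.
    return -abs(patience - 10) - abs(batch_size - 32) / 100.0


grid = {"patience": [5, 10, 20], "batch_size": [25, 32]}
best_params, best_score = manual_grid_search(grid, evaluate)
print(best_params, best_score)
```

Because the loop owns the parameters, nested settings such as the patience of a callback can be reported alongside the ordinary estimator parameters.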

Hi Jason,

First of all thank you for the great tutorial.

I have a dataset of about 1000 nodes, where each node has 4 time series. Each time series has exactly 6 time steps. The label is 0 or 1 (i.e. binary classification).

More precisely my dataset looks as follows.

node, time-series1, time_series2, time_series_3, time_series4, Label

n1, [1.2, 2.5, 3.7, 4.2, 5.6, 8.8], [6.2, 5.5, 4.7, 3.2, 2.6, 1.8], …, 1

n2, [5.2, 4.5, 3.7, 2.2, 1.6, 0.8], [8.2, 7.5, 6.7, 5.2, 4.6, 1.8], …, 0

and so on.

I am using the below mentioned LSTM model.

model = Sequential()
model.add(LSTM(10, input_shape=(6,4)))
model.add(Dense(32))
model.add(Dense(1, activation='sigmoid'))
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])

Since I have a small dataset, I would like to perform 10-fold cross-validation as you have suggested in this tutorial.

However, I am not clear what is meant by the batch size of KerasClassifier.

Could you please let me know what batch size is, and what batch size you would recommend?

Cheers, Emi

If they are time series, then cross-validation would not be valid. Instead, you must use walk-forward validation.

You can learn more in this post:

https://machinelearningmastery.com/backtest-machine-learning-models-time-series-forecasting/

You can get started with LSTMs for time series forecasting here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series
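
On the batch size part of the question: in Keras, batch_size is the number of samples processed before the model weights are updated, and fit() defaults to 32 when it is not set. There is no single best value, so it is usually treated as one more hyperparameter to tune. The small sketch below only shows the arithmetic of how batch size changes the number of weight updates per epoch; the figure of 900 training samples assumes 1000 samples with one tenth held out in each fold.

```python
import math


def updates_per_epoch(n_train, batch_size):
    """Number of weight updates in one pass over the training data."""
    return math.ceil(n_train / batch_size)


n_samples = 1000
n_train = n_samples - n_samples // 10  # one tenth is held out in each fold

for batch_size in (1, 32, 900):
    print(batch_size, updates_per_epoch(n_train, batch_size))
```

Smaller batches mean more, noisier updates per epoch; larger batches mean fewer, smoother ones.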

Hi Jason, Thanks a lot for the response. I went through your “backtest-machine-learning-models-time-series-forecasting” tutorial. However, since my problem is not time-series forecasting, I am not clear how to apply these techniques to my problem.

More specifically, I have a binary classification problem: detecting goods that are likely to become trendy in the future. For that, I am calculating the following four features:

– timeseries 1: how degree centrality of each good changed from 2010-2016

– timeseries 2: how betweenness centrality of each good changed from 2010-2016

– timeseries 3: how closeness centrality of each good changed from 2010-2016

– timeseries 4: how eigenvector centrality of each good changed from 2010-2016

The label value (0 or 1) is based on the trendy goods on 2017-2018. If the item was trendy it was marked as 1, if not 0.

So, I am assuming that my problem is more like sequence classification. In that case, can I use 10-fold cross validation?

I look forward to hearing from you.

Cheers -Emi

Yes, it sounds like a time series classification, and the notion of walk-forward validation applies just as well. That is, you must only ever fit on the past and predict on the future, never shuffle the samples.

I give examples of walk forward validation for time series classification here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series
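
To make the fit-on-the-past, predict-on-the-future idea concrete, here is a minimal sketch using scikit-learn's TimeSeriesSplit, which produces expanding training windows followed by later test windows. It assumes the rows are already sorted by time; the toy arrays are made up for illustration.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Toy data (assumption): 10 observations in time order, 2 features each.
X = np.arange(20).reshape(10, 2)
y = np.array([0, 1, 0, 1, 0, 1, 0, 1, 0, 1])

splitter = TimeSeriesSplit(n_splits=3)
splits = list(splitter.split(X))

for train_idx, test_idx in splits:
    # Every training index comes before every test index; nothing is shuffled.
    print("train:", train_idx, "test:", test_idx)
```

A model would be fit on X[train_idx] and evaluated on X[test_idx] inside the loop, so later observations never leak into training.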

Hi Jason, I thought a lot about what you said.

However, I feel that in my problem setting the data is split point-wise in the cross-validation, not time-wise.

i.e. (point-wise)

1st fold training:

item_1, item_2, …, item_799, item_800

1st fold testing:

item_801, …, item_1000

not (time-wise)

1st fold training:

2010, 2011, …, 2015

1st fold testing:

2016, …, 2018

For this reason, I am not clear how to use walk-forward validation.

Maybe I provided too little description of my problem setting. So, I have expanded it and posted it as a Stack Overflow question: https://stackoverflow.com/questions/57734955/how-to-use-lstm-for-sequence-classification-using-kerasclassifier/57737501#57737501

I hope, my problem is clearly described in the stackoverflow question.

Please let me know your thoughts. I am still new to this area. So, I would love to get your feedback to improve my solution. Looking forward to hearing from you.

Thank you very much

🙂

Cheers -Emi

Well, you are the expert on your problem, not me, so if you think it’s safe, go for it!

Let me know how you go.
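
If you do go with an item-wise split, a sketch of how it might be set up is below. It assumes each item is an independent sample stored as a [timesteps, features] block, so the full array is [items, 6, 4]; the random data and the choice of five stratified folds are illustrative assumptions. Whether items really are independent is something only you can judge.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Made-up data (assumption): 1000 items, 6 time steps, 4 series per item.
rng = np.random.default_rng(7)
X = rng.random((1000, 6, 4))
y = rng.integers(0, 2, size=1000)

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=7)

fold_shapes = []
test_indices = []
# Only the labels drive the split, so a placeholder stands in for X here.
for train_idx, test_idx in kfold.split(np.zeros(len(y)), y):
    X_train, X_test = X[train_idx], X[test_idx]  # whole items move together
    fold_shapes.append((X_train.shape, X_test.shape))
    test_indices.append(test_idx)

for train_shape, test_shape in fold_shapes:
    print(train_shape, test_shape)
```

Each fold keeps the full 6 by 4 block of every item intact, with roughly 800 items for training and 200 for testing, which matches the point-wise split described above.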

Sorry, the link to the Stack Overflow question should be: https://stackoverflow.com/questions/57734955/how-to-use-lstm-for-sequence-classification-using-kerasclassifier

Thanks

You’re welcome.

Please help: how can the features extracted from a CNN be fed into an SVM? If there is any code for this, please let me know.

Good question, you can learn more here:

https://machinelearningmastery.com/how-to-use-transfer-learning-when-developing-convolutional-neural-network-models/

Hi Jason, greetings, good article.

Can you help me?

How can I use .predict to calculate the “weighted” F1 score, or otherwise calculate the “weighted” F1 score?

Perhaps this will help:

https://machinelearningmastery.com/how-to-calculate-precision-recall-f1-and-more-for-deep-learning-models/
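
In short, scikit-learn's f1_score accepts average="weighted", so the remaining step is turning predictions into class labels. A minimal sketch is below. The probability array is a made-up stand-in for what model.predict(X) would return from a softmax output layer, which is an assumption about your model.

```python
import numpy as np
from sklearn.metrics import f1_score

# True class labels as integers (not one hot encoded).
y_true = np.array([0, 0, 1, 1, 2, 2])

# Hypothetical class probabilities, shaped like the output of model.predict(X).
probs = np.array([
    [0.8, 0.1, 0.1],
    [0.7, 0.2, 0.1],
    [0.2, 0.6, 0.2],
    [0.1, 0.3, 0.6],
    [0.1, 0.2, 0.7],
    [0.2, 0.2, 0.6],
])

# Pick the most probable class for each row.
y_pred = np.argmax(probs, axis=1)

# "weighted" averages the per-class F1 scores, weighted by class support.
score = f1_score(y_true, y_pred, average="weighted")
print("Weighted F1: %.3f" % score)
```

If your labels are one hot encoded, apply np.argmax(..., axis=1) to them as well before calling f1_score.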

Hi Jason,

Thank you for your great work. I was wondering if you have something on combining CNNs used for image recognition with ordinary (non-image) features. Could you help?

Yes, you could achieve this with a multi-input model, one input for the image and one for the static input variables.

You can discover how to implement multi-input models here:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

I have implemented the same code, but the accuracy I achieved is 32%.

import numpy
import pandas
from keras.models import Sequential
from keras.layers import Dense
from keras.wrappers.scikit_learn import KerasClassifier
from keras.utils import np_utils
from sklearn.model_selection import cross_val_score
from sklearn.model_selection import KFold
from sklearn.preprocessing import LabelEncoder
from sklearn.pipeline import Pipeline

# fix random seed for reproducibility
seed = 7
numpy.random.seed(seed)

# load dataset
dataframe = pandas.read_csv("iris.csv", header=None)
dataset = dataframe.values
X = dataset[:,0:4].astype(float)
Y = dataset[:,4]

# encode class values as integers
encoder = LabelEncoder()
encoder.fit(Y)
encoded_Y = encoder.transform(Y)

# convert integers to dummy variables (i.e. one hot encoded)
dummy_y = np_utils.to_categorical(encoded_Y)

# define baseline model
def baseline_model():
    # create model
    model = Sequential()
    model.add(Dense(4, input_dim=4, kernel_initializer='normal', activation='relu'))
    model.add(Dense(3, kernel_initializer='normal', activation='sigmoid'))
    # Compile model
    model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])
    return model

estimator = KerasClassifier(build_fn=baseline_model, nb_epoch=200, batch_size=5, verbose=0)
kfold = KFold(n_splits=10, shuffle=True, random_state=seed)
results = cross_val_score(estimator, X, dummy_y, cv=kfold)
print("Accuracy: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

Great start!