Deep learning networks have gained immense popularity in the past few years. The “attention mechanism” is integrated with deep learning networks to improve their performance. Adding an attention component to the network has shown significant improvement in tasks such as machine translation, image recognition, text summarization, and similar applications.

This tutorial shows how to add a custom attention layer to a network built using a recurrent neural network. We’ll illustrate an end-to-end application of time series forecasting using a very simple dataset. The tutorial is designed for anyone looking for a basic understanding of how to add user-defined layers to a deep learning network and use this simple example to build more complex applications.

After completing this tutorial, you will know:

- Which methods are required to create a custom attention layer in Keras

- How to incorporate the new layer in a network built with SimpleRNN


Let’s get started.

Adding a custom attention layer to a recurrent neural network in Keras

Photo by Yahya Ehsan, some rights reserved.

Tutorial Overview

This tutorial is divided into three parts; they are:

- Preparing a simple dataset for time series forecasting

- How to use a network built via SimpleRNN for time series forecasting

- Adding a custom attention layer to the SimpleRNN network

Prerequisites

It is assumed that you are familiar with the following topics. You can click the links below for an overview.

- What is Attention?

- The attention mechanism from scratch

- An introduction to RNN and the math that powers them

- Understanding simple recurrent neural networks in Keras

The Dataset

The focus of this article is to gain a basic understanding of how to add a custom attention layer to a deep learning network. For this purpose, let’s use a very simple example: the Fibonacci sequence, where each number is the sum of the previous two. The first 10 numbers of the sequence are shown below:

0, 1, 1, 2, 3, 5, 8, 13, 21, 34, …

When given the previous ‘t’ numbers, can you get a machine to accurately reconstruct the next number? This would mean discarding all the previous inputs except the last two and performing the correct operation on the last two numbers.

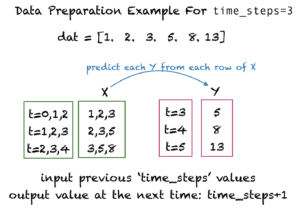

For this tutorial, you’ll construct the training examples from t time steps and use the value at t+1 as the target. For example, if t=3, then the training examples and the corresponding target values would look as follows:
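As a minimal sketch of this windowing (NumPy only; the helper name `make_windows` is hypothetical and is not the function used later in this tutorial), the first few examples for t=3 can be generated like this:

```python
import numpy as np

def make_windows(seq, t):
    """Split a 1-D sequence into rows of t consecutive values plus the value that follows."""
    seq = np.asarray(seq, dtype=float)
    n_rows = len(seq) - t
    X = np.stack([seq[i:i + t] for i in range(n_rows)])
    y = seq[t:]
    return X, y

fib = [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]
X, y = make_windows(fib, t=3)

# Show the first few (inputs -> target) pairs
for inputs, target in zip(X[:4], y[:4]):
    print(inputs, "->", target)

# Keras recurrent layers expect (samples, time_steps, features)
X3d = X.reshape(len(X), 3, 1)
print(X3d.shape)
```

Each target is the sum of the last two values in its row, which is exactly the relationship the network has to discover.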


The SimpleRNN Network

In this section, you’ll write the basic code to generate the dataset and use a SimpleRNN network to predict the next number of the Fibonacci sequence.

The Import Section

Let’s first write the import section:

```python
from pandas import read_csv
import numpy as np
from keras import Model
from keras.layers import Layer
import keras.backend as K
from keras.layers import Input, Dense, SimpleRNN
from sklearn.preprocessing import MinMaxScaler
from keras.models import Sequential
from keras.metrics import mean_squared_error
```

Preparing the Dataset

The following function generates a sequence of n Fibonacci numbers (not counting the starting two values). If scale_data is set to True, then it would also use the MinMaxScaler from scikit-learn to scale the values between 0 and 1. Let’s see its output for n=10.

```python
def get_fib_seq(n, scale_data=True):
    # Get the Fibonacci sequence
    seq = np.zeros(n)
    fib_n1 = 0.0
    fib_n = 1.0
    for i in range(n):
        seq[i] = fib_n1 + fib_n
        fib_n1 = fib_n
        fib_n = seq[i]
    scaler = []
    if scale_data:
        scaler = MinMaxScaler(feature_range=(0, 1))
        seq = np.reshape(seq, (n, 1))
        seq = scaler.fit_transform(seq).flatten()
    return seq, scaler

fib_seq = get_fib_seq(10, False)[0]
print(fib_seq)
```

```
[ 1.  2.  3.  5.  8. 13. 21. 34. 55. 89.]
```

Next, we need a function get_fib_XY() that reformats the sequence into training examples and target values to be used by the Keras input layer. When given time_steps as a parameter, get_fib_XY() constructs each row of the dataset with time_steps number of columns. This function not only constructs the training set and test set from the Fibonacci sequence but also shuffles the training examples and reshapes them to the required TensorFlow format, i.e., total_samples x time_steps x features. Also, the function returns the scaler object that scales the values if scale_data is set to True.

Let’s generate a small training set to see what it looks like. We have set time_steps=3 and total_fib_numbers=12, with approximately 70% of the examples going toward the training set and the rest toward the test set. Note that the examples have been shuffled by the permutation() function.

```python
def get_fib_XY(total_fib_numbers, time_steps, train_percent, scale_data=True):
    dat, scaler = get_fib_seq(total_fib_numbers, scale_data)
    Y_ind = np.arange(time_steps, len(dat), 1)
    Y = dat[Y_ind]
    rows_x = len(Y)
    X = dat[0:rows_x]
    for i in range(time_steps-1):
        temp = dat[i+1:rows_x+i+1]
        X = np.column_stack((X, temp))
    # random permutation with fixed seed
    rand = np.random.RandomState(seed=13)
    idx = rand.permutation(rows_x)
    split = int(train_percent*rows_x)
    train_ind = idx[0:split]
    test_ind = idx[split:]
    trainX = X[train_ind]
    trainY = Y[train_ind]
    testX = X[test_ind]
    testY = Y[test_ind]
    trainX = np.reshape(trainX, (len(trainX), time_steps, 1))
    testX = np.reshape(testX, (len(testX), time_steps, 1))
    return trainX, trainY, testX, testY, scaler

trainX, trainY, testX, testY, scaler = get_fib_XY(12, 3, 0.7, False)
print('trainX = ', trainX)
print('trainY = ', trainY)
```

```
trainX =  [[[ 8.]
  [13.]
  [21.]]

 [[ 5.]
  [ 8.]
  [13.]]

 [[ 2.]
  [ 3.]
  [ 5.]]

 [[13.]
  [21.]
  [34.]]

 [[21.]
  [34.]
  [55.]]

 [[34.]
  [55.]
  [89.]]]
trainY =  [ 34.  21.   8.  55.  89. 144.]
```

Setting Up the Network

Now let’s set up a small network with two layers. The first one is the SimpleRNN layer, and the second one is the Dense layer. Below is a summary of the model.

```python
# Set up parameters
time_steps = 20
hidden_units = 2
epochs = 30

# Create a traditional RNN network
def create_RNN(hidden_units, dense_units, input_shape, activation):
    model = Sequential()
    model.add(SimpleRNN(hidden_units, input_shape=input_shape, activation=activation[0]))
    model.add(Dense(units=dense_units, activation=activation[1]))
    model.compile(loss='mse', optimizer='adam')
    return model

model_RNN = create_RNN(hidden_units=hidden_units, dense_units=1, input_shape=(time_steps,1),
                       activation=['tanh', 'tanh'])
model_RNN.summary()
```

```
Model: "sequential_1"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
simple_rnn_3 (SimpleRNN)     (None, 2)                 8
_________________________________________________________________
dense_3 (Dense)              (None, 1)                 3
=================================================================
Total params: 11
Trainable params: 11
Non-trainable params: 0
```
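The parameter counts in the summary can be checked by hand. The sketch below is plain Python with hypothetical helper names; it assumes the usual SimpleRNN layout of an input kernel, a recurrent kernel, and a bias, followed by a Dense layer with a bias:

```python
def simple_rnn_params(units, features):
    # input kernel (features x units) + recurrent kernel (units x units) + bias (units)
    return features * units + units * units + units

def dense_params(inputs, units):
    # kernel (inputs x units) + bias (units)
    return inputs * units + units

rnn = simple_rnn_params(units=2, features=1)
dense = dense_params(inputs=2, units=1)
print(rnn, dense, rnn + dense)  # 8 3 11
```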

Train the Network and Evaluate

The next step is to add code that generates a dataset, trains the network, and evaluates it. This time around, we’ll scale the data between 0 and 1. We don’t need to pass the scale_data parameter as its default value is True.

```python
# Generate the dataset
trainX, trainY, testX, testY, scaler = get_fib_XY(1200, time_steps, 0.7)

model_RNN.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)

# Evaluate model
train_mse = model_RNN.evaluate(trainX, trainY)
test_mse = model_RNN.evaluate(testX, testY)

# Print error
print("Train set MSE = ", train_mse)
print("Test set MSE = ", test_mse)
```

As output, you’ll see the progress of the training and the following values for the mean square error:

```
Train set MSE =  5.631405292660929e-05
Test set MSE =  2.623497312015388e-05
```
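Because the model was compiled with loss='mse', evaluate() returns the mean squared error. As a rough NumPy sketch of that metric, with mean absolute error alongside for comparison (the arrays here are made-up illustration values, not outputs of the model):

```python
import numpy as np

# Hypothetical targets and predictions, for illustration only
y_true = np.array([0.10, 0.20, 0.30, 0.50])
y_pred = np.array([0.12, 0.18, 0.33, 0.46])

mse = np.mean((y_true - y_pred) ** 2)     # mean squared error
mae = np.mean(np.abs(y_true - y_pred))    # mean absolute error
print("MSE =", mse)
print("MAE =", mae)
```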

Adding a Custom Attention Layer to the Network

In Keras, it is easy to create a custom layer that implements attention by subclassing the Layer class. The Keras guide lists clear steps for creating a new layer via subclassing. You’ll use those guidelines here. All the weights and biases corresponding to a single layer are encapsulated by this class. You need to write the __init__ method as well as override the following methods:

- build(): The Keras guide recommends adding weights in this method once the size of the inputs is known. This method “lazily” creates weights. The built-in function add_weight() can be used to add the weights and biases of the attention layer.

- call(): The call() method implements the mapping of inputs to outputs. It should implement the forward pass during training.

The Call Method for the Attention Layer

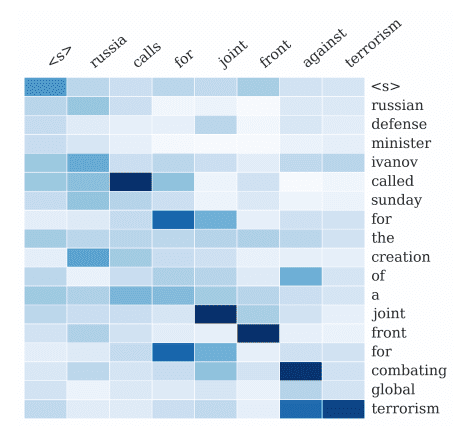

The call method of the attention layer has to compute the alignment scores, weights, and context. You can go through the details of these parameters in Stefania’s excellent article on The Attention Mechanism from Scratch. You’ll implement the Bahdanau attention in your call() method.

The good thing about inheriting a layer from the Keras Layer class and adding the weights via the add_weight() method is that the weights are tuned automatically. Keras tracks the operations performed in the call() method and computes their gradients during training through automatic differentiation. It is important to specify trainable=True when adding the weights. If you need your own training logic, you can override the train_step() method of a custom Model subclass.

The code below implements the custom attention layer.

```python
# Add attention layer to the deep learning network
class attention(Layer):
    def __init__(self,**kwargs):
        super(attention,self).__init__(**kwargs)

    def build(self,input_shape):
        self.W=self.add_weight(name='attention_weight', shape=(input_shape[-1],1),
                               initializer='random_normal', trainable=True)
        self.b=self.add_weight(name='attention_bias', shape=(input_shape[1],1),
                               initializer='zeros', trainable=True)
        super(attention, self).build(input_shape)

    def call(self,x):
        # Alignment scores. Pass them through tanh function
        e = K.tanh(K.dot(x,self.W)+self.b)
        # Remove dimension of size 1
        e = K.squeeze(e, axis=-1)
        # Compute the weights
        alpha = K.softmax(e)
        # Reshape to tensorFlow format
        alpha = K.expand_dims(alpha, axis=-1)
        # Compute the context vector
        context = x * alpha
        context = K.sum(context, axis=1)
        return context
```

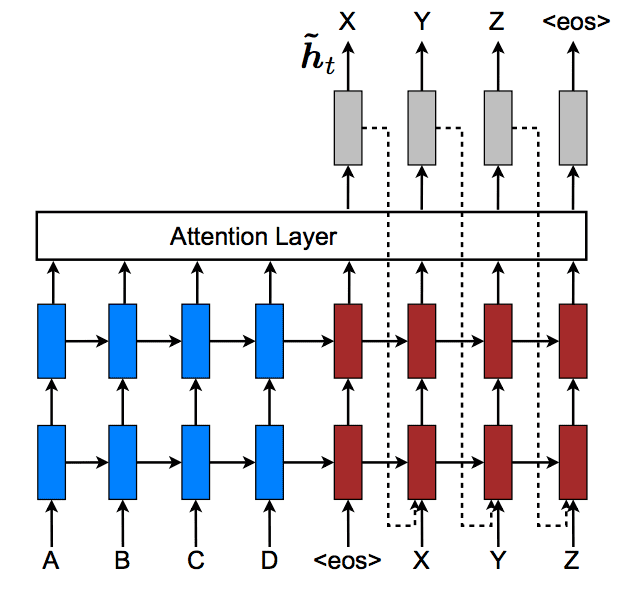
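To see the shapes involved, here is a NumPy restatement of the same forward pass. It is a sketch, not the Keras layer itself: the function name `attention_forward` and the random inputs are assumptions for illustration. The layer receives an input of shape (batch, time_steps, features), so input_shape[-1] is the number of features (the size of W) and input_shape[1] is the number of time steps (the size of b).

```python
import numpy as np

def attention_forward(x, W, b):
    """x: (batch, time_steps, features); W: (features, 1); b: (time_steps, 1)."""
    e = np.tanh(x @ W + b)                      # alignment scores, (batch, time_steps, 1)
    e = np.squeeze(e, axis=-1)                  # (batch, time_steps)
    exp_e = np.exp(e - e.max(axis=-1, keepdims=True))
    alpha = exp_e / exp_e.sum(axis=-1, keepdims=True)   # softmax over time steps
    context = (x * alpha[..., None]).sum(axis=1)        # weighted sum, (batch, features)
    return alpha, context

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 20, 2))           # stand-in for the RNN outputs at every time step
W = rng.normal(scale=0.05, size=(2, 1))   # one weight per feature
b = np.zeros((20, 1))                     # one bias per time step

alpha, context = attention_forward(x, W, b)
print(alpha.shape, context.shape)
print(alpha.sum(axis=-1))   # the weights of each sample sum to 1
```

Returning alpha alongside the context, as this sketch does, is one way to inspect the actual attention weights for a given input.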

RNN Network with Attention Layer

Let’s now add an attention layer to the RNN network you created earlier. The function create_RNN_with_attention() now specifies an RNN layer, an attention layer, and a Dense layer in the network. Make sure to set return_sequences=True when specifying the SimpleRNN. This will return the output of the hidden units for all the previous time steps.
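The effect of return_sequences can be sketched with a hand-written recurrence in NumPy. This is an illustration under stated assumptions (a tanh activation, random weights, and the hypothetical function name `simple_rnn_forward`), not the Keras implementation:

```python
import numpy as np

def simple_rnn_forward(x, Wx, Wh, b, return_sequences=False):
    """x: (time_steps, features). Returns every hidden state or only the last one."""
    h = np.zeros(Wh.shape[0])
    states = []
    for x_t in x:
        # New hidden state from the current input and the previous hidden state
        h = np.tanh(x_t @ Wx + h @ Wh + b)
        states.append(h)
    return np.stack(states) if return_sequences else h

rng = np.random.default_rng(1)
x = rng.normal(size=(20, 1))   # 20 time steps, 1 feature
Wx = rng.normal(size=(1, 2))   # 2 hidden units
Wh = rng.normal(size=(2, 2))
b = np.zeros(2)

all_states = simple_rnn_forward(x, Wx, Wh, b, return_sequences=True)
last_state = simple_rnn_forward(x, Wx, Wh, b)
print(all_states.shape, last_state.shape)  # (20, 2) (2,)
```

The attention layer needs the full (time_steps, hidden_units) sequence so that it can weight every time step; with only the last state there would be nothing to attend over.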

Let’s look at a summary of the model with attention.

```python
def create_RNN_with_attention(hidden_units, dense_units, input_shape, activation):
    x=Input(shape=input_shape)
    RNN_layer = SimpleRNN(hidden_units, return_sequences=True, activation=activation)(x)
    attention_layer = attention()(RNN_layer)
    outputs=Dense(dense_units, trainable=True, activation=activation)(attention_layer)
    model=Model(x,outputs)
    model.compile(loss='mse', optimizer='adam')
    return model

model_attention = create_RNN_with_attention(hidden_units=hidden_units, dense_units=1,
                                            input_shape=(time_steps,1), activation='tanh')
model_attention.summary()
```

```
Model: "model_1"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_2 (InputLayer)         [(None, 20, 1)]           0
_________________________________________________________________
simple_rnn_2 (SimpleRNN)     (None, 20, 2)             8
_________________________________________________________________
attention_1 (attention)      (None, 2)                 22
_________________________________________________________________
dense_2 (Dense)              (None, 1)                 3
=================================================================
Total params: 33
Trainable params: 33
Non-trainable params: 0
_________________________________________________________________
```
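The 22 parameters of the attention layer follow directly from the two weight shapes declared in build(). A short arithmetic check in plain Python (the variable names are only for illustration):

```python
time_steps, hidden_units = 20, 2

attention_W = hidden_units * 1   # shape (input_shape[-1], 1): one weight per feature
attention_b = time_steps * 1     # shape (input_shape[1], 1): one bias per time step
attention_total = attention_W + attention_b

# SimpleRNN: input kernel + recurrent kernel + bias; Dense: kernel + bias
rnn_total = 1 * hidden_units + hidden_units * hidden_units + hidden_units
dense_total = hidden_units * 1 + 1

print(attention_total)                            # 22
print(rnn_total + attention_total + dense_total)  # 33
```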

Train and Evaluate the Deep Learning Network with Attention

It’s time to train and test your model and see how it performs in predicting the next Fibonacci number of a sequence.

```python
model_attention.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)

# Evaluate model
train_mse_attn = model_attention.evaluate(trainX, trainY)
test_mse_attn = model_attention.evaluate(testX, testY)

# Print error
print("Train set MSE with attention = ", train_mse_attn)
print("Test set MSE with attention = ", test_mse_attn)
```

You’ll see the training progress as output and the following:

```
Train set MSE with attention =  5.3511179430643097e-05
Test set MSE with attention =  9.053358553501312e-06
```

You can see that even for this simple example, the mean square error on the test set is lower with the attention layer. You can achieve better results with hyper-parameter tuning and model selection. Try this out on more complex problems and by adding more layers to the network. You can also use the scaler object to scale the numbers back to their original values.
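As a sketch of that last step, min-max scaling and its inverse can be written in a few lines of NumPy. This is a hand-rolled illustration of the arithmetic, assuming the (0, 1) feature range used in this tutorial; with the tutorial's own scaler object, you would call its inverse_transform() method on a 2-D array instead.

```python
import numpy as np

seq = np.array([1., 2., 3., 5., 8., 13., 21., 34., 55., 89.])
lo, hi = seq.min(), seq.max()

scaled = (seq - lo) / (hi - lo)       # forward: map values to [0, 1]
restored = scaled * (hi - lo) + lo    # inverse: back to the original units

print(scaled.min(), scaled.max())     # 0.0 1.0
print(np.allclose(restored, seq))     # True
```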

You can take this example one step further by using LSTM instead of SimpleRNN, or you can build a network via convolution and pooling layers. You can also change this to an encoder-decoder network if you like.

Consolidated Code

The entire code for this tutorial is pasted below if you would like to try it. Note that your outputs would be different from the ones given in this tutorial because of the stochastic nature of this algorithm.

```python
from pandas import read_csv
import numpy as np
from keras import Model
from keras.layers import Layer
import keras.backend as K
from keras.layers import Input, Dense, SimpleRNN
from sklearn.preprocessing import MinMaxScaler
from keras.models import Sequential
from keras.metrics import mean_squared_error

# Prepare data
def get_fib_seq(n, scale_data=True):
    # Get the Fibonacci sequence
    seq = np.zeros(n)
    fib_n1 = 0.0
    fib_n = 1.0
    for i in range(n):
        seq[i] = fib_n1 + fib_n
        fib_n1 = fib_n
        fib_n = seq[i]
    scaler = []
    if scale_data:
        scaler = MinMaxScaler(feature_range=(0, 1))
        seq = np.reshape(seq, (n, 1))
        seq = scaler.fit_transform(seq).flatten()
    return seq, scaler

def get_fib_XY(total_fib_numbers, time_steps, train_percent, scale_data=True):
    dat, scaler = get_fib_seq(total_fib_numbers, scale_data)
    Y_ind = np.arange(time_steps, len(dat), 1)
    Y = dat[Y_ind]
    rows_x = len(Y)
    X = dat[0:rows_x]
    for i in range(time_steps-1):
        temp = dat[i+1:rows_x+i+1]
        X = np.column_stack((X, temp))
    # random permutation with fixed seed
    rand = np.random.RandomState(seed=13)
    idx = rand.permutation(rows_x)
    split = int(train_percent*rows_x)
    train_ind = idx[0:split]
    test_ind = idx[split:]
    trainX = X[train_ind]
    trainY = Y[train_ind]
    testX = X[test_ind]
    testY = Y[test_ind]
    trainX = np.reshape(trainX, (len(trainX), time_steps, 1))
    testX = np.reshape(testX, (len(testX), time_steps, 1))
    return trainX, trainY, testX, testY, scaler

# Set up parameters
time_steps = 20
hidden_units = 2
epochs = 30

# Create a traditional RNN network
def create_RNN(hidden_units, dense_units, input_shape, activation):
    model = Sequential()
    model.add(SimpleRNN(hidden_units, input_shape=input_shape, activation=activation[0]))
    model.add(Dense(units=dense_units, activation=activation[1]))
    model.compile(loss='mse', optimizer='adam')
    return model

model_RNN = create_RNN(hidden_units=hidden_units, dense_units=1, input_shape=(time_steps,1),
                       activation=['tanh', 'tanh'])

# Generate the dataset for the network
trainX, trainY, testX, testY, scaler = get_fib_XY(1200, time_steps, 0.7)
# Train the network
model_RNN.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)

# Evaluate model
train_mse = model_RNN.evaluate(trainX, trainY)
test_mse = model_RNN.evaluate(testX, testY)

# Print error
print("Train set MSE = ", train_mse)
print("Test set MSE = ", test_mse)

# Add attention layer to the deep learning network
class attention(Layer):
    def __init__(self,**kwargs):
        super(attention,self).__init__(**kwargs)

    def build(self,input_shape):
        self.W=self.add_weight(name='attention_weight', shape=(input_shape[-1],1),
                               initializer='random_normal', trainable=True)
        self.b=self.add_weight(name='attention_bias', shape=(input_shape[1],1),
                               initializer='zeros', trainable=True)
        super(attention, self).build(input_shape)

    def call(self,x):
        # Alignment scores. Pass them through tanh function
        e = K.tanh(K.dot(x,self.W)+self.b)
        # Remove dimension of size 1
        e = K.squeeze(e, axis=-1)
        # Compute the weights
        alpha = K.softmax(e)
        # Reshape to tensorFlow format
        alpha = K.expand_dims(alpha, axis=-1)
        # Compute the context vector
        context = x * alpha
        context = K.sum(context, axis=1)
        return context

def create_RNN_with_attention(hidden_units, dense_units, input_shape, activation):
    x=Input(shape=input_shape)
    RNN_layer = SimpleRNN(hidden_units, return_sequences=True, activation=activation)(x)
    attention_layer = attention()(RNN_layer)
    outputs=Dense(dense_units, trainable=True, activation=activation)(attention_layer)
    model=Model(x,outputs)
    model.compile(loss='mse', optimizer='adam')
    return model

# Create the model with attention, train and evaluate
model_attention = create_RNN_with_attention(hidden_units=hidden_units, dense_units=1,
                                            input_shape=(time_steps,1), activation='tanh')
model_attention.summary()

model_attention.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)

# Evaluate model
train_mse_attn = model_attention.evaluate(trainX, trainY)
test_mse_attn = model_attention.evaluate(testX, testY)

# Print error
print("Train set MSE with attention = ", train_mse_attn)
print("Test set MSE with attention = ", test_mse_attn)
```

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Books

- Deep Learning Essentials by Wei Di, Anurag Bhardwaj, and Jianing Wei.

- Deep Learning by Ian Goodfellow, Yoshua Bengio, and Aaron Courville.


Articles

- A Tour of Recurrent Neural Network Algorithms for Deep Learning.

- What is Attention?

- The attention mechanism from scratch.

- An introduction to RNN and the math that powers them.

- Understanding simple recurrent neural networks in Keras.

- How to Develop an Encoder-Decoder Model with Attention in Keras

Summary

In this tutorial, you discovered how to add a custom attention layer to a deep learning network using Keras.

Specifically, you learned:

- How to override the Keras Layer class.

- The method build() is required to add weights to the attention layer.

- The call() method is required for specifying the mapping of inputs to outputs of the attention layer.

- How to add a custom attention layer to the deep learning network built using SimpleRNN.

Do you have any questions about RNNs discussed in this post? Ask your questions in the comments below, and I will do my best to answer.

Hi,I have a question

I have tried to use LSTM instead of simple_RNN with your help.

then I found it only has train loss, I can not find the val_loss.

So how can I monitor the overfitting problem?

I would like to ask you for help.

thank you very much!

Likely you didn’t provide validation data when you called fit(), hence no validation has been performed. See this code snippet:

history = model.fit(X_train, y_train, epochs=200, batch_size=16, validation_data=(X_test,y_test))

Could you tell me how to monitor the overfitting problem with your code?

Or is it that RNN models with attention mechanism do not need to consider this overfitting problem?

I don’t see any tuning hyperparameters involved in your example.

I have fixed the problem

Thank you!

I am getting the following error : “NameError: name ‘Layer’ is not defined”

Do you have “from keras.layers import Layer”?

Well structured and well described with clarity.

Thanks for the clarity explanation and example.

I have some different result when executing the code above.

The attention layer’s output should be (None, 2) above.

However, I get (None, 20, 2) and cause dimensions doesn’t match error.

The attention layer does output the (None, 2)

But when it was concatenated to model it becomes (None, 20, 2)

Could you please tell me what’s the problem?

Thank you.

It is hard to tell what’s wrong. Can you try to copy over the example code at the end of this post and compare with your version?

Thank you for the reply.

I just copy the code at the end and execute it on my PC.

The error is below:

ValueError: Error when checking target: expected dense_2 to have 3 dimensions, but got array with shape (826, 1)

It seems that the attention layer return the sequence?

I just verified and don’t see the error. Did you see which line is triggering that?

Getting same error. I don’t know but the Dense layer is expecting 3 values, but it is getting 2. I try to use Flatten before Dense, i think it is also not working.

Below is the line of code.

ValueError: Error when checking target: expected dense_2 to have 3 dimensions, but got array with shape (826, 1)

–> 123 model_attention.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)

My python version is 3.7.3

Keras 2.3.1

tensorflow 2.2.0

Thanks for the reply.

I just use the colab to run the code and get the right result.

It may caused by environment.

But I’m not sure which part is wrong.

Anyway, thank you for the very useful guide on attention layer.

It’s really helpful.

In a seq2seq model trained for time series forecasting and having a 3-stack LSTM encoder plus a similar decoder, would the following approach be reasonable?

1) Calculate the attention scores after the last encoder LSTM.

2) Condition the first decoder LSTM with attention outputs (initialize LSTM states from context vector).

I have the same question…

I also meet the error “ValueError: Error when checking target: expected dense_9 to have 3 dimensions, but got array with shape (826, 1)” in the line”model_attention.fit(trainX, trainY, epochs=epochs, batch_size=1, verbose=2)”, do you have any suggestion? Thank you.

After the “model_attention.summary()”, the Output Shape of the attention layer and the dense layer is “(None, 20,2), (None,20,1)”, which is different from what you present in this blog “(None 2) and (None, 1) “, is there something wrong?

I solved this problem by upgrading the version of Tensorflow from 1.14.0 to 2.7.0

Tensorflow 1.x is too old to use nowadays. The recent tutorials on this blog are all checked against the 2.x version while the older posts may need revision.

Reply to myself.

I run the code on colab and the result is the same as the author.

I think that might be caused by environment. QAQ

Thanks! That’s a good way to check. Usually for python libraries, you can print “libraryname.__version__” to check the version. That helps to identify when one machine report a different result than another.

I ran your code on my machine but it produces different summary for SimpleRNN+Attention network. The output of attention layer in my summary is

attention_1 (attention) (None,20, 2) 22

This produces an error for dense layer. I don’t know where is the problem!

Hi Nav…Hopefully the issue is now resolved with reinstallation of TensorFlow.

Regards,

Resolved the issue by upgrading tensorflow.

Thank you so much for this amazing tutorial!

You are very welcome Nav!

Regards,

Hi, thank you for the post.

For the class attention(Layer), why is there only one weight vector for the alignment score (e)?

In Bahdanau et al’s paper (https://arxiv.org/pdf/1409.0473.pdf), I see that the alighment model has three weights, v_a, W_a, and U_a.

Furthermore, can you clarify if attention mechanisms are appropriate for non-autoencoder architectures?

Thanks again.

Hi Ray…The following may be of interest to you:

https://machinelearningmastery.com/the-transformer-attention-mechanism/

I noticed Keras has an attention layer implementation. Do you have any plans to use the keras attention layer implementation as an example in one of your blogs? Thank you.

https://keras.io/api/layers/attention_layers/attention/

Hi!

It is my understanding that attention is a way to decrease information in matrixes that are less semantic by multiplying them with a scalar value between 0-1.

In you case, you’re only left with one time-step (the time_step dimension is lost after attention layer).

Instead of:

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

input_2 (InputLayer) [(None, 20, 1)] 0

_________________________________________________________________

simple_rnn_2 (SimpleRNN) (None, 20, 2) 8

_________________________________________________________________

attention_1 (attention) (None, 2) 22

_________________________________________________________________

dense_2 (Dense) (None, 1) 3

=================================================================

shouldn’t the attions yeild these shapes:

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

input_2 (InputLayer) [(None, 20, 1)] 0

_________________________________________________________________

simple_rnn_2 (SimpleRNN) (None, 20, 2) 8

_________________________________________________________________

attention_1 (attention) (None, 20, 2) ???

_________________________________________________________________

dense_2 (Dense) (None, 20, 1) ???

=================================================================

To make compare with a NLP subject. Shouldn’t you keep the whole sentence and multiply irrelevant words with a scalar value close to 0 instead of just choosing the most semantic word?

Hi Martin…Since semantics are the ultimate goal of NLP, I would recommend choosing the most semantic word.

Hi,

Thanks for the implementation of the custom attention layer.

Here I want to print the probability values or the alpha.

If I simply print(alpha), then it’s giving below output.

Tensor(“attention_1/ExpandDims:0”, shape=(None, 20, 1), dtype=float32)

But I want the values of alpha. Could you please help to find out the probability values?

reply to myself:

sorry,I saw the two part data was different

Hello, ,

The code runs for me, but the standard recurrent model usually outperforms the

attention model. Could this be just a version issue or is there a bigger problem?

My tensorflow is version 2.3.1 and my keras is version 2.4.0

Hi Ruan…What are you using as your measures of performance to compare the models?

Hello Dr.Brownlee

I want to build a mode like

…

Input => input_shape => (1, 7, 1) => (batch_size=1, n_steps=7, n_features=1)

“LSTM” => stateful=True

“”Attention”” => Error

“Conv1D” => 128, 3,

Flatten

Dense

…

I can make (without “Attention_layer”)

…

Input

LSTM

Conv1D

Flatten

Dense

…

model.compile(loss=’mse’,

optimizer=’adam’,

metrics=[tf.keras.metrics.RootMeanSquaredError(),

tf.keras.losses.MeanAbsoluteError(),

‘mean_absolute_percentage_error’])

but when, I add “Attention_layer”, I will have error…

/usr/local/lib/python3.7/dist-packages/keras/engine/input_spec.py in assert_input_compatibility(input_spec, inputs, layer_name)

226 ndim = x.shape.rank

227 if ndim is not None and ndim 228 raise ValueError(f’Input {input_index} of layer “{layer_name}” ‘

229 ‘is incompatible with the layer: ‘

230 f’expected min_ndim={spec.min_ndim}, ‘

ValueError: Input 0 of layer “Conv1D_01” is incompatible with the layer: expected min_ndim=3, found ndim=2. Full shape received: (1, 7)

How can/must I change “Attention_layer” that I can put it between LSTM & Conv1D?

Thanks so much.

sorry I forgot something

I used 2 lstm

whit

return_sequences=True,

…

Input => input_shape => (1, 7, 1) => (batch_size=1, n_steps=7, n_features=1)

“LSTM” => stateful=True, return_sequences=True,

“LSTM” => stateful=True

“”Attention”” => Error

“Conv1D” => 128, 3,

Flatten

Dense

Dense

…

and, another question

How can we use “Conv2D”

…

Input => input_shape => (1, 7, 1) => (batch_size=1, n_steps=7, n_features=1)

“LSTM” => stateful=True, return_sequences=True,

“LSTM” => stateful=True

“”Attention”” => Error

“Conv2D” => 128, (3,3),

“Conv2D” => 64, (3,3),

Flatten

..

Thanks

Hi Arash…The following resource will help with understanding how to use Conv2D layers:

https://pyimagesearch.com/2018/12/31/keras-conv2d-and-convolutional-layers/

Thanks a lot for last guiding.

But I don’t have problem with ‘Conv1D’ & ‘Conv2D’.

My problem is;

I don’t know how can I connect a “custom attention layer” to a Conv1D /Conv2D layer. when “custom attention layer” is before Conv1D /Conv2D layer and before of “custom attention layer” is a LSTM layer .

Even when I don’t use code of “custom attention layer” which is in this page, and I use “attention layer” that is in keras, like this:

Input layer => LSTM layer => “custom attention layer” => Conv1D layer => …..

Again code can not compile without error.

I watched same my problem in this page,

https://stackoverflow.com/questions/69959445/connect-an-attention-block-to-the-conv1d-cnn-block-keras

But no one seems to have a solution to this problem.

Thanks for spending your time for reading this comment.

Hi

how to use different evaluation metrics to evaluate the results like MAE and etc?

Hello, thank you for the post.

i try to change this code and make it seq2seq for example but fail do you have any example for seq2seq?

Hi Mohammed…You may find the following resource of interest:

https://machinelearningmastery.com/develop-encoder-decoder-model-sequence-sequence-prediction-keras/

i mean make it many to many 🙂

Thank you for your feedback Mohammed!

A beginner question:

Inside the build() function of the custom attention layer, it has these two lines:

self.W = self.add_weight(name=’attention_weight’, shape=(input_shape[-1], 1), initializer=’random_normal’, trainable=True)

self.b=self.add_weight(name=’attention_bias’, shape=(input_shape[1],1), initializer=’zeros’, trainable=True)

In the 2nd line, the shape param is (input_shape[1], 1). Why input_shape[1]? (vs. input_shape[-1] in the 1st line)

My understanding is that for RNN, input_shape = (time_steps, features).

So input_shape[-1] == input_shape[1]. But why the code wrote it differently among these two lines? What’s the reason behind?

Hi Patrick…Your understanding is correct. The alternative notation you mentioned should work as well. Let us know what you find with your implementation.

So the difference is not intentional, but inconsistency may create confusion to a learner. Thanks!

Thank you for your feedback Patrick!

Hi there,

quick feedback: while the idea of a “Bahdanau self attention” is interesting, the training results for both scenarios (with/without attention) are completely meaningless due to the use of Fibonacci numbers. These numbers grow basically exponential, so linearly scaling 1200 of them to the range of 0…1 doesn’t work. Take a look at your scaled trainX, most numbers are like 1E-200, which is 0 when converted to tf.float32.

Both networks don’t learn anything, the mse values are completely artificial, therefore this tutorial doesn’t show that attention helps. You could take the log before, but then the log Fibonacci sequence is linear, therefore trivial. The Fibonacci numbers simply aren’t useable here.

Hi Markus…Thank you for your feedback!

Came here to give the exact same feedback and write a similar comment. I spent half a day trying to understand what was “wrong” with my code, only to find out eventually that virtually all model predictions were identical and therefore useless. (The models are not learning). After putting some thought into it, I realized you couldn’t possibly expect much better when you convert a range as wide as (1, F_1200) to the (0, 1) range and back. Might be a better idea to use the same dataset as the one in chapter 7 (sunspots) for this chapter. I’m going to try it myself later. Thank you for the book though. I am learning a lot.
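The scaling concern raised in the two comments above can be checked with a short NumPy sketch. It rebuilds the same 1200-number sequence as get_fib_seq(), applies min-max scaling by hand (an assumption standing in for MinMaxScaler), and counts how many scaled values collapse to exactly zero once cast to float32:

```python
import numpy as np

n = 1200
seq = np.zeros(n)
a, b = 0.0, 1.0
for i in range(n):
    # Same recurrence as get_fib_seq(): 1, 2, 3, 5, 8, ...
    seq[i] = a + b
    a, b = b, seq[i]

# Hand-written min-max scaling to the range [0, 1]
scaled = (seq - seq.min()) / (seq.max() - seq.min())
scaled32 = scaled.astype(np.float32)

print("largest value:", seq[-1])
print("fraction exactly zero in float32:", np.mean(scaled32 == 0.0))
print("fraction below 1e-6 in float64:", np.mean(scaled < 1e-6))
```

If most of the scaled inputs and targets are indistinguishable from zero, a very small MSE can be reached by predicting a near-constant value, which is worth keeping in mind when comparing the two models.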

Hey! Thank you for this tutorial.

What would be the diference bwteen appling the attention mechanism after the input and then the RNN compared to placing the attention after the RNN?

Hi Filipa…I would recommend trying both methods. Let us know how your models perform.

Hi, James. thanks for your post. May I ask that what is the difference for the attention layer on this post and the attention layer (Class AttentionDecoder(Recurrent):) on 2017 post : https://machinelearningmastery.com/encoder-decoder-attention-sequence-to-sequence-prediction-keras/

I am a little confuse that is it the attention in this post is a layer to add between encoder and decoder layer, but for the code in 2017 post, the attention layer is combined with de decoder layer? thanks

what is the corresponding Q, K V, in the attention class call method?

Hi J…The following resource may be of interest to you.

https://medium.com/analytics-vidhya/understanding-q-k-v-in-transformer-self-attention-9a5eddaa5960

what is the type of this attention?

Hi Eman…The following resource may be of interest to learn more about the role of attention in deep learning. The concepts presented form the basis for large langauge models such as ChatGPT.

https://machinelearningmastery.com/transformer-models-with-attention/