The Keras Python library for deep learning focuses on creating models as a sequence of layers.

In this post, you will discover the simple components you can use to create neural networks and simple deep learning models using Keras from TensorFlow.


Let’s get started.

- May 2016: First version

- Update Mar/2017: Updated example for Keras 2.0.2, TensorFlow 1.0.1 and Theano 0.9.0.

- Update Jun/2022: Updated code to TensorFlow 2.x. Updated external links.

How to build multi-layer perceptron neural network models with Keras

Photo by George Rex, some rights reserved.

Neural Network Models in Keras

The central object in the Keras library is the model.

The simplest model is defined in the Sequential class, which is a linear stack of Layers.

You can create a Sequential model and define all the layers in the constructor; for example:

```python
from tensorflow.keras.models import Sequential
model = Sequential(...)
```

A more useful idiom is to create a Sequential model and add your layers in the order of the computation you wish to perform; for example:

```python
from tensorflow.keras.models import Sequential
model = Sequential()
model.add(...)
model.add(...)
model.add(...)
```
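To make both idioms concrete, here is a minimal runnable sketch. It assumes TensorFlow 2.x imported as `from tensorflow import keras`, uses `keras.Input(shape=(8,))` to declare the input (an alternative to the `input_shape` argument discussed in the next section), and picks illustrative layer sizes that are not from the original post.

```python
import numpy as np
from tensorflow import keras

# Idiom 1: pass all layers to the Sequential constructor as a list
model_a = keras.Sequential([
    keras.Input(shape=(8,)),
    keras.layers.Dense(16, activation='relu'),
    keras.layers.Dense(1, activation='sigmoid'),
])

# Idiom 2: start with an empty model and add layers in computation order
model_b = keras.Sequential()
model_b.add(keras.Input(shape=(8,)))
model_b.add(keras.layers.Dense(16, activation='relu'))
model_b.add(keras.layers.Dense(1, activation='sigmoid'))

# Both map a batch of 8-feature rows to one output value per row
x = np.random.rand(4, 8).astype('float32')
print(model_a(x).shape, model_b(x).shape)
print(model_a.count_params(), model_b.count_params())
```

The two models have the same architecture; the `add()` style is convenient when layers are chosen in a loop or conditionally.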

Model Inputs

The first layer in your model must specify the shape of the input.

This is the number of input attributes, and it is defined by the input_shape argument, which expects a tuple.

For example, you can specify 8 inputs for a Dense layer as follows:

```python
from tensorflow.keras.layers import Dense
Dense(16, input_shape=(8,))
```
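The input shape determines the size of the first layer's weights. This sketch assumes `from tensorflow import keras` and declares the 8 inputs with `keras.Input(shape=(8,))`, which recent Keras releases recommend over passing `input_shape` to a layer; the effect on the Dense layer is the same.

```python
from tensorflow import keras

model = keras.Sequential([
    keras.Input(shape=(8,)),  # 8 input attributes per sample, given as a tuple
    keras.layers.Dense(16),
])

# The kernel has one weight per (input, unit) pair; the bias has one value per unit
kernel, bias = model.layers[0].get_weights()
print(kernel.shape, bias.shape)  # (8, 16) (16,)
print(model.count_params())      # 8 * 16 + 16 = 144
```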

Model Layers

Layers of different types have a few properties in common, specifically their method of weight initialization and activation functions.

Weight Initialization

The type of initialization used for a layer is specified in the kernel_initializer argument.

Some common types of layer initialization include:

- random_uniform: Weights are initialized to small uniformly random values between -0.05 and 0.05.

- random_normal: Weights are initialized to small Gaussian random values (zero mean and standard deviation of 0.05).

- zeros: All weights are set to zero values.

You can see a full list of the initialization techniques supported on the Usage of initializations page.
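A quick way to check what each initializer does is to build a layer and inspect its freshly initialized kernel. This is a sketch assuming `from tensorflow import keras`; the helper `first_layer_kernel` and the layer size of 100 units are hypothetical choices for illustration.

```python
import numpy as np
from tensorflow import keras

def first_layer_kernel(initializer):
    """Build a one-layer model and return its freshly initialized kernel."""
    model = keras.Sequential([
        keras.Input(shape=(8,)),
        keras.layers.Dense(100, kernel_initializer=initializer),
    ])
    return model.layers[0].get_weights()[0]

uniform = first_layer_kernel('random_uniform')
normal = first_layer_kernel('random_normal')
zeros = first_layer_kernel('zeros')

print(uniform.min(), uniform.max())  # both within [-0.05, 0.05]
print(normal.mean(), normal.std())   # close to 0 and 0.05
print(np.count_nonzero(zeros))       # 0
```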

Activation Function

Keras supports a range of standard neuron activation functions, such as softmax, rectified linear (relu), tanh, and sigmoid.

You typically specify the type of activation function used by a layer in the activation argument, which takes a string value.

You can see a full list of activation functions supported by Keras on the Usage of activations page.

Interestingly, you can also create an Activation object and add it directly to your model after your layer to apply that activation to the output of the layer.
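The two ways of specifying an activation compute the same function. This sketch assumes `from tensorflow import keras` and copies the Dense weights from one model to the other so that their outputs can be compared directly; the layer sizes are illustrative.

```python
import numpy as np
from tensorflow import keras

# Activation given as a string argument on the layer
inline = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(3, activation='relu'),
])

# The same computation: a linear Dense layer followed by a separate Activation layer
separate = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(3),
    keras.layers.Activation('relu'),
])

# Copy the weights so both models compute relu(x @ W + b) with the same W and b
separate.layers[0].set_weights(inline.layers[0].get_weights())

x = np.random.randn(5, 4).astype('float32')
print(np.allclose(inline(x), separate(x)))  # True
```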

Layer Types

There are a large number of core layer types for standard neural networks.

Some common and useful layer types you can choose from are:

- Dense: Fully connected layer and the most common type of layer used on multi-layer perceptron models

- Dropout: Apply dropout to the model, setting a fraction of inputs to zero in an effort to reduce overfitting

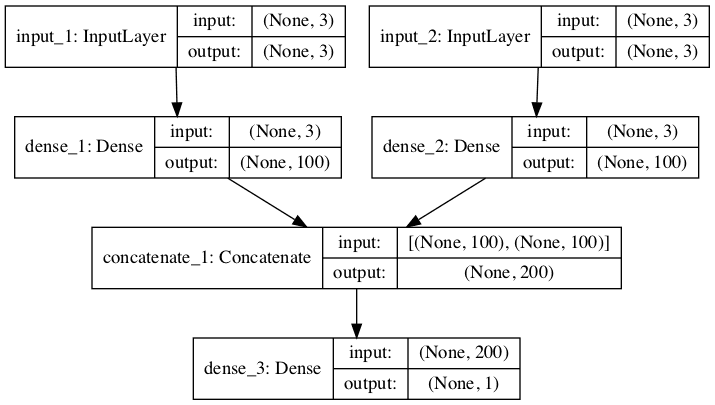

- Concatenate: Combine the outputs from multiple layers as input to a single layer

You can learn about the full list of core Keras layers on the Core Layers page.
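The following sketch shows how Dropout and Concatenate behave, assuming `from tensorflow import keras`. Because Concatenate merges several inputs, it is used here with the functional `keras.Model` API rather than a Sequential model; the branch sizes (3 and 5 features) are hypothetical.

```python
import numpy as np
from tensorflow import keras

# Dropout is only active during training; at inference it passes inputs through
dropout = keras.layers.Dropout(0.5)
x = np.ones((1, 1000), dtype='float32')
train_out = np.asarray(dropout(x, training=True))
infer_out = np.asarray(dropout(x, training=False))
# In training mode about half the values are zeroed and survivors are scaled by 1 / (1 - 0.5)
print(np.unique(train_out))
print(np.array_equal(infer_out, x))  # True

# Concatenate joins two branches along the last axis (3 + 5 = 8 features)
input_a = keras.Input(shape=(3,))
input_b = keras.Input(shape=(5,))
merged = keras.layers.Concatenate()([input_a, input_b])
output = keras.layers.Dense(1)(merged)
model = keras.Model(inputs=[input_a, input_b], outputs=output)

pred = model([np.zeros((2, 3), 'float32'), np.zeros((2, 5), 'float32')])
print(tuple(merged.shape), tuple(pred.shape))
```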

Model Compilation

Once you have defined your model, it needs to be compiled.

Compiling prepares the structures TensorFlow needs to run your model during training. Specifically, TensorFlow converts your model into a graph so that training can be carried out efficiently.

You compile your model using the compile() function, which accepts three important arguments:

- Model optimizer

- Loss function

- Metrics

```python
model.compile(optimizer=..., loss=..., metrics=...)
```
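Filled in for a small binary classifier, a compile call might look like the sketch below. It assumes `from tensorflow import keras`; the architecture and the choice of `'adam'` are illustrative, and note that metrics are passed as a list.

```python
from tensorflow import keras

model = keras.Sequential([
    keras.Input(shape=(8,)),
    keras.layers.Dense(16, activation='relu'),
    keras.layers.Dense(1, activation='sigmoid'),
])

# The three key arguments: optimizer, loss function, and a list of metrics
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
print(type(model.optimizer).__name__)
```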

1. Model Optimizers

The optimizer is the search technique used to update weights in your model.

You can create an optimizer object and pass it to the compile function via the optimizer argument. This allows you to configure the optimization procedure with its own arguments, such as learning rate. For example:

```python
from tensorflow.keras.optimizers import SGD
sgd = SGD(...)
model.compile(optimizer=sgd)
```

You can also use the default parameters of the optimizer by specifying the name of the optimizer to the optimizer argument. For example:

```python
model.compile(optimizer='sgd')
```
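Side by side, the two styles look like this. The sketch assumes `from tensorflow import keras`; `build_model` is a hypothetical helper, and the learning rate of 0.01 and momentum of 0.9 are example values, not recommendations from the post.

```python
from tensorflow import keras

def build_model():
    """Hypothetical helper: a tiny model to compile two different ways."""
    return keras.Sequential([
        keras.Input(shape=(8,)),
        keras.layers.Dense(1, activation='sigmoid'),
    ])

# An optimizer object lets you configure its own arguments
sgd = keras.optimizers.SGD(learning_rate=0.01, momentum=0.9)
configured = build_model()
configured.compile(optimizer=sgd, loss='binary_crossentropy')

# A string name uses that optimizer with its default parameters
default = build_model()
default.compile(optimizer='sgd', loss='binary_crossentropy')

print(configured.optimizer.get_config()['momentum'])
print(default.optimizer.get_config()['momentum'])  # default momentum is 0.0
```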

Some popular gradient descent optimizers you might want to choose from include:

- SGD: stochastic gradient descent, with support for momentum

- RMSprop: adaptive learning rate optimization method proposed by Geoff Hinton

- Adam: Adaptive Moment Estimation (Adam) that also uses adaptive learning rates

You can learn about all of the optimizers supported by Keras on the Usage of optimizers page.

You can learn more about different gradient descent methods in the Gradient descent optimization algorithms section of Sebastian Ruder’s post, An overview of gradient descent optimization algorithms.
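To see what "SGD with momentum" means mechanically, here is a plain-Python sketch of the update rule given in the Keras SGD documentation (`velocity = momentum * velocity - learning_rate * g; w = w + velocity`), applied to a hypothetical one-dimensional objective f(w) = (w - 3)^2. It illustrates the idea only and is not how Keras implements the optimizer internally.

```python
def sgd_momentum(grad_fn, w, learning_rate=0.1, momentum=0.9, steps=200):
    """Minimize a 1-D function with gradient descent plus momentum.

    velocity keeps a decaying sum of past gradients, which smooths the
    update direction; with momentum=0 this reduces to plain SGD.
    """
    velocity = 0.0
    for _ in range(steps):
        velocity = momentum * velocity - learning_rate * grad_fn(w)
        w = w + velocity
    return w

# Hypothetical toy objective f(w) = (w - 3)^2, whose gradient is 2 * (w - 3)
grad = lambda w: 2.0 * (w - 3.0)
print(sgd_momentum(grad, w=0.0))                # approaches the minimum at 3.0
print(sgd_momentum(grad, w=0.0, momentum=0.0))  # plain SGD also approaches 3.0
```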

2. Model Loss Functions

The loss function, also called the objective function, is the evaluation of the model used by the optimizer to navigate the weight space.

You can specify the name of the loss function to use in the compile() function via the loss argument. Some common examples include:

- 'mse': for mean squared error

- 'binary_crossentropy': for binary logarithmic loss (logloss)

- 'categorical_crossentropy': for multi-class logarithmic loss (logloss)

You can learn more about the loss functions supported by Keras on the Losses page.
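The formulas behind these three losses are short. This NumPy sketch computes them for small hand-made examples; the clipping constant `EPS = 1e-7` is an assumption added to avoid `log(0)`, and Keras applies similar clipping internally, so its results can differ slightly at the extremes.

```python
import numpy as np

EPS = 1e-7  # clip probabilities away from 0 and 1 to avoid log(0)

def mse(y_true, y_pred):
    return np.mean((y_true - y_pred) ** 2)

def binary_crossentropy(y_true, y_pred):
    p = np.clip(y_pred, EPS, 1 - EPS)
    return np.mean(-(y_true * np.log(p) + (1 - y_true) * np.log(1 - p)))

def categorical_crossentropy(y_true, y_pred):
    # y_true is one-hot; each row of y_pred is a probability vector
    p = np.clip(y_pred, EPS, 1.0)
    return np.mean(-np.sum(y_true * np.log(p), axis=-1))

print(mse(np.array([1.0, 2.0]), np.array([1.0, 4.0])))                            # 2.0
print(binary_crossentropy(np.array([1.0, 0.0]), np.array([0.9, 0.1])))            # about -log(0.9) = 0.1054
print(categorical_crossentropy(np.array([[0, 1, 0]]), np.array([[0.2, 0.7, 0.1]])))  # about -log(0.7) = 0.3567
```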

3. Model Metrics

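Metrics are evaluated by the model during training and reported so you can monitor progress; unlike the loss, they are not used by the optimizer to update the weights.

Keras supports many metrics beyond accuracy, such as 'mse' and 'mae' for regression. You pass them to compile() as a list via the metrics argument, for example metrics=['accuracy'].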
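As an illustration of what a metric computes, here is a NumPy sketch of accuracy for a binary classifier with a sigmoid output: predictions above a 0.5 threshold count as class 1 and are compared with the labels. This mirrors the binary accuracy that Keras uses when you request 'accuracy' with a binary cross-entropy loss; the labels and probabilities below are made up.

```python
import numpy as np

def binary_accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of samples whose thresholded prediction matches the label."""
    y_pred = (y_prob > threshold).astype(y_true.dtype)
    return np.mean(y_pred == y_true)

y_true = np.array([1, 0, 1, 1])
y_prob = np.array([0.8, 0.3, 0.4, 0.9])  # e.g. sigmoid outputs from a model
print(binary_accuracy(y_true, y_prob))   # 0.75 (the third sample is misclassified)
```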

Model Training

The model is trained on NumPy arrays using the fit() function; for example:

```python
model.fit(X, y, epochs=..., batch_size=...)
```

When training, you specify both the number of epochs and the batch size:

- Epochs (epochs): the number of times the model is exposed to the entire training dataset.

- Batch Size (batch_size): the number of training instances shown to the model before a weight update is performed.
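Together, the two settings determine how many weight updates training performs. This small sketch (the helper name `weight_updates` is hypothetical) counts them, assuming the last, smaller batch of each epoch is kept, which is the Keras default behavior.

```python
import math

def weight_updates(n_samples, batch_size, epochs):
    """Number of weight updates performed by mini-batch training.

    Each epoch splits the data into ceil(n_samples / batch_size) batches
    (the last batch may be smaller) and performs one update per batch.
    """
    batches_per_epoch = math.ceil(n_samples / batch_size)
    return batches_per_epoch * epochs

print(weight_updates(n_samples=1000, batch_size=32, epochs=10))  # 32 batches x 10 epochs = 320
```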

The fit function also allows for some basic evaluation of the model during training. You can set the validation_split value to hold back a fraction of the training dataset for validation to be evaluated in each epoch or provide a validation_data tuple of (X, y) data to evaluate.

Fitting the model returns a history object with details and metrics calculated for the model in each epoch. This can be used for graphing model performance.
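Here is a minimal end-to-end sketch of fit() with validation_split, assuming `from tensorflow import keras`. The data is randomly generated (200 samples, 8 features, a made-up labeling rule) purely so the example runs; `verbose=0` just silences the progress output.

```python
import numpy as np
from tensorflow import keras

# Hypothetical random data: 200 samples, 8 features, binary labels
rng = np.random.default_rng(7)
X = rng.random((200, 8)).astype('float32')
y = (X.sum(axis=1) > 4).astype('float32')

model = keras.Sequential([
    keras.Input(shape=(8,)),
    keras.layers.Dense(16, activation='relu'),
    keras.layers.Dense(1, activation='sigmoid'),
])
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])

# Hold back 20% of the data for validation; metrics are recorded every epoch
history = model.fit(X, y, epochs=5, batch_size=32, validation_split=0.2, verbose=0)

print(sorted(history.history.keys()))
print(len(history.history['loss']))  # one entry per epoch
```

Each list in `history.history` can be plotted against the epoch number to compare training and validation performance.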

Model Prediction

Once you have trained your model, you can use it to make predictions on test data or new data.

There are a number of different output types you can calculate from your trained model, each calculated using a different function call on your model object. For example:

- model.evaluate(): To calculate the loss (and any metrics set in compile()) for the input data

- model.predict(): To generate network output for the input data

For example, if you provided a batch of data X and the expected output y, you can use evaluate() to calculate the loss metric (the one you defined with compile() before). But for a batch of new data X, you can obtain the network output with predict(). It may not be the output you want, but it will be the output of your network. For example, a classification problem will probably output a softmax vector for each sample. You will need to use numpy.argmax() to convert the softmax vector into class labels.
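The sketch below shows evaluate(), predict() and numpy.argmax() on a hypothetical, untrained 3-class classifier with 4 input features (it assumes `from tensorflow import keras`). Because the model is untrained, the predicted labels are meaningless; the point is the shapes and the conversion from softmax vectors to class labels.

```python
import numpy as np
from tensorflow import keras

# Hypothetical 3-class classifier on 4 input features (untrained, for shapes only)
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(8, activation='relu'),
    keras.layers.Dense(3, activation='softmax'),
])
model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

X_new = np.random.rand(5, 4).astype('float32')
y_new = np.eye(3)[[0, 1, 2, 1, 0]]  # one-hot targets for categorical_crossentropy

results = model.evaluate(X_new, y_new, verbose=0, return_dict=True)  # loss and metrics
probs = model.predict(X_new, verbose=0)  # one softmax vector per sample
labels = np.argmax(probs, axis=1)        # convert softmax vectors into class labels
print(results)
print(probs.shape, labels)
```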


Summarize the Model

Once you are happy with your model, you can finalize it.

You may wish to output a summary of your model. For example, you can display a summary of a model by calling the summary function:

```python
model.summary()
```

You can also retrieve a summary of the model configuration using the get_config() function:

```python
model.get_config()
```
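The configuration returned by get_config() is a plain Python dictionary describing the architecture (not the trained weights), so it can be used to rebuild an untrained copy of the model. A sketch, assuming `from tensorflow import keras` and an illustrative architecture:

```python
from tensorflow import keras

model = keras.Sequential([
    keras.Input(shape=(8,)),
    keras.layers.Dense(16, activation='relu'),
    keras.layers.Dense(1, activation='sigmoid'),
])
model.summary()  # prints each layer, its output shape and parameter count

# The config describes the architecture only
config = model.get_config()
print([layer['class_name'] for layer in config['layers']])

# Rebuild a fresh, untrained model with the same architecture
clone = keras.Sequential.from_config(config)
print(clone.count_params() == model.count_params())  # True
```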

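Finally, you can create an image of your model structure directly with plot_model(). This function requires the pydot package and the Graphviz software to be installed:

```python
from tensorflow.keras.utils import plot_model
plot_model(model, to_file='model.png')
```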

Resources

You can learn more about how to create a simple neural network and deep learning models in Keras using the following resources:

Summary

In this post, you discovered the Keras API that you can use to create artificial neural networks and deep learning models.

Specifically, you learned about the life cycle of a Keras model, including:

- Constructing a model

- Creating and adding layers, including weight initialization and activation

- Compiling models, including optimization method, loss function, and metrics

- Fitting models, including epochs and batch size

- Model predictions

- Summarizing the model

If you have any questions about Keras for Deep Learning or this post, ask in the comments, and I will do my best to answer them.

Hi, Jason.

For the merge layer, do you know how to use mode='dot'? I want to merge the outputs of two embedding layers by dot multiplication.

The specific question is here: http://stackoverflow.com/questions/39919549/how-to-get-dot-product-of-the-outputs-of-two-embedding-layers-in-keras

Thanks.

Oh, I just figured out that I should use 'mul' instead of 'dot'.

Do you think it makes sense that ‘mul’ can also capture the interaction of the two embeddings as ‘dot’ does in SVD? (The output of ‘mul’ will be input into further LSTM layers.)

Great question Chong, sorry I’ve not experimented with merge layers yet.

Does anyone know if the order of inputs into a merge layer has any significance? Assuming the merged layer goes straight into one dense layer?

Not offhand. You could email the Google group, use trial and error with controlled experiments, or review the source code to find out.

Hi Jason,

Thanks for this tutorial and all your other articles in the blog – they are super helpful and awesome!

I have a question: What is the recommended number of hidden units in a single hidden (Dense) layer? For an MLP, shouldn't it be less than the number of features in the input layer (the input_dim parameter)? So the number of units in the above example should be less than 8, right? So how does 16 work? I am referring to this code: Dense(16, input_dim=8)

We cannot configure a neural network analytically, you must use trial and error to discover what works on your specific dataset.

Ah okay cool. Thanks Jason!

What about using this approach? https://github.com/melodyguan/enas

Perhaps you can summarize it for me Joe?

Thanks a lot for this great post.

I tried to run the example that you provided. I noticed that I need to use “from keras.utils.vis_utils import plot_model” instead of “from keras.utils.visualize_util import plot”. I thought it may be useful for other people if they have similar issue, so I decided to share it here.

Thanks, fixed.

No worries, I guess the line after that needs to be plot_model as well.

If we have multiple hidden layers, how can we explore the best combination of activation functions? For example, for a binary classification, I would think of sigmoid function for output. However, I do not know if it is better to use sigmoid for the hidden layers, or I need to try different functions to see which one is the best for my data.

You must design experiments to test different configurations to see what works best on your specific data.

Thanks for the article, Jason! Well written. Huge fan of Keras.

“We cannot configure a neural network analytically, you must use trial and error to discover what works on your specific dataset.”

Just an FYI to all who read this: trial and error is a pain in the arse, but a great way to learn the zoo. A must, I would say.

However, here is some code that you may find useful. A simple GA that optimizes your learning pipeline for scikit-learn. https://github.com/mikewlange/KETTLE – Originally called TPot and developed by Computational Genetics Lab http://epistasis.org/ – it’s limited to scikit-learn Classification and Regression pipelines. But I’m building in Keras support – one day! lol.

Cheers,

mike

Thanks for sharing Michael!

I also have more suggestions here:

https://machinelearningmastery.com/faq/single-faq/how-many-layers-and-nodes-do-i-need-in-my-neural-network

It is believed that initializing an MLP with all zero weights is not a good idea. Why?

I explain why here:

https://machinelearningmastery.com/why-initialize-a-neural-network-with-random-weights/

Hello Dr. Jason,

Fantastic blog…

I want to predict all of my system features one step ahead; the data is temporal.

I have 100 features that I use as input, and 100 features as output (predicting all the features one step ahead).

I built an LSTM model with 10 hidden layers to predict the next step.

Each hidden layer contains 200 neurons, and in each layer my activation function is relu.

The output layer’s activation function is linear.

The loss function is MAE.

During the training phase I noticed some strange issues:

1. I received a low loss value but also low accuracy. Can you explain how that is possible?

2. During training, when I insert a different dataset with the same features, I suddenly receive very high loss values (a change from 0.01 to 2909090). Do you have any idea why?

3. Is the number of neurons in each layer OK? Do you have any rule of thumb for choosing the number of neurons relative to the input layer?

Thanks

My best advice for getting started with neural network models for time series forecasting is here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

I do not think anyone could write a better introduction than this.

Thanks.

Awesome tutorial! How can I create multiple outputs in an MLP model? All your examples show just one output.

Thanks

Change the number of nodes in the output layer to the number of outputs required. That’s it.

Thanks for the tutorial. I am unable to use Sequential() in my Jupyter notebook. I know this is not the right platform, but please help me out. The error message is “Using TensorFlow backend. No module named ‘tensorflow’”. I then installed TensorFlow, but the issue persists.

I am also open to suggestions for any other Python tool in which the deep learning packages are easy to install.

I recommend not using a notebook:

https://machinelearningmastery.com/faq/single-faq/why-dont-use-or-recommend-notebooks

This will help you setup your workstation:

https://machinelearningmastery.com/setup-python-environment-machine-learning-deep-learning-anaconda/