This tutorial is designed for anyone who wants to understand how recurrent neural networks (RNNs) work and how to use them via the Keras deep learning library. While the Keras library provides all the methods required for solving problems and building applications, it is also important to gain insight into how everything works. In this article, the computations taking place in the RNN model are shown step by step. Next, a complete end-to-end system for time series prediction is developed.

After completing this tutorial, you will know:

- The structure of an RNN

- How an RNN computes the output when given an input

- How to prepare data for a SimpleRNN in Keras

- How to train a SimpleRNN model


Let’s get started.

Understanding simple recurrent neural networks in Keras. Photo by Mehreen Saeed, some rights reserved.

Tutorial Overview

This tutorial is divided into two parts; they are:

- The structure of the RNN

  - Different weights and biases associated with different layers of the RNN

  - How computations are performed to compute the output when given an input

- A complete application for time series prediction

Prerequisites

It is assumed that you have a basic understanding of RNNs before you start implementing them. An Introduction to Recurrent Neural Networks and the Math That Powers Them gives you a quick overview of RNNs.

Let’s now get right down to the implementation part.

Import Section

To start the implementation of RNNs, let’s add the import section.

```python
from pandas import read_csv
import numpy as np
from keras.models import Sequential
from keras.layers import Dense, SimpleRNN
from sklearn.preprocessing import MinMaxScaler
from sklearn.metrics import mean_squared_error
import math
import matplotlib.pyplot as plt
```


Keras SimpleRNN

The function below returns a model that includes a SimpleRNN layer and a Dense layer for learning sequential data. The input_shape parameter specifies the shape of each input sample as (time_steps x features). We’ll simplify everything and use univariate data, i.e., one feature only; the time steps are discussed below.

```python
def create_RNN(hidden_units, dense_units, input_shape, activation):
    model = Sequential()
    model.add(SimpleRNN(hidden_units, input_shape=input_shape,
                        activation=activation[0]))
    model.add(Dense(units=dense_units, activation=activation[1]))
    model.compile(loss='mean_squared_error', optimizer='adam')
    return model

demo_model = create_RNN(2, 1, (3,1), activation=['linear', 'linear'])
```

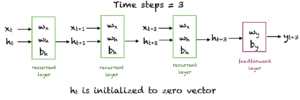

The object demo_model is returned with two hidden units created via the SimpleRNN layer and one dense unit created via the Dense layer. The input_shape is set at 3×1, and a linear activation function is used in both layers for simplicity. Just to recall, the linear activation function $f(x) = x$ makes no change in the input. The network looks as follows:

If we have $m$ hidden units ($m=2$ in the above case), then:

- Input: $x \in R$

- Hidden unit: $h \in R^m$

- Weights for the input units: $w_x \in R^m$

- Weights for the hidden units: $w_h \in R^{m \times m}$

- Bias for the hidden units: $b_h \in R^m$

- Weight for the dense layer: $w_y \in R^m$

- Bias for the dense layer: $b_y \in R$
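As a quick check on the shapes listed above, the number of trainable parameters follows directly from them. The sketch below is plain Python; the helper name `count_simple_rnn_params` is hypothetical (it is not part of Keras), and it assumes one SimpleRNN layer followed by one Dense layer, as in this tutorial.

```python
def count_simple_rnn_params(hidden_units, features=1, dense_units=1):
    """Count trainable parameters for SimpleRNN(hidden_units) -> Dense(dense_units)."""
    m = hidden_units
    w_x = features * m      # input-to-hidden weights
    w_h = m * m             # hidden-to-hidden (recurrent) weights
    b_h = m                 # hidden bias
    w_y = m * dense_units   # hidden-to-output weights
    b_y = dense_units       # output bias
    return w_x + w_h + b_h + w_y + b_y


# Two hidden units, one feature, one dense unit (the m = 2 case)
print(count_simple_rnn_params(2))  # 2 + 4 + 2 + 2 + 1 = 11
```

If Keras is installed, calling `summary()` on a model built this way should report the same total.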

Let’s look at the above weights. Note: As the weights are randomly initialized, the results posted here will be different from yours. The important thing is to learn what the structure of each object being used looks like and how it interacts with others to produce the final output.

```python
wx = demo_model.get_weights()[0]
wh = demo_model.get_weights()[1]
bh = demo_model.get_weights()[2]
wy = demo_model.get_weights()[3]
by = demo_model.get_weights()[4]

print('wx = ', wx, ' wh = ', wh, ' bh = ', bh, ' wy =', wy, 'by = ', by)
```

```
wx =  [[ 0.18662322 -1.2369459 ]]  wh =  [[ 0.86981213 -0.49338293]
 [ 0.49338293  0.8698122 ]]  bh =  [0. 0.]  wy = [[-0.4635998]
 [ 0.6538409]] by =  [0.]
```

Now let’s do a simple experiment to see how the SimpleRNN and Dense layers produce an output. Keep this figure in view.

We’ll input x for three time steps and let the network generate an output. The values of the hidden units at time steps 1, 2, and 3 will be computed. $h_0$ is initialized to the zero vector. The output $o_3$ is computed from $h_3$ and $w_y$. An activation function is not required as we are using linear units.
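Written out with the symbols defined earlier, the computation described above can be summarized as follows. This is a sketch that assumes row-vector conventions (matching the `np.dot(h, wh)` order used in the code) and writes the activation as $f$, which is the identity $f(x) = x$ for linear units:

$$
h_0 = \mathbf{0}, \qquad h_t = f\left(x_t w_x + h_{t-1} w_h + b_h\right), \quad t = 1, 2, 3
$$

$$
o_3 = h_3 w_y + b_y
$$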

```python
x = np.array([1, 2, 3])
# Reshape the input to the required sample_size x time_steps x features
x_input = np.reshape(x,(1, 3, 1))
y_pred_model = demo_model.predict(x_input)


m = 2
h0 = np.zeros(m)
# h0 is all zeros, so np.dot(h0, wh) is also zero and is written simply as h0
h1 = np.dot(x[0], wx) + h0 + bh
h2 = np.dot(x[1], wx) + np.dot(h1,wh) + bh
h3 = np.dot(x[2], wx) + np.dot(h2,wh) + bh
o3 = np.dot(h3, wy) + by

print('h1 = ', h1,'h2 = ', h2,'h3 = ', h3)

print("Prediction from network ", y_pred_model)
print("Prediction from our computation ", o3)
```

```
h1 =  [[ 0.18662322 -1.23694587]] h2 =  [[-0.07471441 -3.64187904]] h3 =  [[-1.30195881 -6.84172557]]
Prediction from network  [[-3.8698118]]
Prediction from our computation  [[-3.86981216]]
```
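The same step-by-step computation can be wrapped in a loop over time steps. The sketch below uses only NumPy; the function name `simple_rnn_forward` is hypothetical, and the weights are copied from the printed values above (yours will differ, since they are randomly initialized).

```python
import numpy as np


def simple_rnn_forward(x_seq, wx, wh, bh, wy, by, activation=lambda z: z):
    """Manual forward pass; x_seq has shape (time_steps, features)."""
    h = np.zeros(wh.shape[0])          # h_0 is the zero vector
    for x_t in x_seq:                  # x_t has shape (features,)
        h = activation(np.dot(x_t, wx) + np.dot(h, wh) + bh)
    return np.dot(h, wy) + by          # the output uses the last hidden state only


# Weights copied from the printed output above (they differ on every run)
wx = np.array([[0.18662322, -1.2369459]])
wh = np.array([[0.86981213, -0.49338293],
               [0.49338293, 0.8698122]])
bh = np.zeros(2)
wy = np.array([[-0.4635998], [0.6538409]])
by = np.zeros(1)

x_seq = np.array([1, 2, 3]).reshape(3, 1)   # 3 time steps, 1 feature
o3 = simple_rnn_forward(x_seq, wx, wh, bh, wy, by)
print(o3)  # approximately [-3.8698], matching the prediction above
```

Because each `x_t` is a full row of features, the same function should also work with more than one feature per time step, provided `wx` has shape `(features, m)`.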

Running the RNN on Sunspots Dataset

Now that we understand how the SimpleRNN and Dense layers are put together, let’s run a complete RNN on a simple time series dataset. We’ll need to follow these steps:

- Read the dataset from a given URL

- Split the data into training and test sets

- Prepare the input to the required Keras format

- Create an RNN model and train it

- Make the predictions on training and test sets and print the root mean square error on both sets

- View the result

Step 1, 2: Reading Data and Splitting Into Train and Test

The following function reads the data from a given URL and splits it into train and test sets according to a given percentage. It returns single-dimensional arrays for train and test data after scaling the data between 0 and 1 using MinMaxScaler from scikit-learn.

```python
# Parameter split_percent defines the ratio of training examples
def get_train_test(url, split_percent=0.8):
    df = read_csv(url, usecols=[1], engine='python')
    data = np.array(df.values.astype('float32'))
    scaler = MinMaxScaler(feature_range=(0, 1))
    data = scaler.fit_transform(data).flatten()
    n = len(data)
    # Point for splitting data into train and test
    split = int(n*split_percent)
    train_data = data[range(split)]
    test_data = data[split:]
    return train_data, test_data, data

sunspots_url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/monthly-sunspots.csv'
train_data, test_data, data = get_train_test(sunspots_url)
```
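Note that the function above fits the scaler on the full series before splitting, which is convenient but lets the test values influence the scaling. A minimal sketch of one alternative, splitting first and scaling with training statistics only, is shown below. It uses plain NumPy instead of MinMaxScaler; the function name `split_then_scale` and the toy values are assumptions for illustration, not part of the tutorial's code.

```python
import numpy as np


def split_then_scale(series, split_percent=0.8):
    """Split a 1-D series chronologically, then min-max scale with training statistics only."""
    series = np.asarray(series, dtype="float32")
    split = int(len(series) * split_percent)
    train, test = series[:split], series[split:]
    lo, hi = train.min(), train.max()       # statistics from the training part only
    scale = hi - lo if hi > lo else 1.0     # guard against a constant series
    return (train - lo) / scale, (test - lo) / scale, (lo, hi)


# Toy data standing in for the sunspots column (values are made up)
toy = np.array([10, 20, 30, 40, 50, 60, 70, 80, 90, 100])
train_s, test_s, (lo, hi) = split_then_scale(toy)
print(len(train_s), len(test_s))     # 8 2
print(train_s.min(), train_s.max())  # 0.0 1.0
print(test_s)                        # above 1 here: the test values exceed the training range
```

With this approach, scaled test values can fall outside [0, 1], which is expected whenever the test period contains values beyond the training range.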

Step 3: Reshaping Data for Keras

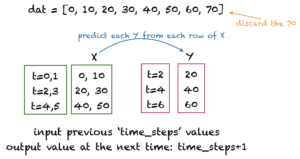

The next step is to prepare the data for Keras model training. The input array should be shaped as: total_samples x time_steps x features.

There are many ways of preparing time series data for training. We’ll create input rows with non-overlapping time steps. An example for time steps = 2 is shown in the figure below. Here, time steps denotes the number of previous time steps to use for predicting the next value of the time series data.

The following function get_XY() takes a one-dimensional array as input and converts it to the required input X and target Y arrays. We’ll use 12 time_steps for the sunspots dataset, so each input row covers one year of monthly observations. (The solar cycle itself is roughly 11 years, so 12 is a convenient window rather than the cycle length.) You can experiment with other values of time_steps.

```python
# Prepare the input X and target Y
def get_XY(dat, time_steps):
    # Indices of target array
    Y_ind = np.arange(time_steps, len(dat), time_steps)
    Y = dat[Y_ind]
    # Prepare X
    rows_x = len(Y)
    X = dat[range(time_steps*rows_x)]
    X = np.reshape(X, (rows_x, time_steps, 1))
    return X, Y

time_steps = 12
trainX, trainY = get_XY(train_data, time_steps)
testX, testY = get_XY(test_data, time_steps)
```
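To make the reshaping concrete, here is a small worked example on a toy array with time steps = 2. The first function repeats the logic of get_XY() so that the block runs on its own; the second, `get_XY_overlapping`, is a hypothetical variant (not used in this tutorial) that slides the window one step at a time, one of the other ways of preparing the data.

```python
import numpy as np


def get_XY(dat, time_steps):
    """Same logic as get_XY() above: non-overlapping windows, next value as target."""
    Y_ind = np.arange(time_steps, len(dat), time_steps)
    Y = dat[Y_ind]
    rows_x = len(Y)
    X = dat[:time_steps * rows_x]
    return np.reshape(X, (rows_x, time_steps, 1)), Y


def get_XY_overlapping(dat, time_steps):
    """Hypothetical variant: slide the window one step at a time."""
    rows_x = len(dat) - time_steps
    X = np.array([dat[i:i + time_steps] for i in range(rows_x)])
    Y = dat[time_steps:]
    return np.reshape(X, (rows_x, time_steps, 1)), Y


toy = np.arange(10)              # 0, 1, ..., 9
X, Y = get_XY(toy, 2)
print(X[:, :, 0].tolist())       # [[0, 1], [2, 3], [4, 5], [6, 7]]
print(Y.tolist())                # [2, 4, 6, 8]

Xo, Yo = get_XY_overlapping(toy, 2)
print(Xo.shape, Yo.shape)        # (8, 2, 1) (8,)
```

The non-overlapping version yields fewer training rows (4 versus 8 here), which keeps training fast at the cost of using each observation only once as part of an input.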

Step 4: Create RNN Model and Train

For this step, you can reuse your create_RNN() function that was defined above.

```python
model = create_RNN(hidden_units=3, dense_units=1, input_shape=(time_steps,1),
                   activation=['tanh', 'tanh'])
model.fit(trainX, trainY, epochs=20, batch_size=1, verbose=2)
```

Step 5: Compute and Print the Root Mean Square Error

The function print_error() computes the root mean square error (RMSE) between the actual and predicted values.

```python
def print_error(trainY, testY, train_predict, test_predict):
    # Error of predictions
    train_rmse = math.sqrt(mean_squared_error(trainY, train_predict))
    test_rmse = math.sqrt(mean_squared_error(testY, test_predict))
    # Print RMSE
    print('Train RMSE: %.3f RMSE' % (train_rmse))
    print('Test RMSE: %.3f RMSE' % (test_rmse))

# make predictions
train_predict = model.predict(trainX)
test_predict = model.predict(testX)
# Print root mean square error
print_error(trainY, testY, train_predict, test_predict)
```

```
Train RMSE: 0.058 RMSE
Test RMSE: 0.077 RMSE
```
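These errors are in scaled units, because the data was mapped to the range 0 to 1. The sketch below shows the RMSE arithmetic in plain NumPy and how a scaled error converts back to original units under min-max scaling. The helper `rmse`, the sample values, and the `data_min`/`data_max` numbers are all made up for illustration; they are not taken from the sunspots data.

```python
import numpy as np


def rmse(actual, predicted):
    """Root mean square error; both inputs are flattened first."""
    actual = np.asarray(actual, dtype=float).ravel()
    predicted = np.asarray(predicted, dtype=float).ravel()
    return float(np.sqrt(np.mean((actual - predicted) ** 2)))


# Made-up scaled values, only to illustrate the arithmetic
actual_scaled = np.array([0.10, 0.20, 0.30, 0.40])
pred_scaled = np.array([[0.10], [0.25], [0.30], [0.35]])   # shape (n, 1), like model.predict
err_scaled = rmse(actual_scaled, pred_scaled)

# Assumed (hypothetical) minimum and maximum of the unscaled series
data_min, data_max = 0.0, 250.0
err_original = err_scaled * (data_max - data_min)   # min-max scaling is linear
print(round(err_scaled, 4), round(err_original, 2))  # 0.0354 8.84
```

Flattening matters in a hand-written version: subtracting an array of shape `(n, 1)` from one of shape `(n,)` would broadcast to an `(n, n)` matrix and silently give the wrong error.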

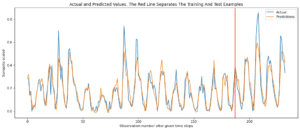

Step 6: View the Result

The following function plots the actual target values and the predicted values. The red line separates the training and test data points.

```python
# Plot the result
def plot_result(trainY, testY, train_predict, test_predict):
    actual = np.append(trainY, testY)
    predictions = np.append(train_predict, test_predict)
    rows = len(actual)
    plt.figure(figsize=(15, 6), dpi=80)
    plt.plot(range(rows), actual)
    plt.plot(range(rows), predictions)
    plt.axvline(x=len(trainY), color='r')
    plt.legend(['Actual', 'Predictions'])
    plt.xlabel('Observation number after given time steps')
    plt.ylabel('Sunspots scaled')
    plt.title('Actual and Predicted Values. The Red Line Separates The Training And Test Examples')

plot_result(trainY, testY, train_predict, test_predict)
```

The following plot is generated:

Consolidated Code

Given below is the entire code for this tutorial. Try this out at your end and experiment with different hidden units and time steps. You can add a second SimpleRNN to the network and see how it behaves. You can also use the scaler object to rescale the data back to its normal range.

```python
# Imports (same as in the import section above)
from pandas import read_csv
import numpy as np
from keras.models import Sequential
from keras.layers import Dense, SimpleRNN
from sklearn.preprocessing import MinMaxScaler
from sklearn.metrics import mean_squared_error
import math
import matplotlib.pyplot as plt

# Parameter split_percent defines the ratio of training examples
def get_train_test(url, split_percent=0.8):
    df = read_csv(url, usecols=[1], engine='python')
    data = np.array(df.values.astype('float32'))
    scaler = MinMaxScaler(feature_range=(0, 1))
    data = scaler.fit_transform(data).flatten()
    n = len(data)
    # Point for splitting data into train and test
    split = int(n*split_percent)
    train_data = data[range(split)]
    test_data = data[split:]
    return train_data, test_data, data

# Prepare the input X and target Y
def get_XY(dat, time_steps):
    Y_ind = np.arange(time_steps, len(dat), time_steps)
    Y = dat[Y_ind]
    rows_x = len(Y)
    X = dat[range(time_steps*rows_x)]
    X = np.reshape(X, (rows_x, time_steps, 1))
    return X, Y

def create_RNN(hidden_units, dense_units, input_shape, activation):
    model = Sequential()
    model.add(SimpleRNN(hidden_units, input_shape=input_shape, activation=activation[0]))
    model.add(Dense(units=dense_units, activation=activation[1]))
    model.compile(loss='mean_squared_error', optimizer='adam')
    return model

def print_error(trainY, testY, train_predict, test_predict):
    # Error of predictions
    train_rmse = math.sqrt(mean_squared_error(trainY, train_predict))
    test_rmse = math.sqrt(mean_squared_error(testY, test_predict))
    # Print RMSE
    print('Train RMSE: %.3f RMSE' % (train_rmse))
    print('Test RMSE: %.3f RMSE' % (test_rmse))

# Plot the result
def plot_result(trainY, testY, train_predict, test_predict):
    actual = np.append(trainY, testY)
    predictions = np.append(train_predict, test_predict)
    rows = len(actual)
    plt.figure(figsize=(15, 6), dpi=80)
    plt.plot(range(rows), actual)
    plt.plot(range(rows), predictions)
    plt.axvline(x=len(trainY), color='r')
    plt.legend(['Actual', 'Predictions'])
    plt.xlabel('Observation number after given time steps')
    plt.ylabel('Sunspots scaled')
    plt.title('Actual and Predicted Values. The Red Line Separates The Training And Test Examples')

sunspots_url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/monthly-sunspots.csv'
time_steps = 12
train_data, test_data, data = get_train_test(sunspots_url)
trainX, trainY = get_XY(train_data, time_steps)
testX, testY = get_XY(test_data, time_steps)

# Create model and train
model = create_RNN(hidden_units=3, dense_units=1, input_shape=(time_steps,1),
                   activation=['tanh', 'tanh'])
model.fit(trainX, trainY, epochs=20, batch_size=1, verbose=2)

# make predictions
train_predict = model.predict(trainX)
test_predict = model.predict(testX)

# Print error
print_error(trainY, testY, train_predict, test_predict)

# Plot result
plot_result(trainY, testY, train_predict, test_predict)
```

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Books

- Deep Learning Essentials by Wei Di, Anurag Bhardwaj, and Jianing Wei.

- Deep Learning by Ian Goodfellow, Yoshua Bengio, and Aaron Courville.

Articles

- Wikipedia article on BPTT

- A Tour of Recurrent Neural Network Algorithms for Deep Learning

- A Gentle Introduction to Backpropagation Through Time

- How to Prepare Univariate Time Series Data for Long Short-Term Memory Networks

Summary

In this tutorial, you discovered how a simple recurrent neural network works and how to use it in Keras.

Specifically, you learned:

- The structure of RNNs

- How the RNN computes an output from previous inputs

- How to implement an end-to-end system for time series forecasting using an RNN

Do you have any questions about RNNs discussed in this post? Ask your questions in the comments below, and I will do my best to answer.

When I use tensorflow.keras, NotImplementedError is thrown in create_RNN(). What should I update? Thanks.

create_RNN() is a function defined here. Which line is triggering the NotImplementedError?

In “model.add(SimpleRNN(hidden_units, input_shape=input_shape, activation=activation[0]))”

The error thrown at:

```
~/anaconda3/envs/py38/lib/python3.8/site-packages/tensorflow/python/framework/ops.py in __array__(self)
    850
    851   def __array__(self):
--> 852     raise NotImplementedError(
    853         "Cannot convert a symbolic Tensor ({}) to a numpy array."
    854         " This error may indicate that you're trying to pass a Tensor to"
```

NotImplementedError: Cannot convert a symbolic Tensor (simple_rnn/strided_slice:0) to a numpy array. This error may indicate that you’re trying to pass a Tensor to a NumPy call, which is not supported

Python version is 3.8.12

Tensorflow version is 2.4.1

Thanks.

The error still wasn’t fixed: NotImplementedError: Cannot convert a symbolic Tensor (simple_rnn_1/strided_slice:0) to a numpy array. This error may indicate that you’re trying to pass a Tensor to a NumPy call, which is not supported

Hi Master56^^…Please provide a full code listing and a description of your Python coding environment.

Regards,

Hi Mehran,

Thank you for providing this very insightful post! I had gone through learning materials from other online courses and labs but did not actually grasp what was actually happening in a RNN until I read your post here. It was very well written and elegantly lucid. The code is similarly very easy to follow and I hope to expand on this example to implement an RNN for my research project!

All the best,

Tommy

Great feedback Tommy! We appreciate your support and feedback!

really great post!

I might have spotted a little typo:

h1 = np.dot(x[0], wx) + h0 + bh

should be:

h1 = np.dot(x[0], wx) + np.dot(h0, wh) + bh

anyway, the result doesn’t change as h0 is all zeros

Thank you Paolo! We appreciate the feedback.

we want extra information about this and it also execute on copy paste in colab or jupyter

Hi cesiy…Have you been able to successfully execute the sample code? If not please let us know what is not working or what error messages you are receiving.

Thank you! How about a sequence with more than one feature per timestep? I tried the following but Keras outputs one value, and the manual computation outputs two values:

```python
# hidden_units, dense_units, input_shape, activation
m = 2
demo_model = create_RNN(m, 1, (3,2), activation=['tanh', 'linear'])
wx = demo_model.get_weights()[0]
wh = demo_model.get_weights()[1]
bh = demo_model.get_weights()[2]
wy = demo_model.get_weights()[3]
by = demo_model.get_weights()[4]
print('wx = ', wx, '\nwh = ', wh, '\nbh = ', bh, '\nwy =', wy, '\nby = ', by)

x = np.array([1,0.1, 2,0.2, 3,0.3])
# Reshape the input to the required sample_size x time_steps x features
x_input = np.reshape(x,(1, 3, 2))
print('x =', x)
print('x_reshaped = ', x_input)

y_pred_model = demo_model.predict(x_input)

h0 = np.zeros(m)
h1 = np.tanh(np.dot(x[0], wx) + np.dot(h0,wh) + bh)
h2 = np.tanh(np.dot(x[1], wx) + np.dot(h1,wh) + bh)
h3 = np.tanh(np.dot(x[2], wx) + np.dot(h2,wh) + bh)
o3 = np.dot(h3, wy) + by

print('h1 = ', h1, 'h2 = ', h2, 'h3 = ', h3)
print("Prediction from network ", y_pred_model)
print("Prediction from our computation ", o3)
```

Output:

```
Prediction from network  [[0.09162921]]
Prediction from our computation  [[ 0.14343597]
 [-0.27000149]]
```

Hi Tasos…Thank you for your feedback! We will review this scenario.

Hello, im using this dataset at the moment and i have to use a split ( train test validation) where train: from first row until 1965, validation from 1966 to 1975 and test from 1976 to last row. Im currently new to this type of language since i just started using Rstudio and i would like an answer as soon as posible bcuz i don’t seem to find a solution. Thank you very much.

Hi Odise…The following resource provides best practices when applying training, testing and validation:

https://machinelearningmastery.com/training-validation-test-split-and-cross-validation-done-right/

Hi,

In the first example (Keras SimpleRNN), you get weights without training the model (there is no fit function call after model compilation).

How is that?

Thanks in advance

One more question PLZ

In the first example (Keras Simple RNN);

y_pred_model=demo_model.predict(x_input)

print(“Prediction from network “, y_pred_model)

I obtain : [[-1.1634194]] !!

I just copied and pasted the code you gave.

I have a little confusion, is the time_step the length of the sequence or the number of the sequence? for eg I have (16230,1) and I want to use 24 as the time_step so it becomes (680,24,1) where the 680 is the number of samples, 24 is the length of the timestep and 1 the feature. Please am I correct?