We have put together the complete Transformer model, and now we are ready to train it for neural machine translation. We shall use a training dataset for this purpose, which contains short English and German sentence pairs. We will also revisit the role of masking in computing the accuracy and loss metrics during the training process.

In this tutorial, you will discover how to train the Transformer model for neural machine translation.

After completing this tutorial, you will know:

- How to prepare the training dataset

- How to apply a padding mask to the loss and accuracy computations

- How to train the Transformer model


Let’s get started.


Tutorial Overview

This tutorial is divided into four parts; they are:

- Recap of the Transformer Architecture

- Preparing the Training Dataset

- Applying a Padding Mask to the Loss and Accuracy Computations

- Training the Transformer Model

Prerequisites

For this tutorial, we assume that you are already familiar with:

Recap of the Transformer Architecture

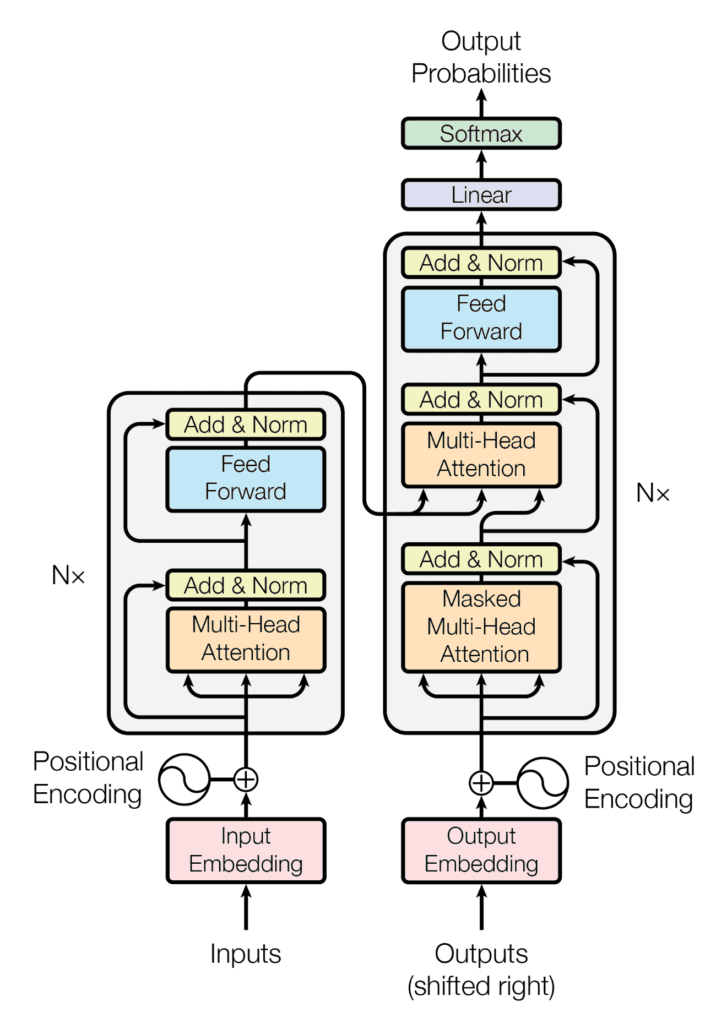

Recall having seen that the Transformer architecture follows an encoder-decoder structure. The encoder, on the left-hand side, is tasked with mapping an input sequence to a sequence of continuous representations; the decoder, on the right-hand side, receives the output of the encoder together with the decoder output at the previous time step to generate an output sequence.

The encoder-decoder structure of the Transformer architecture

Taken from “Attention Is All You Need“

In generating an output sequence, the Transformer does not rely on recurrence and convolutions.

You have seen how to implement the complete Transformer model, so you can now proceed to train it for neural machine translation.

Let’s start first by preparing the dataset for training.


Preparing the Training Dataset

For this purpose, you can refer to a previous tutorial that covers material about preparing the text data for training.

You will also use a dataset that contains short English and German sentence pairs, which you may download here. This particular dataset has already been cleaned by removing non-printable, non-alphabetic, and punctuation characters, normalizing all Unicode characters to ASCII, and changing all uppercase letters to lowercase ones. Hence, you can skip the cleaning step, which is typically part of the data preparation process. However, if you use a dataset that does not come readily cleaned, you can refer to this previous tutorial to learn how to do so.

Let’s proceed by creating the PrepareDataset class that implements the following steps:

- Loads the dataset from a specified filename.

```python
clean_dataset = load(open(filename, 'rb'))
```

- Selects the number of sentences to use from the dataset. Since the dataset is large, you will reduce its size to limit the training time. However, you may explore using the full dataset as an extension to this tutorial.

```python
dataset = clean_dataset[:self.n_sentences, :]
```

- Appends start (<START>) and end-of-string (<EOS>) tokens to each sentence. For example, the English sentence, i like to run, now becomes <START> i like to run <EOS>. This also applies to its corresponding translation in German, ich gehe gerne joggen, which now becomes <START> ich gehe gerne joggen <EOS>.

```python
for i in range(dataset[:, 0].size):
    dataset[i, 0] = "<START> " + dataset[i, 0] + " <EOS>"
    dataset[i, 1] = "<START> " + dataset[i, 1] + " <EOS>"
```

- Shuffles the dataset randomly.

```python
shuffle(dataset)
```

- Splits the shuffled dataset based on a pre-defined ratio.

```python
train = dataset[:int(self.n_sentences * self.train_split)]
```

- Creates and trains a tokenizer on the text sequences that will be fed into the encoder and finds the length of the longest sequence as well as the vocabulary size.

```python
enc_tokenizer = self.create_tokenizer(train[:, 0])
enc_seq_length = self.find_seq_length(train[:, 0])
enc_vocab_size = self.find_vocab_size(enc_tokenizer, train[:, 0])
```

- Tokenizes the sequences of text that will be fed into the encoder by creating a vocabulary of words and replacing each word with its corresponding vocabulary index. The <START> and <EOS> tokens will also form part of this vocabulary. Each sequence is also padded to the maximum phrase length.

```python
trainX = enc_tokenizer.texts_to_sequences(train[:, 0])
trainX = pad_sequences(trainX, maxlen=enc_seq_length, padding='post')
trainX = convert_to_tensor(trainX, dtype=int64)
```

- Creates and trains a tokenizer on the text sequences that will be fed into the decoder, and finds the length of the longest sequence as well as the vocabulary size.

```python
dec_tokenizer = self.create_tokenizer(train[:, 1])
dec_seq_length = self.find_seq_length(train[:, 1])
dec_vocab_size = self.find_vocab_size(dec_tokenizer, train[:, 1])
```

- Repeats a similar tokenization and padding procedure for the sequences of text that will be fed into the decoder.

```python
trainY = dec_tokenizer.texts_to_sequences(train[:, 1])
trainY = pad_sequences(trainY, maxlen=dec_seq_length, padding='post')
trainY = convert_to_tensor(trainY, dtype=int64)
```

The complete code listing is as follows (refer to this previous tutorial for further details):

```python
from pickle import load
from numpy.random import shuffle
from keras.preprocessing.text import Tokenizer
from keras.preprocessing.sequence import pad_sequences
from tensorflow import convert_to_tensor, int64


class PrepareDataset:
    def __init__(self, **kwargs):
        super(PrepareDataset, self).__init__(**kwargs)
        self.n_sentences = 10000  # Number of sentences to include in the dataset
        self.train_split = 0.9  # Ratio of the training data split

    # Fit a tokenizer
    def create_tokenizer(self, dataset):
        tokenizer = Tokenizer()
        tokenizer.fit_on_texts(dataset)

        return tokenizer

    def find_seq_length(self, dataset):
        return max(len(seq.split()) for seq in dataset)

    def find_vocab_size(self, tokenizer, dataset):
        tokenizer.fit_on_texts(dataset)

        return len(tokenizer.word_index) + 1

    def __call__(self, filename, **kwargs):
        # Load a clean dataset
        clean_dataset = load(open(filename, 'rb'))

        # Reduce dataset size
        dataset = clean_dataset[:self.n_sentences, :]

        # Include start and end of string tokens
        for i in range(dataset[:, 0].size):
            dataset[i, 0] = "<START> " + dataset[i, 0] + " <EOS>"
            dataset[i, 1] = "<START> " + dataset[i, 1] + " <EOS>"

        # Random shuffle the dataset
        shuffle(dataset)

        # Split the dataset
        train = dataset[:int(self.n_sentences * self.train_split)]

        # Prepare tokenizer for the encoder input
        enc_tokenizer = self.create_tokenizer(train[:, 0])
        enc_seq_length = self.find_seq_length(train[:, 0])
        enc_vocab_size = self.find_vocab_size(enc_tokenizer, train[:, 0])

        # Encode and pad the encoder input sequences
        trainX = enc_tokenizer.texts_to_sequences(train[:, 0])
        trainX = pad_sequences(trainX, maxlen=enc_seq_length, padding='post')
        trainX = convert_to_tensor(trainX, dtype=int64)

        # Prepare tokenizer for the decoder input
        dec_tokenizer = self.create_tokenizer(train[:, 1])
        dec_seq_length = self.find_seq_length(train[:, 1])
        dec_vocab_size = self.find_vocab_size(dec_tokenizer, train[:, 1])

        # Encode and pad the decoder input sequences
        trainY = dec_tokenizer.texts_to_sequences(train[:, 1])
        trainY = pad_sequences(trainY, maxlen=dec_seq_length, padding='post')
        trainY = convert_to_tensor(trainY, dtype=int64)

        return trainX, trainY, train, enc_seq_length, dec_seq_length, enc_vocab_size, dec_vocab_size
```

Before moving on to train the Transformer model, let’s first have a look at the output of the PrepareDataset class corresponding to the first sentence in the training dataset:

```python
# Prepare the training data
dataset = PrepareDataset()
trainX, trainY, train_orig, enc_seq_length, dec_seq_length, enc_vocab_size, dec_vocab_size = dataset('english-german-both.pkl')

print(train_orig[0, 0], '\n', trainX[0, :])
```

```
<START> did tom tell you <EOS>
 tf.Tensor([ 1 25 4 97 5 2 0], shape=(7,), dtype=int64)
```

(Note: Since the dataset has been randomly shuffled, you will likely see a different output.)

You can see that, originally, you had a four-word sentence (did tom tell you) to which you appended the start and end-of-string tokens. Then you proceeded to vectorize it (you may notice that the <START> and <EOS> tokens are assigned the vocabulary indices 1 and 2, respectively). The vectorized text was also padded with zeros, such that the length of the end result matches the maximum sequence length of the encoder:

```python
print('Encoder sequence length:', enc_seq_length)
```

```
Encoder sequence length: 7
```

You can similarly check out the corresponding target data that is fed into the decoder:

```python
print(train_orig[0, 1], '\n', trainY[0, :])
```

```
<START> hat tom es dir gesagt <EOS>
 tf.Tensor([ 1 14 5 7 42 162 2 0 0 0 0 0], shape=(12,), dtype=int64)
```

Here, the length of the end result matches the maximum sequence length of the decoder:

```python
print('Decoder sequence length:', dec_seq_length)
```

```
Decoder sequence length: 12
```
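To make the vectorization and padding steps more concrete, here is a minimal pure-Python sketch of the same idea. It is only an illustration and not how the Keras Tokenizer or pad_sequences are implemented: the helper names build_vocab and vectorize are hypothetical, the two sentences are made up, and the start and end markers are written as plain words (start, eos) to keep the example simple.

```python
# Illustrative stand-in for Tokenizer + pad_sequences (hypothetical helpers).

def build_vocab(sentences):
    """Map each word to an integer index, most frequent first, starting at 1."""
    counts = {}
    for sentence in sentences:
        for word in sentence.split():
            counts[word] = counts.get(word, 0) + 1
    # Sort by descending frequency; ties keep first-seen order (sorted is stable)
    ordered = sorted(counts, key=lambda w: -counts[w])
    # Index 0 is reserved for padding, so real words start at 1
    return {word: i + 1 for i, word in enumerate(ordered)}


def vectorize(sentences, vocab):
    """Replace words by their indices and right-pad each sequence with zeros."""
    sequences = [[vocab[w] for w in s.split()] for s in sentences]
    max_len = max(len(seq) for seq in sequences)
    return [seq + [0] * (max_len - len(seq)) for seq in sequences]


sentences = ["start did tom tell you eos", "start i like to run today eos"]
vocab = build_vocab(sentences)
padded = vectorize(sentences, vocab)

for row in padded:
    print(row)
```

Because the start and end markers occur in every sentence, they are the most frequent words and receive the lowest indices, which is consistent with the indices 1 and 2 seen in the output above. The shorter sentence is padded on the right with a zero so that both rows have the same length.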

Applying a Padding Mask to the Loss and Accuracy Computations

Recall seeing that the importance of having a padding mask at the encoder and decoder is to make sure that the zero values that we have just appended to the vectorized inputs are not processed along with the actual input values.

This also holds true for the training process, where a padding mask is required so that the zero padding values in the target data are not considered in the computation of the loss and accuracy.

Let’s have a look at the computation of loss first.

This will be computed using a sparse categorical cross-entropy loss function between the target and predicted values and subsequently multiplied by a padding mask so that only the valid non-zero values are considered. The returned loss is the mean of the unmasked values:

```python
def loss_fcn(target, prediction):
    # Create mask so that the zero padding values are not included in the computation of loss
    padding_mask = math.logical_not(equal(target, 0))
    padding_mask = cast(padding_mask, float32)

    # Compute a sparse categorical cross-entropy loss on the unmasked values
    loss = sparse_categorical_crossentropy(target, prediction, from_logits=True) * padding_mask

    # Compute the mean loss over the unmasked values
    return reduce_sum(loss) / reduce_sum(padding_mask)
```
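The effect of the mask on the loss can be checked by hand on a tiny example. The following sketch is an assumption-laden illustration rather than the tutorial's implementation: it works on a single made-up sequence, uses ready-made probabilities instead of logits, and the helper name masked_mean_loss is hypothetical.

```python
import math


def masked_mean_loss(targets, probs):
    """Mean cross-entropy over positions whose target is not the padding index 0."""
    total, count = 0.0, 0
    for target, dist in zip(targets, probs):
        if target == 0:  # padding position: excluded from the loss
            continue
        total += -math.log(dist[target])
        count += 1
    return total / count


# Two real tokens followed by two padding positions (made-up values)
targets = [2, 1, 0, 0]
probs = [
    [0.10, 0.10, 0.80],  # correct class 2 gets probability 0.8
    [0.20, 0.50, 0.30],  # correct class 1 gets probability 0.5
    [0.90, 0.05, 0.05],  # padding is easy to predict
    [0.90, 0.05, 0.05],
]

masked = masked_mean_loss(targets, probs)
unmasked = sum(-math.log(d[t]) for t, d in zip(targets, probs)) / len(targets)

print(f"masked: {masked:.4f}  unmasked: {unmasked:.4f}")
```

In this example the unmasked mean is lower than the masked one, because the easily predicted padding positions dilute the loss of the real tokens. Masking keeps the loss focused on the tokens that matter.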

For the computation of accuracy, the predicted and target values are first compared. The predicted output is a tensor of size (batch_size, dec_seq_length, dec_vocab_size) that holds one score per vocabulary token at every output position. Since the loss function is called with from_logits=True, these scores are treated as unnormalized logits rather than softmax probabilities; this makes no difference to the accuracy, because the highest-scoring token is the same either way. To compare against the target values, only the token with the highest score at each position is kept, and its vocabulary index is retrieved through the operation argmax(prediction, axis=2). Following the application of a padding mask, the returned accuracy is the mean of the unmasked values:

```python
def accuracy_fcn(target, prediction):
    # Create mask so that the zero padding values are not included in the computation of accuracy
    padding_mask = math.logical_not(equal(target, 0))

    # Find equal prediction and target values, and apply the padding mask
    accuracy = equal(target, argmax(prediction, axis=2))
    accuracy = math.logical_and(padding_mask, accuracy)

    # Cast the True/False values to 32-bit-precision floating-point numbers
    padding_mask = cast(padding_mask, float32)
    accuracy = cast(accuracy, float32)

    # Compute the mean accuracy over the unmasked values
    return reduce_sum(accuracy) / reduce_sum(padding_mask)
```
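A similar hand-sized check shows why the mask matters for accuracy. This is a sketch on made-up token indices that are assumed to be the result of the argmax step already; the helper name masked_accuracy is hypothetical.

```python
def masked_accuracy(targets, predictions):
    """Fraction of correct predictions among non-padding target positions."""
    pairs = [(t, p) for t, p in zip(targets, predictions) if t != 0]
    return sum(t == p for t, p in pairs) / len(pairs)


# Four real tokens followed by four padding positions (made-up values)
targets = [1, 14, 5, 2, 0, 0, 0, 0]
predictions = [1, 14, 9, 2, 0, 0, 0, 0]  # one real token is wrong

acc = masked_accuracy(targets, predictions)
naive = sum(t == p for t, p in zip(targets, predictions)) / len(targets)

print(f"masked accuracy: {acc:.3f}  naive accuracy: {naive:.3f}")
```

Counting the padding positions would report seven correct out of eight, whereas only three of the four real tokens were predicted correctly. The masked figure is the one that reflects translation quality.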

Training the Transformer Model

Let’s first define the model and training parameters as specified by Vaswani et al. (2017):

```python
# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the training parameters
epochs = 2
batch_size = 64
beta_1 = 0.9
beta_2 = 0.98
epsilon = 1e-9
dropout_rate = 0.1
```

(Note: Only consider two epochs to limit the training time. However, you may explore training the model further as an extension to this tutorial.)

You also need to implement a learning rate scheduler that initially increases the learning rate linearly for the first warmup_steps and then decreases it proportionally to the inverse square root of the step number. Vaswani et al. express this by the following formula:

$$\text{learning\_rate} = \text{d\_model}^{-0.5} \cdot \min\left(\text{step}^{-0.5},\ \text{step} \cdot \text{warmup\_steps}^{-1.5}\right)$$

```python
class LRScheduler(LearningRateSchedule):
    def __init__(self, d_model, warmup_steps=4000, **kwargs):
        super(LRScheduler, self).__init__(**kwargs)

        self.d_model = cast(d_model, float32)
        self.warmup_steps = warmup_steps

    def __call__(self, step_num):
        # Some TensorFlow versions pass the step count as an integer tensor;
        # cast it to float so that the fractional powers below are well defined
        step_num = cast(step_num, float32)

        # Linearly increasing the learning rate for the first warmup_steps, and decreasing it thereafter
        arg1 = step_num ** -0.5
        arg2 = step_num * (self.warmup_steps ** -1.5)

        return (self.d_model ** -0.5) * math.minimum(arg1, arg2)
```
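To get a feel for the shape of this schedule, the formula can be evaluated in plain Python. The function name transformer_lr is hypothetical and the sketch assumes that the step count starts at 1 (a step of 0 would raise an error because of the negative power).

```python
def transformer_lr(step, d_model=512, warmup_steps=4000):
    """Learning rate from the formula above, for a step count starting at 1."""
    step = float(step)
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)


# Sample the schedule before, at, and after the end of the warm-up
for step in (1, 1000, 2000, 4000, 8000, 16000):
    print(f"step {step:>6}: {transformer_lr(step):.6f}")

peak = transformer_lr(4000)
```

The two arguments of the minimum are equal when step equals warmup_steps, so the learning rate grows linearly up to that point, peaks there, and then decays with the inverse square root of the step number.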

An instance of the LRScheduler class is subsequently passed on as the learning_rate argument of the Adam optimizer:

```python
optimizer = Adam(LRScheduler(d_model), beta_1, beta_2, epsilon)
```

Next, split the dataset into batches in preparation for training:

```python
train_dataset = data.Dataset.from_tensor_slices((trainX, trainY))
train_dataset = train_dataset.batch(batch_size)
```
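It can be useful to work out how many training steps these settings amount to. The arithmetic below uses the values defined in this tutorial (10,000 sentences, a 0.9 training split, a batch size of 64, two epochs, and the default of 4,000 warm-up steps) and assumes that the final, smaller batch is kept, which is the default behavior of batch().

```python
import math

n_sentences = 10000   # from PrepareDataset
train_split = 0.9     # from PrepareDataset
batch_size = 64
epochs = 2
warmup_steps = 4000   # default of the learning rate scheduler

n_train = int(n_sentences * train_split)           # training sentence pairs
steps_per_epoch = math.ceil(n_train / batch_size)  # last batch is partial
total_steps = steps_per_epoch * epochs

print(n_train, steps_per_epoch, total_steps)
print("Still in warm-up at the end of training:", total_steps < warmup_steps)
```

With these values, the whole run is far shorter than the warm-up period, so the learning rate is still increasing linearly when training stops. This is worth keeping in mind if you extend the number of epochs or the dataset size.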

This is followed by the creation of a model instance:

```python
training_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)
```

In training the Transformer model, you will write your own training loop, which incorporates the loss and accuracy functions that were implemented earlier.

The default runtime in TensorFlow 2.0 is eager execution, which means that operations execute immediately, one after the other. Eager execution is simple and intuitive, which makes debugging easier. Its downside, however, is that it cannot take advantage of the global performance optimizations that become available when the code is run using graph execution. In graph execution, a graph is first built before the tensor computations can be executed, which gives rise to a computational overhead. For this reason, the use of graph execution is mostly recommended for training large models rather than small ones, for which eager execution may be better suited. Since the Transformer model is sufficiently large, apply graph execution to train it.

In order to do so, you will use the @function decorator as follows:

```python
@function
def train_step(encoder_input, decoder_input, decoder_output):
    with GradientTape() as tape:

        # Run the forward pass of the model to generate a prediction
        prediction = training_model(encoder_input, decoder_input, training=True)

        # Compute the training loss
        loss = loss_fcn(decoder_output, prediction)

        # Compute the training accuracy
        accuracy = accuracy_fcn(decoder_output, prediction)

    # Retrieve gradients of the trainable variables with respect to the training loss
    gradients = tape.gradient(loss, training_model.trainable_weights)

    # Update the values of the trainable variables by gradient descent
    optimizer.apply_gradients(zip(gradients, training_model.trainable_weights))

    train_loss(loss)
    train_accuracy(accuracy)
```

With the addition of the @function decorator, a function that takes tensors as input will be compiled into a graph. If the @function decorator is commented out, the function is, alternatively, run with eager execution.

The next step is implementing the training loop that will call the train_step function above. The training loop will iterate over the specified number of epochs and the dataset batches. For each batch, the train_step function computes the training loss and accuracy measures and applies the optimizer to update the trainable model parameters. A checkpoint manager is also included to save a checkpoint after every five epochs (with epochs set to 2, as above, no checkpoint is written; train for at least five epochs to make use of it):

```python
train_loss = Mean(name='train_loss')
train_accuracy = Mean(name='train_accuracy')

# Create a checkpoint object and manager to manage multiple checkpoints
ckpt = train.Checkpoint(model=training_model, optimizer=optimizer)
ckpt_manager = train.CheckpointManager(ckpt, "./checkpoints", max_to_keep=3)

for epoch in range(epochs):

    train_loss.reset_states()
    train_accuracy.reset_states()

    print("\nStart of epoch %d" % (epoch + 1))

    # Iterate over the dataset batches
    for step, (train_batchX, train_batchY) in enumerate(train_dataset):

        # Define the encoder and decoder inputs, and the decoder output
        encoder_input = train_batchX[:, 1:]
        decoder_input = train_batchY[:, :-1]
        decoder_output = train_batchY[:, 1:]

        train_step(encoder_input, decoder_input, decoder_output)

        if step % 50 == 0:
            print(f'Epoch {epoch + 1} Step {step} Loss {train_loss.result():.4f} Accuracy {train_accuracy.result():.4f}')

    # Print epoch number and loss value at the end of every epoch
    print("Epoch %d: Training Loss %.4f, Training Accuracy %.4f" % (epoch + 1, train_loss.result(), train_accuracy.result()))

    # Save a checkpoint after every five epochs
    if (epoch + 1) % 5 == 0:
        save_path = ckpt_manager.save()
        print("Saved checkpoint at epoch %d" % (epoch + 1))
```

An important point to keep in mind is that the input to the decoder is offset by one position to the right with respect to the target output of the decoder. The idea behind this offset, combined with a look-ahead mask in the first multi-head attention block of the decoder, is to ensure that the prediction for the current token can only depend on the previous tokens.

This masking, combined with fact that the output embeddings are offset by one position, ensures that the predictions for position i can depend only on the known outputs at positions less than i.

– Attention Is All You Need, 2017.

It is for this reason that the encoder and decoder inputs are fed into the Transformer model in the following manner:

encoder_input = train_batchX[:, 1:]

decoder_input = train_batchY[:, :-1]
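The effect of these slices is easiest to see on a single target sequence. The sketch below reuses the vectorized German sentence printed earlier and plain Python lists in place of tensors; the variable names are illustrative.

```python
START, EOS, PAD = 1, 2, 0

# Vectorized target sentence from the earlier output, padded to length 12
target = [START, 14, 5, 7, 42, 162, EOS, PAD, PAD, PAD, PAD, PAD]

decoder_input = target[:-1]   # drops the last position
decoder_output = target[1:]   # drops the START token

# At each position, the decoder sees the input tokens up to that position
# and is trained to predict the token in decoder_output at the same position
pairs = list(zip(decoder_input, decoder_output))
for seen, expected in pairs[:6]:
    print(f"last token seen: {seen:>3} -> token to predict: {expected}")
```

Both slices have the same length, which is what allows the loss to be computed position by position. The first pair shows the decoder starting from the START token and predicting the first real word, and the sixth pair shows it predicting the EOS token after the last real word.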

Putting together the complete code listing produces the following:

```python
from tensorflow.keras.optimizers import Adam
from tensorflow.keras.optimizers.schedules import LearningRateSchedule
from tensorflow.keras.metrics import Mean
from tensorflow import data, train, math, reduce_sum, cast, equal, argmax, float32, GradientTape, function
from keras.losses import sparse_categorical_crossentropy
from model import TransformerModel
from prepare_dataset import PrepareDataset
from time import time


# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the training parameters
epochs = 2
batch_size = 64
beta_1 = 0.9
beta_2 = 0.98
epsilon = 1e-9
dropout_rate = 0.1


# Implementing a learning rate scheduler
class LRScheduler(LearningRateSchedule):
    def __init__(self, d_model, warmup_steps=4000, **kwargs):
        super(LRScheduler, self).__init__(**kwargs)

        self.d_model = cast(d_model, float32)
        self.warmup_steps = warmup_steps

    def __call__(self, step_num):
        # Some TensorFlow versions pass the step count as an integer tensor;
        # cast it to float so that the fractional powers below are well defined
        step_num = cast(step_num, float32)

        # Linearly increasing the learning rate for the first warmup_steps, and decreasing it thereafter
        arg1 = step_num ** -0.5
        arg2 = step_num * (self.warmup_steps ** -1.5)

        return (self.d_model ** -0.5) * math.minimum(arg1, arg2)


# Instantiate an Adam optimizer
optimizer = Adam(LRScheduler(d_model), beta_1, beta_2, epsilon)

# Prepare the training split of the dataset
dataset = PrepareDataset()
trainX, trainY, train_orig, enc_seq_length, dec_seq_length, enc_vocab_size, dec_vocab_size = dataset('english-german-both.pkl')

# Prepare the dataset batches
train_dataset = data.Dataset.from_tensor_slices((trainX, trainY))
train_dataset = train_dataset.batch(batch_size)

# Create model
training_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)


# Defining the loss function
def loss_fcn(target, prediction):
    # Create mask so that the zero padding values are not included in the computation of loss
    padding_mask = math.logical_not(equal(target, 0))
    padding_mask = cast(padding_mask, float32)

    # Compute a sparse categorical cross-entropy loss on the unmasked values
    loss = sparse_categorical_crossentropy(target, prediction, from_logits=True) * padding_mask

    # Compute the mean loss over the unmasked values
    return reduce_sum(loss) / reduce_sum(padding_mask)


# Defining the accuracy function
def accuracy_fcn(target, prediction):
    # Create mask so that the zero padding values are not included in the computation of accuracy
    padding_mask = math.logical_not(equal(target, 0))

    # Find equal prediction and target values, and apply the padding mask
    accuracy = equal(target, argmax(prediction, axis=2))
    accuracy = math.logical_and(padding_mask, accuracy)

    # Cast the True/False values to 32-bit-precision floating-point numbers
    padding_mask = cast(padding_mask, float32)
    accuracy = cast(accuracy, float32)

    # Compute the mean accuracy over the unmasked values
    return reduce_sum(accuracy) / reduce_sum(padding_mask)


# Include metrics monitoring
train_loss = Mean(name='train_loss')
train_accuracy = Mean(name='train_accuracy')

# Create a checkpoint object and manager to manage multiple checkpoints
ckpt = train.Checkpoint(model=training_model, optimizer=optimizer)
ckpt_manager = train.CheckpointManager(ckpt, "./checkpoints", max_to_keep=3)


# Speeding up the training process
@function
def train_step(encoder_input, decoder_input, decoder_output):
    with GradientTape() as tape:

        # Run the forward pass of the model to generate a prediction
        prediction = training_model(encoder_input, decoder_input, training=True)

        # Compute the training loss
        loss = loss_fcn(decoder_output, prediction)

        # Compute the training accuracy
        accuracy = accuracy_fcn(decoder_output, prediction)

    # Retrieve gradients of the trainable variables with respect to the training loss
    gradients = tape.gradient(loss, training_model.trainable_weights)

    # Update the values of the trainable variables by gradient descent
    optimizer.apply_gradients(zip(gradients, training_model.trainable_weights))

    train_loss(loss)
    train_accuracy(accuracy)


# Start the timer before the first epoch so that the total covers all epochs
start_time = time()

for epoch in range(epochs):

    train_loss.reset_states()
    train_accuracy.reset_states()

    print("\nStart of epoch %d" % (epoch + 1))

    # Iterate over the dataset batches
    for step, (train_batchX, train_batchY) in enumerate(train_dataset):

        # Define the encoder and decoder inputs, and the decoder output
        encoder_input = train_batchX[:, 1:]
        decoder_input = train_batchY[:, :-1]
        decoder_output = train_batchY[:, 1:]

        train_step(encoder_input, decoder_input, decoder_output)

        if step % 50 == 0:
            print(f'Epoch {epoch + 1} Step {step} Loss {train_loss.result():.4f} Accuracy {train_accuracy.result():.4f}')
            # print("Samples so far: %s" % ((step + 1) * batch_size))

    # Print epoch number and loss value at the end of every epoch
    print("Epoch %d: Training Loss %.4f, Training Accuracy %.4f" % (epoch + 1, train_loss.result(), train_accuracy.result()))

    # Save a checkpoint after every five epochs
    if (epoch + 1) % 5 == 0:
        save_path = ckpt_manager.save()
        print("Saved checkpoint at epoch %d" % (epoch + 1))

print("Total time taken: %.2fs" % (time() - start_time))
```

Running the code produces a similar output to the following (you will likely see different loss and accuracy values because the training is from scratch, whereas the training time depends on the computational resources that you have available for training):

```
Start of epoch 1
Epoch 1 Step 0 Loss 8.4525 Accuracy 0.0000
Epoch 1 Step 50 Loss 7.6768 Accuracy 0.1234
Epoch 1 Step 100 Loss 7.0360 Accuracy 0.1713
Epoch 1: Training Loss 6.7109, Training Accuracy 0.1924

Start of epoch 2
Epoch 2 Step 0 Loss 5.7323 Accuracy 0.2628
Epoch 2 Step 50 Loss 5.4360 Accuracy 0.2756
Epoch 2 Step 100 Loss 5.2638 Accuracy 0.2839
Epoch 2: Training Loss 5.1468, Training Accuracy 0.2908
Total time taken: 87.98s
```

It takes 155.13s for the code to run using eager execution alone on the same platform that is making use of only a CPU, which shows the benefit of using graph execution.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Websites

- Writing a training loop from scratch in Keras: https://keras.io/guides/writing_a_training_loop_from_scratch/

Summary

In this tutorial, you discovered how to train the Transformer model for neural machine translation.

Specifically, you learned:

- How to prepare the training dataset

- How to apply a padding mask to the loss and accuracy computations

- How to train the Transformer model

Do you have any questions?

Ask your questions in the comments below, and I will do my best to answer.

Dear Dr.Stefania Cristina

When from model import TransformerModel, it shows No module named ‘model’, how to fix it?

Thank you

Hi Jack, model is a Python (.py) script file in which I had saved the TransformerModel class that we had previously put together in this tutorial: https://machinelearningmastery.com/joining-the-transformer-encoder-and-decoder-and-masking/. You can create one with the same name (model.py) for yourself too.

I get it, thank you. Dr.Stefania Cristina

Dear Dr Stefania Cristina

This tutorial is used for neural machine translation, if I need to use it for data classification and data regression tasks how to change it?

Cheers

Jack

Dear Dr Stefania Cristina

To avoid train again, the trained checkpoint needs to be loaded, how to code it?

Thank you

thanks for the blogs so far. This has been a very interesting series.

Please note there is a bug in the PrepareDataset class … trainY = enc_tokenizer.texts_to_sequences(train[:, 1]). This should be dec_tokenizer instead of enc_tokenizer. If you use this class as is most sequences will only return 2 tokens as it will not recognize the german word in the english vocab.

Thank you for the feedback Sacha! We appreciate it!

Thank you for this awesome tutorial. I want to know how I can adapt this same code for protein desordered region prediction.

Input is a sequence of protein and output is a binary sequence (1 if the corresponding residus is desordered and 0 otherwise.)

Example:

input: AAAALLLLAKKK

output: 111111100001

Hi Michel…You are very welcome! You may want to consider a sequence to sequence model:

https://machinelearningmastery.com/develop-encoder-decoder-model-sequence-sequence-prediction-keras/

Hi James thanks for the tutorial.

How to use this model for question and answer prediction?

Bert supports only 512 tokens and can i use this model to custom train my dataset?

Hi Jithin…You may find the following of interest:

https://cs224d.stanford.edu/reports/Bogatyy.pdf

https://blog.paperspace.com/how-to-train-question-answering-machine-learning-models/

May I ask how to revert the Tensor back to sentence?

After training, how to use the model? I saw that the model require decode_output, so I am very confuse this one

For example, after training, I have a sentence ‘I want to go home’, then how can I received the predicted sentence like predictSentence = model(‘I want to go home’)?

Thank you

Hi Peter…The following discussion should add clarity:

https://stackoverflow.com/questions/61071537/how-to-convert-vector-back-to-sentence-using-tensorflows-universal-sentence-enc

The following articles might also be helpful to you:

https://machinelearningmastery.com/plotting-the-training-and-validation-loss-curves-for-the-transformer-model/

https://machinelearningmastery.com/inferencing-the-transformer-model/

Hi Jason,

thanks for this great article!

I’m a little confused by the training process,

based on my knowledge, the transformer decoder generates only one token (word) at a time. so, how this line ” prediction = training_model(encoder_input, decoder_input, training=True) ” in train_step function will generate a complete sentence consists of a number of words to used later in loss calculation without using for loop to generate one word per loop.

Can you explain this issue please.

Indeed, no. The transformer decoder generates the entire sentence in one shot but sometimes we are not confident for the entire result so we take only the next token. Remember how attention works: you have a key, a value, and a query. The key and value are from the encoder and query is the decoder input. You can pass in an empty query and ask the attention mechanism give you everything in one shot. But you can also give a partial sentence and ask the attention mechnaism to fill in the rest of the sentence. The former is used here. The way you mentioned is the latter approach.

Thanks for your response

You mean that the decoder is trained to predict the last token only, so in this line decoder_input = train_batchY[:, :-1] the last token is removed ?

An other question, can Teacher Forcing method be used with the Transformer, or is it already used?

I think this line has a mistake. According to Adrian’s explanation decoder input should contain only START-token. Correct line would be:

decoder_input = train_batchY[:, :1] # not -1

Please disregard my previous response, it is incorrect.

encoder_input = train_batchX[:, 1:]

decoder_input = train_batchY[:, :-1]

decoder_output = train_batchY[:, 1:]

is the correct approach.

Here is the deep dive:

Encoder and decoder might be a different length, ie. enc_seq_length not equal to dec_seq_length. This is make sense due to sentences length in different languages is different.

Decoder_input seq_length must be equal to decoder_output seq_length, this requirement must be satisfied to be able to calculate loss-function.

Let’s assume:

enc_seq_length = 5

dec_seq_length = 7

START-token = 1

EOS-token = 2

enc_input = [[1,10,11,12,2]] # i like transformers

dec_input = [[1,100,101,102,103,2,0]] # ik hou van transformatoren

Encoder doesn’t require START or EOS tokens, but for book convenience they keep EOS. So this explains encoder_input = train_batchX[:, 1:]

Decoder_input must start with non-existing word. This is clearly visible during inference: to instruct neural-net to start generate output text we should provide single START-token. Basically [[1, 0,0,0,…..]]. But in training phase full sentence will work [[1,100,101,102,103,2,0]] (lookahead mask will ensure that network is not peeking to following words).

Decoder_output should predict actual words, neural net should NOT predict START-token, this explains decoder_output = train_batchY[:, 1:]

To be able to calculate loss-function dec_input_seq_length must be equal to dec_output_seq_length, therefore decoder_input = train_batchY[:, :-1].

But there is a caveat. To be able to keep full sentences in decoder, dec_seq_length must be +1 of actual max(sentence length) to take into account [:, :-1].

Here is how you can implement this:

In file prepare_dataset.py add “+ 1” to the line:

dec_seq_length = self.get_seq_length(ds_Y, dec_tokenizer) + 1
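
To make the shift concrete, here is a minimal sketch using plain Python lists rather than tensors. The token ids and the helper name shift_for_teacher_forcing are illustrative assumptions, not part of the tutorial code; the two slices mirror train_batchY[:, :-1] and train_batchY[:, 1:].

```python
START, EOS, PAD = 1, 2, 0  # illustrative ids, matching the example above


def shift_for_teacher_forcing(batch):
    """Split each padded target row into decoder input and expected output."""
    decoder_input = [row[:-1] for row in batch]   # same as batch[:, :-1]
    decoder_output = [row[1:] for row in batch]   # same as batch[:, 1:]
    return decoder_input, decoder_output


# One sentence padded to dec_seq_length = 7 (longest sentence is 6 tokens, plus 1)
train_batchY = [[START, 100, 101, 102, 103, EOS, PAD]]
decoder_input, decoder_output = shift_for_teacher_forcing(train_batchY)

print(decoder_input)   # [[1, 100, 101, 102, 103, 2]]
print(decoder_output)  # [[100, 101, 102, 103, 2, 0]]
```

Both halves have length 6, and at every position the expected output is the token that follows the decoder input at that position. Thanks to the extra slot, the full sentence including EOS survives in the decoder input.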

Thank you for your comments on all the posts in this series, Ivan. It seems like I followed in your footsteps only a few months later! Your corrections and explanations were immensely helpful while I tried to implement and understand the code. The book really is in need of being updated and corrected at numerous points. I certainly would have given up on it (around the positional encoding chapter) if I hadn’t chanced upon your comments.

On this last point about shifting the inputs/outputs, I am still a bit lost, especially because the website removed some words in your comment due to the use of angle brackets. But I got the gist of it.

From one traveler to another: Good luck on your journey and thanks again!

Hello,

Due to forum restrictions I can’t use angle brackets, so I’ll refer to the separator tokens by capitalizing them.

When adding START and EOS tokens, change the START-token to something like SOS.

Reason: the tokenizer removes special symbols and lowercases the text. The word “start” is used in the English sentences about 30 times (check enc_tokenizer.get_config()[“word_counts”]). Basically, the current version mixes the regular word “start” with the separator START.
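
The collision can be reproduced with a minimal sketch. It assumes a tokenizer that strips punctuation (angle brackets included) and lowercases the text, as described above; simple_normalize is a hypothetical stand-in for that behaviour, not the Keras Tokenizer itself.

```python
import string


def simple_normalize(text):
    """Strip punctuation (angle brackets included), lowercase, split on whitespace."""
    table = str.maketrans("", "", string.punctuation)
    return text.translate(table).lower().split()


with_start = simple_normalize("<START> please start the engine <EOS>")
with_sos = simple_normalize("<SOS> please start the engine <EOS>")

print(with_start)  # ['start', 'please', 'start', 'the', 'engine', 'eos']
print(with_sos)    # ['sos', 'please', 'start', 'the', 'engine', 'eos']
```

In the first case the separator and the ordinary word end up as the same token, so they would share one index. In the second case they stay distinct.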

If zeros are there only for padding and word indices start from 1, the function “accuracy_fcn” messes up the accuracy figure due to the line

accuracy = math.equal(target, argmax(prediction, axis=2))

because argmax(prediction, axis=2) returns indices starting from 0, not 1. I got correct figures after the following correction.

accuracy = math.equal(target, argmax(prediction, axis=2) + 1)

I apologize if I am missing the point. Thank you!
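
Whether the + 1 is needed depends on how the output layer is sized. As a minimal sketch, assuming the final layer has one unit per token id including a slot for the padding id 0 (an assumption worth checking against your own vocabulary size), the argmax index and the target id already line up and no offset is required. The function name masked_accuracy and the toy scores below are illustrative.

```python
def masked_accuracy(target, prediction, pad_id=0):
    """Fraction of non-padded positions where argmax(prediction) equals target."""
    correct = 0
    counted = 0
    for target_row, prediction_row in zip(target, prediction):
        for target_id, scores in zip(target_row, prediction_row):
            if target_id == pad_id:
                continue  # padded positions do not count towards accuracy
            predicted_id = max(range(len(scores)), key=scores.__getitem__)  # argmax
            correct += int(predicted_id == target_id)
            counted += 1
    return correct / counted if counted else 0.0


# Vocabulary of size 4: id 0 is padding, ids 1 to 3 are words (assumed layout)
target = [[2, 3, 0]]
prediction = [[
    [0.1, 0.1, 0.7, 0.1],    # argmax is 2, matches the target
    [0.1, 0.6, 0.2, 0.1],    # argmax is 1, target is 3, a miss
    [0.9, 0.0, 0.05, 0.05],  # padded position, ignored
]]
accuracy = masked_accuracy(target, prediction)
print(accuracy)  # 0.5
```

If the output layer instead had no slot for id 0, every index would be shifted by one and the + 1 correction would apply.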

Thank you for this tutorial. How can I adapt the code for a classification task? Thank you in advance.

Hi,

Thank you for this valuable tutorial resources. But when I ran the code in Colab, I got this error in LRScheduler.

“TypeError: Cannot convert -0.5 to EagerTensor of dtype int64”

Do you have any solution? I will also try to fix it by myself.

Regards,

Hi Surasak…You are very welcome! The following discussion may add clarity:

https://stackoverflow.com/questions/76001766/typeerror-cannot-convert-0-0-to-eagertensor-of-dtype-int64

Hi! Thank you very much for this amazing tutorial and code walk through!! When I execute the “optimizer = Adam(LRScheduler(d_model), beta_1, beta_2, epsilon) ” with the exact same learning rate scheduler class of yours, I get the following error: “TypeError: cannot convert -0.5 to EagerTensor of dtype int64”. I am running the code in Google colab. I appreciate your help.

Hi Parisa…The following resource may provide clarity:

https://www.tensorflow.org/api_docs/python/tf/Tensor
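
One likely cause, stated as an assumption because it depends on the TensorFlow version: the optimizer passes the step count to the schedule as an int64 tensor, and raising an integer tensor to the power -0.5 fails. Converting the step to a float before the arithmetic (in TensorFlow, tf.cast(step_num, tf.float32) at the top of the schedule’s __call__) is the usual remedy. The sketch below shows the schedule formula from “Attention Is All You Need” in plain Python with the explicit float conversion; transformer_lr is an illustrative name, not the tutorial’s class.

```python
def transformer_lr(step_num, d_model=512, warmup_steps=4000):
    """Learning rate with linear warmup followed by inverse square root decay."""
    step = float(step_num)  # explicit float conversion before the fractional powers
    arg1 = step ** -0.5
    arg2 = step * (warmup_steps ** -1.5)
    return (d_model ** -0.5) * min(arg1, arg2)


# Steps are counted from 1; step 0 would divide by zero in arg1
for step in (1, 1000, 4000, 16000):
    print(step, transformer_lr(step))
```

The rate rises linearly until warmup_steps and decays afterwards, so the value at step 4000 is the largest of the four printed.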

Hello,

If I understand well, the decoder is always trained to output the decoder input plus the EOS token. Is that how it is supposed to be, or is it a mistake in the code? Or is it a poor understanding on my side?

Hi Alan…The following resource may be of interest to you:

https://machinelearningmastery.com/encoder-decoder-recurrent-neural-network-models-neural-machine-translation/

Dear Jason Brownlee,

Sorry, do you have any guidance on implementing transformers for time series prediction?

Hi Ali…This resource is a great starting point:

https://arxiv.org/abs/2205.13504

Dear Jason,

Thank you for sharing your valuable knowledge through your website. I have a suggestion: if possible, could you create resources for implementing Transformers in time series forecasting or classification problems? I have a project related to this, but I have not yet found any useful implementation code to follow as instructions.

Best regards,

Hi Amir…You are very welcome! We appreciate your suggestions! Please ensure that you are subscribed to our newsletter so that you will be kept up to date on new content!

https://machinelearningmastery.com/newsletter/

Hi,

Thank you for sharing these great articles. I’m interested in using transformers for time-series forecasting. If you can share any related tutorials to implement transformer for time-series forecasting, that would be great.

Thank You.

Hi Madara…You are very welcome! The following resource may also be of interest.

https://huggingface.co/blog/autoformer

Thank you for this great content! I’m interested in training a Transformers model with the traditional encoder-decoder architecture for causal language modeling (auto text generation). I know that in the original Attention Is All You Need paper, they also trained using a similar English-German dataset like in this article. If it’s possible to do with the encoder-decoder architecture, how would I set up my sequences for language generation?

Specifically, let’s say I’d like to train on a novel.

One option is to set an arbitrary sequence length and create my sequences from that. So as an example the first sequence will be tokens 1-30 for the encoder input and 2-31 for the decoder input, the second one tokens 2-31 for the encoder input and 3-32 for the decoder input, etc. In this case, though, I will not have padding or end-of-sequence tokens in my training, which becomes a problem during prediction.

If I break up sequences by paragraph, for example, then I can have end of sequence and padding tokens, but then I’m not sure how I would structure the encoder and decoder inputs.

Hi Piotr…You may want to start here and then submit any specific questions as you work through the content:

https://machinelearningmastery.com/start-here/#attention

Hi James, this article seemed like the most relevant to my specific question since it’s the one article from the Attention section you referenced that trains on actual data. If there’s a more appropriate article for my question, please point to the specific article. If there’s no one to answer this specific question, that’s ok, too. Thanks

How would I be able to make a model with multiple transformers in sequence?

This is one way that the architecture may make sense: a transformer in the form this post shows processes a sequence and outputs a vector of probabilities. You can consider each word of a sentence a number, and there are N words in the language (called the vocabulary size). Then the output would be a vector of length N, which is interpreted as probabilities. You can take the word with the highest probability as the output word. This is the “next-token” model. So you can connect two transformer models in such a way that the first transformer produces a vector of length N, then you use the argmax function to find the single word. Repeat the process to generate a sequence of words. Then this sequence of words is fed into another transformer model to produce a vector of length M (the output vocabulary size of the second model), and you use the argmax function again to deduce a word from the vector.

This makes sense for the case where the first model translates English to French and the second model translates French to German.
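
The chaining described above can be sketched as follows. Everything here is hypothetical: make_toy_model is a word-by-word lookup standing in for a trained transformer, and the token ids are made up. Only the control flow (argmax per step, then feeding the first model’s output sequence into the second) is the point.

```python
START, EOS = 1, 2  # illustrative ids shared by both toy vocabularies


def greedy_decode(next_token_scores, source_ids, max_len=20):
    """Generate tokens one at a time, taking the argmax at every step."""
    output = [START]
    for _ in range(max_len):
        scores = next_token_scores(source_ids, output)  # vector of vocabulary size
        next_id = max(range(len(scores)), key=scores.__getitem__)  # argmax
        output.append(next_id)
        if next_id == EOS:
            break
    return output


def make_toy_model(mapping, vocab_size):
    """Hypothetical stand-in for a trained transformer: a word-by-word lookup."""
    def next_token_scores(source_ids, generated):
        content = [t for t in source_ids if t not in (START, EOS)]
        position = len(generated) - 1  # tokens produced so far, excluding START
        target = mapping[content[position]] if position < len(content) else EOS
        return [1.0 if i == target else 0.0 for i in range(vocab_size)]
    return next_token_scores


english_to_french = make_toy_model({3: 5, 4: 6}, vocab_size=8)  # N = 8
french_to_german = make_toy_model({5: 7, 6: 3}, vocab_size=8)   # M = 8

english = [START, 3, 4, EOS]
french = greedy_decode(english_to_french, english)
german = greedy_decode(french_to_german, french)

print(french)  # [1, 5, 6, 2]
print(german)  # [1, 7, 3, 2]
```

Because the argmax step sits between the two models, any mistake made by the first model is passed on to the second as if it were correct input.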