We have seen how to train the Transformer model on a dataset of English and German sentence pairs and how to plot the training and validation loss curves to diagnose the model’s learning performance and decide at which epoch to run inference on the trained model. We are now ready to run inference on the trained Transformer model to translate an input sentence.

In this tutorial, you will discover how to run inference on the trained Transformer model for neural machine translation.

After completing this tutorial, you will know:

- How to run inference on the trained Transformer model

- How to generate text translations


Let’s get started.

Inferencing the Transformer model

Photo by Karsten Würth, some rights reserved.

Tutorial Overview

This tutorial is divided into three parts; they are:

- Recap of the Transformer Architecture

- Inferencing the Transformer Model

- Testing Out the Code

Prerequisites

For this tutorial, we assume that you are already familiar with:

- The theory behind the Transformer model

- An implementation of the Transformer model

- Training the Transformer model

- Plotting the training and validation loss curves for the Transformer model

Recap of the Transformer Architecture

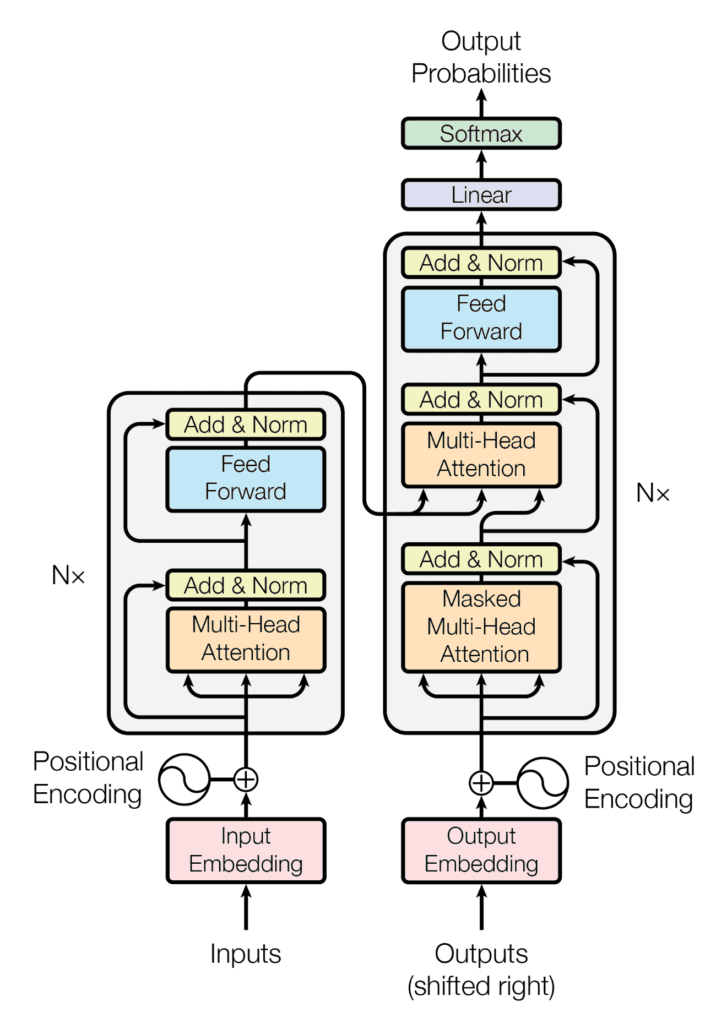

Recall having seen that the Transformer architecture follows an encoder-decoder structure. The encoder, on the left-hand side, is tasked with mapping an input sequence to a sequence of continuous representations; the decoder, on the right-hand side, receives the output of the encoder together with the decoder output at the previous time step to generate an output sequence.

The encoder-decoder structure of the Transformer architecture

Taken from “Attention Is All You Need”

In generating an output sequence, the Transformer does not rely on recurrence or convolutions.

You have seen how to implement the complete Transformer model and subsequently train it on a dataset of English and German sentence pairs. Let’s now proceed to run inference on the trained model for neural machine translation.

Inferencing the Transformer Model

Let’s start by creating a new instance of the TransformerModel class that was implemented in a previous tutorial.

You will feed into it the relevant input arguments as specified in the paper of Vaswani et al. (2017) and the relevant information about the dataset in use:

```python
# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the dataset parameters
enc_seq_length = 7  # Encoder sequence length
dec_seq_length = 12  # Decoder sequence length
enc_vocab_size = 2405  # Encoder vocabulary size
dec_vocab_size = 3858  # Decoder vocabulary size

# Create model
inferencing_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, 0)
```

Here, note that the last input fed into the TransformerModel corresponds to the dropout rate for each of the Dropout layers in the Transformer model. These Dropout layers will not be used during model inferencing (you will eventually set the training argument to False), so you may safely set the dropout rate to 0.
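To see why the dropout rate has no effect at inference time, consider the following minimal pure-Python sketch of inverted dropout. It is not the Keras Dropout layer itself: the `dropout` function and its arguments are hypothetical stand-ins that only mimic how a layer behaves when it is called with a `training` flag.

```python
import random

def dropout(values, rate, training, seed=0):
    """Toy inverted dropout: a stand-in for a Dropout layer, not the Keras implementation."""
    if not training or rate == 0:
        # At inference (or with a zero rate) the input passes through untouched
        return list(values)
    rng = random.Random(seed)
    keep_prob = 1.0 - rate
    # During training, zero each value with probability `rate` and rescale the survivors
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

activations = [0.5, 1.0, 1.5, 2.0]
inference_out = dropout(activations, rate=0.1, training=False)
zero_rate_out = dropout(activations, rate=0.0, training=True)
print(inference_out)  # identical to the input
```

With `training=False`, the rate is never consulted, which is why passing 0 to the model constructor is harmless.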

Furthermore, the TransformerModel class was already saved into a separate script named model.py. Hence, to be able to use the TransformerModel class, you need to include from model import TransformerModel.

Next, let’s create a class, Translate, that inherits from the Module base class in TensorFlow, and assign the initialized inferencing model to the variable transformer:

```python
class Translate(Module):
    def __init__(self, inferencing_model, **kwargs):
        super(Translate, self).__init__(**kwargs)
        self.transformer = inferencing_model
    ...
```

When you trained the Transformer model, you saw that you first needed to tokenize the sequences of text that were to be fed into both the encoder and decoder. You achieved this by creating a vocabulary of words and replacing each word with its corresponding vocabulary index.

You will need to implement a similar process during the inferencing stage before feeding the sequence of text to be translated into the Transformer model.

For this purpose, you will include within the class the following load_tokenizer method, which will serve to load the encoder and decoder tokenizers that you would have generated and saved during the training stage:

```python
    def load_tokenizer(self, name):
        with open(name, 'rb') as handle:
            return load(handle)
```

It is important that you tokenize the input text at the inferencing stage using the same tokenizers generated at the training stage of the Transformer model. These tokenizers hold the word-to-index mapping that the model was trained with, and they were fitted on text sequences similar to your testing data.
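The save-and-reload round trip can be illustrated without Keras. In the sketch below, a plain dictionary stands in for a fitted tokenizer's word index, and the file name `toy_tokenizer.pkl` is a hypothetical placeholder. The point is only that the mapping loaded at inference is identical to the one saved at training.

```python
import os
import tempfile
from pickle import dump, load

# Toy word index standing in for a fitted tokenizer (assumption: not the real vocabulary)
word_index = {"start": 1, "eos": 2, "ich": 3, "bin": 4, "durstig": 5}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "toy_tokenizer.pkl")
    # Training stage: save the mapping
    with open(path, "wb") as handle:
        dump(word_index, handle)
    # Inference stage: load the very same mapping
    with open(path, "rb") as handle:
        restored = load(handle)

print(restored == word_index)  # True
```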

The next step is to create the class method, `__call__()`, that will take care to:

- Append the start (<START>) and end-of-string (<EOS>) tokens to the input sentence:

```python
    def __call__(self, sentence):
        sentence[0] = "<START> " + sentence[0] + " <EOS>"
```

- Load the encoder and decoder tokenizers (in this case, saved in the enc_tokenizer.pkl and dec_tokenizer.pkl pickle files, respectively):

```python
        enc_tokenizer = self.load_tokenizer('enc_tokenizer.pkl')
        dec_tokenizer = self.load_tokenizer('dec_tokenizer.pkl')
```

- Prepare the input sentence by tokenizing it first, then padding it to the maximum phrase length, and subsequently converting it to a tensor:

```python
        encoder_input = enc_tokenizer.texts_to_sequences(sentence)
        encoder_input = pad_sequences(encoder_input, maxlen=enc_seq_length, padding='post')
        encoder_input = convert_to_tensor(encoder_input, dtype=int64)
```

- Repeat a similar tokenization and tensor conversion procedure for the <START> and <EOS> tokens at the output:

```python
        output_start = dec_tokenizer.texts_to_sequences(["<START>"])
        output_start = convert_to_tensor(output_start[0], dtype=int64)

        output_end = dec_tokenizer.texts_to_sequences(["<EOS>"])
        output_end = convert_to_tensor(output_end[0], dtype=int64)
```

- Prepare the output array that will contain the translated text. Since you do not know the length of the translated sentence in advance, you will initialize the size of the output array to 0, but set its dynamic_size parameter to True so that it may grow past its initial size. You will then set the first value in this output array to the <START> token:

```python
        decoder_output = TensorArray(dtype=int64, size=0, dynamic_size=True)
        decoder_output = decoder_output.write(0, output_start)
```

- Iterate, up to the decoder sequence length, each time calling the Transformer model to predict an output token. Here, the training input, which is then passed on to each of the Transformer’s Dropout layers, is set to False so that no values are dropped during inference. The prediction with the highest score is then selected and written at the next available index of the output array. The for loop is terminated with a break statement as soon as an <EOS> token is predicted:

```python
        for i in range(dec_seq_length):

            prediction = self.transformer(encoder_input, transpose(decoder_output.stack()), training=False)

            prediction = prediction[:, -1, :]

            predicted_id = argmax(prediction, axis=-1)
            predicted_id = predicted_id[0][newaxis]

            decoder_output = decoder_output.write(i + 1, predicted_id)

            if predicted_id == output_end:
                break
```

- Decode the predicted tokens into an output list and return it:

```python
        output = transpose(decoder_output.stack())[0]
        output = output.numpy()

        output_str = []

        # Decode the predicted tokens into an output list
        for i in range(output.shape[0]):

            key = output[i]
            translation = dec_tokenizer.index_word[key]
            output_str.append(translation)

        return output_str
```
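The tokenizing and padding step in the list above can be sketched in plain Python. The vocabulary below is made up for illustration, and `texts_to_sequences` and `pad_post` are simplified stand-ins for the Keras tokenizer method and for `pad_sequences(..., padding='post')`, not their actual implementations.

```python
# Toy encoder vocabulary (assumption: real indices come from enc_tokenizer.pkl)
toy_word_index = {"start": 1, "eos": 2, "im": 3, "thirsty": 4}

def texts_to_sequences(texts, word_index):
    """Lowercase, strip the angle brackets from special tokens, and look up each word."""
    sequences = []
    for text in texts:
        words = text.lower().replace("<", "").replace(">", "").split()
        # Words missing from the vocabulary are skipped in this sketch
        sequences.append([word_index[w] for w in words if w in word_index])
    return sequences

def pad_post(sequences, maxlen, value=0):
    """Right-pad each sequence with `value` up to `maxlen` (longer ones are cut at the end here)."""
    return [seq[:maxlen] + [value] * (maxlen - len(seq[:maxlen])) for seq in sequences]

sentence = ["<START> im thirsty <EOS>"]
encoder_input = pad_post(texts_to_sequences(sentence, toy_word_index), maxlen=7)
print(encoder_input)  # [[1, 3, 4, 2, 0, 0, 0]]
```

The zeros at the end play the role of the padding that fills the sentence up to the encoder sequence length of 7.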

The complete code listing, so far, is as follows:

```python
from pickle import load
from tensorflow import Module
from keras.preprocessing.sequence import pad_sequences
from tensorflow import convert_to_tensor, int64, TensorArray, argmax, newaxis, transpose
from model import TransformerModel

# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the dataset parameters
enc_seq_length = 7  # Encoder sequence length
dec_seq_length = 12  # Decoder sequence length
enc_vocab_size = 2405  # Encoder vocabulary size
dec_vocab_size = 3858  # Decoder vocabulary size

# Create model
inferencing_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, 0)


class Translate(Module):
    def __init__(self, inferencing_model, **kwargs):
        super(Translate, self).__init__(**kwargs)
        self.transformer = inferencing_model

    def load_tokenizer(self, name):
        with open(name, 'rb') as handle:
            return load(handle)

    def __call__(self, sentence):
        # Append start and end of string tokens to the input sentence
        sentence[0] = "<START> " + sentence[0] + " <EOS>"

        # Load encoder and decoder tokenizers
        enc_tokenizer = self.load_tokenizer('enc_tokenizer.pkl')
        dec_tokenizer = self.load_tokenizer('dec_tokenizer.pkl')

        # Prepare the input sentence by tokenizing, padding and converting to tensor
        encoder_input = enc_tokenizer.texts_to_sequences(sentence)
        encoder_input = pad_sequences(encoder_input, maxlen=enc_seq_length, padding='post')
        encoder_input = convert_to_tensor(encoder_input, dtype=int64)

        # Prepare the output <START> token by tokenizing, and converting to tensor
        output_start = dec_tokenizer.texts_to_sequences(["<START>"])
        output_start = convert_to_tensor(output_start[0], dtype=int64)

        # Prepare the output <EOS> token by tokenizing, and converting to tensor
        output_end = dec_tokenizer.texts_to_sequences(["<EOS>"])
        output_end = convert_to_tensor(output_end[0], dtype=int64)

        # Prepare the output array of dynamic size
        decoder_output = TensorArray(dtype=int64, size=0, dynamic_size=True)
        decoder_output = decoder_output.write(0, output_start)

        for i in range(dec_seq_length):

            # Predict an output token
            prediction = self.transformer(encoder_input, transpose(decoder_output.stack()), training=False)

            prediction = prediction[:, -1, :]

            # Select the prediction with the highest score
            predicted_id = argmax(prediction, axis=-1)
            predicted_id = predicted_id[0][newaxis]

            # Write the selected prediction to the output array at the next available index
            decoder_output = decoder_output.write(i + 1, predicted_id)

            # Break if an <EOS> token is predicted
            if predicted_id == output_end:
                break

        output = transpose(decoder_output.stack())[0]
        output = output.numpy()

        output_str = []

        # Decode the predicted tokens into an output list
        for i in range(output.shape[0]):

            key = output[i]
            output_str.append(dec_tokenizer.index_word[key])

        return output_str
```
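The greedy decoding loop can also be studied in isolation. The sketch below replaces the Transformer with a hypothetical `toy_model` function that returns one list of scores per decoder position from a hard-coded table, so the token ids and scores are invented. Only the loop structure mirrors the `__call__` method above.

```python
START_ID, EOS_ID = 1, 2
index_word = {1: "start", 2: "eos", 3: "ich", 4: "bin", 5: "durstig"}

# Hypothetical next-token table standing in for the trained Transformer
NEXT_TOKEN = {1: 3, 3: 4, 4: 5, 5: 2}

def toy_model(decoder_input, vocab_size=6):
    """Return one list of scores per position, like a decoder output of shape (length, vocab)."""
    scores = []
    for token in decoder_input:
        row = [0.0] * vocab_size
        row[NEXT_TOKEN.get(token, EOS_ID)] = 1.0
        scores.append(row)
    return scores

def greedy_decode(model, max_length):
    decoder_output = [START_ID]
    for _ in range(max_length):
        # Only the scores at the last position are needed for the next token
        last_scores = model(decoder_output)[-1]
        # Pick the index of the highest score (the argmax)
        predicted_id = max(range(len(last_scores)), key=last_scores.__getitem__)
        decoder_output.append(predicted_id)
        # Stop as soon as the end-of-string token is predicted
        if predicted_id == EOS_ID:
            break
    return [index_word[i] for i in decoder_output]

translation = greedy_decode(toy_model, max_length=12)
print(translation)  # ['start', 'ich', 'bin', 'durstig', 'eos']
```

Greedy selection keeps only the single best token at every step. It is simple and fast, but it cannot revise an early choice that later turns out to be poor.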


Testing Out the Code

In order to test out the code, let’s have a look at the test_dataset.txt file that you would have saved when preparing the dataset for training. This text file contains a set of English-German sentence pairs that have been reserved for testing, from which you can select a couple of sentences to test.

Let’s start with the first sentence:

```python
# Sentence to translate
sentence = ['im thirsty']
```

The corresponding ground truth translation in German for this sentence, including the <START> and <EOS> decoder tokens, should be: <START> ich bin durstig <EOS>.

If you have a look at the plotted training and validation loss curves for this model (here, you are training for 20 epochs), you may notice that the validation loss curve slows down considerably and starts plateauing at around epoch 16.
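Reading the plateau off a plot is a judgment call. As a rough aid, the sketch below picks the first epoch after which the validation loss improves by less than a chosen tolerance. The loss values and the `tol` threshold are invented for illustration and are not the values recorded for this model.

```python
def first_plateau_epoch(val_losses, tol=0.01):
    """Return the 1-based epoch after which the loss drops by less than `tol`."""
    for epoch in range(1, len(val_losses)):
        improvement = val_losses[epoch - 1] - val_losses[epoch]
        if improvement < tol:
            return epoch
    # No plateau found: fall back to the last epoch
    return len(val_losses)

# Made-up validation losses for six epochs
val_losses = [4.0, 3.0, 2.5, 2.3, 2.295, 2.294]
plateau_epoch = first_plateau_epoch(val_losses)
print(plateau_epoch)  # 4
```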

So let’s proceed to load the saved model’s weights at the 16th epoch and check out the prediction that is generated by the model:

```python
# Load the trained model's weights at the specified epoch
inferencing_model.load_weights('weights/wghts16.ckpt')

# Create a new instance of the 'Translate' class
translator = Translate(inferencing_model)

# Translate the input sentence
print(translator(sentence))
```

Running the lines of code above produces the following translated list of words:

```
['start', 'ich', 'bin', 'durstig', 'eos']
```

This is equivalent to the ground truth German sentence that was expected. Always keep in mind that, since you are training the Transformer model from scratch, you may arrive at different results depending on the random initialization of the model weights.

Let’s check out what would have happened if you had, instead, loaded a set of weights corresponding to a much earlier epoch, such as the 4th epoch. In this case, the generated translation is the following:

```
['start', 'ich', 'bin', 'nicht', 'nicht', 'eos']
```

In English, this translates to: I am not not, which is clearly far off from the input English sentence. This is expected since, at this epoch, the learning process of the Transformer model is still at a very early stage.

Let’s try again with a second sentence from the test dataset:

```python
# Sentence to translate
sentence = ['are we done']
```

The corresponding ground truth translation in German for this sentence, including the <START> and <EOS> decoder tokens, should be: <START> sind wir dann durch <EOS>.

The model’s translation for this sentence, using the weights saved at epoch 16, is:

```
['start', 'ich', 'war', 'fertig', 'eos']
```

This, instead, translates to: I was ready. While this is also not equal to the ground truth, it is close to its meaning.
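Comparisons like these can be made a little more systematic with a simple word-overlap score. The `overlap` function below is a crude illustrative measure (the fraction of predicted words that also occur in the reference, ignoring the start and end tokens), not a standard translation metric such as BLEU.

```python
def overlap(predicted, reference):
    """Fraction of predicted words (excluding start/eos) found in the reference."""
    words = [w for w in predicted if w not in ("start", "eos")]
    reference_words = set(reference.split())
    if not words:
        return 0.0
    return sum(w in reference_words for w in words) / len(words)

score_first = overlap(['start', 'ich', 'bin', 'durstig', 'eos'], "ich bin durstig")
score_second = overlap(['start', 'ich', 'war', 'fertig', 'eos'], "sind wir dann durch")
print(score_first, score_second)  # 1.0 0.0
```

The second score is zero even though the translation is close in meaning, which is a reminder that word-level matching is a strict criterion.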

What the last test suggests, however, is that the Transformer model might have required many more data samples to train effectively. This is also corroborated by the fact that the value at which the validation loss curve plateaus remains relatively high.

Indeed, Transformer models are notorious for being very data hungry. Vaswani et al. (2017), for example, trained their English-to-German translation model using a dataset containing around 4.5 million sentence pairs.

We trained on the standard WMT 2014 English-German dataset consisting of about 4.5 million sentence pairs…For English-French, we used the significantly larger WMT 2014 English-French dataset consisting of 36M sentences…

– Attention Is All You Need, 2017.

They reported that it took them 3.5 days on 8 P100 GPUs to train the English-to-German translation model.

In comparison, you have only trained on a dataset comprising 10,000 data samples here, split between training, validation, and test sets.

So the next task is actually for you. If you have the computational resources available, try to train the Transformer model on a much larger set of sentence pairs and see if you can obtain better results than the translations obtained here with a limited amount of data.


Summary

In this tutorial, you discovered how to run inference on the trained Transformer model for neural machine translation.

Specifically, you learned:

- How to run inference on the trained Transformer model

- How to generate text translations

Do you have any questions?

Ask your questions in the comments below, and I will do my best to answer.

@ Jason Brownlee and @ Stefania Cristina do you plan to release book about transformers?

Great suggestion Jerzy! We appreciate the recommendation.

Thanks for the great tutorial!

Some errors happened when I ran the code. The traceback is as below.

I am still struggling to find the bugs. I did not change any parameters in this tutorial.

Traceback (most recent call last):

File “E:\code\transformer\inference_trans.py”, line 101, in

print(translator(sentence))

File “E:\code\transformer\inference_trans.py”, line 45, in __call__

prediction = self.transformer(encoder_input, transpose(decoder_output.stack()), training=False)

File “C:\Anaconda3\envs\ML\lib\site-packages\keras\utils\traceback_utils.py”, line 70, in error_handler

raise e.with_traceback(filtered_tb) from None

File “E:\code\transformer\transformer.py”, line 198, in call

decoder_output = self.decoder(decoder_input, encoder_output, dec_in_lookahead_mask, enc_padding_mask, training)

File “E:\code\transformer\transformer.py”, line 158, in call

pos_encoding_output = self.pos_encoding(output_target)

File “E:\code\transformer\positional_encoding.py”, line 47, in call

embedded_words = self.word_embedding_layer(inputs)

ValueError: Exception encountered when calling layer “position_embedding_fixed_weights_1″ ” f”(type PositionEmbeddingFixedWeights).

In this tf.Variable creation, the initial value’s shape ((2404, 512)) is not compatible with the explicitly supplied shape argument ((2405, 512)).

Call arguments received by layer “position_embedding_fixed_weights” ” f”(type PositionEmbeddingFixedWeights):

• inputs=tf.Tensor(shape=(1, 7), dtype=int64)

Hi Helen, thank you for your message!

When you inference the Transformer model, you need to make sure that you set these parameter values according to how your dataset was prepared at the training stage:

```python
# Define the dataset parameters
enc_seq_length = 7  # Encoder sequence length
dec_seq_length = 12  # Decoder sequence length
enc_vocab_size = 2405  # Encoder vocabulary size
dec_vocab_size = 3858  # Decoder vocabulary size
```

From your error, I suspect that (at least) the value of the enc_vocab_size needs to change to 2404. Can you, please, check if your error is originating from here?

Thanks for your help!

It turned out that both enc_vocab_size and dec_vocab_size are set wrong.
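One way to avoid this kind of mismatch is to derive the vocabulary sizes from the saved tokenizers rather than typing them in by hand. The sketch below uses a plain dictionary as a stand-in for a tokenizer's `word_index`, and `vocab_size_from_word_index` is a hypothetical helper. The `+ 1` assumes, as is the case for Keras tokenizers, that word indices start at 1 and index 0 is reserved for padding.

```python
def vocab_size_from_word_index(word_index):
    """Vocabulary size including the reserved padding index 0."""
    return len(word_index) + 1

# Toy word index (assumption: the real one is loaded from the saved tokenizer)
toy_word_index = {"start": 1, "eos": 2, "im": 3, "thirsty": 4}
enc_vocab_size = vocab_size_from_word_index(toy_word_index)
print(enc_vocab_size)  # 5
```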

Hi! This is an excellent post, thanks for the efforts!

I have the following doubt: during inference, the decoder is fed the token “START” from which it predicts “dec_seq_length”, 12 in this case. The shape of the decoder thus would be [batch_size, 12, d_model], from which only the last prediction is taken (prediction = prediction[:, -1, :]).

My question is, do the remaining 11 predictions have any meaning? As the Transformer is trained with the values shifted to the right one unit I understand that those 11 are the previous words during training but in inference, I'm having a hard time understanding what it is predicting or if these values should just be omitted because they don't have any meaning at all. From the forecasting point of view, I guess you can just omit them but I'm just curious.

Thanks in advance!

Hi Alex…You are very welcome! The following resource may add clarity:

https://towardsdatascience.com/how-to-use-transformer-networks-to-build-a-forecasting-model-297f9270e630
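A small experiment may also help with this question. Note first that in the `__call__` method of the tutorial, the decoder receives only the tokens generated so far, so the sequence dimension grows by one at each step rather than being fixed at dec_seq_length. The sketch below uses a hypothetical `causal_toy_model`, in which the scores at each position depend only on the tokens up to that position, which is the property the look-ahead mask enforces in the decoder. Under that assumption, the earlier positions simply reproduce the predictions made in previous steps, so only the last position carries new information.

```python
def causal_toy_model(decoder_input, vocab_size=6):
    """Toy stand-in for a masked decoder: row t depends only on tokens 0..t."""
    scores = []
    for t in range(len(decoder_input)):
        prefix_sum = sum(decoder_input[: t + 1])
        row = [0.0] * vocab_size
        row[prefix_sum % vocab_size] = 1.0
        scores.append(row)
    return scores

short = causal_toy_model([1, 3])
longer = causal_toy_model([1, 3, 4])

# The first two rows are unchanged when a third token is appended
print(longer[:2] == short)  # True
```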

Hi,

Nice explanation. I created a hindi to english transliteration model using transformer in keras. The model is working really well. The problem I am facing is with inference time. Do you have any suggestions to reduce inference time?

Hi Lokesh…You may find value in using Google Colab with a GPU option.

Hi,

As always great explanation and clean code! Thank you very much for such a great place to learn.

Working on an implementation of Decision Transformers (DT) I realized that the authors don’t pad the inputs during inference, like you are doing here.

It got me wondering why there is no padding for inference. Could this be just because there are only decoders? What would happen if we didn’t use padding during inference?

Hi Gabriel…You are very welcome! This is a great question. Can you give it a try so that we can learn from your results?

Hi,

In the previous tutorial the method for saving the weights of model for every epoch, tensorflow raises an exception that the weight must be saved as “__.weights.h5” . Now during inferencing I have loaded the model instance first as shown in this tutorial and the arguments [enc_vocab_size = 2189 and dec_vocab_size=3447], i have trained the model for 10000 datasets.

But for loading the weights, following error is raised

Traceback (most recent call last):

File “”, line 1, in

training_model.load_weights(“wght0.weights.h5”)

File “C:\Users\ABC\AppData\Local\Programs\Python\Python310\lib\site-packages\keras\src\utils\traceback_utils.py”, line 122, in error_handler

raise e.with_traceback(filtered_tb) from None

File “C:\Users\ABC\AppData\Local\Programs\Python\Python310\lib\site-packages\keras\src\saving\saving_lib.py”, line 456, in _raise_loading_failure

raise ValueError(msg)

ValueError: A total of 1 objects could not be loaded. Example error message for object :

Layer ’embedding_2′ expected 1 variables, but received 0 variables during loading. Expected: [’embeddings’]

List of objects that could not be loaded:

[]

Hi Raja…It appears that there might be a mismatch between the saved model weights and the model architecture during the loading process. This can happen if the model’s architecture has changed between saving and loading or if the weights are not properly aligned with the model layers.

Here’s a step-by-step approach to troubleshoot and resolve this issue:

### Step-by-Step Guide to Load Model Weights Correctly

#### 1. **Ensure Consistency in Model Architecture**

– Ensure that the model architecture is exactly the same when you load the weights as it was when you saved them. Any changes in the model structure can cause issues during weight loading.

#### 2. **Save Model Weights Correctly**

– Use a consistent naming convention and ensure the file paths are correct.

– Example:

```python
# Saving weights after each epoch
checkpoint_callback = tf.keras.callbacks.ModelCheckpoint(
    filepath='model_weights_epoch_{epoch:02d}.weights.h5',
    save_weights_only=True,
    save_freq='epoch'
)
```

#### 3. **Load Model and Weights**

– Define your model architecture before loading the weights.

– Load the weights using the correct method.

– Example:

```python
# Define model architecture
model = create_model(enc_vocab_size=2189, dec_vocab_size=3447)  # Ensure this matches the training model architecture

# Load weights
model.load_weights('model_weights_epoch_10.weights.h5')
```

#### 4. **Example Code**

Here is a complete example showing how to define, save, and load model weights:

```python
import tensorflow as tf
from tensorflow.keras.models import Model
from tensorflow.keras.layers import Input, Embedding, LSTM, Dense

def create_model(enc_vocab_size, dec_vocab_size, embedding_dim=256, units=512):
    # Define the model architecture
    encoder_inputs = Input(shape=(None,), name='encoder_inputs')
    encoder_embedding = Embedding(input_dim=enc_vocab_size, output_dim=embedding_dim, name='encoder_embedding')(encoder_inputs)
    encoder_lstm = LSTM(units, return_state=True, name='encoder_lstm')
    encoder_outputs, state_h, state_c = encoder_lstm(encoder_embedding)
    encoder_states = [state_h, state_c]

    decoder_inputs = Input(shape=(None,), name='decoder_inputs')
    decoder_embedding = Embedding(input_dim=dec_vocab_size, output_dim=embedding_dim, name='decoder_embedding')(decoder_inputs)
    decoder_lstm = LSTM(units, return_sequences=True, return_state=True, name='decoder_lstm')
    decoder_outputs, _, _ = decoder_lstm(decoder_embedding, initial_state=encoder_states)
    decoder_dense = Dense(dec_vocab_size, activation='softmax', name='decoder_dense')
    decoder_outputs = decoder_dense(decoder_outputs)

    model = Model([encoder_inputs, decoder_inputs], decoder_outputs)
    return model

# Instantiate the model
model = create_model(enc_vocab_size=2189, dec_vocab_size=3447)

# Compile the model (if necessary)
model.compile(optimizer='adam', loss='sparse_categorical_crossentropy')

# Train the model and save weights
# Assume training_data and validation_data are defined
# model.fit(training_data, epochs=10, validation_data=validation_data, callbacks=[checkpoint_callback])

# Load weights
try:
    model.load_weights('model_weights_epoch_10.weights.h5')
    print("Weights loaded successfully.")
except ValueError as e:
    print("Error loading weights:", e)
```

### Key Points

1. **Consistent Model Architecture**: Ensure the model architecture is the same during both saving and loading weights.

2. **File Naming and Paths**: Double-check the file paths and names to ensure they match what was saved.

3. **Model Compilation**: Sometimes, compiling the model before loading weights can resolve issues.

By following these steps, you should be able to correctly load your model weights and avoid the ValueError related to mismatched variables. If you continue to face issues, ensure that the model architecture and weights files are compatible and correctly aligned.

Thanks for this guide, I have resolved this issue as I have provided incorrect parameters while creating the instance of transformer, which causes the above-mentioned error.

Now while inferencing the model predicts only the “eos” token instead of predicting the translation of the sentence. Any help in this regard is appreciated.

Thanks

Hi ,

while training the model, I added a cross-check for every epoch to see the trained model prediction to the input sentence. for every epoch iteration i have called the Translator and passed the training model instance to its argument to initiate translator instance and passed the input sentence to that instance but for every epoch the prediction of the model is “”. token

Input and output of Translator

1. tokenized input sentence:

[[1, 3, 151, 1336, 2]]

2. padded tokens:

tf.Tensor([[ 1 3 151 1336 2 0 0]], shape=(1, 7), dtype=int64)

3. Decoder output PREDICTION scores for each tokens in the vocabulary

tf.Tensor([[-12.633082 -12.154094 5.066319 … -3.5435305 -3.546515

-3.5011318]], shape=(1, 3474), dtype=float32)

4. get the token with maximum score using tensorflow argmax

tf.Tensor([2], shape=(1,), dtype=int64)

5. Decoder output stack: tf.Tensor([1 2], shape=(2,), dtype=int64)

Input sentence: [” i made cookies “]

Result:

translation: [‘start’, ‘eos’]

I have exactly the same problem. Did you find out what causes this problem?

Thanks for the tutorial, accomplished seq2seq translation from English to German…