The Pix2Pix Generative Adversarial Network, or GAN, is an approach to training a deep convolutional neural network for image-to-image translation tasks.

The careful configuration of the architecture as a type of image-conditional GAN allows for both the generation of larger images than prior GAN models (e.g. 256×256 pixels) and the capability of performing well on a variety of different image-to-image translation tasks.

In this tutorial, you will discover how to develop a Pix2Pix generative adversarial network for image-to-image translation.

After completing this tutorial, you will know:

- How to load and prepare the satellite image to Google maps image-to-image translation dataset.

- How to develop a Pix2Pix model for translating satellite photographs to Google map images.

- How to use the final Pix2Pix generator model to translate ad hoc satellite images.


Let’s get started.

- Updated Jan/2021: Updated so layer freezing works with batch norm.

How to Develop a Pix2Pix Generative Adversarial Network for Image-to-Image Translation

Photo by European Southern Observatory, some rights reserved.

Tutorial Overview

This tutorial is divided into five parts; they are:

- What Is the Pix2Pix GAN?

- Satellite to Map Image Translation Dataset

- How to Develop and Train a Pix2Pix Model

- How to Translate Images With a Pix2Pix Model

- How to Translate Google Maps to Satellite Images

What Is the Pix2Pix GAN?

Pix2Pix is a Generative Adversarial Network, or GAN, model designed for general purpose image-to-image translation.

The approach was described by Phillip Isola, et al. in their 2016 paper titled “Image-to-Image Translation with Conditional Adversarial Networks” and presented at CVPR in 2017.

The GAN architecture is comprised of a generator model for outputting new plausible synthetic images, and a discriminator model that classifies images as real (from the dataset) or fake (generated). The discriminator model is updated directly, whereas the generator model is updated via the discriminator model. As such, the two models are trained simultaneously in an adversarial process where the generator seeks to better fool the discriminator and the discriminator seeks to better identify the counterfeit images.

The Pix2Pix model is a type of conditional GAN, or cGAN, where the generation of the output image is conditional on an input, in this case, a source image. The discriminator is provided both with a source image and the target image and must determine whether the target is a plausible transformation of the source image.
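As a minimal NumPy sketch of this conditioning, the source and target images can be stacked channel-wise so that the discriminator sees one six-channel input per pair. The 256×256 RGB shape and the random arrays standing in for real photos are assumptions for illustration; the Keras discriminator defined later in this tutorial performs the same step with a Concatenate layer.

```python
import numpy as np

def make_discriminator_input(src, tar):
    """Stack a source image and a target image along the channel axis.

    Both arrays are assumed to be (height, width, 3) RGB images.
    """
    if src.shape != tar.shape:
        raise ValueError("source and target must have the same shape")
    return np.concatenate([src, tar], axis=-1)

# random stand-ins for a satellite photo (source) and a map image (target)
rng = np.random.default_rng(0)
src = rng.uniform(-1, 1, size=(256, 256, 3))
tar = rng.uniform(-1, 1, size=(256, 256, 3))

pair = make_discriminator_input(src, tar)
print(pair.shape)  # (256, 256, 6)
```

The first three channels carry the source and the last three the target, so the discriminator can only judge the target in the context of the source it should have been translated from.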

The generator is trained via adversarial loss, which encourages the generator to generate plausible images in the target domain. The generator is also updated via L1 loss measured between the generated image and the expected output image. This additional loss encourages the generator model to create plausible translations of the source image.

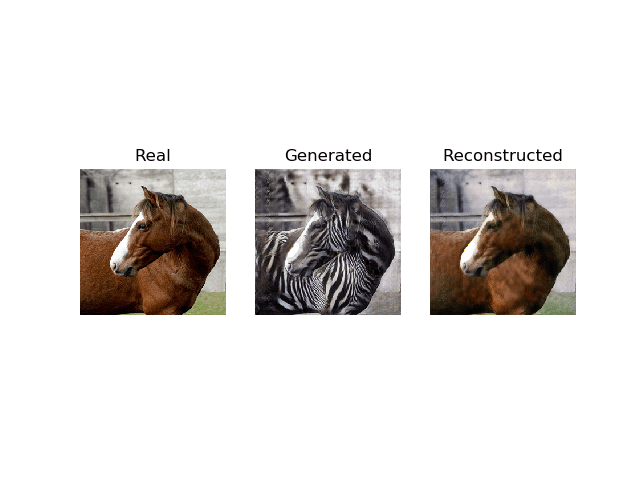

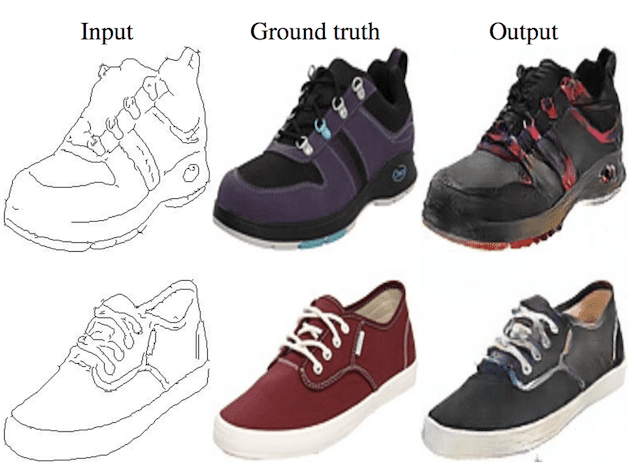

The Pix2Pix GAN has been demonstrated on a range of image-to-image translation tasks such as converting maps to satellite photographs, black and white photographs to color, and sketches of products to product photographs.

Now that we are familiar with the Pix2Pix GAN, let’s prepare a dataset that we can use with image-to-image translation.


Satellite to Map Image Translation Dataset

In this tutorial, we will use the so-called “maps” dataset used in the Pix2Pix paper.

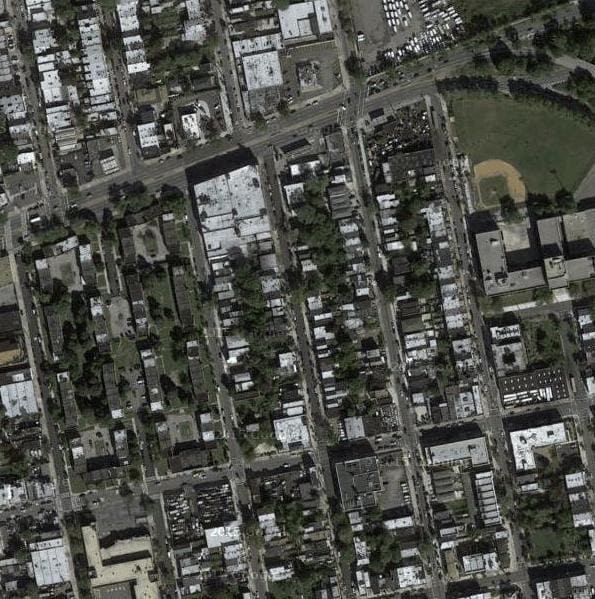

This is a dataset comprised of satellite images of New York and their corresponding Google maps pages. The image translation problem involves converting satellite photos to Google maps format, or the reverse, Google maps images to satellite photos.

The dataset is provided on the pix2pix website and can be downloaded as a 255-megabyte zip file.

Download the dataset and unzip it into your current working directory. This will create a directory called “maps” with the following structure:

```
maps
├── train
└── val
```

The train folder contains 1,096 images, whereas the validation folder contains 1,099 images.

Images have numeric filenames and are in JPEG format. Each image is 1,200 pixels wide and 600 pixels tall and contains both the satellite image on the left and the Google maps image on the right.

Sample Image From the Maps Dataset Including Both Satellite and Google Maps Image.
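To make this layout concrete, the sketch below splits one combined image down the middle with NumPy slicing. It uses a synthetic 600×1200 array rather than a real file (an assumption for illustration), with the left half filled with 1s and the right half with 2s so the split is easy to check.

```python
import numpy as np

# synthetic stand-in for one maps image: 600 rows (height) x 1200 columns (width) x 3 channels
combined = np.zeros((600, 1200, 3), dtype=np.uint8)
combined[:, :600] = 1   # pretend satellite half (left)
combined[:, 600:] = 2   # pretend Google map half (right)

# arrays are indexed (row, column, channel), so the split is along axis 1 (width)
half = combined.shape[1] // 2
sat_img, map_img = combined[:, :half], combined[:, half:]

print(sat_img.shape, map_img.shape)  # (600, 600, 3) (600, 600, 3)
```

The load_images() function below does the same thing after resizing, slicing at column 256 instead of 600.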

We can prepare this dataset for training a Pix2Pix GAN model in Keras. We will just work with the images in the training dataset. Each image will be loaded, rescaled, and split into the satellite and Google map elements. The result will be 1,096 color image pairs, each 256×256 pixels.

The load_images() function below implements this. It enumerates the list of images in a given directory, loads each one resized to 256×512 pixels (256 pixels tall and 512 pixels wide, as Keras target sizes are given as height and width), splits each image into satellite and map elements, and returns an array of each.

```python
# load all images in a directory into memory
def load_images(path, size=(256,512)):
    src_list, tar_list = list(), list()
    # enumerate filenames in directory, assume all are images
    for filename in listdir(path):
        # load and resize the image (target_size is height, width)
        pixels = load_img(path + filename, target_size=size)
        # convert to numpy array
        pixels = img_to_array(pixels)
        # split into satellite and map
        sat_img, map_img = pixels[:, :256], pixels[:, 256:]
        src_list.append(sat_img)
        tar_list.append(map_img)
    return [asarray(src_list), asarray(tar_list)]
```

We can call this function with the path to the training dataset. Once loaded, we can save the prepared arrays to a new file in compressed format for later use.

The complete example is listed below.

```python
# load, split and scale the maps dataset ready for training
from os import listdir
from numpy import asarray
from numpy import savez_compressed
from keras.preprocessing.image import img_to_array
from keras.preprocessing.image import load_img

# load all images in a directory into memory
def load_images(path, size=(256,512)):
    src_list, tar_list = list(), list()
    # enumerate filenames in directory, assume all are images
    for filename in listdir(path):
        # load and resize the image (target_size is height, width)
        pixels = load_img(path + filename, target_size=size)
        # convert to numpy array
        pixels = img_to_array(pixels)
        # split into satellite and map
        sat_img, map_img = pixels[:, :256], pixels[:, 256:]
        src_list.append(sat_img)
        tar_list.append(map_img)
    return [asarray(src_list), asarray(tar_list)]

# dataset path
path = 'maps/train/'
# load dataset
[src_images, tar_images] = load_images(path)
print('Loaded: ', src_images.shape, tar_images.shape)
# save as compressed numpy array
filename = 'maps_256.npz'
savez_compressed(filename, src_images, tar_images)
print('Saved dataset: ', filename)
```

Running the example loads all images in the training dataset, summarizes their shape to ensure the images were loaded correctly, then saves the arrays to a new file called maps_256.npz in compressed NumPy array format.

```
Loaded:  (1096, 256, 256, 3) (1096, 256, 256, 3)
Saved dataset:  maps_256.npz
```

This file can be loaded later via the NumPy load() function, retrieving each array in turn.
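Because savez_compressed() is given the arrays positionally, NumPy stores them under the default keys arr_0 and arr_1. A minimal round trip with small random arrays shows how they come back out; the temporary file name example.npz is a hypothetical stand-in for maps_256.npz.

```python
import os
import tempfile

import numpy as np
from numpy import load, savez_compressed

# small random stand-ins for the source and target image arrays
rng = np.random.default_rng(1)
src_images = rng.integers(0, 256, size=(4, 8, 8, 3)).astype('float32')
tar_images = rng.integers(0, 256, size=(4, 8, 8, 3)).astype('float32')

path = os.path.join(tempfile.mkdtemp(), 'example.npz')
# positional arrays are saved under the keys 'arr_0', 'arr_1', ...
savez_compressed(path, src_images, tar_images)

with load(path) as data:
    print(sorted(data.files))  # ['arr_0', 'arr_1']
    loaded_src, loaded_tar = data['arr_0'], data['arr_1']

print(np.array_equal(loaded_src, src_images), np.array_equal(loaded_tar, tar_images))  # True True
```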

We can then plot some image pairs to confirm the data has been handled correctly.

```python
# load the prepared dataset
from numpy import load
from matplotlib import pyplot
# load the dataset
data = load('maps_256.npz')
src_images, tar_images = data['arr_0'], data['arr_1']
print('Loaded: ', src_images.shape, tar_images.shape)
# plot source images
n_samples = 3
for i in range(n_samples):
    pyplot.subplot(2, n_samples, 1 + i)
    pyplot.axis('off')
    pyplot.imshow(src_images[i].astype('uint8'))
# plot target image
for i in range(n_samples):
    pyplot.subplot(2, n_samples, 1 + n_samples + i)
    pyplot.axis('off')
    pyplot.imshow(tar_images[i].astype('uint8'))
pyplot.show()
```

Running this example loads the prepared dataset and summarizes the shape of each array, confirming our expectations of a little over one thousand 256×256 image pairs.

```
Loaded:  (1096, 256, 256, 3) (1096, 256, 256, 3)
```

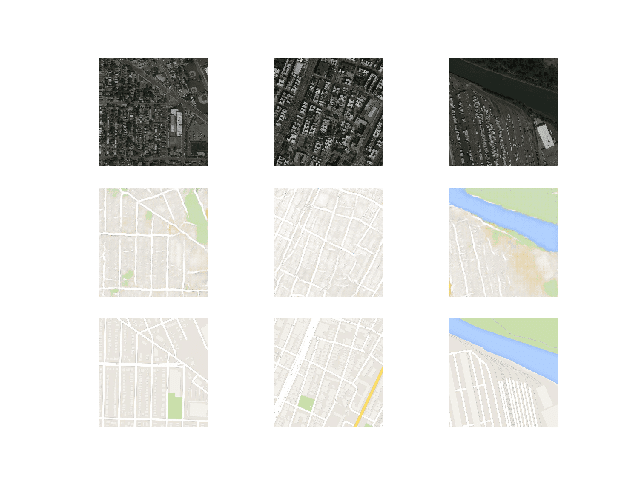

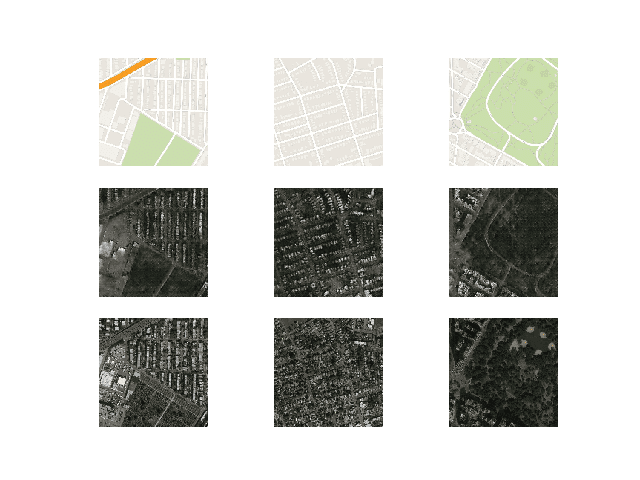

A plot of three image pairs is also created showing the satellite images on the top and Google map images on the bottom.

We can see that satellite images are quite complex and that although the Google map images are much simpler, they have color codings for things like major roads, water, and parks.

Plot of Three Image Pairs Showing Satellite Images (top) and Google Map Images (bottom).

Now that we have prepared the dataset for image translation, we can develop our Pix2Pix GAN model.

How to Develop and Train a Pix2Pix Model

In this section, we will develop the Pix2Pix model for translating satellite photos to Google maps images.

The same model architecture and configuration described in the paper was used across a range of image translation tasks. The architecture is described in the body of the paper, with additional detail in the appendix, and a fully working implementation is provided as open source for the Torch deep learning framework.

The implementation in this section will use the Keras deep learning framework, based directly on the model described in the paper and implemented in the authors’ code base, designed to take and generate color images with the size 256×256 pixels.

The architecture is comprised of two models: the discriminator and the generator.

The discriminator is a deep convolutional neural network that performs image classification, specifically conditional-image classification. It takes both the source image (e.g. satellite photo) and the target image (e.g. Google maps image) as input and predicts the likelihood that the target image is a real, rather than a fake, translation of the source image.

The discriminator design is based on the effective receptive field of the model, which defines the relationship between one output of the model and the number of pixels in the input image. This is called a PatchGAN model and is carefully designed so that each output prediction of the model maps to a 70×70 square or patch of the input image. The benefit of this approach is that the same model can be applied to input images of different sizes, e.g. larger or smaller than 256×256 pixels.
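The 70×70 figure follows from the kernel sizes and strides of the stacked convolutions. The helper below is a sketch that works backwards from a single output unit using r = (r - 1) * stride + kernel. The layer list is an assumption to check against your own model: 4×4 kernels throughout, stride 2 for C64, C128 and C256, and stride 1 for C512 and for the final one-channel convolution.

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of one output unit of a conv stack.

    `layers` is a list of (kernel_size, stride) tuples ordered from input to output.
    Padding does not change the size of the field, only how it sits at the borders.
    """
    r = 1
    # walk from the output back towards the input
    for kernel, stride in reversed(layers):
        r = (r - 1) * stride + kernel
    return r

# assumed layout: C64(s2) C128(s2) C256(s2) C512(s1) -> 1-channel output conv (s1), all 4x4
paper_layers = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]
print(receptive_field(paper_layers))  # 70
```

The same function can check any variant. For example, the stride pattern used in the define_discriminator() function below (four stride-2 layers followed by two stride-1 layers) gives receptive_field([(4, 2)] * 4 + [(4, 1)] * 2) == 142, so the effective patch size depends on exactly which layers are stacked.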

The size of the output depends on the size of the input image; it may be a single value or a square activation map of values. Each value is the probability that a patch in the input image is real. These values can be averaged to give an overall likelihood or classification score if needed.
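For example, if the discriminator returns a 16×16 map of patch probabilities (the size and the values here are made up for illustration), a single image-level score is simply the mean over that map:

```python
import numpy as np

# illustrative 16x16 patch map: top half of the patches judged real, bottom half fake
patch_probs = np.zeros((16, 16))
patch_probs[:8, :] = 0.9
patch_probs[8:, :] = 0.1

overall = float(patch_probs.mean())
print(round(overall, 2))  # 0.5
```

Averaging hides where the discriminator was confident, which is why the per-patch map itself is used as the training target rather than the mean.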

The define_discriminator() function below implements the 70×70 PatchGAN discriminator model as per the design of the model in the paper. The model takes two input images that are concatenated together and outputs a patch of predictions. The model is optimized using binary cross entropy, and a weighting is used so that updates to the model have half (0.5) the usual effect. The authors of Pix2Pix recommend this weighting of model updates to slow down changes to the discriminator, relative to the generator model, during training.

```python
# define the discriminator model
def define_discriminator(image_shape):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # source image input
    in_src_image = Input(shape=image_shape)
    # target image input
    in_target_image = Input(shape=image_shape)
    # concatenate images channel-wise
    merged = Concatenate()([in_src_image, in_target_image])
    # C64
    d = Conv2D(64, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(merged)
    d = LeakyReLU(alpha=0.2)(d)
    # C128
    d = Conv2D(128, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # C256
    d = Conv2D(256, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # C512
    d = Conv2D(512, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # second last output layer
    d = Conv2D(512, (4,4), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # patch output
    d = Conv2D(1, (4,4), padding='same', kernel_initializer=init)(d)
    patch_out = Activation('sigmoid')(d)
    # define model
    model = Model([in_src_image, in_target_image], patch_out)
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss='binary_crossentropy', optimizer=opt, loss_weights=[0.5])
    return model
```

The generator model is more complex than the discriminator model.

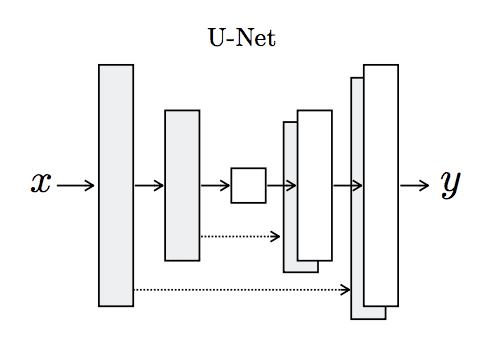

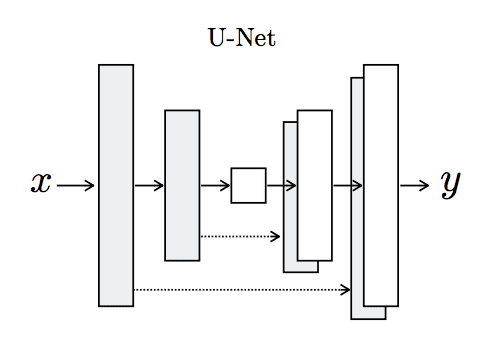

The generator is an encoder-decoder model using a U-Net architecture. The model takes a source image (e.g. satellite photo) and generates a target image (e.g. Google maps image). It does this by first downsampling or encoding the input image down to a bottleneck layer, then upsampling or decoding the bottleneck representation to the size of the output image. The U-Net architecture means that skip-connections are added between the encoding layers and the corresponding decoding layers, forming a U-shape.

The image below makes the skip-connections clear, showing how the first layer of the encoder is connected to the last layer of the decoder, and so on.

Architecture of the U-Net Generator Model

Taken from Image-to-Image Translation With Conditional Adversarial Networks

The encoder and decoder of the generator are comprised of standardized blocks of convolutional, batch normalization, dropout, and activation layers. This standardization means that we can develop helper functions to create each block of layers and call them repeatedly to build up the encoder and decoder parts of the model.

The define_generator() function below implements the U-Net encoder-decoder generator model. It uses the define_encoder_block() helper function to create blocks of layers for the encoder and the decoder_block() function to create blocks of layers for the decoder. The tanh activation function is used in the output layer, meaning that pixel values in the generated image will be in the range [-1,1].

```python
# define an encoder block
def define_encoder_block(layer_in, n_filters, batchnorm=True):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # add downsampling layer
    g = Conv2D(n_filters, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(layer_in)
    # conditionally add batch normalization
    if batchnorm:
        g = BatchNormalization()(g, training=True)
    # leaky relu activation
    g = LeakyReLU(alpha=0.2)(g)
    return g

# define a decoder block
def decoder_block(layer_in, skip_in, n_filters, dropout=True):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # add upsampling layer
    g = Conv2DTranspose(n_filters, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(layer_in)
    # add batch normalization
    g = BatchNormalization()(g, training=True)
    # conditionally add dropout
    if dropout:
        g = Dropout(0.5)(g, training=True)
    # merge with skip connection
    g = Concatenate()([g, skip_in])
    # relu activation
    g = Activation('relu')(g)
    return g

# define the standalone generator model
def define_generator(image_shape=(256,256,3)):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # image input
    in_image = Input(shape=image_shape)
    # encoder model
    e1 = define_encoder_block(in_image, 64, batchnorm=False)
    e2 = define_encoder_block(e1, 128)
    e3 = define_encoder_block(e2, 256)
    e4 = define_encoder_block(e3, 512)
    e5 = define_encoder_block(e4, 512)
    e6 = define_encoder_block(e5, 512)
    e7 = define_encoder_block(e6, 512)
    # bottleneck: no batch norm, relu activation
    b = Conv2D(512, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(e7)
    b = Activation('relu')(b)
    # decoder model
    d1 = decoder_block(b, e7, 512)
    d2 = decoder_block(d1, e6, 512)
    d3 = decoder_block(d2, e5, 512)
    d4 = decoder_block(d3, e4, 512, dropout=False)
    d5 = decoder_block(d4, e3, 256, dropout=False)
    d6 = decoder_block(d5, e2, 128, dropout=False)
    d7 = decoder_block(d6, e1, 64, dropout=False)
    # output
    g = Conv2DTranspose(3, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d7)
    out_image = Activation('tanh')(g)
    # define model
    model = Model(in_image, out_image)
    return model
```
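As a rough sanity check on the shapes, the pure-Python sketch below traces the spatial size and channel count through define_generator() for the 256×256 input used in this tutorial. It assumes that every stride-2 Conv2D with 'same' padding halves the height and width, that every stride-2 Conv2DTranspose doubles them, and that each decoder block's channel count is its filter count plus the channels of the concatenated skip connection.

```python
def trace_unet(size=256):
    # filter counts for e1..e7, as passed to define_encoder_block()
    enc_filters = [64, 128, 256, 512, 512, 512, 512]
    # filter counts for d1..d7, as passed to decoder_block()
    dec_filters = [512, 512, 512, 512, 256, 128, 64]

    shapes = {}
    h = size
    for i, f in enumerate(enc_filters, start=1):
        h //= 2  # stride-2 Conv2D with 'same' padding halves height and width
        shapes['e%d' % i] = (h, h, f)
    h //= 2
    shapes['b'] = (h, h, 512)  # bottleneck

    skips = ['e7', 'e6', 'e5', 'e4', 'e3', 'e2', 'e1']
    for i, (f, skip) in enumerate(zip(dec_filters, skips), start=1):
        h *= 2  # stride-2 Conv2DTranspose doubles height and width
        # concatenation appends the skip connection's channels
        shapes['d%d' % i] = (h, h, f + shapes[skip][2])
    h *= 2
    shapes['out'] = (h, h, 3)  # final Conv2DTranspose to 3 channels, then tanh
    return shapes

for name, shape in trace_unet().items():
    print(name, shape)
```

The trace shows the image being squeezed to a 1×1×512 bottleneck, and each decoder output matching the spatial size of the encoder layer it is concatenated with, which is what makes the U-Net skip connections possible.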

The discriminator model is trained directly on real and generated images, whereas the generator model is not.

Instead, the generator model is trained via the discriminator model. It is updated to minimize the loss predicted by the discriminator for generated images marked as “real.” As such, it is encouraged to generate more realistic images. The generator is also updated to minimize the L1 loss, or mean absolute error, between the generated image and the target image.

The generator is updated via a weighted sum of both the adversarial loss and the L1 loss, where the authors of the model recommend a weighting of 100 to 1 in favor of the L1 loss. This is to encourage the generator strongly toward generating plausible translations of the input image, and not just plausible images in the target domain.
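The weighted objective can be written out with NumPy to show how the two terms combine. This is a sketch, not the Keras loss implementation: it uses a hand-written binary cross entropy with clipping, assumes a 16×16 patch output and 256×256×3 images purely for illustration, and applies the 1:100 weighting described above.

```python
import numpy as np

def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    # clip predictions away from 0 and 1 to avoid log(0)
    y_pred = np.clip(y_pred, eps, 1 - eps)
    return float(np.mean(-(y_true * np.log(y_pred) + (1 - y_true) * np.log(1 - y_pred))))

def mean_absolute_error(y_true, y_pred):
    return float(np.mean(np.abs(y_true - y_pred)))

def generator_loss(d_patch_out, generated, target, adv_weight=1.0, l1_weight=100.0):
    # adversarial term: the generator wants every patch labelled "real" (1)
    adv = binary_cross_entropy(np.ones_like(d_patch_out), d_patch_out)
    # L1 term: pixel-wise distance to the expected translation
    l1 = mean_absolute_error(target, generated)
    return adv_weight * adv + l1_weight * l1, adv, l1

rng = np.random.default_rng(2)
d_out = np.full((1, 16, 16, 1), 0.5)   # discriminator undecided on every patch
target = rng.uniform(-1, 1, size=(1, 256, 256, 3))
generated = target + 0.1                # generator off by 0.1 at every pixel

total, adv, l1 = generator_loss(d_out, generated, target)
print(round(adv, 4), round(l1, 4), round(total, 4))
```

With the discriminator undecided the adversarial term is about 0.69 (ln 2), while a uniform 0.1 pixel error already contributes about 10 through the L1 term, which illustrates how strongly the 100× weighting pulls the generator toward the target image.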

This can be achieved by defining a new logical model comprised of the weights in the existing standalone generator and discriminator model. This logical or composite model involves stacking the generator on top of the discriminator. A source image is provided as input to the generator and to the discriminator, although the output of the generator is connected to the discriminator as the corresponding “target” image. The discriminator then predicts the likelihood that the generator’s output is a real translation of the source image.

The discriminator is updated in a standalone manner, so its weights are reused in this composite model but are marked as not trainable (except for the batch normalization layers). The composite model is updated with two targets: one indicating that the generated images were real (cross entropy loss), forcing large weight updates in the generator toward generating more realistic images, and the expected real translation of the image, which is compared against the output of the generator model (L1 loss).

The define_gan() function below implements this, taking the already-defined generator and discriminator models as arguments and using the Keras functional API to connect them together into a composite model. Both loss functions are specified for the two outputs of the model and the weights used for each are specified in the loss_weights argument to the compile() function.

```python
# define the combined generator and discriminator model, for updating the generator
def define_gan(g_model, d_model, image_shape):
    # make weights in the discriminator not trainable (batch norm layers are left trainable)
    for layer in d_model.layers:
        if not isinstance(layer, BatchNormalization):
            layer.trainable = False
    # define the source image
    in_src = Input(shape=image_shape)
    # connect the source image to the generator input
    gen_out = g_model(in_src)
    # connect the source input and generator output to the discriminator input
    dis_out = d_model([in_src, gen_out])
    # src image as input, generated image and classification output
    model = Model(in_src, [dis_out, gen_out])
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'mae'], optimizer=opt, loss_weights=[1,100])
    return model
```

Next, we can load our paired images dataset in compressed NumPy array format.

This will return a list of two NumPy arrays: the first for source images and the second for corresponding target images.

```python
# load and prepare training images
def load_real_samples(filename):
    # load compressed arrays
    data = load(filename)
    # unpack arrays
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    return [X1, X2]
```
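The scaling maps pixel value 0 to -1 and 255 to 1, matching the [-1,1] range of the generator’s tanh output. A quick NumPy check of this mapping, and of the (x + 1) / 2 rescaling to [0,1] used later before plotting, might look like this:

```python
import numpy as np

pixels = np.array([0.0, 127.5, 255.0])

# forward scaling used in load_real_samples(): [0, 255] -> [-1, 1]
scaled = (pixels - 127.5) / 127.5

# rescaling used before plotting: [-1, 1] -> [0, 1]
for_plot = (scaled + 1) / 2.0

# recovering the original [0, 255] values
restored = (scaled + 1) * 127.5

print(scaled, for_plot)
print(np.allclose(restored, pixels))  # True
```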

Training the discriminator will require batches of real and fake images.

The generate_real_samples() function below will prepare a batch of random pairs of images from the training dataset, and the corresponding discriminator label of class=1 to indicate they are real.

```python
# select a batch of random samples, returns images and target
def generate_real_samples(dataset, n_samples, patch_shape):
    # unpack dataset
    trainA, trainB = dataset
    # choose random instances
    ix = randint(0, trainA.shape[0], n_samples)
    # retrieve selected images
    X1, X2 = trainA[ix], trainB[ix]
    # generate 'real' class labels (1)
    y = ones((n_samples, patch_shape, patch_shape, 1))
    return [X1, X2], y
```

The generate_fake_samples() function below uses the generator model and a batch of real source images to generate an equivalent batch of target images for the discriminator.

These are returned with the class label 0 to indicate to the discriminator that they are fake.

```python
# generate a batch of images, returns images and targets
def generate_fake_samples(g_model, samples, patch_shape):
    # generate fake instance
    X = g_model.predict(samples)
    # create 'fake' class labels (0)
    y = zeros((len(X), patch_shape, patch_shape, 1))
    return X, y
```
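To see what the discriminator is trained against, the NumPy-only sketch below mirrors generate_real_samples() and generate_fake_samples() on a tiny random dataset. The fake_generator() function is a hypothetical stand-in for g_model.predict() (it simply flips the sign of its input), the 32×32 images are illustrative, and the patch size of 16 is an assumed value; in train() it is read from d_model.output_shape[1].

```python
import numpy as np
from numpy.random import default_rng

rng = default_rng(3)
# tiny stand-in dataset: 10 source/target pairs of 32x32 RGB images in [-1, 1]
trainA = rng.uniform(-1, 1, size=(10, 32, 32, 3))
trainB = rng.uniform(-1, 1, size=(10, 32, 32, 3))

def fake_generator(images):
    # hypothetical stand-in for g_model.predict(): returns same-shaped images
    return -images

def real_batch(n_samples, patch_shape):
    ix = rng.integers(0, trainA.shape[0], n_samples)
    y = np.ones((n_samples, patch_shape, patch_shape, 1))   # every patch labelled real
    return [trainA[ix], trainB[ix]], y

def fake_batch(src, patch_shape):
    X = fake_generator(src)
    y = np.zeros((len(X), patch_shape, patch_shape, 1))     # every patch labelled fake
    return X, y

[X_realA, X_realB], y_real = real_batch(1, 16)
X_fakeB, y_fake = fake_batch(X_realA, 16)
print(X_realA.shape, y_real.shape, X_fakeB.shape, y_fake.shape)
```

The labels are full patch maps rather than single numbers, so every patch of a real pair is pushed toward 1 and every patch of a generated pair toward 0.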

Typically, GAN models do not converge; instead, an equilibrium is found between the generator and discriminator models. As such, we cannot easily judge when training should stop. Therefore, we can save the model and use it to generate sample image-to-image translations periodically during training, such as every 10 training epochs.

We can then review the generated images at the end of training and use the image quality to choose a final model.

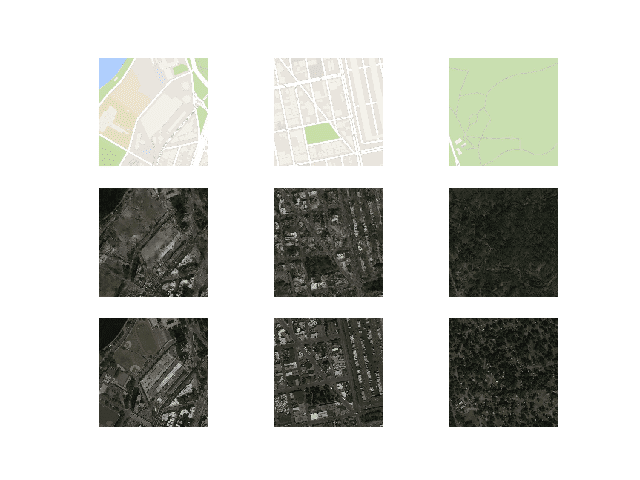

The summarize_performance() function implements this, taking the generator model at a point during training and using it to generate a number, in this case three, of translations of randomly selected images in the dataset. The source, generated image, and expected target are then plotted as three rows of images and the plot saved to file. Additionally, the model is saved to an H5 formatted file that makes it easier to load later.

Both the image and model filenames include the training iteration number, allowing us to easily tell them apart at the end of training.

```python
# generate samples and save as a plot and save the model
def summarize_performance(step, g_model, dataset, n_samples=3):
    # select a sample of input images
    [X_realA, X_realB], _ = generate_real_samples(dataset, n_samples, 1)
    # generate a batch of fake samples
    X_fakeB, _ = generate_fake_samples(g_model, X_realA, 1)
    # scale all pixels from [-1,1] to [0,1]
    X_realA = (X_realA + 1) / 2.0
    X_realB = (X_realB + 1) / 2.0
    X_fakeB = (X_fakeB + 1) / 2.0
    # plot real source images
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + i)
        pyplot.axis('off')
        pyplot.imshow(X_realA[i])
    # plot generated target image
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + n_samples + i)
        pyplot.axis('off')
        pyplot.imshow(X_fakeB[i])
    # plot real target image
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + n_samples*2 + i)
        pyplot.axis('off')
        pyplot.imshow(X_realB[i])
    # save plot to file
    filename1 = 'plot_%06d.png' % (step+1)
    pyplot.savefig(filename1)
    pyplot.close()
    # save the generator model
    filename2 = 'model_%06d.h5' % (step+1)
    g_model.save(filename2)
    print('>Saved: %s and %s' % (filename1, filename2))
```

Finally, we can train the generator and discriminator models.

The train() function below implements this, taking the defined generator, discriminator, composite model, and loaded dataset as input. The number of epochs is set at 100 to keep training times down, although 200 was used in the paper. A batch size of 1 is used as is recommended in the paper.

Training involves a fixed number of training iterations. There are 1,096 images in the training dataset. One epoch is one pass through these examples, which, with a batch size of one, means 1,096 training steps. The generator is saved and evaluated every 10 epochs, or every 10,960 training steps, and the model will run for 100 epochs, or a total of 109,600 training steps.
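The schedule arithmetic can be checked with a few lines of plain Python. This sketch assumes the 1,096 training pairs reported when the dataset was loaded and reuses the step counting from train() and the file naming from summarize_performance():

```python
n_images = 1096   # assumed: number of pairs reported when the dataset was loaded
n_batch = 1
n_epochs = 100

bat_per_epo = int(n_images / n_batch)
n_steps = bat_per_epo * n_epochs
print(bat_per_epo, n_steps)  # 1096 109600

# 0-based steps i at which summarize_performance() is called: every 10 epochs
checkpoints = [i for i in range(n_steps) if (i + 1) % (bat_per_epo * 10) == 0]
filenames = ['model_%06d.h5' % (i + 1) for i in checkpoints]
print(len(filenames), filenames[0], filenames[-1])  # 10 model_010960.h5 model_109600.h5
```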

Each training step involves first selecting a batch of real examples, then using the generator to generate a batch of matching fake samples using the real source images. The discriminator is then updated with the batch of real images and then fake images.

Next, the generator model is updated providing the real source images as input and providing class labels of 1 (real) and the real target images as the expected outputs of the model required for calculating loss. The generator has two loss scores as well as the weighted sum score returned from the call to train_on_batch(). We are only interested in the weighted sum score (the first value returned) as it is used to update the model weights.

Finally, the loss for each update is reported to the console each training iteration and model performance is evaluated every 10 training epochs.

```python
# train pix2pix model
def train(d_model, g_model, gan_model, dataset, n_epochs=100, n_batch=1):
    # determine the output square shape of the discriminator
    n_patch = d_model.output_shape[1]
    # unpack dataset
    trainA, trainB = dataset
    # calculate the number of batches per training epoch
    bat_per_epo = int(len(trainA) / n_batch)
    # calculate the number of training iterations
    n_steps = bat_per_epo * n_epochs
    # manually enumerate training steps (one batch per step)
    for i in range(n_steps):
        # select a batch of real samples
        [X_realA, X_realB], y_real = generate_real_samples(dataset, n_batch, n_patch)
        # generate a batch of fake samples
        X_fakeB, y_fake = generate_fake_samples(g_model, X_realA, n_patch)
        # update discriminator for real samples
        d_loss1 = d_model.train_on_batch([X_realA, X_realB], y_real)
        # update discriminator for generated samples
        d_loss2 = d_model.train_on_batch([X_realA, X_fakeB], y_fake)
        # update the generator
        g_loss, _, _ = gan_model.train_on_batch(X_realA, [y_real, X_realB])
        # summarize performance
        print('>%d, d1[%.3f] d2[%.3f] g[%.3f]' % (i+1, d_loss1, d_loss2, g_loss))
        # summarize model performance
        if (i+1) % (bat_per_epo * 10) == 0:
            summarize_performance(i, g_model, dataset)
```

Tying all of this together, the complete code example of training a Pix2Pix GAN to translate satellite photos to Google maps images is listed below.

```python
# example of pix2pix gan for satellite to map image-to-image translation
from numpy import load
from numpy import zeros
from numpy import ones
from numpy.random import randint
from keras.optimizers import Adam
from keras.initializers import RandomNormal
from keras.models import Model
from keras.layers import Input
from keras.layers import Conv2D
from keras.layers import Conv2DTranspose
from keras.layers import LeakyReLU
from keras.layers import Activation
from keras.layers import Concatenate
from keras.layers import Dropout
from keras.layers import BatchNormalization
from matplotlib import pyplot

# define the discriminator model
def define_discriminator(image_shape):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # source image input
    in_src_image = Input(shape=image_shape)
    # target image input
    in_target_image = Input(shape=image_shape)
    # concatenate images channel-wise
    merged = Concatenate()([in_src_image, in_target_image])
    # C64
    d = Conv2D(64, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(merged)
    d = LeakyReLU(alpha=0.2)(d)
    # C128
    d = Conv2D(128, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # C256
    d = Conv2D(256, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # C512
    d = Conv2D(512, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # second last output layer
    d = Conv2D(512, (4,4), padding='same', kernel_initializer=init)(d)
    d = BatchNormalization()(d)
    d = LeakyReLU(alpha=0.2)(d)
    # patch output
    d = Conv2D(1, (4,4), padding='same', kernel_initializer=init)(d)
    patch_out = Activation('sigmoid')(d)
    # define model
    model = Model([in_src_image, in_target_image], patch_out)
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss='binary_crossentropy', optimizer=opt, loss_weights=[0.5])
    return model

# define an encoder block
def define_encoder_block(layer_in, n_filters, batchnorm=True):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # add downsampling layer
    g = Conv2D(n_filters, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(layer_in)
    # conditionally add batch normalization
    if batchnorm:
        g = BatchNormalization()(g, training=True)
    # leaky relu activation
    g = LeakyReLU(alpha=0.2)(g)
    return g

# define a decoder block
def decoder_block(layer_in, skip_in, n_filters, dropout=True):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # add upsampling layer
    g = Conv2DTranspose(n_filters, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(layer_in)
    # add batch normalization
    g = BatchNormalization()(g, training=True)
    # conditionally add dropout
    if dropout:
        g = Dropout(0.5)(g, training=True)
    # merge with skip connection
    g = Concatenate()([g, skip_in])
    # relu activation
    g = Activation('relu')(g)
    return g

# define the standalone generator model
def define_generator(image_shape=(256,256,3)):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # image input
    in_image = Input(shape=image_shape)
    # encoder model
    e1 = define_encoder_block(in_image, 64, batchnorm=False)
    e2 = define_encoder_block(e1, 128)
    e3 = define_encoder_block(e2, 256)
    e4 = define_encoder_block(e3, 512)
    e5 = define_encoder_block(e4, 512)
    e6 = define_encoder_block(e5, 512)
    e7 = define_encoder_block(e6, 512)
    # bottleneck: no batch norm, relu activation
    b = Conv2D(512, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(e7)
    b = Activation('relu')(b)
    # decoder model
    d1 = decoder_block(b, e7, 512)
    d2 = decoder_block(d1, e6, 512)
    d3 = decoder_block(d2, e5, 512)
    d4 = decoder_block(d3, e4, 512, dropout=False)
    d5 = decoder_block(d4, e3, 256, dropout=False)
    d6 = decoder_block(d5, e2, 128, dropout=False)
    d7 = decoder_block(d6, e1, 64, dropout=False)
    # output
    g = Conv2DTranspose(3, (4,4), strides=(2,2), padding='same', kernel_initializer=init)(d7)
    out_image = Activation('tanh')(g)
    # define model
    model = Model(in_image, out_image)
    return model

# define the combined generator and discriminator model, for updating the generator
def define_gan(g_model, d_model, image_shape):
    # make weights in the discriminator not trainable (batch norm layers are left trainable)
    for layer in d_model.layers:
        if not isinstance(layer, BatchNormalization):
            layer.trainable = False
    # define the source image
    in_src = Input(shape=image_shape)
    # connect the source image to the generator input
    gen_out = g_model(in_src)
    # connect the source input and generator output to the discriminator input
    dis_out = d_model([in_src, gen_out])
    # src image as input, generated image and classification output
    model = Model(in_src, [dis_out, gen_out])
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'mae'], optimizer=opt, loss_weights=[1,100])
    return model

# load and prepare training images
def load_real_samples(filename):
    # load compressed arrays
    data = load(filename)
    # unpack arrays
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    return [X1, X2]

# select a batch of random samples, returns images and target
def generate_real_samples(dataset, n_samples, patch_shape):
    # unpack dataset
    trainA, trainB = dataset
    # choose random instances
    ix = randint(0, trainA.shape[0], n_samples)
    # retrieve selected images
    X1, X2 = trainA[ix], trainB[ix]
    # generate 'real' class labels (1)
    y = ones((n_samples, patch_shape, patch_shape, 1))
    return [X1, X2], y

# generate a batch of images, returns images and targets
def generate_fake_samples(g_model, samples, patch_shape):
    # generate fake instance
    X = g_model.predict(samples)
    # create 'fake' class labels (0)
    y = zeros((len(X), patch_shape, patch_shape, 1))
    return X, y

# generate samples and save as a plot and save the model
def summarize_performance(step, g_model, dataset, n_samples=3):
    # select a sample of input images
    [X_realA, X_realB], _ = generate_real_samples(dataset, n_samples, 1)
    # generate a batch of fake samples
    X_fakeB, _ = generate_fake_samples(g_model, X_realA, 1)
    # scale all pixels from [-1,1] to [0,1]
    X_realA = (X_realA + 1) / 2.0
    X_realB = (X_realB + 1) / 2.0
    X_fakeB = (X_fakeB + 1) / 2.0
    # plot real source images
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + i)
        pyplot.axis('off')
        pyplot.imshow(X_realA[i])
    # plot generated target image
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + n_samples + i)
        pyplot.axis('off')
        pyplot.imshow(X_fakeB[i])
    # plot real target image
    for i in range(n_samples):
        pyplot.subplot(3, n_samples, 1 + n_samples*2 + i)
        pyplot.axis('off')
        pyplot.imshow(X_realB[i])
    # save plot to file
    filename1 = 'plot_%06d.png' % (step+1)
    pyplot.savefig(filename1)
    pyplot.close()
    # save the generator model
    filename2 = 'model_%06d.h5' % (step+1)
    g_model.save(filename2)
    print('>Saved: %s and %s' % (filename1, filename2))

# train pix2pix models
def train(d_model, g_model, gan_model, dataset, n_epochs=100, n_batch=1):
    # determine the output square shape of the discriminator
    n_patch = d_model.output_shape[1]
    # unpack dataset
    trainA, trainB = dataset
    # calculate the number of batches per training epoch
    bat_per_epo = int(len(trainA) / n_batch)
    # calculate the number of training iterations
    n_steps = bat_per_epo * n_epochs
    # manually enumerate training steps (one batch per step)
    for i in range(n_steps):
        # select a batch of real samples
        [X_realA, X_realB], y_real = generate_real_samples(dataset, n_batch, n_patch)
        # generate a batch of fake samples
        X_fakeB, y_fake = generate_fake_samples(g_model, X_realA, n_patch)
        # update discriminator for real samples
        d_loss1 = d_model.train_on_batch([X_realA, X_realB], y_real)
        # update discriminator for generated samples
        d_loss2 = d_model.train_on_batch([X_realA, X_fakeB], y_fake)
        # update the generator
        g_loss, _, _ = gan_model.train_on_batch(X_realA, [y_real, X_realB])
        # summarize performance
        print('>%d, d1[%.3f] d2[%.3f] g[%.3f]' % (i+1, d_loss1, d_loss2, g_loss))
        # summarize model performance
        if (i+1) % (bat_per_epo * 10) == 0:
            summarize_performance(i, g_model, dataset)

# load image data
dataset = load_real_samples('maps_256.npz')
print('Loaded', dataset[0].shape, dataset[1].shape)
# define input shape based on the loaded dataset
image_shape = dataset[0].shape[1:]
# define the models
d_model = define_discriminator(image_shape)
g_model = define_generator(image_shape)
# define the composite model
gan_model = define_gan(g_model, d_model, image_shape)
# train model
train(d_model, g_model, gan_model, dataset)
```

The example can be run on CPU hardware, although GPU hardware is recommended.

The example might take about two hours to run on modern GPU hardware.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

The loss is reported each training iteration, including the discriminator loss on real examples (d1), the discriminator loss on generated or fake examples (d2), and the generator loss (g), which is a weighted sum of the adversarial and L1 losses.
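To make the g value concrete, the sketch below approximates how Keras combines the two outputs of the composite model compiled in define_gan() with loss_weights=[1,100]: a binary cross-entropy term on the discriminator's patch outputs (scored against "real" labels) plus 100 times the mean absolute error between the generated and expected images. It is a NumPy illustration under those assumptions, not Keras's internal code, and the function names are hypothetical.

```python
import numpy as np

def bce(y_true, y_pred, eps=1e-7):
    """Binary cross-entropy averaged over every patch prediction."""
    y_pred = np.clip(y_pred, eps, 1.0 - eps)
    return float(-np.mean(y_true * np.log(y_pred) + (1.0 - y_true) * np.log(1.0 - y_pred)))

def mae(y_true, y_pred):
    """Mean absolute error (the L1 loss) over all pixels."""
    return float(np.mean(np.abs(y_true - y_pred)))

def composite_generator_loss(d_patch_out, gen_img, target_img, weights=(1.0, 100.0)):
    """Approximate g: weighted sum of the adversarial and L1 terms."""
    # adversarial term: the generator wants every patch classified as real (1)
    adv = bce(np.ones_like(d_patch_out), d_patch_out)
    # L1 term: pixel distance between the generated and expected images
    l1 = mae(target_img, gen_img)
    total = weights[0] * adv + weights[1] * l1
    return total, adv, l1

# toy example: an undecided discriminator (0.5 everywhere) and a small pixel error
total, adv, l1 = composite_generator_loss(
    np.full((1, 16, 16, 1), 0.5), np.zeros((1, 4, 4, 3)), np.full((1, 4, 4, 3), 0.1))
print('g=%.3f adversarial=%.3f L1=%.3f' % (total, adv, l1))
```

Because the L1 term is multiplied by 100, it tends to dominate g, which is consistent with g being on a much larger scale than d1 and d2 in the sample output.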

If the loss for the discriminator goes to zero and stays there for a long time, consider restarting the training run, as this is a sign of training failure.
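Watching for this by eye over more than 100,000 iterations is tedious. The following is a minimal sketch of an automated check that could be called on a list of discriminator losses collected inside train(); the threshold and patience values are arbitrary assumptions, and discriminator_collapsed() is a hypothetical helper. Isolated near-zero values can occur and then recover (as in the sample output below), which is why the check requires the loss to stay low for many consecutive iterations.

```python
def discriminator_collapsed(d_losses, threshold=1e-3, patience=1000):
    """Return True if each of the last `patience` losses is below `threshold`.

    d_losses: sequence of per-iteration discriminator losses (e.g. d_loss1 + d_loss2).
    threshold and patience are illustrative defaults, not tuned values.
    """
    if len(d_losses) < patience:
        # not enough history yet to call it a collapse
        return False
    return all(loss < threshold for loss in d_losses[-patience:])

history = [0.5] * 10 + [0.0001] * 5
print(discriminator_collapsed(history, patience=5))
print(discriminator_collapsed(history, patience=6))
```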

```
>1, d1[0.566] d2[0.520] g[82.266]
>2, d1[0.469] d2[0.484] g[66.813]
>3, d1[0.428] d2[0.477] g[79.520]
>4, d1[0.362] d2[0.405] g[78.143]
>5, d1[0.416] d2[0.406] g[72.452]
...
>109596, d1[0.303] d2[0.006] g[5.792]
>109597, d1[0.001] d2[1.127] g[14.343]
>109598, d1[0.000] d2[0.381] g[11.851]
>109599, d1[1.289] d2[0.547] g[6.901]
>109600, d1[0.437] d2[0.005] g[10.460]
>Saved: plot_109600.png and model_109600.h5
```

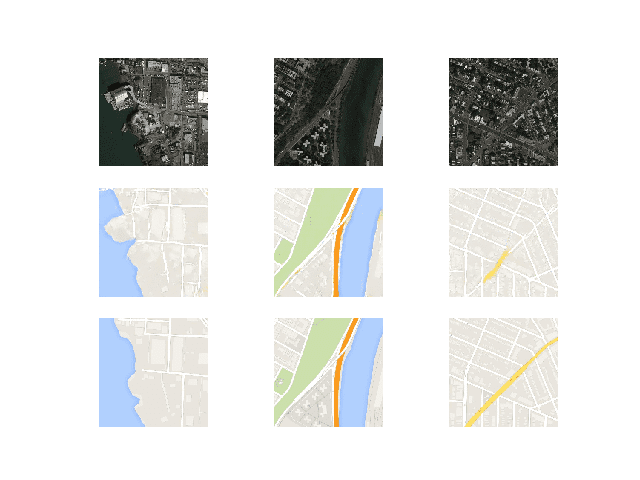

The generator model is saved every 10 epochs to a file named with the training iteration number. Additionally, images are generated every 10 epochs and compared to the expected target images. These plots can be assessed at the end of the run and used to select a final generator model based on generated image quality.

At the end of the run, you will have 10 saved model files and 10 plots of generated images.
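The number of files follows from the arithmetic in train(). The short sketch below reproduces that checkpoint schedule. The figure of 1,096 training images is inferred from the log above (109,600 iterations over 100 epochs at a batch size of 1), and checkpoint_steps() is a hypothetical helper.

```python
def checkpoint_steps(n_images, n_epochs=100, n_batch=1, every_epochs=10):
    """Return the 1-based training iterations at which train() saves a plot and model."""
    bat_per_epo = int(n_images / n_batch)
    n_steps = bat_per_epo * n_epochs
    interval = bat_per_epo * every_epochs
    # equivalent to the (i+1) % (bat_per_epo * 10) == 0 test inside train()
    return list(range(interval, n_steps + 1, interval))

# 1,096 images is inferred from 109,600 iterations / 100 epochs at batch size 1
steps = checkpoint_steps(1096)
# summarize_performance(i, ...) names files with step+1, i.e. the values in steps
filenames = ['model_%06d.h5' % step for step in steps]
print(len(filenames), filenames[0], filenames[-1])
```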

After the first 10 epochs, map images are generated that look plausible, although the lines for streets are not entirely straight and images contain some blurring. Nevertheless, large structures are in the right places with mostly the right colors.

Plot of Satellite to Google Map Translated Images Using Pix2Pix After 10 Training Epochs

Generated images after about 50 training epochs begin to look very realistic, at least to me, and quality appears to remain good for the remainder of the training process.

Note the first generated image example below (right column, middle row) that includes more useful detail than the real Google map image.

Plot of Satellite to Google Map Translated Images Using Pix2Pix After 100 Training Epochs

Now that we have developed and trained the Pix2Pix model, we can explore how it can be used in a standalone manner.

How to Translate Images With a Pix2Pix Model

Training the Pix2Pix model results in many saved models and samples of generated images for each.

More training epochs do not necessarily mean a better-quality model. Therefore, we can choose a model based on the quality of the generated images and use it to perform ad hoc image-to-image translation.

In this case, we will use the model saved at the end of the run, e.g. after 100 epochs or 109,600 training iterations.
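Picking the file saved at the end of the run can be automated. The following is a minimal sketch, assuming the model_%06d.h5 naming used by summarize_performance(); latest_checkpoint() is a hypothetical helper that takes a list of file names, for example from os.listdir('.'). Keep in mind that the latest file is not necessarily the best one, so the saved plots are still worth checking.

```python
import re

def latest_checkpoint(filenames, pattern=r'model_(\d{6})\.h5$'):
    """Return the saved generator file with the highest step number, or None."""
    best_step, best_name = -1, None
    for name in filenames:
        match = re.match(pattern, name)
        if match and int(match.group(1)) > best_step:
            best_step, best_name = int(match.group(1)), name
    return best_name

print(latest_checkpoint(['plot_109600.png', 'model_010960.h5', 'model_109600.h5']))
```

Usage would then be something like model = load_model(latest_checkpoint(os.listdir('.'))).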

A good starting point is to load the model and use it to make ad hoc translations of source images in the training dataset.

First, we can load the training dataset. We can use the same function named load_real_samples() for loading the dataset as was used when training the model.

```python
from numpy import load

# load and prepare training images
def load_real_samples(filename):
    # load compressed arrays
    data = load(filename)
    # unpack arrays
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    return [X1, X2]
```

This function can be called as follows:

```python
...
# load dataset
[X1, X2] = load_real_samples('maps_256.npz')
print('Loaded', X1.shape, X2.shape)
```
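load_real_samples() expects the .npz file to hold the satellite images under the key arr_0 and the map images under arr_1, which is the naming numpy.savez_compressed() uses when arrays are passed positionally. The sketch below builds a tiny in-memory stand-in file (toy data, not the real maps_256.npz) to show that layout and to check that the scaling lands in [-1,1].

```python
import io
import numpy as np

def load_real_samples(filename):
    # same logic as the tutorial; filename may also be a file-like object
    data = np.load(filename)
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    return [X1, X2]

# toy stand-in: 2 black "satellite" images and 2 white "map" images of 8x8 pixels
satellite = np.zeros((2, 8, 8, 3), dtype=np.uint8)
maps = np.full((2, 8, 8, 3), 255, dtype=np.uint8)
buffer = io.BytesIO()
np.savez_compressed(buffer, satellite, maps)  # stored as arr_0 and arr_1
buffer.seek(0)

X1, X2 = load_real_samples(buffer)
print('Loaded', X1.shape, X2.shape, X1.min(), X2.max())
```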

Next, we can load the saved Keras model.

```python
...
# load model
model = load_model('model_109600.h5')
```

Next, we can choose a random image pair from the training dataset to use as an example.

```python
...
# select random example
ix = randint(0, len(X1), 1)
src_image, tar_image = X1[ix], X2[ix]
```
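One detail worth noting: randint(0, len(X1), 1) returns an array holding a single index rather than a plain integer, so X1[ix] keeps a leading batch axis of size 1, which is the shape model.predict() expects. The small arrays below are stand-ins for the real 256×256 images.

```python
import numpy as np
from numpy.random import randint

# stand-in dataset: 5 tiny 4x4 RGB images
X1 = np.zeros((5, 4, 4, 3))

ix = randint(0, len(X1), 1)   # array containing one index, shape (1,)
batch = X1[ix]                # (1, 4, 4, 3): batch axis kept
single = X1[ix[0]]            # (4, 4, 3): indexing with a plain int drops it
restored = np.expand_dims(single, 0)  # add the batch axis back if needed
print(ix.shape, batch.shape, single.shape, restored.shape)
```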

We can provide the source satellite image as input to the model and use it to predict a Google map image.

```python
...
# generate image from source
gen_image = model.predict(src_image)
```

Finally, we can plot the source, generated image, and the expected target image.

The plot_images() function below implements this, providing a nice title above each image.

```python
from numpy import vstack
from matplotlib import pyplot

# plot source, generated and target images
def plot_images(src_img, gen_img, tar_img):
    images = vstack((src_img, gen_img, tar_img))
    # scale from [-1,1] to [0,1]
    images = (images + 1) / 2.0
    titles = ['Source', 'Generated', 'Expected']
    # plot images row by row
    for i in range(len(images)):
        # define subplot
        pyplot.subplot(1, 3, 1 + i)
        # turn off axis
        pyplot.axis('off')
        # plot raw pixel data
        pyplot.imshow(images[i])
        # show title
        pyplot.title(titles[i])
    pyplot.show()
```

This function can be called with each of our source, generated, and target images.

```python
...
# plot all three images
plot_images(src_image, gen_image, tar_image)
```
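Inside plot_images(), vstack() joins the three single-image batches along the first axis, giving one array with one entry per subplot. The rescale to [0,1] matters because imshow() clips floating-point RGB data outside that range. A toy illustration with 4×4 stand-in images:

```python
import numpy as np

src = np.full((1, 4, 4, 3), -1.0)  # darkest possible source
gen = np.zeros((1, 4, 4, 3))       # mid-grey generated image
tar = np.ones((1, 4, 4, 3))        # brightest possible target

images = np.vstack((src, gen, tar))  # (3, 4, 4, 3): one entry per subplot
images = (images + 1) / 2.0          # [-1,1] -> [0,1] for imshow()
print(images.shape, images[0].max(), images[1].min(), images[2].min())
```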

Tying all of this together, the complete example of performing an ad hoc image-to-image translation with an example from the training dataset is listed below.

```python
# example of loading a pix2pix model and using it for image to image translation
from keras.models import load_model
from numpy import load
from numpy import vstack
from matplotlib import pyplot
from numpy.random import randint

# load and prepare training images
def load_real_samples(filename):
    # load compressed arrays
    data = load(filename)
    # unpack arrays
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    return [X1, X2]

# plot source, generated and target images
def plot_images(src_img, gen_img, tar_img):
    images = vstack((src_img, gen_img, tar_img))
    # scale from [-1,1] to [0,1]
    images = (images + 1) / 2.0
    titles = ['Source', 'Generated', 'Expected']
    # plot images row by row
    for i in range(len(images)):
        # define subplot
        pyplot.subplot(1, 3, 1 + i)
        # turn off axis
        pyplot.axis('off')
        # plot raw pixel data
        pyplot.imshow(images[i])
        # show title
        pyplot.title(titles[i])
    pyplot.show()

# load dataset
[X1, X2] = load_real_samples('maps_256.npz')
print('Loaded', X1.shape, X2.shape)
# load model
model = load_model('model_109600.h5')
# select random example
ix = randint(0, len(X1), 1)
src_image, tar_image = X1[ix], X2[ix]
# generate image from source
gen_image = model.predict(src_image)
# plot all three images
plot_images(src_image, gen_image, tar_image)
```

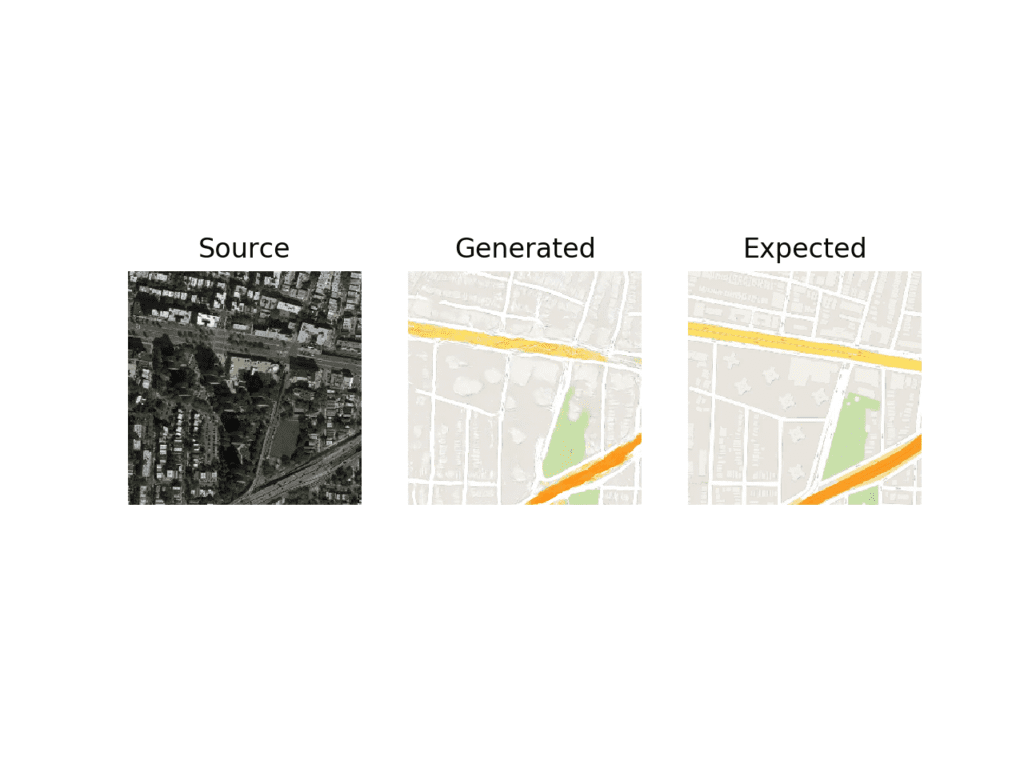

Running the example will select a random image from the training dataset, translate it to a Google map, and plot the result compared to the expected image.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

In this case, we can see that the generated image captures large roads with orange and yellow as well as green park areas. The generated image is not perfect but is very close to the expected image.

Plot of Satellite to Google Map Image Translation With Final Pix2Pix GAN Model

We may also want to use the model to translate a given standalone image.

We can select an image from the validation dataset under maps/val and crop the satellite element of the image. This can then be saved and used as input to the model.

In this case, we will use “maps/val/1.jpg”.

Example Image From the Validation Part of the Maps Dataset

We can use an image program to create a rough crop of the satellite element of this image to use as input and save the file as satellite.jpg in the current working directory.

Example of a Cropped Satellite Image to Use as Input to the Pix2Pix Model.
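As an alternative to cropping by hand, the satellite half can be cut out in code. Each file in the maps dataset stores the pair side by side, so splitting the pixel array down the middle separates the two images. This is a minimal sketch; it assumes the satellite photo is the left half and the map the right half, and split_pair() is a hypothetical helper.

```python
import numpy as np

def split_pair(pixels):
    """Split an H x 2W x C array into its left and right halves."""
    width = pixels.shape[1]
    if width % 2 != 0:
        raise ValueError('expected an even width, got %d' % width)
    half = width // 2
    # assumption: left half = satellite photo, right half = map
    return pixels[:, :half], pixels[:, half:]

# toy side-by-side pair: black left half, white right half
pair = np.zeros((4, 8, 3), dtype=np.uint8)
pair[:, 4:] = 255
sat, map_img = split_pair(pair)
print(sat.shape, map_img.shape)
```

With Keras, something like split_pair(img_to_array(load_img('maps/val/1.jpg'))) would give both halves; the satellite half would still need resizing to 256×256 and the same scaling and expand_dims() steps as load_image().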

We must load the image as a NumPy array of pixels with the size of 256×256, rescale the pixel values to the range [-1,1], and then add a leading batch dimension so that the single image is represented as one input sample.

The load_image() function below implements this, returning image pixels that can be provided directly to a loaded Pix2Pix model.

```python
from keras.preprocessing.image import img_to_array
from keras.preprocessing.image import load_img
from numpy import expand_dims

# load an image
def load_image(filename, size=(256,256)):
    # load image with the preferred size
    pixels = load_img(filename, target_size=size)
    # convert to numpy array
    pixels = img_to_array(pixels)
    # scale from [0,255] to [-1,1]
    pixels = (pixels - 127.5) / 127.5
    # reshape to 1 sample
    pixels = expand_dims(pixels, 0)
    return pixels
```

We can then load our cropped satellite image.

```python
...
# load source image
src_image = load_image('satellite.jpg')
print('Loaded', src_image.shape)
```

As before, we can load our saved Pix2Pix generator model and generate a translation of the loaded image.

```python
...
# load model
model = load_model('model_109600.h5')
# generate image from source
gen_image = model.predict(src_image)
```

Finally, we can scale the pixel values back to the range [0,1] and plot the result.

```python
...
# scale from [-1,1] to [0,1]
gen_image = (gen_image + 1) / 2.0
# plot the image
pyplot.imshow(gen_image[0])
pyplot.axis('off')
pyplot.show()
```
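If you want to keep the translated map rather than just display it, the raw generator output (values from the tanh layer, before the rescale above) can be converted to an 8-bit RGB array and written to disk. The following is a minimal sketch, assuming a (1, 256, 256, 3) output in [-1,1]; to_uint8() is a hypothetical helper name.

```python
import numpy as np

def to_uint8(gen_image):
    """Convert a (1, H, W, 3) generator output in [-1, 1] to an (H, W, 3) uint8 image."""
    img = (gen_image[0] + 1.0) / 2.0    # [-1,1] -> [0,1]
    img = np.clip(img, 0.0, 1.0)        # defensive: guard against any overshoot
    return np.round(img * 255.0).astype(np.uint8)

# toy output: one black pixel, one white pixel, the rest mid-grey
g = np.zeros((1, 2, 2, 3))
g[0, 0, 0, :] = -1.0
g[0, 1, 1, :] = 1.0
out = to_uint8(g)
print(out.dtype, out[0, 0], out[1, 1], out[0, 1])
```

For example, pyplot.imsave('generated_map.png', to_uint8(gen_image)) would save the result with Matplotlib (the filename is illustrative).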

Tying this all together, the complete example of performing an ad hoc image translation with a single image file is listed below.

```python
# example of loading a pix2pix model and using it for one-off image translation
from keras.models import load_model
from keras.preprocessing.image import img_to_array
from keras.preprocessing.image import load_img
from numpy import expand_dims
from matplotlib import pyplot

# load an image
def load_image(filename, size=(256,256)):
    # load image with the preferred size
    pixels = load_img(filename, target_size=size)
    # convert to numpy array
    pixels = img_to_array(pixels)
    # scale from [0,255] to [-1,1]
    pixels = (pixels - 127.5) / 127.5
    # reshape to 1 sample
    pixels = expand_dims(pixels, 0)
    return pixels

# load source image
src_image = load_image('satellite.jpg')
print('Loaded', src_image.shape)
# load model
model = load_model('model_109600.h5')
# generate image from source
gen_image = model.predict(src_image)
# scale from [-1,1] to [0,1]
gen_image = (gen_image + 1) / 2.0
# plot the image
pyplot.imshow(gen_image[0])
pyplot.axis('off')
pyplot.show()
```

Running the example loads the image from file, creates a translation of it, and plots the result.

The generated image appears to be a reasonable translation of the source image.

The streets do not appear to be straight lines and the detail of the buildings is a bit lacking. Perhaps with further training or choice of a different model, higher-quality images could be generated.

Plot of Satellite Image Translated to Google Maps With Final Pix2Pix GAN Model

How to Translate Google Maps to Satellite Images

Now that we are familiar with how to develop and use a Pix2Pix model for translating satellite images to Google maps, we can also explore the reverse.

That is, we can develop a Pix2Pix model to translate Google map images to plausible satellite images. This requires that the model invent or hallucinate plausible buildings, roads, parks, and more.

We can use the same code to train the model with one small difference. We can change the order of the datasets returned from the load_real_samples() function; for example:

```python
from numpy import load

# load and prepare training images
def load_real_samples(filename):
    # load compressed arrays
    data = load(filename)
    # unpack arrays
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    # return in reverse order
    return [X2, X1]
```

Note: the order of X1 and X2 is reversed.

This means that the model will take Google map images as input and learn to generate satellite images.
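Rather than editing load_real_samples() each time you switch direction, the swap could be controlled by a flag. This is a minimal sketch; the reverse parameter is a hypothetical addition, not part of the tutorial's code, and the demo uses a toy in-memory file in place of maps_256.npz.

```python
import io
import numpy as np

def load_real_samples(filename, reverse=False):
    data = np.load(filename)
    X1, X2 = data['arr_0'], data['arr_1']
    # scale from [0,255] to [-1,1]
    X1 = (X1 - 127.5) / 127.5
    X2 = (X2 - 127.5) / 127.5
    # reverse=True: maps (arr_1) become the source, satellite images (arr_0) the target
    return [X2, X1] if reverse else [X1, X2]

# toy file: arr_0 ("satellite") all 0, arr_1 ("map") all 255
buffer = io.BytesIO()
np.savez_compressed(buffer,
                    np.zeros((2, 4, 4, 3), dtype=np.uint8),
                    np.full((2, 4, 4, 3), 255, dtype=np.uint8))
buffer.seek(0)
src, tar = load_real_samples(buffer, reverse=True)
print(src.min(), tar.max())
```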

Run the example as before.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

As before, the loss of the model is reported each training iteration. If the loss for the discriminator goes to zero and stays there for a long time, consider restarting the training run, as this is a sign of training failure.

```
>1, d1[0.442] d2[0.650] g[49.790]
>2, d1[0.317] d2[0.478] g[56.476]
>3, d1[0.376] d2[0.450] g[48.114]
>4, d1[0.396] d2[0.406] g[62.903]
>5, d1[0.496] d2[0.460] g[40.650]
...
>109596, d1[0.311] d2[0.057] g[25.376]
>109597, d1[0.028] d2[0.070] g[16.618]
>109598, d1[0.007] d2[0.208] g[18.139]
>109599, d1[0.358] d2[0.076] g[22.494]
>109600, d1[0.279] d2[0.049] g[9.941]
>Saved: plot_109600.png and model_109600.h5
```

It is harder to judge the quality of generated satellite images; nevertheless, plausible images are generated after just 10 epochs.

Plot of Google Map to Satellite Translated Images Using Pix2Pix After 10 Training Epochs

As before, image quality will improve and will continue to vary over the training process. A final model can be chosen based on generated image quality, not total training epochs.

The model appears to have little difficulty in generating reasonable water, parks, roads, and more.

Plot of Google Map to Satellite Translated Images Using Pix2Pix After 90 Training Epochs

Extensions

This section lists some ideas for extending the tutorial that you may wish to explore.

- Standalone Satellite. Develop an example of translating standalone Google map images to satellite images, as we did for satellite to Google map images.

- New Image. Locate a satellite image for an entirely new location and translate it to a Google map and consider the result compared to the actual image in Google maps.

- More Training. Continue training the model for another 100 epochs and evaluate whether the additional training results in further improvements in image quality.

- Image Augmentation. Use some minor image augmentation during training as described in the Pix2Pix paper and evaluate whether it results in better quality generated images.
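For the image augmentation extension, the Pix2Pix paper describes "random jitter": resizing the 256×256 images to 286×286 and randomly cropping back to 256×256, along with random mirroring. Below is a NumPy-only sketch. Nearest-neighbour resizing is a simplification chosen to avoid extra dependencies (an assumption, not necessarily the paper's interpolation), and the helper names are hypothetical. The key design point is that the source and target receive exactly the same crop and flip, so the pair stays aligned.

```python
import numpy as np

def resize_nearest(img, size):
    """Nearest-neighbour resize of an H x W x C array to size x size."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    return img[rows][:, cols]

def random_jitter(src, tar, rng, load_size=286, crop_size=256):
    """Apply the same resize, random crop and horizontal flip to a source/target pair."""
    src = resize_nearest(src, load_size)
    tar = resize_nearest(tar, load_size)
    # one crop offset shared by both images
    top = rng.integers(0, load_size - crop_size + 1)
    left = rng.integers(0, load_size - crop_size + 1)
    src = src[top:top + crop_size, left:left + crop_size]
    tar = tar[top:top + crop_size, left:left + crop_size]
    # mirror both images together half of the time
    if rng.random() < 0.5:
        src, tar = src[:, ::-1], tar[:, ::-1]
    return src, tar

rng = np.random.default_rng(0)
image = np.arange(256 * 256 * 3, dtype=np.float64).reshape(256, 256, 3)
aug_src, aug_tar = random_jitter(image, image.copy(), rng)
print(aug_src.shape, np.array_equal(aug_src, aug_tar))
```

It could be applied to each pair inside generate_real_samples() before the batch is assembled; that integration is left as part of the extension.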

If you explore any of these extensions, I’d love to know.

Post your findings in the comments below.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Official

- Image-to-Image Translation with Conditional Adversarial Networks, 2016.

- Image-to-Image Translation with Conditional Adversarial Nets, Homepage.

- Image-to-image translation with conditional adversarial nets, GitHub.

- pytorch-CycleGAN-and-pix2pix, GitHub.

- Interactive Image-to-Image Demo, 2017.

- Pix2Pix Datasets

API

- Keras Datasets API.

- Keras Sequential Model API

- Keras Convolutional Layers API

- How can I “freeze” Keras layers?

Summary

In this tutorial, you discovered how to develop a Pix2Pix generative adversarial network for image-to-image translation.

Specifically, you learned:

- How to load and prepare the satellite image to Google maps image-to-image translation dataset.

- How to develop a Pix2Pix model for translating satellite photographs to Google map images.

- How to use the final Pix2Pix generator model to translate ad hoc satellite images.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Amazing tutorial. Detailed and clear explanation of concepts as well as the codes.

Thanks & Regards

Thanks!

From a digital signal processing viewpoint a weighted sum is an adjustable filter.

Each layer in a conventional artificial neural network has n of those filters, and the total compute is a brutal n squared fused multiply-accumulates.

A fast Fourier transform is a fixed (nonadjustable) bank of filters, where each filter picks out frequency/phase.

There are other transforms that act as filter banks too, such as the fast Walsh–Hadamard transform, and these often require far less compute (e.g., n log(n)) than a filter bank of weighted sums.

The question then is: why not use an efficient transform-based filter bank and adjust the nonlinear functions in a neural network by individually parameterizing them?

I.e., change what you adjust:

https://github.com/S6Regen/Fixed-Filter-Bank-Neural-Networks

https://discourse.numenta.org/t/fixed-filter-bank-neural-networks/6392

https://discourse.numenta.org/t/distributed-representations-1984-by-hinton/6378/10

Perhaps test your alternate approach and compare the results Sean?

It seems to me that the discriminator is not a 70×70 PatchGAN, since the 4th layer should not be there. With that layer, it seems like the discriminator is a 142×142 PatchGAN. Please correct me if I am mistaken.

I believe you are mistaken.

You can learn more about the 70×70 patch gan in greater detail in this post:

https://machinelearningmastery.com/how-to-implement-pix2pix-gan-models-from-scratch-with-keras/

That example has the same structure: six Conv2D layers (including the last one). But at the beginning of that post, where you calculate the receptive field, only five Conv layers are used, and the calculation states that only three of them have a stride of 2. I believe that the layer named C512 should be the second-to-last layer.

I believe the implementation matches the official implementation described here:

https://github.com/phillipi/pix2pix/blob/master/models.lua#L180

Hi Jason, thanks for the great tutorials. I agree with Villem that the current discriminator model is a 142×142 PatchGAN. For a 70×70 PatchGAN, I think there should be only three layers with a 4×4 kernel and 2×2 stride (remove the C512).

If anyone else has the same confusion as me, please let me know. Thanks :)
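The disagreement above comes down to a receptive-field calculation. The following is a minimal sketch of the standard recurrence: each layer grows the field by (kernel - 1) times the product of the strides before it. It can be used to check any layer stack. The two stacks below are the ones described in this thread; which one matches define_discriminator() should be checked against that function's actual layers.

```python
def receptive_field(layers):
    """Receptive field of a stack of conv layers given as (kernel_size, stride), input to output."""
    field, jump = 1, 1
    for kernel, stride in layers:
        field += (kernel - 1) * jump  # growth scaled by the accumulated stride
        jump *= stride
    return field

# three 4x4 stride-2 layers, then two 4x4 stride-1 layers
three_strided = [(4, 2)] * 3 + [(4, 1)] * 2
# four 4x4 stride-2 layers, then two 4x4 stride-1 layers
four_strided = [(4, 2)] * 4 + [(4, 1)] * 2
print(receptive_field(three_strided), receptive_field(four_strided))
```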

Thank you all for the feedback!

Sorry, what’s the link? This link is the same as the original one.

Thanks for the tutorial.

My question is: in the original paper, the direction is given as a configurable parameter.

But in your implementation I am unable to see that.

How can I do that for both directions?

Please explain.

I show how to translate images in both directions in the above tutorial.

Many thanks for this amazing tutorial!

PS “There are 1,097 images”… and then there are saves every 10970 steps, and 109700 steps overall

Thanks.

Fixed.

Thanks for an amazing tutorial

How can we use a GAN for motion transfer, or which type of GAN would be best for motion transfer?

I don’t know off hand sorry, perhaps try a search on scholar.google.com

Hello, thanks for the great article.

I have one question: why do you scale the images to [-1, 1] instead of [0, 1]?

Does this make the model behave differently?

Because the generator generates pixels in that range, and the discriminator must “see” all images pixels in the same range.

The choice of [-1,1] for pixels is a GAN hack:

https://machinelearningmastery.com/how-to-code-generative-adversarial-network-hacks/

Hi sir, is it possible to train this model with inputs and output of different sizes?

For example, I have three images a, b, c, each of size 50×50×3. I want the model to generate c from a and b. First I join a and b side by side to get d with size 50×100×3, then use d as the input and c as the output.

Yes, you can use different sized input and output, although the u-net will require modification.

Could you give me some more details about how I need to modify the U-Net in my case? I’m not very familiar with this texture.

Sorry, I don’t have the capacity to prepare custom code for you.

Perhaps experiment with adding/subtracting groups of layers to the decoder part of the model and see the effect on the image size?

I know you are very busy, so I didn’t ask for custom code; I just need something to start with. Thanks for the suggestion, sir!

Perhaps start with just the function that defines the model and try playing around with it.

Did you find a solution for this? I am struggling with the same issue. Thanks

Hi Jason,

First of all, thank you very much for posting this tutorial, I learned a lot from it.

I have a question.

Do you think that if I leave the picture resolution as it is, rather than compressing the images, the performance will be better? The differences between my pictures before and after translation are very minor.

Thank you!

David

Thanks, I’m happy that it helped.

Interesting idea. Do you mean working with TIFF images or other lossless formats?

Probably not, but perhaps test it to confirm.

Hello,

How can I increase the training speed? It uses only a very small portion of GPU memory.

Some ideas:

Use less data.

Use a smaller model.

Use a faster machine.

I am using a machine with 8 gpus (8 X p4000) 🙂

I mean, for example, when training with Darknet, changing the batch size directly affects GPU memory usage. But this code uses only 100 MB of each GPU, and the batch size doesn’t affect it. So I need a similar adjustment to the one in Darknet so that I can use the full capability of the GPUs.

Thanks

I see, I’m not sure I can help sorry.

Swapping out the training data for the SEN1-2 dataset had amazing results. I can now translate Sentinel 1 images to RGB Sentinel 2! Many thanks for such a thorough tutorial.

Well done!

I would love to see an example of a translated image.

Hi Jason,

Thank you so much for your great website, it is fantastic.

I was wondering what your opinion is about the future research direction for this area of research?

Thanks

Thanks.

Sorry, I don’t have thoughts on research directions – I try to stay focused on the industrial side these days.

Awesome tutorial on Pix2Pix. Your other GAN articles were great too and very helpful. After reading your tutorials, I was able to implement my own Pix2Pix project. All the code is on my GitHub: https://github.com/michaelnation26/pix2pix-edges-with-color

Thanks.

Well done, that is very impressive!

– python version: 3.6.7

– tensorflow-gpu version: 2.0.0

– keras version: 2.3.1

– cuDNN version:10.0

– CUDA version:10.0

mnist_mlp.py (https://github.com/keras-team/keras/blob/master/examples/mnist_mlp.py) works perfectly but code which is given below gives me this error:

tensorflow.python.framework.errors_impl.UnknownError: Failed to get convolution algorithm. This is probably because cuDNN failed to initialize, so try looking to see if a warning log message was printed above.

[[node conv2d_7/convolution (defined at C:\Users\ACSECKIN\Anaconda3\envs\tensorflow\lib\site-packages\tensorflow_core\python\framework\ops.py:1751) ]] [Op:__inference_keras_scratch_graph_4815]

Function call stack:

keras_scratch_graph

Perhaps try running on the CPU first to confirm your environment is working?

Code works with CPU. But I want to run in the GPU to complete in less time. CPU time is about 16 hours. I am open to any alternative to decrease the training time.

That is odd. I ran the examples on GPU without incident, specifically on EC2.

Perhaps try that?

Based on my experience, I receive this error message when my GPU does not have enough memory to handle the process. Maybe try reducing the computational workload by using a smaller image? If not, use CPU if you are fine with it.

Great suggestions!

Hi guys,

It takes 8 hours to train the model on GPU (Floydhub).

But several different models were saved in the process.

Can you explain why?

Perhaps try training on a large ec2 instance, it is much faster.

Models are saved periodically during training, we cannot know what a good model will be without using it to generate images.

Thanks for the tip!

I thought that more epochs would give better results, since GANs cannot converge… so in theory there is no overfitting. But I am new to the field, so I will look into it more 🙂

Not with GANs. Perhaps start here:

https://machinelearningmastery.com/start-here/#gans

Thank you for your awesome post, it is really detailed and helpful! I have a question about normalizing from [0, 255] to [-1, 1]. My images are single-channel, and the maximum and minimum pixel values vary for each image, from 0 to around 3-4 (depending on the image). How should I go about normalizing the images? Should I take the maximum of the whole batch of samples and normalize, or should I take the maximum of each sample and normalize each individually?

Also, when translating new images, what would be the values of image? Would it be between -1 to 1? If yes, how should I “denormalize” the values to the original? Thank you for your help!

Thanks.

I recommend selecting a min and max that you expect to see for all time and use that to scale the data. If not known or it cannot be known, use the limits of the domain (e.g. 0 and 255).

Thank you for your suggestions! How would you suggest I “de-normalize” the data during testing? Should I use the same range (I am taking the range from the training data) and reverse the process on the test data?

Yes.
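For data outside the [0,255] range, the same idea generalizes to any fixed bounds chosen up front and reused for both scaling and de-normalizing. Below is a minimal sketch; the bounds lo=0 and hi=4 in the example are assumptions based on the range described in the question, and scale()/unscale() are hypothetical helpers. With lo=0 and hi=255 it reduces to the (x - 127.5) / 127.5 scaling used in the tutorial.

```python
import numpy as np

def scale(x, lo, hi):
    """Map values from a fixed [lo, hi] range to [-1, 1]."""
    return 2.0 * (np.asarray(x, dtype=np.float64) - lo) / (hi - lo) - 1.0

def unscale(y, lo, hi):
    """Invert scale(): map [-1, 1] back to [lo, hi]."""
    return (np.asarray(y, dtype=np.float64) + 1.0) / 2.0 * (hi - lo) + lo

# assumed fixed bounds for single-channel data in roughly [0, 4]
values = np.array([0.0, 2.0, 4.0])
scaled = scale(values, 0.0, 4.0)
restored = unscale(scaled, 0.0, 4.0)
print(scaled, restored)
```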

Hi Jason,

Thank you very much for sharing such an in-depth analysis of Pix2Pix GAN. It is really helpful for early career researchers like me who don’t have a CS background. I thought of applying this to solving inverse problems in Digital Holographic Microscopy, and I am now intrigued by the preliminary results I have got. As you know, the output of the model is a translated image, hence it is not possible to calculate the model accuracy. I am looking for an image quality metric such as SSIM. Do you have any suggestions?

Thank You,

PS: As this post helped me enormously, I would like to cite your works on GANs in the future.

You’re welcome.

That sounds very cool! Perhaps one of the metrics here would be helpful:

https://machinelearningmastery.com/how-to-evaluate-generative-adversarial-networks/
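For SSIM specifically, scikit-image provides skimage.metrics.structural_similarity. As a dependency-free starting point, here is a sketch of peak signal-to-noise ratio (PSNR), another common full-reference metric that compares a generated image against its expected image. It assumes both are NumPy arrays in the same [-1,1] range, hence data_range=2.0; psnr() is a hypothetical helper.

```python
import numpy as np

def psnr(expected, generated, data_range=2.0):
    """Peak signal-to-noise ratio in dB; data_range=2.0 assumes pixels in [-1, 1]."""
    diff = np.asarray(expected, dtype=np.float64) - np.asarray(generated, dtype=np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float('inf')  # identical images
    return float(10.0 * np.log10(data_range ** 2 / mse))

expected = np.zeros((4, 4, 3))
generated = np.full((4, 4, 3), 0.2)  # uniform error of 0.2 on every pixel
print(psnr(expected, generated))
```

Higher values mean the generated image is closer to the expected one.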

Hi! Currently implementing this with images with shape (256, 512, 3) and keep running to an error as follows:

“ValueError: A target array with shape (1, 16, 16, 1) was passed for an output of shape (None, 16, 32, 1) while using as loss binary_crossentropy. This loss expects targets to have the same shape as the output.” I assume that this is due to the downsampling? Any help would be appreciated.

Perhaps start with square images, get it working, then try changing to rectangular images?

Hmm, alright! Could you explain why you use target_size=(256, 512) instead of (256, 256)?

The images are 256×512 – as they contain the input and output images together.

We load them and split them into two separate square images 256×256 when working with the GAN.
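The error above comes from the labels rather than the images: train() keeps only n_patch = d_model.output_shape[1], so generate_real_samples() and generate_fake_samples() build square label arrays. For non-square inputs, one option is to build labels from both spatial dimensions of the discriminator output, e.g. d_model.output_shape[1:3]. This is a minimal sketch; patch_labels() is a hypothetical helper, it has not been tested against the full model, and other parts of the code may also need changes.

```python
import numpy as np

def patch_labels(n_samples, patch_shape, value):
    """Labels shaped like the discriminator output; patch_shape is (rows, cols)."""
    rows, cols = patch_shape
    return np.full((n_samples, rows, cols, 1), value, dtype=np.float32)

# for a (None, 16, 32, 1) output, d_model.output_shape[1:3] would be (16, 32)
y_real = patch_labels(1, (16, 32), 1.0)
y_fake = patch_labels(1, (16, 32), 0.0)
print(y_real.shape, y_fake.shape)
```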

The discriminator error seems to be going to zero pretty quick, any tips to avoid this?

Perhaps try running the example a few times and continue running – it may recover.

Thank you for this tutorial and simple code. I used it to perform image-to-image translation from Köppen–Geiger climate classification map ( https://en.wikipedia.org/wiki/K%C3%B6ppen_climate_classification ) to real satellite data, with truly amazing results, but I have a question.

In my strategy I create nearly one thousand pairs of 256×256 tiles from the Köppen–Geiger map (present in the Wikipedia article above) and a high-resolution satellite map of the Earth. In order to minimize deformation on tile pairs near the poles, I use an orthographic projection. This gives me nice image pairs for GAN training (see https://photos.app.goo.gl/eGvpXghUtCB9kqkX6 ).

I trained the GAN until the end (n_epochs=100) with amazing results. Using training data gives truly convincing satellite map validation (https://photos.app.goo.gl/a4EV6Gh15hAnYokm7). Even with hand-painted source images, or with source images converted from a random image into the Köppen–Geiger colormap, results are very nice (https://photos.app.goo.gl/eGbFmTH7YqYi4xfu5).

However, I noticed that the results lacked a “relief” effect. Moreover, on large landmasses where the climate does not change but the topography noticeably affects the satellite view (e.g. the Tibetan Plateau or the Grand Canyon), the model produces “flat” satellite views.

As the climate map is composed of only 29 different indexed colors (plus the one I added for oceans), a simple label-to-image translation could be used, instead of using a full RGB climate image as input.

So my idea was to store a heightmap of the earth on the first channel of the input image, and the normalized indexed climate color on the second channel. The third channel is kept unused. It results in a Red-Green image where the Red channel is the heightmap and the Green channel is normalized climate index (see https://photos.app.goo.gl/cN1cmCNLSXwwqzNB9).

The problem is that training with these input images gives bad results compared to my first try (climate data only). Results were already convincing after 30 epochs in my first try, with smooth transitions between climates, whereas here the boundaries are clearly visible in the generated images (see https://photos.app.goo.gl/Q1vjjeY8ewWrCZYv5 ).

I tried to run the training several times to ensure that it was not purely bad luck, with the same result.

I don’t understand, because the climate index can clearly be stored in one channel without information loss, and the heightmap provides additional data, so it should improve the results. Is it simply because it needs more epochs?

Thank you in advance and sorry for the long post and for my english, it is not my native language.

Well done!

Very cool application.

Two thoughts off the cuff. One would be to make image synthesis conditional on two input images (source and the height map). A second would be to have 2 steps – one step to generate the initial translation and a second to improve the generated image with a height map.

I’m eager to hear how you go!

Thank you very much! The aim is to develop a worldbuilding tool and create realistic maps of imaginary planets (following Artifexian’s tutorials: https://www.youtube.com/watch?v=5lCbxMZJ4zA&t=1s).

I got the idea of using the R and G channels for the heightmap and climate from this thread about the PyTorch implementation: https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/498. They recommend concatenating the input images, but your code seems to be limited to 3 channels, and as a complete beginner I still don’t know how to use more than one image as input.
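
Concatenating inputs, as suggested in that thread, can be done on the NumPy arrays before they reach the model. A minimal sketch, assuming the inputs are aligned batches of shape (n, 256, 256, c) scaled the same way; `stack_inputs` is a hypothetical name. The result has the sum of the channel counts, so the generator's input would need to be declared with that many channels (e.g. `image_shape=(256, 256, 4)` for an RGB climate image plus a one-band heightmap), while its output should stay at 3 channels to match the satellite target. In the tutorial, define_discriminator() uses a single image_shape for both its source and target inputs, so it would need separate shapes for the two; treat these model changes as assumptions to check against your copy of the code.

```python
import numpy as np

def stack_inputs(*batches):
    """Concatenate aligned image batches along the channel (last) axis.

    Hypothetical helper: every batch must share (n, height, width); only the
    channel counts may differ, e.g. (n, 256, 256, 3) + (n, 256, 256, 1).
    """
    spatial = {b.shape[:-1] for b in batches}
    if len(spatial) != 1:
        raise ValueError('batches differ in (n, height, width): %s' % sorted(spatial))
    return np.concatenate(batches, axis=-1)
```

For example, `X1 = stack_inputs(climate_images, height_images)` would produce the 4-channel source array, with the satellite images kept as the unchanged 3-channel target.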

However, it does seem that training for more epochs gives good results with my method. Maybe 100 is not enough, so I restarted it with a limit of 1000 epochs. However, I have to redo the first 100, and I run the code on Google Colab, which seems to be very unstable (I have only managed to reach 100 epochs twice).

Do you have a tutorial on how to make complete checkpoints so that training can continue after a crash? If I understand correctly, your summarize_performance() function only saves the generator model, so we would have to save the whole gan_model and reload it for later training. Do you have documentation or examples on this?

Thank you so much for your tutorial. I’ll keep you informed of later developments!

Yes, the above code already saves the model every n steps.

See the summarize_performance() function.
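
The tutorial's summarize_performance() saves the generator with g_model.save(), which is enough for translating images later, but resuming training also needs the discriminator. One option, sketched here under assumptions, is to save the weights of both models every few steps, then rebuild all three models with the tutorial's define_discriminator(), define_generator() and define_gan() and load the weights back. The composite gan_model does not need saving separately because it shares its layers with g_model and d_model. The helper names and file layout are hypothetical, and rebuilding the models this way restarts the Adam optimizer state, so the first updates after resuming are not an exact continuation.

```python
import json
import os

def save_checkpoint(g_model, d_model, step, directory='checkpoints'):
    """Save generator and discriminator weights plus the step counter.

    Hypothetical helper: g_model and d_model are the tutorial's Keras models;
    only .save_weights() is called on them.
    """
    os.makedirs(directory, exist_ok=True)
    g_path = os.path.join(directory, 'g_model_%06d.weights.h5' % step)
    d_path = os.path.join(directory, 'd_model_%06d.weights.h5' % step)
    g_model.save_weights(g_path)
    d_model.save_weights(d_path)
    # record the newest checkpoint so a crashed run knows where to resume
    with open(os.path.join(directory, 'latest.json'), 'w') as f:
        json.dump({'step': step, 'g_path': g_path, 'd_path': d_path}, f)


def load_latest_checkpoint(directory='checkpoints'):
    """Return {'step', 'g_path', 'd_path'} for the newest checkpoint, or None."""
    meta_path = os.path.join(directory, 'latest.json')
    if not os.path.exists(meta_path):
        return None
    with open(meta_path) as f:
        return json.load(f)


# Resuming (sketch, assuming the tutorial's define_* functions and image_shape):
# info = load_latest_checkpoint()
# d_model = define_discriminator(image_shape)
# g_model = define_generator(image_shape)
# if info is not None:
#     d_model.load_weights(info['d_path'])
#     g_model.load_weights(info['g_path'])
# gan_model = define_gan(g_model, d_model, image_shape)
# ...then continue the training loop from info['step'] + 1
```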

Should I approach this the same way if my images contain white backgrounds, similar to the edges2shoes dataset?

Perhaps test it with a prototype?

For some reason I end up with only blank white images…

Hello,

Thanks for the wonderful tutorial. How can I adapt the generator and the discriminator to translate a (2, 64) matrix into another (2, 64) matrix?

Sorry, I don’t follow.

Thanks, Jason, for the great post!

I have tried this code, but the images do not look good enough, and the discriminator loss drops to 0 after 10-15 epochs.

Perhaps try using a different final model or re-fit the model?

I want to continue training from the last stored checkpoint. Can you help me resume training a model from the last checkpoint?

Yes, load the model as per normal and call the train() function.

I have a dataset of 216 images. I trained for 100 epochs, but unfortunately the results are not good. Can you help me improve them?

Yes, try some of these suggestions:

https://machinelearningmastery.com/how-to-code-generative-adversarial-network-hacks/

Thank you for this wonderful tutorial! It has been extremely helpful. I was wondering if you had considered data augmentation?

For GANs, not really; in general, yes:

https://machinelearningmastery.com/how-to-configure-image-data-augmentation-when-training-deep-learning-neural-networks/
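
If you do experiment with augmentation for Pix2Pix, the key constraint is that each source image and its target must receive the identical transform; otherwise the pairs stop lining up. The original Pix2Pix paper used random jitter and mirroring. The sketch below shows only the mirroring part, as random horizontal flips applied to whole pairs of NumPy batches shaped (n, height, width, channels); the function name is hypothetical.

```python
import numpy as np

def flip_pairs(src, tar, rng=None, p=0.5):
    """Horizontally flip each (source, target) pair together with probability p."""
    rng = np.random.default_rng() if rng is None else rng
    src, tar = src.copy(), tar.copy()
    flip = rng.random(len(src)) < p  # one decision per pair, shared by src and tar
    src[flip] = src[flip][:, :, ::-1]  # axis 2 is the width axis
    tar[flip] = tar[flip][:, :, ::-1]
    return src, tar
```

For example, inside the training loop, `X_realA, X_realB = flip_pairs(X_realA, X_realB)` could be applied to each sampled batch before the discriminator and generator updates.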

Thanks!

This is probably a newbie question, but I am new to GANs. In my limited experience with deep CNNs, I used validation data during training to evaluate how well the model was “learning”. I then had another dataset, which I called the “test” dataset, that I used after training was complete. Here it seems you don’t use any validation during training, and what you call validation is what I call the test dataset. Is that unique to GANs, or can validation be included in the training process?

No, I often use test sets for validation to make tutorials simpler:

https://machinelearningmastery.com/faq/single-faq/why-do-you-use-the-test-dataset-as-the-validation-dataset

Hi! I’m running this on a Titan V and it seems to run extremely slowly. Any ideas why?

Training GANs is slow…

Perhaps check you have enough RAM and GPU RAM?

Perhaps compare results on an EC2 instance with a lot of RAM?

Perhaps adjust model config to use less RAM?

I suggest using google colab. You can use their GPUs for training. It’s much faster!

GPUs are practically a requirement when working with GANs.

Sir, could you tell me how you downloaded the satellite and map images? Can you please help me download my own dataset for this project?

See the section of the above tutorial “Satellite to Map Image Translation Dataset” on how to download the dataset.

Hi Jason,

From the theory, we understand that the discriminator learns the objective loss function. However, looking at define_gan(), line 56 in the code, I cannot see the objective loss learned by the discriminator being passed to the GAN model. I also see that the model does not converge as expected.

Thanks and Regards,

Vaibhav Kotwal

We create a composite model that combines G and D so that G is trained via inverse corrections to D.

Perhaps see this:

https://machinelearningmastery.com/how-to-code-the-generative-adversarial-network-training-algorithm-and-loss-functions/
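
To make the connection concrete: in the tutorial's define_gan(), the composite model is compiled with loss=['binary_crossentropy', 'mae'] and loss_weights=[1, 100], and the discriminator is set not to be trained through it. What the discriminator has learned therefore reaches the generator through the gradient of the first term, which scores D's patch output for the generated image against "real" labels of 1. The NumPy sketch below computes that weighted objective for given arrays; it mirrors the compiled losses rather than calling Keras, and the function name and epsilon clipping are assumptions.

```python
import numpy as np

def composite_generator_loss(d_patch_out, target, generated, lam=100.0, eps=1e-7):
    """Weighted Pix2Pix generator objective: BCE vs. 'real' labels + lam * L1.

    d_patch_out: discriminator patch output for (source, generated), in (0, 1).
    target, generated: real and generated target images on the same scale.
    """
    p = np.clip(d_patch_out, eps, 1.0 - eps)   # keep log() finite
    adversarial = np.mean(-np.log(p))          # binary cross-entropy with labels = 1
    l1 = np.mean(np.abs(target - generated))   # mean absolute error ('mae')
    return adversarial + lam * l1
```

The Pix2Pix paper motivates this split as the L1 term capturing low-frequency structure while the adversarial term encourages sharper, realistic detail; with a weight of 100, the L1 term dominates until the generated image is close to the target.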

Thanks for the great tutorial. I need help with something. I want to see accuracy metrics for both the train and test datasets throughout training, for each epoch rather than for each step, like a standard CNN training procedure. How can I add this to the code? I couldn’t work it out because it is different from standard CNN code. I would really appreciate an answer. Thank you.

Accuracy is a bad metric for GANs.

See this:

https://machinelearningmastery.com/how-to-evaluate-generative-adversarial-networks/

Can a Pix2Pix GAN be saved and loaded again without retraining?

Yes, see this tutorial for an example:

https://machinelearningmastery.com/how-to-develop-a-pix2pix-gan-for-image-to-image-translation/

Yes, thanks for your reply! I want to ask another question: can the other kinds of GANs be saved and loaded too?

Yes, they are all Keras models that can be saved and loaded.

Hi Jason,

Thanks for the great tutorial. I have a problem with image sizes. In the first step, after splitting the input images, I check the image size: instead of 256×256 pixels, they are 134×139 with a background. Also, in the step that translates a standalone image with the model, the output should be 256×256 like the input, but I again get a 252×262 output with a background.

Would you mind letting me know where the problem might be?

Thanks in advance

Ehsan

I don’t know the cause of your fault. Sorry.

Great work, Jason. Just one question: do you think this approach could work with an RGB satellite image paired with its mask image, to perform a kind of image segmentation?

Thanks in advance

Perhaps try it. Prototypes are a fast way to get answers.

I mean, the mask image would have just 2 colors (yes/no)… that was my concern. Thanks

You can add two ‘dummy’ bands to the mask image so that it is compatible as a target for the RGB source image. Your RGB images as a NumPy array will have the shape (nr images, height, width, nr bands), where nr bands is three. Your mask images will have the shape (nr images, height, width, nr bands), where nr bands is one. So if you add two bands to the mask image, e.g. filled with -1 values, the two become compatible.
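
The dummy-band approach described above can be sketched in NumPy. This assumes the masks are stored as an array of shape (n, height, width) or (n, height, width, 1); the helper name is hypothetical.

```python
import numpy as np

def mask_to_three_bands(mask, fill=-1.0):
    """Turn a 1-band mask batch into 3 bands: the mask plus two constant bands.

    Hypothetical helper: mask has shape (n, height, width) or
    (n, height, width, 1); the output has shape (n, height, width, 3).
    """
    mask = np.asarray(mask, dtype=np.float32)
    if mask.ndim == 3:
        mask = mask[..., np.newaxis]  # add the missing band axis
    dummy = np.full(mask.shape[:-1] + (2,), fill, dtype=mask.dtype)
    return np.concatenate([mask, dummy], axis=-1)
```

For a binary 0/1 mask, scaling it first with `mask * 2 - 1` puts it on the same [-1, 1] range the tutorial uses for images. When reading predictions back, only the first band carries the mask.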

This is an interesting article. I am new to this, and I have some questions about the first script:

1. I saw that the loaded data is the maps in the train and test folders. What does the 3 mean for the images loaded from the train folder? The result was: (1096, 256, 256, 3) (1096, 256, 256, 3). I understand that 1096 is the number of images in that folder, but I still don’t understand the 256, because when I open a picture, its size is not the same as what was loaded.

2. I saw that the train and test folders contain images. In train, for example, 1.jpg contains two images side by side. How were the left and right pictures created, or were they generated automatically? And in test, are they generated automatically, or do they have to be saved first?

I need some explanation to prepare for using it in the future. Thank you

The images are 256×256 squares with 3 color channels. You can learn more about loading images here:

https://machinelearningmastery.com/how-to-load-and-manipulate-images-for-deep-learning-in-python-with-pil-pillow/

Sorry, I don’t follow your second question, perhaps you can elaborate?

Thank you for replying. Regarding my second question: I saw that the train and test folders contain images, so my questions are:

1. Does each combined image get built automatically?

2. If not, how do you put the images side by side so that one image contains two images?

Thank you very much.

The tutorial shows how to load the images and prepare them for modeling.
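
The images in the maps dataset are distributed with each satellite photo and its map already joined side by side, so you do not need to build them; the tutorial's loading code loads each file at 256×512 and slices it into two 256×256 halves. If you want to prepare your own paired data in the same layout, a minimal NumPy sketch of both directions follows; the function names are hypothetical.

```python
import numpy as np

def split_pair(combined):
    """Split a (height, 2*width, channels) image into left and right halves."""
    width = combined.shape[1] // 2
    return combined[:, :width], combined[:, width:]


def join_pair(left, right):
    """Place two equally sized (height, width, channels) images side by side."""
    if left.shape != right.shape:
        raise ValueError('images must have the same shape, got %s and %s'
                         % (left.shape, right.shape))
    return np.concatenate([left, right], axis=1)  # axis 1 is the width axis
```

A joined array can then be written to disk with Pillow, for example `Image.fromarray(join_pair(sat, map_img).astype('uint8')).save('pair.jpg')`.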

Hi, thank you for your work!

I need your help: I need the same model, but with a one-channel input to the generator instead of three.

I have tried to change it, but it doesn’t work. Thank you

Sorry to hear that.

Perhaps confirm that your images are grayscale (1 channel), then change the model to expect 1 channel via the input shape.
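
Following that suggestion, a grayscale batch usually needs an explicit channel axis before Keras will accept it with an input shape of (256, 256, 1). A minimal sketch, assuming the images are already a NumPy array of shape (n, 256, 256) or (n, 256, 256, 1); the helper name is hypothetical. If the target images are also single-channel, the generator's final Conv2DTranspose layer, which appears to be hard-coded to 3 filters in the tutorial's code, would need 1 filter so the output matches; check this against your copy.

```python
import numpy as np

def as_single_channel(images):
    """Return a grayscale batch shaped (n, height, width, 1) for a 1-channel model."""
    images = np.asarray(images)
    if images.ndim == 3:
        images = images[..., np.newaxis]  # (n, h, w) -> (n, h, w, 1)
    if images.ndim != 4 or images.shape[-1] != 1:
        raise ValueError('expected (n, h, w) or (n, h, w, 1), got %s' % (images.shape,))
    return images
```

When loading files with Keras, `load_img(path, color_mode='grayscale', target_size=...)` reads them as single-channel images in the first place.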

Hi! I wanted to train the model on my own images. I prepared them just like in this tutorial, and X1 and X2 have the same size. Displaying the data works, but I get this error:

‘Got inputs shapes: %s’ % (input_shape))
ValueError: A Concatenate layer requires inputs with matching shapes except for the concat axis. Got inputs shapes: [(None, 2, 2, 512), (None, 1, 1, 512)]

What have I done wrong?

I don’t know sorry.

Have you been able to solve this problem? I get the same issue.

This is probably because your input size is not divisible by 256.
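
The reason is arithmetic in the U-Net generator: it halves the image size eight times with stride-2 convolutions (seven encoder blocks plus the bottleneck), then doubles it back with stride-2 transposed convolutions, concatenating each decoder output with the matching encoder output. With padding='same', a stride-2 convolution produces ceil(size / 2), so unless the size is a multiple of 256, either the two tensors at some skip connection disagree or the output comes back a different size from the input. The sketch below, a hypothetical helper not taken from the tutorial, traces that arithmetic for one image dimension; for a 128-pixel input it reports exactly the 2 vs 1 mismatch in the error above.

```python
import math

def unet_size_check(size, n_down=8):
    """Trace one spatial dimension through a tutorial-style U-Net generator.

    Returns None if every skip connection matches and the output size equals
    the input size; otherwise returns a short description of the first problem.
    """
    encoder = []
    s = size
    for _ in range(n_down):
        s = math.ceil(s / 2)      # Conv2D(strides=2, padding='same')
        encoder.append(s)
    d = encoder[-1]               # bottleneck
    for e in reversed(encoder[:-1]):
        d *= 2                    # Conv2DTranspose(strides=2, padding='same')
        if d != e:
            return 'skip mismatch: decoder %d vs encoder %d' % (d, e)
    out = d * 2                   # final Conv2DTranspose back to image size
    if out != size:
        return 'output size %d differs from input size %d' % (out, size)
    return None
```

Run it on both the height and the width of your images; resizing or cropping them to a multiple of 256, as the tutorial does with target_size, avoids the problem.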

Hello, I have this problem and I don’t know why:

ValueError: Graph disconnected: cannot obtain value for tensor Tensor(“input_14:0”, shape=(?, 256, 256, 3), dtype=float32) at layer “input_14”. The following previous layers were accessed without issue: [‘input_15’]

Perhaps confirm that your keras and tensorflow versions are updated?

Hi. I tried to use a different dataset with this code, specifically the edges2shoes dataset, but I was not able to convert it into an npz file. Every time, I ran into a memory error; my 16 GB of RAM was still not enough. I did manage to create multiple npz files, though. How should I proceed?

Also, could you be kind enough to make a TensorFlow/Keras tutorial on pix2pixHD, since it is more accurate and performs better in side-by-side comparisons than standard pix2pix?

Perhaps use a sample of the data?

Perhaps use progressive loading?

Perhaps use an EC2 instance with more RAM?
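
With several .npz files already created, one way to apply the progressive-loading idea is to keep only one shard in memory at a time and draw batches from it. A minimal sketch, assuming each shard was written like the tutorial's dataset with savez_compressed(filename, src_images, tar_images), so the arrays sit under the default keys 'arr_0' and 'arr_1'; the glob pattern and function name are hypothetical. Because batches come from one shard at a time, it helps if each shard already mixes images from across the whole dataset.

```python
import glob
import numpy as np

def iterate_shards(pattern, n_batch, rng=None):
    """Yield (src, tar) batches, loading one .npz shard into memory at a time."""
    rng = np.random.default_rng() if rng is None else rng
    paths = sorted(glob.glob(pattern))
    paths = [paths[i] for i in rng.permutation(len(paths))]  # shuffle shard order
    for path in paths:
        with np.load(path) as data:
            src, tar = data['arr_0'], data['arr_1']  # read into memory, then close
        order = rng.permutation(len(src))  # shuffle samples within the shard
        for start in range(0, len(src), n_batch):
            idx = order[start:start + n_batch]
            yield src[idx], tar[idx]
```

For example, `for X_realA, X_realB in iterate_shards('edges2shoes_*.npz', n_batch=1):` would make one pass over all shards, and could stand in for the random sampling in the tutorial's generate_real_samples().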

Thanks for the suggestion.

Thanks, I think your tutorial here https://machinelearningmastery.com/how-to-load-large-datasets-from-directories-for-deep-learning-with-keras/ will be helpful in my case.

I am using my own machine not AWS instance.

Great!

Hi Jason. Great Article. Good explanation. Your articles gave me a good overview and starting point when I started developing my own networks. But I have two questions:

1.) From what I can see, the original code on GitHub seems to be slightly different from your code in this article in how you connect an encoder and a decoder layer. On GitHub, the data passed from an encoder layer to a decoder layer (via a skip connection) is unactivated, meaning that it is passed directly after the convolution (or batch norm/dropout), in contrast to the solution here. Is this a mistake or a variation?

2.) What does the flag ‘training=True’ do when calling a batch normalization or dropout layer?

Thanks in advance.

Perhaps. I thought I had the architecture spot on based on the paper and the released code.

training=True causes the layer to behave as if it is always in training mode. For example, batch norm and dropout normally operate differently during training and inference. More here:

https://machinelearningmastery.com/how-to-implement-pix2pix-gan-models-from-scratch-with-keras/

Hi Jason,

Thank you very much for this tutorial; it’s awesome!