Generative Adversarial Networks, or GANs, are an architecture for training generative models, such as deep convolutional neural networks for generating images.

The conditional generative adversarial network, or cGAN for short, is a type of GAN that involves the conditional generation of images by a generator model. Image generation can be conditional on a class label, if available, allowing the targeted generation of images of a given type.

The Auxiliary Classifier GAN, or AC-GAN for short, is an extension of the conditional GAN that changes the discriminator to predict the class label of a given image rather than receive it as input. It has the effect of stabilizing the training process and allowing the generation of large high-quality images whilst learning a representation in the latent space that is independent of the class label.

In this tutorial, you will discover how to develop an auxiliary classifier generative adversarial network for generating photographs of clothing.

After completing this tutorial, you will know:

- The auxiliary classifier GAN is a type of conditional GAN that requires that the discriminator predict the class label of a given image.

- How to develop generator, discriminator, and composite models for the AC-GAN.

- How to train, evaluate, and use an AC-GAN to generate photographs of clothing from the Fashion-MNIST dataset.


Let’s get started.

- Updated Jan/2021: Updated so layer freezing works with batch norm.

How to Develop an Auxiliary Classifier GAN (AC-GAN) From Scratch with Keras

Photo by ebbe ostebo, some rights reserved.

Tutorial Overview

This tutorial is divided into five parts; they are:

- Auxiliary Classifier Generative Adversarial Networks

- Fashion-MNIST Clothing Photograph Dataset

- How to Define AC-GAN Models

- How to Develop an AC-GAN for Fashion-MNIST

- How to Generate Items of Clothing With the AC-GAN

Auxiliary Classifier Generative Adversarial Networks

The generative adversarial network is an architecture for training a generative model, typically a deep convolutional neural network for generating images.

The architecture comprises a generator model that takes random points from a latent space as input and generates images, and a discriminator for classifying images as either real (from the dataset) or fake (generated). Both models are then trained simultaneously in a zero-sum game.

A conditional GAN, cGAN or CGAN for short, is an extension of the GAN architecture that adds structure to the latent space. The training of the GAN model is changed so that the generator is provided both with a point in the latent space and a class label as input, and attempts to generate an image for that class. The discriminator is provided with both an image and the class label and must classify whether the image is real or fake as before.

The addition of the class as input makes the image generation process, and image classification process, conditional on the class label, hence the name. The effect is both a more stable training process and a resulting generator model that can be used to generate images of a given specific type, e.g. for a class label.

The Auxiliary Classifier GAN, or AC-GAN for short, is a further extension of the GAN architecture building upon the CGAN extension. It was introduced by Augustus Odena, et al. from Google Brain in the 2016 paper titled “Conditional Image Synthesis with Auxiliary Classifier GANs.”

As with the conditional GAN, the generator model in the AC-GAN is provided both with a point in the latent space and the class label as input, i.e. the image generation process is conditional.

The main difference is in the discriminator model, which is only provided with the image as input, unlike the conditional GAN that is provided with the image and class label as input. The discriminator model must then predict whether the given image is real or fake as before, and must also predict the class label of the image.

… the model […] is class conditional, but with an auxiliary decoder that is tasked with reconstructing class labels.

— Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.

The architecture is described in such a way that the discriminator and auxiliary classifier may be considered separate models that share model weights. In practice, the discriminator and auxiliary classifier can be implemented as a single neural network model with two outputs.

The first output is a single probability via the sigmoid activation function that indicates the “realness” of the input image and is optimized using binary cross entropy like a normal GAN discriminator model.

The second output is a probability of the image belonging to each class via the softmax activation function, like any given multi-class classification neural network model, and is optimized using categorical cross entropy.
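To make the two-part loss concrete, the sketch below computes both terms by hand for a single image. The probabilities are made-up values standing in for the two outputs of a discriminator, and the helper names are illustrative only; they are not Keras functions.

```python
import math

def binary_cross_entropy(y_true, p_real):
    """Loss for the real/fake output; y_true is 1 for real and 0 for fake."""
    eps = 1e-7
    p = min(max(p_real, eps), 1.0 - eps)
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1.0 - p))

def categorical_cross_entropy(class_index, class_probs):
    """Loss for the class output: negative log probability of the true class."""
    eps = 1e-7
    return -math.log(max(class_probs[class_index], eps))

# hypothetical discriminator outputs for one real image whose true class is 3
p_real = 0.9
class_probs = [0.02, 0.02, 0.02, 0.80, 0.02, 0.02, 0.02, 0.04, 0.02, 0.02]

source_loss = binary_cross_entropy(1, p_real)
class_loss = categorical_cross_entropy(3, class_probs)
total_loss = source_loss + class_loss
print('source: %.3f, class: %.3f, total: %.3f' % (source_loss, class_loss, total_loss))
```

When a model has two outputs and two losses, the value minimized is by default the sum of the two terms, which is what `total_loss` mimics here.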

To summarize:

Generator Model:

- Input: Random point from the latent space, and the class label.

- Output: Generated image.

Discriminator Model:

- Input: Image.

- Output: Probability that the provided image is real, probability of the image belonging to each known class.

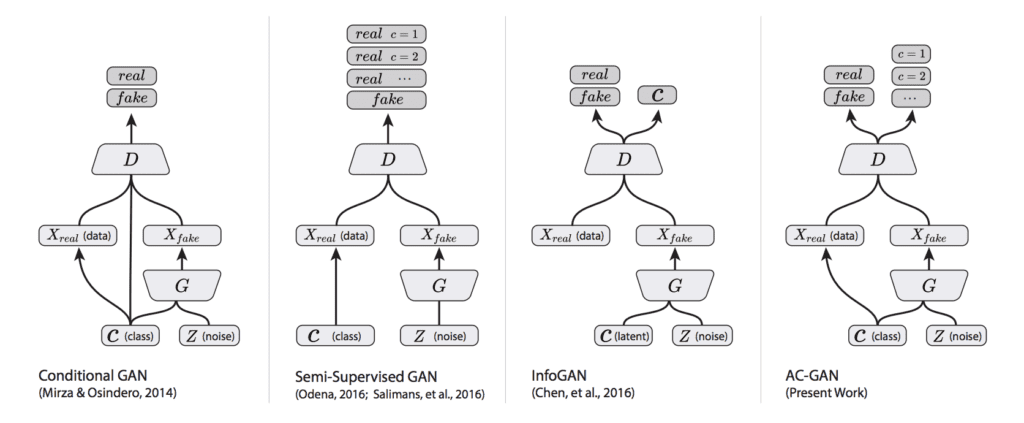

The plot below summarizes the inputs and outputs of a range of conditional GANs, including the AC-GAN, providing some context for the differences.

Summary of the Differences Between the Conditional GAN, Semi-Supervised GAN, InfoGAN, and AC-GAN.

Taken from: Version of Conditional Image Synthesis With Auxiliary Classifier GANs.

The discriminator seeks to maximize the probability of correctly classifying real and fake images (LS) and correctly predicting the class label (LC) of a real or fake image (i.e. LS + LC). The generator seeks to minimize the ability of the discriminator to discriminate real and fake images whilst also maximizing the ability of the discriminator to predict the class label of real and fake images (i.e. LC – LS).

The objective function has two parts: the log-likelihood of the correct source, LS, and the log-likelihood of the correct class, LC. […] D is trained to maximize LS + LC while G is trained to maximize LC − LS.

— Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.
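Written out, the two terms defined in the paper are as follows, where S is the source of an image (real or fake), C is its class label, and X_fake = G(c, z) is an image generated from class c and latent point z:

```latex
L_S = \mathbb{E}\left[\log P(S = \text{real} \mid X_{\text{real}})\right]
    + \mathbb{E}\left[\log P(S = \text{fake} \mid X_{\text{fake}})\right]

L_C = \mathbb{E}\left[\log P(C = c \mid X_{\text{real}})\right]
    + \mathbb{E}\left[\log P(C = c \mid X_{\text{fake}})\right]
```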

The resulting generator learns a latent space representation that is independent of the class label, unlike the conditional GAN.

AC-GANs learn a representation for z that is independent of class label.

— Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.

The effect of changing the conditional GAN in this way is both a more stable training process and the ability of the model to generate higher quality images with a larger size than had been previously possible, e.g. 128×128 pixels.

… we demonstrate that adding more structure to the GAN latent space along with a specialized cost function results in higher quality samples. […] Importantly, we demonstrate quantitatively that our high resolution samples are not just naive resizings of low resolution samples.

— Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.


Fashion-MNIST Clothing Photograph Dataset

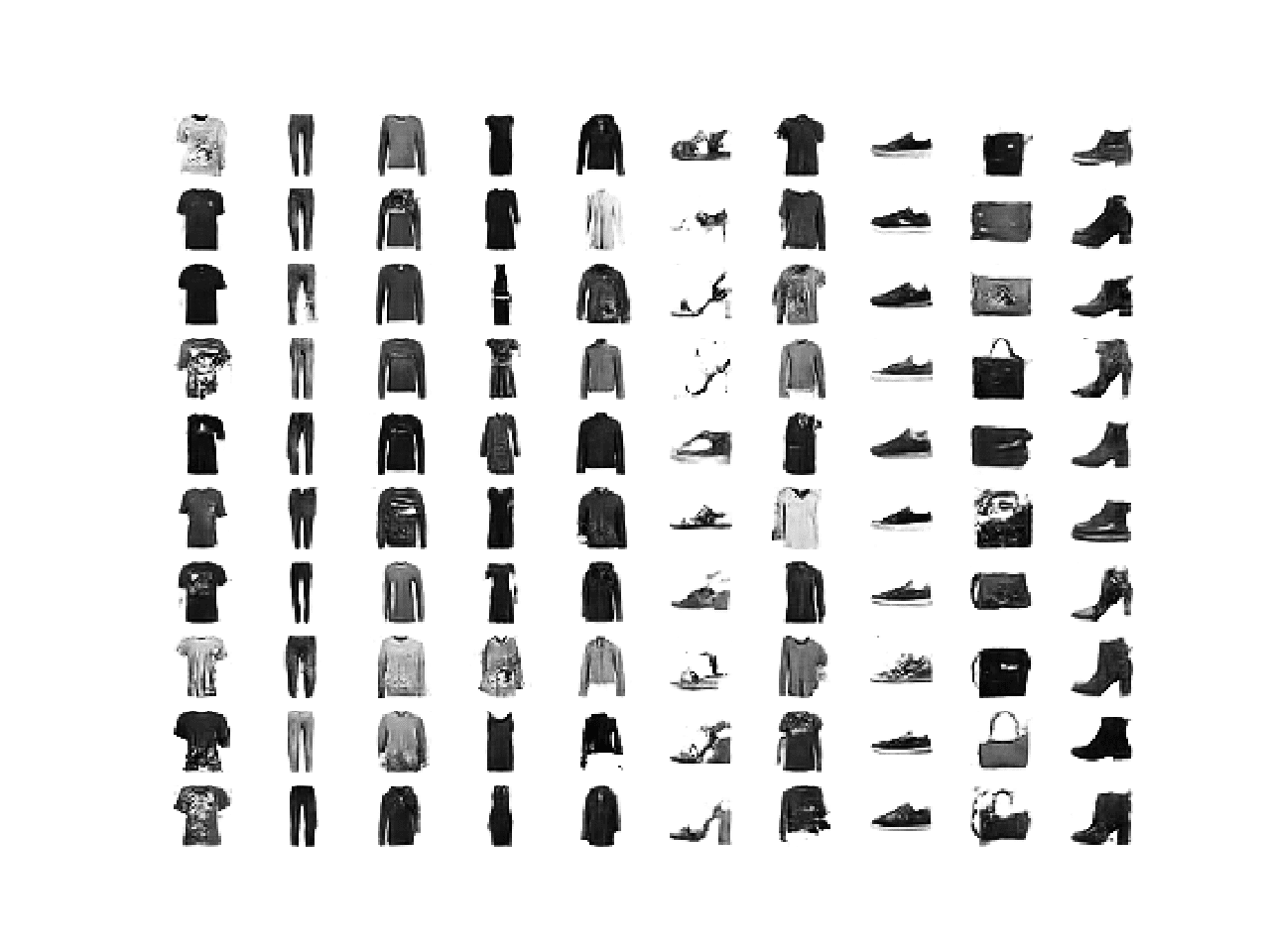

The Fashion-MNIST dataset is proposed as a more challenging replacement dataset for the MNIST handwritten digit dataset.

It is a dataset comprised of 60,000 small square 28×28 pixel grayscale images of items of 10 types of clothing, such as shoes, t-shirts, dresses, and more.
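The class labels are stored as integers from 0 to 9. As a small convenience, the sketch below maps each integer to the class name used in the dataset's documentation; the names `CLASS_NAMES` and `label_to_name` are illustrative and not part of Keras.

```python
# Fashion-MNIST class names, indexed by integer label (0 to 9)
CLASS_NAMES = [
    'T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',
    'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot',
]

def label_to_name(label):
    """Return the clothing type for an integer class label."""
    return CLASS_NAMES[label]

print(label_to_name(9))
```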

Keras provides access to the Fashion-MNIST dataset via the fashion_mnist.load_data() function. It returns two tuples, one with the input and output elements for the standard training dataset, and another with the input and output elements for the standard test dataset.

The example below loads the dataset and summarizes the shape of the loaded dataset.

Note: the first time you load the dataset, Keras will automatically download a compressed version of the images and save them under your home directory in ~/.keras/datasets/. The download is fast as the dataset is only about 25 megabytes in its compressed form.

```python
# example of loading the fashion_mnist dataset
from keras.datasets.fashion_mnist import load_data
# load the images into memory
(trainX, trainy), (testX, testy) = load_data()
# summarize the shape of the dataset
print('Train', trainX.shape, trainy.shape)
print('Test', testX.shape, testy.shape)
```

Running the example loads the dataset and prints the shape of the input and output components of the train and test splits of images.

We can see that there are 60K examples in the training set and 10K in the test set and that each image is a square of 28 by 28 pixels.

```
Train (60000, 28, 28) (60000,)
Test (10000, 28, 28) (10000,)
```

The images are grayscale with a black background (0 pixel value) and the items of clothing in white (pixel values near 255). This means if the images were plotted, they would be mostly black with a white item of clothing in the middle.

We can plot some of the images from the training dataset using the matplotlib library with the imshow() function and specify the color map via the 'cmap' argument as 'gray' to show the pixel values correctly.

```python
# plot raw pixel data
pyplot.imshow(trainX[i], cmap='gray')
```

Alternatively, the images are easier to review when we reverse the colors and plot the background as white and the clothing in black, because most of each image is then white with the area of interest in black.

This can be achieved using a reverse grayscale color map, as follows:

```python
# plot raw pixel data
pyplot.imshow(trainX[i], cmap='gray_r')
```

The example below plots the first 100 images from the training dataset in a 10 by 10 square.

```python
# example of loading the fashion_mnist dataset
from keras.datasets.fashion_mnist import load_data
from matplotlib import pyplot
# load the images into memory
(trainX, trainy), (testX, testy) = load_data()
# plot images from the training dataset
for i in range(100):
    # define subplot
    pyplot.subplot(10, 10, 1 + i)
    # turn off axis
    pyplot.axis('off')
    # plot raw pixel data
    pyplot.imshow(trainX[i], cmap='gray_r')
pyplot.show()
```

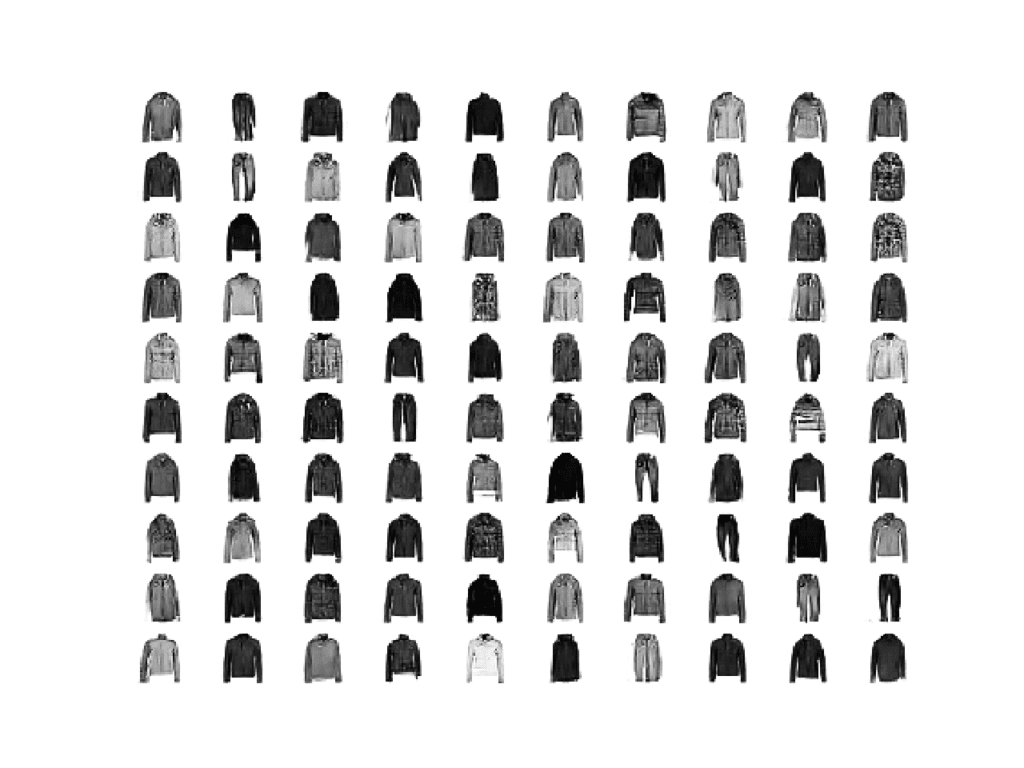

Running the example creates a figure with a plot of 100 images from the Fashion-MNIST training dataset, arranged in a 10×10 square.

Plot of the First 100 Items of Clothing From the Fashion MNIST Dataset.

We will use the images in the training dataset as the basis for training a Generative Adversarial Network.

Specifically, the generator model will learn how to generate new plausible items of clothing, using a discriminator that will try to distinguish between real images from the Fashion MNIST training dataset and new images output by the generator model, and predict the class label for each.

This is a relatively simple problem that does not require a sophisticated generator or discriminator model, although it does require the generation of a grayscale output image.

How to Define AC-GAN Models

In this section, we will develop the generator, discriminator, and composite models for the AC-GAN.

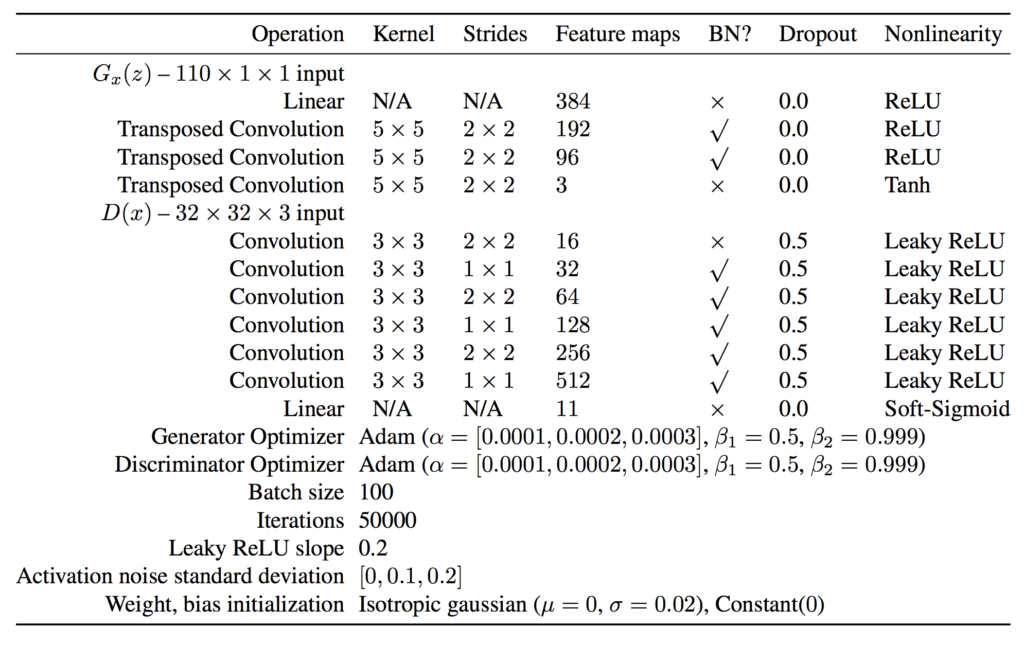

The appendix of the AC-GAN paper provides suggestions for generator and discriminator configurations that we will use as inspiration. The table below summarizes these suggestions for the CIFAR-10 dataset, taken from the paper.

AC-GAN Generator and Discriminator Model Configuration Suggestions.

Taken from: Conditional Image Synthesis With Auxiliary Classifier GANs.

AC-GAN Discriminator Model

Let’s start with the discriminator model.

The discriminator model must take as input an image and predict both the probability of the ‘realness‘ of the image and the probability of the image belonging to each of the given classes.

The input images will have the shape 28x28x1 and there are 10 classes for the items of clothing in the Fashion MNIST dataset.

The model can be defined as per the DCGAN architecture. That is, using Gaussian weight initialization, batch normalization, LeakyReLU, Dropout, and a 2×2 stride for downsampling instead of pooling layers.

For example, below is the bulk of the discriminator model defined using the Keras functional API.

```python
...
# weight initialization
init = RandomNormal(stddev=0.02)
# image input
in_image = Input(shape=in_shape)
# downsample to 14x14
fe = Conv2D(32, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(in_image)
fe = LeakyReLU(alpha=0.2)(fe)
fe = Dropout(0.5)(fe)
# normal
fe = Conv2D(64, (3,3), padding='same', kernel_initializer=init)(fe)
fe = BatchNormalization()(fe)
fe = LeakyReLU(alpha=0.2)(fe)
fe = Dropout(0.5)(fe)
# downsample to 7x7
fe = Conv2D(128, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(fe)
fe = BatchNormalization()(fe)
fe = LeakyReLU(alpha=0.2)(fe)
fe = Dropout(0.5)(fe)
# normal
fe = Conv2D(256, (3,3), padding='same', kernel_initializer=init)(fe)
fe = BatchNormalization()(fe)
fe = LeakyReLU(alpha=0.2)(fe)
fe = Dropout(0.5)(fe)
# flatten feature maps
fe = Flatten()(fe)
...
```
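The spatial sizes noted in the comments of this snippet (14x14, then 7x7) follow from the rule that a convolution with 'same' padding produces an output of size ceil(input / stride). The short sketch below traces those sizes through the four convolutional layers; the helper name is illustrative.

```python
import math

def same_padding_output_size(size, stride):
    """Output width/height of a convolution that uses 'same' padding."""
    return math.ceil(size / stride)

size = 28
trace = [size]
# strides of the four Conv2D layers, in order
for stride in (2, 1, 2, 1):
    size = same_padding_output_size(size, stride)
    trace.append(size)

# the last convolutional layer has 256 filters
flattened = size * size * 256
print(trace, flattened)
```

The flattened size is the length of the vector that the output layers receive.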

The main difference is that the model has two output layers.

The first is a single node with the sigmoid activation for predicting the real-ness of the image.

```python
...
# real/fake output
out1 = Dense(1, activation='sigmoid')(fe)
```

The second is multiple nodes, one for each class, using the softmax activation function to predict the class label of the given image.

```python
...
# class label output
out2 = Dense(n_classes, activation='softmax')(fe)
```

We can then construct the model with a single input and two outputs.

```python
...
# define model
model = Model(in_image, [out1, out2])
```

The model must be trained with two loss functions, binary cross entropy for the first output layer, and categorical cross-entropy loss for the second output layer.

Rather than comparing a one hot encoding of the class labels to the second output layer, as we might do normally, we can compare the integer class labels directly. We can achieve this automatically using the sparse categorical cross-entropy loss function. This will have the identical effect of the categorical cross-entropy but avoids the step of having to manually one hot encode the target labels.
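The equivalence can be checked by hand. The sketch below, using made-up probabilities and illustrative helper names (not the Keras implementations), computes the loss once from a one hot encoded target and once from the integer label.

```python
import math

def categorical_crossentropy(one_hot_target, probs):
    """Cross-entropy against a one hot encoded target."""
    return -sum(t * math.log(p) for t, p in zip(one_hot_target, probs))

def sparse_categorical_crossentropy(label, probs):
    """Cross-entropy against an integer class label."""
    return -math.log(probs[label])

# hypothetical softmax output for a 3-class problem
probs = [0.1, 0.7, 0.2]
label = 1
one_hot_target = [1.0 if i == label else 0.0 for i in range(len(probs))]

dense_loss = categorical_crossentropy(one_hot_target, probs)
sparse_loss = sparse_categorical_crossentropy(label, probs)
print(dense_loss, sparse_loss)
```

Only the term for the true class survives the sum in the one hot version, so both functions return the same value.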

When compiling the model, we can inform Keras to use the two different loss functions for the two output layers by specifying a list of function names as strings; for example:

```python
loss=['binary_crossentropy', 'sparse_categorical_crossentropy']
```

The model is fit using the Adam version of stochastic gradient descent with a small learning rate and modest momentum, as is recommended for DCGANs.

```python
...
# compile model
opt = Adam(learning_rate=0.0002, beta_1=0.5)
model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
```

Tying this together, the define_discriminator() function will define and compile the discriminator model for the AC-GAN.

The shape of the input images and the number of classes are parameterized and set with defaults, allowing them to be easily changed for your own project in the future.

```python
# define the standalone discriminator model
def define_discriminator(in_shape=(28,28,1), n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # image input
    in_image = Input(shape=in_shape)
    # downsample to 14x14
    fe = Conv2D(32, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(in_image)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(64, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # downsample to 7x7
    fe = Conv2D(128, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(256, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # flatten feature maps
    fe = Flatten()(fe)
    # real/fake output
    out1 = Dense(1, activation='sigmoid')(fe)
    # class label output
    out2 = Dense(n_classes, activation='softmax')(fe)
    # define model
    model = Model(in_image, [out1, out2])
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
    return model
```

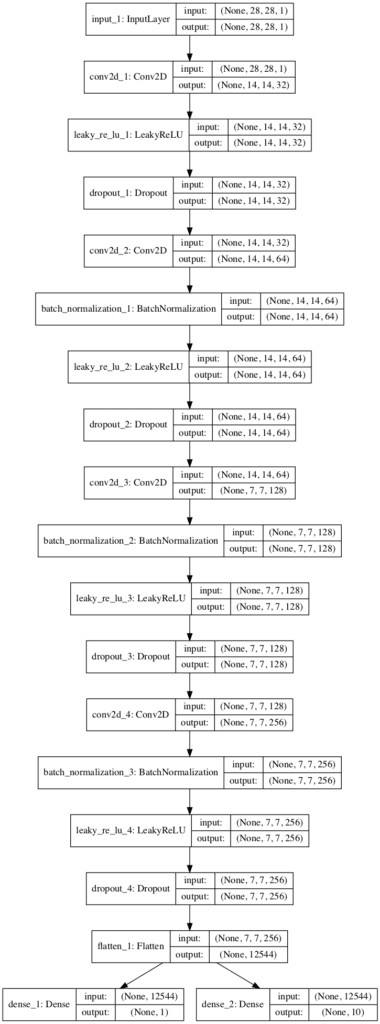

We can define and summarize this model.

The complete example is listed below.

```python
# example of defining the discriminator model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Conv2D
from keras.layers import LeakyReLU
from keras.layers import Dropout
from keras.layers import Flatten
from keras.layers import BatchNormalization
from keras.initializers import RandomNormal
from keras.optimizers import Adam
from keras.utils import plot_model

# define the standalone discriminator model
def define_discriminator(in_shape=(28,28,1), n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # image input
    in_image = Input(shape=in_shape)
    # downsample to 14x14
    fe = Conv2D(32, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(in_image)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(64, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # downsample to 7x7
    fe = Conv2D(128, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(256, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # flatten feature maps
    fe = Flatten()(fe)
    # real/fake output
    out1 = Dense(1, activation='sigmoid')(fe)
    # class label output
    out2 = Dense(n_classes, activation='softmax')(fe)
    # define model
    model = Model(in_image, [out1, out2])
    # compile model
    opt = Adam(learning_rate=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
    return model

# define the discriminator model
model = define_discriminator()
# summarize the model
model.summary()
# plot the model
plot_model(model, to_file='discriminator_plot.png', show_shapes=True, show_layer_names=True)
```

Running the example first prints a summary of the model.

This confirms the expected shape of the input images and the two output layers, although the linear organization does not make the two separate output layers clear.

```
__________________________________________________________________________________________________
Layer (type)                    Output Shape         Param #     Connected to
==================================================================================================
input_1 (InputLayer)            (None, 28, 28, 1)    0
__________________________________________________________________________________________________
conv2d_1 (Conv2D)               (None, 14, 14, 32)   320         input_1[0][0]
__________________________________________________________________________________________________
leaky_re_lu_1 (LeakyReLU)       (None, 14, 14, 32)   0           conv2d_1[0][0]
__________________________________________________________________________________________________
dropout_1 (Dropout)             (None, 14, 14, 32)   0           leaky_re_lu_1[0][0]
__________________________________________________________________________________________________
conv2d_2 (Conv2D)               (None, 14, 14, 64)   18496       dropout_1[0][0]
__________________________________________________________________________________________________
batch_normalization_1 (BatchNor (None, 14, 14, 64)   256         conv2d_2[0][0]
__________________________________________________________________________________________________
leaky_re_lu_2 (LeakyReLU)       (None, 14, 14, 64)   0           batch_normalization_1[0][0]
__________________________________________________________________________________________________
dropout_2 (Dropout)             (None, 14, 14, 64)   0           leaky_re_lu_2[0][0]
__________________________________________________________________________________________________
conv2d_3 (Conv2D)               (None, 7, 7, 128)    73856       dropout_2[0][0]
__________________________________________________________________________________________________
batch_normalization_2 (BatchNor (None, 7, 7, 128)    512         conv2d_3[0][0]
__________________________________________________________________________________________________
leaky_re_lu_3 (LeakyReLU)       (None, 7, 7, 128)    0           batch_normalization_2[0][0]
__________________________________________________________________________________________________
dropout_3 (Dropout)             (None, 7, 7, 128)    0           leaky_re_lu_3[0][0]
__________________________________________________________________________________________________
conv2d_4 (Conv2D)               (None, 7, 7, 256)    295168      dropout_3[0][0]
__________________________________________________________________________________________________
batch_normalization_3 (BatchNor (None, 7, 7, 256)    1024        conv2d_4[0][0]
__________________________________________________________________________________________________
leaky_re_lu_4 (LeakyReLU)       (None, 7, 7, 256)    0           batch_normalization_3[0][0]
__________________________________________________________________________________________________
dropout_4 (Dropout)             (None, 7, 7, 256)    0           leaky_re_lu_4[0][0]
__________________________________________________________________________________________________
flatten_1 (Flatten)             (None, 12544)        0           dropout_4[0][0]
__________________________________________________________________________________________________
dense_1 (Dense)                 (None, 1)            12545       flatten_1[0][0]
__________________________________________________________________________________________________
dense_2 (Dense)                 (None, 10)           125450      flatten_1[0][0]
==================================================================================================
Total params: 527,627
Trainable params: 526,731
Non-trainable params: 896
__________________________________________________________________________________________________
```
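The parameter counts in this summary can be checked by hand. The sketch below recomputes them from the layer configuration using the standard formulas for convolutional, batch normalization, and dense layers; the helper names are illustrative.

```python
def conv2d_params(kernel_size, in_channels, filters):
    """kernel x kernel x in_channels weights per filter, plus one bias per filter."""
    return (kernel_size * kernel_size * in_channels + 1) * filters

def batchnorm_params(channels):
    """gamma, beta, moving mean, and moving variance for each channel."""
    return 4 * channels

def dense_params(inputs, units):
    """One weight per input per unit, plus one bias per unit."""
    return (inputs + 1) * units

conv_counts = [
    conv2d_params(3, 1, 32),
    conv2d_params(3, 32, 64),
    conv2d_params(3, 64, 128),
    conv2d_params(3, 128, 256),
]
bn_counts = [batchnorm_params(c) for c in (64, 128, 256)]
flat = 7 * 7 * 256
dense_counts = [dense_params(flat, 1), dense_params(flat, 10)]

total = sum(conv_counts) + sum(bn_counts) + sum(dense_counts)
# the moving mean and variance (half of each batch norm layer) are not trained
non_trainable = sum(bn_counts) // 2
trainable = total - non_trainable
print(total, trainable, non_trainable)
```

The non-trainable parameters are the moving statistics of the three batch normalization layers, which are updated during training but not by gradient descent.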

A plot of the model is also created, showing the linear processing of the input image and the two clear output layers.

Plot of the Discriminator Model for the Auxiliary Classifier GAN

Now that we have defined our AC-GAN discriminator model, we can develop the generator model.

AC-GAN Generator Model

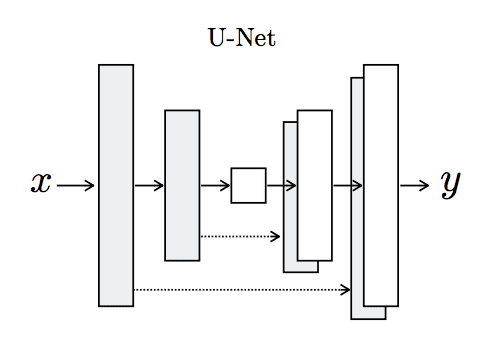

The generator model must take a random point from the latent space as input, and the class label, then output a generated grayscale image with the shape 28x28x1.

The AC-GAN paper describes the generator taking a single vector input: the concatenation of the point in latent space (100 dimensions) and the one hot encoded class label (10 dimensions), giving 110 dimensions in total.
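As a minimal sketch of that concatenated input, the example below builds one such vector in plain Python. Sampling the latent point from a standard Gaussian is an assumption made for illustration, and the helper name `one_hot` is hypothetical.

```python
import random

latent_dim = 100
n_classes = 10

def one_hot(label, n_classes):
    """One hot encode an integer class label as a list of floats."""
    return [1.0 if i == label else 0.0 for i in range(n_classes)]

random.seed(1)
# assumed here: latent points are drawn from a standard Gaussian
latent_point = [random.gauss(0.0, 1.0) for _ in range(latent_dim)]
# concatenate the latent point with the encoded label for class 3
generator_input = latent_point + one_hot(3, n_classes)
print(len(generator_input))
```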

An alternative approach that has proven effective and is now generally recommended is to interpret the class label as an additional channel or feature map early in the generator model.

This can be achieved by using a learned embedding with an arbitrary number of dimensions (e.g. 50), the output of which can be interpreted by a fully connected layer with a linear activation resulting in one additional 7×7 feature map.

```python
...
# label input
in_label = Input(shape=(1,))
# embedding for categorical input
li = Embedding(n_classes, 50)(in_label)
# linear multiplication
n_nodes = 7 * 7
li = Dense(n_nodes, kernel_initializer=init)(li)
# reshape to additional channel
li = Reshape((7, 7, 1))(li)
```

The point in latent space can be interpreted by a fully connected layer with sufficient activations to create multiple 7×7 feature maps, in this case, 384, and provide the basis for a low-resolution version of our output image.

The 7×7 single feature map interpretation of the class label can then be channel-wise concatenated, resulting in 385 feature maps.

```python
...
# image generator input
in_lat = Input(shape=(latent_dim,))
# foundation for 7x7 image
n_nodes = 384 * 7 * 7
gen = Dense(n_nodes, kernel_initializer=init)(in_lat)
gen = Activation('relu')(gen)
gen = Reshape((7, 7, 384))(gen)
# merge image gen and label input
merge = Concatenate()([gen, li])
```
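To see what the label branch and the merge do to the array shapes, the NumPy sketch below reproduces the same steps for a single example without Keras. The random arrays are stand-ins for weights that the real model would learn, and all variable names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)
n_classes, embed_dim = 10, 50

# stand-ins for learned weights (random here, trained in the real model)
embedding_table = rng.normal(0.0, 0.02, size=(n_classes, embed_dim))
dense_weights = rng.normal(0.0, 0.02, size=(embed_dim, 7 * 7))
dense_bias = np.zeros(7 * 7)

label = 3
# embedding lookup, then a linear layer, then reshape to one 7x7 feature map
label_map = (embedding_table[label] @ dense_weights + dense_bias).reshape(7, 7, 1)

# stand-in for the 384 feature maps produced from the latent point
latent_maps = np.zeros((7, 7, 384))

# channel-wise concatenation appends the label map as the last channel
merged = np.concatenate([latent_maps, label_map], axis=-1)
print(label_map.shape, merged.shape)
```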

These feature maps can then be passed through two transpose convolutional layers that upsample the 7×7 feature maps first to 14×14 and then to 28×28 pixels, quadrupling the area of the feature maps with each upsampling step.

The output of the generator is a single feature map or grayscale image with the shape 28×28 and pixel values in the range [-1, 1] given the choice of a tanh activation function. We use ReLU activation for the upscaling layers instead of LeakyReLU given the suggestion in the AC-GAN paper.

```python
# upsample to 14x14
gen = Conv2DTranspose(192, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(merge)
gen = BatchNormalization()(gen)
gen = Activation('relu')(gen)
# upsample to 28x28
gen = Conv2DTranspose(1, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(gen)
out_layer = Activation('tanh')(gen)
```
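Because the tanh output lies in [-1, 1], real images would typically be scaled from [0, 255] to the same range before being shown to the discriminator, and generated images scaled back for plotting. The sketch below shows one common way to do this; the function names are illustrative and the scaling constant of 127.5 is an assumption that matches 8-bit pixel data.

```python
import numpy as np

def scale_to_tanh_range(pixels):
    """Map 8-bit pixel values in [0, 255] to floats in [-1, 1]."""
    return (pixels.astype('float32') - 127.5) / 127.5

def scale_to_pixel_range(images):
    """Map values in [-1, 1] back to the [0, 255] pixel range."""
    return images * 127.5 + 127.5

pixels = np.array([0, 127, 255], dtype='uint8')
scaled = scale_to_tanh_range(pixels)
restored = scale_to_pixel_range(scaled)
print(scaled, restored)
```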

We can tie all of this together into the define_generator() function defined below that will create and return the generator model for the AC-GAN.

The model is intentionally not compiled as it is not trained directly; instead, it is trained via the discriminator model.

```python
# define the standalone generator model
def define_generator(latent_dim, n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # label input
    in_label = Input(shape=(1,))
    # embedding for categorical input
    li = Embedding(n_classes, 50)(in_label)
    # linear multiplication
    n_nodes = 7 * 7
    li = Dense(n_nodes, kernel_initializer=init)(li)
    # reshape to additional channel
    li = Reshape((7, 7, 1))(li)
    # image generator input
    in_lat = Input(shape=(latent_dim,))
    # foundation for 7x7 image
    n_nodes = 384 * 7 * 7
    gen = Dense(n_nodes, kernel_initializer=init)(in_lat)
    gen = Activation('relu')(gen)
    gen = Reshape((7, 7, 384))(gen)
    # merge image gen and label input
    merge = Concatenate()([gen, li])
    # upsample to 14x14
    gen = Conv2DTranspose(192, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(merge)
    gen = BatchNormalization()(gen)
    gen = Activation('relu')(gen)
    # upsample to 28x28
    gen = Conv2DTranspose(1, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(gen)
    out_layer = Activation('tanh')(gen)
    # define model
    model = Model([in_lat, in_label], out_layer)
    return model
```

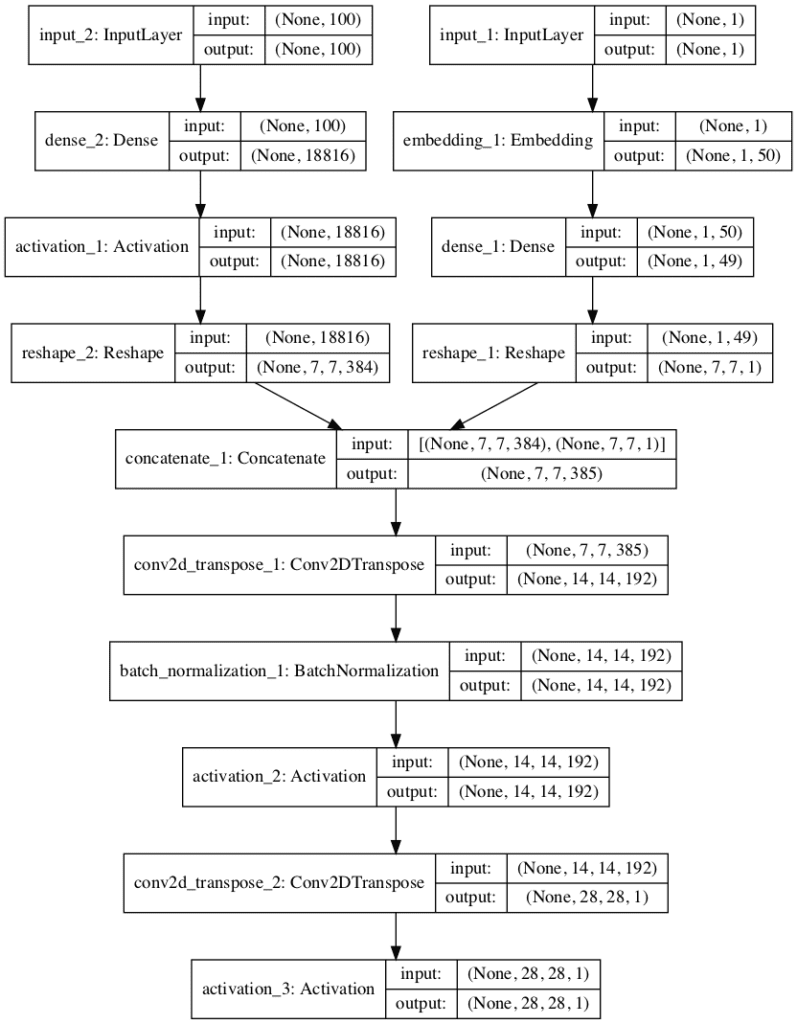

We can create this model and summarize and plot its structure.

The complete example is listed below.

```python
# example of defining the generator model
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Reshape
from keras.layers import Conv2DTranspose
from keras.layers import Embedding
from keras.layers import Concatenate
from keras.layers import Activation
from keras.layers import BatchNormalization
from keras.initializers import RandomNormal
from keras.utils import plot_model

# define the standalone generator model
def define_generator(latent_dim, n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # label input
    in_label = Input(shape=(1,))
    # embedding for categorical input
    li = Embedding(n_classes, 50)(in_label)
    # linear multiplication
    n_nodes = 7 * 7
    li = Dense(n_nodes, kernel_initializer=init)(li)
    # reshape to additional channel
    li = Reshape((7, 7, 1))(li)
    # image generator input
    in_lat = Input(shape=(latent_dim,))
    # foundation for 7x7 image
    n_nodes = 384 * 7 * 7
    gen = Dense(n_nodes, kernel_initializer=init)(in_lat)
    gen = Activation('relu')(gen)
    gen = Reshape((7, 7, 384))(gen)
    # merge image gen and label input
    merge = Concatenate()([gen, li])
    # upsample to 14x14
    gen = Conv2DTranspose(192, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(merge)
    gen = BatchNormalization()(gen)
    gen = Activation('relu')(gen)
    # upsample to 28x28
    gen = Conv2DTranspose(1, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(gen)
    out_layer = Activation('tanh')(gen)
    # define model
    model = Model([in_lat, in_label], out_layer)
    return model

# define the size of the latent space
latent_dim = 100
# define the generator model
model = define_generator(latent_dim)
# summarize the model
model.summary()
# plot the model
plot_model(model, to_file='generator_plot.png', show_shapes=True, show_layer_names=True)
```

Running the example first prints a summary of the layers and their output shape in the model.

We can confirm that the latent space input has 100 dimensions and that the class label input is a single integer. We can also confirm that the output of the embedded class label is correctly concatenated as an additional channel, resulting in 385 7×7 feature maps prior to the transpose convolutional layers.

The summary also confirms the expected output shape of a single grayscale 28×28 image.

|

```
__________________________________________________________________________________________________
Layer (type)                    Output Shape         Param #     Connected to
==================================================================================================
input_2 (InputLayer)            (None, 100)          0
__________________________________________________________________________________________________
input_1 (InputLayer)            (None, 1)            0
__________________________________________________________________________________________________
dense_2 (Dense)                 (None, 18816)        1900416     input_2[0][0]
__________________________________________________________________________________________________
embedding_1 (Embedding)         (None, 1, 50)        500         input_1[0][0]
__________________________________________________________________________________________________
activation_1 (Activation)       (None, 18816)        0           dense_2[0][0]
__________________________________________________________________________________________________
dense_1 (Dense)                 (None, 1, 49)        2499        embedding_1[0][0]
__________________________________________________________________________________________________
reshape_2 (Reshape)             (None, 7, 7, 384)    0           activation_1[0][0]
__________________________________________________________________________________________________
reshape_1 (Reshape)             (None, 7, 7, 1)      0           dense_1[0][0]
__________________________________________________________________________________________________
concatenate_1 (Concatenate)     (None, 7, 7, 385)    0           reshape_2[0][0]
                                                                 reshape_1[0][0]
__________________________________________________________________________________________________
conv2d_transpose_1 (Conv2DTrans (None, 14, 14, 192)  1848192     concatenate_1[0][0]
__________________________________________________________________________________________________
batch_normalization_1 (BatchNor (None, 14, 14, 192)  768         conv2d_transpose_1[0][0]
__________________________________________________________________________________________________
activation_2 (Activation)       (None, 14, 14, 192)  0           batch_normalization_1[0][0]
__________________________________________________________________________________________________
conv2d_transpose_2 (Conv2DTrans (None, 28, 28, 1)    4801        activation_2[0][0]
__________________________________________________________________________________________________
activation_3 (Activation)       (None, 28, 28, 1)    0           conv2d_transpose_2[0][0]
==================================================================================================
Total params: 3,757,176
Trainable params: 3,756,792
Non-trainable params: 384
__________________________________________________________________________________________________
```
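The parameter counts in the summary can be checked by hand. The sketch below is plain Python arithmetic, not part of the tutorial code, and the helper function names are made up for illustration. It assumes the usual counting rules: a dense or transpose convolutional layer has one weight per connection plus one bias per output unit or filter, and batch normalization keeps four values per channel, two of them non-trainable.

```python
# Reproduce the parameter counts reported in the generator summary.
# The helper names below are hypothetical and exist only for this check.

def dense_params(n_in, n_out):
    # one weight per input-output pair, plus one bias per output
    return n_in * n_out + n_out

def embedding_params(n_classes, width):
    # one vector of the given width per class
    return n_classes * width

def conv2d_transpose_params(kernel, ch_in, ch_out):
    # kernel x kernel weights per input-output channel pair, plus one bias per filter
    return kernel * kernel * ch_in * ch_out + ch_out

def batch_norm_params(channels):
    # gamma and beta (trainable), moving mean and variance (non-trainable)
    return 4 * channels

counts = {
    'dense_2': dense_params(100, 384 * 7 * 7),
    'embedding_1': embedding_params(10, 50),
    'dense_1': dense_params(50, 7 * 7),
    'conv2d_transpose_1': conv2d_transpose_params(5, 385, 192),
    'batch_normalization_1': batch_norm_params(192),
    'conv2d_transpose_2': conv2d_transpose_params(5, 192, 1),
}
total = sum(counts.values())
non_trainable = 2 * 192  # moving mean and moving variance of the batch norm layer
trainable = total - non_trainable

for name, n in counts.items():
    print('%-24s %d' % (name, n))
print('total=%d trainable=%d non-trainable=%d' % (total, trainable, non_trainable))
```

The 385 input channels of the first transpose convolution are the 384 feature maps from the latent branch plus the single 7x7 channel from the label branch.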

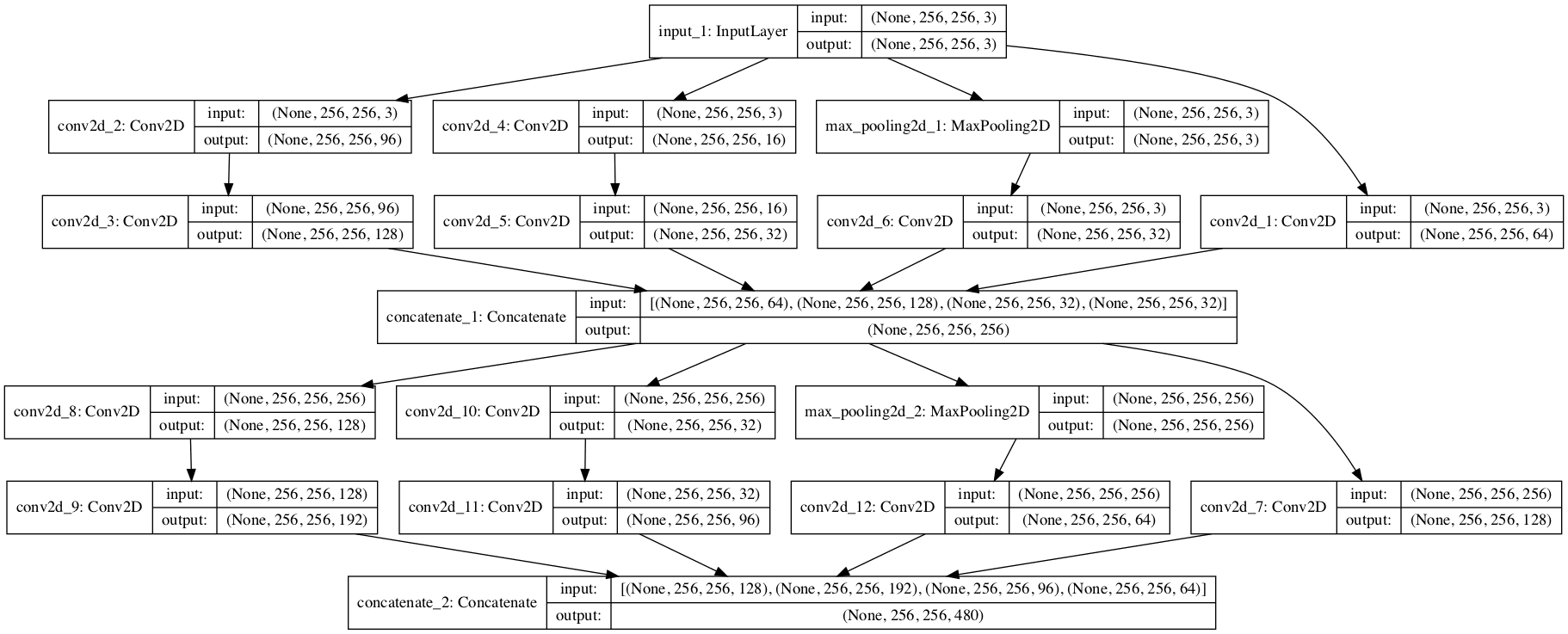

A plot of the network is also created summarizing the input and output shapes for each layer.

The plot confirms the two inputs to the network and the correct concatenation of the inputs.

Plot of the Generator Model for the Auxiliary Classifier GAN

Now that we have defined the generator model, we can show how it might be fit.

AC-GAN Composite Model

The generator model is not updated directly; instead, it is updated via the discriminator model.

This can be achieved by creating a composite model that stacks the generator model on top of the discriminator model.

The input to this composite model is the input to the generator model, namely a random point from the latent space and a class label. The generator model is connected directly to the discriminator model, which takes the generated image directly as input. Finally, the discriminator model predicts both the realness of the generated image and the class label. As such, the composite model is optimized using two loss functions, one for each output of the discriminator model.

The discriminator model is updated in a standalone manner using real and fake examples, and we will review how to do this in the next section. Therefore, we do not want to update the discriminator model when updating (training) the composite model; we only want to use this composite model to update the weights of the generator model.

This can be achieved by setting the layers of the discriminator as not trainable prior to compiling the composite model. This only has an effect on the layer weights when viewed or used by the composite model and prevents them from being updated when the composite model is updated.

The define_gan() function below implements this, taking the already defined generator and discriminator models as input and defining a new composite model that can be used to update the generator model only.

```python
# define the combined generator and discriminator model, for updating the generator
def define_gan(g_model, d_model):
    # make weights in the discriminator not trainable
    for layer in d_model.layers:
        if not isinstance(layer, BatchNormalization):
            layer.trainable = False
    # connect the outputs of the generator to the inputs of the discriminator
    gan_output = d_model(g_model.output)
    # define gan model as taking noise and label and outputting real/fake and label outputs
    model = Model(g_model.input, gan_output)
    # compile model
    opt = Adam(lr=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
    return model
```

Now that we have defined the models used in the AC-GAN, we can fit them on the Fashion-MNIST dataset.

How to Develop an AC-GAN for Fashion-MNIST

The first step is to load and prepare the Fashion-MNIST dataset.

We only require the images in the training dataset. The images are grayscale, so we must add a channel dimension to make each image three-dimensional (height, width, channels), as expected by the convolutional layers of our models. Finally, the pixel values must be scaled to the range [-1,1] to match the tanh output of the generator model.

The load_real_samples() function below implements this, returning the loaded and scaled Fashion-MNIST training dataset ready for modeling.

```python
# load images
def load_real_samples():
    # load dataset
    (trainX, trainy), (_, _) = load_data()
    # expand to 3d, e.g. add channels
    X = expand_dims(trainX, axis=-1)
    # convert from ints to floats
    X = X.astype('float32')
    # scale from [0,255] to [-1,1]
    X = (X - 127.5) / 127.5
    print(X.shape, trainy.shape)
    return [X, trainy]
```
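As a quick sanity check on the scaling, the sketch below applies the same steps to a tiny made-up array instead of the real dataset. The `pixels` array is a stand-in invented for illustration. The last step is the inverse mapping to [0,1] that is used later when plotting generated images.

```python
import numpy as np

# a tiny stand-in for a batch of uint8 grayscale images: 2 images of 2x2 pixels
# (hypothetical values, not taken from Fashion-MNIST)
pixels = np.array([[[0, 255], [127, 128]],
                   [[64, 191], [255, 0]]], dtype='uint8')

# add the channel dimension and convert to floats
X = np.expand_dims(pixels, axis=-1).astype('float32')

# scale from [0,255] to [-1,1], as in load_real_samples()
scaled = (X - 127.5) / 127.5

# map back from [-1,1] to [0,1], as done before plotting
restored = (scaled + 1) / 2.0

print(X.shape, scaled.min(), scaled.max(), restored.min(), restored.max())
```

Pixel value 0 maps to -1 and 255 maps to 1, which matches the range of a tanh activation.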

We will require one batch (or a half batch) of real images from the dataset each update to the GAN model. A simple way to achieve this is to select a random sample of images from the dataset each time.

The generate_real_samples() function below implements this, taking the prepared dataset as an argument, selecting and returning a random sample of Fashion MNIST images and clothing class labels.

The “dataset” argument provided to the function is a list comprised of the images and class labels as returned from the load_real_samples() function. The function also returns their corresponding class label for the discriminator, specifically class=1 indicating that they are real images.

```python
# select real samples
def generate_real_samples(dataset, n_samples):
    # split into images and labels
    images, labels = dataset
    # choose random instances
    ix = randint(0, images.shape[0], n_samples)
    # select images and labels
    X, labels = images[ix], labels[ix]
    # generate class labels
    y = ones((n_samples, 1))
    return [X, labels], y
```

Next, we need inputs for the generator model.

These are random points from the latent space, specifically Gaussian distributed random variables.

The generate_latent_points() function implements this, taking the size of the latent space and the number of points required as arguments, and returning them as a batch of input samples for the generator model. The function also returns randomly selected integers in the range 0 to 9 (inclusive), one for each of the 10 class labels in the Fashion-MNIST dataset.

```python
# generate points in latent space as input for the generator
def generate_latent_points(latent_dim, n_samples, n_classes=10):
    # generate points in the latent space
    x_input = randn(latent_dim * n_samples)
    # reshape into a batch of inputs for the network
    z_input = x_input.reshape(n_samples, latent_dim)
    # generate labels
    labels = randint(0, n_classes, n_samples)
    return [z_input, labels]
```
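To see what the generator receives, the following sketch repeats the function on its own and inspects the result for a small batch. The fixed seed and the batch size of 8 are arbitrary choices for illustration. Note that the upper bound passed to NumPy's randint() is exclusive, so the labels fall in 0 to 9.

```python
from numpy.random import randn, randint, seed

# same logic as generate_latent_points() in the tutorial
def generate_latent_points(latent_dim, n_samples, n_classes=10):
    # sample from a standard Gaussian and reshape into (n_samples, latent_dim)
    z_input = randn(latent_dim * n_samples).reshape(n_samples, latent_dim)
    # integer class labels in [0, n_classes), upper bound exclusive
    labels = randint(0, n_classes, n_samples)
    return [z_input, labels]

seed(1)  # arbitrary seed, only so repeated runs match
z_input, labels = generate_latent_points(100, 8)
print(z_input.shape, labels.shape)
print(labels.min(), labels.max())
```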

Next, we need to use the points in the latent space and clothing class labels as input to the generator in order to generate new images.

The generate_fake_samples() function below implements this, taking the generator model and size of the latent space as arguments, then generating points in the latent space and using them as input to the generator model.

The function returns the generated images, their corresponding clothing class label, and their discriminator class label, specifically class=0 to indicate they are fake or generated.

```python
# use the generator to generate n fake examples, with class labels
def generate_fake_samples(generator, latent_dim, n_samples):
    # generate points in latent space
    z_input, labels_input = generate_latent_points(latent_dim, n_samples)
    # predict outputs
    images = generator.predict([z_input, labels_input])
    # create class labels
    y = zeros((n_samples, 1))
    return [images, labels_input], y
```

There are no reliable ways to determine when to stop training a GAN; instead, images can be subjectively inspected in order to choose a final model.

Therefore, we can periodically generate a sample of images using the generator model and save the generator model to file for later use. The summarize_performance() function below implements this, generating 100 images, plotting them, and saving the plot and the generator to file with a filename that includes the training “step” number.

```python
# generate samples and save as a plot and save the model
def summarize_performance(step, g_model, latent_dim, n_samples=100):
    # prepare fake examples
    [X, _], _ = generate_fake_samples(g_model, latent_dim, n_samples)
    # scale from [-1,1] to [0,1]
    X = (X + 1) / 2.0
    # plot images
    for i in range(100):
        # define subplot
        pyplot.subplot(10, 10, 1 + i)
        # turn off axis
        pyplot.axis('off')
        # plot raw pixel data
        pyplot.imshow(X[i, :, :, 0], cmap='gray_r')
    # save plot to file
    filename1 = 'generated_plot_%04d.png' % (step+1)
    pyplot.savefig(filename1)
    pyplot.close()
    # save the generator model
    filename2 = 'model_%04d.h5' % (step+1)
    g_model.save(filename2)
    print('>Saved: %s and %s' % (filename1, filename2))
```

We are now ready to fit the GAN models.

The model is fit for 100 training epochs, which is arbitrary, as the model begins generating plausible items of clothing after perhaps 20 epochs. A batch size of 64 samples is used, and each training epoch involves 60,000/64, rounded down to 937, batches of real and fake samples and updates to the model. The summarize_performance() function is called every 10 epochs, or every 9,370 (937 * 10) training steps.
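The step arithmetic above can be worked through in a few lines of plain Python. This sketch mirrors the calculations in the train() and summarize_performance() functions without touching any model. The variable names `save_every`, `checkpoints` and `filenames` are introduced here for illustration.

```python
# training configuration used in the tutorial
n_samples = 60000   # Fashion-MNIST training images
n_batch = 64
n_epochs = 100

# batches per epoch, rounded down
bat_per_epo = int(n_samples / n_batch)
# total number of training steps
n_steps = bat_per_epo * n_epochs
# performance is summarized every 10 epochs
save_every = bat_per_epo * 10

# 1-based step numbers at which a plot and a model are saved
checkpoints = [i + 1 for i in range(n_steps) if (i + 1) % save_every == 0]
# filenames follow the pattern used in summarize_performance()
filenames = ['model_%04d.h5' % step for step in checkpoints]

print(bat_per_epo, n_steps, save_every)
print(len(checkpoints), filenames[0], filenames[-1])
```

The last filename is the one loaded later when generating images from the final model.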

For a given training step, the discriminator model is first updated on a half batch of real samples, then on a half batch of fake samples, together forming one batch of weight updates. The generator is then updated via the composite GAN model. Importantly, the real/fake target is set to 1 (real) for the generated samples, while the class labels are the ones the generator was asked to produce. This has the effect of updating the generator toward producing images that the discriminator scores as real and assigns to the requested class.
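The effect of the inverted target can be seen with a small hand calculation. The sketch below is a plain Python version of binary cross-entropy for a single prediction, written for illustration rather than taken from Keras, and the discriminator outputs are made-up values. With a target of 1, the loss seen by the generator is large when the discriminator confidently rejects a generated image and small when the discriminator is fooled.

```python
from math import log

def binary_crossentropy(y_true, p, eps=1e-7):
    # clip the prediction to avoid log(0)
    p = min(max(p, eps), 1.0 - eps)
    return -(y_true * log(p) + (1 - y_true) * log(1 - p))

# hypothetical discriminator outputs D(G(z)) for three generated images
for p in [0.1, 0.5, 0.9]:
    # target 1 ("real") is what the composite model is trained against
    loss_for_generator = binary_crossentropy(1, p)
    # target 0 ("fake") is what the discriminator is trained against
    loss_for_discriminator = binary_crossentropy(0, p)
    print('D(G(z))=%.1f generator loss=%.3f discriminator loss=%.3f'
          % (p, loss_for_generator, loss_for_discriminator))
```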

Note, the discriminator and composite model return three loss values from the call to the train_on_batch() function. The first value is the sum of the loss values and can be ignored, whereas the second value is the loss for the real/fake output layer and the third value is the loss for the clothing label classification.
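A hand calculation for a single example shows how the three values relate. The sketch assumes the default behavior of equal loss weights and no regularization terms. The output probabilities are invented for illustration, and Keras would additionally average each loss over the batch.

```python
from math import log

# hypothetical discriminator outputs for one real image of class 7
p_real = 0.8                                        # sigmoid output: probability of "real"
class_probs = [0.02] * 7 + [0.82] + [0.02] * 2      # softmax output over 10 classes
true_class = 7

# binary cross-entropy with target 1 reduces to -log(p)
loss_real_fake = -log(p_real)
# sparse categorical cross-entropy is -log of the probability of the true class
loss_class = -log(class_probs[true_class])
# the first reported value is the sum of the per-output losses
loss_total = loss_real_fake + loss_class

print('total=%.3f real/fake=%.3f class=%.3f' % (loss_total, loss_real_fake, loss_class))
```

This is why the first value can be ignored when logging: it carries no information beyond the two per-output losses.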

The train() function below implements this, taking the defined models, dataset, and size of the latent dimension as arguments and parameterizing the number of epochs and batch size with default arguments. The generator model is saved every 10 epochs, including at the end of training.

```python
# train the generator and discriminator
def train(g_model, d_model, gan_model, dataset, latent_dim, n_epochs=100, n_batch=64):
    # calculate the number of batches per training epoch
    bat_per_epo = int(dataset[0].shape[0] / n_batch)
    # calculate the number of training iterations
    n_steps = bat_per_epo * n_epochs
    # calculate the size of half a batch of samples
    half_batch = int(n_batch / 2)
    # manually enumerate training steps
    for i in range(n_steps):
        # get randomly selected 'real' samples
        [X_real, labels_real], y_real = generate_real_samples(dataset, half_batch)
        # update discriminator model weights
        _,d_r1,d_r2 = d_model.train_on_batch(X_real, [y_real, labels_real])
        # generate 'fake' examples
        [X_fake, labels_fake], y_fake = generate_fake_samples(g_model, latent_dim, half_batch)
        # update discriminator model weights
        _,d_f,d_f2 = d_model.train_on_batch(X_fake, [y_fake, labels_fake])
        # prepare points in latent space as input for the generator
        [z_input, z_labels] = generate_latent_points(latent_dim, n_batch)
        # create inverted labels for the fake samples
        y_gan = ones((n_batch, 1))
        # update the generator via the discriminator's error
        _,g_1,g_2 = gan_model.train_on_batch([z_input, z_labels], [y_gan, z_labels])
        # summarize loss on this batch
        print('>%d, dr[%.3f,%.3f], df[%.3f,%.3f], g[%.3f,%.3f]' % (i+1, d_r1,d_r2, d_f,d_f2, g_1,g_2))
        # evaluate the model performance every 10 epochs
        if (i+1) % (bat_per_epo * 10) == 0:
            summarize_performance(i, g_model, latent_dim)
```

We can then define the size of the latent space, define all three models, and train them on the loaded Fashion-MNIST dataset.

```python
# size of the latent space
latent_dim = 100
# create the discriminator
discriminator = define_discriminator()
# create the generator
generator = define_generator(latent_dim)
# create the gan
gan_model = define_gan(generator, discriminator)
# load image data
dataset = load_real_samples()
# train model
train(generator, discriminator, gan_model, dataset, latent_dim)
```

Tying all of this together, the complete example is listed below.

```python
# example of fitting an auxiliary classifier gan (ac-gan) on fashion mnist
from numpy import zeros
from numpy import ones
from numpy import expand_dims
from numpy.random import randn
from numpy.random import randint
from keras.datasets.fashion_mnist import load_data
from keras.optimizers import Adam
from keras.models import Model
from keras.layers import Input
from keras.layers import Dense
from keras.layers import Reshape
from keras.layers import Flatten
from keras.layers import Conv2D
from keras.layers import Conv2DTranspose
from keras.layers import LeakyReLU
from keras.layers import BatchNormalization
from keras.layers import Dropout
from keras.layers import Embedding
from keras.layers import Activation
from keras.layers import Concatenate
from keras.initializers import RandomNormal
from matplotlib import pyplot

# define the standalone discriminator model
def define_discriminator(in_shape=(28,28,1), n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # image input
    in_image = Input(shape=in_shape)
    # downsample to 14x14
    fe = Conv2D(32, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(in_image)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(64, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # downsample to 7x7
    fe = Conv2D(128, (3,3), strides=(2,2), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # normal
    fe = Conv2D(256, (3,3), padding='same', kernel_initializer=init)(fe)
    fe = BatchNormalization()(fe)
    fe = LeakyReLU(alpha=0.2)(fe)
    fe = Dropout(0.5)(fe)
    # flatten feature maps
    fe = Flatten()(fe)
    # real/fake output
    out1 = Dense(1, activation='sigmoid')(fe)
    # class label output
    out2 = Dense(n_classes, activation='softmax')(fe)
    # define model
    model = Model(in_image, [out1, out2])
    # compile model
    opt = Adam(lr=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
    return model

# define the standalone generator model
def define_generator(latent_dim, n_classes=10):
    # weight initialization
    init = RandomNormal(stddev=0.02)
    # label input
    in_label = Input(shape=(1,))
    # embedding for categorical input
    li = Embedding(n_classes, 50)(in_label)
    # linear multiplication
    n_nodes = 7 * 7
    li = Dense(n_nodes, kernel_initializer=init)(li)
    # reshape to additional channel
    li = Reshape((7, 7, 1))(li)
    # image generator input
    in_lat = Input(shape=(latent_dim,))
    # foundation for 7x7 image
    n_nodes = 384 * 7 * 7
    gen = Dense(n_nodes, kernel_initializer=init)(in_lat)
    gen = Activation('relu')(gen)
    gen = Reshape((7, 7, 384))(gen)
    # merge image gen and label input
    merge = Concatenate()([gen, li])
    # upsample to 14x14
    gen = Conv2DTranspose(192, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(merge)
    gen = BatchNormalization()(gen)
    gen = Activation('relu')(gen)
    # upsample to 28x28
    gen = Conv2DTranspose(1, (5,5), strides=(2,2), padding='same', kernel_initializer=init)(gen)
    out_layer = Activation('tanh')(gen)
    # define model
    model = Model([in_lat, in_label], out_layer)
    return model

# define the combined generator and discriminator model, for updating the generator
def define_gan(g_model, d_model):
    # make weights in the discriminator not trainable
    for layer in d_model.layers:
        if not isinstance(layer, BatchNormalization):
            layer.trainable = False
    # connect the outputs of the generator to the inputs of the discriminator
    gan_output = d_model(g_model.output)
    # define gan model as taking noise and label and outputting real/fake and label outputs
    model = Model(g_model.input, gan_output)
    # compile model
    opt = Adam(lr=0.0002, beta_1=0.5)
    model.compile(loss=['binary_crossentropy', 'sparse_categorical_crossentropy'], optimizer=opt)
    return model

# load images
def load_real_samples():
    # load dataset
    (trainX, trainy), (_, _) = load_data()
    # expand to 3d, e.g. add channels
    X = expand_dims(trainX, axis=-1)
    # convert from ints to floats
    X = X.astype('float32')
    # scale from [0,255] to [-1,1]
    X = (X - 127.5) / 127.5
    print(X.shape, trainy.shape)
    return [X, trainy]

# select real samples
def generate_real_samples(dataset, n_samples):
    # split into images and labels
    images, labels = dataset
    # choose random instances
    ix = randint(0, images.shape[0], n_samples)
    # select images and labels
    X, labels = images[ix], labels[ix]
    # generate class labels
    y = ones((n_samples, 1))
    return [X, labels], y

# generate points in latent space as input for the generator
def generate_latent_points(latent_dim, n_samples, n_classes=10):
    # generate points in the latent space
    x_input = randn(latent_dim * n_samples)
    # reshape into a batch of inputs for the network
    z_input = x_input.reshape(n_samples, latent_dim)
    # generate labels
    labels = randint(0, n_classes, n_samples)
    return [z_input, labels]

# use the generator to generate n fake examples, with class labels
def generate_fake_samples(generator, latent_dim, n_samples):
    # generate points in latent space
    z_input, labels_input = generate_latent_points(latent_dim, n_samples)
    # predict outputs
    images = generator.predict([z_input, labels_input])
    # create class labels
    y = zeros((n_samples, 1))
    return [images, labels_input], y

# generate samples and save as a plot and save the model
def summarize_performance(step, g_model, latent_dim, n_samples=100):
    # prepare fake examples
    [X, _], _ = generate_fake_samples(g_model, latent_dim, n_samples)
    # scale from [-1,1] to [0,1]
    X = (X + 1) / 2.0
    # plot images
    for i in range(100):
        # define subplot
        pyplot.subplot(10, 10, 1 + i)
        # turn off axis
        pyplot.axis('off')
        # plot raw pixel data
        pyplot.imshow(X[i, :, :, 0], cmap='gray_r')
    # save plot to file
    filename1 = 'generated_plot_%04d.png' % (step+1)
    pyplot.savefig(filename1)
    pyplot.close()
    # save the generator model
    filename2 = 'model_%04d.h5' % (step+1)
    g_model.save(filename2)
    print('>Saved: %s and %s' % (filename1, filename2))

# train the generator and discriminator
def train(g_model, d_model, gan_model, dataset, latent_dim, n_epochs=100, n_batch=64):
    # calculate the number of batches per training epoch
    bat_per_epo = int(dataset[0].shape[0] / n_batch)
    # calculate the number of training iterations
    n_steps = bat_per_epo * n_epochs
    # calculate the size of half a batch of samples
    half_batch = int(n_batch / 2)
    # manually enumerate training steps
    for i in range(n_steps):
        # get randomly selected 'real' samples
        [X_real, labels_real], y_real = generate_real_samples(dataset, half_batch)
        # update discriminator model weights
        _,d_r1,d_r2 = d_model.train_on_batch(X_real, [y_real, labels_real])
        # generate 'fake' examples
        [X_fake, labels_fake], y_fake = generate_fake_samples(g_model, latent_dim, half_batch)
        # update discriminator model weights
        _,d_f,d_f2 = d_model.train_on_batch(X_fake, [y_fake, labels_fake])
        # prepare points in latent space as input for the generator
        [z_input, z_labels] = generate_latent_points(latent_dim, n_batch)
        # create inverted labels for the fake samples
        y_gan = ones((n_batch, 1))
        # update the generator via the discriminator's error
        _,g_1,g_2 = gan_model.train_on_batch([z_input, z_labels], [y_gan, z_labels])
        # summarize loss on this batch
        print('>%d, dr[%.3f,%.3f], df[%.3f,%.3f], g[%.3f,%.3f]' % (i+1, d_r1,d_r2, d_f,d_f2, g_1,g_2))
        # evaluate the model performance every 10 epochs
        if (i+1) % (bat_per_epo * 10) == 0:
            summarize_performance(i, g_model, latent_dim)

# size of the latent space
latent_dim = 100
# create the discriminator
discriminator = define_discriminator()
# create the generator
generator = define_generator(latent_dim)
# create the gan
gan_model = define_gan(generator, discriminator)
# load image data
dataset = load_real_samples()
# train model
train(generator, discriminator, gan_model, dataset, latent_dim)
```

Running the example may take some time, and GPU hardware is recommended, but not required.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

The loss is reported each training iteration, including the real/fake and class loss for the discriminator on real examples (dr), the discriminator on fake examples (df), and the generator updated via the composite model when generating images (g).

```
>1, dr[0.934,2.967], df[1.310,3.006], g[0.878,3.368]
>2, dr[0.711,2.836], df[0.939,3.262], g[0.947,2.751]
>3, dr[0.649,2.980], df[1.001,3.147], g[0.844,3.226]
>4, dr[0.732,3.435], df[0.823,3.715], g[1.048,3.292]
>5, dr[0.860,3.076], df[0.591,2.799], g[1.123,3.313]
...
```

A total of 10 plots of sample images are generated and 10 models are saved over the run.

Plots of generated clothing after 10 epochs already look plausible.

Example of AC-GAN Generated Items of Clothing after 10 Epochs

The images remain plausible throughout the training process.

Example of AC-GAN Generated Items of Clothing After 100 Epochs

How to Generate Items of Clothing With the AC-GAN

In this section, we can load a saved model and use it to generate new items of clothing that plausibly could have come from the Fashion-MNIST dataset.

The AC-GAN technically does not conditionally generate images based on the class label, at least not in the same way as the conditional GAN.

AC-GANs learn a representation for z that is independent of class label.

— Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.

Nevertheless, if used in this way, the generated images mostly match the class label.

The example below loads the model from the end of the run (any saved model would do), and generates 100 examples of class 7 (sneaker).

```python
# example of loading the generator model and generating images
from math import sqrt
from numpy import asarray
from numpy.random import randn
from keras.models import load_model
from matplotlib import pyplot

# generate points in latent space as input for the generator
def generate_latent_points(latent_dim, n_samples, n_class):
    # generate points in the latent space
    x_input = randn(latent_dim * n_samples)
    # reshape into a batch of inputs for the network
    z_input = x_input.reshape(n_samples, latent_dim)
    # generate labels
    labels = asarray([n_class for _ in range(n_samples)])
    return [z_input, labels]

# create and save a plot of generated images
def save_plot(examples, n_examples):
    # subplot grid sizes must be integers
    n_side = int(sqrt(n_examples))
    # plot images
    for i in range(n_examples):
        # define subplot
        pyplot.subplot(n_side, n_side, 1 + i)
        # turn off axis
        pyplot.axis('off')
        # plot raw pixel data
        pyplot.imshow(examples[i, :, :, 0], cmap='gray_r')
    pyplot.show()

# load model
model = load_model('model_93700.h5')
latent_dim = 100
n_examples = 100 # must be a square
n_class = 7 # sneaker
# generate images
latent_points, labels = generate_latent_points(latent_dim, n_examples, n_class)
# generate images
X = model.predict([latent_points, labels])
# scale from [-1,1] to [0,1]
X = (X + 1) / 2.0
# plot the result
save_plot(X, n_examples)
```

Running the example, in this case, generates 100 very plausible photos of sneakers.

Example of 100 Photos of Sneakers Generated by an AC-GAN

It may be fun to experiment with other class values.

For example, below are 100 generated coats (n_class = 4). Most of the images are coats, although there are a few pants in there, showing that the latent space is partially, but not completely, class-conditional.

Example of 100 Photos of Coats Generated by an AC-GAN
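To inspect every class at once rather than one at a time, one option is to build a label vector that covers all ten classes. The sketch below only prepares the generator inputs with NumPy. It is an illustration, not part of the original example, and the variable names are hypothetical. The class names follow the standard Fashion-MNIST label order, and the commented-out predict call assumes a generator loaded as in the example above.

```python
import numpy as np

# Fashion-MNIST class names in label order (0 to 9)
class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',
               'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

latent_dim = 100
n_per_class = 10
n_classes = len(class_names)

# ten copies of each label: 0,0,...,0,1,1,...,9 so each plot row shows one class
labels = np.repeat(np.arange(n_classes), n_per_class)
# one latent point per requested image
z_input = np.random.randn(n_classes * n_per_class, latent_dim)

print(z_input.shape, labels.shape)
print(class_names[7], class_names[4])

# assumption: 'model' is a generator loaded with load_model(), as above
# X = model.predict([z_input, labels])
```

Plotting the result on a 10x10 grid would then show one row of generated images per clothing class.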

Extensions

This section lists some ideas for extending the tutorial that you may wish to explore.

- Generate Images. Generate images for each clothing class and compare results across different saved models (e.g. epoch 10, 20, etc.).

- Alternate Configuration. Update the configuration of the generator, discriminator, or both models to have more or less capacity and compare results.

- CIFAR-10 Dataset. Update the example to train on the CIFAR-10 dataset and use the model configuration described in the appendix of the paper.

If you explore any of these extensions, I’d love to know.

Post your findings in the comments below.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Papers

- Conditional Image Synthesis With Auxiliary Classifier GANs, 2016.

- Conditional Image Synthesis With Auxiliary Classifier GANs, Reviewer Comments.

- Conditional Image Synthesis with Auxiliary Classifier GANs, NIPS 2016, YouTube.

API

- Keras Datasets API.

- Keras Sequential Model API

- Keras Convolutional Layers API

- How can I “freeze” Keras layers?

- MatplotLib API

- NumPy Random sampling (numpy.random) API

- NumPy Array manipulation routines

Articles

- How to Train a GAN? Tips and tricks to make GANs work

- Fashion-MNIST Project, GitHub.

- AC-GAN, Keras GAN Project.

- AC-GAN, Keras Example.

Summary

In this tutorial, you discovered how to develop an auxiliary classifier generative adversarial network for generating photographs of clothing.

Specifically, you learned:

- The auxiliary classifier GAN is a type of conditional GAN that requires that the discriminator predict the class label of a given image.

- How to develop generator, discriminator, and composite models for the AC-GAN.

- How to train, evaluate, and use an AC-GAN to generate photographs of clothing from the Fashion-MNIST dataset.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Thank you for your excellent blogs.

One question I want to ask: TensorFlow or Keras, which one do you recommend for implementing RNNs, deep LSTMs, encoder-decoders, and so on?

I think both are good, but I don’t know how to choose.

Thanks again

Keras.

Thanks for the great article! 🙂

I’m trying to get a better grasp of the latent space when “AC-GANs learn a representation for z that is independent of class label”. Does this mean that if I had a latent space in 2D, for simplicity, that the latent space does NOT have regions which are a specific class like I would in a variational autoencoder?

It does not force or guarantee the mapping or association, but it will capture something that makes sense to the model for image synthesis.

For real control, use a conditional GAN or an InfoGAN.

Thanks for your blogging Jason, you really articulate the concepts very well and your posts have helped me understand a variety of topics in machine learning.

FYI tensorflow 2.0 includes keras so I would use keras within tf2

Thanks for your support.

TF2 is not released, it is in beta. I recommend standalone keras at this stage.

As always, I’m very impressed with your work. I tried out your programming for the 2D graph, similar work done on LS-GAN/CGANS, and finally the AC-GAN. I confess to having to use the tensorflow version of Keras (Keras by itself doesn’t work for me for some reason). I tried out the following statement in the define_gan routine and found it did a much better job of generating correct clothing icons when tested at the end:

model.compile(loss=[‘mse’, ‘sparse_categorical_crossentropy’], optimizer=opt)

I like mse for exactly the reasons given with LS-GAN… In my mind, mse gives a measure of distance from the “correct” generation rather than just a measure of “correctness”.

However, I’m not sure why it would work in this problem when labels are also used. Does mse only apply to the icons and not to the labels (0 thru 9)? Hopefully I’m making sense.

I’m sure you explain it somewhere but it escapes me.

Thanks for your great work.

Thanks.

Nice one! Yes, I’m a big fan of MSE loss, it’s also used heavily on fancy GANs like pix2pix and cyclegan.

Thanks for your excellent blogging Jason,

The power of deep learning shows clearly on image data, but it could also serve time series and raw data, couldn't it?

Unfortunately, I cannot find enough resources and examples for applying deep learning to non-image datasets.

My question:

Is it worth applying this method to non-image data, for example a raw dataset?

If yes, could you kindly point me to a useful resource and guidance?

Thank you in advance,

Yes, see here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

And it does great on text data:

https://machinelearningmastery.com/start-here/#nlp

Thank you for the neatly explained article Jason,

I’m actually trying to recreate it for CIFAR-10 using the hyperparameters given in the research paper. I see that after a few thousand steps, the discriminator loss for the classes of fake images goes down and fluctuates around zero, while the real/fake loss stays around 0.7. I’m not sure if this means that the generator is learning something, as based on what I read, discriminator loss reaching zero means it has failed. Also, the generator loss is fluctuating around zero. Does this mean I have to restart and change the hyperparameters?

Thanks again in advance

It likely suggests a gan failure mode:

https://machinelearningmastery.com/practical-guide-to-gan-failure-modes/

Yes, restart.

How to predict the class label of a given image?

GANs are not used for image classification, instead you can use different models described here:

https://machinelearningmastery.com/start-here/#dlfcv

What does ‘C’ indicates in the diagram of AC-GAN?

and what is output of discriminator? It is mentioned like Probability that the provided image is real, probability of the image belonging to each known class. – What this means?

How can I check this?

C is the class.

The output of the discriminator is whether the image is real or fake. If this is a new idea, perhaps start with the simpler tutorials here:

https://machinelearningmastery.com/start-here/#gans

Hi sir, but you said that AC gan can predict the class of a given image, what does this mean?

It means that the model is trained on data where images are labeled.

Thanks for you wonderful blogs and your GAN book Jason,

kindly help me in understanding this much better.

My doubt is how the loss of the AC-GAN, i.e. “LC-LS”, can be interpreted from the loss functions in the define_gan() function.

if the below code determines LC +LS in discriminator :

model.compile(loss=[‘binary_crossentropy’, ‘sparse_categorical_crossentropy’], optimizer=opt)

(real images y=1, fake images y=0)

How the below code determines LC- LS in generator training :???

model.compile(loss=[‘binary_crossentropy’, ‘sparse_categorical_crossentropy’], optimizer=opt)

(fake images y=1)

Recall that the generator is updated via the discriminator.

I have understood the generator training part clearly,

My doubt is that, whatever losses we mention, the model tries to minimize both.

We are writing the 2 losses, i.e. 1. binary cross-entropy loss (for fake or real) and 2. categorical cross-entropy loss (for labels), in a similar way at 2 different places in the code (line no. 60 and 106 in the AC-GAN code).

At one place (line no. 60) binary cross-entropy loss is supposed to be minimized (by the discriminator),

and at the other place (line no. 106) binary cross-entropy loss is supposed to be maximized (by the generator).

In TensorFlow we specify a loss that is to be minimized directly, and a loss that is to be maximized as a negative quantity, i.e. (-loss).

can you please clear this doubt?

Yes, we fit the generator via the discriminator here. The error passes back through the discriminator to the generator.

Perhaps start with this tutorial to better understand the chosen architecture for model updates:

https://machinelearningmastery.com/how-to-develop-a-generative-adversarial-network-for-a-1-dimensional-function-from-scratch-in-keras/
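To make the point about minimizing versus maximizing concrete, here is a minimal sketch in plain Python (not taken from the tutorial code; the function names are hypothetical). It assumes the standard per-sample binary cross-entropy formula. Keras always minimizes the compiled loss, so the opposite direction for the generator comes from flipping the target label on fake images from 0 to 1, not from negating the loss. The class label loss is minimized in both updates.

```python
import math

def binary_crossentropy(y_true, p_real):
    """Binary cross-entropy for one sample.

    p_real is the discriminator's predicted probability that the image is real.
    """
    eps = 1e-7  # clip to avoid log(0)
    p = min(max(p_real, eps), 1.0 - eps)
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1.0 - p))

def discriminator_fake_loss(p_real):
    # Discriminator update on a fake image: target label 0 ("fake").
    return binary_crossentropy(0, p_real)

def generator_loss(p_real):
    # Generator update via the composite model: same fake image,
    # but the target label is 1 ("real").
    return binary_crossentropy(1, p_real)

# Hypothetical discriminator outputs for a fake image
for p in (0.1, 0.5, 0.9):
    print(f"D(G(z))={p:.1f}  d_fake_loss={discriminator_fake_loss(p):.3f}  "
          f"g_loss={generator_loss(p):.3f}")
```

As the discriminator's output on a fake image rises, the discriminator's loss grows while the generator's loss shrinks, so minimizing the same loss function with flipped labels pulls the real/fake output in opposite directions.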

Hi jason,

If I also try to print accuracy for the final GAN model, there are 5 values in total in the output when accuracy is added as a metric in model.compile():

loss1+loss2, loss1, loss2, accuracy1, accuracy2

You have explained the output when compiled using only loss, saying:

loss1 – for real/fake

loss2 – for labels

So can I infer that:

accuracy1 – for real/fake (of course, it has no meaning)

accuracy2 – for labels?

Accuracy of the classifier is most relevant, the discriminator less so.

As you said about the order of losses from model.train_on_batch():

“Note, the discriminator and composite model return three loss values from the call to the train_on_batch() function. The first value is the sum of the loss values and can be ignored, whereas the second value is the loss for the real/fake output layer and the third value is the loss for the clothing label classification.”

Can we apply the same order to the accuracy metric as well (if we add it as a metric in the discriminator)?

I got 2 new values from model.train_on_batch() when I added the additional metric ‘accuracy’.

Thank you so much for your replies Jason!

Yes, I believe so.

How to get the accuracy of the classifier? I want to know how well the model has predicted the class labels.

We don’t measure the accuracy of GANs, instead we score their generated images:

https://machinelearningmastery.com/how-to-evaluate-generative-adversarial-networks/
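If you do want a number for the class label output specifically, one option is to compare the highest-probability class against the known labels. This is a rough sketch in plain Python, assuming you already have the class probabilities from the discriminator's label output for a batch of labeled images; the function name and the example values are hypothetical.

```python
def class_accuracy(class_probs, true_labels):
    """Fraction of samples whose highest-probability class matches the true label."""
    correct = 0
    for probs, label in zip(class_probs, true_labels):
        # index of the largest probability (argmax)
        predicted = max(range(len(probs)), key=probs.__getitem__)
        if predicted == label:
            correct += 1
    return correct / len(true_labels)

# Hypothetical softmax outputs for three images over three classes
probs = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.4, 0.5, 0.1]]
labels = [0, 2, 0]
print(class_accuracy(probs, labels))
```

This only scores the label output; it says nothing about the quality of the generated images.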

Hi Jason,

Have you implemented the Keras AC-GAN code with the Wasserstein metric? It would be of great help.

This (above) is the only implementation of the AC-GAN I have.

Thank you for all of your amazing work. I am new to deep learning, which is probably why, but when I run this code the number of steps keeps going on and on, and there is no sign indicating the epoch number. If these steps are iterations then there should be 9370 as you mentioned, but in my case they seem to go on indefinitely. Can you please explain this, sir? That would be great.

>18290, dr[0.317,0.741], df[0.474,0.061], g[1.949,0.154]

>18291, dr[0.236,1.152], df[0.341,0.093], g[2.630,0.043]

>18292, dr[0.477,0.674], df[0.441,0.143], g[2.447,0.097]

>18293, dr[0.622,0.789], df[0.402,0.134], g[2.926,0.076]

The first number will be the epoch number.

Thank you for the response, but in my case the first number represents the total number of steps, which is 93750, because the number of epochs in your model is 100, with 60000 images and a batch size of 64, which makes 93750 steps. Anyway, after training I figured it out. Thank you for all of your amazing work. This site and your work are a tremendous help in my journey into deep learning, especially GANs. There are not many sources that provide both intuition and implementation, so please keep this work going. You are a great help to students and researchers.

Happy to hear that you figured out your issue!
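For anyone else reading the log lines, here is a small sketch of the arithmetic in plain Python. It assumes the training loop uses only whole batches per epoch (which matches the 937 steps per epoch quoted elsewhere in these comments) and that the step counter printed at the start of each line begins at 1; the function names are hypothetical.

```python
def training_steps(n_samples, n_batch, n_epochs):
    # Whole batches per epoch; a trailing partial batch is dropped by floor division.
    bat_per_epo = n_samples // n_batch
    return bat_per_epo, bat_per_epo * n_epochs

def epoch_of_step(step, bat_per_epo):
    # Convert a 1-based step counter into a 1-based epoch number.
    return (step - 1) // bat_per_epo + 1

bat_per_epo, n_steps = training_steps(60000, 64, 100)
print(bat_per_epo, n_steps)                # 937 93700
print(60000 * 100 / 64)                    # 93750.0 if the partial batch is counted
print(epoch_of_step(18290, bat_per_epo))   # 20
```

Under these assumptions, a log line starting with >18290 falls in epoch 20 of 100. The difference between 93700 and 93750 comes only from whether the half batch left over at the end of each epoch is counted.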

Hi! Thank you for this amazing post. I want to apply this implementation to an image dataset with only two classes, male and female, and classify the generated images as male or female. What changes do I have to make to this code to accomplish this?

Kind Regards

Not a lot. Use your dataset instead of my dataset, perhaps tune the models a little. Change to binary cross entropy – for your 2 classes.

Thank you for the response. You are a great help to many people. I will try to make some changes to this model to solve my issue. I guess I have to buy your book collection.

You’re welcome.

Can GANs predict the label of an image rather than generating images?

Some can, but they are designed to generate images. This is their purpose.

Thanks, Jason. Can you suggest some GANs that can be used in predictive analytics?

GANs are generally not used for prediction, they are generative models.

Dear Adrian,

Thank you for sharing your experience with us.

Could you kindly tell me how to use spherical linear interpolation (slerp) to generate faces using an AC-GAN?

How will labels be treated?

Thanks in advance

Adrian?

Thanks for the suggestion, perhaps I will cover the topic in the future.

Dear Jason,

I am really sorry for this conflict

No problem.

When you set d_model.trainable = False in the define_gan method, I don’t see you setting it back to True anywhere. Yet it seems that when d_model is trained in isolation the weights are updated, but not when the gan_model is trained. And since Python argument passing is by reference, I assume d_model.trainable should be False everywhere else as well. Am I missing something?

No need, as it only impacts the composite model.

To learn more about how freezing layers works, see this:

“How can I freeze layers and do fine-tuning?”

https://keras.io/getting_started/faq/#how-can-i-freeze-layers-and-do-fine-tuning

As a student, I should thank you for all your tutorials, specifically those on GANs. I have a problem that I have searched a lot about but couldn’t find any helpful answer. When I use BatchNormalization layers in the models, I see no improvement in the training phase, and in each epoch the images produced by the generator all look like the ones from the first epoch. Could you help me find the solution?

You’re welcome.

Perhaps you can try an alternate model configuration or learning configuration and find an approach that best suits your dataset.

Hi Jason,

Your tutorials are just wonderful. Thanks for them. But I have a problem with the AC-GAN. Every time I launch your code (and believe me, I have tried many times) I get a convergence failure after about 1k iterations. What may be the reason for that and, more importantly, how do I fix this problem?

Thanks in advance for your response.

Why train so long?

Train for far fewer epochs and keep an eye on the generated images.

Also, these tips may help avoid mode failure / convergence:

https://machinelearningmastery.com/how-to-code-generative-adversarial-network-hacks/

Sorry, I wasn’t precise enough. I ran this example for 100 epochs just like you, by iterations I meant number of steps (for each epoch there are 937 steps, so Convergence Failure appears after about 1 epoch). I believe that is not too much.

Thanks.

Perhaps confirm that your libraries are up to date?

Perhaps try running the example many times?

Perhaps try adjusting the learning hyperparameters, e.g. learning rate?

Perhaps try adjusting the model complexity, e.g. more or fewer layers?

Perhaps try GAN hacks?

I don’t understand why, but after many tries I found the reason for the convergence failure. After removing all the BatchNormalization layers, the model seems to work just fine. Thank you for your help. Best wishes.

Well done, I’m happy to hear that.

Also, no one has a good idea of the “why” questions for GANs 🙂

Thanks for suggesting the fix to remove BatchNorm layers Radoslaw! I was facing an issue where the output was quite bad even after 100 epochs but your suggestion fixed that.

Thank you!

Hi Jason, thanks for the great article. I’m working on an AC-GAN with a dataset of around 9000 images of 4 classes with a 256×192 resolution. I have implemented all the tips you mention here and in your other article “Tips for Training Stable Generative Adversarial Networks”. I’ve added more convolutional layers to my networks, though, because the images have a higher resolution and the loss quickly went to zero when using your exact code. My issue is that the discriminator loss for fake images (df) is often very close to 0. It doesn’t stay there and goes up and down quite a bit, but always comes back to around 0.00something. I’ve found that using a smaller momentum value like 0.8 in the BatchNorm layers helps with this a little, but the problem still occurs. These are some typical values that I get:

>526, dr[0.863,4.146], df[0.016,0.797], g[1.441,1.510]

>527, dr[0.485,5.122], df[0.001,0.432], g[1.107,1.458]

>528, dr[0.714,6.939], df[0.000,0.134], g[2.428,2.347]

>529, dr[1.288,6.916], df[0.440,1.296], g[1.442,1.757]

>530, dr[1.151,6.066], df[0.568,2.175], g[0.973,1.084]

>531, dr[0.714,3.896], df[0.001,1.817], g[2.965,0.757]

Any idea what parameter I should tweak to make this more stable? Or could the training set be too small?

Thanks so much, your articles have been extremely helpful!

And is there any guideline about roughly how many parameters your generator and discriminator networks should have based on the image size? Should the generator always have more parameters like in your example here?

Not really, lots of trial and error in configuring GANs.

Well done!

Perhaps model stability does not matter? (e.g. focus on the generated images)

Perhaps a larger model?

Perhaps a smaller learning rate?

Hi Jason, for generate_real_samples, you take a random sample of images from the data set each time. So if I understand correctly, this means that – since you take random samples – not all images from the training set may be used within one epoch, whereas some images might be used several times? I thought that in an epoch, all images in the training set needed to be used exactly once. Is it better to take random ones rather than making sure all images are used exactly once per epoch / does it not matter?

Correct. I chose this approach for simplicity and consistency with all my other GAN tutorials.

Yes, you can change it to shuffle the dataset prior to each epoch and enumerate all images.

I don’t think it will matter much, but happy to be proven wrong.
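As a rough sketch of that alternative, the helper below shuffles the sample indices once per epoch and yields them in batches, so every image is visited exactly once per epoch. It is plain Python and not part of the tutorial code; the function name is hypothetical, and each yielded index list would be used to select the images and labels for one update in place of the random sampling.

```python
import random

def epoch_batches(n_samples, n_batch, rng=random):
    """Yield lists of indices that visit every sample exactly once per epoch."""
    indices = list(range(n_samples))
    rng.shuffle(indices)  # a new order each time the function is called
    for start in range(0, n_samples, n_batch):
        # the final batch may be smaller if n_batch does not divide n_samples
        yield indices[start:start + n_batch]

# Example: 10 samples in batches of 4 gives batch sizes 4, 4 and 2
for ix in epoch_batches(10, 4):
    print(ix)
```

Calling the helper again at the start of each epoch gives a fresh ordering. If a smaller final batch is a problem for your training loop, it can be skipped.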

Hello,

I have tried CGAN and ACGAN on the same (imbalanced) dataset, and the generated images were much more realistic and diverse with CGAN. With ACGAN, when generating images for a specific class, it gave me a lot of realistic images from another class. Any clue as to what’s happening and how I can solve it?

Nice work!

Perhaps you need to tune the model for your dataset.

Hello,

Thank you for providing a great tutorial about ACGAN.

I have tried ACGAN on a human activity dataset (i.e., accelerometer data) and generated synthetic data from it. However, after several attempts, my ACGAN still has ‘poor performance’. When I look more closely at the training process, the discriminator class loss on fake data approaches zero while the real/fake loss is stable.

2020-11-23 21:54:07.524759

————— AC-GAN TRAINING —————

>10, >10, dr[0.684,1.590], df[0.793,1.600], g[0.415,1.576]

>20, >10, dr[0.633,1.581], df[0.725,1.595], g[0.619,1.630]

>30, >10, dr[0.701,1.475], df[0.473,0.388], g[0.959,0.417]

>40, >10, dr[0.725,0.920], df[0.591,0.112], g[0.376,0.111]

>50, >10, dr[0.686,0.915], df[0.642,0.023], g[0.444,0.050]

>60, >10, dr[0.846,0.470], df[0.711,0.025], g[0.566,0.085]

>70, >10, dr[0.805,0.761], df[0.744,0.022], g[0.593,0.031]

>80, >10, dr[0.670,0.623], df[0.695,0.018], g[0.678,0.021]

>90, >10, dr[0.683,0.552], df[0.687,0.005], g[0.698,0.005]

>100, >10, dr[0.646,0.595], df[0.779,0.050], g[0.646,0.018]

>110, >10, dr[0.648,0.530], df[0.674,0.000], g[0.804,0.002]

Do you have any clues on what’s happening and how do I solve it?

Thanks.

Perhaps try varying the model configurations to see if it has an impact on the quality of generated data.

Hi Jason, thank you for this tutorial on the ACGAN. My question is: can we use this network for audio generation, speech commands to be exact? I tried to do this with mel-spectrograms of a command set but could not obtain any acceptable result. I am wondering whether it’s possible to obtain good results with this kind of network or whether it’s a dead end.

What is your opinion on this?

Thanks.

I don’t think so, it is designed for image data.

Perhaps check the literature for GANs that are appropriate for audio data.

Hello Jason,

Thanks for this post, it was really interesting.

I followed along and tried to run the code for the Fashion-MNIST dataset, but my results are much worse than the ones you managed to produce.

The images generated after training a model for 100 epochs look like this – https://ibb.co/Tv90c25

Could you please help me out with how I can improve this to match yours? Any help is greatly appreciated.

Thanks.

You might need to run the example a few times to get good results.

Hi Jason,

Thanks for the quick response. I did run the model a few times and the result was the same.

But, I went through each of the comments on this post to see if anyone was facing the same issue and tried the fix that Radoslaw has mentioned above (link to the comment here for future ref – https://machinelearningmastery.com/how-to-develop-an-auxiliary-classifier-gan-ac-gan-from-scratch-with-keras/#comment-559908)

And his solution of removing the BatchNormalization layers from both Generator and Discriminator worked and fixed the problem. Now my results look pretty good after just 10 epochs as noted by you.

Thanks again and if you want you may add a small edit to the post or disclaimer to remove BatchNorm layers.

One thing I’m not sure of is why removing BatchNorm layers helped and improved the output in this case? Do you have any ideas or thoughts on this?

Thanks, I’ll investigate.

If the result looks better or evaluates better by modifying the architecture (depending on your goals), then the modification is good.

Does the AC-GAN also suffer from the mode collapse that we see in GANs?