Time series forecasting is typically discussed in the setting where only a one-step prediction is required.

What about when you need to predict multiple time steps into the future?

Predicting multiple time steps into the future is called multi-step time series forecasting. There are four main strategies that you can use for multi-step forecasting.

In this post, you will discover the four main strategies for multi-step time series forecasting.

After reading this post, you will know:

- The difference between one-step and multiple-step time series forecasts.

- The traditional direct and recursive strategies for multi-step forecasting.

- The newer direct-recursive hybrid and multiple output strategies for multi-step forecasting.


Let’s get started.

- Update May/2018: Fixed typo in direct strategy example.


Multi-Step Forecasting

Generally, time series forecasting describes predicting the observation at the next time step.

This is called a one-step forecast, as only one time step is to be predicted.

There are some time series problems where multiple time steps must be predicted. In contrast to the one-step forecast, these are called multiple-step or multi-step time series forecasting problems.

For example, given the observed temperature over the last 7 days:

Time, Temperature
1, 56
2, 50
3, 59
4, 63
5, 52
6, 60
7, 55

A single-step forecast would require a forecast at time step 8 only.

A multi-step forecast may require a forecast for the next two days, as follows:

Time, Temperature
8, ?
9, ?

There are at least four commonly used strategies for making multi-step forecasts.

They are:

- Direct Multi-step Forecast Strategy.

- Recursive Multi-step Forecast Strategy.

- Direct-Recursive Hybrid Multi-step Forecast Strategies.

- Multiple Output Forecast Strategy.

Let’s take a closer look at each method in turn.


1. Direct Multi-step Forecast Strategy

The direct method involves developing a separate model for each forecast time step.

In the case of predicting the temperature for the next two days, we would develop a model for predicting the temperature on day 1 and a separate model for predicting the temperature on day 2.

For example:

prediction(t+1) = model1(obs(t-1), obs(t-2), ..., obs(t-n))
prediction(t+2) = model2(obs(t-2), obs(t-3), ..., obs(t-n))

Having one model for each time step is an added computational and maintenance burden, especially as the number of time steps to be forecasted increases beyond the trivial.

Because the models are trained independently, there is no opportunity to model the dependencies between the predictions, such as the prediction on day 2 depending on the prediction on day 1, as is often the case in time series.
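To make the direct strategy concrete, here is a minimal sketch in plain Python using the temperature data from earlier. The `nearest_neighbour` model, the `make_rows` helper, and the choice of two lag observations are assumptions made for illustration only; any regression model could take their place.

```python
def nearest_neighbour(X, y):
    """Hypothetical stand-in model: copy the target of the closest training row."""
    def predict(x):
        def dist(row):
            return sum((a - b) ** 2 for a, b in zip(row, x))
        best = min(range(len(X)), key=lambda i: dist(X[i]))
        return y[best]
    return predict


def make_rows(series, n_lags, lead):
    """Pair each window of n_lags values with the value `lead` steps after it."""
    X, y = [], []
    for end in range(n_lags, len(series) - lead + 1):
        X.append(series[end - n_lags:end])
        y.append(series[end + lead - 1])
    return X, y


temps = [56, 50, 59, 63, 52, 60, 55]
n_lags, horizon = 2, 2

# Direct strategy: one independently trained model per lead time.
models = []
for lead in range(1, horizon + 1):
    X, y = make_rows(temps, n_lags, lead)
    models.append(nearest_neighbour(X, y))

# Every model receives the same most recent observations.
last_window = temps[-n_lags:]
forecast = [model(last_window) for model in models]
print(forecast)  # [60, 55]
```

Note that the second model never sees the output of the first, which is exactly why dependencies between the two predictions cannot be captured.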

2. Recursive Multi-step Forecast

The recursive strategy involves using a one-step model multiple times where the prediction for the prior time step is used as an input for making a prediction on the following time step.

In the case of predicting the temperature for the next two days, we would develop a one-step forecasting model. This model would then be used to predict day 1, then this prediction would be used as an observation input in order to predict day 2.

For example:

prediction(t+1) = model(obs(t-1), obs(t-2), ..., obs(t-n))
prediction(t+2) = model(prediction(t+1), obs(t-1), ..., obs(t-n))

Because predictions are used in place of observations, the recursive strategy allows prediction errors to accumulate such that performance can quickly degrade as the prediction time horizon increases.
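The recursion can be sketched in a few lines of plain Python. The `drift_model` below is a hypothetical one-step model (last value plus the average step in the window) chosen only so the example runs without any libraries; `forecast_recursive` and the window size of three are likewise assumptions.

```python
def drift_model(window):
    """Hypothetical one-step model: last value plus the average step in the window."""
    steps = [b - a for a, b in zip(window, window[1:])]
    return window[-1] + sum(steps) / len(steps)


def forecast_recursive(predict_one, history, n_lags, horizon):
    """Apply a one-step model repeatedly, feeding each prediction back as an input."""
    window = list(history[-n_lags:])
    forecasts = []
    for _ in range(horizon):
        yhat = predict_one(window)
        forecasts.append(yhat)
        # Slide the window: drop the oldest lag, append the new prediction.
        window = window[1:] + [yhat]
    return forecasts


series = [1, 2, 3, 4, 5, 6, 7, 8, 9]
forecast = forecast_recursive(drift_model, series, n_lags=3, horizon=3)
print(forecast)  # [10.0, 11.0, 12.0]
```

By the third step the window contains more predictions than real observations, which is where the accumulating error comes from.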

3. Direct-Recursive Hybrid Strategies

The direct and recursive strategies can be combined to offer the benefits of both methods.

For example, a separate model can be constructed for each time step to be predicted, but each model may use the predictions made by models at prior time steps as input values.

We can see how this might work for predicting the temperature for the next two days, where two models are used, but the output from the first model is used as an input for the second model.

For example:

prediction(t+1) = model1(obs(t-1), obs(t-2), ..., obs(t-n))
prediction(t+2) = model2(prediction(t+1), obs(t-1), ..., obs(t-n))

Combining the recursive and direct strategies can help to overcome the limitations of each.
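One way this might look in code is sketched below, again in plain Python on the temperature data. The `nearest_neighbour` model and the two-lag window are assumptions for illustration. The second model is trained on the lag observations plus the first model's in-sample predictions; with this toy model those in-sample predictions simply reproduce the training targets, whereas a model that generalizes would pass along noisier values.

```python
def nearest_neighbour(X, y):
    """Hypothetical stand-in model: copy the target of the closest training row."""
    def predict(x):
        def dist(row):
            return sum((a - b) ** 2 for a, b in zip(row, x))
        best = min(range(len(X)), key=lambda i: dist(X[i]))
        return y[best]
    return predict


temps = [56, 50, 59, 63, 52, 60, 55]
n_lags = 2

# Keep only the rows where both the t+1 and t+2 targets are known.
X, y1, y2 = [], [], []
for end in range(n_lags, len(temps) - 1):
    X.append(temps[end - n_lags:end])
    y1.append(temps[end])
    y2.append(temps[end + 1])

# Model 1 is an ordinary one-step model.
model1 = nearest_neighbour(X, y1)

# Model 2 is a separate model whose inputs include model 1's prediction.
pred1 = [model1(x) for x in X]
X2 = [x + [p] for x, p in zip(X, pred1)]
model2 = nearest_neighbour(X2, y2)

last_window = temps[-n_lags:]
step1 = model1(last_window)
step2 = model2(last_window + [step1])
forecast = [step1, step2]
print(forecast)  # [60, 55]
```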

4. Multiple Output Strategy

The multiple output strategy involves developing one model that is capable of predicting the entire forecast sequence in a one-shot manner.

In the case of predicting the temperature for the next two days, we would develop one model and use it to predict the next two days as one operation.

For example:

prediction(t+1), prediction(t+2) = model(obs(t-1), obs(t-2), ..., obs(t-n))

Multiple output models are more complex as they can learn the dependence structure between inputs and outputs as well as between outputs.

Being more complex may mean that they are slower to train and require more data to avoid overfitting the problem.
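As a rough sketch, the only change from the earlier strategies is that each training target is a vector covering the whole horizon. The `nearest_neighbour` model below is a hypothetical stand-in that happens to support vector targets; in practice a neural network with one output neuron per forecast step is a more typical choice.

```python
def nearest_neighbour(X, Y):
    """Hypothetical stand-in model: copy the target vector of the closest training row."""
    def predict(x):
        def dist(row):
            return sum((a - b) ** 2 for a, b in zip(row, x))
        best = min(range(len(X)), key=lambda i: dist(X[i]))
        return Y[best]
    return predict


temps = [56, 50, 59, 63, 52, 60, 55]
n_lags, horizon = 2, 2

# Each row maps a window of lags to the full sequence of future values.
X, Y = [], []
for end in range(n_lags, len(temps) - horizon + 1):
    X.append(temps[end - n_lags:end])
    Y.append(temps[end:end + horizon])

# One model, one call, the entire forecast sequence.
model = nearest_neighbour(X, Y)
forecast = model(temps[-n_lags:])
print(forecast)  # [60, 55]
```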

Further Reading

See the resources below for further reading on multi-step forecasts.

- Machine Learning Strategies for Time Series Forecasting, 2013

- Recursive and direct multi-step forecasting: the best of both worlds, 2012 [PDF]

Summary

In this post, you discovered strategies that you can use to make multiple-step time series forecasts.

Specifically, you learned:

- How to train multiple parallel models in the direct strategy or reuse a one-step model in the recursive strategy.

- How to combine the best parts of the direct and recursive strategies in the hybrid strategy.

- How to predict the entire forecast sequence in a one-shot manner using the multiple output strategy.

Do you have any questions about multi-step time series forecasts, or about this post? Ask your questions in the comments below and I will do my best to answer.

Thanks Jason for a wonderful post. Why does your model skip the value at “t”?

Just a choice of terminology, think of t+1 as t.

I could have made it clearer, thanks for the note.

Hi Jason,

Using t+1 instead of t is super confusing. 🙁 I don’t know about other users but it made it very difficult for me to grasp the idea with this terminology. If possible, please reconsider changing it to the traditional way in the future. Thanks a lot!

Thanks Pavlo.

Hi Jason,

Which strategy would you recommend for recursive models like ARIMA? I originally thought recursive but now I’m wondering if the hybrid would make more sense. I have the same question for moving averages and exponential smoothing models. I was using the strictly recursive approach and repeating the entire training process for several models on several folds. This was really computationally expensive, though, and I don’t know if it was really necessary. Not sure if this matters, but models from a few different families (arima, ets, ..) were pre-tuned/configured on the smallest possible subset of the training set (I used hyperopt) and then walk forward validation was applied for each of the candidate models. As mentioned, multi-step forecasts were estimated using a strictly recursive approach with the RMSE being calculated over all time steps in the horizon (t+1,t+2,..) for each iteration of walk forward validation. To reiterate, models were pre-tuned so the same exact models were applied to predict each value in the horizon for a given iteration, but it was recursive since each new time step in the horizon in a given iteration was predicted using a training set with previous predicted time steps appended.

Oh, I forgot to provide some important details for context:

1. I’m working with small samples

2. The frequency is monthly

3. The data is volatile

4. The context is inventory optimization (specifically, we’re predicting quantity of products issued by warehouses)

5. Forecasting is done at the SKU level and separate forecasts need to be made for each product and for each warehouse at my company

6. Some SKUs are sparse and most are extremely volatile

7. (slightly) Negative quantities do occur (indicating returns or adjustments) but are rare

8. Solution is being developed in R and Python in Azure ML

Hi Katie, Wondering if you were able to build and test any models. Please share your findings if you are able to.

I would recommend testing a suite of methods on your dataset and use the approach that results in the lowest error.

good question.thanks

Hi Jason, it is always helpful to read your post. I have some confusion related to Time Series Forecasting.

There is traffic data (1440 pieces in total, and 288 pieces each day) I collected to predict traffic flow. The data is collected every 5 min in five consecutive working days. I am going to use the traffic data of the first four day to train the prediction model, while the traffic data of the fifth day is used to test the model.

Here is my question, if I want to predict the traffic flow of the fifth day, do I only need to treat my prediction as one-step forecast or do I have to predict 288-step?

Look forward to your advice.

Thanks for your post again.

Hi Dylan,

If you want to predict an entire day in advance (288 observations), this sounds like a multi-step forecast.

You could use a recursive one-step strategy or something like a neural net to predict the entire sequence in a one-shot manner.

Predicting so many steps in advance is hard work (a hard problem) and results may be poor. You will do better if you can use data as it comes in to continually refine your forecast.

Does that help?

Yes, your response is very helpful. Thank you very much. Now I realize my prediction is a multi-step forecast.

Could you recommend me some more detailed materials related to the multi-step forecast, like the recursive one-step strategy or the neural net?

Now I am reading your post, it is great.

Thank you for your advice.

Best regards

I am working on this type of material at the moment, it should be on the blog in coming weeks/months.

You can use an ARIMA recursively by taking forecasts as inputs to make the next prediction.

You can use a neural network to make a multi-step forecast by setting the output sequence length as the number of neurons in the output layer.

I hope that helps as a start.

Thank you for your advice. I will keep digging into this puzzle. Hope to discuss with you again. It is very helpful. Thank you for your time.

Best regards.

Let me know how you go.

Hi Jason,

How far out are you from publishing the material you’re speaking about above? Thanks for the tutorials.

Here is an example for multi-step forecasting with LSTMs:

https://machinelearningmastery.com/multi-step-time-series-forecasting-long-short-term-memory-networks-python/

Hi Jason,

Thank you for great posts! they’re awesome!

I have the same problem as Dylan and decided to use statsmodel’s SARIMAX. It takes some time to do the prediction for the entire next day (288 steps), and have been wondering if I’m doing this wrong or should I use a different approach.

Currently, I’m looking into LSTM RNN as a possible approach, but not sure.

The thing is, with my data, I have to predict the entire 288 steps in one shot and detect an anomaly if there’s any, then predict the type of anomaly that occurred….

My question is, am I going in the right direction by looking into LSTM RNN?

I’m really looking forward into reading your posts on this topic!

Thanks Jason 🙂

I am not high on LSTMs for autoregression models:

https://machinelearningmastery.com/suitability-long-short-term-memory-networks-time-series-forecasting/

Hi Jason! Thank you for your great posts!

I have a similar problem as Dylan. I have a dataset containing system metrics collected every 5 min. I have a total of 12 features (CPU utilization, network activity, disk operations etc.) which makes this a multivariate problem.

What would be the best approach if I would like to predict all 12 features for let’s say the next hour with a LSTM? Since every time step represent 5 min would it mean I have to predict t+12 (since 5 * 12 makes one hour)? Would it be beneficial to perhaps down sample from the per-5-min-observations to hours instead, making it possible to forecast the next hour with just one step instead of multiple?

Looking forward to hear your input on this!

Best regards,

Andreas

I recommend starting here:

https://machinelearningmastery.com/how-to-develop-a-skilful-time-series-forecasting-model/

Yes, I will. Discussing with you is always helpful. Look forward to reading your new post on Time Series Forecast.

Hi Jason, just another brilliant post. Can you show up a working example for first or second method like you have always shown in other tutorials. It would be immense help to a novice like me. Thanks…

I do hope to have many examples on the blog in the coming weeks.

Thank you Jason for your wonderful articles ! you are a life saver!

But I suppose you made a mistake in the examples for number 2 and 3. Both have the same value:

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(prediction(t-1), obs(t-2), …, obs(t-n))

I believe that one of them should be

prediction(t+2) = model2(prediction(t+1), obs(t-1), …, obs(t-n-1))

Hmmm, I guess you’re right. I was thinking from the frame of the second prediction rather than the frame of both predictions.

Fixed.

Thanks Mary. So in effect what should both step be?

many thanks

No, You are not aware of the difference between an expanding and a rolling window recursive forecast model.

Hello Jason,

What kind of Multiple output models would you recommend if we are opting for the fourth strategy?

Neural networks, such as MLPs.

Sir, how about LSTM?

Sure, try them, but contrast results to an MLP.

Sir, does an MLP perform better than an RNN for multi-output or multivariate problems?

It really depends on the specific dataset. I recommend testing a range of algorithms and discover what works best on your data.

Sir,

Would you please come up with a blog where we would love to see all these strategies have been applied to an example (dataset) and their result comparisons.

Would you please?

Perhaps in the future, thanks for the suggestion.

sir,

would you be kind enough to post soon?

I am stuck with my theoretical knowledge need to apply on my data to see the result and their comparative analysis.

Thanx for great explanations.

I have one little question.

Is the accuracy same for all these 4 strategies.?

If No, then which one gives more accuracy.

No, you must test a suite of models and strategies and discover what works best for your specific dataset.

What is a decent one-step prediction of unseen data? How would it looks like?

Let’s say I have 100 rows in a data set and do the following in R:

I write ‘=’ instead of the arrows because of the forum parser:

1. I split the 100 rows of raw data in 99 training rows and 1 testing row:

inTrain=createDataPartition(y=dataset$n12,p=1,list = FALSE)

training=dataset[inTrain-1,];

testing=dataset[-inTrain+1,]

2. I train the model:

modFit=train(n12~., data=training, method = ‘xxx’)

3. I get the final model of Caret

finMod<-modFit$finalModel

4. I predict one step with the final model of the training and the one row of testing.

newx=testing[,1:11]

unseenPredict=predict(finMod, newx)

Now, do I have a decent prediction of one step unseen data in point 4 ???

And why there are libraries like forecast for R, if everything can have been coded to a one-step forecast by default?

https://github.com/robjhyndman/forecast/

Sorry, I don’t have examples of time series forecasting in R, I cannot offer good advice.

I know there is also the option to use the time series object(s) in R.

But could you answer my question in general?

I have many posts showing how to make predictions in Python, including many that show repeated one-step predictions in a walk-forward validation test harness:

https://machinelearningmastery.com/category/time-series/

I don’t understand the difference between regression forecast and time series forecast.

Or what are the benefits from each over the other.

A time series forecast can predict a real value (regression) or a class value (classification).

Hello Jason,

How to prepare dataset for train models using with Direct Multi-step Forecast Strategy ?

For the serie: 1,2,3,4,5,6,7,8,9

Model 1 will forecast t+1 using window of size 3 , then the dataset would be:

1,2,3->4

2,3,4->5

3,4,5->6

4,5,6->7

5,6,7->8

6,7,8->9

Model 2 will forecast t+2 using window of size 3 , then the dataset would be:

1,2,3->5

2,3,4->6

3,4,5->7

4,5,6->8

5,6,7->9

Model 3 will forecast t+3 using window of size 3 , then the dataset would be:

1,2,3->6

2,3,4->7

3,4,5->8

4,5,6->9

and so on. Is it right ? Thanks

Great question.

For the direct approach, the input will be the available lag vars and the output will be a vector of the prediction.

I can see that you want to use different models for each step in the prediction.

You could structure it as follows:

Try many approaches and see what works best on your problem.

I hope that helps.
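The windowing described in the question above can be sketched as follows. The `direct_rows` helper is a hypothetical name; it builds one training set per lead time from the series 1 to 9 with a window of size 3.

```python
def direct_rows(series, n_lags, lead):
    """Return (window, target) pairs where the target is `lead` steps after the window."""
    rows = []
    for end in range(n_lags, len(series) - lead + 1):
        rows.append((series[end - n_lags:end], series[end + lead - 1]))
    return rows


series = list(range(1, 10))  # 1, 2, ..., 9
datasets = {lead: direct_rows(series, n_lags=3, lead=lead) for lead in (1, 2, 3)}

for lead, rows in datasets.items():
    print(f"Model {lead} (forecasts t+{lead}):")
    for window, target in rows:
        print("  " + ",".join(str(v) for v in window) + "->" + str(target))
```

Each extra step of lead time costs one training row, so the model for t+1 gets 6 rows, the model for t+2 gets 5, and the model for t+3 gets 4.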

thank you so much! This answers my question.

I’m happy to help (if I can).

Dear Jason,

In Direct Multi-step Forecast Strategy, for model 2

why haven’t you used obs(t-1) as well?

i.e.

instead of:

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(obs(t-2), obs(t-2), obs(t-3), …, obs(t-n))

=>

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(obs(t-1), obs(t-2), …, obs(t-n))

The latter seems more compatible with the example you provided in this comment.

thanks!

t-1 for model2 would be the predicted value of model1. It could use them too if you wish.

Hi, Jason,

Can you explain more about why t-1 for model2 would be the predicted value of model1?

I think there is no information leakage since the model 1 is “older” than the model 2. The model 2 can use all the data used by the model 1.

What is your concern?

Sorry, I don’t follow, what’s the context exactly?

Hi Shellder,

I think that’s indeed a mistake in Jason’s formulation of the direct multi-step approach. The component obs(t-1) should also be included in the training set for the model2. 😉 It made me very confused the first time I read it but then after checking other sources I realized that there is no reason not to include obs(t-1) in the model2.

Jason, could you please have a look at it and correct it for the future blog visitors? =) Thanks!

Hello Jason, thank you for your wonderful post. (Actually I already bought your book.)

I have a question.. I am building a forecasting model with my timeseries dataset, which is a daily number of some cases, I have 3 years past data (so will be records of 3*365 days.) I’d like to forecast 2 months future data (60 days.)

I already built a multi-step LSTM model for this, however, it doesn’t seem to work well… For example, 3 years past data clearly has a pattern like Nov/Dec high peak seasonality and increasing trend, but 60 steps of LSTM gave me poor forecasting like decreasing trend with no seasonality… and even the base is decreasing too.. which is so not understandable.

My question is:

1. Do you think my parameter tuning could be wrong? I mean, LSTM multi step forecasting cannot be this much poor..?

2. Is there any recommendation for one model approach for my problem..? I used ARIMA, but I wanted to use algorithmic model rather than a statistical model, so that’s why I’m trying to build LSTM… Do you think I need to go back to ARIMA..?

(After building one model, I will use ensemble method to improve current model though. For now, I need a decent model giving me the understandable result.)

Thank you so much, your any opinion on this will be really appreciated.

Thanks Jisun,

I generally would recommend using an MLP, LSTMs do not seem to perform well on straight autoregression problems.

Hi Jason,

There is a question above asking you “What kind of Multiple output models would you recommend if we are opting for the fourth strategy”?

And you answer MLPs

Then I try to use mlp to get a one-shot sequence, but I keep getting error…

Below is my code and scenario,

x_train.shape:

(4, 5, 29)

y_train.shape:

(4, 28)

I wish to use prior 5 timesteps and 29 features to get the 28 timesteps ahead forecast sequence.

only 4 training data for illustrative purpose.

model = Sequential()
model.add(Dense(units = 100, input_shape = (5, 29)))
model.add(Dense(90, kernel_initializer='normal', activation='relu'))
model.add(Dense(90, kernel_initializer='normal', activation='relu'))
model.add(Dense(30, kernel_initializer='normal', activation='relu'))
model.add(Dense( 28,kernel_initializer='normal', activation='relu'))
model.compile(loss='mse', optimizer='adam', metrics=['accuracy'])

Error when checking target: expected dense_168 to have 3 dimensions, but got array with shape (4, 28)


How can I rectify it? Thank you very much

With an MLP, the prior observations will be features.

If you have 29 features and 5 time steps, this will in fact be 5 x 29 (145) input features to the MLP.
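The flattening described above can be illustrated without any libraries. The sketch below builds a placeholder input with the shape from the question (4 samples, 5 time steps, 29 features) and turns each sample into a single row of 145 values; the zero-filled data is an assumption used only to show the shapes. With NumPy arrays the same step is a reshape, for example `x_train.reshape((x_train.shape[0], -1))`.

```python
n_samples, n_steps, n_features = 4, 5, 29

# Placeholder data with shape (4, 5, 29); the values themselves do not matter here.
x_train = [[[0.0] * n_features for _ in range(n_steps)] for _ in range(n_samples)]

# Concatenate the time steps of each sample into one flat feature vector.
x_flat = [[value for step in sample for value in step] for sample in x_train]

print(len(x_flat), len(x_flat[0]))  # 4 145
```

The target with shape (4, 28) then lines up with a 2D input of shape (4, 145), one row per sample.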

Hi Jason

I am trying to fit a LSTM model which is a multivariate (input and output) and multi step.

So I need to predict multiple steps and multiple features in one model.

Temp : [1,2,3,4],Rain[1,2,3,4] = predict(Temp : [5,6], Rain[5,6])

What is your recommended architecture to do this in one model ?.

I have daily selling values for 5 years with 167000(per item per store) features to predict 15 days for 167000 features

To output two series, perhaps a multiple output model:

https://machinelearningmastery.com/keras-functional-api-deep-learning/

Hi Jason,

Thank you very much for sharing a great article again. I have read many your posts these days, and learned a lot from them.

My project is one-step forecast on time series data. Do you think which model is the best to it?

Charles

I would recommend testing a suite of models to see what works best for your data.

Hi Jason,

Is there anyway to reduce the propagated error during Multi step ahead prediction with recurrent neural network?

Thanks

It’s a hard problem. The general methods help:

https://machinelearningmastery.com/machine-learning-performance-improvement-cheat-sheet/

Hi Jason,

Thanks for the great post. Your post uses Direct Strategy. I would like to apply Direct-Recursive Hybrid Strategy using RNN-LSTM on a time series data that has trend and seasonality. What I need is a multistep forecast where the prediction for the prior time step is used as an input for making a prediction on the following time step. How to go about this ? Because recursion for multi-step would be highly computationally expensive. What changes do I need in the existing code for multi-step using hybrid method?

Thanks

Perhaps this post can help as a starting point:

https://machinelearningmastery.com/multivariate-time-series-forecasting-lstms-keras/

Hi Jason,

I am going with the 4th strategy you mentioned that is one model predicting forecasts in one shot.

I have two models in my mind for this.

1) Multi-Output Network : Output layer of this architecture has ‘forecast’ number of dense layer. In this model there are ‘forecast’ number of weight matrices each trained on predicting individual forecast? Each output dense layer is optimized(adam) for MSE.

2)Single-Output network: This architecture has one dense layer as output with ‘forecast’ number of neurons. So in this case there is only one weight matrix trained for all forecasts and one cost function across all forecasts as opposed to first approach.

Are both the architecture valid? Which architecture works best?

Also one more question Jason. What is the best way to add regularization on time series model.Dropouts or kernel regularizer?Both would do?

Thanks,

Kaushal

Try them both (and more!) and see what works best for your specific dataset.

Try many regularization methods and see what works best for your specific data.

Perhaps this post will help you think about the challenge ahead:

https://machinelearningmastery.com/applied-machine-learning-as-a-search-problem/

Hi Jason, it is a great article and very helpful summary. I have one trivial question.

1. Direct Multi-step Forecast Strategy

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model1(obs(t-2), obs(t-3), …, obs(t-n))

==>

do you mean model2, not model1 for t+2?

prediction(t+2) = model2(obs(t-2), obs(t-3), …, obs(t-n))

why it starts t+1 not t

prediction(t) = model1(obs(t-1), obs(t-2), …, obs(t-n))

Yes, I meant model2, thanks – fixed.

sorry to disturb you again.

Why it is t+1, not t? Thanks.

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

=>

prediction(t) = model1(obs(t-1), obs(t-2), …, obs(t-n))

It could be and probably should be t. Just a chosen notation of t-1, t+1.

Hi jason,

Thanks a lot for the great post. I am going with the 2nd strategy you mentioned, that is the recursive multi-step forecast, but I am having difficulty implementing the recursive forecasting part.

How to put the prior time step to be used as an input for making a prediction on the following time step, for example with SVR or MLP. It would be immense help to a novice like me. Thanks…

Thanks Klaus.

You will have to store it in a variable and then create an array that includes the value and use it as input for the next prediction.

Hi Jason,

Thank you for your great post.

If I use Recursive Multi-step Forecast with ARMA model, the effect of MA predictions will reduce over the steps of prediction or not?

Due to the nature of multi-step forecasting, the error terms of the previous unobserved samples will become zero when they are used as inputs for further predictions. However, MA will estimate based on the previous errors, if I am not misunderstanding. Thus, if the previous error terms become 0, will only the AR terms affect the prediction results?

Sorry if I misunderstand about the ARMA model. I’m quite new for this topic.

Recursive prediction will result in compounding error. Why would MA go to zero?

Sorry, I didn’t mean the real error but the unobserved residuals. For example in the following website, no observed values for residuals ε106, ε107 are considered as zero in the equation ŷ107 = f(ε106, ε107, y106).

http://www.real-statistics.com/time-series-analysis/arma-processes/forecasting-arma/

“This time, there are no observed values for ε106, ε107, or y106. As before, we estimate ε106 and ε107 by zero, but we estimate y106 by the forecasted value ŷ106.”
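The behaviour described in that quote can be sketched for a zero-mean ARMA(1,1) process, y(t) = phi * y(t-1) + e(t) + theta * e(t-1). The function name and the coefficient values below are assumptions chosen for illustration, not values from any fitted model. The MA term contributes only to the first step, because every later residual is unobserved and is replaced by its expected value of zero.

```python
def arma11_forecast(phi, theta, last_obs, last_resid, horizon):
    """Recursive forecast for a zero-mean ARMA(1,1) with known coefficients."""
    forecasts = []
    prev_value, prev_resid = last_obs, last_resid
    for _ in range(horizon):
        yhat = phi * prev_value + theta * prev_resid
        forecasts.append(yhat)
        prev_value = yhat   # the forecast stands in for the unseen observation
        prev_resid = 0.0    # the unseen residual is replaced by its expected value
    return forecasts


forecast = arma11_forecast(phi=0.5, theta=0.4, last_obs=10.0, last_resid=2.0, horizon=3)
print(forecast)
```

From the second step onward each forecast is simply phi times the previous forecast, so under these assumptions only the AR part drives the longer-horizon predictions.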

Hello Jason,

I am trying to build an LSTM model. My training set has 580 timesteps and 12000 features.

I wanted to use 10 timesteps to predict next 5 timesteps. In this case my train_x.shape will be (87,10,12000). However I am confused about train_y.shape. Should it be (87,10)?

I have information on how to prepare data for LSTMs here:

https://machinelearningmastery.com/faq/single-faq/how-do-i-prepare-my-data-for-an-lstm

I also have an example here that might help:

https://machinelearningmastery.com/multi-step-time-series-forecasting-long-short-term-memory-networks-python/

Hi Jason,

In my understanding, Direct-Recursive Hybrid Strategies can be implemented in below three steps. Could you help me to check if it is correct please? Thanks in advance.

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(prediction(t+1), obs(t-1), …, obs(t-n))

1. Use train data to train model1

2. Predict t+1 for all train data

3. Use predicted t+1 plus train data to train model2

Seems good.

Dear Jason,

In Recursive Multi-step Forecast:

I guess to predict the value at (t+2), the observed value of (t-n) is not needed but the one at (t-n+1).

therefore:

instead of:

prediction(t+1) = model(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model(prediction(t+1), obs(t-1), …, obs(t-n))

should be :

prediction(t+1) = model(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model(prediction(t+1), obs(t-1), …, obs(t-n-1))

Am I right?

Dear Jason ,

I have some experience in python and machine learning and now I am trying to learn predicting a value ( Regression ) based on Timestamp ( Every 5 mins ). So please suggest me the appropriate model for this. Also, let me know if your book on forecasting helps me in this.

You can get started here:

https://machinelearningmastery.com/start-here/#timeseries

HI JASON,

Very informative and nice one. I have one problem related to LSTM forecasting model. I am making a model on call volume forecasting. I want to forecast 3 months ahead of today, let’s say I have built a model and scoring today (in Aug’18), so the forecasted month should of Dec’18 3 months ahead of scoring month. And how should I be proceeding while building model (training dataset) and validation (test dataset) and scoring unseen data(as discussed above). Do I have to build the model on training dataset in similar way, like forecasting 3 months in advance? If yes, how to proceed.

Thanks in Advance

Yes, frame the historical data in the way that you intend to use the model.

E.g. if you need a model to predict n months ahead, frame all historical data this way and fit a model, then score the fit model.

Hello Dr. Jason,

Thank you for your amazing post.

I am confused about how to calculate the error for multi-step-ahead prediction.

Suppose I have my training data D_pred = [1,2,3,4,5,6]

and my corresponding target data, D_trgt = [7,8,9,10,11,12,13]

I am using lag = 4 and I want to predict 5 step-ahead

My D_pred would be like this

1 2 3 4

2 3 4 5

3 4 5 6

I used my D_pred to get my prediction result D_out in one shot like this

x7 x8 x9 x10 x11

x8 x9 x10 x11 x12

x9 x10 x11 x12 x13

and my D_trgt would be like this

7 8 9 10 11

8 9 10 11 12

9 10 11 12 13

now, how to calculate the SMAPE error between D_out and D_trgt for horizon 5?

– get SMAPE error between [x7, x8, x9, x10, x11, x12, x13] and [7, 8, 9, 10, 11, 12, 13]

or

– get SMAPE error between [x11, x12, x13] and [11, 12, 13]

which way is the right way?

Thank you so much

I show how with RMSE in this tutorial:

https://machinelearningmastery.com/multi-step-time-series-forecasting-long-short-term-memory-networks-python/
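A common convention is to score each lead time separately, comparing the forecasts and targets column by column. The sketch below does this for the D_trgt rows from the question. The `smape` function implements one of several SMAPE variants in use, and the `d_out` predictions are made-up values (each target plus an error that grows with the lead time), so both are assumptions for illustration.

```python
def smape(actual, predicted):
    """One common SMAPE variant, in percent: mean of 2|f - a| / (|a| + |f|).

    Undefined when an actual value and its forecast are both zero.
    """
    terms = [2 * abs(f - a) / (abs(a) + abs(f)) for a, f in zip(actual, predicted)]
    return 100 * sum(terms) / len(terms)


d_trgt = [
    [7, 8, 9, 10, 11],
    [8, 9, 10, 11, 12],
    [9, 10, 11, 12, 13],
]

# Hypothetical forecasts: the error grows by 1 with each step of lead time.
d_out = [[value + (j + 1) for j, value in enumerate(row)] for row in d_trgt]

# One score per lead time: compare column j of the forecasts with column j of the targets.
n_steps = len(d_trgt[0])
per_step = []
for j in range(n_steps):
    actual = [row[j] for row in d_trgt]
    predicted = [row[j] for row in d_out]
    per_step.append(smape(actual, predicted))

for j, score in enumerate(per_step, start=1):
    print(f"t+{j} SMAPE: {score:.2f}%")
```

The last entry of `per_step` is the error at horizon 5 alone, while averaging the whole list gives a single summary across all lead times.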

Thank you so much, Dr. Jason

Hi, master. What is the lag timesteps used for? I still can’t understand,my doctor.

Lag time steps are observations at prior times. They are used as inputs to the model.

Can you explain more exactly?

Sure, which part are you having difficulty with exactly?

Is there any criteria for choosing the four main strategies?

For example,How many steps?How many datas?

Project requirements may impose constraints, alternately, choose the approach that results in the best skill.

Hi Jason,

Thanks for your post. I’m implementing the Multiple Output Strategy using a Neural Network approach. I have about 5 years worth of historical data at weekly granularity, and I want to predict 6, 12, 18 and 24 months into the future.

I’ve read your “How To Backtest” post, but am still not sure about how to do the train/test split in this case, ie. predicting 2 years into the future with only 5 years historical data. Even if I only wanted to forecast one year ahead (at say 3, 6, 9 and 12 months), how would you backtest?

Thanks.

You would use walk-forward validation as described in the back test tutorial.
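As a rough illustration of how walk-forward validation might be laid out for a long horizon, the sketch below generates expanding-window splits for roughly 5 years of weekly data with a 2-year forecast. The function name, the minimum training size of 104 weeks, and the step of 13 weeks between forecast origins are all assumptions; they would need to be chosen to suit the data.

```python
def walk_forward_splits(n_obs, min_train, horizon, step):
    """Expanding-window splits: train on everything before the origin, test on the next `horizon` points."""
    splits = []
    origin = min_train
    while origin + horizon <= n_obs:
        train_idx = range(0, origin)
        test_idx = range(origin, origin + horizon)
        splits.append((train_idx, test_idx))
        origin += step
    return splits


# About 5 years of weekly data, forecasting 104 weeks (2 years) ahead.
splits = walk_forward_splits(n_obs=260, min_train=104, horizon=104, step=13)

for train_idx, test_idx in splits:
    print(f"train: weeks 0-{train_idx[-1]}, test: weeks {test_idx[0]}-{test_idx[-1]}")
```

With so little history relative to the horizon, only a handful of splits are possible and their test periods overlap heavily, so the resulting error estimate should be treated with caution.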

I do not understand the direct approach. I have only found vague examples to explain. Let us consider a problem in which I want to do a 1 step prediction.

history = [1,2,3,4,5,6,7,8,9,10]

Yt = 10

problem: predict Yt+1

My current understanding of how to formulate the training data is to use a sliding window of size 1.

X_train = [1,2,3,4,5,6,7,8,9]

Y_train = [2,3,4,5,6,7,8,9,10]

Then I train the first model using X_train and Y_train

In a single step prediction scenario, to predict what comes after 10 I would call

Predict(model1, 10), and the output should be 11 + some error, depending on the model

Now, for the 2-step recursive method I would call

Predict(model1,Predict(model1,10)) to get 12 + some error

The direct method for the 2 step prediction will be

a = Predict(model1, 10) to get 11 + some error

b = Predict(model2, K) to get 12 + some error

predictions = [ ]

predictions.append(a)

predictions.append(b)

Finally, a 2-step prediction is accomplished:

predictions == [11 + error, 12 + error]

My questions:

1) What is K? Which value do I need to send to the predict method for the first model?

2) What are the values of the X_train and Y_train used to train the second model?

Thanks.

Looks good.

K would be an input for the required output, e.g. the last available observation, or the prior time step prediction, or a mix. You must test and see what is appropriate for your specific dataset. Same for input, you can test/choose.

I have an example here:

https://machinelearningmastery.com/multi-step-time-series-forecasting-with-machine-learning-models-for-household-electricity-consumption/
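To make the direct strategy concrete with the numbers from the question, here is a rough sketch that assumes K is the last available observation (10), which is only one of the options listed above. The second model is trained on the same inputs but with targets shifted two steps ahead. The tiny least-squares helper `fit_line` is a stand-in for whatever model you would actually use.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b * x for a single input."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(model, x):
    intercept, slope = model
    return intercept + slope * x


history = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

# model1 maps obs(t) -> obs(t+1); model2 maps obs(t) -> obs(t+2).
model1 = fit_line(history[:-1], history[1:])   # X=[1..9], Y=[2..10]
model2 = fit_line(history[:-2], history[2:])   # X=[1..8], Y=[3..10]

last_obs = history[-1]                         # K = 10 in this sketch
predictions = [predict(model1, last_obs), predict(model2, last_obs)]
print(predictions)
```

Both models receive only observed data at prediction time, so no forecast is fed back in as an input.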

Hi Jason,

Thanks for your post.

I have two products, both of which have their own historical data. How do I model this so that future sequences can be predicted for both products from their historical data? Should I construct two models or just one model?

Perhaps try one model and two models and see which is most effective?

A good place to start is here:

https://machinelearningmastery.com/start-here/#timeseries

Dear sir, I follow all your tutorials and have combined them. I have more than a thousand sequences. I chose a vanilla LSTM according to this:

https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

I also added a train/test split of 80%/20%. I got the RMSE, the MAPE and the graph.

I want to forecast the next value, and I follow this:

https://machinelearningmastery.com/multi-step-time-series-forecasting/

According to https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

you forecast 1 next value with the last 3 values.

So I want to forecast the next 2 and 3 values using the Recursive Multi-step Forecast, and my code is like this:

#one

x_input = array([Y_test[-1], Y_test[-2], Y_test[-3]])

x_input = x_input.reshape((1,1,3))

yhat = model.predict(x_input, verbose=0)

#two

x_input1 = array([yhat,Y_test[-1], Y_test[-2]])

x_input1 = x_input1.reshape((1,1,3))

yhat1 = model.predict(x_input1, verbose=0)

#three

x_input2 = array([yhat1,yhat, Y_test[-1]])

x_input2 = x_input2.reshape((1,1,3))

yhat2 = model.predict(x_input2, verbose=0)

My question is: is what I am doing right? Am I following the principles of LSTM and Recursive Multi-step forecasting?

That is all, thank you sir.

I await your response.

Nice work.

I’m eager to help but I don’t have the capacity to review/debug your code. Sorry.
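As a general reference point (not a review of the code above), a recursive forecast can be sketched as a loop that keeps a fixed-length window and feeds each prediction back in. The `toy_model` function is a stand-in for a trained one-step model and is purely an assumption. With a Keras LSTM you would wrap `model.predict` and the reshape inside `predict_one`, and keep the values in the same order that was used when training.

```python
def recursive_forecast(predict_one, history, n_lags, n_steps):
    """Forecast n_steps ahead by feeding each prediction back in as an input."""
    window = list(history[-n_lags:])      # oldest first, newest last
    forecasts = []
    for _ in range(n_steps):
        yhat = predict_one(window)
        forecasts.append(yhat)
        window = window[1:] + [yhat]      # drop the oldest value, append the prediction
    return forecasts


def toy_model(window):
    """Stand-in one-step model: last value plus the most recent difference."""
    return window[-1] + (window[-1] - window[-2])


series = [10, 20, 30, 40, 50, 60]
print(recursive_forecast(toy_model, series, n_lags=3, n_steps=3))  # [70, 80, 90]
```

The key point is that the window length never changes: each new prediction pushes the oldest observation out.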

Thank you Jason for sharing it. I want to know which methods are more helpful in time series problems. Did you run any comparison experiments with them?

It really depends on the specifics of your data.

I recommend following this process:

https://machinelearningmastery.com/how-to-develop-a-skilful-time-series-forecasting-model/

Hi Jason! Writing a thesis on this right now and your examples are very much appreciated.

I have a question about the Direct-Recursive Hybrid, as we have been able to test out all the other methods to a certain degree.

How would you go about writing the programming logic for this? Especially when using time-series cross validation.

What exactly do I fit model2 on at time t?

Perhaps review the example in the tutorial and try to map your data onto it, also perhaps check the paper for an elaboration on the approach.

You will have to write custom code to prepare data and fit models.

Thank you for your tutorial.

I have a question: is it possible for the same model to have different dimensions of input variables in Recursive Multi-step Forecast (i.e., from obs(t-n) to (t-1) with R_n dimension, and from t-n to prediction(t+1) with R_n+1 dimension)?

Also, is it better whether the input dimension should be the same in Direct-Recursive Hybrid Strategies?

Yes, you could have a multi-input model, like a neural net.

Perhaps experiment with your dataset/model and see what works well?

Hi Jason,

I have a question regarding error propagation in different multi-step forecast models that I posted on StackExchange before reading this post (so the terminology I used is a bit non-standard). I would like to understand the theory behind error propagation. Could you shed some light, please?

https://datascience.stackexchange.com/questions/54130/error-propagation-in-time-series-forecast-with-many-to-many-multi-steps-rnn-lstm

Many thanks!

Perhaps you can summarize the gist of your question?

Hi Jason,

I have been able to implement the recursive strategy. However for Direct-Recursive Hybrid Strategy if I understood correctly we train & predict the entire training data & append those predictions to the initial train data & retrain the model.

Having said that if my initial train data has 100 observations & I predict all 100 then I append these predictions to my initial train data making 200 observations ? Is my understanding correct or am I missing out something?

Not quite, the predictions become inputs for subsequent predictions.

Sorry to ask again Jason but can you please explain because I tried finding it in your books but couldn’t find & also tried understanding it based on the example & the example of the household electricity but could not really grasp it entirely.

Sure, what are you having trouble understanding exactly?

Thanks for your reply Jason.

Suppose I have 100 observations in total. After training and validating my model, I train it on the entire data (all 100 observations) and predict one step ahead, i.e. the 101st observation.

Do I use this predicted value to replace the 1st observation in my original data to have 100 observations & predict the next step & repeat the process ?

Thanks again.

It really depends on how your model is defined.

You have defined your model to map some number of inputs to an output, you must provide data in that format to make a prediction.

Hi Jason, thanks for making this great tutorial.

I am not sure I fully understand the difference between recursive multi-step and direct-recursive hybrid. These two look exactly the same in your example code.

prediction(t+1) = model1(obs(t-1), obs(t-2), ..., obs(t-n))

prediction(t+2) = model2(prediction(t+1), obs(t-1), ..., obs(t-n))

If I understood correctly, the main difference is that in the hybrid each model may or may not use the models at prior time steps, whereas in recursive multi-step each model will use the prior model?

Thanks!

If the above description is correct, how do you decide when to use them or not? I assume that requires training?

Test a few different approaches and see what works best for your choice of model and the dataset.

Recursive uses predictions as inputs.

Direct recursive hybrid uses the same idea, but separate models for each time step to be forecasted.

Does that help?
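The difference can be sketched in a few lines. Both stand-in models below (`one_step` and `step_two`) are invented for illustration. In practice `model2` would be fit separately, with prediction(t+1) included among its training inputs.

```python
def recursive_two_step(model, window):
    """Recursive: the same one-step model is applied twice."""
    p1 = model(window)
    p2 = model(window[1:] + [p1])       # same model, p1 replaces the oldest value
    return [p1, p2]


def hybrid_two_step(model1, model2, window):
    """Direct-recursive hybrid: a separate model per step, each seeing earlier predictions."""
    p1 = model1(window)
    p2 = model2(window + [p1])          # model2 is its own model, built to predict t+2
    return [p1, p2]


# Stand-in models (assumptions for illustration only).
def one_step(window):
    return sum(window) / len(window)


def step_two(inputs):
    return 0.5 * inputs[-1] + 0.5 * inputs[-2]


obs = [4.0, 6.0, 8.0]
print(recursive_two_step(one_step, obs))
print(hybrid_two_step(one_step, step_two, obs))
```

In the recursive case there is one model to train. In the hybrid case there are as many models as forecast steps, and each later model has one more input than the one before it.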

Hi Jason, your articles are great and they helped me a lot!

I’m working on predictive maintenance and given a long time series of data, each of them with 15 features, I should predict the next X time steps.

Basically I thought of using 400 time steps as input and predicting 20 steps as output. As a result I’m using your 4. Multiple Output Forecast Strategy.

My Net is this:

n_steps_in = 400

n_steps_out = 20

n_epochs = 20

batch_size = 128

model = Sequential()

model.add(LSTM(100, activation='relu', input_shape=(n_steps_in, n_features), return_sequences=True))

model.add(LSTM(20))

model.add(Dense(n_steps_out * n_features))

model.add(Reshape((n_steps_out, n_features)))

model.compile(loss='mse', optimizer=Adam(lr=0.001), metrics=['accuracy'])

model.fit(X, y, epochs=n_epochs, validation_split=0.25, batch_size=batch_size)

But sometimes I get NaN in the loss while training, and I don’t know why. Do you have any explanation?

Thanks

Nice work.

Perhaps vanishing or exploding gradients.

Try scaling the data prior to fitting?

Try relu?

Try gradient clipping?
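For the scaling suggestion, a minimal sketch of min-max scaling is shown below. The helper names and sample values are assumptions. The one design point worth noting is that the minimum and maximum are taken from the training data only and then reused for the test data.

```python
def fit_min_max(values):
    """Return the (min, max) needed to scale values into [0, 1]."""
    return min(values), max(values)


def scale(values, lo, hi):
    span = hi - lo
    return [0.0 if span == 0 else (v - lo) / span for v in values]


def unscale(values, lo, hi):
    return [v * (hi - lo) + lo for v in values]


train = [120.0, 340.0, 560.0, 980.0]
test = [450.0, 1100.0]

lo, hi = fit_min_max(train)          # fit on training data only
train_scaled = scale(train, lo, hi)
test_scaled = scale(test, lo, hi)    # may fall slightly outside [0, 1]
restored = unscale(train_scaled, lo, hi)
print(train_scaled, test_scaled)
```

With 15 features you would scale each feature separately, and invert the scaling on the model output before reporting error in the original units.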

Did you think about applying the concept of Survival model here?

Hi Jason,

I wanted to know if you have any posts that have implemented each of the strategies you discussed above?

I also wanted to know if one strategy is better than the other by any chance? I’d like to get a deeper insight on what kind of strategy would work for a particular kind of data.

I believe so, you can get started here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

I recommend testing different framings/strategies on your problem in order to discover what works best for your specific data.

Hi Jason, first of all, thanks for all your nice posts. People have asked this question before, but I was wondering if there might be an update! Do you have example code for “Direct-Recursive Hybrid Strategies” similar to what you have for “Time Series Prediction With Deep Learning in Keras”? Or does your E-Book have it?

Thanks again, Sam

I don’t think so. I have some direct models here that could be adapted:

https://machinelearningmastery.com/multi-step-time-series-forecasting-with-machine-learning-models-for-household-electricity-consumption/

Hi Mr. Jason,

I’m working on time series forecasting and I use an LSTM as the forecasting model. These are the main steps I used to structure my data in order to predict one step:

1) The model takes 1 day of data as “training X”

2) The model takes the VALUE at 1 day + 18 hours after as “training Y”

3) I build a sliding window so that the sequences are shifted by one value, for example:

XTrain{1} = data(1:24) –> YTrain{1} = data(42)

XTrain{2} = data(2:25) –> YTrain{2} = data(43)

XTrain{3} = data(3:26) –> YTrain{3} = data(44)

XTrain{4} = data(4:27) –> YTrain{4} = data(45)

.

.

.

4) The test data are constructed in the same way as the training data, for example:

XTest{1} = data_test(1:24) –> YTest{1} = data_test(42)

XTest{2} = data_test(2:25) –> YTest{2} = data_test(43)

.

.

.

First, to summarize, my objective is to predict the value 18 hours ahead each time. Is the structure described above correct?

If yes, I have a problem: when I try to predict from XTest{1}, the predicted value corresponds to data_test(25) instead of data_test(42). Why is the predicted value shifted? Where is the problem and how can I remedy it?

Thank you in advance for your help.

There are many ways to frame your problem, your approach is one possible way.

Perhaps the model is not skillful?

Perhaps try alternate architectures or training configurations?

Perhaps try alternate framings of the problem?

Perhaps try alternate models?

Thank you very much for your quick response. I have tried several model architectures and the LSTM gives the best results; it is suitable for learning many sequences with different lengths.

For my part, I use this architecture to train the model. However, could you please show me other ways to prepare the training data that could help me solve this problem of shifted results? I use 3 test sets, and when I try to predict, the results are shifted by the number of steps between the input window and the prediction target, i.e.

model_1: data(1:24) –>data(30) the difference is 6 points. Therefore the predicted curve is shifted by 6 steps earlier

model_2: data(1:24) –>data(36) the difference is 12 points. Therefore the predicted curve is shifted by 12 steps earlier

I don’t think that this is a logical response?

Best regards

It may be that the model has learned a persistence forecast, e.g. has no skill. More here:

https://machinelearningmastery.com/faq/single-faq/why-is-my-forecasted-time-series-right-behind-the-actual-time-series
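One way to check for this is to score a persistence baseline at the same horizon and compare it with the model. A sketch with made-up data follows. The helper names are assumptions.

```python
def persistence_forecast(series, horizon):
    """Predict series[t + horizon] with series[t] (the naive forecast)."""
    actual = series[horizon:]
    predicted = series[:-horizon]
    return actual, predicted


def mae(actual, predicted):
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


series = [3, 5, 4, 6, 8, 7, 9, 11, 10, 12]
actual, predicted = persistence_forecast(series, horizon=6)
baseline_mae = mae(actual, predicted)
print(actual, predicted, baseline_mae)
```

A persistence forecast plotted against the actual values looks like the same curve shifted by the horizon. If the model's error is no better than `baseline_mae`, and its plot shows the same shift, the model has probably learned to copy its most recent input.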

Hello, could there be a typo in the explanation of Direct Multi-step Forecast Strategy ?

I was expecting the second row in the code to be :

prediction(t+2) = model2(obs(t-2), obs(t-4), obs(t-6), obs(t-8), obs(t-n)) – so basically no (t-3), and two time steps increments ?

Otherwise, I still do not understand that correctly 🙂

Thank you

No, I believe it is correct.

There are 2 models and both models only use available historic data.

model1 predicts t+1 and model2 predicts t+2.

Does that help?

Hi Jason,

Thanks for your efforts to put up all these helpful articles.

I have a question: what is the difference between these two cases when we want a multi-step time series forecast?

1. There is one neuron in output layer (T+1). After extracting weights, we iteratively use weights and (T+1) forecast to get (T+2) forecast and so on.

2. We have multiple neurons for each horizon

My question is mostly related to LSTM, however, a general reply is also appreciated. I need a mathematical explanation if there is any.

Thanks a lot,

Morteza

Yes, in the first case you are reusing the same model recursively; in the second you are using a single direct model.

Yes, you can describe each approach using math or pseudocode.

Perhaps I don’t understand the problem you’re having?

Hi Jason, can CNN LSTM do multi-step?

Sure.

Do you have any examples for CNN multi-step?

Yes, you can search on the blog to find many.

This tutorial gives a good starting point for a CNN for multi-step forecasting:

https://machinelearningmastery.com/how-to-develop-convolutional-neural-network-models-for-time-series-forecasting/

This tutorial gives a good simple example of CNN-LSTMs for forecasting that can be adapted to be multi-step:

https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

hello, very nice doc but there are several tiny typos:

in almost all the code, you wrote:

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(obs(t-2), obs(t-3), …, obs(t-n))

the prediction(t+2) is wrong, which should be

prediction(t+2) = model2(obs(t-2), obs(t-3), …, obs(t-n+1))

Hello.

Thanks for the awesome article.

Do you have any post on multivariate multi-step time series forecasting ??

Thanks.

Yes. You can use the blog search.

Also, perhaps start with the simple example here:

https://machinelearningmastery.com/how-to-develop-lstm-models-for-time-series-forecasting/

good very nice concept description

Thanks!

Hi Jason,

Great post! You are always clear in your concepts

Let me tell you this concern.

I have seen notebooks trying to analyze the spread of COVID-19 using regression analysis. They treat X as the consecutive number of days since the first case [1,2,3,..,n-1,n], and y as the number of daily cases since the first case [1,3,7,…, 3821,4213].

Then they predict future values for X=[n+1, n+2, …n+20] trying to forecast daily cases for the next 20 days.

I think this is not correct, because these models do not consider the effect of time, and also, they are doing extrapolation in a regression analysis model.

I guess I saw one of your posts saying that supervised learning can interpolate, but time series can extrapolate.

Is this correct so far?

Now, if you arrange time series data as a supervised learning problem with input sequence = [t-lags, …, t-1] and forecast sequence = [t+1, …,t+steps], now you can use supervised learning algorithms or MLP to make predictions, right?

We can now evaluate performance using backtesting.

Is this comparable to ARIMA/SES forecasting?

Thank you

Thanks.

Yes, correct.

Also, more advice on modeling covid case data:

https://machinelearningmastery.com/faq/single-faq/how-can-i-use-machine-learning-to-model-covid-19-data

Thank you

If I understand this Autoreg model_fit.predict example correctly, it is an example of multi-step forecast strategy: https://machinelearningmastery.com/autoregression-models-time-series-forecasting-python/

But I got nan’s following this method. I guess that happened because the model has missing lag values as the input. Referring to this example code, it doesn’t explicitly update the historic lags for subsequent forecasts. I wonder if .predict() already handles that and something in my code caused the nan’s, or the example code is missing the recursively updating steps?

I figured out why: using series.values instead of series solved the problem.

Well done.

Sorry to hear that you’re having trouble.

You can make a multistep prediction directly by first fitting the model on all available data and calling predict() and specifying the interval to forecast, or calling forecast() and specifying the number of steps to predict.

This will help:

https://machinelearningmastery.com/make-sample-forecasts-arima-python/

Hi Jason, thanks for the great article.

For the direct multi-step forecast, we build a separate model for each forecast time step.

Let’s say for two days, model m1 for day 1 and model m2 for day 2, and you have given the following example:

Example

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(obs(t-2), obs(t-3), …, obs(t-n))

In the above example, for predicting day t+1 we used data from the previous day t-1 to t-n.

For predicting the second day, t+2, we used t-2 to t-n.

If so, it seems that for forecasting tomorrow’s data we use the historical data from the last day to the last day -n.

For forecasting the day after tomorrow, we use the data from two days before to day -n. How does that make sense?

Yes, the other model can use historical data and output of another model to make a prediction.

Perhaps I don’t understand your question?

Hello and thanks for the tutorial,

I wonder for the second and third approaches for forecasting you mentioned above, which you said:

prediction(t+1) = model(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model(prediction(t+1), obs(t-1), …, obs(t-n))

If prediction(t+1) uses some kind of supervised time series approach where the model has actually seen part of the data at (t+1), is that going to create information leakage into the second model?

Not leakage as it does not violate the design. It is by design.

Hi,

First of all thanks for the tutorial.

For the direct multi-step forecast, you have given the following example:

Example

prediction(t+1) = model1(obs(t-1), obs(t-2), …, obs(t-n))

prediction(t+2) = model2(obs(t-2), obs(t-3), …, obs(t-n))

My questions are

1. For prediction(t+2), why are you not taking obs(t-1) as input like for prediction(t+1)?

2. I am using the direct multi-step forecast for my project, and with machine learning it is expected that the error should increase as the forecast horizon (time step) increases. Am I right? If yes, then in my project the error increases continuously up to 7 time steps, but after that the error fluctuates. Can you suggest how I can improve this error?

You’re welcome.

We do not use the observation at t-1 because it is not available in that framing of the problem.

Yes, the further into the future you want to predict, the more error.

You can reduce the error with different/better models or by simplifying the problem.

Hi Jayson,

Loving your work!

I recently bought the math bundle and am considering the forecasting books.

I was wondering if you cover a full example of a Direct-Recursive Hybrid model? And in which e-book?

Best,

A fan

Jason, apologies for the misspelling; I was unable to change it.

No problem.

Thanks.

I don’t think I have an example of that model off hand. If I do, it would probably be under the neural net time series examples:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Ok thanks for getting back so fast!

You’re welcome.

Hi Jason,

great article, there is very little content on the internet on prediction strategies for using the model once trained.

I have defined a fairly simple recursive prediction method.

My model uses a 160-hour lookback window to predict the next hour for 10 output features.

Model inputs: 10 features

Model outputs: 10 features

Timesteps: 160h

Output timesteps: 1h

My recursive prediction method therefore consists of taking the last 160 hour window of my data to predict the next hour and re-integrating the prediction into the last window with an offset of 1 to then predict the second hour. Etc …

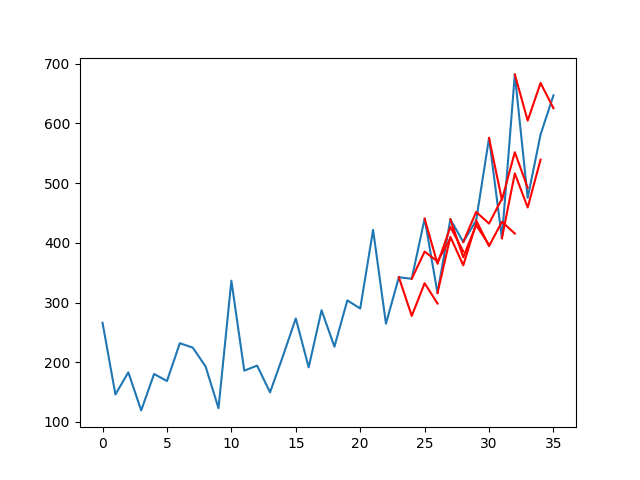

I understand that a recursive prediction method increases the error over time but I still get very bad results while on the evaluation of the model under test the results are very good (green series and red)

Here is an image to illustrate my point:

https://ibb.co/R0hs2NQ

The recursive prediction in blue seems smooth and totally wrong compared to the reality in orange.

Do you have any idea where to dig? I went through all your articles on this subject.

Thanks to you Jason.

Well done!

Perhaps you can vary the amount of input data?

Perhaps you can try alternate models? alternate configs? alternate data preparations?

Perhaps you can try direct prediction methods?

Perhaps you can try algorithms that support input sequences, like LSTMs?

Perhaps you can benchmark with a naive forecasting method (persistence) to see if any methods have skill?

Perhaps you can use linear methods like SARIMA or ETS?

Hello Jason, thanks for your advice!

I’m actually already using a simple LSTM model with 3 layers .

I get very good results in MAE, MAPE and R2.

This is confirmed when I plot the test series with the predicted series.

The problem is with future predictions now, with my recursive method I got bad results, I tried to increase and decrease the rollback window but it doesn’t change much. The same for the model parameters (number of layers or neurons), the evaluation is good but the prediction on future data remains bad.

However, I managed to improve the prediction by adding an additional Dense layer with Relu activation in addition to the output Dense layer:

model = Sequential()

model.add(LSTM(units=50, return_sequences=True, input_shape=(X_train.shape[1], X_train.shape[2])))

model.add(Dropout(0.4))

model.add(LSTM(units=50, return_sequences=True))

model.add(Dropout(0.4))

model.add(LSTM(units=50))

model.add(Dropout(0.4))

model.add(Dense(units=50, activation='relu'))  # New Dense layer !!!

model.add(Dense(units=X_train.shape[2], activation='linear'))

model.compile(optimizer='adam', loss='mean_squared_error')

On the other hand, I can’t quite understand why this is better with a second Dense layer in Relu activation.

Do you have an idea ?

Nice work!

We can’t answer “why” questions in deep learning (e.g. why does this config work and another one not work), the best we can do is run experiments and discover what works well for a given dataset.

Hi Jason,

I tried with the direct forecast and the results are much better!

I was using the wrong window to make my prediction that’s why.

Thank you for your advice !

Well done!

Hi Jason,

Is this approach applicable only to linear models, or can it be used with non-linear models like SVR, RandomForestRegressor, etc. as well?

Any models.

Hi Jason,

I have a basic question. I have data from 1969Q1 to 2020Q4, split into train (1969Q1-2018Q4) and test (2019Q1-2020Q4). Is the forecast on the test data one-step or multi-step? It is static; no rolling window is used.

I think it is a multi-step where we apply the one-step strategy as you mentioned in one of your comments. Actually, somebody said to me, it is one step ahead but it is not one step ahead rather multiple steps using a one-step strategy.

You can choose how to frame your problem and what you want to use to evaluate the model.

Hi Jason,

thanks for the wonderful posts you have published.

I’m new at machine learning, just completed some courses. I have a question: Is there any function that can automatically calculate different strategies for a given model?

You could implement such a library.

Hi Jason! Thanks a lot for all this admirable endeavour of yours – we deeply appreciate it!

Regarding the recursive Multi-step Forecast approach, would it be applicable in the case of datasets with multiple ‘predictor’ variables as well?

Say for example, one is interested in forecasting the temperature for the next two days but based both on current temperature and humidity:

temp(t+1) = model(temp(t-1), hum(t-1), temp(t-2), hum(t-2))

temp(t+2) = model(temp(t+1), hum(t+1), temp(t-1), hum(t-1))

Which would then be the humidity value to be used in the 2nd prediction step i.e. hum(t+1)? A humidity predicted value would not be available as the model of interest only predicts temperature.

I seem to be missing something…

Thanks in advance!

Sure if you want.

Perhaps try it and see what works well or best for your data and model.

Hi Jason, thanks for putting this together.

As a follow-up to this, what would you advise when you have a lot of features and want to try out a recursive style model? At some point it would become impractical to build predictive models for each feature to use in the t+1 and beyond steps.

Thank you!!

I try to not be prescriptive – intuitions are often less effective.

Perhaps you can prototype a few approaches and discover what seems like a good fit for your specific dataset.

Hi jason

congratulations for your work!!

i have a confusion about Recursive Multi-step Forecast.

Considering that we have 10 days and we use the first 6 for training our model to predict the temperature for day 7 and 8 with Recursive Multi-step Forecast.

To predict day 9 and 10 a new model is trained with the real temperatures of days 1-8?

Thank you!!

You can define the input and output any way that you want based on the data that is available.

Hi Jason,

Thanks for the tutorial! I have a problem of predicting the temperature flow in a specific process. I have multiple measurements from sensors and controllers. The measurements are recorded every 5 minutes. The system has to be stopped every now and then for a specific maintenance. The goal is to predict when the system needs to be shut down for this specific maintenance by predicting the value of temperature flow two weeks ahead. Is this considered to be a multi-step or one-step time series prediction?

Another question: I want to use the given sensor measurements for predicting the Temperature flow using a machine learning approach. Is there any specific approach that you would suggest for such a problem?

Thank you!

It can be one-step to predict whether the system will be down for maintenance. It can also be multi-step to predict whether the system will be down for maintenance in the next N steps.

Hope this helps explain that.

Hi Jason,

I am wondering if there is a model that takes a varying number of time steps as one sample that can be labelled ‘1’ or ‘0’. Suppose I have thousands of such samples to train the model and let it predict on new data (also of varying number of time steps). For example,

[ [1, 1.5, 1.45, 1.60, 1.60, 0.1, 1000],

[2, 1.55, 1.5, 1.82, 1.63, 0.06, 1200],

[3, 1.6, 1.61, 1.86, 1.72,0.06, 1150],

]

its label is ‘1’

[ [1, 1.50, 1.45, 1.60, 1.60, 0.10, 1000],

[2, 1.60, 1.50, 1.82, 1.63, 0.06, 1200],

[3, 1.63, 1.61, 1.56, 1.47, -0.06, 1150],

[4, 1.47, 1.50, 1.65, 1.55, 0.055, 1320]

]

its label is ‘0’

etc

after training, we want the model to predict for

[ [1, 1.50, 1.45, 1.60, 1.60, 0.10, 1000],

[2, 1.60, 1.50, 1.82, 1.63, 0.06, 1200],

[3, 1.63, 1.61, 1.56, 1.47, -0.06, 1150],

[4, 1.47, 1.50, 1.65, 1.55, 0.055, 1320],

[5, 1.67, 1.56, 1.98, 1.77, 0.061, 1350]

]

Is this possible? Thank you.

It depends on the model, but it can be done. If you use an LSTM, you can train it to read a varying number of time steps. Otherwise, people usually use padding to fill up the time steps to a fixed width.
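A minimal sketch of the padding idea is below, using shortened versions of the samples from the question. The helper `pad_left` and the pad value of 0.0 are assumptions. Keras also provides a `pad_sequences` utility and a `Masking` layer for this purpose, which you would more likely use in practice.

```python
def pad_left(samples, pad_value=0.0):
    """Left-pad each sample (a list of time steps) to the longest length."""
    max_len = max(len(s) for s in samples)
    n_features = len(samples[0][0])
    padded = []
    for s in samples:
        filler = [[pad_value] * n_features for _ in range(max_len - len(s))]
        padded.append(filler + [list(step) for step in s])
    return padded


samples = [
    [[1, 1.5, 1.45], [2, 1.55, 1.5], [3, 1.6, 1.61]],                       # 3 time steps
    [[1, 1.50, 1.45], [2, 1.60, 1.50], [3, 1.63, 1.61], [4, 1.47, 1.50]],   # 4 time steps
]
padded = pad_left(samples)
for sample in padded:
    print(len(sample), sample[0])
```

After padding, every sample has the same number of time steps and can be stacked into a single array of shape (samples, time steps, features).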

Hi Jason

I want to predict the bitcoin price in the next month (30 steps ahead). Which approach do you suggest?

Also, another question, do you have any guidance about conv2dLSTM layer in your book?

Best regards

Probably a direct multi-step would be easier.

In the Recursive Multi-step Forecast with multivariate data (let’s say 8 variables), in step 2 we take into consideration the previously predicted value and the real values prior to the prediction (depending on the lag). What happens with the other 7 variables?

Do we also predict these 7 variables and take them into consideration for step 2?

The short answer to your question is yes. Your understanding is correct.
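As a rough sketch of what that looks like, the loop below assumes a one-step model that returns a predicted value for every variable, so the whole predicted row can be appended to the input window. `toy_predict_next` is a made-up stand-in for such a model, and the two columns (temperature, humidity) stand in for the 8 variables.

```python
def recursive_multivariate(predict_next, history, n_lags, n_steps):
    """history: list of rows, one row of variables per time step."""
    window = [list(row) for row in history[-n_lags:]]
    forecasts = []
    for _ in range(n_steps):
        next_row = predict_next(window)      # one predicted value per variable
        forecasts.append(next_row)
        window = window[1:] + [next_row]     # every variable is fed back in
    return forecasts


def toy_predict_next(window):
    """Stand-in model: each variable repeats its most recent change."""
    last, prev = window[-1], window[-2]
    return [l + (l - p) for l, p in zip(last, prev)]


# columns: temperature, humidity (made-up values)
history = [[20.0, 60.0], [21.0, 58.0], [22.0, 56.0]]
forecasts = recursive_multivariate(toy_predict_next, history, n_lags=2, n_steps=2)
print(forecasts)
```

If only one variable is of interest, you can still predict all of them and simply report the column you care about.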

Hi Jason

Do you recommend using XGBoost for multi-step forecast? And what strategy would be appropriate?

Thank you!!!

Hi Zhi…I do not recommend any strategy in general. I recommend investigating several for a given application and comparing results. You may be surprised at the performance of a given strategy depending upon the data available for training.

Hi,

I have a question regarding time series forecasting. Can we predict future values with DeepAR algorithm which are some time ahead of the values for which the model was trained without providing the intermediate values?

e.g., the model was trained till December 2021 and I want to predict/forecast values for the month of June 2022. Do I have to provide all the values for the months in between (Jan, Feb, March, April and May)? Or do I just have to provide the values for the context length, which is e.g. one month (May 2022)?

If it does provide the predictions, then kindly let me know how it works.

Thanks a lot

Thank you for the effort you put in writing this beneficial post.

Is it possible to make some manipulation to one of the series in the multivariate time series before making forecast with the aim of expecting the modification to be propagated in the result of the forecast?

Once again, thank you!

Hi Jason,

first of all thank you for your helpful article.

I have a task where I should predict future inflation using ML-models with a recursive scheme and distinguish the results for 1-,2-,3-, and 4-step forecasts. My training data consists of 81 observations and 100 regressors/features and my testing set of 24 observations and 100 regressors/features.

I understand the 1-step ahead forecast with an expanding window, where I use initially the first 81 obs in the training set to predict obs. 82 (first forecast) and then use the first actual 82 obs to predict obs. 83 (second forecast) and so on.

But how does this work in the 2-step forecast? I also initially use the first 81 obs in the training set to predict obs. 82 and 83 for the first forecast. But what do I do for the second forecast? Using the first actual 82 obs to predict obs. 83 and 84 (would have predicted obs. 83 then twice) or using the first actual 83 obs. to predict obs. 84 & 85.

Hope my questions got clear.

Cheers,

Tom

Hi Tom…You are very welcome! We recommend that deep learning techniques be used for such a purpose:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

Hi, thanks for the post. Could you share any sample code for all the multi-step forecasting strategies: Direct strategy, Recursive multi-step forecasting strategy, and Multiple-output forecasting strategy?

Hi Divya…The following resource provides many examples that will get you started on your own projects.

https://machinelearningmastery.com/deep-learning-for-time-series-forecasting/

Hello, please how do you handle imbalance in this case? I tried using RandomOverSampler and SMOTE, but it says it is not for multi-class or multiple outputs.

I’m working on forest fire. And I’m predicting 5 steps ahead

Dear Jason,

I’m having trouble with the terminology of multi-step ahead forecasting and strategies. For example, I have a dataset that contains hourly measurements, and I want to make a prediction of 4 points by using LSTMs to compare with the actual ones.

So, if I want to use the Direct Multi-step Forecast Strategy, am I going to make a one-step ahead prediction for each next point?

X_test[0] for t+1,

X_test[1] for t+2,

X_test[2] for t+3,

X_test[3] for t+4

If I also want to apply the Multiple Output Strategy, am I going to create my model as Dense(1) or Dense(4)?

In other words, let’s assume that for the above model, all X_test elements contain 10 measurements. For the Multiple Output Strategy, am I going to create samples again for 10, or should I create for 40? I’m getting lost here:

model.predict(X_test[0], X_test[1], X_test[2], X_test[3]) ->t+1,t+2,t+3,t+4 (elements contains 10 for each and Dense(1), 1 output for each X_test variables)

or

model.predict(X_test[0]) ->t+1,t+2,t+3,t+4 (X_test[0] contains 40 measurements for all the X_test variables above and Dense(4))

Which strategy should I follow? I’m really confused about that.

If you could help me with that, I’d really appreciate it.

Best.

Hi New_Learner…The following resource may be of interest:

https://machinelearningmastery.com/multivariate-time-series-forecasting-lstms-keras/
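As a sketch of how the data could be framed for the two strategies in the question: each sample keeps the same 10 input measurements, and the target for that sample is the next 4 values. The helper name and the stand-in series are assumptions.

```python
def make_multi_step_samples(series, n_in, n_out):
    """Each sample: n_in past observations -> the next n_out observations."""
    X, y = [], []
    for i in range(len(series) - n_in - n_out + 1):
        X.append(series[i:i + n_in])
        y.append(series[i + n_in:i + n_in + n_out])
    return X, y


series = list(range(1, 21))                  # 1..20 as stand-in hourly measurements
X, y = make_multi_step_samples(series, n_in=10, n_out=4)

# Multiple output strategy: one model trained on (X, y) with 4 outputs.
# Direct strategy: four models, where model h is trained on (X, column h of y).
direct_targets = [[row[h] for row in y] for h in range(4)]

print(X[0], "->", y[0])
print(direct_targets[0])
```

Under this framing the Multiple Output Strategy corresponds to a single model ending in a 4-unit output layer, such as Dense(4), called once per input window. The Direct strategy corresponds to four single-output models that all receive the same input window.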