In this post you will discover 4 recipes for non-linear regression in R.

There are many advanced methods you can use for non-linear regression, and these recipes are but a sample of the methods you could use.

Kick-start your project with my new book Machine Learning Mastery With R, including step-by-step tutorials and the R source code files for all examples.

Let’s get started.

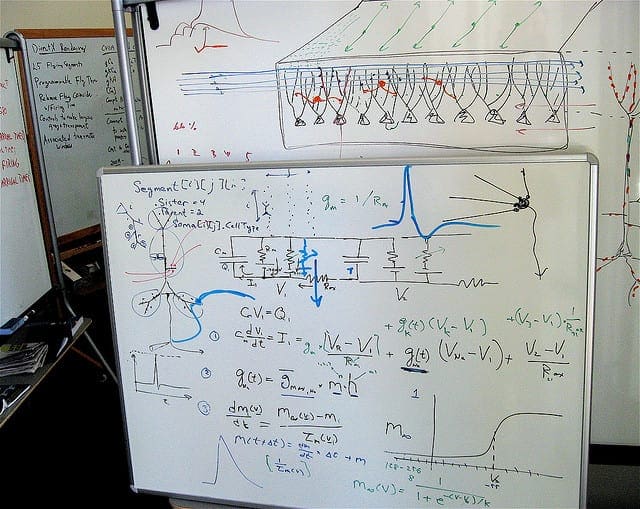

Non-Linear Regression

Photo by Steve Jurvetson, some rights reserved

Each example in this post uses the longley dataset provided in the datasets package that comes with R. The longley dataset describes 7 economic variables observed yearly from 1947 to 1962. Six of them are used as inputs to predict the seventh, the number of people employed.
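As a quick orientation, the following sketch inspects the dataset before any modeling. It uses only base R; the comments describe the dataset as it ships with R.

```r
# load the dataset shipped with R's datasets package
data(longley)

# 16 yearly observations (1947 to 1962) and 7 columns
dim(longley)

# column names; Employed (column 7) is the value being predicted
names(longley)

# first few rows
head(longley)
```

The recipes that index columns by position (for example `longley[,1:6]` and `longley[,7]`) rely on this column order.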

Multivariate Adaptive Regression Splines

Multivariate Adaptive Regression Splines (MARS) is a non-parametric regression method that models multiple nonlinearities in data using hinge functions (functions with a kink in them).

```r
# load the package
library(earth)
# load data
data(longley)
# fit model
fit <- earth(Employed~., longley)
# summarize the fit
summary(fit)
# summarize the importance of input variables
evimp(fit)
# make predictions
predictions <- predict(fit, longley)
# summarize accuracy
mse <- mean((longley$Employed - predictions)^2)
print(mse)
```

Learn more about the earth function and the earth package.
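To make the idea of a hinge function concrete, here is a minimal base R sketch. The function names `hinge` and `hinge_mirror`, the knot location, and the coefficients are all made up for illustration; they are not taken from the earth package or from a fitted model.

```r
# hinge function: zero up to the knot, then linear (the "kink" is at the knot)
hinge <- function(x, knot) pmax(0, x - knot)

# mirrored hinge: linear below the knot, zero after it
hinge_mirror <- function(x, knot) pmax(0, knot - x)

x <- seq(0, 10, by = 2)    # 0 2 4 6 8 10
hinge(x, knot = 4)         # 0 0 0 2 4 6
hinge_mirror(x, knot = 4)  # 4 2 0 0 0 0

# a MARS-style model is a weighted sum of such terms;
# the intercept and coefficients here are invented for illustration
y_hat <- 1.5 + 0.8 * hinge(x, 4) - 0.3 * hinge_mirror(x, 4)
print(y_hat)
```

Each hinge term contributes only on one side of its knot, which is how a sum of them can follow several changes of slope in the data.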

Support Vector Machine

Support Vector Machines (SVM) are a class of methods, developed originally for classification, that find support points that best separate classes. SVM for regression is called Support Vector Regression (SVR).

```r
# load the package
library(kernlab)
# load data
data(longley)
# fit model
fit <- ksvm(Employed~., longley)
# summarize the fit
summary(fit)
# make predictions
predictions <- predict(fit, longley)
# summarize accuracy
mse <- mean((longley$Employed - predictions)^2)
print(mse)
```

Learn more about the ksvm function and the kernlab package.
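One way to see how regression with support vectors differs from ordinary least squares is the epsilon-insensitive loss that SVR formulations commonly use: errors inside a tolerance band are ignored. The sketch below is base R only; the function name `eps_insensitive`, the tolerance, and the sample values are assumptions chosen for illustration, not part of kernlab.

```r
# epsilon-insensitive loss: errors smaller than eps cost nothing,
# larger errors are penalised linearly by how far they exceed eps
eps_insensitive <- function(actual, predicted, eps = 0.1) {
  pmax(0, abs(actual - predicted) - eps)
}

# made-up values for illustration
actual    <- c(60.0, 61.0, 62.0)
predicted <- c(60.05, 61.5, 61.0)

# approximately 0, 0.4 and 0.9: the first error falls inside the band
loss <- eps_insensitive(actual, predicted, eps = 0.1)
print(loss)
```

Only the observations whose errors reach the edge of the band or fall outside it influence the fitted function, which is what makes them support points.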

k-Nearest Neighbor

The k-Nearest Neighbor (kNN) method does not create a model. Instead, it makes predictions on demand from the training data closest to the point being predicted. A similarity measure (such as Euclidean distance) is used to locate the close data.

```r
# load the package
library(caret)
# load data
data(longley)
# fit model
fit <- knnreg(longley[,1:6], longley[,7], k=3)
# summarize the fit
summary(fit)
# make predictions
predictions <- predict(fit, longley[,1:6])
# summarize accuracy
mse <- mean((longley$Employed - predictions)^2)
print(mse)
```

Learn more about the knnreg function and the caret package.
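To show what "predictions from close data" means, here is a minimal base R sketch of kNN regression for a single query row. The helper `knn_predict` is hypothetical and written for illustration; it is not how caret implements `knnreg`.

```r
data(longley)
x <- as.matrix(longley[, 1:6])  # predictors
y <- longley[, 7]               # Employed

# predict one query row as the mean response of its k nearest training rows
knn_predict <- function(query, x, y, k = 3) {
  # Euclidean distance from the query to every training row
  d <- sqrt(rowSums(sweep(x, 2, query)^2))
  # indices of the k closest rows
  nearest <- order(d)[1:k]
  mean(y[nearest])
}

# predict the first observation using the data set itself
knn_predict(x[1, ], x, y, k = 3)
```

Because the distance is computed on the raw columns, inputs with large values dominate it; scaling the inputs first is a common choice when that is not wanted.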

Neural Network

A Neural Network (NN) is a graph of computational units that receive inputs and transform them into an output that is passed on. The units are ordered into layers to connect the features of an input vector to the features of an output vector. Using a training procedure such as the back-propagation algorithm, a neural network can learn to model the underlying relationship in data.

```r
# load the package
library(nnet)
# load data
data(longley)
x <- longley[,1:6]
y <- longley[,7]
# fit model
fit <- nnet(Employed~., longley, size=12, maxit=500, linout=TRUE, decay=0.01)
# summarize the fit
summary(fit)
# make predictions
predictions <- predict(fit, x, type="raw")
# summarize accuracy
mse <- mean((y - predictions)^2)
print(mse)
```

Learn more about the nnet function and the nnet package.
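The layered structure described above can be sketched as a single forward pass in base R. This is an illustration under stated assumptions, not the nnet implementation: the function names, the layer sizes, and the random weights are all made up, and no training takes place.

```r
# logistic activation used by the hidden units in this sketch
sigmoid <- function(z) 1 / (1 + exp(-z))

# forward pass: input vector -> hidden layer -> single linear output
forward <- function(x, W1, b1, W2, b2) {
  # each hidden unit transforms a weighted sum of the inputs
  hidden <- sigmoid(W1 %*% x + b1)
  # linear output unit, the usual choice for regression
  as.numeric(W2 %*% hidden + b2)
}

set.seed(1)
n_in <- 6
n_hidden <- 3
W1 <- matrix(rnorm(n_hidden * n_in), n_hidden, n_in)  # made-up weights
b1 <- rnorm(n_hidden)
W2 <- matrix(rnorm(n_hidden), 1, n_hidden)
b2 <- rnorm(1)

x_in <- rep(0.5, n_in)  # a made-up, already scaled input vector
forward(x_in, W1, b1, W2, b2)
```

Training adjusts `W1`, `b1`, `W2` and `b2` so that the output of this pass moves closer to the observed values.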

Summary

In this post you discovered 4 non-linear regression methods with recipes that you can copy-and-paste for your own problems.

For more information, see Chapter 7 of Applied Predictive Modeling by Kuhn and Johnson, which provides an excellent introduction to non-linear regression with R for beginners.

Hi Jason

Just joined your seemingly fantastic course in R and machine learning.

I want to practise it with a colleague and therefore I will ask you if it is possible to take the 14 courses in a day or two by saving your course emails – or should we take one email course and finish it before we receive the next one?

Regards

Finn Gilling

finn@gilling.com

I have electricity consumption data for 2 days. I want to train an SVR model using this data and predict for the next 1 day only, but R predicts for 2 days instead of one. Basically, I want to train the model using more data but predict fewer values.

Hi Akash, I think this may be how you are framing your problem rather than SVR.

Perhaps reconsider how you have your data structure for the problem?

Hello,

I have a question about MARS. Say I have 50 observations from 5 sensors with 5 signals, and I try to do regression with MARS. I found that the model eliminates the 5th sensor's readings, as they are so near to each other. So the model is a function of 4 sensor variables, is not affected by the 5th one, and I use this model for prediction. But if, suddenly and for any reason, I get an observation where the 5th sensor's reading is much higher than anything I had before, the model will not sense it, even though this indicates a fault. So now I wonder what I should do so that the model at least senses that there is a problem.

Thanks in advance,

Ramy

Perhaps try scaling (standardizing or normalizing) the data prior to fitting the model?

Perhaps try a suite of methods in addition to MARS?

Thanks for your response, but there are still some variables that are not included in the model. In addition, I think MARS only deals with data within the range of the training data: if a new observation falls beyond that region, the response stays the same and nothing changes.

Thank you.

But why don't you use training and testing or validation sets for the neural network? Is it not necessary when building a neural network?

Sorry, I don’t understand. Can you elaborate please?

Hi,

I wonder why you did not divide your dataset in two (for example, training data (70%) and testing data (30%)) to validate the regression model, especially for neural networks.

To keep the examples simple, i.e. brevity.

thank you for replying

You’re welcome.

Thank you Jason ,

one question about neural network

linear output =TRUE ? is it for regression ?

linear output = FALSE , is it for classification ?

knowing that I am working on predictive models, using regression by neural network

I recommend checking the documentation for the function.

Good job

I want to ask a question about the neuralnet package. I can only find training and testing; there is no validation in the function. How do I validate the model, or is it sufficient to use only training and testing with the neuralnet package?

in my case training(92%) testing(83%)

Thank you

Perhaps check the documentation for the package?

Another choice could be Locally Weighted Regression!

Great tip, thanks.