Model evaluation involves using the available dataset to fit a model and estimate its performance when making predictions on unseen examples.

It is a challenging problem as both the training dataset used to fit the model and the test set used to evaluate it must be sufficiently large and representative of the underlying problem so that the resulting estimate of model performance is not too optimistic or pessimistic.

The two most common approaches used for model evaluation are the train/test split and the k-fold cross-validation procedure. Both approaches can be very effective in general, although they can produce misleading results and potentially fail when used on classification problems with a severe class imbalance. Instead, the techniques must be modified to stratify the sampling by the class label, called a stratified train-test split or stratified k-fold cross-validation.

In this tutorial, you will discover how to evaluate classifier models on imbalanced datasets.

After completing this tutorial, you will know:

- The challenge of evaluating classifiers on datasets using train/test splits and cross-validation.

- How a naive application of k-fold cross-validation and train-test splits will fail when evaluating classifiers on imbalanced datasets.

- How modified k-fold cross-validation and train-test splits can be used to preserve the class distribution in the dataset.

Let’s get started.

How to Use k-Fold Cross-Validation for Imbalanced Classification

Tutorial Overview

This tutorial is divided into three parts; they are:

- Challenge of Evaluating Classifiers

- Failure of k-Fold Cross-Validation

- Fix Cross-Validation for Imbalanced Classification

Challenge of Evaluating Classifiers

Evaluating a classification model is challenging because we won’t know how good a model is until it is used.

Instead, we must estimate the performance of a model using available data where we already have the target or outcome.

Model evaluation involves more than just evaluating a model; it includes testing different data preparation schemes, different learning algorithms, and different hyperparameters for well-performing learning algorithms.

- Model = Data Preparation + Learning Algorithm + Hyperparameters

Ideally, the model construction procedure (data preparation, learning algorithm, and hyperparameters) with the best score (with your chosen metric) can be selected and used.
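As a minimal sketch of this idea, the three components can be bundled into a single scikit-learn Pipeline so that they are fit and evaluated together. The choice of StandardScaler, LogisticRegression, and the value C=1.0 are assumptions made purely for illustration; they are not recommendations from this tutorial.

```python
# a minimal sketch: a "model" as data preparation + learning algorithm + hyperparameters
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# small synthetic dataset, used only for illustration
X, y = make_classification(n_samples=200, n_features=5, random_state=1)

# data preparation (scaling) + learning algorithm (logistic regression) + hyperparameter (C)
model = Pipeline(steps=[
    ('scale', StandardScaler()),
    ('clf', LogisticRegression(C=1.0)),
])

# fit the whole construction procedure as one unit and make a few predictions
model.fit(X, y)
print(model.predict(X[:5]))
```

Treating the whole pipeline as the unit under evaluation means that any change to the data preparation or hyperparameters is scored in the same way as a change of algorithm.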

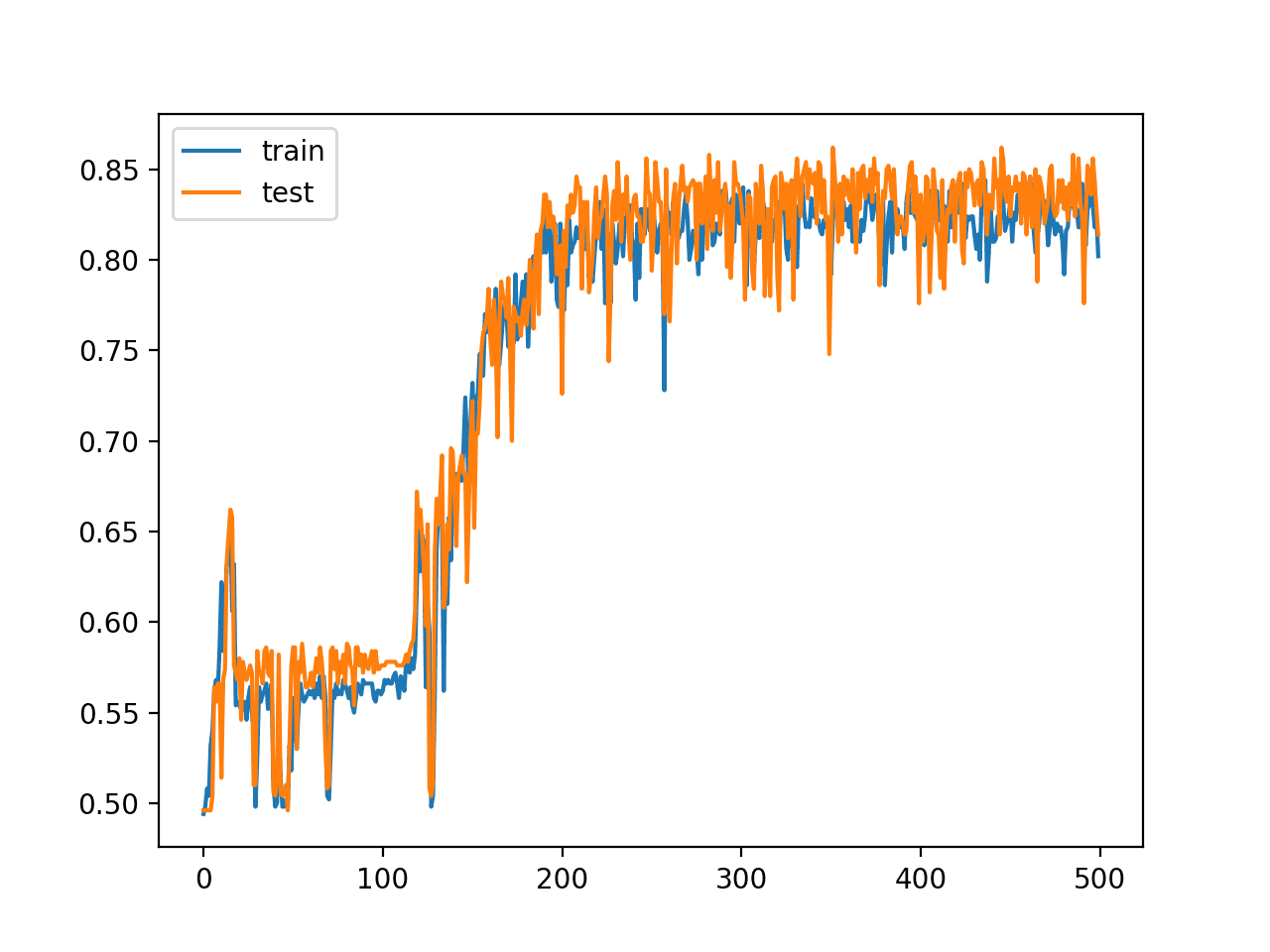

The simplest model evaluation procedure is to split a dataset into two parts and use one part for training a model and the second part for testing the model. As such, the parts of the dataset are named for their function, train set and test set respectively.

This is effective if your collected dataset is very large and representative of the problem. The number of examples required will differ from problem to problem, but may be thousands, hundreds of thousands, or millions of examples to be sufficient.

A split of 50/50 for train and test would be ideal, although more skewed splits are common, such as 67/33 or 80/20 for train and test sets.

We rarely have enough data to get an unbiased estimate of performance using a train/test split evaluation of a model. Instead, we often have a much smaller dataset than would be preferred, and resampling strategies must be used on this dataset.

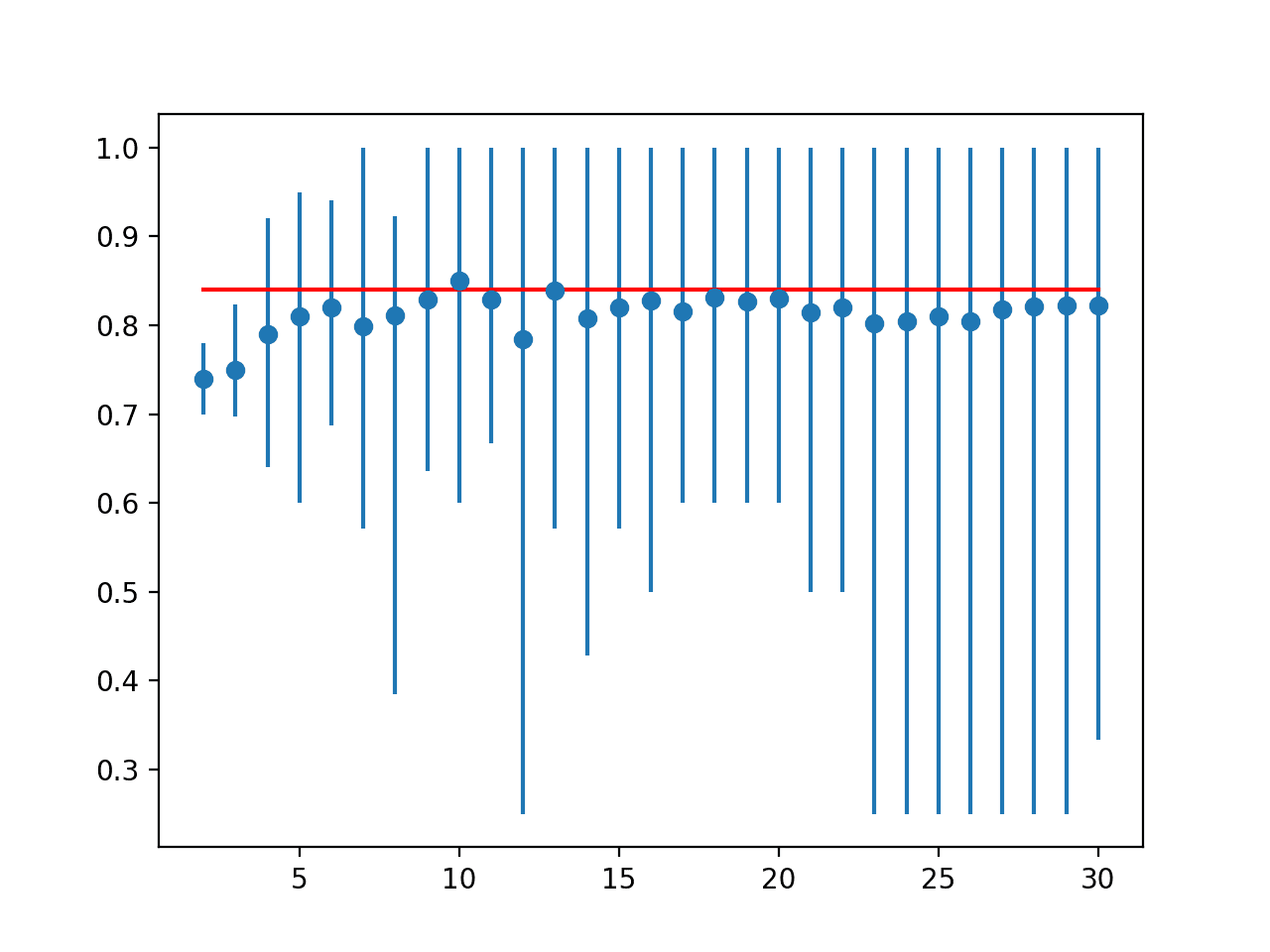

The most used model evaluation scheme for classifiers is the 10-fold cross-validation procedure.

The k-fold cross-validation procedure involves splitting the training dataset into k folds. The first k-1 folds are used to train a model, and the holdout kth fold is used as the test set. This process is repeated and each of the folds is given an opportunity to be used as the holdout test set. A total of k models are fit and evaluated, and the performance of the model is calculated as the mean of these runs.

The procedure has been shown to give a less optimistic estimate of model performance on small training datasets than a single train/test split. A value of k=10 has been shown to be effective across a wide range of dataset sizes and model types.
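The procedure can be sketched with the scikit-learn KFold class and the cross_val_score() function. The balanced synthetic dataset, the LogisticRegression model, and the accuracy metric below are assumptions chosen only to keep the example small.

```python
# a minimal sketch of 10-fold cross-validation on a balanced dataset
from numpy import mean, std
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, cross_val_score

# balanced synthetic dataset, used only for illustration
X, y = make_classification(n_samples=1000, random_state=1)

# the model to evaluate (an arbitrary choice for this sketch)
model = LogisticRegression(max_iter=1000)

# k=10 folds, shuffled before splitting
cv = KFold(n_splits=10, shuffle=True, random_state=1)

# fit and evaluate k models, one per held-out fold
scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv)

# report the mean and standard deviation of the k scores
print('Accuracy: %.3f (%.3f)' % (mean(scores), std(scores)))
```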

Failure of k-Fold Cross-Validation

Sadly, standard k-fold cross-validation is not appropriate for evaluating classifiers on imbalanced datasets.

A 10-fold cross-validation, in particular, the most commonly used error-estimation method in machine learning, can easily break down in the case of class imbalances, even if the skew is less extreme than the one previously considered.

— Page 188, Imbalanced Learning: Foundations, Algorithms, and Applications, 2013.

The reason is that the data is split into k-folds with a uniform probability distribution.

This might work fine for data with a balanced class distribution, but when the distribution is severely skewed, it is likely that one or more folds will have few or no examples from the minority class. This means that some or perhaps many of the model evaluations will be misleading, as the model need only predict the majority class correctly.

We can make this concrete with an example.

First, we can define a dataset with a roughly 1:100 minority to majority class distribution.

This can be achieved using the make_classification() function for creating a synthetic dataset, specifying the number of examples (1,000), the number of classes (2), and the weighting of each class (99% and 1%).

    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)

The example below generates the synthetic binary classification dataset and summarizes the class distribution.

    # create a binary classification dataset
    from numpy import unique
    from sklearn.datasets import make_classification
    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
    # summarize dataset
    classes = unique(y)
    total = len(y)
    for c in classes:
        n_examples = len(y[y==c])
        percent = n_examples / total * 100
        print('> Class=%d : %d/%d (%.1f%%)' % (c, n_examples, total, percent))

Running the example creates the dataset and summarizes the number of examples in each class.

Setting the random_state argument ensures that we get the same randomly generated examples each time the code is run.

    > Class=0 : 990/1000 (99.0%)
    > Class=1 : 10/1000 (1.0%)

A total of 10 examples in the minority class is not many. If we used 10 folds, we would get one minority example in each fold in the ideal case, which is too few to evaluate a model reliably. For demonstration purposes, we will use 5 folds.

In the ideal case, we would have 10/5 or two minority examples in each fold, meaning 4*2 (8) minority examples in each training dataset and 1*2 (2) in each test dataset.
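We can also put a rough number on how likely a bad fold is. As a sketch, assume a single fold of 200 examples is drawn at random without replacement from the 1,000 examples, 10 of which belong to the minority class. Under that assumption, the chance that the fold contains no minority examples follows the hypergeometric distribution. The function name prob_no_minority() is hypothetical and used only for illustration.

```python
# probability that one randomly drawn fold contains no minority examples
from math import comb

def prob_no_minority(n_total, n_minority, fold_size):
    # hypothetical helper: hypergeometric probability of drawing zero minority examples
    return comb(n_total - n_minority, fold_size) / comb(n_total, fold_size)

# 1,000 examples, 10 in the minority class, 5 folds of 200 examples each
p = prob_no_minority(n_total=1000, n_minority=10, fold_size=200)
print('P(no minority examples in a given fold) = %.3f' % p)
```

Under these assumptions the probability is roughly one in ten for any single fold, so a test fold with no minority examples is not a rare event when five folds are drawn.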

First, we will use the KFold class to randomly split the dataset into 5 folds and check the composition of each train and test set. The complete example is listed below.

    # example of k-fold cross-validation with an imbalanced dataset
    from sklearn.datasets import make_classification
    from sklearn.model_selection import KFold
    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
    kfold = KFold(n_splits=5, shuffle=True, random_state=1)
    # enumerate the splits and summarize the distributions
    for train_ix, test_ix in kfold.split(X):
        # select rows
        train_X, test_X = X[train_ix], X[test_ix]
        train_y, test_y = y[train_ix], y[test_ix]
        # summarize train and test composition
        train_0, train_1 = len(train_y[train_y==0]), len(train_y[train_y==1])
        test_0, test_1 = len(test_y[test_y==0]), len(test_y[test_y==1])
        print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (train_0, train_1, test_0, test_1))

Running the example creates the same dataset and enumerates each split of the data, showing the class distribution for both the train and test sets.

We can see that in this case, there are some splits that have the expected 8/2 split for train and test sets, and others that are much worse, such as 6/4 (optimistic) and 10/0 (pessimistic).

Evaluating a model on these splits of the data would not give a reliable estimate of performance.

    >Train: 0=791, 1=9, Test: 0=199, 1=1
    >Train: 0=793, 1=7, Test: 0=197, 1=3
    >Train: 0=794, 1=6, Test: 0=196, 1=4
    >Train: 0=790, 1=10, Test: 0=200, 1=0
    >Train: 0=792, 1=8, Test: 0=198, 1=2
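To see why such splits give unreliable estimates, we can evaluate a model that has learned nothing. The sketch below assumes a DummyClassifier that always predicts the majority class and scores it with classification accuracy on each of the same 5 folds. The use of accuracy as the metric is an assumption made for illustration only.

```python
# evaluate a majority-class model on unstratified folds of an imbalanced dataset
from sklearn.datasets import make_classification
from sklearn.dummy import DummyClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import KFold

# generate 2 class dataset
X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
kfold = KFold(n_splits=5, shuffle=True, random_state=1)

accs = []
for train_ix, test_ix in kfold.split(X):
    # a "model" that always predicts the most frequent class in the training fold
    model = DummyClassifier(strategy='most_frequent')
    model.fit(X[train_ix], y[train_ix])
    yhat = model.predict(X[test_ix])
    # accuracy depends only on how many minority examples landed in the test fold
    acc = accuracy_score(y[test_ix], yhat)
    accs.append(acc)
    print('>Accuracy: %.3f, minority examples in test: %d' % (acc, int(y[test_ix].sum())))
```

The score of this no-skill model changes from fold to fold only because the number of minority examples in each test fold changes, which is exactly the variation that stratification removes.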

We can demonstrate a similar issue exists if we use a simple train/test split of the dataset, although the issue is less severe.

We can use the train_test_split() function to create a 50/50 split of the dataset and, on average, we would expect five examples from the minority class to appear in each dataset if we performed this split many times.

The complete example is listed below.

    # example of train/test split with an imbalanced dataset
    from sklearn.datasets import make_classification
    from sklearn.model_selection import train_test_split
    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
    # split into train/test sets at random (no stratification)
    trainX, testX, trainy, testy = train_test_split(X, y, test_size=0.5, random_state=2)
    # summarize
    train_0, train_1 = len(trainy[trainy==0]), len(trainy[trainy==1])
    test_0, test_1 = len(testy[testy==0]), len(testy[testy==1])
    print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (train_0, train_1, test_0, test_1))

Running the example creates the same dataset as before and splits it into a random train and test split.

In this case, we can see only three examples of the minority class are present in the training set, with seven in the test set.

Evaluating a model on this split would give it too few minority examples to learn from and a disproportionate share to be evaluated on, which would likely result in poor performance. You can imagine how the situation could be worse with an even more severe random split.

    >Train: 0=497, 1=3, Test: 0=493, 1=7
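A single seed shows only one possible outcome. As a rough sketch, we can repeat the same unstratified 50/50 split with many different seeds and tally how many minority examples land in the training set each time. The choice of 100 repeats is arbitrary and an assumption of this example.

```python
# tally minority-class counts in the training set across many random 50/50 splits
from collections import Counter
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

# generate 2 class dataset
X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)

counts = Counter()
for seed in range(100):
    # unstratified split with a different seed each time
    _, _, trainy, _ = train_test_split(X, y, test_size=0.5, random_state=seed)
    counts[int(trainy.sum())] += 1

# report how often each minority count occurred in the training set
for n_minority in sorted(counts):
    print('>minority in train=%d: %d of 100 splits' % (n_minority, counts[n_minority]))
```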

Fix Cross-Validation for Imbalanced Classification

The solution is to not split the data purely at random when using k-fold cross-validation or a train-test split.

Specifically, we can split a dataset randomly, although in a way that maintains the same class distribution in each subset. This is called stratification or stratified sampling, and the target variable (y), the class, is used to control the sampling process.

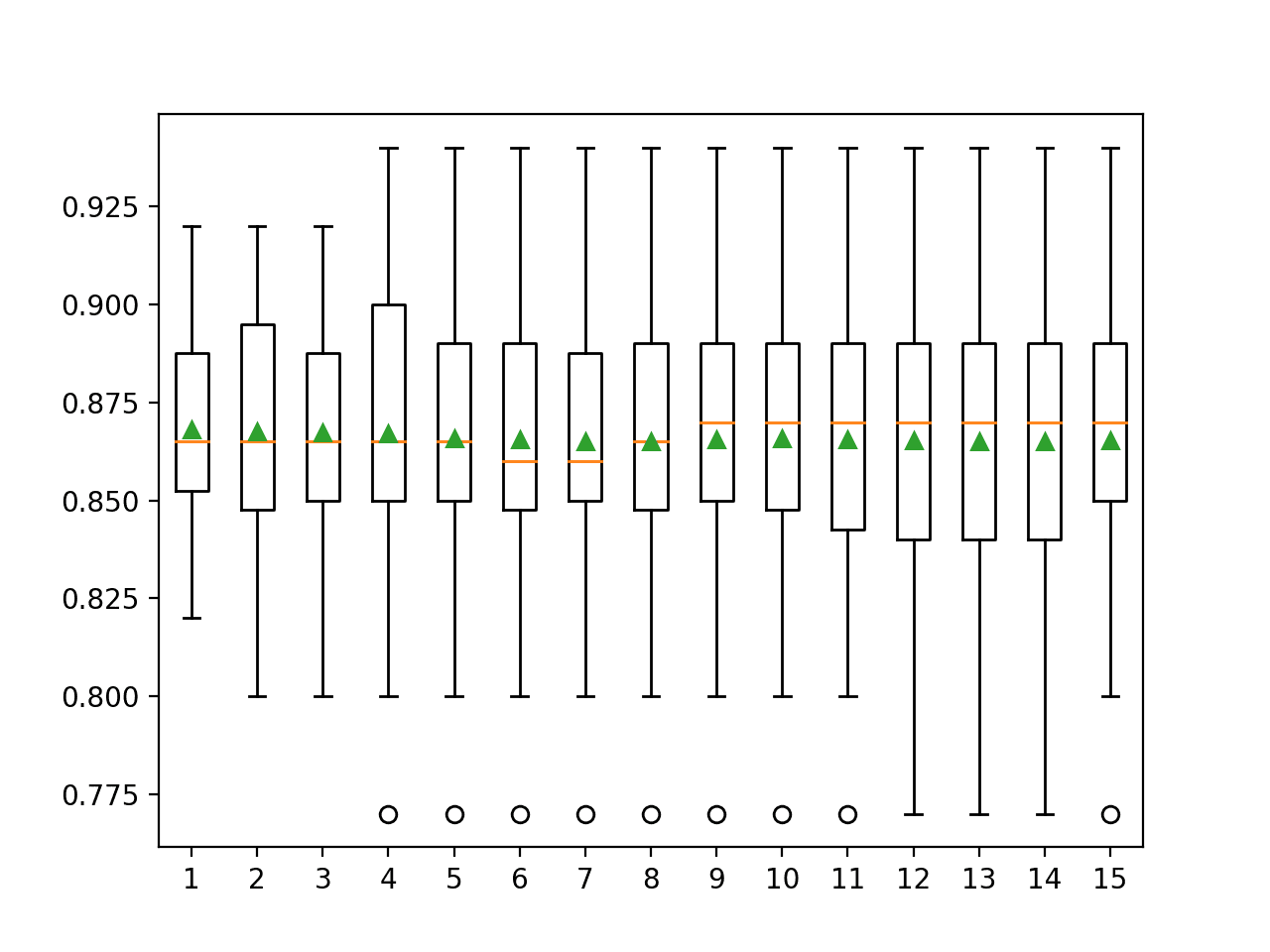

For example, we can use a version of k-fold cross-validation that preserves the imbalanced class distribution in each fold. It is called stratified k-fold cross-validation and will enforce the class distribution in each split of the data to match the distribution in the complete training dataset.

… it is common, in the case of class imbalances in particular, to use stratified 10-fold cross-validation, which ensures that the proportion of positive to negative examples found in the original distribution is respected in all the folds.

— Page 205, Imbalanced Learning: Foundations, Algorithms, and Applications, 2013.

We can make this concrete with an example.

We can stratify the splits using the StratifiedKFold class that supports stratified k-fold cross-validation as its name suggests.

Below is the same dataset and the same example with the stratified version of cross-validation.

    # example of stratified k-fold cross-validation with an imbalanced dataset
    from sklearn.datasets import make_classification
    from sklearn.model_selection import StratifiedKFold
    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
    kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
    # enumerate the splits and summarize the distributions
    for train_ix, test_ix in kfold.split(X, y):
        # select rows
        train_X, test_X = X[train_ix], X[test_ix]
        train_y, test_y = y[train_ix], y[test_ix]
        # summarize train and test composition
        train_0, train_1 = len(train_y[train_y==0]), len(train_y[train_y==1])
        test_0, test_1 = len(test_y[test_y==0]), len(test_y[test_y==1])
        print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (train_0, train_1, test_0, test_1))

Running the example generates the dataset as before and summarizes the class distribution for the train and test sets for each split.

In this case, we can see that each split matches what we expected in the ideal case.

Each of the examples in the minority class is given one opportunity to be used in a test set, and each train and test set for each split of the data has the same class distribution.

    >Train: 0=792, 1=8, Test: 0=198, 1=2
    >Train: 0=792, 1=8, Test: 0=198, 1=2
    >Train: 0=792, 1=8, Test: 0=198, 1=2
    >Train: 0=792, 1=8, Test: 0=198, 1=2
    >Train: 0=792, 1=8, Test: 0=198, 1=2

This example highlights the need to first select a value of k for k-fold cross-validation to ensure that there are a sufficient number of examples in the train and test sets to fit and evaluate a model (two examples from the minority class in the test set is probably too few for a test set).

It also highlights the requirement to use stratified k-fold cross-validation with imbalanced datasets to preserve the class distribution in the train and test sets for each evaluation of a given model.
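In practice, the stratified splitter is usually handed to an evaluation function rather than enumerated by hand. The sketch below passes a StratifiedKFold object to cross_val_score(). The LogisticRegression model and the ROC AUC metric are assumptions chosen for illustration, not part of the examples above.

```python
# evaluate a model with stratified 5-fold cross-validation on an imbalanced dataset
from numpy import mean
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# generate 2 class dataset
X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)

# stratified splitter: every test fold receives its share of the minority class
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# the model and metric are arbitrary choices for this sketch
model = LogisticRegression(max_iter=1000)
scores = cross_val_score(model, X, y, scoring='roc_auc', cv=cv)
print('Mean ROC AUC: %.3f' % mean(scores))
```

Because every test fold contains both classes, a metric such as ROC AUC can be computed on each fold, which is not guaranteed with an unstratified split of this dataset.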

We can also use a stratified version of a train/test split.

This can be achieved by setting the “stratify” argument on the call to train_test_split() and setting it to the “y” variable containing the target variable from the dataset. From this, the function will determine the desired class distribution and ensure that the train and test sets both have this distribution.

We can demonstrate this with a worked example, listed below.

    # example of stratified train/test split with an imbalanced dataset
    from sklearn.datasets import make_classification
    from sklearn.model_selection import train_test_split
    # generate 2 class dataset
    X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.99, 0.01], flip_y=0, random_state=1)
    # split into train/test sets with same class ratio
    trainX, testX, trainy, testy = train_test_split(X, y, test_size=0.5, random_state=2, stratify=y)
    # summarize
    train_0, train_1 = len(trainy[trainy==0]), len(trainy[trainy==1])
    test_0, test_1 = len(testy[testy==0]), len(testy[testy==1])
    print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (train_0, train_1, test_0, test_1))

Running the example creates a random split of the dataset into training and test sets, ensuring that the class distribution is preserved, in this case leaving five examples in each dataset.

    >Train: 0=495, 1=5, Test: 0=495, 1=5

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Books

- Imbalanced Learning: Foundations, Algorithms, and Applications, 2013.

API

- sklearn.model_selection.KFold API.

- sklearn.model_selection.StratifiedKFold API.

- sklearn.model_selection.train_test_split API.

Summary

In this tutorial, you discovered how to evaluate classifier models on imbalanced datasets.

Specifically, you learned:

- The challenge of evaluating classifiers on datasets using train/test splits and cross-validation.

- How a naive application of k-fold cross-validation and train-test splits will fail when evaluating classifiers on imbalanced datasets.

- How modified k-fold cross-validation and train-test splits can be used to preserve the class distribution in the dataset.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Thank you!

You’re welcome!

As always, KUDOS!

Thanks!

train_X, test_y = X[train_ix], X[test_ix] should be train_X, test_X = X[train_ix], X[test_ix]

Thanks, fixed!

Hello, Great article. Thanks.

My problem is that in my original dataset, I have 1% response so I have undersampled it to make it 50% responders and 50% non responders. After this I have done the k-fold validation. Is it incorrect to do validation on sampled data (not real data)? Also, I am holding out another 20% real data (non sampled) as test dataset.

No.

The resampling must happen within the cross-validation folds. See examples here:

https://machinelearningmastery.com/smote-oversampling-for-imbalanced-classification/

Thanks. Even in that example the SMOTE and undersampling of majority class is done before stratified k-fold validation is applied on X and y. This means the validation is done on balanced data. Please correct if I am wrong. Thanks again.

Not true.

We use a pipeline to ensure that data sampling occurs within the cross-validation procedure.

Perhaps re-read the tutorial?

Thanks for the tutorial.. 🙂

Okay… Suppose I have a binary classification problem {0:500, 1:150}. If random undersampling is done, I get {0:150, 1:150}.

In this case no data leakage will occur if I do cross validation on sampled data . Right? Because, it is not oversampled with same copies or synthesized, just removed some values randomly ..

So is it okay to do random undersampling first and then do cross validation like normal unsampled data?

No, it is invalid. You will have changed the composition of the test sets.

Are there cases where it makes sense to use a combination of stratified sampling during train_test_split *and* oversampling or undersampling?

And if so, is the idea to split the data first, then apply the oversampling? And in that case, should the oversampling be applied only to the training data?

Hi Daniel…The following resource will hopefully give you some ideas to consider:

https://machinelearningmastery.com/training-validation-test-split-and-cross-validation-done-right/

According to the example, this is the pipeline:

steps = [('over', over), ('under', under), ('model', model)]

pipeline = Pipeline(steps=steps)

and then this step does the cross validation:

scores = cross_val_score(pipeline, X, y, scoring='roc_auc', cv=cv, n_jobs=-1)

Doesn’t this mean that the pipeline is applied to the original dataset (X and y), which means first oversampling by SMOTE, then random undersampling, and then the model is applied and validated with the ROC curve?

Yes, but the over/under sampling is applied WITHIN each fold of the cross-validation.
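To make "within each fold" concrete, here is a hand-rolled sketch that uses only NumPy and scikit-learn. It is a stand-in for the pipeline in the linked tutorial, not a copy of it: it assumes simple random undersampling of the majority class instead of SMOTE, a 9:1 synthetic dataset, and a LogisticRegression model, all chosen for illustration. The key point is that resampling touches the training fold only.

```python
# random undersampling applied inside each cross-validation fold (training data only)
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold

# imbalanced synthetic dataset (assumed 9:1 for this sketch)
X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.9, 0.1], flip_y=0, random_state=1)

rng = np.random.default_rng(1)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
scores = []
for train_ix, test_ix in cv.split(X, y):
    train_X, train_y = X[train_ix], y[train_ix]
    # undersample the majority class in the training fold to match the minority count
    minority_ix = np.where(train_y == 1)[0]
    majority_ix = np.where(train_y == 0)[0]
    keep_ix = rng.choice(majority_ix, size=len(minority_ix), replace=False)
    sample_ix = np.concatenate([minority_ix, keep_ix])
    # fit on the resampled training fold
    model = LogisticRegression(max_iter=1000)
    model.fit(train_X[sample_ix], train_y[sample_ix])
    # evaluate on the untouched test fold, which keeps the original class distribution
    probs = model.predict_proba(X[test_ix])[:, 1]
    scores.append(roc_auc_score(y[test_ix], probs))

print('Mean ROC AUC: %.3f' % np.mean(scores))
```

Resampling the whole dataset before splitting would instead change the class distribution of the test folds, which is the situation the replies above warn against.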

Hello,

What are the disadvantages of stratified cross-validation?

It is computationally more expensive, slightly.

It is only for classification, not regression.

I don’t understand this one. Do you mean we shouldn’t use StratifiedKFold for regressions like logistic regression?

Stratified cannot be used for regression problems (predicting a number).

“logistic regression” is a classification algorithm (for predicting a label).

Got what u meant now, thanks! Also, your website is a valuable resource about ML. Keep up the good work!

Thanks!

> Jason Brownlee January 21, 2021 at 6:49 am #

> Stratified cannot be used for regression problems (predicting a number).

I’ve been reading through some articles and this is exactly the answer I was looking for.

I was wondering how to create a dataset for a model (think of it as a regression model) that predicts a range of real numbers (e.g. 0-100), when I think the prediction is biased towards a certain range.

For one thing, I thought I would need to do some sort of stratified sampling for each range, but in a regression model, would random sampling be appropriate?

The former is very complicated because the performance of the model varies depending on the size of the range.

It may be a rare case that a regression problem falls within a certain range (in this case 0-100).

However, I believe that not only in this case, but also for regression, the problem, the training data may be obtained according to the distribution of the data obtained.

Do you have such a discussion?

Hi Ogawa…I am not following your question. Please elaborate on what you are trying to accomplish so that I may better assist you.

Regards,

James, thanks for your interest in my question.

To elaborate a bit, I am interested in predicting certain numbers from image data.

For example, it is similar to an effort to estimate age from a mugshot. (*)

In this example, if the data to be learned is biased towards a certain age group, it will not be able to make correct predictions.

(To be more specific, it may be difficult to infer the age of a child from a photo of his or her face, even if we only learn the age from photos of adults.)

Therefore, I think that even regression problems that predict numerical values should be free from bias during learning.

For many regression problems, the range of the predicted values cannot be specified, so stratified extraction is not possible.

However, I thought that some kind of processing might be necessary for these regression problems.

(In this example, one way to do this would be to extract data equally (or in the same proportion as the total number of data) in age groups (for example, in the range of every 10 years).

Are there any such arguments in general?

In the midst of such doubts, I saw this article, so I asked the question here.

This is how the question came about.

I would like some advice from Dr. Jason and other volunteers who are browsing this site.

(*) If you are interested, the data set can be obtained from the following site

https://susanqq.github.io/UTKFace/

Thank you

You’re welcome.

Hi, great post! In the case of GridSearchCV, is (Stratified)KFolds implicit? This is an example:

gs_clf = GridSearchCV(clf_pipe, param_grid=params, verbose=0, cv=5, n_jobs=-1)

Thanks for your reply!

Thanks.

I think so. It is better to be explicit with your cv method in grid search if possible.
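As a sketch of being explicit, a StratifiedKFold object can be passed directly as the cv argument. The scikit-learn documentation states that an integer cv with a classifier and a binary or multiclass target uses StratifiedKFold without shuffling; passing the object yourself also lets you control shuffling and the seed. The model, parameter grid, and dataset below are assumptions for illustration and differ from the pipeline in the question.

```python
# grid search with an explicit stratified cross-validation splitter
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold

# imbalanced synthetic dataset (assumed 9:1 for this sketch)
X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.9, 0.1], flip_y=0, random_state=1)

# explicit splitter instead of cv=5
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# hypothetical parameter grid, used only for illustration
params = {'C': [0.1, 1.0, 10.0]}
gs_clf = GridSearchCV(LogisticRegression(max_iter=1000), param_grid=params, scoring='roc_auc', cv=cv)
gs_clf.fit(X, y)
print(gs_clf.best_params_, gs_clf.best_score_)
```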

Hello Jason, great post thank you !

One question

I have a dataset of 100 samples and 10 features. I want to fit an accurate model that predicts one of the variables.

Method 1: I divide my data into 80% for training using k-fold cross-validation and then validate the model on the unseen data (20%).

Method 2: I use all my data to fit the model using k-fold cross-validation.

Between the two methods, which is the true one?

They are both viable/true, use the method that you believe will give you the most robust estimate of model performance for your specific project.

Thank you, Jason

You’re welcome.

It seems that “stratify” flag from train_test_split() is very useful. What is more, almost any real classification problem is imbalanced. Then I guess that this strategy shall be always used as a default? (as there is nothing to lose, right?)

Exactly right on all points!

I guess the only practical down side is a slight computational cost.

Hi Jason. Wonderful article :-). I just had one doubt. The “stratify” flag from train_test_split() helps in splitting imbalanced data into train and test datasets by ensuring that the same ratio of imbalance is maintained in both. The stratified k-fold cv method makes sure that whenever our “training” dataset is divided into n_folds, it maintains the same imbalance ratio in each split. So when we are handling imbalanced data, we need to use the “stratify” flag in train_test_split() and also use stratified k-fold cv. Am I correct?

Thanks.

Correct.

Hi Jason,

If we have 2 balanced classes, and our explanatory variables includes a class_guess, should we do k fold-stratification based on this mis-classified data? Numerically:

20k samples, 10k class A and 10k class B

f(label_guess, x2, x3, … xn) = label_true

w/ 400 samples that have label_guess = A but label_true = B. Intuitively, I think that the remaining data will help classify A/B, but I worry that these 400 points are unique and the data stratification needs to be constructed around them.

Thank you!

Sorry, I don’t understand your question, perhaps you could rephrase it?

If you have balanced class labels, no need to stratify the cross-validation unless you want to.

Thanks Jason, sorry for the poor phrasing, let me try again using the iris problem to illustrate my dilemma:

The Iris dataset has 2 measurements and a label for each iris. What if along with the 2 measurements, I also have an expert who makes a snap judgement classification. So each data point looks like this:

[2.3, 7.1, Setosa_expert] = [setosa], and 90% of the time the expert is correct, but they are sometimes wrong. My “theory” is that I can make a model that performs better than “just data” or “just expert” by combining the expert’s guess with the data. In actuality, I’m working with a survey where respondents often get one question incorrect, and this is only discovered later based on a follow-up survey (which is where label_truth comes from).

Now there is one common mistake which the experts make: they identify setosa as versicolor. Would you recommend doing stratification so that I treat [x, y, setosa] = [versicolor] as its own case, even though [x, y, setosa] = [setosa] and [x, y, versicolor] = [versicolor] are equally frequent?

If I am still not making sense, then please feel free to delete, I may be trying to fit an anomaly detection scheme to a classification problem incorrectly.

Thanks again!

Yes, this idea is the basis for ensemble learning where the expert is a model (another data source).

Try it with and without and use what works best.

You will need sufficient cases of when the expert is correct and incorrect and incorrect in different ways. If stratification helps you ensure the balance of examples is sufficient for the model, then use it.

Thanks so much Jason! awesome blog, all the time

Thanks!

Hi Jason, just some reflection after reading your article: as you demonstrated in the article, it is important to consider the impact of the imbalanced data set on the cross-validation. but one of the other routines to preprocess the imbalanced data is undersampling or oversampling. Either way, more balanced data can be acquired. But then why still need stratified sampling? Thanks

Over/under sampling must happen within the cross-validation process!

Otherwise I suspect the results would be invalid/optimistic given data leakage.

Hi Jason,

I have a time series dataset. I think I cannot use stratified k-fold in this case. Any suggestions, please?

Correct.

You can use walk-forward validation:

https://machinelearningmastery.com/backtest-machine-learning-models-time-series-forecasting/
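As a rough sketch of the idea, scikit-learn provides a TimeSeriesSplit class whose splits always train on earlier observations and test on later ones. It is one way to approximate walk-forward validation with an expanding training window, and may differ in detail from the procedure in the linked tutorial. The toy series of 20 ordered observations is an assumption for illustration.

```python
# time-ordered splits: each test block comes strictly after its training data
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# toy series of 20 time-ordered observations
X = np.arange(20).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=4)
splits = []
for train_ix, test_ix in tscv.split(X):
    splits.append((train_ix, test_ix))
    # report the first and last index of each train and test block
    print('>Train: %d..%d, Test: %d..%d' % (train_ix[0], train_ix[-1], test_ix[0], test_ix[-1]))
```

No shuffling or stratification takes place, so the temporal order of the observations is preserved in every split.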

Thank you for your reply.

The dataset I deal with is imbalanced (99.6% vs 0.4%), this means that some validation set will contain only the dominant class during the K-Fold iterations! Can I use a stratified method? Thank you.

Yes, stratified k-fold cross-validation is required.

Change the number of folds so you get some examples of each class in each fold.

Hi ,

I have a dataset which is imbalanced. I am trying to use stratified k-fold cross-validation, but it is giving me an error. The error is a keyvalue error. Kindly help me with this. Thanks.

Perhaps try lowering the number of folds.

Hi Jason, thanks for your enlightening articles!

I’d like to ask a question about this one.

So the underlying issue seems to be the classic misestimation of model performance due to imbalanced classes and the added one of estimates becoming unstable across folds due to the number of positive examples varying widely between them.

But this issue only applies if an accuracy-based evaluation metric is used (relating correct predictions to the number of all examples), right? Using area under the ROC curve, for instance, the estimates should be similar across folds independent of the proportion of positive examples in any one of them, since the ROC curve is based on the number of correct or incorrect positive predictions *in relation* to their true number / the true number of negative examples, respectively. Is that correct?

Best regards

Jonas

You’re welcome.

Not quite. The test harness is probably invalid unless you stratify the train/test sets.

Thanks a lot for your response! But why is that so? Is it just due to the number of minority examples assigned to the train and test varying across folds? Wouldn’t that just mean that the estimates are less reliable (high variance across folds) rather than completely invalid?

What I don’t understand is how estimates based on proportions, like TPR and FPR, can be rendered invalid by changing the size of the base sets they operate on (true positives for TPR, true negatives for FPR). I feel the proportion a given model produces should always be the same, save for statistical fluctuations w.r.t which examples *exactly* – easy or difficult ones – end up in the train and test set.

No, stratified means that each fold has the same distribution of classes as the original dataset.

Thanks again, Jason, I really appreciate your efforts here! However, I’m clear on what stratified means. My premise was that you stated in your earlier response that even when using AUC for evaluation, stratification for train and test is necessary, or the evaluation metric will be invalid. That surprised me, and I wondered how a metric like AUC that “partials out” the imbalance between classes by looking only at ratios can be thrown off by not stratifying. I’ll buy your book for a response 😉 (just kidding, I’ll probably buy them anyway)

Stratification is a sampling method. It means when we draw a sample or split a dataset we ensure the new sample has the same proportion of each class as the original sample.

The choice of metric is not related to how the dataset is split into train/test sets.

Hi Jason,

maybe this quote clarifies what I mean:

“ROC curves have an attractive property: they are insensitive to changes in class distribution. If the proportion of positive to negative instances changes in a test set, the ROC curves will not change.”

from

Fawcett, T. (2006) An Introduction to ROC Analysis. Pattern Recognition Letters, 27, 861-874. https://doi.org/10.1016/j.patrec.2005.10.010

If that is so, then why should I bother stratifying my sample when using AUC as evaluation metric.

Best regards

To ensure the evaluation of the model is fair/representative.

ROC curves are misleading if used on a skewed dataset:

https://machinelearningmastery.com/roc-curves-and-precision-recall-curves-for-imbalanced-classification/

Hi Jason, Is there a stratified cross-validator (to be used for hyperparameter tuning) for regression? I.e. with an ability to pass in a separate column for stratification, like in train_test_split?

For an example problem: say we are trying to model the engine temperature of a car. Features are mostly continuous, except the make/model of the car. As there is a certain continuity in how temperature rises, we would like to avoid stratified shuffling when doing train-test splitting (as is possible with train_test_split). We perform our own stratified sampling, leaving the last ~20% of the dataset for each make/model as the test set. For hyperparameter tuning, we’d like to include a proportional amount of each make/model in each fold. However, available cross-validators like StratifiedKFold will stratify only based on the target and not on a separate column that you can pass in.

“Stratification” does not make sense for regression.

The train and test sets should be iid and representative of the broader dataset.

Hi, Jason

Does every fold have the same size? I mean, is the dataset split into K equal parts?

Thanks in advance!

Generally, yes, or as close as possible.

Hi Jason,

Great article!

I’m working on a small binary classification problem which has around 130 labelled data points (class split=90/40, features=12). What approach would be better for this?

Train/test split or cross-validation? and stratified or not?

The model has to be used to classify further 700 data points that are unlabeled and we don’t have any knowledge about the class distribution in that unseen data.

Thanks!

I would suggest stratified repeated k-fold cross-validation.
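A minimal sketch of that suggestion, assuming a stand-in dataset with the same shape as the one described (130 rows, 12 features, 90/40 class split). The random features, the 5 folds, and the 3 repeats are illustrative choices, not recommendations from the tutorial.

```python
import numpy as np
from sklearn.model_selection import RepeatedStratifiedKFold

# hypothetical stand-in for the 130 labelled points: 12 features, 90/40 class split
rng = np.random.default_rng(1)
X = rng.normal(size=(130, 12))
y = np.array([0] * 90 + [1] * 40)

# 5 folds repeated 3 times gives 15 train/test splits
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=1)
minority_counts = []
for train_ix, test_ix in cv.split(X, y):
    # count how many minority-class examples land in each test fold
    minority_counts.append(int(np.sum(y[test_ix] == 1)))
print(len(minority_counts), set(minority_counts))
```

Each test fold keeps the class ratio of the full dataset, so a model is never evaluated on a fold with too few minority examples. The same cv object can be passed to cross_val_score() via its cv argument.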

Hi,

If my dataset is multiclass, with 6 classes labelled 0, 1, 2, 3, 4, 5, what changes do I have to make to this code?

train_0, train_1 = len(train_y[train_y==0]), len(train_y[train_y==1])

test_0, test_1 = len(test_y[test_y==0]), len(test_y[test_y==1])

print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (train_0, train_1, test_0, test_1))

I tried taking train_0, train_1, train_2, train_3 = len(train_y[train_y==0]),……

But later I got confused. Is it possible to show instances from all classes in every fold?

If your class labels are already ordinal encoded, no additional data preparation is required.

Thank you!!! Yes, the labels are already encoded. In the output

>Train: 0=792, 1=8, Test: 0=198, 1=2

the number of training instances is 792 for class 0 and 8 for class 1, and the number of test instances is 198 for class 0 and 2 for class 1. Am I correct?

So if I want to display the number of instances in every class, I have to pass the corresponding label to the length function like this:

train_0, train_1, train_2, train_3,train_4, train_5= len(train_y[train_y==0]), len(train_y[train_y==1]),len(train_y[train_y==2]), len(train_y[train_y==3]),len(train_y[train_y==4]), len(train_y[train_y==5])

Am I correct?

Perhaps use the Counter() object on the train_y to get counts for each label.
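A short sketch of that idea for six classes. The label array y and its class sizes are made up for illustration; only Counter and StratifiedKFold are real library objects.

```python
from collections import Counter
import numpy as np
from sklearn.model_selection import StratifiedKFold

# hypothetical 6-class label array with an uneven class distribution
y = np.array([0] * 60 + [1] * 30 + [2] * 20 + [3] * 20 + [4] * 10 + [5] * 10)
X = np.zeros((len(y), 1))  # placeholder features; only the labels matter here

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
fold_counts = []
for train_ix, test_ix in kfold.split(X, y):
    train_y, test_y = y[train_ix], y[test_ix]
    # Counter maps each label to the number of times it occurs
    train_counts = Counter(train_y.tolist())
    test_counts = Counter(test_y.tolist())
    fold_counts.append((train_counts, test_counts))
    print('>Train: %s, Test: %s' % (sorted(train_counts.items()), sorted(test_counts.items())))
```

This avoids writing one len(...) expression per class and works unchanged for any number of labels.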

Hi Jason,

As always, thank you for your amazing and super helpful articles.

I have a question:

I am doing some sort of incremental/online learning for a classifier where I keep on updating my network with more data (you’ve already made an article about that too).

My data are highly imbalanced, so what I did is:

1. Using stratified k-fold cross validation (where k=5).

2. Either use class weights or over sampling (training data)

3. Fit the model and validate across the unaltered validation data for that fold.

4. I have set callbacks, so if it finds the lowest “val_loss” at epoch one and waits for some more epochs with no decrease, it restores the network to the weights from the first epoch.

Now I am really wondering why my model is underfitting (class weights were much better than oversampling, at least in my case). I mean, I can assume that my validation set isn’t representative enough of my training set and hence it is stopping early. I tried lowering k to 3, but I still have the same issue.

Even when I update the network with more data, it keeps on performing the same way.

Am I missing anything? Please let me know if I can provide more info..

I am sorry, there was a mis-evaluation on my side. Please don’t bother. But thanks anyway for your support to us developers :).

No problem.

Perhaps you can run some experiments to test your idea that the validation set is not representative, e.g. compare results with a larger validation set.

Hi jason

Thanks for your Great article

Can you explain a little how I can write this in MATLAB code?

Sorry, I don’t have any MATLAB examples.

Should we use SMOTE algorithm for imbalanced data or using stratified k-fold fix the imbalanced data problem?

Use both. SMOTE and CV solve different problems.

SMOTE will fix the imbalance in the training data; CV will estimate a model’s performance on new data.

Thank you for your reply. And sorry, one more question: do you know about the crossvalind command? It uses the whole data, not each class separately. And if I use SMOTE, I will oversample the one class with fewer samples, so how can I then use the crossvalind command? Sorry, I’m new to machine learning.

Best regards

Sorry, I have not heard of the “crossvalind” command.

When using SMOTE, you can use a pipeline with cross-validation to ensure that oversampling is only applied on the training folds each iteration.
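The principle can be sketched without any extra library by doing the resampling by hand inside the cross-validation loop. Note that this example swaps in simple random oversampling (duplicating minority rows) in place of SMOTE, and that oversample_minority() is a hypothetical helper written for this illustration, not part of scikit-learn or imbalanced-learn.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold
from sklearn.tree import DecisionTreeClassifier

def oversample_minority(X, y, rng):
    """Hypothetical helper: duplicate random minority rows until both classes match in size."""
    counts = np.bincount(y)
    minority = int(np.argmin(counts))
    minority_ix = np.flatnonzero(y == minority)
    extra_ix = rng.choice(minority_ix, size=counts.max() - counts.min(), replace=True)
    keep_ix = np.concatenate([np.arange(len(y)), extra_ix])
    return X[keep_ix], y[keep_ix]

# imbalanced binary dataset (sizes chosen only for this example)
X, y = make_classification(n_samples=2000, n_features=2, n_redundant=0,
    n_clusters_per_class=1, weights=[0.95], flip_y=0, random_state=1)
rng = np.random.default_rng(1)
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
scores, test_sizes = [], []
for train_ix, test_ix in kfold.split(X, y):
    # oversample the training fold only
    train_X, train_y = oversample_minority(X[train_ix], y[train_ix], rng)
    model = DecisionTreeClassifier(random_state=1)
    model.fit(train_X, train_y)
    # evaluate on the untouched test fold, which keeps the original class distribution
    yhat = model.predict_proba(X[test_ix])[:, 1]
    scores.append(roc_auc_score(y[test_ix], yhat))
    test_sizes.append(len(test_ix))
print('Mean ROC AUC: %.3f' % np.mean(scores))
```

A pipeline from the imbalanced-learn library automates the same pattern: the sampler step runs when the pipeline is fit on the training folds and is skipped when predicting on the test fold.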

Hello Jason,

Thank you so much for making so many things clear about cross-validation.

I have a question about RepeatedStratifiedKFold.

How does it exactly work?

1) Split the data set into a number of folds as K is set. While dividing into k-folds, it makes sure that every class is represented with the same proportion of the dataset. (Makes strata).

2) Builds the model with k-1 folds and validates the model with the remaining 1 held out data set.

Now my question is, when is the ‘repetitions’ introduced?

Is it once the whole k-fold process has finished, after validating the model with all folds, by redistributing the dataset and creating new folds? Or is the data redistributed each time a single split out of the k splits is done?

Can you please clarify this for me?

Best regards

Repetition happens after your step 2 has been run for every fold. Within one repeat, step 2 runs in a loop k times, each time holding out a different fold for validation. Once all k folds have been used, the dataset is reshuffled and split into k new folds, and the whole procedure runs again, for n_repeats repeats in total.
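The ordering can be checked directly. The sketch below, on a made-up dataset of 20 rows, collects every test fold produced by RepeatedStratifiedKFold and confirms that each consecutive group of n_splits folds covers the whole dataset exactly once, which is what a complete k-fold run followed by a reshuffle would produce.

```python
import numpy as np
from sklearn.model_selection import RepeatedStratifiedKFold

# small hypothetical dataset: 20 examples, 4 in the minority class
y = np.array([0] * 16 + [1] * 4)
X = np.arange(len(y)).reshape(-1, 1)

n_splits, n_repeats = 4, 3
cv = RepeatedStratifiedKFold(n_splits=n_splits, n_repeats=n_repeats, random_state=1)
test_folds = [test_ix for _, test_ix in cv.split(X, y)]

# splits arrive grouped by repeat: the first n_splits belong to repeat 1, and so on
repeat_is_complete = []
for r in range(n_repeats):
    folds = test_folds[r * n_splits:(r + 1) * n_splits]
    covered = np.sort(np.concatenate(folds))
    # within one repeat, the test folds cover every row exactly once
    repeat_is_complete.append(np.array_equal(covered, np.arange(len(y))))
print(len(test_folds), repeat_is_complete)
```

In total there are n_splits * n_repeats model fits, and the reported score is usually the mean over all of them.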

Hi

Should we do resampling like smote or random under sampling after feature extraction or before feature extraction on data?

Sampling before feature extraction should reduce your total computation cost.

Thanks, Dr. Jason for this valuable blog.

I have one observation. We always perform normalization (multiply by 1/255 for image) followed by train_test_split. However, in none of the k-fold cross-validation blogs, you have shown the normalization steps in the for loop of k-fold.

My query is: do we need to perform normalization/rescaling of images (in array) in every fold of the data?

When I perform rescaling of each fold data in the for loop of k fold, my training error grows slowly to very low accuracy and cross-validation accuracy remains 0.00 (zero) in all epochs.

Kindly guide.

Thanks and Regards.

Not in every fold. It is more like a preprocessing stage for images, done before you even start the k-fold. The reason is to keep the input pixel data in the range 0 to 1 for easier training. For other kinds of data, steps like one-hot encoding similarly help the model to learn.
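A minimal sketch of that ordering, assuming a random batch of 8-bit grayscale images as a stand-in for real data. Dividing by the constant 255 uses no statistics computed from the data, so doing it once before splitting does not leak information between folds.

```python
import numpy as np
from sklearn.model_selection import KFold

# hypothetical batch of 8-bit grayscale images with shape (n, height, width)
rng = np.random.default_rng(1)
images = rng.integers(0, 256, size=(20, 8, 8), dtype=np.uint8)

# rescale once, before any splitting
X = images.astype('float32') / 255.0

kfold = KFold(n_splits=5, shuffle=True, random_state=1)
fold_shapes = []
for train_ix, test_ix in kfold.split(X):
    # rows are already scaled, so nothing is rescaled inside the loop
    train_X, test_X = X[train_ix], X[test_ix]
    fold_shapes.append((train_X.shape, test_X.shape))
print(X.min(), X.max(), fold_shapes[0])
```

Transforms that do learn from the data, such as standardizing with a mean and standard deviation, are a different case and are usually fit on the training fold only.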

Thank you so much for your quick and exact solutions. Thanks for your support and being a “Real Guide”. All the Best.

Can I use stratified sampling with the multi-output model described in the following page?

https://machinelearningmastery.com/neural-network-models-for-combined-classification-and-regression

In your example showing the StratifiedKFold splits, you should use iloc or the code breaks.

for train_ix, test_ix in kfold.split(X):

# select rows

train_X, test_X = X[train_ix], X[test_ix]

train_y, test_y = y[train_ix], y[test_ix]

Should be like that:

for train_ix, test_ix in kfold.split(X):

# select rows

train_X, test_X = X.iloc[train_ix], X.iloc[test_ix]

train_y, test_y = y.iloc[train_ix], y.iloc[test_ix]

Hi Panagis…thank you for the feedback!
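Whether iloc is needed depends on the type of X and y. NumPy arrays can be indexed directly with the row positions returned by split(), while pandas objects need .iloc for position-based selection. The sketch below assumes the data is held in a DataFrame and Series with a non-default index; the random features and the 990/10 class split are made up for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import StratifiedKFold

# hypothetical imbalanced dataset held in pandas objects with a non-default index
rng = np.random.default_rng(1)
X = pd.DataFrame(rng.normal(size=(1000, 2)), index=range(1000, 2000))
y = pd.Series(np.array([0] * 990 + [1] * 10), index=X.index)

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
test_minority = []
for train_ix, test_ix in kfold.split(X, y):
    # split() returns row positions, so select rows by position with iloc
    train_X, test_X = X.iloc[train_ix], X.iloc[test_ix]
    train_y, test_y = y.iloc[train_ix], y.iloc[test_ix]
    test_minority.append(int((test_y == 1).sum()))
    print('>Train: 0=%d, 1=%d, Test: 0=%d, 1=%d' % (
        (train_y == 0).sum(), (train_y == 1).sum(), (test_y == 0).sum(), (test_y == 1).sum()))
```

Calling X.to_numpy() and y.to_numpy() before the loop is an alternative that lets the plain X[train_ix] indexing work as well.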

Hello, thanks for the information. What I don’t understand is why SMOTE is used with StratifiedKFold, if StratifiedKFold solves the imbalance problem by itself.

Hi Christian…The following resource may help add clarity:

https://machinelearningmastery.com/smote-oversampling-for-imbalanced-classification/

Thanks for the great tutorial.

I would just like you to clarify one thing for me.

From what I have understood, we have to apply under/oversampling after the cross-validation folds are defined, otherwise we will have a data leakage problem, but I’m a bit confused about the implementation of this approach.

When using:

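# imports needed to run the example (SMOTE and Pipeline come from the imbalanced-learn library)

from numpy import mean

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from sklearn.tree import DecisionTreeClassifier

from imblearn.pipeline import Pipeline

from imblearn.over_sampling import SMOTE

# define dataset

X, y = make_classification(n_samples=10000, n_features=2, n_redundant=0, n_clusters_per_class=1, weights=[0.99], flip_y=0, random_state=1)

# define pipeline

steps = [('over', SMOTE()), ('model', DecisionTreeClassifier())]

pipeline = Pipeline(steps=steps)

# evaluate pipeline

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(pipeline, X, y, scoring='roc_auc', cv=cv, n_jobs=-1)

print('Mean ROC AUC: %.3f' % mean(scores))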

Is this what the code is doing (in this order)?

1) Apply stratified cross-validation (CV) to X, y (the dataset)

2) Get the train and test folds from the CV

3) Apply the pipeline ONLY to the train folds

4) Apply the evaluation metric to the non-sampled test folds

5) Repeat for n iterations

Hi Mohamed…The following may be of interest:

https://machinelearningmastery.com/repeated-k-fold-cross-validation-with-python/

Hi Jason, I think you made a mistake in your terminology here:

“We can see that in this case, there are some splits that have the expected 8/2 split for train and test sets, and others that are much worse, such as 6/4 (optimistic) and 10/0 (pessimistic).”

The 10/0 case would be optimistic and 6/4 pessimistic. Don’t you think so?

Thank you for the feedback Nima!

Hello.

If we assign weights to the classes…

Can we get these values from the frequencies in the original dataset?

Or is the proper way to do it to use the frequencies in each fold (to avoid leakage)?

Good evening, dear sir. I am very glad to learn from your page about stratified k-fold cross-validation. But I have a concern about my dataset. I am working on a dataset related to the 6 stages of chronic kidney disease (CKD). My Y variable is the stage of disease for the surveyed patient. My goal is to compare ML models to see which one best predicts the stages of CKD, and then to use that model to identify the most important variables related to the CKD stages. As a cross-validation method, I think that stratified k-fold cross-validation is the best. But inside my data, there is the variable SEX, split into M and F. I imagine that this must also be taken into account for the stratification. What could I do?

Hi Floriane…best practices for repeated k-fold cross validation can be found here:

https://machinelearningmastery.com/repeated-k-fold-cross-validation-with-python/