Long Short-Term Memory (LSTM) networks are a type of recurrent neural network capable of learning order dependence in sequence prediction problems.

This is a behavior required in complex problem domains like machine translation, speech recognition, and more.

LSTMs are a complex area of deep learning. It can be hard to get your head around what LSTMs are, and how terms like bidirectional and sequence-to-sequence relate to the field.

In this post, you will get insight into LSTMs using the words of the research scientists who developed the methods and applied them to new and important problems.

Few are better at clearly and precisely articulating both the promise of LSTMs and how they work than the experts who developed them.

We will explore key questions in the field of LSTMs using quotes from the experts, and if you’re interested, you will be able to dive into the original papers from which the quotes were taken.

Let’s get started.

A Gentle Introduction to Long Short-Term Memory Networks by the Experts

Photo by Oran Viriyincy, some rights reserved.

The Promise of Recurrent Neural Networks

Recurrent neural networks are different from traditional feed-forward neural networks.

This added complexity comes with the promise of new behaviors that traditional methods cannot achieve.

Recurrent networks … have an internal state that can represent context information. … [they] keep information about past inputs for an amount of time that is not fixed a priori, but rather depends on its weights and on the input data.

…

A recurrent network whose inputs are not fixed but rather constitute an input sequence can be used to transform an input sequence into an output sequence while taking into account contextual information in a flexible way.

— Yoshua Bengio, et al., Learning Long-Term Dependencies with Gradient Descent is Difficult, 1994.

The paper defines 3 basic requirements of a recurrent neural network:

- That the system be able to store information for an arbitrary duration.

- That the system be resistant to noise (i.e. fluctuations of the inputs that are random or irrelevant to predicting a correct output).

- That the system parameters be trainable (in reasonable time).

The paper also describes the “minimal task” for demonstrating recurrent neural networks.

Context is key.

Recurrent neural networks must use context when making predictions, and the context they need must itself be learned.

… recurrent neural networks contain cycles that feed the network activations from a previous time step as inputs to the network to influence predictions at the current time step. These activations are stored in the internal states of the network which can in principle hold long-term temporal contextual information. This mechanism allows RNNs to exploit a dynamically changing contextual window over the input sequence history

— Haşim Sak, et al., Long Short-Term Memory Recurrent Neural Network Architectures for Large Scale Acoustic Modeling, 2014

LSTMs Deliver on the Promise

The success of LSTMs lies in their claim to be one of the first architectures to overcome the technical problems of recurrent neural networks and deliver on their promise.

Hence standard RNNs fail to learn in the presence of time lags greater than 5 – 10 discrete time steps between relevant input events and target signals. The vanishing error problem casts doubt on whether standard RNNs can indeed exhibit significant practical advantages over time window-based feedforward networks. A recent model, “Long Short-Term Memory” (LSTM), is not affected by this problem. LSTM can learn to bridge minimal time lags in excess of 1000 discrete time steps by enforcing constant error flow through “constant error carrousels” (CECs) within special units, called cells

— Felix A. Gers, et al., Learning to Forget: Continual Prediction with LSTM, 2000

The two technical problems overcome by LSTMs are vanishing gradients and exploding gradients, both related to how the network is trained.

Unfortunately, the range of contextual information that standard RNNs can access is in practice quite limited. The problem is that the influence of a given input on the hidden layer, and therefore on the network output, either decays or blows up exponentially as it cycles around the network’s recurrent connections. This shortcoming … referred to in the literature as the vanishing gradient problem … Long Short-Term Memory (LSTM) is an RNN architecture specifically designed to address the vanishing gradient problem.

— Alex Graves, et al., A Novel Connectionist System for Unconstrained Handwriting Recognition, 2009
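To make the "decays or blows up exponentially" point concrete, here is a minimal sketch of a scalar toy model. It assumes a single recurrent unit whose backpropagated error is multiplied by the same factor at every time step; the function name and the chosen weights are illustrative only and do not come from any of the quoted papers.

```python
def backprop_factor(recurrent_weight, steps, activation_slope=1.0):
    """Scale applied to an error signal after it flows back `steps` time
    steps through one recurrent connection (scalar toy model)."""
    factor = 1.0
    for _ in range(steps):
        # Each step back in time multiplies the error by the same quantity.
        factor *= recurrent_weight * activation_slope
    return factor


for weight in (0.5, 1.0, 1.5):
    scales = [backprop_factor(weight, steps) for steps in (1, 10, 50)]
    print(weight, scales)
```

With a factor of 0.5 the signal shrinks to roughly 0.001 after only 10 steps, and with 1.5 it grows to about 57.7. Only a factor of exactly 1.0 leaves the error unchanged over any number of steps, which is the intuition behind the "constant error flow" that the LSTM cell is designed to enforce.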

The key to the LSTM solution to the technical problems was the specific internal structure of the units used in the model.

… governed by its ability to deal with vanishing and exploding gradients, the most common challenge in designing and training RNNs. To address this challenge, a particular form of recurrent nets, called LSTM, was introduced and applied with great success to translation and sequence generation.

— Alex Graves, et al., Framewise Phoneme Classification with Bidirectional LSTM and Other Neural Network Architectures, 2005.

How do LSTMs Work?

Rather than going into the equations that govern how LSTMs are fit, we can use analogy to quickly get a handle on how they work.

We use networks with one input layer, one hidden layer, and one output layer… The (fully) self-connected hidden layer contains memory cells and corresponding gate units…

…

Each memory cell’s internal architecture guarantees constant error flow within its constant error carrousel CEC… This represents the basis for bridging very long time lags. Two gate units learn to open and close access to error flow within each memory cell’s CEC. The multiplicative input gate affords protection of the CEC from perturbation by irrelevant inputs. Likewise, the multiplicative output gate protects other units from perturbation by currently irrelevant memory contents.

— Sepp Hochreiter and Jürgen Schmidhuber, Long Short-Term Memory, 1997.

Multiple analogies can help to give purchase on what differentiates LSTMs from traditional neural networks composed of simple neurons.

The Long Short Term Memory architecture was motivated by an analysis of error flow in existing RNNs which found that long time lags were inaccessible to existing architectures, because backpropagated error either blows up or decays exponentially.

An LSTM layer consists of a set of recurrently connected blocks, known as memory blocks. These blocks can be thought of as a differentiable version of the memory chips in a digital computer. Each one contains one or more recurrently connected memory cells and three multiplicative units – the input, output and forget gates – that provide continuous analogues of write, read and reset operations for the cells. … The net can only interact with the cells via the gates.

— Alex Graves, et al., Framewise Phoneme Classification with Bidirectional LSTM and Other Neural Network Architectures, 2005.
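The write, read and reset analogy can be sketched in a few lines of code. This is a simplified, single-cell version with scalar input and state, using the commonly seen three-gate formulation without peephole connections; the names `lstm_step` and `params` are hypothetical and chosen only for this illustration, and the weights are set by hand rather than learned.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, params):
    """One time step of a single-cell LSTM with scalar input and state.

    `params` maps each of 'i', 'f', 'o', 'g' to a (w_x, w_h, bias) triple.
    """
    def pre_activation(name):
        w_x, w_h, bias = params[name]
        return w_x * x + w_h * h_prev + bias

    i = sigmoid(pre_activation('i'))    # input gate: how much to write
    f = sigmoid(pre_activation('f'))    # forget gate: how much to keep (reset)
    o = sigmoid(pre_activation('o'))    # output gate: how much to read
    g = math.tanh(pre_activation('g'))  # candidate value to write
    c = f * c_prev + i * g              # updated cell state
    h = o * math.tanh(c)                # output exposed to the rest of the net
    return h, c


# Hand-set weights: input gate shut, forget and output gates fully open.
params = {
    'i': (0.0, 0.0, -100.0),
    'f': (0.0, 0.0, 100.0),
    'o': (0.0, 0.0, 100.0),
    'g': (1.0, 1.0, 0.0),
}
h, c = 0.0, 0.7
for x in (0.3, -0.8, 0.5):
    h, c = lstm_step(x, h, c, params)
print(c)  # the stored value is protected from the inputs
```

With the input gate closed and the forget gate open, the cell keeps its stored value no matter what inputs arrive, which mirrors the "protection from perturbation by irrelevant inputs" described by Hochreiter and Schmidhuber. In a trained network the gate values are learned and depend on the current input and previous output.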

It is interesting to note that even after more than 20 years, the simple (or vanilla) LSTM may still be the best place to start when applying the technique.

The most commonly used LSTM architecture (vanilla LSTM) performs reasonably well on various datasets…

Learning rate and network size are the most crucial tunable LSTM hyperparameters …

… This implies that the hyperparameters can be tuned independently. In particular, the learning rate can be calibrated first using a fairly small network, thus saving a lot of experimentation time.

— Klaus Greff, et al., LSTM: A Search Space Odyssey, 2015

What are LSTM Applications?

It is important to get a handle on exactly what types of sequence learning problems LSTMs are suited to address.

Long Short-Term Memory (LSTM) can solve numerous tasks not solvable by previous learning algorithms for recurrent neural networks (RNNs).

…

… LSTM holds promise for any sequential processing task in which we suspect that a hierarchical decomposition may exist, but do not know in advance what this decomposition is.

— Felix A. Gers, et al., Learning to Forget: Continual Prediction with LSTM, 2000

The Recurrent Neural Network (RNN) is neural sequence model that achieves state of the art performance on important tasks that include language modeling, speech recognition, and machine translation.

— Wojciech Zaremba, Recurrent Neural Network Regularization, 2014.

Since LSTMs are effective at capturing long-term temporal dependencies without suffering from the optimization hurdles that plague simple recurrent networks (SRNs), they have been used to advance the state of the art for many difficult problems. This includes handwriting recognition and generation, language modeling and translation, acoustic modeling of speech, speech synthesis, protein secondary structure prediction, analysis of audio, and video data among others.

— Klaus Greff, et al., LSTM: A Search Space Odyssey, 2015

What are Bidirectional LSTMs?

A commonly mentioned improvement upon the LSTM is the bidirectional LSTM.

The basic idea of bidirectional recurrent neural nets is to present each training sequence forwards and backwards to two separate recurrent nets, both of which are connected to the same output layer. … This means that for every point in a given sequence, the BRNN has complete, sequential information about all points before and after it. Also, because the net is free to use as much or as little of this context as necessary, there is no need to find a (task-dependent) time-window or target delay size.

… for temporal problems like speech recognition, relying on knowledge of the future seems at first sight to violate causality … How can we base our understanding of what we’ve heard on something that hasn’t been said yet? However, human listeners do exactly that. Sounds, words, and even whole sentences that at first mean nothing are found to make sense in the light of future context.

— Alex Graves, et al., Framewise Phoneme Classification with Bidirectional LSTM and Other Neural Network Architectures, 2005.

One shortcoming of conventional RNNs is that they are only able to make use of previous context. … Bidirectional RNNs (BRNNs) do this by processing the data in both directions with two separate hidden layers, which are then fed forwards to the same output layer. … Combining BRNNs with LSTM gives bidirectional LSTM, which can access long-range context in both input directions

— Alex Graves, et al., Speech recognition with deep recurrent neural networks, 2013

Unlike conventional RNNs, bidirectional RNNs utilize both the previous and future context, by processing the data from two directions with two separate hidden layers. One layer processes the input sequence in the forward direction, while the other processes the input in the reverse direction. The output of current time step is then generated by combining both layers’ hidden vector…

— Di Wang and Eric Nyberg, A Long Short-Term Memory Model for Answer Sentence Selection in Question Answering, 2015
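The two-direction idea described in these quotes can be sketched with a toy example. To keep it short, this uses a plain scalar tanh RNN rather than an LSTM, and the weights are arbitrary fixed values rather than learned ones; the function names are hypothetical.

```python
import math


def run_rnn(sequence, w_x, w_h):
    """Run a scalar tanh RNN over the sequence and return the hidden
    state at every time step."""
    h, states = 0.0, []
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states


def bidirectional(sequence, fwd=(0.5, 0.8), bwd=(0.5, 0.8)):
    """Pair a forward pass with a backward pass at every time step."""
    forward = run_rnn(sequence, *fwd)
    # Process the reversed sequence, then flip the states back so that
    # position t lines up with the same input in both directions.
    backward = run_rnn(sequence[::-1], *bwd)[::-1]
    return list(zip(forward, backward))


for step, (past, future) in enumerate(bidirectional([1.0, 0.0, 0.0, 2.0])):
    print(step, past, future)
```

At each step, the first value summarizes everything seen so far and the second summarizes everything still to come. An output layer that receives both has access to context on either side of the current point.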

What are seq2seq LSTMs or RNN Encoder-Decoders?

Sequence-to-sequence LSTMs, also called encoder-decoder LSTMs, are an application of LSTMs that is receiving a lot of attention given its impressive capability.

… a straightforward application of the Long Short-Term Memory (LSTM) architecture can solve general sequence to sequence problems.

…

The idea is to use one LSTM to read the input sequence, one timestep at a time, to obtain large fixed-dimensional vector representation, and then to use another LSTM to extract the output sequence from that vector. The second LSTM is essentially a recurrent neural network language model except that it is conditioned on the input sequence.

The LSTM’s ability to successfully learn on data with long range temporal dependencies makes it a natural choice for this application due to the considerable time lag between the inputs and their corresponding outputs.

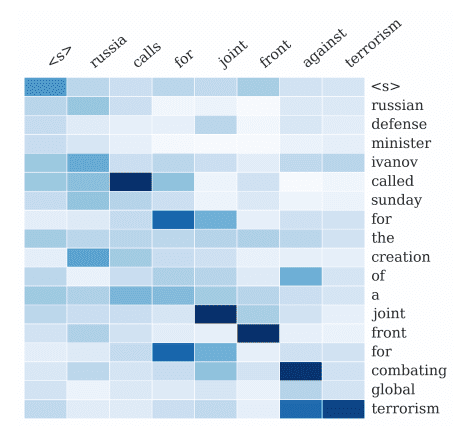

We were able to do well on long sentences because we reversed the order of words in the source sentence but not the target sentences in the training and test set. By doing so, we introduced many short term dependencies that made the optimization problem much simpler. … The simple trick of reversing the words in the source sentence is one of the key technical contributions of this work

— Ilya Sutskever, et al., Sequence to Sequence Learning with Neural Networks, 2014

An “encoder” RNN reads the source sentence and transforms it into a rich fixed-length vector representation, which in turn in used as the initial hidden state of a “decoder” RNN that generates the target sentence. Here, we propose to follow this elegant recipe, replacing the encoder RNN by a deep convolution neural network (CNN). … it is natural to use a CNN as an image “encoder”, by first pre-training it for an image classification task and using the last hidden layer as an input to the RNN decoder that generates sentences.

— Oriol Vinyals, et al., Show and Tell: A Neural Image Caption Generator, 2014

… an RNN Encoder–Decoder, consists of two recurrent neural networks (RNN) that act as an encoder and a decoder pair. The encoder maps a variable-length source sequence to a fixed-length vector, and the decoder maps the vector representation back to a variable-length target sequence.

— Kyunghyun Cho, et al., Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation, 2014
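The data flow of an encoder-decoder can be sketched as follows. This is only an illustration of the shapes involved: it assumes simple tanh units instead of LSTM cells, numeric inputs instead of words, and fixed rather than learned weights. The function names and the decoder update rule are invented for this example.

```python
import math


def encode(sequence, weights):
    """Fold a variable-length sequence of numbers into a fixed-length
    state vector, one (w_x, w_h) pair per element of the state."""
    state = [0.0] * len(weights)
    for x in sequence:
        state = [math.tanh(w_x * x + w_h * s)
                 for (w_x, w_h), s in zip(weights, state)]
    return state


def decode(context, steps):
    """Unroll a decoder from the context vector for a chosen number of
    steps, feeding each output back in as the next input."""
    state, y, outputs = list(context), 0.0, []
    for _ in range(steps):
        state = [math.tanh(s + 0.5 * y) for s in state]
        y = sum(state) / len(state)
        outputs.append(y)
    return outputs


weights = [(0.5, 0.9), (-0.3, 0.7), (0.8, 0.1)]
short_context = encode([1.0, 2.0], weights)
long_context = encode([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], weights)
print(len(short_context), len(long_context))  # both have 3 elements
print(decode(long_context, steps=4))
```

Whatever the length of the source sequence, the encoder produces a vector of the same size, and the decoder can emit an output of a different length from it. The source-reversal trick described by Sutskever et al. would amount to passing `sequence[::-1]` to the encoder.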

Summary

In this post, you received a gentle introduction to LSTMs in the words of the research scientists who developed and applied the techniques.

This gives you a clear and precise idea of what LSTMs are and how they work, as well as an articulation of the promise of LSTMs in the field of recurrent neural networks.

Did any of the quotes help your understanding or inspire you?

Let me know in the comments below.

I am not an expert, but I think it’s better to use “time steps” instead of “time lags”, as most papers use it.

I was also confused about the definition of time lags in another article here 🙂

Yes, it is better to use past observations as time steps when inputting to the model.

Hi Jason,

Can you please tell me how LSTMs are different from Autoregressive neural networks?

Yes, no fixed length input or output sequences.

Hello, good explanation and introduction.

Can you please help me with something? The input layers of an LSTM net.

For example, if I have this:

model.add(LSTM(4))

model.add(Dense(1))

How many neurons do I have in my input layer? I think the first line of code refers to the hidden layer, so how do things get in?

These are not input layers, but are instead hidden layers.

You must specify the size of the expected input as an argument “input_shape=(xx,xx)” on the first hidden layer.

The input_shape argument takes a tuple that specifies the number of time steps and the number of features. The number of features is the number of observations taken at each time step.
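As a rough illustration of what those two numbers mean, here is a small sketch in plain Python that frames a univariate series as samples of shape (time steps, features). The helper `to_samples` and the example series are made up for this illustration and are not part of Keras.

```python
def to_samples(series, time_steps):
    """Split a univariate series into overlapping windows laid out as
    [samples][time_steps][features], with one feature per time step."""
    return [[[value] for value in series[start:start + time_steps]]
            for start in range(len(series) - time_steps + 1)]


series = [10, 20, 30, 40, 50, 60]
X = to_samples(series, time_steps=3)

# The tuple you would pass as input_shape describes one sample only.
input_shape = (len(X[0]), len(X[0][0]))
print(len(X), input_shape)  # 4 samples, each of shape (3, 1)
```

The number of samples is not part of input_shape; only the time steps and features of a single sample are.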

See this post for more:

https://machinelearningmastery.com/5-step-life-cycle-long-short-term-memory-models-keras/

Does that help?

waste of my time.

Sorry to hear that.

Hi Jason,

layers.LSTM(units=4, activation='tanh', dropout=0.1)(lstm_input)

What does units mean here? I set units equal to the number of neurons in the hidden layer. Am I right? But if the input sequence is shorter than the number of units (i.e. blocks/neurons), does it mean that some neurons in the LSTM layer have no input series and just pass states to the next neurons?

Thanks a lot.

Yes, you are correct.

No, one unit can have many inputs. Further, the RNN only takes one time step as input at a time.

Hi Jason,

I am still confused about this topic. Say a 28-step series is input to the LSTM layer, which has 128 neurons. Does that mean 100 neurons have no input in this situation and just pass previous states to the next neurons?

Reference: https://stackoverflow.com/questions/43034960/many-to-one-and-many-to-many-lstm-examples-in-keras, the green rectangles represent the LSTM blocks/neuron in keras, which is 128. The pink rectangles represent the input series, which is 28. And the blue rectangles represent the output series.

Thank you very much.

No, the number of time steps and number of units are not related.

Hi Jason,

Thanks for your answer. When we use

layers.LSTM(units=128, activation='tanh', dropout=0.1)(lstm_input)

do we mean there are 128 units in A (depicted in http://colah.github.io/posts/2015-08-Understanding-LSTMs/)? If yes, what does the structure of such an A look like?

128 means 128 memory cells or neurons or what have you.

I cannot speak to the pictures on another site. Perhaps contact their author?

Thanks Jason.

I just wanted to know the structure of the LSTM layer with 128 units, and the input and output.

Generally, in Keras the shape of the input is defined by the output of the prior layer.

The shape of the input to the network is specified on the visible layer or as an argument on the first hidden layer.

Hello All,

Thank you for this source, I’m trying to find the hidden states in this example, I can’t see it defined in the code? I’m trying to port this model to another framework but can’t find the number of hidden states? Many thanks in advance.

There is no code in this post, perhaps you are referring to another post.

This post may help:

https://machinelearningmastery.com/return-sequences-and-return-states-for-lstms-in-keras/

Thanks Jason. Liked seeing the original author’s words. Really appreciate your blog!

I’m glad it helped.

Nice work !

Thanks.

The blog is very useful and it helped me a lot. I have a doubt about LSTM(128/64). Does an LSTM model require the same RNN/LSTM cell for each time step? What if my sequence is longer than 128? From the RNN unfolding description, I found that each RNN/LSTM cell takes one time step at a time. This part confuses me a lot. Can you please clarify?

No, the number of units in the first hidden layer is unrelated to the number of time steps in the input data.

Hello sir,

What are the differences between time steps and time lags? I’m confused about these two terms.

Also, what are the methods for finding the optimal number of time lags in an LSTM network?

A time step could be input or output; a lag is a time step from the past relative to the current observation or prediction.

Trial and error is the best we can do, perhaps informed by ACF/PACF plots.

Thank you, this is a nice article, but for two hours I have been trying to inverse transform the predictions so that they fit with the real data. Looking at other articles, etc., I can’t make the data fit. RMSE and its plot don’t mean much unless you see what the model actually predicts.

Perhaps this will help:

https://machinelearningmastery.com/machine-learning-data-transforms-for-time-series-forecasting/

What the heck is ‘constant error ow’?

‘Ow’ is just a word for pain, which is not likely what you mean here.

Typo, fixed. Thanks!

I have a question regarding the mapping of input to the hidden nodes in a seq2seq (encoder-decoder) model you talked about. Reading about it further, I understand that the hidden node count is sometimes matched to the dimension of the vectorized input (i.e. a word is represented by its embedding), but this is usually not the case. So given the latter, if the hidden node count is less than the dimension of the input, what is the mapping between the two? (Is it maybe fully connected?) Understanding this mapping can help me better understand how the LSTM learns as a whole.

The encoding of the entire input sequence is used as a context in generating each step of the output sequence, regardless of how long it is.

Thank you for beautiful explanation..

You’re welcome.

Thanks for the explanation, I have a doubt regarding the block size in LSTM and how to change them, and how to access memory blocks in LSTM.

I believe Keras does not use the concept of blocks for LSTM layers.

I didn’t get you. Is there any way to change the memory block inside a hidden layer?

What do you mean by “memory block”?

Yes, training changes the LSTM model weights.

Thanks for the content, I have a question:

Does an LSTM have a window stride like a CNN, or is the window stride always equal to 1?

thanks for your time

No stride in LSTM. Or consider the stride fixed at 1, e.g. one time step.

You can achieve this effect using a CNN-LSTM.

Hi Jason. This is a great article. I follow your website to learn different Machine Learning Concepts and techniques.

I am currently working on a binary classification problem where the data contains logs recorded at any point in time. Each log record has multiple features with a label of Pass/Fail. I used an LSTM for this sequence classification. I want to understand how an LSTM works internally for a sequence classification problem.

Could you please suggest some references for that?

Thank You

This will help as a first step:

https://machinelearningmastery.com/faq/single-faq/how-is-data-processed-by-an-lstm

Thank you Jason for the quick reply. Here is what I understood: each node in the layer gets one time step of the input sequence at a time, processes it, and gives one output label (for sequence classification). The output of the last time step at the end of the sequence is used further.

I am still curious how the output vector from all nodes is used later in the processing.

Could you please elaborate more on it?

The entire sequence of outputs from the layer is passed to the next layer if return_sequences is true; otherwise only the output from the last time step is passed to the next layer. This applies to each node in the layer.
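A toy sketch may help here. It is not the Keras implementation: it uses plain tanh units instead of LSTM cells, each unit only sees its own previous state, and the weights are arbitrary. The function `recurrent_layer` is invented for this illustration. It shows that every unit receives every time step, and what the two settings of return_sequences hand to the next layer.

```python
import math


def recurrent_layer(sequence, unit_weights, return_sequences=False):
    """Toy recurrent layer with one (w_x, w_h) pair per unit and a
    scalar input at each time step."""
    state = [0.0] * len(unit_weights)
    outputs = []
    for x in sequence:
        # Every unit sees the current time step, whatever the sequence length.
        state = [math.tanh(w_x * x + w_h * h)
                 for (w_x, w_h), h in zip(unit_weights, state)]
        outputs.append(state)
    return outputs if return_sequences else outputs[-1]


units = [(0.5, 0.8), (-0.4, 0.3), (0.9, -0.2), (0.1, 0.6)]   # 4 units
sequence = [0.1 * t for t in range(28)]                      # 28 time steps

full = recurrent_layer(sequence, units, return_sequences=True)
last = recurrent_layer(sequence, units)
print(len(full), len(full[0]))  # 28 time steps, 4 values per step
print(len(last))                # 4 values, from the final time step only
```

The number of units sets the width of each output vector, and the number of time steps sets how many such vectors are produced, so the two are independent of each other.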

Thank You Jason. I understood. As I mentioned earlier, I am currently working on a binary classification problem. I have one more question.

The dataset contains storage drives (unique id), each with multiple heads (one drive has multiple records, 1:many), and each head has multiple event records at different times (unit: hour). We can say it is time series data.

Here the label for each record is PASS/FAIL for the head. Below is a snapshot of the dataset.

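| drive | head | time | feature1 | label |
|-------|------|------|----------|-------|
| 1     | 0    | t1   | x        | PASS  |
| 1     | 0    | t2   | y        | PASS  |
| 1     | 1    | t1   | z        | PASS  |
| 1     | 2    | t1   | p        | PASS  |
| 1     | 2    | t2   | w        | PASS  |
| 2     | 0    | t1   | x        | FAIL  |
| 2     | 0    | t2   | y        | FAIL  |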

Our goal is to predict whether a drive will fail within the next X hours. If we can predict pass/fail per head, then we can combine all head predictions, and the maximum prediction will be the prediction for the drive.

We first converted this tabular data into 3D sequences for each unique drive, since an LSTM requires input in the shape (samples, time steps, features).

My question is: while preparing sequences for the LSTM, should they be per drive or per head?

Also, once we get head-level predictions, is there any other way to get a drive-level prediction?

Thank You.

Yes, I think each drive would be one sample to train the model.

Yes, you can make a prediction by calling model.predict(). This will help:

https://machinelearningmastery.com/make-predictions-long-short-term-memory-models-keras/

Thank You Jason. This article helped me in the process. As I started adding more data, I see that there is a severe imbalance between the majority (negative) and minority (positive) classes.

I am aware that SMOTE and its variations are the best way to handle imbalanced data, but I am not able to figure out how I can use SMOTE with LSTM time series binary classification.

Could you please suggest some reference or other technique I should use with an LSTM for imbalanced data?

Thank You.

SMOTE is not appropriate for sequence data.

Perhaps try designing your own sequence data augmentation generator?

Hello Jason, I had a question…. What information does the hidden state in an LSTM carry?

It carries whatever the LSTM learns is useful for making subsequent predictions.

Hi Jason,

thanks for the detailed explanations! One short question:

“A recurrent network whose inputs are not fixed but rather constitute an input sequence can be used to transform an input sequence into an output sequence while taking into account contextual information in a flexible way.”

Why is a vanilla neural network restricted to a fixed input size, while an RNN is not? Could you elaborate on this with an example?

Thanks a lot in advance!

You’re welcome.

MLPs cannot take a sequence; instead, we have to treat each time step of the sequence as a separate “feature” with no time dimension.
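Here is a small sketch of the difference, using a single neuron of each kind with hand-picked weights. The function names are invented for this illustration. The dense neuron has one weight per input position, so its input length is fixed, while the recurrent unit reuses the same two weights at every time step.

```python
import math


def dense_neuron(inputs, weights, bias=0.0):
    """MLP-style neuron: one weight per input position."""
    if len(inputs) != len(weights):
        raise ValueError("expected %d inputs, got %d"
                         % (len(weights), len(inputs)))
    return math.tanh(sum(w * x for w, x in zip(weights, inputs)) + bias)


def recurrent_unit(sequence, w_x, w_h):
    """RNN-style unit: the same two weights are applied at every step."""
    h = 0.0
    for x in sequence:
        h = math.tanh(w_x * x + w_h * h)
    return h


print(recurrent_unit([0.1, 0.2], 0.5, 0.8))         # 2 time steps
print(recurrent_unit([0.1, 0.2, 0.3, 0.4], 0.5, 0.8))  # 4 time steps

print(dense_neuron([0.1, 0.2, 0.3], [0.5, 0.5, 0.5]))
try:
    dense_neuron([0.1, 0.2, 0.3, 0.4], [0.5, 0.5, 0.5])
except ValueError as error:
    print("dense neuron rejected the longer input:", error)
```

To feed sequences of different lengths to the dense neuron, you would have to pad or truncate them to a fixed number of features first.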

Good article. In the part that quotes Hochreiter, “flow” is misspelled (twice) as “ow”.

Thanks. Fixed.

This article is great. Please can I get a practical example for behavioural prediction, e.g. extrovert, introvert, etc.? I am trying to apply this concept to a prediction problem, but I am entirely new to this field and I have limited time.

Thanks for the suggestion.

Perhaps you can adapt an example for your specific dataset:

https://machinelearningmastery.com/start-here/#lstm

“Long Short-Term Memory (LSTM) networks are a type of recurrent neural network capable of learning order dependence in sequence prediction problems.”

Error in this line: “older” not “order”

Thank you for the feedback Arnav! Let us know if you have any questions regarding LSTMs or any other machine learning concepts.

Regards,