Data leakage is a big problem in machine learning when developing predictive models.

Data leakage is when information from outside the training dataset is used to create the model.

In this post you will discover the problem of data leakage in predictive modeling.

After reading this post you will know:

- What data leakage is in predictive modeling.

- Signs of data leakage and why it is a problem.

- Tips and tricks that you can use to minimize data leakage on your predictive modeling problems.

Let’s get started.

Data Leakage in Machine Learning

Photo by DaveBleasdale, some rights reserved.

Goal of Predictive Modeling

The goal of predictive modeling is to develop a model that makes accurate predictions on new data, unseen during training.

This is a hard problem.

It’s hard because we cannot evaluate the model on something we don’t have.

Therefore, we must estimate the performance of the model on unseen data by training it on only some of the data we have and evaluating it on the rest of the data.

This is the principle that underlies cross validation and more sophisticated techniques that try to reduce the variance in this estimate.

What is Data Leakage in Machine Learning?

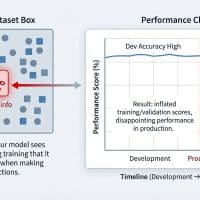

Data leakage can cause you to create overly optimistic if not completely invalid predictive models.

Data leakage is when information from outside the training dataset is used to create the model. This additional information can allow the model to learn or know something that it otherwise would not know and in turn invalidate the estimated performance of the model being constructed.

if any other feature whose value would not actually be available in practice at the time you’d want to use the model to make a prediction, is a feature that can introduce leakage to your model

when the data you are using to train a machine learning algorithm happens to have the information you are trying to predict

— Daniel Gutierrez, Ask a Data Scientist: Data Leakage

There is a topic in computer security called data leakage and data loss prevention which is related but not what we are talking about.

Data Leakage is a Problem

It is a serious problem for at least 3 reasons:

- It is a problem if you are running a machine learning competition. Top models will use the leaky data rather than being good general models of the underlying problem.

- It is a problem when you are a company providing your data. Reversing an anonymization and obfuscation can result in a privacy breach that you did not expect.

- It is a problem when you are developing your own predictive models. You may be creating overly optimistic models that are practically useless and cannot be used in production.

As machine learning practitioners, we are primarily concerned with this last case.

Do I have Data Leakage?

An easy way to know you have data leakage is if you are achieving performance that seems a little too good to be true, like being able to predict lottery numbers or pick stocks with high accuracy.

“too good to be true” performance is “a dead giveaway” of its existence

— Chapter 13, Doing Data Science: Straight Talk from the Frontline

Data leakage is generally more of a problem with complex datasets, for example:

- Time series datasets, where creating training and test sets can be difficult.

- Graph problems where random sampling methods can be difficult to construct.

- Analog observations like sound and images where samples are stored in separate files that have a size and a time stamp.

Techniques To Minimize Data Leakage When Building Models

Two good techniques that you can use to minimize data leakage when developing predictive models are as follows:

- Perform data preparation within your cross validation folds.

- Hold back a validation dataset for a final sanity check of your developed models.

Generally, it is good practice to use both of these techniques.

1. Perform Data Preparation Within Cross Validation Folds

You can easily leak information when preparing your data for machine learning.

The effect is overfitting your training data and having an overly optimistic evaluation of your model’s performance on unseen data.

For example, if you normalize or standardize your entire dataset, then estimate the performance of your model using cross validation, you have committed the sin of data leakage.

The data rescaling process that you performed had knowledge of the full distribution of the data, including the rows later used as held-out test folds, when calculating the scaling factors (like min and max or mean and standard deviation). This knowledge was stamped into the rescaled values and exploited by all algorithms in your cross validation test harness.

A non-leaky evaluation of machine learning algorithms in this situation would calculate the parameters for rescaling data within each fold of the cross validation and use those parameters to prepare the data on the held out test fold on each cycle.
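To make the per-fold idea concrete, here is a minimal sketch in Python with scikit-learn. The synthetic dataset, the choice of logistic regression, and the five folds are all assumptions for illustration; the point is only where the scaler is fit.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold
from sklearn.preprocessing import StandardScaler

# Synthetic data stands in for a real dataset (an assumption for illustration).
X, y = make_classification(n_samples=200, n_features=10, random_state=1)

fold_scores = []
for train_idx, test_idx in KFold(n_splits=5, shuffle=True, random_state=1).split(X):
    X_train, X_test = X[train_idx], X[test_idx]
    y_train, y_test = y[train_idx], y[test_idx]

    # Learn the mean and standard deviation from this fold's training rows only.
    scaler = StandardScaler().fit(X_train)

    # Reuse those same parameters to rescale the held-out rows.
    X_train_scaled = scaler.transform(X_train)
    X_test_scaled = scaler.transform(X_test)

    model = LogisticRegression(max_iter=1000).fit(X_train_scaled, y_train)
    fold_scores.append(model.score(X_test_scaled, y_test))

mean_accuracy = float(np.mean(fold_scores))
print(f"Mean accuracy over {len(fold_scores)} folds: {mean_accuracy:.3f}")
```

The held-out fold is never passed to `fit`, so nothing about its distribution reaches the scaling factors.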

The reality is that as a data scientist, you’re at risk of producing a data leakage situation any time you prepare, clean your data, impute missing values, remove outliers, etc. You might be distorting the data in the process of preparing it to the point that you’ll build a model that works well on your “clean” dataset, but will totally suck when applied in the real-world situation where you actually want to apply it.

— Page 313, Doing Data Science: Straight Talk from the Frontline

More generally, non-leaky data preparation must happen within each fold of your cross validation cycle.

You may be able to relax this constraint for some problems, for example if you can confidently estimate the distribution of your data because you have some other domain knowledge.

In general though, it is a good idea to re-prepare or re-calculate any required data preparation within your cross validation folds including tasks like feature selection, outlier removal, encoding, feature scaling and projection methods for dimensionality reduction, and more.

If you perform feature selection on all of the data and then cross-validate, then the test data in each fold of the cross-validation procedure was also used to choose the features and this is what biases the performance analysis.

— Dikran Marsupial, in answer to the question “Feature selection and cross-validation” on Cross Validated.

Platforms like R and scikit-learn in Python help automate this good practice, with the caret package in R and Pipelines in scikit-learn.
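As a rough illustration of why this matters, the sketch below uses a scikit-learn Pipeline on pure random noise, where no model should do much better than chance. The data sizes, the `SelectKBest` selector, and `k=20` are assumptions chosen to make the effect visible, not settings taken from any of the sources quoted above.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline

# Pure noise: 100 rows, 5,000 random features, random labels.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5000))
y = rng.integers(0, 2, size=100)

# Leaky: choose the 20 "best" features using every row, then cross-validate.
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_score = cross_val_score(
    LogisticRegression(max_iter=1000), X_selected, y, cv=5
).mean()

# Non-leaky: the selector is a pipeline step, so it is refit inside each fold.
pipeline = Pipeline([
    ("select", SelectKBest(f_classif, k=20)),
    ("model", LogisticRegression(max_iter=1000)),
])
honest_score = cross_val_score(pipeline, X, y, cv=5).mean()

print(f"Selection on all data, then CV: {leaky_score:.2f}")
print(f"Selection inside each fold:     {honest_score:.2f}")
```

The first score should look impressive even though there is nothing to learn, while the second should sit near chance. The only difference between the two is whether the test folds were visible when the features were chosen.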

2. Hold Back a Validation Dataset

Another, perhaps simpler approach is to split your training dataset into train and validation sets, and store away the validation dataset.

Once you have completed your modeling process and actually created your final model, evaluate it on the validation dataset.

This can give you a sanity check to see if your estimation of performance has been overly optimistic and has leaked.

Essentially the only way to really solve this problem is to retain an independent test set and keep it held out until the study is complete and use it for final validation.

— Dikran Marsupial in answer to the question “How can I help ensure testing data does not leak into training data?” on Cross Validated
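A minimal sketch of this workflow in Python with scikit-learn might look like the following. The synthetic data, the 80/20 split, and the model are assumptions for illustration only.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=500, n_features=10, random_state=7)

# Set the validation rows aside before any modeling work begins.
X_train, X_holdout, y_train, y_holdout = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=7
)

model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# All model development uses the training portion only.
cv_estimate = cross_val_score(model, X_train, y_train, cv=5).mean()

# Final check, run once: fit on the training portion, score on the held-back rows.
holdout_score = model.fit(X_train, y_train).score(X_holdout, y_holdout)

print(f"Cross-validation estimate: {cv_estimate:.3f}")
print(f"Holdout score:             {holdout_score:.3f}")
```

If the holdout score is much worse than the cross-validation estimate, that gap is a hint that something leaked during development.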

5 Tips to Combat Data Leakage

- Temporal Cutoff. Remove all data just prior to the event of interest, focusing on the time you learned about a fact or observation rather than the time the observation occurred.

- Add Noise. Add random noise to input data to try and smooth out the effects of possibly leaking variables.

- Remove Leaky Variables. Evaluate simple rule based models like OneR using variables like account numbers and IDs and the like to see if these variables are leaky, and if so, remove them. If you suspect a variable is leaky, consider removing it.

- Use Pipelines. Heavily use pipeline architectures that allow a sequence of data preparation steps to be performed within cross validation folds, such as the caret package in R and Pipelines in scikit-learn.

- Use a Holdout Dataset. Hold back an unseen validation dataset as a final sanity check of your model before you use it.
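The "Remove Leaky Variables" tip can be sketched as a quick screening loop. scikit-learn does not ship OneR, so this sketch swaps in a depth-1 decision tree (a single split on a single variable) as a stand-in. The column names and the synthetic data are hypothetical.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(42)
n = 400
y = rng.integers(0, 2, size=n)

# Hypothetical columns: one ordinary predictor, one that secretly encodes the target.
data = pd.DataFrame({
    "age": rng.normal(40, 10, size=n),                # unrelated to y in this toy data
    "account_flag": y + rng.normal(0, 0.05, size=n),  # leaks the target
})

# Score each column on its own with a one-split tree (stand-in for OneR).
single_feature_scores = {}
for column in data.columns:
    stump = DecisionTreeClassifier(max_depth=1, random_state=0)
    scores = cross_val_score(stump, data[[column]], y, cv=5)
    single_feature_scores[column] = scores.mean()

for column, score in single_feature_scores.items():
    print(f"{column}: {score:.2f}")
```

A single variable that predicts the target almost perfectly on its own is worth investigating before it goes anywhere near a real model.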

Further Reading on Data Leakage

There is not a lot of material on data leakage, but those few precious papers and blog posts that do exist are gold.

Below are some of the better resources that you can use to learn more about data leakage in applied machine learning.

- Leakage in Data Mining: Formulation, Detection, and Avoidance [pdf], 2011. (recommended!)

- Mini episode on Data Leakage on the Data Skeptic podcast.

- Chapter 13: Lessons Learned from Data Competitions: Data Leakage and Model Evaluation, from Doing Data Science: Straight Talk from the Frontline, 2013.

- Ask a Data Scientist: Data Leakage, 2014

- Data Leakage on the Kaggle Wiki

- Fascinating Discussion on data leakage in the ICML 2013 Whale Challenge

Summary

In this post you discovered data leakage in machine learning when developing predictive models.

You learned:

- What data leakage is when developing predictive models.

- Why data leakage is a problem and how to detect it.

- Techniques that you can use to overcome data leakage when developing predictive models.

Do you have any questions about data leakage or about this post? Ask your questions in the comments and I will do my best to answer.

So if I split, for instance, 60% of the data for training and keep the other 40% apart for testing, and scale both after splitting, I’m avoiding data leakage, right?

Hi Calvin, the best practice would be to learn how to scale from the training dataset and use that knowledge to then scale the test dataset.
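As a small sketch of that advice in Python with scikit-learn, using the 60/40 split from the question (the random data and `MinMaxScaler` are assumptions for illustration):

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler

rng = np.random.default_rng(3)
X = rng.normal(loc=50, scale=10, size=(100, 2))

# 60% train, 40% test, as in the question.
X_train, X_test = train_test_split(X, test_size=0.4, random_state=3)

scaler = MinMaxScaler()
X_train_scaled = scaler.fit_transform(X_train)  # min and max come from train only
X_test_scaled = scaler.transform(X_test)        # the same min and max are reused

# Training values span exactly [0, 1]; test values may fall slightly outside.
print(X_train_scaled.min(axis=0), X_train_scaled.max(axis=0))
print(X_test_scaled.min(axis=0), X_test_scaled.max(axis=0))
```

Test values outside the 0 to 1 range are expected and are not a bug. They simply reflect that the test rows were not consulted when the range was learned.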

Thank You for this tutorial, but i have a question

if I manually clean my train set, how could I do the same for my test set:

please is it possible to see tutorial where we learn to clean the train set for example using clean = missForest()

X_trainf = clean.fit_transform(X_train)

X_testf = clean.transform(X_test)

Good question.

Prepare the transform on the training set, apply it to the train and test sets.

Hello Jason

great article.

typically, once I have done all the munging, feature creation, pca, removing outliers, binary encoding, I split the data into 3 sets (85% train, 10% val, 5% test).

then I do all the cross validation, grid/ random search etc on the train set , possibly make some tweaks to the features (e.g add interactions or power terms of the top 2/3 predictors) and verify the results on the validation set. This is done across different modeling techniques.

and once I have found the best model, I gauge its performance ONLY ONCE on the test set.

few questions.

1. I haven’t seen it being done much but does one store the imputed values of the training set somewhere and impute the unknown test set with those. The unknown test set would for e.g. be the one on which your model performance will be gauged in a kaggle competition.

how would this work, when I use some R packages for imputing data (e.g. amelia etc) which use more sophisticated approaches to imputation than vanilla mean/ mode / median.

Should one run the package on the unknown set too?

I haven’t found much difference in results in the models I’ve built when I imputed the unknown test set with training data vs imputing the unknown set with its own mean / median etc.

2. similar to the imputation, what is your advice for outlier methods? perhaps some univariate or multi-variate methods were used to detect outliers in the training data set and then (let’s say) the outlier values were replaced with mean /median etc.

How does one carry out the same steps and mitigate leakage into the unknown test set?

3. Would it be possible to see an example of how one can perform outlier removal, encoding, feature scaling, imputation with in each cross validation fold?

I know caret allows to perform PCA for e.g. when training the model (but then how would I know how many principal components it selects) or more recently, using H2O , one is not recommended to create binary features as H2O takes care of it automatically.

Thanks sandeep.

Nice approach, although I would suggest changing your percentages based on the specifics of the problem.

Any models used to transform training data (like imputing) must be stored, to then be used on any new data in the future like tests and validation sets, and even new data. It is now a part of the model.

Outliers are tricky. Store the outlier model as above. You often want to filter data in the same way after the model is built and perhaps return an “i don’t know” prediction for data out of bounds of the training dataset. I talk about outliers a little here:

https://machinelearningmastery.com/how-to-identify-outliers-in-your-data/

I don’t have a worked example with outlier removal, I don’t think. Sorry.
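One way to treat the fitted transforms as "part of the model" is to bundle them with the estimator and save the whole thing as a single object. The sketch below uses Python's `pickle` and a scikit-learn pipeline. The median imputer, the file name, and the random data are assumptions for illustration, and a simple median imputer stands in for more sophisticated imputation packages.

```python
import os
import pickle
import tempfile

import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(5)
X_train = rng.normal(size=(100, 3))
X_train[rng.random(X_train.shape) < 0.1] = np.nan  # knock out about 10% of values
y_train = rng.integers(0, 2, size=100)

# Imputer, scaler and model are fit together and saved as one object.
pipeline = make_pipeline(
    SimpleImputer(strategy="median"),
    StandardScaler(),
    LogisticRegression(max_iter=1000),
)
pipeline.fit(X_train, y_train)

path = os.path.join(tempfile.mkdtemp(), "model_with_prep.pkl")  # hypothetical file name
with open(path, "wb") as f:
    pickle.dump(pipeline, f)

# Later, on new data: load and predict. The stored training medians fill the gaps.
with open(path, "rb") as f:
    restored = pickle.load(f)

X_new = np.array([[0.2, np.nan, -1.0]])
prediction = restored.predict(X_new)
print(prediction)
```

The new row's missing value is filled with the median learned from the training data, not with anything computed from the new data itself.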

Nice information!

But I’m confused.

In the quote from Dikran Marsupial, in answer to the question “Feature selection and cross validation”, does feature selection mean variable selection?

And, data leakage is not problem in R tool. Because there is function that solve this problem.(In train() function, we can use “CV” method or “repeatedCV”) is it right???

Thank you!

Data leakage can be a problem in R or any platform if we use information from the test set when fitting the model.

This can be as simple as including test data when scaling training data.

You are right, tools like caret make this much less of a risk, if the tools are used correctly (e.g. scaling is performed within each cross validation fold).

If we divide the data into suppose 5 folds, and, scale first 4 and predict the 5th. After this, we use other 4 and scale them. What about the first fold which got scaled along with the previous group? How do we unscale that? Also, do we have to scale the test fold also?

Yes, any transforms performed are discarded or reversed.

Your site has been really helpful, though i’m still kinda blur on why preparing data using normalization or standardization on the entire training dataset (maybe 70% of all data) before learning can cause leakage. why is knowing distribution of the majority of data causing issue?

To scale data you need coefficients (min/max or avg/stdev).

These must be estimated from data (unless you know the exact values from the domain).

If they are estimated from the entire dataset then leakage occurs because knowledge from the test set was used to scale the training set.

So, Jason, first of all: Thank you for your great blog!

Back to the question, so what do you suggest? Perform the normalization (min/max) after splitting the dataset (train_test_split)?

thank you.

Yes, split first, calculate the coefficients from the training data, then apply them to train, test, other…

Hi! For example, if I’m using the mean of the training set to impute NaNs, must that same mean be applied to the test set, or do I need to recalculate the mean inside the test set?

Ex.

Training set:

10

Nan

5

10

5

Nan – >5(mean)

Test set:

1

1

2

Nan

2

Nan – >10 from the training set or 1.5 (mean of the test set)???

The mean would be estimated from the training dataset only.
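Here is a small sketch using the values listed in the question, with scikit-learn's `SimpleImputer`. For those training values (10, 5, 10, 5) the mean works out to 7.5, so that is the value used to fill the gaps in both sets.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Values from the question above, one column per set.
train = np.array([[10], [np.nan], [5], [10], [5], [np.nan]])
test = np.array([[1], [1], [2], [np.nan], [2], [np.nan]])

imputer = SimpleImputer(strategy="mean")
train_filled = imputer.fit_transform(train)  # learns the training mean
test_filled = imputer.transform(test)        # reuses it; the test mean is never computed

print(imputer.statistics_)  # [7.5]
print(test_filled.ravel())
```

The test set's own mean (1.5) is never calculated, so nothing about the test distribution feeds back into the preparation step.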

Excellent blog on machine learning with the detailed information. Appreciate your efforts Jason.

Thanks.

Thank you for creating such an incredibly useful blog that I find that I come back to often for reference! 1) What is the general methodology to engineer features by target encoding the mean of target variable, as grouped by X feature, and measured within the out-of-fold CVs sets? I’ve seen this before as an important engineered feature. 2) Isn’t this very prone to data leakage? If so, how?

Thank you again!

As long as the mean or other stats were based on training data alone, it would not be leakage.
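A rough sketch of out-of-fold target encoding with pandas and scikit-learn follows. The `city` column, the tiny table, and the fallback rule for unseen categories are all hypothetical choices; the idea is only that each row's encoded value is computed from rows in the other folds, so a row never sees its own target.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import KFold

# Hypothetical training data: one categorical feature and a binary target.
train = pd.DataFrame({
    "city": ["a", "a", "b", "b", "a", "b", "c", "c", "a", "b"],
    "target": [1, 0, 1, 1, 0, 1, 0, 1, 1, 0],
})

train["city_encoded"] = np.nan
folds = KFold(n_splits=5, shuffle=True, random_state=0)

for fit_idx, encode_idx in folds.split(train):
    fit_rows = train.iloc[fit_idx]

    # Category means come from the other folds only.
    fold_means = fit_rows.groupby("city")["target"].mean()
    encoded = train.iloc[encode_idx]["city"].map(fold_means)

    # Categories unseen in the other folds fall back to those folds' overall mean.
    encoded = encoded.fillna(fit_rows["target"].mean())
    train.loc[train.index[encode_idx], "city_encoded"] = encoded.to_numpy()

# For test or new rows, use category means computed from the full training set.
full_means = train.groupby("city")["target"].mean()
print(train)
```

Computing the category means on all training rows and then using them as a training feature would let each row's own target leak into its encoded value, which is the risk the question is pointing at.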

Hi Jason,

What is validation dataset and how can I prepare it to hold back for sanity check of my model, being constructed?

Do I need to further break the training set to make validation dataset? Correct me if I am wrong.

This post will explain the validation dataset:

https://machinelearningmastery.com/difference-test-validation-datasets/

hi,

So my understanding is that the data preprocessing should be done at each fold during the cross-validation.And we can do that by caret package.Can caret deal with multicollinearity within each fold as well?

Thanks.

Yes, data prep must be performed for each fold.

Caret may support multicollinearity, I’m not sure off hand.

Hi,

So,if we perform the data preprocessing for each fold like box-cox, centering during cross-validation, we don’t need to do same preprocessing at the initial stage like during exploratory data analysis?

The reason I am asking is that most of the time I see people preprocessing data (e.g. missing value imputation, outlier removal) during the exploratory data analysis. Just wanted to have a crystal clear idea!!

Thanks

Ideally we would do this within the CV fold to avoid leakage. Sometimes, it does not impact the results very much.

Hi,

When we are adding new attributes, removing attributes or creating dummy variables before the fitting models, can these actions cause data leakage as well?

It can if the procedure uses information outside of the data sample.

Hi Jason, indeed cool article.

I cannot understand this phrase in the context of data leakage “if any other feature whose value would not actually be available in practice at the time you’d want to use the model to make a prediction, is a feature that can introduce leakage to your model”.

What I understand from the above is that we have a feature whose values cannot be calculated during production stage. If so it means that we made a mistake during feature selection step. To solve it, we need to remove that feature and train again our model. Is this mistake related to data leakage?

If you can point me to a concrete example, that would be helpful 😉

Thanks

It means if you are using information about data you don’t have, e.g. data from the future or data out of sample, then you have data leakage.

Does that help?

I feel I’m on the right way to understand it 🙂

Hang in there!

Regarding the future data example, if you have data that includes data that is not needed for the prediction, do not use it. For example, if the prediction problem is to predict the occurrence of disease and the data includes the treatment administered column (future data) as well, that can be an example of data leakage due to future data. So understanding the decision flow with a domain SME can be helpful in formulating the question.

Great tip!

The link “Leakage in Data Mining: Formulation, Detection, and Avoidance [pdf], 2011. (recommended!)” to http://dstillery.com/wp-content/uploads/2014/05/Leakage-in-Data-Mining-Formulation-Detection-and-Avoidance.pdf has died.

A google search for the title found:

https://www.cs.umb.edu/~ding/history/470_670_fall_2011/papers/cs670_Tran_PreferredPaper_LeakingInDataMining.pdf

Thank you! That article indeed does talk through some great examples of leakage.

Hi Jason,

Thank you for making these articles free online. They have been very helpful as I learn Keras this summer.

I feel that this article would benefit from some practical — if contrived — examples of leakage. I think it covers the theory pretty well, but examples showing leakage actually occurring and how to fix it would really drive the point home.

As I search for “example” in the comments, I suppose I’m repeating what Sandeep and Tudor said.

Thanks for the suggestion.

Sir can u provide Code for data leakage prediction?

What do you mean exactly? Do you have an example?

Thanks for your wonderful site and article. I am a bit confused on one part: if feature selection happens in the folds, then what is the final model that is chosen? I had thought that repeated k folds for example would average out the models to give final accuracy for example. (The repeated k folds giving some idea of the uncertainty around the predicted accuracy for example).

But if there are different features used for each fold then what is the suggested final set of features?

thanks!

Good question, we train a final model on all available data.

This post explains in more detail:

https://machinelearningmastery.com/train-final-machine-learning-model/

If I’m understanding the question correctly, Milley is asking, if feature selection is applied to each fold, then you might have a different set of features in each fold. So how do you

Do you select the final set of features based on the features selected in the fold with the best performance?

I didn’t see any reference to feature selection in the linked article.

Please keep up the good work! 🙂

I see, excellent question.

There’s no best way. One approach would be to use the average or superset of top features selected across all folds.

Or, use a holdout validation set prior to training.

The point the questioners are making here requires more response. If you’re doing feature selection within cross-validation folds, then what you are ultimately testing in your cross-validation execution is a model-building process, not a model (though some people actually include that in their notion of a “model”). But what most people are looking for in prediction is the performance of a model, not an entire model-building process. So while this approach may avoid a data leakage concern, it doesn’t deliver what most people are looking for in prediction, which means it doesn’t suit the need it was supposed to solve.

IOW, when these people ask “what is the final model?” they’re asking because this method of cross validation does not select a model, it selects a process of building a model. If you want to know how a particular model (not model-building process) will perform on unknown/future data then you have to preselect your features and test them similarly in each model fold. A hold out set can be created that was not used in the feature selection process nor in the cross validation execution.

I would argue you cannot (must not) decouple the two. There is only the model-building process (data prep + model), e.g. raw data in predictions out.

The final model is model selection applied to (data prep + model), more here:

https://machinelearningmastery.com/train-final-machine-learning-model/

Does that help Paul?

Maybe there’s just a miscommunication on what kind of scenario you have in mind for “doing feature selection as part of the model selection process w/i cross validation folds.” (My wording, not yours.) Suppose, for example, that you plan to use a single algorithm, logistic regression in your process. To select features, you decide also to use only one specific process: pick all features with associated p-value < 0.05 when doing univariate regression of the outcome on the feature. So that is part of the process in each of the, say, 10 x-val folds. Now, what do we have after doing x-validation? Well, it may be that we have 10 log regr models all with different features (one from each fold). The question the other commenters are asking is, “How does this tell us what model to use for predictions on future data?” And I think they mean “which of those log regr models do I choose?” And I think the answer is, “it doesn’t tell you that.” What it does is to tell us on average what we should expect to see for prediction error on future data if we were to use the stated process (log regr with features selected based on univariate p’s < 0.05) using our current training data set to build the model (tho the x-val variability probably over-estimates variability slightly). So it doesn’t tell us what features to use, it tells us what will happen if we use that feature selection process to select features as input to a logistic regression algorithm. I.e., it only indirectly tells us what features to use (use the p < 0.05 process).

However, they (and most people) are probably thinking of selecting a model from a set of choices. For *picking* a model, we need things that are different within each fold (different feature selection process, different algorithms or both), but which stay the same across all folds. Example: I will compare ridge regression where I use all available features, with logistic regression and features selected based on p < 0.05. NOW I have a cross-validation process that gives me two things: a means to select the best model-building process, and an estimate of the average error I’ll see on future data.

The miscommunication (if any) comes from thinking you’re referring to the first scenario above, when maybe you’re thinking of the second. The second one tells you how to select from different models. The first does not. But either way, I think this kind of specific detail gives a great deal of clarity in communicating this stuff.

I think I agree.

To clarify:

A single k-fold cross validation evaluation of a “model” results in an estimate of model performance when making predictions on out of sample data. This process is repeated for another “model”, and the scores are compared in order to perform “model selection”. We do not vary anything across folds other than the data.

All models fit during k-fold cross-validation are discarded. Typically. We could develop an ensemble of them or whatever (e.g. super learner/stacking), but ignore this for now.

Once a “model” is selected, we fit it on all data and away we go making predictions on new examples from the domain with no known output/target.
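The process described in this thread can be sketched roughly as follows, loosely following the two candidates from the example above (ridge with all features versus logistic regression with p < 0.05 feature selection). The synthetic data is an assumption, and `SelectFpr` with an ANOVA F-test is used here as a stand-in for the univariate regression p-values the commenter describes.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectFpr, f_classif
from sklearn.linear_model import LogisticRegression, RidgeClassifier
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(
    n_samples=300, n_features=20, n_informative=5,
    n_clusters_per_class=1, random_state=2,
)

# Two candidate "models", each a complete data prep + algorithm recipe.
candidates = {
    "ridge_all_features": make_pipeline(StandardScaler(), RidgeClassifier()),
    "logreg_p_below_0.05": make_pipeline(
        StandardScaler(),
        SelectFpr(f_classif, alpha=0.05),  # keep features with univariate p < 0.05
        LogisticRegression(max_iter=1000),
    ),
}

# Same folds, same data; only the recipe differs between candidates.
cv_scores = {
    name: cross_val_score(pipe, X, y, cv=10).mean()
    for name, pipe in candidates.items()
}
best_name = max(cv_scores, key=cv_scores.get)

# The fold models are discarded; refit the winning recipe on all available data.
final_model = candidates[best_name].fit(X, y)

print(cv_scores)
print(f"Selected: {best_name}")
```

The features used by the final model are whatever the selection step picks when the winning recipe is refit on all of the data, which may differ from the features chosen in any individual fold.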

Hi, thank you for your nice blog

would you please explain “OneR” and “Cutoff”

Temporal Cutoff:

Remove Leaky Variables: Evaluate simple rule based models like OneR using variables like account numbers

OneR is the one-rule algorithm, e.g. it picks the one split in the data that results in the lowest error.

Hi, Thank you for your nice post!

I’m currently taking part in some competitions on Kaggle, and this issue is sometimes discussed there.

This post helped me understand what ‘the leak’ is in ML.

Thanks!

I’m glad it helped.

I have a situation where I suspect data leakage occurred, but it doesn’t sound exactly like the cases above:

Suppose I am interested in predicting whether an event is likely to occur within some specific time bound, but I have not very many examples of the event occurring as it is fairly rare. So, I might set this up as a supervised learning problem, where I take summarized numerical data for multiple distinct time periods, for each subject in the study, in order to create more data for each subject.

When I am done constructing these observations, let’s say I have 5 observations for each subject, each with their own numerical variables computed off a distinct summary time period, as well as some categorical variables that are not time bound so they are the same for a given subject. It will be a classification problem, and my target variable for all 5 observations for each subject will indicate whether the future event occurred or not within the time bound of interest (all 5 observations from each subject have the same value for target).

Now I want to partition this data into training and test data sets. I simply shuffle all of my observations randomly, such that it is likely that for any observation appearing in my test set, there are one or more observations that came from the same subject. If done this way and separate observations from the same subjects end up in both training and test data sets, is this a pretty clear cut case of data leakage?

As a rule, if any abstraction/statistic of more than one sample is used to prepare the dataset before the split, then you have a form of leakage.

It sounds like you might be resampling your data, which might be a leak.
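For the scenario in the question, where each subject contributes several rows, one common way to keep a subject's rows on the same side of the split is a group-aware splitter. The sketch below uses scikit-learn's `GroupShuffleSplit`. The 20 subjects, 5 rows each, and random features are hypothetical, and this is offered as one option rather than the only valid approach.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

# Hypothetical layout: 20 subjects with 5 observations each.
subject_ids = np.repeat(np.arange(20), 5)
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 4))
y = np.repeat(rng.integers(0, 2, size=20), 5)  # one target value per subject

splitter = GroupShuffleSplit(n_splits=1, test_size=0.25, random_state=0)
train_idx, test_idx = next(splitter.split(X, y, groups=subject_ids))

# No subject contributes rows to both sides of the split.
shared_subjects = set(subject_ids[train_idx]) & set(subject_ids[test_idx])
print(f"Subjects in both train and test: {len(shared_subjects)}")
```

With a plain random shuffle of rows, the model can recognize a test subject from that subject's near-identical training rows, which tends to inflate the test score.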

Hi Jason,

When it comes to encoding categorical variables on the entire dataset, would it also cause leakage? Currently, I am encoding all categorical variables on the entire dataset and saving the encoders into corresponding numpy files. I was thinking of using the encoders in production when I need to encode the categorical variables on new samples.

Sincerely,

One of your top 10 fans,

Billy

Not really, as long as the cardinality of the variable is fixed and is general “domain knowledge”.

I am using tree based boosting algorithms like xgboost, lightgbm, catboost etc. so scaling or normalization is really not my need.

My question focuses on fact that I have seen many ML Hackathon Competitors when provided with “TrainSet” and “TestSet” usually the idea that is commonly followed is to combine these two to form “AllSet” and than engineer aggregate features like “Min, Max, Mean, Std” and “Count” by grouping categorical features and aggregating continuous.

Then these aggregate features are merged with AllSet followed by splitting into TrainSet and TestSet again!

Is this data leakage? If yes, say the model post hackathon is really implemented in real-world problem where the “aggregate features” as calculated are preserved and just merged with new data again. Now since the trained model is known to perform well on aggregate distributions how is this leak is going to affect in wrong way in my future predictions?

Excellent question!

Yes it is leakage, but it does not matter as the extent of the domain is only the train and test sets – for the competition or challenge.

Hello Jason,

Thank you for your answer.

So if this was not hackathons but deploying models in real-world for performing real future predictions and if I had Train-Test split, would you recommend to aggregate only on TrainSet?

I would recommend fitting data preparation on the training set only to give a fair evaluation of a model + data prep process.
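For the aggregate features discussed in this thread, a train-only version might be sketched like this with pandas. The `store` and `sales` columns and their values are hypothetical.

```python
import pandas as pd

# Hypothetical train and test tables with a categorical and a continuous column.
train = pd.DataFrame({
    "store": ["a", "a", "b", "b", "b"],
    "sales": [10.0, 20.0, 5.0, 7.0, 9.0],
})
test = pd.DataFrame({
    "store": ["a", "b", "c"],
    "sales": [100.0, 200.0, 300.0],
})

# Aggregates are computed from the training rows only.
store_stats = (
    train.groupby("store")["sales"]
    .agg(["min", "max", "mean", "std", "count"])
    .add_prefix("store_sales_")
    .reset_index()
)

# The stored table is merged onto both sets; test values never influence it.
train_features = train.merge(store_stats, on="store", how="left")
test_features = test.merge(store_stats, on="store", how="left")

print(test_features)
```

Categories that appear only in the test set (store "c" here) come through as missing values, which is an honest preview of what will happen with genuinely new data in production.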

Hi, can you please explain the solution given for non-leaky data in ” Perform Data Preparation Within Cross Validation Folds” thank you

Yes, any fit/stats required are calculated on the train set for each train/test split during cross validation.

Does that help?

Dear Jason, thanks for your great work,

I’m still landing here after google – again and again ;)…

One question i got for a long time is:

Imagine we split DATA into TRAIN and TEST, we get the M = mean(TRAIN) and SD = std(TRAIN).

Now we do what we want to do, for example z_TRAIN = (TRAIN – M) / SD.

But, the mean()/std() we use here is a fixed mean(), depending on the ALL train data.

Scaling/transforming the data is done with a mean() value that we simply don’t know

– if we do it with the first train data values.

TRAIN

|||||||||||||||||||||||||||||||||||||||||||||||||||||||||

^ ^ –> we get the mean() of all data points

SCALE

|||||||||||||||||||||||||||||||||||||||||||||||||||||||||

^ ^ –> how can it be correct to scale values here with a mean we do not know here?

Isn’t that also data-leakage?

Doesn’t it make more sense to use a ROLLING MEAN instead?

TRAIN

|||||||||||||||||||||||||||||||||||||||||||||||||||||||||

^——–^ –> we get the mean() of all existing points in ^—^ mean(TRAIN[1:20])

SCALE

|||||||||||||||||||||||||||||||||||||||||||||||||||||||||

^——^ –> scale, do transforms from here

Sure we lose some data and it is more complicated – but doesn’t it make sense?

IF we are in an environment that gives us more and more (new) data?

While asking this i get the idea, that this maybe only is needed for time-series data?

Is doing scaling/transforming on complete TRAIN data (like caret pre-processing in R does) a valid approach for time-series?

Regards laz

Great question!

We fit data prep and models on train, then apply data prep to test and evaluate on test.

If your data is a time series, which I think is what you’re getting at, then the same applies. You will have some historical data and any min/max or mean/std can be prepared from that or from expert knowledge of the domain.

Maybe you need to update the data prep/models after some time. E.g. weekly/monthly/yearly. This is common and addresses the ideas of concept drift:

https://machinelearningmastery.com/gentle-introduction-concept-drift-machine-learning/

Does that help?

Hi Jason,

I’m not sure that I understand your answer. So if I have a time series, does fitting the scaling on the whole train set mean using information from the future, and should it therefore be avoided? In that case, should basically any standardization / normalization of the time series be avoided?

Thanks for clarification!

Sorry. I’ll be clearer.

Any coefficients calculated from the data that are used to scale the data must be calculated on training data only.
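As a minimal sketch of this rule for a time-ordered series (the values, the split point, and the variable names are made up for illustration, and the population standard deviation is an arbitrary choice), the mean and standard deviation come from the earlier, training portion only and are then reused unchanged on later observations:

```python
from statistics import mean, pstdev

# Hypothetical time-ordered observations; the last three arrive "later".
series = [10.0, 12.0, 11.0, 13.0, 14.0, 30.0, 32.0, 31.0]
split = 5
train, test = series[:split], series[split:]

# Coefficients are estimated from the training portion only.
mu = mean(train)
sigma = pstdev(train)

# The same coefficients are applied to both portions.
z_train = [(x - mu) / sigma for x in train]
z_test = [(x - mu) / sigma for x in test]

print(mu, sigma)
print(z_test)
```

The later values land far from zero after scaling, which is expected: the scaler only knows the history it was fit on. If that history stops being representative, the coefficients can be re-estimated on a schedule, as suggested above.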

Hi Jason,

Thanks for this excellent tutorial.

I have a question regarding leakage, and that is…when is it OK to ignore data leakage?

Is there ever a situation when data leakage is a good thing?

Nope. It is important to consider and prevent in order to develop reliable estimates of model performance.

Hi Jason,

If I reduce the cardinality of a feature in the entire dataset and use a label encoder, is that not data leakage?

The label encoder does not change the cardinality of a feature. It only encodes the variable and maintains the cardinality.

OK, thanks, but if I reduce the cardinality of a feature in the entire dataset, is that data leakage?

It might be if you use information that is not in the training dataset to change data in the training dataset.

This definition might be too strict. It really depends on the specifics of your project/application as to whether the information you are using is true leakage.

Dear Dr. Jason,

As usual, an excellent article! I’m working with a set of images of different sizes and I want to build a model for image classification. As one of the preprocessing steps to build a dataset, I want to resize all the images to the average image size in the set of images. Then, I’ll define a feature vector of dimension equal to that average to store the resized images. After that, I’ll break the dataset down into training and test datasets. Am I leaking data? Another problem is the selection of the top-N features and then defining a feature vector with those features. After that, I’ll break the dataset down into training and test sets. Am I leaking data? Both problems have to do with defining the dimensions of the feature vectors (metadata) using actual data. I’m not sure how to proceed in this case. Thank you!

Thanks!

Maybe. Perhaps perform any selection of image size based on the training set only.

Hello Dr. Jason

Thank you very much for the great tutorial and for the patience to answer everybody’s questions. If you don’t mind I would like to ask you some questions myself after I have read all the comments:

Let’s say that I have an untouched dataset that needs several things done. For example, it has NaN values (imputation), it has categorical variables (one-hot encoding), and it has continuous variables (scaling or discretization).

Would you agree with this flow?

1. Train / Test split (we get “xtrain”, “xtest”)

2. We grab one of the variables of “xtrain”, let’s say “train_var1” and we need to scale this feature. We get that the parameters used for the scaling were: max=10, min=2, mean=5.

2.1 Question: Now that we know the parameters used for “train_var1”, are we going to use the same parameters (max=10, min=2, mean=5) to scale “test_var1”, or are we going to calculate new parameters for “test_var1”, which let’s say end up as max=12, min=1, mean=8? Which approach would be right? I see a problem with both approaches: in the first approach you have values that fall outside the limits of the “train_var1” parameters, so it will end up being a mess. In the second approach the scales will be different, so a “0.2” in “train_var1” is not the same as a “0.2” in “test_var1”; they represent different numbers behind that scale.

3. We handle the NaNs for “train_var2” by running an IterativeImputer using just “xtrain”, but it turns out that “test_var2” also has NaNs. Would we run an IterativeImputer for this variable using just “xtest” data, which could give a completely different imputed value in “xtest” for exactly the same observation (all the other variables being the same) in “xtrain”?

So for all the other processes I have more or less exactly the same questions. I have seen that somebody asked a similar question, but I couldn’t really understand from that.

Thank you very much again for taking the time to reply to these question, hopefully you can check out mine.

You’re welcome.

You can use any test harness you want. You don’t need my sign-off or permission. But I cannot say that one test harness is better than another – only you know your problem well enough to choose how to evaluate models.

I recommend testing a suite of imputation methods and discovering what works well/best for your dataset and model.

Hi Jason,

Sorry, I wasn’t trying to get your comments on the methods I am using for imputation, scaling or anything else; we can forget about those, they were just there to give a more complete example. It is more about how to stop the data leakage from the train set to the test set, and to show you what I don’t understand when people say that pre-processing should be done on the training and test data separately.

Does that make sense?

The best approach is to define a pipeline with all the operations you want to use.

This will force all data prep and imputation to be based on the training data only, regardless of the test harness used, and in turn, avoid data leakage.
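A minimal sketch of such a pipeline with scikit-learn follows. The synthetic dataset, the injected missing values, and the choice of median imputation, standardization, and logistic regression are all assumptions made for illustration, not a recommendation for any particular problem:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data with roughly 5% of the values knocked out as NaN.
X, y = make_classification(n_samples=200, n_features=5, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.05] = np.nan

# Every step that learns something from data lives inside the pipeline.
pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("model", LogisticRegression()),
])

# For each fold, the whole pipeline is fit on the training part only
# and then applied to the held-out part.
scores = cross_val_score(pipeline, X, y, cv=5)
print(scores.mean())
```

Because the imputer and scaler are steps of the estimator passed to `cross_val_score`, their statistics are re-estimated within every fold rather than once on the full dataset.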

Hi Jason,

Thanks, you are the best, I will look into it! Although I would like to know if the pipeline is using any of the two approaches that I mentioned or a different one, do you have any thoughts on that?

A pipeline will always be fit on the training set only and be applied to the train and test sets, regardless of whether you’re using a train/test split or cross-validation with many folds.
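In terms of the two approaches in the question, this corresponds to the first one: the parameters learned from the training variable are reused on the test variable. A small sketch in plain Python, with made-up values chosen so that the training variable has min=2, max=10 and the test variable has min=1, max=12 as in the question:

```python
# Hypothetical values matching the figures quoted in the question.
train_var1 = [2.0, 3.0, 4.0, 6.0, 10.0]
test_var1 = [1.0, 11.0, 12.0]

# Min-max parameters are learned from the training variable only.
lo, hi = min(train_var1), max(train_var1)

def scale(values):
    """Apply the training min/max to any set of values."""
    return [(v - lo) / (hi - lo) for v in values]

scaled_train = scale(train_var1)
scaled_test = scale(test_var1)

print(scaled_train)
print(scaled_test)
```

Test values outside the training range simply fall outside [0, 1] after scaling. A given scaled value still maps back to the same raw value in both sets, which is what keeps the two sets comparable.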

Hi Jason,

When performing SMOTE for an imbalanced dataset, we have to impute missing values first, as SMOTE is not capable of handling missing values. Hence we are imputing the missing values before performing a train_test split, as SMOTE makes no sense after performing the split. Thus there is data leakage. How can we overcome this data leakage problem while performing SMOTE?

No, you can impute first using the training set only. This is not data leakage.

You can do this in a pipeline if you like, e.g. imputer, then smote, then your model.
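The ordering can be sketched in plain Python as below. Note the assumptions: the toy rows are invented, median imputation is an arbitrary choice, and simple random oversampling of the minority class is used here as a stand-in for SMOTE, only to show where the resampling step sits:

```python
import random
from statistics import median

# Toy rows of (feature, label); None marks a missing value.
train = [(1.0, 0), (2.0, 0), (None, 0), (3.0, 0), (4.0, 0), (10.0, 1), (None, 1)]
test = [(None, 0), (2.5, 0), (9.0, 1)]

# 1. Learn the imputation value from the training rows only.
fill = median(x for x, _ in train if x is not None)

def impute(rows, value):
    """Replace missing features with a value learned elsewhere."""
    return [(value if x is None else x, y) for x, y in rows]

train_imp = impute(train, fill)
test_imp = impute(test, fill)  # same value reused, nothing learned from test

# 2. Balance the classes in the training rows only (stand-in for SMOTE).
rng = random.Random(0)
minority = [r for r in train_imp if r[1] == 1]
majority = [r for r in train_imp if r[1] == 0]
extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
train_bal = train_imp + extra

# 3. A model would now be fit on train_bal and evaluated on test_imp.
print(len(train_bal), len(test_imp))
```

The test rows are imputed with the training statistic and are never resampled, so the evaluation still reflects the original class balance.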

So, what you are saying is that after performing a train_test split we can create a pipeline consisting of an imputer, then SMOTE, etc., fit it on the training data, and predict on the test data?

Yes. Or use k-fold cv.

Make sure to apply the same method to the train and test sets.

Yes.

Great articles and excellent discussions in the posts above.

Part of the confusion I am experiencing (I think) in making sense of the great discussions above seems to emerge from the ambiguity of the phrase “training set” since this is used in multiple ways.

For instance, sometimes it seems that the discussion is about a single split: a “training set” (say, 80%) and a “testing set” (say, 20%).

At other times, k-fold cross validation seems to be the context: an initial split results in a “training set” (say, 80%) and a “testing set” (say, 20%). Then if we use 10-fold cross-validation, the initial “training set” is further divided into “training sets” (10 different combinations of 9 folds) and “validation sets” (10 different single folds).

So, to (hopefully) reduce the confusion, I will use the following terminology (following Max Kuhn) for my question:

Let’s say I initially split a dataset into a “training set” (80%) and a “testing set” (20%). I want to use 10-fold CV. So, I further split the “training set” into 10 equal folds. Let’s call any combination of 9 folds an “analysis set” and any holdout fold an “assessment set.” Let’s also say that I want to impute, balance, encode, scale, etc. (the specific preprocessing is not that important for this example, only that some type of preprocessing is required).

*****Here’s my question (BTW – I am working in R):*****

Does preprocessing (e.g., impute, balance, scale) occur on each “analysis set” (i.e., treat 9 folds combined as a whole set) and then the same preprocessing (e.g., impute, balance, scale) is applied to the corresponding “assessment set” (i.e., the holdout fold)?

SO:

*Preprocess on “analysis set” (the combined data from folds 1-9) and apply same preprocessing to the “assessment set” (data from fold 10).

*Preprocess on “analysis set” (the combined data from folds 1-8,10) and apply same preprocessing to the “assessment set” (data from fold 9).

*Preprocess on “analysis set” (the combined data from folds 1-7, 9-10) and apply same preprocessing to the “assessment set” (data from fold 8).

………….

*Preprocess on “analysis set” (the combined data from folds 2-10) and apply to the “assessment set” (data from fold 1).

Thank you!

Yes, we may create a training set in different ways. Regardless, the same approach to data preparation for avoiding data leakage holds.

Yes, I think what you have described is correct.
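The procedure described in the question can be sketched as a loop. This is an illustration in plain Python rather than R, with made-up data, standardization standing in for the full preprocessing, and the “analysis”/“assessment” names taken from the question:

```python
from statistics import mean, pstdev

# Hypothetical training set of 20 values, split into 10 folds of 2.
data = [float(v) for v in range(1, 21)]
k = 10
folds = [data[i::k] for i in range(k)]

results = []
for i in range(k):
    assessment = folds[i]
    # The analysis set is the other 9 folds combined.
    analysis = [x for j, fold in enumerate(folds) if j != i for x in fold]

    # Preprocessing statistics come from the analysis set only...
    mu, sigma = mean(analysis), pstdev(analysis)
    z_analysis = [(x - mu) / sigma for x in analysis]
    # ...and are then applied unchanged to the held-out assessment set.
    z_assessment = [(x - mu) / sigma for x in assessment]

    # A model would be fit on z_analysis and scored on z_assessment here.
    results.append((mu, sigma, z_assessment))

print([round(r[0], 3) for r in results])
```

The printed means differ slightly from fold to fold, which shows that the preprocessing is re-estimated for every analysis set rather than once for the whole training set.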

Thank you, Jason.

Hi,

Did you post an article of some practice for Data Preparation to avoid Data leakage? If not, could you post one please?

Because usually, data preparation practice articles perform the pre-processing techniques on the entire dataset and the splitting is usually the last step before training and testing the model.

Thank you.

Yes, many. Any tutorial where you’re using a pipeline.

Hi,

I found a nice practice, here is the link

https://machinelearningmastery.com/data-preparation-without-data-leakage/

Thank you.

Excellent, yes!

Hi,

I have been looking for answers to how to properly avoid data leakage for a while now, and maybe you can help me out.

I understand that when applying transformations such as normalization one should fit_transform on the training data and then transform on the test data. However, what about data leakage in stages such as Exploratory Data Analysis and Feature Engineering?

1) Is it correct to design features based on the combined dataset? (train+test)

If yes, wouldn’t that also provide us with information about the testing set that normally we wouldn’t have and thus cause leakage?

For example, when the testing dataset is included, the correlation between certain features is higher than when analyzing the training set alone.

2) Assume we deal with continuous numerical data, e.g. age. One method to deal with it is to preprocess the data by binning it into discrete bins/categories.

Again: do we perform binning on the whole dataset or separately on training and testing data?

If we do perform it separately then it can cause problems when one-hot encoding later on as we will have features in training data that do not exist in testing and vice versa.

3) What about applying log() transform to deal with skewed data? Can it be applied to the whole dataset as well?

I am at the beginning of my ML journey so maybe those questions are trivial but I would appreciate help anyway.

These examples will show you how exactly:

https://machinelearningmastery.com/data-preparation-without-data-leakage/

Probably not, features should be designed based on the training set and the process should be automated.

You can bring in broader domain knowledge, but it may be a risk or it may be fine. You will have to assess.

A log transform can be applied to train and test directly because no coefficient is learned from the data. If you’re using a power transform, it is a different story.
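A small sketch of the difference, using invented values: a log transform gives the same result for a row regardless of which other rows are present, whereas a transform with a learned coefficient (mean-centering is used here as a simple stand-in for any fitted transform) changes when test rows are allowed to influence the fit:

```python
import math
from statistics import mean

# Hypothetical skewed values.
train = [1.0, 10.0, 100.0]
test = [1000.0]

# Log transform: applied row by row, nothing is estimated from the data.
log_separate = [math.log(x) for x in train] + [math.log(x) for x in test]
log_combined = [math.log(x) for x in train + test]
print(log_separate == log_combined)

# Fitted transform: the result for the training rows depends on what
# the coefficient (here, the mean) was estimated from.
centered_train_only = [x - mean(train) for x in train]
centered_with_test = [x - mean(train + test) for x in train]
print(centered_train_only == centered_with_test)
```

The first comparison is true and the second is false, which is why only the fitted transform needs to be restricted to the training set.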

Thank you for sharing your knowledge, always adding excerpts from books and experts, thank you very much!

You are very welcome, Caleb!