Data preparation is difficult because the process is not objective, or at least it does not feel that way. Questions like “what is the best form of the data to describe the problem?” are not objective. You have to think from the perspective of the problem you want to solve and try a few different representations through your pipeline.

Hadley Wickham is an Adjunct Professor at Rice University and Chief Scientist at RStudio, and he is deeply interested in this problem. He has authored some of the most popular R packages for organizing and presenting data, such as reshape, plyr and ggplot2. In his journal article Tidy Data, Wickham presents his take on data cleaning and defines what he means by tidy data.

Let’s get started.

Tidy Data, photo by Andrew King

Data Cleaning

A lot of data analysis time, up to 80%, is spent cleaning and preparing data. Wickham points out that this is not a one-off process: it is iterative, because you understand the problem more deeply on each successive pass. The objective is to structure the data to facilitate the data analysis you set out to perform.

Tidy Data

Wickham’s idea draws on relational databases and database normalization from computer science, although his audience is statisticians and data analysts. He starts by defining terms, suggesting that talking about rows and columns is not rich enough:

- The data is a collection of values of a given type

- Every value belongs to a variable and an observation

- A variable contains all values that measure the same attribute across units

- An observation contains all values measured on the same unit (like an object or an event).

Variables are columns, observations are rows and types of observational units are tables. Wickham relates this to third normal form from relational database theory. He also describes types of variables as fixed and measured and suggests placing fixed variables before measured variables in a table.

- Fixed Variable: a variable that is part of the experimental design and known before the experiment took place (like demographics)

- Measured Variable: a variable that was measured in the study.

The objective of tidy data is to map the meaning of the data (semantics) onto the structure of the data.
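To make this mapping concrete, here is a minimal sketch in plain Python of a small tidy table: one row per observation, one column per variable, with the fixed variables listed before the measured one. The dataset, the column names (`patient`, `treatment`, `result`) and the helper functions are hypothetical and invented for illustration only; they are not taken from Wickham's paper.

```python
# Hypothetical tidy dataset: each dict is one observation (row),
# each key is one variable (column). All values are invented.
FIXED = ["patient", "treatment"]   # known before the experiment
MEASURED = ["result"]              # recorded during the study

observations = [
    {"patient": "A", "treatment": "a", "result": 4},
    {"patient": "A", "treatment": "b", "result": 2},
    {"patient": "B", "treatment": "a", "result": 16},
    {"patient": "B", "treatment": "b", "result": 11},
]


def column(rows, name):
    """Return all values of one variable, i.e. one column."""
    return [row[name] for row in rows]


def is_rectangular(rows, variables):
    """Check that every observation has exactly the expected variables,
    in the expected order (fixed first, then measured)."""
    return all(list(row.keys()) == variables for row in rows)


columns = FIXED + MEASURED
print(columns)
print(column(observations, "result"))
print(is_rectangular(observations, columns))
```

Because the structure mirrors the meaning, pulling out a variable is just reading a column, and checking the layout is a simple comparison against the expected list of variables.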

Worked Examples

Wickham says that real datasets violate the principle of tidy data. He describes 5 common problems:

- Column headers are values, not variable names

- Multiple variables are stored in one column

- Variables are stored in both rows and columns

- Multiple types of observational units are stored in the same table

- A single observational unit is stored in multiple tables
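As a rough illustration of the first problem in this list, the sketch below reshapes a table whose column headers are values into a tidy one, an operation Wickham's paper refers to as melting. The table, its numbers and the `melt` helper are hypothetical and written in plain Python; libraries such as pandas provide a `melt` function for the same job.

```python
# Hypothetical messy table: the column headers "2010" and "2011" are
# values of a "year" variable, not variable names. All numbers are invented.
messy = [
    {"country": "X", "2010": 10, "2011": 12},
    {"country": "Y", "2010": 7, "2011": 9},
]


def melt(rows, id_vars, var_name, value_name):
    """Turn every non-id column into its own (variable, value) row."""
    tidy_rows = []
    for row in rows:
        for key, value in row.items():
            if key in id_vars:
                continue
            # Copy the identifying variables, then add the former
            # column header as a value and the cell as the measurement.
            record = {name: row[name] for name in id_vars}
            record[var_name] = key
            record[value_name] = value
            tidy_rows.append(record)
    return tidy_rows


tidy = melt(messy, id_vars=["country"], var_name="year", value_name="cases")
for record in tidy:
    print(record)
```

Each output row now describes one observation (a country in a year), and `year` has become an ordinary variable that can be filtered or grouped on.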

He then provides worked examples of each of these problems. He presents sample real-world data for each problem and demonstrates the process of fixing it so that it is tidy.

These examples are very instructive and it is very much worth reading the paper for these worked examples alone. He goes on to provide a larger case study with mortality data from Mexico.
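For a flavor of what such a fix can look like, here is a small sketch of the second problem from the list above, multiple variables stored in one column. It assumes a hypothetical `group` column that packs sex and age range into a single string separated by an underscore; the data, the column names and the `split_column` helper are invented for illustration and do not reproduce the paper's datasets.

```python
# Hypothetical rows where the "group" column packs two variables,
# sex and age range, into one string such as "m_0-14". Values are invented.
rows = [
    {"country": "X", "group": "m_0-14", "cases": 3},
    {"country": "X", "group": "f_15-24", "cases": 5},
]


def split_column(rows, source, targets, sep):
    """Replace one combined column with one column per variable."""
    result = []
    for row in rows:
        parts = row[source].split(sep)
        if len(parts) != len(targets):
            raise ValueError(f"cannot split {row[source]!r} into {targets}")
        # Keep every other column, then add the separated variables.
        record = {k: v for k, v in row.items() if k != source}
        record.update(zip(targets, parts))
        result.append(record)
    return result


tidy = split_column(rows, source="group", targets=["sex", "age"], sep="_")
for record in tidy:
    print(record)
```

Raising an error on values that do not split cleanly is a deliberate choice in this sketch: silently guessing would hide exactly the kind of irregularity that data cleaning is meant to surface.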

Tidy Data Tools

It is only after data is tidy that it is useful for data analysis. Tidy data makes it easy to perform the tasks of data analysis with tools that are designed for tidy data:

- Manipulation: Variable manipulation such as aggregation, filtering, reordering, transforming and sorting.

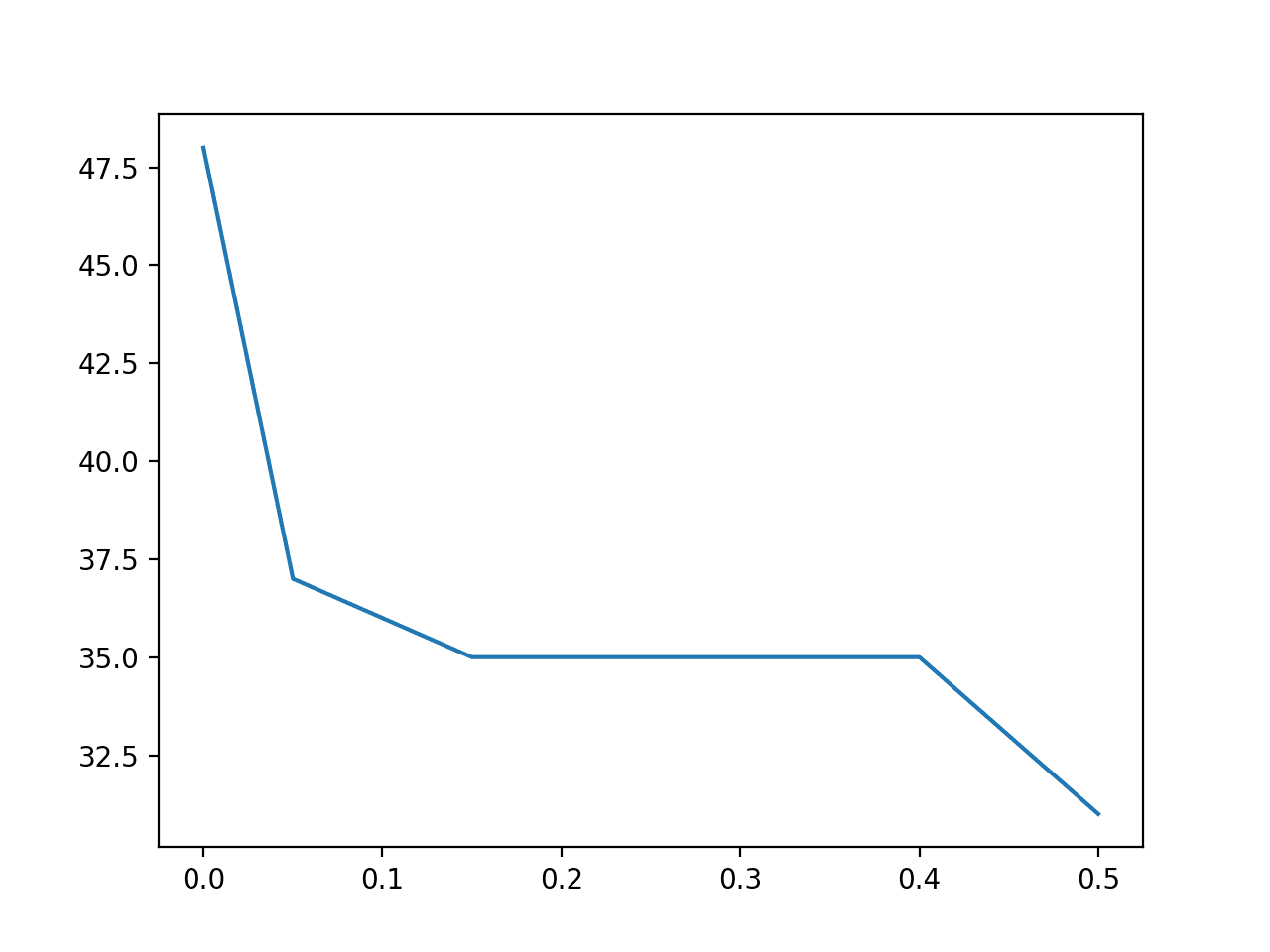

- Visualization: Summarizing data using graphs and charts for exploration and exposition.

- Modeling: This is the driving inspiration for tidy data, modeling is what we set out to do.
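The manipulation tasks above become short, uniform operations once the data is tidy. The following is a minimal sketch in plain Python, assuming a hypothetical tidy table with invented `country`, `year` and `cases` variables; it is not tied to any particular library.

```python
from collections import defaultdict

# Hypothetical tidy table: one row per (country, year) observation.
tidy = [
    {"country": "X", "year": 2010, "cases": 10},
    {"country": "X", "year": 2011, "cases": 12},
    {"country": "Y", "year": 2010, "cases": 7},
    {"country": "Y", "year": 2011, "cases": 9},
]

# Filtering: keep observations that satisfy a condition on a variable.
recent = [row for row in tidy if row["year"] >= 2011]

# Aggregation: total cases per country.
totals = defaultdict(int)
for row in tidy:
    totals[row["country"]] += row["cases"]

# Sorting: order observations by a variable, largest first.
by_cases = sorted(tidy, key=lambda row: row["cases"], reverse=True)

print(recent)
print(dict(totals))
print(by_cases[0])
```

Every operation refers to variables by name and treats rows as observations, which is what allows the same few patterns to be reused across datasets.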

Wickham is careful to point out that tidy data is but one part of the data cleaning process. Other areas outside of tidy data include parsing variable types (dates and numbers), dealing with missing values, character encodings, typos and outliers.
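Two of these other cleaning steps, parsing variable types and handling missing values, can be sketched as follows. The records, the `%Y-%m-%d` date format and the convention that an empty string marks a missing value are all assumptions made for this example.

```python
from datetime import datetime

# Hypothetical raw records as they might arrive from a text file:
# everything is a string, and "" marks a missing value.
raw = [
    {"date": "2011-03-01", "count": "12"},
    {"date": "2011-03-02", "count": ""},
]


def parse_record(record):
    """Parse the date and number fields, mapping empty strings to None."""
    day = datetime.strptime(record["date"], "%Y-%m-%d").date()
    count = int(record["count"]) if record["count"].strip() else None
    return {"date": day, "count": count}


parsed = [parse_record(r) for r in raw]

# Count missing measurements so they can be handled explicitly later.
missing = sum(1 for r in parsed if r["count"] is None)

print(parsed)
print(missing)
```

Representing missing values as `None` rather than leaving empty strings in place keeps them distinct from real measurements such as zero.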

He comments that the work is based on his own experience consulting and teaching, and that experience is considerable given that his R packages are among the most downloaded.

Resources

Wickham appears to have first presented these ideas in 2011. You can watch a talk covering similar ideas, titled Tidy Data, on Vimeo and review the slides (PDF).

Wickham also gave a Google tech talk in 2011 on the same ideas titled Engineering Data Analysis (with R and ggplot2). I also recommend watching this talk. He highlights the importance of domain-specific languages in this work, like ggplot2 (a grammar of graphics) and others. He also highlights the importance of using a programming language for this work (rather than Excel) to get properties such as transparency, reproducibility and automation. The same mortality case study is used.

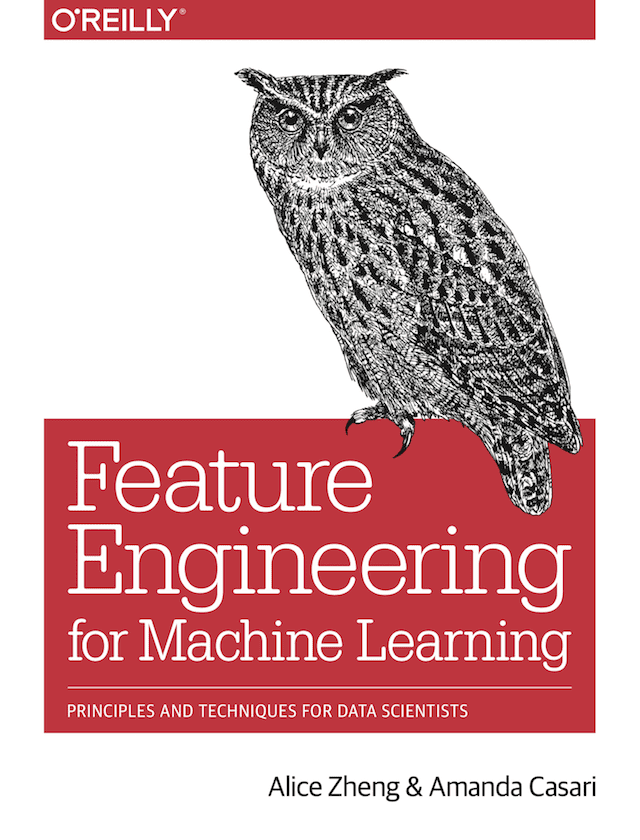

Some good books referenced by Wickham in his paper that you might like to look into include:

- The Relational Model for Database Management: On relational database theory and data normalization.

- Exploratory Data Mining and Data Cleaning: On data cleaning and data preparation best practices.

- The Grammar of Graphics: On the now famous grammar of graphics used in the ggplot charting libraries for R and Python.

- Lattice: Multivariate Data Visualization with R: On the Lattice R package for charting data.
