Many machine learning models have been developed, each with strengths and weaknesses. This catalog is not complete without neural network models. In OpenCV, you can use a neural network model developed using another framework. In this post, you will learn about the workflow of applying a neural network in OpenCV. Specifically, you will learn:

- What kinds of neural network models OpenCV can use

- How to prepare a neural network model for OpenCV


Let’s get started.

Running a Neural Network Model in OpenCV

Photo by Nastya Dulhiier. Some rights reserved.

Overview of Neural Network Models

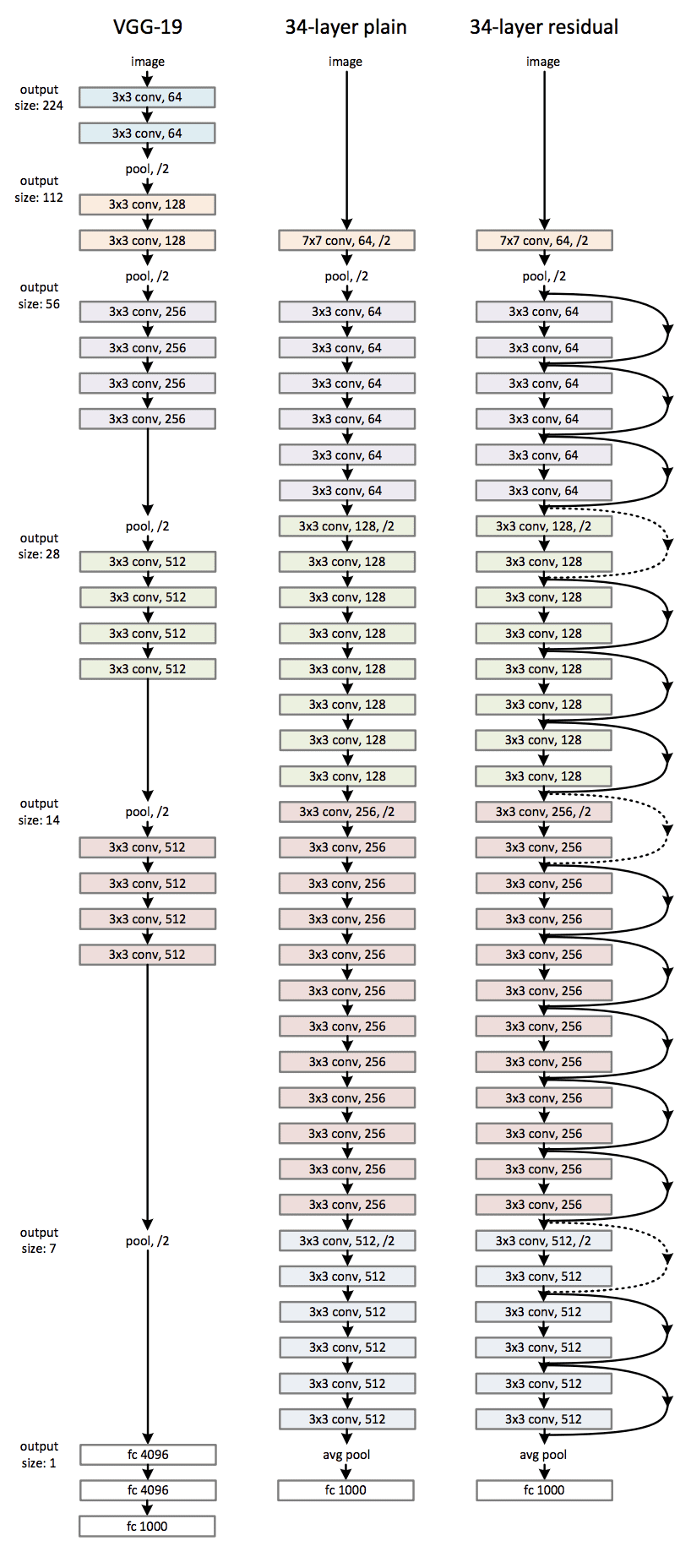

Neural networks are also known as multilayer perceptrons. They are inspired by the structure and function of the human brain. Imagine a web of interconnected nodes, each performing simple calculations on data that passes through it. These nodes, or “perceptrons,” communicate with each other, adjusting their connections based on the information they receive. These perceptrons are organized in a directed graph, and the calculations have a deterministic order from input to output. Their organization is often described in terms of sequential layers. The learning process allows the network to identify patterns and make predictions even with unseen data.
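To make the idea of layered nodes concrete, here is a minimal sketch of a forward pass through two layers using only NumPy. The layer sizes, the random weights, and the helper name `dense_layer` are assumptions made for illustration; a real network would learn its weights from data.

```python
import numpy as np

def dense_layer(x, weights, bias):
    """One layer of perceptrons: weighted sum of the inputs, then a tanh activation."""
    return np.tanh(x @ weights + bias)

# Hypothetical toy network: 3 inputs -> 4 hidden nodes -> 2 outputs
rng = np.random.default_rng(seed=0)
w1, b1 = rng.normal(size=(3, 4)), np.zeros(4)
w2, b2 = rng.normal(size=(4, 2)), np.zeros(2)

x = np.array([0.5, -1.0, 2.0])        # one input sample
hidden = dense_layer(x, w1, b1)       # first layer
output = dense_layer(hidden, w2, b2)  # second layer consumes the first layer's output
print(hidden.shape, output.shape)
```

The data flows strictly from input to output, one layer at a time, which is the deterministic order described above.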

In computer vision, neural networks tackle tasks like image recognition, object detection, and image segmentation. Usually, within the model, three high-level operations are performed:

- Feature extraction: The network receives an image as input. The first layers then analyze the pixels, searching for basic features like edges, curves, and textures. These features are like building blocks, giving the network a rudimentary understanding of the image’s content.

- Feature learning: Deeper layers build upon these features, combining and transforming them to discover higher-level, more complex patterns. This could involve recognizing shapes or objects.

- Output generation: Finally, the last layers of the network use the learned patterns to make their predictions. Depending on the task, it could classify the image (e.g., cat vs. dog) or identify the objects it contains.

These operations are learned rather than crafted. The power of neural networks lies in their flexibility and adaptivity. By fine-tuning the connections between neurons and providing large amounts of labeled data, we can train them to solve complex vision problems with remarkable accuracy. But also because of their flexibility and adaptivity, neural networks are usually not the most efficient model regarding memory and computation complexity.
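As a rough illustration of what an early feature-extraction layer computes, the sketch below slides a hand-crafted vertical-edge (Sobel) kernel over a small synthetic image. The image, the kernel choice, and the helper `filter2d_valid` are assumptions for illustration only; a trained network arrives at its own kernel weights instead of using hand-picked ones.

```python
import numpy as np

def filter2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and sum the elementwise products.

    This is the cross-correlation that convolutional layers compute.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for r in range(out_h):
        for c in range(out_w):
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out

# Synthetic 6x6 image: dark left half, bright right half
image = np.zeros((6, 6), dtype=np.float32)
image[:, 3:] = 1.0

# Hand-crafted vertical-edge kernel (Sobel)
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float32)

response = filter2d_valid(image, sobel_x)
print(response)
```

The response is nonzero only in the columns where the window straddles the dark-to-bright boundary, which is the sense in which a kernel "detects" an edge.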

Training a Neural Network

Because of the nature of the model, training a generic neural network is not trivial. OpenCV's dnn module has no training facility. Therefore, you must train a model using another framework and load it in OpenCV. You may still want to run the model in OpenCV because you are already using it for other image processing tasks and do not want to introduce another dependency to your project, or because OpenCV is a much lighter library.

For example, consider the classic MNIST handwritten digit recognition problem. Let’s use Keras and TensorFlow to build and train the model for simplicity. The dataset can be obtained from TensorFlow.

```python
import matplotlib.pyplot as plt
import numpy as np
from tensorflow.keras.datasets import mnist

# Load MNIST data
(X_train, y_train), (X_test, y_test) = mnist.load_data()
print(X_train.shape)
print(y_train.shape)

# Check visually
fig, ax = plt.subplots(4, 5, sharex=True, sharey=True)
idx = np.random.randint(len(X_train), size=4*5).reshape(4,5)
for i in range(4):
    for j in range(5):
        ax[i][j].imshow(X_train[idx[i][j]], cmap="gray")
plt.show()
```

The two print statements give:

```
(60000, 28, 28)
(60000,)
```

You can see that the dataset provides the digits in 28×28 grayscale format. The training set has 60,000 samples. The code also shows 20 random samples in a 4×5 grid using matplotlib.

Each sample carries a label from 0 to 9, denoting the digit in the image. There are many models you can use for this classification problem. The famous LeNet5 model is one of them. Let’s create one using Keras syntax:

```python
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Conv2D, Dense, AveragePooling2D, Flatten

# LeNet5 model
model = Sequential([
    Conv2D(6, (5,5), input_shape=(28,28,1), padding="same", activation="tanh"),
    AveragePooling2D((2,2), strides=2),
    Conv2D(16, (5,5), activation="tanh"),
    AveragePooling2D((2,2), strides=2),
    Conv2D(120, (5,5), activation="tanh"),
    Flatten(),
    Dense(84, activation="tanh"),
    Dense(10, activation="softmax")
])
model.summary()
```

The last line shows the neural network architecture as follows:

```
Model: "sequential"
________________________________________________________________________________
 Layer (type)                            Output Shape              Param #
================================================================================
 conv2d (Conv2D)                         (None, 28, 28, 6)         156

 average_pooling2d (AveragePooling2D)    (None, 14, 14, 6)         0

 conv2d_1 (Conv2D)                       (None, 10, 10, 16)        2416

 average_pooling2d_1 (AveragePooling2D)  (None, 5, 5, 16)          0

 conv2d_2 (Conv2D)                       (None, 1, 1, 120)         48120

 flatten (Flatten)                       (None, 120)               0

 dense (Dense)                           (None, 84)                10164

 dense_1 (Dense)                         (None, 10)                850

================================================================================
Total params: 61706 (241.04 KB)
Trainable params: 61706 (241.04 KB)
Non-trainable params: 0 (0.00 Byte)
________________________________________________________________________________
```

There are three convolutional layers followed by two dense layers in this network. The final dense layer outputs a 10-element vector of probabilities, one for each of the 10 digits that the input image may correspond to.
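The parameter counts in the summary can be checked by hand. The sketch below assumes the standard formulas: a convolutional filter has one weight per kernel element per input channel plus a bias, and a dense unit has one weight per input feature plus a bias. The helper names are made up for this example.

```python
def conv_params(kernel_h, kernel_w, in_channels, filters):
    # Each filter has kernel_h * kernel_w * in_channels weights plus one bias
    return (kernel_h * kernel_w * in_channels + 1) * filters

def dense_params(in_features, units):
    # Each unit has one weight per input feature plus one bias
    return (in_features + 1) * units

counts = {
    "conv2d": conv_params(5, 5, 1, 6),
    "conv2d_1": conv_params(5, 5, 6, 16),
    "conv2d_2": conv_params(5, 5, 16, 120),
    "dense": dense_params(120, 84),
    "dense_1": dense_params(84, 10),
}
total = sum(counts.values())
print(counts)
print("total:", total)
```

The pooling and flatten layers contribute no parameters, so the five counts add up to the total reported by `model.summary()`.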

Training such a network in Keras is not difficult.

First, you need to reshape each input from 28×28 pixels into a 28×28×1 tensor, because the convolutional layers expect the extra channel dimension. Then, the labels should be converted into one-hot vectors to match the format of the network output.

Then, you can start the training by providing the hyperparameters. The loss function should be categorical cross-entropy because this is a multi-class classification problem. Adam is used as the optimizer since it is a common default choice. During training, you also want to monitor the prediction accuracy. Training should be fast, so let’s run it for up to 100 epochs but stop early if the validation loss has not improved for four consecutive epochs.
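The two data transformations can be previewed without TensorFlow. This is a small sketch using NumPy only, with a made-up stand-in for the MNIST arrays; the identity-matrix trick should give the same values as a one-hot encoding with 10 classes, though that equivalence with `to_categorical` is stated here as an assumption rather than verified.

```python
import numpy as np

# Hypothetical stand-in for MNIST: 3 blank 28x28 images with labels 5, 0, 9
images = np.zeros((3, 28, 28), dtype=np.uint8)
labels = np.array([5, 0, 9])

# Append a channel axis: (n_sample, height, width) -> (n_sample, height, width, 1)
images_4d = np.expand_dims(images, axis=3).astype("float32")

# One-hot encode: row i of the 10x10 identity matrix is the encoding of label i
one_hot = np.eye(10, dtype="float32")[labels]

print(images_4d.shape)
print(one_hot)
```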


The code is as follows:

```python
import numpy as np
from tensorflow.keras.utils import to_categorical
from tensorflow.keras.callbacks import EarlyStopping

# Reshape data to shape of (n_sample, height, width, n_channel)
X_train = np.expand_dims(X_train, axis=3).astype('float32')
X_test = np.expand_dims(X_test, axis=3).astype('float32')
print(X_train.shape)

# One-hot encode the output
y_train = to_categorical(y_train)
y_test = to_categorical(y_test)

model.compile(loss="categorical_crossentropy", optimizer="adam", metrics=["accuracy"])
earlystopping = EarlyStopping(monitor="val_loss", patience=4, restore_best_weights=True)
model.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100, batch_size=32, callbacks=[earlystopping])
```

Running this code prints progress like the following:

```
Epoch 1/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.1567 - accuracy: 0.9528 - val_loss: 0.0795 - val_accuracy: 0.9739
Epoch 2/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0683 - accuracy: 0.9794 - val_loss: 0.0677 - val_accuracy: 0.9791
Epoch 3/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0513 - accuracy: 0.9838 - val_loss: 0.0446 - val_accuracy: 0.9865
Epoch 4/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0416 - accuracy: 0.9869 - val_loss: 0.0438 - val_accuracy: 0.9863
Epoch 5/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0349 - accuracy: 0.9891 - val_loss: 0.0389 - val_accuracy: 0.9869
Epoch 6/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0300 - accuracy: 0.9903 - val_loss: 0.0435 - val_accuracy: 0.9864
Epoch 7/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0259 - accuracy: 0.9914 - val_loss: 0.0469 - val_accuracy: 0.9864
Epoch 8/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0254 - accuracy: 0.9918 - val_loss: 0.0375 - val_accuracy: 0.9891
Epoch 9/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0209 - accuracy: 0.9929 - val_loss: 0.0479 - val_accuracy: 0.9853
Epoch 10/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0178 - accuracy: 0.9942 - val_loss: 0.0396 - val_accuracy: 0.9882
Epoch 11/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0182 - accuracy: 0.9938 - val_loss: 0.0359 - val_accuracy: 0.9891
Epoch 12/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0150 - accuracy: 0.9952 - val_loss: 0.0445 - val_accuracy: 0.9876
Epoch 13/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0146 - accuracy: 0.9950 - val_loss: 0.0427 - val_accuracy: 0.9876
Epoch 14/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0141 - accuracy: 0.9954 - val_loss: 0.0453 - val_accuracy: 0.9871
Epoch 15/100
1875/1875 [==============================] - 7s 4ms/step - loss: 0.0147 - accuracy: 0.9951 - val_loss: 0.0404 - val_accuracy: 0.9890
```

This training stopped at epoch 15 because of the early stopping rule.
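To see why the run ends at epoch 15, the early stopping rule can be replayed on the `val_loss` values from the log above. This is a simplified sketch of a patience-based rule (stop once the monitored loss has failed to improve for `patience` consecutive epochs), not the Keras implementation itself; the function name is made up for this example.

```python
def early_stopping_epoch(val_losses, patience):
    """Return (stop_epoch, best_epoch), both 1-based, for per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = 0
    wait = 0  # number of consecutive epochs without improvement
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch

# val_loss values copied from the training log above
val_losses = [0.0795, 0.0677, 0.0446, 0.0438, 0.0389, 0.0435, 0.0469, 0.0375,
              0.0479, 0.0396, 0.0359, 0.0445, 0.0427, 0.0453, 0.0404]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=4)
print("stopped at epoch", stop_epoch, "with best epoch", best_epoch)
```

The lowest validation loss occurs at epoch 11, and the four epochs after it bring no improvement. With `restore_best_weights=True`, the weights from that best epoch are the ones kept.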

Once you have finished training the model, you can save your Keras model in the HDF5 format, which will include both the model architecture and the layer weights:

```python
model.save("lenet5.h5")
```

The complete code to build, train, and save the model is as follows:

```python
#!/usr/bin/env python

import numpy as np
from tensorflow.keras.datasets import mnist
from tensorflow.keras.callbacks import EarlyStopping
from tensorflow.keras.layers import Conv2D, Dense, AveragePooling2D, Flatten
from tensorflow.keras.models import Sequential
from tensorflow.keras.utils import to_categorical

# Load MNIST data
(X_train, y_train), (X_test, y_test) = mnist.load_data()
print(X_train.shape)
print(y_train.shape)

# LeNet5 model
model = Sequential([
    Conv2D(6, (5,5), input_shape=(28,28,1), padding="same", activation="tanh"),
    AveragePooling2D((2,2), strides=2),
    Conv2D(16, (5,5), activation="tanh"),
    AveragePooling2D((2,2), strides=2),
    Conv2D(120, (5,5), activation="tanh"),
    Flatten(),
    Dense(84, activation="tanh"),
    Dense(10, activation="softmax")
])

# Reshape data to shape of (n_sample, height, width, n_channel)
X_train = np.expand_dims(X_train, axis=3).astype('float32')
X_test = np.expand_dims(X_test, axis=3).astype('float32')

# One-hot encode the output
y_train = to_categorical(y_train)
y_test = to_categorical(y_test)

# Training
model.compile(loss="categorical_crossentropy", optimizer="adam", metrics=["accuracy"])
earlystopping = EarlyStopping(monitor="val_loss", patience=4, restore_best_weights=True)
model.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100, batch_size=32, callbacks=[earlystopping])

model.save("lenet5.h5")
```

Converting the Model for OpenCV

OpenCV supports neural networks in its dnn module. It can consume models saved by several frameworks, including TensorFlow 1.x. But for the Keras model saved above, it is better to first convert it into the ONNX format.

The tool to convert a Keras model (HDF5 format) or generic TensorFlow model (Protocol Buffer format) is the Python module tf2onnx. You can install it in your environment with the following command:

```shell
pip install tf2onnx
```

Afterward, the module provides a conversion command. For example, since you saved a Keras model into HDF5 format, you can use the following command to convert it into an ONNX format:

```shell
python -m tf2onnx.convert --keras lenet5.h5 --output lenet5.onnx
```

Then, a file lenet5.onnx is created.

To use it in OpenCV, you need to load the model into OpenCV as a network object. If it were a TensorFlow Protocol Buffer file, you would use the function cv2.dnn.readNetFromTensorflow('frozen_graph.pb'). In this post, you are using an ONNX file, so the function to use is cv2.dnn.readNetFromONNX('model.onnx').

This model expects its input as a “blob”, and you should invoke the model with the following:

```python
net = cv2.dnn.readNetFromONNX('model.onnx')
blob = cv2.dnn.blobFromImage(numpyarray, scale, size, mean)
net.setInput(blob)
output = net.forward()
```

The blob is also a numpy array, but reformatted into a 4-dimensional layout of (batch, channel, height, width), which adds the batch dimension.
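To get a feel for the blob layout, the sketch below approximates the transformation for a single grayscale image in plain NumPy. It assumes no resizing is needed and that the mean is subtracted before the scale factor is applied; the function `blob_from_gray_image` is a made-up helper for illustration, not part of OpenCV.

```python
import numpy as np

def blob_from_gray_image(image, scale=1.0, mean=0.0):
    """Approximate cv2.dnn.blobFromImage for one grayscale image, without resizing."""
    data = (image.astype(np.float32) - mean) * scale  # subtract the mean, then scale
    return data[np.newaxis, np.newaxis, :, :]          # add batch and channel axes

# Hypothetical 28x28 grayscale image with one bright pixel
image = np.zeros((28, 28), dtype=np.uint8)
image[10, 12] = 255

blob = blob_from_gray_image(image)
print(blob.shape, blob.dtype)
```

With a scale of 1.0 and a mean of 0, the pixel values pass through unchanged; only the data type and the shape differ from the original image.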

Using the model in OpenCV only needs a few lines of code. For example, we get the images again from the TensorFlow dataset and check all test set samples to compute the model accuracy:

```python
import numpy as np
import cv2
from tensorflow.keras.datasets import mnist

# Load the frozen model in OpenCV
net = cv2.dnn.readNetFromONNX('lenet5.onnx')

# Prepare input image
(X_train, y_train), (X_test, y_test) = mnist.load_data()
correct = 0
wrong = 0
for i in range(len(X_test)):
    img = X_test[i]
    label = y_test[i]
    blob = cv2.dnn.blobFromImage(img, 1.0, (28, 28))

    # Run inference
    net.setInput(blob)
    output = net.forward()
    prediction = np.argmax(output)
    if prediction == label:
        correct += 1
    else:
        wrong += 1

print("count of test samples:", len(X_test))
print("accuracy:", (correct/(correct+wrong)))
```

Running a neural network model in OpenCV differs slightly from running it in TensorFlow: you assign the input and get the output in two separate steps.

In the code above, you convert the input into a “blob” with no scaling or shifting since this is how the model was trained. You set a single image as the input, and the output will be a 1×10 array. Since it is a softmax output, you get the model’s prediction using the argmax function. The subsequent calculation of average accuracy over the test set is trivial. The above code prints:
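The last two steps, turning a softmax vector into a digit and computing accuracy, can be tried in isolation. The numbers below are made up for illustration and do not come from the trained model.

```python
import numpy as np

# Hypothetical 1x10 softmax output for a single image (values sum to 1)
output = np.array([[0.01, 0.02, 0.01, 0.05, 0.01, 0.01, 0.01, 0.85, 0.02, 0.01]],
                  dtype=np.float32)
prediction = int(np.argmax(output))  # index of the largest probability
print("predicted digit:", prediction)

# Accuracy is the fraction of predictions that match the labels
predictions = np.array([7, 2, 1, 0, 4])
labels = np.array([7, 2, 1, 0, 9])
accuracy = float(np.mean(predictions == labels))
print("accuracy:", accuracy)
```

Because argmax only looks for the largest entry, the predicted digit would be the same even if the output were unnormalized scores rather than probabilities.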

```
count of test samples: 10000
accuracy: 0.9889
```

Summary

In this post, you learned how to use a neural network in OpenCV via its dnn module. Specifically, you learned:

- How to train a neural network model and convert it to ONNX format for use in OpenCV

- How to load the model in OpenCV and run it
