What is a parametric machine learning algorithm and how is it different from a nonparametric machine learning algorithm?

In this post you will discover the difference between parametric and nonparametric machine learning algorithms.


Let’s get started.

Parametric and Nonparametric Machine Learning Algorithms


Learning a Function

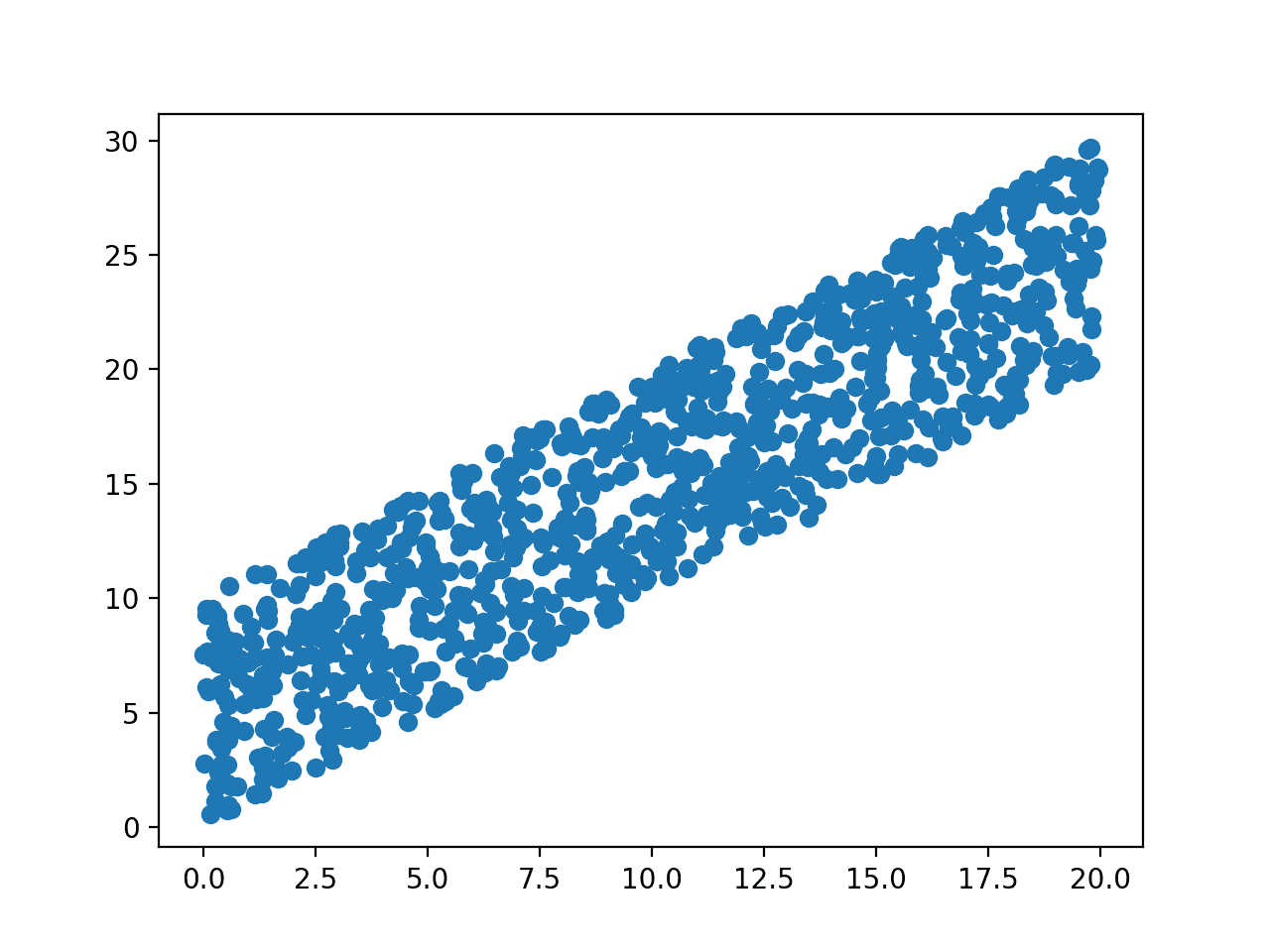

Machine learning can be summarized as learning a function (f) that maps input variables (X) to output variables (Y).

Y = f(X)

An algorithm learns this target mapping function from training data.

The form of the function is unknown, so our job as machine learning practitioners is to evaluate different machine learning algorithms and see which is better at approximating the underlying function.

Different algorithms make different assumptions or biases about the form of the function and how it can be learned.


Parametric Machine Learning Algorithms

Assumptions can greatly simplify the learning process, but can also limit what can be learned. Algorithms that simplify the function to a known form are called parametric machine learning algorithms.

A learning model that summarizes data with a set of parameters of fixed size (independent of the number of training examples) is called a parametric model. No matter how much data you throw at a parametric model, it won’t change its mind about how many parameters it needs.

— Artificial Intelligence: A Modern Approach, page 737

The algorithms involve two steps:

- Select a form for the function.

- Learn the coefficients for the function from the training data.

An easy-to-understand functional form for the mapping function is a line, as is used in linear regression:

b0 + b1*x1 + b2*x2 = 0

Where b0, b1 and b2 are the coefficients of the line that control the intercept and slope, and x1 and x2 are two input variables.

Assuming the functional form of a line greatly simplifies the learning process. Now, all we need to do is estimate the coefficients of the line equation and we have a predictive model for the problem.
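The two steps can be sketched in a few lines of Python. This is a minimal illustration, not the post's own code: it assumes a single input variable (so the line is y = b0 + b1*x), uses ordinary least squares to estimate the coefficients, and the training data is made up.

```python
def fit_line(xs, ys):
    """Estimate intercept b0 and slope b1 by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b1 = sxy / sxx
    b0 = mean_y - b1 * mean_x
    return b0, b1


def predict(coefficients, x):
    """Apply the learned line to a new input."""
    b0, b1 = coefficients
    return b0 + b1 * x


# Made-up training data generated from y = 1 + 2x (no noise).
small = fit_line([0, 1, 2], [1, 3, 5])
large = fit_line(list(range(100)), [1 + 2 * x for x in range(100)])

# Both models are summarized by exactly two numbers,
# no matter how many training examples were used.
print(len(small), len(large))
print(predict(small, 10))
```

Whether the model sees 3 rows or 100, it is still described by the same two coefficients, which is what makes it parametric.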

Often the assumed functional form is a linear combination of the input variables, and as such parametric machine learning algorithms are often also called “linear machine learning algorithms”.

The problem is, the actual unknown underlying function may not be a linear function like a line. It could be almost a line and require some minor transformation of the input data to work right. Or it could be nothing like a line in which case the assumption is wrong and the approach will produce poor results.
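A small sketch of what a wrong assumption looks like in practice. The data here is invented (y = x² on a symmetric range), and squaring the input is just one illustrative transformation: fitting a line to the raw input gives a flat, poor fit, while fitting the same line to the transformed input fits exactly.

```python
def fit_line(xs, ys):
    """Least-squares intercept and slope for a single input variable."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b1 = sxy / sxx
    return mean_y - b1 * mean_x, b1


def mean_squared_error(xs, ys, b0, b1):
    """Average squared difference between the line's predictions and ys."""
    return sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Made-up data where the true relationship is a curve, not a line.
xs = [-3, -2, -1, 0, 1, 2, 3]
ys = [x ** 2 for x in xs]

# Assumption "y is a line in x": the best line is flat and fits poorly.
b0, b1 = fit_line(xs, ys)
raw_error = mean_squared_error(xs, ys, b0, b1)

# Same algorithm after transforming the input to z = x^2.
zs = [x ** 2 for x in xs]
c0, c1 = fit_line(zs, ys)
transformed_error = mean_squared_error(zs, ys, c0, c1)

print(raw_error, transformed_error)
```

The learning algorithm is identical in both cases; only the match between the assumed form and the data changes.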

Some more examples of parametric machine learning algorithms include:

- Logistic Regression

- Linear Discriminant Analysis

- Perceptron

- Naive Bayes

- Simple Neural Networks

Benefits of Parametric Machine Learning Algorithms:

- Simpler: These methods are easier to understand, and their results are easier to interpret.

- Speed: Parametric models are very fast to learn from data.

- Less Data: They do not require as much training data and can work well even if the fit to the data is not perfect.

Limitations of Parametric Machine Learning Algorithms:

- Constrained: By choosing a functional form these methods are highly constrained to the specified form.

- Limited Complexity: The methods are more suited to simpler problems.

- Poor Fit: In practice the methods are unlikely to match the underlying mapping function.

Nonparametric Machine Learning Algorithms

Algorithms that do not make strong assumptions about the form of the mapping function are called nonparametric machine learning algorithms. By not making assumptions, they are free to learn any functional form from the training data.

Nonparametric methods are good when you have a lot of data and no prior knowledge, and when you don’t want to worry too much about choosing just the right features.

— Artificial Intelligence: A Modern Approach, page 757

Nonparametric methods seek to best fit the training data in constructing the mapping function, whilst maintaining some ability to generalize to unseen data. As such, they are able to fit a large number of functional forms.

An easy-to-understand nonparametric model is the k-nearest neighbors algorithm, which makes predictions for a new data instance based on the k most similar training patterns. The method does not assume anything about the form of the mapping function, other than that patterns that are close together are likely to have a similar output variable.
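A minimal sketch of k-nearest neighbors for classification, assuming Euclidean distance as the similarity measure and a simple majority vote; the training rows and labels are made up for illustration.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training rows.

    `train` is a list of (features, label) pairs.
    """
    # Sort all training rows by Euclidean distance to the query.
    by_distance = sorted(train, key=lambda row: math.dist(row[0], query))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Made-up training data: two well-separated groups.
train = [
    ((1.0, 1.0), "a"), ((1.5, 2.0), "a"), ((2.0, 1.5), "a"),
    ((6.0, 6.0), "b"), ((6.5, 7.0), "b"), ((7.0, 6.5), "b"),
]

print(knn_predict(train, (1.2, 1.4)))
print(knn_predict(train, (6.8, 6.2)))
```

Note that there is no equation being fitted: the "model" is the training data itself, so it grows with every row added.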

Some more examples of popular nonparametric machine learning algorithms are:

- k-Nearest Neighbors

- Decision Trees like CART and C4.5

- Support Vector Machines

Benefits of Nonparametric Machine Learning Algorithms:

- Flexibility: Capable of fitting a large number of functional forms.

- Power: No assumptions (or weak assumptions) about the underlying function.

- Performance: Can result in higher performance models for prediction.

Limitations of Nonparametric Machine Learning Algorithms:

- More data: Require a lot more training data to estimate the mapping function.

- Slower: A lot slower to train as they often have far more parameters to train.

- Overfitting: More of a risk to overfit the training data and it is harder to explain why specific predictions are made.

Further Reading

This section lists some resources if you are looking to learn more about the difference between parametric and non-parametric machine learning algorithms.

Books

- An Introduction to Statistical Learning: with Applications in R, chapter 2

- Artificial Intelligence: A Modern Approach, chapter 18

Posts

- What are the advantages of using non-parametric methods in machine learning? on Quora

- What are the disadvantages of non-parametric methods in machine learning? on Quora

- Nonparametric statistics on Wikipedia

- Parametric statistics on Wikipedia

- Parametric vs. Nonparametric on Stack Exchange

Summary

In this post you have discovered the difference between parametric and nonparametric machine learning algorithms.

You learned that parametric methods make large assumptions about the mapping of the input variables to the output variable and in turn are faster to train, require less data but may not be as powerful.

You also learned that nonparametric methods make few or no assumptions about the target function and in turn require a lot more data, are slower to train and have a higher model complexity but can result in more powerful models.

If you have any questions about parametric or nonparametric machine learning algorithms or this post, leave a comment and I will do my best to answer them.

Update: I originally had some algorithms listed under the wrong sections like neural nets and naive bayes, which made things confusing. All fixed now.

hi jason

thanks for taking your time to summarize these topics so that even a novice like me can understand. love your posts

i have a problem with this article though. according to the small amount of knowledge i have on parametric/non parametric models, non parametric models are models that need to keep the whole data set around to make future predictions. and it looks like Artificial Intelligence: A Modern Approach, chapter 18 agrees with me on this, stating that neural nets are parametric and once the weights w are learnt we can get rid of the training set. i would say it's the same case with trees/naive bayes as well.

so what was your thinking behind categorizing these methods as non-parametric?

thanks,

a confused beginner

Indeed simple multilayer perceptron neural nets are parametric models.

Non-parametric models do not need to keep the whole dataset around, but one example of a non-parametric algorithm is kNN that does keep the whole dataset. Instead, non-parametric models can vary the number of parameters, like the number of nodes in a decision tree or the number of support vectors, etc.

Isn’t the number of nodes in the decision tree a hyperparameter?

One more question: how do you deploy nonparametric machine learning models in production, as their parameters are not fixed?

No, but the max depth of the tree is.

You can finalize your model, save it to file and load it later to make predictions on new data.

See this post:

https://machinelearningmastery.com/train-final-machine-learning-model/

Does that help?
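As a rough sketch of the save-and-load step described in this reply: once training is finished, a nonparametric model's learned state is fixed like any other and can be serialized. The example below assumes a toy kNN "model" (its k plus the stored rows), Python's built-in pickle module, and a temporary file; the data and file name are made up.

```python
import math
import os
import pickle
import tempfile
from collections import Counter


def knn_predict(model, query):
    """Majority vote among the k stored rows closest to `query`."""
    rows = sorted(model["rows"], key=lambda row: math.dist(row[0], query))
    votes = Counter(label for _, label in rows[: model["k"]])
    return votes.most_common(1)[0][0]


# A "finalized" kNN model: the hyperparameter k plus the stored rows.
# The rows are made-up illustration data.
final_model = {
    "k": 3,
    "rows": [
        ((1.0, 1.0), "a"), ((1.5, 2.0), "a"), ((2.0, 1.5), "a"),
        ((6.0, 6.0), "b"), ((6.5, 7.0), "b"), ((7.0, 6.5), "b"),
    ],
}

# Save to a file (a temporary directory here; the file name is arbitrary).
path = os.path.join(tempfile.mkdtemp(), "final_model.pkl")
with open(path, "wb") as handle:
    pickle.dump(final_model, handle)

# Later, possibly in another process: load and predict on new data.
with open(path, "rb") as handle:
    loaded_model = pickle.load(handle)

prediction = knn_predict(loaded_model, (6.2, 6.4))
print(prediction)
```

Only load pickle files you created yourself or otherwise trust, since unpickling can execute arbitrary code.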

Excellent… Master class types

I am also interested to know why Naive Bayes is categorized as non-parametric.

Yes, Naive bayes is generally a parametric method as we choose a distribution (Gaussian) for the input variables, although there are non-parametric formulations of the method that use a kernel estimator. In fact, these may be more common in practice.

Confused here too.

AFAIK, parametric models have fixed parameter set, i.e. the amount of parameters won’t change once you have designed the model, whereas the amount of parameters of non-parametric models varies, for example, Gaussian Process and matrix factorization for collaborative filtering etc.

Correct me if I’m wrong 🙂

This is correct.

This is the single useful explanation. Thanks.

I think the classification does not really depend on what ‘parameters’ are. It’s about the assumptions you make when you try to construct a model or function. Parametric models usually have a probability model (i.e. a pdf) behind them to support the function-finding process, such as a normal distribution or another distribution model.

On the other hand, non-parametric model just depends on the error minimisation search process to identify the set of ‘parameters’ which has nothing to do with a pdf.

So, parameters are still there for both parametric and non-parametric ML algo. It just doesn’t have additional layer of assumption to govern the nature of pdf of which the ML algo tries to determine.

Hi Simon, the statistical definition of parametric and non-parametric does not agree with you.

The crux of the definition is whether the number of parameters is fixed or not.

It might be more helpful for us to consider linear and non-linear methods instead…

Is there a relation between parametric/nonparametric models and lazy/eager learning?

In the machine learning literature, nonparametric methods are also called instance-based or memory-based learning algorithms.

- They store the training instances in a lookup table and interpolate from these for prediction.

- They are lazy learning algorithms, as opposed to the eager parametric methods, which have a simple model and a small number of parameters; once the parameters are learned, we no longer keep the training set.

I have questions about distinguishing between parametric and nonparametric algorithms: 1) for linear regression, we can also introduce x^2, x^3 … to make the boundary we learn nonlinear. Does that mean it becomes nonparametric in this case?

2) The main difference between the perceptron and SVM is that SVM puts additional constraints on how we select the hyperplane. Why is the perceptron considered parametric while SVM is not?

Hi Jianye,

When it comes down to it, parametric means a fixed number of model parameters to define the modeled decision.

Adding more inputs makes the linear regression equation still parametric.

SVM can choose the number of support vectors based on the data and hyperparameter tuning, making it non-parametric.

I hope that is clearer.
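To make the point about added inputs concrete, here is a small sketch: polynomial terms are treated as extra input columns, and the fit is done by least squares via the normal equations. The helper names, the Gaussian-elimination solver, and the data are all assumptions made for illustration. The learned curve is nonlinear in x, yet the number of coefficients is set by the chosen degree, not by the amount of data.

```python
def expand(x, degree):
    """Map a scalar x to the feature vector [1, x, x^2, ..., x^degree]."""
    return [x ** d for d in range(degree + 1)]


def solve(a, b):
    """Solve the linear system a w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        rest = sum(m[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (m[r][n] - rest) / m[r][r]
    return w


def fit_polynomial(xs, ys, degree):
    """Least squares via the normal equations (F^T F) w = F^T y."""
    rows = [expand(x, degree) for x in xs]
    k = degree + 1
    ftf = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    fty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(k)]
    return solve(ftf, fty)


def curve(x):
    """Made-up target function used to generate training data."""
    return 1 + 2 * x - 3 * x ** 2


few_x = [-2, -1, 0, 1, 2]
many_x = [i / 10 for i in range(-30, 31)]

few = fit_polynomial(few_x, [curve(x) for x in few_x], degree=2)
many = fit_polynomial(many_x, [curve(x) for x in many_x], degree=2)

# Three coefficients either way: the model is still parametric.
print(len(few), len(many))
```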

Hi Jason,

Nice content here. Had some suggestions,

1. Do you think it would be a good idea to include the histogram as a simple non-parametric model for estimating a probability distribution? Some beginners might be able to relate to histograms.

2. Also, maybe mention SVM (RBF kernel) as non-parametric, to be precise.

What do you think ?

Hi Pramit,

1. nice suggestion.

2. perhaps, there is debate about where SVM sits. I do think it is nonparametric as the number of support vectors is chosen based on the data and the interaction with the argument-defined margin.

Jason, as always, an excellent post.

Thanks Manish.

jason, it is a good post about parametric and non parametric models

but i am still confused

is deep learning supposed to be parametric or non parametric, and why

Best Regards

There is not a hard line between parametric and non-parametric.

I think of neural nets as non-parametric myself.

See this:

https://www.quora.com/Are-Neural-Networks-parametric-or-non-parametric-models

Hello Jason, thanks for discussing this topic. Your consideration of NNs as non-parametric doesn’t make sense to me, given your post and suggestions above. Please [read here](https://stats.stackexchange.com/questions/322049/are-deep-learning-models-parametric-or-non-parametric) and [here](https://www.reddit.com/r/MachineLearning/comments/kvkud/is_artificial_neural_network_a_parametric_or/c2noexo) (r/MachineLearning: “Is artificial neural network a parametric or non-parametric method?”) for more clarity, and correct me where I am wrong.

Perhaps you can summarize the links for me?

Hi

The answer is very convincing, i just have a small question, for pressure distribution plots which ML algorithm should we consider?

Sorry, I don’t know what pressure distribution plots are.

Hi Jason,

A decision tree contains parameters like Splitting Criteria, Minimal Size, Minimal Leaf Size, Minimal Gain and Maximal Depth, so why is it called non-parametric? Please throw some light on it.

They are considered hyperparameters of the model.

The chosen split points are the parameters of the model and their number can vary based on specific data. Thus, the decision tree is a nonparametric algorithm.

Does that make sense?
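A toy illustration of split points varying with the data. This is a deliberately simplified one-dimensional regression tree, not CART as implemented in any library: it splits greedily on squared error and keeps growing until every leaf is pure, with no depth limit or other hyperparameters. The two datasets are made up.

```python
def sse(values):
    """Sum of squared errors around the mean."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def grow_tree(points):
    """Grow a 1-D regression tree from (x, y) pairs sorted by x.

    Returns a dict for a split node, or a float prediction for a leaf.
    """
    ys = [y for _, y in points]
    mean = sum(ys) / len(ys)
    if len(points) < 2 or sse(ys) == 0:
        return mean  # leaf: nothing left to explain
    best_cost, best_i = None, None
    for i in range(1, len(points)):
        if points[i - 1][0] == points[i][0]:
            continue  # cannot split between identical x values
        cost = sse(ys[:i]) + sse(ys[i:])
        if best_cost is None or cost < best_cost:
            best_cost, best_i = cost, i
    if best_i is None:
        return mean
    threshold = (points[best_i - 1][0] + points[best_i][0]) / 2
    return {
        "threshold": threshold,
        "left": grow_tree(points[:best_i]),
        "right": grow_tree(points[best_i:]),
    }


def count_splits(node):
    """Number of learned split points (the model's parameters)."""
    if not isinstance(node, dict):
        return 0
    return 1 + count_splits(node["left"]) + count_splits(node["right"])


# Made-up data: a single step, and a staircase with four levels.
one_step = [(x, 0.0 if x < 5 else 1.0) for x in range(10)]
staircase = [(x, float(x // 5)) for x in range(20)]

print(count_splits(grow_tree(one_step)))
print(count_splits(grow_tree(staircase)))
```

The same algorithm with the same settings learns a different number of split points for each dataset, which is the sense in which the tree is nonparametric.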

Could you please briefly tell me what are the parameters and hyperparameters in the following models:

1.Naive Bayes

2.KNN

3.Decision Tree

4.Multiple Regression

5.Logistic Regression

Yes, please search the blog for posts on each of these algorithms.

Hi Jason! Nice blog.

I have a doubt about the “simple neural networks”, shouldn’t it be “neural networks” in general? The number of parameters is determined a priori.

In addition, I think that linear SVM might be considered a parametric model because, although the number of support vectors varies with the data, the final decision boundary can be expressed with a fixed number of parameters.

I know the distinction between parametric and non-parametric is a little bit ambiguous, but what I said makes sense, right?

Up to this question! I have the same doubt about linear SVM.

Saludos!

Hi Jason, I want to know: despite not requiring much data to train, can parametric algorithms also overfit? Or can they lead to underfitting instead?

Both types of algorithms can over and under fit data.

It is more common for parametric methods to underfit and for non-parametric methods to overfit.

Hi Jason, thanks for your help but there is a request by my side to also look question posted above my question because it is a nice question about distinction between parametric vs non-parametric and I am very curious to know your opinion about this question posted by Guiferviz on november 3, 2017. Please answer to this question…….

Hi Jason, you mention that simple multilayer perceptron neural nets are parametric models. This I understand, but which neural networks are then non-parametric? I assume e.g. that neural nets with dropouts are non-parametric?

Perhaps. Categorizing algorithms gets messy.

If we are doing regression with decision trees, do we need to check for correlation among the features?

When we talk about nonparametric or parametric, are we talking about the method (like CART) or about the data?

And if my data are not normally distributed, do I have to transform them to make them normally distributed if I want to use a parametric or nonparametric method?

It is a good idea to make the problem as simple as possible for the model.

Nonlinear methods do not require data with a Normal distribution.

Hi Jason,

Good post.Could u pls explain parametric and non parametric methods by an example?

Bit confused about the parameters(what are the parameters,model parameters).For example,in the script the X and y values are the parameters?

Great question, this post will help:

https://machinelearningmastery.com/difference-between-a-parameter-and-a-hyperparameter/

Hi Jason

Can you post or let me know about parameter tuning.

Yes, I have many posts, try a search for “grid search”

Hi Jason, Can you throw some light on Semi Parametric Models and examples of them?

I’ve not heard of them before.

Do you have an example?

Hi Jason,

If you may just use K-NN, naive bayes, simple perceptron and multilayer perceptron for building a real time prediction system in a web based application, which algorithm you use for classification and why ? Can you please tell me algorithm’s advantages and disadvantages for this situation ?

Thank you.

I would test each and use the one that gave the best performance.

Hi Jason,

Nice summary and clear examples.

But I have one problem with understanding…

Why does the division of models into parametric and non-parametric take only as a criterion whether the number of parameters is fixed and whether we have assumptions of the function?

Shouldn’t there be a criterion for whether the distribution of attributes is known?

I know that it is possible if we know the parameters, say the mean and the variance in normal distribution that we can fully determine it with those parameters.

But here we have an example that hypothesis (b) that it has a given mean but unspecified variance is a parametric hypothesis

https://en.wikipedia.org/wiki/Nonparametric_statistics

Does the division of models into parametric and nonparametric differ from the division of statistical methods into parametric and nonparametric?

Is it an argument that these are different things, models serve for prediction, while methods serve for hypothesis testing? For some it is necessary to know the distribution, while for others it is not?

Isn’t it an advantage to have more information about the distribution shape, do some models imply a certain distribution (e.g. normal) or do they simply give better results with a certain distribution?

I know that not all distributions can be converted to a normal distribution without losing the essential distance between the points. Does it make sense to pre-process with logarithmic transformation all numerical attributes (try to convert to normal) to improve model performance?

Here are some discussion about that topic

https://www.kaggle.com/getting-started/47679

Thanks.

It is just one approach to thinking about the different types of algorithms, not the only approach.

Yes, it is related – e.g. do we know and specify the functional form of the relationship or not.

Thanks Jason for your great site .

I appreciate your vast experience and insights and for that reason I feel confident to ask you a machine learning question.

I want to determine the remaining useful life of a railway wagon based on 9 measured parameters (e.g. Hollow wear, tread wear, etc) for every wheel (8 wheels). I do not have any labeled data and therefore I know that it is an unsupervised learning problem. A regression is not possible to predict the time required. I tried k-means, where k=5 (optimal k according to elbow method) but cant make sense of the result. Do you have any suggestions of what algorithm I can use for this situation?

Thanks!

This may help you define your problem:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Perhaps look into using survival analysis or hazard model:

https://en.wikipedia.org/wiki/Survival_analysis

It’s good to read this. But I have a question regarding parametric methods.

How parametric methods are exceptional in certain cases in machine learning?

May i know the answer with example?

TIA

Good question, parametric methods are excellent when they fit the problem you are solving well. They can be the most efficient and most effective.

You rocked man, I was confused but because of you I am jem clear now most probably.

Thanks again..

You’re welcome!

Hi Jason, great article explaining the subject in a short, clear summary. But from my understanding of these algorithms, and particularly KNN, I have a different opinion, which might be wrong, about the benefits and limitations as presented in this article, if the three cited examples are all nonparametric (and they are). The performance benefit does not apply to all three examples: KNN can be very slow in prediction, and the more data, the slower it gets, because it needs to compute the distance to each data sample and then sort them. Conversely, the slow-training limitation does not apply to KNN, as KNN is super fast in training (in fact it takes no time because it does not need to train anything). I am very interested to know your feedback on this.

Yes, KNN is fast during training and relatively slow during inference/prediction.
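One way to see this trade-off without timing anything is to count distance computations. The sketch below uses a hypothetical `CountingKNN` class written for this illustration (it is not from any library), with made-up one-dimensional data: fitting only stores the rows, while each prediction touches every stored row.

```python
import math
from collections import Counter


class CountingKNN:
    """Toy kNN classifier that counts how many distances it computes."""

    def __init__(self, k=1):
        self.k = k
        self.rows = []
        self.distance_calls = 0

    def fit(self, rows):
        # "Training" only stores the data; no distances are computed.
        self.rows = list(rows)
        return self

    def predict(self, query):
        scored = []
        for features, label in self.rows:
            self.distance_calls += 1
            scored.append((math.dist(features, query), label))
        scored.sort(key=lambda pair: pair[0])
        votes = Counter(label for _, label in scored[: self.k])
        return votes.most_common(1)[0][0]


def make_rows(n):
    """Made-up 1-D data: the label is the parity of the row index."""
    return [((float(i),), i % 2) for i in range(n)]


small = CountingKNN().fit(make_rows(10))
large = CountingKNN().fit(make_rows(1000))
calls_after_fit = (small.distance_calls, large.distance_calls)

small.predict((3.2,))
large.predict((3.2,))
calls_after_predict = (small.distance_calls, large.distance_calls)

print(calls_after_fit, calls_after_predict)
```

The work per prediction grows in step with the number of stored rows, whereas fitting does no distance work at all.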

Hi Jason,

From my reading, Perceptron seems to be non-parametric instead of parametric..

Thanks.

hi jason,

Can you explain these lines which you have stated above in more simpler way,

“The problem is, the actual unknown underlying function may not be a linear function like a line. It could be almost a line and require some minor transformation of the input data to work right. Or it could be nothing like a line in which case the assumption is wrong and the approach will produce poor results.”

Hello…Please explain what part is not clear to you so that I may better help you.

Hello,

Why isn’t kernel density estimation (KDE) mentioned for nonparametric estimation? Is it considered part of SVM?

Best,

Hi Elie…You may find the following of interest:

https://machinelearningmastery.com/probability-density-estimation/

why can’t I download the photo of algorithms?

Hi Elham…Please elaborate on what you are trying to do and what you are experiencing?