Engineers all over the globe get instant headaches and feel seriously unwell when they hear the phrase “Data is the new oil.” Well, if it is, then why don’t we just go to the nearest data pump and fill up our tanks for a nice, long ride down machine learning valley?

It’s just not that easy. Data is messy. Data needs to be cleaned, transformed and anonymized, and, most importantly, data needs to be available. All in all, getting a steady flow of compliant, ready-to-use data out of that data oil well is pretty tricky.

Synthetic oil, or rather synthetic data, to the rescue! But what is synthetic data today? AI-generated synthetic data is set to become the standard data alternative for building AI and machine learning models. Originally a privacy-enhancing technology for anonymizing data without losing intelligence, synthetic data is expected to replace or complement original data in AI and machine learning projects. Synthetic data generators can open the taps on the proverbial data well and allow engineers to inject new domain knowledge into their models.

Synthetic data companies like MOSTLY AI offer state-of-the-art generative AI for data. Choosing the right platform or opting for open-source synthetic data must be a hands-on process with plenty of experimentation. To get the most out of this new technology, keep in mind a few principles of synthetic data generation:

- You need a large enough data sample.

Your data sample, or seed data, used to train the synthetic data generator should contain at least 1,000 data subjects, give or take, depending on your specific dataset. Even if you have fewer, give it a try: MOSTLY AI’s synthetic data generator has automated privacy checks, so you won’t end up with low-quality data or a privacy leak.

- Separate your static data (describing subjects) from your dynamic data (describing events) by putting them into separate tables. If your dataset doesn’t contain any time-series data, use only one table for synthesization.

- If you want to synthesize time-series data with a two-table setup, make sure the tables refer to each other through keys: a primary key in the subject table and a matching foreign key in the event table.

- Choose the right synthetic data generator. MOSTLY AI’s free synthetic data generator comes with built-in quality checks and allows you to assess the accuracy and privacy of your synthetic data closely.
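The two-table setup from the list above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not MOSTLY AI’s own preprocessing: it assumes a hypothetical flat export in which every row mixes subject attributes (`customer_id`, `age`) with event attributes (`event_date`, `amount`), and it treats the rough 1,000-subject guideline from this post as a soft warning rather than a hard rule.

```python
# Hypothetical column names, chosen for illustration only.
SUBJECT_KEY = "customer_id"
SUBJECT_COLUMNS = ("customer_id", "age")
EVENT_COLUMNS = ("event_date", "amount")
MIN_SUBJECTS = 1000  # rough guideline from the post, "give or take"


def split_static_and_dynamic(flat_rows):
    """Split flat rows into a subject table (one row per subject) and an
    event table whose rows carry the subject key as a foreign key."""
    subjects = {}  # subject key -> static attributes (insertion-ordered)
    events = []
    for row in flat_rows:
        key = row[SUBJECT_KEY]
        static = {col: row[col] for col in SUBJECT_COLUMNS}
        # Static attributes must not change between a subject's events.
        if key in subjects and subjects[key] != static:
            raise ValueError(f"conflicting static attributes for subject {key!r}")
        subjects.setdefault(key, static)
        # Dynamic attributes: one event row per input row, linked by the key.
        event = {SUBJECT_KEY: key}
        event.update({col: row[col] for col in EVENT_COLUMNS})
        events.append(event)
    return list(subjects.values()), events


def check_setup(subjects, events):
    """Return human-readable warnings about a two-table setup."""
    warnings = []
    if len(subjects) < MIN_SUBJECTS:
        warnings.append(
            f"only {len(subjects)} subjects; the post suggests roughly {MIN_SUBJECTS}"
        )
    known = {s[SUBJECT_KEY] for s in subjects}
    orphans = [e for e in events if e[SUBJECT_KEY] not in known]
    if orphans:
        warnings.append(f"{len(orphans)} events reference unknown subjects")
    return warnings


if __name__ == "__main__":
    flat = [
        {"customer_id": 1, "age": 34, "event_date": "2023-01-05", "amount": 12.5},
        {"customer_id": 1, "age": 34, "event_date": "2023-02-11", "amount": 40.0},
        {"customer_id": 2, "age": 51, "event_date": "2023-01-20", "amount": 7.25},
    ]
    subjects, events = split_static_and_dynamic(flat)
    print(subjects)
    print(events)
    print(check_setup(subjects, events))
```

The orphan check never fires on tables produced by `split_static_and_dynamic` itself; it is there for the more common case where the subject and event tables are exported separately and the foreign keys may have drifted.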

Performance boost for machine learning

Many people have tried and failed to build synthetic data themselves. The accuracy and privacy of the resulting datasets can vary considerably, and without automated privacy checks, you could end up with a dataset that leaks private information. But that’s not everything. The synthetic data use case for machine learning goes well beyond privacy.

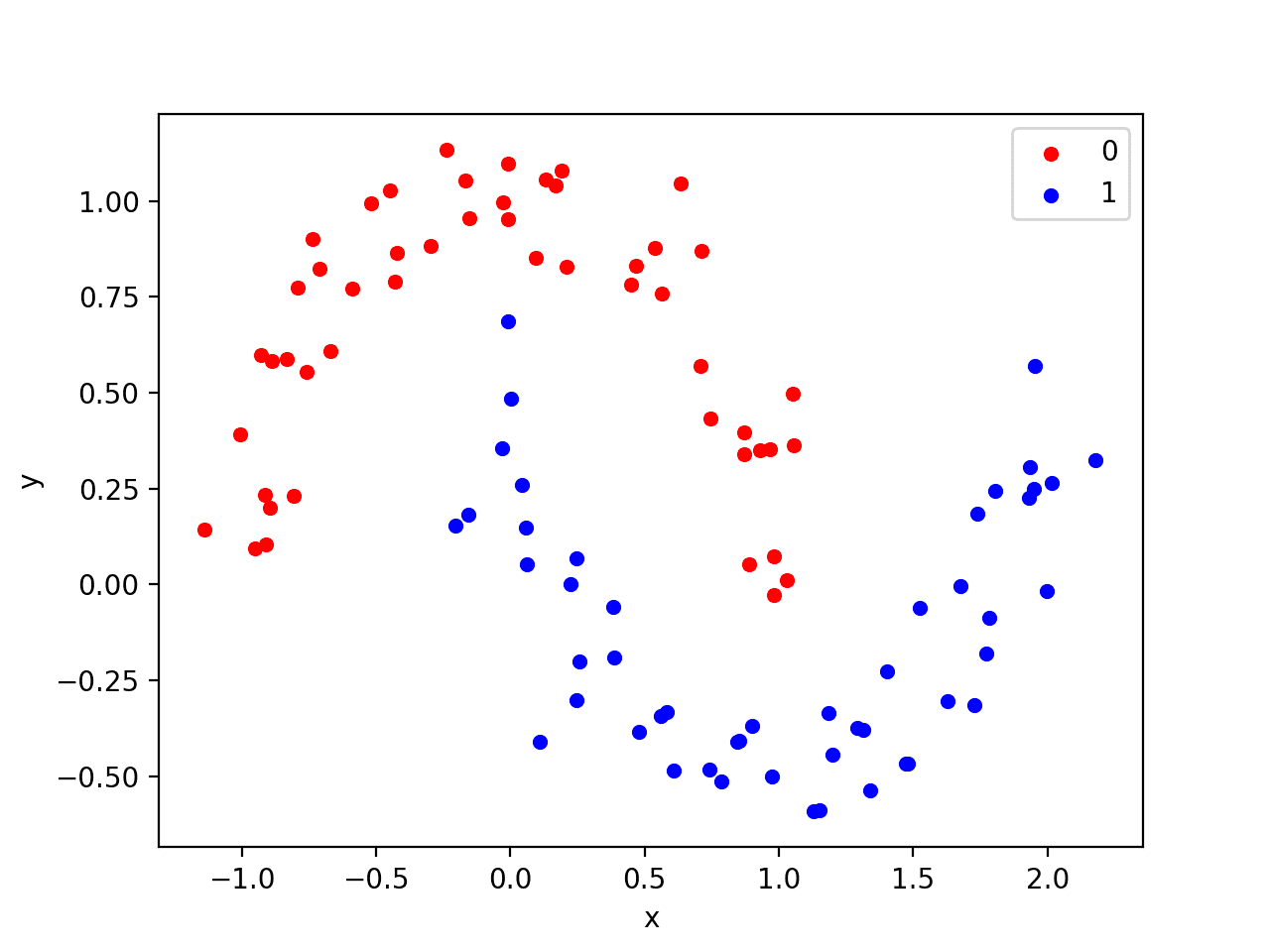

Algorithms are only as good as the data used to train them. Synthetic data offers a machine learning performance boost in two ways: by simply providing more training data, and by supplying more synthetic samples of minority classes than the original data contains. Depending on the exact dataset and model, the performance of machine learning models can increase by as much as 15%.
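The second idea, upsampling minority classes, can be sketched as follows. This minimal Python example works out how many extra rows each class needs to match the largest class and fills that gap with a caller-supplied sampler. The `sample_fn` callback is a hypothetical stand-in for whatever synthetic data generator you use; the toy jitter sampler in the usage code just perturbs copies of existing rows, which is far cruder than an AI-based generator that learns the joint distribution.

```python
import random
from collections import Counter


def rows_needed_per_class(labels):
    """Extra rows each class needs to match the size of the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items()}


def rebalance(rows, label_key, sample_fn):
    """Return the original rows plus synthetic rows so every class matches
    the majority class. sample_fn(class_rows, n) must return n new rows."""
    needed = rows_needed_per_class(row[label_key] for row in rows)
    out = list(rows)
    for cls, n in needed.items():
        if n > 0:
            class_rows = [r for r in rows if r[label_key] == cls]
            out.extend(sample_fn(class_rows, n))
    return out


def make_jitter_sampler(seed=0, noise=0.1):
    """Toy sampler (hypothetical): copy a random row and add Gaussian noise
    to its numeric "x" field. Stands in for a real synthetic data generator."""
    rng = random.Random(seed)

    def sample(class_rows, n):
        new_rows = []
        for _ in range(n):
            base = rng.choice(class_rows)
            new_rows.append({**base, "x": base["x"] + rng.gauss(0.0, noise)})
        return new_rows

    return sample


if __name__ == "__main__":
    data = [{"x": 0.1 * i, "label": "ok"} for i in range(90)]
    data += [{"x": 5.0 + 0.1 * i, "label": "fraud"} for i in range(10)]
    balanced = rebalance(data, "label", make_jitter_sampler(seed=42))
    # Both classes end up with 90 rows: 10 real "fraud" rows plus 80 synthetic ones.
    print(Counter(r["label"] for r in balanced))
```

Keeping the sampler as a parameter separates the bookkeeping (how many rows per class) from the generation step, so the same helper works whether the extra rows come from a toy sampler or from a proper synthetic data generator. Naive copying with noise, as in the toy sampler, can overfit to the handful of real minority rows.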

Fairness and explainability

According to some estimates, as much as 85% of algorithms are erroneous due to bias. AI-generated synthetic data can be used to enforce fairness definitions and to provide insight into the decision-making of algorithms through data that is safe to share with regulators and third parties. High-quality AI-generated synthetic data can also serve as a drop-in replacement for real data in local interpretability analyses when validating machine learning models.
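One widely used fairness definition is demographic parity: the rate of positive predictions should be roughly equal across groups. As a minimal sketch (the group labels and predictions are hypothetical, and this is not a description of how MOSTLY AI enforces fairness), the parity gap can be measured in a few lines of Python, giving you a number to compare before and after training on rebalanced or fairness-constrained synthetic data.

```python
from collections import defaultdict


def positive_rates(groups, predictions):
    """Share of positive predictions (value 1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(groups, predictions):
    """Largest difference in positive-prediction rate between any two groups.
    0.0 means perfect demographic parity."""
    rates = positive_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    preds = [1, 1, 1, 0, 1, 0, 0, 0]
    print(positive_rates(groups, preds))          # {'a': 0.75, 'b': 0.25}
    print(demographic_parity_gap(groups, preds))  # 0.5
```

Demographic parity is only one of several competing fairness definitions; which one to enforce depends on the use case and, often, on what regulators expect.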

Of course, you won’t know until you try. MOSTLY AI’s robust synthetic data generator offers free synthetic data up to 100K rows a day with interactive quality assurance reports. Go ahead and synthesize your first dataset today. If you have questions related to data prep, read more about how to generate synthetic data on our blog.

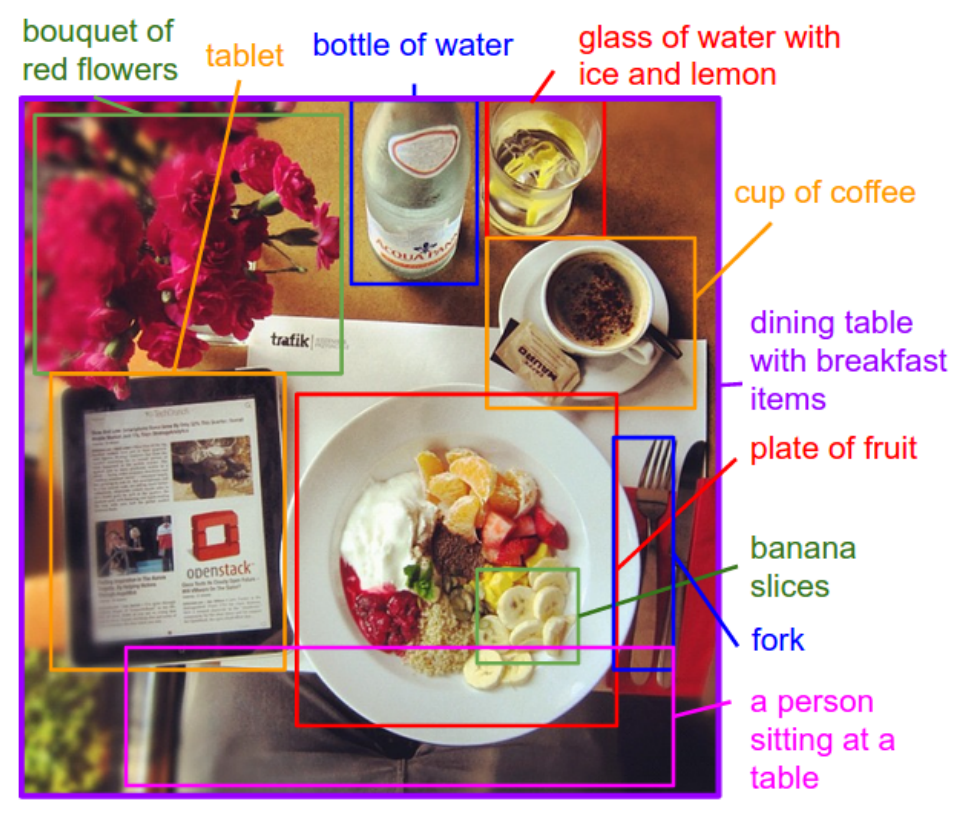

I have worked with machine learning models a lot, and I have personally found that synthetic data can make for more effective training data than raw data. As an example, there was a study of an image classification algorithm. A large share of the raw data used to train the algorithm showed wolves in wintry, mountainous terrain. Because wolves were so often pictured in mountain terrain, the researchers found that the algorithm relied on details of the mountains and the snow to classify wolves more than on the wolves themselves. The thing is, “real” raw data often contains patterns that you don’t want your algorithm to pay attention to, but it will anyway, because those patterns are hard or nearly impossible to remove from raw data. However, if the researchers were to take pictures of wolves and paste them onto various backgrounds, the algorithm would learn not to focus on the background, which would in turn improve its performance. So if you know exactly what you want your algorithm to learn, creating and controlling that bias with synthetic data can significantly improve your model’s performance.

Hi Matthew… You raise many great points! The following resource presents some additional considerations:

https://www.spiceworks.com/tech/artificial-intelligence/articles/synthetic-data-in-machine-learning/

I think that, to some extent, the value of synthetic data is just an artifact of the way our current machine learning methods work. Humans don’t need to see thousands or millions of examples to understand something. Humans are also able to learn about counterexamples and exceptions to the rule from very few examples, especially if it is pointed out to them that they are exceptions. The need to increase the sampling rate of low-probability events stems from our current algorithms’ failure to learn under-represented areas of the data distribution. We need to make sure that all aspects of the distribution are well represented.

The reason I expect synthetic data to become less useful for training better models is that the goal of a machine learning method is to learn the shape of an underlying statistical distribution, and synthetic data only reflects the algorithm’s current hypothesis about that distribution given the real data it was trained on. The synthetic data can’t actually provide new information about the true distribution because it was drawn from the hypothesis distribution, our best guess. It will only serve to reinforce (and maybe polish) our best guess, not give us anything genuinely new.
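Zac’s argument can be illustrated with a toy experiment in Python under deliberately simple assumptions: the “true” distribution is a Gaussian with known parameters, the “model” is just a fitted mean and standard deviation, and the “synthetic data” is sampled from that fit. The function names and sample sizes are illustrative choices, not from the comment. Refitting on a large synthetic sample recovers the fitted parameters (the hypothesis), not the true ones.

```python
import random
import statistics


def fit_gaussian(samples):
    """Fit a Gaussian by sample mean and population standard deviation."""
    return statistics.fmean(samples), statistics.pstdev(samples)


def refit_on_synthetic(true_mu=0.0, true_sigma=1.0, n_real=20,
                       n_synthetic=100_000, seed=7):
    """Fit on a small 'real' sample, draw synthetic data from that fit,
    then refit on the synthetic data."""
    rng = random.Random(seed)
    real = [rng.gauss(true_mu, true_sigma) for _ in range(n_real)]
    fitted_mu, fitted_sigma = fit_gaussian(real)
    # Synthetic rows come from the fitted hypothesis, never from the true distribution.
    synthetic = [rng.gauss(fitted_mu, fitted_sigma) for _ in range(n_synthetic)]
    refit_mu, refit_sigma = fit_gaussian(synthetic)
    return {
        "true": (true_mu, true_sigma),
        "fitted": (fitted_mu, fitted_sigma),
        "refit": (refit_mu, refit_sigma),
    }


if __name__ == "__main__":
    result = refit_on_synthetic()
    for name, (mu, sigma) in result.items():
        print(f"{name:>6}: mu={mu:+.3f} sigma={sigma:.3f}")
```

The refit row tracks the fitted row closely: whatever error the 20-sample estimate has relative to the true parameters is inherited by the synthetic data rather than corrected. This only illustrates the “no new information” point for a simple parametric model; it says nothing about the privacy or communication uses mentioned next.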

Synthetic data will still be useful for privacy, as well as for communicating information about the shape of a statistical distribution. But it won’t always help us train better models.

Outstanding feedback Zac! We greatly appreciate it!

Great post! I’ve been using synthetic data for training for quite some time, and my main observation has been a decrease in loss for neural networks. My only “constructive criticism” would be to include more research papers in your posts that actually use these techniques and demonstrate some of these “improvements” and “benefits” of generated data. That’s just my personal opinion as a researcher. This site has always been a great source of inspiration and resources, though, so thanks for the great content!