Machine learning models have hyperparameters that you must set in order to customize the model to your dataset.

Often, the general effects of hyperparameters on a model are known, but choosing the best value for a hyperparameter, or for a combination of interacting hyperparameters, on a given dataset is challenging. There are often general heuristics or rules of thumb for configuring hyperparameters.

A better approach is to objectively search different values for model hyperparameters and choose a subset that results in a model that achieves the best performance on a given dataset. This is called hyperparameter optimization or hyperparameter tuning and is available in the scikit-learn Python machine learning library. The result of a hyperparameter optimization is a single set of well-performing hyperparameters that you can use to configure your model.

In this tutorial, you will discover hyperparameter optimization for machine learning in Python.

After completing this tutorial, you will know:

- Hyperparameter optimization is required to get the most out of your machine learning models.

- How to configure random and grid search hyperparameter optimization for classification tasks.

- How to configure random and grid search hyperparameter optimization for regression tasks.

Let’s get started.

Hyperparameter Optimization With Random Search and Grid Search

Photo by James St. John, some rights reserved.

Tutorial Overview

This tutorial is divided into five parts; they are:

- Model Hyperparameter Optimization

- Hyperparameter Optimization Scikit-Learn API

- Hyperparameter Optimization for Classification

    - Random Search for Classification

    - Grid Search for Classification

- Hyperparameter Optimization for Regression

    - Random Search for Regression

    - Grid Search for Regression

- Common Questions About Hyperparameter Optimization

Model Hyperparameter Optimization

Machine learning models have hyperparameters.

Hyperparameters are points of choice or configuration that allow a machine learning model to be customized for a specific task or dataset.

- Hyperparameter: Model configuration argument specified by the developer to guide the learning process for a specific dataset.

Machine learning models also have parameters, which are the internal coefficients set by training or optimizing the model on a training dataset.

Parameters are different from hyperparameters. Parameters are learned automatically; hyperparameters are set manually to help guide the learning process.

For more on the difference between parameters and hyperparameters, see the tutorial “What is the Difference Between a Parameter and a Hyperparameter?” listed under Further Reading.

Typically a hyperparameter has a known effect on a model in the general sense, but it is not clear how to best set a hyperparameter for a given dataset. Further, many machine learning models have a range of hyperparameters and they may interact in nonlinear ways.

As such, it is often required to search for a set of hyperparameters that result in the best performance of a model on a dataset. This is called hyperparameter optimization, hyperparameter tuning, or hyperparameter search.

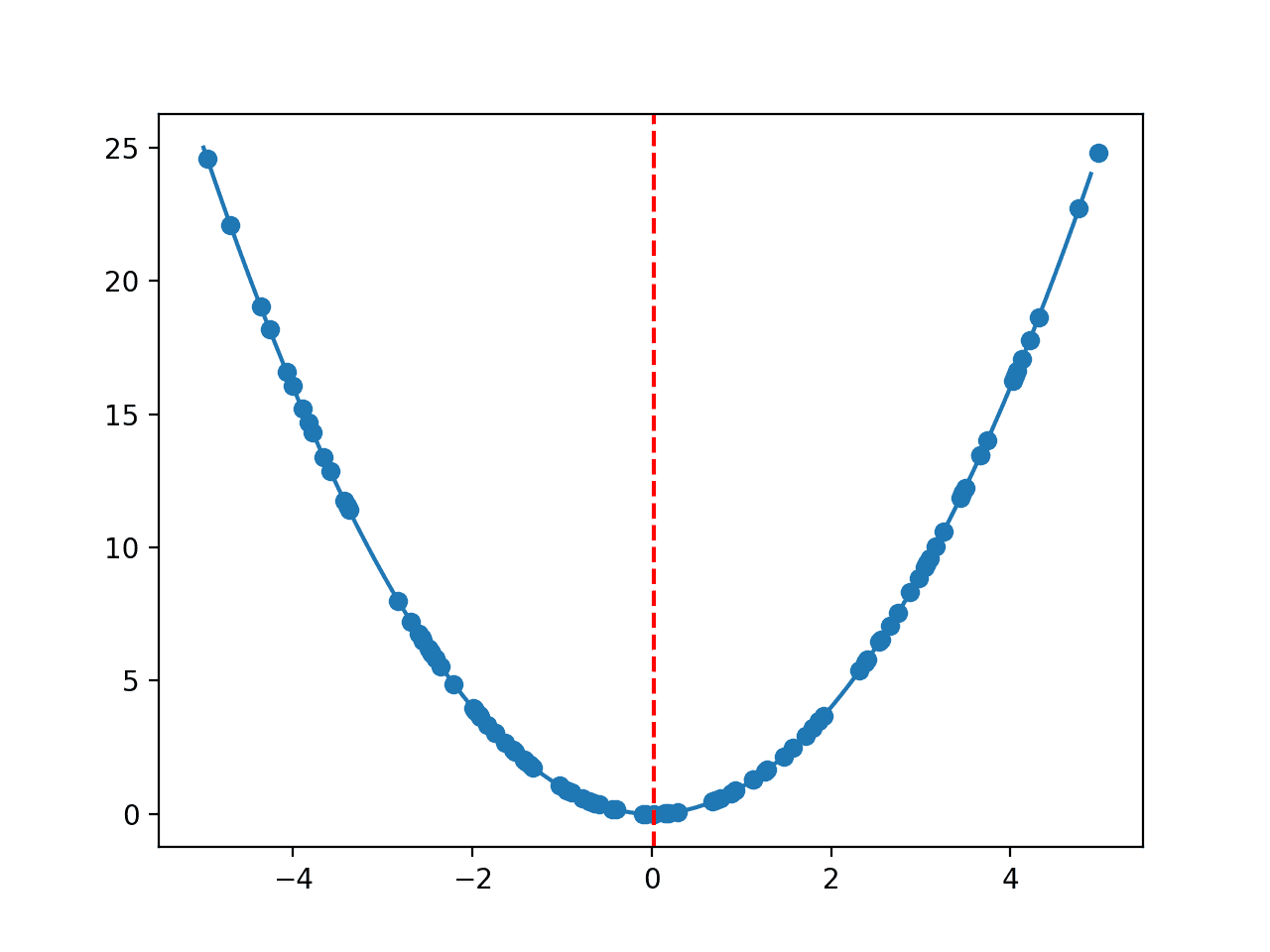

An optimization procedure involves defining a search space. This can be thought of geometrically as an n-dimensional volume, where each hyperparameter represents a different dimension and the scale of the dimension is the set of values that the hyperparameter may take on, such as real-valued, integer-valued, or categorical.

- Search Space: Volume to be searched where each dimension represents a hyperparameter and each point represents one model configuration.

A point in the search space is a vector with a specific value for each hyperparameter. The goal of the optimization procedure is to find a vector that results in the best performance of the model after learning, such as maximum accuracy or minimum error.

A range of different optimization algorithms may be used, although two of the simplest and most common methods are random search and grid search.

- Random Search. Define a search space as a bounded domain of hyperparameter values and randomly sample points in that domain.

- Grid Search. Define a search space as a grid of hyperparameter values and evaluate every position in the grid.
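The difference between the two strategies can be sketched with the Python standard library alone. This is a minimal illustration, not the scikit-learn implementation: the two-hyperparameter search space and its values are assumptions chosen for the example.

```python
import itertools
import random

# hypothetical two-dimensional search space (values chosen for illustration)
solvers = ["lbfgs", "liblinear"]
c_values = [0.01, 0.1, 1.0, 10.0]

# grid search: every position in the grid is a candidate configuration
grid_candidates = [
    {"solver": s, "C": c} for s, c in itertools.product(solvers, c_values)
]

# random search: sample points from a bounded domain
rng = random.Random(1)
random_candidates = [
    {"solver": rng.choice(solvers), "C": rng.uniform(0.01, 10.0)}
    for _ in range(5)
]

print(len(grid_candidates))    # 8 = 2 solvers x 4 C values
print(len(random_candidates))  # 5, set by the sampling budget
```

Note that the size of the grid is fixed by the values listed, whereas the number of random candidates is whatever budget you choose.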

Grid search is great for spot-checking combinations that are known to perform well generally. Random search is great for discovery and getting hyperparameter combinations that you would not have guessed intuitively, although it often requires more time to execute.

More advanced methods are sometimes used, such as Bayesian Optimization and Evolutionary Optimization.

Now that we are familiar with hyperparameter optimization, let’s look at how we can use this method in Python.

Hyperparameter Optimization Scikit-Learn API

The scikit-learn Python open-source machine learning library provides techniques to tune model hyperparameters.

Specifically, it provides the RandomizedSearchCV for random search and GridSearchCV for grid search. Both techniques evaluate models for a given hyperparameter vector using cross-validation, hence the “CV” suffix of each class name.

Both classes require two arguments. The first is the model that you are optimizing. This is an instance of the model; any hyperparameters that you do not plan to search can be set on it directly. The second is the search space. This is defined as a dictionary where the names are the hyperparameter arguments to the model and the values are discrete values or, in the case of a random search, a distribution of values to sample.

```python
...
# define model
model = LogisticRegression()
# define search space
space = dict()
...
# define search
search = GridSearchCV(model, space)
```

Both classes provide a “cv” argument that allows either an integer number of folds to be specified, e.g. 5, or a configured cross-validation object. I recommend defining and specifying a cross-validation object to gain more control over model evaluation and make the evaluation procedure obvious and explicit.

In the case of classification tasks, I recommend using the RepeatedStratifiedKFold class, and for regression tasks, the RepeatedKFold class, each with an appropriate number of folds and repeats, such as 10 folds and three repeats.

```python
...
# define evaluation
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)
# define search
search = GridSearchCV(..., cv=cv)
```
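To make the idea of repeated k-fold cross-validation concrete, here is a rough standard-library sketch of how 10 folds and three repeats yield 30 train/test splits. It is an illustration only: the helper `repeated_kfold` is hypothetical, it does not stratify by class, and it is not how scikit-learn implements its splitters.

```python
import random

def repeated_kfold(n_rows, n_splits, n_repeats, seed):
    """Yield (train_indices, test_indices) pairs for repeated k-fold CV."""
    rng = random.Random(seed)
    for _ in range(n_repeats):
        indices = list(range(n_rows))
        rng.shuffle(indices)  # a fresh shuffle for every repeat
        for fold in range(n_splits):
            test = indices[fold::n_splits]  # every n_splits-th row forms one fold
            held_out = set(test)
            train = [i for i in indices if i not in held_out]
            yield train, test

splits = list(repeated_kfold(n_rows=208, n_splits=10, n_repeats=3, seed=1))
print(len(splits))  # 30 train/test evaluations per hyperparameter configuration
```

Each hyperparameter configuration is scored on every one of these splits and the scores are averaged, which is why repeats make the estimate more stable but also more expensive.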

Both hyperparameter optimization classes also provide a “scoring” argument that takes a string indicating the metric to optimize.

The metric must be maximizing, meaning better models result in larger scores. For classification, this may be 'accuracy'. For regression, this is a negative error measure, such as 'neg_mean_absolute_error' for a negative version of the mean absolute error, where values closer to zero represent less prediction error by the model.

```python
...
# define search
search = GridSearchCV(..., scoring='neg_mean_absolute_error')
```

You can see a list of built-in scoring metrics in the scikit-learn documentation for the scoring parameter.

Finally, the search can be run in parallel across CPU cores by setting the “n_jobs” argument to an integer number of cores, e.g. 8. Alternatively, you can set it to -1 to automatically use all of the cores in your system.

```python
...
# define search
search = GridSearchCV(..., n_jobs=-1)
```

Once defined, the search is performed by calling the fit() function and providing a dataset used to train and evaluate model hyperparameter combinations using cross-validation.

```python
...
# execute search
result = search.fit(X, y)
```

Running the search may take minutes or hours, depending on the size of the search space and the speed of your hardware. You’ll often want to tailor the search to how much time you have rather than the possibility of what could be searched.
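A quick way to budget a search is to count the model fits it will require. The arithmetic below is a back-of-the-envelope sketch; the grid sizes and the budget of 500 samples are taken from the classification examples later in this tutorial, and the count ignores any final refit on the full dataset.

```python
# number of cross-validation fits = candidates x folds x repeats
n_splits, n_repeats = 10, 3
fits_per_candidate = n_splits * n_repeats

# grid search: candidates are the product of the list lengths
grid_sizes = {"solver": 3, "penalty": 4, "C": 8}
grid_candidates = 1
for size in grid_sizes.values():
    grid_candidates *= size

# random search: candidates are fixed by the sampling budget (n_iter)
random_candidates = 500

print(grid_candidates * fits_per_candidate)    # 96 * 30 = 2880
print(random_candidates * fits_per_candidate)  # 500 * 30 = 15000
```

Multiplying the fit count by the time for a single fit gives a rough estimate of the total run time before any parallelism.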

At the end of the search, you can access all of the results via attributes on the class. Perhaps the most important attributes are the best score observed and the hyperparameters that achieved the best score.

```python
...
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Once you know the set of hyperparameters that achieve the best result, you can then define a new model, set the values of each hyperparameter, then fit the model on all available data. This model can then be used to make predictions on new data.

Now that we are familiar with the hyperparameter optimization API in scikit-learn, let’s look at some worked examples.

Hyperparameter Optimization for Classification

In this section, we will use hyperparameter optimization to discover a well-performing model configuration for the sonar dataset.

The sonar dataset is a standard machine learning dataset comprising 208 rows of data with 60 numerical input variables and a target variable with two class values, i.e. binary classification.

Using a test harness of repeated stratified 10-fold cross-validation with three repeats, a naive model can achieve an accuracy of about 53 percent. A top-performing model can achieve accuracy on this same test harness of about 88 percent. This provides the bounds of expected performance on this dataset.

The dataset involves predicting whether sonar returns indicate a rock or simulated mine.

No need to download the dataset; we will download it automatically as part of our worked examples.

The example below downloads the dataset and summarizes its shape.

```python
# summarize the sonar dataset
from pandas import read_csv
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/sonar.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
print(X.shape, y.shape)
```

Running the example downloads the dataset and splits it into input and output elements. As expected, we can see that there are 208 rows of data with 60 input variables.

```
(208, 60) (208,)
```

Next, let’s use random search to find a good model configuration for the sonar dataset.

To keep things simple, we will focus on a linear model, the logistic regression model, and the common hyperparameters tuned for this model.

Random Search for Classification

In this section, we will explore hyperparameter optimization of the logistic regression model on the sonar dataset.

First, we will define the model that will be optimized and use default values for the hyperparameters that will not be optimized.

```python
...
# define model
model = LogisticRegression()
```

We will evaluate model configurations using repeated stratified k-fold cross-validation with three repeats and 10 folds.

```python
...
# define evaluation
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)
```

Next, we can define the search space.

This is a dictionary where names are arguments to the model and values are distributions from which to draw samples. We will optimize the solver, the penalty, and the C hyperparameters of the model with discrete distributions for the solver and penalty type and a log-uniform distribution from 1e-5 to 100 for the C value.

Log-uniform is useful for searching penalty values as we often explore values at different orders of magnitude, at least as a first step.

```python
...
# define search space
space = dict()
space['solver'] = ['newton-cg', 'lbfgs', 'liblinear']
# note: the string 'none' was deprecated in favor of None in later scikit-learn releases
space['penalty'] = ['none', 'l1', 'l2', 'elasticnet']
space['C'] = loguniform(1e-5, 100)
```
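To see why a log-uniform distribution suits values that span several orders of magnitude, the sketch below mimics the idea with the standard library instead of scipy. The helper `sample_loguniform` is hypothetical and written only for illustration: it draws the exponent uniformly, so each order of magnitude between 1e-5 and 100 receives roughly the same share of samples.

```python
import math
import random

def sample_loguniform(low, high, rng):
    """Draw one value whose base-10 logarithm is uniform between log10(low) and log10(high)."""
    exponent = rng.uniform(math.log10(low), math.log10(high))
    return 10 ** exponent

rng = random.Random(1)
samples = [sample_loguniform(1e-5, 100, rng) for _ in range(7000)]

# count how many samples fall in each order of magnitude
counts = {}
for value in samples:
    decade = math.floor(math.log10(value))
    counts[decade] = counts.get(decade, 0) + 1

for decade in sorted(counts):
    print("1e%d to 1e%d: %d" % (decade, decade + 1, counts[decade]))
```

A plain uniform distribution over the same range would place almost all samples between 10 and 100 and would rarely try small values such as 0.001.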

Next, we can define the search procedure with all of these elements.

Importantly, we must set the number of iterations or samples to draw from the search space via the “n_iter” argument. In this case, we will set it to 500.

```python
...
# define search
search = RandomizedSearchCV(model, space, n_iter=500, scoring='accuracy', n_jobs=-1, cv=cv, random_state=1)
```

Finally, we can perform the optimization and report the results.

```python
...
# execute search
result = search.fit(X, y)
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Tying this together, the complete example is listed below.

```python
# random search logistic regression model on the sonar dataset
from scipy.stats import loguniform
from pandas import read_csv
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold
from sklearn.model_selection import RandomizedSearchCV
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/sonar.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
# define model
model = LogisticRegression()
# define evaluation
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)
# define search space
space = dict()
space['solver'] = ['newton-cg', 'lbfgs', 'liblinear']
# note: the string 'none' was deprecated in favor of None in later scikit-learn releases
space['penalty'] = ['none', 'l1', 'l2', 'elasticnet']
space['C'] = loguniform(1e-5, 100)
# define search
search = RandomizedSearchCV(model, space, n_iter=500, scoring='accuracy', n_jobs=-1, cv=cv, random_state=1)
# execute search
result = search.fit(X, y)
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Running the example may take a minute. It is fast because we are using a small search space and a fast model to fit and evaluate. You may see some warnings during the optimization for invalid configuration combinations. These can be safely ignored.

At the end of the run, the best score and hyperparameter configuration that achieved the best performance are reported.

Your specific results will vary given the stochastic nature of the optimization procedure. Try running the example a few times.

In this case, we can see that the best configuration achieved an accuracy of about 79.0 percent, which is fair, and the specific values for the solver, penalty, and C hyperparameters used to achieve that score.

```
Best Score: 0.7897619047619049
Best Hyperparameters: {'C': 4.878363034905756, 'penalty': 'l2', 'solver': 'newton-cg'}
```

Next, let’s use grid search to find a good model configuration for the sonar dataset.

Grid Search for Classification

Using the grid search is much like using the random search for classification.

The main difference is that the search space must be a discrete grid to be searched. This means that instead of using a log-uniform distribution for C, we can specify discrete values on a log scale.

```python
...
# define search space
space = dict()
space['solver'] = ['newton-cg', 'lbfgs', 'liblinear']
# note: the string 'none' was deprecated in favor of None in later scikit-learn releases
space['penalty'] = ['none', 'l1', 'l2', 'elasticnet']
space['C'] = [1e-5, 1e-4, 1e-3, 1e-2, 1e-1, 1, 10, 100]
```
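For longer grids, the values on a log scale can be generated from their exponents instead of being typed out. The snippet below is a small standard-library sketch of that idea; the variable name `c_grid` is an assumption, and `numpy.logspace` would be a common alternative if NumPy is available.

```python
# build the grid 1e-5, 1e-4, ..., 100 from its exponents
c_grid = [float("1e%d" % exponent) for exponent in range(-5, 3)]
print(c_grid)
```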

Additionally, the GridSearchCV class does not take a number of iterations, as we are only evaluating combinations of hyperparameters in the grid.

```python
...
# define search
search = GridSearchCV(model, space, scoring='accuracy', n_jobs=-1, cv=cv)
```

Tying this together, the complete example of grid searching logistic regression configurations for the sonar dataset is listed below.

```python
# grid search logistic regression model on the sonar dataset
from pandas import read_csv
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold
from sklearn.model_selection import GridSearchCV
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/sonar.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
# define model
model = LogisticRegression()
# define evaluation
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)
# define search space
space = dict()
space['solver'] = ['newton-cg', 'lbfgs', 'liblinear']
# note: the string 'none' was deprecated in favor of None in later scikit-learn releases
space['penalty'] = ['none', 'l1', 'l2', 'elasticnet']
space['C'] = [1e-5, 1e-4, 1e-3, 1e-2, 1e-1, 1, 10, 100]
# define search
search = GridSearchCV(model, space, scoring='accuracy', n_jobs=-1, cv=cv)
# execute search
result = search.fit(X, y)
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Running the example may take a moment. It is fast because we are using a small search space and a fast model to fit and evaluate. Again, you may see some warnings during the optimization for invalid configuration combinations. These can be safely ignored.

At the end of the run, the best score and hyperparameter configuration that achieved the best performance are reported.

Your specific results will vary given the stochastic nature of the optimization procedure. Try running the example a few times.

In this case, we can see that the best configuration achieved an accuracy of about 78.3 percent, which is also fair, and the specific values for the solver, penalty, and C hyperparameters used to achieve that score. Interestingly, the results are very similar to those found via the random search.

```
Best Score: 0.7828571428571429
Best Hyperparameters: {'C': 1, 'penalty': 'l2', 'solver': 'newton-cg'}
```

Hyperparameter Optimization for Regression

In this section, we will use hyperparameter optimization to discover a top-performing model configuration for the auto insurance dataset.

The auto insurance dataset is a standard machine learning dataset comprising 63 rows of data with 1 numerical input variable and a numerical target variable.

Using a test harness of repeated 10-fold cross-validation with three repeats, a naive model can achieve a mean absolute error (MAE) of about 66. A top-performing model can achieve a MAE on this same test harness of about 28. This provides the bounds of expected performance on this dataset.

The dataset involves predicting the total amount in claims (thousands of Swedish Kronor) given the number of claims for different geographical regions.

- Auto Insurance Dataset (auto-insurance.csv)

- Auto Insurance Dataset Description (auto-insurance.names)

No need to download the dataset; we will download it automatically as part of our worked examples.

The example below downloads the dataset and summarizes its shape.

```python
# summarize the auto insurance dataset
from pandas import read_csv
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/auto-insurance.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
print(X.shape, y.shape)
```

Running the example downloads the dataset and splits it into input and output elements. As expected, we can see that there are 63 rows of data with 1 input variable.

```
(63, 1) (63,)
```

Next, we can use hyperparameter optimization to find a good model configuration for the auto insurance dataset.

To keep things simple, we will focus on a linear model, the linear regression model (specifically the Ridge class, which adds an L2 penalty), and the common hyperparameters tuned for this model.

Random Search for Regression

Configuring and using the random search hyperparameter optimization procedure for regression is much like using it for classification.

In this case, we will configure the important hyperparameters of the Ridge linear regression implementation, including the solver, alpha, fit_intercept, and normalize.

We will use a discrete distribution of values in the search space for all except the “alpha” argument which is a penalty term, in which case we will use a log-uniform distribution as we did in the previous section for the “C” argument of logistic regression.

```python
...
# define search space
space = dict()
space['solver'] = ['svd', 'cholesky', 'lsqr', 'sag']
space['alpha'] = loguniform(1e-5, 100)
space['fit_intercept'] = [True, False]
# note: 'normalize' was deprecated in scikit-learn 1.0 and later removed from Ridge; omit this line on newer releases
space['normalize'] = [True, False]
```

The main difference in regression compared to classification is the choice of the scoring method.

For regression, performance is often measured using an error, which is minimized, with zero representing a model with perfect skill. The hyperparameter optimization procedures in scikit-learn assume a maximizing score. Therefore a version of each error metric is provided that is made negative.

This means that large positive errors become large negative errors, good performance is a small negative value close to zero, and perfect skill is zero.

The sign of the negative MAE can be ignored when interpreting the result.
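The sign convention can be checked with a few lines of plain Python. This is a toy sketch with made-up targets and predictions for two hypothetical configurations; it shows that picking the maximum of the negated error selects the configuration with the smallest error.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# made-up targets and predictions from two hypothetical model configurations
y_true = [10.0, 20.0, 30.0]
predictions = {
    "config_a": [12.0, 18.0, 33.0],  # MAE = (2 + 2 + 3) / 3
    "config_b": [10.0, 21.0, 29.0],  # MAE = (0 + 1 + 1) / 3
}

# negate the error so that a larger score means a better model
scores = {name: -mean_absolute_error(y_true, y_pred) for name, y_pred in predictions.items()}
best = max(scores, key=scores.get)

print(best)               # config_b
print(abs(scores[best]))  # drop the sign to read the MAE
```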

In this case, we will use the mean absolute error (MAE); a maximizing version of this error is available by setting the “scoring” argument to “neg_mean_absolute_error”.

```python
...
# define search
search = RandomizedSearchCV(model, space, n_iter=500, scoring='neg_mean_absolute_error', n_jobs=-1, cv=cv, random_state=1)
```

Tying this together, the complete example is listed below.

```python
# random search linear regression model on the auto insurance dataset
from scipy.stats import loguniform
from pandas import read_csv
from sklearn.linear_model import Ridge
from sklearn.model_selection import RepeatedKFold
from sklearn.model_selection import RandomizedSearchCV
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/auto-insurance.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
# define model
model = Ridge()
# define evaluation
cv = RepeatedKFold(n_splits=10, n_repeats=3, random_state=1)
# define search space
space = dict()
space['solver'] = ['svd', 'cholesky', 'lsqr', 'sag']
space['alpha'] = loguniform(1e-5, 100)
space['fit_intercept'] = [True, False]
# note: 'normalize' was deprecated in scikit-learn 1.0 and later removed from Ridge; omit this line on newer releases
space['normalize'] = [True, False]
# define search
search = RandomizedSearchCV(model, space, n_iter=500, scoring='neg_mean_absolute_error', n_jobs=-1, cv=cv, random_state=1)
# execute search
result = search.fit(X, y)
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Running the example may take a moment. It is fast because we are using a small search space and a fast model to fit and evaluate. You may see some warnings during the optimization for invalid configuration combinations. These can be safely ignored.

At the end of the run, the best score and hyperparameter configuration that achieved the best performance are reported.

Your specific results will vary given the stochastic nature of the optimization procedure. Try running the example a few times.

In this case, we can see that the best configuration achieved a MAE of about 29.2, which is very close to the best known performance on this dataset of about 28. We can then see the specific hyperparameter values that achieved this result.

```
Best Score: -29.23046315344758
Best Hyperparameters: {'alpha': 0.008301451461243866, 'fit_intercept': True, 'normalize': True, 'solver': 'sag'}
```

Next, let’s use grid search to find a good model configuration for the auto insurance dataset.

Grid Search for Regression

Because this is a grid search, we cannot define a distribution to sample and instead must define a discrete grid of hyperparameter values. As such, we will specify the “alpha” argument as a range of values on a log-10 scale.

```python
...
# define search space
space = dict()
space['solver'] = ['svd', 'cholesky', 'lsqr', 'sag']
space['alpha'] = [1e-5, 1e-4, 1e-3, 1e-2, 1e-1, 1, 10, 100]
space['fit_intercept'] = [True, False]
# note: 'normalize' was deprecated in scikit-learn 1.0 and later removed from Ridge; omit this line on newer releases
space['normalize'] = [True, False]
```

Grid search for regression requires that the “scoring” be specified, much as we did for random search.

In this case, we will again use the negative MAE scoring function.

```python
...
# define search
search = GridSearchCV(model, space, scoring='neg_mean_absolute_error', n_jobs=-1, cv=cv)
```

Tying this together, the complete example of grid searching linear regression configurations for the auto insurance dataset is listed below.

```python
# grid search linear regression model on the auto insurance dataset
from pandas import read_csv
from sklearn.linear_model import Ridge
from sklearn.model_selection import RepeatedKFold
from sklearn.model_selection import GridSearchCV
# load dataset
url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/auto-insurance.csv'
dataframe = read_csv(url, header=None)
# split into input and output elements
data = dataframe.values
X, y = data[:, :-1], data[:, -1]
# define model
model = Ridge()
# define evaluation
cv = RepeatedKFold(n_splits=10, n_repeats=3, random_state=1)
# define search space
space = dict()
space['solver'] = ['svd', 'cholesky', 'lsqr', 'sag']
space['alpha'] = [1e-5, 1e-4, 1e-3, 1e-2, 1e-1, 1, 10, 100]
space['fit_intercept'] = [True, False]
# note: 'normalize' was deprecated in scikit-learn 1.0 and later removed from Ridge; omit this line on newer releases
space['normalize'] = [True, False]
# define search
search = GridSearchCV(model, space, scoring='neg_mean_absolute_error', n_jobs=-1, cv=cv)
# execute search
result = search.fit(X, y)
# summarize result
print('Best Score: %s' % result.best_score_)
print('Best Hyperparameters: %s' % result.best_params_)
```

Running the example may take a minute. It is fast because we are using a small search space and a fast model to fit and evaluate. Again, you may see some warnings during the optimization for invalid configuration combinations. These can be safely ignored.

At the end of the run, the best score and hyperparameter configuration that achieved the best performance are reported.

Your specific results will vary given the stochastic nature of the optimization procedure. Try running the example a few times.

In this case, we can see that the best configuration achieved a MAE of about 29.3, which is nearly identical to what we achieved with the random search in the previous section. The hyperparameters are also similar: both searches selected the 'sag' solver with fit_intercept set to True, although the alpha and normalize values differ.

```
Best Score: -29.275708614337326
Best Hyperparameters: {'alpha': 0.1, 'fit_intercept': True, 'normalize': False, 'solver': 'sag'}
```

Common Questions About Hyperparameter Optimization

This section addresses some common questions about hyperparameter optimization.

How to Choose Between Random and Grid Search?

Choose the method based on your needs. I recommend starting with grid and doing a random search if you have the time.

Grid search is appropriate for small and quick searches of hyperparameter values that are known to perform well generally.

Random search is appropriate for discovering new hyperparameter values or new combinations of hyperparameters, often resulting in better performance, although it may take more time to complete.

How to Speed-Up Hyperparameter Optimization?

Ensure that you set the “n_jobs” argument to the number of cores on your machine.

After that, more suggestions include:

- Evaluate on a smaller sample of your dataset.

- Explore a smaller search space.

- Use fewer repeats and/or folds for cross-validation.

- Execute the search on a faster machine, such as AWS EC2.

- Use an alternate model that is faster to evaluate.

How to Choose Hyperparameters to Search?

Most algorithms have a subset of hyperparameters that have the most influence over model performance.

These are listed in most descriptions of the algorithm. For examples of algorithms and their most important hyperparameters, see the tutorial “Tune Hyperparameters for Classification Machine Learning Algorithms” listed under Further Reading.

If you are unsure:

- Review papers that use the algorithm to get ideas.

- Review the API and algorithm documentation to get ideas.

- Search all hyperparameters.

How to Use Best-Performing Hyperparameters?

Define a new model and set the hyperparameter values of the model to the values found by the search.

Then fit the model on all available data and use the model to start making predictions on new data.

This is called preparing a final model. For more, see the tutorial “How to Train a Final Machine Learning Model” listed under Further Reading.

How to Make a Prediction?

First, fit a final model (previous question).

Then call the predict() function to make a prediction.
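The two steps can be sketched end to end as follows. This is a minimal example under stated assumptions: the data is synthetic (generated with `make_classification` as a stand-in for your own X and y), the search is deliberately tiny, and the first row is reused as the "new" row purely for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV

# synthetic stand-in data (an assumption; substitute your own X and y)
X, y = make_classification(n_samples=100, n_features=5, random_state=1)

# a deliberately tiny search, just to obtain best_params_
search = GridSearchCV(LogisticRegression(), {"C": [0.1, 1.0, 10.0]}, scoring="accuracy", cv=3)
result = search.fit(X, y)

# define a new model with the best hyperparameters and fit it on all available data
final_model = LogisticRegression(**result.best_params_)
final_model.fit(X, y)

# make a prediction for one new row (here the first row is reused for illustration)
row = X[:1]
print(final_model.predict(row))
```

With the default `refit=True`, the search object also keeps a model refit on the full dataset in `result.best_estimator_`, which can be used in place of the manually defined final model.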

For examples of making a prediction with a final model, see the tutorial “How to Make Predictions with scikit-learn” listed under Further Reading.

Do you have another question about hyperparameter optimization?

Let me know in the comments below.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Tutorials

- What is the Difference Between a Parameter and a Hyperparameter?

- Results for Standard Classification and Regression Machine Learning Datasets

- Tune Hyperparameters for Classification Machine Learning Algorithms

- How to Train a Final Machine Learning Model

- How to Make Predictions with scikit-learn

APIs

- Tuning the hyper-parameters of an estimator, scikit-learn Documentation.

- sklearn.model_selection.GridSearchCV API.

- sklearn.model_selection.RandomizedSearchCV API.

Summary

In this tutorial, you discovered hyperparameter optimization for machine learning in Python.

Specifically, you learned:

- Hyperparameter optimization is required to get the most out of your machine learning models.

- How to configure random and grid search hyperparameter optimization for classification tasks.

- How to configure random and grid search hyperparameter optimization for regression tasks.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Isn’t there something more efficient than random and grid? Hyperparameter tuning is itself obviously an optimisation and a normal optimisation doesn’t search the entire problem space.

Isn’t this a classic case of the “exploration” vs “exploitation” tradeoff, except the above 2 methods have no exploitation?

Yes, random search is a good start, Bayesian optimization is common, or any population-based global optimization algorithm can be used, like a genetic algorithm or simulated annealing.

Wouldn’t quasi-random numbers (e.g. Sobol sequences) be preferable to pseudo-random numbers? They would search the problem space more evenly. These are widely used in Monte Carlo methods in finance for just this reason.

Is this implemented by scikit-learn?

They would also allow early stopping – you could see the quality of solution you’d achieved and decide for yourself when to use the best solution so far, rather than needing to (examine the entire grid) or (specify a fixed number of iterations).

Pseudorandom numbers are good enough in most cases.

Where exactly do I put or apply the specific hyperparameter values that achieved this result? Please explain this step, and thank you very much.

Once the method identifies the hyperparameters that result in the best performance, you can then configure a final model with those hyperparameters and fit it on all available data, ready for making predictions on new data.

I think this is the best tutorial from beginning to end. Simple, easy to use and excellent ! Thanks for this post.

Thanks!

Hi Jason!

Thank you very much for this detailed overview! Currently I am using the Sequential model of Keras, since this seems to be an easy way to get a neural network architecture with defined layers and nodes. I guess since this model is not a model from sklearn, I cannot use the optimization methods explained here?

You’re welcome.

You can grid search Keras hyperparameters manually with a for loop.

Alternatively, you can wrap your model in the scikit-learn wrapper and use the grid/random search.

There are examples of both on the blog; try a search.

Thank you Jason!

Can I use grid search or random search in Deep learning algorithms?

or is there a specific technique used to tune hyperparameter in deep learning algorithms?

Yes. There are many examples on the blog.

Hi. Many thanks for your amazing tutorial.

I have a question. What is the difference between finding hyperparameters with random search and with a metaheuristic algorithm in deep learning models?

Can you refer me to a tutorial?

You’re welcome.

The difference is that some algorithms may find better results faster than other algorithms on some problems.

Hello Jason.

Very nice and helpful information can be found on your website, congrats!

I am using RF in R. I used the predictors table to create a regular dendrogram (Euclidean distance and average method) in order to have a figure that shows how close or far apart the groups of samples are. Do you think this is valid, or does it make any sense? Or do you think it is better to just use the original data table to build the dendrogram?

Thank you,

Perhaps compare results to other methods and use it if it works well/better.

Where is the number of epochs specified? I mean, is the number of epochs specified on any line?

You mean the n_iter parameter?
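For reference, n_iter is not a number of training epochs; it is the number of hyperparameter configurations that the random search samples and evaluates. A small sketch, assuming a synthetic dataset and a log-uniform range for C chosen only for illustration:

```python
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RandomizedSearchCV

# Synthetic data stands in for your own dataset (an assumption for illustration)
X, y = make_classification(n_samples=200, n_features=10, random_state=1)

# Sample C from a log-uniform distribution (assumed range)
space = {"C": loguniform(1e-3, 1e2)}

# n_iter=10 means ten configurations are sampled and each is cross-validated
search = RandomizedSearchCV(LogisticRegression(max_iter=1000), space, n_iter=10,
                            scoring="accuracy", cv=5, random_state=1)
result = search.fit(X, y)

# One entry per sampled configuration
n_evaluated = len(result.cv_results_["params"])
print(n_evaluated)
```

With cv=5, each of the ten configurations is fit five times, plus one final refit of the best configuration.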

I have seen online, people recommend creating 3 data sets – Train, Validation, and Test sets.

They say to perform hyperparameter tuning on the validation set or else you risk data leakage. Here you perform the tuning on the entire dataset; is this valid?

Is the other advice of tuning only on the validation set incorrect, and why?

Thanks !

Please see this post: https://machinelearningmastery.com/training-validation-test-split-and-cross-validation-done-right/
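If you want an estimate of performance that is not influenced by the tuning, one common pattern is to hold back a test set, run the search with cross-validation on the training portion only, and score the tuned model once on the held-back data. The sketch below assumes a synthetic dataset, a 70/30 split, and a small search space, all chosen for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic data stands in for your own dataset (an assumption for illustration)
X, y = make_classification(n_samples=500, n_features=10, random_state=1)

# Hold back a test set that the search never sees
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=1)

# Cross-validation inside the search plays the role of the validation set
space = {"C": [0.01, 0.1, 1.0, 10.0]}
search = GridSearchCV(LogisticRegression(max_iter=1000), space, scoring="accuracy", cv=5)
search.fit(X_train, y_train)

# Score the refit best model once on the untouched test set
test_accuracy = search.score(X_test, y_test)
print(search.best_params_, test_accuracy)
```

For small datasets, where a single hold-out split is noisy, nested cross-validation is an alternative way to get the same separation.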

hi. Jason!

I have a question: does this article use a standardized methodology to apply random search? I’m looking for a hyperparameter optimization methodology using random search, to standardize processes within a study.

Do you know of a standardized methodology for random search?

Many thanks,

It seems to me that standardizing is a data preprocessing technique while random search is for hyperparameters. Hence they should be unrelated.

Hello Diego…The RandomizedSearchCV and GridSearchCV techniques are both based upon time-tested methodologies utilizing cross-validation. Follow the links for these two in the original post. Also, please check out the “Optimization for Machine Learning Crash Course” – https://machinelearningmastery.com/optimization-for-machine-learning-crash-course/

Regards,

Hi Jason, thank you for this article.

I have a large dataset that is split as training, validation, and testing dataset.

It is computationally expensive (takes a long time) to do hyperparameter tuning with the whole training dataset and validation of the performance of the hyperparameters using the whole validation dataset.

Question:

Is it advisable in this situation to take a smaller sample of the training and validation dataset and tune the hyperparameters with it? If yes, what sampling method should I use?

Thank you.

Hi Jack…You are very welcome! I would investigate batch normalization.

https://machinelearningmastery.com/how-to-accelerate-learning-of-deep-neural-networks-with-batch-normalization/

Hi James, thank you for your reply. I am not using a deep learning model. Hence, I could not apply batch normalization.

Since I have a large dataset as I originally posted, my question is whether I can take a smaller sample of the training and validation dataset and tune the hyperparameters with it? If yes, what sampling method should I use?
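One possible approach, offered as a sketch rather than a definitive answer, is to draw a stratified random sample of the training data, tune on that sample, and then refit the chosen configuration on the full training set. The synthetic dataset, the 10 percent sample size, the random forest model, and the search space below are all assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, train_test_split

# Synthetic data stands in for a large training set (an assumption for illustration)
X_train, y_train = make_classification(n_samples=5000, n_features=20, random_state=1)

# Stratified 10% sample: class proportions are preserved in the smaller set
X_small, _, y_small, _ = train_test_split(
    X_train, y_train, train_size=0.1, stratify=y_train, random_state=1)

# Tune on the small sample only
space = {"max_depth": [3, 5, 10, None], "max_features": ["sqrt", "log2"]}
search = RandomizedSearchCV(RandomForestClassifier(n_estimators=50, random_state=1),
                            space, n_iter=4, cv=3, random_state=1)
search.fit(X_small, y_small)

# Refit the chosen configuration on the full training set
final_model = RandomForestClassifier(n_estimators=50, random_state=1, **search.best_params_)
final_model.fit(X_train, y_train)
print(search.best_params_)
```

Hyperparameters that interact with dataset size (for example, tree depth or regularization strength) may not transfer perfectly from the sample to the full data, so it is worth confirming the final model on your validation set.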

Man I just want to thank you for the work you do. We’ve learned so much from your articles. Thanks!

Great feedback Rodrigo!

Hi Jason,

Thanks for your articles!

I am still having issues understanding why the random search/grid search is run directly on the entire dataset and not just on the training set. I have checked a previous similar question, but that did not help clarify my doubts. I often work with small datasets, and understanding how to effectively and correctly perform hyperparameter optimization on such small datasets would be of great help.

Thanks

Hi Lucas…I would highly recommend investigation of hyperparameter tuning with Bayesian Optimization.

https://www.analyticsvidhya.com/blog/2021/05/bayesian-optimization-bayes_opt-or-hyperopt/

Is random search the same as randomized grid search?

Hi siera…Yes, they are the same!

Is random search the same as randomized grid search? Thanks for your help.

Hi amanda…Yes, the terms refer to the same concept. You may also want to investigate Bayesian hyperparameter optimization:

https://towardsdatascience.com/a-conceptual-explanation-of-bayesian-model-based-hyperparameter-optimization-for-machine-learning-b8172278050f

Hello Jason,

Thank you for the article. Can we use the same code on tree based models?

I’m working on a large dataset (47,000 documents) for text classification. What is the fastest and least computationally intensive hyperparameter optimization method to optimize the value of C in SVM? I don’t have enough resources.
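One inexpensive option to consider, as a sketch and not necessarily the fastest method, is a short random search over C on a log scale with a linear SVM. LinearSVC is generally much cheaper to fit than a kernel SVC on large, high-dimensional data such as text features. The synthetic dataset, the range for C, and the budget of eight configurations with 3-fold cross-validation are assumptions for illustration.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.model_selection import RandomizedSearchCV
from sklearn.svm import LinearSVC

# Synthetic data stands in for vectorized text documents (an assumption for illustration)
X, y = make_classification(n_samples=1000, n_features=50, random_state=1)

# Sample C from a log-uniform distribution (assumed range)
space = {"C": loguniform(1e-3, 1e2)}

# A small budget: 8 configurations x 3 folds = 24 model fits, plus one refit
search = RandomizedSearchCV(LinearSVC(max_iter=5000), space, n_iter=8,
                            scoring="accuracy", cv=3, random_state=1)
result = search.fit(X, y)
print("Best C: %s (accuracy %.3f)" % (result.best_params_["C"], result.best_score_))
```

If several CPU cores are available, the n_jobs argument of RandomizedSearchCV can run the fits in parallel, and tuning on a sample of the documents first can reduce the cost further.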