The test options you use when evaluating machine learning algorithms can mean the difference between over-learning, a mediocre result, and a usable state-of-the-art result that you can confidently shout from the rooftops (you really do feel like doing that sometimes).

In this post you will discover the standard test options you can use in your algorithm evaluation test harness and how to choose the right options next time.

Randomness

The root of the difficulty in choosing the right test options is randomness. Most (almost all) machine learning algorithms use randomness in some way. The randomness may be explicit in the algorithm or may be in the sample of the data selected to train the algorithm.


This does not mean that the algorithms produce random results. It means that they produce results with some noise or variance. We call this type of limited variance stochastic, and the algorithms that exploit it stochastic algorithms.

Train and Test on Same Data

If you have a dataset, you may want to train the model on the dataset and then report the results of the model on that dataset. That’s how good the model is, right?

The problem with this approach of evaluating algorithms is that you indeed will know the performance of the algorithm on the dataset, but do not have any indication of how the algorithm will perform on data that the model was not trained on (so-called unseen data).

This matters only if you want to use the model to make predictions on unseen data.
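To make the problem concrete, here is a minimal sketch using only the Python standard library. The synthetic one-dimensional data and the 1-nearest-neighbour "model" are assumptions chosen for illustration (they are not from this post); a model that memorises its training data looks perfect when scored on that same data and noticeably worse on data it has not seen.

```python
import random

def make_data(n, rng):
    """Two noisy, overlapping 1-D classes (synthetic, for illustration only)."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        x = rng.gauss(2.0 * label, 1.5)
        data.append((x, label))
    return data

def predict_1nn(train, x):
    """Return the label of the training point closest to x."""
    return min(train, key=lambda row: abs(row[0] - x))[1]

def accuracy(train, test):
    """Fraction of test rows whose label is predicted correctly."""
    correct = sum(predict_1nn(train, x) == y for x, y in test)
    return correct / len(test)

rng = random.Random(1)
train = make_data(200, rng)
unseen = make_data(200, rng)

acc_same = accuracy(train, train)     # scored on the data it memorised
acc_unseen = accuracy(train, unseen)  # scored on data it has never seen
print(f"same data: {acc_same:.2f}, unseen data: {acc_unseen:.2f}")
```

The score on the training data says nothing about the second number, which is the one you care about if you plan to make predictions.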

Split Test

A simple way to use one dataset to both train and estimate the performance of the algorithm on unseen data is to split the dataset. You take the dataset, and split it into a training dataset and a test dataset. For example, you randomly select 66% of the instances for training and use the remaining 34% as a test dataset.

The algorithm is run on the training dataset to create a model, and the model is then assessed on the test dataset. This gives you a performance score, let’s say 87% classification accuracy.
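The split itself is only a shuffle and a cut. The sketch below assumes a hypothetical helper named split_dataset and a stand-in dataset of 100 instances; it is one simple way to do it, not the only one.

```python
import random

def split_dataset(rows, train_fraction, seed):
    """Shuffle a copy of rows and cut it into (train, test)."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

rows = list(range(100))  # stand-in for 100 data instances
train, test = split_dataset(rows, 0.66, seed=7)
print(len(train), len(test))
```

Fixing the seed makes the split repeatable, which helps when you want to compare several algorithms on exactly the same train and test instances.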

Split tests are fast, and they are a great choice when you have a lot of data or when training a model is expensive (in resources or time). A split test on a very large dataset can produce an accurate estimate of the actual performance of the algorithm.

How good is the algorithm on the data? Can we confidently say it can achieve an accuracy of 87%?

A problem is that if we split the dataset again into a different 66%/34% split, we would get a different result from our algorithm. This is called model variance.
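You can see this effect with a small experiment. The sketch below is an illustration under assumptions: the data is synthetic, and the "algorithm" is a simple nearest-class-mean classifier that I made up for the demo. The only thing that changes between runs is the seed used to shuffle the data before the 66%/34% cut.

```python
import random
import statistics

def make_data(n, seed):
    """Two noisy, overlapping 1-D classes (synthetic, for illustration only)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        rows.append((rng.gauss(2.0 * label, 1.5), label))
    return rows

def split_test_accuracy(rows, seed, train_fraction=0.66):
    """Run one split test and return the accuracy on the held-out part."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    train, test = shuffled[:cut], shuffled[cut:]
    # "Training" here is just computing the mean of each class.
    mean0 = statistics.mean(x for x, y in train if y == 0)
    mean1 = statistics.mean(x for x, y in train if y == 1)
    # Predict class 1 when x is closer to the class 1 mean.
    correct = sum((abs(x - mean1) < abs(x - mean0)) == (y == 1) for x, y in test)
    return correct / len(test)

rows = make_data(150, seed=42)
scores = [split_test_accuracy(rows, seed) for seed in range(10)]
print([round(s, 2) for s in scores])
```

Same data, same algorithm, different splits, and the accuracy moves around from run to run.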

Multiple Split Tests

A solution to our problem with the split test getting different results on different splits of the dataset is to repeat the random process many times, which reduces the variance of the estimate. We can collect the results from a fair number of runs (say 10) and take the average.

For example, let’s say we split our dataset 66%/34%, ran our algorithm and got an accuracy and we did this 10 times with 10 different splits. We might have 10 accuracy scores as follows: 87, 87, 88, 89, 88, 86, 88, 87, 88, 87.

The average performance of our model is 87.5, with a standard deviation of about 0.85.
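The summary numbers above can be checked with the standard library. Note that the figure of about 0.85 corresponds to the sample standard deviation (dividing by n - 1); the population version (dividing by n) comes out slightly smaller, at about 0.81.

```python
import statistics

# The 10 accuracy scores from the example above.
scores = [87, 87, 88, 89, 88, 86, 88, 87, 88, 87]

mean_score = statistics.mean(scores)
sample_std = statistics.stdev(scores)       # divides by n - 1
population_std = statistics.pstdev(scores)  # divides by n

print(f"mean={mean_score}, sample std={sample_std:.2f}, population std={population_std:.2f}")
```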


A problem with multiple split tests is that it is possible that some data instances are never included for training or testing, whereas others may be selected multiple times. The effect is that this may skew results and may not give a meaningful idea of the accuracy of the algorithm.
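A quick way to see this unevenness is to count how often each instance lands in the test set. The sketch below assumes 100 instances and 10 random 66%/34% splits; those numbers are picked for illustration only.

```python
import random
from collections import Counter

n_instances, n_splits, test_size = 100, 10, 34
rng = random.Random(0)

test_counts = Counter()
for _ in range(n_splits):
    # Each split draws a fresh random test set of 34 instances.
    test_counts.update(rng.sample(range(n_instances), test_size))

counts = [test_counts[i] for i in range(n_instances)]
never_tested = counts.count(0)
print(f"min={min(counts)}, max={max(counts)}, never tested={never_tested}")
```

With these numbers the counts cannot all be equal (340 test slots do not divide evenly across 100 instances), so some instances always influence the average more than others.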

Cross Validation

A solution to the problem of ensuring each instance is used for training and testing an equal number of times while reducing the variance of an accuracy score is to use cross validation. Specifically, we can use k-fold cross validation, where k is the number of splits to make in the dataset.

For example, let’s choose a value of k=10 (very common). This will split the dataset into 10 parts (10 folds) and the algorithm will be run 10 times. Each time the algorithm is run, it will be trained on 90% of the data and tested on 10%, and each run of the algorithm will change which 10% of the data the algorithm is tested on.

In this example, each data instance will be used as a training instance exactly 9 times and as a test instance 1 time. The accuracy will not be a mean and a standard deviation, but instead will be an exact accuracy score of how many correct predictions were made.
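The bookkeeping behind that claim is easy to verify. The sketch below assumes a hypothetical generator named k_fold_indices and a dataset of 100 instances; it only builds the folds and counts how often each instance is used, without training anything.

```python
import random

def k_fold_indices(n, k, seed):
    """Yield (train_indices, test_indices) for each of the k folds."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

n, k = 100, 10
train_count = [0] * n
test_count = [0] * n
for train, test in k_fold_indices(n, k, seed=1):
    for idx in train:
        train_count[idx] += 1
    for idx in test:
        test_count[idx] += 1

print(set(train_count), set(test_count))
```

Every instance appears in the test fold exactly once, which is why the correct predictions can be pooled across folds into a single accuracy score.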

The k-fold cross validation method is the go-to method for evaluating the performance of an algorithm on a dataset. You want to choose k-values that give you good-sized training and test datasets for your algorithm, not ones that are too disproportionate (too large or too small for training or test). If you have a lot of data, you may have to resort to either sampling the data or reverting to a split test.

Cross validation does give an unbiased estimate of the algorithm’s performance on unseen data, but what if the algorithm itself uses randomness? The algorithm would produce different results for the same training data each time it was trained with a different random number seed (start of the sequence of pseudo-randomness). Cross validation does not account for variance in the algorithm’s predictions.

Another point of concern is that cross validation itself uses randomness to decide how to split the dataset into k folds. Cross validation does not estimate how the algorithm performs with different sets of folds.

This only matters if you want to understand how robust the algorithm is on the dataset.

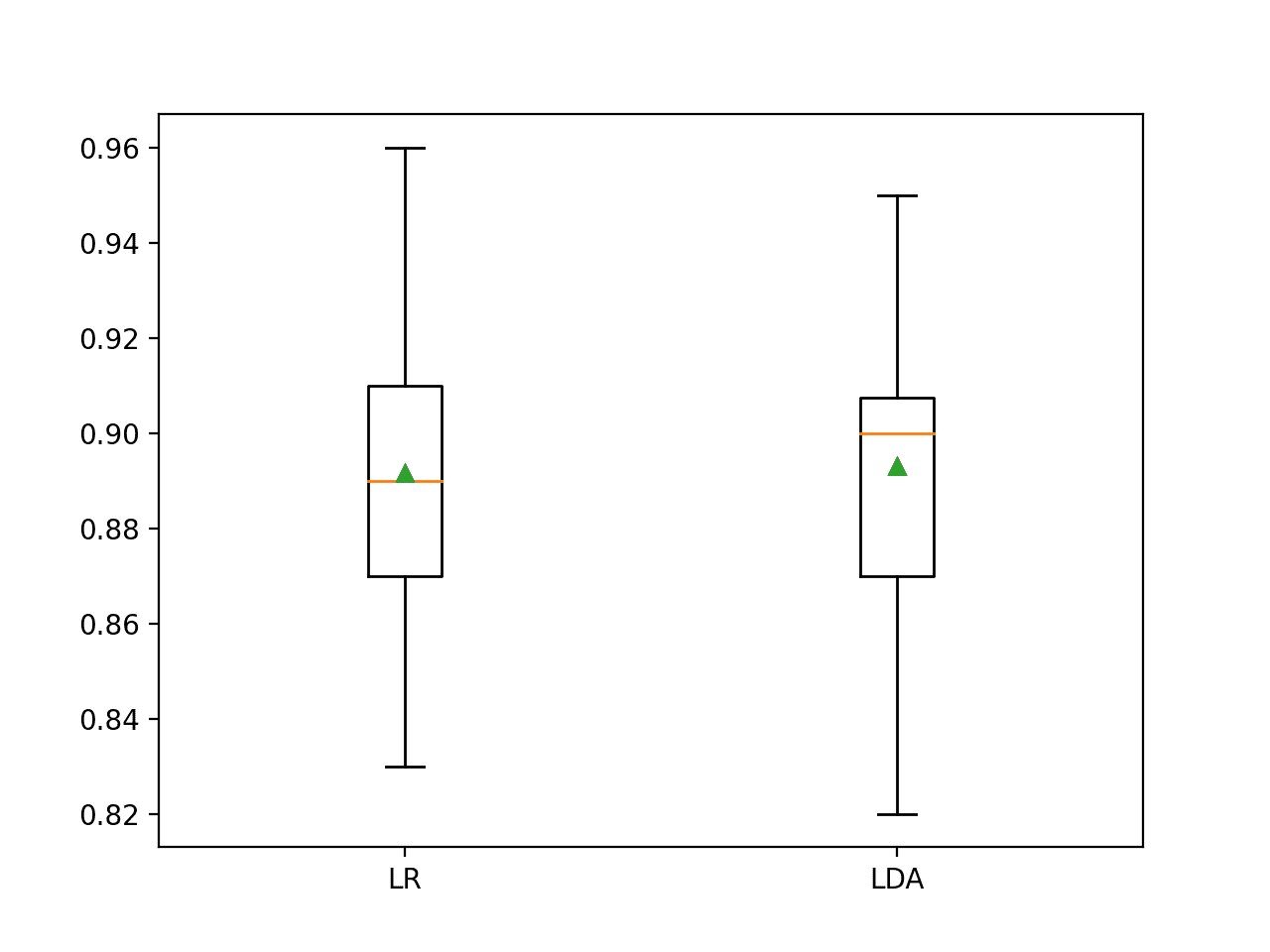

Multiple Cross Validation

A way to account for the variance in the algorithm itself is to run cross validation multiple times and take the mean and the standard deviation of the algorithm accuracy from each run.

This will give you an estimate of the performance of the algorithm on the dataset and an estimate of how robust the performance is (the size of the standard deviation).
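Here is a rough sketch of repeated k-fold cross validation using only the standard library. The synthetic data and the nearest-class-mean classifier are assumptions for illustration. Because this toy classifier is deterministic, the spread between runs comes only from how the folds are drawn; with a stochastic algorithm you would also vary the algorithm's own seed on each run.

```python
import random
import statistics

def make_data(n, seed):
    """Two noisy, overlapping 1-D classes (synthetic, for illustration only)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        rows.append((rng.gauss(2.0 * label, 1.5), label))
    return rows

def fit_and_count_correct(train, test):
    """Fit a nearest-class-mean classifier and count correct test predictions."""
    mean0 = statistics.mean(x for x, y in train if y == 0)
    mean1 = statistics.mean(x for x, y in train if y == 1)
    return sum((abs(x - mean1) < abs(x - mean0)) == (y == 1) for x, y in test)

def cross_val_accuracy(rows, k, seed):
    """One run of k-fold CV: pooled accuracy over all k test folds."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    correct = 0
    for i, test in enumerate(folds):
        train = [row for j, fold in enumerate(folds) if j != i for row in fold]
        correct += fit_and_count_correct(train, test)
    return correct / len(rows)

rows = make_data(200, seed=3)
# Repeat 10-fold CV with a different fold assignment each time.
run_scores = [cross_val_accuracy(rows, k=10, seed=s) for s in range(5)]
mean_acc = statistics.mean(run_scores)
std_acc = statistics.stdev(run_scores)
print(f"accuracy: {mean_acc:.3f} (+/- {std_acc:.3f})")
```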

If you have one mean and standard deviation for algorithm A and another mean and standard deviation for algorithm B and they differ (for example, algorithm A has a higher accuracy), how do you know if the difference is meaningful?

This only matters if you want to compare the results between algorithms.

Statistical Significance

A solution to comparing algorithm performance measures when using multiple runs of k-fold cross validation is to use statistical significance tests (like the Student’s t-test).

The results from multiple runs of k-fold cross validation are a list of numbers. We like to summarize these numbers using the mean and standard deviation. You can think of these numbers as a sample from an underlying population. A statistical significance test answers the question: are two samples drawn from the same population (that is, is there no difference)? If the answer is “yes”, then, even if the means and standard deviations differ, the difference can be said to be not statistically significant.

We can use statistical significance tests to give meaning to the differences (or lack thereof) between algorithm results when using multiple runs (like multiple runs of k-fold cross validation with different random number seeds). This can help when we want to make accurate claims about results (algorithm A was better than algorithm B and the difference was statistically significant).
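As a rough illustration, the sketch below computes a two-sample Student’s t statistic by hand. The scores for algorithm A are the ten numbers from the multiple split test example earlier in the post; the scores for algorithm B are made up for this demo. The critical value 2.101 is the standard two-sided table value for 18 degrees of freedom at a 5% significance level. The test treats the scores as independent samples, which is an assumption that repeated runs on the same dataset only approximately satisfy.

```python
import math
import statistics

def students_t(sample_a, sample_b):
    """Two-sample Student's t statistic with pooled variance."""
    n_a, n_b = len(sample_a), len(sample_b)
    var_a = statistics.variance(sample_a)
    var_b = statistics.variance(sample_b)
    pooled = ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    std_err = math.sqrt(pooled * (1 / n_a + 1 / n_b))
    t = (statistics.mean(sample_a) - statistics.mean(sample_b)) / std_err
    return t, n_a + n_b - 2

scores_a = [87, 87, 88, 89, 88, 86, 88, 87, 88, 87]  # from the example above
scores_b = [85, 86, 86, 87, 85, 86, 84, 86, 87, 85]  # hypothetical algorithm B

t_stat, dof = students_t(scores_a, scores_b)

# Two-sided critical value for dof=18 at alpha=0.05 (standard t-table).
T_CRITICAL_DOF_18 = 2.101
significant = abs(t_stat) > T_CRITICAL_DOF_18
print(f"t={t_stat:.2f}, dof={dof}, significant={significant}")
```

In practice you would usually let a statistics library compute the p-value for you rather than looking up a critical value.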

This is not the end of the story, because there are different statistical significance tests (parametric and nonparametric) and parameters to those tests (such as the significance level that the p-value is compared against). I’m going to draw the line here because if you have followed me this far, you now know enough about selecting test options to produce rigorous (publishable!) results.

Summary

In this post you have discovered the difference between the main test options available to you when designing a test harness to evaluate machine learning algorithms.

Specifically, you learned the utility and problems with:

- Training and testing on the same dataset

- Split tests

- Multiple split tests

- Cross validation

- Multiple cross validation

- Statistical significance

When in doubt, use k-fold cross validation (k=10) and use multiple runs of k-fold cross validation with statistical significance tests when you want to meaningfully compare algorithms on your dataset.

Great post Jason. Very clear and easy to follow.

Thanks Mickael.

Great post.

About the last part, are there situations where performance comparison for different algorithms needs to involve non parametric test?

Could you please tell us some examples for that if they exists?

Thanks for your help.

The distribution of results is often Gaussian (normally distributed).

If you believe this is not the case for some reason, I would advise you to use a non-parametric test. It is a good question and I cannot think of anything offhand. Often I will use a non-parametric test to take all guessing out of the equation.

Thanks;)

Great posts! Thanks!

You suggest running multiple k-fold cross validation w/ statistical significance tests would help draw conclusions of algorithm comparison.

I wonder how to set aside a fold for validating and tuning a model if I use k-fold cross-validation.

Is it ok to run multiple splits of training/validation/test datasets w/ statistical significance tests, even though some data may occur multiple times in the same split of datasets?

Thanks for your help!

Hi Dr Jason,

This is another great post. I have learned a lot from this post.

Keep up.

Regards,

Surajit

Hi Jason, I’m new to ML and Python. I just learnt your algorithm for implementing naive Bayes from scratch in Python. First, thanks for explaining it beautifully.

Also learnt implementation of the same algorithm with scikit learn.

Need some help in understanding the terms precision, recall and f1 score in classification_report.

Jason … your posts are simple and great

Thanks.

thanks

You’re welcome anbu.

Thank you very much for this magnificent post, Jason. Please keep up the good work.

You’re very welcome Mohammed.

I’m trying to understand how k-fold CV could help evaluate a neural network model trained using backpropagation. As I understand it, in k-fold CV, every batch is used to train a single neural network model. Would that mean that, at the end of every batch, the model is more or less overfitted to that particular batch? So in a 10-fold CV, at the end of the last batch, we have a model that is potentially overfitted to the last batch, where it could perform well on the last batch’s 90% training data but suck on the 10% test data?

And would this work on time series data?

Anyway, thank you for sharing, your posts have been very useful to me.

Hi hew.

Yes, 10 different models are trained and evaluated. We report the average score and throw the models away. Cross validation is only used to estimate the performance of the model on unseen data, not to train the model.

If we are happy with the performance, we can then train the model on the entire training dataset and begin to use it.

Time series is difficult to use with cross validation. Normally, I use a train/test split and a sliding window to evaluate models on time series data.
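A sliding-window evaluation of this kind could be sketched as follows. The function name walk_forward_splits and its parameters are hypothetical, chosen for this illustration. The key property is that every training window ends before its test observations begin, so the model is never trained on the future.

```python
def walk_forward_splits(n_obs, window, horizon=1):
    """Yield (train_indices, test_indices) pairs in time order."""
    start = 0
    while start + window + horizon <= n_obs:
        train = list(range(start, start + window))
        test = list(range(start + window, start + window + horizon))
        yield train, test
        start += horizon  # slide the window forward

splits = list(walk_forward_splits(n_obs=10, window=6, horizon=1))
for train, test in splits:
    print(train, "->", test)
```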

Hi Jason,

Thank you for your reply. I’m not sure I understand you completely. To estimate the performance of our model on unseen data? Is the definition of model here the ML method used? What does it mean if we score well on the k-fold exercise? All I would assume is that the dataset we use contains evenly spread common features that can be used for prediction on our dataset. It doesn’t prove its effectiveness out of sample (OOS). Perhaps the dataset that we selected for the exercise is biased in a way that the features we found never work on an OOS dataset.

Great comment.

The goal of predictive modeling is to develop a model from a sample of data of the domain to perform well on data it has not seen before. To make predictions on unseen data.

If this is not the goal, then you are doing stats and developing a descriptive rather than a predictive model and trying to understand the domain.

Knowing how well a model does on unseen data is a hard problem. We can hold back a sample and use that to estimate the skill of the model. A more advanced technique is to do this many times – cross validation.

Cross validation does not prove a model or modeling methodology (data prep + model) will do well, but it gives us confidence that it will do well.

Indeed, we must be very concerned with the quality of our data sample, otherwise the ability to generalize will be compromised.

Does that help?

Hi Jason,

May I ask what the alternative is for fitting the data when we have very few data points (say 15 to 20 only) but the number of predictors is large (e.g. 9 to 10)?

That is a harder type of problem Poonam.

You may be better served with small n statistics (e.g. statistical methods). You just don’t have enough observations for machine learning methods to learn from and generalize.

Hi Jason,

Could you please explain the differences between test set and validation set? If I split the data into test set and training set. Do I need to setup a validation set?

Thank you so much!

Hi Rita,

A training set is used to fit the model, a test set is used to evaluate a model. A single large dataset can be split into multiple train and test sets – e.g. k-fold cross validation.

A validation dataset is held back and used as a final check. It is optional but recommended.

Hi Jason! This is good, simple and nice information!

And I have two questions.

1. In the last part (statistical significance), how do you calculate statistical significance? As a p-value?

2. On the data split, you recommended a validation dataset in the question above. When I split the dataset as 60% (train), 20% (test), 20% (validation), what is the difference between the test and validation sets? I think the algorithm is evaluated using the test data. So what is the role of validation? Is it the same role? I’m confused…

Have a nice day!

Hello Dr,

Thank you for the good work. I want to ask how someone can plot the accuracy of each k-fold model developed in k-fold cross validation.

Thank you for the very detailed explanation, Dr Jason. I see that for any example of k-fold cross validation, the number of splits is always 10. How do we determine the right number of splits for cross validation? Some of my datasets have 700 rows and some have 7000 rows of data. Always splitting the dataset into 10 folds is not the right decision, I think.

10 is reported for most datasets because further groups result in diminishing returns on the bias/variance of the mean skill score.

You can try a sensitivity analysis and see how k values affect the distribution of skill scores. Not a bad idea.

Which is the best technique to minimize Mean absolute error?

It depends on the specific data and algorithm. Try a few.

Hello Jason. I want to learn how to estimate the accuracy of a regression model. I have gone through the post but I don’t seem to cope. Please, I need to learn machine learning from scratch… I mean from the very foundation. Please help with any material. Thanks

You can estimate the skill of a regression model by looking at prediction error.

It is common to estimate prediction error using Root Mean Squared Error (RMSE) or Mean Absolute Error (MAE).
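Computed by hand, the two measures look like this. The four actual and predicted values below are made up for the illustration.

```python
import math

def mae(y_true, y_pred):
    """Mean Absolute Error: the average size of the errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root Mean Squared Error: penalises large errors more heavily."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)

y_true = [3.0, 5.0, 2.0, 7.0]  # hypothetical actual values
y_pred = [2.0, 5.0, 4.0, 8.0]  # hypothetical predictions

mae_score = mae(y_true, y_pred)
rmse_score = rmse(y_true, y_pred)
print(f"MAE={mae_score:.3f}, RMSE={rmse_score:.3f}")
```

Both are in the same units as the value being predicted, which makes them easy to interpret.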

These measures are provided in top platforms like scikit-learn, Weka and caret in R.

I also have posts on how to calculate them manually.

I hope that helps.

Sir, for a two-class defect detection problem, apart from accuracy which other parameters have to be evaluated?

Consider a confusion matrix and look at each quadrant of the matrix:

https://machinelearningmastery.com/confusion-matrix-machine-learning/

Also consider precision and recall.
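For a two-class problem, the four quadrants of the confusion matrix and the scores derived from them can be sketched like this. The labels and predictions are made up for the illustration, and class 1 is assumed to be the "defect" (positive) class.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a two-class problem."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive and actual == positive:
            tp += 1
        elif predicted == positive:
            fp += 1
        elif actual == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

y_true = [1, 0, 1, 1, 0, 0, 1, 0]  # hypothetical actual classes
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]  # hypothetical predictions

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
precision = tp / (tp + fp)  # of the predicted defects, how many were real
recall = tp / (tp + fn)     # of the real defects, how many were found
f1 = 2 * precision * recall / (precision + recall)
print(tp, fp, fn, tn, precision, recall, f1)
```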

Hello Jason

Do we need to use cross validation when building predictive models? Is it important?

Secondly, if I decide to use cross validation, when should I use it? On the training or the test data?

In my scenario, I’ll build some classification algorithms using the training dataset, then I’ll use the test set to evaluate the performance of my models.

Should I use cross validation when I build my models or when I test them?

Yes, CV is perhaps the best method we have for developing unbiased (less biased) estimators.

You can use train/test if you have a lot of data.

Nice article, Jason. I really like the tools you provided here. I’ve got a question: In case you’re on a tight deadline (like in a competition or dealing with a really impatient boss), what method would you use? I’m asking because although CV and multiple CV runs are a great way to gain confidence in your results, they consume lots of time.

Thanks for your time in advance!

I seem to always fall back to repeated CV and significance tests. It’s my background in stochastic optimization that makes me not settle for anything less.

Hi,

This is a great post, many thanks for this.

Have you written, or do you know of, any introduction/tutorial you would recommend on the application of significance tests to cross-validation results? It would be really useful.

Many thanks, again!

Yes, I have a few posts on the topic scheduled.

I recommend reading this paper:

http://web.cs.iastate.edu/~honavar/dietterich98approximate.pdf

Hi,

Under “Cross Validation” section, you say:

In this example, each data instance will be used as a training instance exactly 9 times and as a test instance 1 time. The accuracy will not be a mean and a standard deviation, but instead will be an exact accuracy score of how many correct predictions were made.

This is how the overall accuracy is computed. But in the paper, “Steven L Salzberg. 1997. On comparing classifiers: Pitfalls to avoid and a recommended approach. Data mining and knowledge discovery 1, 3 (1997), 317-328” under “A recommended approach”, overall accuracy is averaged across all k partitions. These k values also give an estimate of the variance of the algorithms. Then to compare algorithms, the binomial test or the McNemar test is suggested.

So which one do you think is valid?

Thanks

There are many statistical hypothesis test methods that can be used, I explain more here:

https://machinelearningmastery.com/statistical-significance-tests-for-comparing-machine-learning-algorithms/

Please, I would like to know the level of efficiency for all the machine learning algorithms. I’m actually on a project and it’s very important for me to know this. Please reply as soon as possible. Thanks

Do you mean computational efficiency?

Perhaps perform a big-O analysis on your chosen algorithm?

Hi Jason,

what is the acceptable standard deviation (limits?) between cross-validated model errors?

Kind Regards,

It is problem specific. Compare your results to a naive baseline model. More details here:

https://machinelearningmastery.com/faq/single-faq/how-to-know-if-a-model-has-good-performance

Hello Jason, I would like to clarify with you the statement about running the algorithm on the training dataset, where the model is created and assessed on the test dataset. How do you build a model on the test dataset with the results from the training dataset? I am not clear about this statement. Could you please advise?

Sorry, I don’t follow.

What are you trying to achieve exactly?

Hi Jason,

I want to make sure with you: the “Multiple Cross Validation” that you mentioned in your post, does it mean “repeated cross-validation” in caret method=”repeatedcv” in the “trainControl” function? Thank you!

Yes.

I trained a learner on the training dataset and got an accuracy of 99%. When I evaluated the same learner with 10-fold CV, my accuracy decreased to 40%. Clearly my model is overfitting. After performing random search CV, I obtained the best estimator, which gives an accuracy of 55%. How do I know that my tuned hyperparameter model has combated the problem of overfitting? In other words, on which dataset should I assess my tuned learner model? Obviously I cannot use the test set as I’m still not confident about my learner.

Maybe not overfitting, maybe just the first case was a poor estimate of expected performance and the cv was more reliable.

You can grid search on a hold-out dataset, or grid search within each cross-validation fold, which is called nested cross-validation.

Hello Mr.Brownlee,

Great post. I would like to ask you a few doubts if you don’t mind:

1. In k-fold cross-validation technique the dataset is then used to both establish and validate the model, which is not ideal right?

2. Even if the model classifies accurately, will it be limited by data specificity?

3. How can you calculate the accuracy to classify a novel and unseen sample?

Thanks in advance

k-fold cv is only used to estimate the performance of the model:

https://machinelearningmastery.com/k-fold-cross-validation/

The model is only as good as the data used to train it.

k-fold cv estimates the performance of the model when used to make predictions on new data not seen during training.

Thank you so much for your great post.

I would like to know what do you think in case of an unsupervised model.

Do we need test data for the evaluation of an unsupervised model where the model is used to generate synthetic data?

How do you evaluate an unsupervised model?

Thank you in advance for your time and reply.

It depends on the type of model, e.g. it is common to evaluate clustering methods like a classification model:

https://machinelearningmastery.com/faq/single-faq/how-do-i-evaluate-a-clustering-algorithm

Dear sir…your articles are always very nice…when I do any search and your article is in option I always prefer to read yours…my query is… I was trying RandomizedSearchCV using cross validation to select the hyper parameters for F1 score…it’s giving me the best model for F1 score for class 1 but F1 score for class2 which is my minority class is coming very poor…I want to select the best hyper parameters for the model for class2 ? What should I do in that case ?

Hi Anjali…The following is a great discussion that may add clarity:

https://stackoverflow.com/questions/62672842/how-to-improve-f1-score-for-classification