In this article, you will learn how machine learning is evolving in 2026 from prediction-focused systems into deeply integrated, action-oriented systems that drive real-world workflows.

Making developers awesome at machine learning

In this article, you will learn how to implement state-managed interruptions in LangGraph so an agent workflow can pause for human approval before resuming execution.

In the previous article, we saw how a language model converts logits into probabilities and samples the next token. But where do these logits come from? In this tutorial, we take a hands-on approach to understand the generation pipeline:

- How the prefill phase processes your entire prompt in a single parallel pass
- How the decode […]

In this article, you will learn how to use Python’s itertools module to simplify common feature engineering tasks with clean, efficient patterns.

In this article, you will learn how to build, deploy, and test a no-code document-processing AI agent with LlamaAgents Builder in LlamaCloud.

In this article, you will learn how vector databases work, from the basic idea of similarity search to the indexing strategies that make large-scale retrieval practical.

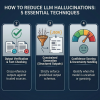

In this article, you will learn why large language model hallucinations happen and how to reduce them using system-level techniques that go beyond prompt engineering.

In this article, you will learn why production AI applications need both a vector database for semantic retrieval and a relational database for structured, transactional workloads.

In this article, you will learn how to design, implement, and evaluate memory systems that make agentic AI applications more reliable, personalized, and effective over time.

In this article, you will learn how temperature and seed values influence failure modes in agentic loops, and how to tune them for greater resilience.