Blending is an ensemble machine learning algorithm.

It is a colloquial name for a variant of stacked generalization (stacking) in which the meta-model is fit on predictions made on a holdout dataset, rather than on out-of-fold predictions made by the base models.

The term blending was used by competitors in the $1M Netflix machine learning competition to describe stacking models that combined many hundreds of predictive models. As such, it remains a popular technique and name for stacking in competitive machine learning circles, such as the Kaggle community.

In this tutorial, you will discover how to develop and evaluate a blending ensemble in Python.

After completing this tutorial, you will know:

- Blending ensembles are a type of stacking where the meta-model is fit using predictions on a holdout validation dataset instead of out-of-fold predictions.

- How to develop a blending ensemble, including functions for training the model and making predictions on new data.

- How to evaluate blending ensembles for classification and regression predictive modeling problems.


Let’s get started.


Tutorial Overview

This tutorial is divided into four parts; they are:

- Blending Ensemble

- Develop a Blending Ensemble

- Blending Ensemble for Classification

- Blending Ensemble for Regression

Blending Ensemble

Blending is an ensemble machine learning technique that uses a machine learning model to learn how to best combine the predictions from multiple contributing ensemble member models.

As such, blending is the same as stacked generalization, known as stacking, broadly conceived. Often, blending and stacking are used interchangeably in the same paper or model description.

Many machine learning practitioners have had success using stacking and related techniques to boost prediction accuracy beyond the level obtained by any of the individual models. In some contexts, stacking is also referred to as blending, and we will use the terms interchangeably here.

— Feature-Weighted Linear Stacking, 2009.

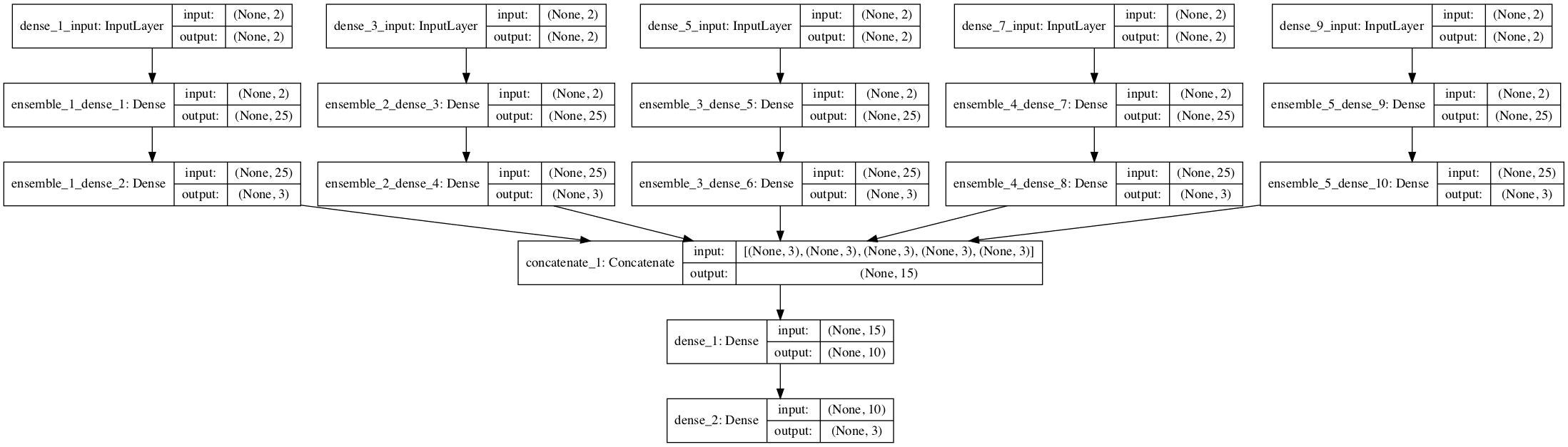

The architecture of a stacking model involves two or more base models, often referred to as level-0 models, and a meta-model that combines the predictions of the base models, referred to as a level-1 model. The meta-model is trained on the predictions made by base models on out-of-sample data.

- Level-0 Models (Base-Models): Models fit on the training data and whose predictions are compiled.

- Level-1 Model (Meta-Model): Model that learns how to best combine the predictions of the base models.

Nevertheless, blending has specific connotations for how to construct a stacking ensemble model.

Blending may suggest developing a stacking ensemble where the base-models are machine learning models of any type, and the meta-model is a linear model that “blends” the predictions of the base-models.

For example, a linear regression model when predicting a numerical value or a logistic regression model when predicting a class label would calculate a weighted sum of the predictions made by base models and would be considered a blending of predictions.

- Blending Ensemble: Use of a linear model, such as linear regression or logistic regression, as the meta-model in a stacking ensemble.
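To make the idea of a weighted sum concrete, here is a minimal sketch using NumPy. The prediction values, weights, and intercept are made up for illustration and do not come from any fitted model; in practice, the linear meta-model would learn the weights from data.

```python
# illustrative sketch: a linear "blend" is a weighted sum of base-model predictions
from numpy import array

# hypothetical predictions from three base models for four examples (one column per model)
base_preds = array([
    [2.0, 2.5, 1.5],
    [0.0, 0.5, 1.0],
    [4.0, 3.0, 5.0],
    [1.0, 1.0, 1.0],
])
# hypothetical weights and intercept that a linear meta-model might learn
weights = array([0.5, 0.25, 0.25])
intercept = 0.0
# blended prediction for each example: weighted sum across the columns
blended = base_preds.dot(weights) + intercept
print(blended)
```

Each blended value is simply a combination of the three base predictions for that row, with the first model weighted most heavily in this made-up case.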

Blending was the term commonly used for stacking ensembles during the Netflix Prize, which concluded in 2009. The competition challenged teams to produce movie recommendation predictions that performed better than Netflix's own algorithm, and a US$1M prize was awarded to the team that achieved a 10 percent performance improvement.

Our RMSE=0.8643 solution is a linear blend of over 100 results. […] Throughout the description of the methods, we highlight the specific predictors that participated in the final blended solution.

— The BellKor 2008 Solution to the Netflix Prize, 2008.

As such, blending is a colloquial term for ensemble learning with a stacking-type model architecture. It is rarely, if ever, used in textbooks or academic papers, other than those related to competitive machine learning.

Most commonly, blending is used to describe the specific application of stacking where the meta-model is trained on the predictions made by base-models on a hold-out validation dataset. In this context, stacking is reserved for a meta-model that is trained on out-of-fold predictions during a cross-validation procedure.

- Blending: Stacking-type ensemble where the meta-model is trained on predictions made on a holdout dataset.

- Stacking: Stacking-type ensemble where the meta-model is trained on out-of-fold predictions made during k-fold cross-validation.
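The difference between the two definitions comes down to where the meta-model's training inputs come from. The sketch below contrasts the two, using a single decision tree as a stand-in base model and a small synthetic dataset; the dataset size and the choice of five folds are assumptions made only to keep the example quick.

```python
# sketch: where the meta-model's training inputs come from in blending vs. stacking
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split, cross_val_predict
from sklearn.tree import DecisionTreeClassifier

# small synthetic dataset (size chosen only to keep the sketch fast)
X, y = make_classification(n_samples=500, n_features=10, random_state=1)

# blending: fit the base model on one part, predict on a separate holdout part
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.33, random_state=1)
model = DecisionTreeClassifier(random_state=1)
model.fit(X_train, y_train)
holdout_preds = model.predict(X_val)

# stacking: every row gets a prediction from a model that did not see it during fitting
oof_preds = cross_val_predict(DecisionTreeClassifier(random_state=1), X, y, cv=5)

# blending yields meta-features for the holdout rows only; stacking yields them for all rows
print(holdout_preds.shape, oof_preds.shape)
```

With blending, the meta-model sees fewer rows but each base model is fit only once; with stacking, the meta-model sees a prediction for every row at the cost of fitting each base model once per fold.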

This distinction is common among the Kaggle competitive machine learning community.

Blending is a word introduced by the Netflix winners. It is very close to stacked generalization, but a bit simpler and less risk of an information leak. […] With blending, instead of creating out-of-fold predictions for the train set, you create a small holdout set of say 10% of the train set. The stacker model then trains on this holdout set only.

— Kaggle Ensemble Guide, MLWave, 2015.

We will use this latter definition of blending.

Next, let’s look at how we can implement blending.


Develop a Blending Ensemble

The scikit-learn library does not natively support blending at the time of writing.

Instead, we can implement it ourselves using scikit-learn models.

First, we need to create a number of base models. These can be any models we like for a regression or classification problem. We can define a function get_models() that returns a list of models where each model is defined as a tuple with a name and the configured classifier or regression object.

For example, for a classification problem, we might use a logistic regression, kNN, decision tree, SVM, and Naive Bayes model.

```python
# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC(probability=True)))
    models.append(('bayes', GaussianNB()))
    return models
```

Next, we need to fit the blending model.

Recall that the base models are fit on a training dataset. The meta-model is fit on the predictions made by each base model on a holdout dataset.

First, we can enumerate the list of models and fit each in turn on the training dataset. Also in this loop, we can use the fit model to make a prediction on the hold out (validation) dataset and store the predictions for later.

```python
...
# fit all models on the training set and predict on hold out set
meta_X = list()
for name, model in models:
    # fit in training set
    model.fit(X_train, y_train)
    # predict on hold out set
    yhat = model.predict(X_val)
    # reshape predictions into a matrix with one column
    yhat = yhat.reshape(len(yhat), 1)
    # store predictions as input for blending
    meta_X.append(yhat)
```

We now have “meta_X” that represents the input data that can be used to train the meta-model. Each column or feature represents the output of one base model.

Each row represents one sample from the holdout dataset. We can use the hstack() function to ensure this dataset is a 2D numpy array as expected by a machine learning model.

```python
...
# create 2d array from predictions, each set is an input feature
meta_X = hstack(meta_X)
```
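To see what hstack() does to the stored predictions, here is a small standalone sketch. The three prediction arrays are made-up class labels standing in for the output of three base models on four holdout rows.

```python
# sketch: stacking single-column predictions side by side with hstack()
from numpy import array, hstack

# hypothetical class-label predictions from three base models on four holdout rows
preds_a = array([0, 1, 1, 0]).reshape(4, 1)
preds_b = array([0, 1, 0, 0]).reshape(4, 1)
preds_c = array([1, 1, 1, 0]).reshape(4, 1)
# one row per holdout example, one column per base model
meta_X = hstack([preds_a, preds_b, preds_c])
print(meta_X.shape)
print(meta_X)
# without the reshape, hstack() joins 1D arrays end to end into a single vector
flat = hstack([preds_a.ravel(), preds_b.ravel(), preds_c.ravel()])
print(flat.shape)
```

This is why each set of predictions is reshaped into a single column before it is stored: the reshape is what lets hstack() produce one feature per base model rather than one long vector.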

We can now train our meta-model. This can be any machine learning model we like, such as logistic regression for classification.

```python
...
# define blending model
blender = LogisticRegression()
# fit on predictions from base models
blender.fit(meta_X, y_val)
```

We can tie all of this together into a function named fit_ensemble() that trains the blending model using a training dataset and holdout validation dataset.

```python
# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for name, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict(X_val)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LogisticRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender
```

The next step is to use the blending ensemble to make predictions on new data.

This is a two-step process. The first step is to use each base model to make a prediction. These predictions are then gathered together and used as input to the blending model to make the final prediction.

We can use the same looping structure as we did when training the model. That is, we can collect the predictions from each base model into a meta-level dataset, stack the predictions together, and call predict() on the blender model with this dataset.

The predict_ensemble() function below implements this. Given the list of fit base models, the fit blender ensemble, and a dataset (such as a test dataset or new data), it will return a set of predictions for the dataset.

```python
# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for name, model in models:
        # predict with base model
        yhat = model.predict(X_test)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)
```

We now have all of the elements required to implement a blending ensemble for classification or regression predictive modeling problems.

Blending Ensemble for Classification

In this section, we will look at using blending for a classification problem.

First, we can use the make_classification() function to create a synthetic binary classification problem with 10,000 examples and 20 input features.

The complete example is listed below.

```python
# test classification dataset
from sklearn.datasets import make_classification
# define dataset
X, y = make_classification(n_samples=10000, n_features=20, n_informative=15, n_redundant=5, random_state=7)
# summarize the dataset
print(X.shape, y.shape)
```

Running the example creates the dataset and summarizes the shape of the input and output components.

```
(10000, 20) (10000,)
```

Next, we need to split the dataset up, first into train and test sets, and then the training set into a subset used to train the base models and a subset used to train the meta-model.

In this case, we will use a 50-50 split for the train and test sets, then use a 67-33 split for train and validation sets.

```python
...
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# split training set into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s, Test: %s' % (X_train.shape, X_val.shape, X_test.shape))
```
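It can help to work out the expected size of each subset before running the split. The sketch below does the arithmetic for the two-stage split, assuming (as I understand its behavior) that train_test_split() rounds the held-out portion up to a whole number of rows and gives the remainder to the training portion.

```python
# sketch: expected row counts for a 50-50 split followed by a 67-33 split
from math import ceil

n_samples = 10000
# assumption: the held-out portion is rounded up to a whole number of rows
n_test = ceil(n_samples * 0.5)
n_train_full = n_samples - n_test
# the validation set is carved out of the remaining training rows
n_val = ceil(n_train_full * 0.33)
n_train = n_train_full - n_val
print('Train: %d, Val: %d, Test: %d' % (n_train, n_val, n_test))
```

Note that only about a third of the full dataset ends up being used to fit the base models, which is the price paid for keeping separate validation and test sets.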

We can then use the get_models() function from the previous section to create the classification models used in the ensemble.

The fit_ensemble() function can then be called to fit the blending ensemble on the train and validation datasets and the predict_ensemble() function can be used to make predictions on the test dataset.

```python
...
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make predictions on test set
yhat = predict_ensemble(models, blender, X_test)
```

Finally, we can evaluate the performance of the blending model by reporting the classification accuracy on the test dataset.

```python
...
# evaluate predictions
score = accuracy_score(y_test, yhat)
# report accuracy as a percentage
print('Blending Accuracy: %.3f' % (score*100))
```

Tying this all together, the complete example of evaluating a blending ensemble on the synthetic binary classification problem is listed below.

```python
# blending ensemble for classification using hard voting
from numpy import hstack
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn.svm import SVC
from sklearn.naive_bayes import GaussianNB

# get the dataset
def get_dataset():
    X, y = make_classification(n_samples=10000, n_features=20, n_informative=15, n_redundant=5, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC()))
    models.append(('bayes', GaussianNB()))
    return models

# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for name, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict(X_val)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LogisticRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender

# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for name, model in models:
        # predict with base model
        yhat = model.predict(X_test)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)

# define dataset
X, y = get_dataset()
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# split training set into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s, Test: %s' % (X_train.shape, X_val.shape, X_test.shape))
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make predictions on test set
yhat = predict_ensemble(models, blender, X_test)
# evaluate predictions
score = accuracy_score(y_test, yhat)
print('Blending Accuracy: %.3f' % (score*100))
```

Running the example first reports the shape of the train, validation, and test datasets, then the accuracy of the ensemble on the test dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

In this case, we can see that the blending ensemble achieved a classification accuracy of about 97.900 percent.

```
Train: (3350, 20), Val: (1650, 20), Test: (5000, 20)
Blending Accuracy: 97.900
```
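The note about varying results suggests repeating the evaluation and averaging the outcome. One way this might be done is sketched below: the same blending procedure is run with several different split seeds and the mean and standard deviation of the accuracy are reported. The evaluate_once() helper, the smaller dataset, and the reduced set of three base models are assumptions introduced here to keep the sketch quick; they are not part of the tutorial's main example.

```python
# sketch: repeat the blending evaluation with different split seeds and average the accuracy
from numpy import hstack, mean, std
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
from sklearn.linear_model import LogisticRegression
from sklearn.tree import DecisionTreeClassifier
from sklearn.naive_bayes import GaussianNB

# smaller dataset than the tutorial, only to keep the sketch quick
X, y = make_classification(n_samples=2000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

# hypothetical helper: run one train/validation/test evaluation for a given seed
def evaluate_once(seed):
    # split into train, validation, and test sets using the given seed
    X_full, X_test, y_full, y_test = train_test_split(X, y, test_size=0.5, random_state=seed)
    X_train, X_val, y_train, y_val = train_test_split(X_full, y_full, test_size=0.33, random_state=seed)
    models = [LogisticRegression(max_iter=1000), DecisionTreeClassifier(random_state=seed), GaussianNB()]
    # fit base models on the training set
    for model in models:
        model.fit(X_train, y_train)
    # fit the blender on predictions made for the holdout set
    meta_val = hstack([m.predict(X_val).reshape(-1, 1) for m in models])
    blender = LogisticRegression()
    blender.fit(meta_val, y_val)
    # evaluate the blender on predictions made for the test set
    meta_test = hstack([m.predict(X_test).reshape(-1, 1) for m in models])
    return accuracy_score(y_test, blender.predict(meta_test))

# collect one score per seed and summarize
scores = [evaluate_once(seed) for seed in range(5)]
print('Mean Accuracy: %.3f (%.3f)' % (mean(scores) * 100, std(scores) * 100))
```

The spread across seeds gives a rough sense of how much of any difference between two models could be due to the particular split rather than the models themselves.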

In the previous example, crisp class label predictions were combined using the blending model. This is a type of hard voting.

An alternative is to have each model predict class probabilities and use the meta-model to blend the probabilities. This is a type of soft voting and can result in better performance in some cases.

First, we must configure the models to return probabilities, such as the SVM model.

```python
# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC(probability=True)))
    models.append(('bayes', GaussianNB()))
    return models
```

Next, we must change the base models to predict probabilities instead of crisp class labels.

This can be achieved by calling the predict_proba() function in the fit_ensemble() function when fitting the base models.

```python
...
# fit all models on the training set and predict on hold out set
meta_X = list()
for name, model in models:
    # fit in training set
    model.fit(X_train, y_train)
    # predict on hold out set
    yhat = model.predict_proba(X_val)
    # store predictions as input for blending
    meta_X.append(yhat)
```

This means that the meta dataset used to train the meta-model will have n columns per classifier, where n is the number of classes in the prediction problem, two in our case.
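The effect on the shape of the meta dataset can be checked with a short sketch. Random numbers stand in for the output of predict_proba() here, so the values themselves are meaningless; only the resulting shape matters. The row count of six is arbitrary.

```python
# sketch: shape of the meta dataset when blending probabilities
from numpy import hstack
from numpy.random import default_rng

rng = default_rng(1)
n_rows, n_models = 6, 5
meta_X = list()
for _ in range(n_models):
    # stand-in for predict_proba(): random probability for class 1, class 0 is the complement
    p1 = rng.random((n_rows, 1))
    yhat = hstack([1.0 - p1, p1])
    # each model contributes two columns, one per class
    meta_X.append(yhat)
# stack all models side by side: 5 models x 2 classes = 10 columns
meta_X = hstack(meta_X)
print(meta_X.shape)
```

Because the two class probabilities for a binary problem sum to one, the pair of columns from each model carries the same information as a single column; keeping both is harmless for a regularized linear meta-model, but it is worth being aware of.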

We also need to change the predictions made by the base models when using the blending model to make predictions on new data.

```python
...
# make predictions with base models
meta_X = list()
for name, model in models:
    # predict with base model
    yhat = model.predict_proba(X_test)
    # store prediction
    meta_X.append(yhat)
```

Tying this together, the complete example of using blending on predicted class probabilities for the synthetic binary classification problem is listed below.

```python
# blending ensemble for classification using soft voting
from numpy import hstack
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn.svm import SVC
from sklearn.naive_bayes import GaussianNB

# get the dataset
def get_dataset():
    X, y = make_classification(n_samples=10000, n_features=20, n_informative=15, n_redundant=5, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC(probability=True)))
    models.append(('bayes', GaussianNB()))
    return models

# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for name, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict_proba(X_val)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LogisticRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender

# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for name, model in models:
        # predict with base model
        yhat = model.predict_proba(X_test)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)

# define dataset
X, y = get_dataset()
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# split training set into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s, Test: %s' % (X_train.shape, X_val.shape, X_test.shape))
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make predictions on test set
yhat = predict_ensemble(models, blender, X_test)
# evaluate predictions
score = accuracy_score(y_test, yhat)
print('Blending Accuracy: %.3f' % (score*100))
```

Running the example first reports the shape of the train, validation, and test datasets, then the accuracy of the ensemble on the test dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

In this case, we can see that blending the class probabilities resulted in a lift in classification accuracy to about 98.240 percent.

```
Train: (3350, 20), Val: (1650, 20), Test: (5000, 20)
Blending Accuracy: 98.240
```

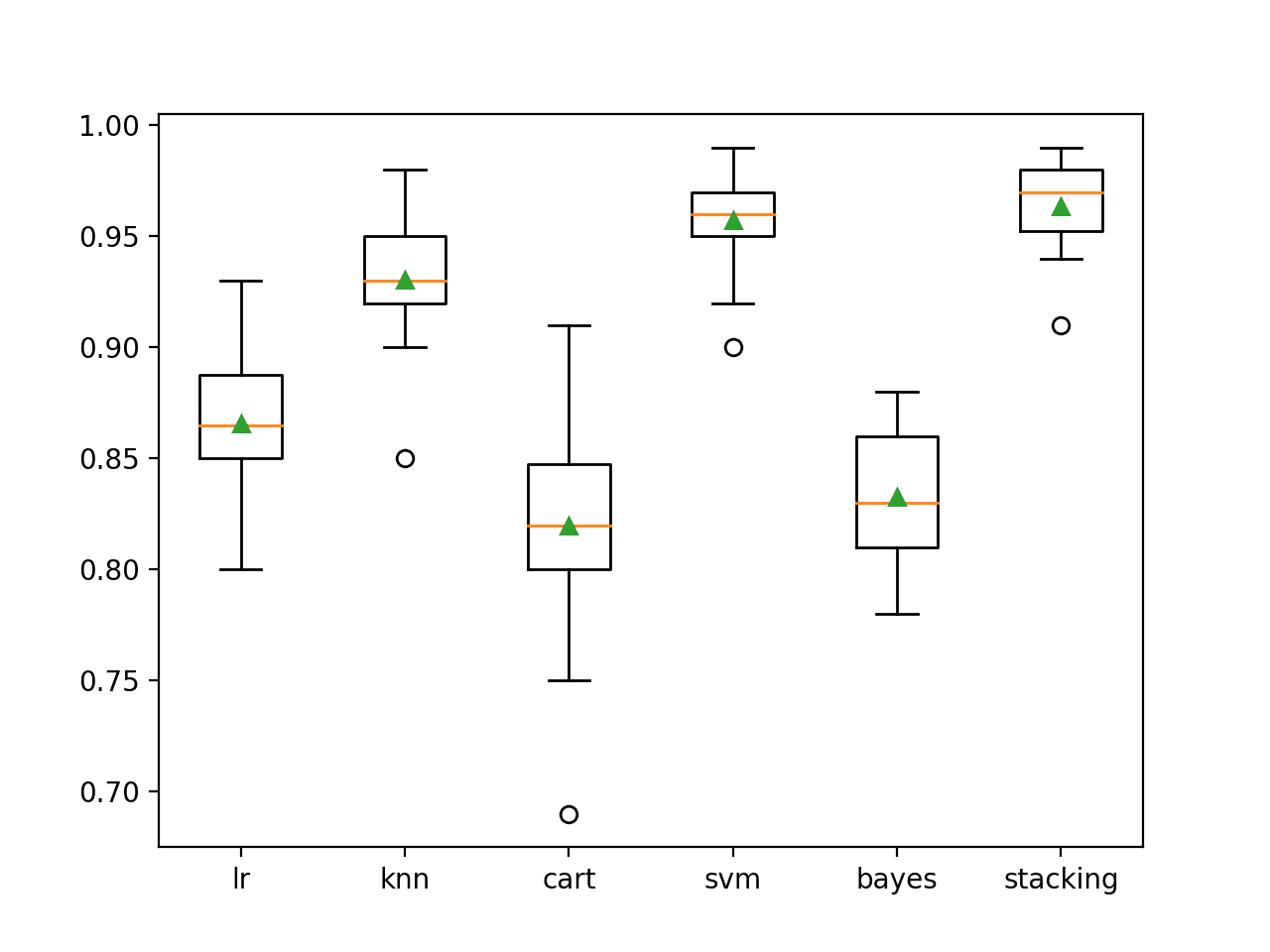

A blending ensemble is only effective if it is able to out-perform any single contributing model.

We can confirm this by evaluating each of the base models in isolation. Each base model can be fit on the entire training dataset (unlike the blending ensemble) and evaluated on the test dataset (just like the blending ensemble).

The example below demonstrates this, evaluating each base model in isolation.

```python
# evaluate base models on the entire training dataset
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn.svm import SVC
from sklearn.naive_bayes import GaussianNB

# get the dataset
def get_dataset():
    X, y = make_classification(n_samples=10000, n_features=20, n_informative=15, n_redundant=5, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC(probability=True)))
    models.append(('bayes', GaussianNB()))
    return models

# define dataset
X, y = get_dataset()
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# summarize data split
print('Train: %s, Test: %s' % (X_train_full.shape, X_test.shape))
# create the base models
models = get_models()
# evaluate standalone model
for name, model in models:
    # fit the model on the training dataset
    model.fit(X_train_full, y_train_full)
    # make a prediction on the test dataset
    yhat = model.predict(X_test)
    # evaluate the predictions
    score = accuracy_score(y_test, yhat)
    # report the score
    print('>%s Accuracy: %.3f' % (name, score*100))
```

Running the example first reports the shape of the full train and test datasets, then the accuracy of each base model on the test dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

In this case, we can see that all models perform worse than the blended ensemble.

Interestingly, we can see that the SVM comes very close, achieving an accuracy of 98.200 percent compared to the 98.240 percent achieved with the blending ensemble.

```
Train: (5000, 20), Test: (5000, 20)
>lr Accuracy: 87.800
>knn Accuracy: 97.380
>cart Accuracy: 88.200
>svm Accuracy: 98.200
>bayes Accuracy: 87.300
```
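To put the gap between the SVM and the blending ensemble in perspective, we can convert the reported accuracies into counts of correctly classified test examples. The figures below are copied from the sample runs in this tutorial; your own runs may give different numbers.

```python
# sketch: convert reported accuracies into counts of correct test predictions
n_test = 5000
blend_acc = 98.240  # reported blending accuracy (percent)
svm_acc = 98.200    # reported SVM accuracy (percent)
# number of correctly classified test examples implied by each accuracy
blend_correct = round(n_test * blend_acc / 100)
svm_correct = round(n_test * svm_acc / 100)
print(blend_correct, svm_correct, blend_correct - svm_correct)
```

A difference of this size amounts to only a couple of test examples, so on its own it is weak evidence that the ensemble is better than the SVM; repeated evaluation would give a more reliable comparison.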

We may choose to use a blending ensemble as our final model.

This involves fitting the ensemble on the entire training dataset and making predictions on new examples. Specifically, the entire training dataset is split into train and validation sets to train the base models and meta-model respectively, then the ensemble can be used to make a prediction.

The complete example of making a prediction on new data with a blending ensemble for classification is listed below.

```python
# example of making a prediction with a blending ensemble for classification
from numpy import hstack
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn.svm import SVC
from sklearn.naive_bayes import GaussianNB

# get the dataset
def get_dataset():
    X, y = make_classification(n_samples=10000, n_features=20, n_informative=15, n_redundant=5, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LogisticRegression()))
    models.append(('knn', KNeighborsClassifier()))
    models.append(('cart', DecisionTreeClassifier()))
    models.append(('svm', SVC(probability=True)))
    models.append(('bayes', GaussianNB()))
    return models

# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for _, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict_proba(X_val)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LogisticRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender

# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for _, model in models:
        # predict with base model
        yhat = model.predict_proba(X_test)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)

# define dataset
X, y = get_dataset()
# split dataset into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s' % (X_train.shape, X_val.shape))
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make a prediction on a new row of data
row = [-0.30335011, 2.68066314, 2.07794281, 1.15253537, -2.0583897, -2.51936601, 0.67513028, -3.20651939, -1.60345385, 3.68820714, 0.05370913, 1.35804433, 0.42011397, 1.4732839, 2.89997622, 1.61119399, 7.72630965, -2.84089477, -1.83977415, 1.34381989]
yhat = predict_ensemble(models, blender, [row])
# summarize prediction (take the single element from the returned array)
print('Predicted Class: %d' % (yhat[0]))
```

Running the example fits the blending ensemble model on the dataset, then uses it to make a prediction on a new row of data, as we might when using the model in an application.

```
Train: (6700, 20), Val: (3300, 20)
Predicted Class: 1
```

Next, let’s explore how we might evaluate a blending ensemble for regression.

Blending Ensemble for Regression

In this section, we will look at using blending for a regression problem.

First, we can use the make_regression() function to create a synthetic regression problem with 10,000 examples and 20 input features.

The complete example is listed below.

```python
# test regression dataset
from sklearn.datasets import make_regression
# define dataset
X, y = make_regression(n_samples=10000, n_features=20, n_informative=10, noise=0.3, random_state=7)
# summarize the dataset
print(X.shape, y.shape)
```

Running the example creates the dataset and summarizes the shape of the input and output components.

```
(10000, 20) (10000,)
```

Next, we can define the list of regression models to use as base models. In this case, we will use linear regression, kNN, decision tree, and SVM models.

```python
# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LinearRegression()))
    models.append(('knn', KNeighborsRegressor()))
    models.append(('cart', DecisionTreeRegressor()))
    models.append(('svm', SVR()))
    return models
```

The fit_ensemble() function used to train the blending ensemble is unchanged from classification, except that the model used for blending must be changed to a regression model.

We will use the linear regression model in this case.

```python
...
# define blending model
blender = LinearRegression()
```

Given that it is a regression problem, we will evaluate the performance of the model using an error metric, in this case, the mean absolute error, or MAE for short.

```python
...
# evaluate predictions
score = mean_absolute_error(y_test, yhat)
print('Blending MAE: %.3f' % score)
```
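For readers unfamiliar with the metric, MAE is the average of the absolute differences between the expected and predicted values. The sketch below computes it by hand on a handful of made-up numbers; the mean_abs_error() helper is a hypothetical name used only for this illustration and is a stand-in for scikit-learn's mean_absolute_error().

```python
# sketch: mean absolute error computed by hand
def mean_abs_error(y_true, y_pred):
    # average of the absolute differences between expected and predicted values
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# made-up values for illustration
y_true = [3.0, -0.5, 2.0, 7.0]
y_pred = [2.5, 0.0, 2.0, 8.0]
print('MAE: %.3f' % mean_abs_error(y_true, y_pred))
```

Because the errors are not squared, MAE is in the same units as the target variable and a smaller value is better.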

Tying this together, the complete example of a blending ensemble for the synthetic regression predictive modeling problem is listed below.

```python
# evaluate blending ensemble for regression
from numpy import hstack
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split
from sklearn.metrics import mean_absolute_error
from sklearn.linear_model import LinearRegression
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor
from sklearn.svm import SVR

# get the dataset
def get_dataset():
    X, y = make_regression(n_samples=10000, n_features=20, n_informative=10, noise=0.3, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LinearRegression()))
    models.append(('knn', KNeighborsRegressor()))
    models.append(('cart', DecisionTreeRegressor()))
    models.append(('svm', SVR()))
    return models

# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for name, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict(X_val)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LinearRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender

# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for name, model in models:
        # predict with base model
        yhat = model.predict(X_test)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)

# define dataset
X, y = get_dataset()
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# split training set into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s, Test: %s' % (X_train.shape, X_val.shape, X_test.shape))
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make predictions on test set
yhat = predict_ensemble(models, blender, X_test)
# evaluate predictions
score = mean_absolute_error(y_test, yhat)
print('Blending MAE: %.3f' % score)
```

Running the example first reports the shape of the train, validation, and test datasets, then the MAE of the ensemble on the test dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

In this case, we can see that the blending ensemble achieved an MAE of about 0.237 on the test dataset.

```
Train: (3350, 20), Val: (1650, 20), Test: (5000, 20)
Blending MAE: 0.237
```

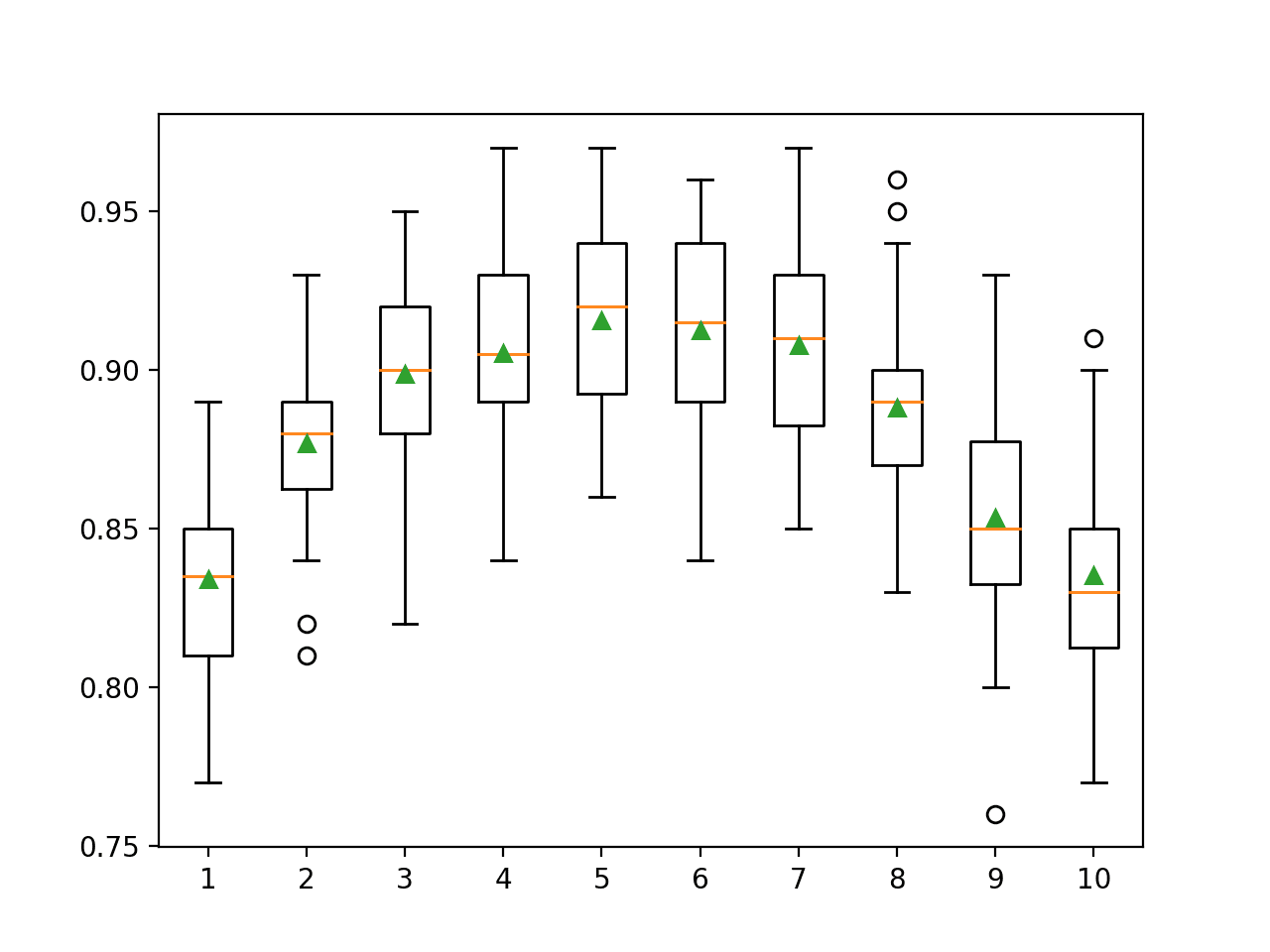

As with classification, the blending ensemble is only useful if it performs better than any of the base models that contribute to the ensemble.

We can check this by evaluating each base model in isolation by first fitting it on the entire training dataset (unlike the blending ensemble) and making predictions on the test dataset (like the blending ensemble).

The example below evaluates each of the base models in isolation on the synthetic regression predictive modeling dataset.

```python
# evaluate base models in isolation on the regression dataset
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split
from sklearn.metrics import mean_absolute_error
from sklearn.linear_model import LinearRegression
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor
from sklearn.svm import SVR

# get the dataset
def get_dataset():
    X, y = make_regression(n_samples=10000, n_features=20, n_informative=10, noise=0.3, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LinearRegression()))
    models.append(('knn', KNeighborsRegressor()))
    models.append(('cart', DecisionTreeRegressor()))
    models.append(('svm', SVR()))
    return models

# define dataset
X, y = get_dataset()
# split dataset into train and test sets
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
# summarize data split
print('Train: %s, Test: %s' % (X_train_full.shape, X_test.shape))
# create the base models
models = get_models()
# evaluate standalone model
for name, model in models:
    # fit the model on the training dataset
    model.fit(X_train_full, y_train_full)
    # make a prediction on the test dataset
    yhat = model.predict(X_test)
    # evaluate the predictions
    score = mean_absolute_error(y_test, yhat)
    # report the score
    print('>%s MAE: %.3f' % (name, score))
```

Running the example first reports the shape of the full train and test datasets, then the MAE of each base model on the test dataset.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and comparing the average outcome.

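In this case, we can see that the linear regression model has performed slightly better than the blending ensemble, achieving an MAE of 0.236 as compared to 0.237 with the ensemble. This is likely because of the way that the synthetic dataset was constructed: make_regression() generates the target as a linear combination of the input features plus Gaussian noise, which a linear regression model can fit almost exactly.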

Nevertheless, in this case, we would choose to use the linear regression model directly on this problem. This highlights the importance of checking the performance of the contributing models before adopting an ensemble model as the final model.

```
Train: (5000, 20), Test: (5000, 20)
>lr MAE: 0.236
>knn MAE: 100.169
>cart MAE: 133.744
>svm MAE: 138.195
```

Again, we may choose to use a blending ensemble as our final model for regression.

This involves splitting the entire dataset into train and validation sets to fit the base models and the meta-model respectively; the ensemble can then be used to make a prediction for a new row of data.

The complete example of making a prediction on new data with a blending ensemble for regression is listed below.

```python
# example of making a prediction with a blending ensemble for regression
from numpy import hstack
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LinearRegression
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor
from sklearn.svm import SVR

# get the dataset
def get_dataset():
    X, y = make_regression(n_samples=10000, n_features=20, n_informative=10, noise=0.3, random_state=7)
    return X, y

# get a list of base models
def get_models():
    models = list()
    models.append(('lr', LinearRegression()))
    models.append(('knn', KNeighborsRegressor()))
    models.append(('cart', DecisionTreeRegressor()))
    models.append(('svm', SVR()))
    return models

# fit the blending ensemble
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    # fit all models on the training set and predict on hold out set
    meta_X = list()
    for _, model in models:
        # fit in training set
        model.fit(X_train, y_train)
        # predict on hold out set
        yhat = model.predict(X_val)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store predictions as input for blending
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # define blending model
    blender = LinearRegression()
    # fit on predictions from base models
    blender.fit(meta_X, y_val)
    return blender

# make a prediction with the blending ensemble
def predict_ensemble(models, blender, X_test):
    # make predictions with base models
    meta_X = list()
    for _, model in models:
        # predict with base model
        yhat = model.predict(X_test)
        # reshape predictions into a matrix with one column
        yhat = yhat.reshape(len(yhat), 1)
        # store prediction
        meta_X.append(yhat)
    # create 2d array from predictions, each set is an input feature
    meta_X = hstack(meta_X)
    # predict
    return blender.predict(meta_X)

# define dataset
X, y = get_dataset()
# split dataset into train and validation sets
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.33, random_state=1)
# summarize data split
print('Train: %s, Val: %s' % (X_train.shape, X_val.shape))
# create the base models
models = get_models()
# train the blending ensemble
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
# make a prediction on a new row of data
row = [-0.24038754, 0.55423865, -0.48979221, 1.56074459, -1.16007611,
       1.10049103, 1.18385406, -1.57344162, 0.97862519, -0.03166643,
       1.77099821, 1.98645499, 0.86780193, 2.01534177, 2.51509494,
       -1.04609004, -0.19428148, -0.05967386, -2.67168985, 1.07182911]
yhat = predict_ensemble(models, blender, [row])
# summarize prediction
print('Predicted: %.3f' % (yhat[0]))
```

Running the example fits the blending ensemble on the dataset and then uses it to make a prediction on a new row of data, as we might when using the model in an application.

```
Train: (6700, 20), Val: (3300, 20)
Predicted: 359.986
```

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Related Tutorials

- Stacking Ensemble Machine Learning With Python

- How to Implement Stacked Generalization (Stacking) From Scratch With Python

Papers

- Feature-Weighted Linear Stacking, 2009.

- The BellKor 2008 Solution to the Netflix Prize, 2008.

- Kaggle Ensemble Guide, MLWave, 2015.

Articles

Summary

In this tutorial, you discovered how to develop and evaluate a blending ensemble in Python.

Specifically, you learned:

- Blending ensembles are a type of stacking where the meta-model is fit using predictions on a holdout validation dataset instead of out-of-fold predictions.

- How to develop a blending ensemble, including functions for training the model and making predictions on new data.

- How to evaluate blending ensembles for classification and regression predictive modeling problems.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Btw, you mention that scikit-learn doesn’t natively support “blending”, which is not strictly true. You can pass any cross-validation strategy to either the StackingRegressor or the StackingClassifier, so you can pass a ShuffleSplit CV and get “blending” behavior from the stacking classifier or regressor.

…

Thanks.

Since base models are fit on a training dataset and you need good predictions to go further in your flow, I think you must have hyperparameters in get_models()… Am I right?

Sorry, I don’t understand. Can you please elaborate?

Sorry. Elaborating: your get_models() builds very simple models, with no parameters at all. Wouldn’t it be necessary to fill in the best hyperparameters found for each model?

I did it and finally got the best prediction for all my work.

You can if you like.

Often highly tuned models in ensembles are fragile and don’t result in the best overall performance. Not always, but often.

Hi Jason, thanks for the post.

Is there a way to use cross-validation (as implemented in the scikit-learn package) with blending (as implemented in your post)?

For me it is not obvious, because the training folds have to be split into sub-training and validation sets (for training the level 0 models and fitting the blender, respectively).

Thanks.

Perhaps wrap the entire process with a for loop for enumerating the folds manually.
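The suggestion above could look something like the following minimal sketch. It enumerates the folds of a KFold manually and, inside each fold, splits the training rows again so that one part fits the level 0 models and the other fits the blender. The fold count, the smaller dataset, and the reduced list of base models are assumptions chosen to keep the run short; they are not part of the tutorial.

```python
# sketch: k-fold cross-validation wrapped around a blending ensemble
from numpy import hstack, mean, std
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import KFold, train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor

# get a fresh list of base models (created anew for every fold)
def get_models():
    return [
        ('lr', LinearRegression()),
        ('knn', KNeighborsRegressor()),
        ('cart', DecisionTreeRegressor(random_state=1)),
    ]

# fit base models on the training set and the blender on holdout predictions
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    meta_X = list()
    for _, model in models:
        model.fit(X_train, y_train)
        meta_X.append(model.predict(X_val).reshape(-1, 1))
    blender = LinearRegression()
    blender.fit(hstack(meta_X), y_val)
    return blender

# predict with the base models, then combine with the blender
def predict_ensemble(models, blender, X_test):
    meta_X = [model.predict(X_test).reshape(-1, 1) for _, model in models]
    return blender.predict(hstack(meta_X))

# smaller synthetic dataset than the tutorial, to keep the sketch quick
X, y = make_regression(n_samples=1000, n_features=20, n_informative=10, noise=0.3, random_state=7)
kfold = KFold(n_splits=5, shuffle=True, random_state=1)
scores = list()
for train_ix, test_ix in kfold.split(X):
    # outer split: rows for building the ensemble vs. rows for evaluating it
    X_train_full, X_test = X[train_ix], X[test_ix]
    y_train_full, y_test = y[train_ix], y[test_ix]
    # inner split: level 0 training rows vs. holdout rows for the blender
    X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
    models = get_models()
    blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
    yhat = predict_ensemble(models, blender, X_test)
    scores.append(mean_absolute_error(y_test, yhat))
print('Blending MAE: %.3f (%.3f)' % (mean(scores), std(scores)))
```

Reporting the mean and standard deviation across folds gives a less noisy estimate than a single train/test split, at the cost of fitting the whole ensemble once per fold.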

OK this is what I have done :

Nice work. Sorry, I don’t have the capacity to review/debug code.

Hi Jason,

This was just to share. I have tested the code and I think it works. The main point is to split the training fold into one part for the level 0 models and another for the blender (the level 1 model).

Thanks.

Nicely Explained. Very helpful for a newbie like me. All your explanations are very clear and easy to comprehend. Thanks

Thanks!

Dr. Brownlee,

Thank you for this comprehensive and clearly explained post! Your posts are always helpful for us. I just wanted to know one thing: is it worthwhile to use nested cross-validation with a blended ensemble classifier?

You’re welcome.

I don’t think it’s worth tuning the models in a blending ensemble, it can make the ensemble fragile and have worse performance.

We are training the meta-model on the predictions made by the base models on the validation set, and these base models are trained on X_train and y_train. But when we compare the meta-model's performance with the base models, why train the base models again on X_train_full and y_train_full? Isn’t this unfair, since the meta-model is trained on the results of base models that were in turn trained on less data, while the standalone base models are trained on the entire training data? I hope I was able to express myself. Thank you.

The model is still evaluated on the test set, e.g. data not seen during training.

Okay. Thank you.

You’re welcome.

Thank you very much for this interesting article.

In case someone wants to know when Stacking is preferable compared to Blending or vice versa, I leave here a paper in which we tested different scenarios:

“Empirical Study: Visual Analytics for Comparing Stacking to Blending Ensemble Learning”

Accessible via IEEE Xplore: https://doi.org/10.1109/CSCS52396.2021.00008

Thanks for sharing.

Nice article. I am stuck at the make_classification method, where you are passing random samples. Can you please show how to pass any dataset having X and y values?

Yes, you can use a dataset, the code for using the model is the same.
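To illustrate the reply above, here is a minimal sketch that swaps the synthetic data for a real dataset while leaving the blending logic unchanged. It assumes the breast cancer dataset bundled with scikit-learn (load_breast_cancer) purely as an example; the choice of base models and the use of predicted probabilities as meta-features are also illustrative assumptions.

```python
# sketch: blending ensemble for classification on a real dataset
from numpy import hstack
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# get the dataset: only this function changes when using your own data
def get_dataset():
    X, y = load_breast_cancer(return_X_y=True)
    return X, y

# get a list of base models
def get_models():
    return [
        ('knn', KNeighborsClassifier()),
        ('cart', DecisionTreeClassifier(random_state=1)),
        ('bayes', GaussianNB()),
    ]

# fit base models on the training set and the blender on holdout probabilities
def fit_ensemble(models, X_train, X_val, y_train, y_val):
    meta_X = list()
    for _, model in models:
        model.fit(X_train, y_train)
        meta_X.append(model.predict_proba(X_val))
    blender = LogisticRegression()
    blender.fit(hstack(meta_X), y_val)
    return blender

# predict with the base models, then combine with the blender
def predict_ensemble(models, blender, X_test):
    meta_X = [model.predict_proba(X_test) for _, model in models]
    return blender.predict(hstack(meta_X))

# load data and create train, validation, and test sets
X, y = get_dataset()
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)
# fit and evaluate the blending ensemble
models = get_models()
blender = fit_ensemble(models, X_train, X_val, y_train, y_val)
yhat = predict_ensemble(models, blender, X_test)
acc = accuracy_score(y_test, yhat)
print('Blending Accuracy: %.3f' % (acc * 100))
```

To use your own data, get_dataset() could instead load a CSV file and return the input columns as X and the target column as y.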

Sir thanks for the amazing work, I had been learning many things from you. But sir currently I am working on a classification problem and finding that xgboost outperforming other state-of-the-art models. I would like to ask you few questions:

1. Sir is it okay to use xgboost in this technique

2. What according to you could be the best combination of models along with xgboost for this blending technique?

3. And sir how to decide which model should we choose as meta classifier and base classifier?

XGBoost is known to work very well in Kaggle competitions. I am not surprised you see the same, and of course, you can use it in this technique. For your other questions, there is no rule of thumb. You had better experiment with your data to see what works best.

After blending 3 or 4 regression models (I do it using PyCaret), how do I get the final model (equations), such as the coefficients and intercepts, in the final output?

Hi gyanesh…the following may be of interest to you:

https://stackoverflow.com/questions/68809169/how-to-obtain-the-coefficient-estimates-in-stackingclassifier
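For the hand-written blending code in this tutorial (not PyCaret, whose API is not covered here), the final equation can be read directly from a linear meta-model. The sketch below is a minimal example under that assumption: it fits a LinearRegression blender on holdout predictions and prints its coef_ and intercept_ attributes, with one coefficient per base model. The smaller dataset and the reduced list of base models are choices made only for speed.

```python
# sketch: inspect the weights a linear meta-model assigns to each base model
from numpy import column_stack
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor

# smaller synthetic dataset than the tutorial, to keep the sketch quick
X, y = make_regression(n_samples=2000, n_features=20, n_informative=10, noise=0.3, random_state=7)
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.33, random_state=1)

# base models, fit on the training set only
models = [
    ('lr', LinearRegression()),
    ('knn', KNeighborsRegressor()),
    ('cart', DecisionTreeRegressor(random_state=1)),
]
for _, model in models:
    model.fit(X_train, y_train)

# one column of holdout predictions per base model
meta_X = column_stack([model.predict(X_val) for _, model in models])

# fit the linear blender on the holdout predictions
blender = LinearRegression()
blender.fit(meta_X, y_val)

# the blended prediction is: intercept + sum(weight_i * prediction_i)
for (name, _), weight in zip(models, blender.coef_):
    print('%s weight: %.3f' % (name, weight))
print('intercept: %.3f' % blender.intercept_)
```

These coefficients describe how the base model predictions are combined; they are not coefficients on the original input features.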

Hi Jason, thank you for the always useful and interesting post.

You mentioned in your comment that tuning the hyperparameters of the base learner can introduce some fragility. What about tuning the meta-model?

Hi Max…You are very welcome! Tuning the hyperparameters of the meta-model in a stacked ensemble model can indeed introduce some complexity and potential fragility, but it can also offer significant benefits if done carefully. Here’s a breakdown of the considerations when tuning the meta-model:

### Potential Fragility in Tuning the Meta-Model

1. **Overfitting**:

– The meta-model is trained on the predictions of the base learners, which are themselves outputs of potentially complex models. If the meta-model is too complex (e.g., using a deep neural network with many layers or a model with little or no regularization), it may overfit to the predictions of the base learners rather than generalizing well to new data.

– This overfitting can be more pronounced if the base learners themselves are overfit or if the meta-model’s hyperparameters are too aggressively tuned.

2. **Dependency on Base Learner Performance**:

– The performance of the meta-model is closely tied to the diversity and quality of the base learners. If the base learners are not well-tuned or diverse, even a well-tuned meta-model may not improve overall performance significantly.

– Tuning the meta-model without ensuring the base learners are well-optimized can lead to poor results.

3. **Complexity and Computational Cost**:

– Hyperparameter tuning of the meta-model adds an additional layer of complexity to the overall model training process. This can increase computational costs and make the model more difficult to interpret and debug.

– The more complex the meta-model, the more challenging it becomes to tune it effectively, especially when dealing with a large number of base learners.

4. **Interaction Effects**:

– The meta-model’s performance depends on how well it can combine the outputs of the base learners. Tuning its hyperparameters might lead to unexpected interaction effects where changes in the meta-model affect some base learners more than others, leading to unpredictable results.

– Ensuring that the meta-model is tuned to appropriately balance the contributions of the base learners can be tricky.

### Benefits of Tuning the Meta-Model

1. **Performance Gains**:

– A well-tuned meta-model can significantly improve the overall performance of the ensemble. It can learn to give more weight to stronger base learners and adjust for the weaknesses of others, leading to a more robust and accurate final prediction.

– Tuning the hyperparameters of the meta-model can help it to better capture the relationships between the outputs of the base learners, leading to improved generalization.

2. **Customization**:

– Tuning allows you to customize the meta-model to better handle the specific characteristics of your dataset. For example, you can adjust regularization parameters to prevent overfitting or tweak learning rates to ensure smooth convergence.

– This flexibility can be especially useful in complex datasets where simple stacking might not suffice.

3. **Optimization of the Ensemble Strategy**:

– By tuning the meta-model, you can optimize the strategy it uses to combine base learners. For example, you might want to prioritize certain base learners in specific regions of the feature space, which a well-tuned meta-model can achieve.

### Best Practices for Tuning the Meta-Model

– **Cross-Validation**: Use cross-validation to ensure that the tuning process does not lead to overfitting. The meta-model should be validated on unseen data to ensure its generalization ability.

– **Start Simple**: Begin with a simple meta-model, like a linear regression or a basic decision tree, before moving on to more complex models like gradient boosting or neural networks. This approach allows you to understand how the meta-model interacts with the base learners.

– **Sequential Tuning**: Consider tuning the base learners first to ensure they are performing optimally before focusing on the meta-model. This stepwise approach can prevent the tuning process from becoming too complex.

– **Regularization**: Incorporate regularization techniques (like L1, L2 regularization) in the meta-model to prevent overfitting, especially when dealing with a large number of base learners.

– **Hyperparameter Search**: Use grid search, random search, or more advanced methods like Bayesian optimization to find the best hyperparameters for the meta-model. Ensure that the search is extensive enough to explore the potential of the meta-model without overfitting.
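As one possible illustration of the practices listed above, the sketch below starts from a simple regularized linear meta-model (Ridge) and tunes only its alpha with a cross-validated grid search on the holdout predictions. The alpha grid, the fold count, the smaller dataset, and the reduced list of base models are all assumptions for illustration, not recommendations from the tutorial.

```python
# sketch: tune a regularized meta-model on the holdout predictions
from numpy import column_stack
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.tree import DecisionTreeRegressor

# smaller synthetic dataset than the tutorial, to keep the sketch quick
X, y = make_regression(n_samples=2000, n_features=20, n_informative=10, noise=0.3, random_state=7)
X_train_full, X_test, y_train_full, y_test = train_test_split(X, y, test_size=0.5, random_state=1)
X_train, X_val, y_train, y_val = train_test_split(X_train_full, y_train_full, test_size=0.33, random_state=1)

# untuned base models, fit on the training set only
models = [
    ('lr', LinearRegression()),
    ('knn', KNeighborsRegressor()),
    ('cart', DecisionTreeRegressor(random_state=1)),
]
for _, model in models:
    model.fit(X_train, y_train)

# meta-features: one column of predictions per base model
meta_X_val = column_stack([model.predict(X_val) for _, model in models])
meta_X_test = column_stack([model.predict(X_test) for _, model in models])

# cross-validated search over the regularization strength of the meta-model
grid = GridSearchCV(
    Ridge(),
    param_grid={'alpha': [0.01, 0.1, 1.0, 10.0]},
    scoring='neg_mean_absolute_error',
    cv=5,
)
grid.fit(meta_X_val, y_val)
blender = grid.best_estimator_
print('Best alpha: %s' % grid.best_params_['alpha'])

# evaluate the tuned blender on data not used for fitting or tuning
yhat = blender.predict(meta_X_test)
tuned_mae = mean_absolute_error(y_test, yhat)
print('Tuned blending MAE: %.3f' % tuned_mae)
```

Keeping the test set out of both the base model fitting and the grid search means the final MAE is measured on rows that played no part in tuning.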

In conclusion, while tuning the meta-model can introduce fragility, it can also lead to significant performance improvements if done carefully. The key is to balance the complexity of the meta-model with the quality and diversity of the base learners, ensuring that the overall ensemble remains robust and generalizes well to new data.