Hessian matrices belong to a class of mathematical structures that involve second order derivatives. They are often used in machine learning and data science algorithms for optimizing a function of interest.

In this tutorial, you will discover Hessian matrices, their corresponding discriminants, and their significance. All concepts are illustrated via an example.

After completing this tutorial, you will know:

- What Hessian matrices are

- How discriminants are computed via Hessian matrices

- What information is contained in the discriminant

Let’s get started.

Tutorial Overview

This tutorial is divided into three parts; they are:

- Definition of a function’s Hessian matrix and the corresponding discriminant

- Example of computing the Hessian matrix, and the discriminant

- What the Hessian and discriminant tell us about the function of interest

Prerequisites

For this tutorial, we assume that you already know:

- Derivative of functions

- Function of several variables, partial derivatives and gradient vectors

- Higher order derivatives

What Is A Hessian Matrix?

The Hessian matrix is a matrix of second order partial derivatives. Suppose we have a function f of n variables, i.e.,

$$f: R^n \rightarrow R$$

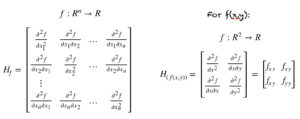

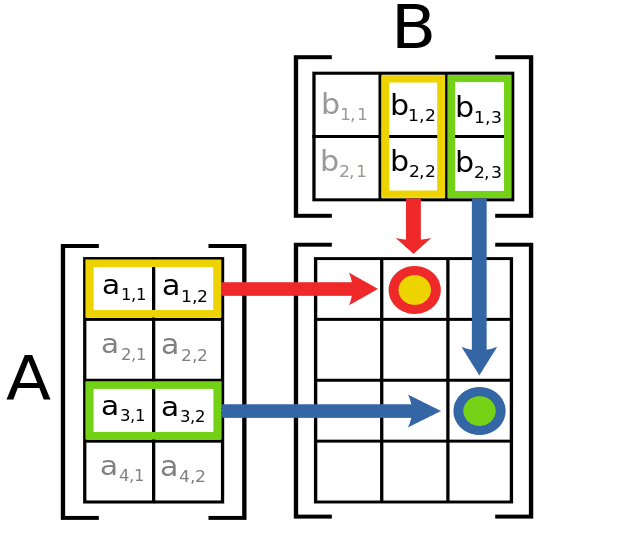

The Hessian of f is given by the following matrix on the left. The Hessian for a function of two variables is also shown below on the right.
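Written out in symbols, the standard definition looks as follows. The name H_f and the variable names x_1, ..., x_n are notation assumed here for illustration; the two variable case uses the subscript shorthand f_xy for a second order partial derivative.

$$H_f = \begin{bmatrix} \frac{\partial^2 f}{\partial x_1^2} & \frac{\partial^2 f}{\partial x_1 \partial x_2} & \cdots & \frac{\partial^2 f}{\partial x_1 \partial x_n} \\ \frac{\partial^2 f}{\partial x_2 \partial x_1} & \frac{\partial^2 f}{\partial x_2^2} & \cdots & \frac{\partial^2 f}{\partial x_2 \partial x_n} \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial^2 f}{\partial x_n \partial x_1} & \frac{\partial^2 f}{\partial x_n \partial x_2} & \cdots & \frac{\partial^2 f}{\partial x_n^2} \end{bmatrix}$$

$$H_f = \begin{bmatrix} f_{xx} & f_{xy} \\ f_{yx} & f_{yy} \end{bmatrix} \quad \text{for } f(x, y)$$

When the second order partial derivatives are continuous, f_xy = f_yx, so the Hessian is a symmetric matrix.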

We already know from our tutorial on gradient vectors that the gradient is a vector of first order partial derivatives. Similarly, the Hessian is a matrix of second order partial derivatives formed from all pairs of variables in the domain of f.
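To make the "all pairs of variables" idea concrete, here is a minimal sketch in plain Python that approximates a Hessian numerically with central differences. The helper `numerical_hessian`, the step size `h`, and the test function `example_f` are all hypothetical choices made for illustration; they are not part of the tutorial.

```python
def numerical_hessian(f, point, h=1e-4):
    """Approximate the Hessian of f at `point` using central differences.

    f takes a list of n floats and returns a float.
    The result is an n x n list of lists.
    """
    n = len(point)
    hessian = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            def shifted(di, dj):
                # Evaluate f with variable i moved by di*h and variable j by dj*h.
                p = list(point)
                p[i] += di * h
                p[j] += dj * h
                return f(p)

            # One entry per pair of variables (i, j).
            hessian[i][j] = (
                shifted(1, 1) - shifted(1, -1) - shifted(-1, 1) + shifted(-1, -1)
            ) / (4 * h * h)
    return hessian


def example_f(p):
    # Hypothetical test function f(x, y) = x^2 * y + y^3,
    # whose exact Hessian is [[2y, 2x], [2x, 6y]].
    x, y = p
    return x ** 2 * y + y ** 3


approx = numerical_hessian(example_f, [1.0, 2.0])
for row in approx:
    print([round(v, 4) for v in row])
```

At the point (1, 2) the exact Hessian of this test function is [[4, 2], [2, 12]], so the printed approximation should be close to those values. Finite differences are only an approximation; for real work an automatic differentiation library is usually a better choice.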

What Is The Discriminant?

The determinant of the Hessian is also called the discriminant of f. For a two variable function f(x, y), it is given by:

$$D(x, y) = f_{xx}(x, y) f_{yy}(x, y) - \left(f_{xy}(x, y)\right)^2$$

Examples of Hessian Matrices And Discriminants

Suppose we have the following function:

g(x, y) = x^3 + 2y^2 + 3xy^2

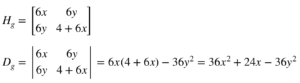

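Then the Hessian H_g and the discriminant D_g are given by:

$$H_g = \begin{bmatrix} g_{xx} & g_{xy} \\ g_{yx} & g_{yy} \end{bmatrix} = \begin{bmatrix} 6x & 6y \\ 6y & 4 + 6x \end{bmatrix}$$

$$D_g(x, y) = 6x(4 + 6x) - (6y)^2 = 36x^2 + 24x - 36y^2$$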

Let’s evaluate the discriminant at different points:

D_g(0, 0) = 0

D_g(1, 0) = 36 + 24 = 60

D_g(0, 1) = -36

D_g(-1, 0) = 12
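These values can be checked with a few lines of Python. The sketch below hard-codes the second order partial derivatives g_xx = 6x, g_xy = 6y and g_yy = 4 + 6x, obtained by differentiating g by hand. The function names `hessian_g` and `discriminant` are hypothetical helpers, not taken from any library.

```python
def hessian_g(x, y):
    """Analytic Hessian of g(x, y) = x^3 + 2y^2 + 3xy^2."""
    g_xx = 6 * x
    g_xy = 6 * y          # equal to g_yx for this polynomial
    g_yy = 4 + 6 * x
    return [[g_xx, g_xy], [g_xy, g_yy]]


def discriminant(hessian):
    """Determinant of a 2 x 2 Hessian."""
    (a, b), (c, d) = hessian
    return a * d - b * c


points = [(0, 0), (1, 0), (0, 1), (-1, 0)]
values = {p: discriminant(hessian_g(*p)) for p in points}
for p, d in values.items():
    print(f"D_g{p} = {d}")
# D_g(0, 0) = 0
# D_g(1, 0) = 60
# D_g(0, 1) = -36
# D_g(-1, 0) = 12
```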

What Do The Hessian And Discriminant Signify?

The Hessian and the corresponding discriminant are used to determine the local extreme points of a function. Evaluating them helps in the understanding of a function of several variables. Here are some important rules for a critical point (a, b) of f, i.e., a point where both first order partial derivatives of f are zero, with discriminant D(a, b):

- The function f has a local minimum if f_xx(a, b) > 0 and the discriminant D(a,b) > 0

- The function f has a local maximum if f_xx(a, b) < 0 and the discriminant D(a,b) > 0

- The function f has a saddle point if D(a, b) < 0

- We cannot draw any conclusions if D(a, b) = 0 and need more tests
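The four rules above translate directly into a small function. This is a minimal sketch that assumes the caller has already confirmed that the point is a critical point (zero gradient) and passes in the second order partial derivatives evaluated there. The name `classify_critical_point` and the four test functions in the comments are hypothetical examples.

```python
def classify_critical_point(f_xx, f_yy, f_xy):
    """Second derivative test for a two variable function.

    Assumes the point is a critical point; the arguments are the
    second order partial derivatives evaluated at that point.
    """
    d = f_xx * f_yy - f_xy ** 2  # the discriminant
    if d > 0 and f_xx > 0:
        return "local minimum"
    if d > 0 and f_xx < 0:
        return "local maximum"
    if d < 0:
        return "saddle point"
    return "inconclusive"  # d == 0, more tests are needed


# Each function below has a critical point at the origin.
print(classify_critical_point(2, 2, 0))    # f = x^2 + y^2   -> local minimum
print(classify_critical_point(-2, -2, 0))  # f = -x^2 - y^2  -> local maximum
print(classify_critical_point(2, -2, 0))   # f = x^2 - y^2   -> saddle point
print(classify_critical_point(0, 0, 0))    # f = x^4 + y^4   -> inconclusive
```

The last case shows why D = 0 needs more tests: x^4 + y^4 does have a minimum at the origin, but the second derivatives alone cannot reveal it.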

Example: g(x, y)

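For the function g(x,y), the gradient is (3x^2 + 3y^2, 4y + 6xy), which is zero only at (0, 0), so (0, 0) is the only critical point:

- We cannot draw any conclusions for the point (0, 0), as D_g(0, 0) = 0

- g_xx(1, 0) = 6 > 0 and D_g(1, 0) = 60 > 0, so g curves upward in every direction around (1, 0). As the gradient there is (3, 0) and not zero, (1, 0) is not a local minimum

- D_g(0, 1) < 0, so g curves upward in some directions and downward in others around (0, 1). As the gradient there is (3, 4) and not zero, (0, 1) is not a saddle point

- g_xx(-1, 0) = -6 < 0 and D_g(-1, 0) = 12 > 0, so g curves downward in every direction around (-1, 0). As the gradient there is (3, 0) and not zero, (-1, 0) is not a local maximum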
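The second derivative test only identifies a minimum, maximum or saddle point at a critical point, so it is worth checking the gradient of g at each of the four points. The sketch below uses the first order partial derivatives g_x = 3x^2 + 3y^2 and g_y = 4y + 6xy, derived by hand from g; `gradient_g` is a hypothetical helper name.

```python
def gradient_g(x, y):
    """Analytic gradient of g(x, y) = x^3 + 2y^2 + 3xy^2."""
    return (3 * x ** 2 + 3 * y ** 2, 4 * y + 6 * x * y)


points = [(0, 0), (1, 0), (0, 1), (-1, 0)]
critical = {}
for p in points:
    grad = gradient_g(*p)
    critical[p] = grad == (0, 0)
    label = "critical point" if critical[p] else "not a critical point"
    print(f"{p}: gradient = {grad}, {label}")
# (0, 0): gradient = (0, 0), critical point
# (1, 0): gradient = (3, 0), not a critical point
# (0, 1): gradient = (3, 4), not a critical point
# (-1, 0): gradient = (3, 0), not a critical point
```

Only (0, 0) has a zero gradient. At the other three points the signs of g_xx and D_g describe the local curvature of g, but the points themselves are not extrema or saddle points.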

The figure below shows a graph of the function g(x, y) and its corresponding contours.

Why Is The Hessian Matrix Important In Machine Learning?

The Hessian matrix plays an important role in many machine learning algorithms that involve optimizing a given function. While it may be expensive to compute, it holds key information about the curvature of the function being optimized. It can help identify the saddle points and the local extrema of a function. Second order optimization methods, which are sometimes used to train neural networks and deep learning architectures, rely on the Hessian or an approximation of it.
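One concrete way the Hessian enters an optimization algorithm is Newton's method, which this tutorial does not cover and which is shown here only as an illustration. The sketch below assumes a hypothetical quadratic function f(x, y) = x^2 + xy + y^2 - 3x, with its gradient and Hessian worked out by hand. A single Newton step moves from the starting point to the point where the gradient is zero.

```python
def gradient(x, y):
    # Gradient of the hypothetical f(x, y) = x^2 + xy + y^2 - 3x.
    return (2 * x + y - 3, x + 2 * y)


def hessian(x, y):
    # The Hessian of a quadratic is constant.
    return ((2.0, 1.0), (1.0, 2.0))


def newton_step(x, y):
    """One Newton update: (x, y) - H^{-1} * gradient."""
    gx, gy = gradient(x, y)
    (a, b), (c, d) = hessian(x, y)
    det = a * d - b * c  # the discriminant; must be non-zero here
    # Apply the closed-form inverse of a 2 x 2 matrix to the gradient.
    step_x = (d * gx - b * gy) / det
    step_y = (-c * gx + a * gy) / det
    return x - step_x, y - step_y


x, y = newton_step(0.0, 0.0)
print((x, y), gradient(x, y))
```

For this function the step lands on (2, -1), where the gradient is zero, and since f_xx = 2 > 0 and D = 3 > 0 the rules above identify it as a local minimum. For functions that are not quadratic, several steps are generally needed and convergence is not guaranteed.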

Extensions

This section lists some ideas for extending the tutorial that you may wish to explore.

- Optimization

- Eigenvalues of the Hessian matrix

- Inverse of Hessian matrix and neural network training

If you explore any of these extensions, I’d love to know. Post your findings in the comments below.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Tutorials

- Derivatives

- Gradient descent for machine learning

- What is gradient in machine learning

- Partial derivatives and gradient vectors

- Higher order derivatives

- How to choose an optimization algorithm

Resources

- Additional resources on Calculus Books for Machine Learning

Books

- Thomas’ Calculus, 14th edition, 2017. (based on the original works of George B. Thomas, revised by Joel Hass, Christopher Heil, Maurice Weir)

- Calculus, 3rd Edition, 2017. (Gilbert Strang)

- Calculus, 8th edition, 2015. (James Stewart)

Summary

In this tutorial, you discovered what Hessian matrices are. Specifically, you learned:

- Hessian matrix

- Discriminant of a function

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Hi dear Mehreen Saeed

I have a suggestion: could you combine the courses with applications in Python, and also show some real problems solved with machine learning? We need to use the technology to solve real problems in real time. On the other hand, CV topics could also be covered, like custom object tracking and detection. Thank you so much!

Sure, such articles will follow. For this article we focus on learning the math before implementation in Python. It is always best to understand how things work and then move on to the implementation part 🙂

Thank you for bothering to write this article.

🙂

Small comment: your rule about the discriminant being either positive or negative only applies to 2 x 2 matrices. For higher dimensional matrices, the general rule is that the Hessian must be either positive definite or negative definite to determine extrema. Of course, for symmetric 2 x 2 matrices, the determinant being positive guarantees that the two eigenvalues have the same sign; so while what you say works for 2 x 2 matrices, I do not believe it works in general. Nevertheless, you say “Evaluating them helps in the understanding of a function of several variables.” so this suggests that what you are saying generalizes.

Now, I could be wrong, but if so, please let me know.

A step-by-step solution using S-Math studio may be more illustrative.

100% Eigenvalues and the Hessian.

Nice post! Thank you!

Normally, in machine learning problems, we do not know the explicit function y = f(x1, …, xn). And when we solve the problem… it does not give us this explicit output-input dependency…

So, with the exception of testing a known function, by producing a discrete dataset and later using genetic algorithms or similar methods to try to identify the maxima and minima of the dataset, which we can check directly via the Hessian components and discriminant analysis… I do not see how Hessian matrices are used in machine learning algorithms?

regards

Thank you, Mehreen. Your explanations are excellent! Python code examples would be really helpful!

Hi Mehreen,

Can you tell us how to find the Hessian matrix for multiple functions of multiple variables?

Like fx(theta1, theta2)=0 and fy(theta1, theta2)=0. I know how to get Jacobian matrix for fx and fy but how to get hessian for both??

The Hessian matrix is defined for scalar-valued functions. If you treat fx and fy as two separate functions, then each one has its own Hessian. If you consider [fx, fy] as a vector (hence a vector-valued function), the Jacobian is all you can get.

What I don’t understand is: why can’t you figure out if a point is a maximum, minimum, or saddle point by looking at only fxx and fyy? Why do you need to compute D = fxx * fyy - (fxy)^2?

It seems straightforward enough to see that if both fxx and fyy are negative then you have a maximum, if both are positive then you have a minimum, and if one is positive and one is negative then you have a saddle point. Can you think of an example where both fxx and fyy have the same sign and yet the surface has a saddle?

Maybe I am not understanding the geometric interpretation of fxy if there is one… Is there?

Thank you!

Best article I’ve found yet on this topic

Hi Ken… If you already have a surface, you can state that there appears to be a maximum or minimum via visual inspection. The equation proves it mathematically. This is important because code cannot “visually inspect” a curve as we humans can. So if other decisions need to be made based upon finding a maximum and/or minimum, there must be a mathematical basis.

Yes, it is used in influence functions, as there is an IHVP (inverse Hessian-vector product) term.

The question the researchers had was whether we should compute the Hessian (which is computationally costly) or whether we can get this product without building the whole Hessian.