Matrices that contain mostly zero values are called sparse, distinct from matrices where most of the values are non-zero, called dense.

Large sparse matrices are common in general and especially in applied machine learning, such as in data that contains counts, data encodings that map categories to counts, and even in whole subfields of machine learning such as natural language processing.

It is computationally expensive to represent and work with sparse matrices as though they are dense, and much improvement in performance can be achieved by using representations and operations that specifically handle the matrix sparsity.

In this tutorial, you will discover sparse matrices, the issues they present, and how to work with them directly in Python.

After completing this tutorial, you will know:

- That sparse matrices contain mostly zero values and are distinct from dense matrices.

- The myriad of areas where you are likely to encounter sparse matrices in data, data preparation, and sub-fields of machine learning.

- That there are many efficient ways to store and work with sparse matrices and SciPy provides implementations that you can use directly.

Kick-start your project with my new book Linear Algebra for Machine Learning, including step-by-step tutorials and the Python source code files for all examples.

Let’s get started.

A Gentle Introduction to Sparse Matrices for Machine Learning

Photo by CAJC: in the Rockies, some rights reserved.

Tutorial Overview

This tutorial is divided into 5 parts; they are:

- Sparse Matrix

- Problems with Sparsity

- Sparse Matrices in Machine Learning

- Working with Sparse Matrices

- Sparse Matrices in Python

Need help with Linear Algebra for Machine Learning?

Take my free 7-day email crash course now (with sample code).

Click to sign-up and also get a free PDF Ebook version of the course.

Sparse Matrix

A sparse matrix is a matrix that is comprised of mostly zero values.

Sparse matrices are distinct from matrices with mostly non-zero values, which are referred to as dense matrices.

A matrix is sparse if many of its coefficients are zero. The interest in sparsity arises because its exploitation can lead to enormous computational savings and because many large matrix problems that occur in practice are sparse.

— Page 1, Direct Methods for Sparse Matrices, Second Edition, 2017.

The sparsity of a matrix can be quantified with a score, which is the number of zero values in the matrix divided by the total number of elements in the matrix.

|

1 |

sparsity = count zero elements / total elements |

Below is an example of a small 3 x 6 sparse matrix.

|

1 2 3 |

1, 0, 0, 1, 0, 0 A = (0, 0, 2, 0, 0, 1) 0, 0, 0, 2, 0, 0 |

The example has 13 zero values of the 18 elements in the matrix, giving this matrix a sparsity score of 0.722 or about 72%.

Problems with Sparsity

Sparse matrices can cause problems with regards to space and time complexity.

Space Complexity

Very large matrices require a lot of memory, and some very large matrices that we wish to work with are sparse.

In practice, most large matrices are sparse — almost all entries are zeros.

— Page 465, Introduction to Linear Algebra, Fifth Edition, 2016.

An example of a very large matrix that is too large to be stored in memory is a link matrix that shows the links from one website to another.

An example of a smaller sparse matrix might be a word or term occurrence matrix for words in one book against all known words in English.

In both cases, the matrix contained is sparse with many more zero values than data values. The problem with representing these sparse matrices as dense matrices is that memory is required and must be allocated for each 32-bit or even 64-bit zero value in the matrix.

This is clearly a waste of memory resources as those zero values do not contain any information.

Time Complexity

Assuming a very large sparse matrix can be fit into memory, we will want to perform operations on this matrix.

Simply, if the matrix contains mostly zero-values, i.e. no data, then performing operations across this matrix may take a long time where the bulk of the computation performed will involve adding or multiplying zero values together.

It is wasteful to use general methods of linear algebra on such problems, because most of the O(N^3) arithmetic operations devoted to solving the set of equations or inverting the matrix involve zero operands.

— Page 75, Numerical Recipes: The Art of Scientific Computing, Third Edition, 2007.

This is a problem of increased time complexity of matrix operations that increases with the size of the matrix.

This problem is compounded when we consider that even trivial machine learning methods may require many operations on each row, column, or even across the entire matrix, resulting in vastly longer execution times.

Sparse Matrices in Machine Learning

Sparse matrices turn up a lot in applied machine learning.

In this section, we will look at some common examples to motivate you to be aware of the issues of sparsity.

Data

Sparse matrices come up in some specific types of data, most notably observations that record the occurrence or count of an activity.

Three examples include:

- Whether or not a user has watched a movie in a movie catalog.

- Whether or not a user has purchased a product in a product catalog.

- Count of the number of listens of a song in a song catalog.

Data Preparation

Sparse matrices come up in encoding schemes used in the preparation of data.

Three common examples include:

- One-hot encoding, used to represent categorical data as sparse binary vectors.

- Count encoding, used to represent the frequency of words in a vocabulary for a document

- TF-IDF encoding, used to represent normalized word frequency scores in a vocabulary.

Areas of Study

Some areas of study within machine learning must develop specialized methods to address sparsity directly as the input data is almost always sparse.

Three examples include:

- Natural language processing for working with documents of text.

- Recommender systems for working with product usage within a catalog.

- Computer vision when working with images that contain lots of black pixels.

If there are 100,000 words in the language model, then the feature vector has length 100,000, but for a short email message almost all the features will have count zero.

— Page 866, Artificial Intelligence: A Modern Approach, Third Edition, 2009.

Working with Sparse Matrices

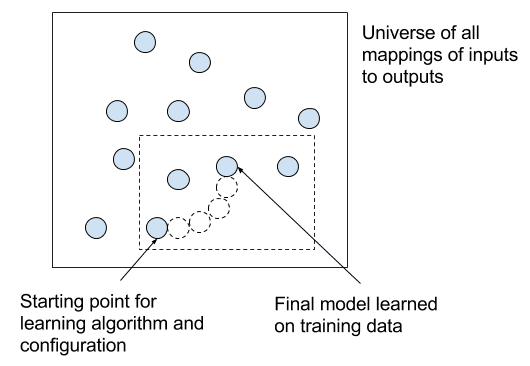

The solution to representing and working with sparse matrices is to use an alternate data structure to represent the sparse data.

The zero values can be ignored and only the data or non-zero values in the sparse matrix need to be stored or acted upon.

There are multiple data structures that can be used to efficiently construct a sparse matrix; three common examples are listed below.

- Dictionary of Keys. A dictionary is used where a row and column index is mapped to a value.

- List of Lists. Each row of the matrix is stored as a list, with each sublist containing the column index and the value.

- Coordinate List. A list of tuples is stored with each tuple containing the row index, column index, and the value.

There are also data structures that are more suitable for performing efficient operations; two commonly used examples are listed below.

- Compressed Sparse Row. The sparse matrix is represented using three one-dimensional arrays for the non-zero values, the extents of the rows, and the column indexes.

- Compressed Sparse Column. The same as the Compressed Sparse Row method except the column indices are compressed and read first before the row indices.

The Compressed Sparse Row, also called CSR for short, is often used to represent sparse matrices in machine learning given the efficient access and matrix multiplication that it supports.

Sparse Matrices in Python

SciPy provides tools for creating sparse matrices using multiple data structures, as well as tools for converting a dense matrix to a sparse matrix.

Many linear algebra NumPy and SciPy functions that operate on NumPy arrays can transparently operate on SciPy sparse arrays. Further, machine learning libraries that use NumPy data structures can also operate transparently on SciPy sparse arrays, such as scikit-learn for general machine learning and Keras for deep learning.

A dense matrix stored in a NumPy array can be converted into a sparse matrix using the CSR representation by calling the csr_matrix() function.

In the example below, we define a 3 x 6 sparse matrix as a dense array, convert it to a CSR sparse representation, and then convert it back to a dense array by calling the todense() function.

|

1 2 3 4 5 6 7 8 9 10 11 12 |

# dense to sparse from numpy import array from scipy.sparse import csr_matrix # create dense matrix A = array([[1, 0, 0, 1, 0, 0], [0, 0, 2, 0, 0, 1], [0, 0, 0, 2, 0, 0]]) print(A) # convert to sparse matrix (CSR method) S = csr_matrix(A) print(S) # reconstruct dense matrix B = S.todense() print(B) |

Running the example first prints the defined dense array, followed by the CSR representation, and then the reconstructed dense matrix.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

[[1 0 0 1 0 0] [0 0 2 0 0 1] [0 0 0 2 0 0]] (0, 0) 1 (0, 3) 1 (1, 2) 2 (1, 5) 1 (2, 3) 2 [[1 0 0 1 0 0] [0 0 2 0 0 1] [0 0 0 2 0 0]] |

NumPy does not provide a function to calculate the sparsity of a matrix.

Nevertheless, we can calculate it easily by first finding the density of the matrix and subtracting it from one. The number of non-zero elements in a NumPy array can be given by the count_nonzero() function and the total number of elements in the array can be given by the size property of the array. Array sparsity can therefore be calculated as

|

1 |

sparsity = 1.0 - count_nonzero(A) / A.size |

The example below demonstrates how to calculate the sparsity of an array.

|

1 2 3 4 5 6 7 8 9 |

# calculate sparsity from numpy import array from numpy import count_nonzero # create dense matrix A = array([[1, 0, 0, 1, 0, 0], [0, 0, 2, 0, 0, 1], [0, 0, 0, 2, 0, 0]]) print(A) # calculate sparsity sparsity = 1.0 - count_nonzero(A) / A.size print(sparsity) |

Running the example first prints the defined sparse matrix followed by the sparsity of the matrix.

|

1 2 3 4 5 |

[[1 0 0 1 0 0] [0 0 2 0 0 1] [0 0 0 2 0 0]] 0.7222222222222222 |

Extensions

This section lists some ideas for extending the tutorial that you may wish to explore.

- Develop your own examples for converting a dense array to sparse and calculating sparsity.

- Develop an example for the each sparse matrix representation method supported by SciPy.

- Select one sparsity representation method and implement it yourself from scratch.

If you explore any of these extensions, I’d love to know.

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Books

- Introduction to Linear Algebra, Fifth Edition, 2016.

- Section 2.7 Sparse Linear Systems, Numerical Recipes: The Art of Scientific Computing, Third Edition, 2007.

- Artificial Intelligence: A Modern Approach, Third Edition, 2009.

- Direct Methods for Sparse Matrices, Second Edition, 2017.

API

- Sparse matrices (scipy.sparse) API

- scipy.sparse.csr_matrix() API

- numpy.count_nonzero() API

- numpy.ndarray.size API

Articles

Summary

In this tutorial, you discovered sparse matrices, the issues they present, and how to work with them directly in Python.

Specifically, you learned:

- That sparse matrices contain mostly zero values and are distinct from dense matrices.

- The myriad of areas where you are likely to encounter sparse matrices in data, data preparation, and sub-fields of machine learning.

- That there are many efficient ways to store and work with sparse matrices and SciPy provides implementations that you can use directly.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

nice piece of work

Thanks!

Thanks, as an adult learner refreshing on algebra for computer science, this was the best explanation!

Thanks, I’m happy to hear that!

Sir Jason Thank you very much. Your blogs are always awesome. I have learnt a lot from your invaluable posts. Is their a way one can generate data-sets say 1000000 random numbers between 0-16 and divide it into mini_batches of certain size for training a neural network?

Why?

Nice job for fundamentals of computations

However please more on know how and its computations to drive logic results or applications.

Like what for example?

Performance on CSR/CSC is severly limited in performance terms by overhead of generating indices

Blocked CSR/CSC is a much better approach especially for SIMD machines and allows loop unrolling and vectorisation to vastly improve performance compared to vanilla CSC/CSR

Great tip, thanks David.

Hi Jason and all and its great efforts ur given this fields.

Example u inquire about is give directions beyond system computing and how to drive logic in context of matrices comparisons, multiplication and other operands that can straight forward generate results.

I hope we keep this discussion and believe Jason is great candidate for here exploitation.

Regards

Sorry, I don’t follow. Are you able to give more context?

Jason,

Thanks for this excellent post. The natural next question is : can we use the efficient rep of sparse matrix in ML libs like scikit learn.

Rgds

I believe so.

Very helpful, once again! As I only have experience with dense numpy arrays, It is not clear to me how to feed a Machine learning API like Keras sparse arrays. If that is something you could cover, that would be great.

I thought sparse arrays could be provided directly to Keras. Have you tried?

No I have not tried. Sparse arrays are completely new to me. I was trying to build one Numpy consolidated array from 100k of sparse arrays and I got an error. That I how I ended up on your blog (seem’s like I end up here often 🙂 ).

It appear Numpy does not support sparse arrays; so I am having to rewrite my code to support the sparse array or expand them and load into a Numpy array.

It is not clear to me how sparse arrays are handled. Does the API expand them and then process or is something else done?

Expanding them would be a bad move (may as well use dense to begin with).

I expect that a given API would handle the sparse structure explicitly (an if statement for sparse/dense).

Thank you for the great article! Was wondering, since there are drawbacks of sparsity, how do we go about the TF-IDF model?

I give an example of TF-IDF here:

https://machinelearningmastery.com/prepare-text-data-machine-learning-scikit-learn/

sir i am having “memory error” when i try to convert my CSR sparse matrix into numpy array. Can you please tell me how to get rid of “memory errors” when converting sparse into numpy?

scores = cross_val_score(knn, x_train.toarray(), polarity_train, cv=10, scoring=’accuracy’)

my x_train is CSR sparse matrix of shape : (700, 5904)

“x_train.to array()” is giving me memory error. Please tell me how to achieve it ?

Perhaps you have a bug, post on stackoverflow?

Perhaps try using a smaller dataset?

Perhaps try using a machine with more RAM, like an EC2 instance?

Hi,

Let’s assume we have both numerical and text data in our dataframe. We convert the text data to sparse matrix using tfIdf vector. Can I just add this sparse matrix to my numerical variables and use it as feature variable data?

Thanks.

Yes, you can combine the vectors or use a model that supports two inputs one for text and one for numerical data (e.g. like a neural net).

Hola Jason,

nice review of sparsity !

in addition to be operative with API, that you do very well and also trough specific codes for particular examples, I think we would appreciate, from time to time, to get some more intuitive (or deeper ideas that are behind the concept) in order to be develop future concepts more easily, as a recommendation for your blog, if you allow me it…

So, talking about sparsity, one “intuitive” (and powerful) idea could be expressed using the terms “vector space” (even of infinity space dimensions). Where a vector can be expressed as the combination of the base of the space vector. For example in 3D Euclidean, a vector has 3 coordinates (one for each 3d space dimensions).

So Sparsity, in a infinity space, where we would need (ideally) a lot of coordinates (infinity), to define completely this vector vs that space base, must be summarize with only few coordinates (the main ones), because this vector does not depend of the rest of the base (or nearly)…

Another way of see it is, that sparsity it is a characteristic of reducing dimensions after projecting the vector over an infinite (or large space base), because only depend of few of them, that clearly simplifying the complexity …

Even we can force (via Regularize weights, PCA analysis, search for significative covariances (or independent variables), ,etc. etc.) in order to neglect the rest of coordinates with small values (simplifying, reducing, smoothing,..are intuitive words to describe this issue ), in comparison to the others (relative) main coordinates. Just in order to retain only the main coordinates…

This is a picture (that help in case) to introduce and the reason to practice with sparsity tools …I hope could help anyone else in the same situation …

Great suggestion, thanks JG.

Say, a dataset uses a specific nonzero value(say 100, and this value does not appear in original data) in stead of zero. Is there a way around to use the libraries in stead of replacing them with 0 before library calls?

Yes, use numpy array manipulation to see all values of 100 to 0.

Hi Jason…

Is it possible to simulate a TF-IDF matrix?

What do you mean simulate?

You calculate the matrix from data.

I would like to do a simulation study and I need simulate a TFIDF matrix, using some distribution for its components…for instance…diritchlet distribution…

Interesting. I’m not sure I have a tutorial that will help you with this.

Hi Jasno,

is it possible to use big sparse matrix for input to Nerural network type of Autoencoder?

Can you give some example?

Perhaps. I don’t have an example, sorry.

Try it and see. Keras may support it.

Hi,

Thanks for sharing. However, I have a question about the CSR format shown in your example.

According to the definition of CSR format, it stores a sparse m × n matrix M in row form using three (one-dimensional) arrays (A, IA, JA). However, how can the user get the (A, IA, JA) in your example? Thanks.

https://en.wikipedia.org/wiki/Sparse_matrix

You can learn more about the function here:

https://docs.scipy.org/doc/scipy/reference/generated/scipy.sparse.csr_matrix.html

Hi Jason, great post as many others.

I am playing with some weird datasets which seems to be composed of quite sparse images (avg. ~90%). Could you point me to an algorithm to determine the region with the highest density of non-zero elements in a sparse matrix? It does not have to be a contiguous sub-matrix of non-zero elements, I have in mind something like k-means but not as computationally heavy. Thanks!

Interesting. Perhaps something like a max-pool or avg-pool over the dense matrix is my first thought?

Hi Jason, thank you for your post.

I was wondering how is it possible to leverage sparse weight matrix on frameworks such as Keras or TensorFlow, I think I’ve never seen support to CSR matrices. I’ve managed to successfully prune the vast majority of my model’s weights by setting them to zero. But unfortunately it’s unclear to me how to delete them from my architecture, because even though they’re zero the memory footprint remains the same. Can you give me some insight on this matter? Thanks.

Hmm, I think many frameworks do support sparse matrices.

From memory, I thought both sklearn and keras did support them, perhaps I am mis-remembering?

hello , first of all i want to thank you for this clear and nice article.

please i have question when you said :

“The solution to representing and working with sparse matrices is to use an alternate data structure to represent the sparse data.

The zero values can be ignored and only the data or non-zero values in the sparse matrix need to be stored or acted upon.

There are multiple data structures that can be used to efficiently construct a sparse matrix;”

i want to confirm with you when you said this sentences is CSR(compressed Sparse Row) Representation doesn’t store at all Zero value in memory physically ?

Thank in advance for your answer

Each technique operates differently, but yes, that is the general idea.

I am struggling to understand / conceptualize index pointer in case of sparse matrix.

Can someone help me to understand index pointer with following example?

indptr = np.array([0, 2, 3, 6])

indices = np.array([0, 2, 2, 0, 1, 2])

data = np.array([1, 2, 3, 4, 5, 6])

csc_matrix((data, indices, indptr), shape=(3, 3)).toarray()

array([[1, 0, 4],

[0, 0, 5],

[2, 3, 6]])

Sorry, I can’t dive into your example. Perhaps try posting to stackoverflow?

Thanks so much for the post it was very useful, have a questions that i wanted to ask

i have built a data set of textually extracted features from various source code files,

the problem is that each record in my data set have a different column number producing multiple NAN values in my excel sheet , so i wanted to reshape each row in my data to a specific number of columns , and wanted to know if you have any thoughts on how to do that

It really depends on the specifics of your data. Perhaps experiment with a few approaches and compare results?

Found this article by searching sparse matrix to normal matrix, and you make matrices so easy to understand yet with details. Thank you so much!

I am using tm package in r to perform either tdm or dtm (not yet sure about how to decide on which), and a lot of examples online shows its sparsity: 98%, and move on to as.matrix().

But using the data I crawled from news website, my sparsity is 62%, which made me wonder is the next move as.matrix() necessary?

sorry my question seems to be out of the discussion of your article, but i hope my question is clear and i would be really appreciated for your repy

Thanks, I’m glad it helped!

Sorry, I am not familiar with that package. Perhaps try posting on stackoverflow or crossvalidated?

Great explanation .

Thanks!

Hi Jason, Thanks for your blogs. They are really helpful. I have a question on sparse matrix.

I am working on TFIDF vectorizer, and its output of fit function is a sparse matrix. Now based on idf score I just want to take top 1000 features for that I have to access the sparse matrix elements.

But I am not able to get an efficient way for that. I dont want to convert entire matrix to dense or toarray. is there any other way to acess the nonzero elements of sparse matrix?

Good question.

I’m not sure of an efficient way off the cuff. Perhaps there is a numpy function – try checking the API?

Thank you for your great article.

According to your definition of Sparse and Dense matrices, a Sparse matrix is a matrix that contains mostly zero values and Dense, the matrix contains mostly non-zero values, but in the example code, you create a dense matrix with many “zero” values!? (isn’t a sparse matrix?). Could you please clarify that?

Good question.

A matrix can be sparse or dense (lots of zeros, few zeros).

And we can represent a matrix using a dense representation or a sparse representation.

It is more efficient to use a dense representation for a dense matrix and a sparse representation for a sparse matrix, but this is not a requirement.

Hi Jason,

First of all, I would like to congratulate on your work here. Your research and initiatives are quite commendable. I have read some of your articles and they are quite practical and informative. Now, I would like to pick your brains on this – Do you think training a Neural network (MLP) with sparse data (the feature vectors have a lot of zeroes) is tough? In your experience, have you come across sparse data giving a network hard time to learn?

Thanks,

Euler

Thanks.

Not really. Regularization can help.

Thanks. I am using L2 regularization but that doesn’t help in overcoming the issue of sparse data. Capping the distributions at say 95th percentile did help as it reduced the number of approximate zeroes or very low values by removal of outliers. If I don’t do that, SGD doesn’t work /takes ages to train and under-performs. I had an idea of sampling mini-batches such that they are biased towards sampling observations that are non-zeroes. This link – https://www.quora.com/Why-are-deep-neural-networks-so-bad-with-sparse-data discusses this topic

Many of the modern innovations seek to make models internally sparse, e.g. relu, weight regularization, activation regularization, etc.

Hi Jason,

Thanks for the post.

I ran into the error below when trying to train a model for 2 text column fields using One Hot Encoding. Hope to get your advice to overcome this error. Thanks in advance.

<8573×24 sparse matrix of type '’

with 34134 stored elements in Compressed Sparse Row format>

Perhaps try posting your code and error to stackoverflow?

Thank!!

You’re welcome.

thank You so much …

You’re welcome.

https://magoosh.com/data-science/non-sparse-matrix-to-sparse-matrix/

seems someone had plagiarised from your blog Jason

Atleast reference was needed to your blog

Sorry to hear that. Some people have no shame.

Dear Ms. Jason Brownlee. I have a question. Multiplication of two sparse matrices will return a sparse matrix, right?

Hi Huyen…I would not say this is generally a result.

https://medium.com/@glynnnnnnnnn/sparse-matrix-multiplication-c34ef43c16db

Hi I have a data that are mostly zeros as you suggested. Do you think there will be a problem in the process of using such data for predicting the next sequence of data using Time-Series algorithms? If so, what to do about it?

Thanks.

recently I had a experience with sparse matrices in python vs Julia and I should say that Julia is a beast! Simple, elegant and powerful at least in linear algebra as I tested it.

Yes, Julia is young but promising. Maybe in a few years, we can see its ecosystem getting mature with more tools to do something great!