XGBoost With Python Mini-Course.

XGBoost is an implementation of gradient boosting that is being used to win machine learning competitions.

It is powerful but it can be hard to get started.

In this post, you will discover a 7-part crash course on XGBoost with Python.

This mini-course is designed for Python machine learning practitioners that are already comfortable with scikit-learn and the SciPy ecosystem.


Let’s get started.

- Update Jan/2017: Updated to reflect changes in scikit-learn API version 0.18.1.

- Update Mar/2018: Added alternate link to download the dataset as the original appears to have been taken down.


Photo by Teresa Boardman, some rights reserved.

(Tip: you might want to print or bookmark this page so that you can refer back to it later.)

Who Is This Mini-Course For?

Before we get started, let’s make sure you are in the right place. The list below provides some general guidelines as to who this course was designed for.

Don’t panic if you don’t match these points exactly; you might just need to brush up in one area or another to keep up.

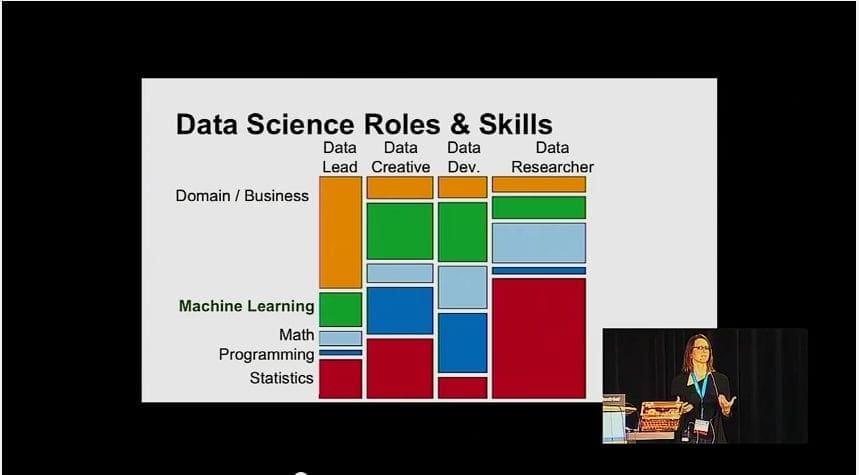

- Developers that know how to write a little code. This means that it is not a big deal for you to get things done with Python and you know how to set up the SciPy ecosystem on your workstation (a prerequisite). It does not mean you’re a wizard coder, but it does mean you’re not afraid to install packages and write scripts.

- Developers that know a little machine learning. This means you know about the basics of machine learning like cross validation, some algorithms and the bias-variance trade-off. It does not mean that you are a machine learning PhD, just that you know the landmarks or know where to look them up.

This mini-course is not a textbook on XGBoost. There will be no equations.

It will take you from a developer that knows a little machine learning in Python to a developer who can get results and bring the power of XGBoost to your own projects.


Mini-Course Overview (what to expect)

This mini-course is divided into 7 parts.

Each lesson was designed to take the average developer about 30 minutes. You might finish some much sooner, and for others you may choose to go deeper and spend more time.

You can complete each part as quickly or as slowly as you like. A comfortable schedule may be to complete one lesson per day over a one week period. Highly recommended.

The topics you will cover over the next 7 lessons are as follows:

- Lesson 01: Introduction to Gradient Boosting.

- Lesson 02: Introduction to XGBoost.

- Lesson 03: Develop Your First XGBoost Model.

- Lesson 04: Monitor Performance and Early Stopping.

- Lesson 05: Feature Importance with XGBoost.

- Lesson 06: How to Configure Gradient Boosting.

- Lesson 07: XGBoost Hyperparameter Tuning.

This is going to be a lot of fun.

You’re going to have to do some work though: a little reading, a little research and a little programming. You want to learn about XGBoost, right?

(Tip: Help with these lessons can be found on this blog; use the search feature.)

Any questions at all, please post in the comments below.

Share your results in the comments.

Hang in there, don’t give up!

Lesson 01: Introduction to Gradient Boosting

Gradient boosting is one of the most powerful techniques for building predictive models.

Boosting grew out of the question of whether a weak learner can be modified to become better. The first realization of boosting that saw great success in application was Adaptive Boosting, or AdaBoost for short. The weak learners in AdaBoost are decision trees with a single split, called decision stumps for their shortness.

AdaBoost and related algorithms were recast in a statistical framework and became known as Gradient Boosting Machines. The statistical framework cast boosting as a numerical optimization problem where the objective is to minimize the loss of the model by adding weak learners using a gradient-descent-like procedure, hence the name.

The Gradient Boosting algorithm involves three elements:

- A loss function to be optimized, such as cross entropy for classification or mean squared error for regression problems.

- A weak learner to make predictions, such as a greedily constructed decision tree.

- An additive model, used to add weak learners to minimize the loss function.

New weak learners are added to the model in an effort to correct the residual errors of all previous trees. The result is a powerful predictive modeling algorithm, perhaps more powerful than random forest.
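To make the three elements concrete, here is a minimal pure-Python sketch of gradient boosting for regression with squared error, using decision stumps as the weak learner. It is an illustration under simplifying assumptions (a single input feature, a fixed learning rate, toy data, and the hypothetical helper names `fit_stump` and `boost`); it is not how XGBoost is implemented.

```python
# Toy gradient boosting for regression with squared-error loss.
# All names and data are illustrative assumptions, not XGBoost internals.

def fit_stump(xs, residuals):
    """Greedily pick the single split on xs that best fits the residuals."""
    best = None
    # Try each distinct value except the largest, so both sides are non-empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def boost(xs, ys, n_trees=20, learning_rate=0.1):
    """Additive model: start from the mean, then add stumps fit to residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    mse_history = []
    for _ in range(n_trees):
        # For squared error, the negative gradient is the residual y - prediction.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for x, p in zip(xs, preds)]
        mse_history.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys))

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)

    return predict, mse_history


xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]
model, mse_history = boost(xs, ys)
print("MSE after first tree: %.4f" % mse_history[0])
print("MSE after last tree:  %.4f" % mse_history[-1])
```

Each new stump is fit to what the current model still gets wrong, so the training error shrinks as trees are added. The learning rate controls how much of each stump's correction is applied.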

In the next lesson we will take a closer look at the XGBoost implementation of gradient boosting.

Lesson 02: Introduction to XGBoost

XGBoost is an implementation of gradient boosted decision trees designed for speed and performance.

XGBoost stands for eXtreme Gradient Boosting.

It was developed by Tianqi Chen and is laser focused on computational speed and model performance. As such, there are few frills.

In addition to supporting all key variations of the technique, the real interest is the speed provided by the careful engineering of the implementation, including:

- Parallelization of tree construction using all of your CPU cores during training.

- Distributed Computing for training very large models using a cluster of machines.

- Out-of-Core Computing for very large datasets that don’t fit into memory.

- Cache Optimization of data structures and algorithms to make best use of hardware.

Traditionally, gradient boosting implementations are slow because of the sequential nature in which each tree must be constructed and added to the model.

The focus on performance in the development of XGBoost has resulted in one of the best predictive modeling algorithms that can now harness the full capability of your hardware platform, or very large computers you might rent in the cloud.

As such, XGBoost has been a cornerstone in competitive machine learning, being the technique used to win and recommended by winners. For example, here is what some recent Kaggle competition winners have said:

As the winner of an increasing amount of Kaggle competitions, XGBoost showed us again to be a great all-round algorithm worth having in your toolbox.

When in doubt, use xgboost.

In the next lesson, we will develop our first XGBoost model in Python.

Lesson 03: Develop Your First XGBoost Model

Assuming you have a working SciPy environment, XGBoost can be installed easily using pip.

For example:

```shell
sudo pip install xgboost
```

You can learn more about installing and building XGBoost on your platform in the XGBoost Installation Instructions.

XGBoost models can be used directly in the scikit-learn framework using the wrapper classes, XGBClassifier for classification and XGBRegressor for regression problems.

This is the recommended way to use XGBoost in Python.

Download the Pima Indians onset of diabetes dataset.

It is a good test dataset for binary classification as all input variables are numeric, meaning the problem can be modeled directly with no data preparation.

We can train an XGBoost model for classification by constructing it and calling the model.fit() function:

```python
model = XGBClassifier()
model.fit(X_train, y_train)
```

This model can then be used to make predictions by calling the model.predict() function on new data.

```python
y_pred = model.predict(X_test)
```

We can tie this all together as follows:

```python
# First XGBoost model for Pima Indians dataset
from numpy import loadtxt
from xgboost import XGBClassifier
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
# load data
dataset = loadtxt('pima-indians-diabetes.csv', delimiter=",")
# split data into X and y
X = dataset[:,0:8]
Y = dataset[:,8]
# split data into train and test sets
seed = 7
test_size = 0.33
X_train, X_test, y_train, y_test = train_test_split(X, Y, test_size=test_size, random_state=seed)
# fit model on training data
model = XGBClassifier()
model.fit(X_train, y_train)
# make predictions for test data
y_pred = model.predict(X_test)
predictions = [round(value) for value in y_pred]
# evaluate predictions
accuracy = accuracy_score(y_test, predictions)
print("Accuracy: %.2f%%" % (accuracy * 100.0))
```

In the next lesson we will look at how we can use early stopping to limit overfitting.

Lesson 04: Monitor Performance and Early Stopping

The XGBoost model can evaluate and report on the performance on a test set for the model during training.

You enable this by specifying both a test dataset and an evaluation metric on the call to model.fit() when training the model, and by requesting verbose output (verbose=True).

For example, we can report on the binary classification error rate (error) on a standalone test set (eval_set) while training an XGBoost model as follows:

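```python
eval_set = [(X_test, y_test)]
# Newer XGBoost releases expect eval_metric as an XGBClassifier(...) argument rather than a fit() argument.
model.fit(X_train, y_train, eval_metric="error", eval_set=eval_set, verbose=True)
```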

Running a model with this configuration will report the performance of the model after each tree is added. For example:

```
...
[89] validation_0-error:0.204724
[90] validation_0-error:0.208661
```

We can use this evaluation to stop training once no further improvements have been made to the model.

We can do this by setting the early_stopping_rounds parameter when calling model.fit() to the number of consecutive iterations without improvement on the validation dataset after which training is stopped.

The full example using the Pima Indians Onset of Diabetes dataset is provided below.

```python
# example of early stopping
from numpy import loadtxt
from xgboost import XGBClassifier
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
# load data
dataset = loadtxt('pima-indians-diabetes.csv', delimiter=",")
# split data into X and y
X = dataset[:,0:8]
Y = dataset[:,8]
# split data into train and test sets
seed = 7
test_size = 0.33
X_train, X_test, y_train, y_test = train_test_split(X, Y, test_size=test_size, random_state=seed)
# fit model on training data
model = XGBClassifier()
eval_set = [(X_test, y_test)]
# Newer XGBoost releases expect early_stopping_rounds and eval_metric as
# XGBClassifier(...) arguments rather than fit() arguments.
model.fit(X_train, y_train, early_stopping_rounds=10, eval_metric="logloss", eval_set=eval_set, verbose=True)
# make predictions for test data
y_pred = model.predict(X_test)
predictions = [round(value) for value in y_pred]
# evaluate predictions
accuracy = accuracy_score(y_test, predictions)
print("Accuracy: %.2f%%" % (accuracy * 100.0))
```
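The stopping rule itself is simple enough to sketch in plain Python. The function below is a simplified illustration of the idea behind early_stopping_rounds, not XGBoost's internal code: the function name, the `patience` argument and the validation scores are all made up for the example, and the metric is assumed to be one where lower is better (such as logloss or error).

```python
def early_stopping_iteration(scores, patience):
    """Return (best_iteration, stop_iteration) for a lower-is-better metric.

    Training stops once `patience` iterations have passed without the
    validation score improving on the best value seen so far.
    """
    best_score = float("inf")
    best_iteration = -1
    for i, score in enumerate(scores):
        if score < best_score:
            best_score = score
            best_iteration = i
        elif i - best_iteration >= patience:
            return best_iteration, i
    # The metric kept improving often enough that training ran to the end.
    return best_iteration, len(scores) - 1


# Hypothetical validation scores, one per boosting iteration.
scores = [0.60, 0.55, 0.52, 0.50, 0.51, 0.50, 0.52, 0.53]
best, stopped = early_stopping_iteration(scores, patience=3)
print("best iteration: %d, stopped at iteration: %d" % (best, stopped))
```

With these scores the best value is reached at iteration 3, and training stops three iterations later because nothing improved on it. A larger patience tolerates longer plateaus at the cost of more training time.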

In the next lesson, we will look at how we can calculate the importance of features using XGBoost.

Lesson 05: Feature Importance with XGBoost

A benefit of using ensembles of decision tree methods like gradient boosting is that they can automatically provide estimates of feature importance from a trained predictive model.

A trained XGBoost model automatically calculates feature importance on your predictive modeling problem.

These importance scores are available in the feature_importances_ member variable of the trained model. For example, they can be printed directly as follows:

```python
print(model.feature_importances_)
```

The XGBoost library provides a built-in function to plot features ordered by their importance.

The function is called plot_importance() and can be used as follows:

```python
plot_importance(model)
pyplot.show()
```

These importance scores can help you decide what input variables to keep or discard. They can also be used as the basis for automatic feature selection techniques.

The full example of plotting feature importance scores using the Pima Indians Onset of Diabetes dataset is provided below.

```python
# plot feature importance using built-in function
from numpy import loadtxt
from xgboost import XGBClassifier
from xgboost import plot_importance
from matplotlib import pyplot
# load data
dataset = loadtxt('pima-indians-diabetes.csv', delimiter=",")
# split data into X and y
X = dataset[:,0:8]
y = dataset[:,8]
# fit model on training data
model = XGBClassifier()
model.fit(X, y)
# plot feature importance
plot_importance(model)
pyplot.show()
```
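As a rough illustration of how importance scores could drive a simple feature selection step, the sketch below ranks features by score and keeps those at or above a threshold. The scores, the threshold of 0.10 and the helper name `select_features` are assumptions made up for the example; in practice the scores would come from model.feature_importances_.

```python
def select_features(importances, threshold):
    """Return column indices with importance >= threshold, most important first."""
    ranked = sorted(enumerate(importances), key=lambda pair: pair[1], reverse=True)
    return [index for index, score in ranked if score >= threshold]


# Made-up importance scores for 8 input columns (they sum to 1.0).
importances = [0.07, 0.24, 0.09, 0.08, 0.10, 0.15, 0.14, 0.13]
selected = select_features(importances, threshold=0.10)
print(selected)
```

With a NumPy array X, the reduced dataset would then be X[:, selected]. The threshold is a tuning choice, so it is worth comparing model skill with and without the discarded columns.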

In the next lesson we will look at heuristics for best configuring the gradient boosting algorithm.

Lesson 06: How to Configure Gradient Boosting

Gradient boosting is one of the most powerful techniques for applied machine learning and as such is quickly becoming one of the most popular.

But how do you configure gradient boosting on your problem?

A number of configuration heuristics were published in the original gradient boosting papers. They can be summarized as:

- Learning rate or shrinkage (learning_rate in XGBoost) should be set to 0.1 or lower, and smaller values will require the addition of more trees.

- The depth of trees (max_depth in XGBoost) should be configured in the range of 2-to-8, where not much benefit is seen with deeper trees.

- Row sampling (subsample in XGBoost) should be configured in the range of 30% to 80% of the training dataset, and compared to a value of 100% for no sampling.

These are good starting points when configuring your model.

A good general configuration strategy is as follows:

- Run the default configuration and review plots of the learning curves on the training and validation datasets.

- If the system is overlearning, decrease the learning rate and/or increase the number of trees.

- If the system is underlearning, speed the learning up to be more aggressive by increasing the learning rate and/or decreasing the number of trees.
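One possible way to read "overlearning" and "underlearning" off the learning curves is sketched below. This is only an interpretation of the strategy above: the function name, the tolerance value and the loss curves are hypothetical, and the rules (validation loss rising while training loss keeps falling, or validation loss still improving at the last iteration) are common rules of thumb rather than anything defined by XGBoost.

```python
def diagnose_learning_curves(train_loss, valid_loss, tolerance=0.01):
    """Classify a training run from per-iteration losses (lower is better)."""
    best_valid = min(valid_loss)
    best_round = valid_loss.index(best_valid)
    rose_after_best = valid_loss[-1] - best_valid > tolerance
    train_kept_falling = train_loss[-1] < train_loss[best_round]
    if rose_after_best and train_kept_falling:
        # Validation loss got worse while the model kept fitting the training set.
        return "overlearning"
    if valid_loss[-1] == best_valid:
        # Validation loss was still at its best when training ended.
        return "underlearning"
    return "ok"


# Hypothetical curves: training loss keeps dropping, validation loss turns upward.
print(diagnose_learning_curves([0.60, 0.45, 0.35, 0.25, 0.15],
                               [0.62, 0.50, 0.48, 0.52, 0.58]))
# Hypothetical curves: both losses are still improving at the final iteration.
print(diagnose_learning_curves([0.60, 0.55, 0.50, 0.46, 0.43],
                               [0.62, 0.57, 0.53, 0.49, 0.46]))
```

The returned label maps onto the actions in the list above: slow the learning down for "overlearning" and make it more aggressive for "underlearning".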

Owen Zhang, the former #1 ranked competitor on Kaggle and now CTO at DataRobot, proposes an interesting strategy to configure XGBoost.

He suggests setting the number of trees to a target value such as 100 or 1000, then tuning the learning rate to find the best model. This is an efficient strategy for quickly finding a good model.

In the next and final lesson, we will look at an example of tuning the XGBoost hyperparameters.

Lesson 07: XGBoost Hyperparameter Tuning

The scikit-learn framework provides the capability to search combinations of parameters.

This capability is provided in the GridSearchCV class and can be used to discover the best way to configure the model for top performance on your problem.

For example, we can define a grid of the number of trees (n_estimators) and tree sizes (max_depth) to evaluate as:

```python
n_estimators = [50, 100, 150, 200]
max_depth = [2, 4, 6, 8]
param_grid = dict(max_depth=max_depth, n_estimators=n_estimators)
```

And then evaluate each combination of parameters using 10-fold cross validation as:

```python
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)
grid_search = GridSearchCV(model, param_grid, scoring="neg_log_loss", n_jobs=-1, cv=kfold, verbose=1)
# label_encoded_y holds the integer-encoded class labels
result = grid_search.fit(X, label_encoded_y)
```

We can then review the results to determine the best combination and the general trends in varying the combinations of parameters.

This is the best practice when applying XGBoost to your own problems. The parameters to consider tuning are:

- The number and size of trees (n_estimators and max_depth).

- The learning rate and number of trees (learning_rate and n_estimators).

- The row and column subsampling rates (subsample, colsample_bytree and colsample_bylevel).
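Before launching a search it helps to know how much work it implies. The sketch below enumerates the n_estimators and max_depth grid from earlier in this lesson with the standard library and counts the model fits needed under 10-fold cross validation. It only mimics the counting; it does not call GridSearchCV, and the variable names `combinations` and `total_fits` are just for illustration.

```python
from itertools import product

n_estimators = [50, 100, 150, 200]
max_depth = [2, 4, 6, 8]
param_grid = dict(max_depth=max_depth, n_estimators=n_estimators)

# Every combination of one value per parameter.
combinations = [dict(zip(param_grid, values))
                for values in product(*param_grid.values())]

n_splits = 10  # 10-fold cross validation, as in the snippet above
total_fits = len(combinations) * n_splits

print("combinations: %d" % len(combinations))
print("cross-validation fits: %d" % total_fits)
```

Each extra parameter multiplies the number of combinations, which is why the list above groups parameters into small sets to tune together rather than searching everything at once.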

Below is a full example of tuning just the learning_rate on the Pima Indians Onset of Diabetes dataset.

```python
# Tune learning_rate
from numpy import loadtxt
from xgboost import XGBClassifier
from sklearn.model_selection import GridSearchCV
from sklearn.model_selection import StratifiedKFold
# load data
dataset = loadtxt('pima-indians-diabetes.csv', delimiter=",")
# split data into X and y
X = dataset[:,0:8]
Y = dataset[:,8]
# grid search
model = XGBClassifier()
learning_rate = [0.0001, 0.001, 0.01, 0.1, 0.2, 0.3]
param_grid = dict(learning_rate=learning_rate)
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)
grid_search = GridSearchCV(model, param_grid, scoring="neg_log_loss", n_jobs=-1, cv=kfold)
grid_result = grid_search.fit(X, Y)
# summarize results
print("Best: %f using %s" % (grid_result.best_score_, grid_result.best_params_))
means = grid_result.cv_results_['mean_test_score']
stds = grid_result.cv_results_['std_test_score']
params = grid_result.cv_results_['params']
for mean, stdev, param in zip(means, stds, params):
    print("%f (%f) with: %r" % (mean, stdev, param))
```

XGBoost Learning Mini-Course Review

Congratulations, you made it. Well done!

Take a moment and look back at how far you have come:

- You learned about the gradient boosting algorithm and the XGBoost library.

- You developed your first XGBoost model.

- You learned how to use advanced features like early stopping and feature importance.

- You learned how to configure gradient boosted models and how to design controlled experiments to tune XGBoost hyperparameters.

Don’t make light of this; you have come a long way in a short amount of time. This is just the beginning of your journey with XGBoost in Python. Keep practicing and developing your skills.

Did you enjoy this mini-course? Do you have any questions or sticking points?

Leave a comment and let me know.

Questions on:

1. If the system is overlearning, decrease the learning rate and/or increase the number of trees.

2. If the system is underlearning, speed the learning up to be more aggressive by increasing the learning rate and/or decreasing the number of trees.

I think if the system is overlearning, that means it is overfitting, and I think it should increase the learning rate, decrease the depth of trees and decrease the number of trees.

Hi Alexander, try it and see.

I am suggesting making fewer updates to the model/less capacity when overfitting and the reverse when underfitting.

X = dataset[:,0:8]

Y = dataset[:,8]

Shouldn’t it be Y = dataset[:,9]?

No, consider reading up on Python array slicing.

hi jason

can we load data from xgboost binary buffer file.

I don’t know, sorry.

Hi Jason,

In lesson 4, the first block shows eval_metric="error" and then eval_metric="logloss". When using "error", I got the minimum at iteration [89] validation_0-error:0.204724. When using "logloss", I got the minimum at iteration [32] validation_0-logloss:0.487297. Why does the minimum for these two eval_metric values occur at different iterations? Which one should we follow? Thank you.

Generally, you will get different min scores in iterations given the stochastic nature of the algorithm:

https://machinelearningmastery.com/randomness-in-machine-learning/

I would recommend selecting a metric most relevant to your goals on the project.

Are you going to release a XGB Regressor tutorial? I can’t find one anywhere!

Thanks for the suggestion.

Hi Jason!

Thank you for all these great tutorials! They are fantastic. Thank you for passing on this knowledge in a very easily digestible manner. 🙂

I am running on a Windows 10 system using Anaconda Python 3.6 using Spyder3 as an editor. I keep getting this error:

ImportError: [joblib] Attempting to do parallel computing without protecting your import on a system that does not support forking. To use parallel-computing in a script, you must protect your main loop using "if __name__ == '__main__'". Please see the joblib documentation on Parallel for more information

I looked it up on stackoverflow but I’m still not clear on how to fix this. I tried putting everything in an "if __name__ == '__main__':" block but I still get this error. Two questions:

1: Is there a way to get this code to work on a non-GPU system? My system is an Intel(R) Core(TM) i7-4790 CPU @ 3.60GHz 3.60 GHz, 16.0 GB of RAM, 64-bit Operating system, x64 based processor

2: If I ran this on a Windows 10 system with an ND-series GPU, would it fix this problem?

Thanks a bunch!

Aimee

Yes, a GPU is not required.

Perhaps try running from the command line instead?

I have not seen this error before, confirm that all of your libraries are up to date?

I was playing around with the parameters a little and finally got it to run by setting n_jobs = 1 instead of n_jobs = -1 in the call to GridSearchCV. From what I read online, it is because Windows doesn’t have a fork function and so it doesn’t know how to handle the process requested when n_jobs is set to -1.

Thanks again. I’ve learned so much! There’s nothing like learning through example and just getting in there and getting your hands dirty.

Have a great day! 🙂

Nice, thanks for sharing.

Hi Jason, I’m using an XGBRegressor model to predict the severity of insurance claims.

However, the prediction is nearly the same for every record.

I assume this is overfitting or what do you recommend to resolve the issue ?

I have a suite of ideas here:

https://machinelearningmastery.com/machine-learning-performance-improvement-cheat-sheet/

Hi Jason,

I have the XGBOOST book and it is fantastic. It has helped immensely to classify our sick member population. However, I am stuck with a feature name mismatch error when I use OneHotEncoder(). The problem doesn’t happen when I train and test on the dataset. It only happens when I try to use the model to predict on a new dataset that the model hasn’t seen. I posted the question here: https://stackoverflow.com/questions/51860759/xgboost-feature-name-error-python

I am hoping that you could also give some ideas to overcome this issue.

Thank you very much.

You will need to use the same encoder to prepare new data that was used to prepare the data used to train the model.

Hi Jason,

Thank you so much for this awesome answer. I was able to solve the issue and also mentioned about this website on stackoverflow.com.

For folks who are in the same situation with me, you need to import joblib from sklearn.externals. After you fit the onehotencoder, you need to save the fitted encoder by using joblib.dump(enc, “your_encoder.pkl”).

Now, you can use the same encoder for another dataset.

Here is the link to my question and answer: https://stackoverflow.com/questions/51860759/xgboost-feature-name-error-python/51878832#51878832

Again, thank you so much Jason.

Well done!

Can you share the book? I want to learn maths for XGB

Perhaps start with the paper.

Thanks for the introductory mini-course Jason,

After tuning the parameters in the last step, let’s say we found the best parameters among the candidates. Do we have to train the model again with these parameters? If so, how do we feed the best parameters to the classifier? Thanks.

Yes, you can configure the model with the chosen parameters via arguments when defining the model.

where can download ebook about xgboost

If you sign up, it is sent to you via email.

Hello Jason,

I just tried the “.fit” and “.predict” XGBClassifier functions and got a feature_names mismatch error. However, passing the same train and test sets through a simple random forest classifier worked without a hitch. I’m puzzled. Do the train and test sets have to be in a certain type or format for XGB to work?

Thank you!

Perhaps your new set of data differs from the train and test sets?

No, I used the same train and test sets for both classifiers. The random forest predicted with no problem. But XGB coughed. Does XGB only take numpy arrays? I noticed that you used loadtxt rather than pd.read_csv. Thanks!

Yes, if you are using the sklearn interface then the model will only take numpy arrays.

Ah! Thank you!

can you do one time series forecasting with xgboost? thx

Sure.

One of the best short and crisp articles, giving an in-depth idea about XGBoost in Python.

Thanks!

For info, the Pima Indians dataset is still available at the UCI link provided, but if you search the UCI site it says it’s not available any more due to permission restrictions. Kaggle also hosts this dataset now at:

https://www.kaggle.com/uciml/pima-indians-diabetes-database

with a description, column details and summary statistics. Probably worth updating your lessons to point to Kaggle instead.

Thanks.

I provide all datasets here:

https://github.com/jbrownlee/Datasets

Here is the pima dataset:

https://raw.githubusercontent.com/jbrownlee/Datasets/master/pima-indians-diabetes.csv

For individuals using conda envs who are finding it hard to install XGBoost using the sudo command, please try:

$ sudo -H ~/anaconda3/bin/python -m pip install xgboost

I hope you find this useful, Thanks…….

Great tip, thanks!

Good morning Jason,

I am trying to apply the XGBoost with the SMOTE.

I already tested SMOTE with other algorithms, such as Random Forest and Light GBM, and it worked perfectly. But when I try to use it with XGBoost, an error occurs in the .predict(X_test) part.

This is the error.

Can you help me?

Thank you in advance.

What is the error?

How can I create dataset = loadtxt('pima-indians-diabetes.csv', delimiter=",")? This code is showing an error. I am using Spyder 4.0.

I recommend running the code example from the command line, not a notebook or IDE:

https://machinelearningmastery.com/faq/single-faq/how-do-i-run-a-script-from-the-command-line

Hi, I found the solution. No need to answer. Thank you.

I’m happy to hear that.

Hello to everyone!

Do you have a tutorial or a book about XGBoost RANKER?

I have your book about XGBoost algorithm, but it doesn’t meet my needs for Ranking.

Also, I am new to Python and I need some code to see how to implement it.

Any suggestion?

Thank you in advance!

Sofia

Thanks for the suggestion. But we don’t have it yet.

Why is it that I am getting an accuracy of ~78% in the Day 03 exercise? Increasing the n_estimators or lowering the learning rate doesn’t help much.

Hi Sahil…The following may be of interest:

https://machinelearningmastery.com/tune-xgboost-performance-with-learning-curves/

Hello. I am trying to do binary classification using an imbalanced dataset (positive class= 7%).

I have tried multiple algorithms like RF, gbm, SVM, catboost, xgboost, and NB.

I get the best performance by an xgboost algorithm.

With oversampling and parameter setting, I increased sensitivity from almost 0 to 0.7. However, the specificity and accuracy decreased significantly (from 0.9 to 0.6), and I didn’t get a ROC AUC higher than 0.65.

I mainly code in R.

Any recommendations to enhance the performance of this model? (other algorithms to try, any specific parameter setting)

Hi Moon…The following may be of interest to you:

https://machinelearningmastery.com/tune-xgboost-performance-with-learning-curves/