What is the best machine learning algorithm? I get this question a lot. Maybe even daily.

Sometimes it’s a general question. I figure people want to make sure they are learning the one true machine learning algorithm and not wasting their time on anything less.

Most other times it is with regard to a specific problem.

I think it’s a very good question, a very telling question. It tells me straight away that a required shift in thinking has not occurred.

I can yell from the rooftops all day long: “there is no best algorithm”, but that is not helpful.

In this post I want to give you some tools to help you start making the required shift in thinking away from a single best solution.


Fear of Loss.

Photo by Jimee, Jackie, Tom & Asha, some rights reserved

The Best Solution

You are probably a programmer or engineer. When you encounter a specific problem, there is an algorithm you can grab and use to address it.

For example, if you need an ordered list of items, you use the sort algorithm built into your standard library. There is no ambiguity. You need a sorted list, you use the algorithm, and now you have a sorted list.

Why can’t machine learning be like that?

There is ambiguity in the sort example; it’s just hidden from you.

That sort algorithm you’re using is one of many and was selected for use in the library based on a trade-off of constraints such as language features, space and time complexity, and perhaps ease of implementation and other biases. It may not be the best sorting algorithm for your specific problem (on some dimension of concern), but it is good enough. Your list gets sorted.


Another example.

You have to implement a moderately complex feature in a software product. What is the best way to implement the feature?

There are innumerable ways to design software and to present features in an interface. We use guides to help us make these decisions (style guides, design guides, design patterns, language features, etc.), but there is no one true way to get the job done. It is a decision problem of selecting a suitable balance of trade-offs.

The idea of a best solution is a fallacy, but I’m sure you knew that already.

No Free Lunch

It’s also worse than you think.

It is provable that there is no best algorithm.

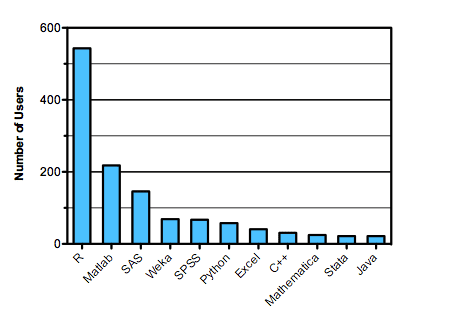

The no free lunch theorem tells us that, in a matrix of all possible problems and all algorithms, the performance of every algorithm averaged across the problems is the same.

Now, this is a theoretical result and assumes no prior knowledge about our problem or algorithm. But it is a useful framing for the transition in thinking we have to make.
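To make the averaging idea concrete, here is a toy illustration in Python. It is a sketch under stated assumptions, not a proof: the "problems" are all 256 possible labelings of eight 3-bit inputs, each counted equally, and performance is measured only on the three inputs held out of training. The three learners and their names are made up for this example.

```python
from itertools import product

# All 8 possible inputs made of 3 binary features.
inputs = list(product([0, 1], repeat=3))
train_x, test_x = inputs[:5], inputs[5:]  # 5 seen inputs, 3 unseen inputs


def majority_learner(train_labels):
    """Predict the most common training label for every unseen input."""
    guess = 1 if sum(train_labels) * 2 > len(train_labels) else 0
    return lambda x: guess


def minority_learner(train_labels):
    """Deliberately perverse: predict the least common training label."""
    guess = 0 if sum(train_labels) * 2 > len(train_labels) else 1
    return lambda x: guess


def parity_learner(train_labels):
    """Ignore the training data and predict the parity of the input bits."""
    return lambda x: sum(x) % 2


def average_off_training_accuracy(learner):
    """Average accuracy on unseen inputs over every possible target function."""
    correct = total = 0
    # Enumerate every possible problem: one 0/1 label per input.
    for labels in product([0, 1], repeat=len(inputs)):
        target = dict(zip(inputs, labels))
        model = learner([target[x] for x in train_x])
        for x in test_x:
            correct += int(model(x) == target[x])
            total += 1
    return correct / total


results = {
    learner.__name__: average_off_training_accuracy(learner)
    for learner in (majority_learner, minority_learner, parity_learner)
}
for name, accuracy in results.items():
    print(f"{name}: {accuracy}")
```

Every learner, even the perverse one, averages exactly 0.5 on the unseen inputs. Whatever a learner predicts for an unseen input, half of the possible problems label that input the other way. Real problems are not drawn uniformly from all possible labelings, which is why some algorithms do better than others in practice.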

There is no free lunch.

Photo by ..matt.., some rights reserved

Fear Of Loss

What is really going on is fear of loss.

- What if you use the wrong algorithm?

- What if you could be getting better results with another algorithm?

- What if you spend your time learning about the wrong algorithm?

It is so bad that you may not even pick an algorithm. You may not even try to address your problem or start studying machine learning.

If you are asking me “what is the best machine learning algorithm for a problem?”, all I see is loss aversion.

You need the tools to address this problem.

It’s a Search Problem

You need to start with a productive framing of the meta problem that you are solving.

This problem is search. You are searching for an algorithm or algorithm configuration (what’s the real difference?) that you judge best.

The data that you select to make available to your models only has so much structured information in it for algorithms to exploit. Like the sort example above, there is an idealized solution (a model of the underlying structure in the data) and many algorithms that can be used to realize instances of that idealized solution.

What is the best search algorithm? I have no idea. Spot check some algorithms, then select the better performing and look into further improving their results.

Now that might sound trite, but if you are just starting out in machine learning, that is the best advice I can give.

Practicing the process of applied machine learning a lot will build up your intuitions for how algorithms behave in different situations. These anecdotes you collect can inform the selection of algorithms you try.

Reading the theory of why algorithms work is harder but is also valuable information you will want to use to inform the selection of algorithms you try.
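The spot-checking advice above can be sketched in a few lines of Python. This is a minimal illustration, not a recommended toolkit: the synthetic two-blob dataset, the three tiny learners, and all function names are assumptions made for the example, and in practice you would likely use a library's implementations. Note that different values of `k` are simply more candidates in the same search.

```python
import random


def make_data(n=100, seed=1):
    """Synthetic two-class data: two Gaussian blobs in 2D (illustrative only)."""
    rng = random.Random(seed)
    rows = []
    for i in range(n):
        label = i % 2
        centre = 3.0 * label
        rows.append(((rng.gauss(centre, 1.0), rng.gauss(centre, 1.0)), label))
    rng.shuffle(rows)
    return rows


def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((p - q) ** 2 for p, q in zip(a, b))


def majority_baseline(train):
    """Always predict the most common training label."""
    labels = [y for _, y in train]
    guess = max(set(labels), key=labels.count)
    return lambda x: guess


def nearest_centroid(train):
    """Predict the class whose mean point is closest."""
    centroids = {}
    for label in {y for _, y in train}:
        points = [x for x, y in train if y == label]
        centroids[label] = tuple(sum(axis) / len(axis) for axis in zip(*points))
    return lambda x: min(centroids, key=lambda c: dist2(x, centroids[c]))


def make_knn(k):
    """Build a k-nearest-neighbour learner; k is an algorithm configuration."""
    def fit(train):
        def predict(x):
            nearest = sorted(train, key=lambda row: dist2(x, row[0]))[:k]
            votes = [y for _, y in nearest]
            return max(set(votes), key=votes.count)
        return predict
    return fit


def cross_val_accuracy(fit, rows, folds=5):
    """The test harness: mean accuracy over k held-out folds."""
    scores = []
    for i in range(folds):
        test = rows[i::folds]
        train = [row for j, row in enumerate(rows) if j % folds != i]
        model = fit(train)
        scores.append(sum(model(x) == y for x, y in test) / len(test))
    return sum(scores) / len(scores)


candidates = {
    "majority_baseline": majority_baseline,
    "nearest_centroid": nearest_centroid,
    "knn_k1": make_knn(1),
    "knn_k5": make_knn(5),
}
rows = make_data()
results = {name: cross_val_accuracy(fit, rows) for name, fit in candidates.items()}
ranked = sorted(results.items(), key=lambda item: item[1], reverse=True)
for name, score in ranked:
    print(f"{name}: {score:.3f}")
```

Every candidate is scored by the same harness on the same folds, so the ranking is a fair comparison. The baseline is there to show what "no skill" looks like on this data; the better performers are the ones worth tuning further.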

Machine learning as a search problem.

Photo by taylar, some rights reserved.

Empirical Inquiry

Addressing a problem using machine learning is an empirical scientific inquiry.

If you had a perfect model of the problem, you would use that. But you don’t; you have data and you are using it to model the problem.

Structuring the meta problem as search means that you need a very clear idea of how to objectively evaluate a finding (a model). That is why defining your problem upfront is critically important.

You need to be methodical and systematic.

The algorithms you try are not inconsequential, but they may be secondary to the way you define the problem and the test harness you use to evaluate the models you prepare.

This is a hard lesson and it may take some time to sink in. After a decade, it’s still seeping into my marrow.

I think this is one of your best articles. It helps to ease the burden of the Fear Of Missing Out syndrome! I truly consider machine learning to be two parts science and one part art because the empirical trial/error phase is extremely important. As you stated, every machine learning problem is, in fact, a search problem. Of course, there are strategies and heuristics to narrow down the search space but, in the end, it is an art!

Thanks for sharing your insights on this!

Thanks.

Hi Jason. If I have to devise a proof of concept for the “No free lunch” theorem, am I supposed to take diverse datasets and maybe a few classification algorithms, build models, and analyse which algorithm performs better on each dataset? Is that how the no free lunch theorem is supposed to be proven? What I understand by no free lunch is that an algorithm could give me good results on a particular dataset but not necessarily on a different dataset. Please clarify.

Generally the NFLT suggests that no single classifier will perform better than any other when averaged across all possible problems.

It talks about optimization, and machine learning algorithms perform optimization to solve a function approximation problem.

It is theoretical, as in practice we do know things about our problem, a lot more than nothing.

If you are preparing this demonstration as a homework assignment, perhaps confirm with your teacher as to what they are looking for.