Probability for Machine Learning Crash Course.

Get on top of the probability used in machine learning in 7 days.

Probability is a field of mathematics that is universally agreed to be the bedrock for machine learning.

Although probability is a large field with many esoteric theories and findings, the nuts and bolts, tools and notations taken from the field are required for machine learning practitioners. With a solid foundation of what probability is, it is possible to focus on just the good or relevant parts.

In this crash course, you will discover how you can get started and confidently understand and implement probabilistic methods used in machine learning with Python in seven days.

This is a big and important post. You might want to bookmark it.

Kick-start your project with my new book Probability for Machine Learning, including step-by-step tutorials and the Python source code files for all examples.

Let’s get started.

- Update Jan/2020: Updated for changes in scikit-learn v0.22 API.

Probability for Machine Learning (7-Day Mini-Course)

Photo by Percita, some rights reserved.

Who Is This Crash-Course For?

Before we get started, let’s make sure you are in the right place.

This course is for developers that may know some applied machine learning. Maybe you know how to work through a predictive modeling problem end-to-end, or at least most of the main steps, with popular tools.

The lessons in this course do assume a few things about you, such as:

- You know your way around basic Python for programming.

- You may know some basic NumPy for array manipulation.

- You want to learn probability to deepen your understanding and application of machine learning.

You do NOT need to be:

- A math wiz!

- A machine learning expert!

This crash course will take you from a developer that knows a little machine learning to a developer who can navigate the basics of probabilistic methods.

Note: This crash course assumes you have a working Python3 SciPy environment with at least NumPy installed. If you need help with your environment, you can follow the step-by-step tutorial here:

Crash-Course Overview

This crash course is broken down into seven lessons.

You could complete one lesson per day (recommended) or complete all of the lessons in one day (hardcore). It really depends on the time you have available and your level of enthusiasm.

Below is a list of the seven lessons that will get you started and productive with probability for machine learning in Python:

- Lesson 01: Probability and Machine Learning

- Lesson 02: Three Types of Probability

- Lesson 03: Probability Distributions

- Lesson 04: Naive Bayes Classifier

- Lesson 05: Entropy and Cross-Entropy

- Lesson 06: Naive Classifiers

- Lesson 07: Probability Scores

Each lesson could take you 60 seconds or up to 30 minutes. Take your time and complete the lessons at your own pace. Ask questions and even post results in the comments below.

The lessons expect you to go off and find out how to do things. I will give you hints, but part of the point of each lesson is to force you to learn where to look for help on the statistical methods, the NumPy API, and the best-of-breed tools in Python. (Hint: I have all of the answers directly on this blog; use the search box.)

Post your results in the comments; I’ll cheer you on!

Hang in there; don’t give up.

Note: This is just a crash course. For a lot more detail and fleshed-out tutorials, see my book on the topic titled “Probability for Machine Learning.”

Lesson 01: Probability and Machine Learning

In this lesson, you will discover why machine learning practitioners should study probability to improve their skills and capabilities.

Probability is a field of mathematics that quantifies uncertainty.

Machine learning is about developing predictive models from uncertain data. Uncertainty means working with imperfect or incomplete information.

Uncertainty is fundamental to the field of machine learning, yet it is one of the aspects that causes the most difficulty for beginners, especially those coming from a developer background.

There are three main sources of uncertainty in machine learning; they are:

- Noise in observations, e.g. measurement errors and random noise.

- Incomplete coverage of the domain, e.g. you can never observe all data.

- Imperfect model of the problem, e.g. all models have errors, some are useful.

Uncertainty in applied machine learning is managed using probability.

- Probability and statistics help us to understand and quantify the expected value and variability of variables in our observations from the domain.

- Probability helps to understand and quantify the expected distribution and density of observations in the domain.

- Probability helps to understand and quantify the expected capability and variance in performance of our predictive models when applied to new data.

This is the bedrock of machine learning. On top of that, we may need models to predict a probability, we may use probability to develop predictive models (e.g. Naive Bayes), and we may use probabilistic frameworks to train predictive models (e.g. maximum likelihood estimation).

Your Task

For this lesson, you must list three reasons why you want to learn probability in the context of machine learning.

These may be related to some of the reasons above, or they may be your own personal motivations.

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover the three different types of probability and how to calculate them.

Lesson 02: Three Types of Probability

In this lesson, you will discover a gentle introduction to joint, marginal, and conditional probability between random variables.

Probability quantifies the likelihood of an event.

Specifically, it quantifies how likely a specific outcome is for a random variable, such as the flip of a coin, the roll of a die, or drawing a playing card from a deck.

We can discuss the probability of just two events: the probability of event A for variable X and event B for variable Y, written in shorthand as X=A and Y=B. We assume that the two variables are related or dependent in some way.

As such, there are three main types of probability we might want to consider.

Joint Probability

We may be interested in the probability of two simultaneous events, like the outcomes of two different random variables.

For example, the joint probability of event A and event B is written formally as:

- P(A and B)

The joint probability for events A and B is calculated as the probability of event A given event B multiplied by the probability of event B.

This can be stated formally as follows:

- P(A and B) = P(A given B) * P(B)

Marginal Probability

We may be interested in the probability of an event for one random variable, irrespective of the outcome of another random variable.

There is no special notation for marginal probability; it is just the sum or union over all the probabilities of all events for the second variable for a given fixed event for the first variable.

- P(X=A) = sum P(X=A, Y=yi) for all yi

Conditional Probability

We may be interested in the probability of an event given the occurrence of another event.

For example, the conditional probability of event A given event B is written formally as:

- P(A given B)

The conditional probability for events A given event B can be calculated using the joint probability of the events as follows:

- P(A given B) = P(A and B) / P(B)
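The three calculations can be made concrete with a small sketch. The joint probability table below is hypothetical and chosen only for illustration; the variable names are not from the lesson. It reads off a joint probability, sums over one variable to get the marginals, and divides to get a conditional probability.

```python
# joint, marginal, and conditional probability from a small joint table
import numpy as np

# hypothetical joint distribution P(X, Y) (illustrative values only)
# rows are the events of X (a0, a1), columns are the events of Y (b0, b1, b2)
joint = np.array([
    [0.10, 0.20, 0.10],
    [0.30, 0.10, 0.20],
])

# marginal P(Y): sum the joint probabilities over all events of X
p_y = joint.sum(axis=0)
# marginal P(X): sum the joint probabilities over all events of Y
p_x = joint.sum(axis=1)

# conditional P(X=a0 given Y=b1) = P(X=a0 and Y=b1) / P(Y=b1)
p_a0_given_b1 = joint[0, 1] / p_y[1]

# product rule recovers the joint: P(A and B) = P(A given B) * P(B)
joint_check = p_a0_given_b1 * p_y[1]

print('P(X):', p_x)
print('P(Y):', p_y)
print('P(X=a0 given Y=b1): %.3f' % p_a0_given_b1)
print('P(X=a0 and Y=b1): %.3f' % joint_check)
```

Working from a full joint table is only practical for small discrete problems, but it shows how the three quantities relate to each other.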

Your Task

For this lesson, you must practice calculating joint, marginal, and conditional probabilities.

For example, if a family has two children and the oldest is a boy, what is the probability of this family having two sons? This is called the “Boy or Girl Problem” and is one of many common toy problems for practicing probability.

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover probability distributions for random variables.

Lesson 03: Probability Distributions

In this lesson, you will discover a gentle introduction to probability distributions.

In probability, a random variable can take on one of many possible values, e.g. events from the state space. A specific value or set of values for a random variable can be assigned a probability.

There are two main classes of random variables.

- Discrete Random Variable. Values are drawn from a finite set of states.

- Continuous Random Variable. Values are drawn from a range of real-valued numerical values.

A discrete random variable has a finite set of states; for example, the colors of a car. A continuous random variable has a range of numerical values; for example, the height of humans.

A probability distribution is a summary of probabilities for the values of a random variable.

Discrete Probability Distributions

A discrete probability distribution summarizes the probabilities for a discrete random variable.

Some examples of well-known discrete probability distributions include:

- Poisson distribution.

- Bernoulli and binomial distributions.

- Multinoulli and multinomial distributions.

Continuous Probability Distributions

A continuous probability distribution summarizes the probability for a continuous random variable.

Some examples of well-known continuous probability distributions include:

- Normal or Gaussian distribution.

- Exponential distribution.

- Pareto distribution.

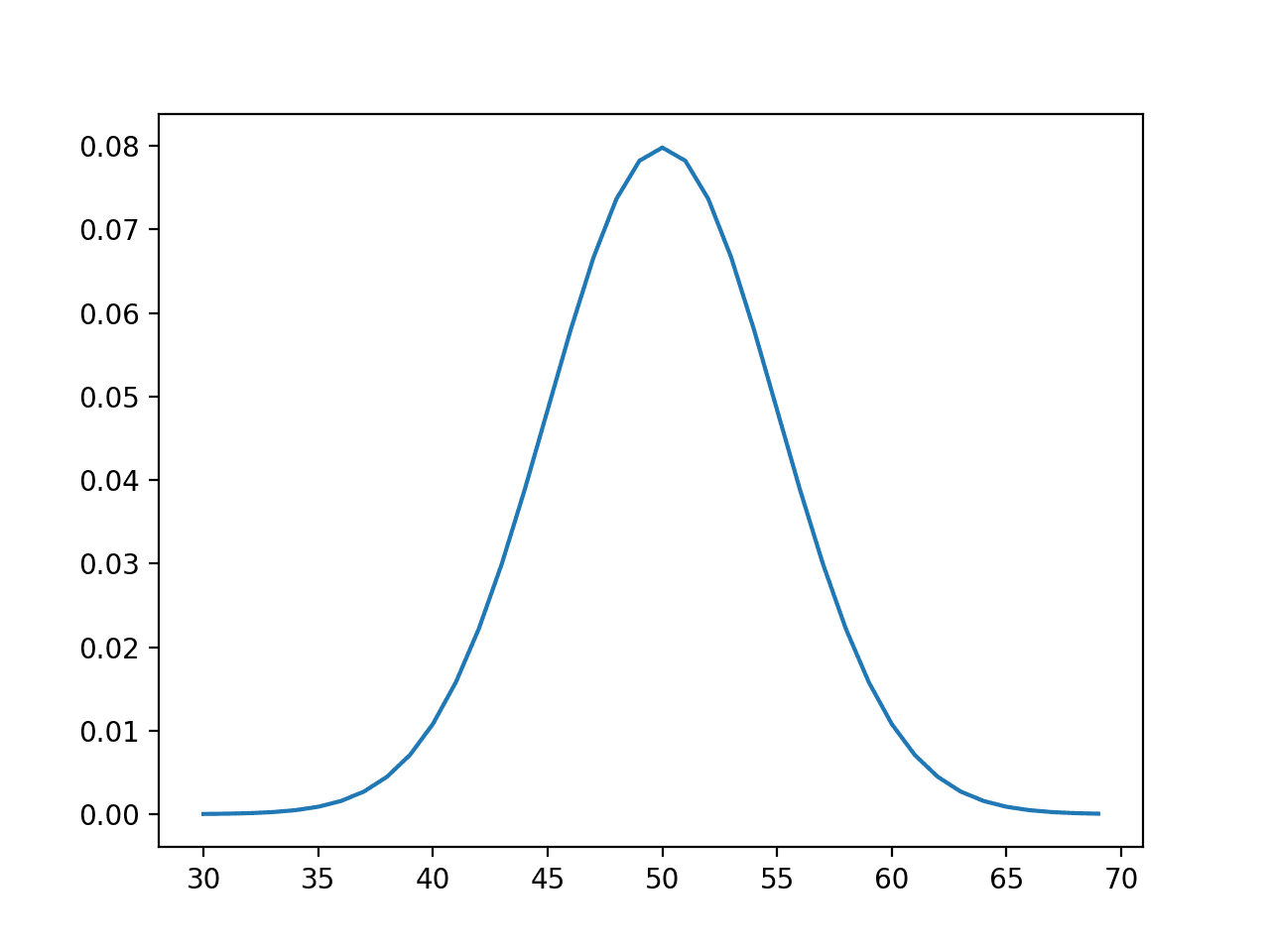

Randomly Sample Gaussian Distribution

We can define a distribution with a mean of 50 and a standard deviation of 5 and sample random numbers from this distribution. We can achieve this using the normal() NumPy function.

The example below samples and prints 10 numbers from this distribution.

```python
# sample a normal distribution
from numpy.random import normal
# define the distribution
mu = 50
sigma = 5
n = 10
# generate the sample
sample = normal(mu, sigma, n)
print(sample)
```

Running the example prints 10 numbers randomly sampled from the defined normal distribution.
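As a quick sanity check, a larger sample should have a mean and standard deviation close to the values used to define the distribution. The sketch below assumes NumPy's default_rng() generator with a fixed seed so the run is repeatable; the seed and the sample size of 10,000 are arbitrary choices, not part of the lesson.

```python
# check that a large sample matches the defined normal distribution
from numpy.random import default_rng

# same distribution as above, with a larger sample (size is an arbitrary choice)
mu, sigma, n = 50, 5, 10000
# seeded generator so the result is repeatable (seed value is arbitrary)
rng = default_rng(1)
sample = rng.normal(mu, sigma, n)
# summarize the sample
print('mean=%.2f, std=%.2f' % (sample.mean(), sample.std()))
```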

Your Task

For this lesson, you must develop an example to sample from a different continuous or discrete probability distribution function.

For a bonus, you can plot the values on the x-axis and the probability on the y-axis for a given distribution to show the density of your chosen probability distribution function.

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover the Naive Bayes classifier.

Lesson 04: Naive Bayes Classifier

In this lesson, you will discover the Naive Bayes algorithm for classification predictive modeling.

In machine learning, we are often interested in a predictive modeling problem where we want to predict a class label for a given observation.

One approach to solving this problem is to develop a probabilistic model. From a probabilistic perspective, we are interested in estimating the conditional probability of the class label given the observation, or the probability of class y given input data X.

- P(y | X)

Bayes Theorem provides an alternate and principled way for calculating the conditional probability using the reverse of the desired conditional probability, which is often simpler to calculate.

The simple form of the calculation for Bayes Theorem is as follows:

- P(A|B) = P(B|A) * P(A) / P(B)

Where the probability that we are interested in calculating P(A|B) is called the posterior probability and the marginal probability of the event P(A) is called the prior.
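A small worked calculation may help here. The numbers below describe a hypothetical diagnostic test and are invented purely for illustration: A is "the condition is present" and B is "the test is positive". The marginal P(B) is obtained by summing over both cases of A.

```python
# worked example of Bayes Theorem with hypothetical numbers
# prior P(A): probability the condition is present (assumed value)
p_a = 0.01
# P(B|A): probability of a positive test when the condition is present (assumed value)
p_b_given_a = 0.90
# P(B|not A): probability of a positive test when the condition is absent (assumed value)
p_b_given_not_a = 0.05

# marginal P(B) = P(B|A) * P(A) + P(B|not A) * P(not A)
p_b = p_b_given_a * p_a + p_b_given_not_a * (1 - p_a)
# posterior P(A|B) = P(B|A) * P(A) / P(B)
p_a_given_b = p_b_given_a * p_a / p_b
print('P(B) = %.4f' % p_b)
print('P(A|B) = %.3f' % p_a_given_b)
```

With these assumed values the posterior is much smaller than P(B|A), because the prior P(A) is small.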

The direct application of Bayes Theorem for classification becomes intractable, especially as the number of variables or features (n) increases. Instead, we can simplify the calculation and assume that each input variable is independent. Although this is a dramatic simplification, the simpler calculation often gives very good performance, even when the input variables are highly dependent.

We can implement this from scratch by assuming a probability distribution for each separate input variable, calculating the probability of each specific input value belonging to each class, and multiplying the results together to give a score used to select the most likely class.

- P(yi | x1, x2, …, xn) = P(x1|yi) * P(x2|yi) * … P(xn|yi) * P(yi)
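A from-scratch version of this scoring might look like the sketch below. It assumes a Gaussian distribution for each input variable, uses scipy.stats.norm for the density, and uses a synthetic two-class dataset from scikit-learn's make_blobs(). The helper names fit_class() and score() are made up for this illustration. The scores are unnormalized, so they rank the classes but do not sum to one.

```python
# sketch of naive bayes scoring from scratch, assuming gaussian inputs
from numpy import mean, std, prod
from scipy.stats import norm
from sklearn.datasets import make_blobs

# generate a synthetic 2d classification dataset
X, y = make_blobs(n_samples=100, centers=2, n_features=2, random_state=1)

# estimate the prior P(yi) and one gaussian per input variable for a class
def fit_class(X, y, label):
    rows = X[y == label]
    prior = len(rows) / len(X)
    dists = [norm(mean(rows[:, j]), std(rows[:, j])) for j in range(X.shape[1])]
    return prior, dists

# score = P(x1|yi) * P(x2|yi) * ... * P(yi), using densities for P(xj|yi)
def score(x, prior, dists):
    return prior * prod([d.pdf(v) for d, v in zip(dists, x)])

# fit the parameters for each class label
params = {label: fit_class(X, y, label) for label in (0, 1)}
# score the first example under each class and pick the largest score
scores = {label: score(X[0], *params[label]) for label in params}
predicted = max(scores, key=scores.get)
print('Scores:', scores)
print('Predicted: %d, Truth: %d' % (predicted, y[0]))
```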

The scikit-learn library provides an efficient implementation of the algorithm if we assume a Gaussian distribution for each input variable.

To use a scikit-learn Naive Bayes model, first the model is defined, then it is fit on the training dataset. Once fit, probabilities can be predicted via the predict_proba() function and class labels can be predicted directly via the predict() function.

The complete example of fitting a Gaussian Naive Bayes model (GaussianNB) to a test dataset is listed below.

```python
# example of gaussian naive bayes
from sklearn.datasets import make_blobs
from sklearn.naive_bayes import GaussianNB
# generate 2d classification dataset
X, y = make_blobs(n_samples=100, centers=2, n_features=2, random_state=1)
# define the model
model = GaussianNB()
# fit the model
model.fit(X, y)
# select a single sample
Xsample, ysample = [X[0]], y[0]
# make a probabilistic prediction
yhat_prob = model.predict_proba(Xsample)
print('Predicted Probabilities: ', yhat_prob)
# make a classification prediction
yhat_class = model.predict(Xsample)
print('Predicted Class: ', yhat_class)
print('Truth: y=%d' % ysample)
```

Running the example fits the model on the training dataset, then predicts the class probabilities and the class label for the first example in that dataset.

Your Task

For this lesson, you must run the example and report the result.

For a bonus, try the algorithm on a real classification dataset, such as the popular toy classification problem of classifying iris flower species based on flower measurements.

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover entropy and the cross-entropy scores.

Lesson 05: Entropy and Cross-Entropy

In this lesson, you will discover cross-entropy for machine learning.

Information theory is a field of study concerned with quantifying information for communication.

The intuition behind quantifying information is the idea of measuring how much surprise there is in an event. Those events that are rare (low probability) are more surprising and therefore have more information than those events that are common (high probability).

- Low Probability Event: High Information (surprising).

- High Probability Event: Low Information (unsurprising).

We can calculate the amount of information there is in an event using the probability of the event.

- Information(x) = -log( p(x) )
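As a minimal sketch, the information calculation can be written in a few lines. It assumes a base-2 logarithm so that the result is in bits; the helper name information() is made up for this illustration.

```python
# information for a single event, in bits (assumes a base-2 logarithm)
from math import log2

# information(x) = -log2(p(x))
def information(p):
    return -log2(p)

# a fair coin flip (p=0.5) is a common event
print('p=0.5: %.3f bits' % information(0.5))
# one face of a fair six-sided die (p=1/6) is rarer, so more surprising
print('p=1/6: %.3f bits' % information(1.0 / 6.0))
```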

We can also quantify how much information there is in a random variable.

This is called entropy and summarizes the amount of information required on average to represent events.

Entropy can be calculated for a random variable X with K discrete states as follows:

- Entropy(X) = -sum(i=1 to K) p(i) * log(p(i))
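The entropy calculation can be sketched in the same way. The helper name entropy() and the two example distributions (a fair six-sided die and a heavily weighted one) are assumptions chosen for illustration, and a base-2 logarithm is used to report bits.

```python
# entropy of a discrete random variable, in bits (assumes a base-2 logarithm)
from math import log2

# entropy = -sum of p * log2(p) over all states, skipping zero-probability states
def entropy(probs):
    return -sum(p * log2(p) for p in probs if p > 0)

# a fair six-sided die: every state is equally likely
fair = entropy([1.0 / 6.0] * 6)
# a heavily weighted die: one state dominates
skewed = entropy([0.95, 0.01, 0.01, 0.01, 0.01, 0.01])
print('fair: %.3f bits' % fair)
print('skewed: %.3f bits' % skewed)
```

The skewed distribution has lower entropy because most of its outcomes are unsurprising.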

Cross-entropy is a measure of the difference between two probability distributions for a given random variable or set of events. It is widely used as a loss function when optimizing classification models.

It builds upon the idea of entropy and calculates the average number of bits required to represent or transmit an event from one distribution compared to the other distribution.

- CrossEntropy(P, Q) = -sum(x in X) P(x) * log(Q(x))

We can make the calculation of cross-entropy concrete with a small example.

Consider a random variable with three events as different colors. We may have two different probability distributions for this variable. We can calculate the cross-entropy between these two distributions.

The complete example is listed below.

```python
# example of calculating cross entropy
from math import log2

# calculate cross entropy
def cross_entropy(p, q):
    return -sum([p[i]*log2(q[i]) for i in range(len(p))])

# define data
p = [0.10, 0.40, 0.50]
q = [0.80, 0.15, 0.05]
# calculate cross entropy H(P, Q)
ce_pq = cross_entropy(p, q)
print('H(P, Q): %.3f bits' % ce_pq)
# calculate cross entropy H(Q, P)
ce_qp = cross_entropy(q, p)
print('H(Q, P): %.3f bits' % ce_qp)
```

Running the example first calculates the cross-entropy of Q from P, then P from Q.

Your Task

For this lesson, you must run the example and describe the results and what they mean. For example, is the calculation of cross-entropy symmetrical?

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover how to develop and evaluate a naive classifier model.

Lesson 06: Naive Classifiers

In this lesson, you will discover how to develop and evaluate naive classification strategies for machine learning.

Classification predictive modeling problems involve predicting a class label given an input to the model.

Given a classification model, how do you know if the model has skill or not?

This is a common question on every classification predictive modeling project. The answer is to compare the results of a given classifier model to a baseline or naive classifier model.

Consider a simple two-class classification problem where the number of observations is not equal for each class (e.g. it is imbalanced) with 25 examples for class-0 and 75 examples for class-1. This problem can be used to consider different naive classifier models.

For example, consider a model that randomly predicts class-0 or class-1 with equal probability. How would it perform?

We can calculate the expected performance using a simple probability model.

- P(yhat = y) = P(yhat = 0) * P(y = 0) + P(yhat = 1) * P(y = 1)

We can plug in the occurrence of each class (0.25 and 0.75) and the predicted probability for each class (0.5 and 0.5) and estimate the performance of the model.

- P(yhat = y) = 0.5 * 0.25 + 0.5 * 0.75

- P(yhat = y) = 0.5

It turns out that this classifier is pretty poor.

Now, what if we consider predicting the majority class (class-1) every time? Again, we can plug in the predicted probabilities (0.0 and 1.0) and estimate the performance of the model.

- P(yhat = y) = 0.0 * 0.25 + 1.0 * 0.75

- P(yhat = y) = 0.75

It turns out that this simple change results in a better naive classification model, and is perhaps the best naive classifier to use when classes are imbalanced.
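The two calculations above can be reproduced with a short sketch. The helper name expected_accuracy() is made up for this illustration; it applies the same probability model, summing P(yhat = c) * P(y = c) over the classes.

```python
# expected accuracy of a naive classifier on the 25/75 imbalanced problem

# P(yhat = y) = sum over classes c of P(yhat = c) * P(y = c)
def expected_accuracy(pred_probs, class_probs):
    return sum(ph * py for ph, py in zip(pred_probs, class_probs))

# occurrence of class-0 and class-1 in the dataset
class_probs = [0.25, 0.75]
# predict each class with equal probability
uniform = expected_accuracy([0.5, 0.5], class_probs)
# always predict the majority class (class-1)
majority = expected_accuracy([0.0, 1.0], class_probs)
print('Uniform random: %.3f' % uniform)
print('Majority class: %.3f' % majority)
```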

The scikit-learn machine learning library provides an implementation of the majority class naive classification algorithm called the DummyClassifier that you can use on your next classification predictive modeling project.

The complete example is listed below.

```python
# example of the majority class naive classifier in scikit-learn
from numpy import asarray
from sklearn.dummy import DummyClassifier
from sklearn.metrics import accuracy_score
# define dataset
X = asarray([0 for _ in range(100)])
class0 = [0 for _ in range(25)]
class1 = [1 for _ in range(75)]
y = asarray(class0 + class1)
# reshape data for sklearn
X = X.reshape((len(X), 1))
# define model
model = DummyClassifier(strategy='most_frequent')
# fit model
model.fit(X, y)
# make predictions
yhat = model.predict(X)
# calculate accuracy
accuracy = accuracy_score(y, yhat)
print('Accuracy: %.3f' % accuracy)
```

Running the example prepares the dataset, then defines and fits the DummyClassifier on the dataset using the majority class strategy.

Your Task

For this lesson, you must run the example and report the result, confirming whether the model performs as we expected from our calculation.

As a bonus, calculate the expected probability of a naive classifier model that randomly chooses a class label from the training dataset each time a prediction is made.

Post your answer in the comments below. I would love to see what you come up with.

In the next lesson, you will discover metrics for scoring models that predict probabilities.

Lesson 07: Probability Scores

In this lesson, you will discover two scoring methods that you can use to evaluate the predicted probabilities on your classification predictive modeling problem.

Predicting probabilities instead of class labels for a classification problem can provide additional nuance and uncertainty for the predictions.

The added nuance allows more sophisticated metrics to be used to interpret and evaluate the predicted probabilities.

Let’s take a closer look at the two popular scoring methods for evaluating predicted probabilities.

Log Loss Score

Logistic loss, or log loss for short, calculates the negative log likelihood of the observed class labels under the predicted probabilities.

Although developed for training binary classification models like logistic regression, it can be used to evaluate multi-class problems and is functionally equivalent to calculating the cross-entropy derived from information theory.

A model with perfect skill has a log loss score of 0.0. The log loss can be implemented in Python using the log_loss() function in scikit-learn.

For example:

```python
# example of log loss
from numpy import asarray
from sklearn.metrics import log_loss
# define data
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [0.8, 0.9, 0.9, 0.6, 0.8, 0.1, 0.4, 0.2, 0.1, 0.3]
# define data as expected, e.g. probability for each event {0, 1}
y_true = asarray([[v, 1-v] for v in y_true])
y_pred = asarray([[v, 1-v] for v in y_pred])
# calculate log loss
loss = log_loss(y_true, y_pred)
print(loss)
```
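To see what the score is doing, the same quantity can be computed by hand for the binary case. This is a sketch that assumes binary labels and a predicted probability for class 1; the helper name binary_log_loss() is made up for this illustration. It compares the result with log_loss() called on the plain label and probability lists.

```python
# manual calculation of binary log loss, compared with scikit-learn
from math import log
from sklearn.metrics import log_loss

# define data: true labels and predicted probabilities for class 1
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [0.8, 0.9, 0.9, 0.6, 0.8, 0.1, 0.4, 0.2, 0.1, 0.3]

# average of -(y * log(p) + (1 - y) * log(1 - p)) over all examples
def binary_log_loss(y_true, y_pred):
    total = 0.0
    for y, p in zip(y_true, y_pred):
        total += -(y * log(p) + (1 - y) * log(1 - p))
    return total / len(y_true)

manual = binary_log_loss(y_true, y_pred)
reference = log_loss(y_true, y_pred)
print('manual: %.4f' % manual)
print('scikit-learn: %.4f' % reference)
```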

Brier Score

The Brier score, named for Glenn Brier, calculates the mean squared error between predicted probabilities and the expected values.

The score summarizes the magnitude of the error in the probability forecasts.

The error score is always between 0.0 and 1.0, where a model with perfect skill has a score of 0.0.

The Brier score can be calculated in Python using the brier_score_loss() function in scikit-learn.

For example:

```python
# example of brier loss
from sklearn.metrics import brier_score_loss
# define data
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [0.8, 0.9, 0.9, 0.6, 0.8, 0.1, 0.4, 0.2, 0.1, 0.3]
# calculate brier score
score = brier_score_loss(y_true, y_pred, pos_label=1)
print(score)
```
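Because the Brier score is a mean squared error, it is easy to check by hand. The sketch below assumes binary labels and a predicted probability for class 1; the helper name brier_score() is made up for this illustration.

```python
# manual calculation of the brier score as a mean squared error

# define data: true labels and predicted probabilities for class 1
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [0.8, 0.9, 0.9, 0.6, 0.8, 0.1, 0.4, 0.2, 0.1, 0.3]

# mean of the squared differences between predicted probability and label
def brier_score(y_true, y_pred):
    return sum((p - y) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)

manual_score = brier_score(y_true, y_pred)
print('%.3f' % manual_score)
```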

Your Task

For this lesson, you must run each example and report the results.

As a bonus, change the mock predictions to make them better or worse and compare the resulting scores.

Post your answer in the comments below. I would love to see what you come up with.

This was the final lesson.

The End!

(Look How Far You Have Come)

You made it. Well done!

Take a moment and look back at how far you have come.

You discovered:

- The importance of probability in applied machine learning.

- The three main types of probability and how to calculate them.

- Probability distributions for random variables and how to draw random samples from them.

- How Bayes theorem can be used to calculate conditional probability and how it can be used in a classification model.

- How to calculate information, entropy, and cross-entropy scores and what they mean.

- How to develop and evaluate the expected performance for naive classification models.

- How to evaluate the skill of a model that predicts probability values for a classification problem.

Take the next step and check out my book on Probability for Machine Learning.

Summary

How did you do with the mini-course?

Did you enjoy this crash course?

Do you have any questions? Were there any sticking points?

Let me know. Leave a comment below.

Lesson 2: “For this lesson, you must practice calculating joint, marginal, and conditional probabilities.”

This might be a stupid question but “how”? I even googled for calculating joint… etc, watched some khan videos and found some classroom pdfs obviously prepared by universities but I couldn’t embody the concept in my mind so I can comprehend it.

Maybe I am not suitable for this passion. Sorry if my question is stupid again.

Good question, this will help:

https://machinelearningmastery.com/joint-marginal-and-conditional-probability-for-machine-learning/

Wow, thank you I will read the post. And I will use the search function before asking questions:)

Thanks.

Concept of Joint, Marginal and conditional probability is clear to me but please provide the python code to understand this concept with other example.

See this:

https://machinelearningmastery.com/how-to-calculate-joint-marginal-and-conditional-probability/

Hi

please tell me how do you plot the sample to show if it is normally distributed ?

(the third day of course)

See this tutorial:

https://machinelearningmastery.com/a-gentle-introduction-to-normality-tests-in-python/

I have gone through Entropy and Cross entropy. There are many divergence measures also. That, how to find the distance between two probability distributions? But your tutorials are nice and your work is amazing. I want to learn more and more.

See this on kl-divergence:

https://machinelearningmastery.com/divergence-between-probability-distributions/

```python
import pandas as pd
from sklearn.naive_bayes import GaussianNB

iris = pd.read_csv('https://raw.githubusercontent.com/jbrownlee/Datasets/master/iris.csv',
                   header=None)  # importing the dataset
modelIris = GaussianNB()
modelIris.fit(iris.iloc[:, 0:4], iris.iloc[:, 4])  # fitting the model
modelIris.predict([[7.2, 4, 5, .5]])  # model predicts this data point to be a versicolor
```

Nice work!

Three reasons to learn probability.

1. To make my foundations strong.

2. To understand beauty of mathematics.

3. To predict the future.

Nice work!

My Top-3 Reasons:

1. Explain the feasibility(uncertainty) of ML models and their explanation in simplest of terms to Business users, utilizing probability as base

2. Generating effective/actionable business insights using applied probability

3. Representing any real world scenarios using “Conditional Probability” ( somehow feel this how LIFE works)

Well done!

My top three reasons to learn probability:

1. To understand different probability concepts like likelihood, cross entropy better.

2. I want to specialise in NLP where a lot of the algorithms are probabilistic in nature such as LDA, i want to understand those better.

3. I want to easily read and implement machine learning and deep learning based papers easily.

Thanks!

I am replying as part of the emails sent to my inbox. Here are the three reasons I believe ML practitioners should understand probability:

1. To make a valid experiment or test. For a specific example, statements of what outcome or output proves a certain theory should be reasonable. The wording and logic should be correct. Taking a somewhat famous case of statistics being misused:

The probability that a randomly chosen person matches a criminal’s description is not the same as the probability that a given person who does match a criminal’s description is guilty. If the criminal’s appearance is so unique that the probability of a random person matching it is 1 out of 12 billion, that does not mean a man with no supporting evidence connecting him to the crime but does match the description going to be innocent 1 out of 12 billion times.

2. ML practitioners need to know what makes differences in measures/values (mean, median, differences in variance, standard deviation or properly scaled units of measure) are “significant” or different enough to be evidence.

3. Certain lessons in probability could help find patterns in data or results, such as “seasonality”.

4. Bonus: Knowledge in probability can help optimize code or algorithms (code patterns) in niche cases.

Nice work!

So, if I understand the cross-entropy, it’s not symmetric because the same outcome doesn’t have the same significance for the two sets.

For instance, if I have a weighted die which has a 95% chance of rolling a 6, and a 1% of each other outcome, and a fair die with a 17% chance of rolling each number, then if I roll a 6 on one of the dice, I only favour it being the weighted one about 6:1, but if I roll anything else I favour it being the fair one about 17:1. (This assumes my priors is I’m equally likely to have picked either, let’s say I just own the two dice).

Then if I pick the weighted die, I’ll have to roll it a few times to convince myself it’s the weighted on, but if I pick the unweighted one, I’ll convince myself it’s that one in many fewer rolls (if I only need to be 2-sigma confident, probably in 1 roll)

Good question, yes kl-divergence and cross-entropy are not symmetrical.

Also, this may help:

https://machinelearningmastery.com/cross-entropy-for-machine-learning/

In “Lesson 03: Probability Distributions”:

“A discrete random variable has a finite set of states”. This description is not exact.

It instead should be “A discrete random variable has a countable set of states”.

For example, if a discrete random variable takes value from N* = {1,2,3,4,5…}.

This set is countable, but not finite.

Thanks for your precision, but in practice, if it’s not finite, we must model it a different way.

Three reasons:

1. Career development

2. Personal interest

3. Better understanding for ML algorithms

Thanks!

The code for plotting binomial distribution of flipping biased coin (p=0.7) 100 times.

```python
from numpy import random
import matplotlib.pyplot as plt
import seaborn as sns

sns.distplot(random.binomial(n=1, p=0.7, size=100), hist=True, kde=False)
```

Well done!

Lesson 1:

– Working in a subsurface discipline (geophysics), the data I receive have undergone a long chain of processing and interpretation. Data rarely come with uncertainty, normally just the “best estimate”. I would like to engage colleagues in other disciplines to propagate uncertainty as well, and then I need to include that in my own analysis

– Even after having statistics at university and repetition in ML courses, I find that you need to be exposed to probability estimation regularly to have it in your fingertips. It can’t be repeated too often

– There are so many useful tools available now in Python, and crash-courses like this is a good way to get an overview of the most useful ones

Nice work!

Lesson two:

A parallel classic case is the selection of one of three options, where only one gives a reward. You select one without revealing its content. It is then shown that one of the remaining options does not give a reward, and you get the option to switch from your original choice to the last one. Should you do it? The answer is yes. When you make the initial selection P(right) = 1/3. When it is revealed that another option was wrong the last option has P(right) = 2/3, but your first selection is still locked into the P(right) = 1/3. This shows the difference between marginal probability (the first selection) and the conditional probability (the second selection).

Well done!

the reasons to learn probability :

1- Mathematical form of predication starts with probability.

2- Arrange of data in graphical form provides insight of data like mean , SD.

3- Probability helps to define sample data and population.

Nice work!

Three reasons why we want to learn probability in the context of machine learning.

1. To evaluate our ML model’s performance mathematically. Training an ML model on larger datasets is quite a complex process, and as ML engineers we don’t know how the model learns patterns from larger datasets, so we can use probabilistic measures to evaluate how well the model has learned.

2. To pre-process data before giving it to ML models. ML models need clean and accurate data to learn; if the data is bad, then the results are also bad. So we need to assess the dataset before training ML models, and we can use probabilistic techniques to conduct these assessments.

3. To interpret ML model learning parameters. We can tune the model parameters to make a model that learns well, and that tuning process needs mathematical and probability knowledge.

Nice work!

Very nice tutorial to follow. I have a question: if I register, will I receive a free eBook about probability for machine learning?

Thanks!

Yes, if you sign up for the email course you will get a PDF version of the course sent to you via email.

1) Reinforcing and mastering my basic knowledge of probability will improve my problem-solving ability

2) To later master fuzzy logic, which I understand encompasses classical probability

3) To master understanding and coding ML algorithms

Well done.

Lesson 3:

# Sample an exponential distribution

from numpy.random import exponential

import matplotlib.pyplot as plt

n=10

# Generate the sample

sample_e=[]

for i in range(n):

    sample_e.append(exponential(scale=1.0))

print(sample_e)

plt.hist(sample_e)

plt.xlim(0,10)

plt.show()

Well done!

https://colab.research.google.com/drive/1C0pgkMAKhmSf9duK0Vw9dk6W-a0FPYtG?usp=sharing

H(P, Q): 3.323 bits

H(Q, P): 5.232 bits

The cross-entropy is not symmetrical.

Nice work!

Day 6: Naive Classifiers. The model performs as expected: it predicted the class with 75% accuracy, the highest possible for a naive classifier on this dataset. This is the result of setting “most_frequent” as the strategy in DummyClassifier.

The expected accuracy of a naive classifier that randomly chooses a class label from the training dataset each time a prediction is made is 62.5%. This can be estimated by setting the “stratified” strategy in DummyClassifier.

Well done.

I have tried two other probability distributions, multinomial and binomial:

from numpy.random import default_rng

rng = default_rng()

n=10

pvals = [0.1] * n

vals = rng.multinomial(n,pvals)

print(vals)

[1 1 0 2 0 0 3 0 2 1]

from numpy.random import default_rng

rng = default_rng()

n=10

pvals = [0.1] * n

vals = rng.binomial(n,pvals)

print(vals)

[4 2 0 1 2 2 0 0 0 1]

Good work! Keep on.

Task-1: Reasons why I want to learn probability in context of Machine Learning

1. To understand technical papers and blogs in a better way.

2. To enhance my knowledge in the domain of uncertainty estimation, to model uncertainties for the problem at hand.

3. To deepen my understanding of existing ML concepts like KL divergence, MLE, distributions, etc.

Thank you for the feedback Anuj! Please let me know if you have any questions I may assist you with.


Task-2: Boy Girl Problem

The probability of two sons, given that the first child is a boy, is 0.5.

So let’s assume that we have two random variables, F for the first child and S for the second child.

F can be boy or girl with probability 0.5 each, and likewise S can be boy or girl with probability 0.5 each.

Now our sample space has the following combinations: BB, BG, GB, GG.

If we don’t know the first child, the probability of two sons is 1/4 = 0.25.

Since we know the first child is a boy, it becomes a conditional probability and our sample space reduces to BB, BG. So the probability of two sons is 1/2 = 0.5.

That’s good. Keep on!

Task day 1:

Reasons for me to learn probability:

1. Probability is quite essential to classification predictive modeling.

2. A lot of machine learning models are trained using probability.

3. Probability is the core of statistics, which cannot be separated much from machine learning.

Lesson 2:

Two possible events: both boys, or older boy and younger girl.

So the probability of both boys: 1/2

Lesson 3:

# binomial distribution

from numpy.random import binomial

# p: probability of success

p=0.2

# k: number of trials

k=50

# simulation

success = binomial(k, p)

print('Total Success: %d' % success)

Day4:

code:

# example of gaussian naive bayes

from sklearn.datasets import make_blobs

from sklearn.naive_bayes import GaussianNB

# generate 2d classification dataset

X, y = make_blobs(n_samples=100, centers=2, n_features=2, random_state=1)

# define the model

model = GaussianNB()

# fit the model

model.fit(X, y)

# select a single sample

Xsample, ysample = [X[0]], y[0]

# make a probabilistic prediction

yhat_prob = model.predict_proba(Xsample)

print('Predicted Probabilities: ', yhat_prob)

# make a classification prediction

yhat_class = model.predict(Xsample)

print('Predicted Class: ', yhat_class)

print('Truth: y=%d' % ysample)

output:

Predicted Probabilities: [[1.00000000e+00 5.52387327e-30]]

Predicted Class: [0]

Truth: y=0

LESSON 6:

The model has now successfully estimated the expected accuracy of 62.5%, after changing the strategy from “most_frequent” to “stratified”. The same principle can be explained by manually calculating the expected accuracy:

Method A) classifier not considering the majority class: (0.5 * 0.25 + 0.5 * 0.75)

Method B) classifier considering the majority class: (0.0 * 0.25 + 1.0 * 0.75)

P(yhat = y) = (Method A) * 0.5 + (Method B) * 0.5

P(yhat = y) = (0.5 * 0.25 + 0.5 * 0.75) * 0.5 + (0.0 * 0.25 + 1.0 * 0.75) * 0.5 = 0.625 = 62.5%
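The 62.5% figure can also be checked directly. This is a small sketch, assuming the 25%/75% class proportions used in this lesson and a classifier that samples its prediction from those same proportions, independently of the true label. In that case P(yhat = y) is the sum over classes of P(y = k) * P(yhat = k). The function names are illustrative and not part of scikit-learn.

```python
def expected_accuracy_stratified(priors):
    """Predictions are drawn from the class priors, independently of the truth."""
    return sum(p * p for p in priors)


def expected_accuracy_majority(priors):
    """Always predict the most frequent class."""
    return max(priors)


priors = [0.25, 0.75]  # class 0 and class 1 proportions
stratified = expected_accuracy_stratified(priors)
majority = expected_accuracy_majority(priors)
print(f"stratified:    {stratified:.3f}")
print(f"most_frequent: {majority:.3f}")
```

Here 0.25 * 0.25 + 0.75 * 0.75 = 0.625, which is lower than the 0.75 obtained by always predicting the majority class.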

Thank you for the feedback Paul! Keep up the great work!

1. I want to develop a new classification of human virtues and character strengths

2. I want to shine as a data scientist

3. I’m interested to see how merging different categories will affect the probability of the project I’m currently doing

4. I love math and especially statistics

5. I’m highly motivated to have extensive knowledge of different ML algorithms and I know that a deep understanding of probability is the foundation for understanding them

Lesson 04: NB

Sepal dataset (additional exercise)

If we set random_state when splitting into train and test sets for multinomial classification, we always get the same results (accuracy, precision, recall, F1), in contrast to, for example, a neural network.

After multiple runs, we can see that all measures are very similar, between 0.95 and 1. –> We developed a strong ML model for this particular dataset.

If the dataset is representative of the whole population, we are ready to predict the species among these 3 flowers with multinomial NB with high confidence, based on sepal length, sepal width, petal length, and petal width.

It would be interesting to add another category representing all other flowers with similar sepal and petal dimensions and see how our algorithm performs.

Lesson 06: Naive classifier (Dummy Classifier)

I could probably have written more elegant code, but I hope my code and thinking will be useful to other students. Please comment if I made a mistake.

Bonus task:

# original code

# example of the majority class naive classifier in scikit-learn

from numpy import asarray

from sklearn.dummy import DummyClassifier

from sklearn.metrics import accuracy_score

# define dataset

X = asarray([0 for _ in range(100)])

class0 = [0 for _ in range(25)]

class1 = [1 for _ in range(75)]

y = asarray(class0 + class1)

# reshape data for sklearn

X = X.reshape((len(X), 1))

# define model

model = DummyClassifier(strategy='most_frequent')

# fit model

model.fit(X, y)

# make predictions

yhat = model.predict(X)

# calculate accuracy

accuracy = accuracy_score(y, yhat)

print('Accuracy: %.3f' % accuracy)

# my code

print(y)

print(X)

# counter to check numbers in y

def counter(variable):

    zeros = []

    ones = []

    other = []

    for x in variable:

        if x == 0:

            zeros.append(x)

        elif x == 1:

            ones.append(x)

        else:

            other.append(x)

    return len(zeros), len(ones), len(other)

counter(y)

# random prediction:

uniform = DummyClassifier(strategy='uniform')

# fit model

uniform.fit(X, y)

# make predictions

ypred = uniform.predict(X)

# calculate accuracy

accuracy_rand = accuracy_score(y, ypred)

print('Accuracy: %.3f' % accuracy_rand)

# 100 trials, with mean and standard deviation

trials = []

for a in range(0, 100):

    uniform = DummyClassifier(strategy='uniform')

    # fit model

    uniform.fit(X, y)

    # make predictions

    ypred = uniform.predict(X)

    # calculate accuracy

    accuracy_rand = accuracy_score(y, ypred)

    print(f'Accuracy in trial {a+1} is {accuracy_rand}')

    trials.append(accuracy_rand)

import statistics as s

print(round(s.mean(trials),2), round(s.stdev(trials),2))

"""

To conclude –> if we run the random classifier enough times, the mean accuracy settles at the expected value (M=0.5).

It’s useful to know the sd (0.05).

Try to run the code multiple times, and see if you get the same M and sd 🙂

"""
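The mean of 0.5 and sd of 0.05 are what a binomial argument predicts. This is a sketch under the assumption that each of the 100 uniform guesses is correct with probability 0.5, independently of the others, so the accuracy is a Binomial(100, 0.5) count divided by 100. It uses only the standard library rather than DummyClassifier, and the helper name `simulate_accuracies` is illustrative.

```python
import math
import random
import statistics

n = 100  # number of examples, matching the 25/75 dataset
p = 0.5  # chance that a uniform guess between two classes is correct

expected_mean = p
expected_sd = math.sqrt(p * (1 - p) / n)  # sd of a binomial proportion


def simulate_accuracies(trials=2000, seed=1):
    """Accuracy of uniform random guessing, repeated over many trials."""
    rng = random.Random(seed)
    labels = [0] * 25 + [1] * 75
    accuracies = []
    for _ in range(trials):
        guesses = [rng.randrange(2) for _ in labels]
        correct = sum(g == y for g, y in zip(guesses, labels))
        accuracies.append(correct / len(labels))
    return accuracies


accs = simulate_accuracies()
sim_mean = statistics.mean(accs)
sim_sd = statistics.stdev(accs)
print(f"expected:  mean={expected_mean:.2f}, sd={expected_sd:.2f}")
print(f"simulated: mean={sim_mean:.2f}, sd={sim_sd:.2f}")
```

Note that the class imbalance does not matter here: a uniform guess matches the true label half the time whichever class the example belongs to.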


Lesson1 – Here are my reasons:

In some cases you may have your own metric based on probability for classification

Before defining a problem, testing its feasibility based on probability could avoid wasting time.

Understanding the logic of metrics and algorithms based on probability leads to the right choice for a problem

Great feedback Nurgul! We appreciate it!

Lesson 01: 3 Reasons to Learn Probability

(1) I want to learn how to think better about the likelihoods of phenomena occurring.

(2) I want to better understand the various components that are necessary to calculate an accurate probability.

(3) I want to start thinking about solutions probabilistically and understand how I can use probabilistic models to arrive at those solutions.

Thank you Sara for your feedback! Keep up the great work and let us know if we can help answer any questions as you work through the course!

Lesson 02: Boy or Girl Paradox

I believe the answer is 0.5 since the only remaining options are Boy+Boy or Boy+Girl, and so the chances of Boy+Boy are 1/2.

Lesson 03: Probability Distribution

import numpy as np

import matplotlib.pyplot as plt

# sample a pareto distribution

# generate the sample

shape, mode = 10, 5

sample = np.random.pareto(shape, 1000)

print(sample)

s = (np.random.pareto(shape, 1000) + 1) * mode

count, bins, _ = plt.hist(s, 100, density=True)

fit = shape*mode**shape / bins**(shape+1)

plt.plot(bins, max(count)*fit/max(fit), linewidth=2, color='r')

plt.show()

Lesson 04: Naive Bayes Classifier

Predicted Probabilities: [[1.00000000e+00 5.52387327e-30]]

Predicted Class: [0]

Truth: y=0

Jason

Three reasons I want to learn probability are

1. Probability has been a thorn in my flesh since the lecturer who taught me in my undergrad never explained it at my level of understanding.

2. You have introduced this topic in an interesting way and I am hopeful I will understand and use probability in machine learning

3. I have tackled other crash courses with you and they have been of great help in application.

Lesson 01:

My reasons for attending the course:

1) wish to understand the probability way of thinking

2) interest in machine learning

3) hope for not-so-abstract explanations

Thank you for your feedback Hein! We appreciate it!

For Lesson 2 task:

total_outcomes = 2 * 2 # Two children, each with two possibilities (boy or girl)

# Define the events

event_BB = 1 # outcomes with two boys: BB

event_B = 3 # outcomes with at least one boy: BB, BG, GB

# Calculate probabilities

probability_BB = event_BB / total_outcomes

probability_B = event_B / total_outcomes

probability_BB_given_B = probability_BB / probability_B

# Print the results

print(f"Joint Probability P(BB): {probability_BB}")

print(f"Marginal Probability P(B): {probability_B}")

print(f"Conditional Probability P(BB | at least one child is a boy): {probability_BB_given_B}")

The output:

Joint Probability P(BB): 0.25

Marginal Probability P(B): 0.75

Conditional Probability P(BB | at least one child is a boy): 0.3333333333333333

Please let me know whether is correct or not. Thanks
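The result depends on which condition is used. This is a minimal sketch, assuming boys and girls are equally likely and the two children are independent. Counting 3 of the 4 outcomes corresponds to “at least one child is a boy” and gives 1/3, while “the older child is a boy” keeps only 2 of the 4 outcomes and gives 1/2. The helper names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Each outcome is (older child, younger child); all four are equally likely.
outcomes = list(product("BG", repeat=2))


def conditional(event, given):
    """P(event | given) by counting equally likely outcomes."""
    given_outcomes = [o for o in outcomes if given(o)]
    both = [o for o in given_outcomes if event(o)]
    return Fraction(len(both), len(given_outcomes))


def two_boys(o):
    return o == ("B", "B")


def older_is_boy(o):
    return o[0] == "B"


def at_least_one_boy(o):
    return "B" in o


p_older = conditional(two_boys, older_is_boy)
p_any = conditional(two_boys, at_least_one_boy)
print(f"P(BB | older child is a boy) = {p_older}")
print(f"P(BB | at least one boy)     = {p_any}")
```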

Lesson 4: Use Iris dataset.

The output: Predicted Probabilities: [[1.00000000e+00 2.72754528e-18 1.38410356e-24]]

Predicted Class: [0]

Truth: y=0

Lesson 5: No. The calculation of cross-entropy is not symmetrical. Cross-entropy is a measure of how well one probability distribution (q) predicts another distribution (p). The cross-entropy from p to q is different from the cross-entropy from q to p.

The cross-entropy from distribution P to distribution Q is 3.288 bits, and the cross-entropy from distribution Q to distribution P is 2.906 bits. These two values are different, indicating that the order of the distributions matters in cross-entropy calculations.
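The asymmetry is easy to check by computing H(P, Q) = -sum(p * log2(q)) in both directions. In this sketch the two distributions are an assumption, since the comment does not state which ones were used; with these values the calculation gives the same 3.288 and 2.906 bits.

```python
from math import log2


def cross_entropy(p, q):
    """Cross-entropy H(p, q) in bits for two discrete distributions."""
    return -sum(pi * log2(qi) for pi, qi in zip(p, q))


# Assumed example distributions over three events (not taken from the comment).
p = [0.10, 0.40, 0.50]
q = [0.80, 0.15, 0.05]

h_pq = cross_entropy(p, q)
h_qp = cross_entropy(q, p)
print(f"H(P, Q): {h_pq:.3f} bits")
print(f"H(Q, P): {h_qp:.3f} bits")
```

The roles differ because the first argument supplies the weights and the second supplies the log terms, so swapping them changes the sum.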

Lesson 7: A Log Loss score of 0.2469 is not a perfect score. In Log Loss, the ideal score is 0. A Brier Score of 0.057 is generally considered a good result. The Brier Score ranges from 0 to 1, where lower values indicate better predictive performance.
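To see why 0 is the ideal value for both scores, here is a small sketch of the two metrics for binary labels. The labels and predicted probabilities are made-up illustrative values, not the ones from the lesson, and the functions are plain re-implementations of the standard definitions (scikit-learn provides `log_loss` and `brier_score_loss` for real use).

```python
from math import log


def log_loss(y_true, y_prob, eps=1e-15):
    """Mean negative log-likelihood for binary labels (natural log)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * log(p) + (1 - y) * log(1 - p))
    return total / len(y_true)


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


# Hypothetical labels and predicted probabilities of class 1.
y_true = [1, 0, 1, 1, 0]
good = [0.9, 0.1, 0.8, 0.7, 0.2]
perfect = [1.0, 0.0, 1.0, 1.0, 0.0]

print(f"good:    log loss={log_loss(y_true, good):.4f}, brier={brier_score(y_true, good):.4f}")
print(f"perfect: log loss={log_loss(y_true, perfect):.4f}, brier={brier_score(y_true, perfect):.4f}")
```

Both scores shrink toward 0 as the predicted probabilities approach the true labels, so lower is better in each case.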

Three reasons why I want to learn probability in the context of machine learning:

1) It’s fundamental

2) It transfers to eventually understanding Quantum Mechanics and Quantum Field Theory

3) It is a practical application of probability I can share with my students

Thank you for your feedback Henry! Please reach out with any questions so that we may better guide you toward achieving these goals.