The Transformer encoder and decoder share many similarities, such as their implementation of multi-head attention, layer normalization, and a fully connected feed-forward network as their final sub-layer. Having implemented the Transformer encoder, you will now apply that knowledge to implement the Transformer decoder as a further step toward implementing the complete Transformer model. Your end goal remains to apply the complete model to Natural Language Processing (NLP).

In this tutorial, you will discover how to implement the Transformer decoder from scratch in TensorFlow and Keras.

After completing this tutorial, you will know:

- The layers that form part of the Transformer decoder

- How to implement the Transformer decoder from scratch


Let’s get started.


Tutorial Overview

This tutorial is divided into three parts; they are:

- Recap of the Transformer Architecture

    - The Transformer Decoder

- Implementing the Transformer Decoder From Scratch

    - The Decoder Layer

    - The Transformer Decoder

- Testing Out the Code

Prerequisites

For this tutorial, we assume that you are already familiar with:

- The Transformer model

- The scaled dot-product attention

- The multi-head attention

- The Transformer positional encoding

- The Transformer encoder

Recap of the Transformer Architecture

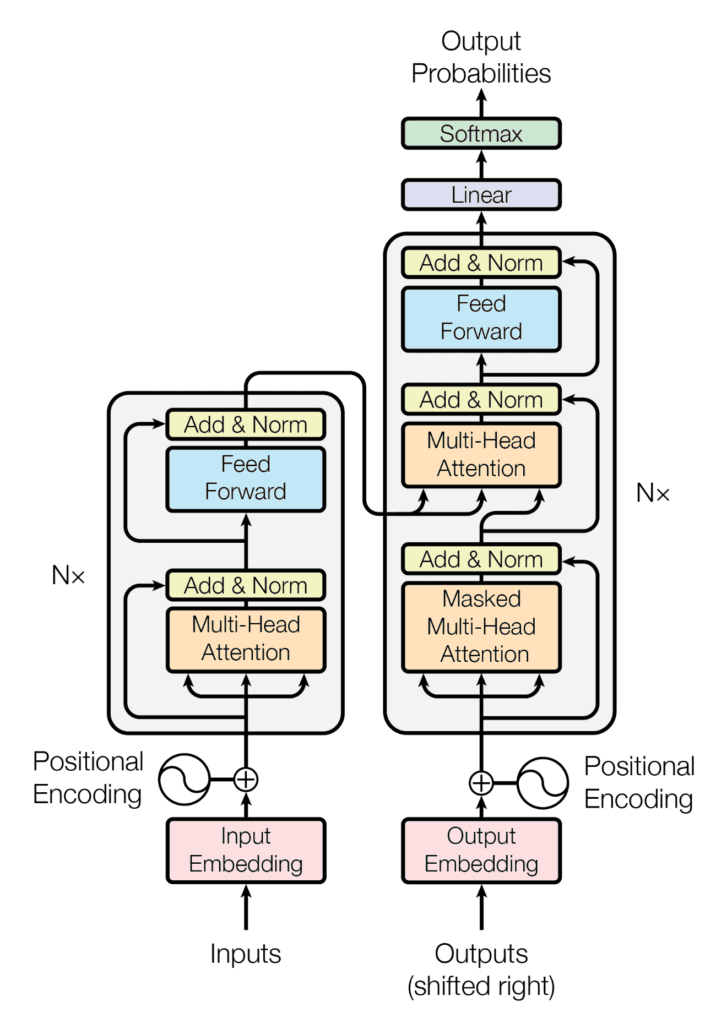

Recall that the Transformer architecture follows an encoder-decoder structure. The encoder, on the left-hand side, is tasked with mapping an input sequence to a sequence of continuous representations; the decoder, on the right-hand side, receives the output of the encoder together with the decoder output at the previous time step to generate an output sequence.

The encoder-decoder structure of the Transformer architecture

Taken from “Attention Is All You Need”

In generating an output sequence, the Transformer does not rely on recurrence or convolutions.

You have seen that the decoder part of the Transformer shares many similarities in its architecture with the encoder. This tutorial will explore these similarities.

The Transformer Decoder

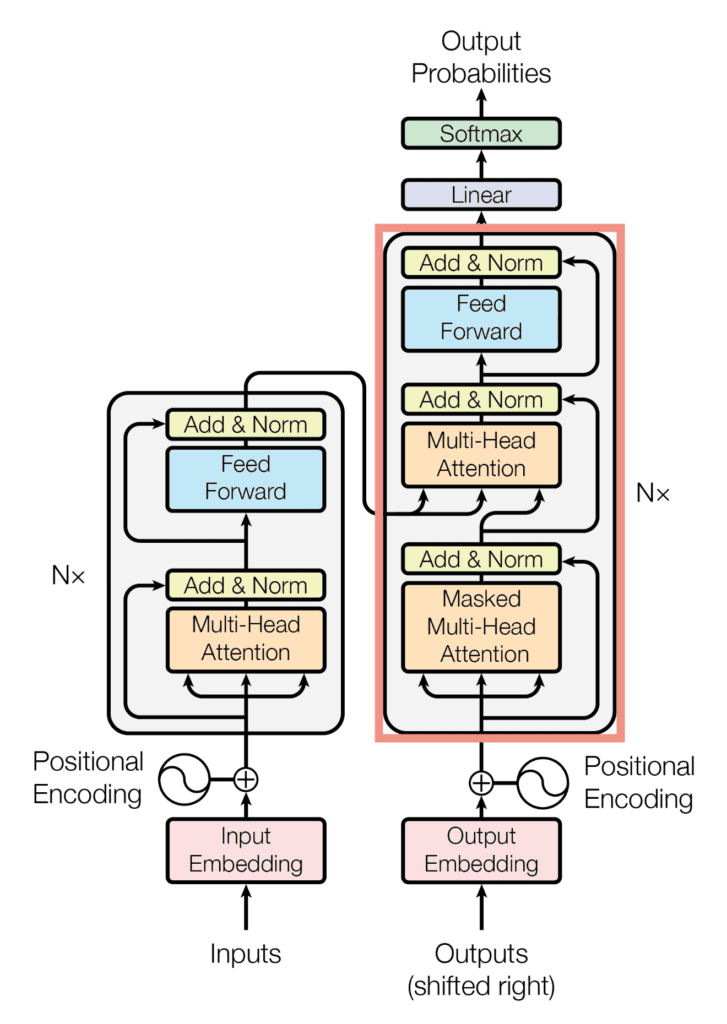

Similar to the Transformer encoder, the Transformer decoder also consists of a stack of $N$ identical layers. The Transformer decoder, however, implements an additional multi-head attention block for a total of three main sub-layers:

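- The first sub-layer comprises a multi-head self-attention mechanism whose queries, keys, and values all come from the decoder input. A look-ahead mask restricts each position to attending only to the positions before it.

- The second sub-layer comprises a second multi-head attention mechanism that takes its queries from the first sub-layer and its keys and values from the encoder output.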

- The third sub-layer comprises a fully-connected feed-forward network.

The decoder block of the Transformer architecture

Taken from “Attention Is All You Need”

Each of these three sub-layers is also followed by layer normalization, where the input to the layer normalization step is the sum of the sub-layer’s input (carried through a residual connection) and the sub-layer’s output.
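To make the Add & Norm step concrete, here is a minimal NumPy sketch rather than the Keras layer used later in this tutorial. The function name `add_and_norm` and the `eps` value are assumptions made for illustration, and the learnable scale and shift of a full layer normalization are left out.

```python
import numpy as np

def add_and_norm(x, sublayer_output, eps=1e-6):
    # Residual connection: add the sub-layer input to its output
    summed = x + sublayer_output
    # Normalize each position over the feature (last) axis
    mean = summed.mean(axis=-1, keepdims=True)
    var = summed.var(axis=-1, keepdims=True)
    return (summed - mean) / np.sqrt(var + eps)

rng = np.random.default_rng(0)
x = rng.normal(size=(2, 5, 8))        # (batch_size, sequence_length, d_model)
sub_out = rng.normal(size=(2, 5, 8))  # sub-layer output, same shape as x
normed = add_and_norm(x, sub_out)
print(normed.shape)
```

The shape is unchanged by this step, which is why every sub-layer in the decoder can consume the output of the one before it.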

On the decoder side, the queries, keys, and values that are fed into the first multi-head attention block also represent the same input sequence. However, this time around, it is the target sequence that is embedded and augmented with positional information before being supplied to the decoder. On the other hand, the second multi-head attention block receives the encoder output in the form of keys and values and the normalized output of the first decoder attention block as the queries. In both cases, the dimensionality of the queries and keys remains equal to $d_k$, whereas the dimensionality of the values remains equal to $d_v$.
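The roles of the queries, keys, and values in the second attention block can be sketched with a single-head scaled dot-product attention in NumPy. This is only an illustration under simplifying assumptions: there are no learned projections and no multiple heads, and the sequence lengths `dec_len` and `enc_len` are hypothetical values chosen to differ so the shapes are easy to tell apart.

```python
import numpy as np

def scaled_dot_product_attention(q, k, v):
    # Scores have shape (batch, query_len, key_len)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(q.shape[-1])
    # Softmax over the key axis (shifted for numerical stability)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
d_k, d_v = 64, 64
dec_len, enc_len = 5, 7  # hypothetical target and source lengths

queries = rng.normal(size=(2, dec_len, d_k))  # from the decoder
keys = rng.normal(size=(2, enc_len, d_k))     # from the encoder output
values = rng.normal(size=(2, enc_len, d_v))   # from the encoder output

output, weights = scaled_dot_product_attention(queries, keys, values)
print(output.shape)   # (2, 5, 64)
print(weights.shape)  # (2, 5, 7)
```

The output has one row per decoder position, while each row of attention weights spans the encoder positions.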

Vaswani et al. introduce regularization into the model on the decoder side, too, by applying dropout to the output of each sub-layer (before the layer normalization step), as well as to the positional encodings before these are fed into the decoder.

Let’s now see how to implement the Transformer decoder from scratch in TensorFlow and Keras.


Implementing the Transformer Decoder from Scratch

The Decoder Layer

Since you have already implemented the required sub-layers when you covered the implementation of the Transformer encoder, you will create a class for the decoder layer that makes use of these sub-layers straight away:

```python
from tensorflow.keras.layers import Layer, Dropout
from multihead_attention import MultiHeadAttention
from encoder import AddNormalization, FeedForward

class DecoderLayer(Layer):
    def __init__(self, h, d_k, d_v, d_model, d_ff, rate, **kwargs):
        super(DecoderLayer, self).__init__(**kwargs)
        # Masked self-attention over the target sequence
        self.multihead_attention1 = MultiHeadAttention(h, d_k, d_v, d_model)
        self.dropout1 = Dropout(rate)
        self.add_norm1 = AddNormalization()
        # Attention over the encoder output
        self.multihead_attention2 = MultiHeadAttention(h, d_k, d_v, d_model)
        self.dropout2 = Dropout(rate)
        self.add_norm2 = AddNormalization()
        # Position-wise feed-forward network
        self.feed_forward = FeedForward(d_ff, d_model)
        self.dropout3 = Dropout(rate)
        self.add_norm3 = AddNormalization()
    ...
```

Notice here that since the code for the different sub-layers had been saved into several Python scripts (namely, multihead_attention.py and encoder.py), it was necessary to import them to be able to use the required classes.

As you did for the Transformer encoder, you will now create the call() method, which implements all the decoder sub-layers:

```python
...
    def call(self, x, encoder_output, lookahead_mask, padding_mask, training):
        # Multi-head attention layer
        multihead_output1 = self.multihead_attention1(x, x, x, lookahead_mask)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Add in a dropout layer
        multihead_output1 = self.dropout1(multihead_output1, training=training)

        # Followed by an Add & Norm layer
        addnorm_output1 = self.add_norm1(x, multihead_output1)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Followed by another multi-head attention layer
        multihead_output2 = self.multihead_attention2(addnorm_output1, encoder_output, encoder_output, padding_mask)

        # Add in another dropout layer
        multihead_output2 = self.dropout2(multihead_output2, training=training)

        # Followed by another Add & Norm layer
        addnorm_output2 = self.add_norm2(addnorm_output1, multihead_output2)

        # Followed by a fully connected layer
        feedforward_output = self.feed_forward(addnorm_output2)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Add in another dropout layer
        feedforward_output = self.dropout3(feedforward_output, training=training)

        # Followed by another Add & Norm layer
        return self.add_norm3(addnorm_output2, feedforward_output)
```

The multi-head attention sub-layers can also receive a padding mask or a look-ahead mask. As a brief reminder of what was said in a previous tutorial, the padding mask is necessary to suppress the zero padding in the input sequence from being processed along with the actual input values. The look-ahead mask prevents the decoder from attending to succeeding words, such that the prediction for a particular word can only depend on known outputs for the words that come before it.
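The two masks can be sketched in NumPy as follows. This is an illustration only: it assumes the convention that a value of 1 marks a position to suppress and that token ID 0 stands for padding, and the function names are hypothetical.

```python
import numpy as np

def padding_mask(token_ids):
    # 1.0 where the token is zero padding, 0.0 elsewhere
    return (token_ids == 0).astype(np.float32)

def lookahead_mask(length):
    # 1.0 above the main diagonal: position i may not attend to positions > i
    return np.triu(np.ones((length, length), dtype=np.float32), k=1)

tokens = np.array([[4, 9, 2, 0, 0]])  # hypothetical padded target sequence
print(padding_mask(tokens))
print(lookahead_mask(5))
```

Under this convention, a masked position would typically have a large negative number added to its attention score before the softmax, so that its attention weight becomes effectively zero.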

The same call() method also receives a training flag, so that the Dropout layers are applied only during training, when the flag’s value is set to True.
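The effect of the training flag can be illustrated with a small NumPy sketch of dropout. This is a simplified stand-in written for this explanation, not the Keras Dropout layer itself; the function signature and the explicit random generator argument are assumptions.

```python
import numpy as np

def dropout(x, rate, training, rng):
    # At inference time the input passes through unchanged
    if not training:
        return x
    # Keep each unit with probability (1 - rate) and rescale the survivors
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)

rng = np.random.default_rng(0)
x = np.ones((2, 4))
print(dropout(x, rate=0.1, training=False, rng=rng))  # unchanged
print(dropout(x, rate=0.1, training=True, rng=rng))   # some units zeroed, the rest scaled up
```

Rescaling the surviving units keeps the expected sum of the activations the same with and without dropout.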

The Transformer Decoder

The Transformer decoder takes the decoder layer you have just implemented and replicates it identically $N$ times.

You will create the following Decoder() class to implement the Transformer decoder:

```python
from positional_encoding import PositionEmbeddingFixedWeights

class Decoder(Layer):
    def __init__(self, vocab_size, sequence_length, h, d_k, d_v, d_model, d_ff, n, rate, **kwargs):
        super(Decoder, self).__init__(**kwargs)
        self.pos_encoding = PositionEmbeddingFixedWeights(sequence_length, vocab_size, d_model)
        self.dropout = Dropout(rate)
        # Stack of n identical decoder layers
        self.decoder_layer = [DecoderLayer(h, d_k, d_v, d_model, d_ff, rate) for _ in range(n)]
    ...
```

As in the Transformer encoder, the first multi-head attention block on the decoder side receives its input sequence after the sequence has undergone word embedding and positional encoding. For this purpose, an instance of the PositionEmbeddingFixedWeights class (covered in the positional encoding tutorial listed in the prerequisites) is initialized and assigned to the pos_encoding attribute.
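As a reminder of what embedding plus positional encoding produces, here is a NumPy sketch of the sinusoidal encoding from the paper added to a lookup table of word vectors. It is not the code of the PositionEmbeddingFixedWeights class: the function name, the random `embedding_table` standing in for word embeddings, and the small dimensions are all assumptions for illustration, and `d_model` is assumed to be even.

```python
import numpy as np

def sinusoidal_encoding(seq_len, d_model, n=10000):
    positions = np.arange(seq_len)[:, np.newaxis]  # (seq_len, 1)
    i = np.arange(d_model // 2)[np.newaxis, :]     # (1, d_model / 2)
    angles = positions / np.power(n, 2 * i / d_model)
    encoding = np.zeros((seq_len, d_model))
    encoding[:, 0::2] = np.sin(angles)  # even feature indices
    encoding[:, 1::2] = np.cos(angles)  # odd feature indices
    return encoding

rng = np.random.default_rng(0)
vocab_size, seq_len, d_model = 20, 5, 8
embedding_table = rng.normal(size=(vocab_size, d_model))  # stand-in for word embeddings
token_ids = np.array([[3, 7, 1, 0, 0]])                   # hypothetical target tokens

x = embedding_table[token_ids] + sinusoidal_encoding(seq_len, d_model)
print(x.shape)  # (1, 5, 8)
```

The result has shape (batch_size, sequence_length, d_model), which is the shape the first decoder layer expects.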

The final step is to create the call() method, which applies word embedding and positional encoding to the target sequence and feeds the result, together with the encoder output, to $N$ decoder layers:

```python
...
    def call(self, output_target, encoder_output, lookahead_mask, padding_mask, training):
        # Generate the positional encoding
        pos_encoding_output = self.pos_encoding(output_target)
        # Expected output shape = (number of sentences, sequence_length, d_model)

        # Add in a dropout layer
        x = self.dropout(pos_encoding_output, training=training)

        # Pass on the positional encoded values to each decoder layer
        for layer in self.decoder_layer:
            x = layer(x, encoder_output, lookahead_mask, padding_mask, training)

        return x
```

The code listing for the full Transformer decoder is the following:

```python
from tensorflow.keras.layers import Layer, Dropout
from multihead_attention import MultiHeadAttention
from positional_encoding import PositionEmbeddingFixedWeights
from encoder import AddNormalization, FeedForward

# Implementing the Decoder Layer
class DecoderLayer(Layer):
    def __init__(self, h, d_k, d_v, d_model, d_ff, rate, **kwargs):
        super(DecoderLayer, self).__init__(**kwargs)
        self.multihead_attention1 = MultiHeadAttention(h, d_k, d_v, d_model)
        self.dropout1 = Dropout(rate)
        self.add_norm1 = AddNormalization()
        self.multihead_attention2 = MultiHeadAttention(h, d_k, d_v, d_model)
        self.dropout2 = Dropout(rate)
        self.add_norm2 = AddNormalization()
        self.feed_forward = FeedForward(d_ff, d_model)
        self.dropout3 = Dropout(rate)
        self.add_norm3 = AddNormalization()

    def call(self, x, encoder_output, lookahead_mask, padding_mask, training):
        # Multi-head attention layer
        multihead_output1 = self.multihead_attention1(x, x, x, lookahead_mask)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Add in a dropout layer
        multihead_output1 = self.dropout1(multihead_output1, training=training)

        # Followed by an Add & Norm layer
        addnorm_output1 = self.add_norm1(x, multihead_output1)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Followed by another multi-head attention layer
        multihead_output2 = self.multihead_attention2(addnorm_output1, encoder_output, encoder_output, padding_mask)

        # Add in another dropout layer
        multihead_output2 = self.dropout2(multihead_output2, training=training)

        # Followed by another Add & Norm layer
        addnorm_output2 = self.add_norm2(addnorm_output1, multihead_output2)

        # Followed by a fully connected layer
        feedforward_output = self.feed_forward(addnorm_output2)
        # Expected output shape = (batch_size, sequence_length, d_model)

        # Add in another dropout layer
        feedforward_output = self.dropout3(feedforward_output, training=training)

        # Followed by another Add & Norm layer
        return self.add_norm3(addnorm_output2, feedforward_output)

# Implementing the Decoder
class Decoder(Layer):
    def __init__(self, vocab_size, sequence_length, h, d_k, d_v, d_model, d_ff, n, rate, **kwargs):
        super(Decoder, self).__init__(**kwargs)
        self.pos_encoding = PositionEmbeddingFixedWeights(sequence_length, vocab_size, d_model)
        self.dropout = Dropout(rate)
        self.decoder_layer = [DecoderLayer(h, d_k, d_v, d_model, d_ff, rate) for _ in range(n)]

    def call(self, output_target, encoder_output, lookahead_mask, padding_mask, training):
        # Generate the positional encoding
        pos_encoding_output = self.pos_encoding(output_target)
        # Expected output shape = (number of sentences, sequence_length, d_model)

        # Add in a dropout layer
        x = self.dropout(pos_encoding_output, training=training)

        # Pass on the positional encoded values to each decoder layer
        for layer in self.decoder_layer:
            x = layer(x, encoder_output, lookahead_mask, padding_mask, training)

        return x
```

Testing Out the Code

You will work with the parameter values specified in the paper, Attention Is All You Need, by Vaswani et al. (2017):

```python
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_ff = 2048  # Dimensionality of the inner fully connected layer
d_model = 512  # Dimensionality of the model sub-layers' outputs
n = 6  # Number of layers in the decoder stack

batch_size = 64  # Batch size from the training process
dropout_rate = 0.1  # Frequency of dropping the input units in the dropout layers
...
```

As for the input sequence, you will work with dummy data for the time being until you arrive at the stage of training the complete Transformer model in a separate tutorial, at which point you will use actual sentences:

```python
from numpy import random
...
dec_vocab_size = 20  # Vocabulary size for the decoder
input_seq_length = 5  # Maximum length of the input sequence

input_seq = random.random((batch_size, input_seq_length))
enc_output = random.random((batch_size, input_seq_length, d_model))
...
```
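Note that random.random returns floats in the range [0, 1). If the positional encoding class looks tokens up in an embedding table, integer token IDs may be a closer stand-in for real data. The following sketch shows that alternative with the same variable names; it is an assumption about what more realistic dummy data could look like, not what the listing above does.

```python
from numpy import random

batch_size = 64
dec_vocab_size = 20
input_seq_length = 5
d_model = 512

# Integer token IDs in [1, dec_vocab_size); 0 is left free for padding
input_seq = random.randint(1, dec_vocab_size, size=(batch_size, input_seq_length))
# The encoder output stays a float array of shape (batch_size, sequence_length, d_model)
enc_output = random.random((batch_size, input_seq_length, d_model))

print(input_seq.shape, input_seq.dtype)
print(enc_output.shape)
```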

Next, you will create a new instance of the Decoder class, assigning its output to the decoder variable, subsequently passing in the input arguments, and printing the result. You will set the padding and look-ahead masks to None for the time being, but you will return to these when you implement the complete Transformer model:

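```python
...
decoder = Decoder(dec_vocab_size, input_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)
# Arguments: target sequence, encoder output, look-ahead mask, padding mask, training flag
print(decoder(input_seq, enc_output, None, None, True))
```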

Tying everything together produces the following code listing:

```python
from numpy import random

# Assumes the Decoder class from the listing above is defined in the same script

dec_vocab_size = 20  # Vocabulary size for the decoder
input_seq_length = 5  # Maximum length of the input sequence
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_ff = 2048  # Dimensionality of the inner fully connected layer
d_model = 512  # Dimensionality of the model sub-layers' outputs
n = 6  # Number of layers in the decoder stack

batch_size = 64  # Batch size from the training process
dropout_rate = 0.1  # Frequency of dropping the input units in the dropout layers

input_seq = random.random((batch_size, input_seq_length))
enc_output = random.random((batch_size, input_seq_length, d_model))

decoder = Decoder(dec_vocab_size, input_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)
# Look-ahead and padding masks are both set to None for now
print(decoder(input_seq, enc_output, None, None, True))
```

Running this code produces an output of shape (batch size, sequence length, model dimensionality). Note that you will likely see a different output due to the random initialization of the input sequence and the parameter values of the Dense layers.

```
tf.Tensor(
[[[-0.04132953 -1.7236308 0.5391184 ... -0.76394725 1.4969798 0.37682498]
  [ 0.05501875 -1.7523409 0.58404493 ... -0.70776534 1.4498456 0.32555297]
  [ 0.04983566 -1.8431275 0.55850077 ... -0.68202156 1.4222856 0.32104644]
  [-0.05684051 -1.8862512 0.4771412 ... -0.7101341 1.431343 0.39346313]
  [-0.15625843 -1.7992781 0.40803364 ... -0.75190556 1.4602519 0.53546077]]

 ...

 [[-0.58847624 -1.646842 0.5973466 ... -0.47778523 1.2060764 0.34091905]
  [-0.48688865 -1.6809179 0.6493542 ... -0.41274604 1.188649 0.27100053]
  [-0.49568555 -1.8002801 0.61536175 ... -0.38540334 1.2023914 0.24383534]
  [-0.59913146 -1.8598882 0.5098136 ... -0.3984461 1.2115746 0.3186561 ]
  [-0.71045107 -1.7778647 0.43008155 ... -0.42037937 1.2255307 0.47380894]]], shape=(64, 5, 512), dtype=float32)
```


Summary

In this tutorial, you discovered how to implement the Transformer decoder from scratch in TensorFlow and Keras.

Specifically, you learned:

- The layers that form part of the Transformer decoder

- How to implement the Transformer decoder from scratch

Do you have any questions?

Ask your questions in the comments below, and I will do my best to answer.

This series of blog posts on transformers is the best way to learn about transformers on the internet. Thank you!

You are very welcome Dev! We appreciate the feedback and support!

Very informative, as always.

One question: are there any limitations of decoder-only transformers such as GPT in NLP applications?

Is there any student discount for the GAN and transformer models material? And how can these models, especially transformer models, be applied to satellite images?

Hi Sreedhar…Please send an email regarding your questions on student discounts.

Hi and thank you for all those amazing free tutorials!

I think I have spotted a typo:

In the code listing for the full Transformer decoder (and the respective part given above it), in line 39, instead of

addnorm_output2 = self.add_norm1(addnorm_output1, multihead_output2)

I think it should be:

addnorm_output2 = self.add_norm2(addnorm_output1, multihead_output2)

Sorry if I missed something! Thanks again – cheers!

Thank you for your feedback and support! We greatly appreciate it!

Yet it still hasn’t been fixed. Does Jason Brownlee still run this site?

Thank you for your feedback! Yes, he does!

Many thanks, Dear Jason Brownlee.

I’ve followed all of your tutorials on transformers.

I’ve learned a lot, and I just want to express my thanks.

However, I have a small suggestion. Could you please create a guide on implementing Transformers specifically for time series data, focusing on forecasting, classification, or anomaly detection? One explanation would be sufficient for us.

Thank you in advance.

Hi Amir…Thank you for your support, feedback and suggestions! Your suggestion is a great one! Please ensure you are subscribed to our newsletter so that you will be notified of new content.

Hi, great blog! Thanks for this.

I have a question. If the output of the final feed-forward layer is of the dimension (sequence_length, model_dim), would this not vary at every time step, since the input sequence length to the first masked multi-head attention head is the decoded sequence so far? Would the queries for the cross-attention layer not be increasing in number by 1 at every time step? Wouldn’t that also mean that the input to the Linear projection layer changes in dimension at every time step? This can’t happen though.

So how is the varying length of the decoder input accounted for? Do we assume that the number of queries in the decoder at every time step is fixed ( = maximum output sequence length) and the masking is what prevents future noise from coming into the queries?

Thanks in advance, and cheers!

Hi Ak…Your question delves into the mechanics of how sequence length is managed in the Transformer model, particularly in the decoder during tasks like text generation. Let’s break down the concepts and address your concerns step by step.

### Understanding Transformer Decoding

1. **Output of the Final Feed-Forward Layer**:

    - The output dimension of the final feed-forward layer in the decoder is indeed (sequence_length, model_dim). This represents the hidden states of the decoded sequence at a particular time step.

2. **Sequence Length and Masking**:

    - In a Transformer decoder, the input sequence length can vary as you generate tokens step by step. However, the model handles this dynamically.

### Handling Varying Sequence Lengths

1. **Masked Multi-Head Attention**:

    - At each time step $t$, the decoder generates one token and then uses all tokens generated so far to predict the next token. This means at time step $t$, the decoder’s input sequence length is $t$.

    - To prevent the model from attending to future tokens (which are not yet generated), the decoder uses a **causal mask** (or look-ahead mask) during the masked multi-head attention. This mask ensures that for each position $i$ in the sequence, the model can only attend to positions $0$ to $i$.

2. **Cross-Attention**:

    - In the cross-attention layer, the queries come from the decoder’s previous layer (at the current time step), and the keys and values come from the encoder’s output (which is fixed for a given input sequence).

    - The number of queries in the cross-attention corresponds to the current length of the decoded sequence.

### Linear Projection Layer

- **Dynamic Input Handling**:

    - The input to the linear projection layer indeed varies in sequence length at each time step. However, this is accounted for by ensuring that operations within the model are compatible with variable sequence lengths.

    - The linear projection layer applies the same set of weights to each position in the sequence, regardless of its length. This is a common operation in sequence models.

### Sequence Length Management

- **Padding and Masking**:

    - To handle sequences of varying lengths efficiently within batches, padding is used. Sequences are padded to the maximum length in the batch, and a mask is applied to ensure the padding tokens do not affect the computation.

    - During generation, the decoder processes one token at a time, updating the sequence length dynamically with each step.

### Example: Generation Process

1. **Time Step 1**:

    - Decoder input: [SOS]

    - Mask: Allows attention only to [SOS]

    - Output: First token prediction

2. **Time Step 2**:

    - Decoder input: [SOS, First_Token]

    - Mask: Allows attention to [SOS, First_Token]

    - Output: Second token prediction

3. **Subsequent Time Steps**:

    - This process repeats, each time the sequence length increasing by one and the mask extending to allow attention to the entire generated sequence so far.

### Fixed Length Queries and Masking

- **Fixed Length Queries**:

    - The model does not assume a fixed length for queries. Instead, it dynamically adjusts based on the sequence generated up to that point.

    - Future positions are masked out to prevent the model from accessing tokens that have not yet been generated.

In summary, the Transformer decoder handles varying sequence lengths dynamically through padding, masking, and efficient use of batch processing. The causal mask ensures that only valid tokens are attended to at each step, maintaining the integrity of the generation process.
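The generation process described above can be sketched as a greedy decoding loop. This is a toy illustration only: `toy_decoder` returns random logits in place of a trained decoder, and the token IDs, vocabulary size, and maximum length are hypothetical values.

```python
import numpy as np

SOS, EOS, VOCAB_SIZE, MAX_LEN = 1, 2, 10, 6  # hypothetical token IDs and limits

def toy_decoder(tokens, rng):
    # Stand-in for a trained decoder: one row of logits per input position,
    # so the output length always equals the current input length
    return rng.normal(size=(len(tokens), VOCAB_SIZE))

def greedy_decode(rng):
    tokens = [SOS]
    for _ in range(MAX_LEN - 1):
        logits = toy_decoder(tokens, rng)        # shape (len(tokens), VOCAB_SIZE)
        next_token = int(np.argmax(logits[-1]))  # only the last position is used
        tokens.append(next_token)
        if next_token == EOS:
            break
    return tokens

decoded = greedy_decode(np.random.default_rng(0))
print(decoded)
```

The decoder input grows by one token per step, and the same weights are applied to every position, so no layer needs a fixed sequence length.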

The call method of Decoder layer expects 5 arguments:

call(self, output_target, encoder_output, lookahead_mask, padding_mask, training)

While testing out the code only 4 are given.

decoder(input_seq, enc_output, None, True)

Please correct this.

Thank you Tushar!