Skills in deep learning are in great demand, although these skills can be challenging to identify and to demonstrate.

Explaining that you are familiar with a technique or type of problem is very different to being able to use it effectively with open source APIs on real datasets.

Perhaps the most effective way of demonstrating skill as a deep learning practitioner is by developing models. A practitioner can practice on standard, publicly available machine learning datasets and build up a portfolio of completed projects, both to draw on in future projects and to demonstrate competence.

In this post, you will discover how you can use small projects to demonstrate basic competence in using deep learning for predictive modeling.

After reading this post, you will know:

- Explaining deep learning math, theory, and methods is not sufficient to demonstrate competence.

- Developing a portfolio of completed small projects allows you to demonstrate your ability to develop and deliver skillful models.

- Using a systematic five-step project template to execute projects and a nine-step template for presenting results allows you to both methodically complete projects and clearly communicate findings.

Let’s get started.

Simple Framework That You Can Use to Demonstrate Basic Deep Learning Competence

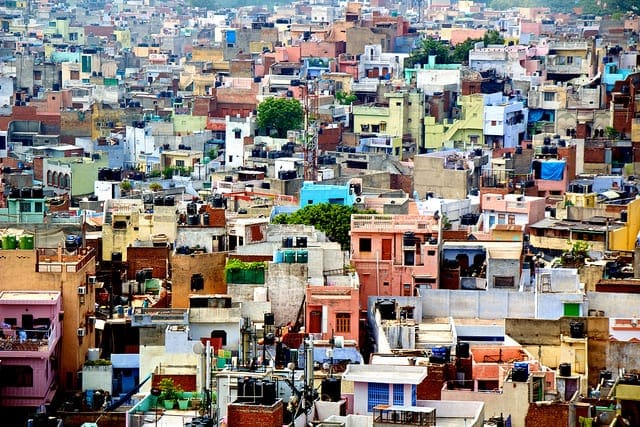

Photo by Angela and Andrew, some rights reserved.

Overview

This tutorial is divided into five parts; they are:

- How Would You Demonstrate Basic Deep Learning Competence?

- Demonstrate Skills With a Portfolio

- How to Select Portfolio Projects

- Template for Systematic Projects

- Template for Presenting Results

How Would You Demonstrate Basic Deep Learning Competence?

How do you know that you have a basic competence with deep learning methods for predictive modeling problems?

- Perhaps you have read a book?

- Perhaps you have worked through some tutorials?

- Perhaps you are familiar with the APIs?

If you had to, how would you demonstrate this competence to someone else?

- Perhaps you could explain common problems and how to address them?

- Perhaps you could summarize popular techniques?

- Perhaps you could reference notable papers?

Is this enough?

If you were hiring a deep learning practitioner for a role, would this satisfy you?

It’s not enough, and it would not satisfy me.

Demonstrate Skills With a Portfolio

The solution is to use the same techniques that modern businesses are using to hire developers.

Developers can be quizzed all day long on math and how algorithms work, but what businesses need is someone who can deliver working and maintainable code.

The same applies to deep learning practitioners.

Practitioners can be quizzed all day long on the math of gradient descent and backpropagation, but what businesses need is someone who can deliver stable models and skillful predictions.

This can be achieved through developing a portfolio of completed projects using open source deep learning libraries and standard machine learning datasets.

The portfolio has three main uses:

- Develop Skills. The portfolio can be used by the practitioner to develop and demonstrate the skills incrementally, leveraging work from prior projects on larger and more challenging future projects.

- Demonstrate Skills. The portfolio can be used by an employer to confirm the practitioner can deliver stable results and skillful predictions.

- Discuss Skills. The portfolio can be used as a starting point for a discussion in an interview where techniques, results, and design decisions are described, understood, and defended.

There are many problem types and many specialized types of data loading and neural network models to address them, such as problems in computer vision, time series, and natural language processing.

Before specialization, you must be able to demonstrate foundational skills. Specifically, you must be able to demonstrate that you are able to work through the steps of an applied machine learning project systematically using the techniques from deep learning.

This then raises the question:

- What projects should you use to demonstrate foundational skills and how should those projects be structured to best demonstrate those skills?

How to Select Portfolio Projects

Use standard and publicly available machine learning datasets.

Ideally, these are datasets that are available under terms that allow reuse, such as public domain, GPL, or Creative Commons, so that you can freely copy them and perhaps even redistribute them with your completed project.

There are many ways to choose a dataset, such as interest in the domain, prior experience, difficulty, etc.

Instead, I recommend being strategic in the choice of the datasets that you include in your portfolio. Three approaches to dataset selection that I recommend are:

- Popularity. A good starting point might be to select popular datasets, such as among the most viewed or most cited datasets. Popular datasets are familiar and can provide points of comparison and help to simplify their presentation.

- Problem Type. Another approach might be to choose datasets according to general classes of problems, such as regression, binary classification, and multi-class classification. Demonstrating skill across basic problem types is important.

- Problem Property. A final approach might be to select datasets based on a specific property of the dataset for which you want to demonstrate proficiency, such as class imbalance or a mixture of input variable types. This area is often overlooked, and real data are rarely as clean and simple as standard machine learning datasets; finding examples that offer more of a challenge provides excellent demonstrations.

Two excellent places to locate and download standard machine learning datasets are:

Small Data. I recommend starting with small datasets that fit in memory (RAM), such as many of those on the UCI Machine Learning Repository. This allows you to focus on data preparation and modeling, at least initially, and to work through many different configurations rapidly. Larger datasets result in models that are much slower to train and may require cloud infrastructure.

Good Enough Performance. I also recommend not aiming for the best possible model performance on the dataset. A dataset is really a manifestation of a predictive modeling problem, which in reality can become a research project of its own with no end. Instead, the focus is on establishing a threshold for defining a skillful model, then demonstrating that you can develop and wield a skillful model for the problem.

Small Scope. Finally, I recommend keeping the projects small, ideally completed in a normal work day, although you may need to spread the work out over nights and weekends. Each project has one aim: to work through the dataset systematically and deliver a skillful model. Be aware that without careful time boxing, the project can easily get away from you.

In summary:

- Use standard publicly available machine learning datasets.

- Choose a dataset strategically.

- Prefer smaller datasets that fit in memory.

- Aim to develop skillful models, not optimal models.

- Keep each project small and focused.

Completed projects of this nature offer a lot of benefits, including:

- Demonstration of a methodical approach to solving prediction problems.

- Demonstration of knowledge of appropriate APIs for data handling and model evaluation.

- Demonstration of capability with specific deep learning models and techniques.

- Demonstration of time and scope management given that you deliver a skillful model.

- Demonstration of good communication in the presentation of the results and findings.

Template for Systematic Projects

It is critical that a given dataset is worked through in a systematic manner.

There are standard steps in a predictive modeling problem, and being systematic demonstrates both that you are aware of the steps and that you have considered them on the project.

Being systematic on portfolio projects highlights that you would be equally systematic on new projects.

The steps of a project in your portfolio may include the following.

- Problem Description. Describe the predictive modeling problem including the domain and relevant background.

- Summarize Data. Describe the available data, including statistical summaries and data visualization.

- Evaluate Models. Spot-check a suite of model types, configurations, data preparation schemes, and more in order to narrow down what works well on the problem.

- Improve Performance. Improve the performance of the model or models that work well with hyperparameter tuning and perhaps ensemble methods.

- Present Results. Present the findings of the project.
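The Evaluate Models step can be sketched in a few lines. The example below is only an illustration, not a prescribed method: it assumes scikit-learn is installed, uses a synthetic dataset from `make_classification` as a stand-in for a real standard dataset, and the three candidate models and their names are arbitrary choices for the demonstration.

```python
# Sketch of the "Evaluate Models" step: spot-check several candidates on one harness.
# Assumptions: scikit-learn is installed; synthetic data stands in for a real dataset.
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Small in-memory binary classification problem (hypothetical stand-in data).
X, y = make_classification(n_samples=300, n_features=10, n_informative=5, random_state=1)

# Candidate models; scaling lives inside each pipeline so it is fit on training folds only.
candidates = {
    "logistic": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    "mlp_small": make_pipeline(
        StandardScaler(),
        MLPClassifier(hidden_layer_sizes=(8,), max_iter=1000, random_state=1),
    ),
    "mlp_wide": make_pipeline(
        StandardScaler(),
        MLPClassifier(hidden_layer_sizes=(32,), max_iter=1000, random_state=1),
    ),
}

# Evaluate every candidate with the same 5-fold cross-validation and metric.
spot_check = {}
for name, model in candidates.items():
    scores = cross_val_score(model, X, y, scoring="accuracy", cv=5)
    spot_check[name] = scores.mean()
    print(f"{name}: {scores.mean():.3f} (+/- {scores.std():.3f})")
```

Keeping every candidate on the same harness is what makes the scores comparable; the better performers would then move on to the Improve Performance step.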

A step before this process, a step zero, might be to choose the open source deep learning and machine learning libraries that you wish to use for the demonstration.

I would encourage you to narrow the scope wherever possible. Some additional tips include:

- Use repeated k-fold cross-validation to evaluate models, especially with smaller datasets that fit into memory.

- Use a hold-out test set that can be used to demonstrate the ability to make predictions and evaluate a final best-performing model.

- Establish a baseline performance in order to provide a threshold of what defines a skillful or non-skillful model.

- Publicly present your results, including all code and data, ideally in a public location you own and control such as GitHub or a blog.
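The first three tips can be combined into one small test harness. What follows is a minimal sketch under stated assumptions, not a definitive implementation: it assumes scikit-learn, uses synthetic data in place of a real dataset, takes a majority-class `DummyClassifier` as the baseline, and uses a small `MLPClassifier` as a stand-in for whatever neural network you are developing.

```python
# Sketch of a test harness: hold-out test set, repeated k-fold CV, and a baseline.
# Assumptions: scikit-learn is installed; data and model choices are illustrative only.
from sklearn.datasets import make_classification
from sklearn.dummy import DummyClassifier
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score, train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=10, n_informative=5, random_state=1)

# Hold back a test set that is used only once, for the final model.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=1
)

# Repeated stratified k-fold: 5 folds x 3 repeats gives 15 scores per model.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=1)

# Baseline: always predict the most frequent class. A model must beat this to be skillful.
baseline = DummyClassifier(strategy="most_frequent")
baseline_scores = cross_val_score(baseline, X_train, y_train, scoring="accuracy", cv=cv)

# Candidate neural network, evaluated on exactly the same folds.
model = make_pipeline(
    StandardScaler(),
    MLPClassifier(hidden_layer_sizes=(16,), max_iter=1000, random_state=1),
)
model_scores = cross_val_score(model, X_train, y_train, scoring="accuracy", cv=cv)

print(f"baseline: {baseline_scores.mean():.3f}")
print(f"model:    {model_scores.mean():.3f}")

# Fit the chosen model on all training data and evaluate once on the hold-out set.
model.fit(X_train, y_train)
test_accuracy = model.score(X_test, y_test)
print(f"hold-out: {test_accuracy:.3f}")
```

Reporting the baseline next to the model score makes the threshold for "skillful" explicit, and the single hold-out evaluation shows the final model making predictions on data it has not seen.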

Getting good at working through projects in this manner is invaluable. With practice, you will be able to get good results quickly.

Specifically, you can achieve above-average results, perhaps even results within a few percent of optimal, within hours to days. Few practitioners are this disciplined and productive, even on standard problems.

Template for Presenting Results

The project is probably only as good as your ability to present it, including results and findings.

I strongly encourage you to use one (or all) of the following approaches in order to present your projects:

- Blog post. Write up your results as a blog post on your own blog.

- GitHub Repository. Store all code and data in a GitHub repository and present results using a hosted Markdown file or Notebook that allows rich text and images.

- YouTube Video. Present your results and findings in video format, perhaps with slides.

I also strongly encourage you to define the structure of the presentation prior to starting the project, and fill in the details as you go.

A template that I recommend when presenting project results is as follows:

- 1. Problem Description. Describes the problem that is being solved, the source of the data, inputs, and outputs.

- 2. Data Summary. Describes the distribution and relationships in the data and perhaps ideas for data preparation and modeling.

- 3. Test Harness. Describes how model selection will be performed including the resampling method and model evaluation metrics.

- 4. Baseline Performance. Describes the baseline model performance (using the test harness) that defines whether a model is skillful or not.

- 5. Experimental Results. Presents the experimental results, perhaps testing a suite of models, model configurations, data preparation schemes, and more. Each subsection should have some form of:

- 5.1 Intent: why run the experiment?

- 5.2 Expectations: what was the expected outcome of the experiment?

- 5.3 Methods: what data, models, and configurations are used in the experiment?

- 5.4 Results: what were the actual results of the experiment?

- 5.5 Findings: what do the results mean, how do they relate to expectations, what other experiments do they inspire?

- 6. Improvements (optional). Describes experimental results for attempts to improve the performance of the better performing models, such as hyperparameter tuning and ensemble methods.

- 7. Final Model. Describes the choice of a final model, including configuration and performance. It is a good idea to demonstrate saving and loading the model and demonstrate the ability to make predictions on a holdout dataset.

- 8. Extensions. Describes areas that were considered but not addressed in the project that could be explored in the future.

- 9. Resources. Describes relevant references to data, code, APIs, papers, and more.

These could be sections in a post or report, or sections of a slide presentation.
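For the Final Model section, the save, load, and predict round trip might look something like the sketch below. It rests on several assumptions: scikit-learn with Python's standard `pickle` module stands in for your chosen library, the data are synthetic, and the file name `final_model.pkl` is hypothetical. With a deep learning library such as Keras, you would typically use that library's own save and load functions instead.

```python
# Sketch of the "Final Model" step: fit, save, reload, and predict on a holdout set.
# Assumptions: scikit-learn is installed; pickle is used for persistence; data are synthetic.
import pickle
import tempfile
from pathlib import Path

from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=10, n_informative=5, random_state=1)
X_train, X_holdout, y_train, y_holdout = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=1
)

# Fit the final model; the scaler is saved together with the network in one pipeline.
final_model = make_pipeline(
    StandardScaler(),
    MLPClassifier(hidden_layer_sizes=(16,), max_iter=1000, random_state=1),
)
final_model.fit(X_train, y_train)

# Save to disk and load it back (a temporary directory keeps the example tidy).
with tempfile.TemporaryDirectory() as tmp_dir:
    model_path = Path(tmp_dir) / "final_model.pkl"  # hypothetical file name
    with open(model_path, "wb") as f:
        pickle.dump(final_model, f)
    with open(model_path, "rb") as f:
        loaded_model = pickle.load(f)

# The reloaded model should make the same holdout predictions as the original.
original_predictions = final_model.predict(X_holdout)
loaded_predictions = loaded_model.predict(X_holdout)
holdout_accuracy = loaded_model.score(X_holdout, y_holdout)
print(f"Holdout accuracy of reloaded model: {holdout_accuracy:.3f}")
print("Predictions match:", (original_predictions == loaded_predictions).all())
```

Saving the data preparation and the model as one object avoids the common mistake of reloading a model and then feeding it inputs that were scaled differently from the training data.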

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Posts

- Applied Machine Learning Process

- How to Use a Machine Learning Checklist to Get Accurate Predictions

- Build a Machine Learning Portfolio

Datasets

Summary

In this post, you discovered how to demonstrate basic competence in using deep learning for predictive modeling.

Specifically, you learned:

- Explaining deep learning math, theory, and methods is not sufficient to demonstrate competence.

- Developing a portfolio of completed small projects allows you to demonstrate your ability to develop and deliver skillful models.

- Using a systematic five-step project template to execute projects and a nine-step template for presenting results allows you to both methodically complete projects and clearly communicate findings.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Special thanks to Jason Brownlee for his creativity, for developing skills in machine learning and deep learning, and for the way he conveys his deep learning skills and expertise to beginners in a simple way.

I have learned deep learning by following his mini-courses, instructions, and articles. I have finished my first deep learning paper, using an LSTM model for environmental multivariate time series forecasting with the Keras and TensorFlow deep learning libraries in a Python SciPy environment. It has been accepted by one of the big IEEE conferences and is ready to be published in a well-known scientific magazine.

Thanks.

Well done, that is wonderful news for you!

I think that the best example of the ideas in this post about how to demonstrate deep learning competence is Mr. Jason Brownlee's own tutorials, where the introduction to the theoretical concepts is always accompanied by fully working code for a particular project. Great job!

Thanks.

Is there not a problem with conventional neural networks where you have multiple linear classifiers (weighted sums) operating off one vector? Presuming you want each one to do a different classification (and why wouldn’t you?), they are going to run out of ways of splitting the data very quickly. It could be that you should only have, say, 10 weighted sums operating off one vector, each one finding weaker and weaker splits. A solution is suggested using random projections:

https://discourse.processing.org/t/flaw-in-current-neural-networks/11512

Thanks for sharing Sean.