Machine learning methods have a lot to offer for time series forecasting problems.

A difficulty is that most methods are demonstrated on simple univariate time series forecasting problems.

In this post, you will discover a suite of challenging time series forecasting problems. These are problems where classical linear statistical methods will not be sufficient and where more advanced machine learning methods are required.

If you are looking for challenging time series datasets to practice machine learning techniques, you are in the right place.


Let’s dive in.

Challenging Machine Learning Time Series Forecasting Problems

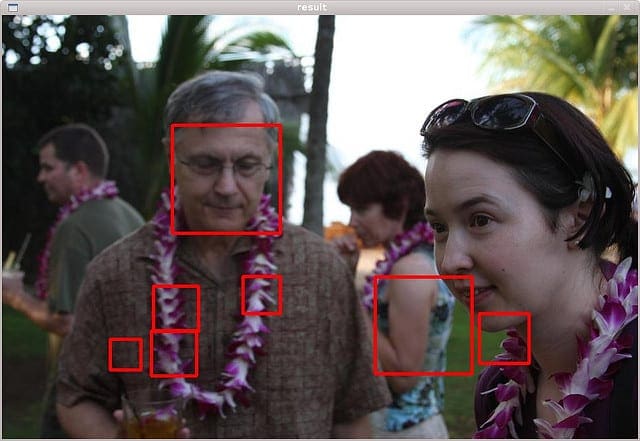

Photo by Joao Trindade, some rights reserved.

Overview

We will take a closer look at 10 challenging time series datasets from the competitive data science website Kaggle.com.

Not all datasets are strict time series prediction problems; I have been loose in the definition and also included problems that were a time series before obfuscation or have a clear temporal component.

They are:

- How Much Did It Rain? I and II

- Online Product Sales

- Rossmann Store Sales

- Walmart Recruiting – Store Sales Forecasting

- Acquire Valued Shoppers Challenge

- Melbourne University AES/MathWorks/NIH Seizure Prediction

- AMS 2013-2014 Solar Energy Prediction Contest

- Global Energy Forecasting Competition 2012 – Wind Forecasting

- EMC Data Science Global Hackathon (Air Quality Prediction)

- Grupo Bimbo Inventory Demand

These are not all of the time series datasets hosted on Kaggle.

Did I miss a good one? Let me know in the comments below.


How Much Did It Rain? I and II

Given observations and derived measures from polarimetric radar, the problem is to predict the probability distribution of the hourly total in a rain gage.

The temporal structure (e.g. hour to hour) was removed as part of obfuscating the data, which would have made it an interesting time series problem.

The competition was run twice in the same year with different datasets.

The second competition was won by Aaron Sim, who used a very large recurrent neural network algorithm.

Blog posts interviewing competition winners are accessible here.

Online Product Sales

Given details of the product and the product launch, the problem is to predict the next 12 months of sales figures.

This is a multi-step forecast, or sequence forecast, without a history of sales from which to extrapolate.

I could not find any good write-ups of top performing solutions. Can you?

Learn more on the competition page.

Rossmann Store Sales

Given historical daily sales for more than one thousand stores, the problem is to predict 6 weeks of daily sales figures for each store.

This provides both an opportunity to explore store-wise multi-step forecasts, as well as the ability to exploit cross-store patterns.
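Before reaching for anything sophisticated on a problem like this, it can help to have a simple store-wise baseline to beat. The sketch below is a seasonal naive forecast that repeats each store's last week of sales across a 42-day horizon. The store names and sales values are made-up toy data, and the weekly season length is an assumption, not something taken from the competition.

```python
from typing import Dict, List


def seasonal_naive_forecast(history: List[float], horizon: int, season: int = 7) -> List[float]:
    """Repeat the last full season of observations across the forecast horizon."""
    if len(history) < season:
        raise ValueError("need at least one full season of history")
    last_season = history[-season:]
    return [last_season[step % season] for step in range(horizon)]


# Hypothetical toy data: two stores with 14 days of daily sales each.
sales_by_store: Dict[str, List[float]] = {
    "store_1": [10, 12, 11, 13, 15, 20, 0] * 2,
    "store_2": [5, 6, 5, 7, 8, 11, 0] * 2,
}

# 6 weeks of daily forecasts = 42 steps per store.
forecasts = {
    store: seasonal_naive_forecast(series, horizon=42)
    for store, series in sales_by_store.items()
}
```

Any model that cannot outperform a baseline like this one on a held-out period is probably not yet exploiting the store-wise or cross-store structure.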

Top results were achieved with careful feature engineering and the use of gradient boosting.

Blog posts interviewing competition winners are accessible here.

Learn more on the competition page.

Walmart Recruiting – Store Sales Forecasting

Given historical weekly sales data for multiple departments in multiple stores, as well as details of promotions, the problem is to predict sales figures for store departments.

This provides both an opportunity to explore department-wise and even store-wise forecasts, as well as the ability to exploit cross-department and cross-store patterns.

Top performers made heavy use of ARIMA models and careful handling of public holidays.

See a write-up of the winning solution here, and second place solution here.

Learn more on the competition page.

Acquire Valued Shoppers Challenge

Given historical shopping behavior, the problem is to predict which customers will likely repeat purchase (become acquired) after taking up a discount offer.

The large number of transactions makes this a big data download, nearly 3 gigabytes.

The problem provides an opportunity to model the time series of specific or aggregated customers and predict the probability of customer conversion.

I could not find any good write-ups of top performing solutions. Can you?

Learn more on the competition page.

Melbourne University AES/MathWorks/NIH Seizure Prediction

Given a trace of human brain activity observed with an intracranial EEG for months or years, the problem is to predict whether each 10-minute segment indicates an upcoming seizure.

A write-up of a 4th place solution describes the use of statistical feature engineering and gradient boosting.

Learn more on the competition page.

Update: The dataset has since been taken down.

AMS 2013-2014 Solar Energy Prediction Contest

Given historical meteorological forecasts at multiple sites, the problem is to predict the total daily solar energy at each site for one year.

The dataset provides an opportunity to model spatial and temporal time series by site and across sites and make multi-step forecasts for each site.

The winning approach used an ensemble of gradient boosting models.

Learn more on the competition page.

Global Energy Forecasting Competition 2012 – Wind Forecasting

Given historical wind forecasts and power generation at multiple sites, the problem is to predict hourly power generation for the next 48 hours.

The dataset provides an opportunity to model the hourly time series for individual sites as well as across sites.

I could not find any good write-ups of top performing solutions. Can you?

Learn more on the competition page.

EMC Data Science Global Hackathon (Air Quality Prediction)

Given eight days of hourly measurements of air pollutants, the problem is to forecast pollutants at specific times over the following three days.

The dataset provides an opportunity to model a multivariate time series and perform a multi-step forecast.

A good write-up of the top performing solution describes the use of an ensemble of random forest models trained on lagged variables.
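Training on lagged variables means reframing the series as a supervised learning problem, where the previous few observations become the input features for predicting a later value. The sketch below shows one way to build such a dataset in plain Python. The function name, the toy series, and the choice of three lags are illustrative assumptions and are not taken from the winning solution.

```python
from typing import List, Tuple


def make_lagged_dataset(
    series: List[float], n_lags: int, horizon: int = 1
) -> Tuple[List[List[float]], List[float]]:
    """Turn a series into (lagged inputs, target) pairs.

    Each input row holds the n_lags values before time t;
    the target is the value `horizon` steps after the last lag.
    """
    X, y = [], []
    for t in range(n_lags, len(series) - horizon + 1):
        X.append(series[t - n_lags:t])
        y.append(series[t + horizon - 1])
    return X, y


# Hypothetical toy series of hourly readings.
series = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]

# One-step-ahead framing with three lagged inputs.
X, y = make_lagged_dataset(series, n_lags=3, horizon=1)

# Two-steps-ahead framing; one model per horizon is a common
# way to produce a multi-step forecast.
X2, y2 = make_lagged_dataset(series, n_lags=3, horizon=2)
```

The resulting rows can be passed to any regression model that accepts tabular input, such as a random forest, with one model fit per forecast horizon if a multi-step forecast is needed.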

Learn more on the competition page.

Summary

In this post, you discovered a suite of challenging time series forecasting problems.

These are problems that provided the foundation for competitive machine learning on the site Kaggle.com. As such, each problem also provides a great source of discussion and existing world-class solutions that can be used as inspiration and a starting point.

If you are interested in better understanding the role of machine learning for time series forecasting, I would recommend selecting one or more of these problems as a starting point.

Have you worked on one or more of these problems?

Share your experiences in the comments below.

Is there a time series problem on Kaggle.com that was not mentioned in this post?

Let me know about it in the comments below.

Thank you for gathering and sharing those time series forecasting problems.

It really seems challenging but it is worth a try.

Thanks Andrei, let me know how you go!

Would be really cool if you did a blog on the wind forecasting problem!

Thanks for the suggestion Sebastian.

What method do you recommend for wind forecasting?

Moreover, Kagglers use machine learning methods instead of time series methods like ARIMA, exponential smoothing, etc.

I am not familiar with the specific domain of wind forecasting, but generally, I would recommend spot checking as many methods as you can get your hands on then double down on whatever shows promise.

A great thing about meteorology is that it is based in physics we understand and can simulate. Ensemble meteorological models run on supercomputers are the state of the art for things like temperature and severe weather like cyclones (what I used to work on). Not sure about general wind forecasts.

What model would be appropriate for doing sales forecasting based on weather? I have a dataset that has sales for the last 2 years per store, and I would like to add weather parameters.

Perhaps start with a linear model:

https://machinelearningmastery.com/start-here/#timeseries

Then maybe move on to an MLP to see if you can do better:

https://machinelearningmastery.com/start-here/#deeplearning

Hi Jason,

I have some 2443 gif images of historical daily precipitation maps, and I want to build a model to forecast maps for one week.

Could you direct me on how to get started, and point me to any online resources you know of?

Highly appreciate it. Thank you very much.

This process may help:

https://machinelearningmastery.com/start-here/#process

Thanks so much Mr. Jason,

I would like to work on the Rossmann stores challenge as my master's capstone, but I am confused about its type. Is it considered a multivariate and multiple time series problem?

If you have any useful resources on that topic, or a better suggestion for a time series capstone that is simpler than this challenge, it would be much appreciated.

Regards,

Perhaps try exploring a few different framings of the problem and see what looks easy to model?

Thanks, but what do you mean by different framings? You wrote a book on deep learning for time series that works through different projects. Is it useful for this kind of problem?

There are many ways to frame a prediction problem in terms of the types and numbers of inputs and outputs, see this:

https://machinelearningmastery.com/how-to-define-your-machine-learning-problem/

Our intuitions about the “right” way may not be correct for getting the best predictions. Tests/prototypes are required.

Perhaps start with some of the free tutorials here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

I’m new to machine learning and have a time series forecasting project that needs to forecast sales of all products over the next 5 minutes. Is an LSTM a proper solution for my project? Are there recommended books? Thanks.

Probably not, try this framework:

https://machinelearningmastery.com/how-to-develop-a-skilful-time-series-forecasting-model/

What about predictive maintenance, where most of the data is time series from plant sensors? Do these techniques apply?

Yes, you could model it as time series classification – e.g. is there a fault in the interval.
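As a rough sketch of that framing, the code below slices a sensor series into fixed windows and labels each window by whether a fault occurs in the interval that follows. The readings, fault times, window length, and interval length are all made-up values for illustration.

```python
from typing import List, Sequence, Tuple


def make_interval_examples(
    readings: Sequence[float],
    fault_times: Sequence[int],
    window: int,
    interval: int,
) -> Tuple[List[List[float]], List[int]]:
    """Build classification examples from a sensor series.

    Input: the `window` readings before time t.
    Label: 1 if any fault occurs in steps t .. t + interval - 1, else 0.
    """
    faults = set(fault_times)
    X, y = [], []
    for t in range(window, len(readings) - interval + 1):
        X.append(list(readings[t - window:t]))
        y.append(int(any(step in faults for step in range(t, t + interval))))
    return X, y


# Hypothetical sensor readings with one recorded fault at time step 4.
readings = [0.1, 0.2, 0.1, 0.9, 1.2, 0.2, 0.1, 0.1]
fault_times = [4]

X, y = make_interval_examples(readings, fault_times, window=3, interval=2)
```

Each row of `X` with its label in `y` can then be fed to any classifier, and the window and interval lengths become parameters worth testing.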