Hal Varian, the chief economist at Google, gave a talk to the Electronic Support Group in the EECS Department at the University of California, Berkeley in November 2013.

The talk was titled Machine Learning and Econometrics and focused on the lessons the machine learning community can take away from the field of econometrics.

https://www.youtube.com/watch?v=EraG-2p9VuE

Hal started out by summarizing a recent paper of his titled “Big Data: New Tricks for Econometrics” (PDF) which comments on what the econometrics community can learn from the machine learning community, namely:

- Train-test-validate to avoid overfitting

- Cross validation

- Nonlinear estimation (trees, forests, SVMs, neural nets, etc)

- Bootstrap, bagging, boosting

- Variable selection (lasso and friends)

- Model averaging

- Computational Bayesian methods (MCMC)

- Tools for manipulating big data (SQL, NoSQL databases)

- Textual analysis (not discussed)
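
To make the first two items concrete, here is a minimal sketch of k-fold cross validation in plain Python. It is not taken from the talk or the paper: the one-variable least-squares model, the helper names `fit_line` and `k_fold_mse`, and the synthetic data are all assumptions chosen for illustration.

```python
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x with a single predictor."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

def k_fold_mse(xs, ys, k=5, seed=0):
    """Average held-out mean squared error across k folds."""
    idx = list(range(len(xs)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k disjoint index sets
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in idx if i not in held]
        # Fit on the training folds only, then score on the held-out fold
        a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
        errs = [(ys[i] - (a + b * xs[i])) ** 2 for i in held_out]
        scores.append(statistics.fmean(errs))
    return statistics.fmean(scores)

# Synthetic data (illustrative only): y = 2 + 3x + unit-variance noise
rng = random.Random(42)
xs = [rng.uniform(0, 10) for _ in range(200)]
ys = [2 + 3 * x + rng.gauss(0, 1) for x in xs]
cv_mse = k_fold_mse(xs, ys)
print(f"5-fold CV mean squared error: {cv_mse:.3f}")
```

Because each fold is scored by a model that never saw it, the averaged error estimates out-of-sample performance rather than in-sample fit, which is the point of the overfitting check.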

He continued by talking about non-i.i.d. data such as time series and panel data, where cross validation typically does not perform well. He suggests decomposing the data into trend and seasonal components and looking at deviations from expected behavior. An example is given from Google Correlate, showing that auto dealer sales data correlates best with searches for Indian restaurants (madness!).
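
The trend-plus-seasonal idea can be sketched in a few lines. This is a deliberately simple version (a straight-line trend and per-period seasonal means) and not necessarily the method used in the talk. The function name `decompose`, the quarterly data, and the 3-standard-deviation flagging rule are all assumptions made for illustration.

```python
import statistics

def decompose(series, period):
    """Split a series into linear trend, periodic seasonal part, and residual."""
    n = len(series)
    ts = list(range(n))
    mt, my = statistics.fmean(ts), statistics.fmean(series)
    # Least-squares slope of the series against the time index
    slope = (sum((t - mt) * (y - my) for t, y in zip(ts, series))
             / sum((t - mt) ** 2 for t in ts))
    trend = [my + slope * (t - mt) for t in ts]
    detrended = [y - tr for y, tr in zip(series, trend)]
    # Seasonal effect = average detrended value at each position in the cycle
    seasonal_means = [statistics.fmean(detrended[p::period]) for p in range(period)]
    seasonal = [seasonal_means[t % period] for t in ts]
    residual = [d - s for d, s in zip(detrended, seasonal)]
    return trend, seasonal, residual

# Hypothetical data: 8 years of quarterly values with a one-off shock at t=20
pattern = [5.0, -2.0, -4.0, 1.0]
series = [100 + 0.5 * t + pattern[t % 4] for t in range(32)]
series[20] += 15.0

trend, seasonal, residual = decompose(series, period=4)
spread = statistics.pstdev(residual)
# Flag points whose deviation from expected behavior is unusually large
unusual = [t for t, r in enumerate(residual) if abs(r) > 3 * spread]
print(unusual)
```

The residual is what is left after removing the expected behavior, so large residuals mark the deviations worth investigating.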

The focus of the talk is causal inference, a big subject in econometrics. He covers:

- Counterfactuals: What would have happened to the treated if they weren’t treated? Would they look like the control on average? Read more about counterfactuals within empirical testing.

- Confounding Variables: Unobserved variables that correlate with both x and y (the other stuff). Commonly an issue when human choice is involved. Read more about confounding variables.

- Natural Experiments: May or may not be randomized. An example is the draft lottery. Read more about natural experiments.

- Regression Discontinuity: A cut-off or threshold above or below which the treatment is applied. You can compare cases close to the (arbitrary) threshold to estimate the average treatment effect when randomization is not possible. Tune the threshold once you can model the causal relationship and play what-ifs (don’t leave randomization to chance). Read more on regression discontinuity design (RDD).

- Difference in Differences (DiD): It’s not enough to look at before and after the treatment; you need to adjust the change in the treated group by the change in the control group. The treatment may not be randomly assigned. Read more about difference in differences.

- Instrumental Variables: Variation in X that is independent of error. Something that changes X (correlates with X) but does not change the error. Provides a control lever. Randomization is an instrumental variable. Read more about instrumental variables.
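
Of the techniques above, difference in differences is the easiest to show as arithmetic. Below is a minimal sketch of the basic two-group, two-period estimate. The function name `diff_in_diff` and the sales figures are invented for illustration. The estimate is only meaningful under the usual parallel-trends assumption: the treated group would have changed by the same amount as the control group had it not been treated.

```python
import statistics

def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """2x2 difference-in-differences estimate from four groups of outcomes."""
    treated_change = statistics.fmean(treated_after) - statistics.fmean(treated_before)
    control_change = statistics.fmean(control_after) - statistics.fmean(control_before)
    # Subtract the change that happened anyway (as seen in the control group)
    return treated_change - control_change

# Hypothetical weekly sales figures, invented for illustration
treated_before = [100, 102, 98]
treated_after = [120, 118, 122]
control_before = [90, 91, 89]
control_after = [100, 99, 101]

naive = statistics.fmean(treated_after) - statistics.fmean(treated_before)
effect = diff_in_diff(treated_before, treated_after, control_before, control_after)
print(f"naive before/after change: {naive}")
print(f"difference in differences: {effect}")
```

In this made-up data the treated group rises by 20, but the control group rises by 10 over the same period, so only 10 of the change is attributed to the treatment.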

He summarized the lessons for the machine learning community from econometrics as follows:

- Observational data (usually) can’t determine causality, no matter how big it is (big data is not enough)

- Causal inference is what you want for policy

- Treatment-control with random assignment is the gold standard

- Sometimes you can find natural experiments, discontinuities, etc.

- Prediction is critical to causal inference, for both the selection issue and the counterfactual

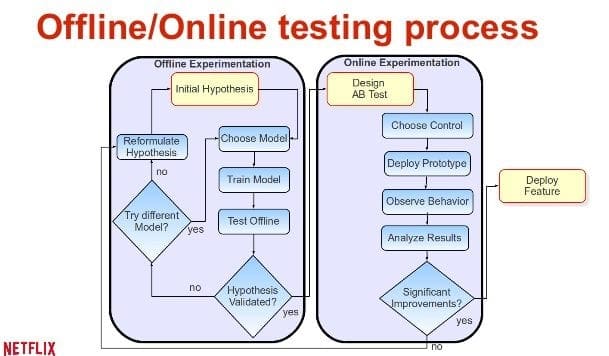

- Very interesting research in systems for continual testing
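
The first and third lessons can be illustrated with a small simulation. This is a sketch under assumed numbers, not something shown in the talk. The true effect of `x` on `y` is set to 1.0 by construction, and an unobserved variable `u` drives both `x` and `y` in the observational data but not in the randomized data.

```python
import random
import statistics

def slope(xs, ys):
    """Least-squares slope from regressing ys on xs."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    return (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
            / sum((x - mx) ** 2 for x in xs))

rng = random.Random(0)
n = 5000
TRUE_EFFECT = 1.0  # assumed causal effect of x on y in this simulation

# Unobserved confounder shared by both scenarios
u = [rng.gauss(0, 1) for _ in range(n)]

# Observational data: x depends on the confounder u
x_obs = [ui + rng.gauss(0, 1) for ui in u]
y_obs = [TRUE_EFFECT * xi + 2.0 * ui + rng.gauss(0, 1) for xi, ui in zip(x_obs, u)]

# Randomized data: x is assigned independently of u
x_rand = [rng.gauss(0, 1) for _ in range(n)]
y_rand = [TRUE_EFFECT * xi + 2.0 * ui + rng.gauss(0, 1) for xi, ui in zip(x_rand, u)]

observational_slope = slope(x_obs, y_obs)
randomized_slope = slope(x_rand, y_rand)
print(f"observational estimate: {observational_slope:.2f}")
print(f"randomized estimate:    {randomized_slope:.2f}")
```

In this setup the observational regression converges to about 2.0 rather than 1.0, and collecting more rows does not remove the bias; only breaking the link between `u` and `x` (here by random assignment) recovers the true effect.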

Hal finished with two book recommendations:

- Mostly Harmless Econometrics: An Empiricist’s Companion

- An Introduction to Statistical Learning: with Applications in R

The talk was also given to the Stanford University Department of Electrical Engineering in 2014, under the title What Machine Learning Can Learn from Econometrics and Vice Versa. You can see the PDF slides from this second talk; it’s pretty much the same.

I really enjoyed your posts. Please also add this seminar to the list on Machine Learning meets Auction/Game Theory.

https://www.youtube.com/watch?v=eD758rKwQmA

Thanks Chandra, and thanks for the link to the related video. I’ll check it out.

Could we please have more sounds of some idiot eating and less of the speaker?

seriously this has to be the worst audio on youtube, and thats saying a lot.

Why the hell don’t people know how microphones work?

I agree the audio sucks. I watch all youtube on 2x speed and pause to take notes. It overcomes the sound issues to some degree because it’s over real quick.

WTF? He clearly has a microphone on his shirt. Why does the audio sound like it’s recorded by the camera?

I don’t have x2 playback option on youtube (at least for this video) and I can’t cope to listen to it. So bad that this talk is unwatchable because of crappy audio.

Thanks for sharing!