In this article, you will learn why large language model hallucinations happen and how to reduce them using system-level techniques that go beyond prompt engineering.

Topics we will cover include:

- What causes hallucinations in large language models.

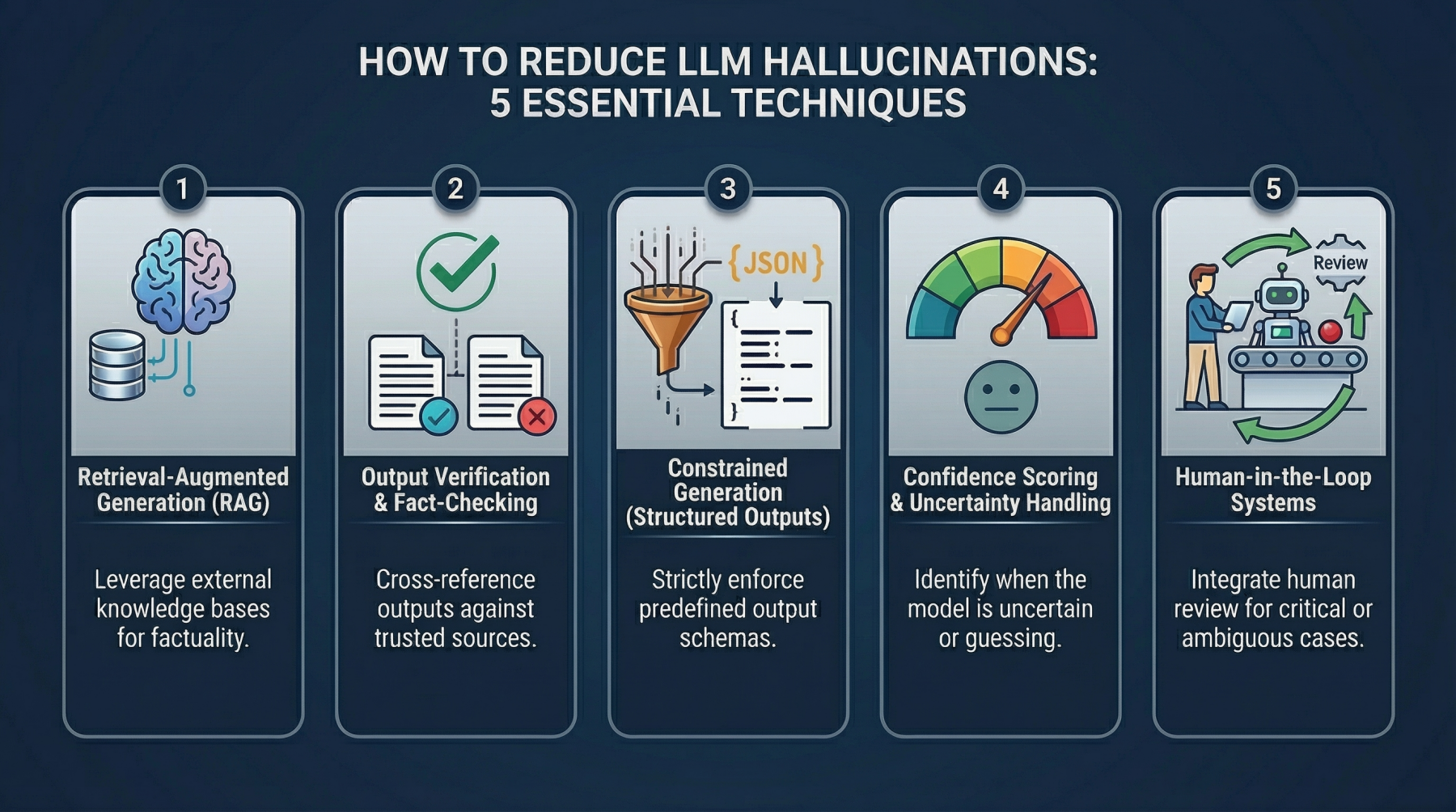

- Five practical techniques for detecting and mitigating hallucinated outputs in production systems.

- How to implement these techniques with simple examples and realistic design patterns.

Let’s get to it.

5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

Image by Editor

Introduction

My friend who is a developer once asked an LLM to generate documentation for a payment API. The response looked perfect. It had a clean structure, the right tone, and even example endpoints. There was just one problem. The API did not exist. The model had confidently invented endpoints, parameters, and responses that felt real enough to pass a quick review. It was only caught when someone tried to integrate it, and nothing worked.

That is what hallucination looks like in practice. The model makes things up and presents them as facts, without any signal that something is wrong.

This is not a rare edge case. It shows up in subtle ways across production systems. Fake citations in research tools. Incorrect legal references. Nonexistent product features in customer support responses. In isolation, these might seem like small errors. At scale, they become serious problems.

The impact goes beyond accuracy. Trust starts to break down when users cannot rely on the output. Many early solutions focused on prompt engineering: better instructions, stricter wording, and clearer constraints. This helps, but only up to a point. Prompts can guide the model, but they do not fundamentally change how it generates answers. When the system lacks the right information, it will still try to produce something.

That is why teams are starting to treat hallucination as a system problem, not just a prompting problem. Instead of relying on better inputs alone, they are building layers around the model to detect, validate, and control what comes out.

What Causes LLM Hallucinations?

Before looking at how to fix hallucinations, it helps to understand why they happen in the first place. The causes are not mysterious, but they are easy to overlook when the outputs sound convincing.

The first issue is a lack of grounding. Most language models do not have direct access to real-time or verified data unless you explicitly connect them to it. They generate responses based on patterns learned during training, not by checking facts against a live source. When the exact answer is missing, the model fills in the gaps.

There is also the problem of overgeneralization. These models are trained on large and diverse datasets, which means they learn broad patterns rather than precise truths. When faced with a specific question, they may combine fragments of similar information into something that sounds correct but is not.

Another factor is the built-in pressure to always produce an answer. Language models are designed to be helpful and responsive. Instead of saying “I don’t know,” they often generate the most plausible response they can. That tendency is useful for conversation, but it is risky when accuracy matters.

Technique 1: Retrieval-Augmented Generation (RAG)

One of the most effective ways to reduce hallucinations is simple in principle. Stop relying only on what the model remembers, and give it access to real data at the moment it needs to answer.

This is what retrieval-augmented generation (RAG) does. Instead of asking the model to generate a response from its internal training alone, you first retrieve relevant information from an external source, then pass that information into the model as context. The flow is straightforward. A user asks a question, the system searches a knowledge base for related content, and the model generates an answer based on that retrieved data.

This changes how the model behaves. Without retrieval, it leans on patterns and probabilities, which is where hallucinations come from. With retrieval, it has something concrete to work with. It is no longer guessing what might be true. It is working with what has been provided.

The difference between model memory and external knowledge is important here. Model memory is static. It reflects what the model was trained on, which may be outdated, incomplete, or too general. External knowledge is dynamic. It can be updated, curated, and tailored to a specific domain. RAG shifts the source of truth from the model to your data.

In practice, this is usually implemented with a vector database. Documents are converted into embeddings and stored in a way that allows semantic search. When a query comes in, the system finds the most relevant chunks of text and injects them into the prompt before generating a response.

Here is a simple example using Python to illustrate the flow:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 |

from sentence_transformers import SentenceTransformer import faiss import numpy as np from openai import OpenAI # Step 1: Load embedding model embedder = SentenceTransformer("all-MiniLM-L6-v2") # Step 2: Sample knowledge base documents = [ "Our refund policy allows returns within 30 days.", "Shipping takes 3 to 5 business days.", "You can track your order using the tracking link sent via email." ] # Step 3: Convert documents to embeddings doc_embeddings = embedder.encode(documents).astype("float32") # Step 4: Store embeddings in FAISS index index = faiss.IndexFlatL2(doc_embeddings.shape[1]) index.add(doc_embeddings) # Step 5: Query query = "How long does delivery take?" query_embedding = embedder.encode([query]).astype("float32") # Step 6: Retrieve most relevant document _, indices = index.search(query_embedding, k=1) retrieved_doc = documents[indices[0][0]] # Step 7: Generate response using retrieved context client = OpenAI() response = client.chat.completions.create( model="gpt-4o-mini", messages=[ {"role": "system", "content": "Answer using the provided context only."}, {"role": "user", "content": f"Context: {retrieved_doc}\n\nQuestion: {query}"} ] ) print(response.choices[0].message.content) |

What the code is doing:

- The embedding model converts both documents and the query into vectors so they can be compared meaningfully

- FAISS is used to store and search those vectors efficiently

- When a query is received, the system retrieves the most relevant document

- That document is passed into the model as context, guiding the response

- The instruction “answer using the provided context only” further reduces the chance of hallucination

This approach works well because it anchors the model’s response in something real. Instead of generating from scratch, it generates with constraints.

That said, RAG is not a perfect solution. If the retrieval step fails, the model is back to guessing. Poor indexing, irrelevant documents, or missing data can still lead to hallucinated outputs. In other words, the quality of the answer depends heavily on the quality of what you retrieve.

RAG does not eliminate hallucinations completely, but it significantly reduces them by giving the model a reliable source of truth to work from.

Technique 2: Output Verification and Fact-Checking Layers

One of the easiest mistakes to make with LLMs is treating the first response as final. It reads well, it sounds confident, and in many cases, it looks correct. That is exactly why hallucinations slip through.

A more reliable approach is to treat every output as an unverified draft. This is where verification layers come in. Instead of relying on a single model response, you introduce additional steps that check, validate, or challenge what was generated before it reaches the user.

One common approach is to use a secondary model for verification. The first model generates the answer, and a second model reviews it. The reviewer can check for factual consistency, flag unsupported claims, or even compare the answer against known sources. This creates a simple but effective separation between generation and validation.

Another approach is to cross-check outputs against trusted data sources. For example, if a response includes statistics, citations, or technical details, the system can verify those against a database, API, or internal knowledge base. If the information cannot be confirmed, the system can either reject the response or ask for clarification.

There is also a technique known as self-consistency. Instead of asking the model once, you ask it multiple times, sometimes with slight variations in the prompt. If the answers converge, there is a higher chance they are correct. If they differ significantly, that is a signal that the model is uncertain or guessing.

Here is a simple example of how this can be implemented:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

from openai import OpenAI client = OpenAI() def ask_model(question): response = client.chat.completions.create( model="gpt-4o-mini", messages=[{"role": "user", "content": question}] ) return response.choices[0].message.content.strip() question = "What is the capital of Australia?" # Ask the same question multiple times answers = [ask_model(question) for _ in range(3)] print("Model Answers:") for i, ans in enumerate(answers, start=1): print(f"{i}. {ans}") # Simple consistency check if len(set(answers)) == 1: print("\nHigher confidence: answers are consistent.") else: print("\nLower confidence: answers differ, so this result needs verification.") |

What the code is doing:

- The same question is sent to the model multiple times

- The responses are collected and compared

- If all answers match, the system treats the result as more reliable

- If they differ, the system flags the result for further checking or human review

This is a basic example, but in production systems, the idea can be extended further. You can rephrase questions, use different models, or introduce scoring systems to evaluate agreement.

The key idea is simple. Do not rely on a single pass. Add friction between generation and delivery. Verification layers do introduce extra cost and latency, but they significantly improve reliability. In many cases, that trade-off is worth it, especially in systems where accuracy matters.

Technique 3: Constrained Generation (Structured Outputs)

A lot of hallucinations come from one simple fact. The model has too much freedom in how it responds. When you ask an open-ended question and allow free-text output, the model fills in gaps however it can. That flexibility is useful for creativity, but it also creates room for incorrect or invented information.

Constrained generation takes the opposite approach. It limits how the model is allowed to respond. Instead of asking for a paragraph, you define a structure. This could be a JSON schema, a fixed set of fields, or even a controlled list of acceptable values. The model is no longer free to generate anything it wants. It has to fit its answer into a predefined format.

This works well because it removes ambiguity. If a field expects a number, the model cannot return a story. If a value must come from a fixed list, it cannot invent a new option. The structure itself becomes a guardrail.

One common way to implement this is through JSON schemas. You define exactly what the output should look like, including required fields and allowed values.

Here is a simple example:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 |

import json from jsonschema import validate from openai import OpenAI client = OpenAI() schema = { "type": "object", "properties": { "product_name": {"type": "string"}, "price": {"type": "number"}, "availability": { "type": "string", "enum": ["in_stock", "out_of_stock"] } }, "required": ["product_name", "price", "availability"] } response = client.chat.completions.create( model="gpt-4o-mini", messages=[ {"role": "system", "content": "Extract product details in JSON format only."}, {"role": "user", "content": "The iPhone 13 costs $799 and is currently available."} ], response_format={"type": "json_object"} ) result = json.loads(response.choices[0].message.content) validate(instance=result, schema=schema) print(result) |

What the code is doing:

- A schema defines the expected structure of the output

- The model is instructed to return only JSON

- The generated JSON is validated against the schema after generation

- Fields like availability are restricted to specific values

- This reduces the chance of unexpected formats or unsupported values appearing in the output

Beyond JSON, constrained generation also shows up in function calling and tool usage. Instead of generating answers directly, the model selects from predefined actions. For example, it can call a function to fetch data rather than guessing the result. This reduces hallucination because the model is no longer responsible for producing the final answer on its own.

Controlled vocabularies take this even further. In some systems, the model is restricted to a fixed set of terms, labels, or categories. This is common in classification tasks where consistency matters more than flexibility.

The reason this approach works is straightforward. Hallucinations often come from the model trying to be helpful in open-ended situations. By narrowing the range of possible outputs, you reduce the chances of it going off track.

Technique 4: Confidence Scoring and Uncertainty Handling

One of the more dangerous traits of LLMs is not that they get things wrong, but that they do so without hesitation. A correct answer and a completely fabricated one can look identical in tone and confidence.

If you want to reduce hallucinations, you need a way to tell when the model is likely guessing. Confidence scoring introduces that signal. Instead of taking every response at face value, the system evaluates how reliable the answer is before accepting it.

At the lowest level, this can be done using token probabilities. As the model generates each token, it assigns a probability to it. When those probabilities are high and consistent, it usually means the model is operating within familiar patterns. When they drop or fluctuate, they can indicate uncertainty. While this signal is not perfect, it gives a useful baseline.

Beyond raw probabilities, calibration techniques help make these signals more meaningful. For example, you can compare outputs across multiple runs to see how stable they are. If the model gives different answers to the same question, that inconsistency is a strong indicator that something is off. You can also benchmark responses against known correct answers to better understand how confidence correlates with accuracy over time.

Another practical layer is to ask the model to express uncertainty directly. Instead of forcing a definitive answer, you allow space for responses like “insufficient information” or a reported confidence score. This shifts the model away from always trying to sound certain.

Here is a simple implementation:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

from openai import OpenAI client = OpenAI() def get_answer_with_confidence(question): response = client.chat.completions.create( model="gpt-4o-mini", messages=[ { "role": "system", "content": "Answer the question. Also provide a confidence score between 0 and 1. If you are unsure, say so clearly." }, {"role": "user", "content": question} ] ) return response.choices[0].message.content question = "Who won the Nobel Prize in Physics in 1895?" result = get_answer_with_confidence(question) print(result) |

What the code is doing:

- The model is instructed to return both an answer and a confidence score

- It is also given permission to admit uncertainty instead of guessing

- This creates an extra layer of signal that can be used downstream

In a real system, this output would not just be displayed. It would drive decisions. Low-confidence responses can be rejected, sent back for clarification, or escalated to a human reviewer. High-confidence responses can move forward with less friction.

This approach introduces a simple but important rule. Not every answer should be accepted. Once you start treating uncertainty as a first-class signal, hallucinations become easier to manage. You are no longer trying to eliminate them completely. You are building a system that knows when to trust the model and when to question it.

Technique 5: Human-in-the-Loop Systems

No matter how many safeguards you add, there are still situations where the model should not be making the final call. This is where human-in-the-loop systems come in.

The idea is simple. Keep humans involved, but place them where they add the most value. Instead of reviewing everything, which does not scale, you design pipelines that bring humans in only when needed. For example, responses flagged as low confidence, inconsistent, or high risk can be routed to a human reviewer before being delivered. This creates a balance between automation and oversight.

Review pipelines are the first layer. Outputs pass through checks, and only certain cases are escalated. In customer support, this might mean allowing the model to handle common questions while routing complex or sensitive issues to an agent. In legal or financial systems, it could mean requiring human approval for specific types of responses.

Feedback loops take this further. When humans review and correct outputs, that information does not go to waste. It can be fed back into the system to improve future performance. Over time, the model improves from real corrections, not just static training data.

This connects closely with active learning. Instead of retraining on everything, the system focuses on the most uncertain or problematic cases. These are the areas where human input has the highest impact. It is a more efficient way to improve accuracy without increasing costs unnecessarily.

The key insight here is not about limiting AI. It is about placing humans strategically. Trying to remove humans entirely often leads to fragile systems that fail in edge cases. Keeping humans in the loop, especially at critical points, creates a safety net that is hard to replicate with automation alone.

Wrapping Up

Hallucinations are not a temporary flaw that will disappear with better models. They are a byproduct of how these systems work. As long as LLMs generate responses based on probabilities rather than verified facts, the risk will always be there.

The focus is shifting from blind trust to detection. Instead of assuming outputs are correct, systems are being designed to question, validate, and verify before delivering results. This changes the role of the model. It is no longer treated as a source of truth, but as one component in a larger pipeline.

This is also where prompts start to lose their dominance. They are useful, but they are not enough on their own. Real reliability comes from combining multiple techniques. Retrieval, verification layers, structured outputs, confidence scoring, and human oversight all work together to reduce failure points.

No comments yet.