How do you get started with machine learning in R?

In this post you will discover the book Machine Learning with R by Brett Lantz, which aims to show you exactly how to get started practicing machine learning in R.

We cover the audience for the book, a detailed breakdown of the contents, and a summary of the good and bad points.

Let’s get started.

Note: This review covers the second edition of the book.

Who Should Read This Book?

There are two types of people who should read this book:

- Machine Learning Practitioner. You already know some machine learning and you want to learn how to practice machine learning using R.

- R Practitioner. You are a user of R and you want to learn enough machine learning to practice with R.

From the preface:

It would be helpful to have a bit of familiarity with basic math and programming concepts, but no prior experience is required.

Book Contents

This section steps you through the topics covered in the book.

When picking up a new book, I like to step through each chapter and see the steps or journey it takes me on. The journey of this book is as follows:

- What is machine learning.

- Handle data.

- Lots of machine learning algorithms.

- Evaluate model accuracy.

- Improve accuracy of models.

This covers many of the tasks you need for a machine learning project, but it does miss some, such as problem definition and the presentation of results.

Let’s step through each chapter and see what the book offers:

Chapter 1: Introducing Machine Learning

Provides an introduction to machine learning, terminology and (very) high-level learning theory.

Topics covered include:

- Uses and abuses of machine learning

- How machines learn

- Machine learning in practice

- Machine learning with R

Interestingly, the topic of machine learning ethics is covered, a topic you don’t often see addressed.

Chapter 2: Managing and Understanding Data

Covers R basics but really focuses on how to load, summarize and visualize data.

Topics include:

- R data structures

- Managing data with R

- Exploring and understanding data

A lot of time is spent on different graph types, which I generally like. It is good to know about and use more than one or two graphs.

Chapter 3: Lazy Learning – Classification Using Nearest Neighbors

This chapter introduces and demonstrates the k-nearest neighbors (kNN) algorithm.

Topics covered include:

- Understanding nearest neighbor classification

- Example – diagnosing breast cancer with the k-NN algorithm

I like that a good amount of time is spent on data transforms, which are critical to the accuracy of kNN.
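To see why transforms matter for a distance-based method like kNN, here is a minimal sketch of min-max normalization in base R. It is not taken from the book: the `normalize` helper and the toy data frame are my own illustrative assumptions.

```r
# Min-max normalization: rescale a numeric vector to the range [0, 1].
# Assumes x has at least two distinct values (otherwise the denominator is 0).
normalize <- function(x) {
  (x - min(x)) / (max(x) - min(x))
}

# Hypothetical toy data: two features on very different scales.
toy <- data.frame(
  radius = c(10, 15, 20),
  area   = c(300, 700, 1100)
)

# Apply the transform to every column so that no single feature
# dominates the distance calculation.
toy_n <- as.data.frame(lapply(toy, normalize))
print(toy_n)
```

Without rescaling, the `area` column would swamp `radius` in any Euclidean distance, simply because its raw values are larger.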

Chapter 4: Probabilistic Learning – Classification Using Naive Bayes

This chapter introduces and demonstrates the Naive Bayes algorithm for classification.

Topics covered include:

- Understanding Naive Bayes

- Example – filtering mobile phone spam with the Naive Bayes algorithm

I like the interesting case study problem used.

Chapter 5: Divide and Conquer – Classification Using Decision Trees and Rules

This chapter introduces decision trees and rule systems with the algorithms C5.0, 1R and RIPPER.

Topics covered include:

- Understanding decision trees

- Example – identifying risky bank loans using C5.0 decision trees

- Understanding classification rules

- Example – identifying poisonous mushrooms with rule learners

I like that C5.0 is covered, as it was proprietary for a long time and has only recently been released as open source and made available in R. I am surprised that CART, the hello world of decision tree algorithms, was not covered in this chapter.

Chapter 6: Forecasting Numeric Data – Regression Methods

This chapter is all about regression, with demonstrations of linear regression, CART and M5P.

Topics covered include:

- Understanding Regression

- Example – predicting medical expenses using linear regression

- Understanding regression trees and model trees

- Example – estimating the quality of wines with regression trees and model trees

It is good to see the classics, linear regression and CART, covered here. M5P is also a nice touch.

Chapter 7: Black Box Methods – Neural Networks and Support Vector Machines

This chapter introduces artificial neural networks and support vector machines.

Topics covered include:

- Understanding neural networks

- Example – modeling the strength of concrete with ANNs

- Understanding support vector machines

- Example – performing OCR with SVMs

It is good to see these algorithms covered and the example problems are interesting.

Chapter 8: Finding Patterns – Market Basket Analysis Using Association Rules

This chapter introduces and demonstrates association rule algorithms, typically used for market basket analysis.

Topics covered include:

- Understanding association rules

- Example – identifying frequently purchased groceries with association rules

It’s not a topic I like much nor an algorithm I have ever had to use on a project. I’d drop this chapter.

Chapter 9: Finding Groups of Data – Clustering with k-means

This chapter introduces the k-means clustering algorithm and demonstrates it on data.

Topics covered include:

- Understanding clustering

- Example – finding teen market segments using k-means clustering

Another esoteric topic that I would probably drop. Clustering is interesting, but unsupervised learning algorithms are often really hard to use well in practice. Here are some clusters; now what?

Chapter 10: Evaluating Model Performance

This chapter presents methods for evaluating model skill.

Topics covered include:

- Measuring performance for classification

- Evaluating future performance

I like that performance measures and resampling methods are covered. Many texts skip them. I like that a lot of time is spent on the more detailed concerns of classification accuracy (e.g. touching on Kappa and F1 scores).
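As a rough illustration of why these measures go beyond plain accuracy, the sketch below computes accuracy, Cohen's kappa and the F1 score from a confusion matrix in base R. The labels are made up for illustration and the variable names are my own; this is not the book's code.

```r
# Hypothetical actual and predicted labels for a two-class problem.
actual <- factor(
  c("spam", "spam", "spam", "ham", "ham", "ham", "ham", "ham", "ham", "ham"),
  levels = c("ham", "spam")
)
predicted <- factor(
  c("spam", "spam", "ham", "ham", "ham", "ham", "ham", "ham", "ham", "spam"),
  levels = c("ham", "spam")
)

# Confusion matrix: rows are actual classes, columns are predicted classes.
cm <- table(actual = actual, predicted = predicted)

n        <- sum(cm)
accuracy <- sum(diag(cm)) / n

# Cohen's kappa: agreement beyond what is expected by chance.
expected    <- sum(rowSums(cm) * colSums(cm)) / n^2
cohen_kappa <- (accuracy - expected) / (1 - expected)

# Precision, recall and F1, treating "spam" as the positive class.
tp        <- cm["spam", "spam"]
precision <- tp / sum(cm[, "spam"])
recall    <- tp / sum(cm["spam", ])
f1        <- 2 * precision * recall / (precision + recall)

print(c(accuracy = accuracy, kappa = cohen_kappa, f1 = f1))
```

On imbalanced data like this, accuracy alone looks flattering, while kappa and F1 give a more sober picture of how well the minority class is handled.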

Chapter 11: Improving Model Performance

This chapter introduces techniques that you can use to improve the accuracy of your models, namely algorithm tuning and ensembles.

Topics covered include:

- Tuning stock models for better performance

- Improving model performance with meta-learning

Good but too brief. Algorithm tuning and ensembles are a big part of building accurate models in modern machine learning. The length may be suitable given that it is an introductory text, but more time should be given to the caret package.

If you’re not using caret for machine learning in R, you’re doing it wrong.
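For a flavor of what algorithm tuning with caret looks like, here is a minimal sketch. It assumes the caret package is installed, and it uses the built-in `iris` dataset rather than anything from the book; the choice of kNN, 10-fold cross-validation and five candidate values of k is mine, purely for illustration.

```r
# Assumes the caret package is installed: install.packages("caret")
library(caret)

set.seed(42)  # make the resampling folds reproducible

# 10-fold cross-validation as the resampling scheme.
ctrl <- trainControl(method = "cv", number = 10)

# Tune kNN on iris: caret tries 5 candidate values of k, centering and
# scaling the predictors inside each resample.
fit <- train(
  Species ~ .,
  data       = iris,
  method     = "knn",
  preProcess = c("center", "scale"),
  trControl  = ctrl,
  tuneLength = 5
)

# The value of k with the best cross-validated accuracy.
print(fit$bestTune)
```

The same `train()` call works for many other algorithms by changing `method`, which is a large part of why I lean on caret so heavily.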

Chapter 12: Specialized Machine Learning Topics

This chapter contains a mess of other topics, including:

- Working with proprietary files and databases

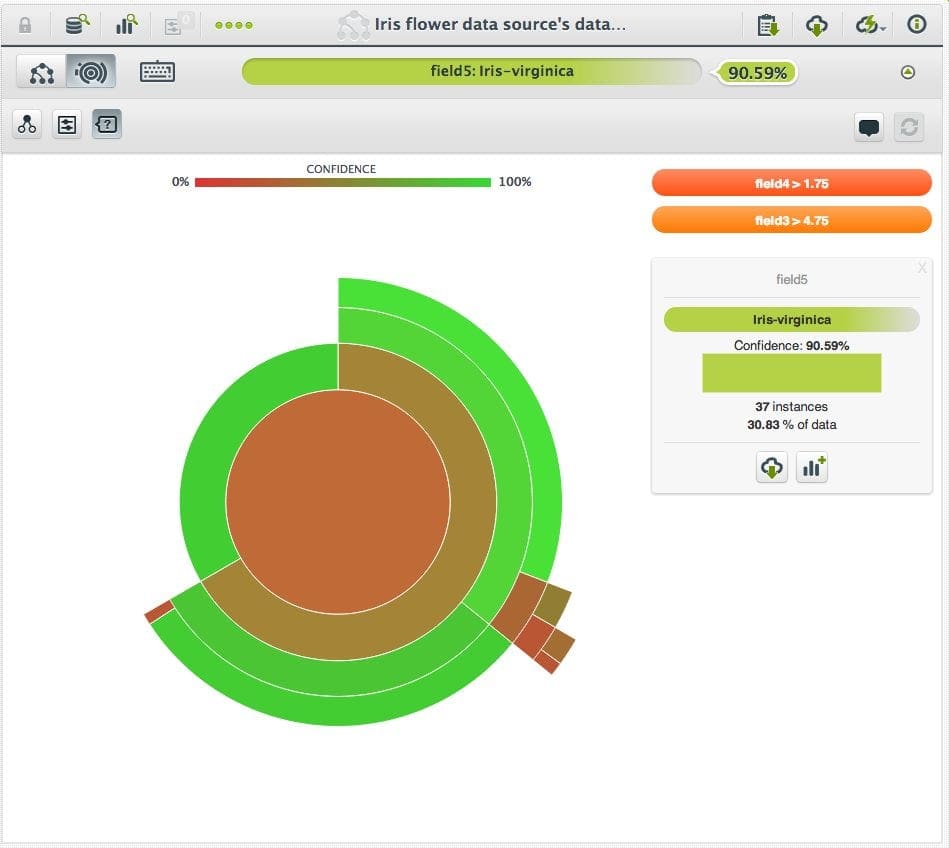

- Working with online data and services

- Working with domain-specific data

- Improving performance of R

The topics are very specialized. Perhaps only the last, on “improving performance of R”, is really actionable for your machine learning projects.

Machine Learning Algorithms

The book covers a number of different machine learning algorithms. This section lists all of the algorithms covered and in which chapter they can be found.

I note that page 21 of the book does provide a look-up table of algorithms to chapters, but it is too high-level and glosses over the actual names of the algorithms used.

- k-nearest neighbors (chapter 3)

- Naive Bayes (chapter 4)

- C5.0 (chapter 5)

- 1R (chapter 5)

- RIPPER (chapter 5)

- Linear Regression (chapter 6)

- Classification and Regression Trees (chapter 6)

- M5P (chapter 6)

- Artificial Neural Networks (chapter 7)

- Support Vector Machines (chapter 7)

- Apriori (chapter 8)

- k-means (chapter 9)

- Bagged CART (chapter 11)

- AdaBoost (chapter 11)

- Random Forest (chapter 11)

What Do I Think Of This Book?

I like the book as an introduction for how to do machine learning on the R platform.

You must know how to program. You must know a little bit of R. You must have some sense of how to drive a machine learning project from beginning to end. This book will not cover these topics, but it will show you how to complete common machine learning tasks using R.

Set your expectations accordingly:

- This is a practical book with worked examples and high-level algorithm descriptions.

- This is not a machine learning textbook with theory, proofs and lots of equations.

Pros

- I like the structured examples and how each algorithm is demonstrated with a different dataset.

- I like that the datasets are small, in-memory examples, perhaps all taken from the UCI Machine Learning Repository.

- I like that references to research papers are provided where appropriate for further reading.

- I like the boxes that summarize usage information for algorithms and other key techniques.

- I like that it is practically focused: the how of machine learning, not the deep why.

Cons

- I don’t like that it is so algorithm-focused. It follows the general structure of most “applied books” and dumps a lot of algorithms on you rather than covering the extended project lifecycle.

- I don’t like that there are no end-to-end examples (problem definition, through to model selection, through to presentation of results). The formal structure of examples is good, but I’d like a deep case study chapter, I think.

- I cannot download the code and datasets from a GitHub repository or as a zip. I have to sign up and go through their process.

- There are chapters that feel like they are only there because similar chapters exist in other machine learning books (clustering and association rules). These may be machine learning methods, but they are not used nearly as often as core predictive modeling methods (IMHO).

- Perhaps a little too much filler. I like less talk, more action. If I wanted long algorithm descriptions, I’d read an algorithms textbook. Tell me the broad strokes and let’s get to it.

Final Word

If you are looking for a good applied book for machine learning with R, this is it. I like it for beginners who know a little machine learning and/or a little R and want to practice machine learning on the R platform.

Even though I think O’Reilly books are generally better applied books than Packt, I don’t see an offering from O’Reilly that can compete.

If you want to go one step deeper and get some more theory and more explanations I would advise checking out: Applied Predictive Modeling. If you want more math I would suggest An Introduction to Statistical Learning: with Applications in R.

Both books have examples in R, but less focus on R and more focus on the details of machine learning algorithms.

Next Step

Have you read this book? Let me know what you think in the comments.

Are you thinking of buying this book? Have any questions? Let me know in the comments and I’ll do my best to answer them.

Thanks for the great review. By the way, I have discovered the data on the following GitHub page.

https://github.com/dataspelunking/MLwR

Thanks.

Jason, thanks for this review. I have bought and worked through your book, ML Mastery with R, and it is in a different league from what I have read elsewhere in terms of end-to-end, practical advice on working through a predictive modelling project.

Thanks to it, I now have the framework to complete a couple of simple ML projects end to end (I come from a non-IT background and am learning R and ML on my own from books and online courses).

Would you recommend this book as a next book to work through? Or would you recommend anything else? My focus (as in your excellent book) remains practical, applied knowledge.

Thanks!

Thanks Terence!

If you are looking for more of the same, this book may help.