Image by Editor | Midjourney

Reinforcement Learning (RL) is a type of machine learning that trains an agent to make decisions by interacting with an environment. This article covers the basic concepts of RL, including states, actions, rewards, policies, and the Markov Decision Process (MDP). By the end, you will understand how RL works and how to implement it in Python.

Key Concepts in Reinforcement Learning

Reinforcement Learning (RL) involves several core ideas that shape how machines learn from experience and make decisions:

- Agent: The decision-maker that interacts with the environment.

- Environment: The external system with which the agent interacts.

- State: A representation of the current situation of the environment.

- Action: A choice the agent can make in a given state.

- Reward: Immediate feedback the agent gets after taking an action in a state.

- Policy: The rule the agent follows to choose an action in each state. It can be deterministic or probabilistic.

- Value Function: Estimates the expected long-term reward from a specific state under a policy.
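The sketch below makes these terms concrete. It uses a hypothetical five-cell corridor that is not from the original article. The state is the agent's cell and the actions are "move left" or "move right". The environment pays a reward of 1 for reaching the rightmost cell. The policy is a plain function from states to actions. The names `CorridorEnv` and `always_right` are illustrative assumptions.

```python
class CorridorEnv:
    """Hypothetical environment: cells 0..4, the episode ends at cell 4."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0  # initial state: leftmost cell
        return self.state

    def step(self, action):
        # action 0 = move left, action 1 = move right; walls clamp the position
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        reward = 1.0 if done else 0.0  # reward only for reaching the goal cell
        return self.state, reward, done


def always_right(state):
    """A deterministic policy: maps every state to action 1 (move right)."""
    return 1


env = CorridorEnv()
state = env.reset()
trajectory = []
done = False
while not done:
    action = always_right(state)                 # the agent consults its policy
    next_state, reward, done = env.step(action)  # the environment responds
    trajectory.append((state, action, reward))
    state = next_state

print(trajectory)
# [(0, 1, 0.0), (1, 1, 0.0), (2, 1, 0.0), (3, 1, 1.0)]
```

Each tuple records one interaction: the state the agent was in, the action its policy chose, and the reward the environment returned.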

Markov Decision Process

Markov Decision Process | Image source

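A Markov Decision Process (MDP) is a mathematical framework that gives a structured way to describe the environment in reinforcement learning. The "Markov" in the name refers to the assumption that the next state depends only on the current state and action, not on the earlier history.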

An MDP is defined by the tuple (S, A, T, R, γ), whose components are:

- States (S): The set of all possible states of the environment.

- Actions (A): The set of all actions the agent can take.

- Transition Model (T): The probability T(s, a, s') of moving from state s to state s' when the agent takes action a.

- Reward Function (R): The immediate reward R(s, a, s') received after taking action a in state s and landing in state s'.

- Discount Factor (γ): A number between 0 and 1 that sets how much future rewards count relative to immediate ones. Values near 0 make the agent short-sighted; values near 1 make it far-sighted.
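The tuple can also be written down directly as Python data. This is a minimal sketch built on a hypothetical two-state MDP, with illustrative state and action names:

- T maps each (state, action) pair to a probability distribution over next states.
- R gives the reward for each transition.
- γ weights future rewards in the discounted return G = r_0 + γ r_1 + γ² r_2 + …

```python
import random

S = ["low", "high"]   # states
A = ["wait", "work"]  # actions

# Transition model: T[(s, a)] -> {s': P(s' | s, a)}
T = {
    ("low", "wait"): {"low": 1.0},
    ("low", "work"): {"low": 0.3, "high": 0.7},
    ("high", "wait"): {"low": 0.5, "high": 0.5},
    ("high", "work"): {"high": 1.0},
}


def R(s, a, s_next):
    """Reward function R(s, a, s'); in this toy MDP it depends only on s'."""
    return 1.0 if s_next == "high" else 0.0


gamma = 0.9  # discount factor

# Sanity check: every T(s, a, .) must be a probability distribution
for (s, a), dist in T.items():
    assert abs(sum(dist.values()) - 1.0) < 1e-9


def sample_step(s, a, rng):
    """Sample s' ~ T(s, a, .) and return (s', reward)."""
    next_states = list(T[(s, a)].keys())
    probs = list(T[(s, a)].values())
    s_next = rng.choices(next_states, weights=probs)[0]
    return s_next, R(s, a, s_next)


def discounted_return(rewards, gamma):
    """G = r_0 + gamma * r_1 + gamma**2 * r_2 + ..."""
    return sum(gamma ** t * r for t, r in enumerate(rewards))


rng = random.Random(0)
s, rewards = "low", []
for _ in range(5):
    s, r = sample_step(s, "work", rng)  # always choose "work" in this rollout
    rewards.append(r)
print(rewards, discounted_return(rewards, gamma))
```

For example, three rewards of 1 with γ = 0.9 give a return of 1 + 0.9 + 0.81 = 2.71.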

Bellman Equation

The Bellman equation expresses the value of a state (or of taking an action in a state) recursively, in terms of expected future rewards.

It splits the expected total reward into two parts: the immediate reward received, and the discounted value of the state that follows. Each state's value is defined through the values of its successors. Agents can use this relationship to compute values and choose actions that maximize long-term reward.
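Written with the MDP components defined above (T for transitions, R for rewards, γ for discounting), one standard form is shown below; the original figure may use slightly different notation. The first line is the Bellman expectation equation for the value of state s under a policy π. The second and third lines are the Bellman optimality equations for state values and action values. In each case the bracket holds the immediate reward plus the discounted value of the next state.

```latex
V^{\pi}(s) = \sum_{a \in A} \pi(a \mid s) \sum_{s' \in S} T(s, a, s') \left[ R(s, a, s') + \gamma \, V^{\pi}(s') \right]

V^{*}(s) = \max_{a \in A} \sum_{s' \in S} T(s, a, s') \left[ R(s, a, s') + \gamma \, V^{*}(s') \right]

Q^{*}(s, a) = \sum_{s' \in S} T(s, a, s') \left[ R(s, a, s') + \gamma \max_{a' \in A} Q^{*}(s', a') \right]
```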

Bellman Equation | Image source
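Applying the Bellman optimality equation repeatedly as an update rule gives value iteration. This planning method assumes the transition model T and reward function R are known, unlike Q-Learning, which is covered below. The sketch uses a hypothetical two-state, two-action MDP; names such as `value_iteration` are illustrative.

```python
# Hypothetical MDP: T[s][a] is a list of (probability, next_state, reward) triples
T = {
    0: {0: [(1.0, 0, 0.0)],                  # state 0, action 0: stay, no reward
        1: [(0.8, 1, 1.0), (0.2, 0, 0.0)]},  # state 0, action 1: usually reach state 1
    1: {0: [(1.0, 0, 0.0)],                  # state 1, action 0: fall back to state 0
        1: [(1.0, 1, 1.0)]},                 # state 1, action 1: stay and earn 1
}
gamma = 0.9


def value_iteration(T, gamma, tol=1e-10):
    V = {s: 0.0 for s in T}
    while True:
        delta = 0.0
        for s in T:
            # Bellman optimality backup: best expected (reward + discounted next value)
            best = max(
                sum(p * (r + gamma * V[s_next]) for p, s_next, r in outcomes)
                for outcomes in T[s].values()
            )
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V


V = value_iteration(T, gamma)

# Greedy policy read off from the converged values
policy = {
    s: max(T[s], key=lambda a: sum(p * (r + gamma * V[s2]) for p, s2, r in T[s][a]))
    for s in T
}
print(V, policy)
```

Solving by hand gives the same fixed point. In state 1, always choosing action 1 gives V(1) = 1 + 0.9 V(1), so V(1) = 10. Substituting into state 0 gives V(0) = 8 + 0.18 V(0), so V(0) = 8 / 0.82 ≈ 9.756. The greedy policy picks action 1 in both states.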

Steps of Reinforcement Learning

- Define the Environment: Specify the states, actions, transition rules, and rewards.

- Initialize Policies and Value Functions: Set up initial strategies for decision-making and value estimations.

- Observe the Initial State: Gather information about the initial conditions of the environment.

- Choose an Action: Decide on an action based on the current policy.

- Observe the Outcome: Receive feedback in the form of a new state and reward from the environment.

- Update Strategies: Adjust decision-making policies and value estimations based on the received feedback.
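The six steps map onto a short training loop. This is an illustration under stated assumptions, not the article's code:

- a hypothetical five-cell corridor as the environment,
- a uniformly random policy,
- TD(0) updates to a table of state values as the "update" step.

Q-Learning, covered below, updates action values instead.

```python
import random

# Step 1: define the environment (hypothetical corridor, goal at cell 4)
N_STATES, GOAL = 5, 4


def step(state, action):
    """action 0 = left, 1 = right; reward 1 only on reaching the goal."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


# Step 2: initialize the policy and the value estimates
def policy(state, rng):
    return rng.choice([0, 1])  # uniformly random policy (ignores the state)


V = [0.0] * N_STATES
alpha, gamma = 0.1, 0.9  # learning rate and discount factor
rng = random.Random(42)

for episode in range(2000):
    state, done = 0, False                           # Step 3: observe the initial state
    while not done:
        action = policy(state, rng)                  # Step 4: choose an action
        next_state, reward, done = step(state, action)  # Step 5: observe the outcome
        # Step 6: move V(state) toward reward + discounted value of the next state
        target = reward + (0.0 if done else gamma * V[next_state])
        V[state] += alpha * (target - V[state])
        state = next_state

print([round(v, 3) for v in V])
```

The learned values grow toward the goal cell. The goal cell itself stays at 0 because the episode ends there.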

Reinforcement Learning Algorithms

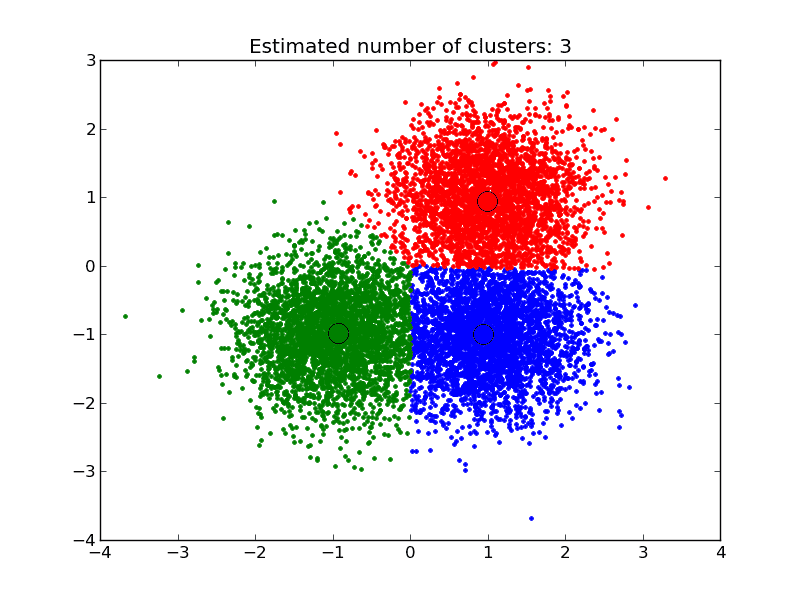

There are several algorithms used in reinforcement learning.

- Q-Learning: A model-free algorithm that learns the value of each action in each state (the Q-value) directly from experience.

- Deep Q-Network (DQN): An extension of Q-Learning that uses a deep neural network to approximate Q-values, so it can handle state spaces too large for a table.

- Policy Gradient Methods: Directly optimize a parameterized policy by adjusting its parameters with gradient ascent on the expected return.

- Actor-Critic Methods: Combine value-based and policy-based methods. The actor updates the policy, and the critic estimates a value function used to evaluate the actor's actions.
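Of these methods, policy gradients are the easiest to show in a few lines. The sketch below is a hypothetical example, not from the article: REINFORCE-style gradient ascent, without a baseline, on a softmax policy over a two-armed bandit. The bandit is a single-state problem whose arms pay 1 with assumed probabilities 0.2 and 0.8. For a softmax policy, the gradient of log π(a) with respect to the preferences θ is one_hot(a) minus π. Each update therefore nudges θ toward actions that earned reward.

```python
import numpy as np

rng = np.random.default_rng(0)
payout_prob = np.array([0.2, 0.8])  # hypothetical arms: chance each pays reward 1
theta = np.zeros(2)                 # policy parameters (action preferences)
lr = 0.1                            # step size for gradient ascent


def softmax(x):
    z = np.exp(x - x.max())  # subtract the max for numerical stability
    return z / z.sum()


for _ in range(2000):
    probs = softmax(theta)
    action = rng.choice(2, p=probs)                     # sample an action from the policy
    reward = float(rng.random() < payout_prob[action])  # Bernoulli reward
    grad_log_pi = -probs
    grad_log_pi[action] += 1.0                          # one_hot(action) - probs
    theta += lr * reward * grad_log_pi                  # gradient ascent step

print(softmax(theta))
```

After training, most of the probability mass sits on the better arm (index 1).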

Q-Learning Algorithm

Q-Learning is a key algorithm in reinforcement learning. It is model-free: it does not need a model of the environment's transitions or rewards. Instead, it learns the value of actions directly by interacting with the environment. Its goal is to find the action-selection policy that maximizes cumulative reward.

Key Concepts

- Q-Value: The Q-value, denoted Q(s,a), is the expected cumulative discounted reward of taking action a in state s and following the policy thereafter. Q-Learning estimates these values for the optimal policy.

- Q-Table: A table where each cell Q(s,a) holds the Q-value for one state-action pair. The agent updates this table continually as it learns from experience.

- Learning Rate (α): A factor between 0 and 1 that determines how much new information overwrites old estimates.

- Discount Factor (γ): A factor between 0 and 1 that reduces the weight of future rewards.
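Combining the learning rate and discount factor, Q-Learning updates one cell of the Q-table after each step with the standard rule below, where r is the observed reward and s' the next state. The worked lines use assumed numbers with α = 0.8 and γ = 0.95, the values chosen in the implementation below. They show how a reward first appears next to the goal and then propagates one step backward to a predecessor state.

```latex
Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]

% Step next to the goal: Q(s,a) = 0, r = 1, max_{a'} Q(s', a') = 0
Q(s, a) \leftarrow 0 + 0.8 \left[ 1 + 0.95 \cdot 0 - 0 \right] = 0.8

% Predecessor state: Q = 0, r = 0, and its best next value is now 0.8
Q(s_{\text{prev}}, a) \leftarrow 0 + 0.8 \left[ 0 + 0.95 \cdot 0.8 - 0 \right] = 0.608
```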

Implementation of Q-Learning with Python

Import required libraries

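Import the necessary libraries. Gymnasium, the maintained successor to OpenAI's `gym` package, is used to create and interact with the environment; it is imported under the name `gym`. NumPy is used for numerical operations.

```python
import gymnasium as gym  # install with: pip install gymnasium
import numpy as np
```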

Initialize the Environment and Q-Table

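Create a deterministic (`is_slippery=False`) 4×4 FrozenLake environment and initialize the Q-table with zeros, with one row per state and one column per action.

```python
env = gym.make("FrozenLake-v1", is_slippery=False)  # 16 states, 4 actions
Q = np.zeros((env.observation_space.n, env.action_space.n))  # Q-table: states x actions
```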

Define Hyperparameters

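Define the hyperparameters for the Q-Learning algorithm:

- `learning_rate` and `discount_factor` are α and γ from above.
- `epsilon` controls exploration.
- `episodes` sets how many complete runs, each starting from the initial state, the agent trains on.
- `max_steps` caps the length of each run.

```python
learning_rate = 0.8     # alpha: how far each update moves Q(s, a) toward the new target
discount_factor = 0.95  # gamma: weight of future rewards relative to immediate ones
epsilon = 0.1           # probability of taking a random (exploratory) action
episodes = 10000        # number of training episodes (each starts from env.reset())
max_steps = 100         # maximum number of steps within a single episode
```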

Implementing Q-Learning

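Train the agent with Q-Learning. In each episode, the agent starts from the initial state and chooses actions with an epsilon-greedy strategy: a random action with probability `epsilon`, otherwise the best-known action. It updates Q(s, a) after every step. The update moves Q(s, a) a fraction `learning_rate` of the way toward the target r + γ max Q(s', ·). This acts as an exponentially weighted running average of the observed targets, so no separate averaging step is needed.

```python
for episode in range(episodes):
    state, _ = env.reset()  # Gymnasium's reset() returns (observation, info)
    for _ in range(max_steps):
        # Choose an action (epsilon-greedy strategy)
        if np.random.uniform(0, 1) < epsilon:
            action = env.action_space.sample()  # explore
        else:
            # Exploit, breaking ties randomly so an all-zero row does not always pick action 0
            best_actions = np.flatnonzero(Q[state, :] == Q[state, :].max())
            action = np.random.choice(best_actions)
        # Perform the action and observe the outcome
        next_state, reward, terminated, truncated, _ = env.step(action)
        done = terminated or truncated
        # Update the Q-value using the Bellman equation
        Q[state, action] = Q[state, action] + learning_rate * (
            reward + discount_factor * np.max(Q[next_state, :]) - Q[state, action]
        )
        # Transition to the next state
        state = next_state
        # If the episode is finished, break the loop
        if done:
            break
```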

Evaluate the Trained Agent

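Run one episode with the greedy policy stored in the Q-table, always taking the highest-valued action, and sum the rewards the agent collects. FrozenLake pays a reward of 1 only for reaching the goal, so a total reward of 1.0 means the agent crossed the lake.

```python
# A second environment with text rendering, used to display the agent's moves
eval_env = gym.make("FrozenLake-v1", is_slippery=False, render_mode="ansi")
state, _ = eval_env.reset()
done = False
total_reward = 0
while not done:
    action = np.argmax(Q[state, :])  # always take the greedy action
    next_state, reward, terminated, truncated, _ = eval_env.step(action)
    done = terminated or truncated   # FrozenLake-v1 truncates episodes after 100 steps
    total_reward += reward
    state = next_state
    print(eval_env.render())         # text drawing of the lake and the agent's position
print("Total reward:", total_reward)
```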
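To inspect what the agent learned, read the greedy action for each state off the Q-table and lay it out on the 4×4 grid. This sketch assumes FrozenLake's action encoding (0 = left, 1 = down, 2 = right, 3 = up) and row-major state numbering (state = row × 4 + column). So that the snippet runs on its own, a hand-filled table `Q_demo` stands in for the trained one. It encodes one shortest route to the goal; for the trained table, call `greedy_policy_grid(Q)` instead.

```python
import numpy as np

ARROWS = np.array(["←", "↓", "→", "↑"])  # FrozenLake actions 0..3


def greedy_policy_grid(Q, n_rows=4, n_cols=4):
    """Return the greedy action of each state as arrows on the grid."""
    best_actions = np.argmax(Q, axis=1)  # one action index per state
    return ARROWS[best_actions].reshape(n_rows, n_cols)


# Hypothetical stand-in Q-table: value 1.0 on one shortest path, 0 elsewhere
Q_demo = np.zeros((16, 4))
path_actions = {0: 1, 4: 1, 8: 2, 9: 1, 13: 2, 14: 2}  # state -> action
for s, a in path_actions.items():
    Q_demo[s, a] = 1.0

grid = greedy_policy_grid(Q_demo)
for row in grid:
    print(" ".join(row))
# ↓ ← ← ←
# ↓ ← ← ←
# → ↓ ← ←
# ← → → ←
```

States the agent never learned about keep all-zero rows, and `np.argmax` maps those to action 0 (left).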

Conclusion

This article introduces fundamental principles and offers a beginner-friendly example of reinforcement learning. As you explore further, you’ll encounter advanced methods such as deep reinforcement learning. This approach integrates RL with neural networks to manage complex state and action spaces effectively.
