Deep Learning methods achieve state-of-the-art results on a suite of natural language processing problems.

What makes this exciting is that single models are trained end-to-end, replacing a suite of specialized statistical models.

The University of Oxford in the UK teaches a course on Deep Learning for Natural Language Processing, and much of the material for this course is available online for free.

In this post, you will discover the Oxford course on Deep Learning for Natural Language Processing.

After reading this post, you will know:

- What the course entails and the prerequisites.

- A breakdown of the lectures and how to access the slides and videos.

- A breakdown of the course projects and where to access the materials.


Let’s get started.

Oxford Course on Deep Learning for Natural Language Processing

Photo by Martijn van Sabben, some rights reserved.

Overview

This post is divided into 4 parts; they are:

- Course Overview

- Prerequisites

- Lecture Breakdown

- Projects


Course Overview

The course is titled “Deep Learning for Natural Language Processing” and is taught at the University of Oxford (UK). It was last taught in early 2017.

The great thing about this course is that it is run and taught by people from DeepMind. Notably, the lecturer is Phil Blunsom.

The focus of the course is on statistical methods for natural language processing, specifically neural networks that achieve state-of-the-art results on NLP problems.

From the course:

This will be an applied course focusing on recent advances in analysing and generating speech and text using recurrent neural networks. We will introduce the mathematical definitions of the relevant machine learning models and derive their associated optimisation algorithms.

Prerequisites

This course is designed for undergraduate and graduate students.

The course assumes some background in the following topics:

- Probability.

- Linear Algebra.

- Continuous Mathematics.

- Basic Machine Learning.

If you are a practitioner interested in deep learning for NLP, you may have different goals and requirements from the material.

For example, you may want to focus on the methods and applications rather than the foundational theory.

Lecture Breakdown

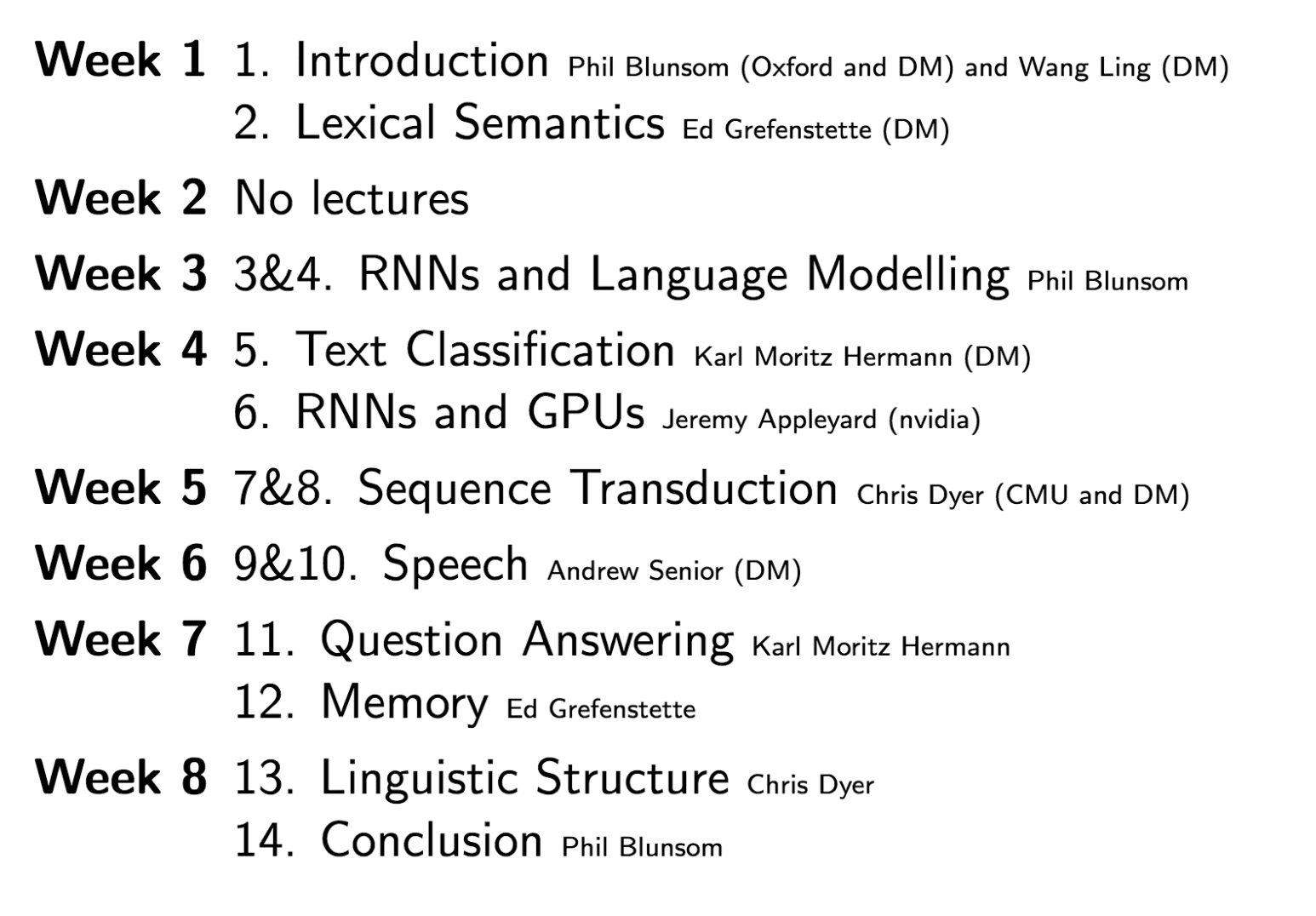

The course comprises 13 lectures, although the first and second lectures are both split into two parts.

The complete lecture breakdown is provided below.

The GitHub repository for the course provides links to slides, Flash videos and readings for each lecture.

I would recommend watching the videos via this unofficial YouTube playlist.

Below is a course overview slide taken from the first lecture.

Deep Learning for Natural Language Processing at Oxford Lecture Breakdown

Note that there are many guest lecturers for the various topics covered, and most are from DeepMind.

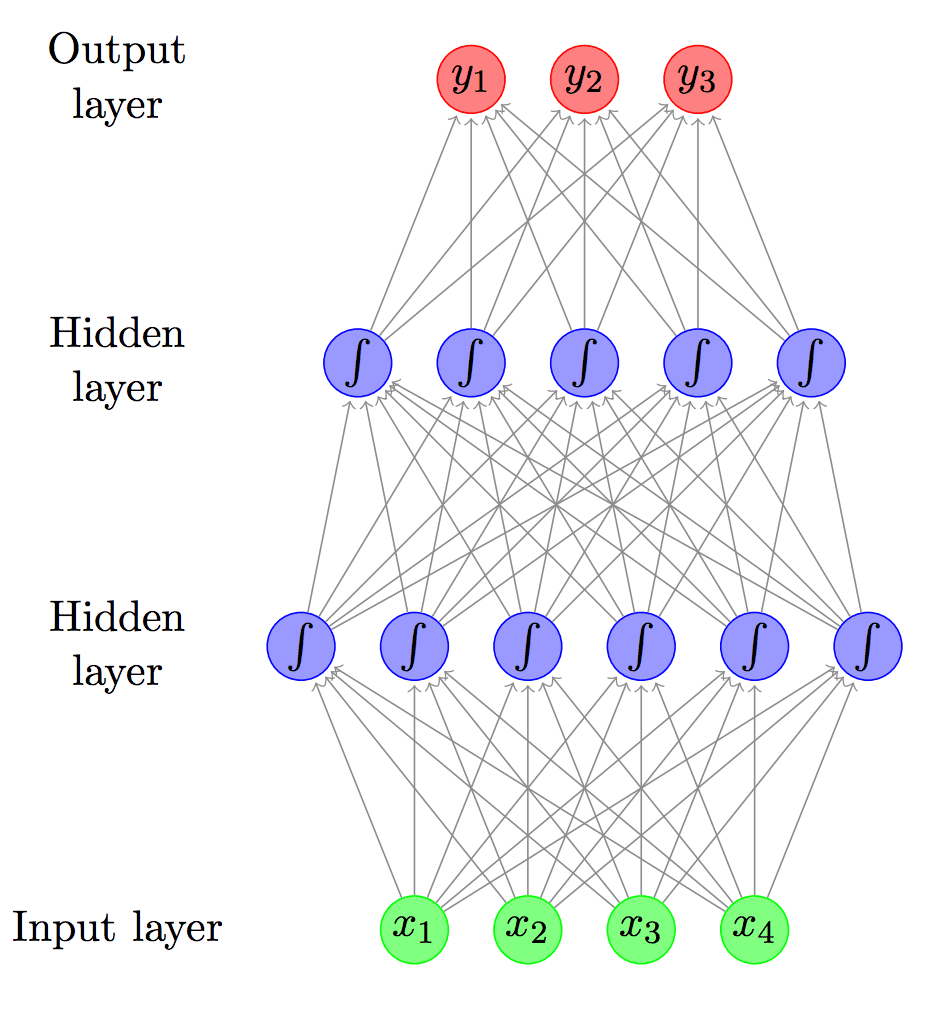

- Lecture 1a – Introduction [Phil Blunsom]

- Lecture 1b – Deep Neural Networks [Wang Ling]

- Lecture 2a – Word Level Semantics [Ed Grefenstette]

- Lecture 2b – Overview of the Practicals [Chris Dyer]

- Lecture 3 – Language Modelling and RNNs Part 1 [Phil Blunsom]

- Lecture 4 – Language Modelling and RNNs Part 2 [Phil Blunsom]

- Lecture 5 – Text Classification [Karl Moritz Hermann]

- Lecture 6 – Deep NLP on Nvidia GPUs [Jeremy Appleyard]

- Lecture 7 – Conditional Language Models [Chris Dyer]

- Lecture 8 – Generating Language with Attention [Chris Dyer]

- Lecture 9 – Speech Recognition (ASR) [Andrew Senior]

- Lecture 10 – Text to Speech (TTS) [Andrew Senior]

- Lecture 11 – Question Answering [Karl Moritz Hermann]

- Lecture 12 – Memory [Ed Grefenstette]

- Lecture 13 – Linguistic Knowledge in Neural Networks

Have you watched any of these lectures? What did you think?

Let me know in the comments below.

Projects

The course includes 4 practical projects that you may wish to attempt to confirm your knowledge of the topic.

The projects are as follows, and each has its own GitHub project containing the description and relevant starting materials:

- Practical 1: word2vec

- Practical 2: text classification

- Practical 3: recurrent neural networks for text classification and language modelling

- Practical 4: open practical

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

- Deep Learning for Natural Language Processing: 2016-2017

- Course Lectures on GitHub (PDF)

- Course Lecture Videos on YouTube

- Discussion of Course on Hacker News

Summary

In this post, you discovered the Oxford course on Deep Learning for Natural Language Processing.

Specifically, you learned:

- What the course entails and the prerequisites.

- A breakdown of the lectures and how to access the slides and videos.

- A breakdown of the course projects and where to access the materials.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Hi Jason,

Thanks for sharing this. I was wondering which course according to you is a better one: CS 224n or this one?

I really liked the Oxford course on DL by Nando de Freitas, though it’s very concise. I watched the initial word2vec/GloVe lecture from 224n but didn’t like it as much.

Thanks

I like them both, but I consumed more/all of the Stanford course.

Sample some of each and choose the style you prefer.

Hi Jason,

Thanks for sharing. Very helpful.

Thanks !!

You’re welcome.

Thanks for sharing. It looks very interesting!

I hope it helps.

This looks very promising, thank you for sharing.

Let me know how you go with it.

Hi, I just know some basics of neural networks, activation functions, etc. That’s about it; I have not yet learnt algorithms like backpropagation. Is this course suitable for my level?

The course is aimed at academics, so it may not be suitable.

But, you can still work through it and pick up the elements you need for your own projects.

Thanks. This is a great course

It is!

Funny what they call ‘practical’ …. when I hear ‘t-SNE’ + ‘practical’, I tend to run.

Ha!

Hi,

My son is in high school and I want to introduce him to machine learning at an early age. I am looking for a weekend course for my son.

Please, can you suggest how I should go about doing it? I was looking for some undergraduate courses in machine learning.

Awaiting your response.

Wonderful question!

I think a great place to get started is with Weka, a graphical user interface that lets you prepare data and develop a model:

https://machinelearningmastery.com/start-here/#weka

Hi Jason, do you happen to know if there is any Python code associated with the Oxford NLP course? Thanks, Toby

Not off hand, sorry.

Hello Dr.

My name is Hamid.

I’m a PhD student and my thesis is based on deep learning algorithms, DNNs and LSTMs.

So, I’m interested to study more about this concept.

Warm Regards

Hamid

Great, welcome!

Perhaps start here:

https://machinelearningmastery.com/start-here/#deep_learning_time_series

And here:

https://machinelearningmastery.com/start-here/#lstm
