The Autoregressive Integrated Moving Average Model, or ARIMA for short, is a standard statistical model for time series forecasting and analysis.

Alongside the model, the authors Box and Jenkins also suggested a process for identifying, estimating, and checking models for a specific time series dataset. This process is now referred to as the Box-Jenkins Method.

In this post, you will discover the Box-Jenkins Method and tips for using it on your time series forecasting problem.

Specifically, you will learn:

- About the ARIMA model and the 3 steps of the Box-Jenkins Method.

- Best practice heuristics for selecting the p, d, and q model configuration for an ARIMA model.

- How to evaluate models by checking for overfitting and examining residual errors as a diagnostic process.

Let’s get started.

A Gentle Introduction to the Box-Jenkins Method for Time Series Forecasting

Autoregressive Integrated Moving Average Model

An ARIMA model is a class of statistical model for analyzing and forecasting time series data.

ARIMA is an acronym that stands for AutoRegressive Integrated Moving Average. It is a generalization of the simpler AutoRegressive Moving Average and adds the notion of integration.

This acronym is descriptive, capturing the key aspects of the model itself. Briefly, they are:

- AR: Autoregression. A model that uses the dependent relationship between an observation and some number of lagged observations.

- I: Integrated. The use of differencing of raw observations (i.e. subtracting an observation from an observation at the previous time step) in order to make the time series stationary.

- MA: Moving Average. A model that uses the dependency between an observation and the residual errors from forecasts made at previous time steps (lagged errors).

Each of these components is explicitly specified in the model as a parameter.

The standard notation ARIMA(p,d,q) is used, where the parameters are substituted with integer values to quickly indicate the specific ARIMA model being used.

The parameters of the ARIMA model are defined as follows:

- p: The number of lag observations included in the model, also called the lag order.

- d: The number of times that the raw observations are differenced, also called the degree of differencing.

- q: The size of the moving average window, also called the order of moving average.
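Written out, the notation can be read as a single equation. The following is a sketch in one common convention. The symbols c (a constant), \phi_i (autoregressive coefficients), \theta_j (moving average coefficients), \varepsilon_t (the error at time t), and B (the backshift operator) are notation assumed here rather than taken from this post, and some textbooks use the opposite sign for the moving average terms.

```latex
y'_t = (1 - B)^d \, y_t, \qquad B \, y_t = y_{t-1}

y'_t = c + \sum_{i=1}^{p} \phi_i \, y'_{t-i} + \sum_{j=1}^{q} \theta_j \, \varepsilon_{t-j} + \varepsilon_t
```

For example, under this convention ARIMA(1,1,0) differences the series once (y'_t = y_t - y_{t-1}) and regresses each differenced value on the previous one: y'_t = c + \phi_1 y'_{t-1} + \varepsilon_t.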

Box-Jenkins Method

The Box-Jenkins method was proposed by George Box and Gwilym Jenkins in their seminal 1970 textbook Time Series Analysis: Forecasting and Control.

The approach starts with the assumption that the process that generated the time series can be approximated using an ARMA model if it is stationary or an ARIMA model if it is non-stationary.

The 2016 5th edition of the textbook (Part Two, page 177) refers to the process as stochastic model building and describes it as an iterative approach that consists of the following 3 steps:

- Identification. Use the data and all related information to help select a sub-class of model that may best summarize the data.

- Estimation. Use the data to train the parameters of the model (i.e. the coefficients).

- Diagnostic Checking. Evaluate the fitted model in the context of the available data and check for areas where the model may be improved.

It is an iterative process, so that as new information is gained during diagnostics, you can circle back to step 1 and incorporate that into new model classes.

Let’s take a look at these steps in more detail.

1. Identification

The identification step is further broken down into:

- Assess whether the time series is stationary, and if not, how many differences are required to make it stationary.

- Identify the parameters of an ARMA model for the data.

1.1 Differencing

Below are some tips during identification.

- Unit Root Tests. Use unit root statistical tests on the time series to determine whether or not it is stationary. Repeat after each round of differencing.

- Avoid over differencing. Differencing the time series more than is required can result in the addition of extra serial correlation and additional complexity.
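The effect of differencing, and of differencing once too often, can be seen on a small simulated example. The sketch below uses only the Python standard library. The random walk data and the helper names `difference` and `lag1_autocorrelation` are assumptions made for illustration, and the lag-1 autocorrelation is used as a rough stand-in for a formal unit root test (a library routine such as `adfuller` in statsmodels would be the usual choice in practice).

```python
import random


def difference(series, d=1):
    """Apply first-order differencing d times (the 'd' in ARIMA(p,d,q))."""
    values = list(series)
    for _ in range(d):
        values = [values[i] - values[i - 1] for i in range(1, len(values))]
    return values


def lag1_autocorrelation(values):
    """Sample correlation between each value and the value one step earlier."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    return sum(dev[t] * dev[t - 1] for t in range(1, n)) / sum(x * x for x in dev)


# Hypothetical data: a random walk, which needs exactly one difference.
rng = random.Random(1)
walk = [0.0]
for _ in range(999):
    walk.append(walk[-1] + rng.gauss(0.0, 1.0))

once = difference(walk, d=1)   # recovers the white-noise steps
twice = difference(walk, d=2)  # one difference more than required

print("lag-1 autocorrelation, d=0:", round(lag1_autocorrelation(walk), 3))
print("lag-1 autocorrelation, d=1:", round(lag1_autocorrelation(once), 3))
print("lag-1 autocorrelation, d=2:", round(lag1_autocorrelation(twice), 3))
```

The raw walk should show a lag-1 autocorrelation close to 1, the once-differenced series a value near 0, and the twice-differenced series a clearly negative value. That negative value is the extra serial correlation that over-differencing introduces.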

1.2 Configuring AR and MA

Two diagnostic plots can be used to help choose the p and q parameters of the ARMA or ARIMA. They are:

- Autocorrelation Function (ACF). The plot summarizes the correlation of an observation with lag values. The x-axis shows the lag and the y-axis shows the correlation coefficient between -1 and 1 for negative and positive correlation.

- Partial Autocorrelation Function (PACF). The plot summarizes the correlations for an observation with lag values that are not accounted for by prior lagged observations.

Both plots are drawn as bar charts showing the 95% and 99% confidence intervals as horizontal lines. Bars that extend beyond these lines are statistically significant and worth noting.

Some useful patterns you may observe on these plots are:

- The model is AR if the ACF trails off after a lag and has a hard cut-off in the PACF after a lag. This lag is taken as the value for p.

- The model is MA if the PACF trails off after a lag and has a hard cut-off in the ACF after the lag. This lag value is taken as the value for q.

- The model is a mix of AR and MA if both the ACF and PACF trail off.
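These patterns are easier to recognize on series whose true structure is known. The sketch below simulates an AR(1) and an MA(1) series and prints their sample ACF and PACF values next to an approximate 95% band of 1.96 / sqrt(n). It is a minimal standard-library illustration, not how a plotting library computes its output: the coefficients 0.7 and 0.8, the seeds, and the helper names `acf`, `pacf`, and `simulate` are all assumptions, and the PACF is computed with the Durbin-Levinson recursion.

```python
import math
import random


def acf(series, max_lag):
    """Sample autocorrelations for lags 0..max_lag."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    denom = sum(d * d for d in dev)
    return [sum(dev[t] * dev[t - k] for t in range(k, n)) / denom
            for k in range(max_lag + 1)]


def pacf(series, max_lag):
    """Sample partial autocorrelations via the Durbin-Levinson recursion."""
    rho = acf(series, max_lag)
    result, prev = [1.0], []
    for k in range(1, max_lag + 1):
        num = rho[k] - sum(prev[j] * rho[k - 1 - j] for j in range(k - 1))
        den = 1.0 - sum(prev[j] * rho[j + 1] for j in range(k - 1))
        phi_kk = num / den
        prev = [prev[j] - phi_kk * prev[k - 2 - j] for j in range(k - 1)] + [phi_kk]
        result.append(phi_kk)
    return result


def simulate(n, ar=0.0, ma=0.0, seed=0):
    """Simulate y[t] = ar * y[t-1] + e[t] + ma * e[t-1] with unit Gaussian noise."""
    rng = random.Random(seed)
    y_prev, e_prev, out = 0.0, 0.0, []
    for _ in range(n):
        e = rng.gauss(0.0, 1.0)
        y = ar * y_prev + e + ma * e_prev
        out.append(y)
        y_prev, e_prev = y, e
    return out


n = 2000
bound = 1.96 / math.sqrt(n)  # approximate 95% band for a white-noise series
ar_series = simulate(n, ar=0.7, seed=1)
ma_series = simulate(n, ma=0.8, seed=2)

for name, series in [("AR(1)", ar_series), ("MA(1)", ma_series)]:
    print(name, "approximate 95% band: +/-", round(bound, 3))
    print("  ACF  lags 1-5:", [round(v, 2) for v in acf(series, 5)[1:]])
    print("  PACF lags 1-5:", [round(v, 2) for v in pacf(series, 5)[1:]])
```

For the AR(1) series the ACF should decay gradually while the PACF drops to near zero after lag 1, suggesting p = 1. For the MA(1) series the roles swap: the ACF is near zero after lag 1 while the PACF trails off, suggesting q = 1.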

2. Estimation

Estimation involves using numerical methods to minimize a loss or error term.

We will not go into the details of estimating model parameters as these details are handled by the chosen library or tool.

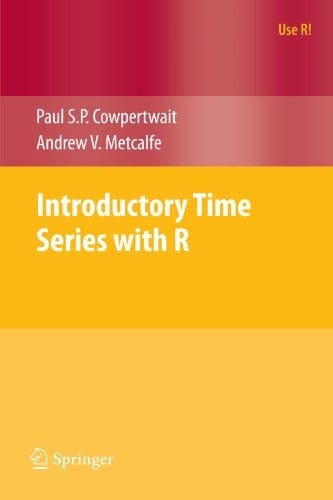

I would recommend referring to a textbook for a deeper understanding of the optimization problem to be solved by ARMA and ARIMA models and optimization methods like Limited-memory BFGS used to solve it.
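To make the idea of minimizing an error term concrete, here is a minimal sketch that fits a single MA(1) coefficient by minimizing the sum of squared one-step errors. It is not what a library does internally: a plain grid search stands in for an optimizer such as L-BFGS, the starting error is assumed to be zero, and the simulated data, the true coefficient 0.6, and the helper names `ma1_residuals`, `sum_of_squares`, and `fit_ma1` are all hypothetical.

```python
import random


def ma1_residuals(series, theta):
    """One-step errors of y[t] = e[t] + theta * e[t-1], assuming the first lagged error is 0."""
    residuals, e_prev = [], 0.0
    for y in series:
        e = y - theta * e_prev
        residuals.append(e)
        e_prev = e
    return residuals


def sum_of_squares(series, theta):
    """The loss to minimize: the sum of squared one-step errors."""
    return sum(e * e for e in ma1_residuals(series, theta))


def fit_ma1(series):
    """Grid search for theta over -0.99..0.99 in steps of 0.01."""
    candidates = [i / 100 for i in range(-99, 100)]
    return min(candidates, key=lambda theta: sum_of_squares(series, theta))


# Hypothetical data: an MA(1) process with a true coefficient of 0.6.
rng = random.Random(7)
e_prev, data = 0.0, []
for _ in range(2000):
    e = rng.gauss(0.0, 1.0)
    data.append(e + 0.6 * e_prev)
    e_prev = e

theta_hat = fit_ma1(data)
print("estimated theta:", theta_hat)
```

The estimate should land close to the true value of 0.6. With more coefficients a grid becomes impractical, which is one reason libraries rely on gradient-based optimizers instead.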

3. Diagnostic Checking

The idea of diagnostic checking is to look for evidence that the model is not a good fit for the data.

Two useful areas to investigate diagnostics are:

- Overfitting

- Residual Errors.

3.1 Overfitting

The first check is whether the model overfits the data. Generally, this means that the model is more complex than it needs to be and captures random noise in the training data.

This is a problem for time series forecasting because it negatively impacts the ability of the model to generalize, resulting in poor forecast performance on out-of-sample data.

Careful attention must be paid to both in-sample and out-of-sample performance and this requires the careful design of a robust test harness for evaluating models.
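One possible shape for such a test harness is walk-forward validation, where the model is refit on all data seen so far and asked for a one-step forecast at each test time step. The sketch below is an assumption about how this could be set up, not a harness prescribed by Box and Jenkins. It uses a simple AR(1) model fit by least squares and a persistence baseline, and the simulated data and all function names are hypothetical.

```python
import math
import random


def fit_ar1(history):
    """Least-squares fit of y[t] = c + phi * y[t-1]; returns (c, phi)."""
    x, y = history[:-1], history[1:]
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    phi = sxy / sxx if sxx else 0.0
    return mean_y - phi * mean_x, phi


def ar1_forecast(history):
    """Refit on the history and forecast the next value."""
    c, phi = fit_ar1(history)
    return c + phi * history[-1]


def persistence_forecast(history):
    """Baseline: the next value equals the last observed value."""
    return history[-1]


def rmse(errors):
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def in_sample_rmse(train):
    """Error of the fitted AR(1) model on the data it was trained on."""
    c, phi = fit_ar1(train)
    return rmse([train[t] - (c + phi * train[t - 1]) for t in range(1, len(train))])


def walk_forward_rmse(series, n_train, forecast_fn):
    """One-step forecasts over the test period, using only past data each time."""
    errors = [series[t] - forecast_fn(series[:t]) for t in range(n_train, len(series))]
    return rmse(errors)


# Hypothetical data: an AR(1) process with coefficient 0.5 and unit noise.
rng = random.Random(3)
series = [0.0]
for _ in range(299):
    series.append(0.5 * series[-1] + rng.gauss(0.0, 1.0))

n_train = 200
train_error = in_sample_rmse(series[:n_train])
test_error = walk_forward_rmse(series, n_train, ar1_forecast)
baseline_error = walk_forward_rmse(series, n_train, persistence_forecast)
print(f"in-sample RMSE:       {train_error:.3f}")
print(f"out-of-sample RMSE:   {test_error:.3f}")
print(f"persistence baseline: {baseline_error:.3f}")
```

An out-of-sample error that is much larger than the in-sample error, or no better than the persistence baseline, would be a hint that the model is more complex than the data supports.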

3.2 Residual Errors

Forecast residuals provide a great opportunity for diagnostics.

A review of the distribution of errors can help tease out bias in the model. The errors from an ideal model would resemble white noise, that is, a Gaussian distribution with a mean of zero and a constant variance.

For this, you may use density plots, histograms, and Q-Q plots that compare the distribution of errors to the expected distribution. A non-Gaussian distribution may suggest an opportunity for data pre-processing. A skew in the distribution or a non-zero mean may suggest a bias in forecasts that may be corrected.

Additionally, an ideal model would leave no temporal structure in the time series of forecast residuals. This can be checked by creating ACF and PACF plots of the residual error time series.

The presence of serial correlation in the residual errors suggests further opportunity for using this information in the model.
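Alongside the plots, the residual checks can be summarized numerically. The sketch below computes the residual mean and the Ljung-Box Q statistic over the first 10 lags and compares Q against 18.307, the 95th percentile of a chi-squared distribution with 10 degrees of freedom. Treat it as a rough illustration under stated assumptions: the two residual series are simulated stand-ins rather than output from a fitted model, the helper names are hypothetical, and for residuals from a fitted ARMA(p,q) model the degrees of freedom are usually reduced by p + q.

```python
import random

CHI2_95_DF10 = 18.307  # 95th percentile, chi-squared with 10 degrees of freedom


def ljung_box_q(residuals, max_lag=10):
    """Ljung-Box statistic: n * (n + 2) * sum over lags k of r_k^2 / (n - k)."""
    n = len(residuals)
    mean = sum(residuals) / n
    dev = [r - mean for r in residuals]
    denom = sum(d * d for d in dev)
    total = 0.0
    for k in range(1, max_lag + 1):
        r_k = sum(dev[t] * dev[t - k] for t in range(k, n)) / denom
        total += r_k * r_k / (n - k)
    return n * (n + 2) * total


def summarize(name, residuals):
    """Print the residual mean and whether Q exceeds the critical value."""
    mean = sum(residuals) / len(residuals)
    q = ljung_box_q(residuals, max_lag=10)
    verdict = "structure remains" if q > CHI2_95_DF10 else "consistent with white noise"
    print(f"{name}: mean={mean:.3f}, Q={q:.1f} -> {verdict}")
    return q


# Hypothetical residuals: one series of white noise, one with leftover AR(1) structure.
rng = random.Random(5)
white = [rng.gauss(0.0, 1.0) for _ in range(1000)]
correlated = [0.0]
for _ in range(999):
    correlated.append(0.6 * correlated[-1] + rng.gauss(0.0, 1.0))

q_white = summarize("white noise residuals", white)
q_correlated = summarize("correlated residuals", correlated)
```

A large Q for the second series signals serial correlation that a revised model could exploit, which is the cue to circle back to the identification step.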

Further Reading

The definitive resource on the topic is Time Series Analysis: Forecasting and Control. I would recommend the 2016 5th edition, specifically Part Two and Chapters 6-10.

Below are some additional readings that may help flesh out your understanding if you are looking to go deeper:

- Box-Jenkins modelling by Rob J Hyndman, 2002 [PDF].

- Box-Jenkins method on Wikipedia.

- Section 6.4.4.5. Box-Jenkins Models, NIST Handbook of Statistical Methods.

Summary

In this post, you discovered the Box-Jenkins Method for time series analysis and forecasting.

Specifically, you learned:

- About the ARIMA model and the 3 steps of the general Box-Jenkins Method.

- How to use ACF and PACF plots to choose the p and q parameters for an ARIMA model.

- How to use overfitting and residual errors to diagnose a fitted ARIMA model.

Do you have any questions about the Box-Jenkins Method or this post?

Ask your questions in the comments below and I will do my best to answer.

I appreciate you sharing this.

no code example for this post?

See this post on creating an ARIMA model:

https://machinelearningmastery.com/arima-for-time-series-forecasting-with-python/

Thank you very much, this is very helpful. I’m working on measuring commodity price volatility for some goods and got confused about using unit root test and then BJ’s, your explanation cleared things up. Thanks again 🙂

Thanks, I’m happy to hear that.

Hello Jason,

Thank you for all the insights.

I was wondering if you could kindly help in explaining how to go about constructing a diagram depicting the Box-Jenkins methodology.

Regards

Perhaps you could turn the steps into a flow diagram?

Thanks a lot for sharing

I’m glad that you found it useful.

“The model is AR if the ACF trails off after a lag and has a hard cut-off in the PACF after a lag. This lag is taken as the value for p…..”

It’d be very useful if you could help clarify that section with visual plots (how to read ’em) of when the model is AR, MA or a combination of both.

Thanks.

Thanks for the suggestion Eric.

Could Box-Jenkins be considered a machine learning method?

Perhaps, I would consider it a statistical method.

Isn’t machine learning just a name for “new generation statistics”?

Not really, it is a philosophical difference.

E.g. automation/prediction skill at any cost (predictive modeling/ml) vs linear/understand the data (stats).

or results driven projects (ml, use whatever works) vs model driven projects (stats, make model fit data)

Could you please explain how the p and q values are determined after I draw the ACF and PACF plots? It is a little unclear; I have gone through your other posts on ACF and PACF but didn’t really get it.

Yes, see this post:

https://machinelearningmastery.com/gentle-introduction-autocorrelation-partial-autocorrelation/

Can I ask you for a real example of using this model, to connect real life and academic work?

Dear emy,

There are a lot of examples. See this one from the pulp industry:

Prediction of Kappa Number in Eucalyptus Kraft Pulp Continuous Digester Using the Box & Jenkins Methodology

https://www.scirp.org/journal/PaperInformation.aspx?PaperID=50918

I would also like to know whether you can make videos about the Minitab program on YouTube.

Thanks for the suggestion.

Please give me an example or question with answer

On what exactly?

What will the end result be when you apply the Box-Jenkins approach to non-stationary time series data?

A less skillful model than if the series was stationary.

Hi

I’m trying to build an ARIMA forecasting model, but the graph shows a flat line for the next five years, with the values below. I am confused about whether this is right or wrong because the values become constant. I applied the ADF test and the ACF and PACF plots to the data, then AR and MA models, then an ARIMA model. I’d be very thankful if you could help me with this matter.

Regards

array([1.26085233e+210, 6.43337688e+230, 8.23752178e+233, 2.53216424e+234,

3.02366846e+234, 3.10968980e+234, 3.12351712e+234, 3.12570970e+234,

3.12605663e+234, 3.12611150e+234, 3.12612018e+234, 3.12612155e+234,

3.12612177e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234,

3.12612181e+234, 3.12612181e+234, 3.12612181e+234, 3.12612181e+234])

Perhaps the model is not skillful, you could try other configurations of the model and/or some data preparation prior to fitting the model.

You can get started here:

https://machinelearningmastery.com/start-here/#timeseries

It’s really a good blog. I am particularly interested in section 1.2 Configuring AR and MA:

”

Some useful patterns you may observe on these plots are:

The model is AR if the ACF trails off after a lag and has a hard cut-off in the PACF after a lag. This lag is taken as the value for p.

The model is MA if the PACF trails off after a lag and has a hard cut-off in the ACF after the lag. This lag value is taken as the value for q.

The model is a mix of AR and MA if both the ACF and PACF trail off.

”

There could be a fourth scenario in which both the ACF and PACF cut off. How would we interpret it? What should our strategy be in this case?

Thanks.

Maybe, that case may require some thought. It might be a fun exercise (for you) to devise a contrived dataset to demonstrate each case.

Thanks for the information, but do you have any information about the complexity of the method?

Computational complexity? No, sorry.

Since this is anonymous I can brainstorm. Maybe it is O(n) for predicting n time steps. For searching the p, q, d parameter space, maybe O(p*q*d). But I’m still trying to figure out how this approach works. It is more complicated than just fitting a curve.

Not really. It was the first real systematic approach to framing the forecasting problem.

Thanks for the information. On an ARIMA model chosen by minimizing the AIC, I found autocorrelation in the residuals (using the Ljung-Box test). If I increase the number of parameters, I’ll overfit. Should I just ignore the residual correlation in this case?

Perhaps you can change the model to capture more of the structure in the problem?

This is great! Thank you.

Thanks!

This Mr. Jason, is fantastic! Thank you.

Thanks!

I am studying time series as a data scientist and just want to say: your posts are so simple and to the point. They even have reviews at the beginning to help jog my memory.

If I can say something, I just wish there were more pictures, because some of the concepts, like how ACF and PACF can be used to determine the order of AR and MA, are hard to visualize in my head.

Absolutely love it. Thank you very much

Thanks!

Great suggestion, thanks.

In the Box-Jenkins method, what data preprocessing approach should be followed?

Sorry, I don’t understand your question, can you please elaborate or rephrase?