Large language models (LLMs) are a recent advance in deep learning for working with human language. Several impressive use cases of LLMs have been demonstrated. A large language model is a trained deep-learning model that understands and generates text in a human-like fashion. Behind the scenes, it is a large transformer model that does all the magic.

In this post, you will learn about the structure of large language models and how they work. In particular, you will know:

- What is a transformer model

- How a transformer model reads text and generates output

- How a large language model can produce text in a human-like fashion.

What are Large Language Models.

Picture generated by author using Stable Diffusion. Some rights reserved.


Let’s get started.

Overview

This post is divided into three parts; they are:

- From Transformer Model to Large Language Model

- Why Transformer Can Predict Text?

- How a Large Language Model Is Built?

From Transformer Model to Large Language Model

As humans, we perceive text as a collection of words. Sentences are sequences of words. Documents are sequences of chapters, sections, and paragraphs. However, for computers, text is merely a sequence of characters. To enable machines to comprehend text, a model based on recurrent neural networks can be built. This model processes one word or character at a time and provides an output once the entire input text has been consumed. This model works pretty well, except it sometimes “forgets” what happened at the beginning of the sequence when the end is reached.

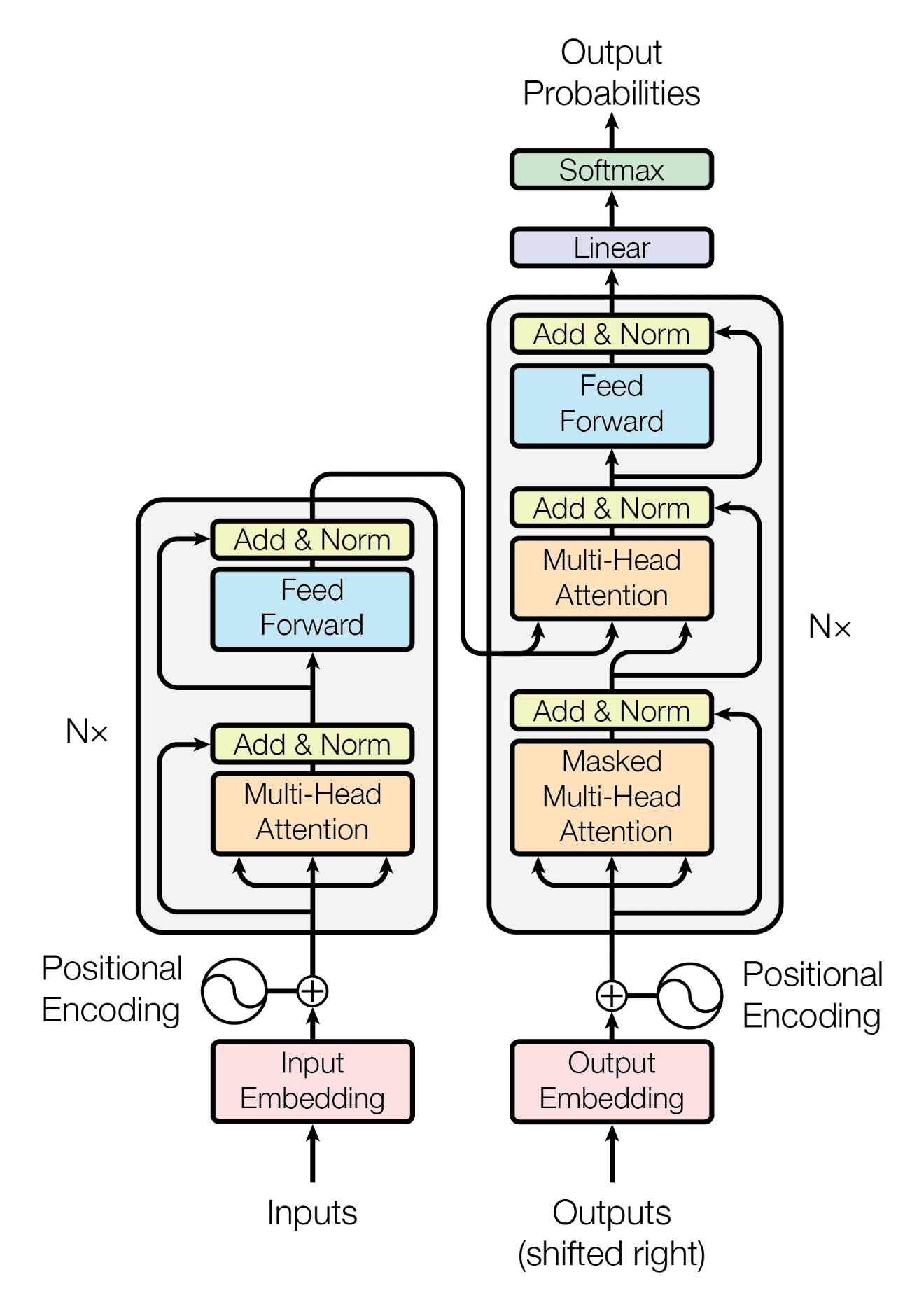

In 2017, Vaswani et al. published the paper “Attention Is All You Need,” which introduced the transformer model. It is based on the attention mechanism. Unlike recurrent neural networks, the attention mechanism lets the model see the entire sentence (or even the paragraph) at once rather than one word at a time. This allows the transformer model to understand the context of a word better. Many state-of-the-art language processing models are based on transformers.
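To make the idea of seeing the whole sentence at once more concrete, here is a minimal sketch of scaled dot-product attention, the core operation in the paper, written in plain Python. The vectors in `x` are made-up 2-dimensional values for a hypothetical three-token sentence, and the sketch uses them directly as queries, keys, and values; a real transformer would first pass them through learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this position against every position in the sequence at once.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of all value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional vectors for a three-token sentence.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)  # self-attention: Q, K, V all come from x
for row in weights:
    print([round(w, 2) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence, including itself. No position has to wait for an earlier one to be processed, which is the contrast with a recurrent network.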

To process a text input with a transformer model, you first need to tokenize it into a sequence of tokens (words or subwords). These tokens are then encoded as numbers and converted into embeddings, which are vector-space representations of the tokens that preserve their meaning. Next, the encoder in the transformer transforms the embeddings of all the tokens into a context vector.

Below is an example of a text string, its tokenization, and the vector embedding. Note that the tokens can be subwords: for example, the word “nosegay” in the text is tokenized into “nose” and “gay”.

As she said this, she looked down at her hands, and was surprised to find that she had put on one of the rabbit's little gloves while she was talking. "How can I have done that?" thought she, "I must be growing small again." She got up and went to the table to measure herself by it, and found that, as nearly as she could guess, she was now about two feet high, and was going on shrinking rapidly: soon she found out that the reason of it was the nosegay she held in her hand: she dropped it hastily, just in time to save herself from shrinking away altogether, and found that she was now only three inches high.

['As', ' she', ' said', ' this', ',', ' she', ' looked', ' down', ' at', ' her', ' hands', ',', ' and', ' was', ' surprised', ' to', ' find', ' that', ' she', ' had', ' put', ' on', ' one', ' of', ' the', ' rabbit', "'s", ' little', ' gloves', ' while', ' she', ' was', ' talking', '.', ' "', 'How', ' can', ' I', ' have', ' done', ' that', '?"', ' thought', ' she', ',', ' "', 'I', ' must', ' be', ' growing', ' small', ' again', '."', ' She', ' got', ' up', ' and', ' went', ' to', ' the', ' table', ' to', ' measure', ' herself', ' by', ' it', ',', ' and', ' found', ' that', ',', ' as', ' nearly', ' as', ' she', ' could', ' guess', ',', ' she', ' was', ' now', ' about', ' two', ' feet', ' high', ',', ' and', ' was', ' going', ' on', ' shrinking', ' rapidly', ':', ' soon', ' she', ' found', ' out', ' that', ' the', ' reason', ' of', ' it', ' was', ' the', ' nose', 'gay', ' she', ' held', ' in', ' her', ' hand', ':', ' she', ' dropped', ' it', ' hastily', ',', ' just', ' in', ' time', ' to', ' save', ' herself', ' from', ' shrinking', ' away', ' altogether', ',', ' and', ' found', ' that', ' she', ' was', ' now', ' only', ' three', ' inches', ' high', '.']

[ 2.49 0.22 -0.36 -1.55 0.22 -2.45 2.65 -1.6 -0.14 2.26 -1.26 -0.61 -0.61 -1.89 -1.87 -0.16 3.34 -2.67 0.42 -1.71 ... 2.91 -0.77 0.13 -0.24 0.63 -0.26 2.47 -1.22 -1.67 1.63 1.13 0.03 -0.68 0.8 1.88 3.05 -0.82 0.09 0.48 0.33]
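The three steps of tokenizing, encoding as numbers, and looking up embeddings can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the regular-expression tokenizer is a crude stand-in for the subword tokenizer that produced the output above, the vocabulary is built from the sentence itself, and the embedding vectors are random rather than learned. The names `tokenize`, `vocab`, `EMBED_DIM`, and `embedding_table` are made up for this example.

```python
import random
import re

def tokenize(text):
    """Split text into word and punctuation tokens (not a real subword tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)

text = "she dropped it hastily, and found that she was now only three inches high."
tokens = tokenize(text)

# Encode tokens as numbers: each distinct token gets an integer ID.
vocab = {tok: idx for idx, tok in enumerate(sorted(set(tokens)))}
token_ids = [vocab[tok] for tok in tokens]

# Embedding table: one vector per vocabulary entry.
# Random values here; a real model learns them during training.
EMBED_DIM = 4  # hypothetical size, far smaller than in a real model
rng = random.Random(0)
embedding_table = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in vocab]
embeddings = [embedding_table[i] for i in token_ids]

print(tokens)
print(token_ids)
print(len(embeddings), "vectors of dimension", EMBED_DIM)
```

Note that the two occurrences of "she" get the same ID and therefore the same embedding vector. It is the job of the transformer layers that follow to give each occurrence a meaning that depends on its context.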

The context vector is like the essence of the entire input. Using this vector, the transformer decoder generates output based on clues. For instance, you can provide the original input as a clue and let the transformer decoder produce the subsequent word that naturally follows. Then, you can reuse the same decoder, but this time the clue will be the previously produced next-word. This process can be repeated to create an entire paragraph, starting from a leading sentence.

Transformer Architecture

This process is called autoregressive generation. This is how a large language model works, except that such a model is a transformer that can take very long input text, has a context vector large enough to handle very complex concepts, and has many layers in its encoder and decoder.
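The generation loop itself is simple, and a toy version may help you see it. In the sketch below, the "model" is a hypothetical hand-written lookup table called `NEXT_TOKEN` that maps the latest token to the next one; a real LLM would instead compute a probability distribution over its vocabulary from the whole context. Only the loop in `generate` is the point of the example.

```python
# Hypothetical "model": a lookup table from the latest token to the next one.
# A real LLM computes a probability distribution from the whole context instead.
NEXT_TOKEN = {
    "the": "rabbit",
    "rabbit": "looked",
    "looked": "at",
    "at": "her",
    "her": "hands",
    "hands": ".",
}

def predict_next(context):
    """Return the next token given all tokens so far (toy: only the last is used)."""
    return NEXT_TOKEN.get(context[-1], ".")

def generate(prompt_tokens, max_new_tokens=10, stop_token="."):
    """Autoregressive generation: append each prediction and predict again."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        next_token = predict_next(tokens)
        tokens.append(next_token)  # the output becomes part of the next input
        if next_token == stop_token:
            break
    return tokens

print(" ".join(generate(["the"])))
```

Starting from the single token "the", the loop keeps feeding its own output back in until it produces the stop token or reaches `max_new_tokens`.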

Why Transformer Can Predict Text?

In his blog post “The Unreasonable Effectiveness of Recurrent Neural Networks”, Andrej Karpathy demonstrated that recurrent neural networks can predict the next word of a text reasonably well. This is not only because there are rules in human language (i.e., grammar) that limit the use of words in different places in a sentence, but also because there is redundancy in language.

According to Claude Shannon’s influential paper, “Prediction and Entropy of Printed English,” the English language has an entropy of 2.1 bits per letter, despite having 27 symbols (26 letters plus the space). If the symbols were used uniformly at random, the entropy would be about 4.8 bits. The much lower actual figure means that human language text is far from random, which makes it easier to predict what comes next. Machine learning models, and especially transformer models, are adept at making such predictions.
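You can check the 4.8-bit figure yourself, since the entropy of 27 equally likely symbols is simply the base-2 logarithm of 27. The sketch below also measures the entropy of the single-character frequencies in a short sample. Be aware of what it leaves out: this simple estimate looks at each character in isolation and the sample is tiny, so it is not a reproduction of Shannon's method, which takes the preceding letters into account. The function name `entropy_bits` is made up for this example.

```python
import math
from collections import Counter

def entropy_bits(text):
    """Shannon entropy, in bits per symbol, of the symbol frequencies in text."""
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# Upper bound: 27 symbols (26 letters plus space), all equally likely.
print(round(math.log2(27), 2))  # 4.75

sample = ("she dropped it hastily just in time to save herself "
          "from shrinking away altogether")
print(round(entropy_bits(sample), 2))
```

The second number is below the uniform upper bound because some characters appear much more often than others. Taking context into account, as a language model does, lowers the uncertainty further.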

By repeatedly predicting the next word, a transformer model can generate an entire passage word by word. However, what is grammar as seen by a transformer model? Essentially, grammar denotes how words are used in a language, categorizing them into various parts of speech and requiring a specific order within a sentence. Despite this, it is challenging to enumerate all the rules of grammar. In reality, the transformer model doesn’t explicitly store these rules; instead, it acquires them implicitly through examples. It’s possible for the model to learn beyond just grammar rules, extending to the ideas presented in those examples, but the transformer model must be large enough.

How a Large Language Model Is Built?

A large language model is a transformer model on a large scale. It is so large that it usually cannot be run on a single computer. Hence it is naturally a service provided over an API or a web interface. As you can expect, such a large model is trained on a vast amount of text before it can remember the patterns and structures of language.

For example, the GPT-3 family of models that backs the ChatGPT service was trained on massive amounts of text data from the internet. This includes books, articles, websites, and various other sources. During the training process, the model learns the statistical relationships between words, phrases, and sentences, allowing it to generate coherent and contextually relevant responses when given a prompt or query.
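To get a feel for what learning statistical relationships between words means, consider the simplest possible case: counting which word follows which in the training text. The sketch below is a bigram counter, not how a transformer is trained (a transformer learns by adjusting millions or billions of weights), and the tiny `corpus` string is made up for illustration. The idea that next-word probabilities come from the training text is the same, though.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = corpus.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def next_word_probabilities(follows, word):
    """Convert the follow-up counts for one word into probabilities."""
    counts = follows[word]
    total = sum(counts.values())
    return {w: n / total for w, n in counts.items()}

# Hypothetical miniature training text.
corpus = ("she looked down at her hands and she looked at the table "
          "and she found the table")
model = train_bigram(corpus)
print(next_word_probabilities(model, "she"))
```

In this corpus, "she" is followed by "looked" twice and by "found" once, so the model assigns them probabilities of two thirds and one third. A large language model makes the same kind of estimate, but conditioned on the entire preceding context rather than a single word.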

Having distilled this vast amount of text, the GPT-3 model can understand multiple languages and possesses knowledge of various topics. That’s why it can produce text in different styles. While you may be amazed that a large language model can perform translation, text summarization, and question answering, it is not surprising if you consider these to be special “grammars” that match the leading text, a.k.a. prompts.

Summary

Multiple large language models have been developed. Examples include GPT-3 and GPT-4 from OpenAI, LLaMA from Meta, and PaLM 2 from Google. These are models that can understand language and generate text. In this post, you learned that:

- A large language model is based on the transformer architecture

- The attention mechanism allows LLMs to capture long-range dependencies between words, hence the model can understand context

- A large language model generates text autoregressively based on previously generated tokens
