Midwest.io was a conference held in Kansas City on July 14-15, 2014.

At the conference, Josh Wills gave a talk titled “From the lab to the factory: Building a Production Machine Learning Infrastructure” on what it takes to build production machine learning infrastructure.

Josh Wills is the Senior Director of Data Science at Cloudera and formerly worked on Google’s ad auction system.

In this post you will gain insight into what it takes to build production machine learning infrastructure.

Data Science

Josh calls himself a data scientist and is responsible for one of the more cogent descriptions of what a data scientist is. It is best expressed as a tweet:

Data Scientist (n.): Person who is better at statistics than any software engineer and better at software engineering than any statistician.

— jwills (@josh_wills) May 3, 2012

He says that there are two types of data scientist. The first type is a statistician who got good at programming. The second is a software engineer who is smart and got put on interesting projects. He says that he himself is the second type.

Academic is not Industrial Machine Learning

Josh also differentiates academic machine learning from industrial machine learning. He comments that academic machine learning is basically applied mathematics, specifically applied optimization theory, and that this is how it is taught in an academic setting and in textbooks.

Industrial machine learning is different.

- Systems come before algorithms. In academic machine learning, accuracy takes priority, even at the expense of long run times. In industry, faster is always better and slower has to be justified, meaning accuracy can often take a back seat.

- Objective functions are messy. Academic machine learning is all about optimizing an objective function. In industry, clean objective functions do not exist. Typically there are many conflicting objectives, which calls for a Pareto multi-objective approach (make an improvement to one without negatively affecting the others).

- Everything is changing. The systems are complex and no one person understands all of it.

- Understanding-optimization trade-off. Industrial machine learning is a process of coming up with hypotheses, testing them and improving the system. Understanding is often more important than better results. Experiments drive understanding.
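The Pareto idea in the second point can be made concrete with a small check. This is a minimal sketch, not something shown in the talk: the function name, the metric names and the numbers are all hypothetical, and it assumes every metric is oriented so that higher is better.

```python
from typing import Mapping


def is_pareto_improvement(
    baseline: Mapping[str, float], candidate: Mapping[str, float]
) -> bool:
    """Return True if candidate is no worse than baseline on every metric
    and strictly better on at least one (higher is assumed to be better)."""
    if baseline.keys() != candidate.keys():
        raise ValueError("baseline and candidate must report the same metrics")
    no_worse = all(candidate[m] >= baseline[m] for m in baseline)
    strictly_better = any(candidate[m] > baseline[m] for m in baseline)
    return no_worse and strictly_better


# Hypothetical metrics from two experiment arms.
baseline = {"click_rate": 0.031, "revenue_per_query": 1.20}
faster_model = {"click_rate": 0.033, "revenue_per_query": 1.20}
greedy_model = {"click_rate": 0.035, "revenue_per_query": 1.10}

print(is_pareto_improvement(baseline, faster_model))  # improves one, hurts none
print(is_pareto_improvement(baseline, greedy_model))  # trades revenue for clicks
```

A change that passes this check can be shipped without arguing about how to weigh one objective against another. A change that fails it needs a human decision about the trade-off.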

Industrial Machine Learning Frameworks

Josh comments that it is the golden age of industrial machine learning. He says this because of the tooling that is available and the amount of sharing and collaboration going on.

He touches on Oryx, which Cloudera uses as its industrial machine learning platform on top of Apache Hadoop.

Josh touches on Airbnb sharing the details of their industrial machine learning system in their blog post “Architecting a Machine Learning System for Risk”. He picks out the fact that Airbnb builds an analytical model offline, stores it as a PMML file and uploads it to run in production.

Josh also touches on Etsy’s industrial machine learning system called Conjecture, described in the blog post “Conjecture: Scalable Machine Learning in Hadoop with Scalding”. In their system, a model is prepared offline and described in JSON format before being converted to PHP code to run in production.

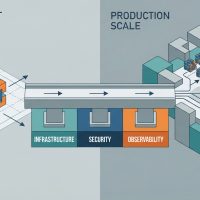

Josh points out that what these systems have in common is the management of data as key/value pairs. He also points to the preparation of models offline in what he calls “analytical mode” and the transformation of those models to be used in production, or “production mode”.
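The split between the two modes can be sketched in a few lines. This is an illustration under stated assumptions, not the Airbnb or Etsy implementation: those systems use PMML and generated PHP respectively, whereas this sketch uses a hand-rolled JSON layout and Python. The function names, feature names and weights are hypothetical, and the "trained" weights are simply written out by hand.

```python
import json
import math


def export_model(weights, bias):
    """Analytical mode: serialize a fitted linear model to a JSON string."""
    model = {"type": "logistic_regression", "bias": bias, "weights": weights}
    return json.dumps(model, sort_keys=True)


def load_scorer(model_json):
    """Production mode: turn the JSON description into a scoring function."""
    model = json.loads(model_json)
    weights = model["weights"]
    bias = model["bias"]

    def score(features):
        # Features arrive as key/value pairs; unknown keys get weight 0.
        z = bias + sum(weights.get(name, 0.0) * value
                       for name, value in features.items())
        return 1.0 / (1.0 + math.exp(-z))

    return score


# Offline: pretend these weights came out of a training job (hypothetical).
model_json = export_model(
    {"num_prior_orders": -0.4, "account_age_days": -0.01, "is_new_device": 1.5},
    bias=-1.0,
)

# Online: load the artifact and score one observation.
score = load_scorer(model_json)
risk = score({"num_prior_orders": 3.0, "is_new_device": 1.0})
print(round(risk, 4))
```

The production side only needs the JSON artifact and a dictionary of features, so the model can be retrained and replaced offline without changing the serving code.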

Feature Engineering

Josh says that his current passion is feature engineering, which he calls the dark art of industrial machine learning. In fact, he makes a flippant comment at the end of the talk that people are in love with their favorite algorithms, but that the algorithm used doesn’t matter as much and all of the hard work is in feature engineering.

Josh says that the great inefficiency is that data is used differently in analytical mode than in production mode.

The analytical preparation of models has access to a star schema offline to bring together all of the data that is required. The production system only has access to a single user or observation. His question is how to convert what is used offline to be used online with little effort (and without the kludges currently being used).

He says he explored a DSL approach, which failed but uncovered the core problem: the data model. He says that what is needed is to model a user entity in terms of Fixed Attributes and Repeated Attributes. A user entity is stored denormalized, and user data like transactions and logs (repeated attributes) are stored in arrays. He gives an example in JSON format and calls it a supernova schema.
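The JSON example from the talk is not reproduced in this post, so the following is only a sketch of what such a denormalized user entity might look like. Every field name is hypothetical, and the `as_of_ts` cutoff is an added assumption meant to show how one feature function could serve both modes.

```python
import json

# One user entity: fixed attributes are scalars, repeated attributes are arrays.
user_json = """
{
  "user_id": "u123",
  "fixed": {"country": "US", "signup_year": 2012},
  "repeated": {
    "transactions": [
      {"ts": 100, "amount": 20.0},
      {"ts": 200, "amount": 35.5}
    ],
    "logins": [
      {"ts": 90, "device": "mobile"},
      {"ts": 190, "device": "desktop"},
      {"ts": 210, "device": "mobile"}
    ]
  }
}
"""


def extract_features(user, as_of_ts):
    """Compute features from one user entity, using only events at or
    before as_of_ts so the same code works on historical and live data."""
    txns = [t for t in user["repeated"]["transactions"] if t["ts"] <= as_of_ts]
    logins = [e for e in user["repeated"]["logins"] if e["ts"] <= as_of_ts]
    mobile = sum(1 for e in logins if e["device"] == "mobile")
    return {
        "country": user["fixed"]["country"],
        "txn_count": len(txns),
        "txn_total": sum(t["amount"] for t in txns),
        "mobile_login_share": mobile / len(logins) if logins else 0.0,
    }


user = json.loads(user_json)
features = extract_features(user, as_of_ts=200)
print(features)
```

Because everything about the user lives in one record, the feature function needs no joins. That is what would let the same code run over a batch of stored entities offline and over a single entity at request time.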

Summary

It’s a fascinating talk and reminds us that there is much to learn from discussions of large-scale industrial machine learning systems like those at Cloudera, Airbnb and Etsy.

You can watch the talk in full here: “From the lab to the factory: Building a Production Machine Learning Infrastructure”.

You can follow Josh on Twitter at @josh_wills and see his background on LinkedIn.
