In this article, you will learn how three widely used classifiers behave on class-imbalanced problems and the concrete tactics that make them work in practice.

Topics we will cover include:

- Why accuracy breaks down and which metrics to trust on imbalanced data.

- How logistic regression, random forests, and XGBoost handle imbalance out of the box.

- Actionable strategies, such as class weights, resampling, and threshold tuning, that reliably lift minority-class recall.

Let’s not waste any more time.

Algorithm Showdown: Logistic Regression vs. Random Forest vs. XGBoost on Imbalanced Data

Image by Editor

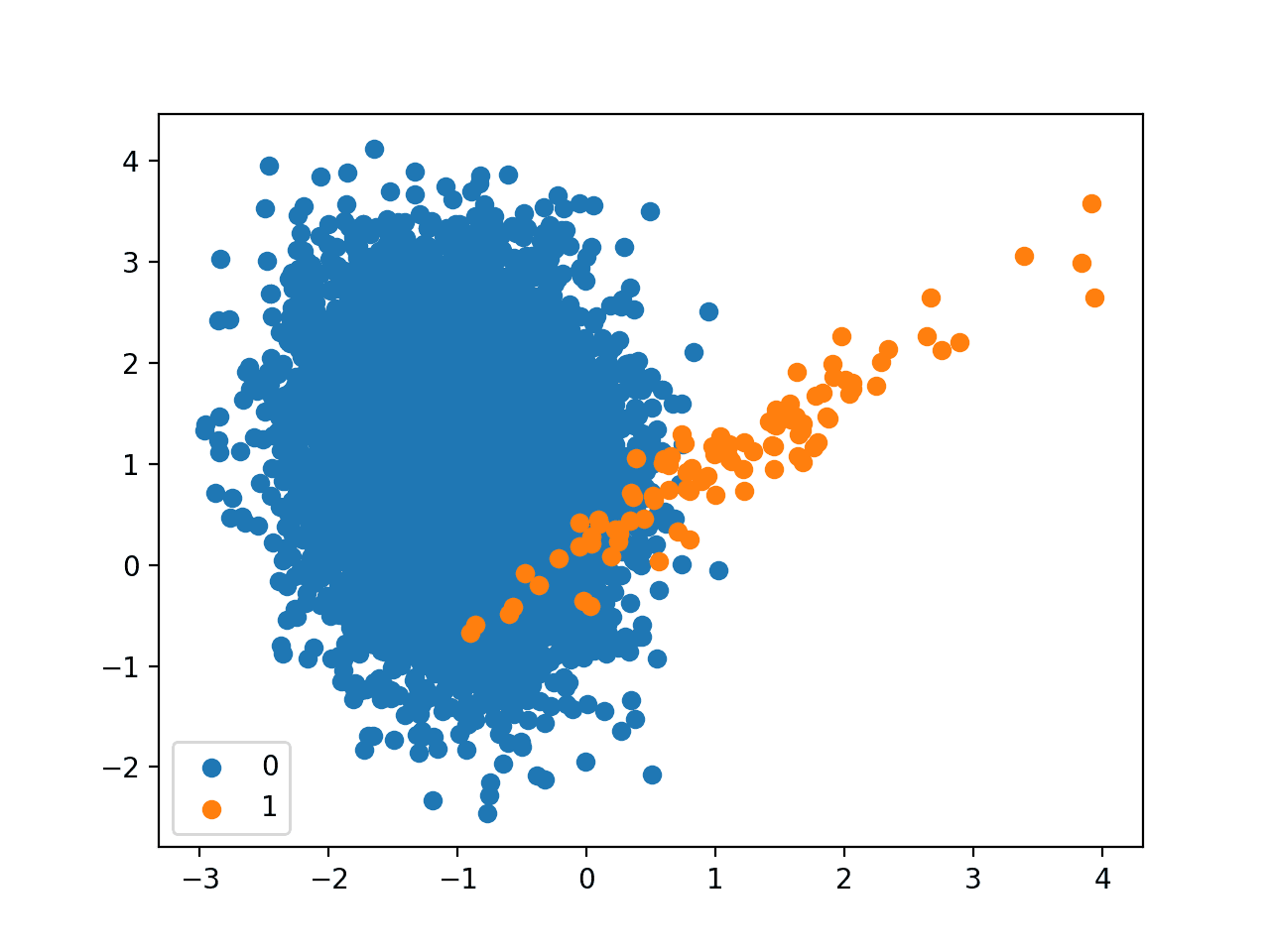

Understanding Imbalanced Data

Imbalanced datasets are a common challenge in machine learning. Fraud detection, rare disease diagnosis, and churn prediction are classic examples where the “positive” class is underrepresented. Choosing the right algorithm in such contexts can be the difference between a model that performs adequately and one that fails in production.

Let’s clarify what we mean by imbalanced data. Suppose we’re predicting fraudulent transactions, where only 1% of all transactions are fraudulent. A naive model that predicts “not fraud” 100% of the time would still achieve 99% accuracy. Yet, it would be useless in practice since it never identifies fraud.
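The arithmetic behind this example can be checked in a few lines. The sketch below uses made-up labels (10,000 transactions, 100 of them fraudulent) and scikit-learn's metric helpers; the numbers are illustrative, not taken from a real dataset.

```python
import numpy as np
from sklearn.metrics import accuracy_score, recall_score

# Hypothetical labels: 10,000 transactions, 1% fraudulent (label 1).
y_true = np.zeros(10_000, dtype=int)
y_true[:100] = 1

# A "model" that always predicts the majority class (not fraud).
y_pred = np.zeros_like(y_true)

accuracy = accuracy_score(y_true, y_pred)  # 9,900 correct out of 10,000
recall = recall_score(y_true, y_pred)      # 0 of the 100 fraud cases found

print(f"accuracy={accuracy:.2f}, fraud recall={recall:.2f}")
# prints: accuracy=0.99, fraud recall=0.00
```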

The main issues with imbalanced data include:

- Biased models: Models learn to prioritize the abundant class

- Poor minority class detection: The class of real interest is underrepresented

- Misleading metrics: Accuracy is not a reliable metric in imbalanced scenarios

On imbalanced data, accuracy is misleading. With a 95/5 class split, for example, a model that predicts only the majority class scores 95% accuracy but 0% recall on the minority class. Better metrics include:

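- Precision & Recall: precision is the share of predicted positives that are correct (penalizing false positives); recall is the share of actual positives that are found (penalizing false negatives)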

- F1-score: harmonic mean of precision and recall

- AUC-ROC: measures ranking quality between classes

- Precision-Recall AUC: more informative than ROC when classes are highly imbalanced
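As a rough illustration, the metrics listed above can all be computed with scikit-learn. The snippet is a minimal sketch on synthetic data from `make_classification` (an assumption, roughly 5% positives); `average_precision_score` is used here as a common summary of the precision-recall curve.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import (average_precision_score, f1_score,
                             precision_score, recall_score, roc_auc_score)
from sklearn.model_selection import train_test_split

# Synthetic stand-in data (an assumption): roughly 5% positives.
X, y = make_classification(n_samples=5000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

model = LogisticRegression(max_iter=1000).fit(X_train, y_train)
y_pred = model.predict(X_test)               # hard labels at the 0.5 threshold
y_score = model.predict_proba(X_test)[:, 1]  # scores for ranking-based metrics

metrics = {
    "precision": precision_score(y_test, y_pred, zero_division=0),
    "recall": recall_score(y_test, y_pred),
    "f1": f1_score(y_test, y_pred, zero_division=0),
    "roc_auc": roc_auc_score(y_test, y_score),
    "pr_auc": average_precision_score(y_test, y_score),
}
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that precision, recall, and F1 depend on the chosen threshold, while the two AUC metrics are computed from the scores directly.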

Let’s take a look at our three algorithms.

Algorithm 1: Logistic Regression

Logistic regression is one of the simplest yet most interpretable classification algorithms. It models the probability of class membership using a linear relationship between features and the log-odds of the outcome.

Strengths:

- Training is computationally inexpensive, even on large datasets

- Performs competitively when the true decision boundary is approximately linear

- Provides probabilistic outputs that can be threshold-tuned

- Supports regularization: L2 to prevent overfitting and L1 to drive uninformative coefficients to zero, which acts as feature selection

Weaknesses:

- Struggles with nonlinear relationships unless features are engineered

- Without resampling or class weighting, it tends to predict the majority class

- The linear decision boundary may underfit complex patterns in data

Handle imbalance in scikit-learn by setting class_weight="balanced", which weights each class inversely to its frequency and so increases the penalty for misclassifying minority samples. You can also apply oversampling (e.g. SMOTE) or undersampling, and tune the decision threshold using the precision–recall curve to improve recall.
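A minimal sketch of the class-weight option, assuming scikit-learn and synthetic data from `make_classification` (both the data and the variable names are illustrative). It fits the same model with and without class_weight="balanced" and compares minority-class recall on a held-out split.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in data (an assumption): roughly 5% positives.
X, y = make_classification(n_samples=5000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Same model twice; only the class weighting differs.
plain = LogisticRegression(max_iter=1000).fit(X_train, y_train)
weighted = LogisticRegression(max_iter=1000,
                              class_weight="balanced").fit(X_train, y_train)

recall_plain = recall_score(y_test, plain.predict(X_test))
recall_weighted = recall_score(y_test, weighted.predict(X_test))

print(f"recall without weights: {recall_plain:.3f}")
print(f"recall with balanced weights: {recall_weighted:.3f}")
```

Higher recall from weighting usually comes with lower precision, so it is worth checking both before settling on a configuration.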

Algorithm 2: Random Forest

Random forest is an ensemble method that builds multiple decision trees and combines their predictions. By introducing randomness in both feature selection and data sampling, it reduces overfitting and improves generalization.

Strengths:

- Handles both linear and nonlinear relationships well

- Less prone to overfitting than single decision trees

- Provides measures of feature importance for some interpretability

- Works well with high-dimensional datasets

Weaknesses:

- Probabilities can be poorly calibrated

- Requires more memory and computational resources for large forests

- Less interpretable compared to simpler models like logistic regression

With balanced class weights or stratified sampling, random forest becomes a reliable performer on imbalanced problems. If calibrated probabilities matter for thresholding, apply Platt scaling or isotonic regression after training; this often stabilizes decision thresholds for recall-oriented objectives.
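One way to combine class weights with calibration is scikit-learn's `CalibratedClassifierCV`. This is a sketch under assumptions: the data is synthetic, and the forest size and number of folds are arbitrary choices. Passing method="sigmoid" instead of "isotonic" would give Platt scaling.

```python
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import brier_score_loss
from sklearn.model_selection import train_test_split

# Synthetic stand-in data (an assumption): roughly 5% positives.
X, y = make_classification(n_samples=3000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

forest = RandomForestClassifier(n_estimators=50, class_weight="balanced",
                                random_state=0)

# Cross-validated calibration: each fold fits a forest on part of the
# training data and an isotonic calibrator on the held-out part.
calibrated = CalibratedClassifierCV(forest, method="isotonic", cv=3)
calibrated.fit(X_train, y_train)

proba = calibrated.predict_proba(X_test)[:, 1]
brier = brier_score_loss(y_test, proba)  # lower means better calibrated
print(f"Brier score: {brier:.4f}")
```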

Algorithm 3: XGBoost

XGBoost (Extreme Gradient Boosting) is an implementation of gradient-boosted decision trees. Known for its speed and accuracy in competitions like Kaggle, XGBoost builds trees sequentially, with each one correcting the mistakes of the previous.

Strengths:

- Excels at handling imbalanced datasets via its scale_pos_weight parameter

- Learns complex, high-dimensional relationships through boosting

- Often outperforms simpler models in competitions and benchmarks, particularly on tabular data

- Provides feature importance and supports SHAP values for interpretability

Weaknesses:

- More prone to overfitting if not carefully tuned

- Requires more computational resources than logistic regression or even random forest

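- Training can be slower on huge datasets than with bagging methods, because boosted trees are built sequentially while bagged trees can be trained independently in parallel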

Set scale_pos_weight to the approximate ratio n_negative / n_positive for better class balance. Combine this with resampling or threshold tuning to boost minority detection performance.
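The ratio is simple to compute from the training labels. The sketch below uses a made-up label vector (950 negatives, 50 positives); the commented lines show how the value would be passed to `XGBClassifier` if the xgboost package is installed, with max_depth and n_estimators as placeholder settings rather than recommendations.

```python
import numpy as np

# Hypothetical training labels: 950 negatives, 50 positives.
y_train = np.array([0] * 950 + [1] * 50)

n_negative = int(np.sum(y_train == 0))
n_positive = int(np.sum(y_train == 1))
scale_pos_weight = n_negative / n_positive

print(scale_pos_weight)  # prints 19.0

# With xgboost installed, the value would be used like this:
# from xgboost import XGBClassifier
# model = XGBClassifier(scale_pos_weight=scale_pos_weight,
#                       max_depth=4, n_estimators=200)
# model.fit(X_train, y_train)
```

Compute the ratio on the training split only, so that the weight reflects the data the model actually sees.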

Algorithm Comparison and Discussion

Here’s a side-by-side summary of how these algorithms perform on imbalanced data:

| Criteria | Logistic Regression | Random Forest | XGBoost |
|---|---|---|---|
| Interpretability | High | Medium | Low |
| Computational Cost | Very Low | Moderate | High |
| Nonlinear Capability | Poor | Good | Excellent |
| Handling Imbalance | Via class weights | Via class weights or resampling | Via scale_pos_weight + resampling |
| Recall (Minority Class) | Low–Moderate | Moderate–High | High |
| PR-AUC (Minority Focus) | Low | Medium | High |

Let’s look at some general strategies for handling imbalanced data, along with some practical algorithm-specific recommendations.

Strategies to Handle Imbalanced Data

Resampling techniques: A direct way to counter imbalance is to change the class distribution before modeling. You can oversample the minority class to give the learner more positive examples, undersample the majority class to reduce its dominance, or use hybrid approaches that combine both. Oversampling risks overfitting to repeated minority examples, while undersampling discards majority-class information, so choose the strategy that best matches your data volume and tolerance for information loss.

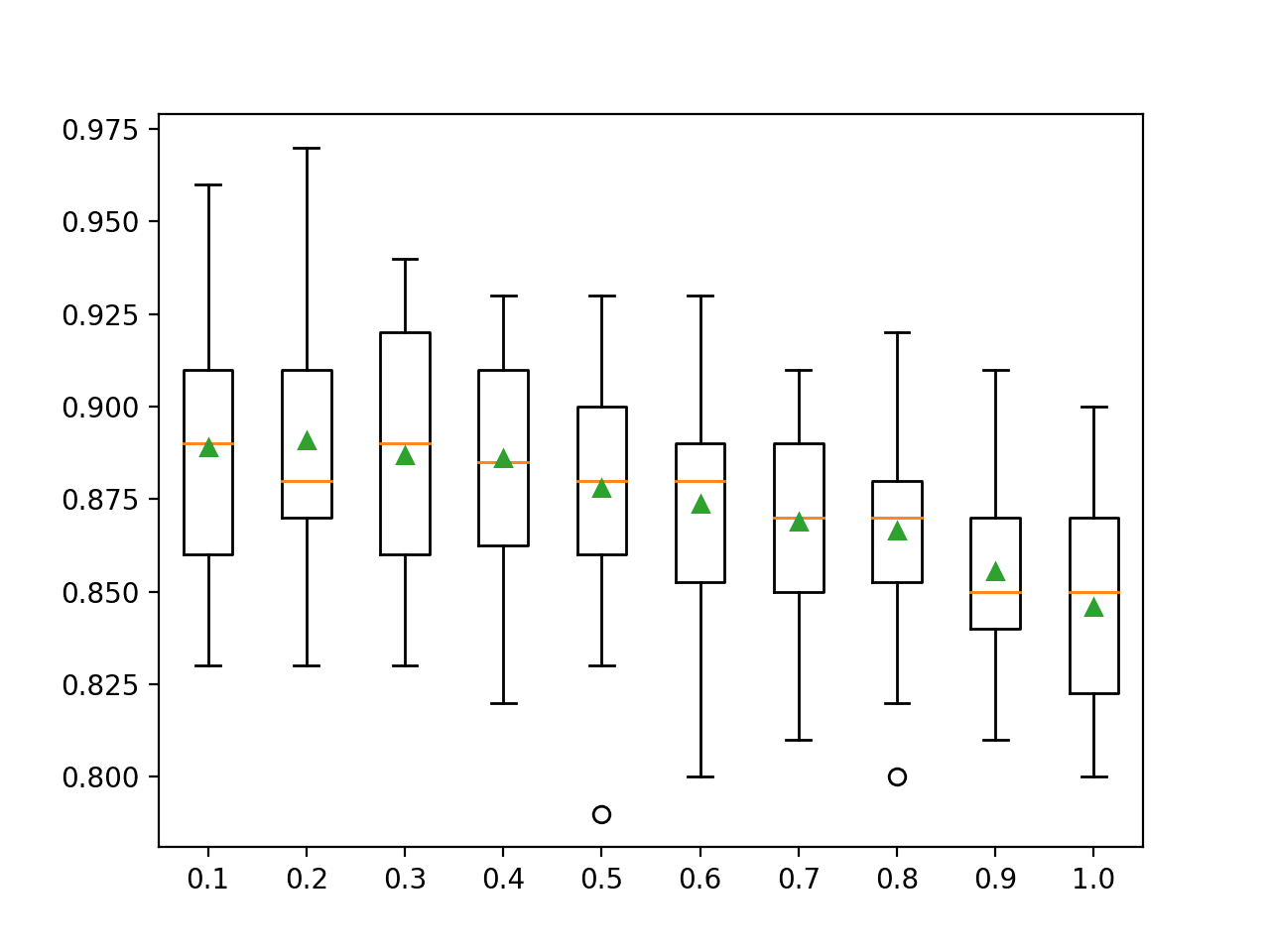
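Random oversampling and undersampling can be sketched with `sklearn.utils.resample`. The feature matrix and labels below are made up for illustration; dedicated libraries offer more elaborate samplers, which are not shown here.

```python
import numpy as np
from sklearn.utils import resample

# Hypothetical training data: 950 majority rows, 50 minority rows.
X = np.arange(2000, dtype=float).reshape(1000, 2)
y = np.array([0] * 950 + [1] * 50)

X_maj, X_min = X[y == 0], X[y == 1]

# Oversampling: draw minority rows with replacement up to the majority count.
X_min_up = resample(X_min, replace=True, n_samples=len(X_maj), random_state=0)
X_over = np.vstack([X_maj, X_min_up])
y_over = np.concatenate([np.zeros(len(X_maj), dtype=int),
                         np.ones(len(X_min_up), dtype=int)])

# Undersampling: draw majority rows without replacement down to the minority count.
X_maj_down = resample(X_maj, replace=False, n_samples=len(X_min), random_state=0)
X_under = np.vstack([X_maj_down, X_min])
y_under = np.concatenate([np.zeros(len(X_maj_down), dtype=int),
                          np.ones(len(X_min), dtype=int)])

print(np.bincount(y_over), np.bincount(y_under))
# prints: [950 950] [50 50]
```

Resample only the training split. Resampling before the train/test split leaks duplicated rows into the evaluation data and inflates the scores.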

Threshold tuning: Most classifiers default to a 0.5 decision threshold, which is rarely optimal under class imbalance. Instead, select a threshold that maximizes a target metric such as F1-score or recall, or that minimizes a business-weighted cost function reflecting the relative impact of false positives and false negatives. Calibrated probabilities make this process more reliable.
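As one possible approach, the threshold that maximizes F1 can be read off the precision-recall curve. The sketch assumes scikit-learn and synthetic data, and it tunes on a validation split, since a threshold chosen on the test set would give an optimistic estimate.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score, precision_recall_curve
from sklearn.model_selection import train_test_split

# Synthetic stand-in data (an assumption): roughly 5% positives.
X, y = make_classification(n_samples=5000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

model = LogisticRegression(max_iter=1000).fit(X_train, y_train)
scores = model.predict_proba(X_val)[:, 1]

precision, recall, thresholds = precision_recall_curve(y_val, scores)

# precision and recall have one more entry than thresholds; the final
# (precision=1, recall=0) point has no threshold, so it is dropped.
denom = np.clip(precision[:-1] + recall[:-1], 1e-12, None)
f1_per_threshold = 2 * precision[:-1] * recall[:-1] / denom
best_threshold = thresholds[np.argmax(f1_per_threshold)]

default_f1 = f1_score(y_val, (scores >= 0.5).astype(int), zero_division=0)
tuned_f1 = f1_score(y_val, (scores >= best_threshold).astype(int), zero_division=0)

print(f"best threshold: {best_threshold:.3f}")
print(f"F1 at 0.5: {default_f1:.3f}, F1 at tuned threshold: {tuned_f1:.3f}")
```

To optimize a business cost instead, replace the F1 array with a cost computed per threshold and take the minimum.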

Ensemble methods: Purpose-built ensembles often yield robust gains on skewed data. Balanced Random Forest rebalances the training data within the ensemble itself, while boosting methods can incorporate class weights so that errors on the minority class are penalized more heavily. These techniques typically improve minority recall without sacrificing too much overall performance.
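The idea of rebalancing inside the ensemble can be illustrated with a hand-rolled loop. This is a simplified sketch of the general technique, not the implementation used by any particular library: each tree is trained on a bootstrap of the minority class plus an equally sized random draw from the majority class, and the per-tree probabilities are averaged. The tree count of 25 is an arbitrary choice.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in data (an assumption): roughly 5% positives.
X, y = make_classification(n_samples=3000, n_features=10,
                           weights=[0.95, 0.05], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

rng = np.random.default_rng(0)
minority_idx = np.flatnonzero(y_train == 1)
majority_idx = np.flatnonzero(y_train == 0)

trees = []
for seed in range(25):
    # Bootstrap the minority class and draw an equally sized majority sample.
    min_sample = rng.choice(minority_idx, size=len(minority_idx), replace=True)
    maj_sample = rng.choice(majority_idx, size=len(minority_idx), replace=True)
    idx = np.concatenate([min_sample, maj_sample])
    tree = DecisionTreeClassifier(max_features="sqrt", random_state=seed)
    trees.append(tree.fit(X_train[idx], y_train[idx]))

# Average the positive-class probability across all trees.
proba = np.mean([t.predict_proba(X_test)[:, 1] for t in trees], axis=0)
y_pred = (proba >= 0.5).astype(int)
print(f"predicted positives: {int(y_pred.sum())} of {len(y_pred)}")
```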

Feature engineering: Better features make minority patterns easier to separate. Create informative ratios, interactions, or non-linear transforms that expose signal masked by the majority class. Careful domain-guided engineering frequently reduces reliance on heavy resampling or aggressive class weighting.

Data augmentation: When raw minority examples are scarce, generate sensible variants to increase diversity. For images, use transformations such as rotations, flips, and scaling; for text, consider controlled paraphrasing. The goal is to expand coverage of plausible minority cases without drifting away from the true data distribution.

Synthetic data generation: Algorithmic synthesizers can produce new minority samples that resemble real observations. Techniques like SMOTE and ADASYN interpolate between nearby minority points, while GAN-based approaches learn to sample from an approximate minority distribution. Applied carefully, these methods improve coverage of the decision boundary and help the classifier generalize.
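The interpolation step behind SMOTE-style methods can be sketched in a few lines. The function below, `smote_like`, is a hypothetical helper written for illustration and is a simplification: it omits details that production implementations handle. Each synthetic point is placed at a random position on the line segment between a minority sample and one of its k nearest minority neighbors.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

def smote_like(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic rows by interpolating between minority neighbors."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbors because each point is its own nearest neighbor.
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    _, neighbor_idx = nn.kneighbors(X_min)
    # Pick a random base point and one of its k neighbors (columns 1..k).
    base = rng.integers(0, len(X_min), size=n_new)
    pick = rng.integers(1, k + 1, size=n_new)
    neighbor = neighbor_idx[base, pick]
    # Interpolate at a random fraction of the way toward the neighbor.
    gap = rng.random((n_new, 1))
    return X_min[base] + gap * (X_min[neighbor] - X_min[base])

# Hypothetical minority samples: 50 points in 3 dimensions.
X_min = np.random.default_rng(1).normal(size=(50, 3))
X_synth = smote_like(X_min, n_new=200)
print(X_synth.shape)  # prints (200, 3)
```

Because every synthetic point is a convex combination of two real minority points, the generated data stays inside the range of the observed minority samples.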

Practical Recommendations

Logistic regression: Favor logistic regression when interpretability is paramount and the relationships are roughly linear or the dataset is modest in size. With class weighting, simple regularization, and a tuned decision threshold, it offers reliable baselines and transparent insights.

Random forest: Choose a random forest when you want a sturdy, general-purpose model that handles nonlinear structure and mixed feature types with minimal tuning. Pair stratified sampling or class weights with optional probability calibration to support threshold selection for recall-oriented objectives.

XGBoost: Reach for XGBoost on large, complex datasets where predictive accuracy takes precedence over simplicity. Configure scale_pos_weight, consider limited-depth trees and regularization to control overfitting, and fine-tune the threshold to capture rare events effectively.

Final Thoughts

There’s no single winner in the algorithm showdown. Logistic regression offers clarity, random forest delivers stability, and XGBoost maximizes predictive power. The “best” model depends on your data, resources, and business goals.

When working with imbalanced data, remember: the algorithm is only half the battle. Resampling, cost adjustments, and proper evaluation metrics are equally important to ensure that the rare but critical cases don’t slip through the cracks.
