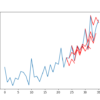

Neural network algorithms are stochastic. This means they make use of randomness, such as initializing the weights to random values, so the same network trained on the same data can produce different results. This can confuse beginners because the algorithm appears unstable, and in fact it is, by design. The random initialization allows […]
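
The effect of random initial weights described above can be seen in a small sketch. This is not code from the article: the function name `train_tiny_network`, the XOR toy data, the network size, and the hyperparameters are all assumptions chosen for illustration. Random initialization is the only source of randomness here, since the training data is presented in a fixed order.

```python
import math
import random


def train_tiny_network(seed, epochs=1000, lr=0.5, hidden=3):
    """Train a tiny 2-input, one-hidden-layer sigmoid network on XOR.

    Hypothetical helper for illustration; returns the final mean squared error.
    """
    rng = random.Random(seed)
    data = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

    # Random weight initialization: the only stochastic step in this sketch.
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0

    def sigmoid(z):
        return 1.0 / (1.0 + math.exp(-z))

    def forward(x):
        h = [sigmoid(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j])
             for j in range(hidden)]
        out = sigmoid(sum(w2[j] * h[j] for j in range(hidden)) + b2)
        return h, out

    for _ in range(epochs):
        for x, target in data:  # fixed order, no shuffling
            h, out = forward(x)
            # Gradient of 0.5 * (out - target)^2 through the output sigmoid.
            d_out = (out - target) * out * (1.0 - out)
            for j in range(hidden):
                # Use the pre-update output weight when backpropagating.
                d_h = d_out * w2[j] * h[j] * (1.0 - h[j])
                w2[j] -= lr * d_out * h[j]
                w1[j][0] -= lr * d_h * x[0]
                w1[j][1] -= lr * d_h * x[1]
                b1[j] -= lr * d_h
            b2 -= lr * d_out

    return sum((forward(x)[1] - t) ** 2 for x, t in data) / len(data)


# Same network, same data, different seeds: the final errors differ.
losses = [train_tiny_network(seed) for seed in (1, 2, 3)]
print(losses)

# Re-running with the same seed reproduces the same result.
print(train_tiny_network(1) == losses[0])
```

Varying the seed changes only the starting weights, yet the final error differs from run to run. Fixing the seed makes a single run repeatable, which is useful for debugging but says nothing about how the network behaves on average across initializations.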